1. Introduction

Network slicing is a key technology in the 5G network ecosystem. It allows the creation of virtual network instances tailored to specific requirements [1], each with its own set of network resources, characteristics, and capabilities spanning several distributed domains. To ensure the interoperability and consistency of network slices across different network segments, standardization bodies such as the Third Generation Partnership Project (3GPP) and the European Telecommunications Standards Institute (ETSI) have defined specifications and standards that include functional architectures, interface specifications, and management frameworks [2]. These standardization efforts provide a solid foundation for developing and deploying interoperable network slicing solutions across multi-domain and multi-tenant environments that can support a wide range of use cases.

Network slicing is particularly important to support heterogeneous vertical applications with diverse performance requirements, such as emergency communications, enhanced mobile broadband (eMBB), ultra-reliable low-latency communications (URLLC), and high-capacity services enabled by technologies such as massive MIMO. These scenarios require dynamic and efficient resource allocation mechanisms capable of adapting to rapidly changing traffic demands while maintaining strict service-level agreements. Recent works have demonstrated the benefits of AI-driven resource management and network slicing solutions for supporting heterogeneous services, including deep reinforcement learning approaches for massive MIMO resource allocation and dynamic RAN slicing for URLLC and eMBB services [3,4]. Such scenarios further motivate the need for predictive and adaptive orchestration mechanisms such as the one proposed in this work.

Effective lifecycle management plays a crucial role in network slicing, as it encompasses not only the creation, configuration, activation, deactivation, and termination of slices but also the continuous monitoring and reporting of network slice status to ensure that performance requirements are met. Technologies such as Software-Defined Networking (SDN) and Network Function Virtualization (NFV) contribute significantly to enabling efficient and flexible lifecycle management. Their programmability enables the dynamic configuration and deployment of network resources and services, ensuring the flexibility and scalability required for network slicing. Resource allocation is another crucial aspect of network slicing: it is essential for ensuring that network slices achieve their performance objectives while optimizing resource utilization. Different resource allocation techniques have been proposed, ranging from static resource allocation, which assigns resources based on predefined policies, to dynamic resource allocation, which adapts to demand in real time.

Resource allocation techniques must be applied to the different network segments involved in network slices: radio, access, transport, and core networks, as well as edge and core clouds. The variety of infrastructure ecosystems that compose these segments makes the process of planning, allocating, and managing the resources assigned to network slices a very complex task. This complexity is further amplified in emerging 6G scenarios that integrate terrestrial and non-terrestrial networks (NTNs), requiring unified frameworks for dynamic traffic management across heterogeneous network segments [5]. For this purpose, appropriate orchestration techniques and platforms must be used in network slice management and orchestration frameworks, especially for environments with limited resources.

To ensure optimal performance of Virtual Network Functions (VNFs), service providers and vertical customers often adopt an over-provisioning approach, ensuring reliable service delivery even during periods of high traffic demand. However, over-provisioning leaves a share of the allocated resources idle most of the time. This inefficiency hinders the effective utilization of network resources and prevents Mobile Network Operators (MNOs) from provisioning new network slices to a broader range of vertical customers, particularly in resource-constrained environments such as edge computing.

Existing solutions focus on applying cloud computing scaling techniques to the VNFs, assuming that sufficient resources are available in the computing domains to cope with the increase in resource demand. Resource allocation problems of this kind are well known to be Nondeterministic Polynomial (NP)-hard [6]. They have been tackled through mathematical techniques, including Integer Linear Programming (ILP) and Mixed ILP (MILP) [7,8].

However, the existing approaches do not yet fully address the challenge of optimizing resource utilization in scenarios where scaling procedures are impractical due to resource limitations. In those cases, new scalability mechanisms could profit from resources that are already assigned but underutilized. For that purpose, efficient machine-learning-based algorithms are needed to predict the evolution of resource usage, as well as new mechanisms to allow the sharing of resources among VNFs.

While previous works such as [3,4,5,6,7,8] address resource management and network slicing orchestration from different perspectives, most existing solutions primarily rely on reactive mechanisms or focus on isolated optimization stages. In contrast, the proposed approach integrates predictive resource estimation with a reactive VNF sharing mechanism within a standardized orchestration framework. This combination enables proactive scaling decisions while maintaining adaptability during runtime, thus improving orchestration efficiency under dynamically changing service demands and network conditions.

This paper contributes to the study of the network slice VNF resource optimization problem in resource-constrained situations. A machine-learning-based VNF resource sharing scheme is proposed, as well as a hierarchical monitoring system that provides real-time information for resource optimization. Although machine learning algorithms are an essential part of the approach, they are not the central innovation of this study. The proposed model implements optimization functionalities based on both proactive schemes, such as deep learning techniques for CPU resource usage prediction, and reactive ones for resource sharing between VNFs.

Contributions

The proposed system for predictive network slicing resource orchestration comprises the following key contributions:

An extended closed-loop platform to enable optimal zero-touch resource lifecycle management of network slices, compliant with 3GPP and ETSI standards.

A hierarchical monitoring system seamlessly integrated with 3GPP’s network slicing management functions that continuously feeds the resource optimization system with real-time data.

A resource optimization module that includes: deep learning schemes to predict resource consumption; machine learning schemes to profile and characterize VNFs; and reactive algorithms to share resources between VNFs. This module is proposed as a new component of the 3GPP network slice management architectural framework.

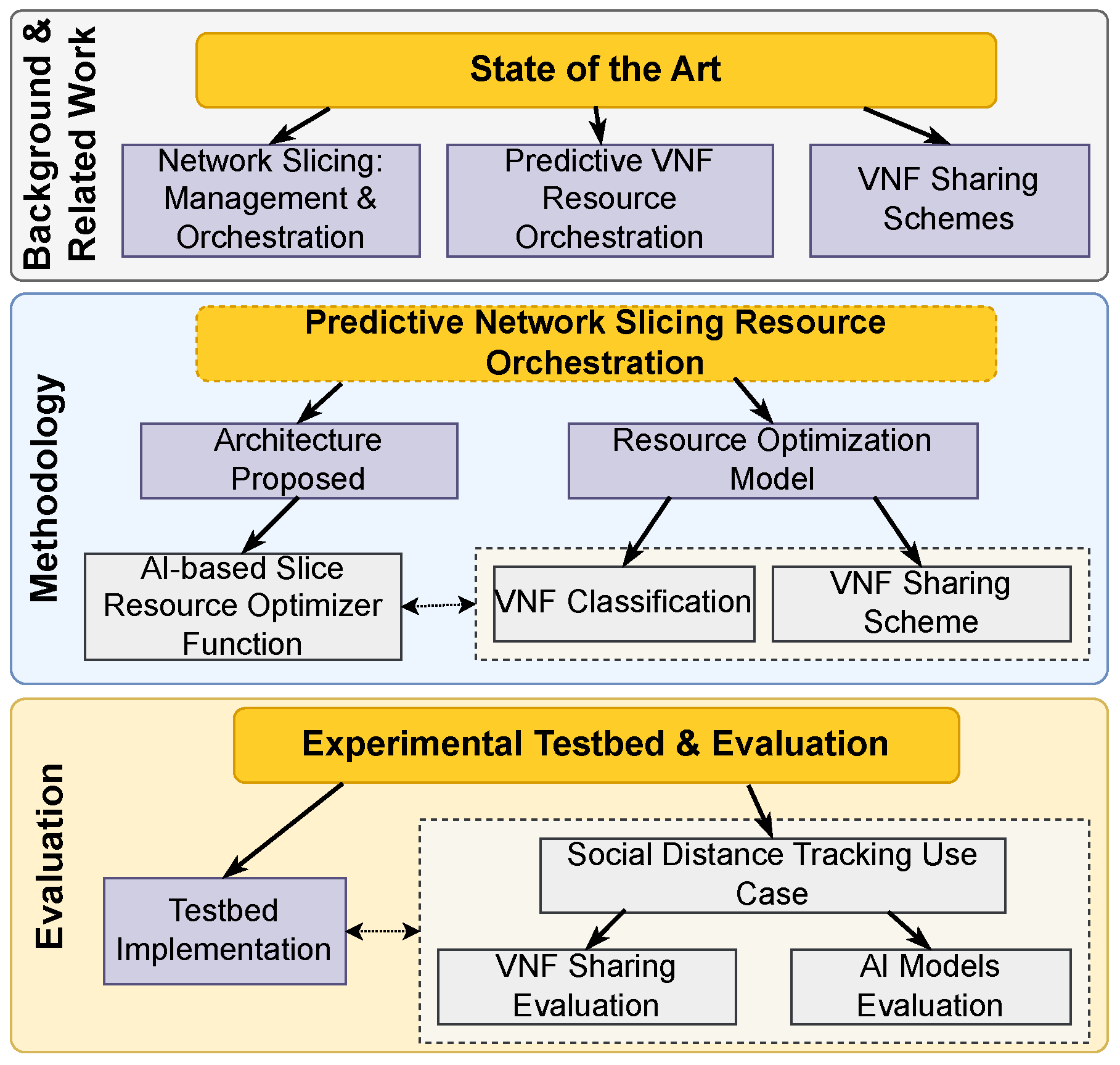

The structure of the paper is depicted in Figure 1 and is organized as follows:

Section 2 presents the background literature on the management and orchestration of network slices in NFV environments and provides a detailed survey on predictive VNF resource orchestration and VNF-sharing schemes.

Section 3 introduces the architectural framework of the proposed system.

Section 4 describes the architecture of the AI-based slice resource optimizer, where the workflow of metrics and data used to train the deep learning models is presented. In addition, the resource optimization model based on VNF classification and VNF sharing principles is detailed in Section 5.

Section 6 describes the experimental testbed configuration, while Section 7 presents the architecture of the proposed deep learning models evaluated through a real use case. The results of the system model, together with the evaluation of the deep learning algorithms, are presented in Section 8. Finally, conclusions and future work are summarized in Section 9.

2. Background and Related Works

This section describes the main concepts related to the architectural frameworks for the network slicing lifecycle management and orchestration. In addition, a survey on the VNF resource orchestration and VNF sharing schemes is presented.

2.1. Network Slicing: Management and Orchestration

Several telecommunications standardization bodies have defined concepts and architectural frameworks related to the management and orchestration of network slices [

9,

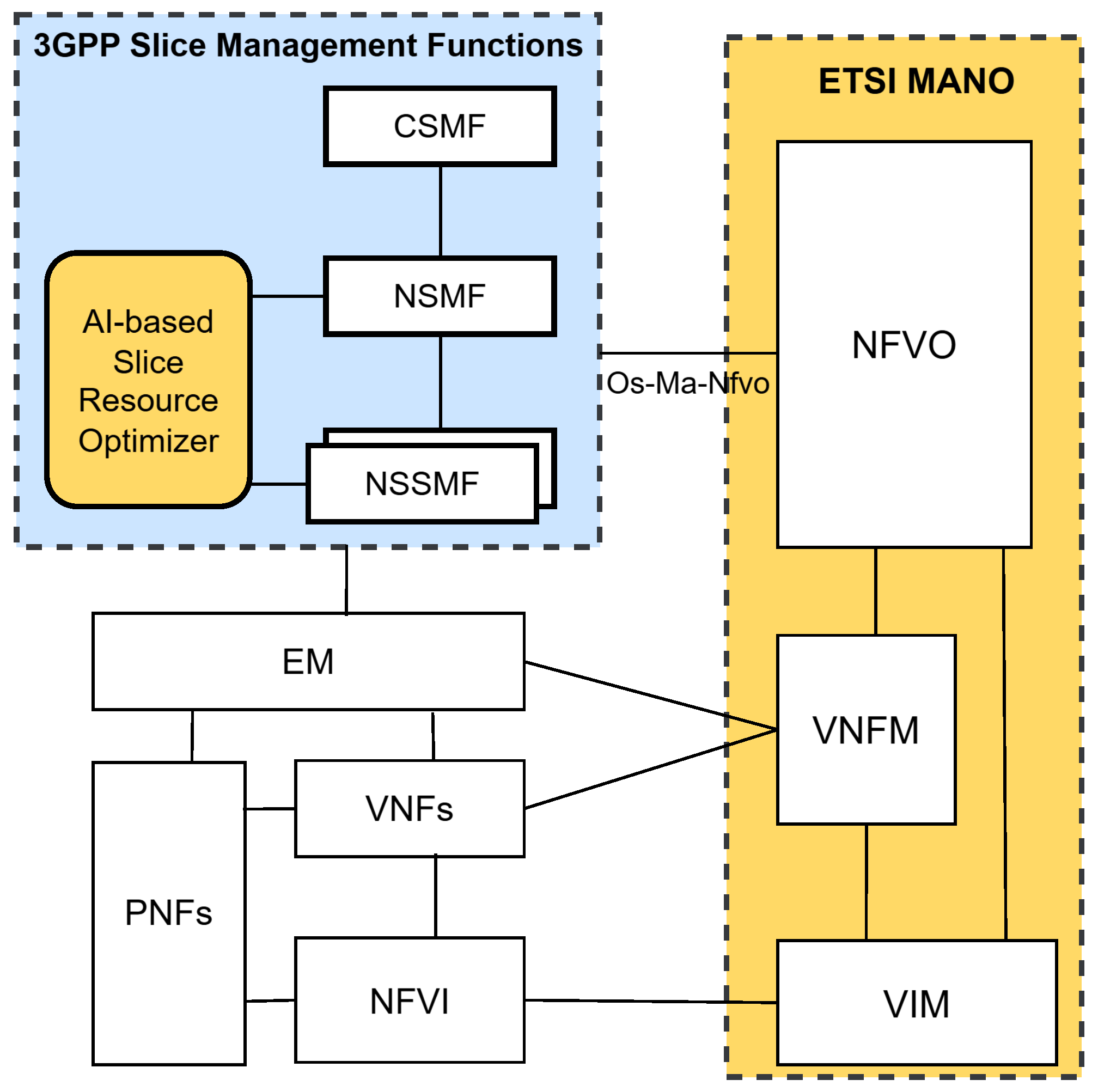

10]. This work focuses on combining of the ETSI Management and Orchestration (MANO) architectural framework for managing VNFs and the 3GPP network slicing management model to provide optimal resource allocation for VNFs utilizing AI-based network slice orchestration. In [

2], ETSI defines a model for integrating the ETSI NFV MANO framework and the 3GPP network slicing management model, as shown in

Figure 2.

The 3GPP network slicing management model is composed of three main management functions: the Communication Service Management Function (CSMF), the Network Slice Management Function (NSMF), and the Network Slice Subnet Management Function (NSSMF) [11]. The CSMF allows verticals and end users to interact with the management and provisioning system to design and configure network slices that will be instantiated over the network operator’s infrastructure. The CSMF sends the service profile created by users to the NSMF as a Network Slice Template (NEST). The NEST includes information about the network slice performance parameters such as coverage, QoS, isolation, and traffic policies [12]. The NSMF reads the attributes defined in the NEST and translates them into subnet descriptors that can be interpreted and managed by the NSSMFs of each network segment, which may include access, transport, and cloud networks. The NSSMFs interact with the controllers responsible for managing the allocation of physical and virtual resources within each network segment to create and configure the subnet slices using the descriptors specified by the NSMF.

The main components of ETSI MANO are the NFV Orchestrator (NFVO), the VNF Manager (VNFM), and the Virtual Infrastructure Manager (VIM). NFVO is the main component that orchestrates and manages the VNFs’ lifecycle through the interaction with the VNFM and VIMs. Actions, operations, and configurations executed during the VNF instantiation and runtime stages are performed by VNFM. Finally, network services and VNFs are defined by standardized descriptors.

NSSMFs can use VNF descriptors to define slice subnet instances in cloud domains [13]. Using the Os-Ma-nfvo reference point, NSSMFs can determine whether the cloud environments support the requirements of a network slice instance and orchestrate virtualized services. Proper mechanisms are needed in multi-domain and multi-tenant environments so that each tenant can manage its slice resources through isolated or independent components [14]. These mechanisms need to allow tenants to manage the lifecycle of VNFs distributed over several computing domains and to perform optimization operations on the resources allocated to their slices. In this regard, both academia and industry organizations such as the 5G Public Private Partnership (5G-PPP) have proposed architectural platforms and mechanisms that optimize the lifecycle operations of VNFs (e.g., placement, deployment, scheduling, scaling, and migration) to meet and ensure service performance [15,16]. For example, the 5G-TRANSFORMER (https://5g-ppp.eu/5g-transformer/, accessed on 23 February 2026), 5GROWTH (https://5g-ppp.eu/5growth/, accessed on 23 February 2026), and 5G-MonArch (https://5g-monarch.eu/, accessed on 23 February 2026) European Horizon 2020 (H2020) projects have focused their efforts on designing innovative computational platforms that enable the simultaneous management and provision of heterogeneous communication services required by various vertical customers (e.g., Industry 4.0, the automotive industry, and Mobile Virtual Network Operators (MVNOs)) through the customization of 5G network slices based on SDN/NFV technologies [17].

Several frameworks have addressed the challenges of multi-agent coordination and automated embedding. In [18], a multi-agent deep reinforcement learning framework based on Multi-Agent Deep Deterministic Policy Gradient (MADDPG) is proposed to enhance adaptive resource allocation in 5G networks, highlighting the impact of actor-critic relationships in slice management. Similarly, the work in [19] introduces a branch-and-bound optimization framework with flexible VNF ordering, demonstrating that relaxing fixed sequence constraints significantly improves resource utilization. Furthermore, an automated edge slicing approach using federated learning is presented in [20], where slice templates are predicted through a distributed learning model to reduce orchestrator overhead and ensure zero-touch principles at the edge.

2.2. Predictive VNF Resource Orchestration

Through the implementation of dynamic resource allocation techniques [21], network slice management can effectively adapt to the evolving resource requirements of VNFs during runtime, thereby addressing the temporal variability in resource demand.

Several works have been proposed on the automation of VNF deployment, scaling, migration, and resource allocation using time-series-driven machine learning techniques [22]. For example, in [23], predictive VNF autoscaling in multi-domain 5G networks is proposed, comparing centralized and distributed artificial intelligence resource orchestration techniques based on historical traffic demand. However, network slicing and edge computing environments are not taken into consideration. In [24] and [25], evolutive and recurrent neural networks are proposed to predict host CPU utilization over NFVI environments. However, these works do not consider the lifecycle management of network slices or procedures to assure VNF performance when resources are exhausted. In [26], a workload prediction system for cloud domains based on machine learning and time-series datasets is presented. However, the proposal lacks concrete approaches for integrating these techniques into network slicing management and orchestration systems.

Other works have focused on efficiently allocating resources to Virtual Machines (VMs) by profiling and predicting the performance of VNFs using machine learning algorithms. Resources such as CPU, RAM, storage, and network bandwidth used by the VNFs during runtime are studied in [27,28,29] to select key performance indicators that can be the basis of VNF profiles, allowing service providers to optimize resource allocation. In [30,31,32,33,34], VNF resource requirements estimation is based on dynamic traffic flow conditions, using different algorithms such as Support Vector Regression (SVR), Long Short-Term Memory (LSTM), and Feed-Forward Neural Networks (FNNs). However, these works do not address the need for closed-loop management and orchestration systems based on monitoring systems that would feed optimization functions based on machine learning predictions.

Another aspect that must be considered is compliance with the Service Level Agreement (SLA). Robust monitoring systems are necessary to meet the network service level objectives managed by the orchestration systems [35]. In terms of network slicing, AI-based controllers should be adapted to the network slicing management systems to react autonomously, considering the required network service level. In [36], an intelligent control layer powered by deep learning models is proposed for Vehicle-to-Everything (V2X) services that span multiple domains. The work discusses the automation of network slicing deployment based on historical data of vehicular networks. However, the proposed network slicing architecture is not aligned with the 3GPP specification. In [37], an intelligent network slicing lifecycle management architecture for 5G mobile networks is proposed. The proposal defines an intent-based component for providing well-defined network slice configurations with zero-touch principles. The solution implements deep learning models together with scaling mechanisms to automate resource management during the VNF runtime. The present work differs from [37] by implementing an extended 3GPP network slicing management architecture that incorporates resource optimization functions. In addition, this manuscript provides an alternative that reuses available resources from other VNFs by applying VNF-sharing schemes based on artificial intelligence.

Predictive orchestration has evolved toward hybrid and graph-based modeling to capture complex traffic patterns. A hybrid architecture combining Gated Recurrent Units (GRU) and Transformers is explored in [38] for dynamic IoT slice orchestration, showing improvements in minimizing packet loss through real-time resource adaptation. To address spatio-temporal correlations among network entities, the authors in [39] propose an end-to-end model integrating Graph Neural Networks (GNNs) and LSTM, which accurately predicts both VNF and link states within the 5G Core. Additionally, the challenge of intelligent profiling is addressed in [40], where reinforcement learning is utilized to characterize the link between resource allocation and performance, enabling autonomous orchestration based on online multi-objective profiling. Furthermore, emerging quantum computing paradigms are being explored for network slicing resource management, where quantum deep reinforcement learning agents can optimize resource allocation with fewer parameters and enhanced computational efficiency [41].

2.3. VNF Sharing Schemes

The VNF sharing scheme has been introduced in the literature as an alternative to traditional scaling procedures, not only to meet SLAs but also to optimize computing resources in scenarios where several VNF-based network services coexist in NFVI domains with limited resources. VNF sharing is applied when high traffic demand exhausts the resources of a specific VNF: computing resources are then leveraged from other VNFs providing similar services. This scheme can be applied when insufficient resources are available within the NFV domain to perform traditional vertical and horizontal scaling procedures. VNF sharing is therefore a key mechanism for addressing resource constraints in NFV environments, allowing more efficient use of available computing resources and improving service delivery to end users. It also provides a significant advantage to service providers and network operators by reducing the operational costs associated with the deployment and runtime lifecycle stages. By leveraging shared computing resources, improved NFV infrastructure scalability, greater operational efficiency, and reduced capital expenditure can be achieved. In this sense, the authors in [42] propose a mathematical algorithm to enable VNF sharing based on traffic prioritization. The paper focuses on reducing costs by sharing VNFs among several network services requiring the same VNFs deployed on a single NFVI.

Similarly, in [43], an automatic monitoring system is defined that implements a mathematical mechanism to share resources between VNFs in one node. The work proposes a resource allocation mechanism to optimize VNF requests and deployment. The algorithms meet the requirements of the VNFs that compose network services, maximizing request acceptance and avoiding delays in sensitive services. The solutions proposed in [42,43] differ from the present work in that they use reactive algorithms, i.e., they act only when the resource threshold is reached, whereas the present work relies on predictions to anticipate resource sharing actions.
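The difference between a reactive and a predictive trigger can be illustrated with a minimal Python sketch. It is not the method of [42,43] nor the deep learning model used in this work: the linear extrapolation is only a stand-in for a learned predictor, and the function names, the 80% threshold, and the sample values are hypothetical.

```python
from typing import Sequence


def reactive_trigger(cpu_history: Sequence[float], threshold: float) -> bool:
    """Fire only once the latest sample has already reached the threshold."""
    return cpu_history[-1] >= threshold


def linear_forecast(cpu_history: Sequence[float], horizon: int) -> float:
    """Extrapolate the average step between samples (placeholder for a learned model)."""
    if len(cpu_history) < 2:
        return cpu_history[-1]
    slope = (cpu_history[-1] - cpu_history[0]) / (len(cpu_history) - 1)
    return cpu_history[-1] + slope * horizon


def predictive_trigger(cpu_history: Sequence[float], threshold: float, horizon: int) -> bool:
    """Fire when the forecast value at the given horizon reaches the threshold."""
    return linear_forecast(cpu_history, horizon) >= threshold


# Hypothetical CPU usage samples (percent) for one VNF.
history = [50.0, 58.0, 66.0, 74.0]
reactive = reactive_trigger(history, threshold=80.0)                 # False: 74 < 80
predictive = predictive_trigger(history, threshold=80.0, horizon=2)  # True: forecast is 90
```

With these samples the reactive rule stays silent while the predictive rule already signals that sharing should be prepared two steps ahead.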

Recent studies have also explored decentralized and security-centric frameworks for resource sharing. Specifically, in [44], a blockchain-based framework is proposed to enhance resource sharing and access control during VNF instantiation, utilizing a Stackelberg game model to balance costs and utility. From a security perspective, the work in [45] introduces a security-aware VNF sharing model that optimizes resource utilization while ensuring slice isolation through specific constraints on traffic exposure and untrusted access. Furthermore, for heterogeneous environments such as air-ground integrated networks, a task-similarity-based VNF aggregation scheme is presented in [46] to achieve load balancing and minimize resource consumption before service chain mapping.

In addition, automating decisions and reducing time delays associated with the placement of network services is a well-recognized challenge in the field. The authors in [47,48] propose a priority-based VNF-sharing algorithm to reduce costs and maximize resource usage during service deployment. The scheme considers different aspects to solve the problem, such as traffic load, service categories, and service completion time. The mathematical solution in [48] uses an integer quadratically constrained program model to minimize the deployment cost by sharing VNFs while meeting the QoS parameters. In [47], the authors use an ILP scheme to address the same context. Neither of these works considers multi-domain and multi-tenancy contexts where several network services belonging to network slices are deployed in the same infrastructure domains.

Similarly, the work in [49] proposes VNF sharing schemes to optimize the service scheduling process. For this purpose, the mathematical model includes a weight-based VNF sharing approach to improve the consumption of computing resources. This work uses a benchmarking approach to evaluate the scheme against alternative approaches. Finally, in [50], the authors explore a scenario in which virtualized cache functions are shared among Internet Service Providers (ISPs) that use a common physical infrastructure. The discussion is mainly focused on the implications of limiting the storage capacity at the edge sites when deciding to share the caches among ISPs. Simulations are used to investigate the potential efficiencies that can be gained by sharing a virtual cache function compared to the traditional approach of independent virtual caches operated by each ISP.

In this light, the VNF sharing model presented in this work differs from the conventional mathematical methods found in the literature. To the best of our knowledge, no prior studies have followed a similar methodology combining the algorithms proposed here for VNF resource sharing.

Table 1 summarizes the features of the different VNF sharing approaches compared with the work presented. The proposed model integrates deep learning algorithms into the 3GPP network slicing management systems, enabling the prediction of computing resource consumption and facilitating VNF sharing among network services. By accurately predicting computing resource consumption, the model can help network operators to avoid overprovisioning. Through its ability to enable VNF sharing, the model can enhance the scalability and flexibility of network services, ultimately improving the delivery of high-quality services to end-users.

From the reviewed literature, it can be observed that most existing solutions primarily focus on predictive allocation or reactive orchestration mechanisms independently, with limited attention to scenarios involving constrained infrastructure resources or services exhibiting highly variable resource consumption patterns. Consequently, current approaches may struggle to maintain orchestration efficiency when resource availability becomes limited or service demand fluctuates significantly over time. To address this gap, the approach proposed in this work combines predictive VNF resource estimation with a reactive VNF sharing mechanism, enabling proactive orchestration decisions while dynamically redistributing resources among services during runtime. This integrated strategy improves adaptability and resource utilization efficiency under dynamic service conditions while remaining compatible with standardized orchestration frameworks.

3. Predictive Network Slice Resource Orchestration Architecture

This section details the architecture, main concepts, and components of the predictive network slicing resource orchestration system proposed in this article. Broadly speaking, the system extends the 3GPP network slice management framework by adding a hierarchical monitoring system and an AI-based resource optimizer function. The integration of these new components allows the prediction of VNF computational resource utilization, facilitating proactive decision-making to prevent service degradation and minimize deployment time delays.

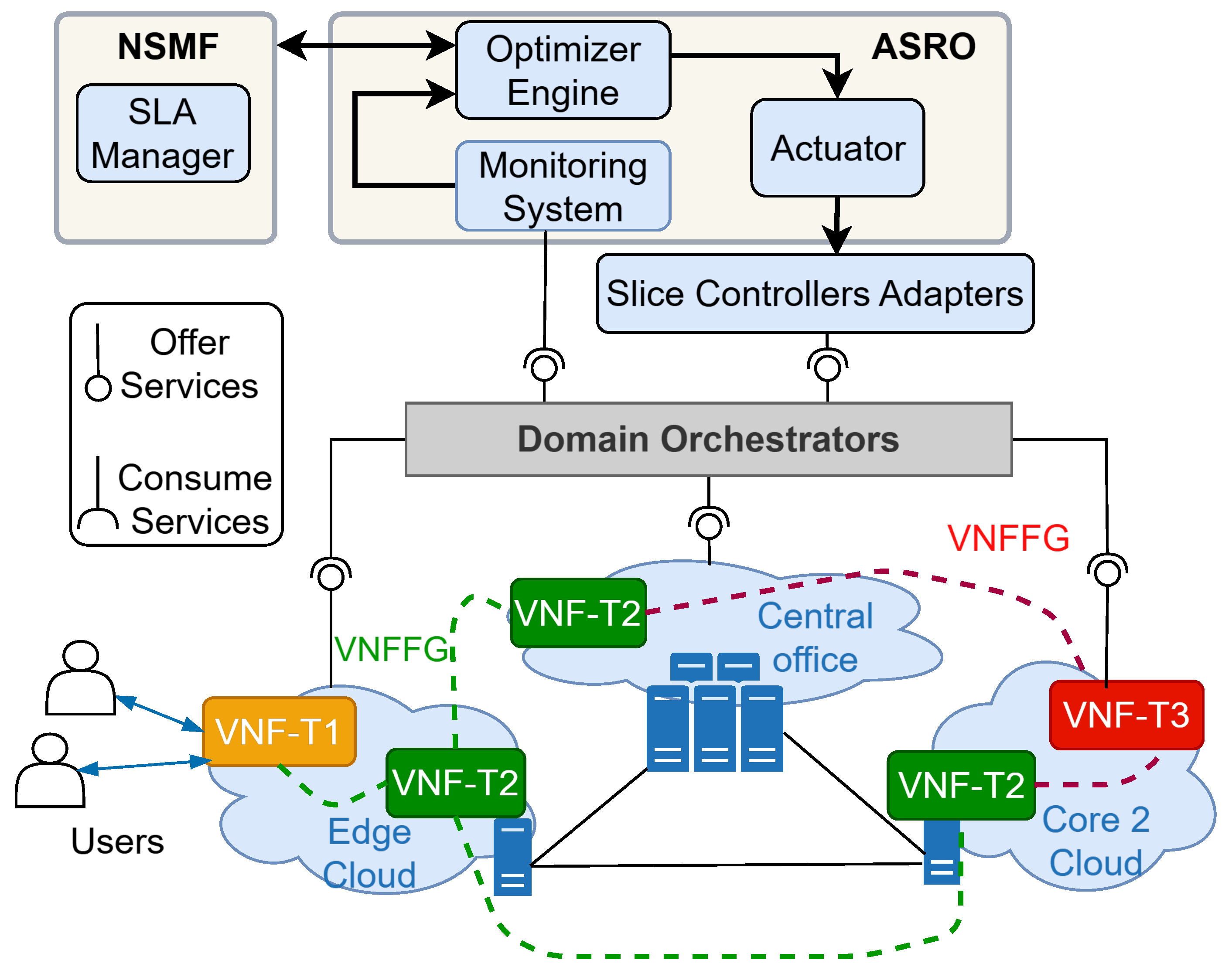

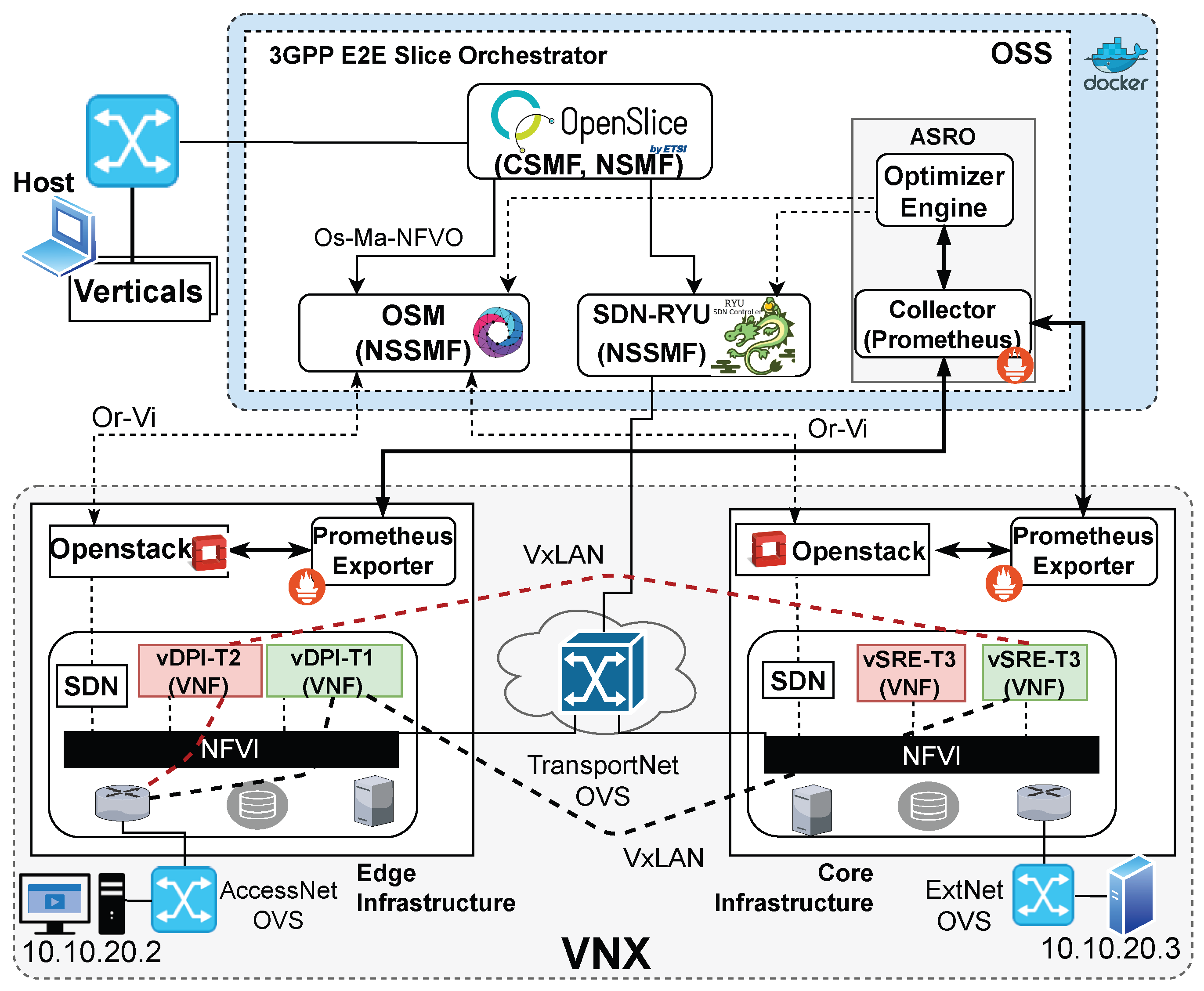

The proposed architecture is shown in Figure 3, highlighting in red boxes the new components added to the 3GPP architecture: the monitoring agents and collectors, strategically deployed across diverse network elements and domains; and the AI-based Slice Resource Optimizer (ASRO), which uses the data obtained by the monitoring collectors to train machine and deep learning models. Specifically, the architectural framework depicted in Figure 3 comprises two main layers: the Infrastructure layer and the Management & Orchestration (M&O) layer.

The orchestration mechanism adopted in this work is based on the standardized distributed network slice management framework defined by 3GPP, which coordinates slice deployment and runtime operations across multiple infrastructure domains. The contribution of this work lies in extending this orchestration architecture through the integration of the AI-based Slice Resource Optimizer (ASRO), which combines predictive resource utilization estimation using deep learning models with a reactive algorithm that enables resource sharing among VNFs when scaling actions cannot be performed due to resource limitations. This combined mechanism allows both proactive and runtime optimization of slice resources while preserving SLA compliance.
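The combined behavior described above, scaling when the domain has free resources and falling back to VNF sharing otherwise, can be sketched as follows. This is a simplified illustration under stated assumptions rather than the ASRO implementation: the `Vnf` fields, the action labels, and the rule of picking the donor with the most spare CPU are all hypothetical, and SLA checks are omitted.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Vnf:
    name: str
    service_type: str
    allocated_cpu: float  # vCPUs currently allocated (hypothetical unit)
    predicted_cpu: float  # vCPUs the predictor expects the VNF to need

    @property
    def spare_cpu(self) -> float:
        return self.allocated_cpu - self.predicted_cpu


def decide_action(target: Vnf, peers: List[Vnf], domain_free_cpu: float) -> Tuple[str, Optional[str]]:
    """Return (action, donor_name) for a VNF whose demand is predicted to grow."""
    deficit = -target.spare_cpu
    if deficit <= 0:
        return ("none", None)   # the current allocation is predicted to suffice
    if domain_free_cpu >= deficit:
        return ("scale", None)  # traditional scaling is still possible
    # Scaling is not possible: look for a VNF offering a similar service with enough spare CPU.
    donors = [p for p in peers
              if p.name != target.name
              and p.service_type == target.service_type
              and p.spare_cpu >= deficit]
    if donors:
        return ("share", max(donors, key=lambda p: p.spare_cpu).name)
    return ("unresolved", None)


target = Vnf("fw-a", "firewall", allocated_cpu=2.0, predicted_cpu=3.0)
peers = [Vnf("fw-b", "firewall", 4.0, 1.5), Vnf("dpi-a", "dpi", 4.0, 1.0)]
constrained = decide_action(target, peers, domain_free_cpu=0.5)    # ("share", "fw-b")
unconstrained = decide_action(target, peers, domain_free_cpu=2.0)  # ("scale", None)
```

In a resource-constrained domain the sketch selects `fw-b` as donor, since `dpi-a` offers a different service type even though it has more spare capacity.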

While Section 3 introduces the architectural extensions, the detailed orchestration workflows executed during slice deployment and runtime operation are illustrated later in Section 8.1. Compared with related orchestration solutions summarized in Section 2 and Table 1, which often rely on static or reactive mechanisms, the proposed approach integrates predictive intelligence and runtime resource sharing within a multi-domain orchestration framework to improve resource utilization efficiency.

The proposed orchestration framework is applicable to both telco and cloud environments where virtualized network services are deployed over distributed computational infrastructures. In telecom operator scenarios, the solution operates across edge, aggregation, and core cloud domains managed through standardized 3GPP network slice orchestration components, enabling proactive resource management for network slices serving different vertical services. Likewise, the framework can also be applied in general cloud environments supporting NFV infrastructures, where virtualized network functions or cloud-native network services require dynamic and efficient resource allocation. This flexibility allows the proposed approach to support hybrid deployments combining telecom and cloud infrastructures while maintaining SLA compliance and efficient resource utilization.

3.1. Infrastructure Layer

The infrastructure layer encompasses the complete set of physical and virtual resources available to a Mobile Network Operator (MNO), such as the equipment in access networks, cloud infrastructures, or transport networks, which are used to offer end-to-end network services to its vertical customers.

The resources in this layer can be virtualized and split into logical segments to guarantee the isolation of the diverse network services offered to each vertical. To achieve isolation, each network segment is administered by managers and orchestrators responsible for coordinating the provisioning and interconnection of virtualized services. For instance, VIMs ensure the exclusive utilization of computational resources among tenants, whereas NFVOs manage the lifecycle of VNFs deployed in the resource allocation designated for each tenant. In addition, communication between VNFs is coordinated through SDN controllers, which can streamline traffic flows between VNFs. SDN controllers are employed in access and transport networks to segregate resources and traffic among verticals across end-to-end communications.

3.2. M&O Layer

This layer is responsible for managing the lifecycle of network slices and governing the virtualized resources allocated to each vertical, in addition to the physical resources owned by the MNO.

In the 3GPP architectural model, the CSMF allows the definition and creation of network slices by means of a graphical user interface (GUI) that provides access to the catalogs of network services and VNFs, as well as information about the physical resources (e.g., access, transport, and cloud networks) available in the infrastructure layer. Once the network slice is defined, it is translated into a template that includes all the relevant information and is then sent to the NSMF module through its northbound interface (NBI). The NSMF then uses the Slice Resource Controller module to split the template into sub-slices, each one including the network resources for one network segment. Each sub-slice template is then sent to the corresponding NSSMF module, which instantiates the virtualized services over the resources of its network segment. For example, the NSSMF of a cloud domain receives the descriptors of the network services to be onboarded and deployed by the NFVOs it manages. Alternatively, the NSSMF of an access or transport network receives service templates in terms of applications to be installed on the SDN controllers it manages.
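The splitting step performed by the NSMF can be sketched in Python as follows. The template layout is purely illustrative: the field names (`slice_id`, `sla`, `segments`) and the example values are assumptions made for this sketch and do not correspond to a 3GPP or ETSI data model.

```python
from typing import Any, Dict


def split_slice_template(template: Dict[str, Any]) -> Dict[str, Dict[str, Any]]:
    """Split one slice template into one sub-slice template per network segment."""
    # Information shared by all sub-slices is copied into each of them.
    common = {"slice_id": template["slice_id"], "sla": template["sla"]}
    return {
        segment: {**common, "segment": segment, "resources": resources}
        for segment, resources in template["segments"].items()
    }


# Hypothetical slice template as it could arrive from the CSMF.
template = {
    "slice_id": "slice-001",
    "sla": {"max_latency_ms": 20},
    "segments": {
        "access": {"sdn_apps": ["radio-qos"]},
        "transport": {"sdn_apps": ["path-isolation"]},
        "cloud": {"nsd": ["video-cache-ns"]},
    },
}

sub_slices = split_slice_template(template)
# Each entry would then be sent to the NSSMF of its segment, e.g. sub_slices["cloud"].
```

Each resulting sub-slice carries the common slice identifier and SLA information together with the resources of exactly one segment, which mirrors the per-segment handover to the NSSMFs described above.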

As mentioned, the extensions proposed in this paper consist of two subsystems:

The hierarchical monitoring system. It consists of a set of Monitoring Agents that extract system-level metrics from each device in each network segment and export them to the Monitoring Aggregator of the corresponding NSSMF. The aggregators send the metrics to the centralized Monitoring System server, where they are filtered, stored, and optionally plotted by a visualizer. The metrics to be collected are defined by the network slicing operator or user during the design of their services.

The AI-based Slice Resource Optimizer (ASRO). ASRO is an autonomous resource management component that consumes the metrics collected by the monitoring system and uses artificial intelligence techniques to predict resource usage and optimize resource utilization. To this end, ASRO must constantly interact with the SLA Manager component, which manages the performance policy configurations across all network segments. For this purpose, it is proposed that the NSMF implement an eastbound interface (EBI) that enables ASRO to gather SLA-related policy information to perform autonomous operations over the virtualized services. The following section provides more details on the ASRO component.
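The flow of metrics through the hierarchical monitoring system can be sketched as follows. The class and method names are hypothetical and the in-memory dictionary stands in for a real time-series store; the sketch only illustrates that the central server reads from the aggregators, never from the agents, and keeps only the metrics selected by the operator.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


class MonitoringAggregator:
    """Per-NSSMF aggregator that receives samples from the agents of one segment."""

    def __init__(self, segment: str) -> None:
        self.segment = segment
        self.samples: List[Tuple[str, str, float]] = []

    def collect(self, device: str, metric: str, value: float) -> None:
        # Called by a monitoring agent running on a device of this segment.
        self.samples.append((device, metric, value))

    def export(self) -> List[Tuple[str, str, str, float]]:
        return [(self.segment, d, m, v) for d, m, v in self.samples]


class CentralMonitoringServer:
    """Central server that scrapes aggregators and filters by operator-defined metrics."""

    def __init__(self, allowed_metrics: Set[str]) -> None:
        self.allowed_metrics = allowed_metrics
        self.store: Dict[Tuple[str, str, str], List[float]] = defaultdict(list)

    def scrape(self, aggregators: Iterable[MonitoringAggregator]) -> None:
        for aggregator in aggregators:
            for segment, device, metric, value in aggregator.export():
                if metric in self.allowed_metrics:
                    self.store[(segment, device, metric)].append(value)


edge = MonitoringAggregator("edge-cloud")
edge.collect("vnf-1", "cpu", 41.0)
edge.collect("vnf-1", "temperature", 60.0)  # not selected by the operator
core = MonitoringAggregator("core-cloud")
core.collect("vnf-7", "cpu", 12.5)

server = CentralMonitoringServer(allowed_metrics={"cpu", "ram"})
server.scrape([edge, core])
```

After the scrape, the store holds one CPU series per VNF keyed by segment, device, and metric, while the unselected temperature sample is dropped.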

4. AI-Based Slice Resource Optimizer Function

The primary task of the M&O layer is to manage and optimize the allocation of resources based on service requirements across all network segments comprising a given network slice. The ASRO component is responsible for enforcing this functionality within the M&O layer by performing three basic operations: (i) data collection and analysis of metrics related to the utilization of network and computational resources; (ii) filtering, segmentation, and pre-processing of the metrics to train machine learning models to be used in resource utilization predictions; (iii) execution of resource optimization actions, including but not limited to traffic balancing, service scaling, and Virtual Network Function (VNF) sharing, based on predictions generated by deep learning models. These actions are carried out across the various management and orchestration components that constitute the M&O layer. The three operations are performed, respectively, by the Monitoring System, the Optimizer Engine, and the Actuator modules of the ASRO component, shown in Figure 4.

Thus, the metrics collected from the different monitoring aggregators used in the network slice are centralized in the monitoring system. The monitoring aggregators are added as endpoints within the centralized monitoring system server (centralized collector server). Therefore, the centralized server does not interact directly with the monitoring agents, which facilitates the management of metrics at a higher level of abstraction. The metrics are stored in a time-series database so that they are accessible to external components and can be plotted in human-readable form by the visualizer module.

Then, the metrics are sent to the optimizer engine component, which obtains the predictions by applying different algorithms. The predictive analysis is carried out individually for every VNF of the network services deployed by the operator. First, the data are pre-processed and filtered to select the features most relevant to resource optimization. Then, using the filtered data, the predictive algorithms generate forecasts that are subsequently used by the optimization algorithm. The actuator module takes the outputs of the optimizer engine as inputs to carry out the configuration operations by interacting with the network segment controllers, namely NFVOs (e.g., VNFMs) and SDN controllers. For example, the configuration operations can be applications executed by the SDN controllers, while on the VNFMs they can be VNF-related Day 2 configurations.

Service performance compliance is supervised by the SLA Manager component, which stores service-level policies and continuously verifies, through monitoring systems, that performance indicators remain within permitted operational thresholds. During orchestration decisions, the ASRO component consults these SLA constraints when processing resource utilization predictions to determine whether VNF sharing or traffic redirection actions are required. While this work focuses on evaluating improvements in computational resource utilization achieved through predictive orchestration, SLA compliance is inherently preserved in the orchestration workflow. A comprehensive service-level evaluation would require constructing multiple service scenarios due to the diversity of deployed services, which is beyond the scope of the present study.

From an operational perspective, this orchestration workflow is executed continuously to support multiple network slices and services running across distributed infrastructure domains. Therefore, scalability becomes an important aspect of the optimizer operation. The computational complexity of the proposed orchestration mechanism mainly depends on the number of monitored VNF instances and network slices deployed in the infrastructure. The predictive component implemented in ASRO performs resource utilization estimation independently for each monitored VNF, resulting in a computational cost that scales approximately linearly with the number of VNFs and slices being managed.

Likewise, the reactive resource-sharing algorithm evaluates candidate VNF instances only within the affected infrastructure domain when resource limitations are detected, leading to a complexity proportional to the number of locally available instances rather than the entire system. Moreover, prediction and optimization tasks are executed periodically and can be distributed across orchestration components, allowing parallel processing in multi-domain environments. Consequently, although the workload increases with the number of managed slices and services, the distributed execution model ensures that the orchestration framework remains practical and scalable for large-scale deployments while maintaining acceptable operational overhead.

Dataset

The monitoring system is responsible for collecting metrics and building datasets in real time. However, to define an initial predictive model, a training dataset containing the historical computational consumption metrics of virtual machines is required. For this purpose, the publicly available dataset GWA-T-13 of the German cloud center provider, MATERNA, is used. This dataset contains real monitoring measurements collected from virtual machines running production workloads in a distributed datacenter environment over several months. These measurements include resource allocation and utilization metrics, which are used to train and evaluate the predictive models employed in this work.

Nevertheless, the objective of the envisioned monitoring system is to generate fresh data within predefined time windows set by the operator. This capability will enable both periodic and real-time retraining of the machine learning models, optimizing their performance. The dataset is composed of three files containing metrics collected over three months from 520, 527 and 547 VMs deployed in the distributed datacenter. The aggregate resources designated for the VMs encompass 49 Hosts, 69 CPU cores, and 6780 GB of RAM. The workloads on the VMs are created by highly critical business applications deployed by MATERNA’s customers. The dataset comprises 12 features that concern the allocation and utilization of resources for each virtual machine (see

Figure 5). The reader can refer to [

51] for more details on the dataset.

To train the predictive models utilizing the most relevant features of the dataset, feature selection was implemented using a correlation analysis. The results indicate moderate correlations between network throughput and the utilization of CPU (MHz), RAM (KBs), and storage (write KB/s). Thus, the subsequent experiments were performed using only these 4 features, following the same approach as in [

37]. These features were utilized by the predictive models to predict the forthcoming resource consumption of each VM.
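The correlation-based feature selection described above can be illustrated with a minimal sketch. It assumes Pearson correlation against network throughput and a hypothetical cut-off of 0.3; the column names and the toy values are illustrative only and are not taken from GWA-T-13.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no linear relationship
    return cov / sqrt(var_x * var_y)


def select_features(columns, target, min_abs_corr=0.3):
    """Keep the target plus every column whose |r| with the target reaches the cut-off."""
    selected = [target]
    for name, values in columns.items():
        if name != target and abs(pearson(values, columns[target])) >= min_abs_corr:
            selected.append(name)
    return selected


# Hypothetical toy trace (assumption: values invented for illustration).
trace = {
    "net_throughput": [10, 20, 30, 40, 50, 60],
    "cpu_mhz": [110, 190, 320, 390, 510, 600],
    "ram_kb": [5, 9, 8, 14, 13, 18],
    "provisioned_gb": [100, 100, 100, 100, 100, 100],
}
print(select_features(trace, "net_throughput"))
```

In this toy trace the constant column is discarded, while the columns that move together with throughput are retained.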

Although the training dataset originates from enterprise cloud workloads, the predictive models operate on generic resource consumption patterns rather than application-specific characteristics. CPU and memory utilization dynamics exhibit transferable temporal behaviors across different VNF types. Moreover, in operational deployments, the prediction models are periodically retrained using telemetry collected from the operator’s infrastructure, enabling adaptation to service-specific workloads while maintaining orchestration effectiveness.

5. Resource Optimization Model

Figure 6 illustrates the proposed resource optimization model. The system encompasses two key management stages within the network slice lifecycle. The first stage, referred to as commissioning/composition, involving VNF resource definition and placement, relies on the VNF classification approach. The second stage, operation/monitoring, focuses on computational resource optimization during VNF runtime using the VNF sharing approach. The details of both the VNF classification and VNF sharing mechanisms are explained in this section.

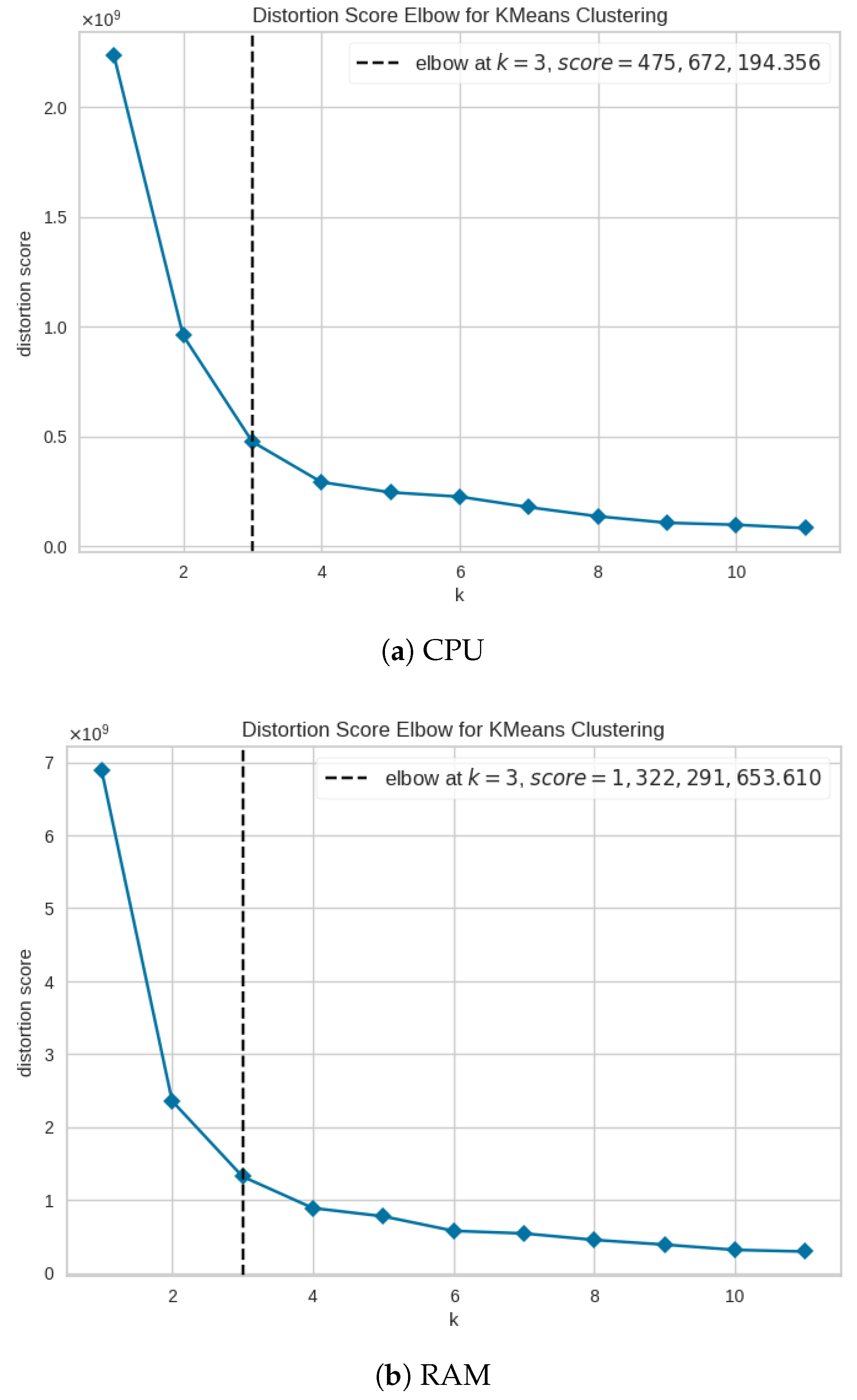

5.1. VNF Classification

The objective of VNF classification is to enhance the allocation of VNFs across distributed computational domains by taking into consideration the specific resource and performance requirements of each VNF. As shown in

Table 2, three types of VNFs are proposed according to the service specification defined by GSMA for network slices such as enhanced Mobile BroadBand (eMBB), Ultra-Reliable Low Latency Communications (URLLC), and massive Machine-Type Communications (mMTC). These VNF types are based on performance indicators such as bandwidth, latency, and service type, which need to be converted into physical and virtual resource requirements. Therefore, they are aligned with the three common types of cloud domains: edge, central office, and public/core. The edge cloud typically has more constrained resources, whereas the public/core clouds tend to offer greater resource capacity.

For VNF classification, the VM workload trace data of the GWA-T-13 dataset were used. The CPU and RAM features provided in the trace were analyzed separately. The elbow method suggests that the dataset should be split into three groups, as shown in

Figure 7a,b. In both figures, the x-axes represent different k-values, and the y-axes represent their corresponding distortion score.

Figure 7a refers to the CPU feature while

Figure 7b refers to RAM. Using a k-value equal to 3, the k-means algorithm was trained and used to assign three different labels to the VNFs named as T1, T2 and T3.
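The elbow analysis can be sketched as follows. This is a simplified, dependency-free illustration rather than the implementation used in this work: it runs Lloyd's k-means on a single feature, initializes the centroids at evenly spaced quantiles (an assumption made here for determinism), and reports the distortion for each k. The CPU values are hypothetical.

```python
def kmeans_1d(values, k, iterations=50):
    """Lloyd's algorithm on scalars; centroids start at evenly spaced quantiles."""
    data = sorted(values)
    centroids = [data[(2 * i + 1) * len(data) // (2 * k)] for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for v in data:
            nearest = min(range(k), key=lambda c: abs(v - centroids[c]))
            clusters[nearest].append(v)
        # An empty cluster keeps its previous centroid.
        updated = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
        if updated == centroids:
            break
        centroids = updated
    # Distortion: sum of squared distances to the closest centroid.
    distortion = sum(min((v - c) ** 2 for c in centroids) for v in data)
    return centroids, distortion


# Hypothetical vCPU counts forming three visible groups (assumption).
cpu_cores = [1, 2, 2, 3, 7, 8, 8, 9, 15, 16, 16, 17]
for k in range(1, 6):
    _, distortion = kmeans_1d(cpu_cores, k)
    print(k, round(distortion, 2))
```

On this toy input the distortion falls steeply up to k = 3 and flattens afterwards, which is the pattern the elbow method looks for.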

As the dataset is periodically updated using data collected within temporal windows by the monitoring system, label adjustments are made to ensure the ongoing preservation of the proportional distribution of the three VNF types. Nonetheless, in cases where outliers are detected in the newly acquired datasets, the previous threshold values are retained as a point of reference for making necessary label adjustments.

According to the analysis of the results obtained with the classification method defined above on the dataset,

Table 3 shows the definition of the VNF profiles for each type. It is worth mentioning that these are reference values and may vary according to the historical update of the dataset and according to the types of services. In summary, low-resource VM-based VNFs are characterized by having no more than 4 vCPUs and 8 GB of RAM; this group of VNFs is assigned the label T1. High-resource VNFs are those requiring more than 8 vCPUs and more than 16 GB of RAM; this group is assigned the label T3. VNFs with intermediate resources are assigned the label T2.
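As an illustration, the reference bounds quoted above can be expressed as a small labeling function. The function name is hypothetical, and the handling of mixed profiles (for example, few vCPUs with a large amount of RAM) as T2 is an assumption of this sketch, since the text only fixes the T1 and T3 bounds.

```python
def vnf_type(vcpus, ram_gb):
    """Map a VNF's requested resources to T1/T2/T3 using the reference bounds."""
    if vcpus <= 4 and ram_gb <= 8:
        return "T1"  # low-resource profile
    if vcpus > 8 and ram_gb > 16:
        return "T3"  # high-resource profile
    return "T2"  # intermediate (and, by assumption, mixed) profiles


for request in [(2, 4), (8, 16), (16, 32)]:
    print(request, vnf_type(*request))
```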

This approach facilitates the placement optimization of VNFs and mitigates the problem of resource overprovisioning through a two-fold rationale. During the service design phase, network managers have the option to either specify the necessary resources for each VNF or to define their performance characteristics (e.g., latency, throughput; refer to

Table 2). In the first scenario, during the deployment process, the system identifies the VNF type of each VNF and strategically selects the most suitable available computational node. In the second scenario, leveraging performance indicators mapping, the system autonomously allocates computational resources to the VNFs according to the VNF type, and upon deployment, it also selects the most advantageous computational node. Therefore, it allows the management and orchestration system, powered by the ASRO component, to act as an automatic selector/configurator of VNF resources based on the type of services or network slices.

5.2. VNF Sharing

In order to maximize the efficiency of the available computational resources, this study proposes the implementation of a VNF sharing scheme which incorporates both deep learning techniques and a reactive algorithm in a complementary manner. A deep learning algorithm is used to predict the utilization of computational resources by considering the traffic load of VNFs, whereas the reactive algorithm is utilized to share the available VNF computing resources among other VNFs with depleted resources.

As explained in Section Dataset, while conducting correlation analysis among the CPU, RAM, storage, and network throughput values, a direct proportional relationship was identified in the dataset among network throughput, CPU, RAM, and storage [

17]. In other words, as network throughput increases, CPU, RAM, and storage also increase proportionally. Consequently, the VNF Sharing Algorithm, although capable of predicting all four parameters, focuses only on CPU, RAM, and storage values.

The notation used in the proposed VNF sharing algorithm is detailed in

Table 4. Algorithm 1 takes as input the group of available clouds with their cloud identifiers (e.g., edge, core/public), the indicator of each service deployed by the MNO, and the VNFs that comprise any service or network slice. A network service is defined by the VNFs instantiated on the clouds (NFVI) belonging to any vertical. With these parameters, the algorithm creates and updates the database. The database maintains records for individual VNF instances, which are identified along with specific identifiers for their corresponding network services or slices, as well as details of the cloud infrastructure on which they are deployed. Additionally, the database provides information on the operational status of the VNFs, clearly differentiating between shared and non-shared resources.

Algorithm 1 starts by receiving the predicted VNF resource usage from the deep learning algorithm (e.g., LSTM) (ln. 1). Here, y represents the set of compute resources, specifically CPU, RAM, and storage. These parameters are evaluated to determine whether any resource y exceeds the threshold defined during service commissioning (ln. 4). If a resource threshold is reached or exceeded, the algorithm searches for VNF candidates of the same type providing the same service. To ensure optimal orchestration, the system selects the candidate with the lowest current workload using an argmin function (ln. 6). Once a candidate is identified, the algorithm retrieves its current computing usage and verifies its residual capacity. Specifically, it validates that the available resources in the candidate VNF can absorb the predicted excess load from the exhausted VNF while maintaining a safety margin to prevent secondary overloading (ln. 7).

If the candidate VNF is in the same cloud as the exhausted VNF and meets the capacity requirements, the algorithm calculates the sharing factor, the sharing percentage, and the associated sharing cost (ln. 9–12). The sharing cost is based on the workload cost within a specific timeframe. Workload refers to the tasks and operations a (virtual) machine must handle, including resource processes, within a defined timeframe. Then, the traffic flow is balanced between the VNF predicted to be exhausted and the sharable VNF by using an SDN controller (ln. 13). The configurations that enable the interfaces of the sharable VNF to accept incoming traffic are performed via the VNFM, which executes Day 2 primitives (ln. 14). Day 2 primitives refer to configuration actions executed on a specific VNF during its runtime stage.

If the candidate VNF is not in the same cloud as the exhausted VNF, the algorithm determines whether the round-trip time (RTT) between them satisfies the specified SLA (ln. 16). RTT represents the time required for a message to travel from the sender to the receiver, plus the time required to acknowledge the received message. If the RTT meets the SLA, the SDN controller routes the traffic flows and estimates the sharing percentage (ln. 17–20). If the RTT does not meet the SLA and the cloud where the exhausted VNF is located has available resources, Algorithm 1 initiates the VNF scaling procedure (ln. 21–23). Alternatively, it may recommend modifying the SLA to create a new VNF topology and instantiate new VNFs in other domains (ln. 25). Following these actions, the database is updated with the sharing status of the VNFs that share resources until the computing workload drops below the threshold value (ln. 34). To ensure system stability and prevent the “ping-pong” effect, in which the system frequently toggles sharing on and off due to minor traffic oscillations, a hysteresis margin is implemented. The sharing mechanism remains active until the predicted resource usage drops below a security threshold, defined as 90% of the threshold value (see the hysteresis condition in Algorithm 1). Only when this condition is met does the ASRO component command the SDN controller to release the shared flows and restore the VNF to its “normal” operational state (ln. 30–32).
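The hysteresis behavior can be sketched as a two-state machine. The 0.9 release ratio follows the 90% margin stated in the hysteresis comment of Algorithm 1; the function name, the single scalar resource, and the sample usage values are assumptions made for illustration.

```python
def next_state(state, predicted, threshold, release_ratio=0.9):
    """Advance the sharing state for one prediction interval."""
    if state == "normal" and predicted >= threshold:
        return "sharing"  # threshold reached or exceeded: start sharing
    if state == "sharing" and predicted < release_ratio * threshold:
        return "normal"  # load fell below the hysteresis margin: release flows
    return state  # inside the hysteresis band: keep the current state


state = "normal"
history = []
# Hypothetical predicted CPU usage (%) with a threshold of 80%.
for usage in [70, 82, 78, 75, 73, 71, 85]:
    state = next_state(state, usage, threshold=80)
    history.append(state)
print(history)
```

With a plain threshold comparison, the samples at 78, 75, and 73 would each switch sharing off again; with the margin, sharing is released only once the prediction falls below 72.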

Additionally, load balancing is applied between VNFs through an SDN application executed in the SDN controller infrastructure. The ASRO component executes the traffic rules through the northbound interface of the NSSMF component in charge of managing the SDN controller. To guarantee traffic security and isolation, the vertical can set VPN connections between the VNFs (shared and exhausted), by using Day 2 primitives. It is important to note that the VNF resource sharing schema results in a reduction in instantiation costs when compared to deploying an additional VNF as a replica.

Algorithm 1 VNF Sharing Procedure

- 1: Read DB()
- 2: Input: ,
- 3: Get Predicted usage and Thresholds
- 4: if any resource y has predicted usage ≥ threshold then
  - 5: Check if there are VNFs of the same type
  - 6: Select the candidate with the lowest current workload (argmin)
  - 7: while the candidate has sufficient residual capacity do
    - 8: if the candidate VNF is in the same cloud as the exhausted VNF then
      - 9: Sharing factor
      - 10: Sharing percentage
      - 11: Sharing cost
      - 12: return
      - 13: State “sharing”
      - 14: Allocation Flows
    - 15: else
      - 16: Determine RTT to Cloud
      - 17: if RTT ≤ SLA then
        - 18: Repeat steps (9–12)
        - 19: State “sharing”
        - 20: Flow Allocation
      - 21: else
        - 22: if resource available then
          - 23: Run Scaling-out Procedure
        - 24: else
          - 25: Setting new SLA (KPIs)
          - 26: Setting VNFFG
        - 27: end if
      - 28: end if
    - 29: end if
  - 30: end while
- 31: else if predicted usage < 0.9 × VT then ▹ Hysteresis: stop sharing if load < 90% of VT
  - 32: State “normal”
  - 33: Release Allocated Flows
- 34: else
  - 35: Reject Request
- 36: end if
- 37: Update DB()
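The decision logic of Algorithm 1 can be approximated by the following sketch. It is a simplified reading, not the implementation evaluated in this work: it considers a single resource (CPU, in percent), applies the same threshold to the candidate as to the exhausted VNF, and omits the sharing factor, percentage, and cost computations. All names, the `safety_margin` value, and the RTT table are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Vnf:
    name: str
    vnf_type: str  # e.g. "T1", "T2", "T3"
    cloud: str  # e.g. "edge", "core"
    cpu_usage: float  # current CPU usage in percent


def decide(exhausted, predicted, threshold, candidates, rtt_ms_to,
           sla_rtt_ms, cloud_has_capacity, safety_margin=10.0):
    """Return (action, candidate_name) for one predicted sample."""
    if predicted < threshold:
        return ("no_action", None)
    excess = predicted - threshold
    fallback = ("scale_out", None) if cloud_has_capacity else ("renegotiate_sla", None)
    same_type = [c for c in candidates
                 if c.vnf_type == exhausted.vnf_type and c.name != exhausted.name]
    # Visit candidates from the least loaded upwards (argmin first).
    for candidate in sorted(same_type, key=lambda c: c.cpu_usage):
        if candidate.cpu_usage + excess > threshold - safety_margin:
            continue  # would risk a secondary overload
        same_cloud = candidate.cloud == exhausted.cloud
        rtt_ok = rtt_ms_to.get(candidate.cloud, float("inf")) <= sla_rtt_ms
        if same_cloud or rtt_ok:
            return ("share", candidate.name)
    return fallback


vdpi_a = Vnf("vDPI-A", "T1", "edge", 85.0)
pool = [Vnf("vDPI-B", "T1", "edge", 30.0),
        Vnf("vDPI-C", "T1", "core", 20.0),
        Vnf("vSRE-B", "T3", "core", 5.0)]
print(decide(vdpi_a, 90.0, 80.0, pool, {"core": 25.0}, 10.0, True))
```

In the usage example the least-loaded candidate sits in another cloud whose RTT violates the SLA, so the sketch falls through to the next candidate in the same cloud before considering scale-out.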

From an orchestration perspective, the computational complexity of the sharing process grows approximately linearly with the number of active services and with the number of candidate VNFs evaluated during sharing decisions; that is, it depends on S, the number of active services, and on the number of VNFs of the required type. To ensure operational stability, resource sharing actions are triggered based on predicted threshold violations rather than instantaneous fluctuations, thus preventing oscillatory reconfiguration actions. Moreover, service isolation is maintained since traffic flows remain logically separated even when processing resources are shared across VNFs, ensuring both scalability and service integrity.

6. Experimental Testbed Configuration

This section presents the experimental testbed where the components of the M&O and NFVI layers are virtualized and deployed. This environment is used to validate the VNF sharing algorithm between two network services. In addition, it describes the configuration and architecture of the deep learning models used in the present work.

Figure 8 illustrates a realistic multidomain-enabled 5G network slicing orchestration testbed that comprises the 3GPP slice management and orchestration functions, monitoring system, and two NFVI domains. To validate the deployment of network services with sharing capabilities belonging to a specific vertical, the following software components are used. To implement the CSMF and NSMF components, the OpenSlice (

https://osl.etsi.org/documentation/latest/, accessed on 23 February 2026) platform is used as the operations support system. OpenSlice allows the definition of high-level network slice service specifications, which are composed of network service descriptors and VNF descriptors that must be onboarded to the NFVO. For this purpose, OpenSlice communicates with Open Source MANO (OSM) (

https://osm.etsi.org, accessed on 23 February 2026) via the SOL 006 standardized API to execute basic service lifecycle operations. OSM is used as an NFVO to oversee the instantiation of VNFs over multiple NFVI domains. Thus, it performs the role of the NSSMF. The VNFs instantiated in the testbed are described in

Section 8.

Furthermore, two NFVI domains are implemented to deploy VNFs with lower or higher resources. The NFVI domains represent the edge cloud and the core cloud, the former with limited resources and the latter with higher resources. Both are managed by OpenStack (

https://www.openstack.org, accessed on 23 February 2026), which is the de facto open-source platform in cloud orchestration to deploy and manage VM-based VNFs. The connectivity between the NFVIs is provided by open virtual switches managed by the SDN Ryu (

https://ryu-sdn.org, accessed on 23 February 2026) controller. The Ryu controller represents the transport network orchestrator performing the role of an NSSMF. OpenStack (Antelope) and Ryu SDN controller (version 2.3.1) were deployed over Ubuntu 20.04 LTS to ensure a stable environment for NFV measurements.

The monitoring system comprises the data collector and ASRO components. The data collector is implemented by the Prometheus (

https://prometheus.io, accessed on 23 February 2026) server toolkit, which is an open-source monitoring system that enables time series collection using a pull model over HTTP. Prometheus centralizes the metrics gathered from the node exporters, which are agents in charge of collecting the metrics and exposing them to be scraped by the Prometheus server. Node exporters in the testbed are executed as Docker containers inside each cloud domain to collect the OpenStack metrics. The ASRO component, in turn, is implemented as a Docker container in which the metrics collected from the Prometheus server are serialized and parameterized to be used by the deep learning models. Once ASRO predicts that a threshold will be reached, it interacts with the Ryu SDN controller to install the necessary flows on the Open Virtual Switches (OVSs) (https://www.openvswitch.org, accessed on 23 February 2026). To ensure the replicability of the latency and resource consumption results, the monitoring granularity was set to 1 s in Prometheus to align with the 30 s traffic profile windows, ensuring that transient CPU spikes during VNF sharing were accurately captured.
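As a hedged illustration of how ASRO might serialize the collected metrics, the sketch below parses the JSON body returned by a Prometheus range query (the `/api/v1/query_range` endpoint of the Prometheus HTTP API) into per-instance time series. The function name, the `instance` label value, and the sample payload are assumptions; no network request is made.

```python
import json


def parse_range_response(payload):
    """Turn a range-query JSON body into {instance: [(timestamp, value), ...]}."""
    body = json.loads(payload)
    if body.get("status") != "success":
        raise ValueError("Prometheus query failed")
    series = {}
    for result in body["data"]["result"]:
        instance = result["metric"].get("instance", "unknown")
        # Prometheus encodes sample values as strings; convert them to floats.
        series[instance] = [(float(ts), float(v)) for ts, v in result["values"]]
    return series


# Hypothetical response body with two samples taken 1 s apart.
sample = json.dumps({
    "status": "success",
    "data": {
        "resultType": "matrix",
        "result": [{
            "metric": {"instance": "edge-node:9100"},
            "values": [[1700000000, "12.5"], [1700000001, "13.0"]],
        }],
    },
})
print(parse_range_response(sample))
```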

Finally, the OpenStack domains are implemented using the Virtual Networks over Linux (VNX) (

https://vnx.dit.upm.es, accessed on 23 February 2026) tool. VNX enables the design of network topologies comprising virtualized network elements defined as both kernel-based VMs and Linux Containers (LXC). The network topologies are implemented through scripts defined in XML. All the components of the platform are deployed on a physical server equipped with an Intel i7-8700 CPU with 12 logical cores and 32 GB of RAM. The Intel i7-8700 CPU operated at a base frequency of 3.20 GHz, reaching up to 4.60 GHz in Turbo mode, with Intel Hyper-Threading enabled to support parallel VNF execution.

7. Deep Learning Models

This section examines the use of Deep Learning (DL) models, specifically LSTM and Transformers, as candidate solutions to the resource optimization problem within the ASRO component. Although both types of DL algorithms are benchmarked, the proposed system employs a single algorithm at runtime to forecast computational resources. It is important to clarify that the predictive models utilized in this work are trained in a centralized manner using historical monitoring data collected from the infrastructure. Federated Learning (FL) is not employed in the current implementation. Nevertheless, the proposed orchestration architecture is compatible with FL paradigms, which could be explored in future work to enable distributed model training across multiple administrative domains.

These predictive models were selected and evaluated due to their capacity to handle sequential data (e.g., time series). Also, these predictive models have achieved state-of-the-art results in different applications [

52]. In this study, these algorithms were used to predict future resource utilization in a VNF: RAM, CPU, storage, and network throughput.

The dataset used for model training consists of periodically collected resource utilization metrics, including CPU and memory consumption of VNFs. These metrics were sampled at fixed time intervals, enabling the construction of temporal sequences for forecasting tasks.

Prior to model training, the data underwent preprocessing that included the construction of sliding temporal windows, which were used as input sequences for forecasting. All input features were normalized to a common range before being fed into the models, ensuring numerical stability during optimization and mitigating scale-related biases in the learning process. For performance evaluation, a hold-out validation strategy was used. Specifically, the dataset was split chronologically, assigning the earliest 80% of observations to the training set and the remaining 20% to the testing set. The temporal order was strictly preserved, ensuring that past data are used for training and future data for testing. This prevents information leakage and enables a realistic assessment of predictive performance under operational conditions.
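The preprocessing steps above can be sketched for a single feature as follows. The sketch assumes min-max scaling with statistics taken from the training split only (one common way to avoid leakage, not necessarily the procedure used in this work) and a window of three timesteps; the helper names and the toy series are hypothetical.

```python
def chronological_split(series, train_fraction=0.8):
    """Earliest observations go to training, the rest to testing; order is preserved."""
    cut = int(len(series) * train_fraction)
    return series[:cut], series[cut:]


def min_max_scale(train, test):
    """Scale both splits with the minimum and maximum of the training split."""
    lo, hi = min(train), max(train)
    span = (hi - lo) or 1.0  # guard against a constant series

    def scale(values):
        return [(v - lo) / span for v in values]

    return scale(train), scale(test)


def sliding_windows(series, window=3):
    """Pair each run of `window` consecutive samples with the sample that follows it."""
    return [(series[i:i + window], series[i + window])
            for i in range(len(series) - window)]


raw = list(range(10))  # hypothetical utilization samples
train, test = chronological_split(raw)
scaled_train, scaled_test = min_max_scale(train, test)
windows = sliding_windows(scaled_train)
print(len(train), len(test), len(windows))
```

Because the scaler is fitted on the training split, test values can fall slightly outside the training range, which mirrors what a deployed model would see on unseen data.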

Training DL models is challenging due to the high number of parameters in each processing layer. To identify an appropriate DL architecture and a set of parameters for each predictive model, an optimization process was performed using the hyperband method [

53]. In this process, parameters such as the learning rate, the number of layers, and their relevant parameters (number of neurons, number of filters and kernel sizes, number of heads, head size, etc.) were fine-tuned.

Regarding the training stage, the Mean Absolute Error (MAE) was used as the loss function, and the multivariate Root Mean Square Error (RMSE) was used as the performance metric. RMSE is a metric widely used to evaluate regression algorithms: the closer its value is to zero, the better the predictive capability of the model. Specifically, the multivariate RMSE was computed as the uniformly weighted average of the individual RMSE values of each output. The ADAM optimizer [

54] was used as the optimization algorithm, and an early-stopping strategy was used to prevent overfitting and unnecessary computation. Early stopping halts training when the monitored metric shows no improvement over a given number of training epochs. In this study, the patience parameter was set to 10 and the test loss (i.e., MAE) was selected as the monitored metric.
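The two evaluation mechanisms described above can be written compactly. The sketch computes the multivariate RMSE as the uniform average of the per-output RMSE values and emulates a patience-based early-stopping rule over a list of recorded losses; function names and sample values are illustrative assumptions.

```python
from math import sqrt


def multivariate_rmse(y_true, y_pred):
    """Uniform average of per-output RMSE; rows are samples, columns are outputs."""
    n_outputs = len(y_true[0])
    per_output = []
    for j in range(n_outputs):
        squared = [(t[j] - p[j]) ** 2 for t, p in zip(y_true, y_pred)]
        per_output.append(sqrt(sum(squared) / len(squared)))
    return sum(per_output) / n_outputs


def stopping_epoch(losses, patience=10):
    """Return the 1-based epoch at which training stops, or None if it never triggers."""
    best, stale = float("inf"), 0
    for epoch, loss in enumerate(losses, start=1):
        if loss < best:
            best, stale = loss, 0  # improvement: reset the counter
        else:
            stale += 1
            if stale >= patience:
                return epoch
    return None


print(multivariate_rmse([[0, 0], [0, 0]], [[3, 1], [3, 1]]))
print(stopping_epoch([0.5, 0.4] + [0.45] * 10))
```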

7.1. LSTM Model

LSTM models are deep neural networks used for time series processing and forecasting. They are a type of Recurrent Neural Network (RNN) designed to address the vanishing gradient problem present in traditional RNNs. These recurrent networks include a memory cell controlled through different gates (input gate, output gate, and forget gate), which mitigates this problem [

55].

The LSTM model allows the analysis of sequential data by using previous time steps to model the time dependencies in the data.

Figure 9 shows the architecture of the LSTM model, including the input data and the predicted output. The input consists of three previous consecutive timestep windows, Xt−2, Xt−1, and Xt, and the model outputs Yt+1, the predicted utilization of the VNF resources at the next time step.

The number of past timesteps used as input for prediction was selected based on empirical evaluation performed during the model training phase. Several window sizes were tested to analyze their impact on prediction accuracy and computational overhead. Experimental observations showed that increasing the number of past timesteps beyond three did not lead to significant accuracy improvements while increasing training and inference costs. Therefore, a window size of three timesteps was adopted as an effective trade-off between prediction performance and computational efficiency, enabling timely orchestration decisions.

The LSTM model is composed of five layers: the first is a recurrent layer with 40 cells and a tanh activation function, connected to three dense (fully connected) layers with 128, 64 and 32 neurons that use the Rectified Linear Unit (ReLU) as activation function, and an output layer (with sigmoid activation function) with four neurons, each trained to estimate the expected resource utilization of CPU, RAM, storage, and network throughput. A dropout of 0.2 was used in the LSTM model and dense layers to avoid overfitting issues [

56].
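To give a sense of the model size implied by this architecture, the following worked calculation counts its trainable parameters. It assumes a standard LSTM cell (four gates, each with input weights, recurrent weights, and one bias vector) and four input features per timestep; both are assumptions of this sketch rather than figures reported in this work.

```python
def lstm_params(inputs, units):
    """Four gates, each with input weights, recurrent weights, and a bias vector."""
    return 4 * (units * (inputs + units) + units)


def dense_params(inputs, units):
    """Weight matrix plus one bias per neuron."""
    return inputs * units + units


n_features = 4  # assumption: CPU, RAM, storage, and network throughput
layers = [
    ("lstm_40", lstm_params(n_features, 40)),
    ("dense_128", dense_params(40, 128)),
    ("dense_64", dense_params(128, 64)),
    ("dense_32", dense_params(64, 32)),
    ("output_4", dense_params(32, 4)),
]
for name, count in layers:
    print(name, count)
total = sum(count for _, count in layers)
print("total", total)
```

Under these assumptions the count does not depend on the window length, since the recurrent layer reuses the same weights at every timestep.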

7.2. Transformer Models

Transformer models were introduced in [

57] as a novel architecture for sequence-to-sequence learning. They include an attention mechanism that looks at an input sequence and determines at each step what other parts of the sequence are important [

58].

Transformers differ from existing methods for sequence learning (Gated Recurrent Unit (GRU), LSTM, etc.) because they do not rely on recurrent networks. The standard (sequence-to-sequence) transformer models are composed of two parts, namely an encoder and a decoder, which consist of modules that can be stacked on top of each other multiple times. Each module consists mainly of multi-head attention and feedforward layers. Another part of the transformer model is the positional encoding of the input data, which is needed because there are no recurrent networks to retain the order in which the sequence is fed into the model. Hence, positional encoding is used to assign a relative position to each element of the input sequence.

Figure 10 shows the transformer model with the input data and the predicted output.

In this model, the decoder part has been completely removed because in this application the objective is to predict the next time-step (sequence-to-one) rather than a sequence of results (sequence-to-sequence) as is the case in the standard Transformer. Therefore, only a few attention blocks were connected to dense layers to perform the prediction, as suggested in [

59]. Data are provided in the same way as for the LSTM model (three previous time steps Xt−2, Xt−1, Xt as input, and Yt+1 as the model output). The transformer model is composed of four encoder blocks, each with a multi-head attention block with 4 heads and a feedforward section composed of two One-Dimensional Convolutional Neural Network (1D-CNN) layers (with ReLU activation function). The output of the encoder blocks is connected to a global average pooling layer, whose output is connected to three dense layers with 128, 128 and 32 neurons (with ReLU activation function). The output layer has four neurons and a sigmoid activation function (as in the LSTM model) for predicting the resource usage of the CPU, RAM, storage, and network throughput. A dropout of 0.1 was used in the encoder blocks, and 0.2 was used in the fully connected layers to avoid overfitting.
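The core operations of the encoder-only design can be illustrated with a deliberately reduced sketch: single-head scaled dot-product self-attention with identity projections, followed by global average pooling over the three timesteps. Learned projections, multiple heads, positional encoding, the 1D-CNN feedforward section, and the dense layers are all omitted, and the input values are hypothetical.

```python
from math import exp, sqrt


def softmax(xs):
    """Numerically stable softmax."""
    peak = max(xs)
    exps = [exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(sequence):
    """Single-head scaled dot-product self-attention with Q = K = V = input."""
    d = len(sequence[0])
    outputs, weights = [], []
    for query in sequence:
        scores = [sum(q * k for q, k in zip(query, key)) / sqrt(d) for key in sequence]
        w = softmax(scores)
        weights.append(w)
        # Each output is the attention-weighted mix of all timesteps.
        outputs.append([sum(w_i * v[j] for w_i, v in zip(w, sequence)) for j in range(d)])
    return outputs, weights


def global_average_pool(sequence):
    """Average over the time axis, collapsing the window into one feature vector."""
    steps = len(sequence)
    return [sum(row[j] for row in sequence) / steps for j in range(len(sequence[0]))]


# Hypothetical normalized window: three timesteps, four features each.
window = [[0.1, 0.2, 0.3, 0.4], [0.2, 0.3, 0.4, 0.5], [0.3, 0.4, 0.5, 0.6]]
attended, attention_weights = self_attention(window)
pooled = global_average_pool(attended)
print(len(attended), len(pooled))
```

The pooled vector has one entry per feature, which is the shape a sequence-to-one head such as the dense layers described above would consume.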

8. Evaluation and Results

This section highlights the advantages and benefits of the proposed system, which are validated and evaluated through a use case. First, a detailed description of the use case is presented, which encompasses the network service and the subsequent interactions among system components during the network service deployment and sharing of VNFs. Furthermore, a detailed analysis of resource consumption for a specific VNF in a network service is presented. Finally, an impact assessment is conducted to evaluate the potential benefits of resource sharing between VNFs across different network services for resource optimization.

8.1. Social Distance Tracking Use Case

The COVID-19 pandemic has presented significant challenges to the planning and execution of large-scale events. Among the most widely adopted measures is social distancing, which has become the cornerstone of public health policies aimed to reduce contagion rates. However, the applicability of this use case extends beyond pandemic contexts to any scenario requiring the monitoring and assurance of physical distance for safety and operational efficiency. For instance, this system is vital in industrial environments to ensure safe separation between personnel and heavy machinery, and in high-traffic logistics centers to prevent bottlenecks and ensure workplace safety standards. In this sense, the use case defined here aims to use artificial intelligence techniques to track people through video cameras in public events to detect and measure the distance between them. The tracking system generates alarms or reports to users (e.g., event organizers and control institutions) when the distances previously imposed by the user are not kept.

As shown in Figure 11, the network service consists of:

A virtual Access Network (vAN) that acts as a router or OVS for routing packets.

A virtual Deep Packet Inspection (vDPI) used to analyze and detect anomalies in the traffic flow.

A virtual Smart Recognition Engine (vSRE) implemented for video processing, object detection, and distance estimation between people. vSRE implements and runs the artificial intelligence algorithms that are oriented toward object detection. The details of the algorithm implemented by vSRE are beyond the scope of this study.

A virtual Web application (vWApp) that monitors events in real time.

Therefore, a network service made of four VNFs is required to deploy the use case. The VNFs are instantiated and distributed across different computational domains, requiring the deployment of multiple network slices to cover different zones and events. The workflow for deploying a network slice is depicted in Figure 12.

The process is initiated by the user (e.g., operator or vertical) through the CSMF portal. Upon receiving the user’s requirements, the CSMF transmits them to the NSMF for translation into computational (NFV) and network (SDN) resource specifications. This translation involves mapping the requirements into NSSMF management calls to deploy VNFs and configure their network interconnection. The NSMF also configures the necessary metric parameters for service monitoring within the ASRO monitoring system. Once these tasks are successfully executed, the user is notified regarding the deployment completion.

Several experiments have been conducted based on the network service described above. First, two network services were deployed on two OpenStack-based compute nodes. vAN and vDPI were instantiated on the edge cloud, whereas vSRE and vWApp were deployed on the core cloud (see Figure 8). An increase in the number of cameras would overload the vDPI, primarily because of the excessive number of data streams to be analyzed. Therefore, when ASRO predicts that the vDPI will reach its threshold, it activates the VNF sharing process and balances the traffic between two vDPI instances deployed at the edge cloud. This means that the vDPI of Service 2 is shared with Service 3, whose own vDPI is predicted to be exhausted, as shown in Figure 11. In addition, traffic balancing is executed by installing flow rules in the OVS running on the vAN.

The SDN controller receives from ASRO the configuration parameters that define the flow tables based on source–destination IP addresses, percentage of bandwidth, and quality of service. Communication between vDPIs and vSRE is implemented via VxLAN tunnels to minimize routing in the transport network. Moreover, each VNF was assigned one vCPU, 1 GB of RAM, and 10 GB of storage to provide more consistent outcomes. The maximum CPU threshold levels of the VNFs were customized according to the classification defined in Section 4.

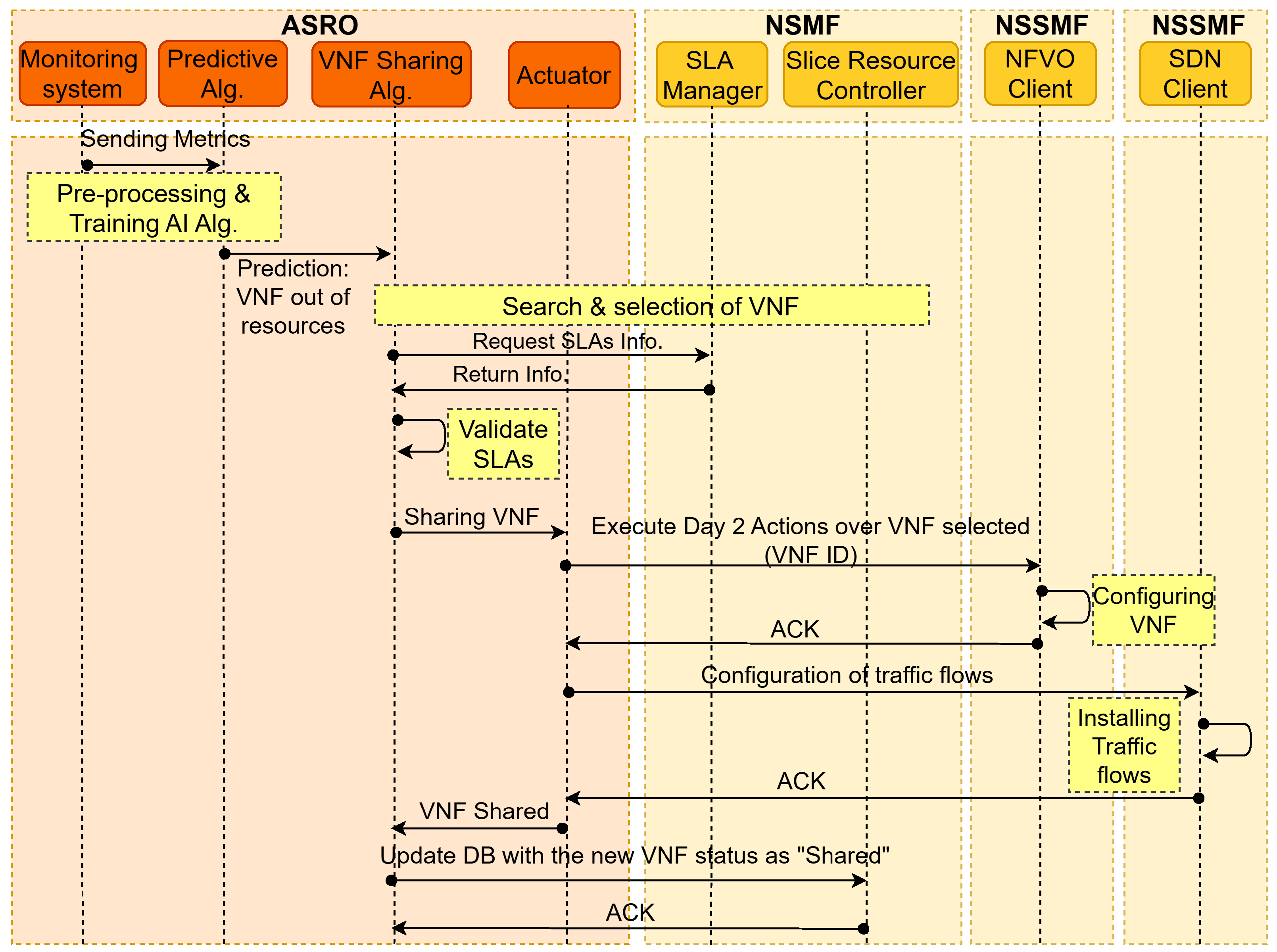

Figure 13 depicts the workflow of the VNF sharing process through the interaction of the ASRO component modules and the network slice management and orchestration functions. Essentially, it illustrates the pipeline followed by the ASRO modules during metric extraction and prediction, which provide input to the VNF-sharing algorithms for executing actions on VNFs. At this stage, ASRO communicates with the NSMF to verify the availability of VNFs and the SLA parameters. Lastly, ASRO interacts with the NSSMFs to configure the shared VNFs and install the traffic flow rules.

8.2. Exhaustive VNF Evaluation

To profile and characterize the performance of the vDPI, bandwidth consumption profiles have been defined. The main idea is to inject traffic at different bandwidth rates to stress the VNFs and determine not only the CPU consumption but also its real threshold. Increases in traffic flow simulate the introduction of additional video cameras and, consequently, a larger number of data streams to be processed. Only CPU consumption has been considered because this resource exhibits the most pronounced change within the all-in-one testbed when synthetic traffic is introduced.

Therefore, to simulate the increase in traffic flow, a script has been developed that generates a new traffic transmission profile every 30 s with exponentially increasing bandwidth rates. For this purpose, the iPerf tool was used. For example, the initial UDP bandwidth is set to 2 Mb/s, increasing to 4 Mb/s after 30 s, and so on. This process is repeated until a bandwidth of 600 Mb/s is reached.
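
A minimal sketch of such a traffic schedule is given below. It assumes that the rate doubles at each 30 s step (consistent with the 2 to 4 example) and that the final step is clamped to the 600 limit; both are assumptions, as are the function names, the `iperf3` binary name, and the server address. The script only builds the command strings and does not launch any traffic.

```python
from typing import List


def bandwidth_profile(
    start_mbps: int = 2, max_mbps: int = 600, factor: int = 2
) -> List[int]:
    """Return the per-step UDP target rates, growing geometrically up to max_mbps."""
    rates: List[int] = []
    rate = start_mbps
    while rate < max_mbps:
        rates.append(rate)
        rate *= factor  # assumption: the rate doubles at every step
    rates.append(max_mbps)  # assumption: the last step is clamped to the limit
    return rates


def iperf_commands(
    server_ip: str, step_seconds: int = 30, binary: str = "iperf3"
) -> List[str]:
    """Build one client command per step: UDP (-u), target rate (-b), duration (-t)."""
    return [
        f"{binary} -c {server_ip} -u -b {rate}M -t {step_seconds}"
        for rate in bandwidth_profile()
    ]


# 192.0.2.10 is a documentation address standing in for the traffic sink.
for command in iperf_commands("192.0.2.10"):
    print(command)
```

Running the commands back to back yields one 30 s burst per rate, which is the stepwise load pattern used to locate the CPU threshold of the VNF under test.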

Regarding the vDPI network element, tests were conducted for scenarios where it delivers DPI services and also for scenarios where it exclusively functions as a forwarding switch, e.g., in trusted scenarios where DPI services are not required. DPI functionalities were implemented using the OpenDPI tool, which enables protocol identification using signature-based inspection and port-based classification techniques. In the experimental setup considered in this work, port-based classification was explicitly employed due to its lower computational overhead, making it suitable for edge-cloud deployments where computing resources are limited. Compared with more complex DPI mechanisms based on deep packet payload inspection or regular-expression matching, port-based inspection significantly reduces processing requirements while still enabling adequate traffic classification for the considered use case. This trade-off allows the vDPI function to operate efficiently without introducing excessive computational load that could affect orchestration decisions and resource optimization processes.

Further, the measurements were gathered through Prometheus exporters, which collect metrics from computing nodes and VNFs. Each exporter captures the metrics at the operating system level and exposes them to the Prometheus server, which scrapes them periodically.

The experimental results in Figure 14 show a comparison of vDPI CPU usage with and without the DPI service.

Figure 14a illustrates the percentage of CPU consumption relative to the total CPU allocation for the network slice in the edge cloud. When vDPI provides the DPI service, its CPU utilization exceeds 24% of the CPU resources allocated to the network slice in the edge cloud. However, when vDPI works as a switch, only 5% of the CPU is used.

On the other hand, the percentage of the allocated vCPU consumed by vDPI is shown in Figure 14b. When the DPI service is used, vDPI consumes 60% of the allocated vCPU, whereas when it is used only as a switch, it consumes 30%. In the current experimental configuration, the sharing process is initiated when the ASRO system forecasts that CPU consumption at the vDPI will surpass 60%, to prevent vDPI overloading. This means that, to avoid performance loss, the resources allocated to vDPI should be increased dynamically when the data rate exceeds 70 MB of bandwidth.

Verticals and application developers can use these stress tests to profile their services in terms of resource use and performance. With this knowledge, and according to the classification of VNFs, they can simply label their service as one of three types, and the system can automatically allocate the most suitable resources. Alternatively, they can assign resources during the service creation phase, and the system detects the VNF type to place it on the most suitable node. Service profiling is not mandatory but serves as a valuable validation test for service providers.
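
The triggering condition described above can be sketched as a simple check over a sequence of CPU forecasts. The 60% threshold comes from the experimental configuration; the function name and the forecast values are hypothetical and serve only as an illustration, not as the ASRO implementation.

```python
from typing import Optional, Sequence

CPU_SHARE_THRESHOLD = 60.0  # percent of the allocated vCPU, as configured in the experiments


def first_sharing_trigger(
    forecast_cpu: Sequence[float], threshold: float = CPU_SHARE_THRESHOLD
) -> Optional[int]:
    """Return the first forecast step whose predicted CPU usage exceeds the threshold."""
    for step, cpu in enumerate(forecast_cpu):
        if cpu > threshold:
            return step
    return None  # no step is predicted to cross the threshold


# Hypothetical forecast values (percent of allocated vCPU).
forecast = [35.0, 48.5, 57.0, 63.2, 71.0]
trigger_step = first_sharing_trigger(forecast)
```

Because the check runs on predicted rather than measured values, the sharing process can start before the vDPI actually saturates.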

8.3. Evaluation of Shared VNFs

An SDN-based application was developed to facilitate resource sharing between the exhausted and shared VNFs. This application balances the network traffic between these VNFs and runs on the Ryu SDN controller once the ASRO system predicts that the vDPI will exceed its upper threshold. Once the VNF sharing algorithm finds the appropriate VNF for sharing resources, the ASRO component modifies the SDN application parameters accordingly. These modifications typically involve adjusting the port bandwidth limit and the Quality of Service (QoS) queues, and defining the source and destination IP addresses. Subsequently, the flow rules are installed on the OVS deployed within the vAN network function.

For this experiment, the same script described in the previous subsection was used to generate the bandwidth-based traffic profiles. Figure 15 compares the CPU resource usage of vDPI-Shared and vDPI-Exhausted. The comparison is performed only between the vDPI network functions running the traffic analysis service. The resource allocation in the vDPI network function is examined in Figure 15a,b. In terms of the resources allocated to each slice, only 3.5% of the total CPU resources allocated to vDPI-Shared were shared with vDPI-Exhausted. Furthermore, Figure 15b illustrates that vDPI-Shared shares a maximum of 10% of its CPU resources with vDPI-Exhausted, whereas vDPI-Exhausted utilizes up to 28% of the available CPU resources. These outcomes align with expectations, given that the resource-sharing algorithm is designed to enforce a percentage limit on the resources that can be shared between vDPIs.
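
The effect of such a percentage limit can be illustrated with a short sketch. It is not the sharing algorithm itself: the function name, the use of the donor's own threshold as headroom, and the 10% default (chosen to match the maximum observed in Figure 15b) are assumptions made for illustration.

```python
def shareable_cpu(
    donor_usage: float,
    excess_demand: float,
    share_limit: float = 10.0,      # assumption: cap matching the observed 10% maximum
    donor_threshold: float = 60.0,  # assumption: the donor must stay below its own threshold
) -> float:
    """Return the CPU percentage the donor VNF lends to the exhausted VNF.

    All arguments are percentages of the donor's allocated vCPU.
    """
    headroom = max(0.0, donor_threshold - donor_usage)
    # The loan never exceeds the demand, the configured cap, or the donor's headroom.
    return max(0.0, min(excess_demand, share_limit, headroom))


# Hypothetical situations: ample headroom, limited headroom, and a saturated donor.
print(shareable_cpu(donor_usage=30.0, excess_demand=25.0))
print(shareable_cpu(donor_usage=55.0, excess_demand=25.0))
print(shareable_cpu(donor_usage=70.0, excess_demand=25.0))
```

Capping the loan in this way keeps the donor from being pushed toward exhaustion while it assists the overloaded instance.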

It is worth emphasizing that the CPU consumption of vDPI-Exhausted while providing the DPI service with vDPI-Shared resources is reduced by 30% compared to its CPU consumption when it operates as a standalone VNF, as shown in Figure 14b. This reduction is partially attributable to the parallelization of processes across multiple CPU cores or threads, which optimizes resource utilization. This optimized resource utilization is essential to comply with the SLA parameters and to minimize any degradation in the quality of service experienced by end users.

8.4. Deep Learning Model Evaluation

The proposed prediction algorithms were evaluated using the DL architectures described in Section 7.1 and Section 7.2 for the LSTM and Transformer models, together with the hyperparameters obtained during the optimization stage using the Hyperband method. The best prediction result was achieved with the LSTM model, with an RMSE of 0.0189, whereas the Transformer model obtained an RMSE of 0.0259. Consequently, in the present evaluation, the LSTM model provided the more accurate predictions.
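
For reference, the RMSE is the square root of the mean squared difference between predicted and observed values. The sketch below computes it with the standard library only; the input values are illustrative and are not taken from the evaluation dataset.

```python
import math
from typing import Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean squared error between observed and predicted values."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Illustrative normalized CPU usage values (not measured data).
observed = [0.42, 0.47, 0.55, 0.61]
predicted = [0.40, 0.49, 0.54, 0.64]
print(f"RMSE = {rmse(observed, predicted):.4f}")
```

Since the targets are normalized, a lower RMSE corresponds directly to a smaller average deviation in predicted resource usage.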

Table 5 presents the computational environment in which the models were generated and trained. The LSTM and Transformer models were compared in terms of the time consumed during the training process. In addition, the configuration parameters used in each model and the computational resources defined in a server to train these models are presented. It is worth mentioning that the current study undertakes an initial comparative analysis between LSTM and transformer implementations. The primary aim of this study is to develop a resource optimization framework that can effectively accommodate any type of machine learning model. However, it is crucial to conduct a comprehensive evaluation of these models, which is planned as an integral part of future research endeavors.

The data processing, training, and evaluation experiments were performed on an Intel Xeon 2.30 GHz processor with 12 GB of random access memory (RAM) and a 12 GB NVIDIA Tesla K80 graphics accelerator card. The implementation, training, and evaluation of the models (LSTM and Transformer) were performed in the Python programming language (version 3.6) using TensorFlow with the high-level Keras libraries (version 2.4) [17]. This infrastructure information is relevant in scenarios where the operator needs to train the deep learning models online, which implies periodically integrating newly acquired data from the infrastructure at predefined time intervals.

Although a direct quantitative comparison with state-of-the-art orchestration solutions is challenging due to differences in architectural scope and evaluation assumptions, the obtained results allow drawing qualitative comparisons with traditional orchestration approaches reported in the literature. In particular, conventional reactive orchestration mechanisms typically trigger scaling actions only after resource saturation occurs, which may lead to SLA violations. In contrast, the proposed architecture integrates predictive resource estimation through ASRO, enabling proactive orchestration decisions before critical thresholds are reached. Furthermore, the inclusion of a reactive VNF resource-sharing mechanism allows maintaining service continuity when scaling actions are constrained by infrastructure limitations. These architectural enhancements collectively contribute to improved resource utilization efficiency and more resilient slice orchestration compared to purely reactive or static approaches.

9. Conclusions