Federated Intrusion Detection via Unidirectional Serialization and Multi-Scale 1D Convolutions with Attention Reweighting

Abstract

1. Introduction

- (1) Serialized architecture for flow-based federated IDS. We propose a unidirectional serialization scheme that converts tabular flow records into short ordered sequences and feeds these into multi-scale 1D convolutional filters. This design captures local correlations among normalized flow features without resorting to heavy sequence models (e.g., recurrent or transformer architectures), yielding a compact model suitable for edge deployment.

- (2) Attention-based channel reweighting for heterogeneous traffic features. We introduce a channel-wise attention module that learns to emphasize informative feature channels prior to classification. In contrast to earlier deep learning NIDS that rely either on fixed feature selection or unweighted convolutions [1,2,3,13,14,15], this mechanism adapts to distributional differences across clients and datasets while maintaining a modest parameter count.

- (3) Federated training under explicitly quantified Non-IID partitions. We train the proposed model in a cross-silo FL setting with sample-size-weighted FedAvg and construct client datasets using Dirichlet-based label-skew partitioning. We quantify Non-IID severity via the Jensen–Shannon divergence between local and global label distributions [7,16] and report performance under multiple client configurations, thereby making explicit the degree of heterogeneity under which the reported accuracies are obtained.

- (4) Reproducibility protocol and deployment-oriented analysis. Beyond reporting standard detection metrics, we detail the model configuration, hyperparameters, random seeds, data splitting ratios, and evaluation protocol, including client sampling, communication rounds, and stopping criteria. We further analyze training time and communication behavior as the number of clients grows, and we discuss deployment considerations and limitations arising from outdated benchmarks, potential dataset artifacts, and unmodeled adversarial threats [17,18]. The term "deployment-oriented" is used in this study in a precise and limited, system-level sense rather than as a generic label.
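The three ingredients named in contribution (3) can be illustrated with a minimal NumPy sketch: Dirichlet label-skew partitioning, Jensen–Shannon divergence between a client's label distribution and the global one, and sample-size-weighted FedAvg. This is not the authors' implementation. The function names, the base-2 logarithm, the concentration value `alpha=0.5`, and the toy label vector are all assumptions chosen for illustration.

```python
import numpy as np

def dirichlet_label_skew(labels, n_clients, alpha, rng):
    """Split sample indices across clients; each class is shared out by a Dirichlet(alpha) draw."""
    client_idx = [[] for _ in range(n_clients)]
    for c in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == c))
        shares = rng.dirichlet(alpha * np.ones(n_clients))  # fraction of class c per client
        cuts = (np.cumsum(shares)[:-1] * len(idx)).astype(int)
        for k, part in enumerate(np.split(idx, cuts)):
            client_idx[k].extend(part.tolist())
    return [np.array(ix, dtype=int) for ix in client_idx]

def label_distribution(labels, n_classes):
    """Empirical label distribution; an empty client yields an all-zero vector."""
    counts = np.bincount(labels, minlength=n_classes).astype(float)
    total = counts.sum()
    return counts / total if total > 0 else counts

def js_divergence(p, q):
    """Jensen-Shannon divergence with base-2 logs (assumed here), so values lie in [0, 1]."""
    m = 0.5 * (p + q)
    def kl(a, b):
        mask = a > 0  # terms with a == 0 contribute nothing
        return float(np.sum(a[mask] * np.log2(a[mask] / b[mask])))
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def fedavg(client_params, client_sizes):
    """Average each parameter tensor across clients, weighted by local sample count."""
    total = float(sum(client_sizes))
    n_tensors = len(client_params[0])
    return [
        sum(params[i] * (n / total) for params, n in zip(client_params, client_sizes))
        for i in range(n_tensors)
    ]

# Toy run: 600 samples, 3 classes, 4 clients (all values hypothetical).
rng = np.random.default_rng(0)
labels = rng.integers(0, 3, size=600)
parts = dirichlet_label_skew(labels, n_clients=4, alpha=0.5, rng=rng)
global_dist = label_distribution(labels, 3)
for k, idx in enumerate(parts):
    jsd = js_divergence(label_distribution(labels[idx], 3), global_dist)
    print(f"client {k}: n={len(idx)}, JSD={jsd:.3f}")
```

Smaller `alpha` concentrates each class on fewer clients and therefore tends to raise the per-client divergence, which is why reporting the divergence alongside accuracy makes the heterogeneity level explicit.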

2. Related Work

2.1. Deep Learning for Intrusion Detection

2.2. Benchmark Datasets and Evaluation Pitfalls

2.3. Federated Learning for Security Analytics

2.4. Security and Privacy in Federated Learning

3. Methodology

3.1. Problem Setting and Notation

3.2. Unidirectional Serialization of Tabular Flow Records

3.3. Multi-Scale 1D Convolutional Backbone

3.4. Channel-Wise Attention Reweighting

3.5. Federated Optimization via FedAvg

3.6. Non-IID Client Partitioning and Quantification

3.7. Threat Model, Privacy Considerations, and Limitations

3.8. Reproducibility Protocol

4. Experiments and Results

4.1. Datasets, Client Configurations, and Experimental Setup

4.2. Evaluation Metrics

4.3. Overall Performance on Benchmark Datasets

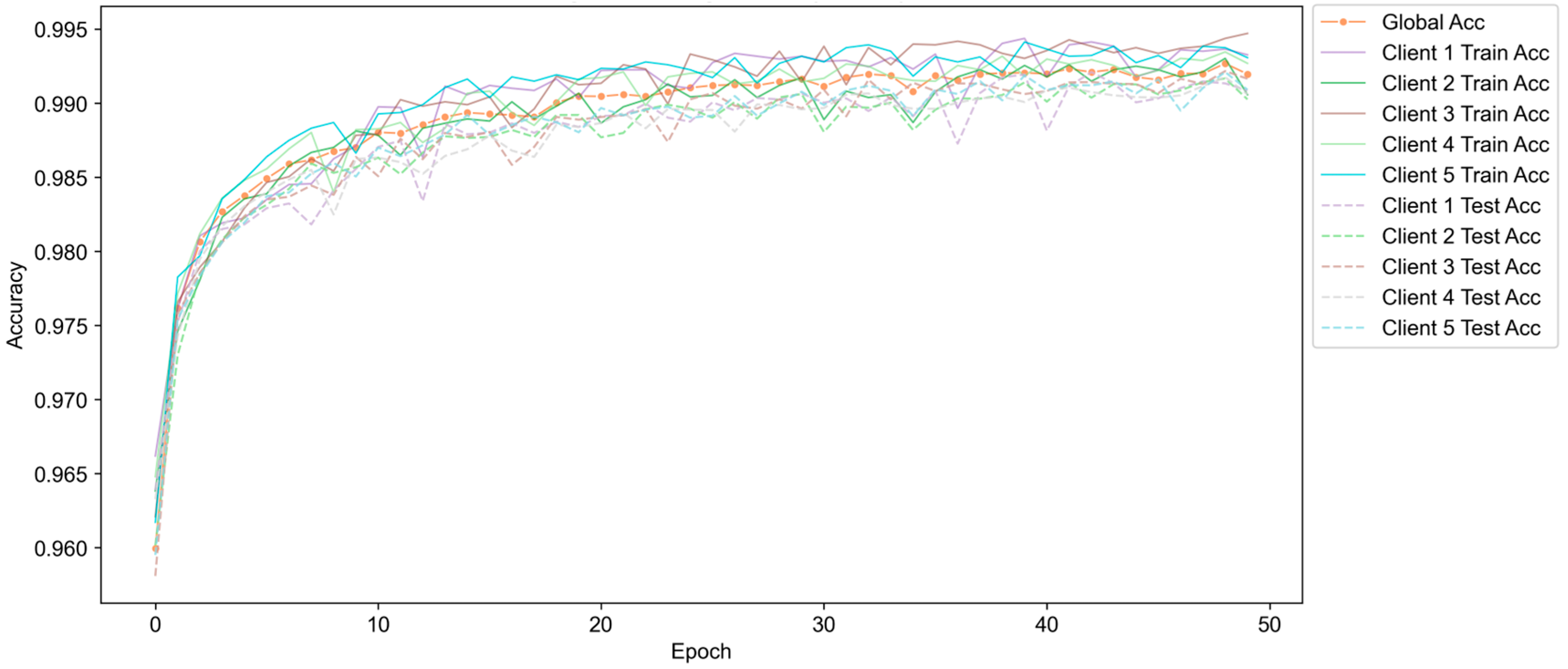

4.4. Convergence Behaviour, Accuracy Dips, and Communication Analysis

4.5. Robustness to Non-IID Data and Client Configurations

4.6. Ablation on Architectural Components

4.7. Protocol Checks for High Performance and Overfitting Risks

- (1)

- Verifying that train, validation, and test splits contain no duplicate records.

- (2)

- Confirming that scaling and normalization parameters are computed exclusively from training data.

- (3)

- Ensuring that label encodings and class mappings are consistent across splits and clients.

- (4)

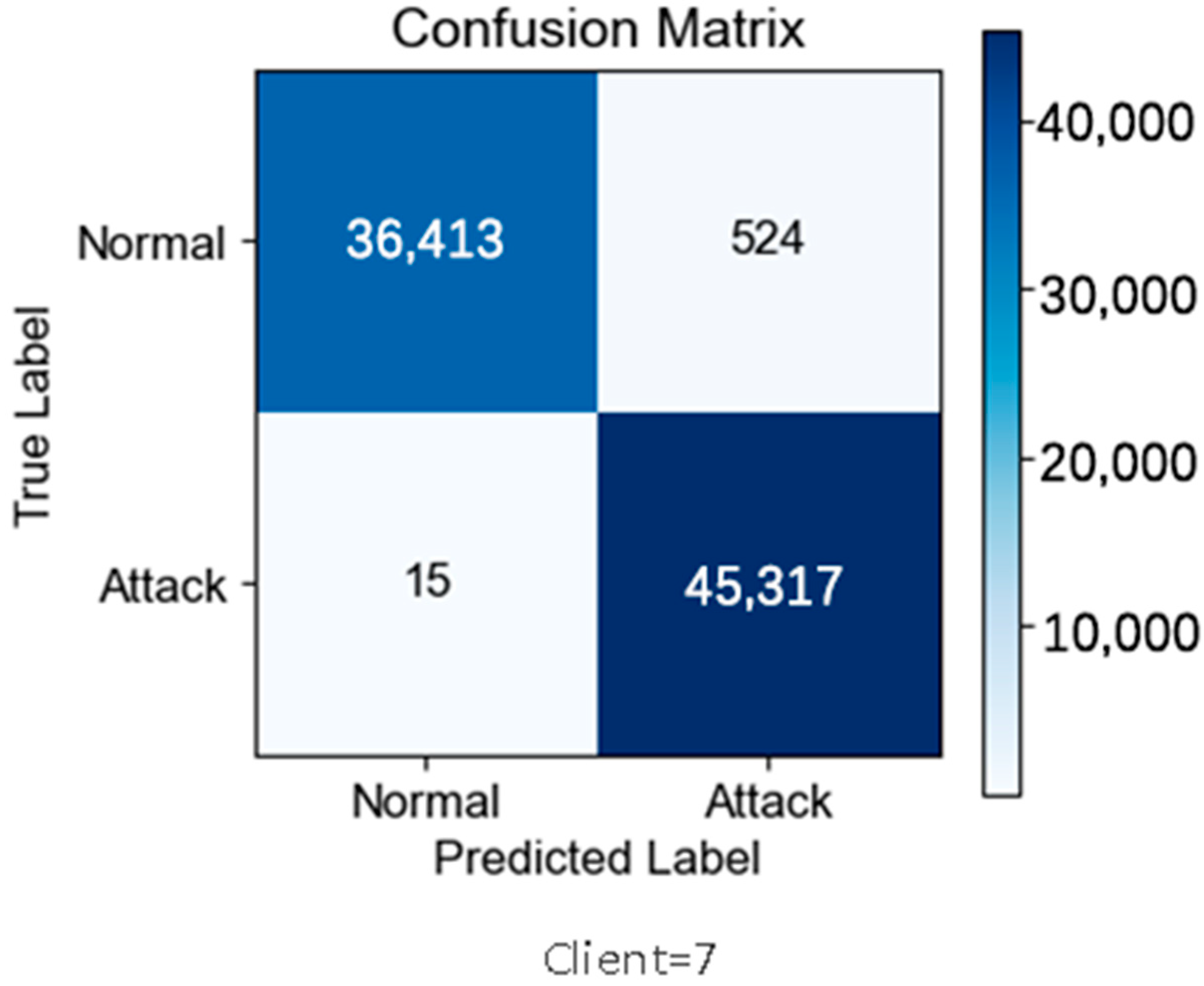

- Inspecting confusion matrices and per-class metrics to detect anomalously perfect performance concentrated on a subset of classes.
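The four checks listed above can be sketched in a few lines of NumPy. This is an illustration under assumptions rather than the protocol code used in the study: min-max scaling is assumed as the normalization, exact row equality is assumed as the duplicate criterion, and all helper names are hypothetical.

```python
import numpy as np

def count_cross_split_duplicates(a, b):
    """Check (1): number of rows in b that also occur, exactly, in a."""
    seen = {tuple(row) for row in a.tolist()}
    return sum(tuple(row) in seen for row in b.tolist())

def fit_minmax(train):
    """Check (2): scaling parameters are estimated from the training split only."""
    lo, hi = train.min(axis=0), train.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)  # avoid division by zero on constant columns
    return lo, span

def apply_minmax(x, lo, span):
    """Apply training-derived parameters to any split (validation, test, client data)."""
    return (x - lo) / span

def unseen_labels(y, n_classes):
    """Check (3): label ids in a split that fall outside the shared class mapping."""
    return sorted(set(np.unique(y).tolist()) - set(range(n_classes)))

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)
    return cm

def per_class_metrics(cm):
    """Check (4): per-class precision, recall, and F1 from a confusion matrix."""
    tp = np.diag(cm).astype(float)
    predicted = cm.sum(axis=0)
    actual = cm.sum(axis=1)
    precision = np.divide(tp, predicted, out=np.zeros_like(tp), where=predicted > 0)
    recall = np.divide(tp, actual, out=np.zeros_like(tp), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    return precision, recall, f1

# Toy usage with hypothetical data.
train = np.array([[0.0, 10.0], [2.0, 10.0]])
test = np.array([[2.0, 10.0], [1.0, 10.0]])
print("duplicates across splits:", count_cross_split_duplicates(train, test))
lo, span = fit_minmax(train)
print("scaled test:", apply_minmax(test, lo, span))
cm = confusion_matrix(np.array([0, 0, 1, 1]), np.array([0, 1, 1, 1]), 2)
print("per-class precision/recall/F1:", per_class_metrics(cm))
```

A class whose precision and recall are both exactly 1.0 while others lag is the pattern check (4) is meant to surface; it often points to a leaked or trivially separable feature rather than genuine detection ability.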

5. Discussion

5.1. Centralized vs. Federated Intrusion Detection

5.2. Comparison with Federated IDS Baselines

5.3. Dataset Artifacts, Class Imbalance, and Benchmark Saturation

5.4. Non-IID Scenarios and Realistic Edge Deployments

5.5. Security Vulnerabilities and Future Hardening

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| IDS | Intrusion Detection System |

| NIDS | Network Intrusion Detection System |

| FL | Federated Learning |

| CNN | Convolutional Neural Network |

| 1D-CNN | One-Dimensional Convolutional Neural Network |

| JSD | Jensen–Shannon Divergence |

| IID | Independent and Identically Distributed |

| Non-IID | Non-Independent and Identically Distributed |

| ACC | Accuracy |

| Prec | Precision |

| Rec | Recall |

| F1 | F1-score |

References

- Buczak, A.L.; Guven, E. A survey of data mining and machine learning methods for cyber security intrusion detection. IEEE Commun. Surv. Tutor. 2016, 18, 1153–1176.

- Khraisat, A.; Gondal, I.; Vamplew, P.; Kamruzzaman, J. Survey of intrusion detection systems: Techniques, datasets and challenges. Cybersecurity 2019, 2, 20.

- Ferrag, M.A.; Maglaras, L.; Moschoyiannis, S.; Janicke, H. Deep learning for cyber security intrusion detection: Approaches, datasets, and comparative study. J. Inf. Secur. Appl. 2020, 50, 102419.

- Li, T.; Sahu, A.K.; Talwalkar, A.; Smith, V. Federated learning: Challenges, methods, and future directions. IEEE Signal Process. Mag. 2020, 37, 50–60.

- Lim, W.Y.B.; Luong, N.C.; Hoang, D.T.; Jiao, Y.; Liang, Y.-C.; Yang, Q.; Miao, C. Federated learning in mobile edge networks: A comprehensive survey. IEEE Commun. Surv. Tutor. 2020, 22, 2031–2063.

- Kairouz, P.; McMahan, H.B. Advances and open problems in federated learning. Found. Trends Mach. Learn. 2021, 14, 1–210.

- Ma, X.; Zhu, J.; Lin, Z.; Chen, S.; Qin, Y. A state-of-the-art survey on solving Non-IID data in federated learning. Future Gener. Comput. Syst. 2022, 135, 244–258.

- Yin, X.; Zhu, Y.; Hu, J. A comprehensive survey of privacy-preserving federated learning: A taxonomy, review, and future directions. ACM Comput. Surv. 2021, 54, 1–36.

- Gosselin, R.; Vieu, L.; Loukil, F.; Benoit, A. Privacy and Security in Federated Learning: A Survey. Appl. Sci. 2022, 12, 9901.

- Shan, F.; Mao, S.; Lu, Y.; Li, S. Differential Privacy Federated Learning: A Comprehensive Review. Int. J. Adv. Comput. Sci. Appl. 2024, 15, 220.

- Zhang, X.; Luo, Y.; Li, T. A Review of Research on Secure Aggregation for Federated Learning. Future Internet 2025, 17, 308.

- Lin, J. Divergence measures based on the Shannon entropy. IEEE Trans. Inf. Theory 1991, 37, 145–151.

- Ring, M.; Wunderlich, S.; Scheuring, D.; Landes, D.; Hotho, A. A survey of network-based intrusion detection data sets. Comput. Secur. 2019, 86, 147–167.

- Milenkoski, A.; Vieira, M.; Kounev, S.; Avritzer, A.; Payne, B.D. Evaluating computer intrusion detection systems: A survey of common practices. ACM Comput. Surv. 2015, 48, 1–41.

- de Oliveira, J.A.; Gonçalves, V.P.; Meneguette, R.I.; de Sousa, R.T., Jr.; Guidoni, D.L.; Oliveira, J.C.M.; Rocha Filho, G.P. F-NIDS—A Network Intrusion Detection System based on federated learning. Comput. Netw. 2023, 236, 110010.

- Alsamiri, J.; Alsubhi, K. Federated Learning for Intrusion Detection Systems in Internet of Vehicles: A General Taxonomy, Applications, and Future Directions. Future Internet 2023, 15, 403.

- Buyuktanir, B.; Altinkaya, Ş.; Karatas Baydogmus, G.; Yildiz, K. Federated learning in intrusion detection: Advancements, applications, and future directions. Clust. Comput. 2025, 28, 473.

- Wei, K.; Li, J.; Ding, M.; Ma, C.; Yang, H.H.; Farokhi, F.; Jin, S.; Quek, T.Q.; Poor, H.V. Federated learning with differential privacy: Algorithms and performance analysis. IEEE Trans. Inf. Forensics Secur. 2020, 15, 3454–3469.

- Zhou, X.; Xu, M.; Wu, Y.; Zheng, N. Deep Model Poisoning Attack on Federated Learning. Future Internet 2021, 13, 73.

- Manzoor, H.U.; Shabbir, A.; Chen, A.; Flynn, D.; Zoha, A. A Survey of Security Strategies in Federated Learning: Defending Models, Data, and Privacy. Future Internet 2024, 16, 374.

- Aziz, R.; Banerjee, S.; Bouzefrane, S.; Le Vinh, T. Exploring Homomorphic Encryption and Differential Privacy Techniques towards Secure Federated Learning Paradigm. Future Internet 2023, 15, 310.

- Belenguer, A.; Pascual, J.A.; Navaridas, J. A review of federated learning applications in intrusion detection systems. Comput. Netw. 2025, 258, 111023.

- Devine, M.; Ardakani, S.P.; Al-Khafajiy, M.; James, Y. Federated Machine Learning to Enable Intrusion Detection Systems in IoT Networks. Electronics 2025, 14, 1176.

- Olanrewaju-George, B.; Pranggono, B. Federated learning-based intrusion detection system for the internet of things using unsupervised and supervised deep learning models. Cyber Secur. Appl. 2025, 3, 100068.

- Al Tfaily, F.; Ghalmane, Z.; Brahmia, M.E.A.; Hazimeh, H.; Jaber, A.; Zghal, M. Graph-based federated learning approach for intrusion detection in IoT networks. Sci. Rep. 2025, 15, 41264.

- Klinkhamhom, C.; Boonyopakorn, P.; Wuttidittachotti, P. MIDS-GAN: Minority Intrusion Data Synthesizer GAN—An ACON Activated Conditional GAN for Minority Intrusion Detection. Mathematics 2025, 13, 3391.

- Li, B.; Li, J.; Jia, M. ADFCNN-BiLSTM: A Deep Neural Network Based on Attention and Deformable Convolution for Network Intrusion Detection. Sensors 2025, 25, 1382.

- Cui, B.; Chai, Y.; Yang, Z.; Li, K. Intrusion Detection in IoT Using Deep Residual Networks with Attention Mechanisms. Future Internet 2024, 16, 255.

- Ji, C.; He, S.; Dai, W. A Federated Learning Based Intrusion Detection Method with Multi-Scale Parallel Convolution and Adaptive Soft Prediction Clustering. Electronics 2025, 14, 4705.

- Yu, J.; Wang, G.; Shi, N.; Saxena, R.; Lee, B. A Multi-View-Based Federated Learning Approach for Intrusion Detection. Electronics 2025, 14, 4166.

| # Clients | Accuracy (%) | Precision (%) | Recall (%) | F1 (%) | Training Time (s) |
|---|---|---|---|---|---|
| 4 | 99.24 | 99.58 | 98.84 | 99.21 | 3056 |
| 5 | 99.31 | 99.48 | 99.10 | 99.29 | 3614 |
| 6 | 99.38 | 99.61 | 99.14 | 99.37 | 4108 |
| 7 | 99.24 | 99.61 | 98.81 | 99.21 | 4226 |
| 8 | 99.26 | 99.43 | 99.05 | 99.24 | 4381 |

| # Clients | Accuracy (%) | Precision (%) | Recall (%) | F1 (%) | Training Time (s) |
|---|---|---|---|---|---|
| 7 | 99.82 | 99.85 | 99.78 | 99.82 | 589 |
| 8 | 99.84 | 99.87 | 99.81 | 99.84 | 772 |
| 9 | 99.86 | 99.87 | 99.84 | 99.86 | 853 |
| 10 | 99.79 | 99.79 | 99.78 | 99.79 | 995 |

| # Clients | Accuracy (%) | Precision (%) | Recall (%) | F1 (%) | Training Time (s) |
|---|---|---|---|---|---|
| 5 | 98.97 | 99.00 | 98.97 | 98.98 | 297 |
| 6 | 98.96 | 98.96 | 99.00 | 98.97 | 320 |
| 7 | 99.02 | 99.01 | 99.05 | 99.02 | 377 |
| 8 | 98.94 | 98.90 | 99.03 | 98.97 | 420 |
| 9 | 98.93 | 98.94 | 98.94 | 98.94 | 516 |
| 10 | 98.93 | 99.02 | 98.83 | 98.92 | 604 |

| Configuration | Sequence Length | Multi-Scale Conv. | Channel Attention | ACC (%) | Macro F1 (%) |
|---|---|---|---|---|---|
| Full model (baseline) | 64 | √ | √ | 99.38 | 99.37 |
| Short sequence | 16 | √ | √ | 98.31 | 98.30 |
| Degenerate serialization | 1 | √ | √ | 81.83 | 80.30 |
| No multi-scale convolutions (kernel size 3 only) | 64 | × | √ | 99.23 | 99.22 |
| No attention module | 64 | √ | × | 99.25 | 99.24 |
| No multi-scale and no attention | 64 | × | × | 98.49 | 98.47 |
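The ablation above toggles three components: the serialized sequence length, the multi-scale convolution bank, and the channel attention module. To make those switches concrete, here is a rough NumPy forward-pass sketch. It is not the published architecture. The truncate-or-zero-pad serialization, the kernel sizes (3, 5, 7), the 8 channels per scale, the squeeze-and-excitation style gating, and the random weights are all assumptions made for illustration.

```python
import numpy as np

def serialize(record, seq_len):
    """Lay a normalized flow record out in fixed feature order, truncated or zero-padded to seq_len."""
    out = np.zeros(seq_len, dtype=float)
    n = min(seq_len, record.shape[0])
    out[:n] = record[:n]
    return out

def conv1d_same(seq, kernels):
    """Cross-correlate seq with each row of kernels (shape (C, k), k odd), zero 'same' padding, then ReLU."""
    k = kernels.shape[1]
    padded = np.pad(seq, k // 2)
    windows = np.lib.stride_tricks.sliding_window_view(padded, k)  # shape (L, k)
    return np.maximum(kernels @ windows.T, 0.0)                    # shape (C, L)

def multi_scale(seq, kernel_bank):
    """Run one convolution per kernel size and stack the resulting channels."""
    return np.concatenate([conv1d_same(seq, K) for K in kernel_bank], axis=0)

def channel_attention(feat, w1, w2):
    """Pool each channel over the sequence, pass through a bottleneck, and gate channels with a sigmoid."""
    squeezed = feat.mean(axis=1)                   # (C,)
    hidden = np.maximum(w1 @ squeezed, 0.0)        # (C // r,)
    gates = 1.0 / (1.0 + np.exp(-(w2 @ hidden)))   # (C,), one weight per channel
    return feat * gates[:, None], gates

# Toy forward pass: 42 hypothetical flow features, sequence length 64 as in the full model row.
rng = np.random.default_rng(0)
record = rng.random(42)
seq = serialize(record, 64)
kernel_bank = [rng.normal(size=(8, k)) for k in (3, 5, 7)]  # assumed kernel sizes
feat = multi_scale(seq, kernel_bank)                        # (24, 64)
w1 = rng.normal(size=(6, 24))                               # bottleneck with assumed reduction ratio 4
w2 = rng.normal(size=(24, 6))
reweighted, gates = channel_attention(feat, w1, w2)
print(feat.shape, reweighted.shape, gates.round(2))
```

In this sketch, the "kernel size 3 only" row corresponds to passing a single-entry `kernel_bank`, the "no attention" row to skipping `channel_attention`, and the "degenerate serialization" row to `seq_len=1`, where every convolution sees a single value and no neighboring features.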

| Method | Dataset | ACC (%) | F1-Score (%) | Notes |
|---|---|---|---|---|
| FedMSP-SPEC [26] | UNSW-NB15 | 88.28 | 88.18 | FL IDS, Dirichlet α = 1 |
| Multi-view FL CAE-NSVM [27] | UNSW-NB15 | – | 82.6 | Multi-view FL, 3 clients |
| Serialized 1D-CNN with attention (this work) | UNSW-NB15 | 99.38 | 99.37 | Same FL setting as Table 1 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Li, W.; Gao, D.; Zhang, T. Federated Intrusion Detection via Unidirectional Serialization and Multi-Scale 1D Convolutions with Attention Reweighting. Future Internet 2026, 18, 117. https://doi.org/10.3390/fi18030117
