1. Introduction

In contemporary 5G network environments, intrusion detection systems (IDSs) increasingly face a structural trade-off between detection accuracy and operational efficiency. As traffic becomes more heterogeneous, dense, and latency-sensitive, driven by diverse service classes such as eMBB, mMTC, and uRLLC, improvements in detection performance are frequently achieved at the expense of computational cost, interpretability, or deployability. Designing IDSs that are both accurate and practical under such constraints remains an open challenge.

Traditional signature-based intrusion detection mechanisms are increasingly ineffective in this setting, as they fail to generalize to evolving and previously unseen attack patterns. Recent surveys emphasize that adaptive, data-driven detection approaches are essential for modern 5G infrastructures [1]. Consequently, learning-based IDSs, spanning classical machine learning (ML) and deep learning (DL) techniques, have become the dominant research direction in 5G security.

Early learning-based IDS studies were largely conducted on synthetic or semi-synthetic benchmarks such as NSL-KDD, UNSW-NB15, and CICIDS2017. Although these datasets enabled reproducible experimentation, they have been widely criticized for outdated traffic profiles and limited realism [2,3]. To address these shortcomings, recent work has shifted toward datasets collected from functional 5G testbeds. The 5G-NIDD dataset represents a notable step in this direction, offering realistic benign and malicious traffic derived from an operational 5G environment. Studies based on 5G-NIDD report lower but more credible performance ceilings, with classical ML models typically achieving accuracies around 99.4–99.6% under rigorous preprocessing and leakage-free protocols [4].

Classical machine learning approaches (including decision trees, random forests, support vector machines, and k-nearest neighbors) remain attractive for intrusion detection due to their transparency, low computational cost, and ease of deployment. Tree-based models in particular are valued for their explicit decision rules, which align well with auditing and explainability requirements in security-critical systems [2,5]. However, such models typically rely on a single global decision boundary. As traffic distributions become more heterogeneous and imbalanced, this global boundary becomes increasingly fragile, leading to persistent class-specific errors that are difficult to resolve through tuning alone [4].

Deep learning models address these representational limitations by learning hierarchical or temporal features directly from traffic data. Convolutional and recurrent architectures, attention mechanisms, and Transformer-based models have reported strong detection performance on 5G-NIDD and related datasets [6]. Transfer learning strategies further extend deep models to data-scarce scenarios, as demonstrated in frameworks such as DTL-5G [7], while autoencoder–hypernetwork combinations have reported near-ceiling accuracy under controlled conditions [8]. Despite these advances, deep learning–based IDSs often introduce substantial computational overhead, increased memory footprint, and limited interpretability. Their reliance on complex preprocessing pipelines and heavy architectures complicates deployment in latency-sensitive or resource-constrained 5G environments, particularly at the network edge.

To balance accuracy and practicality, hybrid and ensemble IDS architectures have also been explored. Common strategies include combining multiple learners through stacking, boosting, or voting, or pairing deep feature extractors with classical classifiers [9,10]. While these approaches can improve robustness, they typically rely on uniform aggregation, evaluating all models for every record regardless of uncertainty. As a result, they inherit much of the latency and complexity of their most expensive components and do not fully resolve the trade-off between accuracy, efficiency, and interpretability [11].

A key observation motivating this work is that intrusion detection errors are not uniformly distributed across classes. In practice, a classifier trained on heterogeneous traffic is frequently distracted by competing benign and malicious patterns, leading to class-specific misclassifications near decision boundaries. Rather than increasing model depth or aggregating more learners to average out this ambiguity, we argue that a more effective strategy is structured decision validation: selectively re-examining only those predictions that are most likely to be erroneous, using specialized expertise tailored to the predicted class.

Based on this insight, we propose conditional counter-inspection, a lightweight two-stage intrusion detection architecture. An initial decision-tree classifier acts as a fast filter, providing a coarse prediction for each traffic record. This prediction is then validated conditionally: only experts biased toward the class opposite to the initial prediction are evaluated, and their outputs are combined through a unanimous-dissent rule. The initial classification is revised if and only if all counter-class experts disagree with it. This design enables targeted correction of difficult cases while preserving efficiency and interpretability.

Experiments conducted on the 5G-NIDD dataset in a binary benign/malicious setting demonstrate that the proposed architecture consistently outperforms both a standalone decision tree and flat voting over the same experts. Statistical and ablation analyses confirm that the observed gains arise from selective validation and the unanimous dissent mechanism rather than from expert aggregation or increased model complexity. At the same time, the proposed architecture operates with microsecond-level inference latency per record, a compact memory footprint, and fully auditable decision paths, making it suitable for practical and resource-constrained 5G intrusion detection deployments.

The main contributions of this work are summarized as follows:

We propose a conditional counter-inspection architecture that departs from flat voting and uniform ensemble aggregation. Instead of evaluating all component models for every traffic record, the proposed design performs routing-based inference, activating only a subset of class-biased expert trees conditioned on the initial prediction. This selective execution reduces unnecessary model evaluations while preserving corrective capability.

2. Noise-aware expert specialization through controlled training

We introduce a curriculum-biased training strategy that enables expert models to specialize by restricting exposure to non-target class samples. By controlling the input distribution during training, each expert focuses on class-specific decision regions, improving discrimination near class-ambiguous boundaries without increasing model depth or capacity.

3. Deterministic unanimous-dissent decision logic

We formalize a unanimous-dissent rule for conditional validation, under which the initial classification is revised only when all counter-class experts agree on the opposite label. This decision logic enforces conservative correction, avoids over-adjustment, and preserves full interpretability through deterministic decision paths.

4. Deployability-oriented evaluation and causal analysis

We provide a comprehensive evaluation on the 5G-NIDD dataset, including detection performance, robustness, efficiency, and routing cost measured as the number of tree traversals per record. Through ablation studies and paired statistical testing, we show that performance gains arise from routing-based selective validation and the unanimous-dissent decision logic, rather than from expert multiplicity or flat aggregation.

2. Materials and Methods

2.1. Overview of the Conditional Counter-Inspection Pipeline

This work proposes a lightweight intrusion detection architecture that augments a standard decision-tree classifier with a conditional counter-inspection layer. The pipeline is composed of two stages:

Initial filtering stage: a global decision tree, trained on the full training dataset, provides a fast and interpretable initial classification.

Conditional counter-inspection stage: a small set of class-biased decision-tree experts selectively validates the initial prediction. Only experts biased toward the class opposite to the initial decision are evaluated, and decision revision is governed by a unanimous-dissent rule.

Crucially, all models in the pipeline are standard CART decision trees trained with default settings and a fixed random seed. Performance gains arise solely from training data exposure and selective validation, not from architectural complexity or hyperparameter tuning. The overall conditional counter-inspection architecture is illustrated in Figure 1.

Lightweight Design Clarification

In this work, “lightweight” refers to three concrete properties:

All components are shallow decision trees, ensuring low model complexity.

Inference cost is strictly bounded: each record traverses at most four trees (one global model and up to three counter-class experts), compared to seven in flat voting.

The framework does not rely on boosting, deep neural networks, probabilistic gating, or calibration layers.

The routing mechanism activates only class-relevant experts, avoiding full ensemble evaluation while preserving corrective capacity.

2.2. Dataset and Experimental Preparation

2.2.1. Dataset Description

We evaluate the proposed architecture on the publicly available 5G-NIDD dataset [12], which was collected from an operational 5G testbed and contains realistic benign traffic alongside diverse attack scenarios. The dataset is widely used for intrusion detection research in modern mobile networks and provides a representative flow-level view of 5G traffic under both normal and adversarial conditions [13].

In this study, we operate on the released tabular representation of 5G-NIDD, which describes each network flow using a rich set of timing, size, loss, and protocol-related attributes.

In addition to 5G-NIDD, we evaluate the proposed architecture on the UNSW-NB15 benchmark, an intrusion detection dataset introduced by Moustafa and Slay [14]. UNSW-NB15 contains modern synthetic benign and malicious network traffic generated within a controlled cyber range environment. We use the official training and testing split provided by the authors to ensure reproducibility and comparability with prior studies.

2.2.2. Preprocessing

To ensure data integrity and compatibility with decision-tree models, the following preprocessing steps are applied:

Categorical encoding: All categorical features (Proto, sDSb, dDSb, Cause, State) are transformed using one-hot encoding.

Missing value handling: Missing entries are replaced with an explicit Unknown category to preserve records and avoid biased imputation.

Column filtering: Auxiliary metadata fields (Attack Type, Attack Tool) are removed to prevent label leakage, as their presence would implicitly disclose the class during training and evaluation. This filtering also reflects realistic deployment conditions.

Label mapping: The target variable is standardized to binary values: 0 (benign) and 1 (malicious).

Leakage prevention: Hash-based row checks and feature-level consistency tests are performed post-encoding to verify that no flow appears in both training and testing sets.

After preprocessing, the dataset is divided by stratified sampling into 80% training (972,712 flows) and 20% testing (243,178 flows). The test split remains strictly unseen until final evaluation.
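The hash-based row check described in the leakage-prevention step can be sketched as follows. This is a minimal illustration, assuming that each encoded record is available as a sequence of values; the helper names `row_digest` and `overlapping_rows` and the toy records are hypothetical and are not taken from the original implementation.

```python
import hashlib


def row_digest(row):
    """Return a stable SHA-256 digest of one encoded record (a sequence of values)."""
    # repr() keeps 1 and "1" distinct; the unit separator avoids ambiguous joins.
    payload = "\x1f".join(repr(value) for value in row)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def overlapping_rows(train_rows, test_rows):
    """Return the digests of records that occur in both splits."""
    train_digests = {row_digest(row) for row in train_rows}
    test_digests = {row_digest(row) for row in test_rows}
    return train_digests & test_digests


# Toy records (hypothetical values, not taken from 5G-NIDD).
train = [(0.12, 64, "tcp"), (0.50, 128, "udp")]
test_clean = [(0.33, 64, "tcp")]
test_leaky = [(0.50, 128, "udp")]

print(len(overlapping_rows(train, test_clean)))  # 0
print(len(overlapping_rows(train, test_leaky)))  # 1
```

An empty intersection indicates that no encoded record is shared between the two splits.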

2.2.3. Terminology and Unit of Prediction

Although the 5G-NIDD dataset originates from raw packet captures, all experiments in this work are conducted on the publicly released tabular CSV representation, in which each row corresponds to a single traffic record derived from flow-level aggregation. Accordingly, throughout this paper, the term record denotes one dataset row, and all latency and efficiency metrics are reported per record. The term packet is reserved exclusively for raw pcap-level analysis, which is outside the scope of this study.

2.3. First Layer: Global Decision Tree

The first layer of the pipeline is a global CART decision tree trained on the full training dataset without class bias, reweighting, or resampling. This model serves as a fast and interpretable initial filter, capturing general decision boundaries across both benign and malicious traffic.

Formally, the global model produces an initial prediction:

$$\hat{y}_0 = G(x) \in \{0, 1\},$$

where 0 denotes benign traffic and 1 denotes malicious traffic.

Although effective, a single global decision boundary may remain vulnerable to ambiguity near class boundaries due to exposure to heterogeneous traffic patterns. The second layer of the pipeline is specifically designed to selectively validate such borderline cases.

2.4. Second Layer: Counter-Inspection

2.4.1. Motivation: Specialization Through Controlled Data Exposure

Rather than increasing model complexity or introducing heterogeneous learners, the counter-inspection layer is constructed by training multiple identical decision-tree models on deliberately biased data subsets. The central idea is to control what each expert observes, not how it learns.

Each expert is trained to be class-dominant: it fully observes one class while being exposed only to a limited fraction of the opposite class. This deliberate imbalance induces distinct inductive biases among experts while preserving model simplicity, interpretability, and homogeneity.

All experts remain standard CART decision trees trained with identical settings; differences in behavior arise exclusively from differences in data exposure rather than from architectural choices or hyperparameter tuning.

2.4.2. Curriculum-Biased Training Set Construction

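Let $D_B$ and $D_M$ denote the benign and malicious subsets of the training data, respectively. For each fraction:

$$f \in \{0.10, 0.20, 0.30\},$$

we construct biased training sets as follows:

$$D_M^{(f)} = D_M \cup S_f(D_B), \qquad D_B^{(f)} = D_B \cup S_f(D_M),$$

where $S_f(\cdot)$ denotes a random subset containing a fraction $f$ of the records in its argument.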

Each training set preserves all records of the majority class while including only a fraction of the minority class. This ensures that each expert remains majority-dominant while being selectively exposed to counter-class patterns.

Smaller fractions yield narrow specialists, while larger fractions produce broader experts with increased contextual awareness.
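The construction above can be sketched in a few lines. This is an illustration under stated assumptions: sampling is taken to be uniform without replacement and seeded for reproducibility, and the function name `build_biased_set` and the toy record lists are hypothetical.

```python
import random


def build_biased_set(dominant, counter, fraction, seed=0):
    """Keep every dominant-class record plus a random `fraction` of the counter class."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must lie in (0, 1]")
    rng = random.Random(seed)  # assumption: fixed seed, sampling without replacement
    k = round(fraction * len(counter))
    return list(dominant) + rng.sample(list(counter), k)


# Toy stand-ins for the benign and malicious training subsets.
benign = [("b", i) for i in range(100)]
malicious = [("m", i) for i in range(200)]
fractions = (0.10, 0.20, 0.30)

# Malicious-biased sets keep all malicious records; benign-biased sets keep all benign ones.
malicious_biased = {f: build_biased_set(malicious, benign, f) for f in fractions}
benign_biased = {f: build_biased_set(benign, malicious, f) for f in fractions}

for f in fractions:
    print(f, len(malicious_biased[f]), len(benign_biased[f]))
```

Each resulting set would then be used to train one expert with the same CART settings as every other model in the pipeline.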

2.4.3. Expert Training

Using the biased datasets defined above, six experts are trained independently:

Malicious-biased experts: EM1, EM2, and EM3.

Benign-biased experts: EB1, EB2, and EB3.

All experts are trained using CART decision trees with identical hyperparameters. The class exposure ratios for all expert models are reported in Table 1.

Because all experts share the same learning algorithm and capacity, any observed specialization emerges solely from controlled data exposure, ensuring a transparent and interpretable design.

We adopt three experts per class, corresponding to the first three curriculum steps (0.10–0.30). A saturation analysis (Appendix A) shows that these steps capture the majority of performance gains, while additional experts yield diminishing returns or bias erosion at increased cost.

2.4.4. Expert Number and Curriculum Justification

The proposed architecture employs three malicious-biased experts and three benign-biased experts, corresponding to minority exposure fractions $f \in \{0.10, 0.20, 0.30\}$. This choice is informed by a depth analysis of standalone expert performance across increasing exposure levels (Appendix A).

Validation results show that increasing minority exposure from 0.10 to 0.20 yields a substantial improvement in F1-score and a marked reduction in false-positive rate for malicious-biased experts. However, gains beyond 0.30 exhibit clear saturation, with diminishing improvements in F1 and only marginal reductions in FPR. Similar behavior is observed for benign-biased experts: fraction 0.20 achieves peak F1 performance, while further increases introduce bias erosion, reflected by rising false-positive rates and stagnating F1.

The plateau analysis confirms that the first three curriculum steps capture the majority of specialization benefits, while extending exposure to 0.40 produces negligible or inconsistent gains relative to additional computational overhead.

Using three experts per class also ensures a minimal odd-number configuration compatible with the unanimous-dissent rule, preventing tie conditions while preserving bounded inference cost (maximum four tree traversals).

Therefore, the selection of six experts represents a principled trade-off between specialization depth, correction stability, and lightweight deployment constraints.

2.5. Full Pipeline: Conditional Counter-Inspection

2.5.1. Conditional Expert Activation

The global decision tree G(x) provides the initial prediction:

$$\hat{y}_0 = G(x) \in \{0, 1\},$$

where 0 denotes benign and 1 denotes malicious traffic.

Rather than querying all experts, counter-inspection is conditional:

If G(x) = 0, only malicious-biased experts are activated.

If G(x) = 1, only benign-biased experts are activated.

This design restricts computation to counter-class validation, focusing inference on class-specific uncertainty while avoiding unnecessary expert evaluation.

2.5.2. Counter-Inspection Semantics

Expert validation is performed exclusively by the set of counter-class experts associated with the initial prediction. Each expert provides an independent assessment of whether the initial decision should be reconsidered. The role of this layer is not to refine the decision progressively, but to validate or veto the initial classification through collective agreement. This formulation ensures that decision revision occurs only under strong and consistent counter-evidence.

2.5.3. Unanimous-Dissent Rule and Logical Formulation

Decision revision is governed by a unanimous-dissent rule. The global decision is revised if and only if all activated counter-class experts disagree with it.

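The final prediction $\hat{y}(x)$ is defined as:

$$\hat{y}(x) = \begin{cases} 1 - G(x), & \text{if } \bigwedge_{E \in \mathcal{E}(x)} \big[ E(x) \neq G(x) \big], \\ G(x), & \text{otherwise,} \end{cases}$$

where $\mathcal{E}(x)$ denotes the set of counter-class experts activated for $x$ (the malicious-biased experts if $G(x) = 0$, the benign-biased experts if $G(x) = 1$).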

This logical formulation directly captures the counter-inspection mechanism and ensures deterministic, conservative decision revision. The full step-by-step inference process is detailed in Algorithm 1.

Algorithm 1: Conditional counter-inspection inference procedure with unanimous-dissent decision rule.

Require: Feature vector $x$

Ensure: Final prediction $\hat{y}(x) \in \{0, 1\}$

1: Compute the initial prediction using the global decision tree: $\hat{y}_0 \leftarrow G(x)$

2: if $\hat{y}_0 = 0$ (initially classified as benign) then

3: for each malicious-biased expert $EM_k$, $k = 1, 2, 3$, do

4: if $EM_k(x) = 0$ then

5: return $\hat{y}(x) = 0$ // retain benign classification

6: end for (all malicious-biased experts disagree)

7: return $\hat{y}(x) = 1$ // unanimous dissent → revise to malicious

8: else ($\hat{y}_0 = 1$, initially classified as malicious)

9: for each benign-biased expert $EB_k$, $k = 1, 2, 3$, do

10: if $EB_k(x) = 1$ then

11: return $\hat{y}(x) = 1$ // retain malicious classification

12: end for (all benign-biased experts disagree)

13: return $\hat{y}(x) = 0$ // unanimous dissent → revise to benign

This design ensures that the initial decision is revised only under unanimous counter-class agreement, preventing over-correction while preserving precision gains and reducing unnecessary model evaluations.

Unlike classical ensemble methods, the proposed architecture does not aggregate predictions from multiple models. Instead, it performs conditional validation of a single global decision, where expert models act as veto mechanisms rather than contributors to a combined score. As a result, expert multiplicity alone does not guarantee performance gains, which arise specifically from selective routing and the unanimous-dissent decision rule.
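The inference procedure of Algorithm 1 can be sketched as a short function. This is a minimal illustration, assuming that every model is exposed as a callable returning 0 (benign) or 1 (malicious); the name `counter_inspect` and the constant toy models are hypothetical. The sketch also returns the number of trees evaluated, which reflects the early return in Algorithm 1.

```python
def counter_inspect(x, global_tree, malicious_experts, benign_experts):
    """Return (final_label, trees_evaluated) for one record x.

    Every model is a callable that maps a record to 0 (benign) or 1 (malicious).
    """
    initial = global_tree(x)
    # Conditional activation: only the counter-class experts are consulted.
    experts = malicious_experts if initial == 0 else benign_experts
    if not experts:
        raise ValueError("at least one counter-class expert is required")
    evaluated = 1  # the global tree
    for expert in experts:
        evaluated += 1
        if expert(x) == initial:
            # One counter-class expert agrees, so dissent is not unanimous.
            return initial, evaluated
    # Every counter-class expert disagreed: revise the label.
    return 1 - initial, evaluated


# Constant toy models standing in for trained trees.
def says_benign(x):
    return 0


def says_malicious(x):
    return 1


# Unanimous dissent: the benign prediction is revised after 1 + 3 evaluations.
print(counter_inspect(None, says_benign, [says_malicious] * 3, [says_benign] * 3))  # (1, 4)

# The second expert agrees with the global tree, so the prediction is retained.
mixed = [says_malicious, says_benign, says_malicious]
print(counter_inspect(None, says_benign, mixed, [says_benign] * 3))  # (0, 3)
```

With three experts per class, the loop never evaluates more than four trees per record, which matches the bound stated for the architecture.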

2.6. Distinction from Cascaded and Gated Expert Architectures

While the proposed architecture shares superficial similarities with cascaded classifiers and gated expert systems, it differs fundamentally in its objective and decision logic. Traditional cascades are designed to progressively increase model complexity, where early stages reject easy samples to reduce computational load in subsequent stages. Their primary goal is computational efficiency through hierarchical filtering. In contrast, our architecture does not escalate complexity across stages; it maintains homogeneous shallow trees and introduces a corrective validation layer that targets class-specific errors of a single primary model.

Similarly, classical ensemble and mixture-of-experts models aggregate multiple predictors to improve average generalization performance. In such systems, all models typically contribute symmetrically through weighted voting, probability averaging, or learned gating networks that dynamically assign responsibility. These frameworks optimize predictive diversity and collective strength. By contrast, the proposed method does not perform prediction aggregation. The global tree remains the sole predictive authority, and expert models are activated only to validate or challenge its output. Their role is strictly corrective rather than contributive.

Moreover, veto-based or confidence-driven systems often rely on probabilistic thresholds or meta-learned gating mechanisms to override predictions. In contrast, our framework introduces a deterministic unanimous-dissent rule: a label is modified only when all activated experts contradict the global decision. This eliminates probabilistic arbitration and avoids additional calibration or meta-learning layers.

Expert specialization is also constructed differently. Rather than using bootstrap resampling, random feature subspaces, or architectural heterogeneity to induce diversity, specialization is achieved through controlled minority exposure during training. This curriculum-biased mechanism shapes experts into targeted detectors of class-specific misclassifications rather than alternative global classifiers.

Therefore, the methodological novelty lies not in the presence of multiple trees per se, but in the integration of (i) role separation between prediction and validation, (ii) selective activation of expert subsets rather than full ensemble aggregation, (iii) curriculum-induced specialization, and (iv) deterministic unanimous correction under bounded inference traversal. This combination distinguishes the proposed framework from classical ensemble aggregation, cascade filtering, and probabilistic gating architectures.

2.7. Multi-Class Scalability

Although experiments in this work focus on binary intrusion detection, the conditional counter-inspection mechanism extends directly to multi-class settings.

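Let $G$ be a primary classifier such that:

$$G(x) \in \{1, \dots, K\},$$

where $K$ denotes the number of classes.

For each class $c \in \{1, \dots, K\}$, we define a class-specific expert group:

$$\mathcal{E}_c = \{E_{c,1}, \dots, E_{c,m}\}.$$

Each expert is trained using controlled exposure so that class $c$ remains dominant in its training distribution, while other classes are included in limited proportions. This induces class-specific specialization without architectural modification.

During inference, once the global classifier produces a prediction $\hat{c} = G(x)$, only the experts in $\mathcal{E}_{\hat{c}}$ are activated. We define a unanimous-dissent condition as:

$$D(x) = \bigwedge_{E \in \mathcal{E}_{\hat{c}}} \big[ E(x) \neq \hat{c} \big].$$

The final prediction is then defined as:

$$\hat{y}(x) = \begin{cases} \operatorname{mode}\{E(x) : E \in \mathcal{E}_{\hat{c}}\}, & \text{if } D(x), \\ \hat{c}, & \text{otherwise.} \end{cases}$$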

That is, the global decision is retained unless all activated experts unanimously contradict it. When unanimous dissent occurs, the label is reassigned to the most frequently predicted alternative class among the activated experts.

This extension preserves the same structural principles as the binary case:

A single primary predictor;

Selective activation of only class-relevant experts;

Deterministic correction under unanimous dissent;

Bounded inference traversal.

A full empirical evaluation of the multi-class extension is left for future work.
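The multi-class rule described above can be sketched as follows. This is an illustration only, under several assumptions: expert groups are stored in a dictionary keyed by the predicted class, every model is a callable returning a class label, and ties among alternative labels are resolved in favor of the label that appears first (the text does not specify a tie-breaking rule). The name `counter_inspect_multiclass` is hypothetical.

```python
from collections import Counter


def counter_inspect_multiclass(x, primary, expert_groups):
    """Retain the primary prediction unless all activated experts contradict it."""
    predicted = primary(x)
    # Only the expert group associated with the predicted class is activated.
    votes = [expert(x) for expert in expert_groups[predicted]]
    if not votes or any(vote == predicted for vote in votes):
        return predicted  # dissent is not unanimous
    # Unanimous dissent: reassign to the most frequent alternative label.
    # Assumption: on a tie, Counter keeps the label that was seen first.
    return Counter(votes).most_common(1)[0][0]


def constant(label):
    """Build a toy model that always predicts `label`."""
    return lambda x: label


primary = constant(0)

dissenting = {0: [constant(2), constant(1), constant(2)]}
print(counter_inspect_multiclass(None, primary, dissenting))  # 2

partial = {0: [constant(2), constant(0), constant(2)]}
print(counter_inspect_multiclass(None, primary, partial))  # 0
```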

3. Results

This section is structured to progressively evaluate the proposed framework. Section 3.1 isolates the added value of the conditional counter-inspection layer by analyzing how it augments the behavior of the base decision-tree model in terms of accuracy, error correction, efficiency, interpretability, and robustness. Building on this analysis, Section 3.2 evaluates the complete two-layer pipeline and positions its performance and deployability relative to state-of-the-art intrusion detection methods on the 5G-NIDD dataset.

3.1. Added Value of Conditional Counter-Inspection

3.1.1. Incremental Enhancement: Impact of Conditional Counter-Inspection

We first evaluate the decision-tree model that constitutes the base layer of the proposed pipeline, trained on the full training set. This global decision tree serves as a transparent reference point for all subsequent comparisons and enables a direct assessment of the incremental improvements introduced by conditional counter-inspection. As reported in Table 2, the standalone tree already achieves strong detection performance on the 5G-NIDD test set, with an F1-score of 0.99966. Nevertheless, it still produces non-negligible false negatives and false positives, which are critical in intrusion detection settings.

We then assess whether augmenting this base model with the conditional counter-inspection layer improves detection quality without modifying or retraining the global tree. Table 3 reports the resulting performance comparison, while Table 4 details the corresponding confusion-matrix statistics. The proposed pipeline achieves an F1-score of 0.99981, reflecting consistent improvements across all metrics. In particular, false negatives are reduced from 51 to 27 (−47.1%), and false positives from 48 to 30 (−37.5%). These gains indicate that targeted counter-inspection effectively corrects class-specific errors made by the global tree, reducing both missed intrusions and false alarms without altering the base model.

From an operational perspective, these improvements are significant. Reducing false negatives directly limits undetected attacks, while reducing false positives alleviates analyst workload and alert fatigue. Importantly, these benefits are achieved through selective validation rather than through retraining, tuning, or increasing the complexity of the global model.

3.1.2. Cost-Sensitive Evaluation

In intrusion detection systems, error types do not carry equal consequences. False negatives (missed attacks) typically incur significantly higher operational risk than false positives. To reflect this asymmetry, we evaluate the global model (G) and the proposed conditional counter-inspection (CS) architecture under a cost-sensitive formulation:

$$\text{Risk}(\lambda) = \mathrm{FP} + \lambda \cdot \mathrm{FN},$$

where $\lambda$ represents the penalty assigned to false negatives.

Table 5 reports the results for several values of $\lambda$. The proposed architecture consistently reduces the total cost-weighted risk across all penalty levels.

Key observations:

At $\lambda = 20$, risk decreases from 1068 (G) to 570 (CS).

At $\lambda = 50$, risk decreases from 2598 (G) to 1380 (CS).

The relative reduction in total risk remains close to 50% across all tested penalty factors (1068 to 570 is a reduction of about 46.6%, and 2598 to 1380 of about 46.9%), indicating stability of the correction mechanism under increasingly severe attack cost assumptions.

These results confirm that the proposed validation framework improves not only aggregate metrics but also operationally meaningful cost-sensitive risk, reinforcing its suitability for real-world 5G intrusion detection environments.
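The reported risk values can be reproduced from the confusion-matrix counts given in Section 3.1.1. The sketch below assumes the cost takes the form FP + λ·FN, with λ = 20 and λ = 50; this form and these penalty values are inferred from the fact that they reproduce the reported figures exactly. The function name `weighted_risk` is hypothetical.

```python
def weighted_risk(false_positives, false_negatives, penalty):
    """Cost-weighted risk: each false negative costs `penalty` false positives."""
    return false_positives + penalty * false_negatives


# Error counts reported for the global tree (G) and the proposed pipeline (CS).
G = {"fp": 48, "fn": 51}
CS = {"fp": 30, "fn": 27}

for penalty in (20, 50):
    risk_g = weighted_risk(G["fp"], G["fn"], penalty)
    risk_cs = weighted_risk(CS["fp"], CS["fn"], penalty)
    reduction = round(100 * (risk_g - risk_cs) / risk_g, 1)
    print(penalty, risk_g, risk_cs, reduction)
# 20 1068 570 46.6
# 50 2598 1380 46.9
```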

3.1.3. Ablation Analysis: Source of Performance Gains

To identify the mechanisms responsible for the observed performance improvements, we conduct two controlled ablation studies. The first isolates the effect of conditional routing, while the second evaluates the impact of the decision revision rule, independently of routing and model composition.

Ablation A—Does conditional routing matter beyond flat voting?

Objective: This ablation examines whether the observed performance gains arise from conditional routing itself, rather than from the presence of multiple expert models.

Compared configurations: All configurations rely on the same CART models and training data; only the inference strategy differs:

G (Global tree): a single decision tree trained on the full dataset (1 tree per record).

FV (Flat voting): uniform aggregation of all models, where every record is evaluated by the global tree and all six experts (7 trees per record).

Proposed pipeline: a conditional counter-inspection strategy in which the global prediction is validated only by counter-class experts, and revised under unanimous dissent (4 trees per record on average, −42.86% routing cost).

Results and statistical significance: Table 6 reports paired McNemar tests comparing error rates between configurations on the test set (N = 243,178).

Key observations.

The proposed pipeline significantly improves over the global tree ($p = 1.35 \times 10^{-6}$) and over flat voting ($p = 2.06 \times 10^{-6}$).

Flat voting does not yield a statistically significant improvement over the global tree (p = 1.0), despite evaluating all experts.

Because flat voting and the proposed pipeline reuse exactly the same expert models, these results isolate conditional routing as the primary source of improvement, rather than expert multiplicity.

Deployability implication: Flat voting incurs the full ensemble cost (7 trees per record) without measurable benefit, whereas the proposed pipeline achieves higher accuracy with fewer evaluations (4 trees/record), making conditional routing both more effective and more efficient.
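For readers unfamiliar with the paired test used above, the following sketch computes a McNemar p-value from the two discordant counts, that is, the records that one configuration classifies correctly and the other does not. It assumes the exact (binomial) two-sided variant; the text does not state which variant was used. The function name `mcnemar_exact` and the example counts are hypothetical and are not taken from Table 6.

```python
from math import comb


def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value from the two discordant counts b and c."""
    n = b + c
    if n == 0:
        return 1.0  # the two configurations never disagree
    k = min(b, c)
    # Under the null hypothesis, each discordant record falls on either side
    # with probability 0.5, so the smaller count follows Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical discordant counts, for illustration only.
print(mcnemar_exact(5, 5))   # balanced disagreements: no evidence of a difference
print(mcnemar_exact(0, 10))  # one-sided disagreements: small p-value
```

Only the discordant records enter the test, which is why two configurations with nearly identical accuracy can still differ significantly when their errors fall on different records.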

Ablation B—Does the unanimous-dissent flip rule matter?

Objective: This ablation isolates the impact of the decision revision rule, independently of routing or model composition.

Variant definition (fixed routing, different dissent thresholds): All variants follow identical conditional routing: the global prediction is computed first, and only counter-class experts are evaluated. They differ only in the rule used to revise the decision:

Any-dissent rule: flip the global decision if at least one counter-class expert disagrees (highly permissive).

Majority-dissent rule: flip if at least two out of three counter-class experts disagree (moderately permissive).

Unanimous-dissent rule (proposed): flip only if all counter-class experts disagree (conservative).

This controlled setup eliminates confounding factors related to routing, training data, or model capacity.

Performance comparison: Table 7 summarizes accuracy and F1-score for each variant.

Key observations:

The unanimous-dissent rule achieves the strongest overall performance (Accuracy = 0.99977, F1-score = 0.99981).

The majority-dissent rule performs slightly worse but remains competitive (Accuracy = 0.99973, F1-score = 0.99978).

The any-dissent rule degrades performance below that of the global tree (Accuracy = 0.99954, F1-score = 0.99962), indicating excessive and erroneous decision reversals.

In addition to performance metrics, paired McNemar tests were conducted to statistically validate the effect of the decision revision rule under fixed routing and identical trained components. The unanimous-dissent rule significantly outperforms the any-dissent variant ($p = 2.0 \times 10^{-8}$), yielding marked reductions in both false-positive and false-negative rates, while the difference between unanimous and majority dissent is not statistically significant ($p = 0.1698$). Full statistical results are reported in Appendix B.
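The three revision rules compared in this ablation differ only in the number of dissenting counter-class experts required to flip the initial label. A minimal sketch, assuming binary labels and three counter-class votes per record; the names `revise` and `RULES` are hypothetical.

```python
def revise(initial, expert_votes, min_dissent):
    """Flip a binary label when at least `min_dissent` experts disagree with it."""
    dissent = sum(1 for vote in expert_votes if vote != initial)
    return 1 - initial if dissent >= min_dissent else initial


# Dissent thresholds for three counter-class experts.
RULES = {"any": 1, "majority": 2, "unanimous": 3}

# Example: the global tree predicts benign (0) and two of three experts disagree.
votes = [1, 0, 1]
for name, threshold in RULES.items():
    print(name, revise(0, votes, threshold))
# any 1
# majority 1
# unanimous 0
```

In this example only the unanimous rule retains the initial prediction, which illustrates why it is the most conservative of the three variants.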

3.1.4. Efficiency and Footprint

To verify that the observed accuracy gains do not incur proportional computational overhead, we evaluate inference latency and model footprint. As reported in Table 8, the proposed pipeline operates in the microsecond inference regime (≈1–2 µs per record under CPU-only execution), while substantially reducing the number of model evaluations compared to flat voting. Inference latency was measured using best-of-n per-record timing on a single CPU environment and should be interpreted as indicative rather than absolute.

Specifically, conditional counter-inspection evaluates, on average, four decision trees per record, whereas flat voting requires seven trees per record, corresponding to a 42.86% reduction in routing cost (measured as the number of tree traversals per record). This reduction is achieved without sacrificing detection performance, confirming that efficiency gains stem from selective model activation rather than architectural simplification.
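The reported reduction follows directly from the two traversal counts, taking four traversals per record for the proposed pipeline and seven for flat voting:

$$\frac{7 - 4}{7} = \frac{3}{7} \approx 0.4286 = 42.86\%.$$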

In addition, the complete serialized pipeline—including the global tree and all six expert models—remains compact, with a total size of approximately 201 kB. This footprint corresponds to Python pickle serialization of scikit-learn CART models and provides a practical estimate of deployable memory usage. The combination of CPU-only inference, compact model size, and the absence of complex pre-processing supports deployment in resource-constrained 5G environments, such as edge nodes or embedded monitoring systems.

3.1.5. Interpretability and Auditability of the Full Pipeline

Because all learners in the proposed architecture are homogeneous CART decision trees, interpretability is preserved at two complementary levels: (i) model-level transparency for the global tree and each class-biased expert, and (ii) pipeline-level auditability enabled by explicit conditional routing and the unanimous-dissent rule.

(i) Model-level specialization (Global vs. experts): To verify that the six class-biased experts are not redundant copies of the global tree, we report impurity-based feature importances for each model.

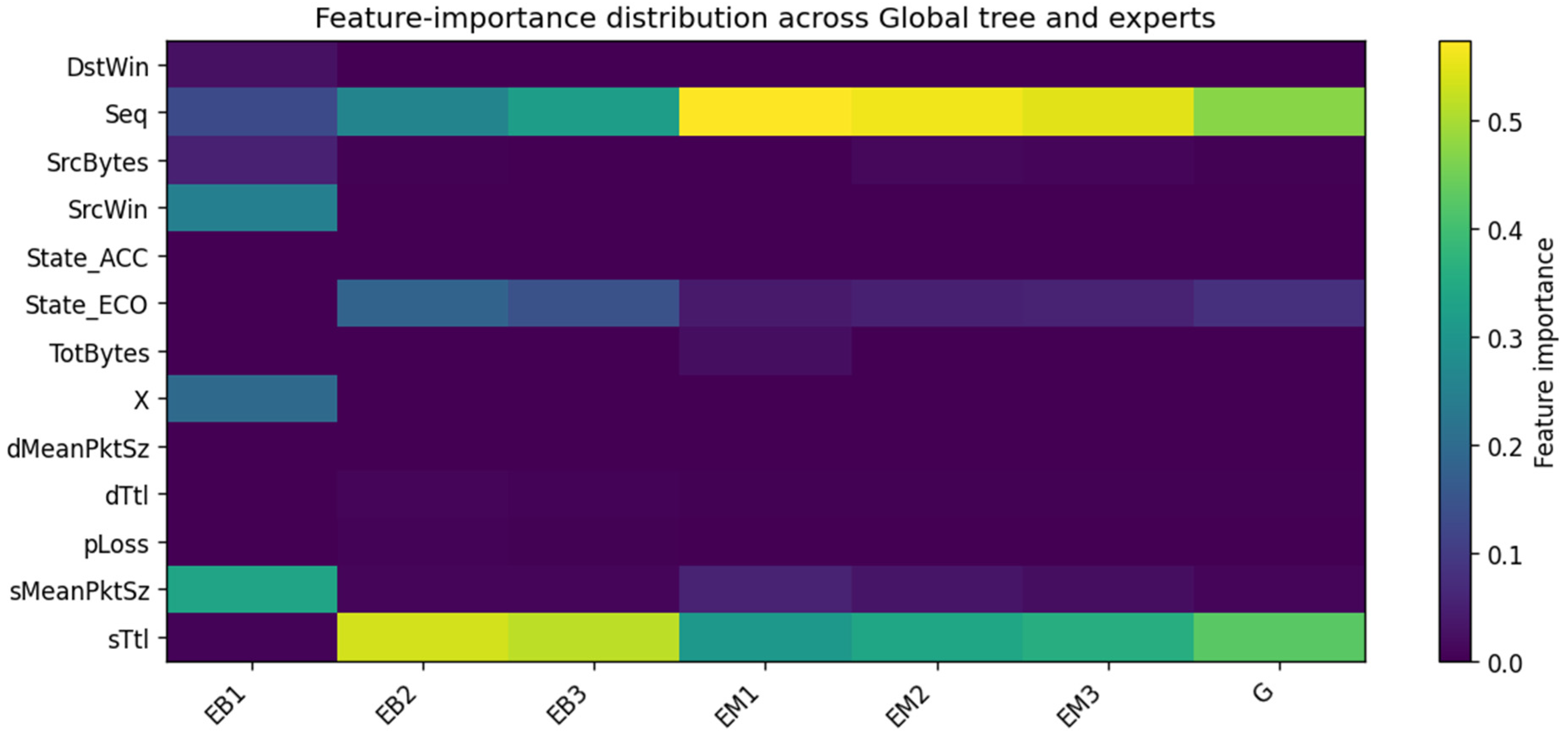

Table 9 summarizes the top-8 ranked features for the global model (G) and the experts (EM1–EM3: malicious-biased experts; EB1–EB3: benign-biased experts). The global tree is dominated by Seq and sTtl, whereas experts exhibit distinct emphasis patterns. For instance, malicious-biased experts (EM*) remain strongly driven by Seq/sTtl, while benign-biased experts (EB*) elevate other discriminative signals (e.g., sMeanPktSz, SrcWin, and occasionally X). This diversity supports the intended effect of class bias: despite identical hyperparameters, experts exhibit data-induced specialization rather than acting as redundant replicas of the global tree.

For clarity, we focus on the top-ranked features, as lower-ranked attributes contribute marginally to the decision process. Overall, the pipeline’s discriminative power is primarily driven by Seq and sTtl across the global tree and malicious experts, while benign experts exhibit complementary reliance on size-related features (e.g., sMeanPktSz, SrcWin), supporting the intended specialization effect. The distribution of feature importance across the global tree and the class-biased experts is further visualized in Figure 2, which highlights both shared dominant predictors and specialization patterns induced by biased training exposure.

Quantitative Overlap Analysis

To complement the qualitative feature-importance inspection, we quantify structural similarity between the global tree and each expert using Top-k feature overlap and Jaccard similarity (k = 8).

Overlap values range from 62.5% to 100%, with Jaccard coefficients between 0.45 and 1.00. These results indicate that while experts remain partially aligned with the global model—preserving core discriminative signals—they also exhibit measurable structural divergence induced by controlled minority exposure.
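The two measures can be computed from the top-k feature lists alone. The sketch below uses hypothetical feature lists (only Seq and sTtl are names from the text; the others are placeholders) in which five of eight features are shared, which corresponds to the lower end of the reported range.

```python
def top_k_overlap(global_top, expert_top):
    """Fraction of the global tree's top-k features also in the expert's top-k."""
    shared = set(global_top) & set(expert_top)
    return len(shared) / len(global_top)


def jaccard(global_top, expert_top):
    """Size of the intersection divided by the size of the union."""
    a, b = set(global_top), set(expert_top)
    return len(a & b) / len(a | b)


# Hypothetical top-8 lists: five shared features, three differing ones.
g_top = ["Seq", "sTtl", "f3", "f4", "f5", "f6", "f7", "f8"]
e_top = ["Seq", "sTtl", "f3", "f4", "f5", "g1", "g2", "g3"]

overlap = top_k_overlap(g_top, e_top)  # 5 / 8
similarity = jaccard(g_top, e_top)     # 5 / 11
```

With k = 8, five shared features give an overlap of 5/8 = 62.5% and a Jaccard coefficient of 5/11 ≈ 0.45, while identical lists give 100% and 1.00.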

This quantitative analysis confirms that specialization is neither arbitrary nor enforced through architectural heterogeneity; rather, it emerges naturally from curriculum-biased training while maintaining alignment with the global decision structure.

Controlled Disagreement Analysis

To characterize the behavior of the counter-inspection mechanism, we analyze routing-aware disagreement between the global model and the experts. Unanimous counter-class dissent occurs in only 0.0296% of test instances, with disagreement entropy equal to 0.009 bits.

Although disagreement is rare, these events correspond precisely to the subset of instances where correction is necessary. The resulting selective reversals reduce both false positives and false negatives, as demonstrated in Section 3.1.1 and Section 3.1.2.

The low entropy indicates that routing behavior is highly deterministic rather than unstable. Thus, the expert layer does not introduce ensemble noise; instead, it performs sparse, high-precision corrections on ambiguous boundary cases.

(ii) Pipeline-level auditability (end-to-end routing trace): Beyond per-model transparency, our pipeline is auditable at inference time because routing is deterministic: the global tree predicts first, then only counter-class experts are consulted, and the decision flips only under unanimous dissent.

Table 10 illustrates a representative test record where our pipeline corrects the global prediction. The trace explicitly records: (1) the global prediction, (2) which experts were triggered by conditional routing, (3) their predictions, and (4) whether unanimous dissent was activated. This provides a concrete, record-level explanation of why the final decision differs from the global model.
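A routing trace of this kind could be produced as in the sketch below. It assumes labels 0 = benign and 1 = malicious, and that a benign global prediction triggers the malicious-biased experts (and vice versa). The function `counter_inspect`, the trace field names, and the threshold stubs standing in for trained trees are hypothetical.

```python
def counter_inspect(record, global_model, malicious_experts, benign_experts):
    """Classify one record and return (final_label, trace).

    Each model is a callable mapping a record to a label; in practice
    these would wrap trained decision trees.
    """
    g = global_model(record)
    # Consult only experts biased toward the class the global tree did NOT predict.
    experts = malicious_experts if g == 0 else benign_experts
    votes = {name: model(record) for name, model in experts.items()}
    unanimous = bool(votes) and all(v != g for v in votes.values())
    final = 1 - g if unanimous else g
    trace = {
        "global_prediction": g,
        "experts_consulted": list(votes),
        "expert_predictions": votes,
        "unanimous_dissent": unanimous,
        "final_prediction": final,
    }
    return final, trace


def threshold_model(threshold):
    """Stub classifier: malicious if the record's score exceeds the threshold."""
    return lambda record: int(record["score"] > threshold)


global_model = threshold_model(0.8)
em = {"EM1": threshold_model(0.5), "EM2": threshold_model(0.6), "EM3": threshold_model(0.7)}
eb = {"EB1": threshold_model(0.85), "EB2": threshold_model(0.9), "EB3": threshold_model(0.95)}

final, trace = counter_inspect({"score": 0.75}, global_model, em, eb)
```

Here the global stub predicts benign, all three malicious-biased stubs dissent, and the decision is reversed; the trace records each of the four items listed above.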

We emphasize that the reported feature importances are impurity-based and therefore descriptive rather than causal; however, when combined with explicit decision paths and the routing trace, they provide a practical and lightweight explanation mechanism suitable for IDS auditing in latency-constrained environments.

3.1.6. Robustness Across Data Splits

To assess the stability of the proposed pipeline under data re-partitioning, we evaluate the full architecture across five independent stratified 80/20 splits (random seeds: 11, 22, 33, 44, 55) while keeping the methodology unchanged, including the expert fractions (0.1/0.2/0.3). Results are reported for the global tree (G), flat voting (FV), and the proposed pipeline.
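The re-partitioning protocol can be illustrated with a standard-library sketch of a stratified 80/20 split repeated over the five seeds. The authors' actual splitting routine is not shown in the text, so `stratified_split` is a stand-in, and the toy label proportions are illustrative rather than those of 5G-NIDD.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) preserving class proportions."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return train_idx, test_idx


# Toy labels: 700 malicious (1) and 300 benign (0).
labels = [1] * 700 + [0] * 300
splits = {seed: stratified_split(labels, 0.2, seed) for seed in (11, 22, 33, 44, 55)}
```

Each seed yields a different partition with the same class ratio in the train and test portions, so differences across splits reflect sampling variation rather than shifts in class balance.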

Error-rate stability (FNR/FPR):

Figure 3a,b report the false negative rate (FNR) and false positive rate (FPR) across splits. The proposed pipeline yields consistently lower FNR (fewer missed attacks) and generally lower FPR (fewer false alarms), showing that the observed performance gains translate into operationally meaningful error reductions.

Compact stability summary:

Figure 3c summarizes robustness using the mean ± standard deviation of F1 across splits. The proposed pipeline achieves the highest mean F1 while maintaining low variance, supporting stable gains under data re-partitioning.

F1-score stability:

Figure 3d reports the F1-score across the five splits. The proposed pipeline consistently achieves the highest F1-score, indicating that the improvement is reproducible and not driven by a particular partition.

Robustness Under Attack Prevalence Shift

To evaluate stability under varying class distributions, we simulate different malicious prevalence levels ranging from 50% down to 0.1%, while preserving the same trained models. Results show that the proposed pipeline consistently maintains strong recall (up to 1.000 at extreme imbalance) while reducing false positives relative to the global tree.

At 0.1% prevalence (0.001), the pipeline achieves perfect recall (FNR = 0) and improves precision from 0.6667 (G) to 0.7619, demonstrating better discrimination under extreme rarity. Across all tested prevalence levels, the proposed pipeline preserves or improves F1-score and maintains a lower FPR (0.000314 vs. 0.000502 for G).

These results confirm that the correction mechanism remains stable under severe class imbalance, a critical requirement in operational IDS environments where attack traffic is typically rare.
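The link between FPR and precision at low prevalence can be made explicit with the expected-precision formula, precision = πR / (πR + (1 − π)·FPR), where π is the malicious prevalence and R is recall. The sketch below plugs in the reported FPR values and assumes recall of 1.0 for both models (stated for the pipeline only, so this is an assumption for G). It yields values close to the reported precisions; small differences are expected because the reported figures come from finite sample counts.

```python
def precision_at_prevalence(prevalence, recall, fpr):
    """Expected precision when a fraction `prevalence` of traffic is malicious."""
    true_pos = prevalence * recall
    false_pos = (1.0 - prevalence) * fpr
    return true_pos / (true_pos + false_pos)


# Reported FPR values at 0.1% prevalence; recall is assumed to be 1.0 for both.
p_global = precision_at_prevalence(0.001, recall=1.0, fpr=0.000502)
p_pipeline = precision_at_prevalence(0.001, recall=1.0, fpr=0.000314)
```

At this prevalence benign records outnumber malicious ones by roughly 1000 to 1, so even a small absolute drop in FPR translates into a large gain in precision.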

3.2. Benchmarking on 5G-NIDD: Performance and Deployability

3.2.1. Classical Benchmarking Against ML Baselines (Closest Experimental Alignment)

We first position the proposed pipeline against classical machine-learning baselines reported under a closely aligned supervised, tabular IDS setting on 5G-NIDD. To reflect deployment practicality, we additionally report serialized model size when it is explicitly disclosed in the source study [15].

Table 11 summarizes representative ML baselines and ensembles together with their reported model sizes (when available), and includes the proposed pipeline as a reference point.

All reported results correspond to binary benign/malicious classification on 5G-NIDD, as stated in the original study [15]; differences in preprocessing or optimization strategies may exist, and results are included to contextualize accuracy–footprint trade-offs under the same dataset family, rather than to claim strict experimental equivalence.

Beyond near-ceiling predictive quality, the proposed pipeline avoids the typical footprint cost associated with ensemble methods. Performance gains are achieved through structured specialization and conditional routing rather than always-on aggregation. Based on the serialized sizes reported in Table 11, the proposed pipeline (≈201 kB) is approximately 187× smaller than LightGBM, 229× smaller than Random Forest, and over 2100× smaller than KNN, while operating in the same high-accuracy regime.

3.2.2. Deployability-Oriented Benchmarking Against Deep Learning Systems (Cost at Similar Reported Accuracy)

Deep learning IDS studies are often not strictly like-for-like with tabular ML pipelines because they frequently change representation, optimize different objectives (binary vs. multi-class or attack-specific detection), and make stronger hardware assumptions. Rather than forcing strict experimental equivalence, we adopt a reported-value, deployment-driven comparison that asks a narrower question: among studies reporting strong detection performance and explicitly disclosing inference time, what is the reported inference-cost regime?

In 5G monitoring pipelines, inference latency directly constrains whether detection can be performed inline, near-inline, or only offline; therefore, order-of-magnitude differences between microsecond- and millisecond-scale inference are operationally decisive, even at similar accuracy levels.

Accordingly, Table 12 lists recent deep IDS models that report both performance and inference time, alongside the proposed pipeline.

Figure 4 visualizes the latency regime gap (µs vs. ms) using only disclosed values.

These visuals do not claim strict parity across methodologies; instead, they show that the proposed pipeline operates in the microsecond-per-record regime while maintaining near-ceiling detection quality, whereas the reported deep models lie in the millisecond regime under their respective setups. This cost-centric view directly supports suitability for low-latency, high-throughput 5G monitoring.

Using only reported values, the proposed pipeline sits in the microsecond inference regime, while the cited deep models that disclose inference time typically fall in the 0.06–2.78 ms range per record, corresponding to an approximately 10²–10³× slower inference regime, even when their predictive quality is strong.

This supports the claim that our architecture delivers high accuracy at a deployment-friendly latency scale suitable for low-latency, high-throughput 5G monitoring, without requiring representational transformations or deep inference stacks.

Together, these two benchmarking tiers show that the proposed pipeline attains state-of-the-art detection quality within a uniquely lightweight and low-latency operating regime, motivating the design analysis presented next.

3.3. Cross-Dataset Validation on UNSW-NB15

To assess the robustness of the proposed conditional counter-inspection architecture beyond 5G-NIDD, we conducted additional experiments on the UNSW-NB15 benchmark using its official train/test split.

UNSW-NB15 presents a substantially more heterogeneous traffic distribution than 5G-NIDD, with broader feature variability and a more challenging class structure. On this dataset, the standalone global decision tree (G) achieved an accuracy of 89.18%, with a false positive rate (FPR) of 3.70% and a false negative rate (FNR) of 14.16%.

When the conditional counter-inspection layer was applied, the overall accuracy improved to 89.62%. More importantly, the mechanism produced simultaneous reductions in both major error types:

False positives decreased from 2074 to 1515 (−559 instances, corresponding to a 27.0% relative reduction).

False negatives decreased from 16,904 to 16,688 (−216 instances).

FPR decreased from 3.70% to 2.71%.

FNR decreased from 14.16% to 13.98%.
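The absolute and relative reductions listed above can be checked directly from the reported counts. In the sketch below only the four counts come from the text; the helper `relative_reduction` is illustrative, and the relative false-negative reduction is derived here rather than reported.

```python
def relative_reduction(before, after):
    """Fraction by which a count decreased relative to its starting value."""
    return (before - after) / before


fp_before, fp_after = 2074, 1515
fn_before, fn_after = 16904, 16688

fp_drop = fp_before - fp_after
fn_drop = fn_before - fn_after
fp_rel = relative_reduction(fp_before, fp_after)
fn_rel = relative_reduction(fn_before, fn_after)
```

The false-positive reduction comes to about 27% of the original count, whereas the false-negative reduction is roughly 1.3%, so the counter-inspection layer on UNSW-NB15 acts mainly on false alarms.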

The reduction in false positives is particularly significant for operational intrusion detection systems, where excessive alerts can overwhelm analysts and degrade response efficiency. In large-scale 5G deployments processing millions of flows daily, a relative reduction of approximately 27% in false positives translates into a substantial decrease in unnecessary investigations. Simultaneously, reducing false negatives enhances protection against undetected malicious activity.

These findings confirm that the proposed conditional validation mechanism remains effective under heterogeneous traffic conditions and does not depend on dataset-specific separability properties. While this study focused on interpretable decision-tree models to preserve simplicity and bounded inference cost, further gains may be achievable by exploring alternative lightweight base classifiers within the same counter-inspection framework in future work.

4. Discussion

This study addresses a recurring limitation in learning-based intrusion detection for 5G networks: the structural trade-off between detection accuracy and operational efficiency. While prior work has predominantly pursued higher accuracy through increased model complexity—via deeper architectures or larger ensembles—the results presented here demonstrate that substantial performance gains can instead be achieved through architectural selectivity.

The proposed conditional counter-inspection architecture builds on the observation that misclassifications in intrusion detection are often class-specific and concentrated near ambiguous decision regions. Rather than relying on a single global model or uniformly aggregating multiple experts to resolve this ambiguity, the proposed approach introduces selective validation, whereby only experts relevant to the initial prediction are consulted. This design departs from conventional ensemble strategies that evaluate all models for every instance and therefore incur unnecessary computational cost.

Experimental results on the 5G-NIDD dataset confirm that this selective validation strategy yields meaningful improvements. Compared to a strong baseline decision tree trained on the full dataset, the proposed architecture simultaneously reduces both false positives and false negatives—a result that is rarely achieved through simple post-processing or threshold tuning. Importantly, ablation experiments show that these gains do not arise from the mere presence of additional experts. Flat voting over the same expert models fails to improve performance, whereas conditional counter-inspection combined with a unanimous-dissent decision rule yields statistically significant error reduction. This indicates that decision logic and selective activation, rather than ensemble size, are the primary drivers of improvement.

From a deployment perspective, the proposed architecture preserves the advantages traditionally associated with classical machine learning models. Inference remains in the microsecond regime per record, model size remains on the order of hundreds of kilobytes, and training time is measured in seconds rather than minutes or hours. These properties contrast with many deep learning–based intrusion detection pipelines, which often require complex preprocessing, GPU acceleration, and substantially larger memory footprints. While such models may offer advantages for fine-grained or multi-class analysis, their operational cost can limit applicability in latency-sensitive or resource-constrained 5G environments.

Interpretability is another important strength of the proposed approach. Because all components are decision trees, each prediction can be traced through explicit decision paths, both at the initial classification stage and within the counter-inspection layer. This transparency is particularly valuable in security-critical settings, where auditability and explainability are often as important as raw detection performance.

Finally, although the present study focuses on binary benign/malicious detection using tabular flow-level records, the architectural principles introduced here are not inherently limited to this setting. Conditional routing, class-biased specialization, and unanimous-dissent decision rules could be extended to multi-class detection, alternative data representations, or hybrid pipelines combining lightweight models at different stages. These directions represent promising avenues for future research.