Generative AI in Medicine and Healthcare: Promises, Opportunities and Challenges

Abstract

1. Introduction

2. Background

3. Current Efforts of Applying Generative AI and LLMs in Medicine and Healthcare

3.1. Clinical Administration Support

3.2. Clinical Decision Support

3.3. Patient Engagement

3.4. Synthetic Data Generation

3.5. Professional Education

3.6. Examples from Europe and Asia

4. Discussion

4.1. Can We Trust Generative AI? Is It Clinically Safe and Reliable?

4.2. Clinical Evaluation, Regulation and Certification Challenges

4.3. Privacy Concerns

4.4. Copyright and Ownership Issues

4.5. Solutions on the Horizon

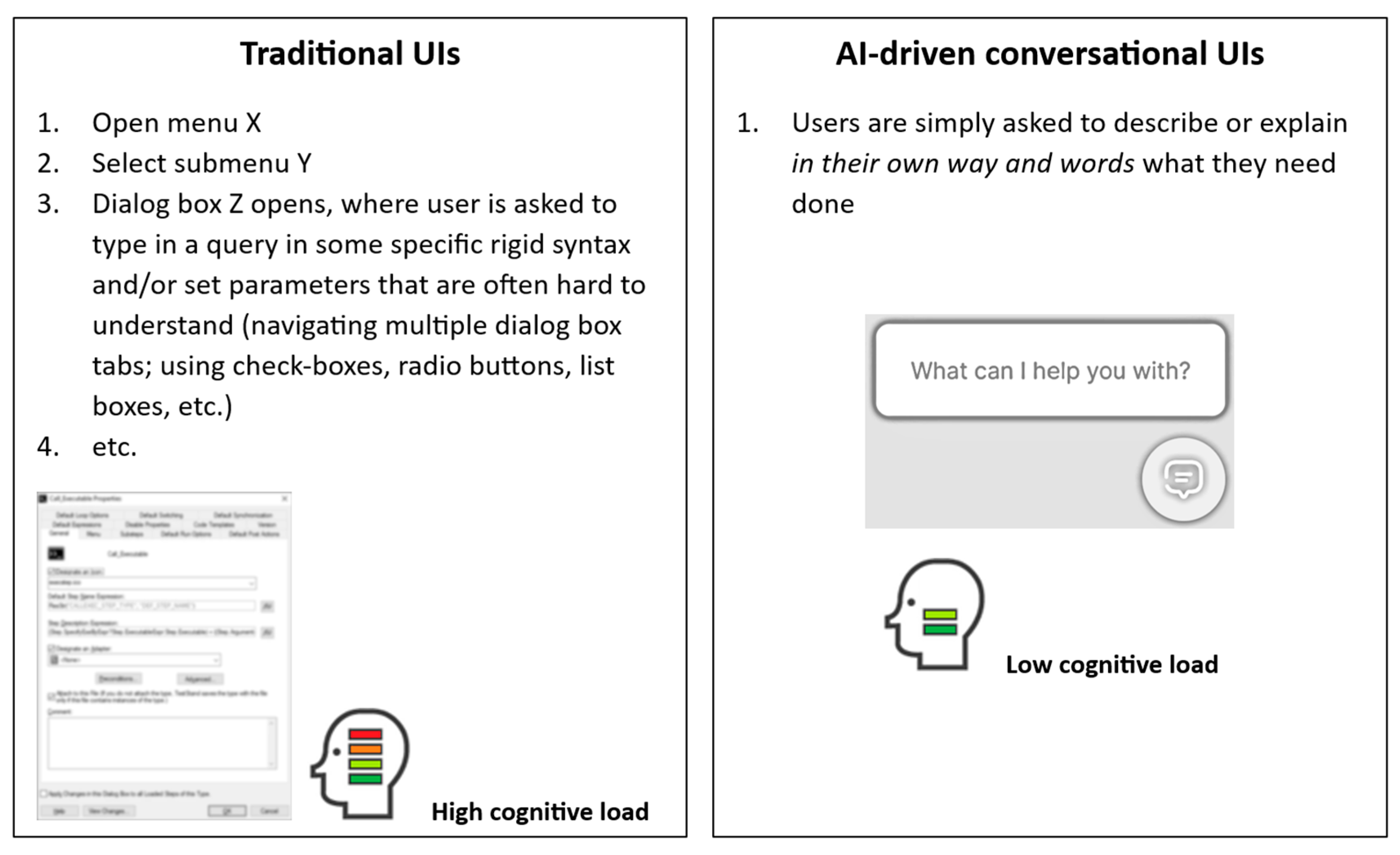

4.6. Opportunities for Custom Solutions and Much Improved User Interfaces

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zhang, P.; Kamel Boulos, M.N. Generative AI in Medicine and Healthcare: Promises, Opportunities and Challenges. Future Internet 2023, 15, 286. https://doi.org/10.3390/fi15090286
