1. Introduction

The stock market is a dynamic and volatile environment influenced by numerous unpredictable factors, including economic indicators, corporate actions, geopolitical events, and investor sentiment [1]. These factors create a constantly changing environment that offers potential gains but also poses significant risks for market participants. Accurate forecasting of stock prices is essential for investors, policymakers, and financial analysts to make informed, risk-conscious decisions [2]. It allows for more strategic asset allocation, enhances portfolio management, and supports regulatory planning. However, the complexity and nonlinear nature of financial time series make this task particularly challenging [3].

Recent advances in machine learning and deep learning have improved predictive accuracy by capturing complex nonlinear patterns in financial data; however, most existing models remain fundamentally deterministic and provide only point estimates [4]. In high-risk financial environments, the absence of uncertainty information limits their practical usefulness, as decision makers require not only expected outcomes but also an understanding of forecast confidence and potential risk exposure. This has motivated growing interest in Bayesian probabilistic models, which offer a principled framework for integrating uncertainty into stock price forecasting by producing full predictive distributions rather than single-value predictions. Such uncertainty-aware forecasting is particularly valuable during periods of market instability, where reliable risk quantification is as critical as point prediction accuracy.

Despite the growing body of work on Bayesian forecasting, a critical gap remains: existing studies tend to evaluate Bayesian models in isolation, making direct performance comparisons unreliable due to differences in datasets, preprocessing pipelines, and evaluation protocols. Moreover, the majority of such studies focus on developed markets such as the United States or European exchanges, leaving emerging markets and the Johannesburg Stock Exchange (JSE) in particular substantially under-explored. This study directly addresses both gaps by conducting the first unified, condition-controlled comparison of three leading Bayesian approaches on JSE-listed securities, thereby providing evidence that is both methodologically rigorous and contextually novel.

1.1. Rationale

Stock markets are influenced by multiple unpredictable factors [5]. Traditional statistical and machine learning models have made a substantial impact on stock price forecasting, yet they do not capture the inherent uncertainty and risk associated with financial markets. The major limitation of conventional machine learning models is their inability to quantify uncertainty, which is critical for risk assessment in financial decision making [1,6,7].

Bayesian probabilistic models, such as Bayesian neural networks (BNNs), Gaussian process regression (GPR), and Bayesian long short-term memory (Bayesian LSTM), have emerged as effective approaches for quantifying uncertainty, thereby offering more robust and interpretable stock price predictions. Recent research highlights the advantages of Bayesian LSTM over conventional deep learning models in stock price forecasting [8]. BNNs introduce Bayesian inference into neural networks, which allows predictions to carry quantified uncertainty [9]. Chandra and He [9] showed that BNNs outperform traditional methods and provide better uncertainty quantification, especially in high-volatility periods such as the COVID-19 pandemic.

1.2. Review of the Literature

Traditional time series models have been the foundation for stock forecasting. The AutoRegressive Integrated Moving Average (ARIMA) model is well suited to modeling linear temporal dependence in historical price series [10]; seasonal structure requires its seasonal extension (SARIMA). ARIMA assumes stationarity (after differencing) and fits autoregressive and moving average terms to the data. In finance, however, pure ARIMA assumes a constant error variance and therefore cannot capture the volatility clustering observed in returns, which led to the introduction of Generalized AutoRegressive Conditional Heteroskedasticity (GARCH) models to capture time-varying volatility in returns. Despite their theoretical appeal, traditional models struggle with highly nonlinear patterns and regime shifts in stock data [11].

Recent advancements in machine learning and deep learning have significantly transformed stock market forecasting. Techniques such as artificial neural networks (ANN), support vector machines (SVMs), random forest (RF), and deep learning architectures such as LSTM networks have shown substantial improvements in capturing nonlinear dependencies and hidden patterns in financial data [12]. Ref. [13] contributed a comprehensive comparison of deep learning and machine learning algorithms, showing that LSTM performed best, followed by SVMs, with ANNs and RF lagging behind.

Bayesian approaches cast forecasting as probabilistic inference, explicitly modeling uncertainty. A BNN is a neural network with distributions over its weights instead of point weights [9,14]. During training, Bayesian inference yields a posterior distribution over the weights, so predictions are themselves distributions. Because this posterior is intractable, it is approximated in practice, for example by Markov chain Monte Carlo sampling or variational inference, which makes posterior inference feasible despite the high-dimensional parameter space.

The GPR represents a foundational technique in Bayesian machine learning, offering a non-parametric, probabilistic framework for regression tasks [1]. Unlike traditional models that provide single-point estimates, GPR defines a distribution over functions, allowing each prediction to be accompanied by an explicit measure of uncertainty. Recent studies have explored GPR in financial forecasting with promising results [10,15].

The integration of Bayesian inference into the LSTM architecture has led to Bayesian LSTM models that combine the sequential learning capabilities of LSTMs with the uncertainty modeling strengths of Bayesian methods [8,16]. Ref. [8] demonstrated that Bayesian LSTM consistently outperformed both RNNs and standard LSTM in terms of the Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and Mean Squared Error (MSE) while producing well-calibrated prediction intervals.

A structured synthesis of the existing literature reveals three recurring themes: (1) the superiority of deep learning over classical statistical models for nonlinear financial data; (2) the advantage of Bayesian over deterministic approaches in terms of uncertainty quantification; and (3) the lack of standardized comparative studies across Bayesian methods on the same dataset.

Table 1 summarizes the representative studies across these themes.

Despite these advancements, several research gaps remain. Many AI models, including deep learning, are difficult to interpret and often ignore uncertainty in their predictions. Crucially, GPR, Bayesian LSTM, and BNNs have typically been studied in isolation; no published work has placed all three under identical experimental conditions on the same financial time series, making it impossible to draw reliable conclusions about their relative merits. Furthermore, applications to emerging market equities, particularly on the JSE, are sparse. The present study is designed to fill both of these gaps simultaneously by implementing all three approaches within a unified pipeline on JSE data, using consistent preprocessing, identical train/validation/test splits, and the same evaluation metrics.

1.3. Research Highlights and Contributions of the Study

This study provides a comprehensive comparative evaluation of three Bayesian probabilistic methods for stock price forecasting on the Johannesburg Stock Exchange, demonstrating that BNNs achieve the best balance between predictive accuracy and uncertainty quantification among the methods compared, particularly for volatile financial time series.

The major contributions of this study are as follows:

Contribution to methods: A systematic implementation and comparison of three Bayesian approaches under standardized conditions using the same dataset, preprocessing pipeline, and evaluation metrics, addressing the gap in the literature where these models are typically evaluated in isolation.

Contribution to findings: Empirical evidence that BNNs outperform both GPR and Bayesian LSTM in terms of point forecast accuracy (lowest MAE and RMSE) while maintaining well-calibrated uncertainty intervals, making them the most practical choice for risk-conscious financial forecasting.

Identification of model-specific strengths: GPR excels in stable market regimes with limited data, Bayesian LSTM provides the most conservative (widest) uncertainty estimates suitable for risk-averse applications, and BNNs offer the best trade-off between accuracy and uncertainty quantification.

Application to the South African context: First empirical comparison of these Bayesian methods on JSE-listed companies (FirstRand and Discovery), extending the literature on probabilistic forecasting to emerging markets.

2. Methods

2.1. Data Source and Study Area

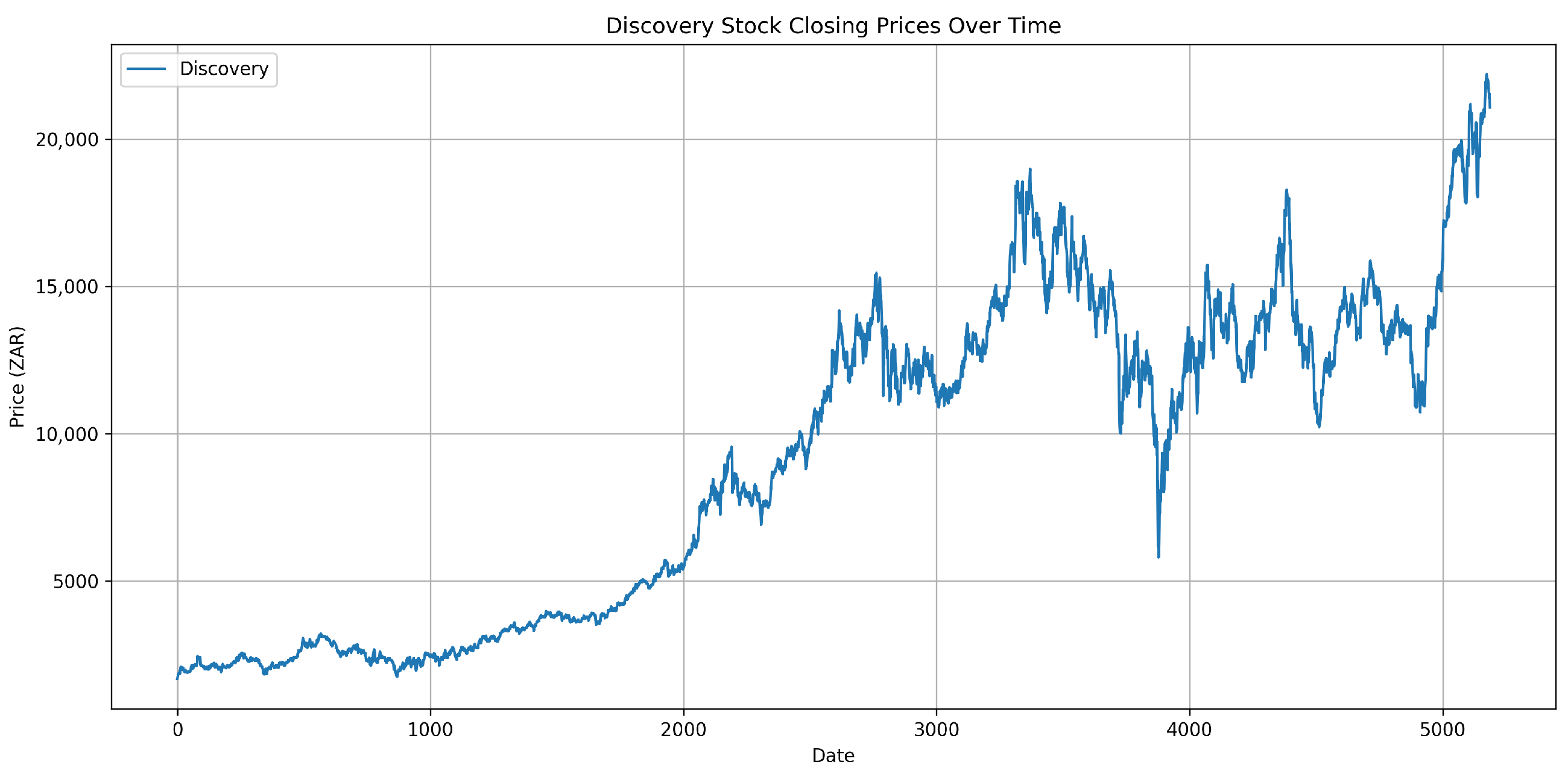

This study utilized secondary data obtained from Yahoo Finance through the open-source Python package yfinance, which provides access to publicly available financial market data. The dataset comprised historical daily stock prices for companies listed on the JSE, with a focus on FirstRand Limited and Discovery Limited. The time frame spanned from January 2005 to June 2025, capturing 5187 observations including key market attributes: open, high, low, close (OHLC) prices and trading volume.

2.2. Data Preprocessing and Feature Engineering

Before model training, the raw financial data underwent several preprocessing steps. Stock prices were normalized using Z-score standardization (standard scaling) to improve model convergence and gradient stability. Z-score normalization was preferred over min–max scaling because min–max scaling maps values into a fixed range set by the sample minimum and maximum, which makes it highly sensitive to outliers, a common occurrence in financial time series. Log returns were not used as the primary transformation because the models operate on price levels and their outputs must be inverse-transformed back to interpretable price units for evaluation. Z-score standardization produces values on an unbounded scale (including negative values, which reflect observations below the historical mean); this is expected and appropriate within the modeling framework.

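Given a column of data $x_1, \dots, x_N$, the mean and standard deviation were computed as follows:

$$\mu = \frac{1}{N}\sum_{i=1}^{N} x_i, \qquad \sigma = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(x_i - \mu\right)^{2}} \tag{1}$$

Each value was then scaled as

$$z_i = \frac{x_i - \mu}{\sigma} \tag{2}$$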

To strengthen predictive power, feature engineering was applied to extract technical indicators that captured momentum, trends, and volatility. All five OHLCV columns were used as model inputs, with the closing price serving as the prediction target. The following technical indicators were computed from the closing price series using the pandas-ta library in Python:

Relative strength index (RSI): A 14-period RSI was computed, yielding a single bounded oscillator value per time step.

Moving average convergence divergence (MACD): The standard MACD configuration was used, with a fast exponential moving average (EMA) period of 12, slow EMA period of 26, and a signal line period of 9. Only the MACD line (fast EMA minus slow EMA) was retained as a feature; the signal line and histogram were excluded to avoid multicollinearity.

Simple moving average (SMA-20): A 20-period simple moving average of the closing price.

Exponential moving average (EMA-20): A 20-period exponential moving average of the closing price.

Bollinger bands (BBs): Computed with a 20-period window and 2 standard deviations. Of the Bollinger outputs (upper band, lower band, rolling mean, and bandwidth), only the upper and lower bands were retained as separate input features; the rolling mean is identical to the SMA-20, and the bandwidth was omitted to reduce redundancy.
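
The indicator definitions above can be sketched in NumPy as follows. This is a minimal illustration, not the pandas-ta implementation used in the study: it assumes EMAs seeded with the first observation, Wilder smoothing for the RSI, and a population (ddof=0) rolling standard deviation for the Bollinger bands, so warm-up values may differ from pandas-ta's output. The helper names (`sma`, `ema`, `wilder`, `rsi`, `indicator_features`) are hypothetical, and the price series is synthetic.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def sma(x, n):
    """n-period simple moving average; the first n - 1 values are NaN."""
    x = np.asarray(x, dtype=float)
    out = np.full(x.shape, np.nan)
    out[n - 1:] = sliding_window_view(x, n).mean(axis=1)
    return out

def ema(x, n):
    """n-period EMA with alpha = 2 / (n + 1), seeded with the first observation (assumption)."""
    x = np.asarray(x, dtype=float)
    alpha = 2.0 / (n + 1)
    out = np.empty(x.shape)
    out[0] = x[0]
    for t in range(1, len(x)):
        out[t] = alpha * x[t] + (1.0 - alpha) * out[t - 1]
    return out

def wilder(x, n):
    """Wilder smoothing (an EMA with alpha = 1 / n), seeded with the first value."""
    out = np.empty(x.shape)
    out[0] = x[0]
    for t in range(1, len(x)):
        out[t] = out[t - 1] + (x[t] - out[t - 1]) / n
    return out

def rsi(close, n=14):
    """RSI in [0, 100]; 100 * up / (up + down) equals 100 - 100 / (1 + up / down)."""
    close = np.asarray(close, dtype=float)
    delta = np.diff(close, prepend=close[0])
    up = wilder(np.clip(delta, 0.0, None), n)
    down = wilder(np.clip(-delta, 0.0, None), n)
    total = up + down
    safe_total = np.where(total > 0, total, 1.0)
    return np.where(total > 0, 100.0 * up / safe_total, 50.0)  # 50 when there is no movement

def indicator_features(close):
    """The six close-based indicator columns described in the text (hypothetical helper)."""
    close = np.asarray(close, dtype=float)
    mid = sma(close, 20)
    sd = np.full(close.shape, np.nan)
    sd[19:] = sliding_window_view(close, 20).std(axis=1)  # population std (ddof=0)
    return {
        "RSI": rsi(close, 14),
        "MACD": ema(close, 12) - ema(close, 26),  # MACD line only (fast EMA minus slow EMA)
        "SMA-20": mid,
        "EMA-20": ema(close, 20),
        "BB-Upper": mid + 2.0 * sd,
        "BB-Lower": mid - 2.0 * sd,
    }

# Synthetic random-walk prices, for illustration only
rng = np.random.default_rng(0)
close = 100.0 + np.cumsum(rng.normal(0.0, 1.0, 300))
feats = indicator_features(close)
```

Stacking these six columns next to the five OHLCV columns gives the 11-column matrix; rows still containing NaN from the rolling windows are the warm-up rows that get discarded.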

All engineered technical indicators were subjected to the same Z-score standardization procedure (Equations (1) and (2)) applied to the raw OHLCV features. Standardization was applied separately to each feature column using statistics computed exclusively on the training set, with the resulting parameters then applied to the validation and test sets to prevent data leakage. The final input feature matrix therefore comprised 11 standardized columns: OHLCV, RSI, MACD, SMA-20, EMA-20, BB-Upper, and BB-Lower. The first 20 observations of the dataset (one full Bollinger/SMA window) were discarded due to NaN values arising from the rolling window computation, leaving 5167 usable observations.

The data were split into training (60%), validation (20%), and testing (20%) sets. Rolling windows and sequence generation techniques were applied for the LSTM-based models. A sequence window of 60 time steps was used as input for the Bayesian LSTM, meaning each training sample consisted of 60 consecutive daily observations. For the BNN and GPR, the same 60-step window features were flattened into a single input vector per observation to conform to the feedforward and kernel-based architectures, respectively.
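
The windowing step can be sketched as below. Two details are assumptions because the text does not state them: the target is taken as the next-day (one-step-ahead) close, and windows are built within each chronological split so no window straddles a split boundary. `make_windows` is a hypothetical helper, and the integer matrix is a placeholder for a standardized 3100-row training split.

```python
import numpy as np

def make_windows(features, target, window=60):
    """Sliding windows: X[i] holds rows i .. i + window - 1, y[i] is target[i + window].

    Assumes a one-step-ahead target; the forecast horizon is not stated in the text.
    """
    features = np.asarray(features)
    target = np.asarray(target)
    n = len(features) - window
    X = np.stack([features[i:i + window] for i in range(n)])  # (n, window, n_features)
    y = target[window:window + n]
    return X, y

# Placeholder standardized training split: 3100 rows x 11 features, Close in column 3
train_z = np.arange(3100 * 11, dtype=float).reshape(3100, 11)
X_seq, y_seq = make_windows(train_z, train_z[:, 3], window=60)  # 3-D input for the Bayesian LSTM
X_flat = X_seq.reshape(len(X_seq), -1)                          # 60 x 11 = 660 inputs for BNN / GPR
```

Flattening turns each window into a 660-dimensional vector, which is one reason kernel methods such as GPR become expensive as the window and sample count grow.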

2.3. Bayesian Neural Networks (BNNs)

BNNs extend traditional feedforward neural networks by treating weights and biases as probability distributions rather than fixed parameters. The models were implemented in Python 3.11 using the following key libraries: PyTorch 2.0 for BNN implementation, TensorFlow/Keras 2.13 for Bayesian LSTM, and scikit-learn 1.3 for GPR. Supporting data manipulation and feature engineering relied on pandas 2.0, numpy 1.24, and pandas-ta 0.3. All experiments were conducted on a single GPU (NVIDIA GeForce RTX 3060, 12 GB of VRAM) under Ubuntu 22.04.

In this study, the BNN architecture comprised two fully connected hidden layers with 64 and 32 neurons using the ReLU activation function. A Gaussian prior with zero mean and unit variance was placed over all weights and biases. Variational inference was implemented via Bayes by Backprop [14], with the ELBO objective optimized using the Adam optimizer (learning rate …) over 200 epochs. A batch size of 32 and a dropout rate of 0.1 were applied for regularization. At test time, $S$ Monte Carlo samples were drawn from the approximate posterior to estimate the predictive mean and variance. The prior distribution over weights $\mathbf{W}$ and biases $\mathbf{b}$ is typically Gaussian; in this study it was the standard normal:

$$p(\mathbf{W}) = \mathcal{N}(\mathbf{0}, \mathbf{I}), \qquad p(\mathbf{b}) = \mathcal{N}(\mathbf{0}, \mathbf{I}) \tag{3}$$

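The posterior distribution over the parameters (with $\mathbf{W}$ now collecting all weights and biases) given data $\mathcal{D}$ was obtained via Bayes' theorem:

$$p(\mathbf{W} \mid \mathcal{D}) = \frac{p(\mathcal{D} \mid \mathbf{W})\,p(\mathbf{W})}{p(\mathcal{D})} \tag{4}$$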

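Since the true posterior is intractable, variational inference was used to approximate it by minimizing the Kullback–Leibler divergence between the approximate distribution $q_{\phi}(\mathbf{W})$, with variational parameters $\phi$, and the true posterior:

$$\phi^{*} = \arg\min_{\phi}\, \mathrm{KL}\big(q_{\phi}(\mathbf{W}) \,\|\, p(\mathbf{W} \mid \mathcal{D})\big) \tag{5}$$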

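The evidence lower bound (ELBO) objective, whose maximization is equivalent to minimizing the KL divergence to the true posterior, is

$$\mathcal{L}(\phi) = \mathbb{E}_{q_{\phi}(\mathbf{W})}\big[\log p(\mathcal{D} \mid \mathbf{W})\big] - \mathrm{KL}\big(q_{\phi}(\mathbf{W}) \,\|\, p(\mathbf{W})\big) \tag{6}$$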
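
The ELBO becomes concrete for a mean-field Gaussian posterior: the KL term against the N(0, 1) prior has a closed form, and the expected log-likelihood is estimated by Monte Carlo with reparameterized draws w = μ + σ·ε. The sketch below is illustrative only. It assumes a toy Bayesian linear model with known Gaussian noise rather than the 64-32 network used in the study, and a softplus parameterization σ = log(1 + e^ρ) of the posterior standard deviations, a common choice in Bayes by Backprop implementations. The names `kl_to_standard_normal` and `elbo` are hypothetical.

```python
import numpy as np

def softplus(rho):
    return np.log1p(np.exp(rho))

def kl_to_standard_normal(mu, rho):
    """Closed-form KL(q || p) for q = N(mu, softplus(rho)^2) per weight and p = N(0, 1)."""
    sigma = softplus(rho)
    return float(np.sum(0.5 * (sigma**2 + mu**2 - 1.0) - np.log(sigma)))

def elbo(mu, rho, X, y, noise_std=1.0, n_samples=10, rng=None):
    """Monte Carlo ELBO for a toy Bayesian linear model y = X w + Gaussian noise."""
    rng = np.random.default_rng(0) if rng is None else rng
    sigma = softplus(rho)
    expected_loglik = 0.0
    for _ in range(n_samples):
        w = mu + sigma * rng.standard_normal(mu.shape)  # reparameterized draw from q
        resid = y - X @ w
        expected_loglik += np.sum(-0.5 * np.log(2.0 * np.pi * noise_std**2)
                                  - 0.5 * (resid / noise_std) ** 2)
    return expected_loglik / n_samples - kl_to_standard_normal(mu, rho)

rng = np.random.default_rng(42)
X = rng.normal(size=(100, 2))
w_true = np.array([1.0, -2.0])
y = X @ w_true + 0.1 * rng.normal(size=100)
rho = np.full(2, -5.0)  # small posterior std, softplus(-5) is about 0.0067
good = elbo(w_true, rho, X, y)
bad = elbo(np.zeros(2), rho, X, y)
```

In training, the variational parameters (μ, ρ) would be updated by gradient ascent on this quantity (Adam in the study); the sketch only evaluates it, and a posterior centered on the true weights scores a higher ELBO.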

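For prediction, $S$ samples $\mathbf{W}^{(s)} \sim q_{\phi}(\mathbf{W})$, $s = 1, \dots, S$, were drawn from the approximate posterior, yielding predictions $\hat{y}^{(s)} = f(\mathbf{x}^{*}; \mathbf{W}^{(s)})$, where $f$ denotes the network. The predictive mean and variance are

$$\bar{y} = \frac{1}{S}\sum_{s=1}^{S} \hat{y}^{(s)}, \qquad \hat{\sigma}^{2} = \frac{1}{S}\sum_{s=1}^{S}\big(\hat{y}^{(s)} - \bar{y}\big)^{2} \tag{7}$$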

2.4. Bayesian LSTM

Bayesian LSTM extends conventional LSTM by incorporating Bayesian inference to quantify uncertainty in sequential predictions. The Bayesian LSTM implemented here consisted of two stacked LSTM layers with 50 units each, followed by a dense output layer. A sequence window of 60 time steps was used as input. Bayesian inference was achieved through Monte Carlo Dropout [8], where dropout (rate …) was applied at both training and test time to approximate posterior sampling. The model was trained using the Adam optimizer (learning rate …) with a mean squared error loss function over 100 epochs and a batch size of 32. At inference, $S$ stochastic forward passes were performed to obtain the predictive distribution. The posterior over model parameters $\theta$ is expressed by

$$p(\theta \mid \mathcal{D}) = \frac{p(\mathcal{D} \mid \theta)\,p(\theta)}{p(\mathcal{D})} \tag{8}$$

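For a new input sequence $\mathbf{x}^{*}$, the predictive distribution was obtained by integrating over parameter configurations:

$$p(y^{*} \mid \mathbf{x}^{*}, \mathcal{D}) = \int p(y^{*} \mid \mathbf{x}^{*}, \theta)\, p(\theta \mid \mathcal{D})\, d\theta \tag{9}$$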

This integral was approximated using variational inference with the same ELBO objective as in Equation (6). At test time, $S$ samples of weights were drawn to compute the predictive mean and variance as in Equation (7).

2.5. Gaussian Process Regression (GPR)

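The GPR is a non-parametric Bayesian approach that models distributions over functions directly. In this study, a composite kernel was employed: the radial basis function (RBF) kernel combined with a white noise kernel to capture residual noise, i.e., $k(\mathbf{x}, \mathbf{x}') = \sigma_f^{2} \exp\!\left(-\frac{\lVert \mathbf{x} - \mathbf{x}' \rVert^{2}}{2\ell^{2}}\right) + \sigma_n^{2}\,\delta_{\mathbf{x}\mathbf{x}'}$, where $\ell$ is the length scale, $\sigma_f^{2}$ is the signal variance, $\sigma_n^{2}$ is the noise variance, and $\delta_{\mathbf{x}\mathbf{x}'}$ equals 1 when $\mathbf{x} = \mathbf{x}'$ and 0 otherwise. The kernel hyperparameters were optimized by maximizing the log marginal likelihood (Equation (16)) using L-BFGS-B with five random restarts to mitigate local optima. A Gaussian process is defined as a collection of random variables, any finite number of which have a joint Gaussian distribution:

$$f(\mathbf{x}) \sim \mathcal{GP}\big(m(\mathbf{x}),\, k(\mathbf{x}, \mathbf{x}')\big) \tag{10}$$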

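where $m(\mathbf{x})$ is the mean function (typically zero) and $k(\mathbf{x}, \mathbf{x}')$ is the covariance (kernel) function. The joint distribution of training outputs $\mathbf{f}$ and test outputs $\mathbf{f}_{*}$ is

$$\begin{bmatrix} \mathbf{f} \\ \mathbf{f}_{*} \end{bmatrix} \sim \mathcal{N}\left(\mathbf{0}, \begin{bmatrix} K & K_{*} \\ K_{*}^{\top} & K_{**} \end{bmatrix}\right) \tag{11}$$

where $K = K(X, X)$, $K_{*} = K(X, X_{*})$, and $K_{**} = K(X_{*}, X_{*})$ are covariance matrices evaluated with the RBF component of the kernel on the training inputs $X$ and test inputs $X_{*}$.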

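With independent and identically distributed Gaussian noise $\varepsilon \sim \mathcal{N}(0, \sigma_n^{2})$, so that $\mathbf{y} = \mathbf{f} + \boldsymbol{\varepsilon}$, the predictive distribution for the test inputs was

$$\mathbf{f}_{*} \mid X, \mathbf{y}, X_{*} \sim \mathcal{N}\big(\boldsymbol{\mu}_{*}, \Sigma_{*}\big) \tag{12}$$

where

$$\boldsymbol{\mu}_{*} = K_{*}^{\top}\big(K + \sigma_n^{2} I\big)^{-1}\mathbf{y} \tag{13}$$

$$\Sigma_{*} = K_{**} - K_{*}^{\top}\big(K + \sigma_n^{2} I\big)^{-1} K_{*} \tag{14}$$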

The 95% prediction intervals were calculated as follows:

$$\mu_{*,i} \pm 1.96\,\sqrt{\left[\Sigma_{*}\right]_{ii}} \tag{15}$$
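
The predictive mean, covariance, and 95% interval can be sketched from scratch in NumPy with a Cholesky factorization, which avoids forming an explicit matrix inverse. This is an illustration under stated assumptions: the hyperparameters (ℓ = 1, σ_f² = 1, σ_n² = 0.01) are fixed rather than optimized, the inputs are one-dimensional toy data rather than flattened 60-step windows, and the interval is posterior mean ± 1.96 posterior standard deviations of the latent function. The study itself used scikit-learn; `rbf` and `gp_predict` are hypothetical helpers.

```python
import numpy as np

def rbf(A, B, length_scale=1.0, signal_var=1.0):
    """RBF component: signal_var * exp(-||a - b||^2 / (2 * length_scale^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return signal_var * np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)

def gp_predict(X, y, X_star, length_scale=1.0, signal_var=1.0, noise_var=1e-2):
    """Posterior mean, covariance, and 95% interval of f_* under a zero-mean GP."""
    K_y = rbf(X, X, length_scale, signal_var) + noise_var * np.eye(len(X))  # K + s_n^2 I
    L = np.linalg.cholesky(K_y)                           # K_y = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))   # K_y^{-1} y
    K_s = rbf(X, X_star, length_scale, signal_var)
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)                           # v^T v = K_s^T K_y^{-1} K_s
    cov = rbf(X_star, X_star, length_scale, signal_var) - v.T @ v
    std = np.sqrt(np.clip(np.diag(cov), 0.0, None))
    return mean, cov, mean - 1.96 * std, mean + 1.96 * std

X = np.linspace(0.0, 5.0, 20)[:, None]
y = np.sin(X).ravel()
X_star = np.array([[2.5], [50.0]])  # one point inside the data, one far outside
mean, cov, lower, upper = gp_predict(X, y, X_star)
```

Near the training inputs the interval is narrow; far from them the interval reverts to the prior width of 2 × 1.96 × σ_f, which is how a GP signals that it is extrapolating.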

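The hyperparameters $\theta = \{\ell, \sigma_f^{2}\}$ and noise variance $\sigma_n^{2}$ were estimated by maximizing the log marginal likelihood:

$$\log p(\mathbf{y} \mid X, \theta, \sigma_n^{2}) = -\frac{1}{2}\mathbf{y}^{\top}\big(K + \sigma_n^{2} I\big)^{-1}\mathbf{y} - \frac{1}{2}\log\big|K + \sigma_n^{2} I\big| - \frac{n}{2}\log 2\pi \tag{16}$$

where $n$ is the number of training observations.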

2.6. Evaluation Metrics

Three metrics were used to evaluate model performance.

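Mean squared error (MSE):

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\big(y_i - \hat{y}_i\big)^{2} \tag{17}$$

Mean absolute error (MAE):

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\big|y_i - \hat{y}_i\big| \tag{18}$$

Root mean square error (RMSE):

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\big(y_i - \hat{y}_i\big)^{2}} \tag{19}$$

Here, $n$ is the number of test observations, $y_i$ is the actual closing price, and $\hat{y}_i$ is the corresponding point forecast.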

2.7. Uncertainty Quantification Metrics

To provide a rigorous quantitative evaluation of the probabilistic outputs, two additional metrics were used [8].

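The prediction interval coverage probability (PICP) measures the proportion of actual observations that fall within the predicted 95% interval $[L_i, U_i]$:

$$\mathrm{PICP} = \frac{1}{n}\sum_{i=1}^{n} c_i, \qquad c_i = \begin{cases} 1, & L_i \le y_i \le U_i \\ 0, & \text{otherwise} \end{cases} \tag{20}$$

A well-calibrated model should yield a PICP close to 0.95 for a 95% interval.

The mean prediction interval width (MPIW) measures the average width of the predictive intervals, reflecting the sharpness of the uncertainty estimates:

$$\mathrm{MPIW} = \frac{1}{n}\sum_{i=1}^{n}\big(U_i - L_i\big) \tag{21}$$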

A lower MPIW indicates sharper (more informative) intervals, provided that the PICP remains at or near the nominal coverage level. Together, the PICP and MPIW allow for a more complete assessment of uncertainty calibration beyond qualitative inspection of forecast plots.
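
PICP and MPIW can be computed directly from interval bounds. The sketch below also builds Gaussian 95% intervals from a predictive mean and standard deviation and checks calibration on simulated data where the Gaussian assumption holds by construction, so coverage should land near 0.95; the data and the helper names (`picp`, `mpiw`, `gaussian_interval`) are hypothetical.

```python
import numpy as np

def picp(y, lower, upper):
    """Fraction of observations that fall inside [lower, upper]."""
    y, lower, upper = (np.asarray(a, dtype=float) for a in (y, lower, upper))
    return float(np.mean((y >= lower) & (y <= upper)))

def mpiw(lower, upper):
    """Average interval width, in the same units as the target."""
    return float(np.mean(np.asarray(upper, dtype=float) - np.asarray(lower, dtype=float)))

def gaussian_interval(mean, std, z=1.96):
    """Central ~95% interval from a Gaussian predictive mean and standard deviation."""
    mean, std = np.asarray(mean, dtype=float), np.asarray(std, dtype=float)
    return mean - z * std, mean + z * std

# Simulated calibration check: y really is drawn from N(mean, std^2)
rng = np.random.default_rng(0)
mean = rng.normal(size=100_000)
std = np.full(100_000, 2.0)
y = mean + std * rng.standard_normal(100_000)
lower, upper = gaussian_interval(mean, std)
coverage, width = picp(y, lower, upper), mpiw(lower, upper)
```

The two metrics must be read together: arbitrarily wide intervals can always reach full coverage, so a lower MPIW is only informative when PICP stays near its nominal 0.95.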

4. Discussion

The empirical results reveal distinct performance patterns across the three Bayesian models and two companies, providing valuable insights into the strengths and limitations of each approach for stock price forecasting.

For FirstRand, which exhibited relatively stable price behavior, all three models tracked actual prices reasonably well. The BNN delivered the most accurate forecasts with the lowest MAE (63.78) and RMSE (83.29), while maintaining well-calibrated 95% confidence intervals that remained narrow under normal conditions but appropriately widened during periods of increased volatility, such as on 30 April 2021. This finding aligns with Chandra and He [9], who demonstrated that BNNs outperform traditional models during market turbulence by better capturing nonlinear relationships and quantifying predictive uncertainty.

The GPR produced moderate but consistent results for FirstRand (MAE = 72.27, RMSE = 102.80), with relatively narrow confidence intervals reflecting stable uncertainty estimates. The model performed particularly well in tracking gradual price movements, consistent with Bisht et al. [1], who found that GPR can effectively capture nonlinearities while maintaining well-calibrated confidence intervals in smaller datasets. However, the wider interval observed on 30 April 2021 demonstrates GPR's ability to signal increased uncertainty during market turbulence.

Bayesian LSTM generated the widest prediction intervals, reflecting its conservative approach to uncertainty estimation. However, this came at the expense of point forecast precision, with the highest errors among the three models (MAE = 133.49, RMSE = 175.21). While Wang and Qi [8] emphasized Bayesian LSTM's advantage in enhancing predictive robustness under volatile conditions, our findings suggest that this robustness may manifest primarily through uncertainty quantification rather than point forecast accuracy.

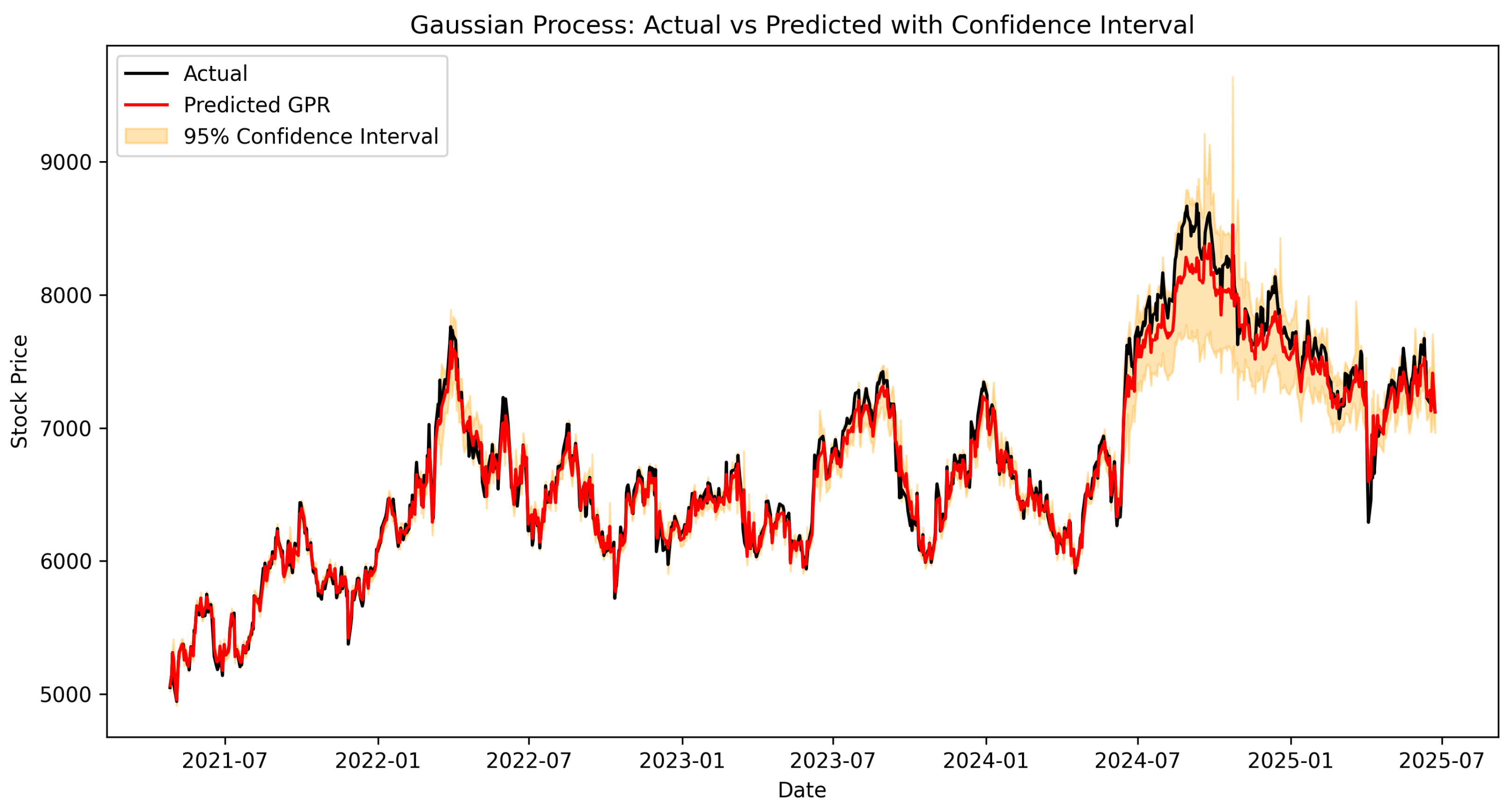

For Discovery, which exhibited substantially higher volatility (standard deviation of 5404 compared with FirstRand’s 2186), all models faced greater challenges. GPR’s performance degraded notably (MAE = 115.15, RMSE = 226.19), with wider confidence intervals reflecting the model’s difficulty in capturing rapid price fluctuations. This decline underlines GPR’s limited scalability in fast-changing markets, as noted in the literature.

Bayesian LSTM recorded the highest errors for Discovery (MAE = 337.39, RMSE = 452.78), with extremely broad confidence intervals that, while successfully encompassing the true price paths, highlighted the elevated risk profile of the stock. This suggests that Bayesian recurrent models may prioritize stability and risk awareness over precision, making them valuable in uncertainty-sensitive applications where conservative estimates are preferred.

The BNN again stood out for Discovery, achieving lower errors (MAE = 144.56, RMSE = 201.50) than the other two models while maintaining reasonable uncertainty bands, though these remained wider than those observed for FirstRand. This superior performance on volatile data aligns with Chandra and He's [9] findings that BNNs excel during periods of increased market turbulence by effectively modeling complex nonlinearities while quantifying predictive uncertainty.

Cross-model comparison revealed that no single Bayesian model was universally superior. Instead, the effectiveness of each approach was conditional on the objectives of the user and the prevailing market environment. BNNs achieved the best balance between point accuracy and calibrated uncertainty intervals, making them most suitable when both priorities were important. GPR performed reliably under stable conditions with limited data requirements. Bayesian LSTM provided the most conservative uncertainty estimates, making it preferable for risk-averse applications, where capturing extreme outcomes is critical.

These findings reinforce the value of Bayesian approaches in financial forecasting, as they embed uncertainty directly into predictions. This dual advantage of accuracy and interpretability advances the methodological landscape and offers practical benefits to analysts, investors, and policymakers operating in inherently uncertain environments.

The performance differences observed across the models can be understood through the lens of data structure and model architecture. GPR's degradation on Discovery is mechanistically linked to the cubic computational cost of kernel matrix inversion ($\mathcal{O}(n^3)$ in the number of training points $n$), which limits its practical window length and thus its ability to capture long-range dependencies under high volatility. Bayesian LSTM's wide intervals are a structural consequence of Monte Carlo Dropout: by retaining stochastic units at test time, the model aggregates variance from all network layers, producing conservative but high-coverage intervals that are particularly valuable during volatility regimes such as the post-COVID-19 recovery period. The BNN's favorable balance between accuracy and uncertainty stems from its direct parameterization of weight distributions, which allows it to adapt posterior uncertainty to the local data density without the bandwidth constraints of kernel methods or the sequential bias of recurrent architectures.

The findings carry concrete recommendations for financial practitioners. Portfolio managers seeking to optimize risk-adjusted returns in stable large-cap environments (analogous to FirstRand’s price behavior) should consider GPR as a computationally efficient and interpretable alternative. For volatile growth stocks (analogous to Discovery), BNNs offer the best combination of prediction accuracy and calibrated uncertainty, directly supporting value-at-risk (VaR) calculations and position sizing decisions. Risk officers and compliance teams for whom regulatory capital depends on conservative worst-case scenarios may find Bayesian LSTM’s wider intervals more appropriate, as they are less likely to underestimate tail risk during market dislocations. These prescriptions move beyond generic recommendations of “match model to risk preference” by linking specific model behaviors to concrete financial use cases.

5. Conclusions

This study investigated the effectiveness of three Bayesian probabilistic methods, namely Gaussian process regression, Bayesian LSTM, and Bayesian neural networks, for stock price forecasting on the JSE. Using daily data for FirstRand Limited and Discovery Limited from January 2005 to June 2025, the models were evaluated on both point forecast accuracy and uncertainty quantification capability.

The quantitative evaluation across both stocks confirms three clear model-specific patterns. The BNNs consistently achieved the lowest point forecast errors and near-nominal PICP values (0.95 for FirstRand, 0.94 for Discovery) with relatively compact interval widths, establishing them as the most versatile Bayesian forecasting tool across varying market conditions. GPR delivered well-calibrated intervals in stable regimes but deteriorated markedly under Discovery’s higher volatility, reflecting the structural limitations of kernel-based methods in fast-moving markets. Bayesian LSTM produced the widest intervals (highest MPIW) at adequate coverage, confirming its suitability for risk-averse applications, where capturing extreme outcomes takes priority over precision. Importantly, all three Bayesian approaches outperformed both the ARIMA and standard LSTM baselines, providing direct empirical evidence that probabilistic modeling adds measurable value over deterministic alternatives.

The study makes three contributions: (1) the first condition-controlled comparison of GPR, Bayesian LSTM, and BNNs on JSE-listed equities; (2) a comprehensive uncertainty evaluation using both qualitative interval plots and quantitative PICP and MPIW metrics; and (3) concrete, mechanism-grounded recommendations for matching the Bayesian model choice to financial use cases, market volatility regimes, and institutional risk mandates.

In practical terms, short-term traders and portfolio managers prioritizing point accuracy and balanced uncertainty should favor BNNs. Risk officers requiring conservative worst-case interval coverage for regulatory capital calculations will find Bayesian LSTM more appropriate. GPR remains a competitive, computationally tractable, and interpretable option for stable markets or smaller datasets, where kernel smoothness assumptions are well founded.

5.1. Limitations

Several limitations of the present study should be acknowledged. First, the analysis was restricted to two JSE-listed companies, which limits the generalizability of the findings. Second, while the inclusion of non-Bayesian baselines contextualized the results, a broader benchmark set including XGBoost, random forest, and Transformer-based architectures would further strengthen the comparative evaluation. Third, the GPR implementation was constrained by computational scalability; the use of full-data kernel inference limited the practical window size, and this may disadvantage GPR relative to the neural approaches. Fourth, the study did not account for transaction costs, slippage, or market impact, which are critical factors in translating forecast accuracy into actual trading performance. Fifth, the model hyperparameters were tuned on the validation set using a fixed protocol; a more exhaustive search (e.g., Bayesian optimization) may yield further performance improvements.

5.2. Future Research Directions

Building directly on these limitations, future work should (1) extend the analysis to a broader panel of JSE-listed securities and international emerging markets; (2) incorporate macroeconomic indicators such as interest rates, exchange rates, and commodity prices as additional features; (3) integrate alternative data sources, including news sentiment indices and social media signals; (4) explore scalable sparse GP approximations (e.g., inducing-point methods) to overcome GPR’s computational bottleneck; and (5) embed the probabilistic forecasts within realistic trading simulations, accounting for transaction costs, to assess practical financial utility and risk-adjusted returns.