Surrogate-Assisted Slime Mould Algorithm Considering a Dual-Based Merit Criterion for Global Database Management

Abstract

1. Introduction

2. Surrogate Models

Radial Basis Function Neural Network

3. Slime Mould Algorithm

Bound Checking

4. Proposed Methodology

4.1. Global Database Management Strategy

4.2. Surrogate Building

Algorithm 1. Pseudocode of the Surrogate-Assisted Slime Mould Algorithm (SASMA) and the employed parameters.

4.3. A Novel Surrogate-Assisted Metaheuristic: SASMA

5. Case Studies and Benchmarking

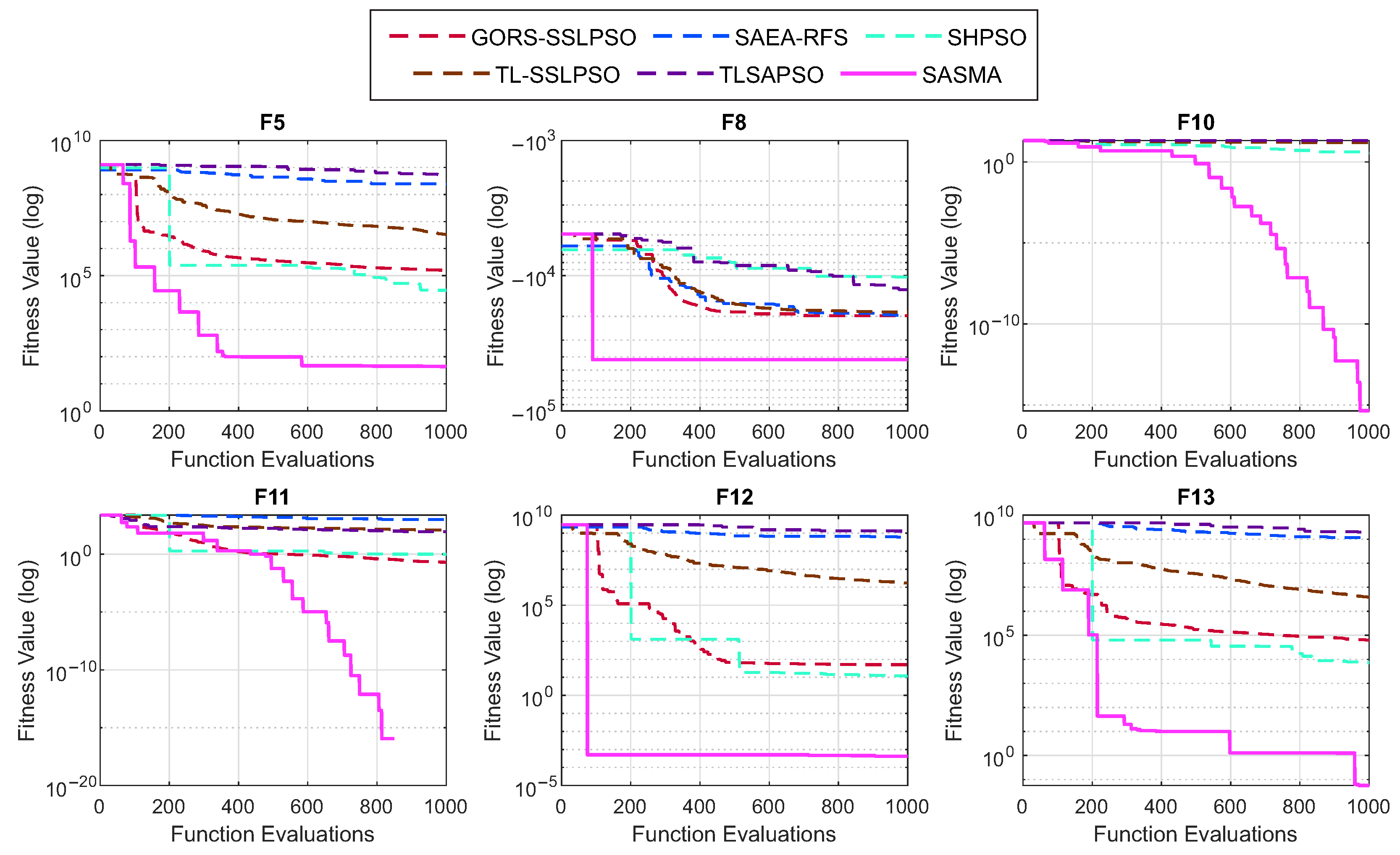

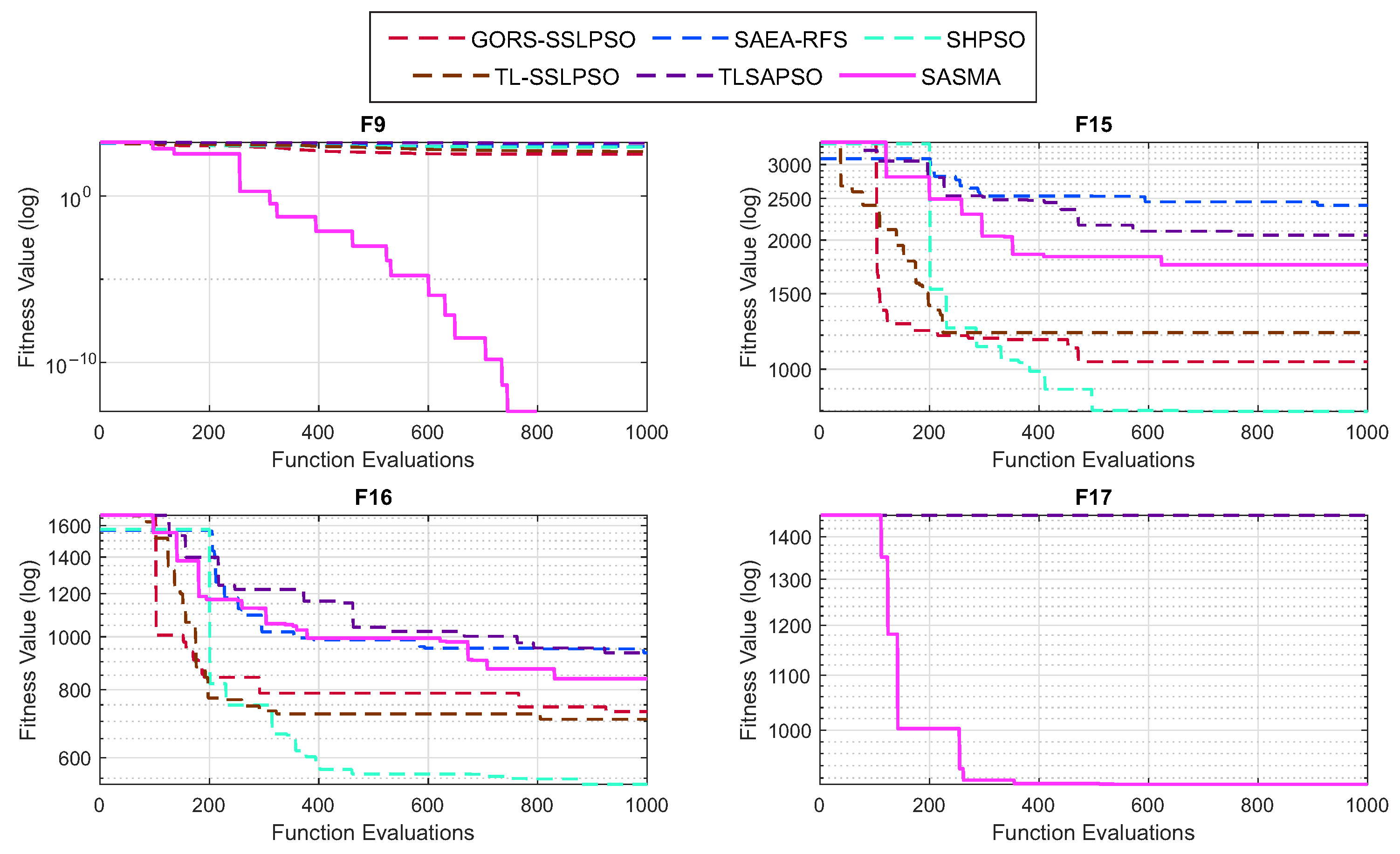

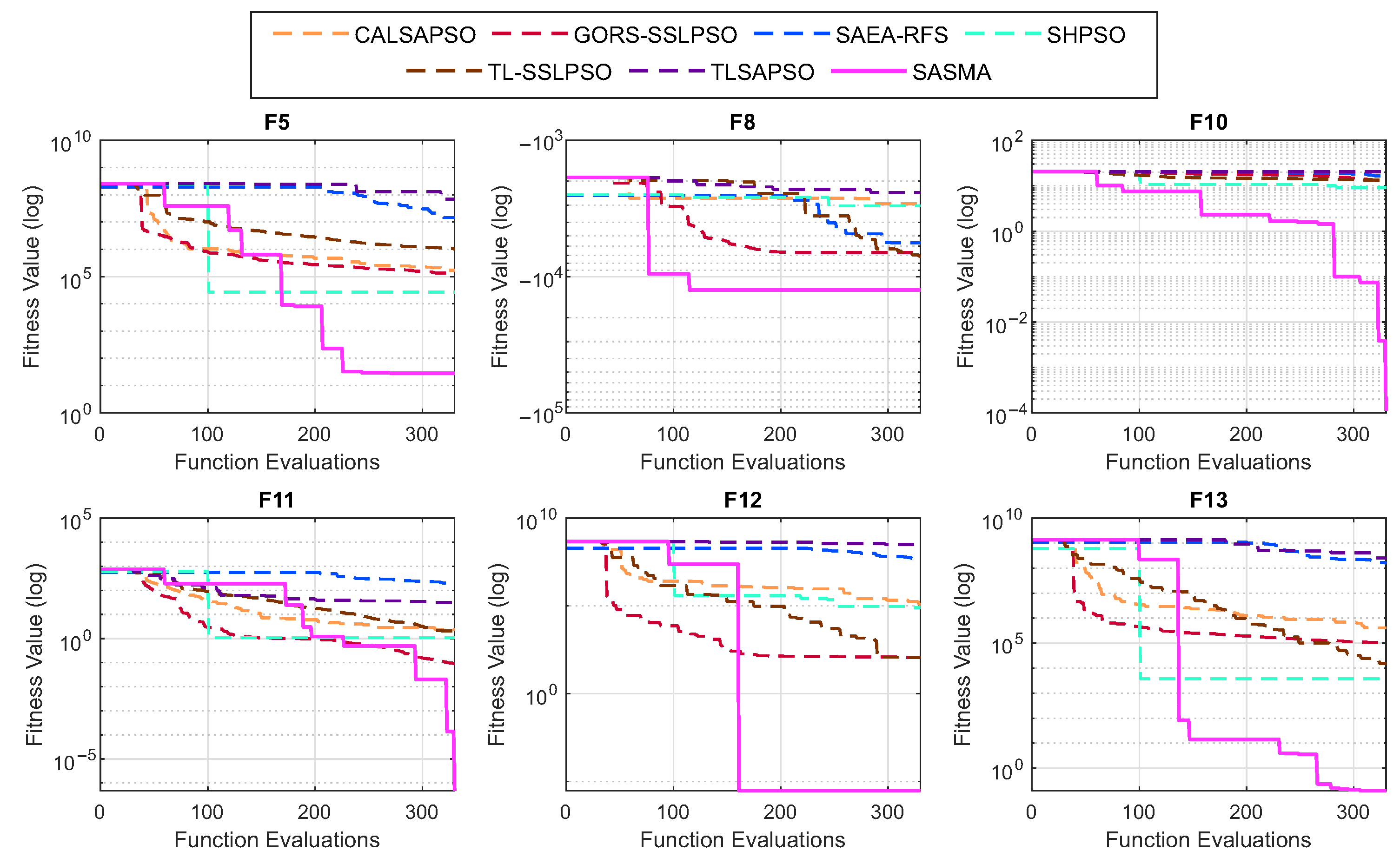

5.1. Case Study I: Mathematical Test Functions and Optimization Results

5.2. Case Study II: 25 Truss Bar Design (Continuous) Problem

Optimization Results

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Function | Name | Range | fmin |
|---|---|---|---|
| | Sphere | | 0 |
| | Schwefel 2.22 | | 0 |
| | Schwefel 1.2 | | 0 |
| | Schwefel 2.21 | | 0 |
| | Rosenbrock | | 0 |
| | Step | | 0 |
| | Quartic | | 0 |
| | Schwefel 2.26 | | |
| | Rastrigin | | 0 |
| | Ackley | | 0 |
| | Griewank | | 0 |
| | Penalized 1 | | 0 |
| | Penalized 2 | | 0 |
| | Ellipsoid | | 0 |
| | Shifted Rotated Rastrigin (F10 in [67]) | | |
| | Rotated Hybrid Composition (F16 in [67]) | | 120 |
| | Rotated Hybrid Composition (F19 in [67]) | | 10 |

| Test Function | Metric | GORS-SSLPSO | SAE-ARFS | SHPSO | TL-SSLPSO | TLSAPSO | SASMA |
|---|---|---|---|---|---|---|---|
| | Mean | | | | | | |
| F1 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F2 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F3 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F4 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F5 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F6 | St. Dev. | + | + | − | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F7 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F8 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F9 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F10 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F11 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F12 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F13 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F14 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F15 | St. Dev. | − | + | − | − | + | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F16 | St. Dev. | − | − | − | − | = | |
| | Min | | | | | | |
| | Mean | | | | | | |
| F17 | St. Dev. | + | + | + | + | + | |
| | Min | | | | | | |
| Win | | 15 | 16 | 14 | 15 | 16 | |
| Tie | | 0 | 0 | 0 | 0 | 1 | |
| Lose | | 2 | 1 | 3 | 2 | 0 | |

| Friedman Test (Mean Rank) | GORS-SSLPSO | SAE-ARFS | SHPSO | TL-SSLPSO | TLSAPSO | SASMA |
|---|---|---|---|---|---|---|
| F1 | 2.31 | 5.23 | 2.77 | 3.91 | 5.77 | 1.00 |
| F2 | 2.11 | 3.71 | 4.00 | 4.17 | 6.00 | 1.00 |
| F3 | 2.26 | 5.49 | 3.46 | 3.40 | 5.40 | 1.00 |
| F4 | 3.54 | 4.80 | 2.00 | 3.71 | 5.94 | 1.00 |
| F5 | 3.00 | 5.00 | 2.00 | 4.00 | 6.00 | 1.00 |
| F6 | 1.43 | 5.14 | 1.91 | 3.89 | 5.86 | 2.77 |
| F7 | 2.86 | 5.00 | 2.14 | 4.00 | 6.00 | 1.00 |
| F8 | 2.17 | 3.57 | 5.77 | 3.26 | 5.23 | 1.00 |
| F9 | 2.00 | 5.00 | 3.94 | 3.06 | 6.00 | 1.00 |
| F10 | 4.06 | 4.94 | 2.00 | 3.00 | 6.00 | 1.00 |
| F11 | 2.37 | 6.00 | 2.86 | 4.03 | 4.74 | 1.00 |
| F12 | 2.71 | 5.00 | 2.29 | 4.00 | 6.00 | 1.00 |
| F13 | 2.91 | 5.00 | 2.09 | 4.00 | 6.00 | 1.00 |
| F14 | 2.06 | 5.09 | 2.94 | 4.00 | 5.91 | 1.00 |
| F15 | 2.40 | 5.20 | 1.06 | 2.54 | 5.31 | 4.49 |
| F16 | 3.09 | 4.00 | 1.06 | 2.00 | 5.34 | 5.51 |
| F17 | 4.63 | 4.74 | 2.80 | 2.69 | 5.14 | 1.00 |

| Algorithm | Parameter Settings |
|---|---|
| PSO w/inertia factor | |
| PSO w/constriction factor | |
| WOA | |
| GWO | |
| GSA | |
| FPA | |
| BA | |
| GSK | |
| SASMA | |

Appendix B

References

1. Liu, W.; Wang, J. Recursive elimination current algorithms and a distributed computing scheme to accelerate wrapper feature selection. Inf. Sci. 2022, 589, 636–654.
2. Ozbay, F.A.; Alatas, B. A Novel Approach for Detection of Fake News on Social Media Using Metaheuristic Optimization Algorithms. Elektron. Elektrotech. 2019, 25, 62–67.
3. Yang, X.S. Nature-Inspired Optimization Algorithms, 2nd ed.; Academic Press: Cambridge, MA, USA, 2020; pp. 1–310.
4. Molina, D.; LaTorre, A.; Herrera, F. An Insight into Bio-inspired and Evolutionary Algorithms for Global Optimization: Review, Analysis, and Lessons Learnt over a Decade of Competitions. Cogn. Comput. 2018, 10, 517–544.
5. Gutjahr, W.J.; Montemanni, R. Stochastic Search in Metaheuristics. Int. Ser. Oper. Res. Manag. Sci. 2019, 272, 513–540.
6. Kennedy, J.; Eberhart, R. Particle swarm optimization. In Proceedings of ICNN’95—International Conference on Neural Networks 1995; IEEE: Piscataway, NJ, USA, 1995; Volume 4, pp. 1942–1948.
7. Yang, X.S.; Deb, S. Cuckoo search: Recent advances and applications. Neural Comput. Appl. 2014, 24, 169–174.
8. Das, S.; Mullick, S.S.; Suganthan, P.N. Recent advances in differential evolution—An updated survey. Swarm Evol. Comput. 2016, 27, 1–30.
9. Mirjalili, S.; Lewis, A. The Whale Optimization Algorithm. Adv. Eng. Softw. 2016, 95, 51–67.
10. Li, Y.; Lin, X.; Liu, J. An improved gray wolf optimization algorithm to solve engineering problems. Sustainability 2021, 13, 3208.
11. Piotrowski, A.P.; Napiorkowski, J.J.; Piotrowska, A.E. Population size in Particle Swarm Optimization. Swarm Evol. Comput. 2020, 58, 100718.
12. Morales-Castañeda, B.; Zaldívar, D.; Cuevas, E.; Fausto, F.; Rodríguez, A. A better balance in metaheuristic algorithms: Does it exist? Swarm Evol. Comput. 2020, 54, 100671.
13. Sun, C.; Jin, Y.; Zeng, J.; Yu, Y. A two-layer surrogate-assisted particle swarm optimization algorithm. Soft Comput. 2015, 19, 1461–1475.
14. Tang, Z.; Xu, L.; Luo, S. Adaptive dynamic surrogate-assisted evolutionary computation for high-fidelity optimization in engineering. Appl. Soft Comput. 2022, 127, 109333.
15. Srithapon, C.; Fuangfoo, P.; Ghosh, P.K.; Siritaratiwat, A.; Chatthaworn, R. Surrogate-Assisted Multi-Objective Probabilistic Optimal Power Flow for Distribution Network with Photovoltaic Generation and Electric Vehicles. IEEE Access 2021, 9, 34395–34414.
16. Tong, H.; Huang, C.; Minku, L.L.; Yao, X. Surrogate models in evolutionary single-objective optimization: A new taxonomy and experimental study. Inf. Sci. 2021, 562, 414–437.
17. Li, F.; Li, Y.; Cai, X.; Gao, L. A surrogate-assisted hybrid swarm optimization algorithm for high-dimensional computationally expensive problems. Swarm Evol. Comput. 2022, 72, 101096.
18. Zhou, Z.; Ong, Y.S.; Nguyen, M.H.; Lim, D. A study on polynomial regression and Gaussian Process global surrogate model in hierarchical surrogate-assisted evolutionary algorithm. In 2005 IEEE Congress on Evolutionary Computation; IEEE: Piscataway, NJ, USA, 2005; Volume 3, pp. 2832–2839.
19. Han, D.; Du, W.; Wang, X.; Du, W. A surrogate-assisted evolutionary algorithm for expensive many-objective optimization in the refining process. Swarm Evol. Comput. 2022, 69, 100988.
20. Liu, Q.; Jin, Y.; Heiderich, M.; Rodemann, T. Surrogate-assisted evolutionary optimization of expensive many-objective irregular problems. Knowl.-Based Syst. 2022, 240, 108197.
21. Tian, J.; Sun, C.; Tan, Y.; Zeng, J. Granularity-based surrogate-assisted particle swarm optimization for high-dimensional expensive optimization. Knowl.-Based Syst. 2020, 187, 104815.
22. Clarke, S.M.; Griebsch, J.H.; Simpson, T.W. Analysis of support vector regression for approximation of complex engineering analyses. J. Mech. Des. Trans. ASME 2005, 127, 1077–1087.
23. Volz, V.; Rudolph, G.; Naujoks, B. Investigating uncertainty propagation in surrogate-assisted evolutionary algorithms. In GECCO ’17: Proceedings of the Genetic and Evolutionary Computation Conference; Association for Computing Machinery, Inc.: New York, NY, USA, 2017; Volume 8, pp. 881–888.
24. Liu, J.; Wang, Y.; Sun, G.; Pang, T. Multisurrogate-Assisted Ant Colony Optimization for Expensive Optimization Problems With Continuous and Categorical Variables. IEEE Trans. Cybern. 2021, 52, 11348–11361.
25. Cai, X.; Qiu, H.; Gao, L.; Jiang, C.; Shao, X. An efficient surrogate-assisted particle swarm optimization algorithm for high-dimensional expensive problems. Knowl.-Based Syst. 2019, 184, 104901.
26. Eason, J.; Cremaschi, S. Adaptive sequential sampling for surrogate model generation with artificial neural networks. Comput. Chem. Eng. 2014, 68, 220–232.
27. Zhang, T.; Li, F.; Zhao, X.; Qi, W.; Liu, T. A Convolutional Neural Network-Based Surrogate Model for Multi-objective Optimization Evolutionary Algorithm Based on Decomposition. Swarm Evol. Comput. 2022, 72, 101081.
28. Wang, W.; Liu, H.L.; Tan, K.C. A Surrogate-Assisted Differential Evolution Algorithm for High-Dimensional Expensive Optimization Problems. IEEE Trans. Cybern. 2022, 53, 2685–2697.
29. Chen, C.; Wang, X.; Dong, H.; Wang, P. Surrogate-assisted hierarchical learning water cycle algorithm for high-dimensional expensive optimization. Swarm Evol. Comput. 2022, 75, 101169.
30. Yu, M.; Liang, J.; Zhao, K.; Wu, Z. An aRBF surrogate-assisted neighborhood field optimizer for expensive problems. Swarm Evol. Comput. 2022, 68, 100972.
31. Liu, Y.; Liu, J.; Tan, S. Decision space partition based surrogate-assisted evolutionary algorithm for expensive optimization. Expert Syst. Appl. 2023, 214, 119075.
32. Chu, S.C.; Du, Z.G.; Peng, Y.J.; Pan, J.S. Fuzzy Hierarchical Surrogate Assists Probabilistic Particle Swarm Optimization for expensive high dimensional problem. Knowl.-Based Syst. 2021, 220, 106939.
33. Li, H.; Chen, L.; Zhang, J.; Li, M. A Multi-Surrogate Assisted Multi-Tasking Optimization Algorithm for High-Dimensional Expensive Problems. Algorithms 2025, 18, 4.
34. Pan, J.S.; Zhang, L.G.; Chu, S.C.; Shieh, C.S.; Watada, J. Surrogate-Assisted Hybrid Meta-Heuristic Algorithm with an Add-Point Strategy for a Wireless Sensor Network. Entropy 2023, 25, 317.
35. Chen, W.; Dong, H.; Wang, P.; Wang, X. Surrogate-assisted global transfer optimization based on adaptive sampling strategy. Adv. Eng. Inform. 2023, 56, 101914.
36. Younis, A.; Dong, Z. High-Fidelity Surrogate Based Multi-Objective Optimization Algorithm. Algorithms 2022, 15, 279.
37. Dong, H.; Wang, P.; Yu, X.; Song, B. Surrogate-assisted teaching-learning-based optimization for high-dimensional and computationally expensive problems. Appl. Soft Comput. 2021, 99, 106934.
38. Chen, G.; Zhang, K.; Xue, X.; Zhang, L.; Yao, C.; Wang, J.; Yao, J. A radial basis function surrogate model assisted evolutionary algorithm for high-dimensional expensive optimization problems. Appl. Soft Comput. 2022, 116, 108353.
39. Yu, H.; Tan, Y.; Sun, C.; Zeng, J. A generation-based optimal restart strategy for surrogate-assisted social learning particle swarm optimization. Knowl.-Based Syst. 2019, 163, 14–25.
40. Yu, H.; Tan, Y.; Zeng, J.; Sun, C.; Jin, Y. Surrogate-assisted hierarchical particle swarm optimization. Inf. Sci. 2018, 454–455, 59–72.
41. Hu, P.; Pan, J.S.; Chu, S.C.; Sun, C. Multi-surrogate assisted binary particle swarm optimization algorithm and its application for feature selection. Appl. Soft Comput. 2022, 121, 108736.
42. Dong, H.; Li, X.; Yang, Z.; Gao, L.; Lu, Y. A two-layer surrogate-assisted differential evolution with better and nearest option for optimizing the spring of hydraulic series elastic actuator. Appl. Soft Comput. 2021, 100, 107001.
43. Ji, X.; Zhang, Y.; Gong, D.; Sun, X. Dual-Surrogate-Assisted Cooperative Particle Swarm Optimization for Expensive Multimodal Problems. IEEE Trans. Evol. Comput. 2021, 25, 794–808.
44. Li, F.; Shen, W.; Cai, X.; Gao, L.; Wang, G.G. A fast surrogate-assisted particle swarm optimization algorithm for computationally expensive problems. Appl. Soft Comput. 2020, 92, 106303.
45. Zhao, F.; Zhang, H.; Wang, L.; Ma, R.; Xu, T.; Zhu, N.; Jonrinaldi. A surrogate-assisted Jaya algorithm based on optimal directional guidance and historical learning mechanism. Eng. Appl. Artif. Intell. 2022, 111, 104775.
46. Pan, J.S.; Liu, N.; Chu, S.C.; Lai, T. An efficient surrogate-assisted hybrid optimization algorithm for expensive optimization problems. Inf. Sci. 2021, 561, 304–325.
47. Loshchilov, I. Surrogate-Assisted Evolutionary Algorithms. Ph.D. Thesis, Université Paris Sud–Paris XI, Paris, France, 2013.
48. Dong, H.; Dong, Z. Surrogate-assisted grey wolf optimization for high-dimensional, computationally expensive black-box problems. Swarm Evol. Comput. 2020, 57, 100713.
49. Dash, C.S.K.; Behera, A.K.; Dehuri, S.; Cho, S.B. Radial basis function neural networks: A topical state-of-the-art survey. Open Comput. Sci. 2016, 6, 33–63.
50. Cavoretto, R.; Rossi, A.D.; Mukhametzhanov, M.S.; Sergeyev, Y.D. On the search of the shape parameter in radial basis functions using univariate global optimization methods. J. Glob. Optim. 2021, 79, 305–327.
51. Zendehboudi, A.; Saidur, R.; Mahbubul, I.M.; Hosseini, S.H. Data-driven methods for estimating the effective thermal conductivity of nanofluids: A comprehensive review. Int. J. Heat Mass Transf. 2019, 131, 1211–1231.
52. Bagheri, S.; Konen, W.; Bäck, T. Comparing Kriging and Radial Basis Function Surrogates. In Proceedings of the 27. Workshop Computational Intelligence; Hoffmann, F., Huellermeier, E., Mikut, R., Eds.; Scientific Publishing: Singapore, 2017; pp. 243–259.
53. Vahabli, E.; Rahmati, S. Application of an RBF neural network for FDM parts’ surface roughness prediction for enhancing surface quality. Int. J. Precis. Eng. Manuf. 2016, 17, 1589–1603.
54. Demuth, H.B.; Beale, M.H.; Jess, O.D.; Hagan, M.T. Neural Network Design, 2nd ed.; Martin Hagan: Cramlington, UK, 2014; p. 800.
55. Jawad, J.; Hawari, A.H.; Javaid Zaidi, S. Artificial neural network modeling of wastewater treatment and desalination using membrane processes: A review. Chem. Eng. J. 2021, 419, 129540.
56. Bornatico, R.; Hüssy, J.; Witzig, A.; Guzzella, L. Surrogate modeling for the fast optimization of energy systems. Energy 2013, 57, 653–662.
57. Regis, R.G. Multi-objective constrained black-box optimization using radial basis function surrogates. J. Comput. Sci. 2016, 16, 140–155.
58. Cavoretto, R.; Rossi, A.D.; Lancellotti, S. Bayesian approach for radial kernel parameter tuning. J. Comput. Appl. Math. 2024, 441, 115716.
59. Li, S.; Chen, H.; Wang, M.; Heidari, A.A.; Mirjalili, S. Slime mould algorithm: A new method for stochastic optimization. Future Gener. Comput. Syst. 2020, 111, 300–323.
60. Clerc, M. Confinements and Biases in Particle Swarm Optimization; HAL Open Science: Villeurbanne, France, 2006.
61. Regis, R.G.; Shoemaker, C.A. A Stochastic Radial Basis Function Method for the Global Optimization of Expensive Functions. Informs J. Comput. 2007, 19, 497–509.
62. Fu, G.; Sun, C.; Tan, Y.; Zhang, G.; Jin, Y. A surrogate-assisted evolutionary algorithm with random feature selection for large-scale expensive problems. In Parallel Problem Solving from Nature—PPSN XVI; Lecture Notes in Computer Science (Including Subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics); Springer: Cham, Switzerland, 2020; Volume 12269, pp. 125–139.
63. Yu, H.; Kang, L.; Tan, Y.; Sun, C.; Zeng, J. Truncation-learning-driven surrogate assisted social learning particle swarm optimization for computationally expensive problem. Appl. Soft Comput. 2020, 97, 106812.
64. Wang, H.; Jin, Y.; Doherty, J. Committee-Based Active Learning for Surrogate-Assisted Particle Swarm Optimization of Expensive Problems. IEEE Trans. Cybern. 2017, 47, 2664–2677.
65. Bento, P. SASMA Benchmark Code. 2023. Available online: https://gitfront.io/r/pedrobento/hWud6E83nsJt/SASMAsims/ (accessed on 11 January 2026).
66. Kassoul, K.; Zufferey, N.; Cheikhrouhou, N.; Belhaouari, S.B. Exponential Particle Swarm Optimization for Global Optimization. IEEE Access 2022, 10, 78320–78344.
67. Suganthan, P.N.; Hansen, N.; Liang, J.J.; Deb, K.; Chen, Y.P.; Auger, A.; Tiwari, S. Problem Definitions and Evaluation Criteria for the CEC 2005 Special Session on Real-Parameter Optimization; Technical Report January; Nanyang Technological University: Singapore, 2005.
68. Ma, J.; Li, H. Research on Rosenbrock Function Optimization Problem Based on Improved Differential Evolution Algorithm. J. Comput. Commun. 2019, 7, 107–120.
69. Guo, Z.; Huang, H.; Deng, C.; Yue, X.; Wu, Z. An Enhanced Differential Evolution with Elite Chaotic Local Search. Comput. Intell. Neurosci. 2015, 2015, 583759.
70. Bala, I.; Yadav, A. Gravitational Search Algorithm: A State-of-the-Art Review. In Proceedings of the Harmony Search and Nature Inspired Optimization Algorithms; Yadav, N., Yadav, A., Bansal, J.C., Deep, K., Kim, J.H., Eds.; Springer: Singapore, 2019; pp. 27–37.
71. Abdel-Basset, M.; Shawky, L.A. Flower pollination algorithm: A comprehensive review. Artif. Intell. Rev. 2019, 52, 2533–2557.
72. Fister, I.; Fister, I.; Yang, X.S.; Fong, S.; Zhuang, Y. Bat algorithm: Recent advances. In Proceedings of the 2014 IEEE 15th International Symposium on Computational Intelligence and Informatics (CINTI); IEEE: Piscataway, NJ, USA, 2014; pp. 163–167.
73. Mohamed, A.W.; Abutarboush, H.F.; Hadi, A.A.; Mohamed, A.K. Gaining-Sharing Knowledge Based Algorithm with Adaptive Parameters for Engineering Optimization. IEEE Access 2021, 9, 65934–65946.
74. Camp, C.V.; Farshchin, M. Design of space trusses using modified teaching–learning based optimization. Eng. Struct. 2014, 62–63, 87–97.
75. Ghannadiasl, A.; Zarbilinezhad, M. CGO and SNS Optimization Algorithm for the Structures with Discontinuous and Continuous Variables. Comput. Intell. Neurosci. 2022, 2022, 4211707.

| Algorithm | Parameter Settings |
|---|---|
| TLSAPSO | |
| SAE-ARFS | |
| SHPSO | |
| TL-SSLPSO | |
| CALSAPSO | |
| GORS-SSLPSO | |
| SASMA (SMA) | |

| Function No. | Function Name | fmin | Properties |
|---|---|---|---|
| F1 | Sphere | 0 | Unimodal |
| F2 | Schwefel 2.22 | 0 | Unimodal |
| F3 | Schwefel 1.2 | 0 | Unimodal |
| F4 | Schwefel 2.21 | 0 | Unimodal |
| F5 | Rosenbrock | 0 | Multimodal with narrow valley |
| F6 | Step | 0 | Unimodal |
| F7 | Quartic | 0 | Unimodal |
| F8 | Schwefel 2.26 | | Multimodal |
| F9 | Rastrigin | 0 | Very complicated multimodal |
| F10 | Ackley | 0 | Multimodal |
| F11 | Griewank | 0 | Multimodal |
| F12 | Penalized (1) | 0 | Multimodal |
| F13 | Penalized (2) | 0 | Multimodal |
| F14 | Ellipsoid | 0 | Unimodal |
| F15 | Shifted Rotated Rastrigin (F10 in [67]) | −330 | Very complicated multimodal |
| F16 | Rotated Hybrid Composition (F16 in [67]) | 120 | Very complicated multimodal |
| F17 | Rotated Hybrid Composition (F19 in [67]) | 10 | Very complicated multimodal |

| Test Function | Metric | CALSAPSO | GORS-SSLPSO | SAE-ARFS | SHPSO | TL-SSLPSO | TLSAPSO | SASMA |
|---|---|---|---|---|---|---|---|---|
| | Mean | | | | | | | |
| F1 | St. Dev. | + | − | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F2 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F3 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F4 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F5 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | 6.372 × 10⁰ |
| F6 | St. Dev. | + | − | + | + | + | + | 1.214 × 10⁰ |
| | Min | | | | | | | 5.842 × 10⁻⁴ |
| | Mean | | | | | | | |
| F7 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F8 | St. Dev. | + | + | + | + | + | + | 2.298 × 10³ |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F9 | St. Dev. | + | + | + | + | + | + | 2.745 × 10¹ |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F10 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F11 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F12 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F13 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F14 | St. Dev. | + | + | + | + | + | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F15 | St. Dev. | − | − | = | − | − | = | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F16 | St. Dev. | + | − | = | − | − | + | |
| | Min | | | | | | | |
| | Mean | | | | | | | |
| F17 | St. Dev. | + | = | + | = | = | + | |
| | Min | | | | | | | |
| Win | | 16 | 12 | 15 | 14 | 14 | 16 | |
| Tie | | 0 | 1 | 2 | 1 | 1 | 1 | |
| Lose | | 1 | 4 | 0 | 2 | 2 | 0 | |
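The Win, Tie and Lose rows of the table above are consistent with plain column tallies of the +, = and − marks over F1 to F17. The short Python check below reproduces them; the mark strings are transcribed from the table, while the mapping of "+", "=" and "−" to Win, Tie and Lose is an assumption inferred from the counts, since the table's legend is not available here. The dictionary name `MARKS` and the helper `tally` are illustrative only.

```python
from collections import Counter

# Marks for F1..F17, read column by column from the table above.
# ASCII "-" stands in for the table's minus sign.
# Assumption: "+", "=", "-" correspond to the Win, Tie, Lose rows.
MARKS = {
    "CALSAPSO": "+" * 14 + "-++",
    "GORS-SSLPSO": "-" + "+" * 4 + "-" + "+" * 8 + "--=",
    "SAE-ARFS": "+" * 14 + "==+",
    "SHPSO": "+" * 14 + "--=",
    "TL-SSLPSO": "+" * 14 + "--=",
    "TLSAPSO": "+" * 14 + "=++",
}


def tally(marks):
    """Return (win, tie, lose) counts for one column of marks."""
    counts = Counter(marks)
    return counts["+"], counts["="], counts["-"]


for name, marks in MARKS.items():
    assert len(marks) == 17  # one mark per test function
    print(name, tally(marks))
```

Each printed triple can be compared directly against the corresponding column of the Win, Tie and Lose rows.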

| Friedman Test (Mean Rank) | CALSAPSO | GORS-SSLPSO | SAE-ARFS | SHPSO | TL-SSLPSO | TLSAPSO | SASMA |
|---|---|---|---|---|---|---|---|
| F1 | 4.23 | 1.46 | 6.49 | 3.43 | 4.34 | 6.51 | 1.54 |
| F2 | 7.00 | 3.94 | 4.54 | 3.46 | 2.23 | 5.83 | 1.00 |
| F3 | 6.97 | 2.31 | 5.74 | 4.06 | 2.83 | 5.09 | 1.00 |
| F4 | 6.57 | 3.71 | 6.23 | 2.23 | 3.11 | 5.14 | 1.00 |
| F5 | 4.83 | 3.86 | 6.00 | 2.83 | 2.49 | 7.00 | 1.00 |
| F6 | 4.29 | 1.00 | 6.29 | 3.46 | 3.97 | 6.71 | 2.29 |
| F7 | 4.17 | 3.40 | 6.00 | 3.20 | 3.23 | 7.00 | 1.00 |
| F8 | 5.80 | 2.46 | 3.91 | 6.57 | 2.46 | 5.20 | 1.60 |
| F9 | 3.20 | 3.06 | 5.89 | 5.29 | 2.69 | 6.83 | 1.06 |
| F10 | 6.71 | 4.06 | 4.63 | 2.94 | 2.46 | 6.20 | 1.00 |
| F11 | 4.66 | 2.11 | 7.00 | 3.17 | 4.11 | 5.94 | 1.00 |
| F12 | 4.20 | 3.71 | 6.11 | 2.60 | 3.49 | 6.89 | 1.00 |
| F13 | 4.54 | 4.11 | 6.06 | 2.40 | 2.94 | 6.94 | 1.00 |
| F14 | 3.77 | 2.20 | 6.26 | 4.03 | 4.00 | 6.74 | 1.00 |
| F15 | 4.49 | 1.97 | 5.34 | 2.83 | 1.20 | 6.40 | 5.77 |
| F16 | 5.77 | 3.26 | 4.54 | 2.31 | 1.86 | 5.46 | 4.80 |
| F17 | 5.94 | 3.11 | 5.63 | 2.97 | 2.34 | 5.66 | 2.34 |
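Each row of the Friedman table above sums to roughly 28, which is k(k+1)/2 for k = 7 algorithms, as expected when every independent run ranks the algorithms from 1 (best) to k and the ranks are then averaged over runs. The sketch below illustrates that computation in plain Python. It is not the authors' code: the function names, the tie handling (average ranks) and the demo values are assumptions made for illustration, and the number of runs behind the table is not stated in this section.

```python
def average_ranks(values):
    """Rank values ascending (1 = best); tied values share the average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the block of entries tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def friedman_mean_ranks(results):
    """results[r][a]: final objective of algorithm a in run r (lower is better)."""
    per_run = [average_ranks(run) for run in results]
    n_runs = len(per_run)
    return [sum(col) / n_runs for col in zip(*per_run)]


# Made-up objective values: 3 runs of 3 hypothetical algorithms.
demo = [
    [0.10, 0.50, 0.30],
    [0.20, 0.40, 0.40],
    [0.05, 0.90, 0.60],
]
mean_ranks = friedman_mean_ranks(demo)
print(mean_ranks)
```

With three algorithms the mean ranks sum to 3 · 4 / 2 = 6, mirroring the row sums of the table; an algorithm that is best in every run, like the first column of `demo`, receives a mean rank of exactly 1.00.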

| Algorithm | Mean | STD | Min | Mean Rank | Signed Rank |
|---|---|---|---|---|---|
| PSO w/inertia | | | | 4.80 | + |
| PSO w/constr | | | | 4.11 | + |
| WOA | | | | 8.97 | + |
| GWO | | | | 4.83 | + |
| GSA | | | | 7.43 | + |
| FPA | | | | 6.60 | + |
| BA | | | | 9.97 | + |
| GSK | | | | 1.00 | − |
| SMA | | | | 4.17 | + |
| SASMA | | | | 3.11 | + |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Bento, P.; Pombo, J.; Nunes, H.; Calado, M.; Mariano, S. Surrogate-Assisted Slime Mould Algorithm Considering a Dual-Based Merit Criterion for Global Database Management. Algorithms 2026, 19, 265. https://doi.org/10.3390/a19040265
