Uncovering Several Degrees of Anxiety in Mexican Students Through Advanced Deep Learning Techniques

Abstract

1. Introduction

2. Related Works

3. Methodology

3.1. Dataset Creation: Anxiety Dataset

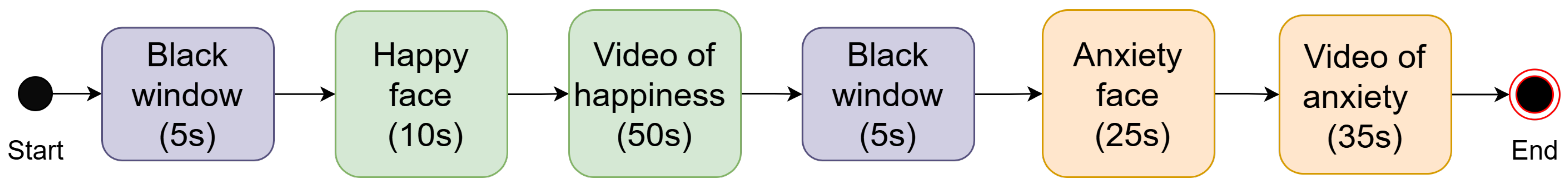

3.1.1. Experimental Protocol and Videotaping

- Requiring glasses for close vision during the test. We considered that glasses could obscure relevant facial gestures or introduce image distortions.

- An inability to tie hair away from the face. Loose hair or hair that wholly or partially covers the face may prevent the correct detection of facial gestures.

3.1.2. Labeling

3.2. Preprocessing

3.3. Model Training: AnxietyNet

$$\sigma(z)_i = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}$$

- $z_i$ represents the input value (or logit) for the i-th class.

- $e^{z_i}$ is the exponential of the input value for the i-th class.

- $\sum_{j=1}^{K} e^{z_j}$ represents the sum of the exponentials of all K input values (logits), which normalizes the result.

- $\sigma(z)_i$ is the probability of the i-th class, with all probabilities summing to 1.

$$L = -\frac{1}{N}\sum_{i=1}^{N}\sum_{c=1}^{K} y_{i,c}\,\log\left(\hat{y}_{i,c}\right)$$

- $L$ is the average categorical cross-entropy loss over N samples.

- $N$ is the number of samples in the batch.

- $y_{i,c}$ is a binary indicator (0 or 1) of whether class c is the correct classification for sample i.

- $\hat{y}_{i,c}$ is the predicted probability of sample i belonging to class c.
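The softmax output and the categorical cross-entropy loss described above can be illustrated with a minimal, dependency-free sketch. It is not the authors' training code: the function names, the example logits, and the `eps` clipping constant are assumptions made only for illustration.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Turn K logits into K probabilities that sum to 1."""
    # Subtracting the maximum leaves the result unchanged but avoids overflow in exp.
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(
    y_true: Sequence[Sequence[int]],
    y_pred: Sequence[Sequence[float]],
    eps: float = 1e-12,
) -> float:
    """Average categorical cross-entropy over the N samples of a batch.

    y_true holds one-hot rows (N x K); y_pred holds probability rows (N x K).
    """
    losses = []
    for targets, probs in zip(y_true, y_pred):
        # Only the term of the correct class is non-zero; eps guards against log(0).
        losses.append(-sum(t * math.log(max(p, eps)) for t, p in zip(targets, probs)))
    return sum(losses) / len(losses)


# Hypothetical batch: N = 2 samples, K = 5 classes.
logits = [
    [2.0, 1.0, 0.1, -1.0, 0.5],
    [0.0, 0.0, 0.0, 0.0, 0.0],
]
y_true = [
    [1, 0, 0, 0, 0],
    [0, 0, 0, 0, 1],
]

probs = [softmax(row) for row in logits]
loss = categorical_cross_entropy(y_true, probs)
print(probs, loss)
```

In the second sample all logits are equal, so every class receives probability 1/K and that sample contributes a loss of log K, which is a convenient sanity check for an untrained classifier.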

4. Results

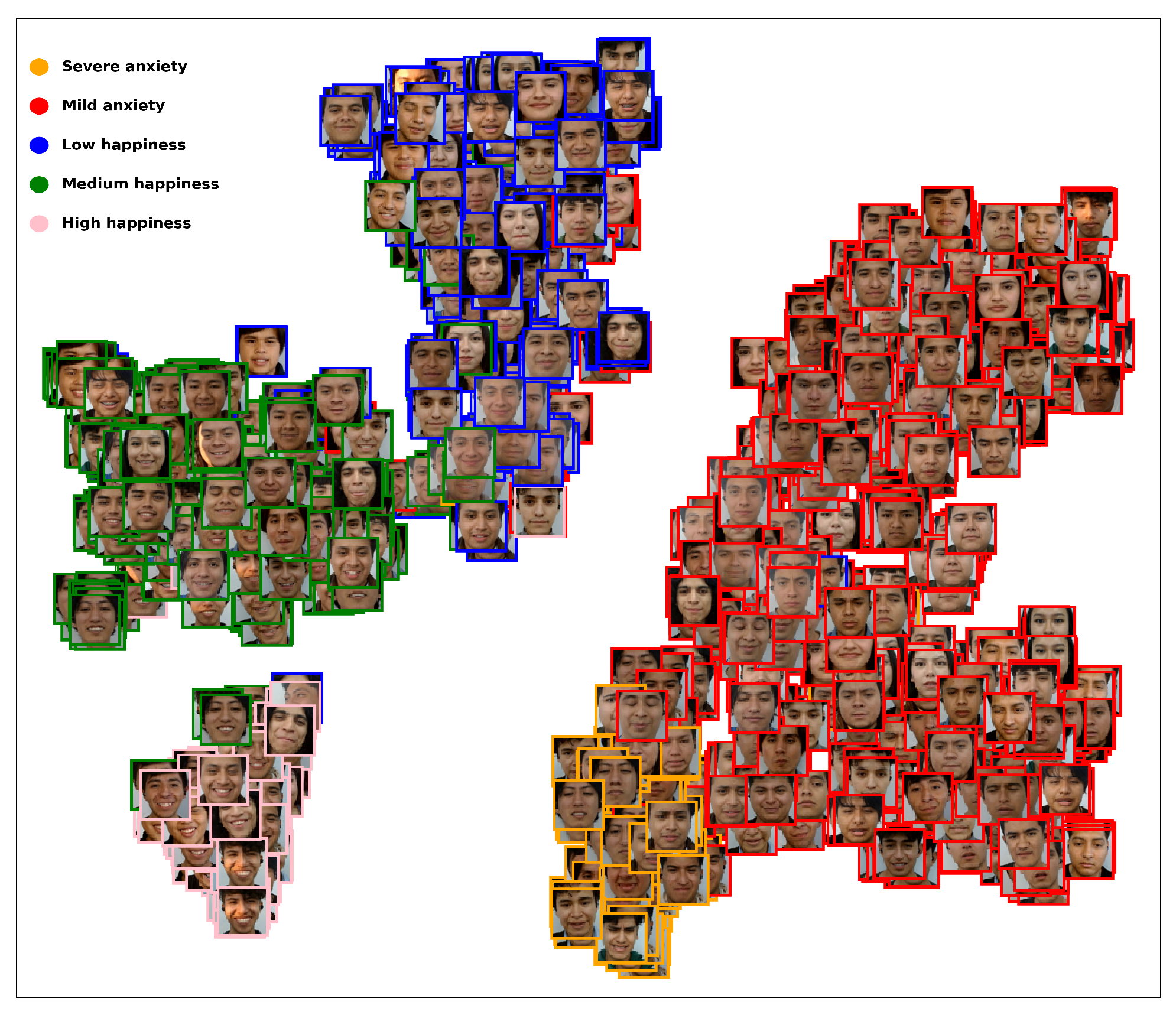

4.1. Labeling Assignments

- Initial round: This round comprised 11 labeling levels, ranging from −5 (intense happiness) to +5 (intense anxiety); the labelers’ consensus was then used to determine the final label for each image. Result: We observed a high concentration of data in a few categories and very little in the rest, creating a significant dataset imbalance that may bias the model toward the majority categories and reduce its ability to detect minority emotions. We therefore discarded these labels.

- Second round: The number of levels was reduced to seven, as shown in Figure 6.

- Third round: The number of levels was reduced to six. In this version, we merged class 5 (medium anxiety) with class 6 (high anxiety) and renamed the merged class as class 5 (severe anxiety). Therefore, we used the first four labels in purple and the label in blue in Figure 7.

- Final round: We reduced the labels to five classes. We kept the previous four purple labels (high happiness, medium happiness, low happiness, and neutral) and combined purple class 4 (low anxiety) with the new blue class 5 (severe anxiety), yielding the new green class 4 (anxiety), as seen in Figure 7.
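The class merges in the third and final rounds amount to two label remappings, sketched below. The zero-based class indices, the order of classes 0 to 3, the dictionary and function names, and the sample labels are all assumptions for illustration; only the merges themselves (5 and 6 into 5, then 4 and 5 into 4) come from the text.

```python
from collections import Counter
from typing import Dict, List, Sequence

# Seven levels -> six levels: class 6 (high anxiety) joins class 5 (medium anxiety),
# and the merged class 5 is renamed "severe anxiety".
SEVEN_TO_SIX: Dict[int, int] = {0: 0, 1: 1, 2: 2, 3: 3, 4: 4, 5: 5, 6: 5}

# Six levels -> five levels: class 5 (severe anxiety) joins class 4 (low anxiety),
# and the merged class 4 is renamed "anxiety".
SIX_TO_FIVE: Dict[int, int] = {0: 0, 1: 1, 2: 2, 3: 3, 4: 4, 5: 4}

# Assumed index-to-name assignment for the final five classes.
FIVE_CLASS_NAMES: Dict[int, str] = {
    0: "high happiness",
    1: "medium happiness",
    2: "low happiness",
    3: "neutral",
    4: "anxiety",
}


def remap(labels: Sequence[int], mapping: Dict[int, int]) -> List[int]:
    """Apply a class-merging map to a sequence of integer labels."""
    return [mapping[label] for label in labels]


# Hypothetical seven-level labels for ten samples.
seven_level = [0, 3, 3, 4, 5, 6, 6, 3, 2, 1]
six_level = remap(seven_level, SEVEN_TO_SIX)
five_level = remap(six_level, SIX_TO_FIVE)

# Counting samples per class shows how each merge changes the class balance.
counts = Counter(five_level)
for class_id in sorted(counts):
    print(FIVE_CLASS_NAMES[class_id], counts[class_id])
```

Keeping each round as an explicit mapping makes it easy to recompute the class distribution after every merge and to check whether the imbalance noted in the initial round has been reduced.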

4.2. Testing Models

4.3. State-of-the-Art Comparison

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Cooray, S.E.; Bakala, A. Anxiety disorders in people with learning disabilities. Adv. Psychiatr. Treat. 2005, 11, 355–361.

- Liu, W.; Zhang, R.; Wang, H.; Rule, A.; Wang, M.; Abbey, C.; Singh, M.K.; Rozelle, S.; She, X.; Tong, L. Association between anxiety, depression symptoms, and academic burnout among Chinese students: The mediating role of resilience and self-efficacy. BMC Psychol. 2024, 12, 335.

- Lizarte Simón, E.J.; Gijón Puerta, J.; Galván Malagón, M.C.; Khaled Gijón, M. Influence of self-efficacy, anxiety and psychological well-being on academic engagement during university education. Educ. Sci. 2024, 14, 1367.

- Ghattas, A.H.S.; El-Ashry, A.M. Perceived academic anxiety and procrastination among emergency nursing students: The mediating role of cognitive emotion regulation. BMC Nurs. 2024, 23, 670.

- Rastogi, S.; Gupta, S.; Deepak, D.; Mishra, B.N.; Gore, R.; Singh, V. A Systematic Literature Review on Anxiety Among Undergraduate Students: Causes and Coping Strategies. Ann. Neurosci. 2025, 09727531251366078.

- Silva-Ramos, M.F.; López-Cocotle, J.J.; Meza-Zamora, M.E.C. Estrés académico en estudiantes universitarios. Investig. Cienc. 2020, 28, 75–83.

- Spitzer, R.L.; Kroenke, K.; Williams, J.B.W.; Löwe, B. A brief measure for assessing generalized anxiety disorder: The GAD-7. Arch. Intern. Med. 2006, 166, 1092–1097.

- Lovibond, P.F.; Lovibond, S.H. The structure of negative emotional states: Comparison of the Depression Anxiety Stress Scales (DASS) with the Beck Depression and Anxiety Inventories. Behav. Res. Ther. 1995, 33, 335–343.

- Ahmed, I.; Hazell, C.M.; Edwards, B.; Glazebrook, C.; Davies, E.B. A systematic review and meta-analysis of studies exploring prevalence of non-specific anxiety in undergraduate university students. BMC Psychiatry 2023, 23, 240.

- Zhang, H.; Feng, L.; Li, N.; Jin, Z.; Cao, L. Video-based stress detection through deep learning. Sensors 2020, 20, 5552.

- Ding, D.; Xu, W.; Liu, X.; Zhu, T. Facial video based stress detection for enhancing ecological validity. Acta Psychol. 2025, 255, 104877.

- Singh, A.; Kumar, D. Computer assisted identification of stress, anxiety, depression (SAD) in students: A state-of-the-art review. Med. Eng. Phys. 2022, 110, 103900.

- Simonyan, K.; Zisserman, A. Two-stream convolutional networks for action recognition in videos. Adv. Neural Inf. Process. Syst. 2014, 27. Available online: https://proceedings.neurips.cc/paper_files/paper/2014/file/ca007296a63f7d1721a2399d56363022-Paper.pdf (accessed on 5 January 2026).

- Tran, D.; Bourdev, L.; Fergus, R.; Torresani, L.; Paluri, M. Learning spatiotemporal features with 3D convolutional networks. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 4489–4497.

- Donahue, J.; Anne Hendricks, L.; Guadarrama, S.; Rohrbach, M.; Venugopalan, S.; Saenko, K.; Darrell, T. Long-term recurrent convolutional networks for visual recognition and description. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 2625–2634.

- Arnab, A.; Dehghani, M.; Heigold, G.; Sun, C.; Lučić, M.; Schmid, C. ViViT: A video vision transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Virtual, 11–17 October 2021; pp. 6836–6846.

- Gavrilescu, M.; Vizireanu, N. Predicting depression, anxiety, and stress levels from videos using the facial action coding system. Sensors 2019, 19, 3693.

- Wang, C.; Liang, L.; Liu, X.; Lu, Y.; Shen, J.; Luo, H.; Xie, W. Multimodal fusion diagnosis of depression and anxiety based on face video. In Proceedings of the 2021 IEEE International Conference on Medical Imaging Physics and Engineering (ICMIPE), Hefei, China, 13–14 November 2021; pp. 1–7.

- Grimm, B.; Talbot, B.; Larsen, L. PHQ-V/GAD-V: Assessments to identify signals of depression and anxiety from patient video responses. Appl. Sci. 2022, 12, 9150.

- Li, X.; Lu, L.; Yi, X.; Wang, H.; Zheng, Y.; Yu, Y.; Wang, Q. LI-FPN: Depression and anxiety detection from learning and imitation. In Proceedings of the 2023 IEEE International Conference on Bioinformatics and Biomedicine (BIBM), Istanbul, Turkey, 5–8 December 2023; pp. 567–573.

- Wu, J.; Chen, D.; Ren, Z.; Li, Y.; Li, H.; Liu, Z. Adv-FVMamba: Anxiety Disorders Recognition in Imbalanced Video Datasets Using Adversarial Entropy Loss. In Proceedings of the 2024 17th International Congress on Image and Signal Processing, BioMedical Engineering and Informatics (CISP-BMEI), Shanghai, China, 26–28 October 2024; pp. 1–6.

- Xu, X.; Zhang, X.; Zhang, Y. Faces of the Mind: Unveiling Mental Health States Through Facial Expressions in 11,427 Adolescents. arXiv 2024, arXiv:2405.20072.

- Lu, L.; Jiang, Y.; Li, X.; Wang, H.; Zou, Q.; Wang, Q. Depression and anxiety detection method based on serialized facial expression imitation. Eng. Appl. Artif. Intell. 2025, 149, 110354.

- Hernández Sampieri, R.; Fernández Collado, C.; Baptista Lucio, P. Metodología de la Investigación, 6th ed.; McGraw-Hill: Mexico City, Mexico, 2014.

- World Medical Association. World Medical Association Declaration of Helsinki: Ethical principles for medical research involving human participants. JAMA 2025, 333, 71–74.

- Hamilton, M. The assessment of anxiety states by rating. Br. J. Med. Psychol. 1959, 32, 50–55.

- Villarreal-Zegarra, D.; Paredes-Angeles, R.; Mayo-Puchoc, N.; Arenas-Minaya, E.; Huarcaya-Victoria, J.; Copez-Lonzoy, A. Psychometric properties of the GAD-7 (General Anxiety Disorder-7): A cross-sectional study of the Peruvian general population. BMC Psychol. 2024, 12, 183.

- Lugaresi, C.; Tang, J.; Nash, H.; McClanahan, C.; Uboweja, E.; Hays, M.; Zhang, F.; Chang, C.L.; Yong, M.G.; Lee, J.; et al. MediaPipe: A framework for building perception pipelines. arXiv 2019, arXiv:1906.08172.

- Wang, Y.; Yan, S.; Liu, Y.; Song, W.; Liu, J.; Chang, Y.; Mai, X.; Hu, X.; Zhang, W.; Gan, Z. A survey on facial expression recognition of static and dynamic emotions. arXiv 2024, arXiv:2408.15777.

- Ji, S.; Xu, W.; Yang, M.; Yu, K. 3D convolutional neural networks for human action recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 221–231.

- Huang, J.; Zhou, W.; Li, H.; Li, W. Attention-based 3D-CNNs for large-vocabulary sign language recognition. IEEE Trans. Circuits Syst. Video Technol. 2019, 29, 2822–2832.

- Montaha, S.; Azam, S.; Rafid, A.K.M.R.H.; Hasan, M.Z.; Karim, A.; Islam, A. TimeDistributed-CNN-LSTM: A hybrid approach combining CNN and LSTM to classify brain tumor on 3D MRI scans performing ablation study. IEEE Access 2022, 10, 60039–60059.

- Chowanda, A. Spatiotemporal features learning from song for emotions recognition with time distributed CNN. In Proceedings of the 2021 1st International Conference on Computer Science and Artificial Intelligence (ICCSAI), Jakarta, Indonesia, 28 October 2021.

- Joshi, A.; Kale, S.; Chandel, S.; Pal, D.K. Likert scale: Explored and explained. Br. J. Appl. Sci. Technol. 2015, 7, 396–403.

- Russell, J.A. A circumplex model of affect. J. Personal. Soc. Psychol. 1980, 39, 1161–1178.

- Hossin, M.; Sulaiman, M.N. A review on evaluation metrics for data classification evaluations. Int. J. Data Min. Knowl. Manag. Process 2015, 5, 1.

| Hyperparameter | Value |
|---|---|
| Epochs | 35 |
| Batch size | 8 |
| Learning rate | |
| Optimizer | Adam |
| Loss function | Categorical cross-entropy |

| Architecture | Accuracy | Precision | Recall | F1-Score | Specificity | Sensitivity |
|---|---|---|---|---|---|---|
| CNN3D *1 | 0.7996 | 0.8070 | 0.7096 | 0.7423 | 0.9416 | 0.7096 |
| TD-CNN *1 | 0.8071 | 0.7849 | 0.7340 | 0.7547 | 0.9443 | 0.7340 |
| CNN3D-ATT *1 | 0.7996 | 0.8112 | 0.7352 | 0.7620 | 0.9419 | 0.7352 |
| CNN3D *2 | 0.7041 | 0.7481 | 0.6900 | 0.7082 | 0.9374 | 0.6900 |
| TD-CNN *2 | 0.7340 | 0.7590 | 0.7368 | 0.7453 | 0.9443 | 0.7368 |
| CNN3D-ATT *2 | 0.7228 | 0.7416 | 0.7178 | 0.7261 | 0.9421 | 0.7178 |
| CNN3D *3 | 0.6947 | 0.6331 | 0.6242 | 0.6244 | 0.9458 | 0.6242 |
| TD-CNN *3 | 0.7078 | 0.6868 | 0.6420 | 0.6568 | 0.9476 | 0.6420 |
| CNN3D-ATT *3 | 0.7022 | 0.6970 | 0.6378 | 0.6588 | 0.9462 | 0.6378 |
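The metrics reported in the table above can all be derived from a multi-class confusion matrix. The sketch below shows one common way to do so, assuming macro-averaging over classes; the source does not state which averaging was used, and the function name and the 3 x 3 example matrix are hypothetical.

```python
from typing import Dict, List


def macro_metrics(cm: List[List[int]]) -> Dict[str, float]:
    """Compute macro-averaged metrics from a confusion matrix.

    cm[i][j] is the number of samples of true class i predicted as class j.
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    precisions, recalls, f1_scores, specificities = [], [], [], []
    for c in range(k):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                       # true class c, predicted otherwise
        fp = sum(cm[r][c] for r in range(k)) - tp  # predicted c, true class differs
        tn = total - tp - fn - fp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        specificity = tn / (tn + fp) if tn + fp else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1_scores.append(f1)
        specificities.append(specificity)
    macro_recall = sum(recalls) / k
    return {
        "accuracy": sum(cm[c][c] for c in range(k)) / total,
        "precision": sum(precisions) / k,
        "recall": macro_recall,
        "f1": sum(f1_scores) / k,
        "specificity": sum(specificities) / k,
        # Sensitivity is the true-positive rate, i.e., the same quantity as recall.
        "sensitivity": macro_recall,
    }


# Hypothetical confusion matrix for three classes (rows: true, columns: predicted).
cm = [
    [8, 1, 1],
    [2, 6, 2],
    [0, 1, 9],
]
metrics = macro_metrics(cm)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Because sensitivity and recall are the same quantity, the two columns carry identical values in every row of the table.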

| Architecture | Accuracy | Precision | Recall | F1-Score | Specificity | Sensitivity | MAE | RMSE | MSE |
|---|---|---|---|---|---|---|---|---|---|
| CNN3D-ATT *1 | 0.7996 | 0.8112 | 0.7352 | 0.7620 | 0.9419 | 0.7352 | 0.2134 | 0.4971 | 0.2471 |
| CNN3D-ATT *2 | 0.5224 | 0.4369 | 0.5265 | 0.44652 | 0.8758 | 0.5265 | 0.33472 | 0.4765 | 0.2271 |
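The error metrics in the last three columns can be sketched as follows. This assumes that MAE, MSE, and RMSE are computed on integer class indices, treating the classes as an ordered scale; the source does not describe the computation, so the function name, this convention, and the example labels are assumptions.

```python
import math
from typing import Dict, Sequence


def ordinal_errors(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """MAE, MSE, and RMSE between true and predicted class indices."""
    diffs = [pred - true for true, pred in zip(y_true, y_pred)]
    n = len(diffs)
    mae = sum(abs(d) for d in diffs) / n
    mse = sum(d * d for d in diffs) / n
    # RMSE is, by definition, the square root of MSE.
    return {"mae": mae, "mse": mse, "rmse": math.sqrt(mse)}


# Hypothetical labels on a five-class scale (0 to 4).
y_true = [0, 1, 2, 3, 4, 4, 3, 2]
y_pred = [0, 1, 2, 3, 4, 3, 3, 0]

errors = ordinal_errors(y_true, y_pred)
print(errors)
```

Unlike accuracy, these metrics penalize a prediction more the further it lands from the true class, so confusing neighboring levels costs less than confusing distant ones. The reported RMSE and MSE values are consistent with RMSE being the square root of MSE (for example, 0.4971 squared is approximately 0.2471).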

| Authors | Dataset | Emotions | Used Models/Levels of Intensity | Reported Metrics |
|---|---|---|---|---|
| Gavrilescu et al. (2019) [17] | FACS–DASS *1 | Depression, stress, anxiety | FDASSNN/5 levels | Accuracy = 77.9% |
| Wang et al. (2021) [18] | By authors *1 | Depression, anxiety, without disorders | CNN+LSTM/binary classification | Precision = 0.7208 |
| Grimm et al. (2022) [19] | By authors *1 | Anxiety | GAD-V/4 levels | AUC = 0.909 |
| Li et al. (2023) [20] | VFEM *1 | Depression, anxiety | ResNet-18/binary classification | F1-score = 0.82 *2 |
| Wu et al. (2024) [21] | QADAVB *1 | Anxiety | Adv-FVMamba/binary classification | F1-score = 0.751 *2 |
| Xu et al. (2024) [22] | FACES *1 | Depression, stress, anxiety | ML algorithms/binary classification | F1-score = 0.66 *2 |
| Lu et al. (2025) [23] | VFEM *1 | Depression, anxiety | SFE-Former/binary classification | F1-score = 0.882 *2 |
| AnxietyNet | Anxiety dataset *3 | Happiness, anxiety | CNN3D, TD-CNN, CNN3D + attention/7, 6, 5 levels | F1-score = 0.7620 *4 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Moreno-Armendáriz, M.A.; Lara-Cázares, A.; Castillo-González, J.; Galdo-Navarro, H.V. Uncovering Several Degrees of Anxiety in Mexican Students Through Advanced Deep Learning Techniques. Algorithms 2026, 19, 235. https://doi.org/10.3390/a19030235