1. Introduction

The state of health (SOH) of lithium-ion batteries serves as a fundamental indicator for energy optimization and safety warning in battery management systems (BMS) [1]. However, battery aging processes exhibit strong nonlinearity and time-varying characteristics. In practical operation, dynamic fluctuations in operating conditions, ambient temperature, and charge–discharge rates frequently induce distribution shifts in monitoring data, which leads to a pronounced degradation in the generalization performance of models trained under single operating conditions when deployed across different scenarios [2]. Consequently, enhancing the feature extraction capability and robustness of data-driven models under complex operating conditions remains a critical challenge in SOH estimation.

While data-driven approaches have made significant progress in SOH estimation, their evolution reflects a sequence of architectural attempts to balance local sensitivity, temporal dependency modeling, and cross-condition robustness. Early studies relied on handcrafted degradation indicators derived from voltage, differential voltage, and incremental capacity (IC) curves, which were then mapped to SOH using conventional regression models such as Gaussian Process Regression (GPR) [3], semi-supervised transfer component analysis [4], or interval prediction frameworks [5]. Although these feature-based approaches offered interpretability and relatively low computational complexity, their reliance on fixed feature representations made them sensitive to noise and inadequate for capturing the full nonlinear evolution of battery degradation under varying operating conditions.

To bypass the limitations of manual feature engineering, the field shifted toward deep learning, prominently utilizing Long Short-Term Memory (LSTM) architectures. LSTM-based models leveraged gated memory mechanisms to learn temporal degradation dynamics directly from sequential measurements [6,7,8]. Compared with static regression models, LSTMs improved adaptability to nonlinear aging processes. Subsequent enhancements incorporated attention mechanisms and convolutional front-ends to improve feature selectivity and local pattern extraction [9,10,11]. However, despite their ability to model short- and medium-range temporal dependencies, recurrent architectures remain constrained by sequential information flow, limited parallelization, and gradient attenuation over long sequences. These structural characteristics reduce their efficiency in modeling long-range dependencies across the entire battery life cycle and limit their responsiveness to abrupt regeneration phenomena.

Transformer-based architectures were subsequently introduced to address these long-range dependency bottlenecks. By employing multi-head self-attention, Transformer models capture global correlations across multivariate time-series more effectively than recurrent networks [12,13]. Their parallel computation paradigm improves scalability and enables holistic degradation modeling. Nevertheless, pure Transformer models inherently lack convolutional inductive bias, which diminishes sensitivity to localized degradation behaviors. Moreover, their reliance on fixed-activation Multi-Layer Perceptron (MLP) blocks in feed-forward layers may restrict functional approximation capacity when modeling highly nonlinear, multi-stage aging trajectories.

To reconcile local and global modeling capabilities, hybrid convolutional neural network (CNN) and Transformer frameworks were proposed. Gu et al. and Zhang et al. integrated convolutional modules with attention-based encoders to enhance representational robustness and multi-scale degradation perception [14,15]. Although these architectures improve local–global interaction, their structural fusion is often empirically designed rather than systematically optimized, and most still employ conventional MLP-based regression heads. As a result, nonlinear approximation efficiency remains fundamentally bounded by fixed activation functions.

Beyond architectural design, transfer-learning strategies have been explored to mitigate distributional shifts across batteries and operating conditions. Wu et al. proposed a transfer-stacking framework to enhance cross-battery generalization, while related works in multivariate time-series modeling have emphasized representation alignment techniques [16,17,18]. Although these approaches improve inductive transfer under limited labeled data, they primarily address feature alignment rather than enhancing the intrinsic representational capacity of the backbone model. Consequently, cross-condition robustness often depends on additional fine-tuning or complex domain adaptation procedures.

Collectively, despite continuous architectural advancements from feature-based regression to recurrent, attention-based, hybrid, and transfer-learning paradigms, existing methods still struggle to simultaneously achieve strong local feature sensitivity, effective long-range dependency modeling, high-capacity nonlinear function approximation, and robust cross-condition generalization within a unified framework. To address these limitations, this study proposes an end-to-end multi-view deep learning framework termed Conformer-KAN, which integrates a convolution-augmented Transformer (Conformer) with a Kolmogorov–Arnold Network (KAN) for SOH estimation. By incorporating convolutional inductive bias, attention-based global modeling, and spline-based adaptive nonlinear mapping into a single architecture, the proposed framework aims to improve both representational capacity and generalization robustness under complex operating conditions. The main contributions of this study are summarized as follows:

- (1) Multi-view feature representation: A unified multi-view input layer integrates physical signals and mechanism-informed descriptors with temporal alignment, improving robustness to noise and distribution shifts across operating conditions.

- (2) Conformer encoder structure: A Macaron-style Conformer combines multi-head self-attention and gated local convolution to jointly model localized degradation signatures and long-range temporal dependencies.

- (3) KAN-based prediction module: A Kolmogorov–Arnold Network with learnable B-spline edge functions replaces conventional MLP heads, enhancing nonlinear function approximation for complex SOH degradation trajectories.

The remainder of this paper is organized as follows. Section 2 introduces the proposed Conformer-KAN framework, including the multi-view feature representation, the convolution-augmented Conformer encoder, the KAN-based nonlinear modeling module, and the multi-dimensional attention prediction layer. Section 3 presents the experimental validation, covering dataset construction, implementation settings, ablation studies, comparative evaluations with baseline models, and generalization analysis. Finally, Section 4 summarizes the key contributions of this study and provides concluding remarks.

2. Conformer-KAN Method

2.1. Overall Network Architecture

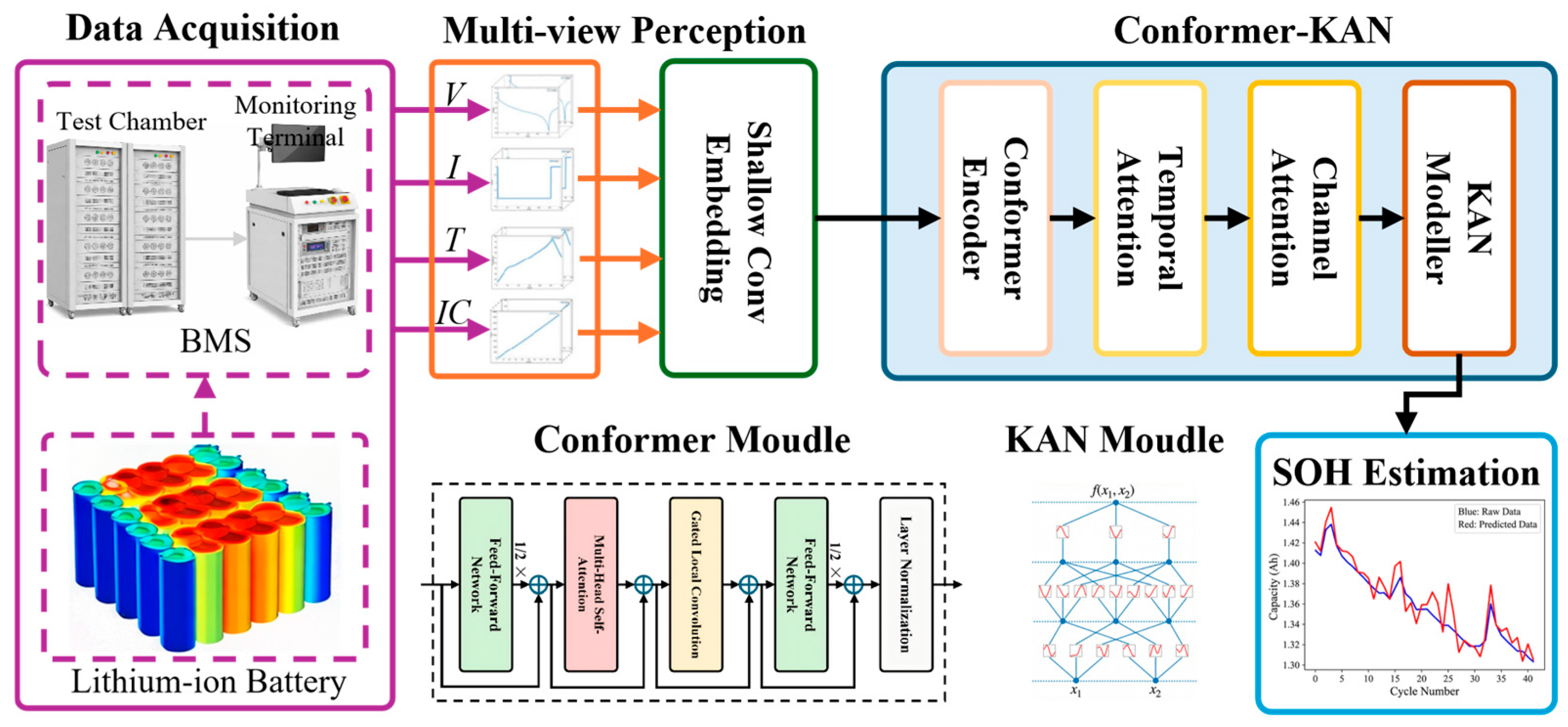

To address the strong nonlinearity of battery aging, localized regeneration signatures, and distribution shifts across operating conditions, we propose an end-to-end SOH estimator termed Conformer-KAN (Figure 1). Given a multivariate charging-stage sequence, the method follows a four-stage pipeline: (i) multi-view feature construction and temporal alignment, (ii) shallow convolutional embedding to obtain a unified latent representation, (iii) a Macaron-style Conformer encoder that alternates global self-attention and gated local convolution for joint local–global temporal modeling, and (iv) an attention-guided regression head in which a Kolmogorov–Arnold Network (KAN) provides adaptive spline-based nonlinear approximation.

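Formally, for each cycle (or charging segment) we construct an input tensor $X \in \mathbb{R}^{L \times C}$, where $L$ is the resampled sequence length and $C$ is the number of input channels (multi-view features). The shallow embedding maps $X$ to $H_0 \in \mathbb{R}^{L \times d_{\mathrm{model}}}$. The Conformer encoder produces $H_N \in \mathbb{R}^{L \times d_{\mathrm{model}}}$, which is subsequently fused by temporal/channel attention to obtain $z \in \mathbb{R}^{d_{\mathrm{model}}}$. Finally, KAN maps $z$ to the scalar $\hat{y}$, the predicted SOH.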

2.2. Multi-View Feature Representation

A multi-view feature representation is constructed to integrate directly measured physical signals with mechanism-informed degradation descriptors, thereby improving robustness under measurement noise and cross-condition distribution shifts. All features are extracted from the constant-current (CC) charging stage to maintain consistency of operating conditions across cycles. Two complementary views are considered: (i) a physical view consisting of terminal voltage $V(t)$, charging current $I(t)$, and surface temperature $T(t)$; and (ii) a mechanism-informed view given by the incremental capacity (IC) curve, computed from capacity–voltage pairs $(Q_k, V_k)$ as

$$\mathrm{IC}(V_k) = \left.\frac{\mathrm{d}Q}{\mathrm{d}V}\right|_{V_k} \approx \frac{Q_k - Q_{k-1}}{V_k - V_{k-1}}.$$

Because numerical differentiation is highly sensitive to measurement noise, the discrete sequence is smoothed before finite-difference approximation. Specifically, Gaussian smoothing is applied using a kernel size of 11 and standard deviation $\sigma$. These smoothing parameters are fixed across all experiments and are not estimated from test data. After smoothing, the IC curve is obtained by numerical differentiation of the filtered sequence.
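The smoothing and differentiation steps can be illustrated with a minimal, dependency-free sketch. It assumes that the capacity sequence is the one being smoothed and uses a placeholder `sigma=2.0`; neither choice is confirmed by the text, and the function names are hypothetical.

```python
import math

def gaussian_kernel(size=11, sigma=2.0):
    """Normalized 1-D Gaussian kernel (sigma is an assumed placeholder value)."""
    half = size // 2
    weights = [math.exp(-0.5 * (i / sigma) ** 2) for i in range(-half, half + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def smooth(values, kernel):
    """Convolve with edge replication so the output keeps the input length."""
    half = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for j, w in enumerate(kernel):
            idx = min(max(i + j - half, 0), n - 1)  # clamp at the boundaries
            acc += w * values[idx]
        out.append(acc)
    return out

def incremental_capacity(capacity, voltage, size=11, sigma=2.0):
    """Finite-difference dQ/dV computed on the smoothed capacity sequence."""
    q = smooth(capacity, gaussian_kernel(size, sigma))
    ic = []
    for k in range(1, len(q)):
        dv = voltage[k] - voltage[k - 1]
        ic.append((q[k] - q[k - 1]) / dv if dv != 0 else 0.0)
    return ic
```

Edge replication distorts the first and last few IC samples, so in practice those boundary samples may need to be trimmed or handled separately.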

To construct a unified input representation, the mechanism-informed IC feature is temporally aligned with the raw physical signals. Although $\mathrm{IC}(V)$ is initially defined in the voltage domain, the CC charging stage provides a monotonic voltage trajectory $V(t)$, enabling each IC sample to be mapped to its corresponding time index. This yields a time-aligned sequence $\mathrm{IC}(t)$, which is synchronized with $V(t)$, $I(t)$, and $T(t)$. Consequently, all four modalities are treated as parallel time-series channels. Each channel is then resampled to a fixed length $L$ by linear interpolation, producing a normalized multi-view tensor $X \in \mathbb{R}^{L \times C}$, where $C = 4$ corresponds to voltage, current, temperature, and IC features.
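The resampling and channel stacking described above can be sketched as follows. This is an illustrative version that assumes the four channels are already time-aligned and given as plain Python lists; `resample_linear` and `build_multiview` are hypothetical helper names.

```python
def resample_linear(values, length):
    """Linearly interpolate a sequence onto `length` evenly spaced points."""
    n = len(values)
    if n == 1 or length == 1:
        return [values[0]] * length
    out = []
    for i in range(length):
        pos = i * (n - 1) / (length - 1)  # fractional index into the source
        lo = int(pos)
        hi = min(lo + 1, n - 1)
        frac = pos - lo
        out.append(values[lo] * (1 - frac) + values[hi] * frac)
    return out

def build_multiview(voltage, current, temperature, ic, length):
    """Stack four time-aligned channels into a length x 4 nested list."""
    channels = [resample_linear(c, length)
                for c in (voltage, current, temperature, ic)]
    return [list(row) for row in zip(*channels)]
```

Each row of the result holds the voltage, current, temperature, and IC values at one resampled time step, matching the channel order given in the text.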

To ensure reproducibility and prevent data leakage, dataset splitting is performed at the battery level before normalization. Min–max normalization statistics are computed using the training split only and subsequently applied to the validation and test splits. The resulting tensor $X$ is projected by a one-dimensional shallow convolution module (SConv) into the latent representation $H_0 \in \mathbb{R}^{L \times d_{\mathrm{model}}}$ using kernel size 5 and stride 1, followed by normalization and dropout. This operation performs initial cross-channel fusion and noise suppression, and the resulting representation serves as the input to the Conformer encoder.
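The leakage-free normalization can be made concrete with a small sketch: the per-channel minimum and maximum are fitted on training samples only and then reused unchanged for other splits. The function names and the nested-list data layout are assumptions made for illustration.

```python
def fit_minmax(train_samples):
    """Per-channel min/max over training samples only (each sample is L x C)."""
    n_channels = len(train_samples[0][0])
    lo = [float("inf")] * n_channels
    hi = [float("-inf")] * n_channels
    for sample in train_samples:
        for row in sample:
            for c, v in enumerate(row):
                lo[c] = min(lo[c], v)
                hi[c] = max(hi[c], v)
    return lo, hi

def apply_minmax(sample, lo, hi):
    """Scale with stored training statistics; held-out values may leave [0, 1]."""
    return [[(v - lo[c]) / (hi[c] - lo[c]) if hi[c] > lo[c] else 0.0
             for c, v in enumerate(row)]
            for row in sample]
```

Because the statistics come from the training batteries alone, validation or test values outside the training range map outside [0, 1] rather than being silently rescaled.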

2.3. Conformer Encoder

In this study, the Conformer is adopted as a battery-oriented temporal encoder within the proposed SOH estimation framework. Its use is coupled with multi-view feature construction, temporal alignment in the charging stage, and a local–global temporal encoding design to accommodate degradation evolution and regeneration-related fluctuations. Under this formulation, the Conformer serves as a structured backbone for jointly modeling localized aging signatures and long-range dependencies in charging trajectories. Compared with CNN–LSTM pipelines, the encoder enables parallel computation while retaining convolutional inductive bias; compared with pure Transformer architectures, it improves local sensitivity through gated depthwise convolution.

The encoder consists of $N$ identical Conformer blocks. Each block transforms the representation according to

$$H_l = \mathrm{ConformerBlock}(H_{l-1}), \quad l = 1, \dots, N.$$

Through iterative stacking, the feature representation is progressively refined while preserving the temporal dimension $L$. The final encoder output is denoted as $H_N$.

2.3.1. Gated Local Convolution

To compensate for the lack of local inductive bias in self-attention mechanisms, a Gated Local Convolution Unit (GLCU) is introduced. The GLCU adopts a bottleneck structure composed of pointwise convolution, gated activation, and depthwise convolution, aiming to enhance sensitivity to localized voltage fluctuations and temperature inflection points induced by capacity regeneration [19].

Given an input feature tensor $X \in \mathbb{R}^{L \times d_{\mathrm{model}}}$, where $L$ denotes the temporal length and $d_{\mathrm{model}}$ represents the feature channel dimension, the computation is built from the following operators: LN(·) and BN(·) denote layer normalization and batch normalization, respectively; Conv(·) indicates one-dimensional pointwise convolution; DWConv(·) represents one-dimensional depthwise convolution; GLU(·) denotes the gated linear unit; and Swish(·) is the Swish activation function.
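Assuming the GLCU follows the convolution module of the original Conformer (the operator ordering below is an assumption; the text names only the operators), the computation on an input $X$ of shape temporal length by $d_{\mathrm{model}}$ can be sketched as:

$$
\begin{aligned}
X_1 &= \mathrm{GLU}\big(\mathrm{Conv}(\mathrm{LN}(X))\big),\\
X_2 &= \mathrm{Swish}\big(\mathrm{BN}(\mathrm{DWConv}(X_1))\big),\\
\mathrm{GLCU}(X) &= \mathrm{Conv}(X_2).
\end{aligned}
$$

In that formulation the first pointwise convolution doubles the channel count so that the GLU, which halves it, returns to $d_{\mathrm{model}}$ channels; whether the same expansion is used here is not stated.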

2.3.2. Conformer Encoder Structure

As shown in Figure 2, the Conformer encoder adopts a Macaron-style architecture. Each encoding block consists of two half-step Feed-Forward Networks (FFNs), one Multi-Head Self-Attention (MHSA) module, and one GLCU, arranged in an alternating manner.

The feature propagation at the $l$-th layer proceeds in four steps. First, a half-step FFN introduces nonlinear mapping. Next, MHSA captures long-range degradation trends. Then, the GLCU enhances local temporal perception. Finally, another half-step FFN reconstructs the output features.

Through hierarchical stacking of these Conformer blocks, the encoder progressively integrates multi-scale local fluctuations and long-range degradation dynamics, producing the final representation $H_N$, which is subsequently fed into the multi-dimensional attention fusion module.
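The four-step propagation within one block can be written compactly under the standard Macaron-style residual form. The residual connections and the closing layer normalization follow the original Conformer block and are assumptions here; $H_{l-1}$ and $H_l$ denote the block input and output.

$$
\begin{aligned}
\tilde{H} &= H_{l-1} + \tfrac{1}{2}\,\mathrm{FFN}(H_{l-1}),\\
H' &= \tilde{H} + \mathrm{MHSA}(\tilde{H}),\\
H'' &= H' + \mathrm{GLCU}(H'),\\
H_l &= \mathrm{LN}\big(H'' + \tfrac{1}{2}\,\mathrm{FFN}(H'')\big).
\end{aligned}
$$

The factor $\tfrac{1}{2}$ is what makes each FFN a "half-step": the two feed-forward updates together contribute one full residual step around the attention and convolution modules.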

2.4. Multi-Dimensional Attention Fusion

To further enhance representation quality before regression, we introduce a multi-dimensional attention fusion module that adaptively re-weights encoder features along both temporal and channel dimensions. This design aims to (i) identify degradation-relevant temporal segments within the charging trajectory and (ii) suppress redundant or noise-sensitive feature channels prior to nonlinear mapping.

Denote $H_N = [h_1, \dots, h_L]$ with $h_t \in \mathbb{R}^{d_{\mathrm{model}}}$ representing the feature vector at time step $t$. Temporal attention (TA) evaluates the relative importance of each time step by computing attention weights across the temporal dimension. The attention score $e_t$ for time step $t$ is computed from $h_t$ using a learnable parameter vector $w$. The normalized attention weight is obtained via softmax:

$$\alpha_t = \frac{\exp(e_t)}{\sum_{j=1}^{L} \exp(e_j)}.$$

The temporally aggregated representation is then computed as

$$\bar{h} = \sum_{t=1}^{L} \alpha_t h_t.$$

Given the temporally fused representation $\bar{h}$, channel attention (CA) further models inter-channel dependencies to refine feature discriminability. Channel-wise weights are computed as

$$s = \sigma\big(\mathrm{MLP}(\bar{h})\big),$$

where $s \in \mathbb{R}^{d_{\mathrm{model}}}$, $\sigma$ denotes the sigmoid activation function, and MLP represents a lightweight two-layer feed-forward network. The final fused feature vector is obtained via element-wise modulation:

$$z = s \odot \bar{h},$$

where $\odot$ denotes element-wise multiplication.
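A minimal numerical sketch of the two attention stages is given below. It assumes a plain dot-product score between each time-step feature and the learnable vector, and it takes the channel-gate logits as an input that stands in for the output of the two-layer MLP; both simplifications are illustrative and not confirmed by the text.

```python
import math

def temporal_attention(H, w):
    """Softmax over per-step scores (assumed dot product), then weighted sum."""
    scores = [sum(wi * hi for wi, hi in zip(w, h)) for h in H]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    alpha = [e / total for e in exps]
    d = len(H[0])
    pooled = [sum(a * h[c] for a, h in zip(alpha, H)) for c in range(d)]
    return alpha, pooled

def channel_attention(pooled, gate_logits):
    """Sigmoid gate applied element-wise; gate_logits stands in for MLP(pooled)."""
    gates = [1.0 / (1.0 + math.exp(-g)) for g in gate_logits]
    return [g * p for g, p in zip(gates, pooled)]
```

With a zero score vector every time step receives equal weight, so the temporal stage reduces to mean pooling; a trained score vector shifts the weights toward degradation-relevant segments.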

2.5. KAN-Based Modeller

To overcome the efficiency bottleneck of conventional MLPs in function approximation, a KAN is introduced as the nonlinear modeling core, as illustrated in Figure 3. Unlike fixed activation functions, KAN parameterizes activations along network edges using learnable spline functions, enabling adaptive fitting of complex SOH evolution trajectories.

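According to the Kolmogorov–Arnold representation theorem, any continuous multivariate function on a bounded domain can be expressed as a finite superposition of continuous univariate functions and addition [20]. Let $z \in \mathbb{R}^{d_{\mathrm{in}}}$ denote the fused representation from the attention module. A KAN layer maps $z$ to $y \in \mathbb{R}^{d_{\mathrm{out}}}$ as

$$y_j = \sum_{i=1}^{d_{\mathrm{in}}} \phi_{j,i}(z_i) + b_j, \quad j = 1, \dots, d_{\mathrm{out}},$$

where $\phi_{j,i}$ denotes the learnable edge function from input $i$ to output $j$, and $b_j$ is the bias term. Each edge function is parameterized as a base activation combined with a B-spline expansion:

$$\phi_{j,i}(x) = w_{j,i}\, b(x) + \sum_{m=1}^{G} c_{j,i,m}\, B_m^{(k)}(x),$$

where $b(\cdot)$ is the base activation, $B_m^{(k)}$ is the $m$-th B-spline basis of order $k$ defined over a knot grid, $G$ is the number of spline bases (grid resolution), and $w_{j,i}$ and $c_{j,i,m}$ are learnable parameters. This design enables the regression head to adaptively approximate multi-stage and highly nonlinear degradation trajectories that are difficult to fit with fixed-activation MLPs.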
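To make the spline-parameterized edge function concrete, the sketch below evaluates B-spline bases with the Cox-de Boor recursion and combines them with a base activation. SiLU as the base activation, the uniform extended knot grid, and all function names are assumptions borrowed from common KAN implementations rather than details given in the text.

```python
import math

def bspline_basis(x, knots, k):
    """All order-k B-spline bases at x via the Cox-de Boor recursion."""
    # Degree 0: indicator of each half-open knot interval.
    basis = [1.0 if knots[i] <= x < knots[i + 1] else 0.0
             for i in range(len(knots) - 1)]
    for d in range(1, k + 1):
        nxt = []
        for i in range(len(knots) - d - 1):
            left_den = knots[i + d] - knots[i]
            right_den = knots[i + d + 1] - knots[i + 1]
            left = (x - knots[i]) / left_den * basis[i] if left_den > 0 else 0.0
            right = ((knots[i + d + 1] - x) / right_den * basis[i + 1]
                     if right_den > 0 else 0.0)
            nxt.append(left + right)
        basis = nxt
    return basis

def uniform_knots(n_intervals, k, lo=0.0, hi=1.0):
    """Uniform grid on [lo, hi] extended by k knots on each side."""
    h = (hi - lo) / n_intervals
    return [lo + (i - k) * h for i in range(n_intervals + 2 * k + 1)]

def edge_function(x, coeffs, knots, k, w_base=1.0):
    """phi(x) = w_base * SiLU(x) + sum_m coeffs[m] * B_m(x)."""
    silu = x / (1.0 + math.exp(-x))
    basis = bspline_basis(x, knots, k)
    return w_base * silu + sum(c * b for c, b in zip(coeffs, basis))
```

With this grid construction the number of bases equals `n_intervals + k`, and inside the grid range the bases sum to one, so setting all coefficients to the same constant adds that constant to the base-activation term.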

Beyond generic nonlinear approximation, the use of KAN is motivated by the shape characteristics of battery SOH trajectories under heterogeneous operating conditions. In practice, SOH evolution may exhibit piecewise-varying slopes, local regeneration-like deviations, and condition-dependent nonlinear transitions. Compared with fixed-activation MLP heads, the spline-parameterized edge functions in KAN provide more flexible local function-shape adaptation, which helps the regression head better fit such irregular degradation patterns while maintaining stable performance under cross-condition shifts.

2.6. SOH Prediction and Optimization

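For SOH estimation, the final KAN regression head produces a scalar prediction:

$$\hat{y} = \mathrm{KAN}(z).$$

The entire network is trained end-to-end using the mean squared error (MSE) loss:

$$\mathcal{L} = \frac{1}{|\mathcal{B}|} \sum_{i \in \mathcal{B}} \left(y_i - \hat{y}_i\right)^2,$$

where $\mathcal{B}$ is the mini-batch, $y_i$ is the ground-truth SOH, and $\hat{y}_i$ is the predicted SOH.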

For reproducibility, all architectural and training hyperparameters are explicitly specified. The resampled sequence length is fixed to , and the multi-view input dimension is , corresponding to voltage, current, temperature, and IC features. The shallow convolutional embedding maps the input to an embedding dimension using a one-dimensional convolution with kernel size 5, stride 1, and dropout rate 0.1. The Conformer encoder consists of stacked blocks, each with embedding dimension , feed-forward hidden dimension , and attention heads. The depthwise convolution within the GLCU adopts a kernel size of 15, and dropout with rate 0.1 is applied in the FFN, MHSA, and convolutional modules. The multi-dimensional attention fusion layer preserves the embedding dimension and generates temporal and channel attention weights through a two-layer projection with hidden dimension 64. In the KAN module, the input dimension is and the output dimension is 1 for SOH regression. Each edge function is parameterized using cubic B-spline bases of order with grid resolution , and the knot grid is uniformly initialized over the normalized input range .

All dataset splits are conducted at the battery level prior to normalization, and normalization statistics are computed exclusively from the training set and then applied to validation and test sets to avoid data leakage. Hyperparameters are selected using only the training and validation splits and remain fixed across all cross-condition experiments to ensure fair comparison. The complete training procedure of the proposed method is summarized in Algorithm 1.

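Algorithm 1. Training procedure of the Conformer-KAN framework for SOH estimation

Input: Battery dataset $\mathcal{D}$; maximum number of training epochs $E$; batch size $B$

Output: Trained model parameters $\theta$

| Step | Operation |
|---|---|
| 01 | Initialize model parameters $\theta$ randomly |
| 02 | Data preprocessing: resample all sequences in $\mathcal{D}$ to a fixed length $L$, normalize features to [0, 1], and compute IC curves |
| 03 | While $e < E$ do |
| 04 | Shuffle dataset $\mathcal{D}$ and divide it into mini-batches of size $B$ |
| 05 | For each mini-batch $(X, y)$ do |
| 06 | Step 1: Multi-view feature embedding |
| 07 | $H_0 = \mathrm{SConv}(X)$ |
| 08 | Step 2: Conformer-KAN encoding |
| 09 | For $l = 1$ to $N$ do |
| 10 | $H_l = \mathrm{ConformerBlock}(H_{l-1})$ |
| 11 | End For |
| 12 | Step 3: Multi-dimensional attention fusion |
| 13 | $\bar{h} = \mathrm{TA}(H_N)$ |
| 14 | $z = \mathrm{CA}(\bar{h})$ |
| 15 | Step 4: SOH prediction and optimization |
| 16 | $\hat{y} = \mathrm{KAN}(z)$ |
| 17 | Compute loss: $\mathcal{L} = \mathrm{MSE}(y, \hat{y})$ |
| 18 | Update parameters: $\theta \leftarrow \mathrm{Adam}(\theta, \nabla_{\theta}\mathcal{L})$ |
| 19 | End For |
| 20 | $e \leftarrow e + 1$ |
| 21 | End While |
| 22 | Return trained parameters $\theta$ |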

3. Results and Analysis

3.1. Dataset Construction

To comprehensively evaluate the prediction accuracy and generalization capability of the proposed Conformer-KAN model across different material systems, battery formats, and operating conditions, two widely adopted benchmark datasets are employed: the lithium-ion battery dataset released by the National Aeronautics and Space Administration (NASA) and the battery aging dataset provided by the University of Oxford. A detailed comparison of key dataset parameters is summarized in Table 1 [21].

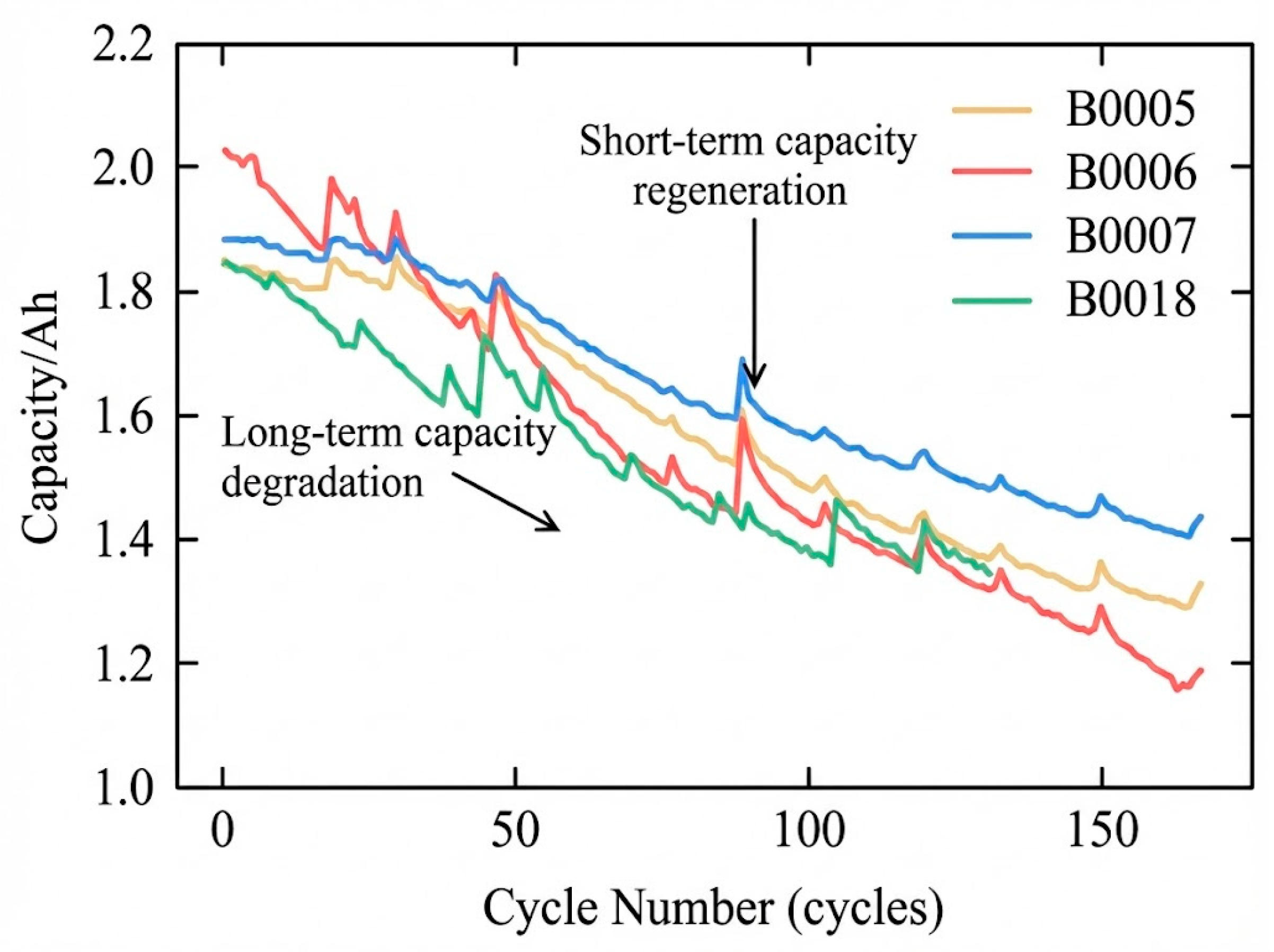

NASA dataset: Four cylindrical lithium-ion batteries, labeled B0005, B0006, B0007, and B0018, are selected. The cathode material is lithium nickel cobalt aluminum oxide, with a rated capacity of 2.0 Ah. All experiments are conducted at 24 °C under a constant-current–constant-voltage (CC–CV) charging protocol with a charging current of 1.5 A, followed by 2 A constant-current discharge. The corresponding SOH trajectories exhibit pronounced nonlinearity and capacity regeneration phenomena, making this dataset particularly suitable for evaluating a model’s sensitivity to localized transient fluctuations, as illustrated in Figure 4.

Oxford dataset: This dataset consists of eight prismatic pouch lithium-ion batteries (Cell 1–Cell 8) with lithium cobalt oxide cathodes and a rated capacity of 0.74 Ah. The aging experiments are performed at an elevated temperature of 40 °C using a 1 C constant-current cycling protocol. Owing to its distinct physical form factor and higher noise level, this dataset is used here to assess the cross-condition transferability and generalization performance of SOH estimation models.

The Oxford dataset is used as a cross-domain validation benchmark to examine the robustness of SOH estimation under heterogeneous battery conditions. Relative to the NASA dataset, it exhibits multiple sources of distributional shift, including differences in cell format (prismatic pouch versus cylindrical), cathode material system, ambient/cycling temperature, and charge–discharge protocol. These discrepancies affect both degradation trajectories and signal characteristics, thereby providing a more stringent setting for evaluating cross-condition generalization than single-dataset testing alone.

In this study, SOH is adopted as the aging indicator and is defined as the ratio between the current maximum available capacity $C_{\mathrm{cur}}$ and the nominal initial capacity $C_0$, expressed as:

$$\mathrm{SOH} = \frac{C_{\mathrm{cur}}}{C_0}.$$

To construct high signal-to-noise ratio inputs, only voltage, current, and temperature measurements recorded during the constant-current charging stage are extracted for analysis. The datasets are partitioned as follows:

NASA dataset: A hold-out strategy is employed, where B0006, B0007, and B0018 are used for training and B0005 is reserved for testing, aiming to evaluate baseline prediction accuracy.

Oxford dataset: Cell 6 is selected as the target-domain test battery, while the remaining cells are used for training, in order to assess cross-domain generalization capability.

For hyperparameter selection and early stopping, a validation split is constructed from the source-domain training batteries only, and the held-out test batteries are used exclusively for final evaluation. The current study adopts a fixed hold-out protocol for controlled comparison; more extensive cross-battery repeated validation will be explored in future work.

3.2. Experimental Setup

All models in this study are implemented using Python 3.8 and the PyTorch 1.10 deep learning framework. The experiments are conducted on a workstation equipped with an Intel Core i9-12900K CPU and an NVIDIA GeForce RTX 3090 GPU.

Model training is performed using the Adam optimizer, with an initial learning rate set to 0.001, which is dynamically adjusted using a cosine annealing schedule. Network weights are initialized using a normal distribution to facilitate faster convergence. The batch size is set to B = 32, and the maximum number of training epochs is set to E = 200.
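The cosine annealing schedule can be illustrated with a short sketch. It assumes a single decay cycle spanning all 200 epochs and a minimum learning rate of zero; neither setting is stated in the text.

```python
import math

def cosine_annealing_lr(epoch, total_epochs=200, lr_init=1e-3, lr_min=0.0):
    """Learning rate at `epoch` under a single-cycle cosine decay."""
    cos_term = 1.0 + math.cos(math.pi * epoch / total_epochs)
    return lr_min + 0.5 * (lr_init - lr_min) * cos_term
```

Under these assumptions the rate starts at 0.001, passes through half of that value at epoch 100, and decays smoothly to the minimum at epoch 200.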

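To quantitatively evaluate the performance of SOH estimation, three widely used metrics are adopted: Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), and the Coefficient of Determination ($R^2$) [22]. Lower RMSE and MAE values, together with an $R^2$ value closer to unity, indicate higher estimation accuracy. The corresponding definitions are given as follows:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2},$$

$$\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left|y_i - \hat{y}_i\right|,$$

$$R^2 = 1 - \frac{\sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2}{\sum_{i=1}^{n} \left(y_i - \bar{y}\right)^2},$$

where $y_i$ and $\hat{y}_i$ denote the ground-truth and predicted SOH values of the $i$-th sample, respectively; $\bar{y}$ represents the mean of the ground-truth SOH values; and $n$ is the total number of samples.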
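The three metrics can be computed directly from paired ground-truth and predicted SOH values, as in the following minimal sketch (the function name `soh_metrics` is illustrative).

```python
import math

def soh_metrics(y_true, y_pred):
    """Return MAE, RMSE, and R^2 for paired SOH values."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)      # residual sum of squares
    rmse = math.sqrt(ss_res / n)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    r2 = 1.0 - ss_res / ss_tot
    return {"MAE": mae, "RMSE": rmse, "R2": r2}
```

A predictor that always outputs the mean of the ground truth obtains an $R^2$ of zero, which is why values close to unity indicate that most of the SOH variance is explained.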

To ensure fair comparison, all baseline methods are trained under the same data partition protocol, preprocessing pipeline, optimizer family, and early-stopping criterion whenever applicable. Hyperparameters are selected using the validation split only, and the best checkpoint is chosen according to validation RMSE. In addition to single-run performance, repeated experiments with different random seeds are conducted for the main methods, and the mean ± standard deviation are reported for the primary metrics. Model complexity and computational cost (including parameter count and inference time) are also reported to provide a balanced comparison between accuracy and deployment efficiency.

3.3. Ablation Study

To systematically investigate the contributions of the core components in the proposed Conformer-KAN framework, including the multi-view input strategy, the Conformer encoder, and the KAN-based modeling module, a comprehensive ablation study is conducted. Four representative variant models are constructed for comparison:

Transformer-MLP: A baseline model consisting of a standard Transformer encoder followed by an MLP-based prediction head, without local convolution enhancement or the KAN structure.

Conformer-MLP: A variant in which the standard Transformer is replaced by a Conformer encoder, aiming to evaluate the benefit of joint local–global feature modeling for temporal representation.

Transformer-KAN: A model that retains the standard Transformer encoder while replacing the MLP with a KAN module, designed to assess the nonlinear approximation capability of KAN.

Conformer-KAN: The complete framework proposed in this study, integrating both the Conformer encoder and the KAN-based modeling module.

The quantitative evaluation results summarized in Table 2, together with the full life cycle SOH prediction curves illustrated in Figure 5, show consistent performance differences among the variant models. By comparing Transformer-MLP with Conformer-MLP, it is observed that introducing the Conformer architecture substantially improves temporal representation quality, with the RMSE decreasing from 0.019 ± 0.003 to 0.011 ± 0.002 (approximately 42.1% reduction) and the $R^2$ increasing from 0.886 ± 0.015 to 0.939 ± 0.011. This trend is consistent with the role of the gated local convolution unit in complementing self-attention with stronger local inductive bias, thereby improving sensitivity to localized fluctuations and transition regions in battery degradation trajectories. The corresponding increase in parameter count (from 1.25 M to 1.58 M) and inference time (from 2.5 ms/sample to 3.1 ms/sample) remains moderate.

In terms of nonlinear modeling capability, the comparison between Transformer-MLP and Transformer-KAN shows that replacing the MLP head with KAN further improves prediction accuracy, with the RMSE reduced to 0.009 ± 0.002, the MAE reduced to $(0.86 \pm 0.07) \times 10^{-2}$, and the $R^2$ increased to 0.975 ± 0.006. This performance gain is consistent with the adaptive function-shape modeling capability of KAN, whose learnable B-spline-based edge activations provide greater flexibility than fixed-activation MLP layers when fitting stage-wise nonlinear SOH trajectories. Notably, Transformer-KAN uses fewer parameters (1.10 M) than Transformer-MLP (1.25 M), but incurs higher inference latency (6.8 ms/sample vs. 2.5 ms/sample), indicating an accuracy–efficiency trade-off associated with spline-based function evaluation.

Ultimately, the proposed Conformer-KAN model achieves the best overall prediction performance by combining the local–global temporal modeling strength of the Conformer with the adaptive nonlinear approximation capability of KAN. Specifically, it achieves the lowest MAE ($(0.53 \pm 0.04) \times 10^{-2}$) and RMSE (0.006 ± 0.001), together with the highest $R^2$ (0.987 ± 0.003) among all variants. The prediction curves further show that Conformer-KAN provides the closest trajectory alignment to the ground-truth SOH over the full life cycle, including regions with localized capacity drop and recovery-like fluctuations. In terms of computational characteristics, Conformer-KAN maintains a parameter count of 1.42 M (lower than Conformer-MLP at 1.58 M) while exhibiting the highest inference time (7.5 ms/sample), which reflects the additional computational overhead introduced by the KAN module. Overall, the ablation results provide empirical support for the complementary benefits of Conformer-based temporal encoding and KAN-based nonlinear regression under the present hold-out evaluation setting.

3.4. Comparative Experiments

To verify the superiority of the proposed Conformer-KAN method in lithium-ion battery SOH estimation, three representative data-driven approaches are selected as benchmark models for comparison. The configurations of the baseline models are summarized as follows:

AST-LSTM: An attention-based spatio-temporal long short-term memory network that integrates attention mechanisms with LSTM architecture, aiming to evaluate its capability in capturing long-sequence dependencies [6].

CNN-LSTM: A hybrid architecture that cascades CNN and LSTM networks, where CNNs are employed for local feature extraction and LSTMs are responsible for temporal dependency modeling [10].

CNN-Trans: A hybrid CNN–Transformer model that leverages the global self-attention mechanism of Transformers, representing the current mainstream approach in SOH estimation [14].

The SOH prediction trajectories produced by different models are illustrated in Figure 6. As observed, the prediction curve generated by Conformer-KAN shows the closest alignment with the ground-truth SOH degradation trajectory over the full life cycle. In the early aging stage and in regions with localized capacity drops or recovery-like fluctuations, both AST-LSTM and CNN-LSTM exhibit noticeable phase lag and amplitude deviation, suggesting that recurrent-dominant architectures have limited responsiveness to abrupt nonlinear variations. Although CNN-Trans benefits from global self-attention and improves overall trend fitting compared with LSTM-based methods, it still shows local oscillatory behavior in fluctuation-sensitive regions, indicating that global dependency modeling alone may be insufficient for robust local feature tracking under noisy conditions.

In contrast, Conformer-KAN achieves more stable local trajectory tracking while preserving key degradation characteristics, including localized recovery-like deviations. This behavior is consistent with the complementary roles of the Conformer encoder and KAN regression head: the Conformer enhances joint local–global temporal representation through gated local convolution and self-attention, while KAN provides adaptive nonlinear mapping for stage-wise SOH evolution. As a result, the proposed model exhibits improved robustness under complex degradation patterns without relying solely on recurrent temporal processing.

The quantitative comparison presented in Table 3 further supports these observations. Conformer-KAN achieves the best overall performance across all evaluation metrics, with MAE = $(0.53 \pm 0.04) \times 10^{-2}$, RMSE = 0.006 ± 0.001, and $R^2$ = 0.987 ± 0.003. Compared with AST-LSTM (RMSE = 0.017 ± 0.002), the RMSE is reduced by approximately 64.7%; compared with CNN-Trans (RMSE = 0.010 ± 0.001), the RMSE is reduced by approximately 40.0%. These results indicate that the proposed Conformer-KAN framework provides stronger predictive accuracy for high-noise and strongly nonlinear SOH trajectories under the present evaluation setting.
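The quoted percentage reductions follow from the relative difference between the mean RMSE values, as the short check below illustrates (the helper name is hypothetical, and the standard deviations are ignored).

```python
def relative_reduction(baseline, proposed):
    """Percentage by which `proposed` is lower than `baseline`."""
    return 100.0 * (baseline - proposed) / baseline

# Mean RMSE values quoted in the text.
vs_ast_lstm = relative_reduction(0.017, 0.006)
vs_cnn_trans = relative_reduction(0.010, 0.006)
```

The same calculation reproduces the ablation figure: moving from an RMSE of 0.019 to 0.011 is a reduction of about 42.1%.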

From the perspective of computational characteristics, Conformer-KAN has a parameter count of 1.42 M, which is comparable to CNN-Trans (1.36 M) and higher than AST-LSTM (0.92 M) and CNN-LSTM (1.18 M). However, its inference time (7.5 ms/sample) is higher than the MLP-based baselines (e.g., 3.8 ms/sample for CNN-Trans), reflecting the additional computational overhead introduced by the spline-based function evaluation in the KAN module. This result suggests a clear accuracy–efficiency trade-off: Conformer-KAN offers the best prediction performance at the cost of increased inference latency, while still maintaining a moderate model size.

Overall, the comparative experiments provide consistent empirical evidence that Conformer-KAN improves SOH estimation accuracy and robustness relative to representative recurrent and hybrid baselines on the NASA B0005 dataset, while also clarifying the associated computational trade-offs.

3.5. Generalization Performance Validation

To further evaluate the generalization capability of the proposed model across different battery packaging formats, material systems, and operating conditions, cross-domain validation experiments are conducted using the Oxford battery aging dataset.

The quantitative prediction results on Oxford Cell 6 are summarized in Table 4. Under this cross-domain setting, Conformer-KAN achieves the best overall performance among the compared methods, with MAE = (0.31 ± 0.03) × 10⁻², RMSE = 0.003 ± 0.001, and R² = 0.994 ± 0.002. Relative to CNN-Trans (RMSE = 0.005 ± 0.001), the RMSE is reduced by approximately 40.0%; relative to CNN-LSTM (RMSE = 0.006 ± 0.001), the reduction is approximately 50.0%. These results suggest that the proposed framework maintains stronger predictive accuracy and stability than representative recurrent and hybrid baselines under distributional shifts between datasets.

The full life cycle SOH prediction curves for Oxford Cell 6 are illustrated in Figure 7. Compared with the NASA B0005 case, the Oxford trajectory has a smoother global degradation trend but contains dense local fluctuations, making it a complementary test case for evaluating cross-condition robustness. In this setting, AST-LSTM and CNN-LSTM show larger local deviations, while CNN-Trans improves overall trend fitting but still exhibits oscillatory behavior in fluctuation-sensitive regions. By contrast, Conformer-KAN aligns more closely with the ground-truth SOH curve across the full life cycle, including local variation regions. This behavior is consistent with the proposed architectural design, in which the Conformer encoder enhances joint local–global temporal representation and the KAN head improves adaptive nonlinear regression for heterogeneous degradation patterns.

From the perspective of computational characteristics, the parameter count and inference time of each model remain consistent with the results reported in Table 3 because the model architectures are unchanged across datasets. Conformer-KAN has a parameter count of 1.42 M and an inference time of 7.5 ms/sample; its latency is higher than that of the MLP-based baselines, while its model size remains moderate. Therefore, the Oxford results further support an accuracy–efficiency trade-off consistent with the previous experiments: the proposed model achieves the strongest predictive performance under cross-domain conditions at the cost of increased inference time due to spline-based KAN computation.

Overall, the cross-domain validation on Oxford Cell 6 provides additional empirical evidence that Conformer-KAN improves SOH estimation accuracy and robustness under heterogeneous operating conditions. At the same time, these results should be interpreted within the scope of the current experimental protocol (single target battery under a hold-out setting), and broader validation on more diverse datasets and deployment scenarios remains an important direction for future work.

4. Conclusions

To address the challenges of strong nonlinearity, transient disturbances, and cross-condition distribution shifts in lithium-ion battery SOH estimation, this study presented a deep learning framework termed Conformer-KAN, which combines a convolution-augmented Conformer encoder with a KAN-based regression module. Under the experimental settings considered in this work, the main findings can be summarized as follows.

- (1) Joint local–global temporal representation improves SOH feature modeling. By combining gated local convolution with multi-head self-attention, the Conformer encoder enhances sensitivity to localized degradation fluctuations while preserving the ability to model long-range temporal dependencies. The ablation and comparative results consistently indicate that this local–global encoding strategy provides more accurate trajectory tracking than recurrent-dominant and standard Transformer-based baselines, particularly in fluctuation-sensitive regions.

- (2) KAN-based regression improves adaptive nonlinear fitting for complex degradation trajectories. Replacing a conventional MLP head with a spline-parameterized KAN module improves the flexibility of nonlinear function approximation for SOH mapping. The ablation results show that the KAN head contributes additional error reduction, especially when modeling stage-wise and highly nonlinear degradation behavior, supporting its role as an effective nonlinear regressor in the proposed framework.

- (3) The proposed framework shows improved predictive accuracy under the current in-domain and cross-domain evaluation settings. On the NASA B0005 test battery and the Oxford Cell 6 cross-domain test battery, Conformer-KAN achieves the best overall performance among the compared methods (e.g., RMSE values of 0.006 and 0.003, respectively, with high R² values). These results provide empirical evidence that the proposed design can improve robustness under heterogeneous battery conditions and distribution shifts within the scope of the datasets and protocols used in this study.

Overall, the results suggest that Conformer-KAN is a promising approach for SOH estimation under complex operating conditions, particularly when both local degradation sensitivity and nonlinear regression capacity are required. However, several limitations should be noted. First, the current evaluation is based on a limited number of public datasets and primarily uses hold-out testing protocols; broader validation with additional chemistries, operating profiles, and repeated cross-validation settings is still needed. Second, although the proposed model achieves the best predictive accuracy, the KAN module introduces additional inference latency relative to MLP-based baselines, which should be considered in resource-constrained BMS deployment scenarios. Third, the present experiments evaluate offline prediction performance, while practical deployment may involve sensor mismatch, missing data, online adaptation, and long-term domain drift.

Future work will therefore focus on (i) broader multi-dataset and multi-condition validation with stronger statistical testing, (ii) lightweight and hardware-aware optimization of the Conformer-KAN architecture for embedded deployment, and (iii) online updating and domain adaptation strategies to improve robustness under real-world operating variability.