1. Introduction

The large-scale collection and analysis of visual data from social networks and online platforms is a central component of modern big data analytics and AI-driven data science [1,2]. Profile images, in particular, provide rich semantic information that supports applications such as demographic analysis, personalization, and social data mining. However, regulations such as the General Data Protection Regulation [3] classify facial images as highly sensitive personal data because they inherently contain biometric identifiers.

Responsible data-driven analytics require processing facial images in a way that protects individual identity while preserving analytical utility. Although traditional face anonymization techniques, such as blurring, pixelation, and black-box masking, are easy to deploy, they severely degrade visual quality and semantic information, limiting their usefulness for downstream analytics. Furthermore, these techniques remain vulnerable to modern face recognition systems, particularly when combined with reconstruction or super-resolution attacks.

Recent work has explored generative approaches to face anonymization and de-identification. Methods such as DeepPrivacy and CIAGAN use conditional generative adversarial networks to replace facial identities while maintaining visual realism [4,5]. Other approaches anonymize faces by manipulating the latent representations of pre-trained generators while preserving specific attributes [6]. Another line of work integrates formal privacy mechanisms by perturbing disentangled identity representations prior to reconstruction [7]. Despite these advances, however, empirical studies consistently demonstrate that image-conditioned synthesis and latent-space anonymization may retain residual identity-related cues, such as facial geometry or correlated appearance features, enabling re-identification under strong adversarial models [8,9].

These findings motivate a fundamentally different design principle. Rather than modifying identity-bearing images, we focus on face pseudonymization through reconstruction. Pseudonymization replaces identity-bearing images with realistic surrogates that do not correspond to any real individuals while preserving non-identifying semantic attributes that are relevant for analytics. Our approach is unique in that it removes visual identity signals early in the pipeline and reconstructs faces solely from abstracted semantic representations. Recent advances in multimodal generative models facilitate the synthesis of high-quality images based on textual descriptions [10,11,12]. However, the existing pipelines typically rely on large language models (LLMs) to generate these descriptions, which introduces stochasticity, inconsistency, and attribute hallucination [13]. These issues are problematic in privacy-critical and reproducibility-focused data science pipelines.

In this work, we present a template-driven multimodal face pseudonymization framework designed for privacy-preserving big data analytics. Our approach uses a FaceNet-based CelebA attribute classifier [14,15,16] to extract non-identifying facial attributes and DeepFace [17] to extract high-level demographic and emotion attributes. These attributes are then converted into natural-language descriptions via a deterministic template-based mapping process. This results in an identity-agnostic controlled semantic representation. We use the resulting text as the sole conditioning input to Janus-Pro, a multimodal text-to-image generation model, to synthesize a novel non-identifiable face [12]. We evaluated our framework using the CelebA dataset under a strong adversarial threat model. We also conducted an ablation study that compared deterministic template-based text generation with an alternative based on LLMs.

This paper makes the following contributions:

We propose a template-driven multimodal face pseudonymization framework that reconstructs facial images from abstracted semantic attributes alone, enabling privacy-preserving big data analytics.

We introduce a deterministic attribute-to-text representation that improves reproducibility and reduces unintended information leakage compared to LLM-based prompting.

We provide a comprehensive empirical evaluation under a strong re-identification and linkability threat model, demonstrating substantial identity protection while preserving semantic utility.

We conduct an ablation study comparing template-based and LLM-based text generation to highlight privacy–utility trade-offs.

2. Related Work

This section reviews the prior work that is relevant to privacy-preserving facial image processing and generative anonymization. First, we discuss the generative approaches to face anonymization and de-identification, focusing on empirical evidence of residual identity leakage. Next, we summarize the work on facial attribute recognition, which forms the basis of the semantic abstraction used in our framework. Finally, we discuss the advances in text-to-image generation and privacy-preserving visual analytics.

2.1. Face Anonymization and De-Identification

Early approaches to protecting face privacy rely on visual obfuscation techniques such as blurring, pixelation, or masking. Although simple to deploy, these methods substantially degrade semantic information and have been shown to be vulnerable to modern face recognition and reconstruction attacks, as demonstrated by McPherson et al. [18] and Li et al. [19].

More recent work therefore adopts learning-based de-identification, aiming to preserve utility while suppressing identity. GAN-based approaches such as DeepPrivacy [4] introduce conditional generative adversarial networks that anonymize faces while preserving pose and background context, enabling downstream learning on anonymized data. CIAGAN [5] extends this idea by offering conditional control over identity anonymization in images and videos, enabling more diverse and controllable identity replacement. Another line of work performs anonymization through latent-space manipulation by optimizing latent representations of pre-trained generators to suppress identity while maintaining specified facial attributes [6]. While these approaches often produce visually convincing results, they commonly rely on image-conditioned synthesis or latent codes derived from the original image. Consequently, residual identity-related structure (e.g., facial geometry, correlated appearance cues, or contextual information) may persist and remain exploitable by strong recognition models.

Beyond purely empirical designs, several methods integrate formal mechanisms to provide tunable privacy–utility trade-offs. IdentityDP [7] incorporates differential privacy into face anonymization by perturbing disentangled identity representations prior to reconstruction, offering theoretical guarantees while controlling privacy strength. In practice, however, stronger protection can increase system complexity and may reduce visual fidelity or semantic consistency, and the translation from theoretical guarantees to empirical robustness against modern face recognition systems may depend on the threat model and evaluation protocol.

Empirical studies also demonstrate that modifying selected attributes does not necessarily remove all identity cues. PrivacyNet [8] shows that semi-adversarial perturbations can obscure targeted attributes while preserving other information; nevertheless, under strong adversarial settings, structural facial signals may still suffice for identity inference. Diffusion-based approaches have recently improved visual quality while retaining semantics. DiffuShield [9] aims to enhance identity removal while maintaining realism but still highlights a fundamental trade-off between privacy protection and utility. Beyond these, recent diffusion pipelines such as Face Anonymization Made Simple [20] and NullFace [21] report strong realism and semantic retention and, in some cases, support training-free or localized anonymization. However, many diffusion de-identification methods still depend on inversion or reconstruction from the source image, which can retain subtle identity structure and introduce stochastic variability across runs.

In parallel, reversible designs have regained attention in scenarios where authorized recovery is required. SDN [22] anonymizes and de-anonymizes faces through password- and attribute-controlled representations, and iFADIT [23] explores invertible identity transforms with secret-key control. While reversibility can be beneficial for controlled-access applications, it also introduces operational risks (e.g., key management and misuse) and may be undesirable in strict anonymization settings.

Table 1 summarizes representative approaches, their typical evaluation focus, and recurring gaps. Two limitations appear repeatedly: (i) many high-fidelity pipelines retain an input-conditioned pathway (direct conditioning, inversion, or latents/embeddings derived from the original), which may preserve correlated identity cues and thus leave a residual linkability channel; and (ii) stochastic components (e.g., sampling noise or prompt variability) can hinder reproducibility in analytics-oriented deployments.

In contrast to the above families of methods, our work follows a different design principle: we remove identity-carrying visual signals early and perform face pseudonymization through text-only reconstruction from abstracted semantic attributes encoded via deterministic templates. By using generated text as the sole conditioning input for the text-to-image model, our pipeline avoids direct image-conditioned synthesis and thereby reduces the risk of residual identity leakage pathways that originate from inversion or latents derived from the source image. Moreover, deterministic template-based attribute-to-text conversion reduces stochasticity and unintended attribute drift compared to free-form prompt generation, which supports reproducible anonymization for downstream analytics.

Consequently, evaluation focuses on representation-level privacy rather than pixel-level perturbation strength. Unlike modern diffusion- or GAN-based de-identification approaches that operate directly in pixel space, our method performs identity dissociation through semantic abstraction prior to reconstruction.

2.2. Facial Attribute Recognition

Facial attribute recognition has been extensively studied using benchmark datasets such as CelebA, which provides 40 binary attributes related to facial appearance and accessories [15]. FaceNet-based representations are commonly used as feature backbones for identity recognition and attribute prediction. In this study, we use an open-source FaceNet-based attribute classifier for the CelebA dataset [16]. High-level demographic and affective attributes, including age, gender, emotion, and ethnicity, are inferred using the DeepFace framework [17]. These attributes are widely used in social data analytics but are insufficient to uniquely identify individuals, making them suitable for pseudonymization pipelines.

2.3. Text-to-Image Generation and Prompt-Based Pipelines

Text-to-image generation has advanced rapidly alongside the development of diffusion models and unified multimodal architectures [10,11]. Janus-Pro further unifies multimodal understanding and generation within a single architecture, enabling text-conditioned image synthesis [12]. It should be noted that modern text-to-image pipelines no longer rely solely on raw text strings. Instead, they increasingly integrate large language models or LLM-like components to preprocess, restructure, or enrich textual inputs before image synthesis. For instance, Gani et al. present an LLM-based framework that breaks down intricate prompts into organized semantic elements before transmitting them to the image generation model [24]. Similarly, recent multimodal pipelines use LLMs to guide prompt interpretation, layout planning, and semantic alignment between text and image generation modules [25].

Beyond free-form prompt rewriting, recent work has explored using LLMs for structured data-to-text conversion. For example, Lee et al. demonstrate that LLMs can effectively transform structured JSON representations into natural-language descriptions for downstream analytical and generative tasks [13]. This line of work highlights the growing adoption of LLMs as intermediate semantic translators between structured data and generative models. Although LLM-based prompt construction increases flexibility and linguistic expressiveness, it introduces stochasticity that can enrich or alter attribute descriptions in unpredictable ways. In privacy-critical settings, this lack of determinism increases the risk of unintended information leakage.

In contrast, our work deliberately avoids generative or stochastic JSON-to-text conversion. Instead, we use a deterministic template-based mapping that produces facial descriptions containing only attributes. This mapping forms an identity-agnostic controlled semantic representation that is explicitly tailored for privacy-preserving and reproducibility-focused analytics.

2.4. Privacy-Preserving Visual Analytics

While formal differential privacy provides mathematically rigorous guarantees [26], applying it to high-dimensional facial images often results in reduced utility or visual quality due to the magnitude of perturbations required in pixel space [7]. Accordingly, many recent works adopt an empirical privacy evaluation based on metrics such as re-identification accuracy, face embedding similarity, and unlinkability under strong adversarial models [4,6,8]. Our evaluation follows this practice, focusing on practical privacy guarantees that are relevant to large-scale analytics.

Thus, our approach does not claim formal differential privacy guarantees. Rather, it constitutes an architectural privacy mechanism based on information abstraction and non-invertibility. Privacy is enforced through the removal of fine-grained biometric detail rather than statistical perturbation using noise injection or probabilistic output bounding. This distinction clarifies that the proposed method complements, rather than replaces, formal differential privacy mechanisms.
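To make the empirical metrics mentioned above concrete, the following minimal sketch computes a cosine similarity between face embeddings and a rank-1 re-identification rate. It is an illustration only: the function names, the toy three-dimensional vectors, and the convention that probe `i` belongs to gallery entry `i` are assumptions made for this sketch and do not describe the evaluation protocol used in this paper.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank1_reid_rate(probe_embeddings, gallery_embeddings):
    """Fraction of probes whose most similar gallery entry has the same index.

    Assumption: probe_embeddings[i] is the embedding of the pseudonymized
    image derived from the identity stored at gallery_embeddings[i].
    """
    hits = 0
    for i, probe in enumerate(probe_embeddings):
        sims = [cosine_similarity(probe, g) for g in gallery_embeddings]
        best = max(range(len(sims)), key=sims.__getitem__)
        hits += int(best == i)
    return hits / len(probe_embeddings)


# Toy embeddings (hypothetical values, not measured data).
gallery = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
probes = [[0.9, 0.1, 0.0], [0.0, 0.2, 0.9], [0.1, 0.0, 0.8]]
rate = rank1_reid_rate(probes, gallery)
```

A lower rank-1 rate indicates that an attacker with access to the original gallery links fewer pseudonymized images back to their sources.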

3. Problem Definition

We address privacy-preserving face pseudonymization for visual data analytics. Given an input image containing a human face, the goal is to create a new facial image that retains specific non-identifying visual features while preventing the original individual from being re-identified by human observers or automated recognition systems.

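Let $x$ denote an input image containing a face. This image contains both identity-bearing information, such as biometric facial structure, and non-identifying semantic characteristics, such as age range, hairstyle, facial expression, and accessories. The goal of pseudonymization is to generate a synthesized image $\hat{x}$ such that $\hat{x}$ retains the non-identifying semantic characteristics of $x$ while preventing re-identification of the depicted individual by human observers or automated recognition systems.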

Instead of transforming or modifying the original image, we use a reconstruction-based approach. In this setting, the synthesized image $\hat{x}$ is generated from an abstracted representation of facial characteristics and does not constitute a direct transformation of $x$. This design choice decouples identity information from visual appearance by construction. We assume the existence of an attribute extraction process that maps an input image $x$ to a set of probabilistic facial attributes. Let $\mathcal{A} = \{a_1, \dots, a_N\}$ denote the set of facial attributes considered by the attribute extractor. For an input image $x$, the extractor produces a set of attribute–score pairs

$$A(x) = \{(a_i, p_i)\}_{i=1}^{N},$$

where $N$ denotes the total number of facial attributes and $p_i$ is the confidence score associated with attribute $a_i$. While these attributes capture fine-grained and high-level semantic characteristics, they are insufficient to uniquely identify an individual. The pseudonymization problem addressed in this work can then be stated as learning a mapping

$$F : A(x) \mapsto \hat{x}$$

such that the synthesized image $\hat{x}$ reflects the semantic content encoded in $A(x)$ while suppressing identity-bearing information.

In addition to privacy and utility, we impose practical constraints on the solution. The mapping should be deterministic and reproducible. It should also avoid relying on identity-conditioned visual inputs during generation and provide an interpretable representation that enables explicit control over which attributes are expressed. These requirements justify the structured template-driven reconstruction approach.

Section 4 describes the concrete instantiation of the attribute extraction process and the reconstruction function.

4. Our Approach

This section describes the proposed template-driven framework for face pseudonymization. Our method removes identity-bearing visual information and reconstructs facial images from non-identifying semantic attributes alone. First, we outline the overall pipeline. Then, we detail face localization, probabilistic attribute extraction, deterministic attribute-to-text conversion, and text-to-image-based face synthesis.

4.1. Method Overview

The proposed framework performs face pseudonymization through a structured multimodal pipeline that separates identity-bearing visual information from non-identifying semantic attributes. Given an input image $x$, a face is first localized using FaceNet, and the detected bounding box is expanded to define a region of interest (RoI) that includes hair style and selected accessories, which are often excluded by tight face crops. From the expanded RoI, probabilistic facial attributes are extracted using a FaceNet-based CelebA attribute classifier and the DeepFace framework [16,17]. These attributes are then organized into categories based on empirical data, serialized into a structured JSON representation, and converted into a natural-language description using a deterministic template-based mapping. This description forms an identity-agnostic controlled semantic representation. In this context, attribute categorization is applied as a separate post-processing step rather than being assumed by the underlying attribute extraction models. Finally, the generated text serves as the sole input for conditioning a text-to-image generation model. This model synthesizes realistic non-identifiable facial images, enabling feature-preserving face pseudonymization with reduced risk of residual identity leakage.

Figure 1 illustrates the overall pseudonymization pipeline, highlighting the transition from face detection and expanded RoI extraction to probabilistic attribute representation, deterministic text generation, and text-to-image-based face synthesis. First, faces are detected and localized in an input image using FaceNet. Then, the detected bounding boxes are expanded to include contextual cues, such as hairstyle and accessories. Probabilistic facial attributes are then extracted from the expanded regions of interest using a FaceNet-based CelebA attribute classifier and the DeepFace framework. These attributes are then organized into structured JSON-encoded feature representations and converted into deterministic textual descriptions via template-based generation. The generated descriptions are then used as the sole conditioning input for text-to-image generation, producing realistic non-identifiable facial images suitable for downstream analytics.

4.2. Face Detection and Expanded Region of Interest

The first step of our pipeline, given an input image $x$, is to localize the face and define an RoI for subsequent attribute extraction. A face detector produces the initial bounding box $b = (x_1, y_1, x_2, y_2)$, which tightly encloses the facial region. However, tight face crops are insufficient for capturing appearance cues that are relevant to downstream attribute prediction and text-based reconstruction. Several attribute groups used in our framework, such as hair style and accessories like neckties or necklaces, can partially or fully extend beyond the facial bounding box. This issue is exacerbated by common FaceNet-style preprocessing, which aligns and crops faces within a relatively small facial region.

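To address this issue, we increase the size of the detected bounding box using anisotropic margins to include hair and the context of the upper body. For a detected box with corners $(x_1, y_1)$ and $(x_2, y_2)$, width $w = x_2 - x_1$, and height $h = y_2 - y_1$, the expanded box is

$$b' = \mathrm{clip}\bigl(x_1 - \alpha_l w,\; y_1 - \alpha_t h,\; x_2 + \alpha_r w,\; y_2 + \alpha_b h\bigr),$$

where $(\alpha_l, \alpha_t, \alpha_r, \alpha_b)$ denotes left, top, right, and bottom expansion factors, and $\mathrm{clip}(\cdot)$ constrains the expanded box to the image boundaries. In practice, we choose larger top and bottom expansions to capture hair and accessories below the chin, respectively.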

The resulting cropped region is used as input for the attribute extractors. This RoI design improves coverage of hair- and accessory-related attributes while maintaining a consistent privacy-preserving pipeline: only the derived attribute representation is retained for subsequent template-based text generation and synthesis.
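As an illustration only, the anisotropic expansion described in this subsection can be sketched as follows. The function name `expand_bbox`, the interpretation of the expansion factors as fractions of the box width and height, the rounding convention, and the example margin values are assumptions made for this sketch rather than details of the evaluated implementation.

```python
def expand_bbox(box, image_size, margins):
    """Expand a face box by side-specific margins and clip it to the image.

    box:        (x1, y1, x2, y2) in pixels, top-left and bottom-right corners
    image_size: (width, height) of the full image in pixels
    margins:    (left, top, right, bottom) as fractions of the box width/height
                (assumed convention for this sketch)
    """
    x1, y1, x2, y2 = box
    img_w, img_h = image_size
    left, top, right, bottom = margins
    w, h = x2 - x1, y2 - y1

    # Grow each side independently (anisotropic margins).
    ex1 = int(round(x1 - left * w))
    ey1 = int(round(y1 - top * h))
    ex2 = int(round(x2 + right * w))
    ey2 = int(round(y2 + bottom * h))

    # Clip the expanded box to the image boundaries.
    return (max(0, ex1), max(0, ey1), min(img_w, ex2), min(img_h, ey2))


# Hypothetical margins: larger top/bottom expansion for hair and neckwear.
roi = expand_bbox((100, 120, 200, 240), (320, 400), (0.2, 0.5, 0.2, 0.6))
clipped = expand_bbox((10, 10, 110, 110), (120, 120), (0.5, 0.5, 0.5, 0.5))
```

With these example values, `roi` is `(80, 60, 220, 312)`, and `clipped` shows a box that is cut back to the full image extent `(0, 0, 120, 120)`.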

4.3. FaceNet-Based Attribute Representation

Fine-grained facial attributes are extracted using a FaceNet-based attribute classifier trained on the CelebA dataset [16]. The classifier outputs a flat set of probabilistic attribute scores, corresponding to the original CelebA attribute vocabulary. Formally, let $f_{\mathrm{FN}} : x \mapsto [0, 1]^{N}$ denote the FaceNet-based attribute extractor, where $N = 40$ corresponds to the number of CelebA attributes. Given an input image $x$, the model produces an attribute score vector

$$\mathbf{p} = f_{\mathrm{FN}}(x) = (p_1, \dots, p_N),$$

where each element $p_i \in [0, 1]$ denotes the model’s sigmoid-normalized confidence score for the presence of the $i$-th facial attribute.

At this stage, the attribute representation is unstructured and does not impose any semantic grouping or hierarchy. Attributes such as Big_Nose, Wavy_Hair, Wearing_Hat, or Smiling are treated independently and represented solely by their associated probability scores. This step produces a serialized JSON-formatted key–value representation that preserves the full set of probabilistic scores, avoiding thresholding and binarization. An example output is shown below:

    "Big_Nose": 0.1965,
    "Wavy_Hair": 0.1330,
    "Wearing_Hat": 0.5017,
    "Smiling": 0.0904,
    "Male": 0.8596,
    …

This probabilistic flat attribute representation captures attribute uncertainty and mixed facial traits while remaining explicitly decoupled from identity embeddings. Semantic categorization and feature aggregation are not performed at this stage.
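A minimal sketch of this serialization step is given below, using the scores from the example output above. The helper name `serialize_scores` and the four-digit rounding are assumptions for illustration; the point is only that every score is written out as-is, without thresholding or binarization.

```python
import json


def serialize_scores(labels, scores, ndigits=4):
    """Serialize attribute scores to JSON, keeping every probabilistic value."""
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")
    # No thresholding: low-confidence attributes are kept alongside high ones.
    record = {label: round(float(score), ndigits)
              for label, score in zip(labels, scores)}
    return json.dumps(record, indent=2)


labels = ["Big_Nose", "Wavy_Hair", "Wearing_Hat", "Smiling", "Male"]
scores = [0.1965, 0.1330, 0.5017, 0.0904, 0.8596]
payload = serialize_scores(labels, scores)
```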

4.4. DeepFace-Based Semantic Attributes

To complement the fine-grained CelebA-based attributes, we use the DeepFace framework [17] to extract high-level semantic attributes. The DeepFace models we use provide probabilistic predictions for demographic and affective characteristics rather than hard categorical labels. Specifically, DeepFace is used to estimate age, gender, emotion, and ethnicity.

Formally, let $f_{\mathrm{DF}}$ denote the DeepFace (DF) attribute extractor. For an input image $x$, the model outputs a set of probabilistic attributes $A_{\mathrm{DF}}(x) = f_{\mathrm{DF}}(x)$, where each attribute is represented either as a normalized confidence score or as a probability distribution over predefined classes.

Unlike the fine-grained CelebA attributes, the DeepFace attributes capture global semantic properties, such as demographic appearance and emotional state. While these attributes may be correlated with identity at the population level, they are not sufficient for identifying individuals uniquely. Therefore, they are treated as non-identifying semantic features within our pseudonymization approach.
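The representation of a categorical attribute as a probability distribution over predefined classes can be sketched as follows. This is not the DeepFace API: the helper `normalize_distribution`, the input dictionary shape, and the raw values are hypothetical, and the sketch only assumes that an extractor returns non-negative per-class scores that may need rescaling to sum to one.

```python
def normalize_distribution(raw_scores):
    """Rescale non-negative per-class scores so that they sum to 1."""
    total = sum(raw_scores.values())
    if total <= 0:
        raise ValueError("scores must have a positive sum")
    return {label: value / total for label, value in raw_scores.items()}


# Hypothetical raw emotion scores (e.g., percentages) for one face.
raw_emotion = {"angry": 2.0, "happy": 8.0, "neutral": 70.0, "sad": 20.0}
emotion = normalize_distribution(raw_emotion)

# The dominant class is kept alongside the full distribution, not instead of it.
dominant = max(emotion, key=emotion.get)
```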

4.5. Empirical Attribute Categorization

The attribute extractors described in Section 4.3 and Section 4.4 produce a flat unstructured set of probabilistic attribute scores. While this representation is suitable for attribute prediction, it is not directly amenable to deterministic text generation or controlled feature selection. We therefore introduce an explicit categorization step that organizes attributes into semantically coherent groups. Let $A_{\mathrm{FN}}(x) = \{(a_i, p_i)\}_{i=1}^{N}$ denote the set of probabilistic FaceNet-based (FN) CelebA attributes, where $N$ is the number of CelebA attributes, $a_i$ is an attribute label, and $p_i$ its predicted confidence. Similarly, let $A_{\mathrm{DF}}(x)$ denote the set of high-level demographic and affective attributes predicted by DeepFace (DF). For an input image, DeepFace produces

$$A_{\mathrm{DF}}(x) = \{(d_j, q_j)\}_{j=1}^{M},$$

where $M$ denotes the number of DeepFace attributes, $d_j$ the $j$-th attribute, and $q_j$ its associated confidence score. We define a categorization function

$$c : \{a_i\}_{i=1}^{N} \cup \{d_j\}_{j=1}^{M} \to \mathcal{C},$$

which maps each attribute to exactly one category $C_k \in \mathcal{C}$, where $\mathcal{C} = \{C_1, \dots, C_{13}\}$ denotes the set of thirteen attribute categories. Rather than following a predefined theoretical taxonomy, each category groups attributes that share visual characteristics and descriptive functions. This categorization was derived empirically to improve interpretability and facilitate deterministic template-based text generation. The categories are defined as follows:

General Demographics: age, gender, and dominant ethnicity.

Facial Structure and Shape: attributes describing facial geometry (e.g., face shape, nose, and cheekbones).

Hair Color: color-related hair attributes.

Hair Style: structural hair attributes (e.g., baldness and texture).

Facial Hair: beard- and mustache-related attributes.

Eyebrows: eyebrow-related attributes.

Accessories: glasses, hats, jewelry, and neckties.

Makeup: cosmetic-related attributes.

Skin Characteristics: skin tone and texture cues.

Expression: mouth state and facial expression cues.

Subjective Appearance: attributes reflecting perceived age or attractiveness.

Affective State: seven emotion-related attributes: anger, disgust, fear, happiness, sadness, surprise, and neutral.

Ethnicity: six coarse-grained ethnicities: Asian, Indian, black, white, Middle Eastern, and Latino/Hispanic.
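The grouping listed above can be sketched as a fixed lookup table applied after attribute extraction. The attribute names are CelebA labels, but the specific attribute-to-category assignments shown here, the helper name `categorize`, and the example scores are assumptions made for illustration; only a small subset of attributes is included.

```python
# Illustrative subset of an attribute-to-category table (assumed assignments).
CATEGORY_OF = {
    "Black_Hair": "Hair Color",
    "Brown_Hair": "Hair Color",
    "Gray_Hair": "Hair Color",
    "Wavy_Hair": "Hair Style",
    "Bald": "Hair Style",
    "Eyeglasses": "Accessories",
    "Wearing_Hat": "Accessories",
    "Heavy_Makeup": "Makeup",
    "Big_Nose": "Facial Structure and Shape",
    "Smiling": "Expression",
}


def categorize(scores, category_of):
    """Group attribute scores by category without changing any score."""
    grouped = {}
    for label, score in scores.items():
        if label not in category_of:
            # Each attribute must map to exactly one category.
            raise KeyError(f"no category assigned to attribute {label!r}")
        grouped.setdefault(category_of[label], {})[label] = score
    return grouped


scores = {"Black_Hair": 0.81, "Gray_Hair": 0.32, "Wearing_Hat": 0.5017,
          "Smiling": 0.0904}
grouped = categorize(scores, CATEGORY_OF)
```

Because the table is fixed and every attribute has exactly one entry, the grouping is a pure reorganization: the scores themselves pass through unchanged.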

Formally, each category $C_k$ is represented as a subset $C_k \subseteq A_{\mathrm{FN}}(x) \cup A_{\mathrm{DF}}(x)$ containing attribute–confidence pairs originating from either FaceNet or DeepFace. The categorization does not alter the underlying probabilistic attribute values. Instead, it provides a structured representation that enables the selection, aggregation, and verbalization of attributes in a deterministic manner during template-based text generation. No identity-related assumptions are introduced at this stage.

In addition to semantic grouping, we further distinguish facial attributes based on their representational type, which determines how they are interpreted and verbalized during template-based text generation. Specifically, each attribute is assigned to one of three types: Boolean, scalar, or gradient.

Boolean attributes represent the presence or absence of a visual feature (e.g., Eyeglasses and Wearing_Hat). These attributes are interpreted as binary decisions using a fixed confidence threshold. If the predicted probability exceeds the threshold, the attribute is considered present. Otherwise, it is omitted from the textual description.

Scalar attributes represent features that can be described independently of other attributes but vary in intensity (e.g., Heavy_Makeup and Bags_Under_Eyes). Their scores are quantized into a small number of intensity levels, each associated with a corresponding linguistic expression (e.g., “slight”, “moderate”, and “strong”).

Gradient attributes describe visual properties whose interpretation depends on the relative strength of multiple related attributes. Examples include hair color or facial structure, where attributes such as Black_Hair, Brown_Hair, and Gray_Hair jointly determine the final description. Gradient attributes are therefore interpreted in relation to each other, enabling composite descriptions such as “predominantly dark hair with gray tones”.

This type-based distinction is empirically motivated and reflects the way facial attributes are naturally described in language. While it does not alter the underlying probabilistic predictions, it provides additional structure for deterministic and interpretable text generation.
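The three representational types can be sketched as three small deterministic functions. The threshold of 0.5, the level boundaries, the `min_ratio` cut-off for secondary gradient attributes, and all function names are hypothetical choices made for this sketch; the concrete values used in our experiments are not implied by it.

```python
BOOLEAN_THRESHOLD = 0.5  # hypothetical fixed confidence threshold
SCALAR_LEVELS = ((0.33, "slight"), (0.66, "moderate"), (1.0, "strong"))


def interpret_boolean(score, threshold=BOOLEAN_THRESHOLD):
    """Boolean type: the attribute is present only above the threshold."""
    return score > threshold


def interpret_scalar(score, levels=SCALAR_LEVELS):
    """Scalar type: map a score to the first level whose upper bound covers it."""
    for upper, word in levels:
        if score <= upper:
            return word
    return levels[-1][1]


def interpret_gradient(group_scores, min_ratio=0.3):
    """Gradient type: rank related attributes and keep those that are
    comparable to the strongest one (ties broken by name for determinism)."""
    ranked = sorted(group_scores.items(), key=lambda kv: (-kv[1], kv[0]))
    top_score = ranked[0][1]
    return [label for label, score in ranked if score >= min_ratio * top_score]


hat_present = interpret_boolean(0.5017)
makeup_level = interpret_scalar(0.5)
hair_colors = interpret_gradient(
    {"Black_Hair": 0.8, "Brown_Hair": 0.1, "Gray_Hair": 0.4})
```

In this example, the hat is reported as present, makeup is described as moderate, and the hair description is built from `Black_Hair` followed by `Gray_Hair`, while `Brown_Hair` is dropped as too weak relative to the dominant color.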

4.6. Text Generation

After attribute extraction and empirical categorization, the structured facial attributes are converted into natural-language descriptions using a deterministic template-based text generation mechanism. This step translates probabilistic machine-readable attribute representations into human-interpretable descriptions while maintaining control over the semantic content expressed. Let $\mathcal{C} = \{C_1, \dots, C_{13}\}$ denote the set of empirically defined attribute categories described in Section 4.5. A fixed template rule is defined for each category $C \in \mathcal{C}$, mapping selected attribute–confidence pairs to corresponding textual phrases. These template rules operate on probabilistic attribute scores, selecting dominant attributes according to predefined criteria, such as top-$k$ selection or confidence thresholds. They do not introduce additional semantic information. Formally, the template-based text generation function can be expressed as

$$g : (C_1, \dots, C_{13}) \mapsto t \in \mathcal{T},$$

where $\mathcal{T}$ denotes the space of generated textual descriptions. The resulting description is constructed by concatenating category-specific phrases in a fixed order, yielding a reproducible and deterministic output.

Template rules explicitly account for the representational type of each attribute. Boolean, scalar, and gradient attributes are processed using different deterministic strategies to ensure consistent natural-language descriptions. For Boolean attributes, template rules apply a fixed confidence threshold. Attributes that exceed the threshold are expressed as simple presence statements (e.g., “wearing glasses”), while those below the threshold are omitted entirely. For scalar attributes, probabilistic scores are converted into ordered intensity levels (e.g., levels 1 to 5). Each level is associated with a predefined linguistic modifier, enabling descriptions such as “slightly”, “moderately”, or “strongly”. Scalar attributes are expressed independently and do not require reference to other attributes. Gradient attributes are expressed using template rules that operate on groups of related attributes. Rather than selecting a single dominant attribute, the rules evaluate relative probabilities to construct composite descriptions. For instance, hair color could be described as “mostly black with noticeable gray tones” if multiple color attributes exhibit comparable confidence values.

The template-based generation process distinguishes between attribute types, preserving nuanced visual characteristics while remaining fully deterministic and reproducible. In particular, no attribute values are inferred or hallucinated beyond the structured input representation. For example, a high probability for Wearing_Hat triggers a Boolean description, whereas Heavy_Makeup is verbalized based on its intensity level. In contrast, hair color is described by considering multiple gradient attributes together, resulting in composite phrases that reflect mixed visual traits.

Figure 1 illustrates an example transformation, detailing the deterministic conversion from structured JSON-encoded attributes to natural-language descriptions using fixed template rules. Given a JSON-formatted attribute representation containing probabilistic values for attributes such as gender, age, hair color, facial structure, and expression, the template rules generate the following textual descriptions for the first and second sentences:

A profile portrait of a 32-year-old man, Asian, with a neutral expression. The hair appears as black hair, combined by natural hair texture and subtle wavy hair.

Notably, the template-based approach avoids stochastic prompt rewriting and does not infer or hallucinate attributes beyond those explicitly present in the structured representation. Therefore, the generated text constitutes an identity-agnostic controlled semantic representation that is well-suited for privacy-preserving face pseudonymization and reproducible analytics pipelines.
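The fixed-order concatenation of category-specific phrases can be sketched as follows. The two rules, their wording, the category order in `RULES`, and the numeric cut-offs are hypothetical and cover only two of the thirteen categories; the sketch is meant to show why identical structured input always yields identical text, not to reproduce the templates used in our experiments.

```python
def hair_color_phrase(attrs, min_ratio=0.3):
    """Gradient rule: describe the dominant color and a comparable second one."""
    words = {"Black_Hair": "black", "Brown_Hair": "brown", "Gray_Hair": "gray"}
    ranked = sorted(((score, name) for name, score in attrs.items()
                     if name in words), key=lambda t: (-t[0], t[1]))
    if not ranked:
        return ""
    main = words[ranked[0][1]]
    if len(ranked) > 1 and ranked[1][0] >= min_ratio * ranked[0][0]:
        second = words[ranked[1][1]]
        return f"The hair is mostly {main} with noticeable {second} tones."
    return f"The hair is {main}."


def accessories_phrase(attrs, threshold=0.5):
    """Boolean rule: mention an accessory only above the fixed threshold."""
    words = {"Eyeglasses": "glasses", "Wearing_Hat": "a hat"}
    present = [words[name] for name in sorted(attrs)
               if name in words and attrs[name] > threshold]
    if not present:
        return ""
    return "The person is wearing " + " and ".join(present) + "."


# Fixed processing order of categories (assumed order for this sketch).
RULES = (("Hair Color", hair_color_phrase), ("Accessories", accessories_phrase))


def generate_description(grouped):
    """Concatenate non-empty category phrases in the fixed order of RULES."""
    phrases = [rule(grouped.get(category, {})) for category, rule in RULES]
    return " ".join(phrase for phrase in phrases if phrase)


grouped = {
    "Accessories": {"Wearing_Hat": 0.5017, "Eyeglasses": 0.12},
    "Hair Color": {"Gray_Hair": 0.4, "Black_Hair": 0.8},
}
text = generate_description(grouped)
```

Because rules sort their inputs and the category order is fixed, the output does not depend on the key order of the JSON input, and no phrase can mention an attribute that is absent from it.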

4.7. Text-to-Image Generation

The final stage of the pipeline synthesizes a pseudonymized facial image from the deterministic textual description generated by the template-based step. The text-to-image model uses the generated description as its only input to produce a realistic facial image that does not correspond to any real person. Formally, let $G : \mathcal{T} \to \mathcal{I}$ denote the text-to-image generation model, where $\mathcal{T}$ is the space of textual descriptions and $\mathcal{I}$ the space of generated images. Given a template-generated description $t \in \mathcal{T}$, the model outputs a synthesized image

$$\hat{x} = G(t).$$

A noteworthy aspect is that the generator does not receive any image-conditioned inputs, identity embeddings, or latent representations derived from the original image. This design removes identity by construction, preventing the direct transfer of biometric information from the source image. It also enables feature-preserving face pseudonymization, aligning with the requirements of privacy-preserving big data analytics, where balancing realism, reproducibility, and identity protection is essential.

5. Template-Based Text Generation

This section introduces the template-based text generation approach used to convert structured facial attributes into natural-language descriptions. First, we describe the design and construction of the template. Then, we analyze its combinatorial capacity and empirical practicability, quantifying the expressive diversity achievable under a fixed linguistic structure.

5.1. Template

To illustrate the deterministic process of generating text from attributes used in our framework, we first present an example of the structured attribute representation produced by the pipeline. After the steps of face detection, RoI expansion, and attribute extraction, the probabilistic attributes from FaceNet and DeepFace are combined into a single JSON structure. This structure serves as the only input for template-based text generation. This data contains probabilistic scores for all extracted facial attributes, including demographic, affective, and appearance-related characteristics. DeepFace-based attributes (e.g., age, gender, emotion, and ethnicity) are represented as normalized confidence values or probability distributions, while CelebA-based attributes are represented as continuous scores in the range

. Text generation proceeds deterministically by processing attribute categories in a fixed semantic order. This order reflects the typical description of facial characteristics in natural language and remains constant across all experiments. Depending on the presence or absence of attributes, the template generates at most seven sentences. The generated text is limited to 512 tokens because Janus-Pro [12] does not appear to produce better images from longer prompts. The sentence sequence (S) is listed below.

S1: Demographics and emotions (+optional secondary ethnicity clause).

S2: Hair (+optional makeup clause).

S3: Grooming.

S4: Prominent and subtle facial features.

S5: Skin and expression details.

S6: Accessories.

S7: Subjective impression.

Accordingly, the whole template is depicted as follows:

Text Generation Template:

- S1: A profile portrait of a {age}-year-old {gender}, {primary_ethnicity} {secondary_ethnicity_clause}, with a {emotion} expression.

- S2: The hair appears as {hair_description}, complemented by {hair_style_description}.

- S3: Grooming details indicate {facial_hair_description}.

- S4: Facial features include {prominent_facial_features}, while more subtle traits such as {subtle_facial_features} are also present.

- S5: Skin and complexion details show {skin_description}, and the overall expression is characterized by {expression_details}.

- S6: Accessories include {accessories_description}.

- S7: The overall impression is {subjective_impression}.

Applying the above rules to the example excerpt yields the following first sentence: “A profile portrait of a 30-year-old man, Asian with a slight Latino-Hispanic background, with a happy expression.” This sentence follows from the ethnicity scores

"ethnicity": { "asian": 72.82, "indian": 8.69, "black": 7.89, "white": 0.16, "middle eastern": 0.03, "latino hispanic": 10.38 }.

After applying generation processing to all attribute categories, we finally obtain the following text for the combined JSON data regarding the profile image.

A profile portrait of a 30-year-old man, asian with a slight latino hispanic background, with a happy expression. The hair appears as black hair, complemented by natural hair texture and subtle wavy hair. Grooming details indicate neat grooming. Facial features include prominent nose, soft nose tip contour, full lips, very bushy eyebrows, visible double chin, and moderate cheekbones, while more subtle traits such as subtle under-eye bags and subtly oval face are also present. Skin and complexion details show even complexion and even-toned cheeks, and the overall expression is characterized by neutral expression and subtly open mouth. Accessories include minimal accessories. The overall impression is not described as attractive and young-looking.
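The rendering step can be illustrated with a minimal sketch. The sentence strings are taken from the template listing; the `render` function, the slot dictionary, the rule that a sentence is dropped when a required slot is empty, and the whitespace clean-up are assumptions made for illustration and are not taken from the original implementation.

```python
import re
from string import Formatter

# Sentence templates S1 to S7 as listed in the template above.
SENTENCES = [
    "A profile portrait of a {age}-year-old {gender}, {primary_ethnicity} "
    "{secondary_ethnicity_clause}, with a {emotion} expression.",
    "The hair appears as {hair_description}, complemented by {hair_style_description}.",
    "Grooming details indicate {facial_hair_description}.",
    "Facial features include {prominent_facial_features}, while more subtle traits "
    "such as {subtle_facial_features} are also present.",
    "Skin and complexion details show {skin_description}, and the overall expression "
    "is characterized by {expression_details}.",
    "Accessories include {accessories_description}.",
    "The overall impression is {subjective_impression}.",
]

# Assumption: only the secondary ethnicity clause may be empty inside a sentence.
OPTIONAL_SLOTS = {"secondary_ethnicity_clause"}


def render(slots):
    """Fill the fixed-order templates; skip sentences with missing required slots."""
    rendered = []
    for template in SENTENCES:
        names = [name for _, name, _, _ in Formatter().parse(template) if name]
        required = [name for name in names if name not in OPTIONAL_SLOTS]
        if any(not slots.get(name) for name in required):
            continue  # assumed omission rule (hypothetical)
        values = {name: slots.get(name, "") for name in names}
        sentence = template.format(**values)
        sentence = re.sub(r"\s+,", ",", sentence)    # tidy an empty optional clause
        sentence = re.sub(r"\s{2,}", " ", sentence)  # collapse repeated spaces
        rendered.append(sentence)
    return " ".join(rendered)


example = render({
    "age": 30,
    "gender": "man",
    "primary_ethnicity": "Asian",
    "secondary_ethnicity_clause": "with a slight Latino-Hispanic background",
    "emotion": "happy",
    "accessories_description": "minimal accessories",
})
print(example)
```

Because the sentence order and the slot names are fixed, identical slot values always produce identical text, which is the reproducibility property the template relies on.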

The combination rules and intensity expressions are derived from the empirical statistics observed in the dataset. For instance, secondary ethnicity is only blended if the associated constraint is satisfied. This is defined as follows:

This constraint states that the secondary ethnicity will only be present if the probability of the primary ethnicity is under 85% and the probability of the secondary ethnicity is over 10%. Otherwise, it will be omitted. The secondary ethnicity is embedded in the larger clause “with a slight {ethnicity} background”, as seen in the defined phrase_template.
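As a minimal sketch, the constraint can be written as a small function. The 85% and 10% thresholds and the phrase come from the text; the function name, the argument names, and the choice of the second-highest class as the secondary ethnicity are assumptions for illustration.

```python
def secondary_ethnicity_clause(scores, primary_max=85.0, secondary_min=10.0):
    """Return (primary, clause); the clause is empty when the constraint fails.

    `scores` maps ethnicity labels to percentages, as in the JSON excerpt.
    Assumption: the secondary ethnicity is the class with the second-highest score.
    """
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (primary, primary_score), (secondary, secondary_score) = ranked[0], ranked[1]
    if primary_score < primary_max and secondary_score > secondary_min:
        return primary, f"with a slight {secondary} background"
    return primary, ""


ethnicity = {
    "asian": 72.82,
    "indian": 8.69,
    "black": 7.89,
    "white": 0.16,
    "middle eastern": 0.03,
    "latino hispanic": 10.38,
}
print(secondary_ethnicity_clause(ethnicity))
```

For the example scores, the primary class is below 85% and the runner-up is above 10%, so the clause is emitted; raising the primary score above 85% suppresses it.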

To generate text from the template, the semantic type of each attribute must be taken into account during sentence construction so that the resulting description properly distinguishes one profile picture from another. As described in Section 4.5, attributes are processed according to their semantic type. Boolean attributes are included in the description if their confidence value exceeds a fixed threshold. This threshold is empirically determined as the mean confidence value computed over training images for which the attribute is annotated as present. Scalar attributes are discretized into ordered intensity levels based on confidence intervals derived from empirical score distributions. Each level corresponds to a predefined linguistic modifier (e.g., “slight”, “moderate”, or “strong”). Gradient attributes are processed jointly within their respective groups. For a dominant attribute

with a mean score

, a secondary attribute

is included if its confidence value is within a tolerance interval

where

is defined as the difference between the empirical mean scores of positive and negative samples for

. This rule enables composite descriptions when multiple related attributes exhibit comparable strengths.

Taking all these factors into account, the above example of the generated text shows how probabilistic attributes are translated into a coherent reproducible natural-language description that preserves nuanced visual characteristics. Secondary ethnic attributes and mixed hair characteristics are included only when their confidence values satisfy the empirically defined delta criterion. This prevents over-description and unintended attribute hallucination.
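The three type-specific strategies can be sketched as follows. This is an illustration under stated assumptions, not the original implementation: the function names, the cut points, the modifier lists, and in particular the tolerance test (the secondary score lies within delta of the dominant score) are hypothetical choices, since the exact interval is not reproduced here.

```python
from bisect import bisect_right


def boolean_phrase(score, threshold, phrase):
    """Boolean attribute: emit a presence statement above the threshold, else omit."""
    return phrase if score > threshold else None


def scalar_phrase(score, cut_points, modifiers, noun):
    """Scalar attribute: map a score to an ordered intensity level and its modifier.

    `cut_points` are ascending level boundaries (assumed to come from empirical
    score distributions); `modifiers` has len(cut_points) + 1 entries.
    """
    level = bisect_right(cut_points, score)
    return f"{modifiers[level]} {noun}"


def gradient_attributes(group_scores, delta):
    """Gradient group: keep the dominant attribute and, if close enough, a secondary.

    Assumption: "close enough" means the runner-up score is within `delta`
    of the dominant score.
    """
    ranked = sorted(group_scores.items(), key=lambda item: item[1], reverse=True)
    chosen = [ranked[0][0]]
    if len(ranked) > 1 and ranked[0][1] - ranked[1][1] <= delta:
        chosen.append(ranked[1][0])
    return chosen


# Hypothetical values for illustration only.
print(boolean_phrase(0.91, 0.60, "wearing glasses"))
print(scalar_phrase(0.55, [0.2, 0.4, 0.6, 0.8],
                    ["very slight", "slight", "moderate", "strong", "very strong"],
                    "makeup"))
print(gradient_attributes({"black hair": 0.70, "gray hair": 0.60, "blond hair": 0.05}, 0.15))
```

Keeping the three strategies as separate pure functions mirrors the deterministic behavior described above: the same scores and thresholds always yield the same phrases.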

5.2. Combinatorial Capacity of the Text Template

The proposed text-generation template adheres to a fixed sentence structure. Consequently, all variability in the generated descriptions comes from the discrete attribute realizations alone. Therefore, the combinatorial capacity of the system can be analyzed independently of linguistic structure and depends solely on how different attribute realizations combine.

5.2.1. Attribute Contributions to Combinatorial Diversity

In this analysis, only distinctions between attribute types that affect combinatorial diversity are relevant. Scalar attributes contribute independently to the combinatorial space. After being discretized into a finite number of intensity levels, each scalar attribute multiplies the number of possible texts by the number of its discrete realizations. Gradient attributes contribute jointly within predefined semantic groups. Rather than contributing independently, gradient attributes interact with other attributes within the same category (e.g., hair color, hair style, and facial hair). Within such a group, either one dominant attribute or two attributes may be expressed simultaneously, depending on their relative confidence. This interaction introduces structured combinatorial variation that cannot be modeled as simple independent multiplication. Boolean attributes contribute a small finite number of deterministic states (e.g., expressed as present, expressed as absent using a neutral counter-phrase, or omitted) and therefore also act as bounded multiplicative factors. Categorical attributes contribute one discrete choice per category and do not introduce internal interaction.

5.2.2. Intensity Discretization and Linguistic Realization

Both scalar and gradient attributes are discretized into a fixed number of ordered intensity levels derived from positive and negative empirical means. The default configuration used throughout this work employs five intensity levels: strong negative, negative, subtle, positive, and strong positive. Each attribute is associated with a unique set of natural-language realizations for its intensity levels. While the number of intensity levels is shared across attributes, the linguistic expressions assigned to each level are attribute-specific and adapted to the underlying visual property. For a cheekbone-related attribute, for example, the five intensity levels may be realized as shown in Table 2.

Different attribute-specific expressions are used for other attributes (e.g., hair texture, makeup intensity, lip color, or facial expression) at the same intensity levels while preserving the same ordered structure. This design maintains linguistic naturalness without altering the underlying combinatorial model.

5.2.3. Grouped Gradient Attributes

Suppose a gradient group G consists of n attributes, each of which is discretized into L intensity levels. Since either one attribute or two attributes may be expressed jointly, the number of realizations contributed by a single gradient group is given by Equation (1).

5.2.4. Approximate Combinatorial Capacity

The total number of distinct texts that can be generated by the template is approximately

5.2.5. Categorical Component

The categorical term captures the contribution of all categorical variables that determine the high-level semantic framing of a generated description. These variables do not interact with intensity discretization or form groups. Instead, each categorical attribute contributes a single global discrete choice to the entire text. Formally, this term is computed as the product of the cardinalities of all categorical dimensions that are expressed explicitly in the template. In the present configuration, these dimensions include age representation, gender, facial expression, and ethnicity. Age is treated as a categorical variable via an explicit discretization scheme. Although the framework supports various representations, such as continuous age values or alternative binning strategies, an eight-group discretization is used here as an illustrative example. This yields a factor of eight. Gender contributes a factor of two because the template is restricted to the two gender categories provided by the dataset. Facial expression is modeled as a categorical variable with one mandatory primary expression and one optional secondary expression. If the confidence of the primary expression falls below a predefined threshold, a secondary expression is selected from the remaining classes. Otherwise, no secondary expression is included. This yields a maximum of

possible configurations. Ethnicity contributes two categorical factors. First, a primary ethnicity is always selected from a set of six categories. Second, an optional secondary ethnicity is selected if the primary ethnicity’s confidence falls below a predefined threshold. In this case, exactly one secondary ethnicity is selected from the remaining categories. From a combinatorial perspective, this mechanism yields up to six distinct ethnicity configurations: one without a secondary ethnicity and up to five with a secondary ethnicity. Combining all categorical dimensions yields

This value is an upper bound because the secondary ethnicity and secondary expression clauses activate only under specific confidence conditions. Nevertheless, it provides a principled estimate of the categorical contribution to the overall combinatorial capacity.

5.2.6. Gradient Component

Using five intensity levels (L = 5) and the three gradient groups (hair color, hair style, and facial hair), the combined contribution of all gradient groups is calculated from Equation (1).

5.2.7. Scalar Component

After excluding the grouped gradient and non-utilized attributes (i.e., male and blurry), 19 scalar attributes remain. Since each attribute is discretized into five intensity levels and contributes independently, the combined contribution is $5^{19} \approx 1.9 \times 10^{13}$.

5.2.8. Boolean Component

There are five Boolean attributes, each of which has two deterministic states (present or omitted). This yields $2^{5} = 32$ combinations.

5.2.9. Overall Capacity

Combining all the components yields an approximate upper bound on the number of distinct texts.
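The bookkeeping behind such a bound can be sketched in a few lines. Only the scalar factor (19 attributes with five levels) and the Boolean factor (five attributes with two states) are taken directly from the text. The per-group counting rule below (one attribute at any level, or one pair of attributes with a level each), the `ordered` switch, and the multiplicative combination of components are assumptions for illustration, and the group sizes and categorical factor are left as inputs rather than filled in.

```python
from math import comb, prod

LEVELS = 5
SCALAR_FACTOR = LEVELS ** 19   # 19 independent scalar attributes, five levels each
BOOLEAN_FACTOR = 2 ** 5        # five Boolean attributes, present or omitted


def gradient_group_realizations(n, levels=LEVELS, ordered=False):
    """Count realizations of one gradient group with n attributes.

    Assumption: either a single attribute is expressed (n * levels options) or
    two attributes are expressed jointly, each with its own level. `ordered`
    distinguishes dominant/secondary roles within a pair.
    """
    single = n * levels
    pair_count = n * (n - 1) if ordered else comb(n, 2)
    return single + pair_count * levels ** 2


def capacity_upper_bound(categorical_factor, group_sizes, levels=LEVELS):
    """Multiply all components (assumed product form) into one upper bound."""
    gradient_factor = prod(gradient_group_realizations(n, levels) for n in group_sizes)
    return categorical_factor * gradient_factor * SCALAR_FACTOR * BOOLEAN_FACTOR


print(SCALAR_FACTOR, BOOLEAN_FACTOR)
print(gradient_group_realizations(4))  # hypothetical group with four attributes
```

The scalar factor alone already exceeds 10^13, so the overall magnitude is dominated by the independent scalar attributes regardless of how the gradient pairs are counted.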

5.2.10. Interpretation of Text Generation Capacity

Although the template’s sentence structure is fixed, the combination of independent scalar attributes and interacting gradient attribute groups creates an enormous combinatorial space. Linguistic realizations specific to each attribute at every intensity level preserve semantic naturalness while maintaining a well-defined analyzable combinatorial structure.

5.3. Quantitative and Qualitative Evaluation of Template-Based Prompt Diversity

Although the proposed template permits a theoretically extensive combinatorial space (see Section 5.2.9), practical differentiability must be empirically validated. Therefore, the effectiveness of the template-based prompt generation was assessed on two complementary levels: (i) quantitative structural diversity and (ii) qualitative multimodal differentiation in joint text–image embedding space.

5.3.1. Quantitative Structural Diversity Analysis

A total of 999 prompts were generated using the proposed template. Structural similarity was evaluated through exhaustive pairwise comparison, resulting in 498,501 prompt pairs.

Prior to comparison, prompts were normalized by replacing the digits of the age with a variable x, standardizing whitespace, and lowercasing all characters. Character-level sequence similarity was then calculated, and pairs were categorized into similarity intervals (e.g., >90%, 80–90%, and 70–80%).

No exact duplicates were observed after normalization. Only 429 out of 498,501 pairs (≈0.086%) exhibited similarity above 70% (only 1 case over 90% and 84 cases between 80% and 90%), and no large clusters of near-identical prompts were detected.

These results indicate that, despite the fixed syntactic structure of the template, combinatorial attribute variation produces structurally distinct textual instances at scale.
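The pairwise comparison can be sketched as follows. The normalization steps follow the description above; the use of `difflib.SequenceMatcher` as the character-level similarity measure and the cumulative threshold counting are assumptions, since the exact similarity function is not specified.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations
from math import comb


def normalize(prompt):
    """Replace digits with x, standardize whitespace, and lowercase."""
    prompt = re.sub(r"\d+", "x", prompt)
    prompt = re.sub(r"\s+", " ", prompt).strip()
    return prompt.lower()


def similarity_counts(prompts, thresholds=(0.7, 0.8, 0.9)):
    """Return (number of pairs, {threshold: pairs with similarity above it})."""
    normalized = [normalize(p) for p in prompts]
    counts = {t: 0 for t in thresholds}
    total = 0
    for a, b in combinations(normalized, 2):
        total += 1
        # autojunk=False avoids the popularity heuristic on long prompts.
        ratio = SequenceMatcher(None, a, b, autojunk=False).ratio()
        for t in thresholds:
            if ratio > t:
                counts[t] += 1
    return total, counts


print(comb(999, 2))  # number of unordered pairs among 999 prompts
print(similarity_counts(["A 30-year-old man", "a  41-year-old man", "zzz"]))
```

An exhaustive comparison of 999 prompts is small enough to run directly; for much larger prompt sets, a sampling strategy would be needed because the number of pairs grows quadratically.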

5.3.2. Qualitative Multimodal Differentiation via CLIP Embeddings

Structural diversity alone does not guarantee effective visual differentiation. To evaluate the practical separability of generated outputs, we performed a multimodal embedding analysis using contrastive language–image pre-training (CLIP) [27]. This choice reflects the fact that the internal hidden-state representations of autoregressive language models such as Janus-Pro are optimized for next-token prediction rather than for semantically calibrated sentence-level embedding geometry. These representations are strongly influenced by shared prefix structure and positional encoding, which can lead to artificially high cosine similarity even for semantically distinct prompts. In fact, preliminary analysis confirmed that internal Janus hidden representations exhibited near-collapsed cosine similarity due to shared structural prefixes, further supporting the use of contrastively trained embeddings for meaningful separation analysis.

CLIP is trained with a contrastive objective to align text and image representations in a shared embedding space. Cosine similarity in CLIP space is directly optimized to reflect cross-modal semantic correspondence. Therefore, CLIP embeddings provide a more appropriate and interpretable metric for evaluating practical differentiability of generated image–prompt pairs.

Consequently, we evaluated multimodal diversity using CLIP image and text embeddings across 999 generated image–prompt pairs. Top-1 retrieval accuracy was defined as the proportion of correctly matched image–prompt pairs.

The global separation score was , reflecting moderate but consistent diversity within a homogeneous portrait domain.

The mean nearest-neighbor separation was (corresponding to a mean nearest-neighbor cosine similarity of approximately ), indicating that each image has a structurally similar counterpart within the portrait domain. However, no instances exceeded the collapse threshold (), suggesting the absence of visual mode collapse.

The collapse rate for was , indicating no visual mode collapse.

Text-to-image retrieval achieved a Top-1 accuracy of , substantially exceeding random chance (≈0.1% for 999 samples).

These results indicate that, despite strong structural similarity of the prompts, the generated images remain distinguishable in multimodal representation space. The absence of any collapse cases indicates that the template-based prompting does not induce visual mode collapse, even though all images share a common portrait structure.

Taken together, these findings suggest that, while the template maintains structural consistency for generation stability, it produces measurable multimodal differentiation across instances.
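Given precomputed text and image embeddings, the retrieval and nearest-neighbor statistics can be sketched in plain Python. The embeddings themselves are assumed to come from a CLIP model and are passed in as lists of floats; the function names are hypothetical, and the sketch reports cosine similarity only, without assuming how the separation score is derived from it.

```python
from math import sqrt


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v)))


def top1_accuracy(text_embeddings, image_embeddings):
    """Fraction of prompts whose most similar image is their own image."""
    hits = 0
    for i, text in enumerate(text_embeddings):
        best = max(range(len(image_embeddings)),
                   key=lambda j: cosine(text, image_embeddings[j]))
        hits += int(best == i)
    return hits / len(text_embeddings)


def mean_nearest_neighbor_similarity(image_embeddings):
    """Average, over images, of the cosine similarity to the closest other image."""
    nearest = []
    for i, u in enumerate(image_embeddings):
        nearest.append(max(cosine(u, v)
                           for j, v in enumerate(image_embeddings) if j != i))
    return sum(nearest) / len(nearest)


# Toy two-dimensional embeddings for illustration only.
texts = [[1.0, 0.0], [0.0, 1.0]]
images = [[0.9, 0.1], [0.2, 0.8]]
print(top1_accuracy(texts, images))
print(mean_nearest_neighbor_similarity(images))
```

With N pairs, a random matcher achieves a Top-1 accuracy of 1/N, which is the chance level of roughly 0.1% quoted for 999 samples.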

6. Experiments and Evaluation

After determining the combinatorial capacity of the proposed text generation template, a series of experiments are conducted to evaluate its effectiveness in generating pseudonymized profile images. First, the experimental setup is described, and then several experiments and evaluation protocols are used to assess the proposed approach to generating pseudonymized images. These experiments include attribute-level statistical consistency, identity dissociation, and an ablation study that compares template-based and LLM-based prompt generation strategies. Together, these experiments provide a comprehensive evaluation of semantic fidelity and privacy preservation.

6.1. Experimental Setup

For all experiments, a random sample of 1000 images was taken from the CelebA dataset. One image was excluded due to a failure to extract facial attributes, resulting in 999 images being used for evaluation. A structured attribute representation (combined.json) is generated for each selected image using pre-trained facial analysis models. This representation includes demographic attributes, categorical predictions, and continuous attribute scores. These structured representations are used to generate template-based natural-language descriptions via the deterministic text-generation pipeline described in Section 5. These descriptions serve as input prompts for a text-to-image generation model. Then, pseudonymized images are generated using the multimodal text-to-image model Janus-Pro. All prompts are constrained to exclude explicit identifiers and rely exclusively on the derived attribute descriptions. The evaluation is performed on four complementary levels:

A statistical similarity evaluation measures how well the generated images preserve attribute-level distributions relative to the original images.

An identity dissociation evaluation assesses whether personal identity information is effectively removed in the generated images.

A prompt-generation strategy comparison contrasts template-based text generation with LLM-based free-form text generation in terms of their impact on attribute distribution preservation.

A qualitative visual comparison contrasts representative images generated via template-based and LLM-based text generation.

The first two levels address two equally important yet different aspects: semantic fidelity at the population level and privacy preservation at the individual level. The third level compares the performance of template-based and LLM-based text generation. As a final evaluation step, a small set of images generated using template-based and LLM-based text generation is visually inspected. This qualitative comparison highlights differences in visual plausibility and realism that may not be fully captured by distributional statistics, complementing the quantitative analyses.

6.1.1. Experiment 1: Attribute-Level Statistical Consistency

The first evaluation determines if the pseudonymized images are consistent with the original images in terms of facial attributes without requiring visual identity preservation. To this end, the same attribute extraction pipeline used for the original CelebA images is applied to the generated images, producing a second set of combined.json files. Then, attribute statistics are computed in parallel for both sets. Suitable evaluation metrics at this level include:

Differences between empirical means and variances of continuous attributes.

Distributional similarity measures such as Jensen–Shannon divergence [28] and Wasserstein distance [29] for scalar and gradient attributes.

Agreement rates for categorical attributes (e.g., dominant expression or gender).

Importantly, these statistics are computed independently for the original and generated image sets and compared in a parallel manner, ensuring that the evaluation captures population-level consistency rather than instance-level similarity.

6.1.2. Experiment 2: Identity Dissociation

The second evaluation explicitly focuses on identity privacy. The objective is to verify that the generated images cannot be reliably linked back to the original individuals. To conduct this evaluation, a pre-trained face recognition or identity verification model is applied to pairs of original and generated images. Similarity scores are then computed for each pair. A successful pseudonymization is characterized by a high probability of failure in identity verification, indicating that, although attribute-level semantics are preserved, identity-specific features are not.

6.1.3. Experiment 3: Comparison with LLM-Based Prompt Generation

To evaluate the impact of the prompt generation strategy on downstream image statistics, an additional comparison was conducted using a large language model as an alternative text generator. We used the local Mistral-7B model via Ollama (https://ollama.com/library/mistral, accessed on 30 January 2026). For this evaluation, the same structured attribute representations (combined.json) are provided as input to Mistral, which generates free-form natural-language descriptions without an explicit template constraint. These descriptions are then used as prompts for the same text-to-image model (Janus-Pro) to generate images. Attribute extraction is subsequently applied to the generated images, yielding a third set of combined.json files. This setup allows for a controlled comparison of three conditions (original images, template-based images, and LLM-based images) while keeping the image generation model fixed.

6.2. Attribute-Level Evaluation Results

This section presents the results of the statistical evaluation at the attribute level. It compares the distributions of attributes extracted from the original CelebA images with those extracted from the corresponding pseudonymized images generated via the proposed text-to-image pipeline.

Figure 2 serves as a visual reference for the attribute statistics reported in the subsequent analysis. Facial attributes are extracted from the original image (a) and used to generate the image shown in (b) via template-based text generation.

6.2.1. Categorical Attribute Agreement

Categorical attributes, such as dominant facial expression, gender, and primary ethnicity, are evaluated using agreement rates. For each image, the dominant category predicted from the original image is compared with the dominant category predicted from the generated image. The agreement rate is defined as the fraction of samples for which the two predictions coincide:

$$\mathrm{Agreement} = \frac{1}{K} \sum_{k=1}^{K} \mathbb{1}\left[ c_k^{\mathrm{orig}} = c_k^{\mathrm{gen}} \right],$$

where $K$ is the total number of image pairs, and $c_k^{\mathrm{orig}}$ and $c_k^{\mathrm{gen}}$ denote the categorical predictions for the original and generated images, respectively. This metric directly measures semantic consistency for attributes that lack an inherent order or numerical scale. It is important to note that agreement at the categorical level does not imply identity preservation and is therefore evaluated independently of identity-related metrics.
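The agreement rate reduces to a single pass over the paired predictions, as in the following minimal sketch. The function name and the example labels are illustrative only.

```python
def agreement_rate(original, generated):
    """Fraction of pairs whose categorical predictions coincide."""
    if len(original) != len(generated) or not original:
        raise ValueError("expected two non-empty prediction lists of equal length")
    matches = sum(o == g for o, g in zip(original, generated))
    return matches / len(original)


# Hypothetical dominant-expression predictions for four image pairs.
original_labels = ["happy", "sad", "neutral", "happy"]
generated_labels = ["happy", "neutral", "neutral", "happy"]
print(agreement_rate(original_labels, generated_labels))
```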

Table 3 summarizes agreement rates for categorical attributes. Gender exhibits the highest agreement rate (0.98), reflecting its coarse granularity and strong visual salience. Other categorical attributes show moderate agreement rates (approximately 0.63–0.72), indicating that these attributes are preserved at a semantic level while allowing substantial variation.

None of the categorical attributes reach a level of perfect agreement. This supports the conclusion that identity-specific information is not preserved at the categorical level.

6.2.2. Continuous Attribute Statistics

For continuous attributes (including both scalar and gradient attributes), empirical means and variances are computed separately for the original images and the generated images. Let $a$ denote a continuous attribute and $x_1^{(a)}, \dots, x_K^{(a)}$ its scores over a set of $K$ images. For each attribute, the empirical mean $\mu_a$ and variance $\sigma_a^2$ are estimated for both datasets and are defined as

$$\mu_a = \frac{1}{K} \sum_{k=1}^{K} x_k^{(a)}, \qquad \sigma_a^2 = \frac{1}{K} \sum_{k=1}^{K} \left( x_k^{(a)} - \mu_a \right)^2.$$

The absolute differences

$$\Delta\mu_a = \left| \mu_a^{\mathrm{orig}} - \mu_a^{\mathrm{gen}} \right|, \qquad \Delta\sigma_a^2 = \left| \sigma_a^{2,\mathrm{orig}} - \sigma_a^{2,\mathrm{gen}} \right|$$

quantify systematic shifts and changes in variability introduced by the generation process. Mean differences indicate whether an attribute is consistently amplified or attenuated in the generated images. Meanwhile, variance differences reveal potential mode collapse or over-regularization effects. While this analysis provides a simple interpretable first-order assessment of semantic preservation, it does not capture higher-order distributional changes. For the Jensen–Shannon divergence, values below

indicate a nearly identical distributional structure. Values between

and

indicate mild structural changes. Values up to approximately

indicate pronounced but controlled variation. For the Wasserstein distance, which is bounded by the range of attributes

, values below

correspond to negligible shifts, values between

and

to small shifts, and values between

and

to moderate shifts. Across all evaluated attributes, the majority fall into the low or moderate regions for both measures. The attributes exhibiting the highest Jensen–Shannon divergence are stylistic and show increased variance, indicating diversification rather than distributional collapse. No attribute exhibits extreme Jensen–Shannon divergence and a large Wasserstein distance, which supports the conclusion that semantic structure is preserved while allowing for controlled stylistic flexibility.
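The per-attribute statistics can be sketched with the standard library alone. Several choices here are assumptions for illustration: the histogram binning over [0, 1], the base-2 logarithm for the Jensen–Shannon divergence, the population variance, and the equal-sample-size shortcut for the one-dimensional Wasserstein distance.

```python
from math import log2
from statistics import fmean, pvariance


def histogram(values, bins=20, lo=0.0, hi=1.0):
    """Normalized histogram of scores; values outside the range are clamped."""
    counts = [0] * bins
    for v in values:
        index = int((v - lo) / (hi - lo) * bins)
        counts[min(max(index, 0), bins - 1)] += 1
    return [c / len(values) for c in counts]


def js_divergence(p, q):
    """Jensen-Shannon divergence (base 2, so the value lies in [0, 1])."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        return sum(a * log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def wasserstein_1d(xs, ys):
    """1-D Wasserstein distance for equal-size samples via sorted matching."""
    if len(xs) != len(ys):
        raise ValueError("this shortcut assumes equal sample sizes")
    return fmean([abs(a - b) for a, b in zip(sorted(xs), sorted(ys))])


def compare_attribute(original, generated, bins=20):
    """Mean shift, variance shift, JS divergence, and Wasserstein distance."""
    return {
        "delta_mean": abs(fmean(original) - fmean(generated)),
        "delta_var": abs(pvariance(original) - pvariance(generated)),
        "jsd": js_divergence(histogram(original, bins), histogram(generated, bins)),
        "wasserstein": wasserstein_1d(original, generated),
    }


print(compare_attribute([0.1, 0.2, 0.3, 0.4], [0.2, 0.3, 0.4, 0.5]))
```

Each set of scores is summarized on its own and only the summaries are compared, which matches the population-level rather than instance-level character of this evaluation.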

Table 4 reports empirical means, variances, and distributional similarity measures for representative continuous attributes. Across the majority of attributes, differences in empirical means remain small, and variances are preserved or slightly increased. This indicates that the generation process neither collapses attribute diversity nor introduces systematic global shifts.

The attributes reported in Table 4 can be grouped into distinct classes according to their observed statistical behavior. Several attributes exhibit particularly high stability, including Bald and Pointy Nose, and occupy the upper block of the table. These attributes exhibit negligible mean shifts, very low Jensen–Shannon divergence, and minimal Wasserstein distance. Their distributions remain almost unchanged, indicating that stable geometry-driven facial characteristics are reliably preserved by the generation process. A subset of attributes, represented by Black Hair and Attractive, forms the middle block of the table. For these attributes, moderate changes in mean values and distributional distances are observed. The corresponding Jensen–Shannon divergence and Wasserstein distance indicate controlled variation rather than structural distortion, suggesting that semantically salient attributes are adjusted within a bounded and interpretable range. Other attributes, shown in the lower block of the table and represented by Bangs and Arched Eyebrows, exhibit the largest distributional differences. These attributes show increased Jensen–Shannon divergence and variance while maintaining moderate Wasserstein distances. This pattern reflects a change in distributional shape and dispersion rather than a strong global shift, which is consistent with the inherently ambiguous and appearance-dependent nature of stylistic facial features. Taken together, Table 4 reveals a consistent relationship between attribute class and statistical behavior. Attributes with a strong structural grounding remain highly stable, semantically meaningful attributes show moderate and controlled shifts, and stylistic attributes display increased flexibility. The analysis indicates that none of the attributes simultaneously exhibit extreme divergence and large geometric displacement, supporting the conclusion that the method preserves population-level semantic structure while allowing controlled stylistic variation.

6.2.3. Representative Distributional Patterns

Figure 3 illustrates empirical distributions for three representative continuous attributes. The Smiling attribute serves as a stable control case, showing nearly identical distributions for original and generated images. Both the central tendency and variance are preserved, confirming that the generation pipeline does not introduce systematic drift when an attribute is structurally well-defined. In contrast, the Black Hair attribute exhibits a moderate rightward shift and increased spread, indicating controlled stylistic variation consistent with dataset bias and gradient-based attribute grouping. Finally, the Arched Eyebrows attribute demonstrates a pronounced distributional change with increased variance and a long tail, reflecting its sensitivity to fine-grained stylistic interpretation in text-to-image generation.

Together, these examples support the numerical findings reported in Table 4 and provide intuitive evidence of controlled semantic variation: the proposed method preserves structural attributes while allowing greater flexibility for stylistic attributes, without collapsing distributions or reproducing identity-specific patterns. This behavior is consistent with the design goals of pseudonymized image generation and supports the suitability of the method for privacy-preserving applications. The distribution overview of the metrics is shown in Figure 4.

6.3. Identity Dissociation Evaluation

While Experiment 1 assesses semantic and attribute fidelity, the second experiment focuses on the core privacy objective of the pipeline: the generated profile images should not remain linkable to the original individuals. We therefore evaluate identity dissociation by testing whether a face recognition system can still verify an identity match between an original image and its pseudonymized counterpart.

To evaluate identity dissociation, a face-recognition-based verification protocol is employed. This choice is motivated by the fact that modern face recognition systems are explicitly optimized to capture identity-defining features while being invariant to pose, expression, and other superficial variations. Consequently, the failure of such systems to verify identity provides strong evidence that identity information has been effectively removed. We evaluate identity dissociation using multiple pre-trained face recognition models with different architectures and training paradigms. Specifically, the following models are considered: FaceNet512, Dlib, VGG-Face, and ArcFace. All models are accessed through the DeepFace framework [17], which provides a unified and reproducible interface to widely used face recognition backbones. Importantly, none of the models are fine-tuned on CelebA or on any data related to the present experiments. All evaluations are conducted using fixed off-the-shelf model weights to avoid dataset leakage and to ensure a conservative assessment of identity preservation.

Identity verification is performed by comparing each original image with its corresponding generated image and computing similarity scores in the respective embedding spaces. A verification is considered successful if the similarity exceeds the default decision threshold of the respective model. In addition to single-model verification rates, we analyze cross-model agreement to assess whether identity matches are consistent across multiple independent recognition systems.

6.3.1. Protocol (1:1 Identity Verification)

For each original-generated pair, we compute a binary verification decision using the standard verify procedure of each recognition model. In practice, this consists of (i) detecting and aligning the face, (ii) computing an embedding vector for each image, and (iii) comparing the pairwise distance (or similarity) against the model-specific recommended threshold. Pairs for which a face cannot be detected in either image are excluded from verification so that detection failures do not inflate the privacy scores.
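The three protocol steps can be sketched as a small loop. This is a minimal sketch under stated assumptions, not the evaluation code used in the paper: it assumes the `DeepFace.verify` call with its default threshold, assumes that a failed face detection surfaces as a `ValueError` (the behavior of DeepFace when `enforce_detection=True`), and uses hypothetical helper names (`deepface_verify`, `verify_pairs`). The verifier is passed in as a callable so that the exclusion logic can be exercised without model weights.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Pair = Tuple[str, str]  # (path to original image, path to generated image)


def deepface_verify(original: str, generated: str, model_name: str) -> bool:
    """Run one 1:1 verification with DeepFace's default threshold (assumed setup)."""
    from deepface import DeepFace  # imported lazily; requires the deepface package

    result = DeepFace.verify(
        img1_path=original,
        img2_path=generated,
        model_name=model_name,   # e.g. "Facenet512", "Dlib", "VGG-Face", "ArcFace"
        enforce_detection=True,  # raise instead of embedding an image without a face
    )
    return bool(result["verified"])


def verify_pairs(
    pairs: Sequence[Pair],
    model_name: str,
    verify_fn: Callable[[str, str, str], bool] = deepface_verify,
) -> List[Optional[bool]]:
    """Return one decision per pair; None marks a pair excluded for detection failure."""
    decisions: List[Optional[bool]] = []
    for original, generated in pairs:
        try:
            decisions.append(verify_fn(original, generated, model_name))
        except ValueError:
            # No detectable face in at least one image: exclude the pair
            # rather than counting it as "not verified".
            decisions.append(None)
    return decisions
```

Keeping excluded pairs as `None` instead of `False` makes the distinction between "not verified" and "not evaluable" explicit in downstream statistics.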

6.3.2. Face Recognition Backbones

To reduce the risk that the evaluation is overly coupled to a single embedding space or decision threshold, we use four widely adopted verification backbones: FaceNet512 (Model 1), dlib (Model 2), VGG-Face (Model 3), and ArcFace (Model 4). Each model is applied with its default preprocessing and default verification threshold, reflecting a realistic attacker model that uses off-the-shelf configurations.

6.3.3. Re-Identification Indices

Let $N$ be the number of evaluation pairs $\{(x_i, \tilde{x}_i)\}_{i=1}^{N}$, where $x_i$ is the original image and $\tilde{x}_i$ is its generated (pseudonymized) counterpart. For each verification model $m$, we obtain a binary decision

$$v_i^{(m)} \in \{0, 1\},$$

where $v_i^{(m)} = 1$ indicates that the model verifies the pair as the same identity, and $v_i^{(m)} = 0$ indicates that it does not.

Furthermore, for a fixed model $m$, we define a per-pair success variable

$$s_i^{(m)} = \mathbb{1}\left[v_i^{(m)} = 1\right].$$

The re-identification rate of model $m$ is the average of these successes across all pairs:

$$\mathrm{RR}^{(m)} = \frac{1}{N} \sum_{i=1}^{N} s_i^{(m)}.$$

This value lies in $[0, 1]$ and can be interpreted as the fraction of pairs that model $m$ can verify as the same person.
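The per-model re-identification rate is a plain average of binary decisions. The following is a minimal sketch with a hypothetical function name (`reidentification_rate`) and toy values. It assumes that pairs excluded for face-detection failure are encoded as `None` and dropped before averaging.

```python
from typing import Iterable, Optional


def reidentification_rate(decisions: Iterable[Optional[bool]]) -> float:
    """Fraction of evaluated pairs that one model verifies as the same identity.

    None entries (pairs excluded because no face was detected) are dropped
    before averaging, so they neither raise nor lower the rate.
    """
    evaluated = [d for d in decisions if d is not None]
    if not evaluated:
        raise ValueError("no evaluable pairs")
    return sum(1 for d in evaluated if d) / len(evaluated)


# Toy decisions for one model over five pairs (illustrative values only).
toy = [False, True, False, None, False]
print(reidentification_rate(toy))  # 1 verified out of 4 evaluated pairs -> 0.25
```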

6.3.4. Consensus Re-Identification Rate

Single-model decisions can be sensitive to threshold choice or domain shift. To reduce this sensitivity, we additionally report a consensus metric that requires agreement across multiple models. Let $\mathcal{M}$ denote the set of evaluated models, and let $v_i^{(m)} \in \{0, 1\}$ be the verification decision of model $m$ for pair $i$. For each pair $i$, we count how many models verify the pair:

$$c_i = \sum_{m \in \mathcal{M}} v_i^{(m)}.$$

We then mark pair $i$ as a consensus re-identification if at least $k$ models verify it:

$$r_i^{(k)} = \mathbb{1}\left[c_i \geq k\right].$$

Finally, the consensus re-identification rate is

$$\mathrm{RR}_{\geq k} = \frac{1}{N} \sum_{i=1}^{N} r_i^{(k)},$$

where increasing $k$ yields increasingly conservative definitions of a privacy failure. In other words, $\mathrm{RR}_{\geq 2}$ counts only those cases where at least two independent models agree that the pseudonymized image can be verified as the same person.
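The consensus metric amounts to counting votes per pair and thresholding the count. The sketch below illustrates this with a hypothetical function name (`consensus_rate`) and toy decisions that are not the data of the paper. It assumes every model was evaluated on the same pairs in the same order.

```python
from typing import Dict, List


def consensus_rate(decisions: Dict[str, List[int]], k: int) -> float:
    """Share of pairs verified by at least k models.

    decisions[m][i] is 1 if model m verifies pair i and 0 otherwise;
    all models must cover the same pairs in the same order.
    """
    per_model = list(decisions.values())
    if not per_model:
        raise ValueError("at least one model is required")
    n_pairs = len(per_model[0])
    if n_pairs == 0 or any(len(d) != n_pairs for d in per_model):
        raise ValueError("every model needs the same non-empty list of pairs")
    # Number of models that verify each pair.
    votes = [sum(column) for column in zip(*per_model)]
    return sum(1 for v in votes if v >= k) / n_pairs


# Toy decisions for four models over five pairs (illustrative values only).
toy = {
    "FaceNet512": [0, 0, 0, 0, 0],
    "Dlib":       [1, 1, 0, 1, 0],
    "VGG-Face":   [1, 0, 0, 1, 0],
    "ArcFace":    [0, 0, 0, 1, 0],
}
print(consensus_rate(toy, k=2))  # pairs 0 and 3 reach two votes -> 0.4
print(consensus_rate(toy, k=3))  # only pair 3 reaches three votes -> 0.2
```

Because the vote count can only shrink the set of flagged pairs as `k` grows, the rate is non-increasing in `k`, which matches the reading of larger `k` as a stricter failure definition.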

6.3.5. Experimental Results

The experimental results are shown in Table 5. Across the evaluated set, FaceNet512 produced no successful verifications (Model 1: 0), indicating strong identity dissociation under this backbone. The remaining recognizers reported a limited number of successful verifications: 33 each for Model 3 (VGG-Face) and Model 4 (ArcFace), and 119 for Model 2 (dlib), the largest set of positives in our experiments. Agreement between different recognizers was rare: only 25 pairs (≈2.5%) were verified by at least two models, and only six pairs (≈0.6%) were simultaneously verified by three models (dlib, VGG-Face, and ArcFace). We treat these consensus positives as the most relevant failure cases for qualitative inspection since they are less likely to be explained by model-specific threshold effects.

This second experiment directly captures the privacy goal of pseudonymization: low single-model re-identification rates and low consensus re-identification rates indicate that the generated images preserve attribute-level semantics while disrupting identity-specific cues sufficiently to prevent reliable linkage back to the original individuals. Using multiple backbones and reporting both single-model and consensus rates provides a more robust estimate of identity leakage than relying on a single recognizer.

Overall, the results demonstrate that identity verification fails with high probability, especially under stricter or modern recognition models, supporting the effectiveness of the proposed pseudonymization approach. In addition, the cross-model evaluation strategy provides a reliable assessment of identity dissociation and reduces the likelihood of drawing conclusions based on model-specific sensitivities.

6.4. Prompt-Generation Strategy Comparison (Template vs. LLM)

In addition to evaluating the consistency of attributes between the original and generated images, we compare the prompt-generation strategies used to drive the text-to-image model. Specifically, we contrast (i) deterministic template-based descriptions and (ii) free-form LLM-based descriptions (Mistral-7B) while keeping the downstream image generator (Janus-Pro) and the attribute extraction pipeline constant. This isolates the impact of the text prompt on the resulting population-level attribute statistics derived from the generated images.

Figure 5 summarizes the mean attribute values for the original images and for the two prompt strategies after image generation and re-extraction of the combined JSON data. Overall, both prompt strategies reproduce the global structure of the attribute landscape: highly prevalent attributes remain prevalent and rare attributes largely remain rare. This indicates that the pipeline preserves population-level semantics under both forms of prompt construction.
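The population-level comparison rests on per-attribute means and their shift relative to the original set. The following is a minimal sketch of that computation with hypothetical helper names (`attribute_means`, `mean_shift`) and toy records. It assumes each image is represented by a flat mapping from attribute name to a numeric value and that a missing attribute counts as 0.0; the actual JSON schema of the pipeline may differ.

```python
from typing import Dict, List

Record = Dict[str, float]  # attribute name -> value extracted for one image


def attribute_means(records: List[Record]) -> Dict[str, float]:
    """Mean value of every attribute across a set of images."""
    if not records:
        raise ValueError("at least one record is required")
    names = sorted({name for record in records for name in record})
    return {
        name: sum(record.get(name, 0.0) for record in records) / len(records)
        for name in names
    }


def mean_shift(original: List[Record], generated: List[Record]) -> Dict[str, float]:
    """Generated-minus-original difference in attribute means."""
    base, new = attribute_means(original), attribute_means(generated)
    names = sorted(set(base) | set(new))
    return {name: new.get(name, 0.0) - base.get(name, 0.0) for name in names}


# Toy records for four images per set (illustrative values only).
original = [
    {"Young": 1.0, "Bangs": 0.0},
    {"Young": 0.0, "Bangs": 0.0},
    {"Young": 0.0, "Bangs": 1.0},
    {"Young": 1.0, "Bangs": 0.0},
]
template = [
    {"Young": 1.0, "Bangs": 0.0},
    {"Young": 1.0, "Bangs": 0.0},
    {"Young": 1.0, "Bangs": 1.0},
    {"Young": 0.0, "Bangs": 0.0},
]
print(mean_shift(original, template))  # {'Bangs': 0.0, 'Young': 0.25}
```

Running `mean_shift` once per prompt strategy against the same original set gives the two shift profiles that a side-by-side comparison would contrast.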

6.4.1. Where Templates and LLM Prompts Behave Similarly

A large proportion of attributes have similar mean values under both strategies. Coarse and visually salient properties, such as gender balance and several accessory-related attributes, show only minor differences between the two strategies. This suggests that, once the structured attribute representation is established, both prompt-generation methods provide Janus-Pro with sufficient information to reproduce these population-level trends.

6.4.2. Systematic Strategy-Dependent Shifts

Despite the overall similarity, Figure 5 reveals several consistent strategy-dependent effects:

Expression/affect. The template-based prompts yield a noticeably stronger shift toward the happy category and a corresponding reduction in neutral (and partially sad) compared to the original distribution. The LLM-based prompts track the original affect distribution more closely, indicating that free-form descriptions can reduce the positivity bias induced by deterministic phrasing.

Subjective attributes. Both strategies increase attributes such as Attractive (and slightly Young) relative to the original set. This pattern is consistent with known generative priors toward aesthetically idealized faces. The LLM-based prompts tend to accentuate this effect marginally, plausibly because unconstrained natural language introduces additional positively valenced descriptors beyond the structured attribute input.

Hair-related and fine stylistic attributes. Differences are most pronounced for attributes that are inherently stylistic and ambiguous in text-to-image rendering (e.g., hair details such as Bangs and color-related attributes). Both strategies preserve the broad hair-attribute structure, but the LLM-based prompts exhibit slightly larger shifts for a subset of these fine-grained properties, consistent with the additional degrees of freedom in free-form text generation.

6.4.3. Interpretation

In summary, the comparisons suggest a trade-off. Template-based prompting provides stronger control and reproducibility, but it can introduce biases induced by phrasing (notably toward positive affect). In contrast, LLM-based prompting better matches certain original distributions (e.g., expression), although it slightly increases drift in subjective or stylistic attributes. These observations are derived solely from the re-extracted combined JSON data and therefore capture population-level semantic effects rather than instance-level visual similarity.

6.5. Cross-Model Identity Verification and Qualitative Comparison: Template vs. LLM Prompts

To complement the attribute-level analysis (see Section 6.2) with an explicit privacy check and a visual plausibility assessment, we jointly analyze (i) cross-model identity verification and (ii) qualitative differences between template-based and LLM-based prompt generation. The central question is whether the preservation of semantic facial attributes comes at the cost of identity leakage, and how the two prompt strategies differ in the visual robustness of the generated faces.

6.5.1. Cross-Model Identity Verification Under Both Prompt Strategies

Identity dissociation is evaluated with four off-the-shelf face recognition backbones (FaceNet512, Dlib, VGG-Face, and ArcFace) via the DeepFace framework [17]. We reuse the verification decision variables and consensus re-identification formulation introduced earlier (see Section 6.3) and focus on a strict cross-model agreement criterion (at least three models verifying the same original-generated pair). This conservative criterion emphasizes high-confidence matches and reduces the influence of model-specific thresholds or occasional false positives. Under this $k = 3$ consensus rule on the full evaluation set (

), only isolated cases remain linkable:

pairs (

) for template-based prompting and

pairs (

) for LLM-based prompting. Thus, even under a conservative attacker model that requires agreement across multiple embedding spaces, identity verification succeeds only rarely, supporting the conclusion that identity dissociation holds for the overwhelming majority of samples.

6.5.2. Qualitative Inspection of Rare High-Confidence Matches

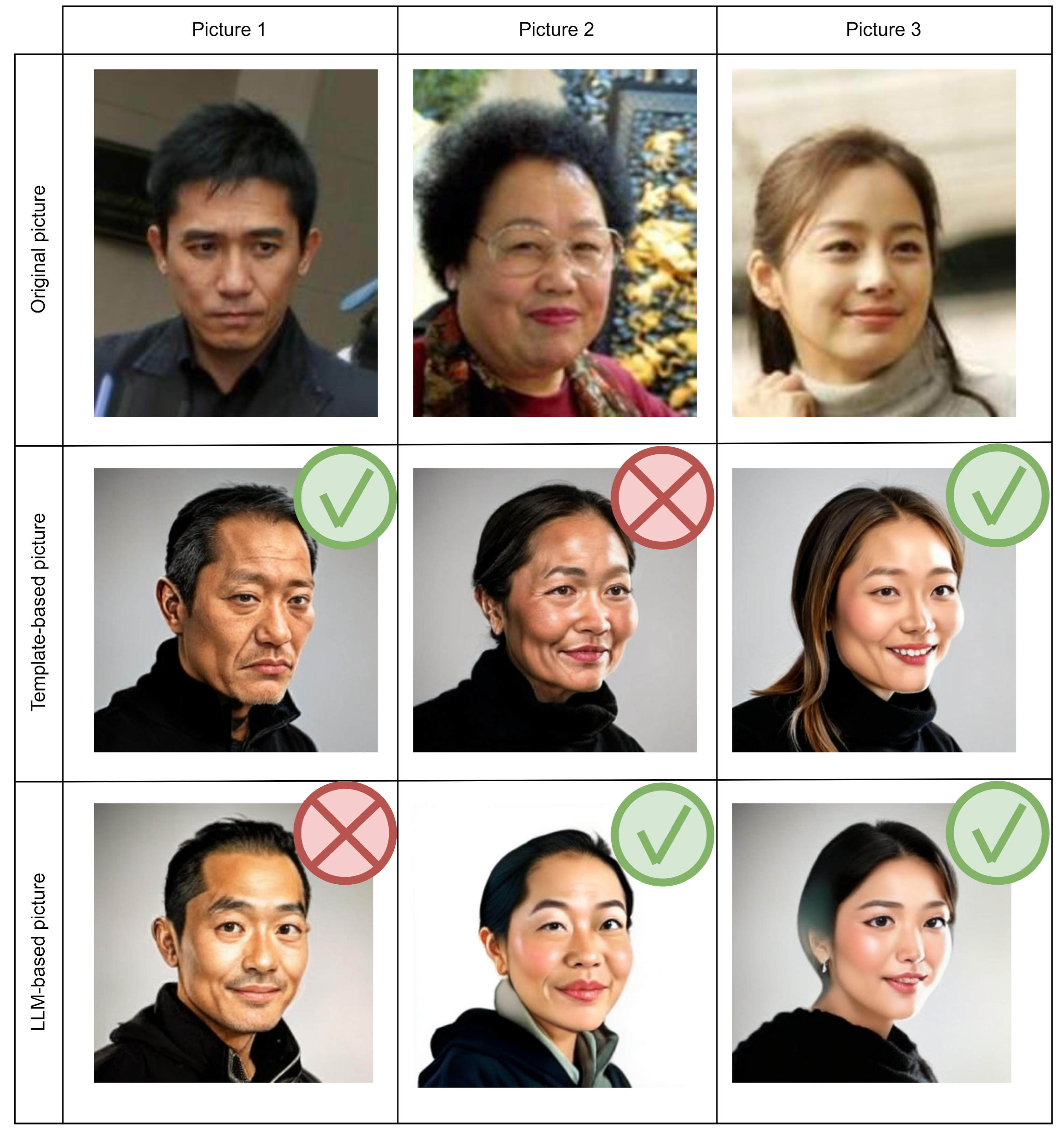

To better understand the few pairs that pass the strict cross-model criterion, we manually inspect representative examples and visualize them in Figure 6. Each column corresponds to one subject (top: original CelebA; middle: template-based generation; bottom: LLM-based generation). Green check marks indicate cases where the corresponding generated image is verified as identical to the original by at least three of the four recognition models; red crosses indicate that fewer than three models verify the match.

Two patterns are notable. First, high-confidence matches are typically not stable across prompt strategies: in most examples, only one of the two generation approaches triggers a consensus match. Second, overlap across strategies is extremely rare. This suggests that these rare privacy failures are not a deterministic consequence of the structured attribute representation alone but emerge from infrequent generative realizations in combination with the sensitivities of particular recognition backbones.
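Whether consensus matches are stable across prompt strategies can be checked with simple set operations on the identifiers of the flagged pairs. The sketch below uses a hypothetical function name (`consensus_overlap`) and made-up pair identifiers; it assumes each strategy yields a set of identifiers for the original images whose generated counterpart passed the at-least-three-model criterion.

```python
from typing import Dict, Set


def consensus_overlap(template_hits: Set[str], llm_hits: Set[str]) -> Dict[str, Set[str]]:
    """Split consensus matches into strategy-specific and shared cases."""
    return {
        "template_only": template_hits - llm_hits,
        "llm_only": llm_hits - template_hits,
        "both": template_hits & llm_hits,
    }


# Hypothetical identifiers of originals flagged under each strategy.
template_hits = {"img_0012", "img_0345", "img_0789"}
llm_hits = {"img_0345", "img_0901"}

split = consensus_overlap(template_hits, llm_hits)
print(sorted(split["both"]))           # ['img_0345']
print(sorted(split["template_only"]))  # ['img_0012', 'img_0789']
print(sorted(split["llm_only"]))       # ['img_0901']
```

A small `both` set relative to the strategy-specific sets is what one would expect if matches arise from individual generative realizations rather than from the shared attribute representation.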

6.5.3. Side-by-Side Visual Comparison: Realism and Typical Artifacts

Beyond privacy, we qualitatively compare image realism and typical failure modes by generating two pseudonymized images per original, one from the deterministic template and one from the LLM (Mistral) description, while keeping Janus-Pro fixed. Figure 7 provides a representative overview of cases in which neither generation is verified as an identity match.