1. Introduction

1.1. Application Context

In engineering practice, technical documentation must undergo rigorous compliance checks to ensure reliability and consistency. Conventional verification procedures typically follow a multi-step workflow that mobilizes significant amounts of highly qualified personnel. Although indispensable, these activities consist largely of repetitive and time-consuming tasks that provide limited added value relative to the level of expertise required and remain susceptible to human error. Automated document validation offers a means to address these limitations. When integrated within existing control workflows, it can reduce undetected errors, enhance overall documentation quality, and lower the number of person-hours devoted to routine verification, thereby enabling experts to concentrate on tasks of higher technical complexity. In addition, early and systematic validation can help limit the risk of costly rework, project delays, and budget overruns. Consequently, the automation of document verification supports more efficient resource allocation and contributes to the robustness of engineering project outcomes.

This paper is part of a project to address the automation of technical document validation across diverse engineering disciplines and document formats, with the objective of developing a robust and efficient large-scale compliance pipeline. A key requirement of this pipeline is its adaptability to domain-specific or project-specific rules, which must be defined and managed by end users. Consequently, the understandability and customizability of the system represent central design objectives. This software integrates user-oriented functionalities, including visual feedback and correction suggestions, either as annotations directly overlaid on the document or as margin comments. Additionally, the system generates a separate quality check report, thereby combining automated compliance assessment with user guidance to support iterative document refinement.

1.2. Title Block Compliance Check

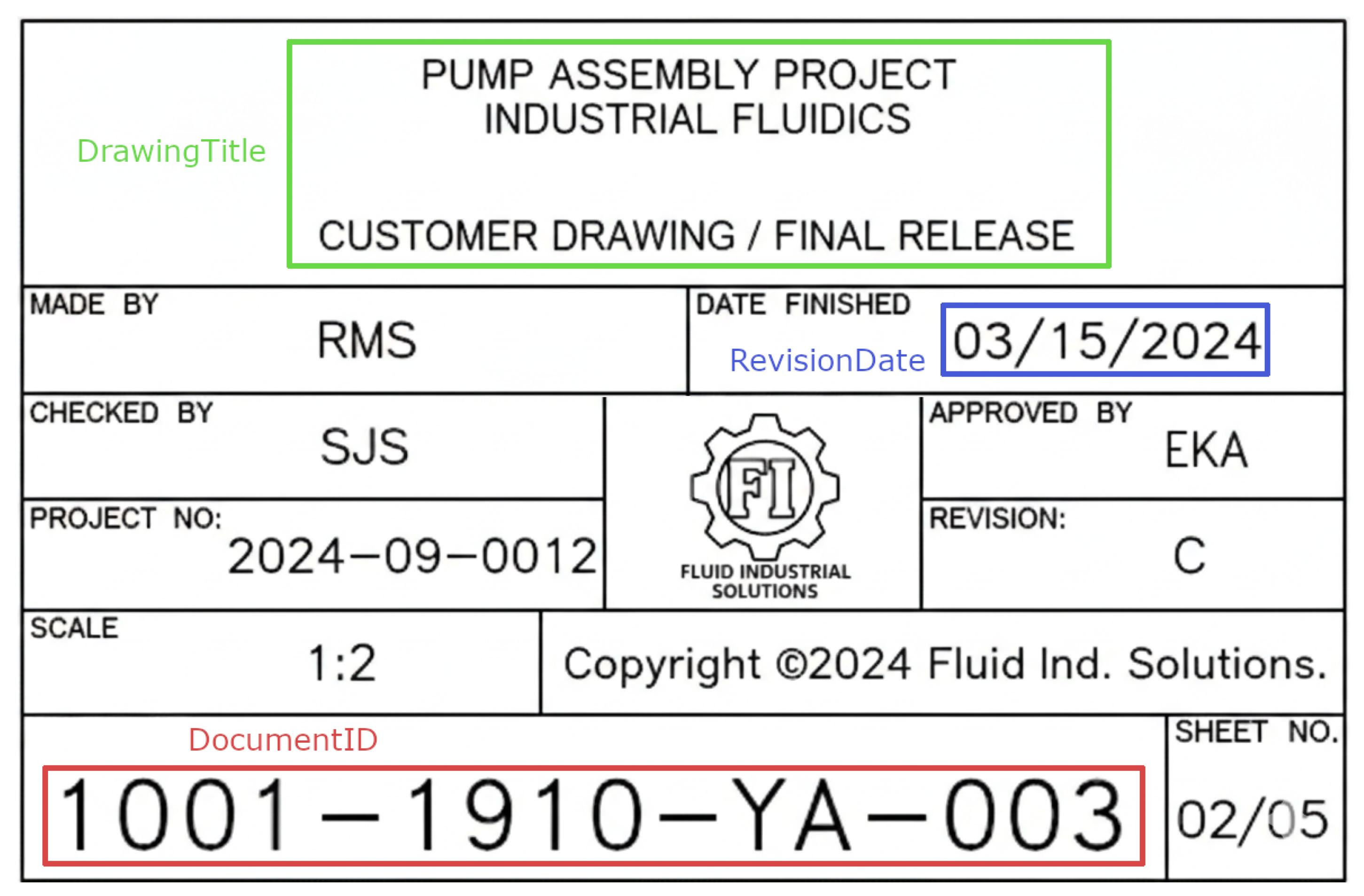

A major aspect of technical document verification concerns the title block. A title block is an irregular table, generally located on the first page of a text document or in the corner of an industrial drawing, which functions both as a summary of the document’s metadata and as its formal identifier. It commonly includes the document revision history, its identification information, and the applicability context for the remainder of the document (see Figure 1). Ensuring the accuracy of the title block is critical, as inconsistencies or irregularities transmitted to a client or integrated into internal tracking and approval processes may constitute a contractual non-conformance, potentially triggering internal investigations that are costly in terms of time and resources. This underscores the need for automating title block verification to reduce the workload on engineers while proactively minimizing errors in client deliverables. In industrial contexts, this need is further amplified by the heterogeneity of document production environments. Engineering deliverables are often produced by multiple contractors and subcontractors, who may rely on different authoring tools and do not necessarily benefit from dedicated title block validation features embedded in their design software. As a result, compliance cannot be guaranteed at creation time and must instead be assessed a posteriori on the delivered documents, independently of the software originally used to generate them. Moreover, because the title blocks considered in this work typically originate from modern digital workflows (e.g., native CAD exports or high-quality PDFs), image degradation is not the primary difficulty addressed here. Rather, the challenge lies in reliably identifying structural non-compliances (e.g., missing or merged cells, misplaced fields, inconsistent tabular organization) and communicating them in an actionable manner.

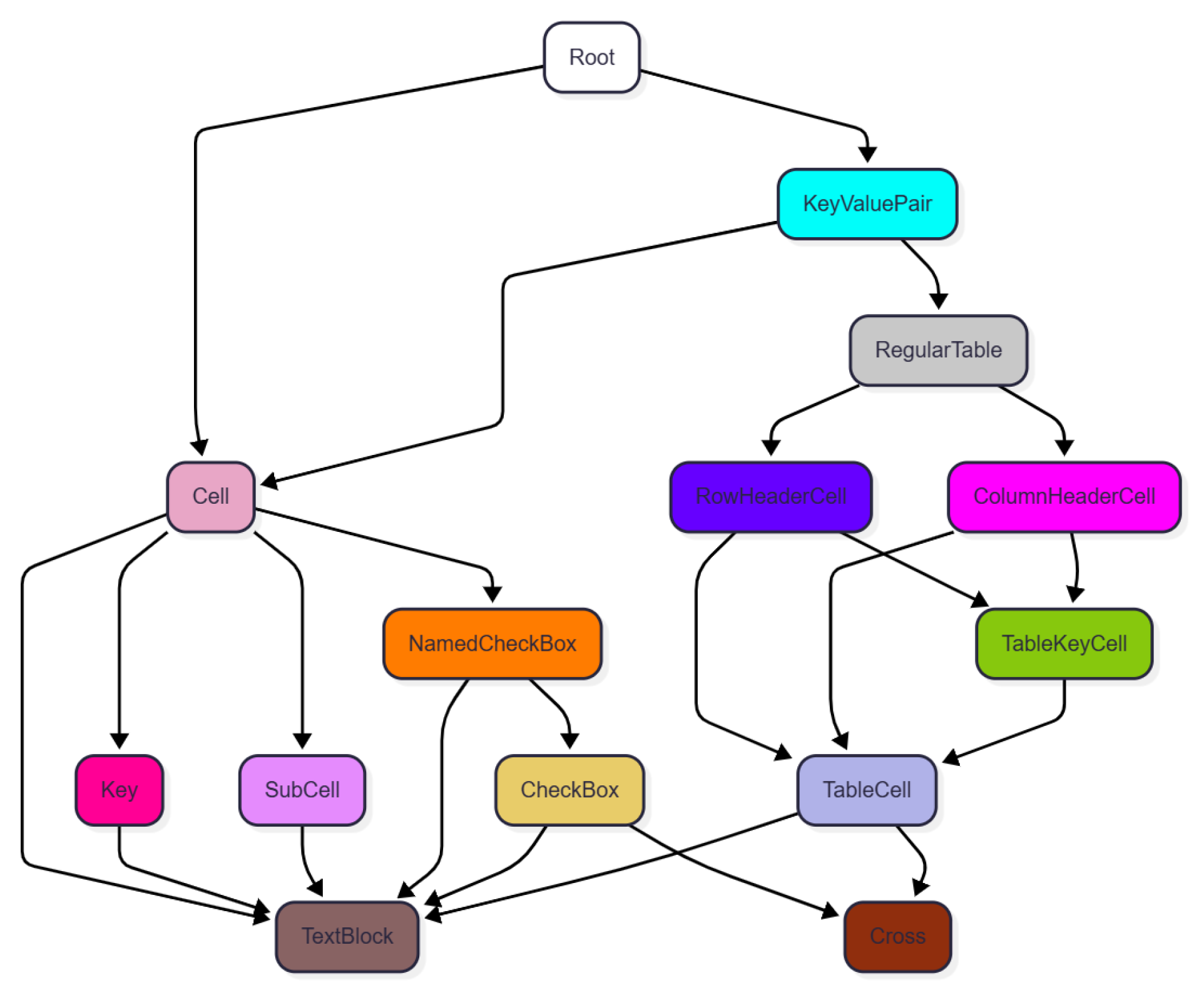

Importantly, this challenge is not limited to hierarchical or nested information extraction: effective compliance checking also requires explicit detection of violations of structural consistency constraints and the production of interpretable, localized feedback that can be used within existing workflows. Therefore, we propose a title block quality control methodology comprising three main steps. The information extraction step consists of reading the contents of the title block in a structured format, typically as a set of key–value pairs. The compliance and consistency verification step ensures that the structure and information contained in the title block comply with a predefined set of rules, often specific to a given client or project. Finally, the feedback provision step communicates the results of the compliance verification to the user, including warning messages and corrective suggestions for each identified non-compliance.
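As an illustration only, the three steps can be pictured as a small pipeline in which extracted key–value pairs are passed to user-defined rules whose findings are then rendered as messages. The sketch below is not the implementation described in this paper: the names `Finding`, `require_field`, `check`, and `report`, as well as the sample field names, are hypothetical and chosen for readability.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Finding:
    """One identified non-compliance, with an optional corrective suggestion."""
    field: str
    message: str
    suggestion: Optional[str] = None


# A rule takes the extracted key-value pairs and returns its findings.
Rule = Callable[[Dict[str, str]], List[Finding]]


def require_field(name: str) -> Rule:
    """Build a rule that flags a missing or empty field (hypothetical helper)."""
    def rule(fields: Dict[str, str]) -> List[Finding]:
        if not fields.get(name, "").strip():
            return [Finding(name, f"Required field '{name}' is missing or empty.",
                            f"Fill in '{name}'.")]
        return []
    return rule


def check(fields: Dict[str, str], rules: List[Rule]) -> List[Finding]:
    """Verification step: apply every rule to the extracted fields."""
    findings: List[Finding] = []
    for rule in rules:
        findings.extend(rule(fields))
    return findings


def report(findings: List[Finding]) -> List[str]:
    """Feedback step: turn findings into warning messages."""
    lines = []
    for f in findings:
        text = f"[{f.field}] {f.message}"
        if f.suggestion:
            text += f" Suggestion: {f.suggestion}"
        lines.append(text)
    return lines


# Extraction step, replaced here by a hand-written result (assumed data).
extracted = {"Drawing No.": "PID-0042", "Revision": ""}
rules = [require_field("Drawing No."), require_field("Revision"), require_field("Date")]
findings = check(extracted, rules)
for line in report(findings):
    print(line)
```

Keeping rules as plain callables is one simple way to let end users add or remove checks without touching the extraction code.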

To the best of our knowledge, no prior work has fully and formally addressed the problem of automated title block compliance checking. In the following discussion, we present existing approaches in the literature and relevant off-the-shelf tools for title block processing, together with their shortcomings with respect to the three quality control steps stated above.

2. Related Work and Existing Solutions for Title Block Compliance Checking

Automated compliance checking of engineering documentation combines document understanding—to extract structured information from heterogeneous layouts—with explicit rule evaluation and feedback provision. In the specific case of title blocks (also referred to as title boxes), the task goes beyond extracting a few metadata fields: it requires interpreting an irregular, often highly customized table, detecting both content-wise and structural non-compliances, and reporting them in a form that supports existing control workflows.

This section reviews academic contributions on title block processing [2,3,4,5,6,7,8], together with commercial solutions for title block extraction [9,10,11] and general document understanding systems. We cover cloud-based platforms with customizable extraction models [12,13,14], as well as local building blocks and on-premise document AI approaches that can be integrated into industrial pipelines [15,16,17,18,19,20,21,22]. Throughout the review, we assess each approach with respect to the three stages of a compliance pipeline: structured extraction, rule-based verification, and feedback provision to the user.

2.1. Scope and Evaluation Criteria: From Extraction to Compliance

Most work on title block processing targets information extraction as an end goal, for applications such as drawing retrieval, indexing, or downstream analytics [2,3,4,5,6,7,8]. In contrast, title block compliance checking requires additional capabilities. First, the extracted representation must preserve enough layout information to support structural constraints (e.g., missing or merged cells, misplaced fields, unexpected table topology). Second, the system must explicitly identify violations rather than merely producing a “best-effort” extraction. Third, results must be communicated through interpretable and actionable feedback (e.g., localized warnings and correction suggestions) to support iterative refinement.

Accordingly, we evaluate existing approaches based on (i) how structure-aware their outputs are (key–value pairs versus grid- or entity-based layouts), (ii) their ability to distinguish acceptable variability from non-compliances, and (iii) whether they provide workflow-oriented feedback mechanisms.

2.2. Compliance Requirements and Standards

Title block compliance targets are typically defined through a combination of formal standards and project- or company-specific rules. Some title block standards exist, such as ISO 7200:2004 [23], which specify expected fields, general layout, and representation guidelines. While such standards provide prescriptions, they also leave some freedom in implementation, and adherence is variable in practice due to legacy conventions, domain constraints, and contractor-specific templates [3]. In addition, organizations frequently define internal rules (naming conventions, controlled vocabularies, cross-document consistency requirements) that complement or override standard recommendations.

From an operational perspective, compliance checks can be grouped into four families:

Presence and format compliance: required fields are present, non-empty, and follow standard patterns (e.g., drawing number syntax, date formats).

Standard-conformance compliance: structure and content conform to standards such as ISO 7200:2004 and to company variants (e.g., sheet sizes, border positions, title block position).

Semantic compliance: values belong to controlled vocabularies (units, material codes, project codes). In practice, this is often approximated by dictionaries or pattern-based post-processing of Optical Character Recognition (OCR) outputs [4,9].

Cross-document compliance: title block values are consistent with other project artifacts such as bills of materials (BOM), checklists, and revision histories. Industrial case studies mention cross-checking title block information against BOMs and tag lists [4,8].
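To make these families concrete, the following minimal sketch shows how three of them (presence and format, semantic, and cross-document checks) might be expressed once fields have been extracted. The drawing number syntax, the date format, the unit vocabulary, and the field names are all assumptions made for the example; they are not taken from ISO 7200:2004 or from any rule set used in this work. Standard-conformance checks on layout are omitted because they require positional information.

```python
import re
from datetime import datetime
from typing import Dict, List

# Hypothetical project conventions, for illustration only.
DRAWING_NUMBER = re.compile(r"[A-Z]{3}-\d{4}")  # e.g. "PID-0042"
ALLOWED_UNITS = {"mm", "m", "in"}               # controlled vocabulary


def check_fields(fields: Dict[str, str], bom_revision: str) -> List[str]:
    """Return one message per non-compliance found in the extracted fields."""
    issues: List[str] = []

    # Presence and format compliance.
    number = fields.get("drawing_number", "").strip()
    if not number:
        issues.append("drawing_number: required field is missing or empty")
    elif not DRAWING_NUMBER.fullmatch(number):
        issues.append(f"drawing_number: {number!r} does not match the pattern AAA-0000")
    try:
        datetime.strptime(fields.get("date", ""), "%Y-%m-%d")
    except ValueError:
        issues.append("date: expected a valid YYYY-MM-DD date")

    # Semantic compliance against a controlled vocabulary.
    if fields.get("unit") not in ALLOWED_UNITS:
        issues.append(f"unit: {fields.get('unit')!r} is not an allowed unit")

    # Cross-document compliance against a value taken from another artifact.
    if fields.get("revision") != bom_revision:
        issues.append("revision: does not match the revision listed in the BOM")

    return issues


bad = {"drawing_number": "pid-42", "date": "2024-13-01", "unit": "cm", "revision": "B"}
good = {"drawing_number": "PID-0042", "date": "2024-05-02", "unit": "mm", "revision": "C"}
print(check_fields(bad, bom_revision="C"))
print(check_fields(good, bom_revision="C"))
```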

2.3. Title Block Information Extraction as a Pipeline

Title block information extraction can be decomposed into three subtasks: the title block localization task, the structural modeling task, and the text recognition and semantic labelling task. This decomposition is particularly relevant for compliance, as structural constraints and error explanations depend on the availability and fidelity of intermediate representations.

2.3.1. Localization of the Title Block Region

The localization task identifies the region containing the title block on the drawing sheet. Typical methods include (i) rule-based geometric heuristics that assume bottom-right position, margins, or standard sheet layouts [7], (ii) morphological and structural analysis of connected components and frames [3], and (iii) CNN-based object detection models [5,6] such as YOLO [24]. In modern digital workflows, where drawings are often exported as vector PDFs or high-quality raster images, localization is typically robust. However, localization errors propagate to downstream structure inference and may be mistaken for actual structural non-compliances.

2.3.2. Structural Modeling and Layout Reconstruction

The structural modeling task aims to represent the internal structure of the title block (cells, fields, labels) in a layout-independent way. Reliably interpreting a title block layout is challenging because title blocks often exhibit highly irregular structures. In practice, a title block may combine nested generic entities in varying configurations. These can include form and comb fields containing textual entries; selection fields, typically represented by checkboxes storing boolean values; and tabular fields corresponding to regular tables with column or row headers. Capturing such heterogeneity is essential to enable structural constraints and to produce meaningful feedback when non-compliances occur. Template-based approaches commonly represent title blocks as grids, model line topology using intersection-point matrices or graphs, and then perform (possibly fuzzy) matching against reference templates to handle multi-style and multi-template cases [4]. Earlier systems also rely on structural cues extracted from line detections and connected components to reconstruct a plausible table layout before field assignment [3].
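One possible way to picture the nested generic entities mentioned above is as a small tree of typed nodes. The sketch below is purely illustrative: the class names (`FormField`, `CheckBox`, `Table`, `Group`), the bounding-box convention, and the sample values are assumptions, comb fields are left out for brevity, and none of this is claimed to match the data model of any cited system.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Tuple, Union

BBox = Tuple[float, float, float, float]  # x_min, y_min, x_max, y_max (assumed convention)


@dataclass
class FormField:
    key: str
    value: str
    bbox: BBox


@dataclass
class CheckBox:
    key: str
    checked: bool
    bbox: BBox


@dataclass
class Table:
    headers: List[str]
    rows: List[List[str]]
    bbox: BBox


@dataclass
class Group:
    """A container cell that nests other entities."""
    name: str
    bbox: BBox
    children: List["Entity"] = field(default_factory=list)


Entity = Union[FormField, CheckBox, Table, Group]


def walk(entity: Entity, depth: int = 0) -> Iterator[Tuple[int, Entity]]:
    """Yield every entity with its nesting depth, parents before children."""
    yield depth, entity
    if isinstance(entity, Group):
        for child in entity.children:
            yield from walk(child, depth + 1)


title_block = Group("title block", (0, 0, 180, 60), [
    FormField("Drawing No.", "PID-0042", (0, 0, 90, 15)),
    Group("status", (90, 0, 180, 15), [
        CheckBox("For approval", True, (90, 0, 135, 15)),
        CheckBox("As built", False, (135, 0, 180, 15)),
    ]),
    Table(["Rev", "Date", "Description"], [["A", "2024-01-15", "First issue"]],
          (0, 15, 180, 60)),
])

outline = [(depth, type(e).__name__) for depth, e in walk(title_block)]
print(outline)
```

Because every node keeps its bounding box, a representation of this kind retains the spatial information that structural rules need.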

2.3.3. Text Recognition and Semantic Field Labeling

The text recognition and semantic labelling task aims to recognize the textual content and map it to semantic fields (drawing number, revision, material, etc.). The typical output of this task is a set of key–value pairs. Field assignment can be achieved through geometric and format-based rules (e.g., “cell at row X, column Y is the drawing identifier”) in engineering drawing pipelines [8]. More recent approaches combine OCR outputs with learned models to infer field labels, including deep learning pipelines for title block processing [2,5]. Vision–language models can also be used for post-processing, for instance by transforming extracted text and positional cues into structured JSON metadata [5], or by improving robustness to recognition noise through transformer-based text recognition modules [2]. From a compliance perspective, semantic labelling is necessary but insufficient if the extracted representation discards the spatial organization that supports structural rules.
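A geometric rule of the form “cell at row X, column Y is the drawing identifier” can be sketched as a lookup from grid positions to semantic field names. The positions, field names, and grid contents below are invented for the example and do not come from the cited pipelines.

```python
from typing import Dict, List, Tuple

# Hypothetical position rules: (row, column) -> semantic field name.
POSITION_RULES: Dict[Tuple[int, int], str] = {
    (0, 0): "title",
    (1, 0): "drawing_number",
    (1, 1): "revision",
}


def label_cells(grid: List[List[str]]) -> Dict[str, str]:
    """Assign semantic labels to recognized cell texts by their grid position."""
    labelled: Dict[str, str] = {}
    for (row, col), name in POSITION_RULES.items():
        # Positions that fall outside the grid are skipped, not guessed.
        if row < len(grid) and col < len(grid[row]):
            labelled[name] = grid[row][col]
    return labelled


ocr_grid = [
    ["Pump skid general arrangement"],
    ["PID-0042", "B"],
]
print(label_cells(ocr_grid))
```

Note that the output is a flat dictionary: once the labels are assigned, the row and column information is no longer available unless it is stored alongside each value.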

2.4. Title Block Structural and Content-Wise Rule Checking

While information extraction has been widely studied, explicit rule checking is less frequently formalized as a standalone research objective. In practice, compliance is often implemented as a downstream layer that consumes extracted fields and evaluates them against organization-specific rules. Industrial systems may combine learned detectors and OCR with such rule layers, for quality assurance or production-oriented checks, even when the underlying rule sets are not publicly disclosed [9]. Academic and industrial pipelines also report content-wise validation steps such as dictionary-based normalization, pattern enforcement, and cross-checks against external sources (e.g., BOMs or tag lists) [4,8].

However, two limitations are recurrent. First, many systems assume that structural variability is “handled” by robust extraction, and therefore do not expose structural anomalies (e.g., missing cells) as explicit violations. Second, rule checking components are often designed for a narrow schema (fixed field sets and positions) and are difficult to adapt to contractor-specific title blocks without substantial engineering effort. These observations motivate approaches that keep an explicit representation of the title block structure and that treat both structural and content-wise constraints as first-class compliance objectives.
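To show what treating structural anomalies as explicit violations could look like, the sketch below compares the cell spans expected by a template with those observed in a document and reports each discrepancy. The span encoding and the cell names are assumptions for illustration; this is not the matching procedure proposed in this paper.

```python
from typing import Dict, List, Tuple

Span = Tuple[int, int, int, int]  # row, column, row_span, col_span (assumed encoding)


def structural_violations(expected: Dict[str, Span],
                          observed: Dict[str, Span]) -> List[str]:
    """List missing cells, cells with an unexpected span, and unexpected cells."""
    violations: List[str] = []
    for name, span in expected.items():
        if name not in observed:
            violations.append(f"missing cell: {name}")
        elif observed[name] != span:
            violations.append(
                f"unexpected span for {name}: expected {span}, found {observed[name]}")
    for name in sorted(observed.keys() - expected.keys()):
        violations.append(f"unexpected cell: {name}")
    return violations


template = {
    "title": (0, 0, 1, 2),
    "drawing_number": (1, 0, 1, 1),
    "revision": (1, 1, 1, 1),
}
# In this document the drawing number cell was merged across both columns,
# so the revision cell has disappeared.
document = {
    "title": (0, 0, 1, 2),
    "drawing_number": (1, 0, 1, 2),
}
print(structural_violations(template, document))
```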

2.5. Feedback Provision and Workflow Integration

Feedback and workflow integration determine whether automated checks can be adopted in engineering practice. Existing systems commonly connect title block extraction to document search or indexing, or to downstream inspection and QA pipelines [2,5]. Commercial tools typically provide interfaces to visualize extracted entities or to define extraction templates, supporting integration with Computer-Aided Design (CAD), Building Information Modeling (BIM), or document management ecosystems [9,10,11].

Some off-the-shelf solutions incorporate a graphical user interface, either to allow users to define a title block template in template-based approaches [10,11], or to visualize detected entities in data-driven approaches [9,12,13], as presented in Figure 2. While these interfaces facilitate visualization and configuration, they typically provide limited support for expressing and diagnosing structural violations in heterogeneous title blocks. In addition, the nested and irregular structure of title block entities is not always explicitly represented, which can hinder user interpretation in the presence of extraction errors. In contrast, compliance-oriented systems must provide localized and interpretable feedback that highlights both structural and content-wise non-conformances and supports correction.

2.6. Commercial and Cloud Document AI Solutions

Commercial solutions address title block extraction either as a domain-specific feature integrated into engineering software ecosystems, or as an instance of general document understanding. The latter category has recently been reshaped by generative AI-based services capable of extracting structured information from a wide variety of document types.

2.6.1. Title-Block-Oriented Products and CAD/BIM Tools

Commercial solutions for title block extraction considered here include Werk24 (V2.3.0) [9], Kahua (V2025.5) [10], and AutoCAD (V25.1) [11]. Kahua and AutoCAD integrate title block processing into broader software environments (e.g., BIM/CAD or document management workflows), typically exposing configuration interfaces to define the expected fields and their locations. Werk24 provides extraction as an independent service and focuses on mechanical engineering drawings, including domain-specific metadata. Such products are effective within their target contexts, but their assumptions and field schemas may not transfer to heterogeneous contractor deliverables or to title blocks that deviate from the supported domain conventions.

2.6.2. Generic Document Understanding Services with Customization

The extraction of information from title blocks can be regarded as a specific instance of document layout understanding, which involves identifying and structuring entities within documents ranging from highly structured to unstructured formats. Cloud-based services such as Microsoft Azure Document Intelligence, Google Cloud Document AI, and Amazon Bedrock Data Automation provide solutions capable of performing layout-aware extraction across many standard document types. Among these, the respective custom models (Azure’s Custom Template [12], Google’s Custom Extractor [13], and Amazon’s Custom Blueprint mechanisms [14]) are of particular interest for title blocks, as they combine pre-trained models with configurable schemas to adapt to domain-specific layouts. Model adaptation is typically supported through annotated training examples, with Azure and Google providing graphical annotation interfaces, while Amazon adopts an instruction-based mechanism for specifying fields to extract.

Although these platforms can deliver strong key–value extraction performance, their primary objective is information extraction rather than compliance. As a result, structural anomalies may be absorbed by the model’s robustness and not surfaced as explicit violations. When layout outputs (tables or regions) are available, they can serve as a basis for structural checks, but additional steps are required to link keys and values and to enforce domain-specific and project-specific constraints.

2.7. Local Deep Learning Document Understanding Solutions

Beyond cloud services, a growing ecosystem of local document AI toolkits and models can be deployed on-premise and integrated into industrial pipelines. These approaches are typically designed for generic document image analysis (forms, invoices, receipts, scientific articles) rather than engineering drawings, but they provide reusable components for layout parsing and information extraction.

LayoutParser [15] is a unified toolkit for document layout analysis that facilitates the construction of pipelines combining layout detection, OCR, and structured representations. It provides abstractions to integrate state-of-the-art detectors and can therefore be used as an implementation backbone for title block localization and layout reconstruction. LayoutLMv3 [16] is a multimodal transformer pre-trained with unified text and image masking, targeting downstream tasks such as document classification and information extraction. In such models, OCR tokens and their bounding boxes are central inputs, which makes them suitable when reliable OCR and spatial annotations are available. Finally, Donut [17] proposes an OCR-free end-to-end transformer that directly generates structured outputs from document images. While attractive for simplified pipelines, OCR-free generation can reduce interpretability and control, which is a concern for compliance scenarios where explicit localization of violations is required.

2.8. OCR Pipelines for Raster/Scanned Drawings

When engineering documents are available as scans or rasterized PDFs, OCR becomes a key dependency of the extraction pipeline. Local OCR engines and libraries provide practical options for on-premise deployment. Tesseract [18] is a long-standing open-source OCR engine widely used as a baseline. More recent deep-learning-based toolkits, such as PaddleOCR [19], docTR [20], and EasyOCR [21], offer modular pipelines that combine text detection and text recognition models and can be adapted to domain-specific fonts and rendering conditions. In addition, general-purpose vision frameworks such as Detectron2 [22] can be used to train custom detectors or segmenters to localize title blocks or specific graphical elements (e.g., revision tables) prior to OCR.

From a compliance perspective, OCR robustness reduces the risk of content-wise false alarms, but it does not address structural violations unless the downstream representation preserves layout and supports explicit constraint checking.

2.9. Method Families and Their Implications for Compliance

Across both academic and commercial solutions, two methodological families are commonly encountered: non-data-driven approaches based on rules and templates, and data-driven approaches that infer structure from visual and textual content. These families offer different trade-offs in terms of interpretability, robustness, and suitability for structural compliance checking.

2.9.1. Non-Data-Driven Approaches: Heuristics and Template Matching

Non-data-driven approaches include heuristic methods and template-based methods. Heuristic methods define rules based on expected border positions and margins of title blocks, text density, and line detection cues [3,10,11]. They are typically interpretable and can provide explicit error conditions (e.g., missing borders or unexpected geometry), which is useful for compliance. However, they often assume high-quality “pixel perfect” documents and can be fragile when templates vary significantly. Template-based methods represent title blocks as grids or tables and perform matching against a library of reference templates, possibly with fuzzy matching to accommodate geometric variations [4]. When template libraries capture expected variants, such methods can naturally preserve structure and therefore support structural rule checking; the main limitation lies in maintaining comprehensive template coverage across contractors and projects.

2.9.2. Data-Driven Approaches: CNN, Transformers and VLM/LLM-Based Systems

Data-driven approaches infer title block structure using learned models. Deep learning computer vision pipelines typically rely on CNN-based detectors (and more recently vision transformers, or ViTs) for localization and on OCR models for text extraction, sometimes combined with specialized segmentation components [2,5,6,15,16,17,22]. More recently, multimodal systems combine visual features with large vision–language models (e.g., GPT-4o [25], Qwen2-VL [26]) to jointly reason about layout and content, producing structured metadata directly [2,5]. Cloud document understanding platforms also fall into this category, as they use pre-trained models (and sometimes agentic orchestration) to extract key–value pairs and tables from documents [12,13,14].

Data-driven solutions are generally robust to layout distortions and image noise, in contrast to domain-specific software that often assumes stricter input quality conditions. This robustness also explains their widespread adoption for legacy document digitization, where degradations and acquisition artifacts are common. However, it can come at the cost of compliance sensitivity: layout anomalies such as missing cells or unintended merges may be absorbed as normal variability rather than being identified as explicit violations. In compliance-oriented settings, this motivates the use of structure-preserving outputs and, more broadly, hybrid architectures that complement data-driven extraction with explicit structural constraint checking.

2.9.3. Illustrative Case Study: Key–Value Pair Extraction vs Layout Preservation

To illustrate how extraction outputs impact compliance, Figure 3 reports a simple experiment conducted on two title blocks (Figure 3a,d) that share the same information but exhibit clearly different structures. We used the Azure Document Intelligence Studio (V4.0) General Document model [27], whose application scope is sufficiently close to our use case for this demonstration. When prompted to extract title block key–value pairs, the model returns the same four key–value pairs for both inputs (Figure 3b,e), despite the structural discrepancy between the two layouts. In this setting, the output is unsuitable as a first stage of a pipeline aiming at downstream structural violation detection, because the spatial organization of the elements is not preserved. By contrast, when prompted to output the document layout, the model produces tabular structures (Figure 3c,f) in which positional information is retained. This output can serve as a basis for structural compliance checking, provided that an additional step is introduced to reliably associate each key with its corresponding value.
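The same effect can be reproduced on toy data. In the sketch below, two invented layouts carry identical information in different arrangements: flattening them into key–value pairs makes them indistinguishable, whereas a position-preserving view exposes the difference. The layouts and values are made up for the example and are not the contents of Figure 3, and no cloud service is called.

```python
from typing import Dict, List, Tuple

Layout = List[List[Tuple[str, str]]]  # rows of (key, value) cells

# Same four key-value pairs, arranged as a 2x2 grid and as a single column.
layout_a: Layout = [
    [("Title", "Pump skid"), ("Rev", "B")],
    [("Drawn by", "A. Martin"), ("Date", "2024-05-02")],
]
layout_b: Layout = [
    [("Title", "Pump skid")],
    [("Rev", "B")],
    [("Drawn by", "A. Martin")],
    [("Date", "2024-05-02")],
]


def to_key_values(layout: Layout) -> Dict[str, str]:
    """Flat key-value view: positions are discarded."""
    return {key: value for row in layout for key, value in row}


def to_positions(layout: Layout) -> Dict[str, Tuple[int, int]]:
    """Layout-preserving view: each key keeps its (row, column) position."""
    return {key: (r, c)
            for r, row in enumerate(layout)
            for c, (key, _) in enumerate(row)}


print(to_key_values(layout_a) == to_key_values(layout_b))  # identical content
print(to_positions(layout_a) == to_positions(layout_b))    # different structure
```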

2.10. Synthesis, Research Gap, and Contributions

Overall, the literature and available tools indicate that title block localization and field extraction can be addressed effectively in many contexts, either through template-based reasoning [4] or data-driven pipelines [2,5]. In parallel, commercial products and cloud platforms provide accessible extraction capabilities and user-facing interfaces for template configuration or visualization [9,10,12]. Nevertheless, formal, structure-aware compliance checking with interpretable feedback is not treated as a first-class problem. Compliance is typically implemented as an implicit downstream layer on top of extraction, often tailored to a fixed schema and a narrow application domain [8,9]. In particular, structural consistency constraints (e.g., missing or merged cells) are rarely surfaced explicitly by robust data-driven extractors. These limitations motivate approaches that preserve structure, support user-defined rule sets, and provide actionable feedback aligned with engineering control workflows. To address this gap, we propose a rule-driven, template-based approach that (i) extracts title block information while preserving its structural organization, (ii) verifies both structural and content-wise constraints against user-defined rules, and (iii) provides explicit and localized feedback with correction guidance in a human-in-the-loop setting.

5. Discussion

5.1. Title Block Extraction Performance

Our method demonstrates the ability to successfully match a wide range of generic entities commonly found in title blocks and to flag non-compliances, provided that a shared template is available. The matching procedure, while still imperfect, has shown some robustness to typical distortions between source and target title blocks while giving the user the ability to control possible variabilities through a graphical interface.

However, two main limitations remain in the current implementation. First, comb fields are not yet supported and require the addition of specific rules to enable accurate matching in the target title block. Second, while the model is able to flag several keys in the presence of minor spelling non-compliances, such discrepancies are not yet consistently reported to the user as warning messages. When the bounding box of a key in the target title block does not fully cover the words containing typographical errors, the matching operation may fail silently and not trigger a warning. This limitation highlights the constraints of using the longest common substring as the sole similarity metric for key matching. Potential improvements include the integration of alternative similarity measures during the envelope construction phase, such as the Levenshtein distance, which accounts for character-level substitutions. Another limitation of the system is that it uses title block cells as anchor points during the matching step, and therefore assumes that the title block is defined as a set of well-formed cells, with no “floating” information. This assumption is consistent with existing standards (ISO 7200:2004 [23]), but implies that the system cannot robustly handle poorly defined cells, such as those bounded by dotted lines. This limitation is, however, shared with other title block extraction software reported in the literature [9].
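The difference between the two similarity measures can be seen on a single misspelled key. The sketch below implements both with textbook dynamic programming; it is a generic illustration, not the matching code of the system, and the example strings are invented. A single substitution in the middle of a word halves the longest common substring, while the Levenshtein distance stays at one edit.

```python
def longest_common_substring(a: str, b: str) -> int:
    """Length of the longest run of characters shared contiguously by a and b."""
    best = 0
    prev = [0] * (len(b) + 1)
    for ch_a in a:
        curr = [0] * (len(b) + 1)
        for j, ch_b in enumerate(b, start=1):
            if ch_a == ch_b:
                # Extend the common run ending at the previous characters.
                curr[j] = prev[j - 1] + 1
                best = max(best, curr[j])
        prev = curr
    return best


def levenshtein(a: str, b: str) -> int:
    """Minimum number of insertions, deletions, and substitutions turning a into b."""
    prev = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        curr = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


template_key = "REVISION"
target_key = "REVIZION"  # one substituted character

lcs = longest_common_substring(template_key, target_key)
dist = levenshtein(template_key, target_key)
print(lcs / len(template_key))       # 0.5: only "REVI" is shared contiguously
print(1 - dist / len(template_key))  # 0.875: a single edit out of eight characters
```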

5.2. User-Facing Visualization and Feedback Quality

Template creation can be performed entirely through the graphical interface, which allows users to define annotation bounding boxes and optional metadata. As illustrated in the overlaid annotation figures, the annotation process is user-friendly, as the model does not require precise bounding box placement for accurate interpretation. The resulting matched annotations can be superimposed on the target title block, providing users with visual feedback on the hierarchical structure of the extracted entities. When combined with the generated warning logs, this visualization framework offers reliable diagnostic support, helping users to identify and understand both content-related errors (e.g., misspellings) and layout-related issues (e.g., missing or misplaced entities) within the title block.

5.3. Further Research Directions

5.3.1. Generalization Capabilities of the Method on a Broader Dataset

The generalization capabilities of the proposed method should be evaluated on a broader and more diverse corpus of title blocks. At present, we are not aware of any public dataset specifically designed for industrial title block structural compliance checking, i.e., providing both title block content and explicit annotations of structural or layout non-compliances. This gap likely reflects the fact that title block structural compliance is rarely formalized as a benchmark task in the document analysis literature.

To support objective and reproducible evaluation, we propose a synthetic benchmarking strategy. The approach consists of starting from an existing public table dataset, such as PubTables-1M [28], or document layout dataset, and then procedurally injecting controlled non-compliances (e.g., missing mandatory fields, swapped blocks, forbidden relocations, invalid nesting, or inconsistent table structures). Such a benchmark would enable systematic stress-testing of the violation detection component and a more rigorous assessment of the relevance and clarity of the feedback generated for the user.
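As a rough sketch of what procedural injection could look like, the functions below take a table represented as rows of cell texts, apply one controlled non-compliance, and return the mutated table together with a ground-truth annotation. The table representation, the function names, and the annotation format are assumptions for illustration; they do not reflect the PubTables-1M format or an implemented benchmark.

```python
import copy
import random
from typing import Dict, List, Tuple

Table = List[List[str]]


def inject_missing_field(table: Table, rng: random.Random) -> Tuple[Table, Dict]:
    """Blank out one randomly chosen cell and record where it was."""
    mutated = copy.deepcopy(table)  # never modify the clean sample
    r = rng.randrange(len(mutated))
    c = rng.randrange(len(mutated[r]))
    mutated[r][c] = ""
    return mutated, {"type": "missing_field", "cell": (r, c)}


def inject_swap(table: Table, rng: random.Random) -> Tuple[Table, Dict]:
    """Swap the contents of two distinct cells and record both positions."""
    mutated = copy.deepcopy(table)
    cells = [(r, c) for r, row in enumerate(mutated) for c in range(len(row))]
    (r1, c1), (r2, c2) = rng.sample(cells, 2)
    mutated[r1][c1], mutated[r2][c2] = mutated[r2][c2], mutated[r1][c1]
    return mutated, {"type": "swapped_cells", "cells": [(r1, c1), (r2, c2)]}


rng = random.Random(0)  # fixed seed so that a benchmark build is reproducible
clean = [["Title", "Pump skid"], ["Rev", "B"]]

with_gap, gap_label = inject_missing_field(clean, rng)
with_swap, swap_label = inject_swap(clean, rng)
print(gap_label, with_gap)
print(swap_label, with_swap)
```

Storing the annotation next to each mutated sample is what makes the detection component measurable: a reported violation can be compared directly with the injected one.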

5.3.2. Deployment and User Evaluation in Operational Conditions

Although the proposed strategy shows robustness under the distortions considered in our experiments, both its resilience to real production variability and its usability must still be validated in an operational setting. As a next step, we plan to integrate the method into the production infrastructure and evaluate it under real-world conditions. This deployment will involve developing a dedicated annotation interface to replace COCO Annotator, with the goal of presenting warning messages directly within the graphical environment.

After deployment, we will organize workshops with end users to collect qualitative feedback on two aspects: (i) the accuracy of the extracted key–value pairs across a diverse set of industrial title blocks and (ii) the ease of use of the production graphical interface. To support this evaluation and promote consistent usage, a user guide with illustrative annotation examples is currently being prepared.

5.3.3. Scalable Management of Multiple Templates

Because the proposed framework relies on manually defined templates, its scalability when a large number of templates coexist in an industrial environment warrants further consideration. In our expected use case, title block templates are often client- or subcontractor-dependent; accordingly, templates can first be organized by target client. To select a template within a client-specific subset, we envision combining two complementary procedures.

Key-term driven preselection: maintain a lookup table that lists the key terms associated with each template. For a given document, OCR-detected text blocks can be compared against this inventory to propose the most likely template, for instance by selecting the template whose key terms are most consistently present. This step requires an appropriate text similarity measure and can be complemented by the coarse structural description provided by the initial annotation graph.

User-assisted disambiguation: if multiple templates remain plausible, the graphical interface can offer a side-by-side visualization to help the user validate the best match by visually comparing candidate templates against the target title block.
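The key-term driven preselection could be sketched as follows. This is only an illustration under stated assumptions: the lookup-table layout, the function names, the 0.8 threshold, and the choice of `difflib.SequenceMatcher` as the text similarity measure are all placeholders, since the appropriate measure remains to be determined.

```python
from difflib import SequenceMatcher

def term_score(term, ocr_blocks):
    """Best fuzzy similarity (0..1) between one key term and any OCR text block."""
    t = term.casefold().strip()
    return max(
        (SequenceMatcher(None, t, b.casefold().strip()).ratio() for b in ocr_blocks),
        default=0.0,
    )

def rank_templates(key_terms_by_template, ocr_blocks, threshold=0.8):
    """Rank templates by the fraction of their key terms found in the OCR output.

    `threshold` is an assumed cut-off above which a key term counts as present.
    """
    scores = {}
    for name, terms in key_terms_by_template.items():
        hits = sum(term_score(t, ocr_blocks) >= threshold for t in terms)
        scores[name] = hits / len(terms) if terms else 0.0
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Made-up lookup table and OCR output, for illustration only.
lookup = {
    "client_a": ["Drawing No.", "Revision", "Approved by"],
    "client_b": ["Plan number", "Indice", "Checked"],
}
ocr = ["DRAWING NO", "Revision", "Approved by", "Scale"]
ranked = rank_templates(lookup, ocr)
```

When the top-ranked templates have close scores, the candidates would be handed over to the user-assisted disambiguation step rather than selected automatically.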

In addition, templates may be enriched with metadata such as a standardized template name, a version identifier, and provenance information. The interface could further support a diff-like comparison workflow to distinguish highly similar variants. While variability handling may limit the number of templates required in practice, this assumption should be validated on operational datasets.

5.3.4. Improvement of the Key–Value Pair Export Format

An additional aspect identified for improvement concerns the readability and usability of the exported key–value pair format. Preliminary workshops with end users, aimed at evaluating the quality of the extracted key–value pairs, have led to the definition of several new annotation categories and processing rules designed to enhance this aspect of the system:

Introduction of a RadioButton annotation category to represent multiple mutually exclusive Boolean values as a single formatted output.

Modification of the SubCell annotation category to allow customization of cell content processing when a cell contains multiple key–value pairs.

Addition of a user-selectable export option for regular tables, allowing data to be exported either as individual key–value pairs for each non-null element (suitable for sparsely populated tables such as applicability tables) or as grouped sets of key–value pairs (e.g., the individual lines of revision history tables).
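To make the two table export options and the RadioButton behaviour concrete, a minimal sketch is given below. The table representation (`columns`, `row_labels`, `cells`), the key naming scheme, the function names, and the sample values are assumptions chosen for this example and do not describe the actual export format of the system.

```python
def export_sparse(table):
    """One key-value pair per non-null cell, keyed by 'row label / column header'."""
    pairs = {}
    for row_label, row in zip(table["row_labels"], table["cells"]):
        for column, value in zip(table["columns"], row):
            if value not in (None, ""):
                pairs[f"{row_label} / {column}"] = value
    return pairs

def export_grouped(table):
    """One group of key-value pairs per table line (column header -> cell value)."""
    return [dict(zip(table["columns"], row)) for row in table["cells"]]

def export_table(table, mode="sparse"):
    """Dispatch on the user-selected export option ('sparse' or 'grouped')."""
    if mode == "sparse":
        return export_sparse(table)
    if mode == "grouped":
        return export_grouped(table)
    raise ValueError(f"unknown export mode: {mode!r}")

def collapse_radio(options):
    """Collapse mutually exclusive Boolean values into the single selected label.

    Returns None when nothing is selected (an assumed convention).
    """
    selected = [label for label, checked in options.items() if checked]
    if len(selected) > 1:
        raise ValueError(f"options are not mutually exclusive: {selected}")
    return selected[0] if selected else None

# Made-up tables, for illustration only.
applicability = {
    "columns": ["Unit 1", "Unit 2"],
    "row_labels": ["Pump A", "Pump B"],
    "cells": [["X", None], ["", "X"]],
}
revisions = {
    "columns": ["Rev", "Description"],
    "cells": [["A", "First issue"], ["B", "Updated scale"]],
}
```

In this sketch, `export_table(applicability, "sparse")` keeps only the two populated cells, whereas `export_table(revisions, "grouped")` returns one dictionary per revision line.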

6. Conclusions

This study reframes title block processing as compliance checking, where the objective is to preserve structure, validate layout/content rules, and generate feedback that engineers can act upon. The proposed framework achieves this by combining (i) a lightweight annotation workflow for capturing end-user knowledge of a title block template and (ii) a graph-based representation that supports structure-aware matching and rule evaluation on new documents.

Across two experiments on industrial title blocks from the nuclear domain, the method demonstrates strong robustness to realistic variability. It matches compliant entities with 98–99% detection performance and identifies most injected deviations, reaching an 84% warning (alarm) accuracy under real-case distortions. Importantly, the approach successfully transfers tabular entities under changes in dimensions and headers when such variability is declared acceptable at the template level, and it provides interpretable overlays and warning messages to support diagnosis.

The current system remains limited by incomplete support for certain entity types (notably comb fields) and by key-matching sensitivity when minor spelling differences occur or when bounding boxes do not fully cover the relevant tokens. Future work will focus on (i) extending entity coverage and similarity measures, (ii) integrating warnings directly into a dedicated annotation/review interface, (iii) scaling template selection and versioning in multi-contractor environments, and (iv) developing a broader benchmark, potentially via controlled synthetic distortions, to evaluate structural compliance detection more rigorously.