Real-Time Arm Gesture Recognition Using 3D Skeleton Joint Data

Abstract

1. Introduction

2. Related Work

3. Arm Gesture Recognition

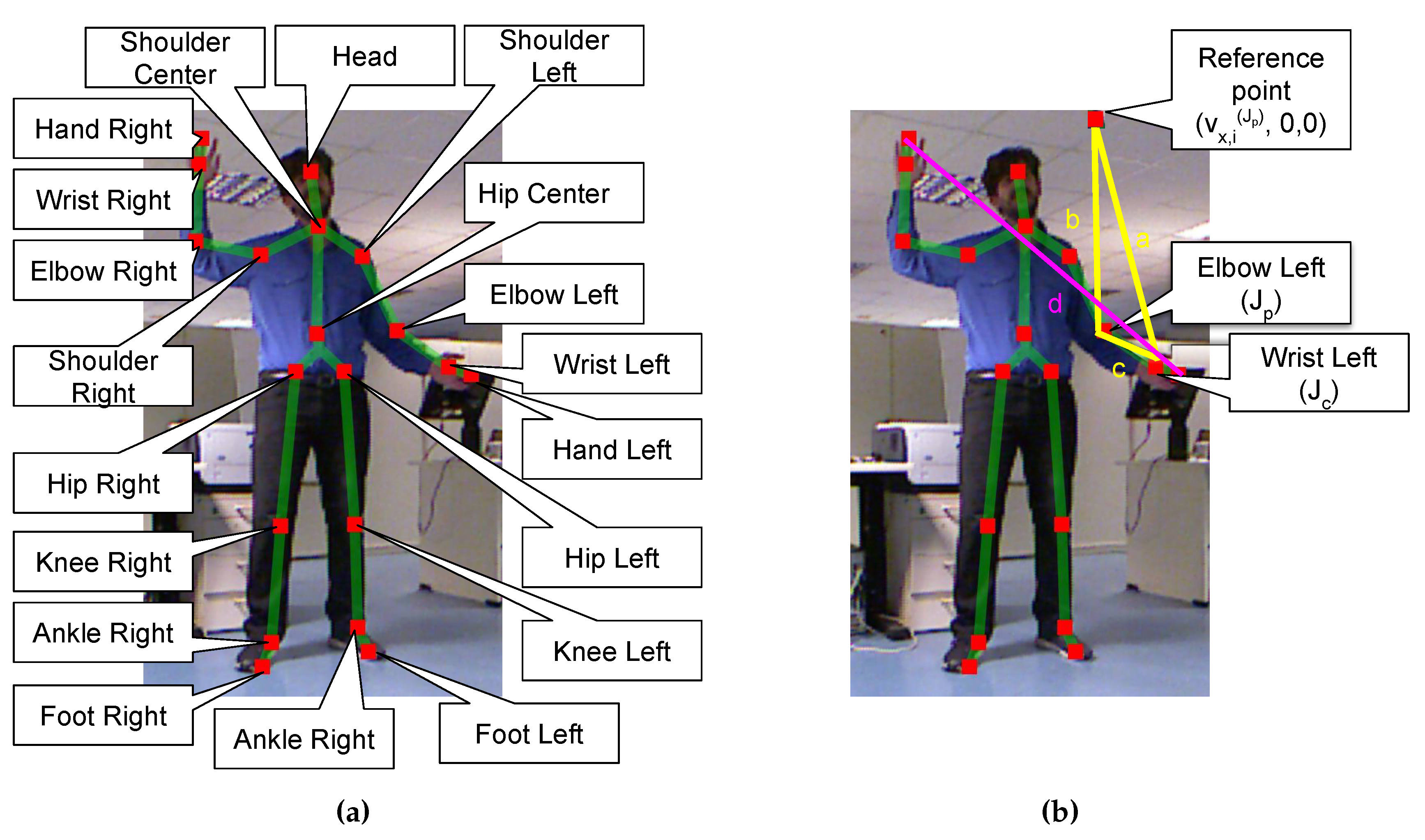

3.1. The Microsoft Kinect SDK

3.2. Gesture Recognition

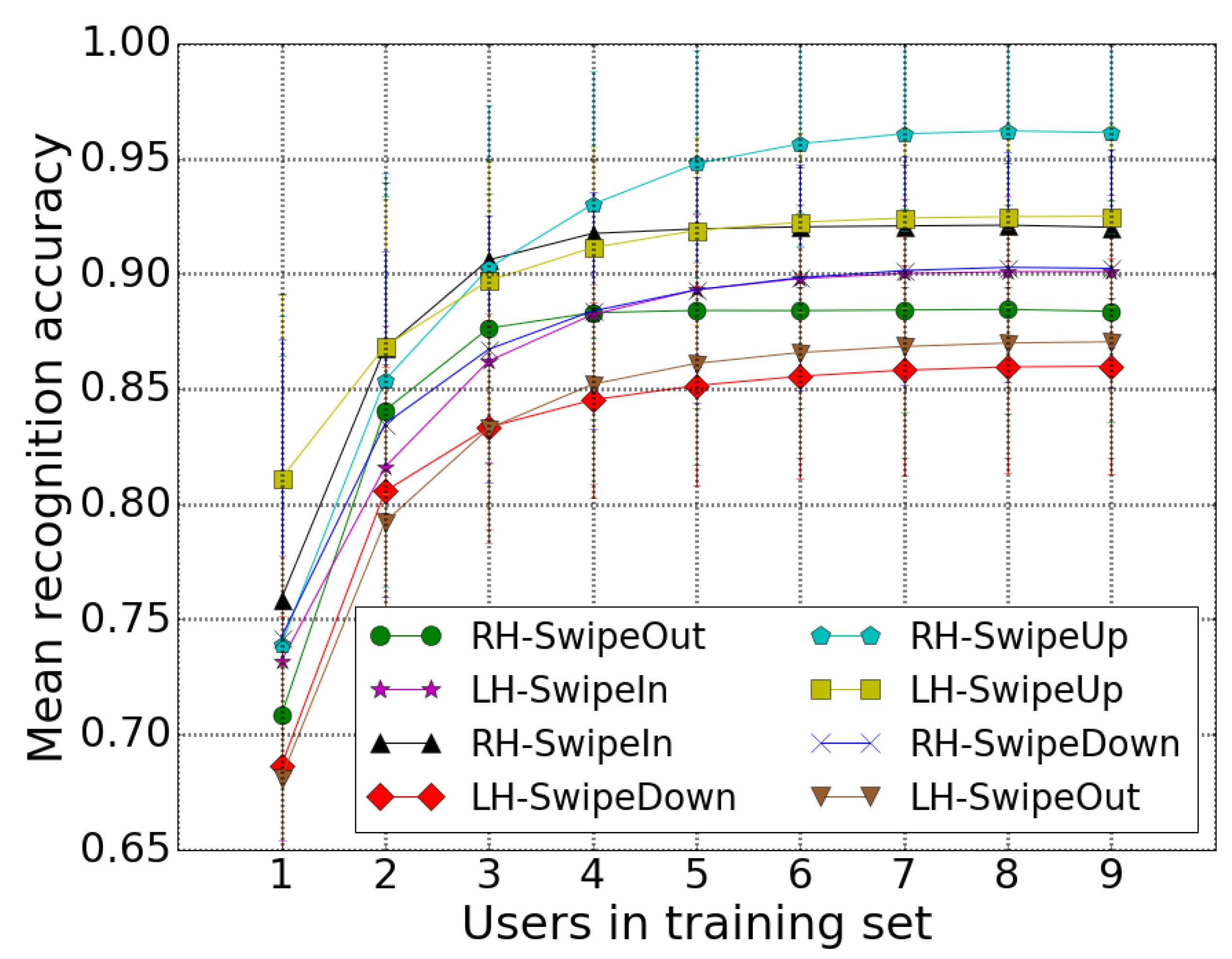

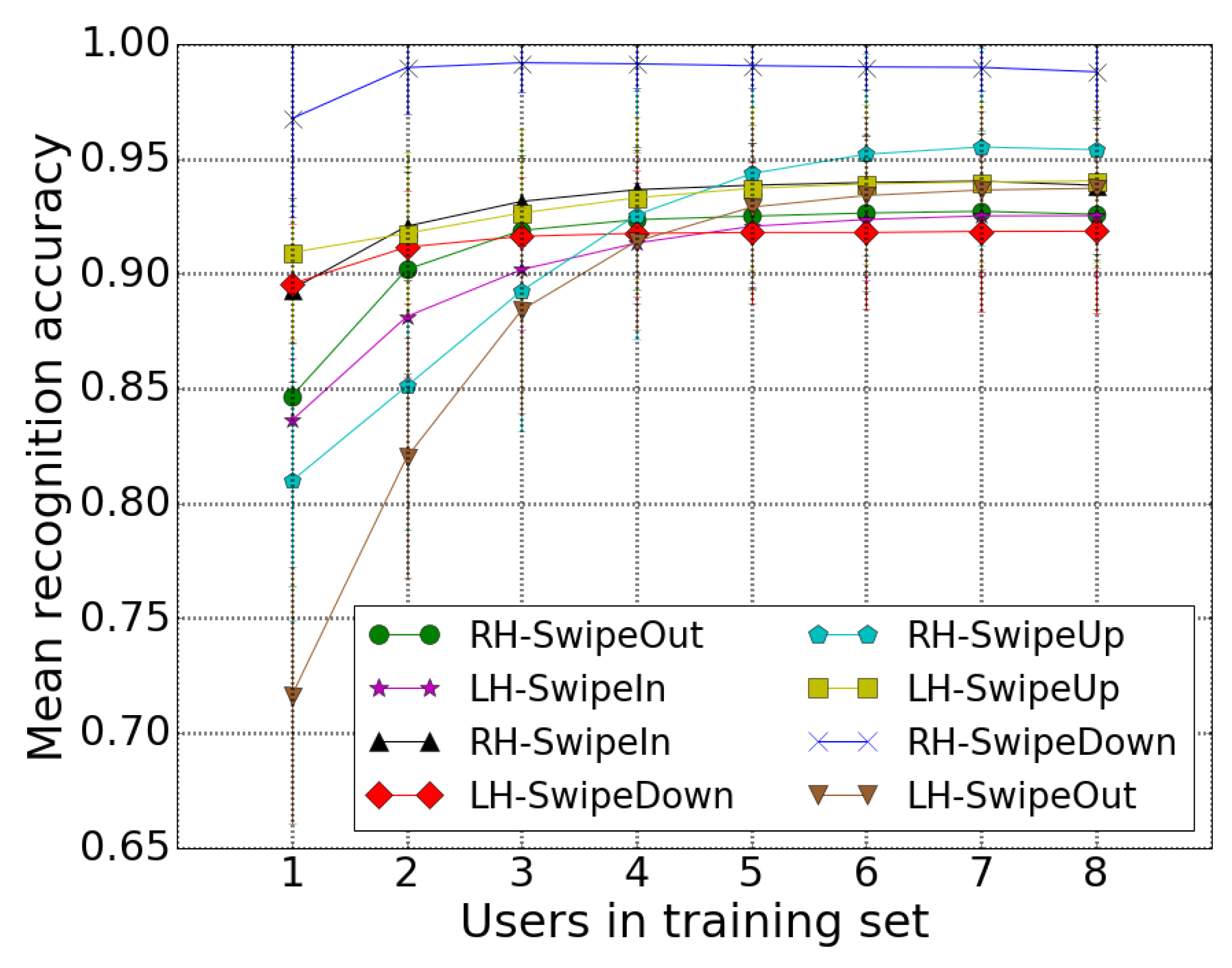

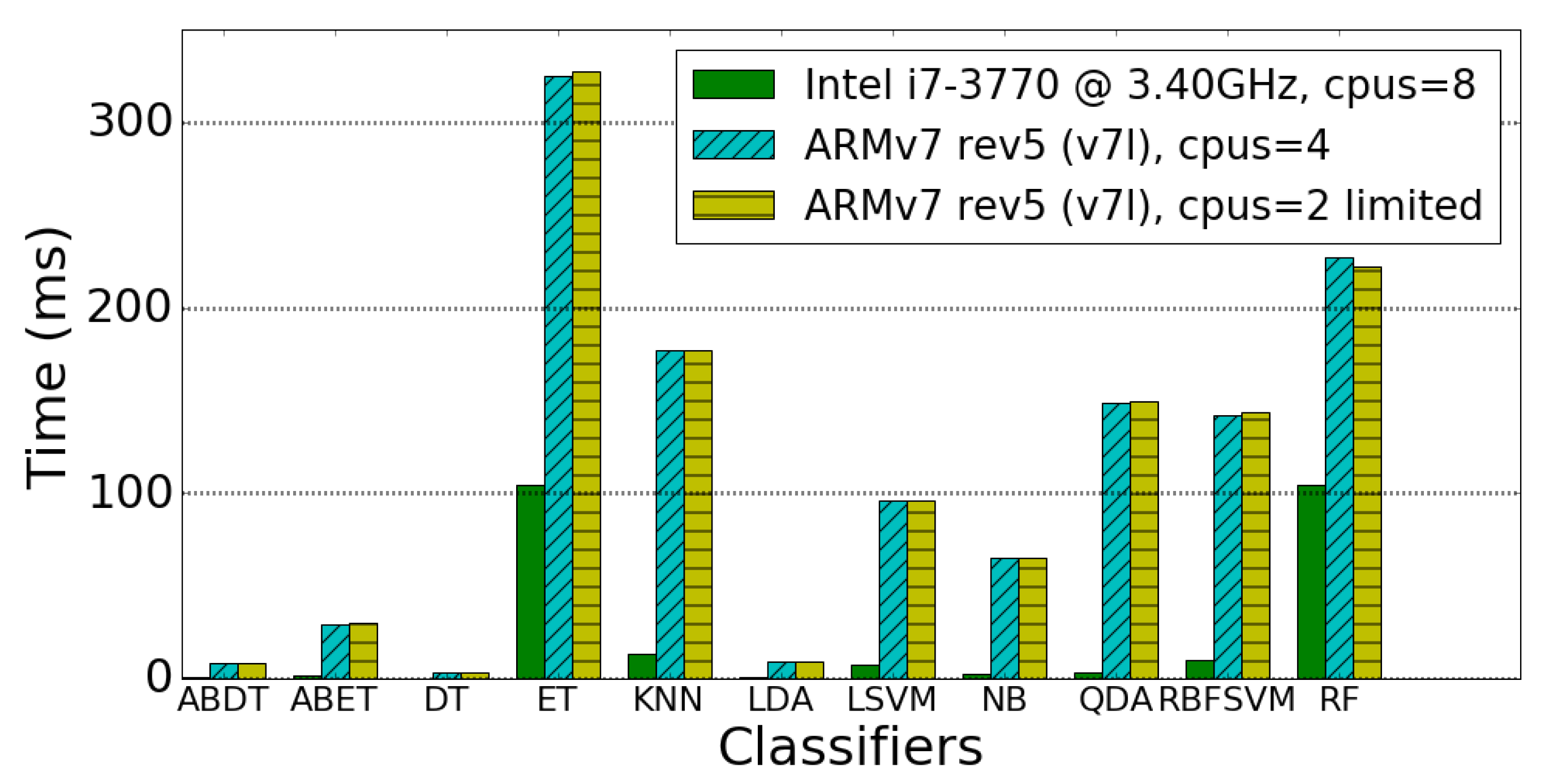

4. Experimental Results

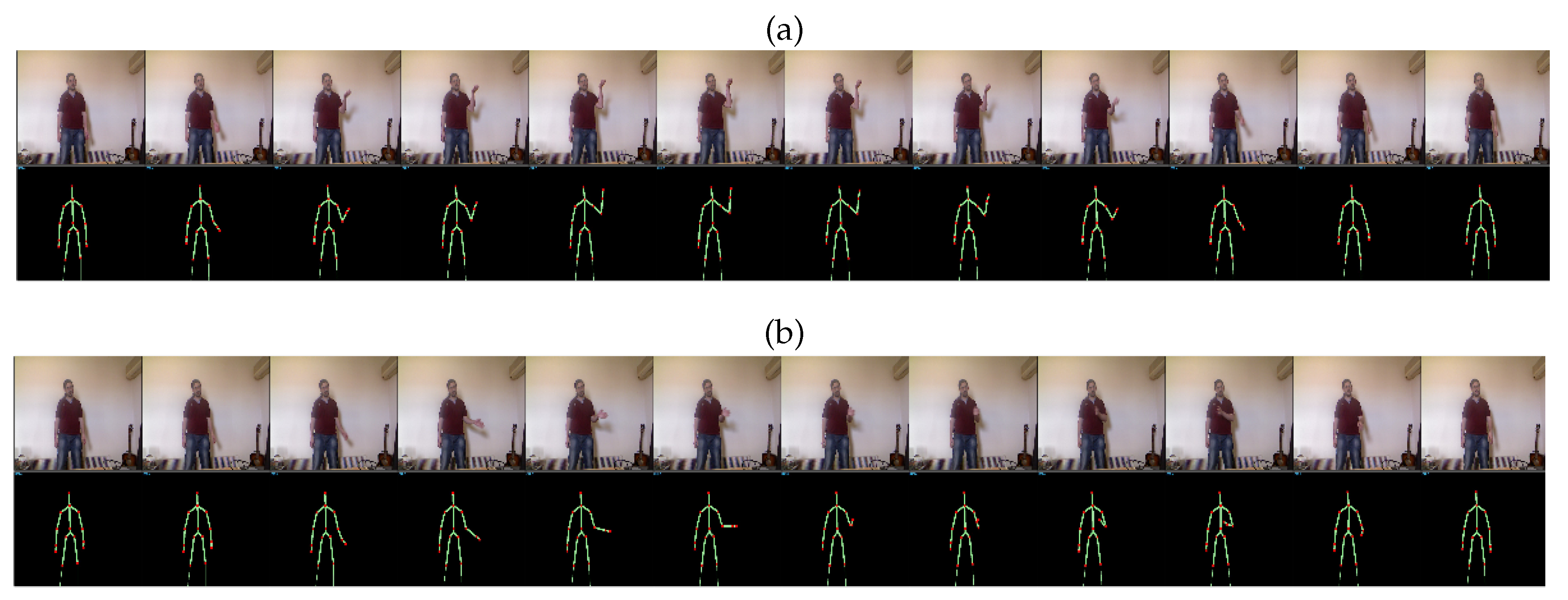

4.1. Dataset

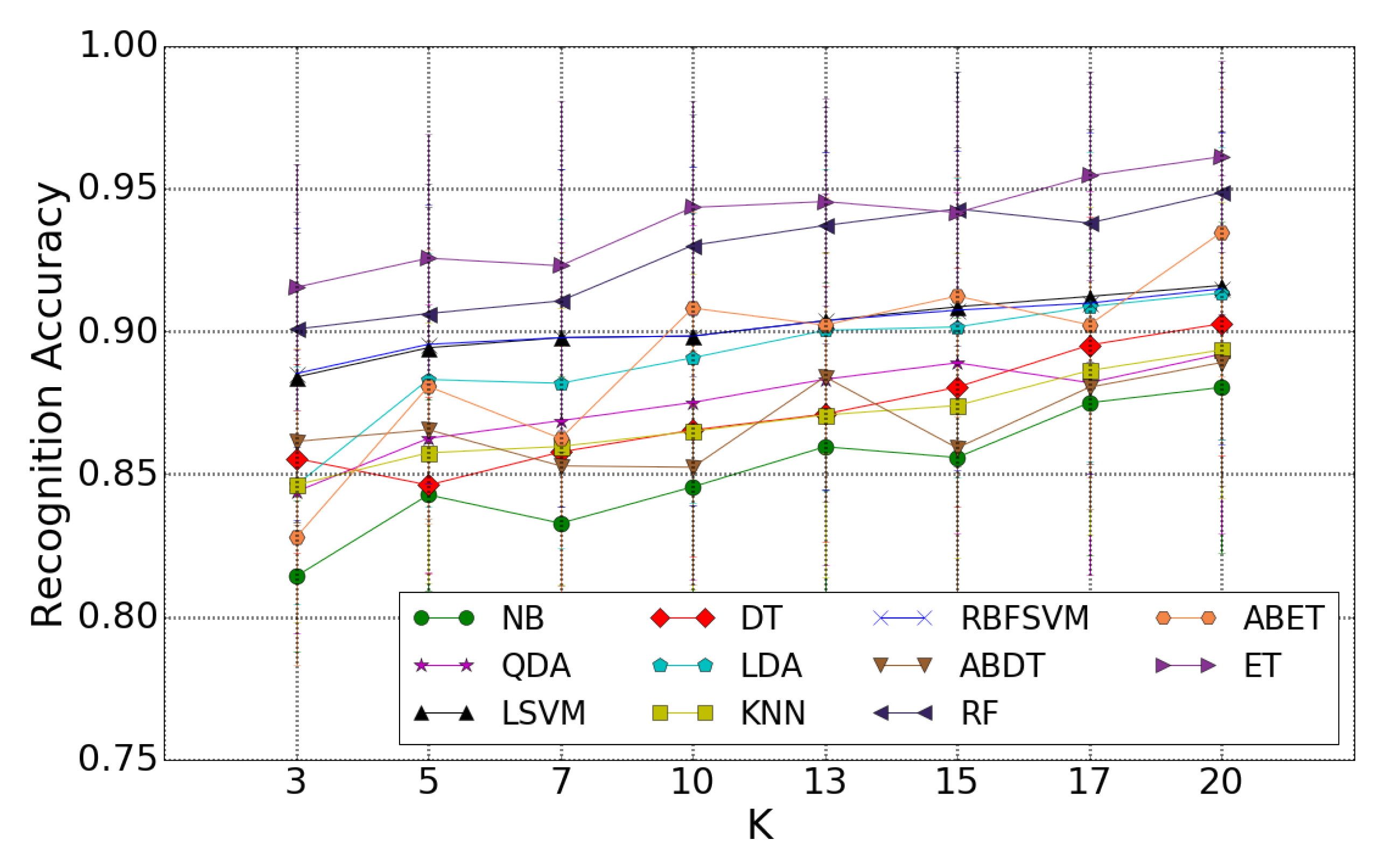

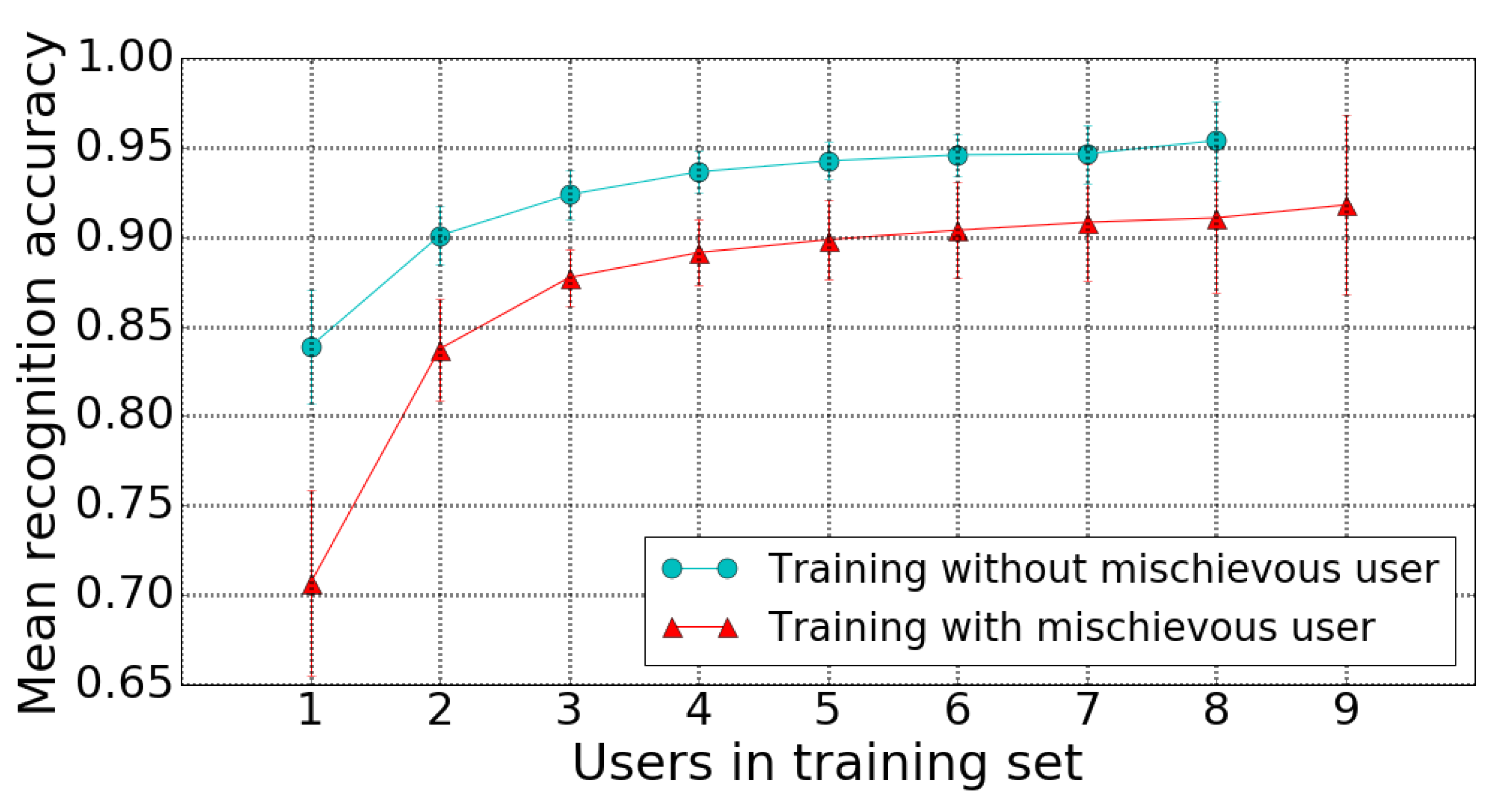

4.2. Experiments

4.3. Comparisons to the State-of-the-Art

5. Conclusions and Discussion

Author Contributions

Funding

Conflicts of Interest

References

- Bhattacharya, S.; Czejdo, B.; Perez, N. Gesture classification with machine learning using kinect sensor data. In Proceedings of the 2012 Third International Conference on Emerging Applications of Information Technology, Kolkata, India, 30 November–1 December 2012. [Google Scholar]

- Lai, K.; Konrad, J.; Ishwar, P. A gesture-driven computer interface using kinect. In Proceedings of the Southwest Symposium on Image Analysis and Interpretation (SSIAI), Santa Fe, NM, USA, 22–24 April 2012. [Google Scholar]

- Mangera, R.; Senekal, F.; Nicolls, F. Cascading neural networks for upper-body gesture recognition. In Proceedings of the International Conference on Machine Vision and Machine Learning, Prague, Czech Republic, 14–15 August 2014. [Google Scholar]

- Miranda, L.; Vieira, T.; Martinez, D.; Lewiner, T.; Vieira, A.W.; Campos, M.F. Real-time gesture recognition from depth data through key poses learning and decision forests. In Proceedings of the 25th IEEE Conference on Graphics, Patterns and Images (SIBGRAPI), Ouro Preto, Brazil, 22–25 August 2012. [Google Scholar]

- Ting, H.Y.; Sim, K.S.; Abas, F.S.; Besar, R. Vision-based human gesture recognition using Kinect sensor. In The 8th International Conference on Robotic, Vision, Signal Processing Power Applications; Springer: Singapore, 2014. [Google Scholar]

- Albrecht, T.; Muller, M. Dynamic Time Warping (DTW). In Information Retrieval for Music and Motion; Springer: Berlin/Heidelberg, Germany, 2009; pp. 70–83. [Google Scholar]

- Celebi, S.; Aydin, A.S.; Temiz, T.T.; Arici, T. Gesture Recognition using Skeleton Data with Weighted Dynamic Time Warping. In Proceedings of the VISAPP, Barcelona, Spain, 21–24 February 2013; pp. 620–625. [Google Scholar]

- Reyes, M.; Dominguez, G.; Escalera, S. Feature weighting in dynamic timewarping for gesture recognition in depth data. In Proceedings of the 2011 IEEE International Conference on Computer Vision Workshops (ICCV Workshops), Barcelona, Spain, 6–13 November 2011. [Google Scholar]

- Ribó, A.; Warcho, D.; Oszust, M. An Approach to Gesture Recognition with Skeletal Data Using Dynamic Time Warping and Nearest Neighbour Classifier. Int. J. Intell. Syst. Appl. 2016, 8, 1–8. [Google Scholar] [CrossRef]

- Ibanez, R.; Soria, Á.; Teyseyre, A.; Campo, M. Easy gesture recognition for Kinect. Adv. Eng. Softw. 2014, 76, 171–180. [Google Scholar] [CrossRef]

- Anuj, A.; Mallick, T.; Das, P.P.; Majumdar, A.K. Robust control of applications by hand-gestures. In Proceedings of the 5th Computer Vision Fifth National Conference on Pattern Recognition, Image Processing and Graphics (NCVPRIPG), Patna, India, 16–19 December 2015. [Google Scholar]

- Gonzalez-Sanchez, T.; Puig, D. Real-time body gesture recognition using depth camera. Electron. Lett. 2011, 47, 697–698. [Google Scholar] [CrossRef]

- Gu, Y.; Do, H.; Ou, Y.; Sheng, W. Human gesture recognition through a kinect sensor. In Proceedings of the IEEE International Conference on Robotics and Biomimetics (ROBIO), Guangzhou, China, 11–14 December 2012. [Google Scholar]

- Tran, C.; Trivedi, M.M. 3-D posture and gesture recognition for interactivity in smart spaces. IEEE Trans. Ind. Inform. 2012, 8, 178–187. [Google Scholar] [CrossRef]

- Yin, Y.; Davis, R. Real-time continuous gesture recognition for natural human-computer interaction. In Proceedings of the IEEE Symposium on Visual Languages and Human-Centric Computing (VL/HCC), Melbourne, Australia, 28 July–1 August 2014. [Google Scholar]

- Lin, C.; Wan, J.; Liang, Y.; Li, S.Z. Large-Scale Isolated Gesture Recognition Using a Refined Fused Model Based on Masked Res-C3D Network and Skeleton LSTM. In Proceedings of the 13th IEEE International Conference on Automatic Face and Gesture Recognition, Xi’an, China, 15–19 May 2018. [Google Scholar]

- Wang, H.; Wang, L. Modeling temporal dynamics and spatial configurations of actions using two-stream recurrent neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017. [Google Scholar]

- Mathe, E.; Mitsou, A.; Spyrou, E.; Mylonas, P. Arm Gesture Recognition using a Convolutional Neural Network. In Proceedings of the 2018 13th International Workshop on Semantic and Social Media Adaptation and Personalization (SMAP), Zaragoza, Spain, 6–7 September 2018. [Google Scholar]

- Zhang, L.; Zhu, G.; Shen, P.; Song, J.; Shah, S.A.; Bennamoun, M. Learning Spatiotemporal Features Using 3DCNN and Convolutional LSTM for Gesture Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 3120–3128. [Google Scholar]

- Mitra, S.; Acharya, T. Gesture recognition: A survey. IEEE Trans. Syst. Man Cybern. Part C Appl. Rev. 2007, 37, 311–324. [Google Scholar] [CrossRef]

- Wang, S.B.; Quattoni, A.; Morency, L.P.; Demirdjian, D.; Darrell, T. Hidden conditional random fields for gesture recognition. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, New York, NY, USA, 17–22 June 2006; Volume 2, pp. 1521–1527. [Google Scholar]

- Zhang, Z. Microsoft kinect sensor and its effect. IEEE Multimedia 2012, 19, 4–10. [Google Scholar] [CrossRef]

- Shotton, J.; Sharp, T.; Kipman, A.; Fitzgibbon, A.; Finocchio, M.; Blake, A.; Cook, M.; Moore, R. Real-time human pose recognition in parts from single depth images. Commun. ACM 2013, 56, 116–124. [Google Scholar] [CrossRef]

- Sheng, J. A Study of Adaboost in 3d Gesture Recognition; Department of Computer Science, University of Toronto: Toronto, ON, Canada, 2003. [Google Scholar]

- Rubine, D. Specifying Gestures by Example; ACM: New York, NY, USA, 1991; Volume 25, pp. 329–337. [Google Scholar]

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830. [Google Scholar]

- Vapnik, V.N. Statistical Learning Theory; Wiley: Hoboken, NJ, USA, 1998. [Google Scholar]

- Cover, M.T.; Hart, E.P. Nearest neighbor pattern classification. IEEE Trans. Inf. Theory 1967, 13, 21–27. [Google Scholar] [CrossRef]

- Domingos, P.; Pazzani, M. On the optimality of the simple Bayesian classifier under zero-one loss. Mach. Learn. 1997, 29, 103–130. [Google Scholar] [CrossRef]

- McLachlan, G. Discriminant Analysis and Statistical Pattern Recognition; Wiley: Hoboken, NJ, USA, 2004. [Google Scholar]

- Breiman, L.; Friedman, J.; Stone, C.J.; Olshen, R.A. Classification and Regression Trees; Taylor & Francis: Abingdon, UK, 1984. [Google Scholar]

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32. [Google Scholar] [CrossRef]

- Geurts, P.; Ernst, D.; Wehenkel, L. Extremely randomized trees. Mach. Learn. 2006, 63, 3–42. [Google Scholar] [CrossRef]

- Freund, Y.; Schapire, R.E. A decision-theoretic generalization of on-line learning and an application to boosting. In Computational Learning Theory; Springer: Berlin/Heidelberg, Germany, 1995; pp. 23–37. [Google Scholar]

- Li, W.; Zhang, Z.; Liu, Z. Action recognition based on a bag of 3d points. In Proceedings of the International Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, San Francisco, CA, USA, 13–18 June 2010. [Google Scholar]

- Arici, T.; Celebi, S.; Aydin, A.S.; Temiz, T.T. Robust gesture recognition using feature pre-processing and weighted dynamic time warping. Multimedia Tools Appl. 2014, 3045–3062. [Google Scholar] [CrossRef]

- Sfikas, G.; Akasiadis, C.; Spyrou, E. Creating a Smart Room using an IoT approach. In Proceedings of the Workshop on AI and IoT (AI-IoT), 9th Hellenic Conference on Artificial Intelligence, Thessaloniki, Greece, 18–20 May 2016. [Google Scholar]

- Pierris, G.; Kothris, D.; Spyrou, E.; Spyropoulos, C. SYNAISTHISI: An enabling platform for the current internet of things ecosystem. In Proceedings of the Panhellenic Conference on Informatics, Athens, Greece, 1–3 October 2015; ACM: New York, NY, USA, 2015. [Google Scholar]

- Peng, X.; Wang, L.; Cai, Z.; Qiao, Y. Action and gesture temporal spotting with super vector representation. In European Conference on Computer Vision (ECCV); Springer: Cham, Switzerland, 2014. [Google Scholar]

- Camgoz, N.C.; Hadfield, S.; Koller, O.; Bowden, R. Using convolutional 3d neural networks for user-independent continuous gesture recognition. In Proceedings of the 23rd International Conference on Pattern Recognition (ICPR), Cancun, Mexico, 4–8 December 2016. [Google Scholar]

- Hachaj, T.; Ogiela, M.R. Full body movements recognition–unsupervised learning approach with heuristic R-GDL method. Digit. Signal Process. 2015, 46, 239–252. [Google Scholar] [CrossRef]

| Feature Name | Frames Involved | Equation |

|---|---|---|

| Spatial angle | ||

| Spatial angle | ||

| Spatial angle | ||

| Total vector angle | ||

| Squared total vector angle | ||

| Total vector displacement | ||

| Total displacement | ||

| Maximum displacement | ||

| Bounding box diagonal length | ||

| Bounding box angle |

| Symbol | Definition |

|---|---|

| J | a given joint |

| child/parent joint of J, respectively | |

| a given video frame, | |

| vector of 3D coordinates of J at | |

| the 3D coordinates of | |

| the set if all joints | |

| the set of all vectors | |

| a 3D bounding box of a set of vectors | |

| the lengths of the sides of |

| Classifier | Parameters |

|---|---|

| ABDT | , |

| ABET | , |

| DT | , |

| ET | , , |

| KNN | , , |

| LSVM | |

| QDA | |

| RBFSVM | , |

| RF | , , |

| User 1 | User 2 | User 3 | User 4 | User 5 | User 6 | User 7 | User 8 | User 9 | User 10 | |

|---|---|---|---|---|---|---|---|---|---|---|

| LH-SwipeDown | 0.76 | 0.83 | 1.00 | 0.82 | 1.00 | 0.80 | 1.00 | 1.00 | 1.00 | 0.96 |

| LH-SwipeIn | 0.38 | 0.92 | 0.84 | 1.00 | 1.00 | 0.92 | 1.00 | 1.00 | 1.00 | 1.00 |

| LH-SwipeOut | 0.61 | 0.93 | 0.86 | 1.00 | 1.00 | 0.89 | 1.00 | 1.00 | 0.97 | 1.00 |

| LH-SwipeUp | 0.69 | 0.90 | 1.00 | 0.84 | 1.00 | 0.83 | 1.00 | 1.00 | 0.97 | 0.96 |

| RH-SwipeDown | 0.78 | 1.00 | 0.95 | - | 1.00 | 1.00 | 0.92 | 1.00 | 0.87 | 1.00 |

| RH-SwipeIn | 0.64 | 1.00 | 0.67 | - | 1.00 | 1.00 | 1.00 | 1.00 | 0.89 | 0.96 |

| RH-SwipeOut | 0.61 | 1.00 | 0.80 | - | 1.00 | 1.00 | 0.95 | 1.00 | 1.00 | 0.95 |

| RH-SwipeUp | 0.40 | 1.00 | 0.95 | - | 1.00 | 1.00 | 1.00 | 0.96 | 1.00 | |

| Average | 0.62 | 0.94 | 0.88 | 0.92 | 1.00 | 0.92 | 0.99 | 1.00 | 0.96 | 0.97 |

| Test I | Test II | Test III | Avg. | ||||||

|---|---|---|---|---|---|---|---|---|---|

| [35] | Our | [35] | Our | [35] | Our | [4] | [35] | Our | |

| AS1 | 89.50 | 85.36 | 93.30 | 91.39 | 72.90 | 89.28 | 93.50 | 85.23 | 88.68 |

| AS2 | 89.00 | 72.90 | 92.90 | 84.40 | 71.90 | 73.20 | 52.00 | 84.60 | 76.84 |

| AS3 | 96.30 | 93.69 | 96.30 | 98.81 | 79.20 | 97.47 | 95.40 | 90.60 | 96.66 |

| Avg. | 91.60 | 83.98 | 94.17 | 91.53 | 74.67 | 86.65 | 80.30 | 84.24 | 87.39 |

| [7] | [3] | [9] | Our | |

|---|---|---|---|---|

| Acc. (%) | 96.7 | 95.6 | 89.4 | 100.0 |

| RotationDB | RelaxedDB | Rotation/RelaxedDB | ||||

|---|---|---|---|---|---|---|

| [36] | Our | [36] | Our | [36] | Our | |

| Acc. (%) | n/a | 98.01 | 97.13 | 98.74 | 96.64 | 97.98 |

| [18] | Our | |

|---|---|---|

| Acc. (%) | 91.0 | 96.0 |

| Ref. | Approach | Gestures | Acc.(s.) | Comments/Drawbacks |

|---|---|---|---|---|

| [11] | 2D projected joint trajectories, rules and HMMs | Swipe(L,R), Circle, Hand raise, Push | 95.4 (5) | heuristic, not scalable rules, different features for different kinds of moves |

| [1] | 3D joints, rules, SVM/DT | Neutral, T-shape, T-shape tilt/pointing(L,R) | 95.0 (3) | uses an exemplar gesture to avoid segmentation |

| [7] | Norm. 3D joints, Weig. DTW | Push Up, Pull Down, Swipe | 96.7 (n/a) | very limited evaluation |

| [12] | Head/hands detection, GHMM | Up/Down/Left/Stretch(L, R, B), Fold(B) | 98.0 (n/a) | relies on head/hands detection |

| [13] | clustered joints, HMM | Come, Go, Sit, Rise, Wave(L) | 85.0 (2) | very limited evaluation, fails at higher speeds |

| [10] | HMM, DTW | Circle, Elongation, Punch, Swim, Swipe(L, R), Smash | 96.0 (4) | limited evaluation |

| [2] | Differences to reference joint, KNN | Swipe(L, R, B), Push(L, R, B), Clapping in/out | 97.2 (20) | sensitive to temporal misalignments |

| [3] | 3D joints, velocities, ANN | Swipe(L,R), Push Up(L,R), Pull Down(L,R), Wave(L,R) | 95.6 (n/a) | not scalable for gestures that use both hands |

| [4] | Pose sequences, Decision Forests | Open Arms, Turn Next/Prev. Page, Raise/Lower Right Arm, Good Bye, Jap. Greeting, Put Hands Up Front/Laterally | 91.5 (10) | pose modeling requires extra effort, limited to gestures composed of distinctive key poses |

| [8] | 3D joints, feature weighted DTW | Jumping, Bending, Clapping, Greeting, Noting | 68.0 (10) | detected begin/end of gestures |

| [9] | 3D joints, DTW and KNN | Swipe(L, R), Push Up(L, R), Pull Down(L, R), Wave(L, R) | 89.4 (n/a) | relies on heuristically determined parameters |

| [5] | 4D quaternions, SVM | Swipe(L, R), Clap, Waving, Draw circle/tick | 98.9 (5) | limited evaluation |

| [14] | Head/hands detection, kinematic constraints, GMM | Punch(L, R), Clap, Wave(L, R), Dumbell Curls(L, R) | 93.1 (5) | relies on head/hands detection |

| [15] | Motion and HOG features of hands, hierarchical HMMs | Swipe(L, R), Circle, Wave, Point, Palm Forward, Grab | 66.0 (10) | below average performance on continuous gestures |

| our | novel set of features, ET | Swipe Up/Down/In/Out(L,R) | 95.0 (10) | scalable, does not use heuristics |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Paraskevopoulos, G.; Spyrou, E.; Sgouropoulos, D.; Giannakopoulos, T.; Mylonas, P. Real-Time Arm Gesture Recognition Using 3D Skeleton Joint Data. Algorithms 2019, 12, 108. https://doi.org/10.3390/a12050108

Paraskevopoulos G, Spyrou E, Sgouropoulos D, Giannakopoulos T, Mylonas P. Real-Time Arm Gesture Recognition Using 3D Skeleton Joint Data. Algorithms. 2019; 12(5):108. https://doi.org/10.3390/a12050108

Chicago/Turabian StyleParaskevopoulos, Georgios, Evaggelos Spyrou, Dimitrios Sgouropoulos, Theodoros Giannakopoulos, and Phivos Mylonas. 2019. "Real-Time Arm Gesture Recognition Using 3D Skeleton Joint Data" Algorithms 12, no. 5: 108. https://doi.org/10.3390/a12050108

APA StyleParaskevopoulos, G., Spyrou, E., Sgouropoulos, D., Giannakopoulos, T., & Mylonas, P. (2019). Real-Time Arm Gesture Recognition Using 3D Skeleton Joint Data. Algorithms, 12(5), 108. https://doi.org/10.3390/a12050108