Facial Expression Recognition Based on Auxiliary Models

Abstract

1. Introduction

2. Related Work

3. The Proposed Method

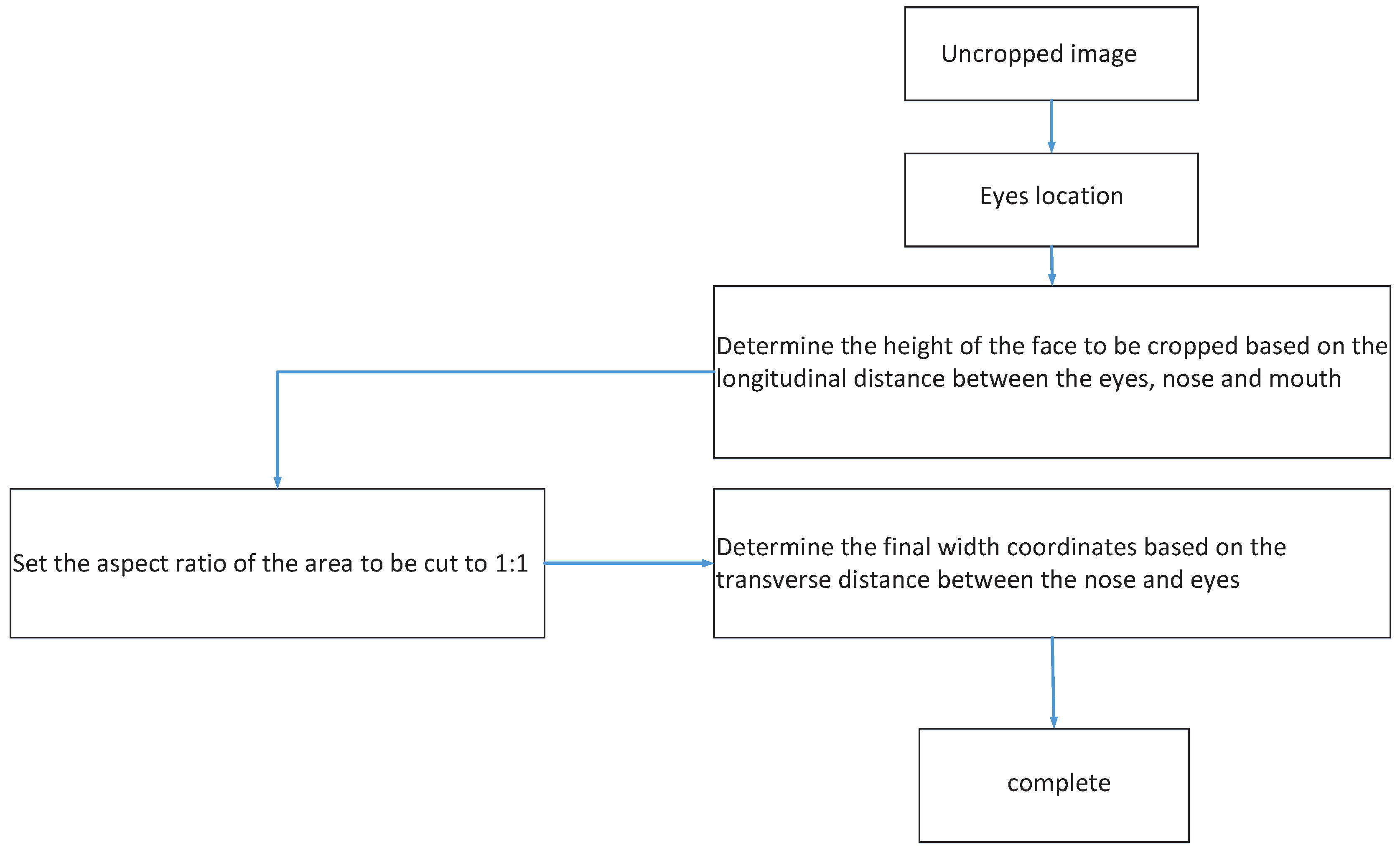

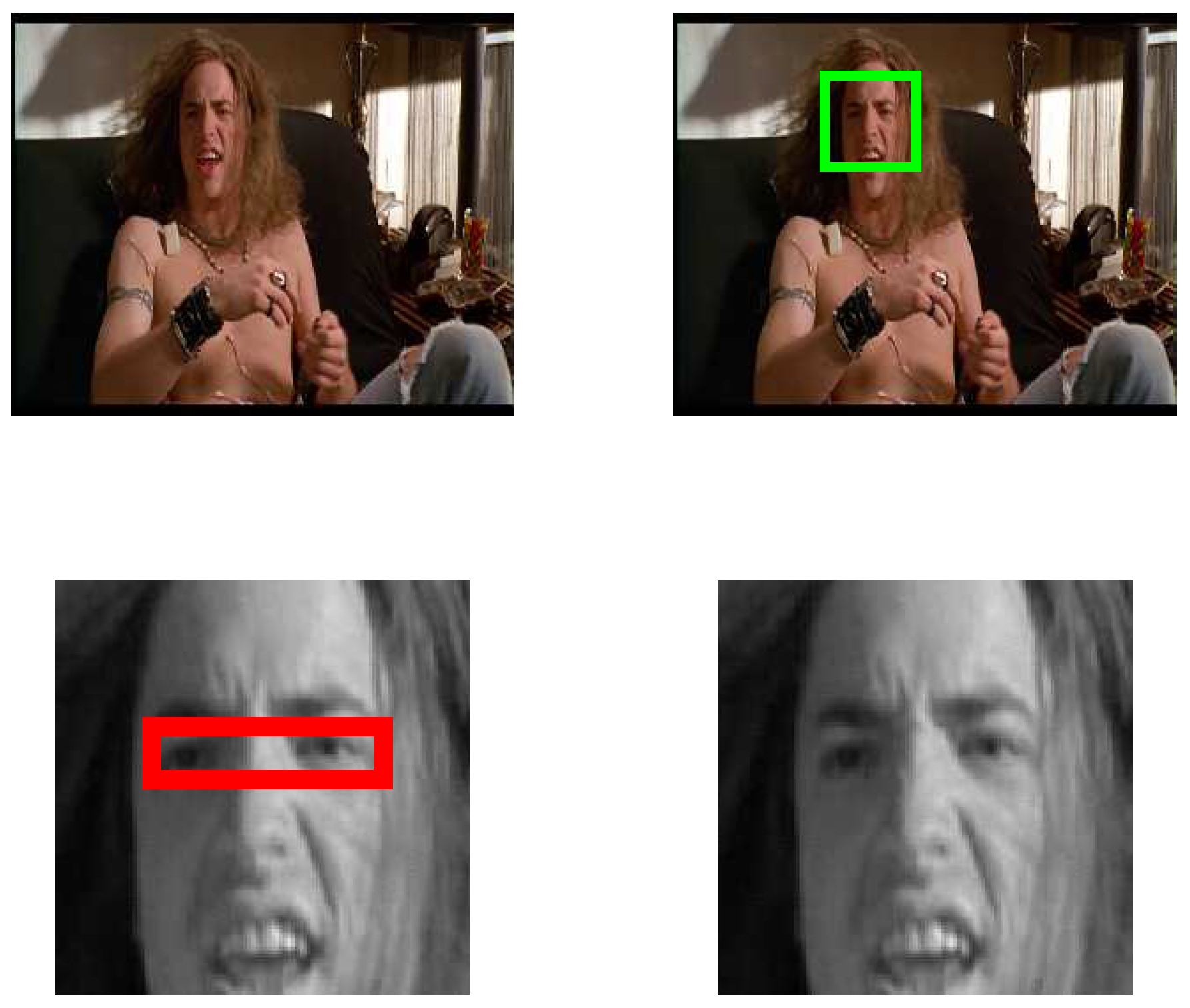

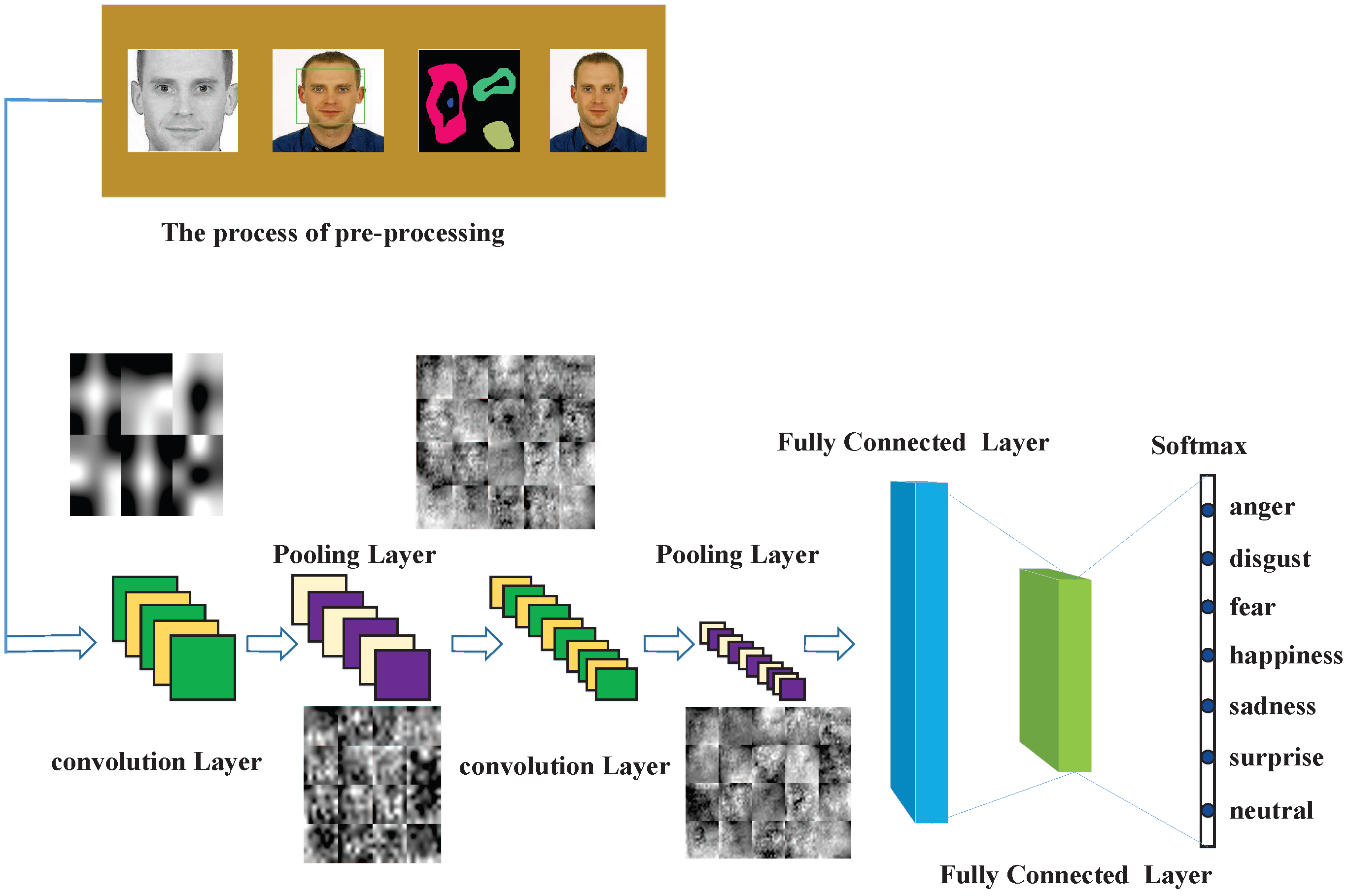

3.1. Data Pre-Processing

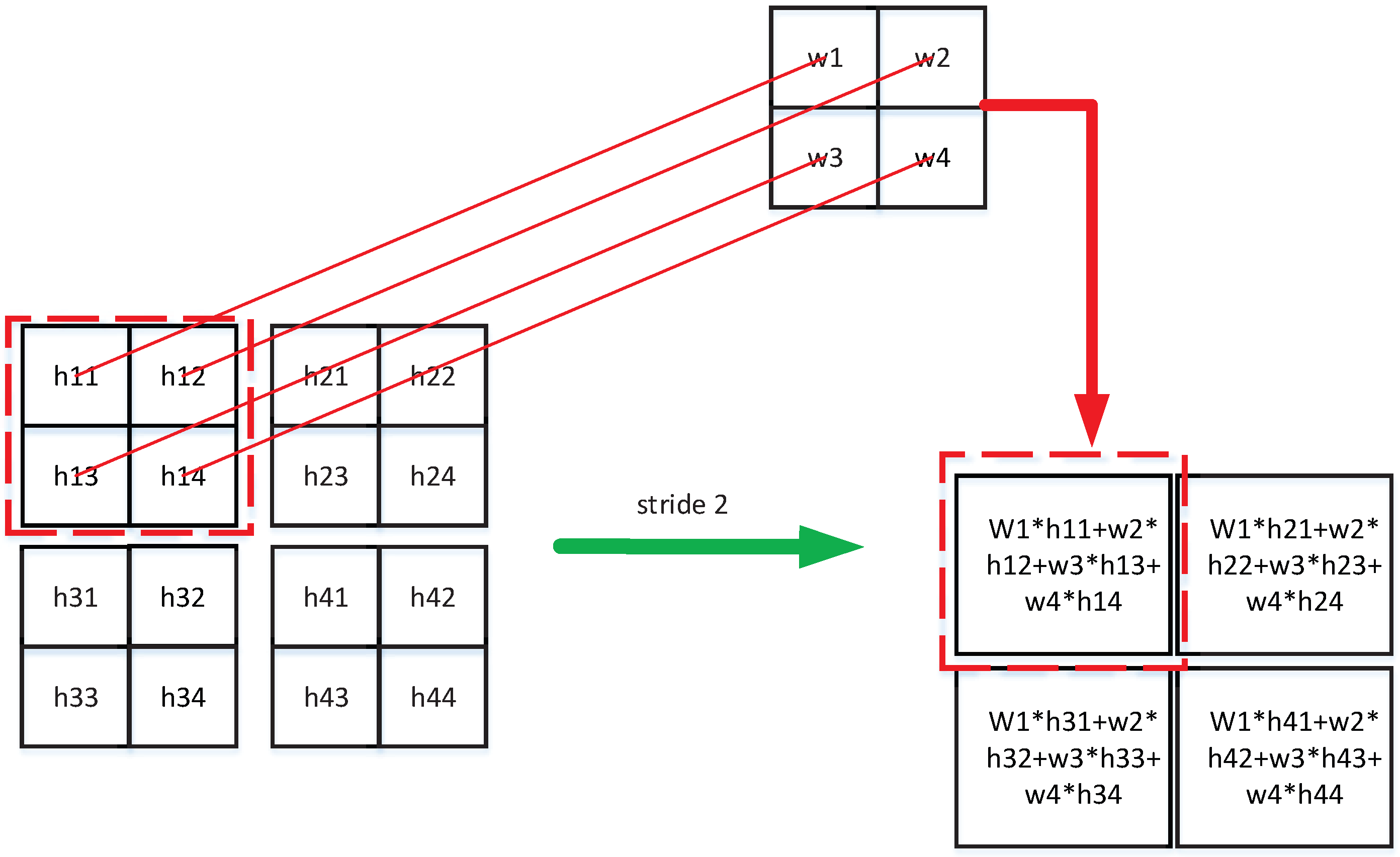

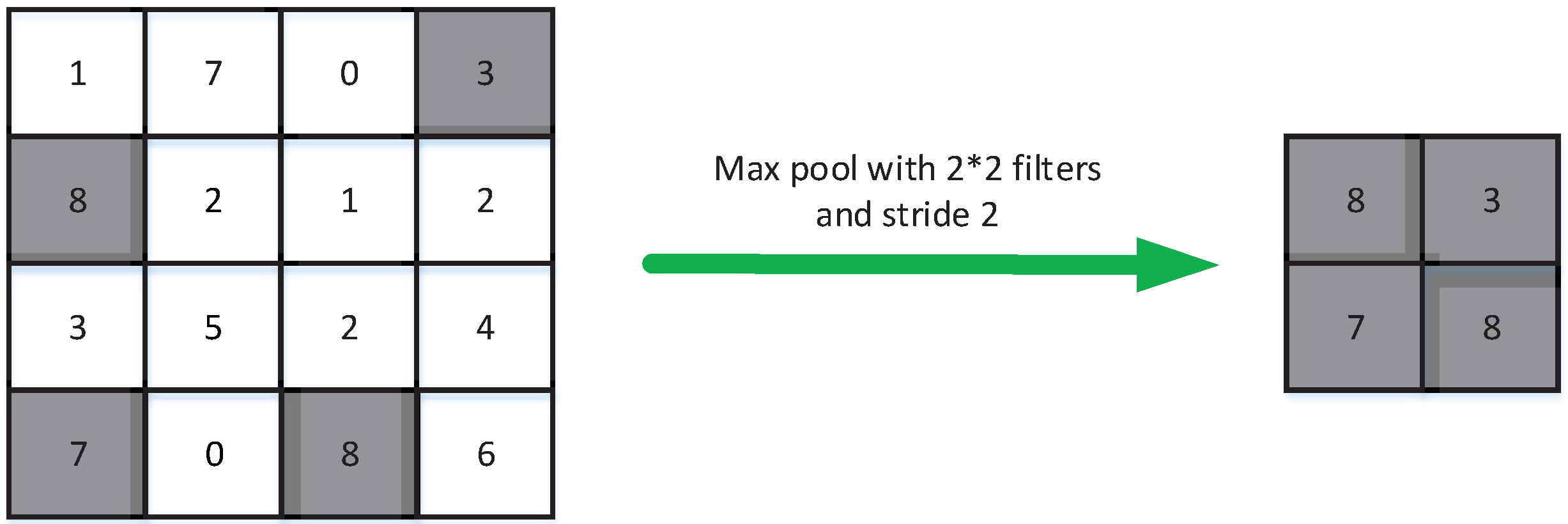

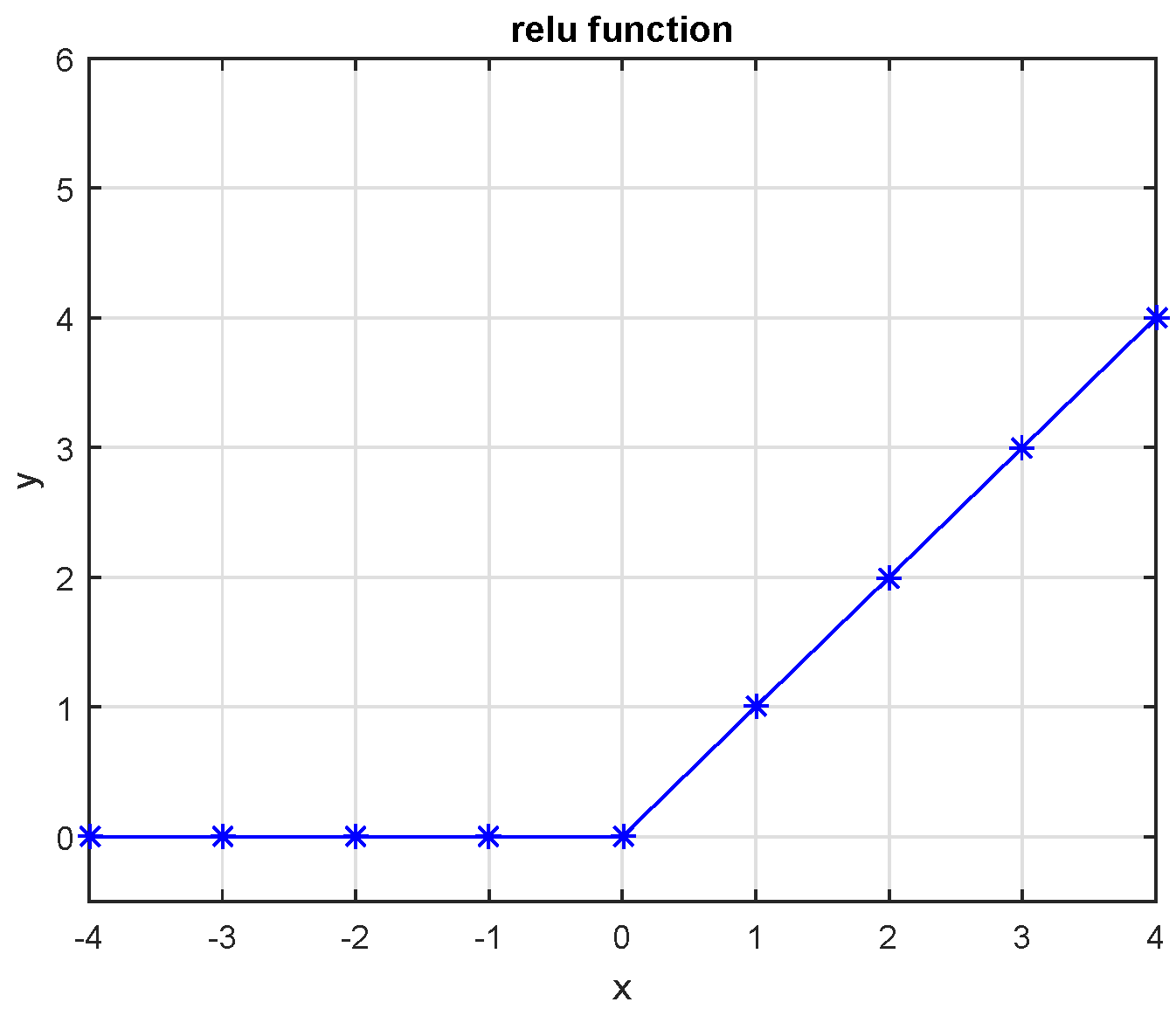

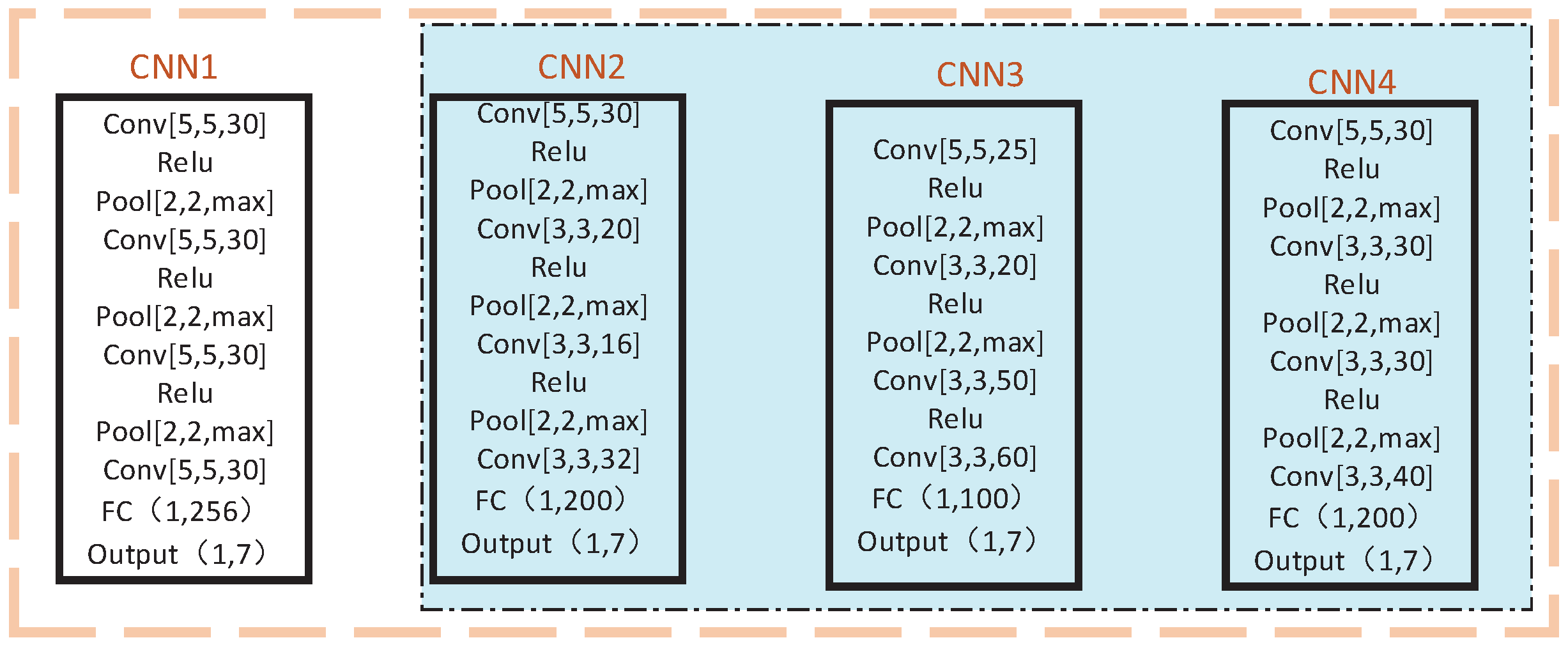

3.2. Convolutional Neural Networks

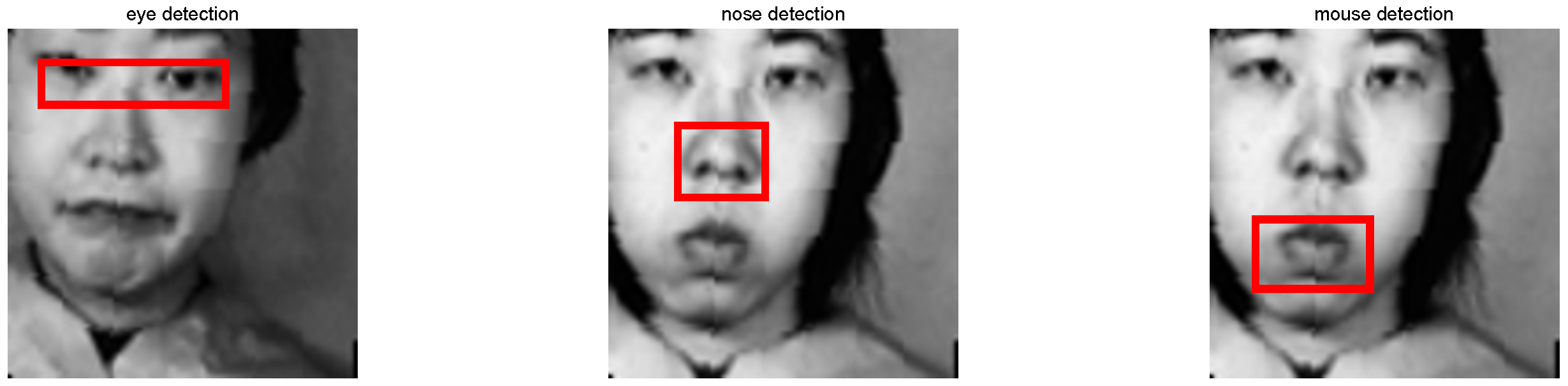

3.3. The Acquisition of Some Important Components of the Face

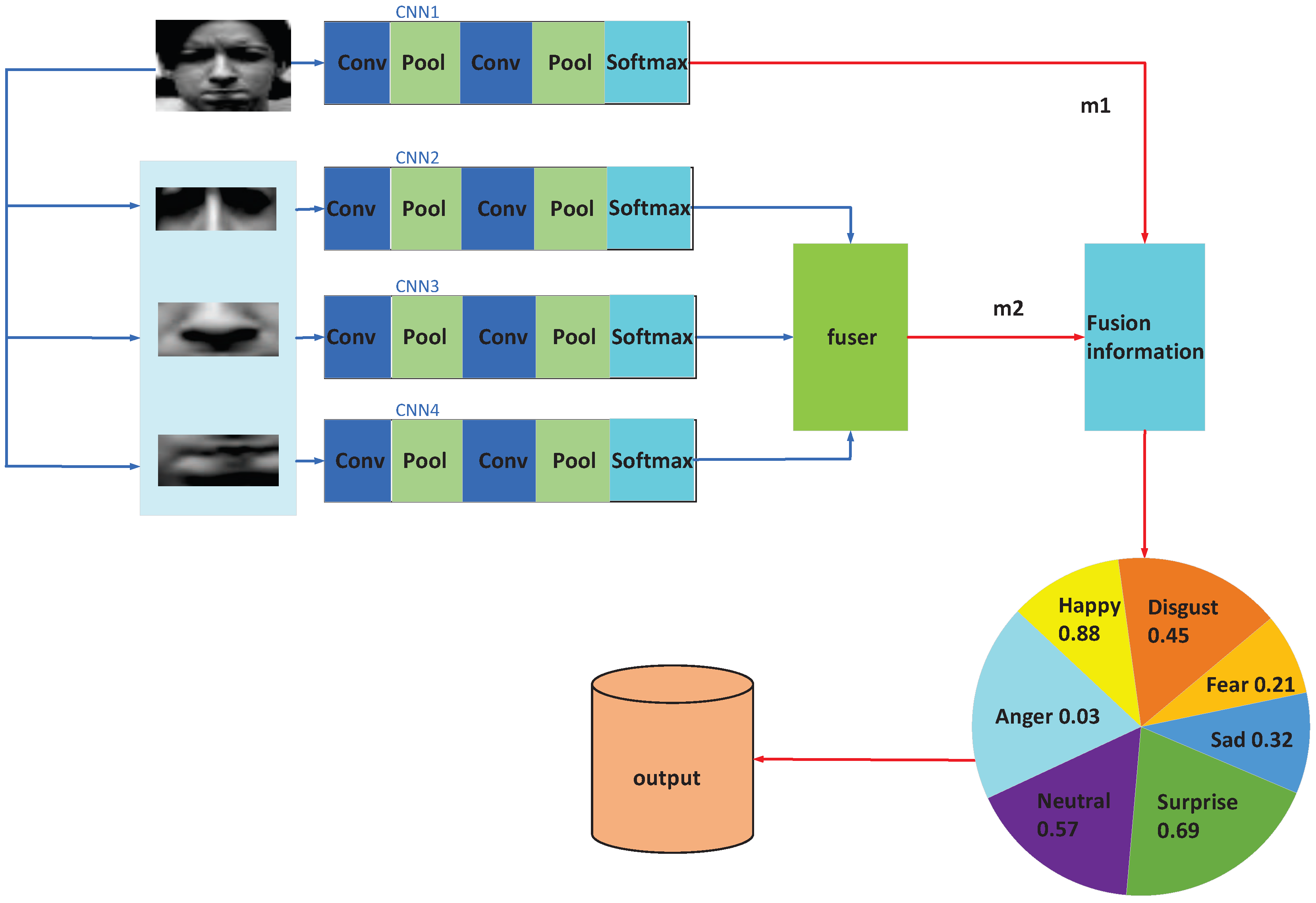

3.4. New Structure

4. Experiments

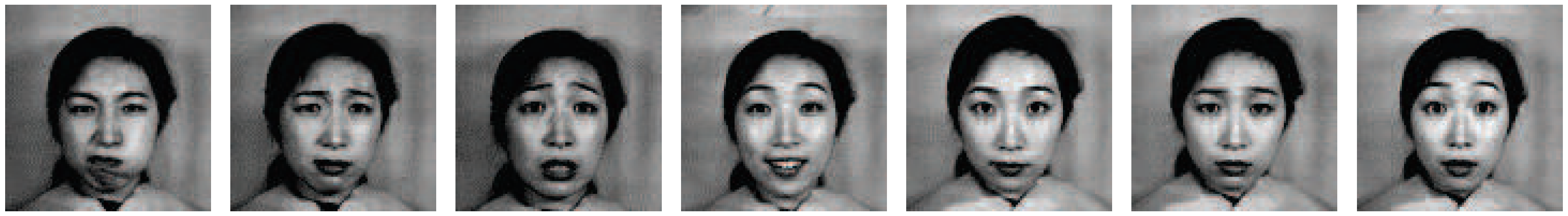

4.1. Database

4.2. Data Augmentation

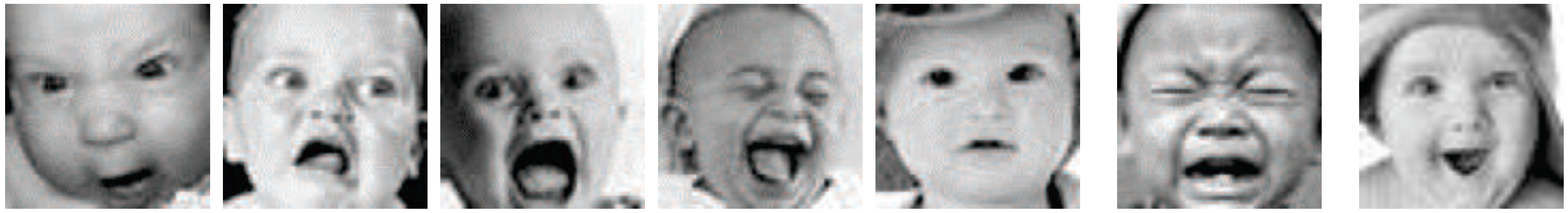

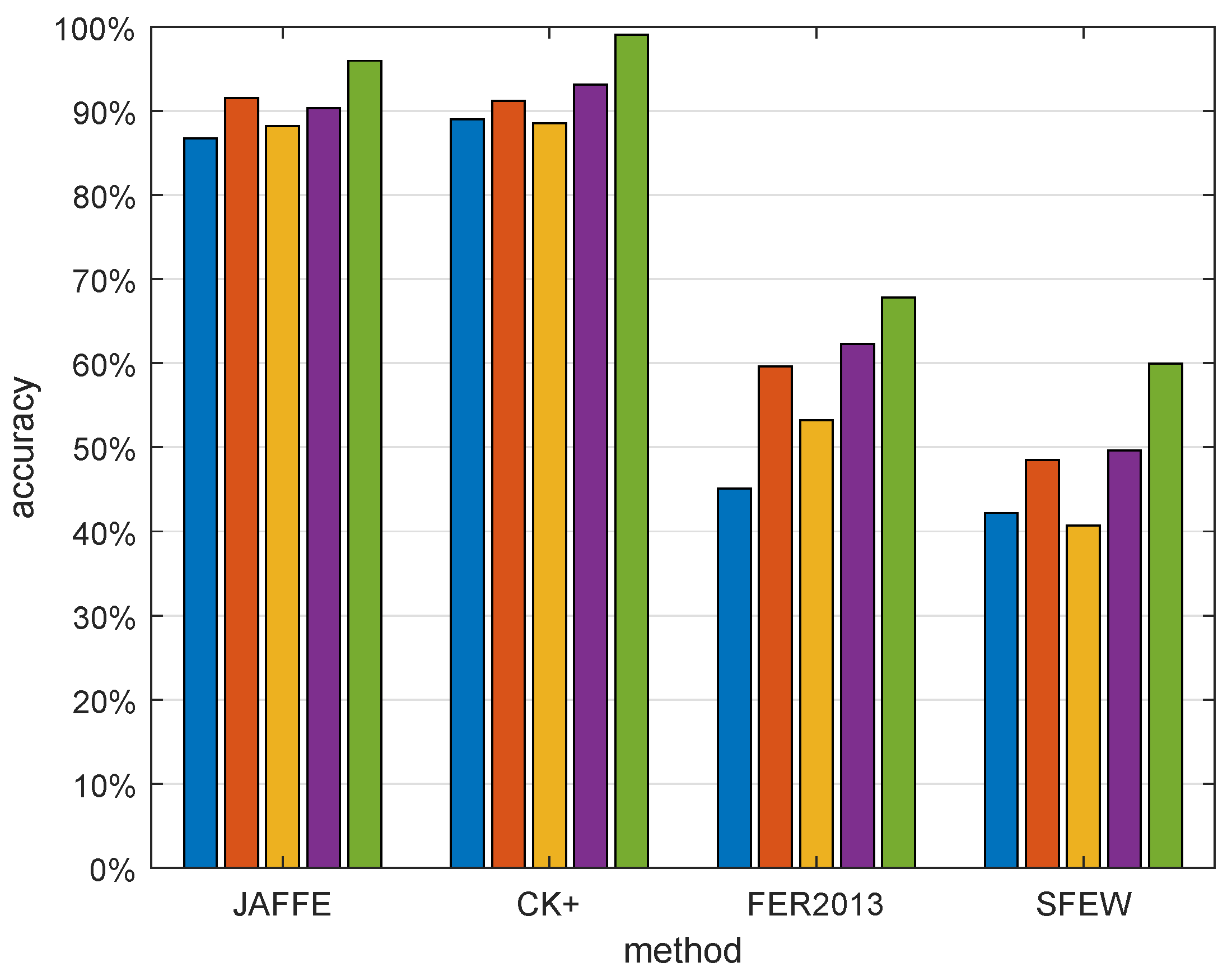

4.3. Results

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Mehrabian, A. Communication without words. Psychol. Today 1968, 2, 193–200.

2. Darwin, C.; Ekman, P. Expression of the emotions in man and animals. Portable Darwin 2003, 123, 146.

3. Ekman, P.; Friesen, W.V. Constants across cultures in the face and emotion. J. Pers. Soc. Psychol. 1971, 17, 124–129.

4. Alshamsi, H.; Meng, H.; Li, M. Real time facial expression recognition app development on mobile phones. In Proceedings of the International Conference on Natural Computation, Changsha, China, 13–15 August 2016.

5. Jatmiko, W.; Nulad, W.P.; Matul, I.E.; Setiawan, I.M.A.; Mursanto, P. Heart beat classification using wavelet feature based on neural network. WSEAS Trans. Syst. 2011, 10, 17–26.

6. Kumar, B.V.; Ramakrishnan, A.G. Machine Recognition of Printed Kannada Text. Lect. Notes Comput. Sci. 2002, 2423, 37–48.

7. Ying, Z.; Fang, X. Combining LBP and Adaboost for facial expression recognition. In Proceedings of the International Conference on Signal Processing, Beijing, China, 26–29 October 2008.

8. Gu, W.; Xiang, C.; Venkatesh, Y.V.; Huang, D.; Lin, H. Facial expression recognition using radial encoding of local Gabor features and classifier synthesis. Pattern Recognit. 2012, 45, 80–91.

9. Ming, Y.U.; Quan-Sheng, H.U.; Yan, G.; Xue, C.H.; Yang, Y.U. Facial expression recognition based on LGBP features and sparse representation. Comput. Eng. Des. 2013, 34, 1787–1791.

10. Berretti, S.; Amor, B.B.; Daoudi, M.; Bimbo, A.D. 3D facial expression recognition using SIFT descriptors of automatically detected keypoints. Vis. Comput. 2011, 27, 1021.

11. Wang, X.; Chao, J.; Wei, L.; Min, H.; Xu, L.; Ren, F. Feature fusion of HOG and WLD for facial expression recognition. In Proceedings of the IEEE/SICE International Symposium on System Integration, Kobe, Japan, 15–17 December 2013.

12. Yuan, L.; Wu, C.M.; Yi, Z. Facial expression recognition based on fusion feature of PCA and LBP with SVM. Opt. Int. J. Light Electron Opt. 2013, 124, 2767–2770.

13. Pu, X.; Ke, F.; Xiong, C.; Ji, L.; Zhou, Z. Facial expression recognition from image sequences using twofold random forest classifier. Neurocomputing 2015, 168, 1173–1180.

14. Lin, Y.; Song, M.; Quynh, D.T.P.; He, Y.; Chen, C. Sparse Coding for Flexible, Robust 3D Facial-Expression Synthesis. IEEE Comput. Graph. Appl. 2012, 32, 76–88.

15. Meng, Z.; Ping, L.; Jie, C.; Han, S.; Yan, T. Identity-Aware Convolutional Neural Network for Facial Expression Recognition. In Proceedings of the IEEE International Conference on Automatic Face & Gesture Recognition, Washington, DC, USA, 30 May–3 June 2017.

16. Liu, Y.; Li, Y.; Ma, X.; Song, R. Facial Expression Recognition with Fusion Features Extracted from Salient Facial Areas. Sensors 2017, 17, 712.

17. Lv, Y.; Feng, Z.; Chao, X. Facial expression recognition via deep learning. In Proceedings of the International Conference on Smart Computing, Hong Kong, China, 3–5 November 2014; pp. 303–308.

18. Sun, W.; Zhao, H.; Zhong, J. A Complementary Facial Representation Extracting Method based on Deep Learning. Neurocomputing 2018, 306, 246–259.

19. Li, H.; Jian, S.; Xu, Z.; Chen, L. Multimodal 2D+3D Facial Expression Recognition With Deep Fusion Convolutional Neural Network. IEEE Trans. Multimed. 2017, 19, 2816–2831.

20. Shan, C.; Gong, S.; McOwan, P.W. Facial expression recognition based on Local Binary Patterns: A comprehensive study. Image Vis. Comput. 2009, 27, 803–816.

21. Cohen, I.; Sebe, N.; Garg, A.; Chen, L.S.; Huang, T.S. Facial expression recognition from video sequences: Temporal and static modeling. Comput. Vis. Image Underst. 2003, 91, 160–187.

22. Happy, S.L.; George, A.; Routray, A. A real time facial expression classification system using Local Binary Patterns. In Proceedings of the International Conference on Intelligent Human Computer Interaction, Kharagpur, India, 27–29 December 2012.

23. Zhang, B.; Liu, G.; Xie, G. Facial expression recognition using LBP and LPQ based on Gabor wavelet transform. In Proceedings of the IEEE International Conference on Computer & Communications, Chengdu, China, 16–17 October 2016.

24. Li, L.; Ying, Z.; Yang, T. Facial expression recognition by fusion of Gabor texture features and local phase quantization. In Proceedings of the International Conference on Signal Processing, Hangzhou, China, 19–23 October 2014.

25. Shin, M.; Kim, M.; Kwon, D.S. Baseline CNN structure analysis for facial expression recognition. In Proceedings of the IEEE International Symposium on Robot & Human Interactive Communication, New York, NY, USA, 26–31 August 2016.

26. Yu, Z.; Zhang, C. Image based Static Facial Expression Recognition with Multiple Deep Network Learning. In Proceedings of the ACM on International Conference on Multimodal Interaction, Seattle, WA, USA, 9–13 November 2015.

27. Jung, H.; Lee, S.; Yim, J.; Park, S.; Kim, J. Joint Fine-Tuning in Deep Neural Networks for Facial Expression Recognition. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 13–16 December 2015.

28. Abdulrahman, M.; Eleyan, A. Facial expression recognition using Support Vector Machines. In Proceedings of the Signal Processing & Communications Applications Conference, Malatya, Turkey, 16–19 May 2015.

29. Lucey, P.; Cohn, J.F.; Kanade, T.; Saragih, J. The Extended Cohn-Kanade Dataset (CK+): A complete dataset for action unit and emotion-specified expression. In Proceedings of the Computer Vision & Pattern Recognition Workshops, San Francisco, CA, USA, 13–18 June 2010.

30. Mollahosseini, A.; Chan, D.; Mahoor, M.H. Going deeper in facial expression recognition using deep neural networks. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision, Lake Placid, NY, USA, 7–10 March 2015.

31. Dhall, A.; Goecke, R.; Lucey, S.; Gedeon, T. Static facial expression analysis in tough conditions: Data, evaluation protocol and benchmark. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Barcelona, Spain, 6–13 November 2011.

32. Liu, M.; Li, S.; Shan, S.; Wang, R.; Chen, X. Deeply Learning Deformable Facial Action Parts Model for Dynamic Expression Analysis. In Proceedings of the Asian Conference on Computer Vision, Singapore, 1–5 November 2014.

33. Chen, J.; Chen, Z.; Chi, Z.; Hong, F. Facial Expression Recognition in Video with Multiple Feature Fusion. IEEE Trans. Affect. Comput. 2018, 9, 38–50.

34. Wen, G.; Zhi, H.; Li, H.; Li, D.; Jiang, L.; Xun, E. Ensemble of Deep Neural Networks with Probability-Based Fusion for Facial Expression Recognition. Cognit. Comput. 2017, 9, 1–14.

35. Li, Y.; Zeng, J.; Shan, S.; Chen, X. Occlusion aware facial expression recognition using CNN with attention mechanism. IEEE Trans. Image Process. 2018, 28, 2439–2450.

36. Liu, X.; Kumar, B.V.K.V.; You, J.; Ping, J. Adaptive Deep Metric Learning for Identity-Aware Facial Expression Recognition. In Proceedings of the IEEE Conference on Computer Vision & Pattern Recognition Workshops, Honolulu, HI, USA, 21–26 July 2017.

| CK+ Expression Label | Number | JAFFE Expression Label | Number |
|---|---|---|---|
| anger | 5941 | anger | 4840 |
| contempt | 2970 | disgust | 4840 |
| disgust | 9735 | fear | 4842 |
| fear | 4125 | happy | 4842 |
| happy | 12,420 | neutral | 4840 |
| sadness | 3696 | sad | 4841 |
| surprise | 14,619 | surprise | 4840 |

| FER2013 Expression Label | Number | SFEW Expression Label | Number |
|---|---|---|---|
| anger | 4486 | anger | 244 |
| contempt | 491 | disgust | 73 |
| disgust | 4625 | fear | 123 |
| fear | 8094 | happy | 252 |
| happy | 5591 | neutral | 226 |
| sadness | 5424 | sad | 228 |
| surprise | 3587 | surprise | 152 |
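The per-class counts listed above differ considerably in how evenly they are spread across labels. As a rough illustration only, the following Python sketch recomputes each database's total and its largest-to-smallest class ratio from the numbers exactly as printed in the tables. The dictionary layout and the helper names `imbalance_ratio` and `summarize` are assumptions made for this example and are not taken from the paper.

```python
# Per-class image counts copied from the tables above (labels as printed there).
COUNTS = {
    "CK+": {"anger": 5941, "contempt": 2970, "disgust": 9735, "fear": 4125,
            "happy": 12420, "sadness": 3696, "surprise": 14619},
    "JAFFE": {"anger": 4840, "disgust": 4840, "fear": 4842, "happy": 4842,
              "neutral": 4840, "sad": 4841, "surprise": 4840},
    "FER2013": {"anger": 4486, "contempt": 491, "disgust": 4625, "fear": 8094,
                "happy": 5591, "sadness": 5424, "surprise": 3587},
    "SFEW": {"anger": 244, "disgust": 73, "fear": 123, "happy": 252,
             "neutral": 226, "sad": 228, "surprise": 152},
}


def imbalance_ratio(class_counts):
    """Hypothetical helper: largest class size divided by smallest class size."""
    sizes = list(class_counts.values())
    return max(sizes) / min(sizes)


def summarize(counts):
    """Map each database to (total images, imbalance ratio rounded to 2 places)."""
    return {
        name: (sum(per_class.values()), round(imbalance_ratio(per_class), 2))
        for name, per_class in counts.items()
    }


if __name__ == "__main__":
    for name, (total, ratio) in summarize(COUNTS).items():
        print(f"{name}: total={total}, max/min ratio={ratio}")
```

On the listed numbers, JAFFE is almost perfectly balanced, while the FER2013 column shows the widest gap between its largest and smallest classes.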

| Database | Author | Method | Accuracy (%) |
|---|---|---|---|
| CK+ | Liu [32] | 3DCNN | 85.9 |
| CK+ | Jung [27] | DTNN | 91.44 |
| CK+ | This paper | new method | 99.07 |
| JAFFE | Chen [33] | ECNN | 94.3 |
| JAFFE | Wen [34] | Probability-Based | 50.7 |
| JAFFE | This paper | new method | 95.95 |
| FER2013 | Chen [33] | ECNN | 69.96 |
| FER2013 | This paper | new method | 67.7 |
| SFEW | Li [35] | attention mechanism | 53 |
| SFEW | Liu [36] | Adaptive Deep Metric | 54 |
| SFEW | This paper | new method | 59.97 |
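To make the comparison in the results table easier to read, the short Python sketch below computes, for each database, the gap in percentage points between the "This paper" row and the best of the other listed methods. It uses only the accuracy values printed in the table; the data structure and the function name `margin_over_best_baseline` are assumptions introduced for this illustration.

```python
# Accuracy values (%) copied from the results table above.
RESULTS = {
    "CK+": {"Liu [32]": 85.9, "Jung [27]": 91.44, "This paper": 99.07},
    "JAFFE": {"Chen [33]": 94.3, "Wen [34]": 50.7, "This paper": 95.95},
    "FER2013": {"Chen [33]": 69.96, "This paper": 67.7},
    "SFEW": {"Li [35]": 53.0, "Liu [36]": 54.0, "This paper": 59.97},
}


def margin_over_best_baseline(rows, ours="This paper"):
    """Return (best baseline name, our accuracy minus its accuracy, in points)."""
    baselines = {name: acc for name, acc in rows.items() if name != ours}
    best = max(baselines, key=baselines.get)
    return best, round(rows[ours] - baselines[best], 2)


if __name__ == "__main__":
    for database, rows in RESULTS.items():
        best, margin = margin_over_best_baseline(rows)
        print(f"{database}: {margin:+.2f} points relative to {best}")
```

On these figures the proposed method is ahead of the listed baselines on CK+, JAFFE, and SFEW, and 2.26 points behind ECNN [33] on FER2013.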

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Wang, Y.; Li, Y.; Song, Y.; Rong, X. Facial Expression Recognition Based on Auxiliary Models. Algorithms 2019, 12, 227. https://doi.org/10.3390/a12110227
