This section presents our Byzantine fault tolerant Generalized Paxos Protocol (or BGP, for short) (code for proposer in Algorithm 6). In BGP, the number of acceptor processes N is a function of the maximum number of tolerated Byzantine faults f, specifically, N = 3f + 1, and quorums are any set of N − f processes.
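The quorum arithmetic that the rest of the protocol relies on can be checked with a short sketch. It assumes the usual Byzantine sizing of N = 3f + 1 acceptors with quorums of N − f acceptors; the function names are illustrative and are not part of the BGP pseudocode.

```python
def num_acceptors(f: int) -> int:
    """Number of acceptors assumed for tolerating f Byzantine faults (N = 3f + 1)."""
    return 3 * f + 1


def quorum_size(f: int) -> int:
    """Quorum size assumed here: any N - f acceptors."""
    return num_acceptors(f) - f


def min_correct_in_intersection(f: int) -> int:
    """Lower bound on the correct acceptors shared by any two quorums.

    Two quorums of size q out of N overlap in at least 2q - N acceptors,
    of which at most f can be Byzantine.
    """
    n, q = num_acceptors(f), quorum_size(f)
    return (2 * q - n) - f


for f in range(1, 4):
    print(f, num_acceptors(f), quorum_size(f), min_correct_in_intersection(f))
```

Under these assumptions, any two quorums share at least one correct acceptor for every f, which is the intersection property used throughout the agreement protocol.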

5.2. View Change

The goal of the view change subprotocol is to elect a distinguished proposer process, called the leader (code for leader in Algorithm 7), that carries through the agreement protocol (i.e., enables proposed commands to eventually be learned by all the learners). The overall design of this subprotocol is similar to the corresponding part of existing BFT state machine replication protocols [9].

In this subprotocol, the system moves through sequentially numbered views, and the leader for each view is chosen in a rotating fashion as a simple function of the view number. The protocol works continuously by having acceptor processes monitor whether progress is being made on adding commands to the current sequence and, if not, multicast to all acceptors a signed suspicion message for the current view, stating that they suspect the current leader. Then, if enough suspicions are collected, processes can move to the subsequent view. However, the required number of suspicions must be chosen in a way that prevents Byzantine processes from triggering view changes spuriously. To this end, acceptor processes will multicast a view-change message indicating their commitment to starting a new view only after hearing that f + 1 processes suspect the leader to be faulty, which guarantees that at least one of the suspicions comes from a correct process. This message contains the new view number and the signed suspicions, and is signed by the acceptor that sends it. This way, if a process receives a view-change message without previously receiving f + 1 suspicions, it can also multicast a view-change message, after verifying that the suspicions are correctly signed by f + 1 distinct processes. This guarantees that if one correct process receives the f + 1 suspicions and multicasts the view-change message, then all correct processes, upon receiving this message, will be able to validate the suspicions and also multicast the view-change message.
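The suspicion-collection step can be sketched as follows. This is a minimal illustration rather than the paper's Algorithm 8: the round-robin rule in `leader_of`, the `verify` callback standing in for signature checking, and all field names are assumptions introduced here.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


def leader_of(view: int, n: int) -> int:
    """Rotating leader: an assumed round-robin rule over process ids 0..n-1."""
    return view % n


@dataclass
class ViewChangeState:
    n: int                                      # total number of acceptors
    f: int                                      # maximum number of Byzantine acceptors
    verify: Callable[[int, int, bytes], bool]   # (sender, view, signature) -> valid?
    view: int = 0
    suspicions: Dict[int, bytes] = field(default_factory=dict)  # sender -> signature

    def on_suspicion(self, sender: int, view: int, signature: bytes) -> bool:
        """Record a signed suspicion for the current view.

        Returns True once f + 1 distinct senders have suspected the leader,
        i.e., once this acceptor may multicast its own view-change message.
        """
        if view != self.view or not self.verify(sender, view, signature):
            return False
        self.suspicions[sender] = signature     # keyed by sender: duplicates do not count
        return len(self.suspicions) >= self.f + 1


# Hypothetical usage with a toy signature check.
state = ViewChangeState(n=4, f=1, verify=lambda sender, view, sig: sig == b"ok")
print(state.on_suspicion(0, 0, b"ok"), state.on_suspicion(3, 0, b"ok"))
```

Keying the suspicions by sender is what enforces the "distinct processes" requirement: a single Byzantine acceptor repeating its suspicion cannot reach the f + 1 threshold on its own.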

Algorithm 7. Byzantine Generalized Paxos: Leader l. The listing comprises, in order: a handler for messages received from an acceptor a, the trigger_next_ballot() handler (which sends messages to the proposers and to the acceptors), a handler for messages received from a proposer, a second handler for messages received from an acceptor a, and the function phase_2a() (which sends the assembled proposal to the acceptors).

Finally, an acceptor process must wait for view-change messages to start participating in the new view (i.e., update its view number and the corresponding leader process). At this point, the acceptor also assembles the view-change messages, proving that others are committing to the new view, and sends them to the new leader (cf. Algorithm 8). This allows the new leader to start its leadership role in the new view once it validates the signatures contained in a single message.

5.3. Agreement Protocol

The consensus protocol allows learner processes to agree on equivalent sequences of commands (according to the definition of equivalence presented in Section 3). An important conceptual distinction between Fast Paxos [12] and our protocol is that ballots correspond to an extension of the sequence of learned commands of a single ongoing consensus instance, instead of being a separate instance of consensus. Proposers can try to extend the current sequence by either single commands or sequences of commands. We use the term proposal to denote either the command or sequence of commands that was proposed.

Ballots can either be classic or fast. In classic ballots, a leader proposes a single proposal to be appended to the commands learned by the learners. The protocol is then similar to the one used by classic Paxos [1], with a first phase where each acceptor conveys to the leader the sequences that the acceptor has already voted for (so that the leader can resend commands that may not have gathered enough votes), followed by a second phase where the leader instructs and gathers support for appending the new proposal to the current sequence of learned commands. Fast ballots, in turn, allow any proposer to contact all acceptors directly in order to extend the current sequence (in case there are no conflicts between concurrent proposals). However, both types of ballots contain an additional round, called the verification phase, in which acceptors broadcast proofs among each other indicating their committal to a sequence. This additional round comes after the acceptors receive a proposal and before they send their votes to the learners.

Next, we present the protocol for each type of ballot in detail. We start by describing fast ballots since their structure has consequences that influence classic ballots.

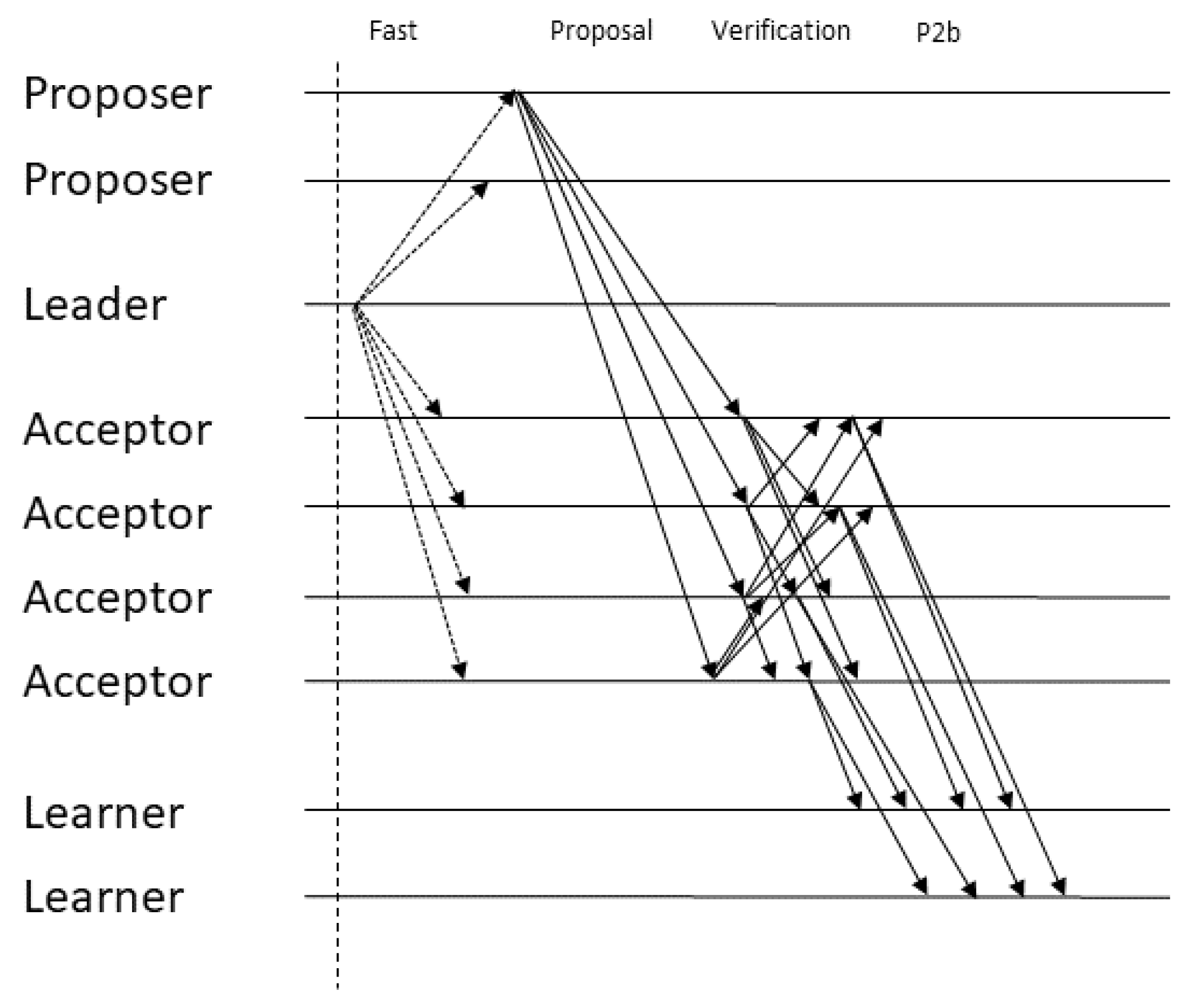

Figure 1 and Figure 2 illustrate the message pattern for fast and classic ballots, respectively. In these illustrations, arrows that are composed of solid lines represent messages that can be sent multiple times per ballot (once per proposal) while arrows composed of dotted lines represent messages that are sent only once per ballot.

Algorithm 8. Byzantine Generalized Paxos: Acceptor a (view change). The listing comprises, in order: the suspect_leader handler and two handlers for messages received from an acceptor i, the second of which ends by sending a message to the leader.

5.3.1. Fast Ballots

Fast ballots leverage the weaker specification of generalized consensus (compared to classic consensus) in terms of command ordering at different replicas, to allow for the faster execution of commands in some cases. The basic idea of fast ballots is that proposers contact the acceptors directly, bypassing the leader, and then the acceptors send their vote for the current sequence to the learners. If a conflict exists and progress is not being made, the protocol reverts to using a classic ballot. This is where generalized consensus allows us to avoid falling back to this slow path, namely in the case where commands that are ordered differently at different acceptors commute (code for acceptors in Algorithm 9).

However, this concurrency introduces safety problems even when a quorum is reached for some sequence. If we keep the original Fast Paxos message pattern [12], it is possible for one sequence s to be learned at one learner l1 while another non-commutative sequence s′ is learned before s at another learner l2. Suppose s obtains a quorum of votes and is learned by l1 but the same votes are delayed indefinitely before reaching l2. In the next classic ballot, when the leader gathers a quorum of phase 1b messages, it must arbitrate an order for the commands that it received from the acceptors, and it does not know the order in which they were learned. This is because, of the N − f messages it received, f may come from acceptors that did not participate in the quorum and another f may come from Byzantine acceptors that lie about their vote. This leaves only one correct acceptor that participated in the quorum, and a single vote is not enough to determine whether the sequence was learned. If the leader picks the wrong sequence, it would be proposing a sequence s′ that is non-commutative to a learned sequence s. Since the learning of s was delayed before reaching l2, l2 could learn s′ and be in a conflicting state with respect to l1, violating consistency.

In order to prevent this, sequences accepted by a quorum of acceptors must be monotonic extensions of previously accepted sequences. Regardless of the order in which a learner learns a set of monotonically increasing sequences, the resulting state will be the same. The additional verification phase is what allows acceptors to prove to the leader that some sequence was accepted by a quorum. By gathering N − f proofs for some sequence, an acceptor can prove that at least f + 1 correct acceptors voted for that sequence. Since there are only another 2f acceptors in the system, no other non-commutative value may have been voted for by a quorum.
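The counting argument above can be written out explicitly, assuming N = 3f + 1 acceptors and quorums of size N − f (the sizes implied by the discussion), and using the fact that a correct acceptor votes for only one of two non-commutative sequences:

```latex
\begin{align*}
\text{correct voters among } N - f \text{ proofs} &\ge (N - f) - f = f + 1,\\
\text{acceptors left to vote for a conflicting sequence} &\le N - (f + 1) = 2f,\\
2f &< 2f + 1 = N - f .
\end{align*}
```

The last line shows that the acceptors remaining outside those f + 1 correct voters are one short of a quorum, so a conflicting sequence cannot also be proven.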

Next, we explain each of the protocol’s steps for fast ballots in greater detail.

Step 1: Proposer to acceptors. To initiate a fast ballot, the leader informs both proposers and acceptors that the proposals may be sent directly to the acceptors. Unlike classic ballots, where the sequence proposed by the leader consists of the commands received from the proposers appended to previously proposed commands, in a fast ballot, proposals can be sent to the acceptors in the form of either a single command or a sequence to be appended to the command history. These proposals are sent directly from the proposers to the acceptors.

Step 2: Acceptors to acceptors. Acceptors append the proposals they receive to the proposals they have previously accepted in the current ballot and broadcast the resulting sequence and the current ballot to the other acceptors, along with a signed tuple of these two values. Intuitively, this broadcast corresponds to a verification phase where acceptors gather proofs that a sequence gathered enough support to be committed. These proofs will be sent to the leader in the subsequent classic ballot in order for it to pick a sequence that preserves consistency. To ensure safety, correct learners must learn non-commutative commands in a total order. When an acceptor gathers N − f proofs for equivalent values, it proceeds to the next phase. That is, sequences do not necessarily have to be equal in order to be learned since commutative commands may be reordered. Recall that a sequence is equivalent to another if it can be transformed into the second one by reordering its elements without changing the order of any pair of non-commutative commands (in the pseudocode, proofs for equivalent sequences are treated as belonging to the same index of the proofs variable, to simplify the presentation). By requiring N − f votes for a sequence of commands, we ensure that, given two sequences where non-commutative commands are differently ordered, only one sequence will receive enough votes even if f Byzantine acceptors vote for both sequences. Outside the set of (up to) f Byzantine acceptors, the remaining 2f + 1 correct acceptors will only vote for a single sequence, which means there are only enough correct processes to commit one of them. As in the non-Byzantine protocol, the fact that proposals are sent as extensions to previous sequences makes the protocol robust against the network reordering of non-commutative commands.
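The notion of equivalence used in this step can be made concrete with a small sketch. It assumes that every command occurs at most once per sequence (for instance, because commands carry unique identifiers) and that the application supplies a `commute` predicate; both the function name and the example commands are hypothetical.

```python
from collections import Counter
from typing import Callable, Hashable, Sequence

Command = Hashable


def equivalent(
    s1: Sequence[Command],
    s2: Sequence[Command],
    commute: Callable[[Command, Command], bool],
) -> bool:
    """Return True if s2 is a reordering of s1 that keeps every pair of
    non-commutative commands in the same relative order.

    Assumes each command occurs at most once per sequence.
    """
    if Counter(s1) != Counter(s2):
        return False                      # different sets of commands
    position = {cmd: i for i, cmd in enumerate(s2)}
    for i, a in enumerate(s1):
        for b in s1[i + 1:]:
            # a precedes b in s1; a non-commutative pair must keep that order in s2.
            if not commute(a, b) and position[a] > position[b]:
                return False
    return True


# Example predicate (an assumption): commands are (operation, key, id) tuples and
# commute when they touch different keys or are both reads.
def commute(a, b):
    return a[1] != b[1] or (a[0] == "read" and b[0] == "read")


w_x, w_y, r_x = ("write", "x", 1), ("write", "y", 2), ("read", "x", 3)
print(equivalent([w_x, w_y, r_x], [w_y, w_x, r_x], commute))  # writes to x and y commute
print(equivalent([w_x, r_x], [r_x, w_x], commute))            # read/write on x do not
```

The quadratic pairwise check is chosen for clarity; it is adequate for short sequences but an implementation handling long histories would likely need something more efficient.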

Algorithm 9. Byzantine Generalized Paxos: Acceptor a (agreement). The listing comprises, in order: a handler for messages received from the leader l (which replies to the leader), a second handler for messages received from the leader, a handler for messages received from an acceptor i (which sends messages to the learners), a third handler for messages received from the leader, a handler for messages received from a proposer, and the functions phase_2b_classic() and phase_2b_fast(), each of which either sends to the learners or sends to the acceptors.

Step 3: Acceptors to learners. Similarly to what happens in classic ballots, the fast ballot equivalent of the phase 2b message, which is sent from acceptors to learners, contains the current ballot number, the command sequence and the proofs gathered in the verification round. One could think that, since acceptors are already gathering proofs that a value will eventually be committed, learners are not required to gather votes and can instead wait for a single phase 2b message and validate the proofs contained in it. However, this is not the case due to the possibility of learners learning sequences without the leader being aware of it. If we allowed the learners to learn after witnessing proofs from just one acceptor, then that acceptor might not be present in the quorum of phase 1b messages. Therefore, the leader would not be aware that some value was proven and learned. The only way to guarantee that at least one correct acceptor will relay the latest proven sequence to the leader is by forcing the learner to require a quorum of N − f phase 2b messages, since only then will one correct acceptor be in the intersection of the two quorums.

Arbitrating an order after a conflict. When, in a fast ballot, non-commutative commands are concurrently proposed, these commands may be incorporated into the sequences of various acceptors in different orders and, therefore, the sequences sent by the acceptors in phase 2b messages will not be equivalent and will not be learned. In this case, the leader subsequently runs a classic ballot and gathers these unlearned sequences in phase 1b. Then, the leader will arbitrate a single serialization for every previously proposed command, which it will then send to the acceptors. Therefore, if non-commutative commands are concurrently proposed in a fast ballot, they will be included in the subsequent classic ballot and the learners will learn them in a total order, thus preserving consistency.

5.3.2. Classic Ballots

Classic ballots work in a way that is very close to the original Paxos protocol [1]. Therefore, throughout our description, we will highlight the points where BGP departs from that original protocol, either due to the Byzantine fault model, or due to behaviors that are particular to our specification of the consensus problem.

In this part of the protocol, the leader continuously collects proposals by assembling all commands that are received from the proposers since the previous ballot in a sequence (this differs from classic Paxos, where it suffices to keep a single proposed value that the leader attempts to reach agreement on). When the next ballot is triggered, the leader starts the first phase by sending phase 1a messages to all acceptors containing just the ballot number. Similarly to classic Paxos, acceptors reply with a phase 1b message to the leader, which reports all sequences of commands they voted for. In classic Paxos, acceptors also promise not to participate in lower-numbered ballots, in order to prevent safety violations [1]. However, in BGP this promise is already implicit, given (1) there is only one leader per view and it is the only process allowed to propose in a classic ballot and (2) acceptors replying to that message must be in the same view as that leader.

As previously mentioned, phase 1b messages contain proofs for each learned sequence. By waiting for a quorum of N − f such messages, the leader is guaranteed that, for any learned sequence s, at least one of the messages will be from a correct acceptor that, due to the quorum intersection property, participated in the verification phase of s. Please note that waiting for N − f phase 1b messages is not what makes the leader sure that a certain sequence was learned in a previous ballot. The leader can be sure that some sequence was learned because each phase 1b message contains N − f cryptographic proofs from acceptors stating that they would vote for that sequence. Since there are only N acceptors in the system, no other non-commutative sequence could have been learned. Even though each phase 1b message relays enough proofs to assure the leader that some sequence was learned, the leader still needs to wait for N − f such messages to be sure that it is aware of every sequence that was previously learned. Please note that, since each command is signed by the proposer (this signature and its check are not explicit in the pseudocode), a Byzantine acceptor cannot relay made-up commands. However, it can omit commands from its phase 1b message, which is why it is necessary for the leader to be sure that at least one correct acceptor in its quorum took part in the verification quorum of any learned sequence.

After gathering a quorum of phase 1b messages, the leader initiates phase 2a where it assembles a proposal and sends it to the acceptors. This proposal sequence must be carefully constructed in order to ensure all of the intended properties. In particular, the proposal cannot contain already learned non-commutative commands in different relative orders than the one in which they were learned, in order to preserve consistency, and it must contain unlearned proposals from both the current and the previous ballots, in order to preserve liveness (this differs from sending a single value with the highest ballot number as in the classic specification). Due to the importance and required detail of the leader’s value picking rule, it will be described next in its own subsection.

The acceptors reply to phase 2a messages by broadcasting their verification messages containing the current ballot, the proposed sequence and proof of their committal to that sequence. After receiving N − f verification messages, an acceptor sends its phase 2b messages to the learners, containing the ballot, the proposal from the leader and the proofs gathered in the verification phase. As is the case in the fast ballot, when a learner receives a phase 2b vote, it validates the proofs contained in it. Waiting for a quorum of messages for a sequence assures the learners that at least one of those messages was sent by a correct acceptor that will relay the sequence to the leader in the next classic ballot (the learning of sequences also differs from the original protocol in the quorum size, due to the fault model, and in that learners in the original protocol wait for a quorum of matching values instead of equivalent sequences, due to the consensus specification).

5.3.3. Leader Value Picking Rule

Phase 2a is crucial for the correct functioning of the protocol because it requires the leader to pick a value that allows new commands to be learned, ensuring progress, while at the same time preserving a total order of non-commutative commands at different learners, ensuring consistency. The value picked by the leader is composed of three pieces: (1) the subsequence that has proven to be accepted by a majority of acceptors in the previous fast ballot, (2) the subsequence that has been proposed in the previous fast ballot but for which a quorum hasn’t been gathered and (3) new proposals sent to the leader in the current classic ballot.

The first part of the sequence will be the largest of the proven sequences sent in the phase 1b messages. The leader can pick such a value deterministically because any two proven sequences are either equivalent or one can be extended to the other. The leader is sure of this because the quorums of any two proven sequences share at least one correct acceptor that voted in both, and votes from correct acceptors are always extensions of previous votes from the same ballot. If there are multiple sequences with the maximum size, then they are equivalent (by the same reasoning) and any of them can be picked.

The second part of the sequence is simply the concatenation of unproven sequences of commands in an arbitrary order. Since these commands are guaranteed to not have been learned at any learner, they can be appended to the leader’s sequence in any order. The guarantee holds because a quorum of N − f phase 2b messages is required for a learner to learn a sequence, and the intersection between the leader’s quorum and the quorum gathered by a learner for any sequence contains at least one correct acceptor. Hence, if a sequence of commands is unproven in all of the gathered phase 1b messages, the leader can be sure that the sequence was not learned.

The third part consists simply of commands sent by proposers to the leader with the intent of being learned at the current ballot. These values can be appended in any order and without any restriction since they’re being proposed for the first time.
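The three-part rule can be sketched as follows. This is an illustration under simplifying assumptions, not the paper's phase_2a() function: commands are plain strings, the proven and unproven sequences have already been extracted from validated phase 1b messages, and duplicates are dropped so that a command appears only once in the result (the deduplication is a choice made here, not something the text prescribes).

```python
from typing import Iterable, List, Sequence

Command = str


def pick_value(
    proven: Iterable[Sequence[Command]],
    unproven: Iterable[Sequence[Command]],
    new_proposals: Iterable[Command],
) -> List[Command]:
    """Assemble the leader's phase 2a value from the three parts described above."""
    # Part 1: the largest proven sequence (ties are equivalent, so any will do).
    value: List[Command] = list(max(proven, key=len, default=()))
    seen = set(value)
    # Part 2: commands from unproven sequences, in arbitrary order.
    # Part 3: fresh proposals received by the leader in the current ballot.
    pending = [c for seq in unproven for c in seq] + list(new_proposals)
    for cmd in pending:
        if cmd not in seen:          # skip commands already in the value
            seen.add(cmd)
            value.append(cmd)
    return value


print(pick_value([["a"], ["a", "b"]], [["b", "c"], ["d"]], ["e", "c"]))
```

Because the proven prefix is placed first and never reordered, the result extends every proven sequence the leader heard about, which is the property the acceptors check before voting.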

5.3.4. Byzantine Leader

The correctness of the protocol is heavily dependent on the guarantee that the sequence accepted by a quorum of acceptors is an extension of previous proven sequences. Otherwise, if the network rearranges phase 2b messages such that they’re seen by different learners (cf. Algorithm 10) in different orders, they will result in a state divergence. If, however, every vote is a prefix of all subsequent votes then, regardless of the order in which the sequences are learned, the final state will be the same.
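The order-independence claim can be illustrated with a small sketch. It is a simplification: sequences are compared with plain equality rather than the commutativity-aware equivalence, and the `learn` function is a hypothetical stand-in for the learner's update rule.

```python
from itertools import permutations
from typing import List, Sequence


def learn(current: List[str], incoming: Sequence[str]) -> List[str]:
    """Adopt the incoming sequence only if it extends what is already learned."""
    if len(incoming) > len(current) and list(incoming[: len(current)]) == current:
        return list(incoming)
    return current


# Every vote is a prefix of all subsequent votes.
votes = [["a"], ["a", "b"], ["a", "b", "c"]]

final_states = set()
for order in permutations(votes):       # every possible network delivery order
    state: List[str] = []
    for seq in order:
        state = learn(state, seq)
    final_states.add(tuple(state))

print(final_states)
```

All six delivery orders end in the same state, since a late-arriving shorter vote is a prefix of what has already been learned and is ignored.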

Algorithm 10. Byzantine Generalized Paxos: Learner l. The listing comprises two handlers for messages received from an acceptor a.

This state equivalence between learners is ensured by the correct execution of the protocol since every vote in a fast ballot is equal to the previous vote with a sequence appended at the end (Algorithm 9, lines 43–46) and every vote in a classic ballot is equal to all the learned votes concatenated with unlearned votes and new proposals (Algorithm 7, lines 42–45), which means that new votes will be extensions of previous proven sequences. However, this raises the question of how the protocol fares when Byzantine faults occur. In particular, the worst case scenario occurs when both f acceptors and the leader are Byzantine (remember that a process can have multiple roles, such as leader and acceptor). In this scenario, the leader can purposely send phase 2a messages for a sequence that is not prefixed by the previously accepted values. Coupled with an asynchronous network, this malicious message can be delivered before the correct votes of the previous ballot, resulting in different learners learning sequences that may not be extensible to equivalent sequences.

To prevent this scenario, the acceptors must ensure that the proposals they receive from the leader are prefixed by the values they have previously voted for. Since an acceptor votes for its sequence after receiving verification votes for an equivalent sequence and stores it locally, the acceptor can verify that the stored sequence is a prefix of the leader’s proposed value. A practical implementation of this condition is simply to verify that the subsequence of the proposed value that starts at index 0 and has the same length as the acceptor’s stored sequence is equivalent to the acceptor’s sequence.
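The prefix check can be sketched as follows. The function and parameter names are illustrative; the default `equivalent` argument is plain equality, which is stricter than the commutativity-aware equivalence the protocol allows, and a caller would pass its own predicate to relax it.

```python
from typing import Callable, Sequence


def extends_previous_vote(
    proposed: Sequence,
    voted: Sequence,
    equivalent: Callable[[Sequence, Sequence], bool] = lambda a, b: list(a) == list(b),
) -> bool:
    """Check that the leader's proposal starts with the acceptor's last vote."""
    if len(proposed) < len(voted):
        return False                 # a shorter proposal cannot extend the vote
    # Compare the leading len(voted) commands of the proposal with the vote.
    return equivalent(proposed[: len(voted)], voted)


print(extends_previous_vote(["a", "b", "c"], ["a", "b"]))   # proposal extends the vote
print(extends_previous_vote(["b", "a", "c"], ["a", "b"]))   # rejected under plain equality
```

An acceptor that applies this check before voting refuses proposals from a Byzantine leader that drop or reorder previously voted commands.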

5.4. Checkpointing

BGP includes an additional feature that deals with the indefinite accumulation of state at the acceptors and learners. This is of great practical importance since it can be used to prevent the storage of command sequences from depleting the system’s resources. This feature is implemented by a special checkpointing command, proposed by the leader, which causes both acceptors and learners to safely discard previously stored commands. However, acceptors accumulate state continuously because each new proven sequence must contain any previous proven sequence. This ensures that an asynchronous network cannot reorder messages and cause learners to learn in different orders. In order to safely discard state, we must implement a mechanism that allows us to deal with reordered messages that do not contain the entire history of learned commands.

To this end, when a learner learns a sequence that contains a checkpointing command at the end, it discards every command in its sequence except the checkpointing command and sends a message to the acceptors notifying them that it executed the checkpoint for that command. Acceptors stop participating in the protocol after sending phase 2b messages with checkpointing commands and wait for notifications from learners. After gathering a quorum of notifications, the acceptors discard their state, except for the checkpointing command, and resume their participation in the protocol. Please note that since the acceptors also leave the checkpointing command in their sequence of proven commands, every valid subsequent sequence will begin with that command. The purpose of this command is to allow a learner to detect when an incoming message was reordered. The learner can check the first position of an incoming sequence against the first position of its own learned sequence and, if a mismatch is detected, it knows that a pre-checkpoint and a post-checkpoint message have been reordered.

When performing this check, two possible anomalies can occur: either (1) the first position of the incoming sequence contains a checkpointing command and the learner’s sequence does not, in which case the incoming sequence was sent post-checkpoint and the learner is missing a sequence containing the respective checkpoint command; or (2) the first position of the learner’s sequence contains a checkpoint command and the incoming sequence does not, in which case the incoming sequence was assembled pre-checkpoint and the learner has already executed the checkpoint.

In the first case, the learner can simply store the post-checkpoint sequences until it receives the sequence containing the appropriate checkpointing command, at which point it can learn the stored sequences. Please note that the order in which the post-checkpoint sequences are executed is irrelevant since they’re extensions of each other. In the second case, the learner receives sequences sent before the checkpoint sequence that it has already executed. In this scenario, the learner can simply discard these sequences since it knows that it executed a subsequent sequence (i.e., the one containing the checkpoint command) and proven sequences are guaranteed to be extensions of previous proven sequences.
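The two cases can be summarized in a short sketch of the learner's decision. It is deliberately simplified: `CHECKPOINT` is a hypothetical marker for the checkpointing command, and a single checkpoint is assumed; telling successive checkpoints apart would require distinct identifiers, which are not modeled here.

```python
from typing import Sequence

CHECKPOINT = "CHECKPOINT"   # hypothetical marker for a checkpointing command


def starts_with_checkpoint(seq: Sequence[str]) -> bool:
    """True if the first position of the sequence holds the checkpointing command."""
    return len(seq) > 0 and seq[0] == CHECKPOINT


def classify(learned: Sequence[str], incoming: Sequence[str]) -> str:
    """Decide what a learner does with an incoming proven sequence."""
    mine = starts_with_checkpoint(learned)
    theirs = starts_with_checkpoint(incoming)
    if theirs and not mine:
        return "buffer"    # case (1): post-checkpoint sequence arrived early
    if mine and not theirs:
        return "discard"   # case (2): pre-checkpoint sequence arrived late
    return "learn"         # no mismatch: process as usual


print(classify(["a", "b"], [CHECKPOINT, "c"]))
print(classify([CHECKPOINT], ["a", "b"]))
```

Buffered sequences would be learned once the sequence ending in the checkpointing command arrives; since they extend one another, the order in which they are replayed does not matter.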

To simplify the algorithm presentation, this extension to the protocol is not included in the pseudocode description.