Thermal Environment Prediction for Metro Stations Based on an RVFL Neural Network

Abstract

1. Introduction

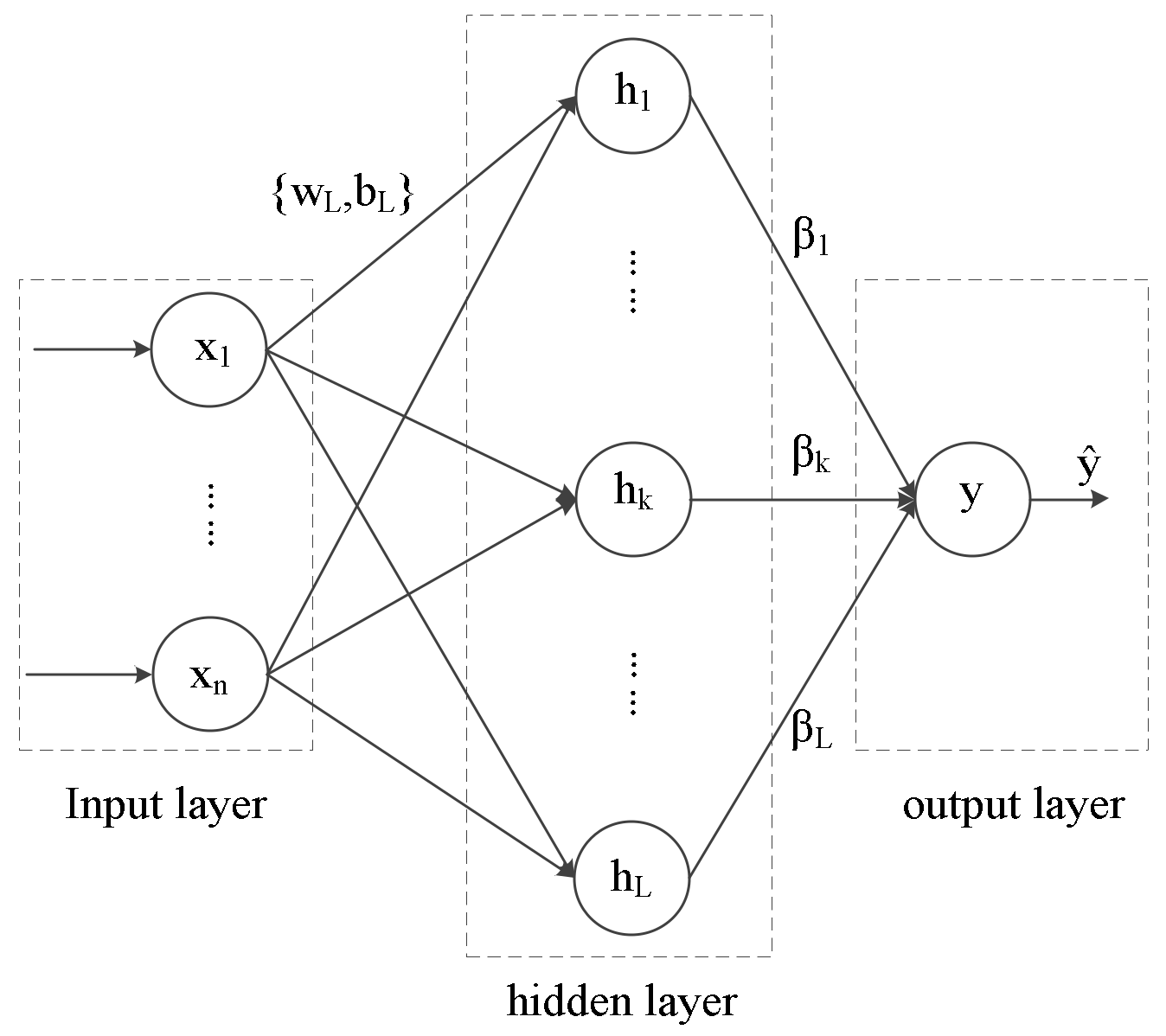

2. Training Principle of the RVFLNN Model
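The training principle of an RVFL network can be summarized with the standard formulation below. This is a sketch of the generic RVFL model, assuming a single hidden layer, direct input-to-output links and ridge-regularized output weights. The symbols ω, b and λ are the quantities varied in the result tables, but whether the authors use exactly this objective is an assumption.

For $N$ training samples $(\mathbf{x}_i, y_i)$ with $\mathbf{x}_i \in \mathbb{R}^d$, each of the $L$ hidden nodes has weights $\boldsymbol{\omega}_j$ and a bias $b_j$ drawn once from $U[-s, s]$ and then kept fixed:

$$h_j(\mathbf{x}) = g\left(\boldsymbol{\omega}_j^{\top}\mathbf{x} + b_j\right), \qquad j = 1, \dots, L.$$

Each sample is mapped to the row $\mathbf{d}(\mathbf{x}_i) = \left[\mathbf{x}_i^{\top},\ h_1(\mathbf{x}_i), \dots, h_L(\mathbf{x}_i)\right]$ (direct links plus hidden outputs), and the rows are stacked into $\mathbf{D} \in \mathbb{R}^{N \times (d+L)}$. Only the output weights $\boldsymbol{\beta}$ are learned, in closed form:

$$\boldsymbol{\beta} = \arg\min_{\boldsymbol{\beta}} \left\|\mathbf{D}\boldsymbol{\beta} - \mathbf{y}\right\|_2^2 + \lambda\left\|\boldsymbol{\beta}\right\|_2^2 = \left(\mathbf{D}^{\top}\mathbf{D} + \lambda\mathbf{I}\right)^{-1}\mathbf{D}^{\top}\mathbf{y},$$

which tends to the Moore–Penrose solution $\boldsymbol{\beta} = \mathbf{D}^{+}\mathbf{y}$ as $\lambda \to 0$. A new input is predicted as $\hat{y} = \mathbf{d}(\mathbf{x})\,\boldsymbol{\beta}$. Because no iterative back-propagation is involved, training reduces to a single regularized linear solve.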

3. Measurement Process

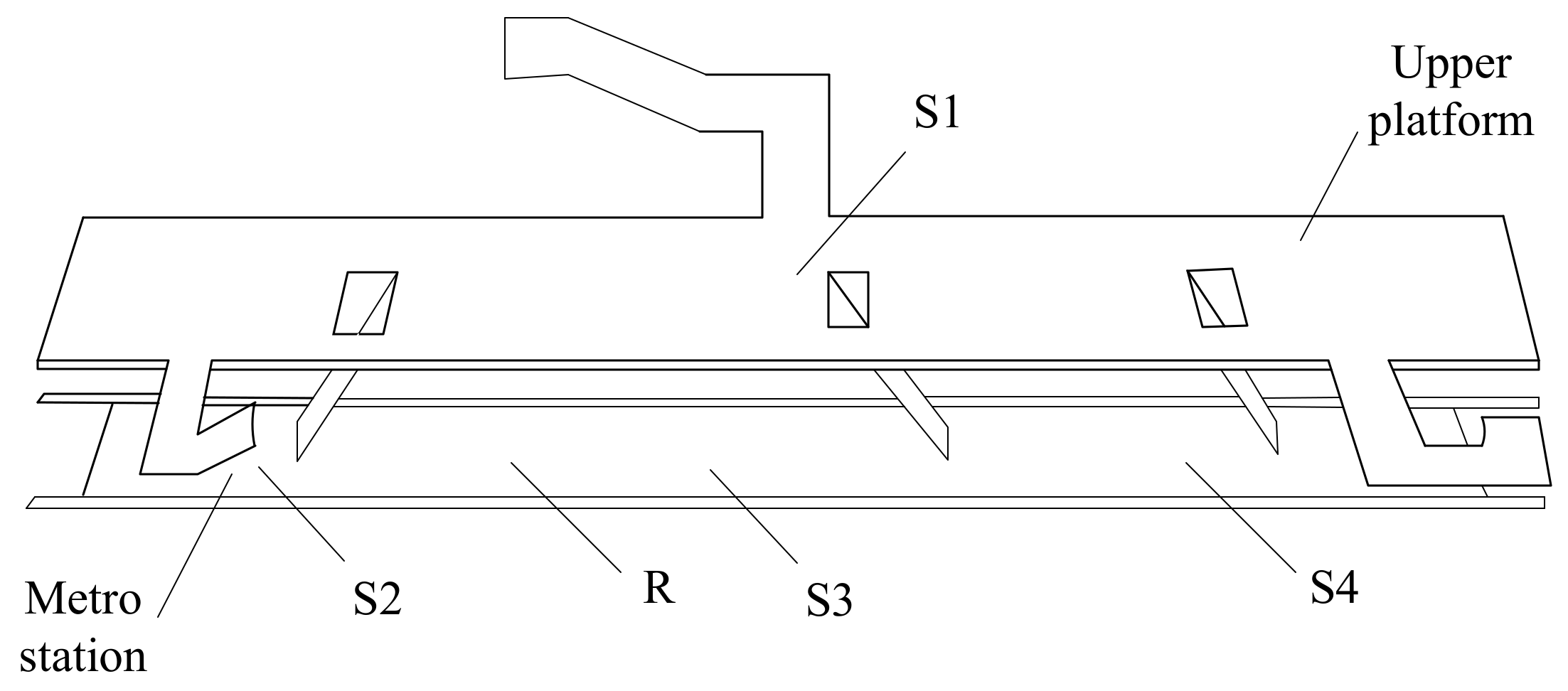

3.1. Test Points in Metro Station

3.2. Data Acquisition and Processing

- (1)

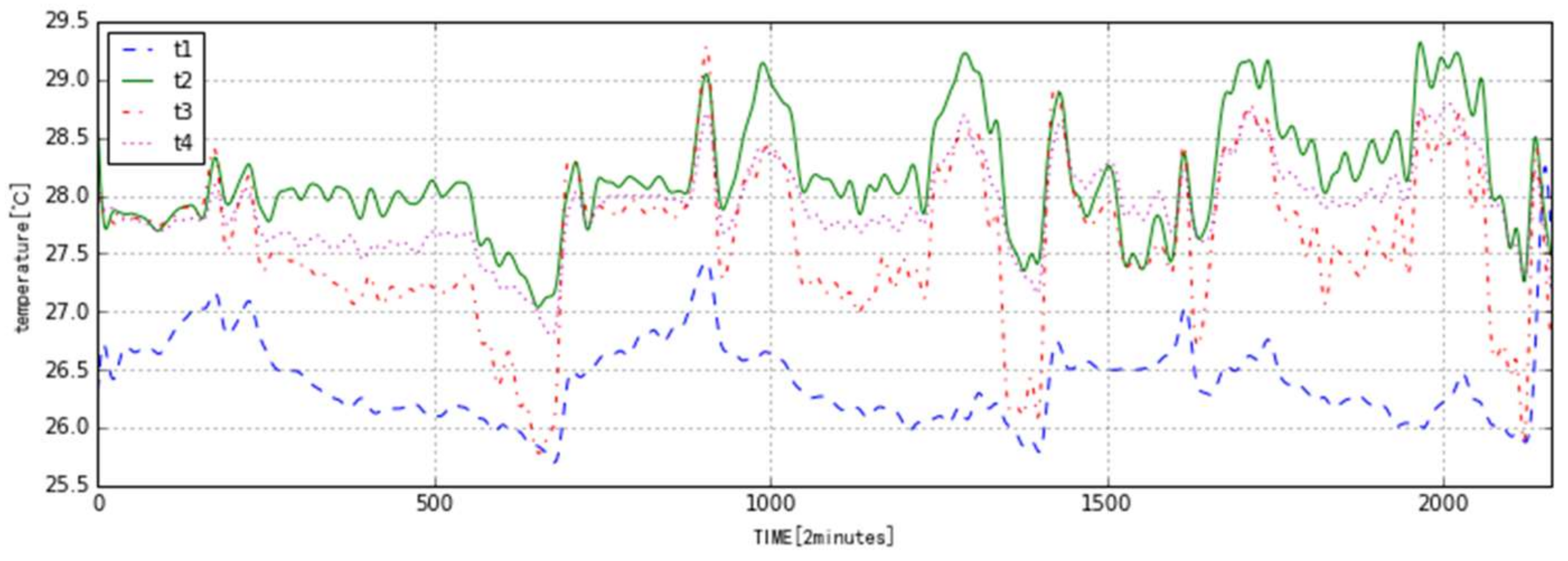

- The temperatures were monitored for 3 days and recorded every 2 min for a total of 2160 data points. T1, T2, T3, and T4 are temperatures at S1, S2, S3, and S4, respectively, as shown in Figure 3. They were processed from the primitive data with a Butterworth filter. The first 720 data points were measured on Sunday; the second and third lots of 720 data points were measured on Monday and Tuesday, respectively.

- (2)

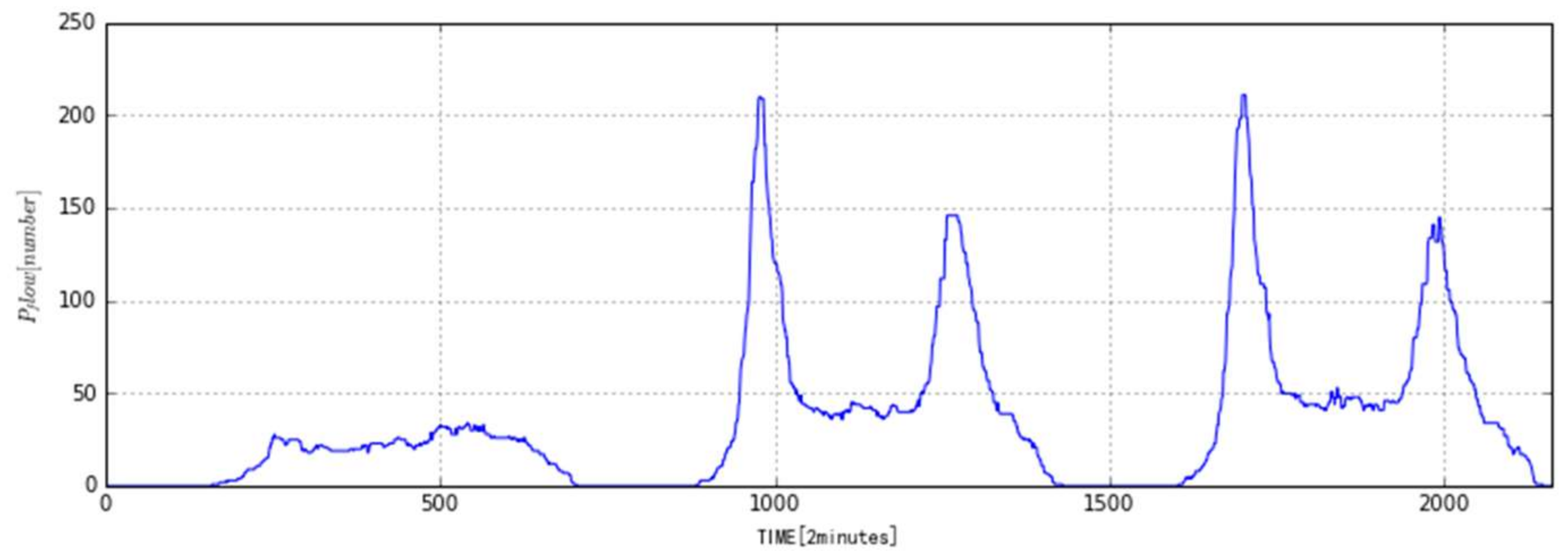

- The passenger flow monitoring point was at Point R. The number of passengers, Pflow, was monitored every two minutes. Figure 4 is the passenger flow curve after processing with median filtering. It is very obvious that the passenger flow data is very different between weekdays and weekends.

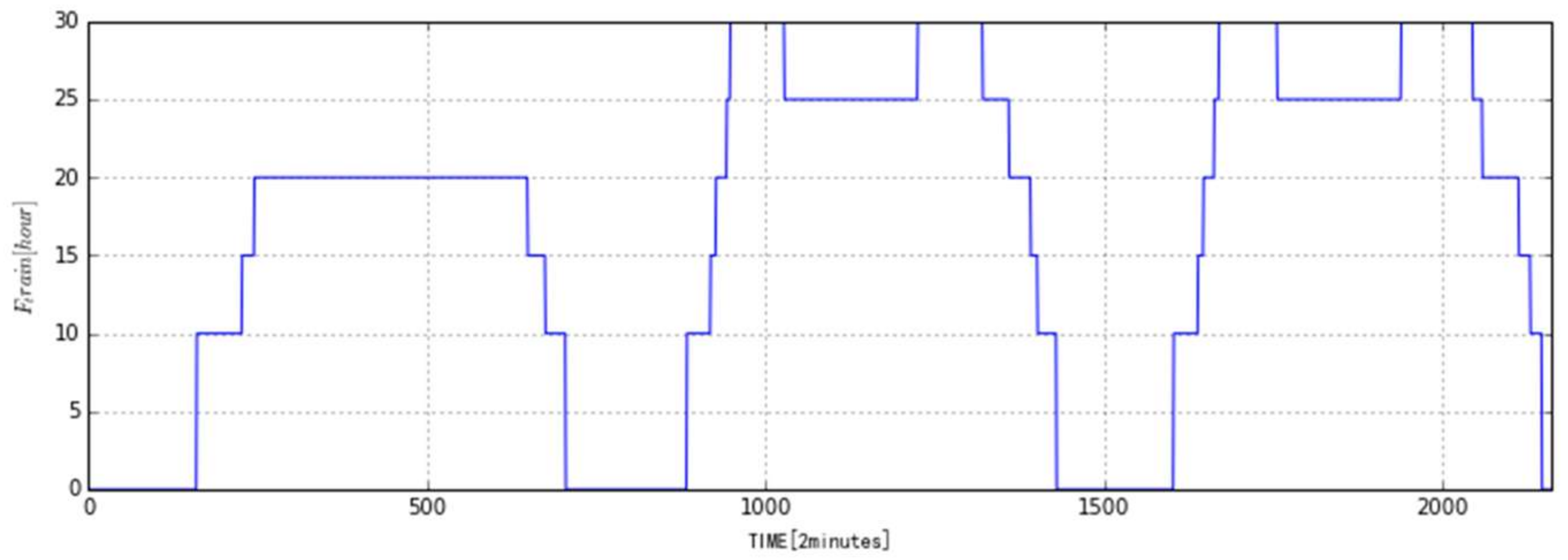

- (3) The metro arrival frequency, Ftrain, varies significantly with the time of day and the number of passengers; Figure 5 shows how Ftrain changes with time. Ftrain changes markedly during the morning and evening rush hours and also differs between weekdays and the weekend.
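The preprocessing described in items (1) and (2) can be sketched as follows. This is a minimal sketch assuming SciPy; the Butterworth order (2), the cutoff (0.5 cycles per hour), the median kernel length (5 samples) and the synthetic input series are illustrative assumptions, not values reported for this study. The constants also check the sampling arithmetic: one reading every 2 min gives 24 × 60 / 2 = 720 points per day, so 3 days give 2160 points.

```python
import numpy as np
from scipy.signal import butter, filtfilt, medfilt

SAMPLE_INTERVAL_MIN = 2                              # one reading every 2 min
POINTS_PER_DAY = 24 * 60 // SAMPLE_INTERVAL_MIN      # 720 readings per day
DAYS = ["Sunday", "Monday", "Tuesday"]
N_POINTS = len(DAYS) * POINTS_PER_DAY                # 2160 readings in total


def butterworth_smooth(x, cutoff_per_hour=0.5, order=2):
    """Zero-phase low-pass Butterworth filter; cutoff and order are assumed values."""
    fs_per_hour = 60 / SAMPLE_INTERVAL_MIN           # sampling rate: 30 samples per hour
    b, a = butter(order, cutoff_per_hour, btype="low", fs=fs_per_hour)
    return filtfilt(b, a, x)                         # forward-backward pass: no phase lag


def median_smooth(x, kernel_size=5):
    """Median filter that suppresses isolated spikes; kernel_size must be odd (assumed 5)."""
    return medfilt(np.asarray(x, dtype=float), kernel_size=kernel_size)


def split_by_day(x):
    """Cut a 3-day series into consecutive 720-point daily blocks."""
    return {day: x[i * POINTS_PER_DAY:(i + 1) * POINTS_PER_DAY] for i, day in enumerate(DAYS)}


# Synthetic stand-ins for the raw measurements (not the study's data).
rng = np.random.default_rng(0)
t_hours = np.arange(N_POINTS) * SAMPLE_INTERVAL_MIN / 60
raw_temp = 22 + 2 * np.sin(2 * np.pi * t_hours / 24) + rng.normal(0, 0.3, N_POINTS)
raw_flow = rng.poisson(50, N_POINTS)

T = butterworth_smooth(raw_temp)     # smoothed temperature series
P = median_smooth(raw_flow)          # smoothed passenger-flow series
daily_T = split_by_day(T)            # Sunday / Monday / Tuesday blocks
```

A Butterworth low-pass suits slowly drifting temperatures, while a median filter is the usual choice for count data with isolated spikes, since it removes outliers without smearing them into neighbouring samples.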

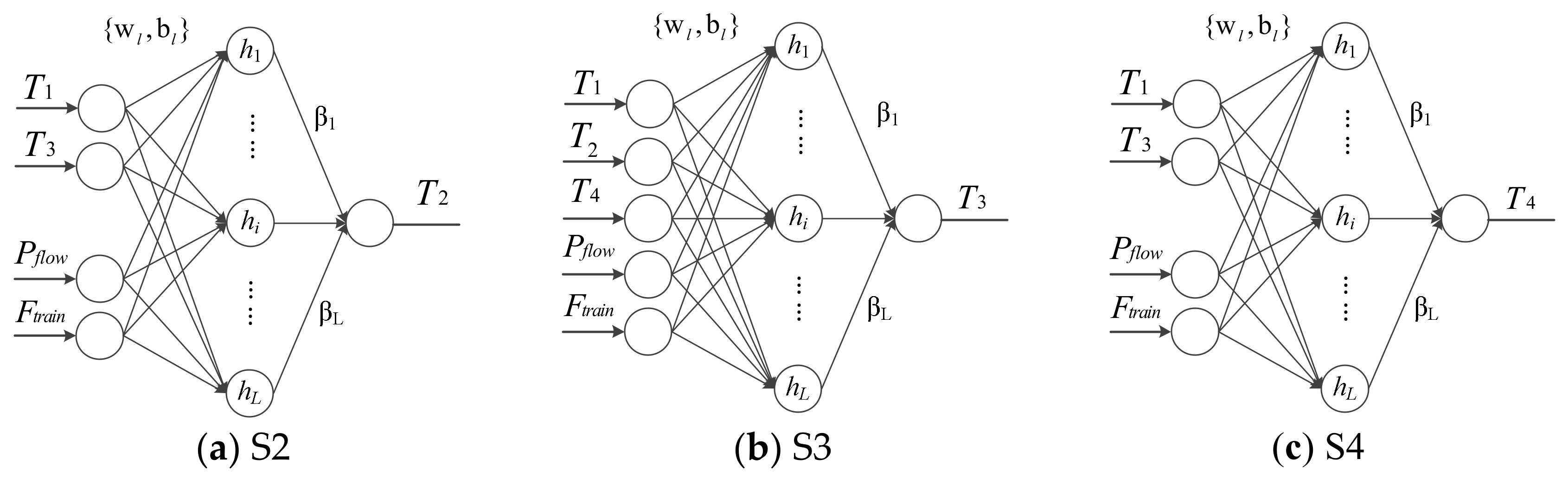

3.3. Thermal Environment Model Based on an RVFLNN for a Metro Station

3.3.1. Model Input and Output

3.3.2. Input Normalization
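The normalization formula is not stated in this section. Min-max scaling fitted on the training data is a common choice for RVFL inputs, because ω and b are drawn from a fixed interval and raw inputs of very different magnitude (°C versus passenger counts) would otherwise push many hidden nodes into saturation. The sketch below assumes that choice; the class name `MinMaxNormalizer`, the default range [0, 1] and the sample rows are hypothetical. It keeps the inverse transform so that predictions can be mapped back to °C.

```python
import numpy as np


class MinMaxNormalizer:
    """Column-wise min-max scaling to [low, high], fitted on training data only."""

    def __init__(self, low=0.0, high=1.0):
        self.low, self.high = low, high

    def fit(self, X):
        X = np.asarray(X, dtype=float)
        self.min_ = X.min(axis=0)
        span = X.max(axis=0) - self.min_
        self.span_ = np.where(span > 0, span, 1.0)   # avoid dividing by zero on constant columns
        return self

    def transform(self, X):
        Z = (np.asarray(X, dtype=float) - self.min_) / self.span_
        return self.low + Z * (self.high - self.low)

    def inverse_transform(self, Xs):
        Z = (np.asarray(Xs, dtype=float) - self.low) / (self.high - self.low)
        return self.min_ + Z * self.span_


# Illustrative rows of hypothetical inputs [temperature in °C, passenger count].
X_train = np.array([[20.0, 100.0], [24.0, 300.0], [22.0, 200.0]])
norm = MinMaxNormalizer().fit(X_train)
X_scaled = norm.transform(X_train)
X_back = norm.inverse_transform(X_scaled)
```

Validation and test inputs should be transformed with the minimum and span fitted on the training set, so that no information from later days leaks into training.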

3.3.3. Build the Model
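A compact RVFL regressor following the standard formulation (fixed random hidden weights, direct input-output links, ridge-regularized output weights) might look like the sketch below. It is not the authors' code: the sigmoid activation, the uniform range [−scale, scale] for ω and b, and the names `RVFLRegressor`, `n_nodes`, `lam` and `scale` are illustrative assumptions. The last three mirror the number of nodes, λ and the ω,b range explored in the result tables.

```python
import numpy as np


class RVFLRegressor:
    """RVFL network: fixed random hidden layer + direct links + ridge-solved output weights."""

    def __init__(self, n_nodes=100, lam=1.0, scale=1.0, seed=None):
        self.n_nodes = n_nodes      # number of hidden (enhancement) nodes
        self.lam = lam              # regularization coefficient lambda
        self.scale = scale          # omega and b are drawn from U[-scale, scale]
        self.rng = np.random.default_rng(seed)

    def _design(self, X):
        # Sigmoid hidden outputs, concatenated with the raw inputs (the direct links).
        H = 1.0 / (1.0 + np.exp(-(X @ self.W + self.b)))
        return np.hstack([X, H])

    def fit(self, X, y):
        X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
        # Input-to-hidden weights and biases are random and are never trained.
        self.W = self.rng.uniform(-self.scale, self.scale, size=(X.shape[1], self.n_nodes))
        self.b = self.rng.uniform(-self.scale, self.scale, size=self.n_nodes)
        D = self._design(X)
        # Closed-form ridge solution: beta = (D^T D + lam * I)^(-1) D^T y.
        A = D.T @ D + self.lam * np.eye(D.shape[1])
        self.beta = np.linalg.solve(A, D.T @ y)
        return self

    def predict(self, X):
        return self._design(np.asarray(X, dtype=float)) @ self.beta


# Toy, noise-free regression problem (synthetic, not metro-station data).
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(500, 3))
y = np.sin(np.pi * X[:, 0]) + 0.5 * X[:, 1] - X[:, 2]
model = RVFLRegressor(n_nodes=200, lam=1e-4, scale=1.0, seed=0).fit(X[:400], y[:400])
rmse = float(np.sqrt(np.mean((model.predict(X[400:]) - y[400:]) ** 2)))
```

The toy example uses a small λ because its target is noise-free; on noisy measurements λ would instead be tuned on validation data, as in the λ table.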

3.4. Results and Analysis

3.4.1. Effects of Training Parameters
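One way to read the parameter tables is to choose, for each case, the setting with the lowest validation error. The snippet applies that rule to the Evalidation values reported in the node-count and λ tables; the selection rule and the names `best_setting`, `E_VALIDATION` and `E_VAL_LAMBDA` are illustrative assumptions.

```python
# Evalidation versus number of hidden nodes, copied from the node-count tables.
NODES = [20, 50, 100, 200, 300, 400, 600, 800, 1000]
E_VALIDATION = {
    "S2": [4.532, 4.201, 4.144, 4.119, 4.067, 3.991, 3.972, 3.910, 3.941],
    "S3": [6.011, 5.807, 5.490, 5.452, 5.528, 5.613, 5.487, 5.502, 5.494],
    "S4": [6.880, 6.760, 6.714, 6.824, 6.773, 6.846, 6.763, 6.821, 6.773],
}

# Evalidation versus lambda; keys are the half-width s of the range omega, b in [-s, s].
LAMBDAS = [0.05, 0.1, 0.5, 1, 5, 10, 20]
E_VAL_LAMBDA = {
    0.5: [5.554, 5.557, 5.485, 5.478, 5.491, 5.826, 6.683],
    1.0: [5.596, 5.586, 5.464, 5.455, 5.630, 5.725, 6.030],
    2.0: [5.917, 5.667, 5.632, 5.447, 5.626, 5.681, 5.691],
}


def best_setting(settings, errors):
    """Return (setting, error) for the smallest validation error (first one on ties)."""
    i = min(range(len(errors)), key=errors.__getitem__)
    return settings[i], errors[i]


best_nodes = {site: best_setting(NODES, e) for site, e in E_VALIDATION.items()}
best_lambda = {s: best_setting(LAMBDAS, e) for s, e in E_VAL_LAMBDA.items()}
```

Under this rule the lowest validation error occurs at 800 nodes for S2, 200 nodes for S3 and 100 nodes for S4, and at λ = 1 for all three weight ranges. For S3 and S4 the validation error varies by less than 0.2 once the network has 100 or more nodes.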

3.4.2. Prediction Performance of Thermal Model Based on RVFLNN

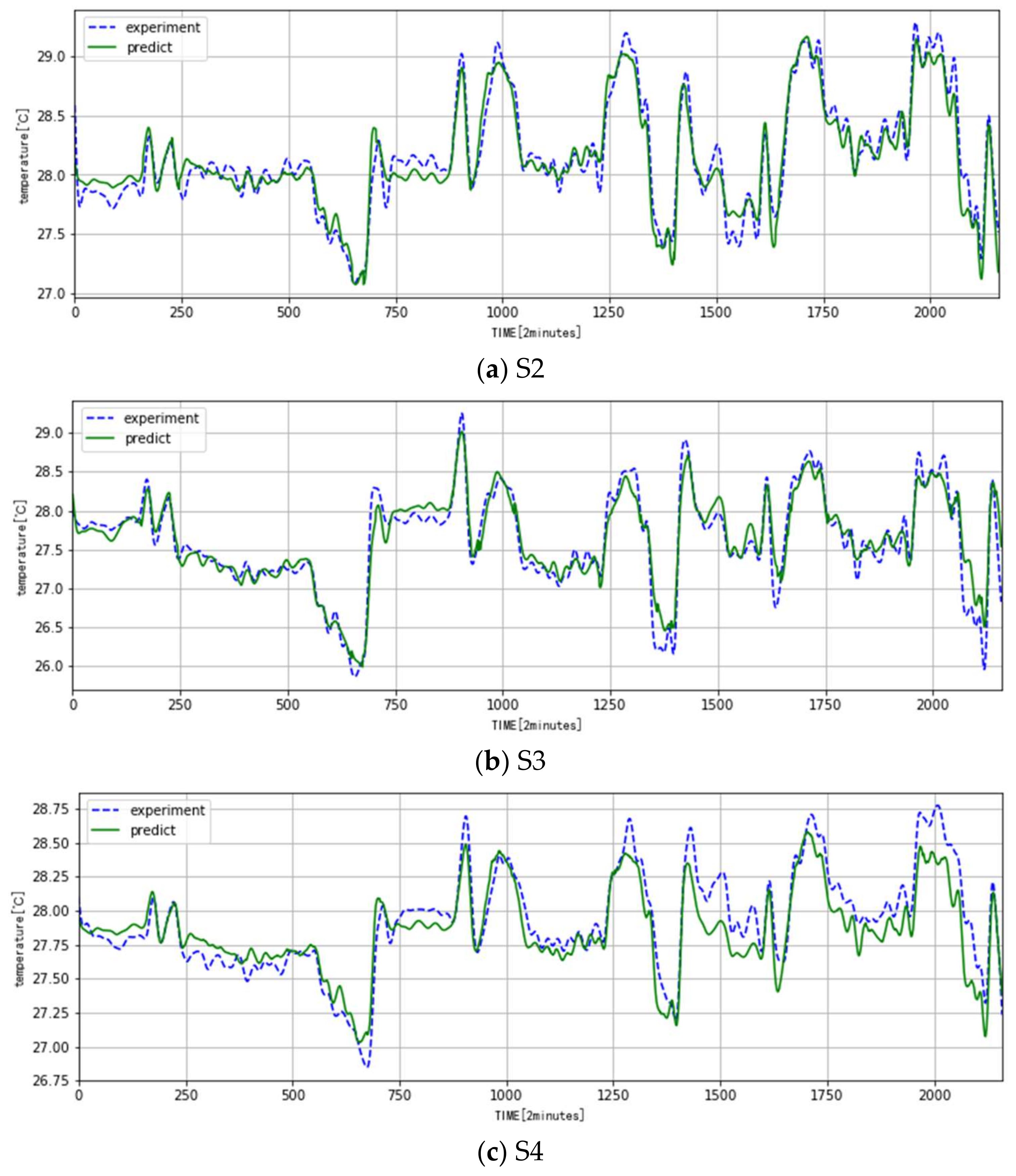

- (1)

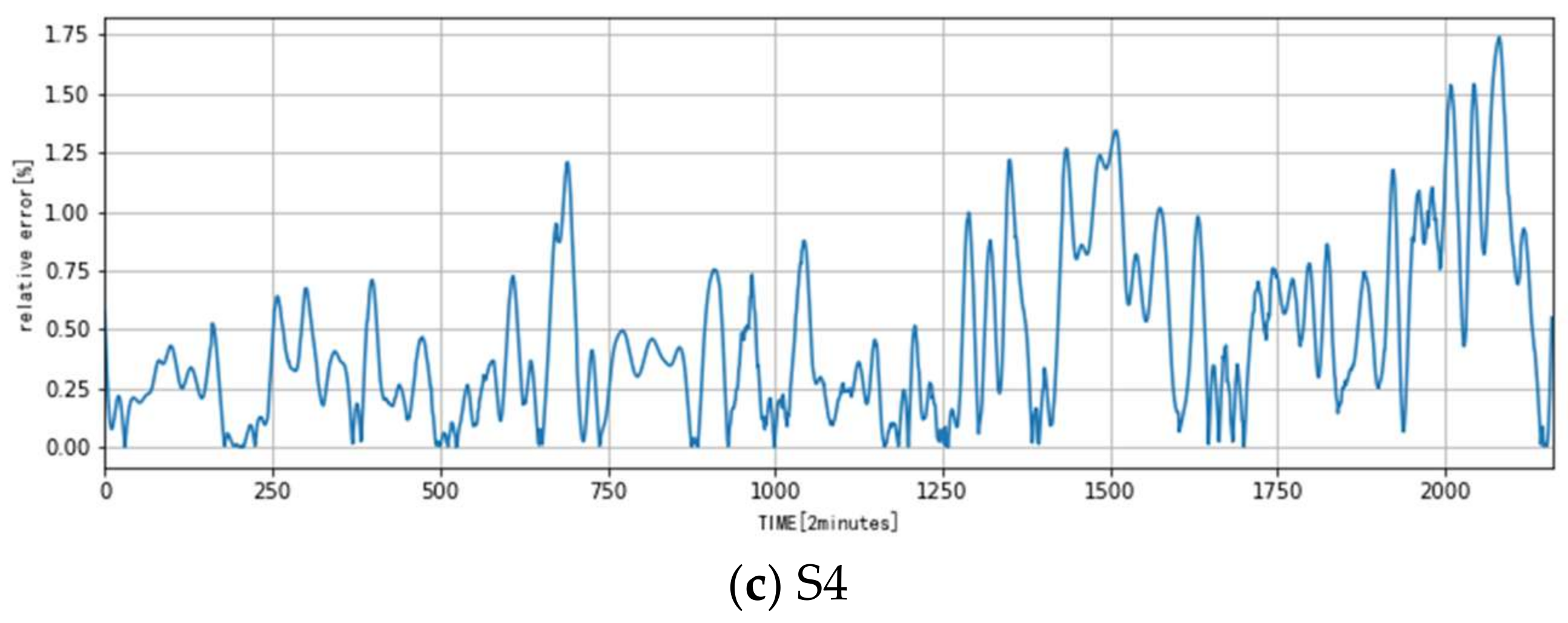

- The temperature change in the metro station is influenced by many factors and its change rule is relatively complicated. The presented model based on the RVFLNN can reveal this rule very well: its fitting error and prediction error are both very small.

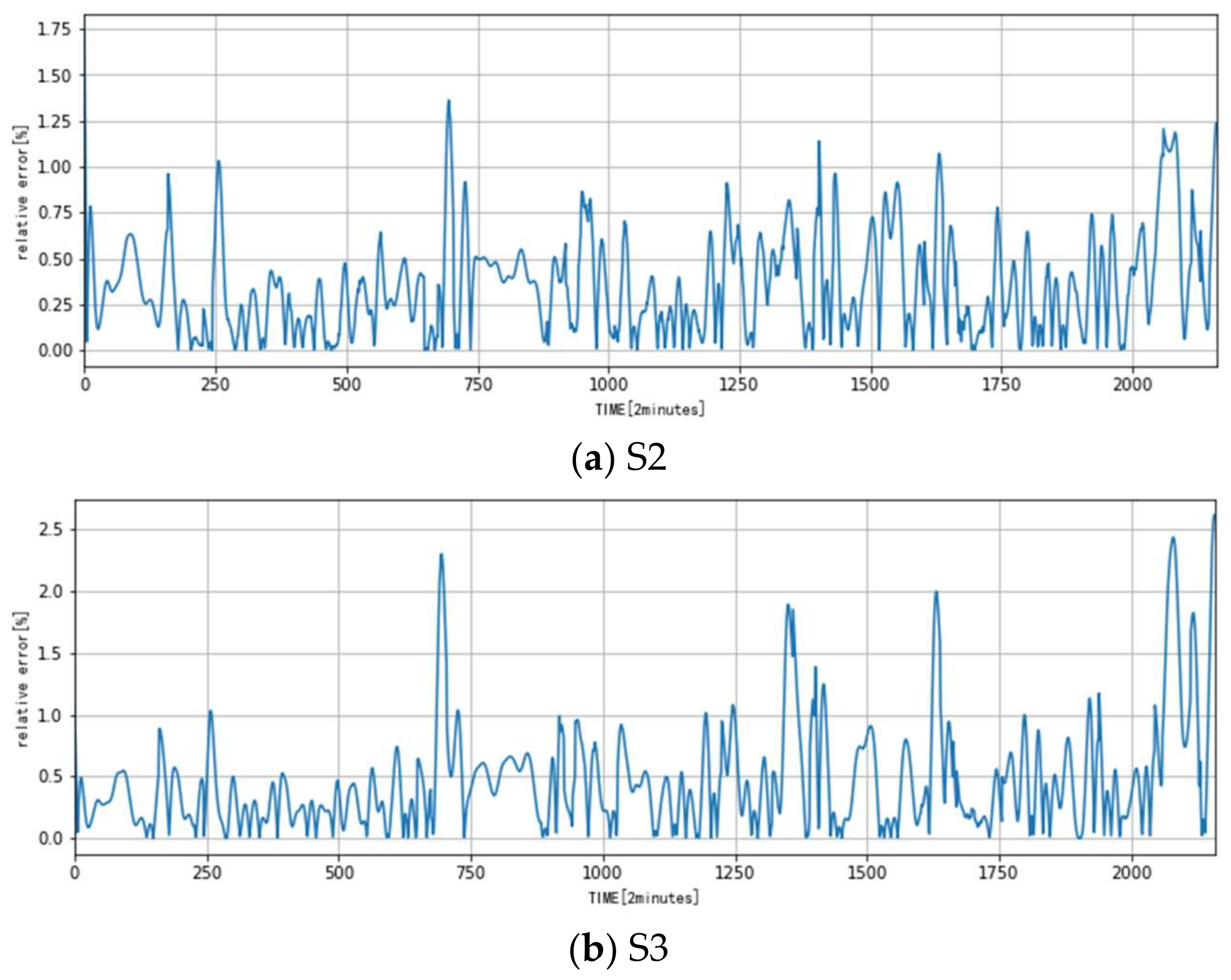

- (2) A comparison of the predicted and measured data shows a maximum absolute error of about 0.4 °C and a maximum relative error of about 2.5%.

- (3)
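The two error measures quoted in item (2) can be computed as sketched below. This assumes the relative error is |predicted − measured| / |measured| evaluated on the °C readings; the function names and the sample arrays are illustrative, not the study's data.

```python
import numpy as np


def max_abs_error(y_true, y_pred):
    """Largest absolute deviation, in the units of the data (here °C)."""
    y_true, y_pred = np.asarray(y_true, dtype=float), np.asarray(y_pred, dtype=float)
    return float(np.max(np.abs(y_pred - y_true)))


def max_rel_error(y_true, y_pred):
    """Largest relative deviation |pred - true| / |true|, as a fraction (x100 for percent)."""
    y_true, y_pred = np.asarray(y_true, dtype=float), np.asarray(y_pred, dtype=float)
    return float(np.max(np.abs(y_pred - y_true) / np.abs(y_true)))


# Illustrative values only; they are not the study's measurements.
measured = [20.0, 22.0, 24.0]
predicted = [20.3, 21.6, 24.2]
abs_err = max_abs_error(measured, predicted)   # largest gap is at 22.0 vs 21.6
rel_err = max_rel_error(measured, predicted)
```

Because the Celsius origin is arbitrary, a relative error computed on °C readings depends on the temperature level, so the absolute error is usually the more direct measure of accuracy.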

4. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest


(a) S2

| Number of Nodes | 20 | 50 | 100 | 200 | 300 | 400 | 600 | 800 | 1000 |
|---|---|---|---|---|---|---|---|---|---|
| Etrain | 3.687 | 3.435 | 3.404 | 3.438 | 3.333 | 3.284 | 3.271 | 3.235 | 3.224 |
| Evalidation | 4.532 | 4.201 | 4.144 | 4.119 | 4.067 | 3.991 | 3.972 | 3.910 | 3.941 |

(b) S3

| Number of Nodes | 20 | 50 | 100 | 200 | 300 | 400 | 600 | 800 | 1000 |
|---|---|---|---|---|---|---|---|---|---|
| Etrain | 4.657 | 4.391 | 4.294 | 4.162 | 4.126 | 4.122 | 3.958 | 3.930 | 3.920 |
| Evalidation | 6.011 | 5.807 | 5.490 | 5.452 | 5.528 | 5.613 | 5.487 | 5.502 | 5.494 |

(c) S4

| Number of Nodes | 20 | 50 | 100 | 200 | 300 | 400 | 600 | 800 | 1000 |
|---|---|---|---|---|---|---|---|---|---|
| Etrain | 3.229 | 2.866 | 2.791 | 2.720 | 2.695 | 2.660 | 2.629 | 2.600 | 2.585 |
| Evalidation | 6.880 | 6.760 | 6.714 | 6.824 | 6.773 | 6.846 | 6.763 | 6.821 | 6.773 |

| λ | Evalidation (ω, b ∈ [−0.5, 0.5]) | Evalidation (ω, b ∈ [−1, 1]) | Evalidation (ω, b ∈ [−2, 2]) |
|---|---|---|---|
| 0.05 | 5.554 | 5.596 | 5.917 |
| 0.1 | 5.557 | 5.586 | 5.667 |
| 0.5 | 5.485 | 5.464 | 5.632 |
| 1 | 5.478 | 5.455 | 5.447 |
| 5 | 5.491 | 5.630 | 5.626 |
| 10 | 5.826 | 5.725 | 5.681 |
| 20 | 6.683 | 6.030 | 5.691 |

| | S2 | S3 | S4 |
|---|---|---|---|
| Etest | 4.124 | 5.513 | 6.925 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Tian, Q.; Zhao, W.; Wei, Y.; Pang, L. Thermal Environment Prediction for Metro Stations Based on an RVFL Neural Network. Algorithms 2018, 11, 49. https://doi.org/10.3390/a11040049