1. Introduction

Wire arc additive manufacturing (WAAM), a subset of additive manufacturing (AM), has emerged as a transformative technology for fabricating complex geometric shapes and customized parts. WAAM utilizes welding processes, such as cold metal transfer (CMT), to deposit material layer by layer, enabling the production of large-scale components with high deposition rates. However, ensuring quality in WAAM poses significant challenges, particularly in detecting defects during the deposition process. Traditionally, the industry relies on post-weld inspections, which have several disadvantages [1]. Detected defects typically result in either reworking or scrapping the component, leading to considerable production waste [2]. Moreover, this method is prone to oversights that can cause failures or safety hazards in subsequent uses of the components [3]. Therefore, real-time monitoring, especially of the melt pool, is essential to mitigate these risks [4].

Online quality monitoring in WAAM generally follows two approaches: analyzing the relationship between welding parameters (e.g., current and voltage) and quality targets (e.g., defect presence) [5,6], and examining the correlation between in situ data from various modalities and these quality targets. In situ data, acquired via non-contact sensors such as infrared cameras for thermal fields [7,8,9], audio acquisition cards for arc sound [10,11,12], and three-dimensional scanning equipment for morphology [13], provide a stronger statistical correlation with quality targets than welding parameters alone. These data more directly reflect the physical and chemical changes during deposition, which facilitates model learning and improves prediction accuracy.

Furthermore, acquiring and processing in situ data enables timely feedback to the WAAM control system, facilitating immediate adjustment and optimization of the process. Mainstream online monitoring in intelligent manufacturing still predominantly relies on single-mode imaging, such as visible-light cameras that observe melt pool dynamics [14,15,16,17,18,19,20]. These systems are effective under controlled conditions but often falter in arc-based processes such as CMT-based WAAM because of severe interference from arc light and spatter.

The aforementioned studies reveal significant limitations of single-sensor systems for online quality monitoring in WAAM. These systems either fail to achieve real-time monitoring or are affected by noise interference, resulting in low prediction accuracy and poor robustness of single-mode monitoring models. These limitations highlight the need for more robust and effective monitoring methods. In recent years, multimodal learning has become a prominent area of interest in deep learning [21,22]. This approach leverages data from diverse modalities, substantially enhancing a model’s capacity to comprehend and process tasks by integrating varied informational inputs. Its applications span several domains, including autonomous driving and virtual reality [23,24,25], demonstrating its versatility and efficacy. Similarly, multimodal learning can be applied to online quality monitoring in WAAM to overcome the inherent constraints of single-modality data. Numerous researchers have explored the integration of multimodal information in the welding domain [26], using combinations such as image and sound [27,28,29,30], image and spectroscopy [31,32,33], and spectroscopy with photodiode data to discern welding characteristics [34,35]. However, incorporating non-visual information into CNNs often requires preprocessing and, in some cases, manual feature extraction, which complicates their application in real-time monitoring. Additionally, when different modalities are selected as source data, aligning the information requires meticulous design. This includes synchronizing and aligning data from various sensors and appropriately truncating sequence lengths to handle one-dimensional sound sequences, two-dimensional images, and three-dimensional morphological features. Even with these efforts, the combination of data across different dimensions remains abstract and difficult to interpret in the specific context of WAAM.

The latest advancements in imaging technology have facilitated the integration of multiple imaging modes, thereby enhancing detection capabilities under challenging conditions. Unlike using different sensors, the combination of multiple imaging technologies can easily ensure synchronous data acquisition, capable of characterizing the same melt pool state. Also, as 2D image data, they can be directly fed into the CNN without preprocessing. For example, the combination of infrared and visible light imaging provides a complementary perspective, widely used in large-scale scenarios such as autonomous driving [

36]. Despite the considerable potential of multimodal imaging systems, their application in WAAM still faces significant gaps [

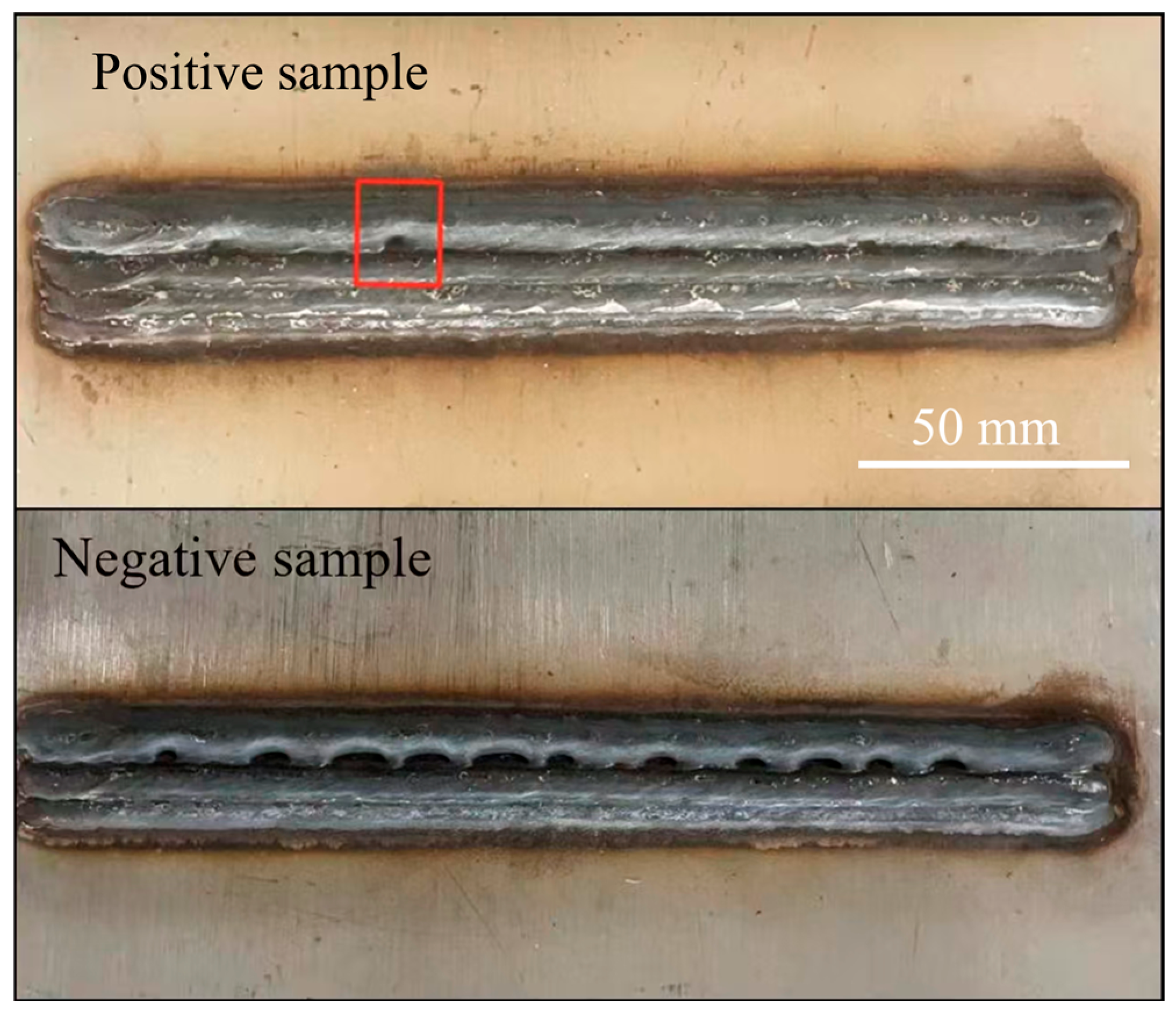

37,

38], particularly in the study of specific defects such as overlap defects in the narrow field of view. In this paper, an overlap defect refers to insufficient overlap between adjacent tracks, which results in incomplete inter-track bonding. The interactions between material layers make it crucial to detect these defects. The combination of visible and infrared images can capture thermal dynamics obscured by interference from the visible spectrum.

This study investigates a dual-modal approach that combines visible and infrared imaging for melt pool defect detection in WAAM, with the goal of improving in-process defect monitoring by leveraging complementary spectral information. Integrating the two modalities is intended to overcome the limitations of single-mode systems and to allow a more comprehensive analysis of both the thermal and morphological dynamics of the melt pool. By combining the complementary strengths of infrared imaging (sensitivity to thermal changes) and visible light imaging (detailed spatial resolution), the proposed approach aims to provide a more detailed and interference-resistant monitoring solution. The paper is organized as follows: Section 2 introduces the system setup and the data generation process; Section 3 analyzes the characteristics of visible and infrared images and the fusion network; Section 4 describes the training strategy and compares the network results; and Section 5 draws conclusions.

3. Dual-Modal Image Analysis and Modeling

3.1. Visible and Infrared Images

The design of the Multimodal Mutual Fusion Network (MMFNet) is driven by the distinct characteristics of the visible and infrared imaging modalities in the WAAM process. Advances in visible light sensing technology allow detailed morphological information about the melt pool to be acquired. Melt pool morphology plays a vital role in real-time quality monitoring of WAAM, offering a direct visual indicator of forming quality. Using this morphological information, it is possible to monitor surface-level defects such as burrs, cracks, pores, and deviations.

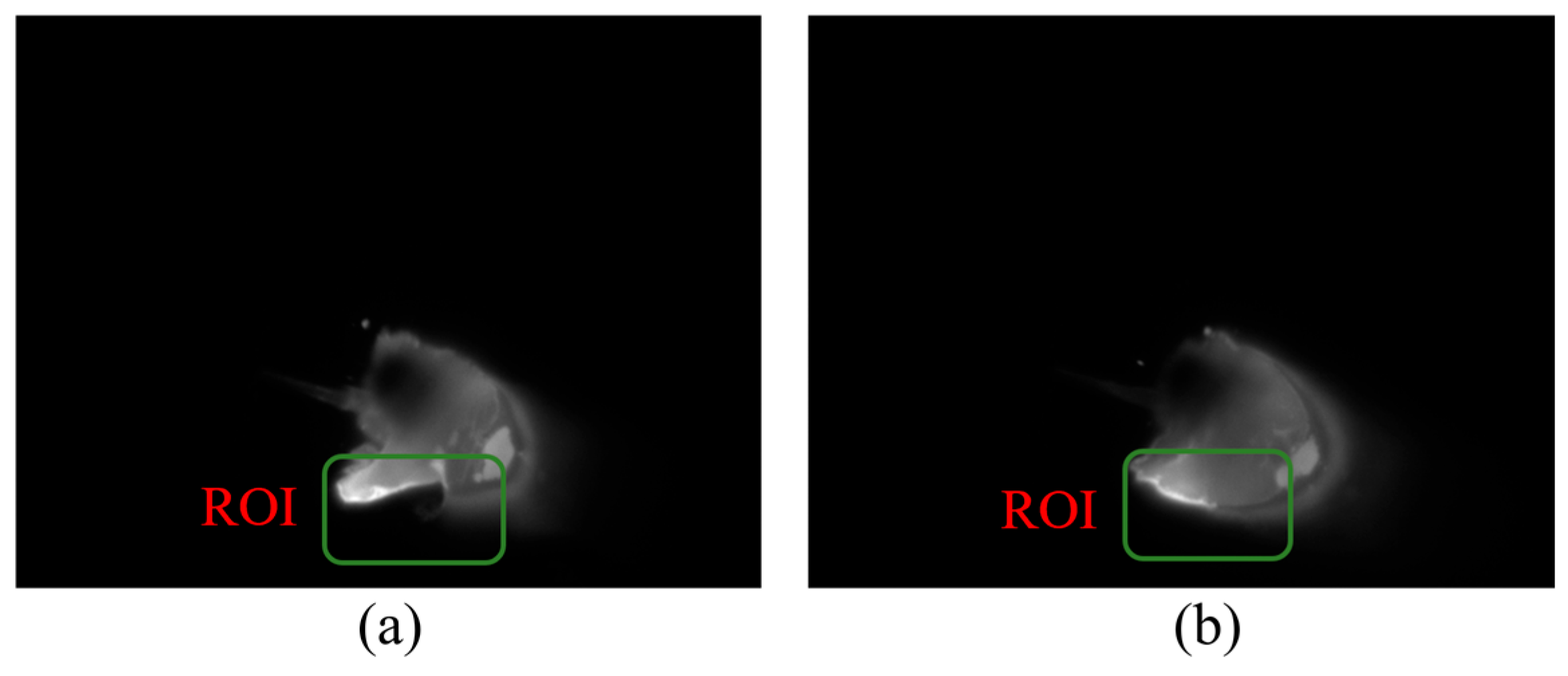

Visible light melt pool images offer higher resolution, displaying the geometry of the melt pool, including its shape, size, and potential surface disruptions indicative of defects such as poor overlap. These details are crucial for understanding the behavior of the molten material and the formation mechanisms of overlap defects. In cases of overlap defects, irregularities in the shape of the melt pool or evidence of discontinuity where the new track has not properly fused with the adjacent track may be observed. The Region of Interest (ROI) in Figure 4 shows the variation in the visible melt pool image when overlap defects are present.

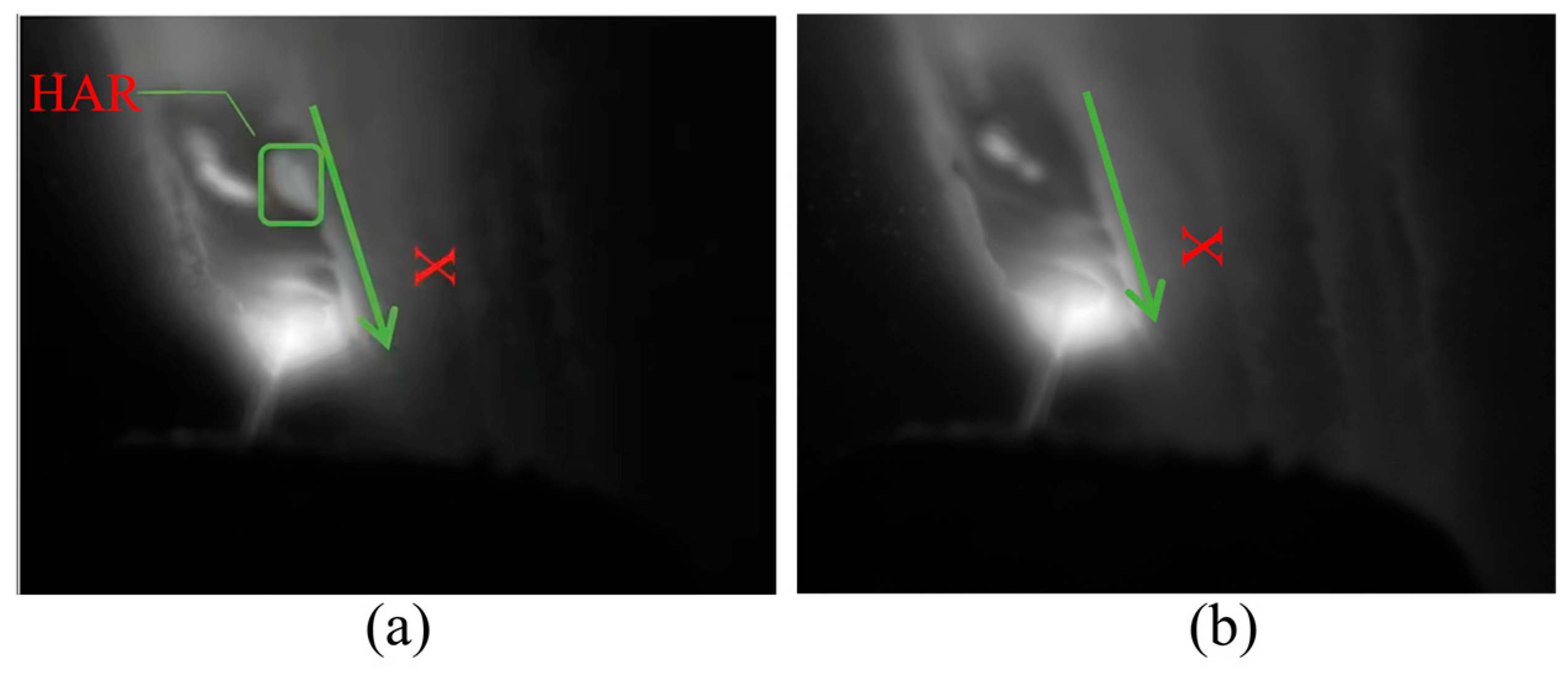

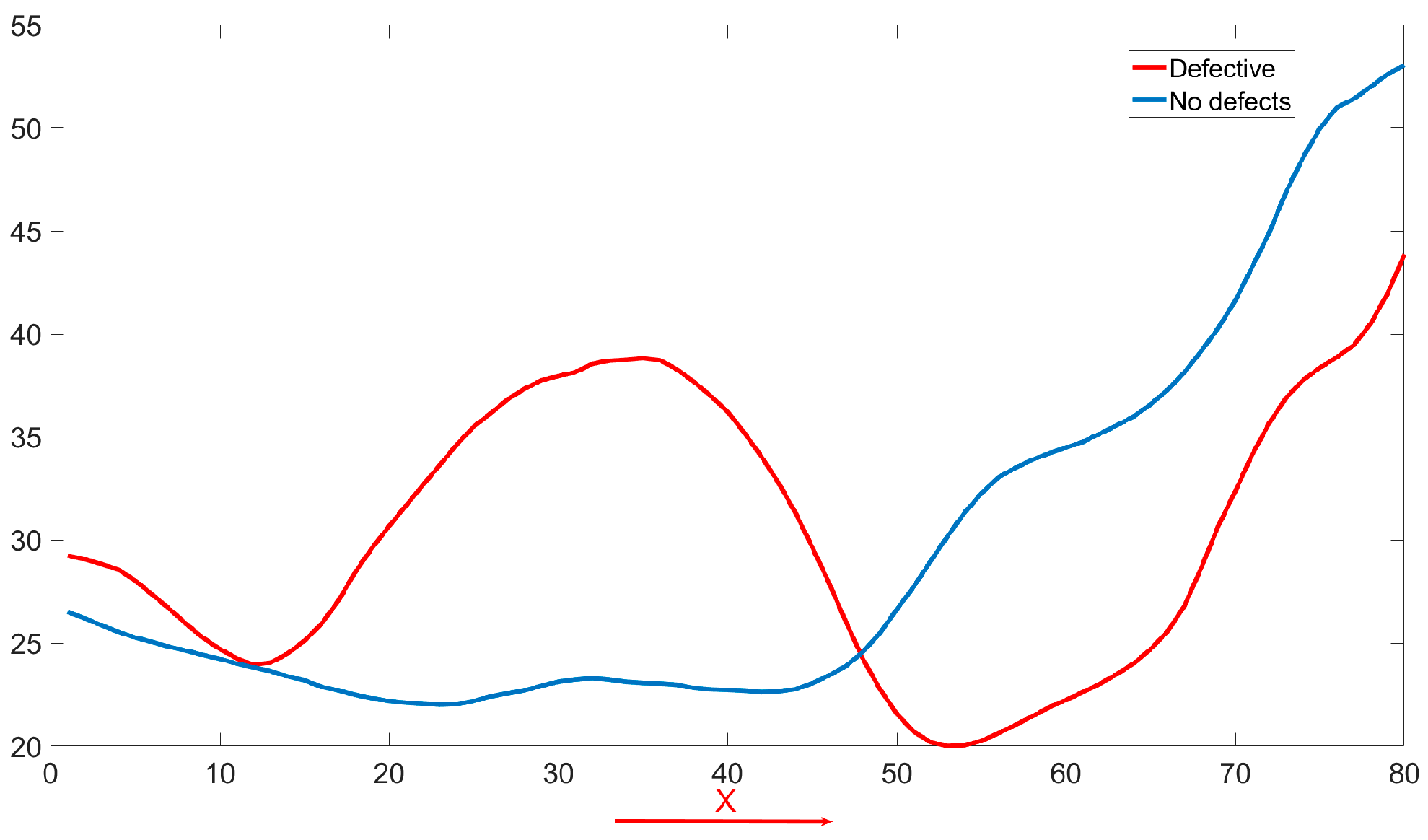

In particular, poor inter-track overlap often manifests as anomalies in the thermal field. Incomplete bonding between adjacent tracks reduces heat dissipation in the defect area, resulting in the formation of a heat accumulation region (HAR). Consequently, while normal weld seams display smooth temperature transitions, defect areas exhibit abnormal changes in the temperature curve, including areas of unusually high temperature (as shown in Figure 5). A reliable quality monitoring system should therefore pay close attention to thermal behavior during the WAAM process, and infrared data are highly effective at capturing this information. Thus, in addition to melt pool morphology, incorporating infrared thermal field information is crucial for in situ defect monitoring.

Infrared thermal field images reveal temperature distributions, providing insight into the thermal characteristics of the molten material. Infrared imaging can depict the temperatures of the melt pool and adjacent areas, and regions of insufficient overlap may display irregular thermal features. These can include abnormal hot spots where heat accumulates because of incomplete bonding, or cooler areas indicating incomplete melting or bonding.

In WAAM, the quality of bonding between adjacent tracks and layers is crucial for the mechanical integrity of the final product. Infrared (IR) thermography serves as an effective diagnostic tool by providing real-time insight into thermal phenomena indicative of bonding quality.

Figure 5a displays the IR profile of defective weld beads with a clearly delineated HAR, while Figure 5b shows defect-free weld beads as a comparative benchmark. The temperature profile curves (Figure 6) highlight differences along the X-axis: defects related to inadequate overlap are marked by an HAR, where incomplete bonding results in localized high temperatures, whereas the uniform heat distribution in defect-free weld beads indicates consistent inter-track fusion.

Therefore, a robust quality monitoring system should closely track these thermal behaviors. IR thermography complements visible-spectrum melt pool monitoring and captures the heat flow and material consolidation details that are vital for quality assessment. Integrating IR thermography into defect detection frameworks provides a more comprehensive view of the thermal dynamics, which can improve the early detection of anomalies and thus overall quality control.

However, relying solely on any single modality has inherent limitations: infrared imagery lacks fine spatial detail, while visible light imagery is susceptible to interference from spatter and arc light, as demonstrated in Figure 7. Therefore, this study adopts a multimodal approach that leverages the complementary strengths of infrared and visible light data to detect and analyze defects that may remain undetected by single-modal monitoring systems. Given the complementary nature of the two modalities, a fusion strategy is required that selectively enhances reliable features while suppressing noise. For instance, infrared thermal anomalies often correlate with visible morphological irregularities in overlap defects, but their spatial alignment may vary due to sensor placement and heat diffusion.

3.2. Fusion Strategies

The selection of an appropriate fusion strategy is crucial for successful multimodal integration. Currently, fusion strategies can be broadly categorized into early fusion and late fusion.

Early fusion merges data from different modalities at the very beginning of the processing pipeline, before they are input into the neural network. This method is valued for its simplicity, as it directly combines the raw data from all modalities into a single input for the learning algorithm. However, it imposes certain requirements on the data. Here, the two modalities consist of images acquired by visible light and infrared sensors, so effective early fusion would require pixel-level registration of the two image types. Due to sensor size limitations, there is a substantial resolution discrepancy between the infrared and visible light images, as shown in Figure 8. Achieving pixel-level registration would therefore require interpolation, which could introduce significant errors. Such resampling under cross-modal misalignment has been reported to cause structural artifacts such as ghosting and blur in infrared–visible fusion, making pixel-level early fusion sensitive to registration quality [41]. Meanwhile, early fusion is prone to introducing inter-modal noise interference (e.g., arc light interference in the visible images vs. thermal diffusion noise in the infrared images). Related RGB–thermal perception studies have shown that naive early fusion can suffer from information interference between modalities and may not consistently outperform single-modality inputs [42]. In addition, a fixed fusion scheme cannot dynamically adjust the contribution of each modality according to the task requirements, which can amplify noise. Therefore, this study does not use early fusion.
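To illustrate why registration matters, the following Python sketch shows what naive pixel-level early fusion would involve: the lower-resolution infrared frame is bilinearly resampled onto the visible grid and stacked as an extra input channel. The helper names (`bilinear_resize`, `early_fuse`), the align-corners coordinate mapping, and the toy frame sizes are illustrative assumptions rather than the processing used in this study. Every upsampled infrared value is interpolated rather than measured, so any residual misalignment between the two sensors is carried directly into the fused input.

```python
from typing import List

Image = List[List[float]]  # single-channel image as rows of pixel values


def bilinear_resize(img: Image, out_h: int, out_w: int) -> Image:
    """Resample a 2D image with bilinear interpolation (align-corners mapping)."""
    in_h, in_w = len(img), len(img[0])
    out: Image = []
    for r in range(out_h):
        # Map the output row to a fractional input row coordinate.
        y = r * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(y)
        y1 = min(y0 + 1, in_h - 1)
        dy = y - y0
        row = []
        for c in range(out_w):
            x = c * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(x)
            x1 = min(x0 + 1, in_w - 1)
            dx = x - x0
            # Weighted average of the four neighbouring input pixels.
            top = img[y0][x0] * (1 - dx) + img[y0][x1] * dx
            bottom = img[y1][x0] * (1 - dx) + img[y1][x1] * dx
            row.append(top * (1 - dy) + bottom * dy)
        out.append(row)
    return out


def early_fuse(visible: List[Image], infrared: Image) -> List[Image]:
    """Hypothetical early fusion: upsample IR to the visible grid, then stack channels."""
    h, w = len(visible[0]), len(visible[0][0])
    ir_up = bilinear_resize(infrared, h, w)
    return visible + [ir_up]  # e.g. 3 visible channels + 1 infrared channel


if __name__ == "__main__":
    ir = [[0.0, 1.0], [2.0, 3.0]]                             # toy 2x2 infrared frame
    vis = [[[0.5] * 3 for _ in range(3)] for _ in range(3)]   # toy 3-channel 3x3 visible frame
    fused = early_fuse(vis, ir)
    print(len(fused), fused[3][1][1])  # centre infrared value is synthesized by interpolation
```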

Late fusion, on the other hand, integrates data at a later stage in the processing pipeline, typically at the feature or decision level. Concatenating or weighting high-level features (e.g., just before the classification layer) preserves modal independence but ignores fine-grained interactions in the intermediate layers. For example, a mid-level visible feature may contain details of the melt pool profile, while an infrared feature may contain the thermal gradient distribution, and the two may not be effectively correlated at high levels because of the loss of spatial resolution.

To address these challenges, the Cross-Modal Interaction Module (CMIM) is proposed, which integrates channel and spatial attention mechanisms tailored to dual-modal data. As an intermediate (inter-layer) fusion strategy, the CMIM performs layered dynamic fusion with a dual-attention mechanism, overcoming the noise sensitivity of early fusion and the loss of fine-grained information in late fusion, and striking a balance between accuracy, robustness, and efficiency (Figure 9).

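Joint Channel Attention: Unlike conventional Squeeze-and-Excitation (SE) modules that process each modality independently, the visible and infrared features are concatenated before the channel weights are generated. This forces the model to learn inter-modal dependencies, for example, suppressing visible channels in arc light-saturated regions while amplifying infrared channels in the corresponding thermal anomaly zones. The shared MLP reduces parameters by 25% compared to dual SE blocks while improving cross-modal synergy. The steps are as follows. Let the input features from the visible and infrared branches be denoted as $F_v \in \mathbb{R}^{C \times H \times W}$ and $F_i \in \mathbb{R}^{C \times H \times W}$. First, the two features are concatenated along the channel dimension:

$$F_c = \mathrm{Concat}(F_v, F_i) \in \mathbb{R}^{2C \times H \times W} \tag{1}$$

The channel weights are then generated by global average pooling (GAP) followed by the shared MLP and a Sigmoid function:

$$z = \mathrm{GAP}(F_c) \in \mathbb{R}^{2C} \tag{2}$$

$$w = \sigma\left(W_2\,\delta\left(W_1 z\right)\right) \in \mathbb{R}^{2C} \tag{3}$$

where $W_1$ and $W_2$ are the weights of the shared MLP, $\delta(\cdot)$ is the ReLU activation function, and $\sigma(\cdot)$ is the Sigmoid activation function. After weight splitting and feature recalibration, the final channel attention features are obtained as Equation (4):

$$[w_v, w_i] = \mathrm{Split}(w), \qquad F_v' = w_v \otimes F_v, \qquad F_i' = w_i \otimes F_i \tag{4}$$

Here, $\otimes$ denotes channel-wise multiplication.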
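The joint channel attention steps above (concatenation, global average pooling, a shared MLP with ReLU and Sigmoid, weight splitting, and channel-wise recalibration) can be sketched in framework-free Python as follows. This is a minimal illustration, not the authors’ implementation: feature maps are nested lists, the MLP is bias-free, and the weight matrices `w1` and `w2` are supplied by the caller, whereas in the real network they are learned and the operations run on tensors.

```python
import math
from typing import List, Tuple

Map = List[List[float]]   # one H x W channel
Feature = List[Map]       # C channels, each H x W


def gap(feature: Feature) -> List[float]:
    """Global average pooling: one descriptor per channel."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in feature]


def matvec(w: List[List[float]], v: List[float]) -> List[float]:
    """Dense layer without bias: w has shape (out, in)."""
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def rescale(feature: Feature, weights: List[float]) -> Feature:
    """Channel-wise multiplication: every pixel of channel k is scaled by weights[k]."""
    return [[[weights[k] * v for v in row] for row in ch] for k, ch in enumerate(feature)]


def joint_channel_attention(f_vis: Feature, f_ir: Feature,
                            w1: List[List[float]],
                            w2: List[List[float]]) -> Tuple[Feature, Feature]:
    """Joint channel attention with one shared MLP over both modalities.

    w1: (hidden, 2C) weights, w2: (2C, hidden) weights; biases omitted (assumption).
    """
    f_cat = f_vis + f_ir                              # concatenate channels: 2C
    z = gap(f_cat)                                    # squeeze to a 2C vector
    hidden = [max(0.0, a) for a in matvec(w1, z)]     # ReLU
    w = [sigmoid(a) for a in matvec(w2, hidden)]      # Sigmoid: 2C weights in (0, 1)
    c = len(f_vis)
    w_vis, w_ir = w[:c], w[c:]                        # split back per modality
    return rescale(f_vis, w_vis), rescale(f_ir, w_ir)
```

With all MLP weights set to zero, every channel weight equals Sigmoid(0) = 0.5, which gives a convenient sanity check that the split and rescaling steps line up with the correct modality.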

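Cross-Modal Spatial Attention: Spatial weights are derived from the concatenated channel-averaged maps of both modalities, allowing the model to learn where to emphasize complementary information. A 7 × 7 convolution followed by Sigmoid activation generates the spatial attention map:

$$M_s = \sigma\left(f^{7 \times 7}\left(\left[\mathrm{AvgPool}_c(F_v');\ \mathrm{AvgPool}_c(F_i')\right]\right)\right) \in \mathbb{R}^{1 \times H \times W} \tag{5}$$

where $F_v'$ and $F_i'$ denote the channel-recalibrated visible and infrared features, $\mathrm{AvgPool}_c(\cdot)$ averages over the channel dimension, $[\cdot\,;\cdot]$ denotes channel-wise concatenation, $f^{7 \times 7}$ is the 7 × 7 convolution, and $\sigma(\cdot)$ is the Sigmoid function.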

The final output is the cross-modal feature fusion result:

This contrasts with independent spatial attention mechanisms, which may prioritize conflicting regions (e.g., focusing on spatter in visible images while ignoring thermal anomalies).
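A corresponding sketch of the cross-modal spatial attention map is given below. It assumes that the inputs are the channel-recalibrated visible and infrared features, that the 7 × 7 convolution is bias-free with zero padding so the map keeps the input’s spatial size, and that the kernel weights (one 7 × 7 slice per modality) are supplied by the caller; the function names are hypothetical. The sketch stops at the shared attention map and does not model how the map is combined into the final fused output.

```python
import math
from typing import List

Map = List[List[float]]   # one H x W map
Feature = List[Map]       # C channels


def channel_mean(feature: Feature) -> Map:
    """Average over channels, giving one H x W map."""
    c = len(feature)
    h, w = len(feature[0]), len(feature[0][0])
    return [[sum(feature[k][y][x] for k in range(c)) / c for x in range(w)] for y in range(h)]


def conv2d_same(maps: List[Map], kernel: List[List[List[float]]]) -> Map:
    """Multi-input, single-output 2D convolution (cross-correlation) with zero padding."""
    k = len(kernel[0])        # kernel size, e.g. 7
    pad = k // 2
    h, w = len(maps[0]), len(maps[0][0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for m, ker in zip(maps, kernel):
                for dy in range(k):
                    for dx in range(k):
                        yy, xx = y + dy - pad, x + dx - pad
                        if 0 <= yy < h and 0 <= xx < w:   # zero padding outside the map
                            acc += ker[dy][dx] * m[yy][xx]
            out[y][x] = acc
    return out


def cross_modal_spatial_attention(f_vis: Feature, f_ir: Feature,
                                  kernel: List[List[List[float]]]) -> Map:
    """Shared spatial map from the concatenated channel-averaged maps of both modalities."""
    stacked = [channel_mean(f_vis), channel_mean(f_ir)]   # 2 x H x W
    logits = conv2d_same(stacked, kernel)                 # 7 x 7 conv -> 1 x H x W
    return [[1.0 / (1.0 + math.exp(-v)) for v in row] for row in logits]
```

Because a single map is computed from both modalities, the same spatial weighting is available to both branches, which is the property that distinguishes it from two independent spatial attention blocks.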

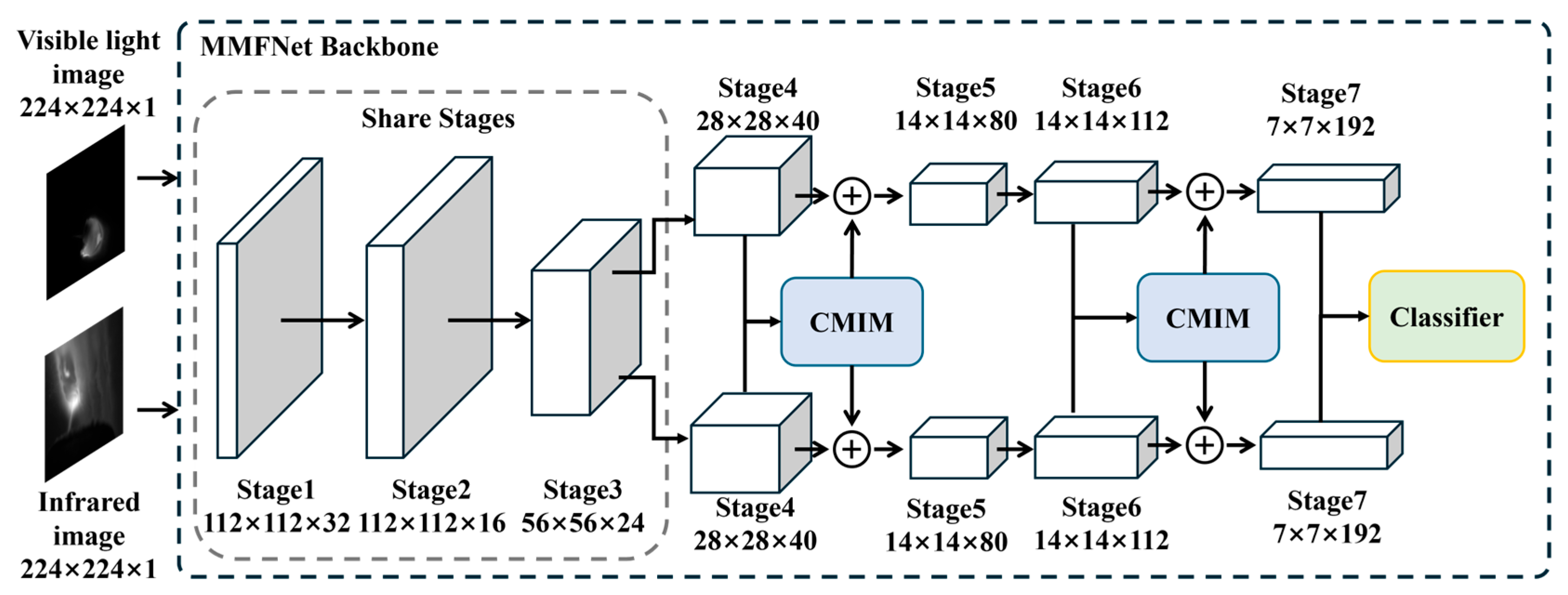

3.3. Network Framework

Visible and infrared inputs are processed through two parallel EfficientNet-B0 backbones. Both modalities are resized to 256 × 256 before network input, and each modality is normalized independently to reduce sensor-dependent intensity scale differences. The EfficientNet-B0 backbones are trained from scratch in this study without ImageNet pretraining. The shallow layers (Stages 1–3) of both branches share weights to capture modality-agnostic low-level features such as edges and textures, which are critical for aligning the spatial characteristics of the melt pool across modalities. In contrast, the deeper layers (Stages 4–7) are trained independently to adapt to modality-specific patterns. For instance, the visible branch focuses on high-resolution morphological details (e.g., melt pool geometry and surface irregularities), while the infrared branch extracts thermal dynamics (e.g., HAR and temperature gradients). This design ensures efficient utilization of shared low-level features while preserving the unique discriminative capabilities of each modality (Figure 10).

To mitigate interference from arc light and spatter in visible images, CMIMs are embedded after Stages 4 and 6 to dynamically fuse cross-modal features. To balance detection accuracy and computational efficiency, only the final-stage features (Stage 7 outputs) of both modalities are concatenated for classification. Although these deep features have low spatial resolution (8 × 8 for a 256 × 256 input), they encode high-level semantic information critical for defect identification, such as global thermal patterns and consolidated morphological deviations. The fused features are passed through a lightweight classifier composed of global average pooling and two fully connected layers, which keeps the inference cost low while maintaining robustness against noise.
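The spatial size of the Stage 7 features follows from the cumulative stride of the backbone. The sketch below assumes the standard EfficientNet-B0 layout (a stride-2 stem followed by seven MBConv stages, numbered here as Stages 1 to 7, with strides 1, 2, 2, 2, 1, 2, 1) and "same" padding, so each stride-2 stage halves the map, rounding up. The stage numbering is an assumption about how the stages are counted.

```python
import math
from typing import Dict, List

# Assumed EfficientNet-B0 layout: stride-2 stem, then seven MBConv stages.
STEM_STRIDE = 2
STAGE_STRIDES: List[int] = [1, 2, 2, 2, 1, 2, 1]   # MBConv stages 1..7


def stage_resolutions(input_size: int) -> Dict[int, int]:
    """Spatial size (H = W) after each MBConv stage, assuming 'same' padding."""
    size = math.ceil(input_size / STEM_STRIDE)
    sizes: Dict[int, int] = {}
    for stage, stride in enumerate(STAGE_STRIDES, start=1):
        size = math.ceil(size / stride)
        sizes[stage] = size
    return sizes


if __name__ == "__main__":
    for n in (224, 256):
        print(n, stage_resolutions(n))
```

Under these assumptions the final stage has an overall stride of 32, so a 224 × 224 input (the original EfficientNet-B0 resolution) yields 7 × 7 maps, whereas a 256 × 256 input yields 8 × 8 maps.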

3.4. Loss Function

Model training is an optimization process that searches for the most favorable set of parameters with respect to a predefined objective. In deep learning, this objective is expressed by a loss function, which quantifies the discrepancy between the predicted and true values and guides the model’s predictions to align as closely as possible with reality.

In MMFNet, the total loss function consists of two key components: the Weighted Cross-Entropy Loss and the Cross-Modal Consistency Loss. These two loss functions are combined in a linearly weighted manner to synergistically optimize the model’s performance on both the classification task and the cross-modal feature alignment task.

The Weighted Cross-Entropy Loss for the binary classification task is defined as follows:

$$\mathcal{L}_{\mathrm{WCE}} = -\frac{1}{N}\sum_{i=1}^{N}\left[\alpha\, y_i \log p_i + (1-\alpha)(1-y_i)\log\left(1-p_i\right)\right]$$

Here, $N$ is the number of samples; $y_i$ represents the label for sample $i$, with 1 denoting the positive (defective) class and 0 the negative class; $p_i$ denotes the model’s predicted probability that sample $i$ belongs to the positive class; and $\alpha$ denotes the weight of the defective category (set to 0.7 in the experiment), which mitigates the class imbalance by boosting the loss contribution of defective samples relative to normal samples, which are weighted by $1-\alpha$. In the WAAM process, defective samples usually account for less than 10% of all samples, and directly using the standard cross-entropy biases the model towards normal samples. Weighted cross-entropy increases the focus on defects through the choice of $\alpha$. When the model’s predicted probability distribution matches the true one-hot vector exactly, the loss reaches its minimum value of 0; the greater the discrepancy between predictions and labels, the larger the loss, indicating a less accurate model.
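A minimal sketch of the weighted cross-entropy is shown below. It assumes the α-balanced form in which defective samples (y = 1) are weighted by α and normal samples by 1 − α, so that α = 0.7 gives defective samples the larger share of the loss; the clipping constant `eps` is an added numerical safeguard, not a parameter from this study.

```python
import math
from typing import Sequence


def weighted_bce(y: Sequence[int], p: Sequence[float], alpha: float = 0.7,
                 eps: float = 1e-12) -> float:
    """Alpha-balanced binary cross-entropy averaged over N samples.

    y[i] = 1 for the defective (positive) class, 0 otherwise;
    p[i] is the predicted probability of the defective class.
    """
    total = 0.0
    for yi, pi in zip(y, p):
        pc = min(max(pi, eps), 1.0 - eps)   # clip to avoid log(0)
        total += alpha * yi * math.log(pc) + (1 - alpha) * (1 - yi) * math.log(1 - pc)
    return -total / len(y)
```

With α = 0.7, an equally uncertain prediction (p = 0.5) costs 0.7/0.3 ≈ 2.33 times more on a defective sample than on a normal one, which is how the weighting counteracts class imbalance.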

Visible and infrared data may have shifted feature distributions due to sensor noise or differences in physical properties. By maximizing the cosine similarity of intermediate-layer features, the model is forced to learn semantic representations shared between modalities, which improves fusion robustness. The Cross-Modal Consistency Loss acts on Stages 4 and 6 to align the feature spaces of the visible and infrared modalities and to reduce the effect of modality-specific noise. It is defined as follows:

$$\mathcal{L}_{\mathrm{con}} = \sum_{l \in \{4,\,6\}}\left(1 - \cos\left(F_v^{(l)}, F_i^{(l)}\right)\right)$$

Here, $F_v^{(l)}$ and $F_i^{(l)}$ denote the visible and infrared features of layer $l$ (Stage 4 and Stage 6), and $\cos(\cdot)$ computes the cosine similarity, which measures the consistency of the direction of the feature vectors. The total loss function is the weighted sum of the weighted cross-entropy loss $\mathcal{L}_{\mathrm{WCE}}$ and the consistency loss:

$$\mathcal{L}_{\mathrm{total}} = \mathcal{L}_{\mathrm{WCE}} + \lambda\,\mathcal{L}_{\mathrm{con}}$$

where $\lambda$ denotes the weighting factor for the cross-modal consistency loss, which is determined by grid search to balance classification performance against feature alignment strength. Each of the two losses has its own role: $\mathcal{L}_{\mathrm{WCE}}$ dominates the optimization direction and ensures the core performance of the model on the defect detection task, while $\mathcal{L}_{\mathrm{con}}$ serves as a regularization term that prevents excessive deviation between inter-modal features and enhances the robustness of the model to noise.
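The consistency and total losses can be sketched as follows. The sketch assumes that the consistency term takes the form 1 − cos(·) per stage (so perfectly aligned features contribute zero), summed over Stages 4 and 6, and that each stage’s features have been flattened into one vector per sample. The argument `lam` is a placeholder for the grid-searched weighting factor, so any value passed in is hypothetical.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float], eps: float = 1e-8) -> float:
    """Cosine similarity between two flattened feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / max(na * nb, eps)


def consistency_loss(vis: Dict[int, List[float]], ir: Dict[int, List[float]]) -> float:
    """Sum over supervised stages (e.g. keys 4 and 6) of 1 - cos(visible, infrared)."""
    return sum(1.0 - cosine(vis[stage], ir[stage]) for stage in vis)


def total_loss(l_wce: float, l_con: float, lam: float) -> float:
    """Classification loss plus the weighted consistency regularizer."""
    return l_wce + lam * l_con
```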

4. Experimental Results and Analysis

4.1. Training Strategies

PyTorch was used to implement the multimodal network architecture described above. An NVIDIA TITAN RTX GPU (NVIDIA Corporation, Santa Clara, CA, USA) was used to train the proposed multimodal model and the models in the subsequent comparison tests. In the training phase, mini-batch gradient descent was employed as the optimization algorithm, bridging batch gradient descent and stochastic gradient descent. It combines the low per-update cost of stochastic gradient descent with the lower gradient variance of batch gradient descent, while allowing efficient vectorized computation over each batch.

Mini-batch gradient descent randomly selects a subset of training samples to compute gradients at each iteration, and these gradients are then used to update the model parameters incrementally. The update rule for mini-batch gradient descent is:

$$\theta \leftarrow \theta - \frac{\eta}{m}\sum_{i=1}^{m}\nabla_{\theta}\,\mathcal{L}\left(h_{\theta}\left(x^{(i)}\right), y^{(i)}\right)$$

Here, $\theta$ represents the model parameters to be optimized, $\eta$ signifies the learning rate, $m$ denotes the size of the mini-batch, $\mathcal{L}$ is the per-sample loss, $h_{\theta}(x^{(i)})$ is the value predicted by the model, $y^{(i)}$ is the true label of the sample, and $x^{(i)}$ is the feature vector of the sample.

The utilization of mini-batch gradient descent allows for a more controlled and potentially faster convergence on large datasets, making it a prudent choice for training deep learning models.
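To make the update rule concrete, the sketch below applies mini-batch gradient descent to a toy logistic model, which stands in for the network purely for illustration. The learning rate `eta`, mini-batch size `m`, number of epochs, and dataset are hypothetical values, not the training configuration of MMFNet. For a sigmoid output with binary cross-entropy, the per-sample gradient with respect to the parameters is (h_θ(x) − y)·x, which is what the inner loop accumulates before each averaged update.

```python
import math
import random
from typing import List, Tuple

Sample = Tuple[List[float], int]   # (feature vector x, label y)


def predict(theta: List[float], x: List[float]) -> float:
    """h_theta(x): a logistic model standing in for the network (illustrative assumption)."""
    z = sum(t * xi for t, xi in zip(theta, x))
    return 1.0 / (1.0 + math.exp(-z))


def mean_bce(theta: List[float], data: List[Sample]) -> float:
    """Average binary cross-entropy over a dataset."""
    eps = 1e-12
    total = 0.0
    for x, y in data:
        p = min(max(predict(theta, x), eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(data)


def minibatch_gd(data: List[Sample], eta: float = 0.5, m: int = 4,
                 epochs: int = 50, seed: int = 0) -> List[float]:
    """theta <- theta - (eta / m) * sum of per-sample gradients over each mini-batch."""
    rng = random.Random(seed)
    theta = [0.0] * len(data[0][0])
    order = list(range(len(data)))
    for _ in range(epochs):
        rng.shuffle(order)                              # fresh random batches each epoch
        for start in range(0, len(order), m):
            batch = [data[i] for i in order[start:start + m]]
            grad = [0.0] * len(theta)
            for x, y in batch:
                err = predict(theta, x) - y             # gradient of BCE w.r.t. the logit
                for j, xj in enumerate(x):
                    grad[j] += err * xj
            theta = [t - eta * g / len(batch) for t, g in zip(theta, grad)]
    return theta
```

Dividing by `len(batch)` rather than a fixed `m` keeps the update an average even when the last batch of an epoch is smaller.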

4.2. Ablation Study on Dual-Modal System

A systematic ablation study was conducted to quantify the contribution of the proposed MMFNet and to distinguish the benefit of dual-modal input from that of the fusion design. The collected paired images were randomly divided into training and validation sets at a 4:1 ratio, and all metrics were evaluated on the validation set only. Each configuration was trained and evaluated in five independent runs under the same setting to reflect run-to-run variability.
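Because each sample is a visible–infrared pair, the 4:1 split can be drawn at the level of pairs so that the two images of one pair never end up in different subsets. A minimal sketch, assuming each pair is identified by a hypothetical frame ID string and that the split is drawn with a fixed random seed:

```python
import random
from typing import List, Sequence, Tuple


def paired_split(pair_ids: Sequence[str], train_ratio: float = 0.8,
                 seed: int = 0) -> Tuple[List[str], List[str]]:
    """Randomly split paired-frame IDs so each visible/IR pair lands in exactly one subset."""
    ids = list(pair_ids)
    random.Random(seed).shuffle(ids)
    n_train = round(len(ids) * train_ratio)   # 4:1 split -> 80% for training
    return ids[:n_train], ids[n_train:]
```

Both images of a pair are then loaded by the same ID, so the visible and infrared inputs seen during validation always come from frames that were never used for training.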

Table 2 compares three model configurations, including the visible-only model, the infrared-only model, and the complete dual-modal model. The infrared-only model achieves higher accuracy than the visible-only model, which is consistent with its ability to capture thermal anomalies related to incomplete inter-track bonding. The visible-only model shows larger variability, which is consistent with its higher sensitivity to transient optical disturbances such as arc glare and spatter. The dual-modal model provides the best overall performance, achieving the highest mean accuracy together with the smallest variability.

To make the run-to-run variability explicit, Figure 11 visualizes the distribution of validation accuracies over the five runs for the three configurations reported in Table 2. In this plot, the spread reflects variability across repeated training and evaluation under the same setting rather than measurement uncertainty. The dual-modal model exhibits not only the highest median accuracy but also strong stability across all runs, with all results remaining above 98%.

Uncertainty of repeated evaluation:

To quantify the uncertainty associated with repeated evaluation, each configuration was trained and evaluated for $n = 5$ independent runs under the same training and validation setting. Let $A_k$ denote the validation accuracy of run $k$ ($k = 1, \dots, n$). The mean accuracy is

$$\bar{A} = \frac{1}{n}\sum_{k=1}^{n} A_k$$

The sample standard deviation is

$$s = \sqrt{\frac{1}{n-1}\sum_{k=1}^{n}\left(A_k - \bar{A}\right)^2}$$

The standard uncertainty of the mean is

$$u = \frac{s}{\sqrt{n}}$$

and the expanded uncertainty at 95% confidence is

$$U = t_{0.975,\,n-1}\,u$$

where $t_{0.975,\,n-1}$ is the Student $t$ factor for $n - 1 = 4$ degrees of freedom ($t_{0.975,\,4} \approx 2.776$). This uncertainty describes run-to-run variability due to stochastic training factors such as initialization and mini-batch ordering, and it does not represent sensor measurement uncertainty.
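The quantities defined above can be computed with the Python standard library as sketched below. The accuracy values in the example are hypothetical and are not the results in Table 2; the default t factor (2.776, the two-sided 95% Student t value for 4 degrees of freedom) applies only to n = 5 runs and would need to be looked up again for a different number of runs.

```python
import math
import statistics
from typing import Sequence, Tuple

# Two-sided 95% Student t factor for n - 1 = 4 degrees of freedom (n = 5 runs).
T_975_DF4 = 2.776


def repeated_run_uncertainty(acc: Sequence[float],
                             t_factor: float = T_975_DF4) -> Tuple[float, float, float, float]:
    """Return (mean, s, u, U) for per-run accuracies.

    s: sample standard deviation (divisor n - 1); u = s / sqrt(n); U = t * u.
    The default t_factor is only valid for n = 5.
    """
    n = len(acc)
    mean = statistics.mean(acc)
    s = statistics.stdev(acc)
    u = s / math.sqrt(n)
    return mean, s, u, t_factor * u


if __name__ == "__main__":
    runs = [90.0, 92.0, 94.0, 92.0, 92.0]   # hypothetical accuracies (%), not measured data
    mean, s, u, U = repeated_run_uncertainty(runs)
    print(f"mean = {mean:.2f}%, s = {s:.3f}, u = {u:.3f}, U = {U:.3f} (95%)")
```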

Using the results in

Table 2, for the visible-only model

and

, giving

. For the infrared-only model

and

, giving

. For the dual-modal model

and

, giving

. These results indicate that the dual-modal model not only achieves the highest mean accuracy but also exhibits lower run-to-run variability than the unimodal baselines under the same evaluation setting.

To isolate the effect of the proposed CMIM, Table 3 reports a dual-modal baseline without CMIM and the complete dual-modal model. The baseline without CMIM improves accuracy relative to the unimodal models, indicating that combining infrared and visible information enhances robustness beyond either single source. Adding CMIM further increases accuracy from 96.05% to 98.34%, demonstrating that the performance gain is not solely due to the availability of dual-modal input but is also attributable to the proposed interactive fusion mechanism.

The defect-class recall further supports the benefit of CMIM for reducing defect risk. As shown in Table 3, incorporating CMIM improves recall from 0.90 to 0.95, indicating fewer missed defects under the same evaluation setting. This improvement complements the overall accuracy gain and supports the effectiveness of the proposed interactive fusion mechanism for robust overlap defect detection.

In summary, the ablation results indicate that the performance advantage of the proposed system is closely tied to the CMIM-based fusion architecture: the CMIM is the key component that allows the complementary information in the dual-modal data to be exploited for robust overlap defect detection.

4.3. Comparative Analysis

Having established the efficacy of the fusion strategy, the proposed MMFNet was benchmarked against widely adopted network architectures, ResNet-50 and Vision Transformer (ViT-Base), to evaluate its overall performance. For a fair comparison, both baseline models were adapted to accept dual-modal input via early fusion (channel-wise concatenation of the visible and infrared images at the input layer), which is the most common baseline approach for multimodal learning.

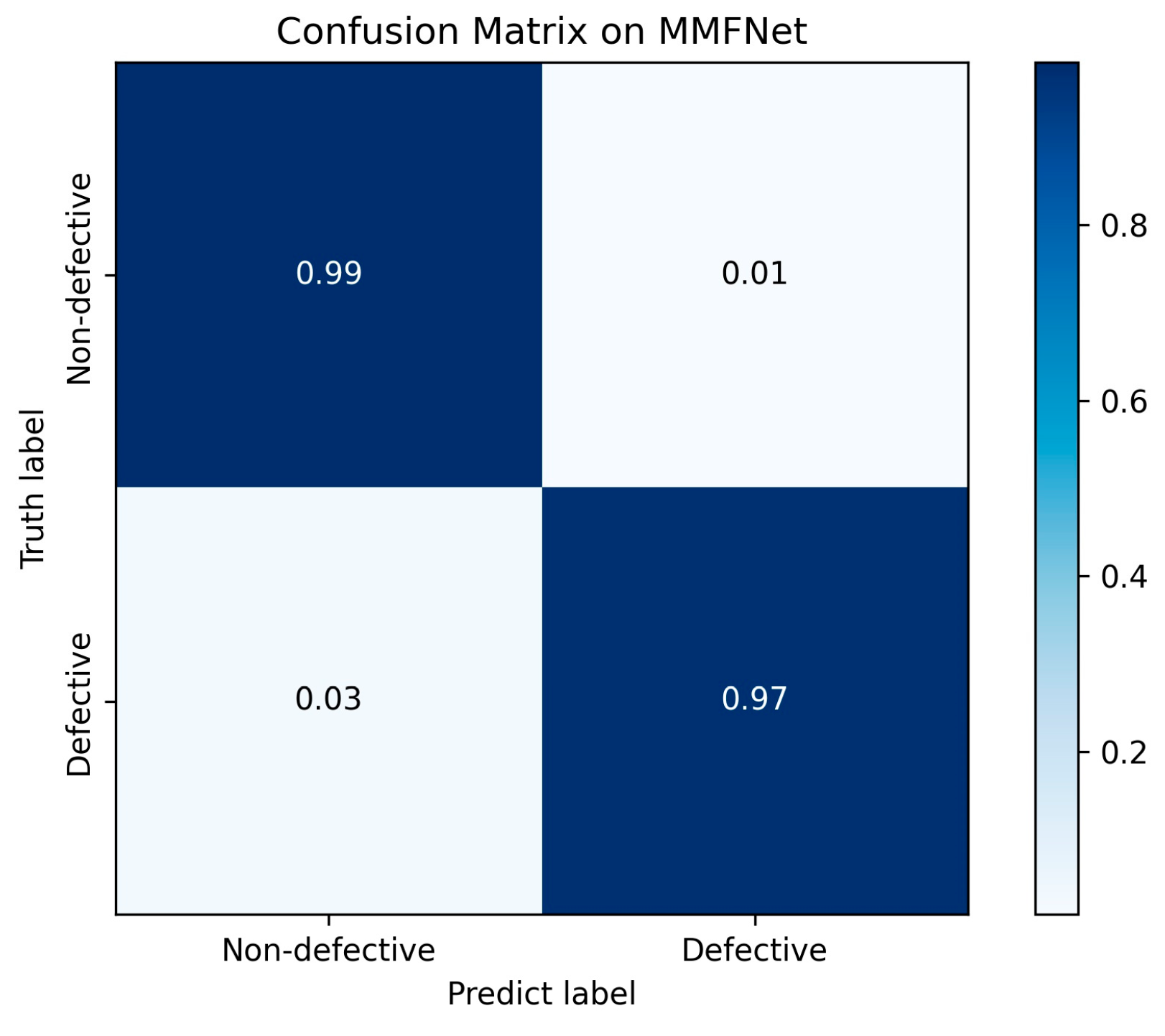

Figure 12 presents the confusion matrices corresponding to the binary classification task under different input configurations. The diagonal entries of each matrix indicate correctly classified instances, while the off-diagonal elements represent misclassifications. A higher concentration along the diagonal signifies improved classification accuracy, thereby providing an intuitive visualization of the comparative performance across unimodal and multimodal models.

The F1 score, computed for each class individually, serves as a balanced measure of classification performance. It is defined as the harmonic mean of precision P and recall R, F1 = 2PR/(P + R), and thus captures the trade-off between false positives and false negatives. A higher F1 score indicates that the model achieves both high precision and high recall, making it particularly suitable for evaluating performance in imbalanced classification scenarios. The Macro-F1 score is the unweighted mean of the per-class F1 scores, so the minority defect class counts as much as the majority normal class.
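For reference, per-class F1 and Macro-F1 can be computed from predicted and true labels as sketched below. The function names and toy labels are illustrative; the sketch treats each class one-vs-rest and defines Macro-F1 as the unweighted mean of the per-class scores.

```python
from typing import Dict, Sequence


def per_class_f1(y_true: Sequence[int], y_pred: Sequence[int],
                 labels: Sequence[int] = (0, 1)) -> Dict[int, float]:
    """F1 = 2PR / (P + R) for each class, computed one-vs-rest."""
    scores: Dict[int, float] = {}
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        scores[c] = (2 * precision * recall / (precision + recall)
                     if precision + recall else 0.0)
    return scores


def macro_f1(y_true: Sequence[int], y_pred: Sequence[int],
             labels: Sequence[int] = (0, 1)) -> float:
    """Unweighted mean of the per-class F1 scores."""
    f1 = per_class_f1(y_true, y_pred, labels)
    return sum(f1.values()) / len(f1)


if __name__ == "__main__":
    y_true = [0] * 8 + [1] * 2           # 8 normal, 2 defective (toy labels)
    y_pred = [0] * 7 + [1] + [1, 0]      # one false alarm, one missed defect
    print(per_class_f1(y_true, y_pred), macro_f1(y_true, y_pred))
```

In the toy example, accuracy is 0.80 while Macro-F1 is 0.6875, because the minority defect class, with an F1 of 0.5, counts as much as the majority class.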

The comparative results are presented in Table 4. The proposed MMFNet model obtained the highest scores across all evaluated metrics. It achieved an accuracy of 98.34%, compared to 95.36% for ResNet-50 and 91.39% for ViT. More notably, the Recall rate for MMFNet was 95.00%, exceeding that of ResNet-50 and ViT. Given that Recall measures the model’s ability to identify all actual defect instances, a higher value is critical for minimizing missed detections in quality monitoring applications.

The Macro-F1 score, which provides a balanced summary of precision and recall, was 99.11% for MMFNet. This represents an increase of 4.55 percentage points over ResNet-50 (94.56%) and 9.40 percentage points over ViT (89.71%). The superior Macro-F1 score indicates that the proposed model maintains a more robust balance between correctly identifying defects and minimizing false alarms, even in the presence of class imbalance.

The results in Table 4 indicate that while general-purpose architectures like ResNet and ViT provide strong baseline performance, a specialized design may be better suited for the specific requirements of multimodal defect detection. The early fusion strategy employed for the baseline models may be insufficient for modeling the complex, non-linear interactions between visible and infrared modalities. In contrast, the MMFNet architecture, which incorporates dedicated backbones and cross-modal interaction modules, appears to more effectively leverage the complementary information present in the two data streams.

4.4. Discussion

The experimental results demonstrate that the dual-modal system achieves a significant improvement in overlap defect detection accuracy compared with single-modality models. The superior performance of infrared imaging in capturing thermal anomalies is consistent with its ability to directly characterize the temperature gradients caused by incomplete inter-track bonding. This advantage is visually corroborated by Class Activation Mapping (CAM) analysis, which shows that the infrared modality produces highly focused activation patterns concentrated within the heat accumulation region (HAR). The infrared CAM is notably insensitive to arc light interference, maintaining precise localization on thermally anomalous regions that correspond to areas of incomplete bonding and abnormal heat retention (Figure 13).

Complementing the thermal analysis, visible light imaging provides distinct morphological information essential for characterizing geometric aspects of defects. The CAM visualization for the visible modality demonstrates a fundamentally different attention pattern, with activation distributed across the overall bead contour and particularly concentrated at the trailing edge of the melt pool. This focus on geometrical transitions and boundary variations captures critical morphological evidence of overlap defects, though the modality remains susceptible to arc light artifacts that can disperse attention patterns.

By combining these complementary modalities, the proposed system leverages both the precise thermal localization of infrared imaging and the detailed morphological sensitivity of visible light. The CAM analysis provides visual evidence of this complementary integration: infrared attention pinpoints the thermal signature within the HAR, whereas visible light attention captures the geometric manifestations at bead boundaries and melt pool transitions. This dual-perspective approach enables a more comprehensive defect characterization than either modality achieves alone, which is consistent with the model’s superior performance and low variability in accuracy across trials.

In terms of computational efficiency, the current implementation provides a practical baseline for online monitoring. On the present hardware, the average inference time of the dual-modal model is 41.7 ms per paired frame. This indicates that the method can support online analysis at a reduced acquisition rate, while end-to-end latency benchmarking and validation under representative industrial conditions remain for future work.
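As a rough check of what the reported latency implies, the sketch below converts the 41.7 ms per paired frame into an upper bound on throughput and computes how many frames would have to be skipped for a given camera frame rate. It assumes strictly sequential, one-frame-at-a-time inference and ignores acquisition, transfer, and preprocessing time; the 50 fps camera rate in the example is hypothetical.

```python
import math


def max_paired_fps(latency_ms: float) -> float:
    """Upper bound on paired frames analysed per second with sequential inference."""
    return 1000.0 / latency_ms


def analysis_stride(camera_fps: float, latency_ms: float) -> int:
    """Smallest k such that analysing every k-th paired frame keeps up with acquisition."""
    return max(1, math.ceil(camera_fps / max_paired_fps(latency_ms)))


if __name__ == "__main__":
    print(f"{max_paired_fps(41.7):.1f} paired frames/s")   # from the 41.7 ms latency above
    print(analysis_stride(50.0, 41.7))                      # hypothetical 50 fps camera
```

Under these assumptions, 41.7 ms corresponds to about 24 paired frames per second, so a hypothetical 50 fps stream would be analysed at every third paired frame.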

4.5. Limitations and Scope

This study focuses on multi-pass WAAM builds and the overlap defect induced by inter-track spacing, and the current validation is limited to this defect scenario. Although arc-based WAAM is generally subject to optical disturbances related to shielding gas flow and transient fume, the dataset in this work was collected under stable and normal CMT operating conditions and does not intentionally cover cases with dense fume or severe optical occlusion. Therefore, the robustness of the proposed system under harsher industrial environments, such as poor ventilation, heavy fume, strong ambient illumination, or mechanical vibration, remains to be validated.

In addition, the experiments were conducted using a single baseline process parameter set in order to induce the target defect mechanism, rather than to map the full parameter space. The proposed model is intended to learn thermal and morphological anomalies associated with insufficient overlap, and generalization across broader process windows and different materials has not yet been systematically verified. Future work will expand validation to broader operating conditions, additional defect types, and more diverse build settings. Future research will also investigate cost-effective alternatives to infrared cameras, such as enhanced visible light sensors with broader temperature response ranges (see

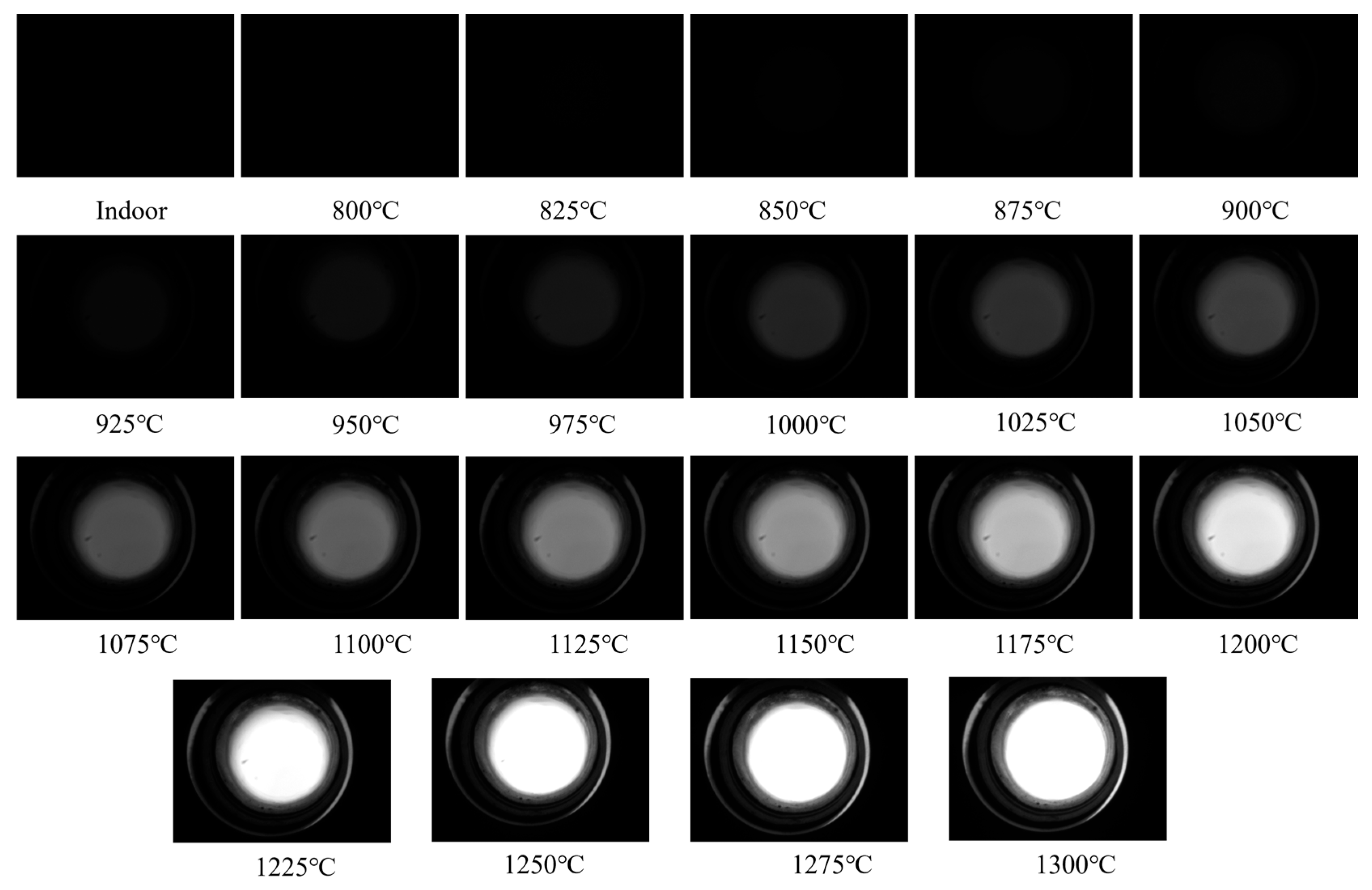

Appendix A for preliminary analysis). If real-time performance claims are required, end-to-end latency and inference throughput should be measured and reported under representative deployment hardware.

5. Conclusions

This study has established a visible–infrared dual-modal monitoring system for detecting overlap defects in wire arc additive manufacturing. The principal conclusions are as follows:

- (1) A dual-modal fusion network, MMFNet, was designed around a Cross-Modal Interaction Module (CMIM) that performs dynamic feature-level fusion. Ablation studies showed that this module is critical for performance, raising accuracy from 96.05% (dual-modal baseline without CMIM) to 98.34%, consistent with its role in suppressing modality-specific noise and enhancing complementary information.

- (2) The proposed MMFNet architecture, incorporating the CMIM, achieves a defect detection accuracy of 98.34%. This represents a significant improvement over the comparable unimodal models, which attained accuracies of 95.76% (infrared) and 92.85% (visible light). The network’s design facilitates effective feature-level fusion of complementary information.

- (3) The model demonstrated high robustness, with low performance variance across trials. Class Activation Mapping provided interpretable evidence that the model’s decisions rely on physically relevant features, namely thermal anomalies in the infrared modality and morphological irregularities in the visible modality.

In summary, this work demonstrates the advantage of intelligent multimodal fusion for quality assurance in additive manufacturing. Within the tested conditions, the proposed framework offers a reliable and interpretable solution for detecting overlap defects.

Future work will focus on validating robustness under representative industrial conditions, including stronger optical disturbances and longer production runs. In addition, expanding the dataset to cover broader process windows, different materials, and additional defect types will be important to assess generalization. Finally, system-level benchmarking and optimization will be conducted to quantify end-to-end latency and to support online monitoring in deployment-oriented settings.