1. Introduction

Numerous researchers have attempted to enhance the sustainability of concrete not only by reducing the amount of carbon dioxide (CO₂) generated from the production of Portland cement, but also by increasing the durability of concrete, which can benefit the environment through the conservation of resources and the reduction of waste [1]. One commonly used strategy is to utilize recycled aggregates and mineral admixtures, such as fly ash, ground granulated blast furnace slag (GGBFS), and silica fume, as a partial replacement for cement or aggregate in concrete [1,2,3]. The use of such industrial by-products has been found to improve the mechanical properties and durability of concrete while reducing CO₂ emissions, conserving energy, and mitigating the adverse environmental effects of concrete [4].

Blast furnace slag is a by-product obtained in the production of iron in a blast furnace. When the molten blast furnace slag is quenched with water and finely ground to a cement particle size, it is transformed into GGBFS. GGBFS, as a latent hydraulic material, reacts with calcium hydroxide (Ca(OH)₂) in the presence of water, forming calcium silicate hydrate (C-S-H), which is primarily responsible for the strength of cement-based materials [5,6]. Through this pozzolanic reaction, the use of GGBFS as a supplementary cementitious material might reduce the early strength, but it increases the ultimate strength and significantly improves the microstructure and durability of hardened concrete [7,8,9].

Several empirical equations and mathematical models have been developed for estimating the compressive strength (CS) and other properties to minimize the experimental task that is required for concrete mix design [

10,

11,

12]. These equations are generally in regression form based on the results of a series of experiments. However, selecting a suitable regression equation (linear, nonlinear, exponential, etc.) for each analysis requires considerable experience and multiple techniques, and the accuracy of analysis decreases as the number of explanatory variables increases [

13,

14,

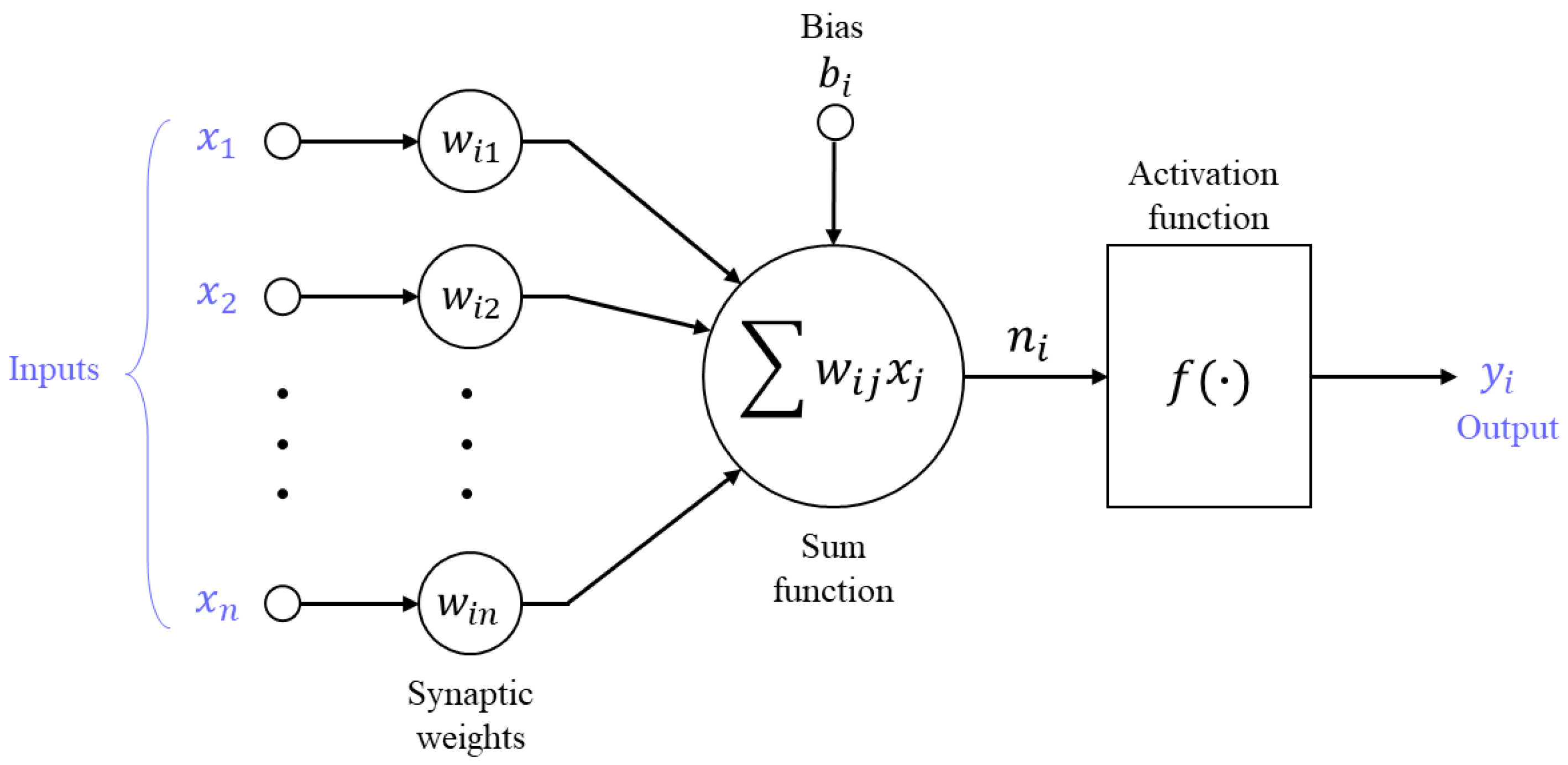

15]. In recent years, numerical modeling for such relationships has been accomplished by constructing an artificial neural network (ANN) model, which is capable of learning and generalizing from examples through the trial-and-error method without any presumptions [

13,

16]. ANNs can not only produce correct or nearly correct solutions to incomplete tasks, but also generate evidential results, even when the data are poor or insufficient [

17,

18]. Owing to these advantages, numerous researchers have applied ANNs for predicting the CS and other properties of concrete [

4,

19,

20,

21]. Bilim (2009) [

21] used ANN models that were trained by several different back-propagation (BP) algorithms to predict the CS of GGBFS concrete based on concrete ingredients and age. Bakhta Boukhatem et al. (2011) [

22] investigated the efficiency factor of GGBFS related with concrete strength by using ANNs.

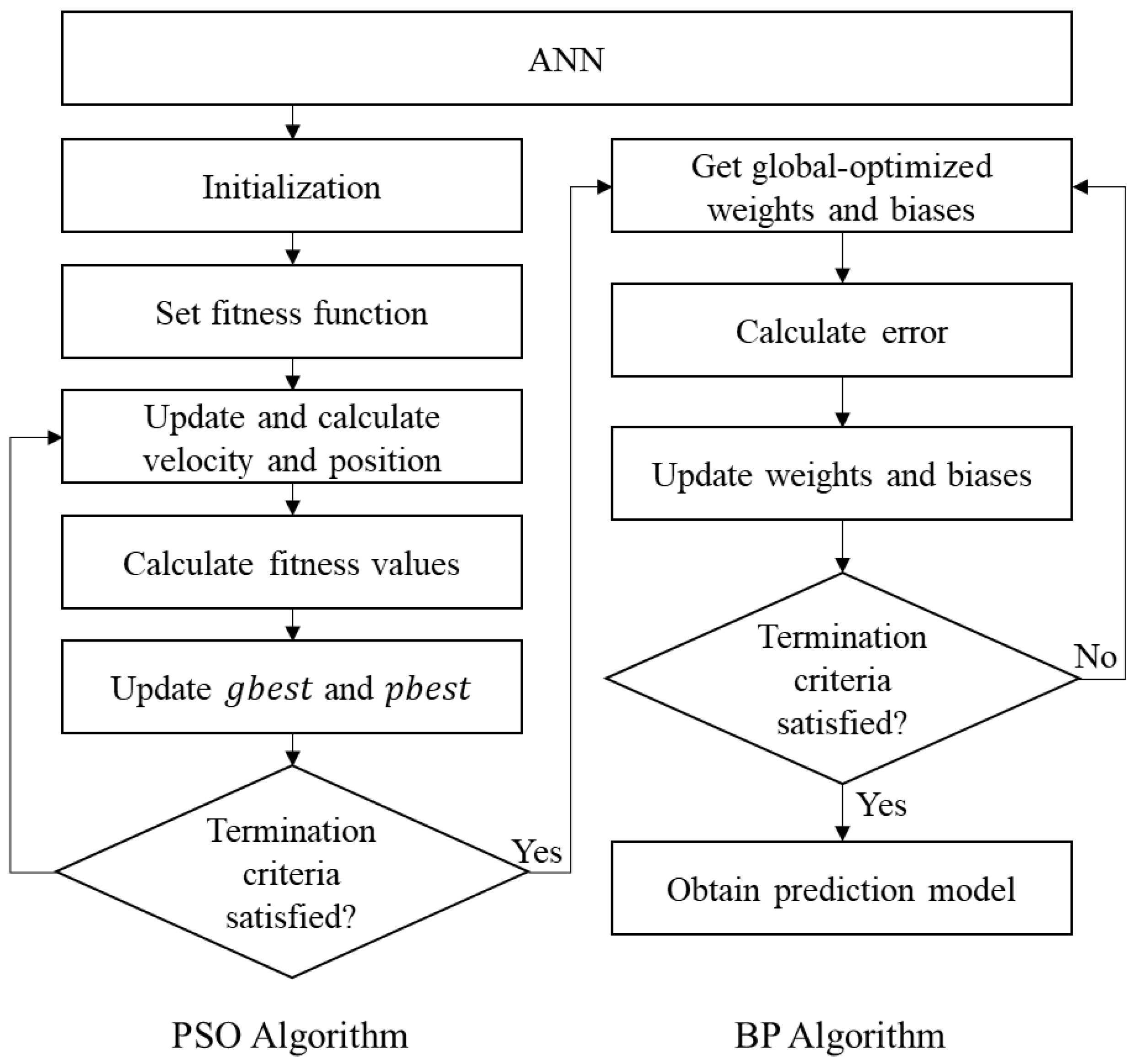

In most studies employing ANN models for the estimation of concrete properties, a BP algorithm was used to train the network [19,20,21]. Nevertheless, the BP algorithm has some disadvantages: it can easily become trapped in local minima, depending on the selection of the initial parameters, and its prediction accuracy can be low and unreliable because it depends strongly on the training data [23,24]. Combinations of BP and several metaheuristic algorithms have been proposed as alternatives to overcome these drawbacks. Among the metaheuristic algorithms, particle swarm optimization (PSO) has often been integrated with the BP algorithm to improve the performance of predictive models owing to its simplicity and wide applicability. The hybrid PSO-BP algorithm combines the global search ability of the PSO algorithm with the fast convergence of the BP algorithm, so that ANN models trained with it can converge to the global optimum more accurately and rapidly than models trained with a single algorithm. The effectiveness and superiority of this hybrid algorithm have been demonstrated in various fields [25,26,27,28]. Bo et al. (2017) [27] proposed a hybrid PSO-BP neural network for wind power forecasting and compared its performance with that of a network trained by the conventional BP algorithm. Their results showed that the prediction performance of the hybrid algorithm was superior to that of the basic BP algorithm. Wang et al. (2015) [28] used the PSO-BP neural network to enhance the performance of an integrated navigation system and indicated that neural networks with the hybrid PSO-BP algorithm can estimate and compensate for the navigation error more effectively than conventional neural networks. However, few studies have used hybrid algorithms to develop ANN models for predicting concrete properties.
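The division of labor between the two algorithms can be illustrated with a small sketch. It is not the implementation used in this study: a toy multimodal function stands in for the training loss of a network, plain gradient descent stands in for BP, and the sequential hand-off (PSO first, gradient refinement second) is only one possible way of coupling the two. All names and parameter values (`pso`, `gradient_descent`, `pso_then_gd`, swarm size, inertia, acceleration coefficients, learning rate) are assumptions chosen for illustration.

```python
import math
import random


def loss(w):
    # Toy multimodal surface (Rastrigin-like) with many local minima;
    # it only stands in for a network's training loss.
    return sum(x * x + 10.0 * (1.0 - math.cos(2.0 * math.pi * x)) for x in w)


def grad(w):
    # Analytical gradient of the toy loss, playing the role of BP gradients.
    return [2.0 * x + 20.0 * math.pi * math.sin(2.0 * math.pi * x) for x in w]


def pso(dim, n_particles=30, n_iter=100, seed=0,
        inertia=0.7, c1=1.5, c2=1.5, bound=5.0):
    """Global search: return the best position found by the swarm."""
    rng = random.Random(seed)
    pos = [[rng.uniform(-bound, bound) for _ in range(dim)]
           for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [loss(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity blends momentum, personal best, and swarm best.
                vel[i][d] = (inertia * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = loss(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest


def gradient_descent(w, lr=0.002, n_iter=200):
    """Local refinement: fixed-step gradient descent from a starting point."""
    w = w[:]
    for _ in range(n_iter):
        w = [x - lr * g for x, g in zip(w, grad(w))]
    return w


def pso_then_gd(dim=2, seed=0):
    # PSO locates a promising basin; gradient descent then refines within it.
    coarse = pso(dim, seed=seed)
    return coarse, gradient_descent(coarse)


coarse, refined = pso_then_gd()
print("loss after PSO:", loss(coarse))
print("loss after refinement:", loss(refined))
```

The learning rate is kept below the reciprocal of the largest curvature of the toy function, so the refinement stage cannot increase the loss reached by the swarm.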

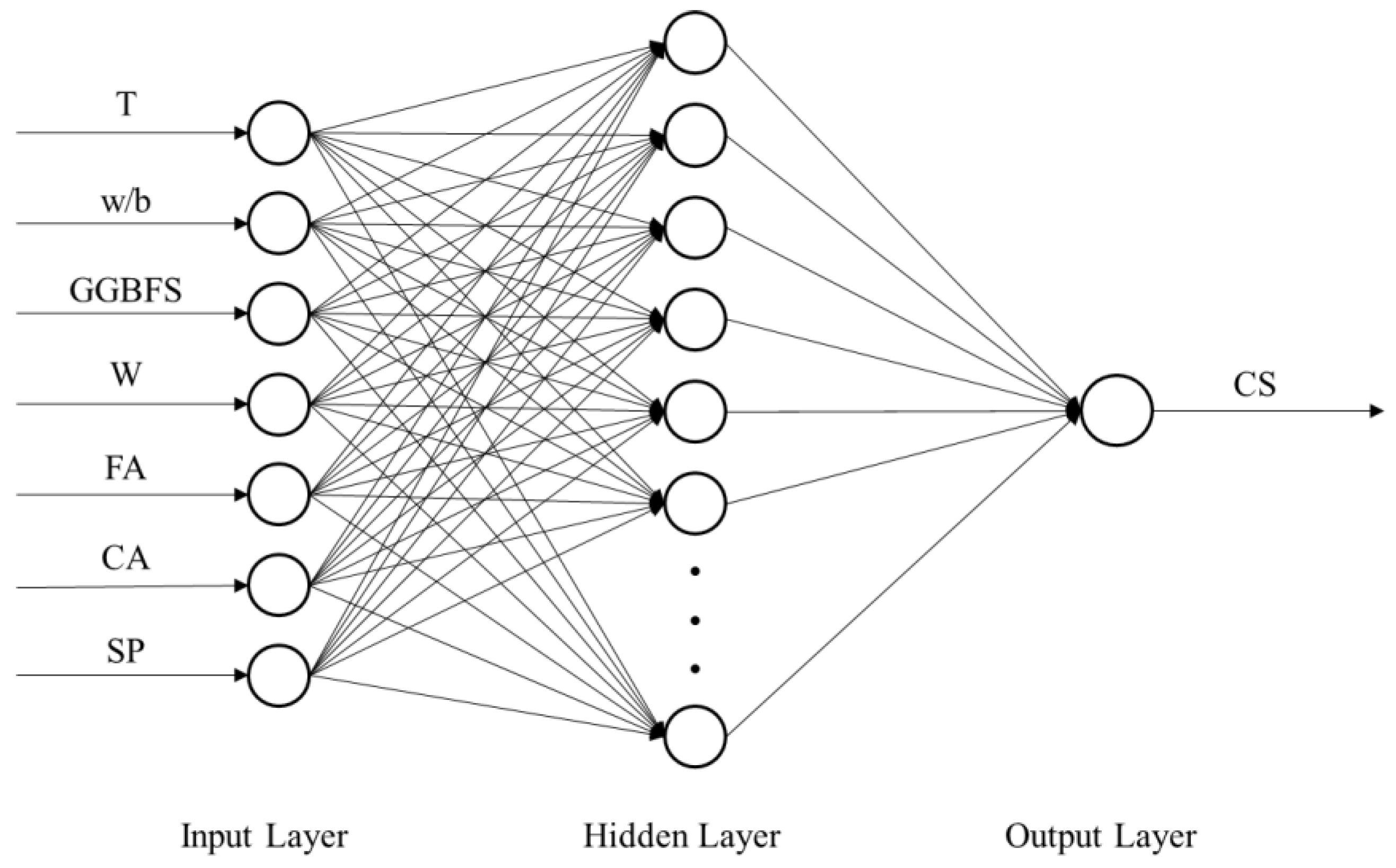

In this study, three different ANN models using the BP, PSO, and hybrid PSO-BP algorithms were developed for predicting the CS of GGBFS concrete based on the concrete mix ingredients and curing temperature. The prediction results of these models were compared to investigate the beneficial effects of combining the BP and PSO algorithms and to select the best intelligent system for estimating the strength of GGBFS concrete.

5. Evaluation of CS Prediction Models

The CS prediction ANN models trained by the BP, PSO, and PSO-BP algorithms were evaluated and compared. Each model was run 15 times with different training and testing data, and the results were evaluated with regard to the prediction accuracy, efficiency, and stability through a threefold procedure.
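The repeated-evaluation step could be set up along the following lines. This is only a sketch: the function name `repeated_splits`, the 20% test fraction, and the seed are assumptions for illustration (the split ratio is not stated in this section), while the sample count of 269 is the dataset size reported in the Conclusions.

```python
import random


def repeated_splits(n_samples, n_runs=15, test_fraction=0.2, seed=0):
    """Return n_runs random (train_indices, test_indices) partitions."""
    rng = random.Random(seed)
    n_test = max(1, round(n_samples * test_fraction))
    splits = []
    for _ in range(n_runs):
        idx = list(range(n_samples))
        rng.shuffle(idx)
        # The first n_test shuffled indices form the test set, the rest train.
        splits.append((sorted(idx[n_test:]), sorted(idx[:n_test])))
    return splits


splits = repeated_splits(269)
print(len(splits), "splits;", len(splits[0][0]), "training and",
      len(splits[0][1]), "testing samples each")
```

Each model would then be trained and scored once per split, so that every algorithm is compared on the same 15 partitions.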

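Four statistical indices were employed to evaluate the performance capacity and prediction accuracy of each CS prediction model: the RMSE, mean absolute error (MAE), mean absolute percentage error (MAPE), and coefficient of determination ($R^2$). These indices are defined as follows:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(\hat{y}_i - y_i\right)^2}$$

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|\hat{y}_i - y_i\right|$$

$$\mathrm{MAPE} = \frac{100\%}{n}\sum_{i=1}^{n}\left|\frac{\hat{y}_i - y_i}{y_i}\right|$$

$$R^2 = \frac{\left[\sum_{i=1}^{n}\left(y_i - \bar{y}\right)\left(\hat{y}_i - \bar{\hat{y}}\right)\right]^2}{\sum_{i=1}^{n}\left(y_i - \bar{y}\right)^2\,\sum_{i=1}^{n}\left(\hat{y}_i - \bar{\hat{y}}\right)^2}$$

where $\hat{y}_i$ is the predicted value of the compressive strength, $y_i$ is the experimental value, $n$ is the total number of data, $\bar{\hat{y}}$ is the mean value of the predicted strength, and $\bar{y}$ is the mean value of the experimental strength. Lower values of the MAE, RMSE, and MAPE and higher values of $R^2$ indicate a better predictability of the models.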
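As a minimal sketch of how these four indices can be computed from paired values, the following uses only the Python standard library. The function name `regression_metrics` and the sample numbers are hypothetical, and $R^2$ is taken here as the squared correlation between the experimental and predicted values, which is the form implied by the use of both mean values in the definitions above.

```python
import math


def regression_metrics(measured, predicted):
    """Return RMSE, MAE, MAPE (in %) and R^2 for paired values."""
    n = len(measured)
    if n == 0 or n != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [p - m for m, p in zip(measured, predicted)]
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mae = sum(abs(e) for e in errors) / n
    # MAPE divides by the measured value, so measured values must be non-zero.
    mape = 100.0 * sum(abs(e) / abs(m) for m, e in zip(measured, errors)) / n
    mean_m = sum(measured) / n
    mean_p = sum(predicted) / n
    cov = sum((m - mean_m) * (p - mean_p) for m, p in zip(measured, predicted))
    var_m = sum((m - mean_m) ** 2 for m in measured)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    # Squared correlation; undefined if either series is constant.
    r2 = cov * cov / (var_m * var_p)
    return {"RMSE": rmse, "MAE": mae, "MAPE": mape, "R2": r2}


# Hypothetical strengths in MPa, for illustration only.
measured = [20.0, 30.0, 40.0, 50.0]
predicted = [22.0, 29.0, 41.0, 48.0]
print(regression_metrics(measured, predicted))
```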

Table 4 presents the performance indices of the best BP ANN, PSO ANN, and PSO-BP ANN models. As shown, among the developed models, the model trained by the hybrid algorithm had the lowest MAE, RMSE, and MAPE, as well as the highest $R^2$, for both the training and testing datasets, which indicates that this model could predict the CS with the highest accuracy. Furthermore, the difference between the statistical performance results for the training and testing data was the smallest for the hybrid model. This result reveals that the PSO-BP network model has better generalization performance than the other models.

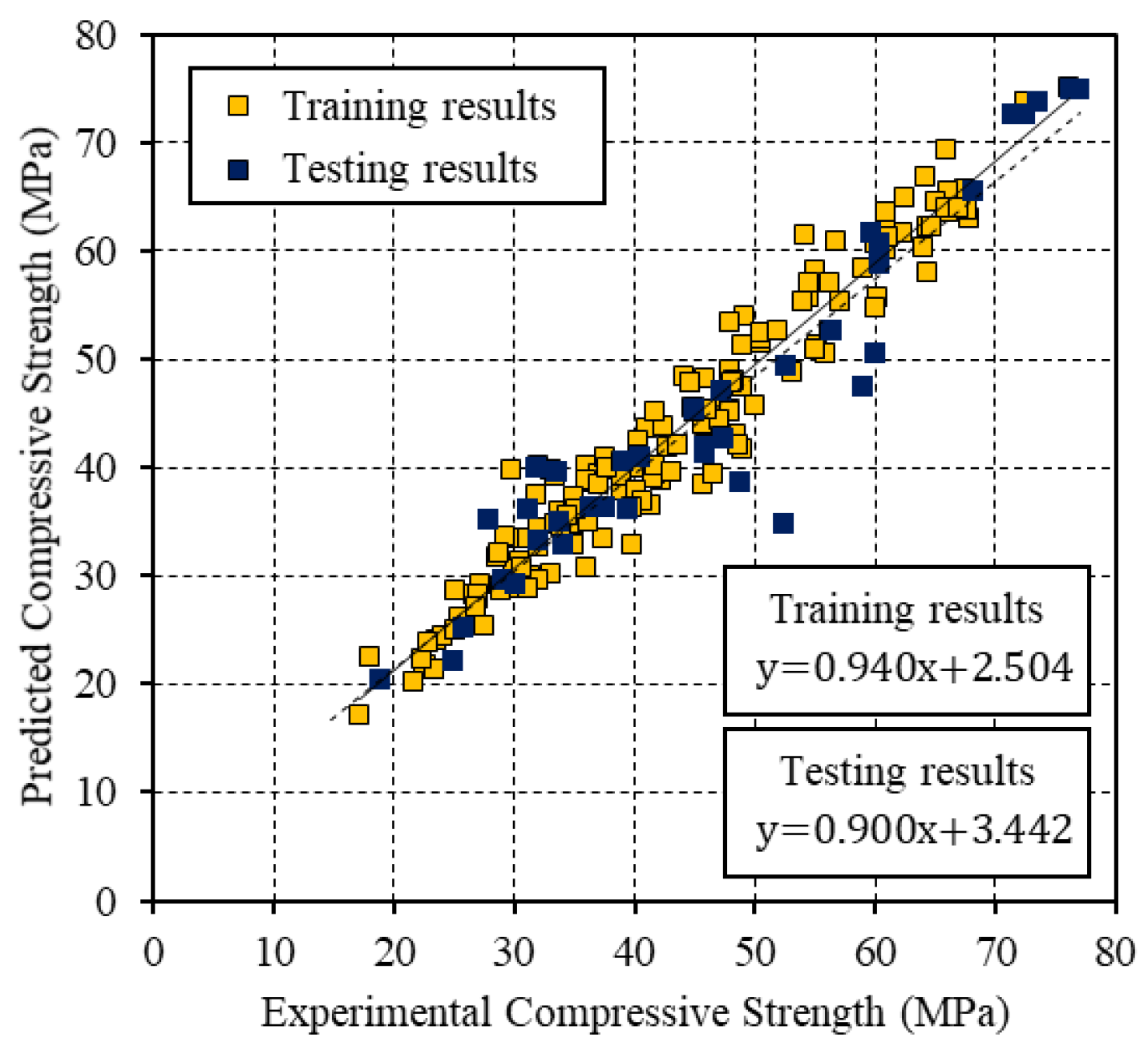

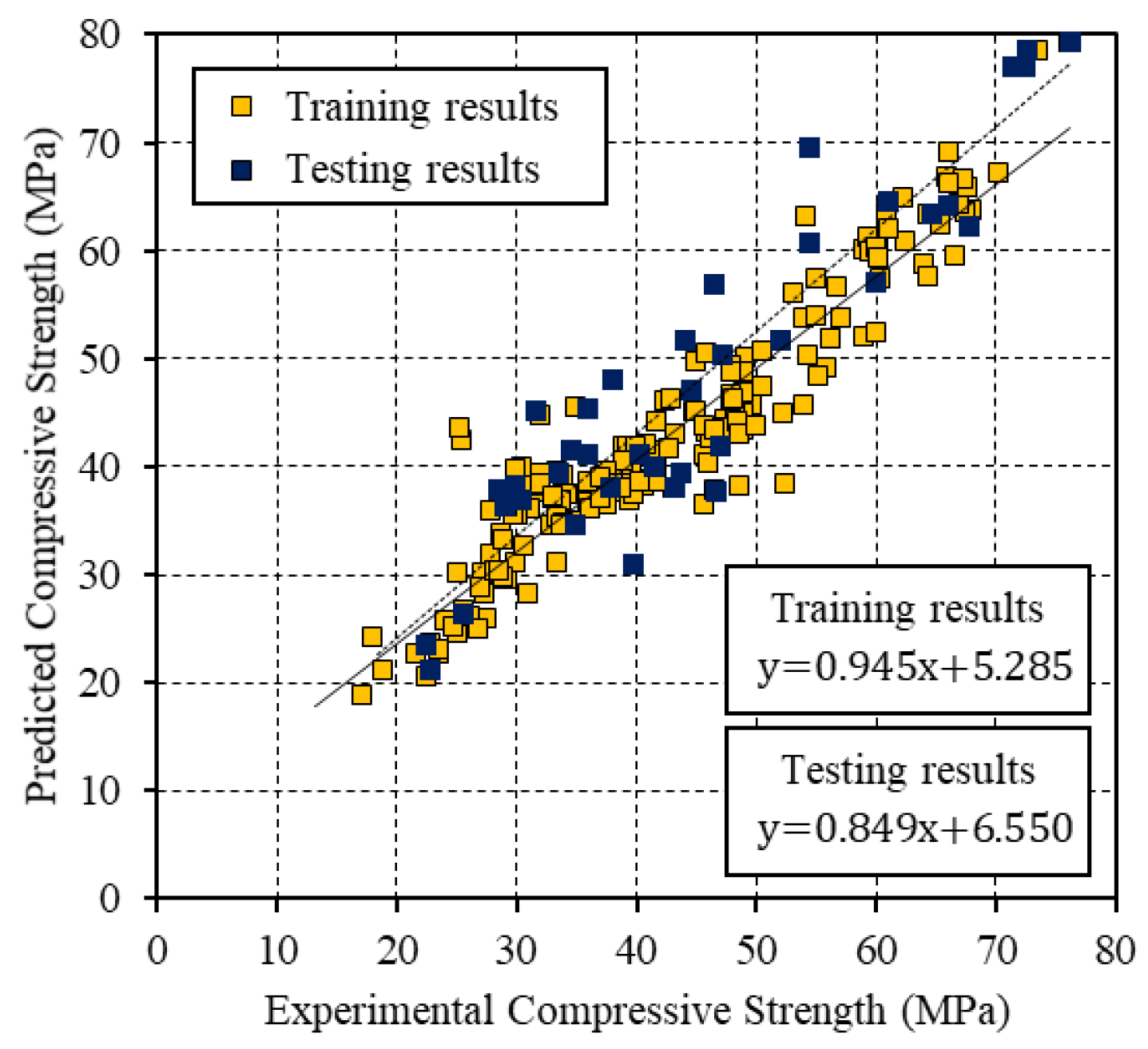

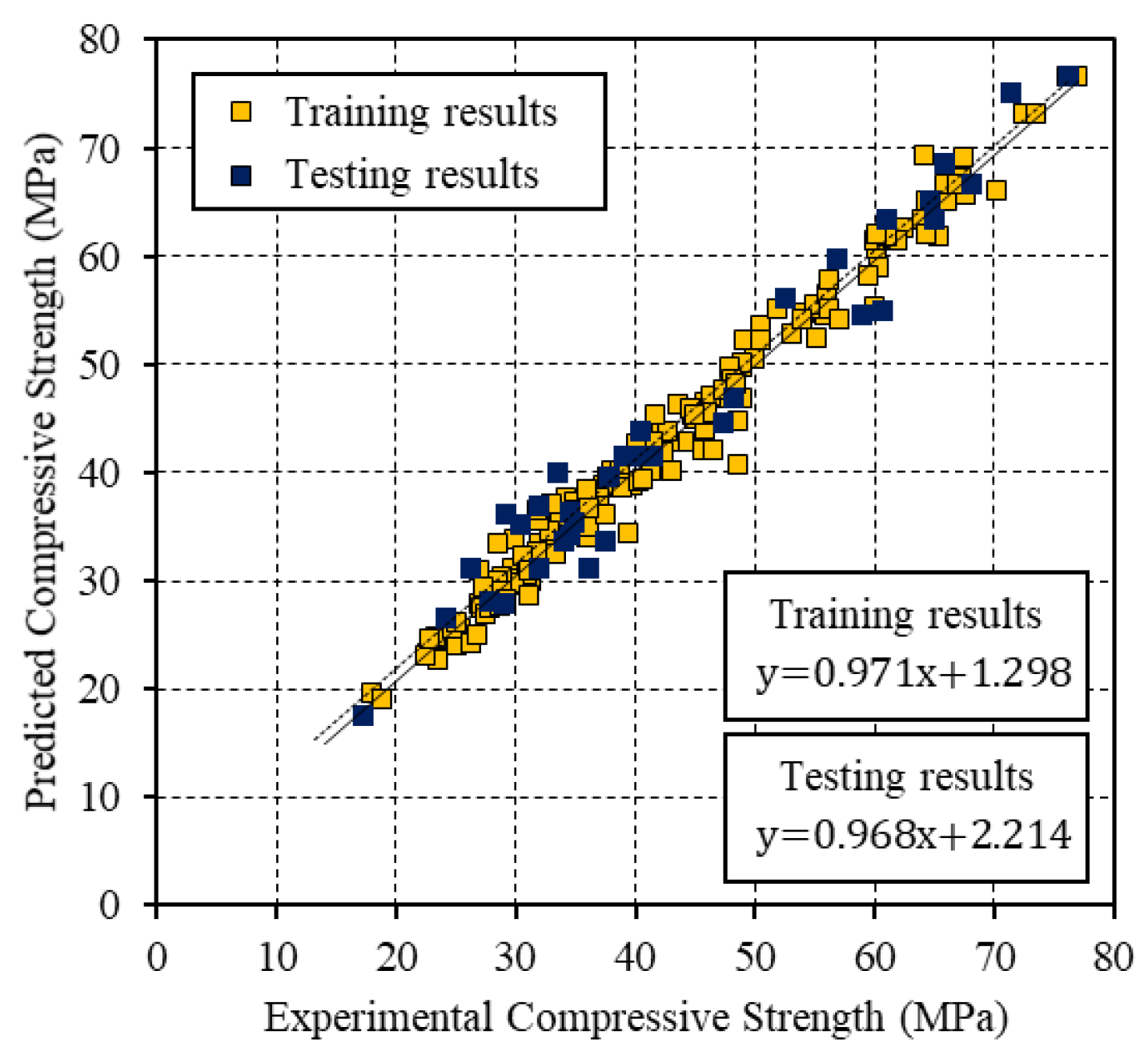

Figure 4, Figure 5, and Figure 6 present the relationships between the experimental CS and the values predicted by the BP, PSO, and PSO-BP networks, respectively. The BP and PSO-BP models exhibited $R^2$ values of >0.9 for both the training and testing datasets, which indicates that these models can provide reliable outputs with a high degree of fitness to the actual values. Thus, they are suitable for predicting the CS of GGBFS concrete based on the mixture constituents and curing temperature. The relatively high $R^2$ values of the proposed PSO-BP model suggest that it has the potential to estimate strength more accurately than the other models.

To perform a detailed assessment, the computational efficiency of each model was evaluated using the SR [64], which is given by the following equations:

$$RE_i = \frac{\left|y_i - \hat{y}_i\right|}{y_i} \times 100\%$$

$$SR = \frac{N_\delta}{N} \times 100\%$$

where $RE_i$ is the relative error and $y_i$ and $\hat{y}_i$ are the measured and predicted values, respectively, of the $i$th data entry in the dataset. $N_\delta$ is the number of data entries whose relative error does not exceed the restrained error bound $\delta$ (i.e., the number of entries within the area $RE_i \le \delta$), and $N$ is the total number of data in the considered set. The SR is the percentage of data that have a relative error equal to or smaller than the specified error criterion, and it has been used to estimate the numerical efficiency and validity of developed models in several studies [64]. The SR of each model was computed while varying the restrained error $\delta$ from 0 to 100%.
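The SR calculation can be sketched as follows. The function names (`relative_errors`, `sr`, `sr_curve`) and the sample values are hypothetical, and an entry is counted when its relative error is equal to or smaller than the bound, following the description of the SR as the share of data with equal or smaller relative error.

```python
def relative_errors(measured, predicted):
    """Relative error of each entry, in percent of the measured value."""
    return [abs(m - p) / abs(m) * 100.0 for m, p in zip(measured, predicted)]


def sr(measured, predicted, delta):
    """Percentage of entries whose relative error is at most delta (in %)."""
    errors = relative_errors(measured, predicted)
    within = sum(1 for e in errors if e <= delta)
    return 100.0 * within / len(errors)


def sr_curve(measured, predicted, deltas=range(0, 101)):
    """SR evaluated over a sweep of error bounds (0 to 100% by default)."""
    return [(d, sr(measured, predicted, d)) for d in deltas]


# Hypothetical strengths in MPa, for illustration only.
measured = [20.0, 30.0, 40.0, 50.0]
predicted = [22.0, 29.0, 41.0, 48.0]
for delta, value in sr_curve(measured, predicted, deltas=(3, 5, 12)):
    print(f"delta = {delta}%: SR = {value}%")
```

The smallest bound at which the curve reaches 100% equals the largest relative error in the set, which is how the maximum errors of the models can be read off such a curve.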

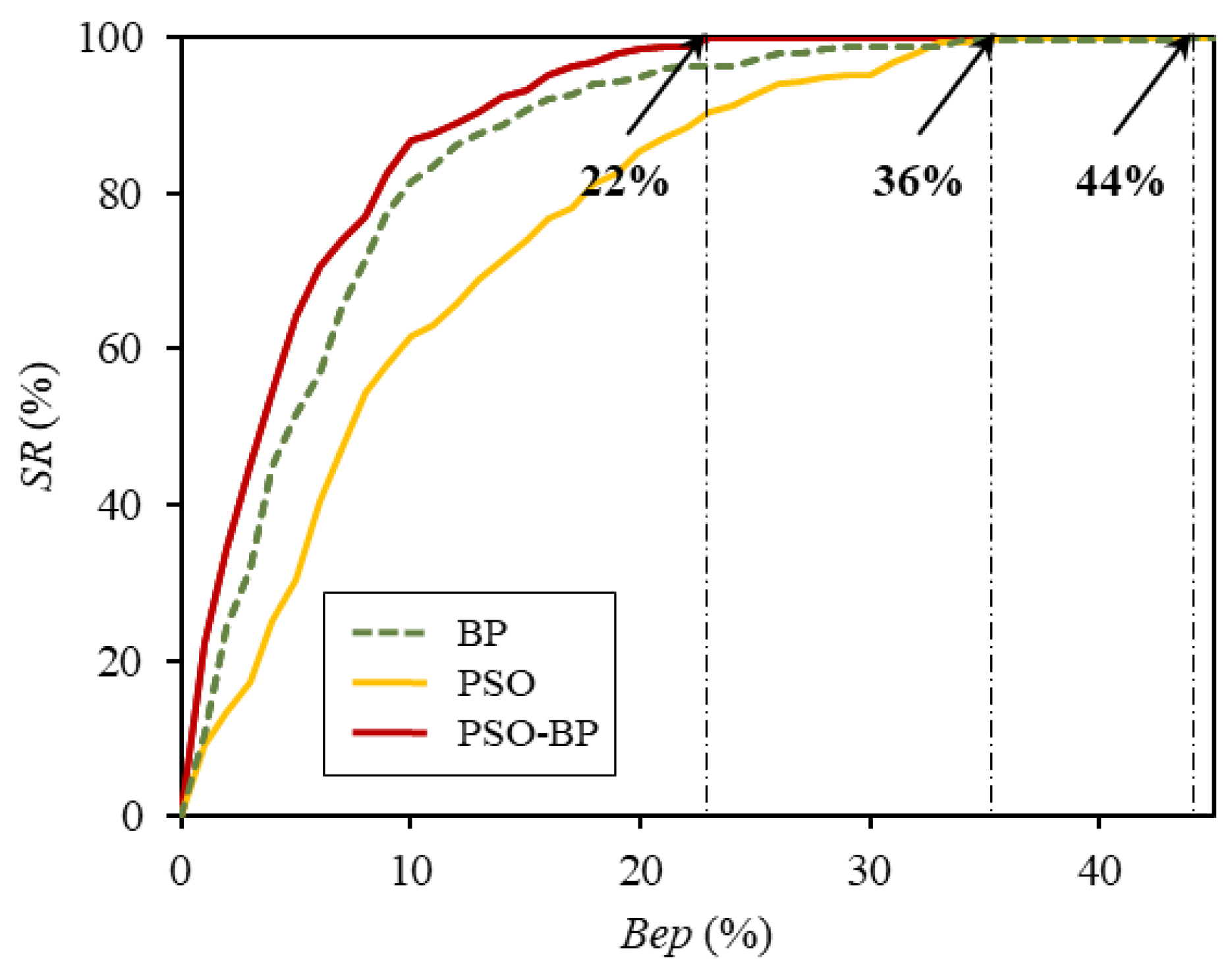

Figure 7 and Table 5 show the obtained results. When the restrained error $\delta$ was 5%, the SR for the PSO-BP ANN model was 64.1%, and those for the conventional BP ANN and the PSO ANN were 49.2% and 30.2%, respectively. These results indicate that 64.1% of the data were predicted by the hybrid model with a relative error of no more than 5%. As shown in Figure 7, for all values of $\delta$, including 5%, the SR of the PSO-BP network model was at least as high as that of the other models. Additionally, for the PSO-BP ANN, the relative error of every data entry was not greater than 22%; that is, when the restrained error $\delta$ was 22%, the SR was 100%. In comparison, the prediction errors of all the data for the ANNs trained by the BP algorithm alone and the PSO algorithm alone were equal to or smaller than 43% and 35%, respectively. These results indicate that the hybrid prediction model has better validity and efficiency than the other models for predicting the CS of GGBFS concrete.

Finally, to evaluate the stability of the developed models, the standard deviations of the RMSE for the models trained with 15 randomly selected training samples were calculated and compared. An ANN-based predictive model can give different outputs and perform differently for the same inputs, depending on the initial weight and bias values or the data-splitting method [24]. This property can cause significant problems in practical applications [53,65]. Therefore, the stability of an ANN model must be validated prior to use [65,66]. In this study, as mentioned previously, each model was trained 15 times using different combinations of training and testing sets, and the standard deviation ($\sigma$) of the RMSE was then computed using Equation (12) to evaluate the stability of the developed models. The standard deviation indicates the sensitivity of the prediction performance of a model to the data used to train and develop it. A model with a higher standard deviation is more strongly dependent on the training observations.

$$\sigma = \sqrt{\frac{1}{N}\sum_{k=1}^{N}\left(\mathrm{RMSE}_k - \overline{\mathrm{RMSE}}\right)^2} \tag{12}$$

Here, $N$ is the total number of training datasets, and $\mathrm{RMSE}_k$ is the RMSE for the $k$th training set. $\overline{\mathrm{RMSE}}$ denotes the mean value of the RMSE for models that are trained by a specific algorithm with 15 randomly selected training samples.
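A short sketch of this stability check is given below. The function name `rmse_spread` and the RMSE values are hypothetical, and the deviation is computed in the population form (dividing by the number of runs), which is an assumption; the sample form that divides by the number of runs minus one would give slightly larger values without changing the ranking of models evaluated over the same number of runs.

```python
import math


def rmse_spread(rmse_values):
    """Return (mean, standard deviation) of the RMSEs from repeated runs."""
    n = len(rmse_values)
    if n == 0:
        raise ValueError("at least one RMSE value is required")
    mean = sum(rmse_values) / n
    # Population form: divide by n (assumed here), not n - 1.
    sigma = math.sqrt(sum((r - mean) ** 2 for r in rmse_values) / n)
    return mean, sigma


# Hypothetical RMSE values from repeated runs, for illustration only.
runs = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
mean, sigma = rmse_spread(runs)
print("mean RMSE:", mean, "standard deviation:", sigma)
```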

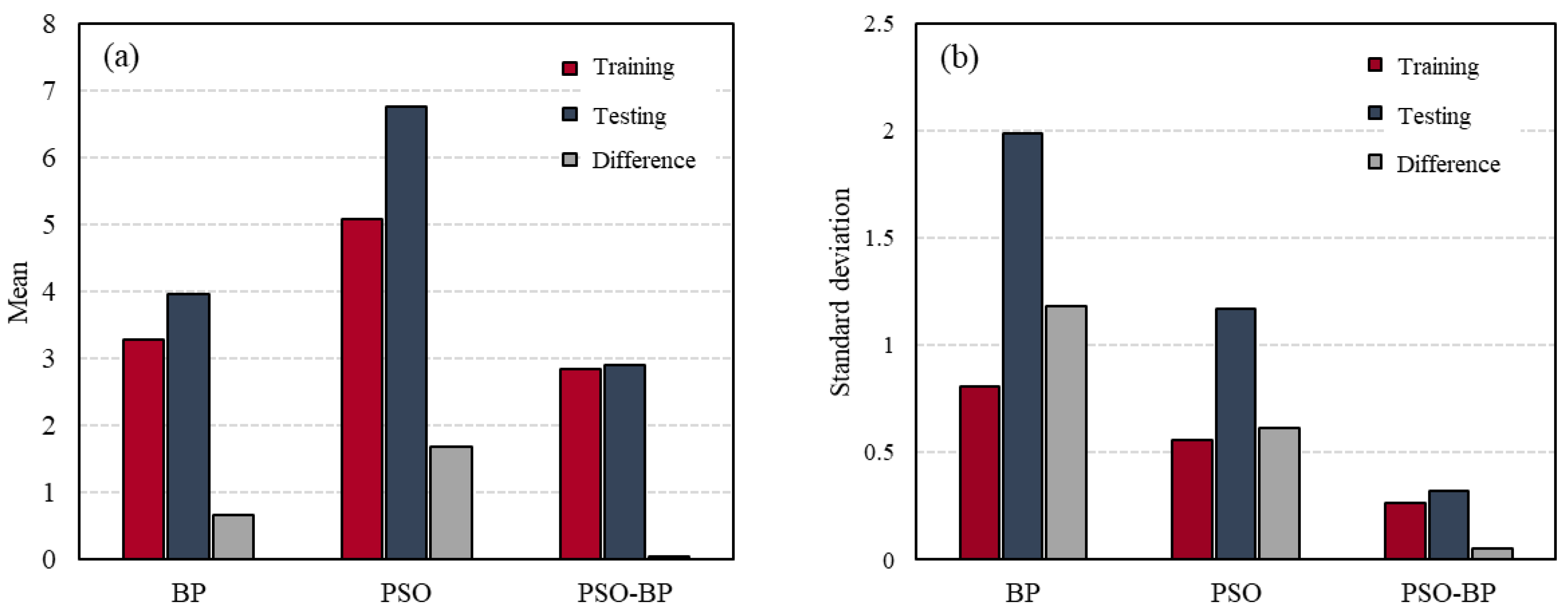

Figure 8 and Table 6 show the standard deviations and mean values of the RMSE for the BP, PSO, and PSO-BP ANN models based on the training and testing datasets. The BP ANN model had lower means and higher standard deviations of the RMSE than the PSO ANN model. These results show that the ANN models trained by the BP algorithm have better prediction accuracy but lower stability than the PSO ANN models. The standard deviations and means of the PSO-BP ANN model for both the training and testing data were smaller than those of the other models, which indicates that the model based on the hybrid algorithm was less influenced by the data splitting. Moreover, the difference between the standard deviations for the two datasets was the smallest for the PSO-BP model. It can therefore be concluded that the PSO-BP neural network model is the most stable and accurate of the three models for estimating the CS of GGBFS concrete.

6. Conclusions

ANN models were constructed to predict the CS of GGBFS concrete based on the concrete mix proportions and curing temperature using three different learning algorithms: BP, PSO, and PSO-BP. The parameters associated with each algorithm or neural network were determined via a trial-and-error method, and the proposed models were trained using 269 data entries divided into two sets: training and testing. The developed PSO-BP neural network model was compared with ANN models trained by either BP or PSO alone to verify its accuracy, efficiency, and stability in prediction and to demonstrate the synergetic benefits of using the hybrid algorithm.

The PSO-BP neural network model had the lowest values of the RMSE, MAE, and MAPE, as well as the highest values of $R^2$, for both the training and testing data, and the deviation between the results obtained from the training and testing data was the smallest for the PSO-BP network. These results indicate that the proposed hybrid model has the best fit not only for the training data, but also for unseen data.

As shown in Table 5 and Figure 7, the hybrid model also had the highest SR for the specified error limit, and its maximum relative error was smaller than those of the other two models. Additionally, when the models were trained with 15 randomly selected training samples, the PSO-BP network model exhibited the lowest standard deviation and mean values of the RMSE, which demonstrates that its prediction performance was the least affected by data division.

Several performance analyses indicated that the PSO-BP ANN model offers more accurate, reliable, and stable prediction of the CS of GGBFS concrete than the other models. That is, it has the best predictability and generalization performance among the models developed in this study. The results indicate that using the hybrid algorithm has synergistic benefits for the performance of ANN models and that the proposed hybrid PSO-BP ANN model is reliable for estimating the CS of GGBFS concrete.