Evaluating Sentiment and Factuality of Offshore Wind Technological Trends Using Large Language Models

Abstract

1. Introduction

2. The UK Paradigm

2.1. Road to Net-Zero

2.2. Offshore Wind Success

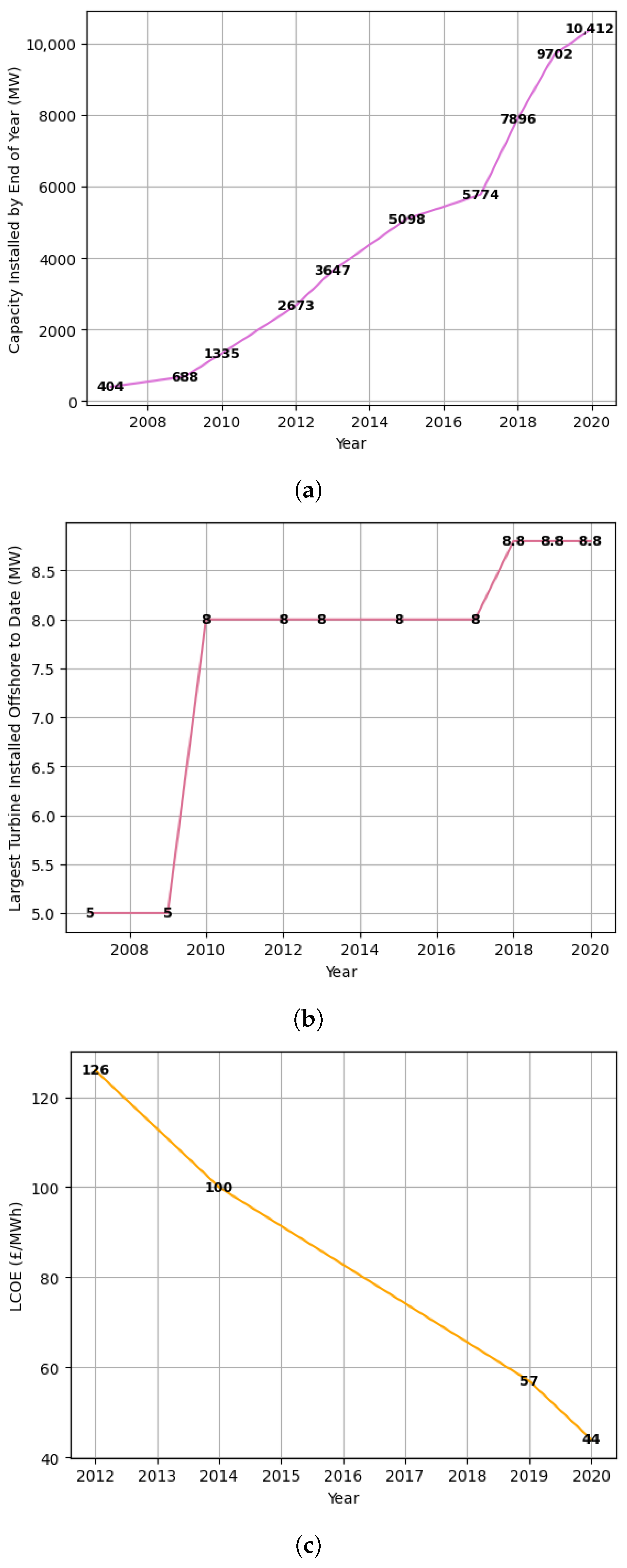

- Developments in Turbine Design: Larger turbines have enabled greater power outputs. Taller towers reach higher altitudes, where wind speeds are stronger and more consistent, and larger rotor diameters sweep a larger area, so more wind energy is captured. Driven by advances in turbine design and accompanying cost reductions, the capacity of the largest UK turbines increased from 2 MW in 2000 to 8.8 MW in 2020 [17]. The total maximum power that all installed generators in the UK can theoretically produce is referred to as installed capacity.

- New Construction Techniques: More efficient construction techniques, such as easier-to-install foundation structures and larger cable sizes, along with process standardisation, have further reduced construction, assembly and installation costs [18].

- Government Support: Offshore wind in the UK is a policy success story. Consistent government support has improved investor confidence, catalysing advances in research, supply chains and deployment. To incentivise investment in large-scale renewable energy, the UK government established the Contract for Difference (CfD) scheme, which has minimised investors' exposure to volatile wholesale prices. The CfD has attracted a healthy pipeline of offshore wind projects bidding into each auction [2]; this has fostered strong competition between developers and has resulted in a 10–21% reduction in overall project costs [2].
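The turbine-design point rests on the standard wind-power relation P = 0.5·ρ·A·v³·Cp, where A = π(D/2)² is the rotor's swept area: captured power grows with the square of the rotor diameter D and the cube of the wind speed v. The sketch below is illustrative only. The power coefficient `cp = 0.45`, the sea-level air density and the power-law shear exponent `alpha = 0.1` are assumed values rather than figures from this study, and real turbines are capped at their rated power above rated wind speed, which these functions ignore.

```python
import math

AIR_DENSITY = 1.225  # kg/m^3, standard sea-level air density (assumed)


def rotor_power(diameter_m: float, wind_speed_ms: float,
                cp: float = 0.45, rho: float = AIR_DENSITY) -> float:
    """Aerodynamic power in watts: P = 0.5 * rho * A * v^3 * Cp.

    cp = 0.45 is an assumed power coefficient (the Betz limit is 16/27).
    Capping at the turbine's rated power is deliberately not modelled.
    """
    swept_area = math.pi * (diameter_m / 2) ** 2
    return 0.5 * rho * swept_area * wind_speed_ms ** 3 * cp


def wind_speed_at_height(v_ref: float, h_ref: float, h: float,
                         alpha: float = 0.1) -> float:
    """Power-law wind profile v(h) = v_ref * (h / h_ref) ** alpha.

    alpha = 0.1 is an assumed shear exponent; real values are site-dependent.
    """
    return v_ref * (h / h_ref) ** alpha
```

For example, `rotor_power(160, 10) / rotor_power(80, 10)` is approximately 4, because doubling the diameter quadruples the swept area, while a 10% rise in wind speed raises power by a factor of about 1.331.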
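How a CfD removes wholesale-price risk can be seen from its two-way settlement: when the market reference price is below the agreed strike price, the generator is paid the difference, and when it is above, the generator pays the difference back. The sketch below assumes, for simplicity, that the generator sells all of its output at exactly the reference price. The strike price, prices and volume are hypothetical, and real CfD contracts include further details, such as inflation indexation of the strike price, that are not modelled here.

```python
def cfd_difference_payment(strike_price: float, reference_price: float,
                           energy_mwh: float) -> float:
    """Payment to the generator in GBP; a negative value means it pays back."""
    return (strike_price - reference_price) * energy_mwh


def generator_revenue(strike_price: float, reference_price: float,
                      energy_mwh: float) -> float:
    """Market sales (assumed at the reference price) plus the CfD top-up or clawback."""
    market_income = reference_price * energy_mwh
    return market_income + cfd_difference_payment(strike_price, reference_price, energy_mwh)


# Hypothetical strike price of 60 GBP/MWh and 1,000 MWh of output:
# revenue is the same whether wholesale prices are low, equal or high.
for price in (30.0, 60.0, 150.0):
    print(price, generator_revenue(60.0, price, 1000.0))  # revenue is 60000.0 each time
```

Because revenue collapses to strike price × output, the generator's income no longer depends on wholesale prices, which is the risk reduction that underpins investor confidence.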

3. Large Language Models

3.1. Emergent Properties

3.2. Prompt Engineering

3.3. Dangers of Stochastic Parrots

3.4. Environmental and Financial Costs

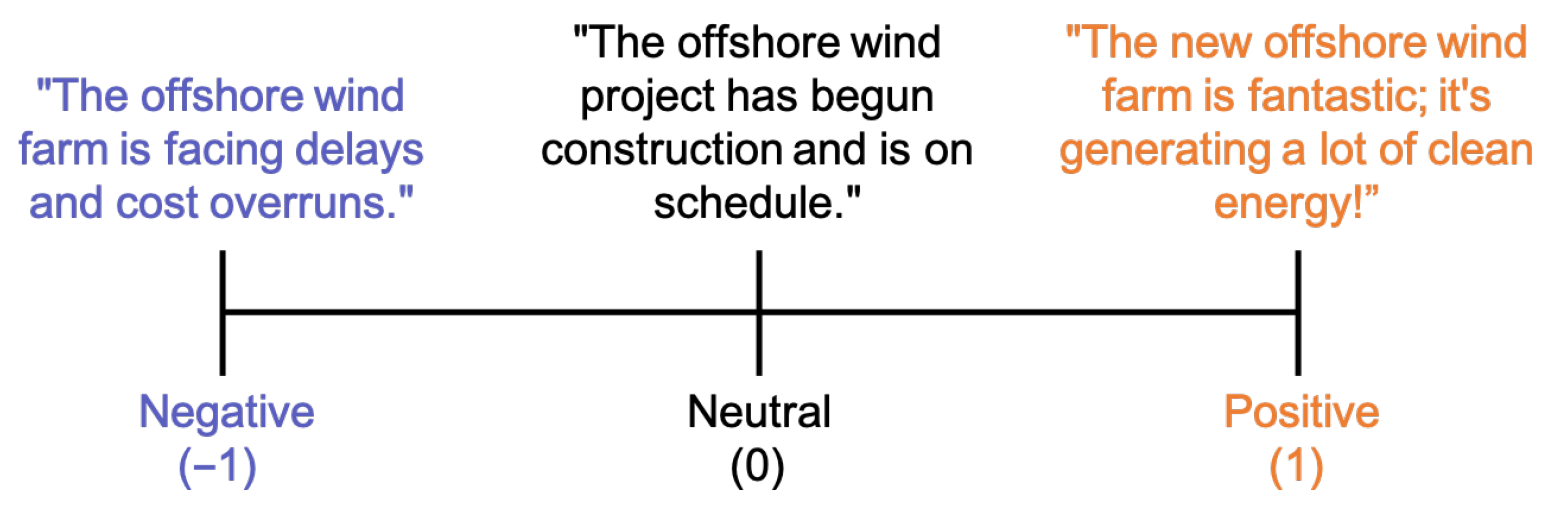

3.5. Sentiment Analysis

- Document Level analyses the sentiment of an entire document. This assumes that each document expresses opinions on a single topic, and it is therefore unsuitable for documents that address multiple topics.

- Sentence Level analyses the sentiment of individual sentences within a document. This gives a more granular understanding of how sentiment shifts within a document.

- Aspect Level analyses sentiment towards the target of each opinion (the aspect), rather than towards language units such as documents and sentences.
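To make the difference in granularity concrete, the sketch below represents aspect-level opinions as (aspect, polarity) records and shows what is lost when a sentence carrying opposing opinions is collapsed into a single label. The `AspectOpinion` type, the example sentence and the "mixed" roll-up rule are hypothetical illustrations, not part of any particular library or of the method used in this study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AspectOpinion:
    aspect: str    # target of the opinion, e.g. "cost"
    polarity: str  # "positive", "negative" or "neutral"


def sentence_level(opinions: list[AspectOpinion]) -> str:
    """Collapse aspect opinions into one label (hypothetical roll-up rule)."""
    polar = {o.polarity for o in opinions if o.polarity != "neutral"}
    if not polar:
        return "neutral"
    return polar.pop() if len(polar) == 1 else "mixed"


def aspect_level(opinions: list[AspectOpinion]) -> dict[str, str]:
    """Keep one polarity per aspect, so opposing opinions stay separate."""
    return {o.aspect: o.polarity for o in opinions}


# Hypothetical sentence: "Costs have fallen sharply, but grid connection
# delays remain a barrier."
example = [AspectOpinion("cost", "positive"),
           AspectOpinion("grid connection", "negative")]
print(sentence_level(example))  # mixed
print(aspect_level(example))    # {'cost': 'positive', 'grid connection': 'negative'}
```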

3.6. LLMs vs. SLMs

3.7. Multi-Agent Debate: LLM Collaboration

3.7.1. Society of Mind

3.7.2. Principal Components

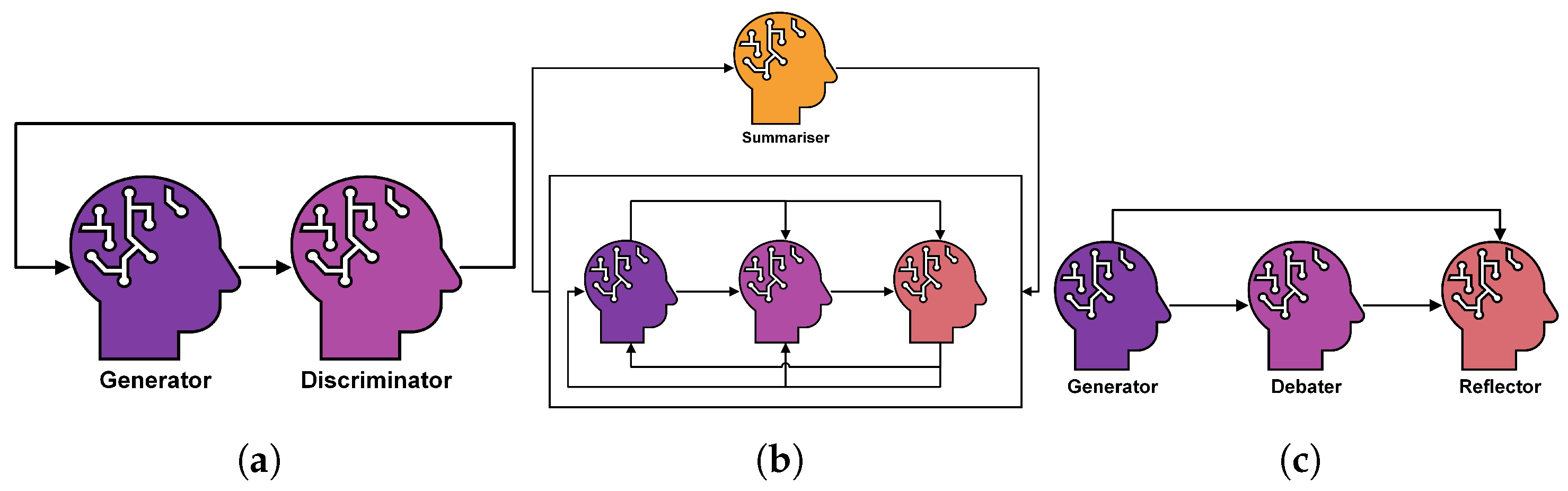

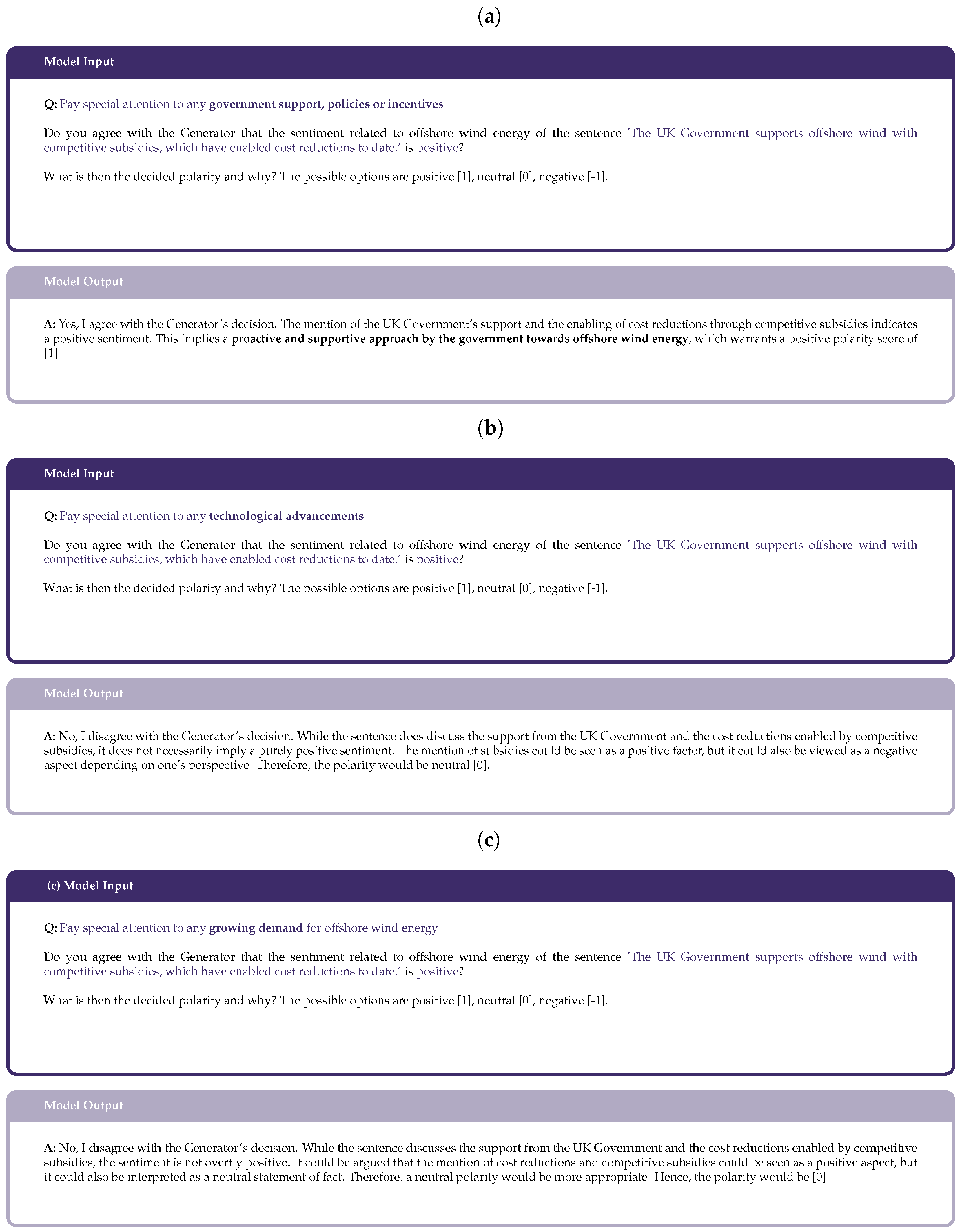

- Debater Agents: These individual LLM instances take input from previous agents and output new perspectives in group discussions. As part of a communication network, information flows between debaters. A key feature of debater agents is their distinct roles, which encourage varied viewpoints.

- Communication Strategy: This refers to how chat history is maintained. Various configurations are emerging, some using Summariser agents to overwrite previous chat history [31] and others employing Reflector agents [32] to review responses and extract lessons. Figure 5 illustrates examples of popular communication strategies.

- Diverse Roles: Given the parallel between LLMs and social behaviour, assigning a distinct persona to each debater is vital for effective MAD [31]. A core optimisation challenge in designing these roles is understanding which traits and skills to distribute to each debater. Xing [29] presents approaches for designing such heterogeneous LLM agents based on prior domain knowledge of the types of errors that occur in sentiment analysis tasks.
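The debater and communication-strategy components can be sketched as follows, assuming a generic `Agent` callable (hypothetical; in practice an LLM API call) and role prompts given as plain strings. The sketch contrasts a strategy that keeps the full transcript with one in which a Summariser agent overwrites the history after every round; Reflector agents and other network topologies are not modelled.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical stand-in for an LLM call: takes a prompt, returns a reply.
Agent = Callable[[str], str]


@dataclass
class Debate:
    debaters: list[tuple[str, Agent]]  # (role prompt, agent) pairs
    summariser: Optional[Agent] = None  # if set, overwrites the history each round
    history: list[str] = field(default_factory=list)

    def run_round(self, question: str) -> None:
        # One-by-one strategy: each debater sees its role, the question and
        # everything said so far, including earlier turns in this round.
        for role, agent in self.debaters:
            prompt = "\n".join([role, question, *self.history])
            self.history.append(f"{role}: {agent(prompt)}")
        # Summariser strategy: replace the transcript with a single summary.
        if self.summariser is not None:
            self.history = [self.summariser("\n".join(self.history))]


roles = [("Cost analyst", lambda p: "positive"),
         ("Policy analyst", lambda p: "negative")]
full = Debate(roles)
summarised = Debate(roles, summariser=lambda text: f"{len(text.splitlines())} turns")
for _ in range(2):
    full.run_round("Polarity of: 'Costs fell by 10-21%.'")
    summarised.run_round("Polarity of: 'Costs fell by 10-21%.'")
print(len(full.history), summarised.history)  # 4 ['3 turns']
```

Keeping the full transcript preserves every argument but grows the prompt each round, whereas summarising bounds the prompt length at the risk of discarding detail.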

3.7.3. Groupthink Phenomenon

4. Research Methodology

- First Layer—Dataset: The first layer sources literature relevant to offshore wind energy in the UK and processes these data into an interpretable format for labelling. The dataset analysed in this study comprises UK-based energy literature collected from 2010 to 2022, totalling approximately 50 documents. These include a diverse range of government and industry reports, academic journal articles, and policy briefings focusing on offshore wind technology, energy transition, and sustainability. Data are sourced primarily from open-access repositories, government archives, and Scopus-indexed publications to ensure representativeness across academic and policy domains. While the dataset provides a comprehensive view of the UK energy landscape, we acknowledge a potential bias toward English-language and UK-centric publications, which may limit broader international generalisability. Nevertheless, this focus enables a more detailed and contextually grounded assessment of offshore wind discourse within the UK energy sector. The data are gathered across a wide time range, to analyse time-series trends, and from a breadth of sources, to limit bias. Pre-processing converts the PDF documents into tokens of text (as detailed in Section 5.1.2).

- Second Layer—Labelling: Following data collection and pre-processing, two consistency-based prompting techniques using pre-trained LLMs, self-consistency and multi-agent debate, are designed and compared against a ground truth dataset constructed from a randomly sampled subset of the overall test data. The proprietary GPT-3.5 and GPT-4 models are evaluated alongside the open-source models Llama 2, Mixtral and Falcon. These consistency-based techniques aim to reduce hallucinations and thereby improve the reliability of the results. The best-performing approach is selected for labelling the offshore wind dataset required for sentiment analysis, where best performing means the design that yields the most accurate results against the ground truth dataset at the lowest computational cost, since energy-efficient models are needed for the future of LLMs. Polarity, subjectivity, category and aspect opinions are extracted from the data to capture fine-grained details of offshore wind features, which makes this an aspect-based sentiment analysis task.

- Third Layer—Offshore Wind Development and Trends: Using the labelled offshore wind dataset, the third layer presents the evolution of polarity and subjectivity, along with the positive and negative keywords, to test whether discourse on offshore wind became more positive and objective over time, following the UK’s successful deployment.

- Fourth Layer—Tracking Sentiment Analysis: Lastly, the fourth layer tracks how sentiment relating to the cost, capacity, and turbine size features develops over time.

5. Experimental Setup

5.1. Dataset

5.1.1. Dataset Collection

5.1.2. Data Pre-Processing

- Extract Text: The text within each PDF document is extracted using the Python fitz module (PyMuPDF).

- Noise Reduction: This removes special characters outside the set [a-zA-Z0-9,.%:;-] (letters, digits and the punctuation marks , . % : ; -), along with HTML tags, header titles, references, and page numbers that appear throughout the data.

- Tokenisation: Using the Punkt sentence tokeniser from the Python nltk library, the text string is segmented into a list of consecutive sentences.

- Handling Noisy Text: The final step in the data pre-processing pipeline mitigates the impact of remaining noisy characters, including duplicate newline characters and negligible sentences.
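The four pre-processing steps above can be sketched as follows. This is a minimal illustration, not the authors' code: `fitz` is the import name of PyMuPDF and `sent_tokenize` is NLTK's Punkt-based sentence splitter (it needs the punkt model data downloaded), both imported lazily so the cleaning helpers run without them. Keeping whitespace, the page-number heuristic and the `MIN_WORDS` threshold for a "negligible" sentence are assumptions, and removal of header titles and references is not shown. The hyphen is placed last in the character class; written as `%-:`, it would define a range from `%` to `:` and admit characters such as `(`, `)` and `/`.

```python
import re

# Keep letters, digits, the punctuation , . % : ; - and whitespace (keeping
# whitespace is an assumption). The trailing hyphen is a literal character.
DISALLOWED = re.compile(r"[^a-zA-Z0-9,.%:;\s-]")
HTML_TAG = re.compile(r"<[^>]+>")
PAGE_NUMBER_LINE = re.compile(r"^[ \t]*\d+[ \t]*$", re.MULTILINE)
MIN_WORDS = 4  # hypothetical cut-off below which a sentence counts as negligible


def extract_text(pdf_path: str) -> str:
    """Extract raw text from every page with PyMuPDF, imported as fitz."""
    import fitz  # imported lazily so the cleaning helpers work without PyMuPDF

    with fitz.open(pdf_path) as doc:
        return "\n".join(page.get_text() for page in doc)


def reduce_noise(text: str) -> str:
    """Remove HTML tags, stand-alone page-number lines and disallowed characters."""
    text = HTML_TAG.sub(" ", text)
    text = PAGE_NUMBER_LINE.sub("", text)
    return DISALLOWED.sub("", text)


def split_sentences(text: str) -> list[str]:
    """Segment text into sentences with NLTK's Punkt-based sent_tokenize."""
    from nltk.tokenize import sent_tokenize  # needs the punkt model downloaded

    return sent_tokenize(text)


def handle_noisy_text(sentences: list[str], min_words: int = MIN_WORDS) -> list[str]:
    """Collapse duplicate newlines and other whitespace, then drop short sentences."""
    cleaned = [" ".join(s.split()) for s in sentences]
    return [s for s in cleaned if len(s.split()) >= min_words]


def preprocess(pdf_path: str) -> list[str]:
    return handle_noisy_text(split_sentences(reduce_noise(extract_text(pdf_path))))
```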

5.1.3. Ground Truth Dataset

5.2. Selection of Large Language Models

5.3. Self-Consistency

Generator LLM Agent

5.4. Multi-Agent Debate

5.4.1. Debate Metrics

- Accuracy quantifies how well the model performs against the ground truth dataset; it is the same metric used for the self-consistency results.

- Answers Changed (AC) measures the model's confidence in changing the previous model's answer. A low AC could mean either that an accurate consensus has been reached between the two agents or that the debater model struggles to impart its own opinion. Likewise, a high AC could mean that the previous model's answers were mostly incorrect or that the debater agent is overconfident. This behaviour significantly affects debating performance, so understanding this metric is important when designing the prompt and selecting the LLM.

- To distinguish between these interpretations, the Answers Changed when Correctly Parsed (ACCP) metric indicates the loss incurred by the debater agent when it re-evaluates the previous model's correct decisions. A low percentage is desirable, since it means the debater's changes lead to more accurate conclusions. Conversely, a high percentage implies that the debater has made arbitrary changes without a strategic basis, which would call for design improvements to enhance the model's decision-making accuracy.
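The three debate metrics can be computed from per-sentence labels as in the sketch below. ACC and AC follow directly from the definitions above; a change to or from an unparsable answer (represented as `None`, an assumption) counts as a change. ACCP is described only loosely, so the function uses one assumed reading consistent with "the loss incurred from re-evaluating the previous model's correct decisions": among changed answers that parsed in both rounds, the share whose previous-round answer was already correct.

```python
from typing import Optional, Sequence

Label = Optional[str]  # None marks an answer that could not be parsed


def accuracy(preds: Sequence[Label], truth: Sequence[str]) -> float:
    """ACC: share of predictions that match the ground truth."""
    return sum(p == t for p, t in zip(preds, truth)) / len(truth)


def answers_changed(prev: Sequence[Label], curr: Sequence[Label]) -> float:
    """AC: share of predictions that differ from the previous debate round."""
    return sum(p != c for p, c in zip(prev, curr)) / len(curr)


def answers_changed_when_correct(prev: Sequence[Label], curr: Sequence[Label],
                                 truth: Sequence[str]) -> float:
    """ACCP under an assumed reading: among changed answers that parsed in both
    rounds, the share whose previous-round answer was already correct."""
    changed = [(p, t) for p, c, t in zip(prev, curr, truth)
               if p is not None and c is not None and p != c]
    if not changed:
        return 0.0
    return sum(p == t for p, t in changed) / len(changed)


truth = ["positive", "negative", "neutral", "positive", "negative"]
prev = ["positive", "negative", "positive", "neutral", "negative"]
curr = ["positive", "positive", "neutral", "positive", None]
print(accuracy(curr, truth), answers_changed(prev, curr),
      answers_changed_when_correct(prev, curr, truth))  # 0.6 0.8 0.3333333333333333
```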

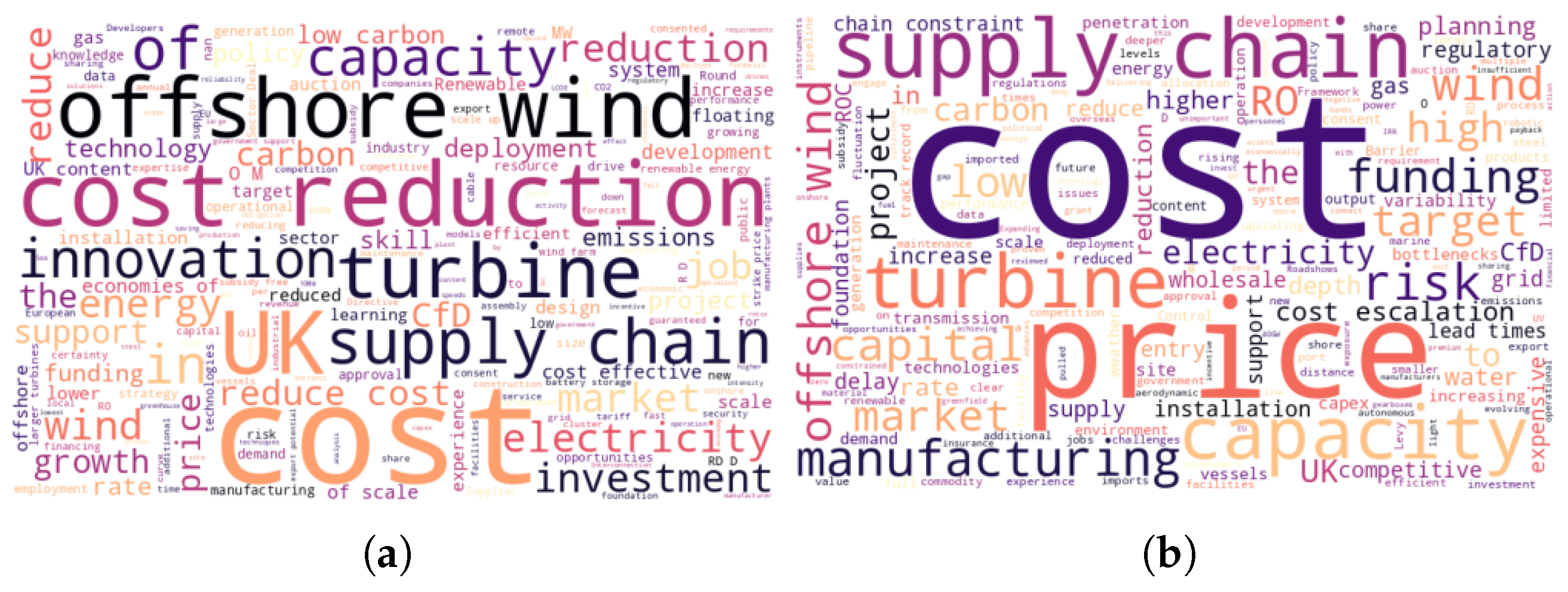

5.4.2. Heterogeneous Role Prompts

5.4.3. Debater LLM Agent

5.5. Sentiment Analysis Pipeline

5.6. Introducing EnergyEval

- Multi-agent debate in the evaluation of sentiment and subjectivity, leveraging heterogeneous, energy-specialised role prompts to improve accuracy and offshore wind-specific reasoning.

- Effective debate is encouraged through a least-to-most prompt design in the polarity task, which structures the debate dialogue to be unbiased and logical, reducing hallucinations and the detrimental effects of groupthink.

- Mixtral and GPT-3.5 are employed in this design as the generator and debater, respectively; the use of different LLMs has not yet been explored in MAD-based sentiment analysis [32].

- The topic modelling task follows a single-prompt strategy using the Mixtral model, given the simplicity of its instruction.
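The generator and debater arrangement can be sketched as a two-step call, with `generator` and `debater` standing in for the Mixtral and GPT-3.5 calls. The prompt wording, the `parse_label` helper and the fallback to the generator's answer when the debater's reply cannot be parsed are hypothetical choices made for illustration, not the prompts used in EnergyEval, and the least-to-most structure of the polarity prompt is omitted.

```python
from typing import Callable, Optional

# Hypothetical stand-in for a chat-completion call: prompt in, reply out.
Model = Callable[[str], str]
POLARITY_LABELS = ("positive", "negative", "neutral")


def parse_label(reply: str, labels: tuple = POLARITY_LABELS) -> Optional[str]:
    """Return the allowed label that appears earliest in the reply, or None."""
    text = reply.lower()
    hits = [(text.find(label), label) for label in labels if label in text]
    return min(hits)[1] if hits else None


def label_sentence(sentence: str, generator: Model, debater: Model,
                   role_prompt: str) -> Optional[str]:
    # Step 1: the generator (Mixtral in EnergyEval) proposes a label with reasoning.
    first = generator(f"Classify the polarity of this sentence and explain why:\n{sentence}")
    # Step 2: a role-prompted debater (GPT-3.5 in EnergyEval) reviews that answer.
    review = debater(
        f"{role_prompt}\nSentence: {sentence}\nPrevious answer: {first}\n"
        "Critically review the previous answer and state your final label."
    )
    # Keep the debater's label if it parses; otherwise fall back (assumed policy).
    final = parse_label(review)
    return final if final is not None else parse_label(first)


def demo_generator(prompt: str) -> str:
    return "The sentence is neutral."


def demo_debater(prompt: str) -> str:
    return "I disagree: falling costs are positive for offshore wind."


print(label_sentence("Costs fell by 10-21%.", demo_generator, demo_debater,
                     "You are an offshore wind cost analyst."))  # positive
```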

6. Results and Analysis

6.1. Overview

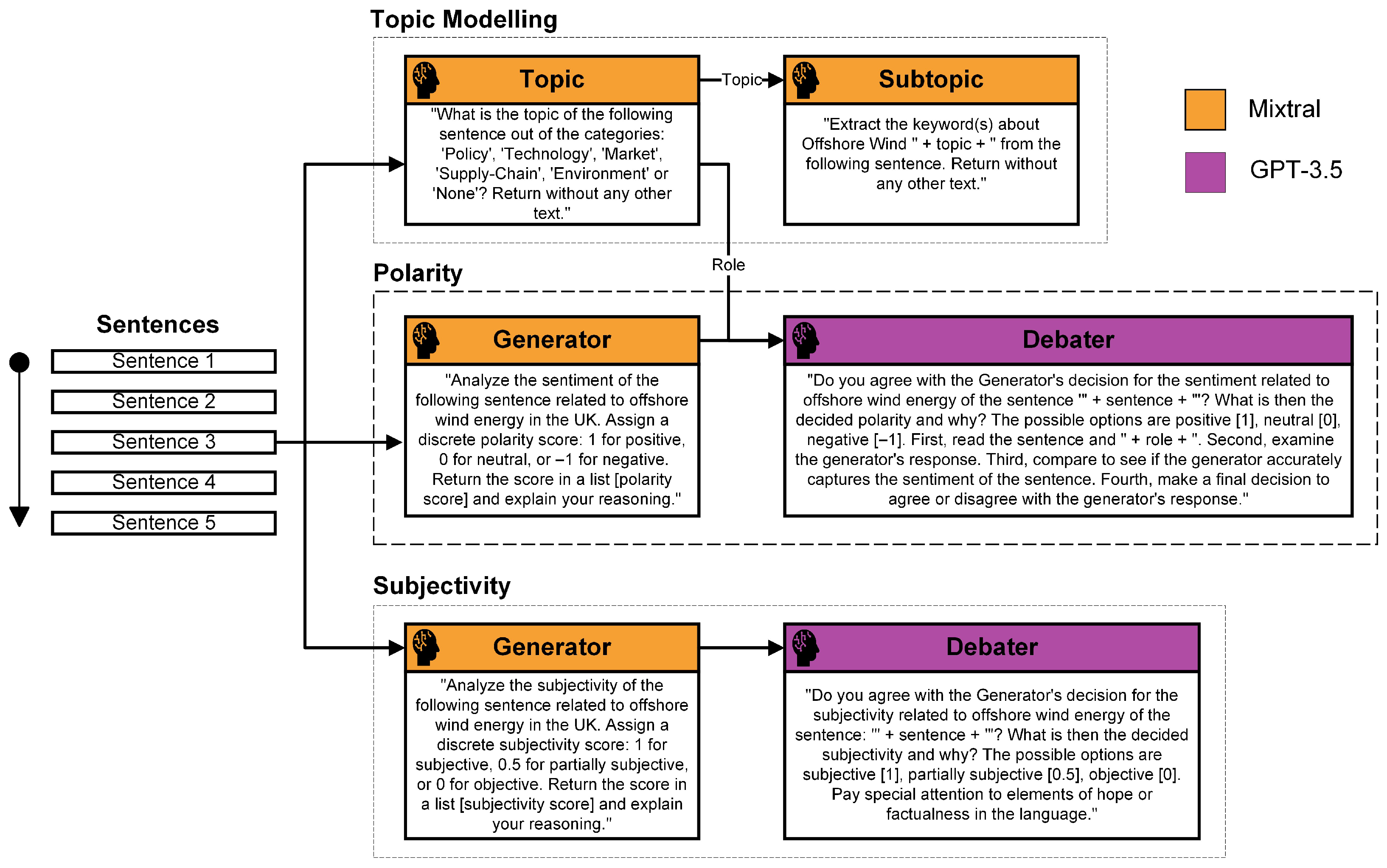

6.2. Task 1: High Level Trends

6.3. Task 2: Identify the Drivers

6.4. Task 3: Analysis of the Drivers

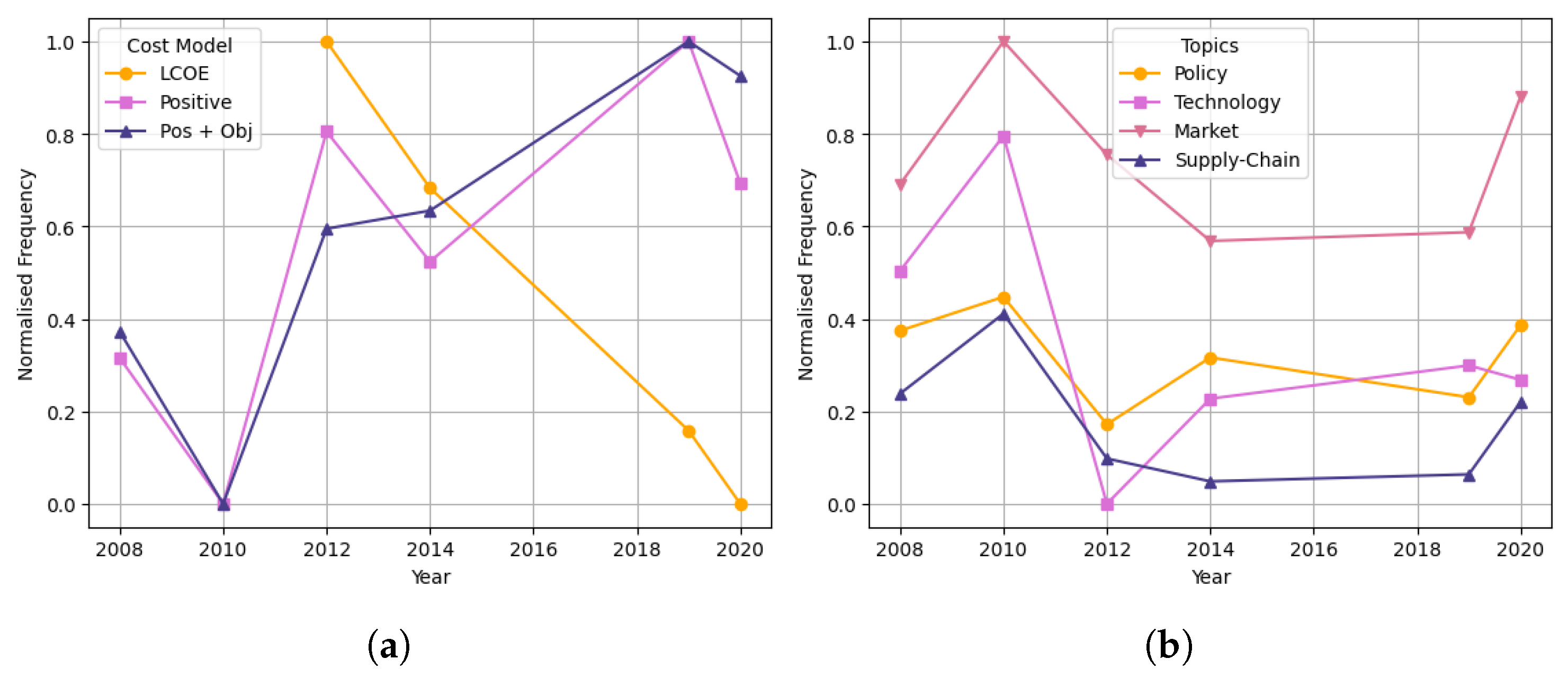

6.4.1. Cost

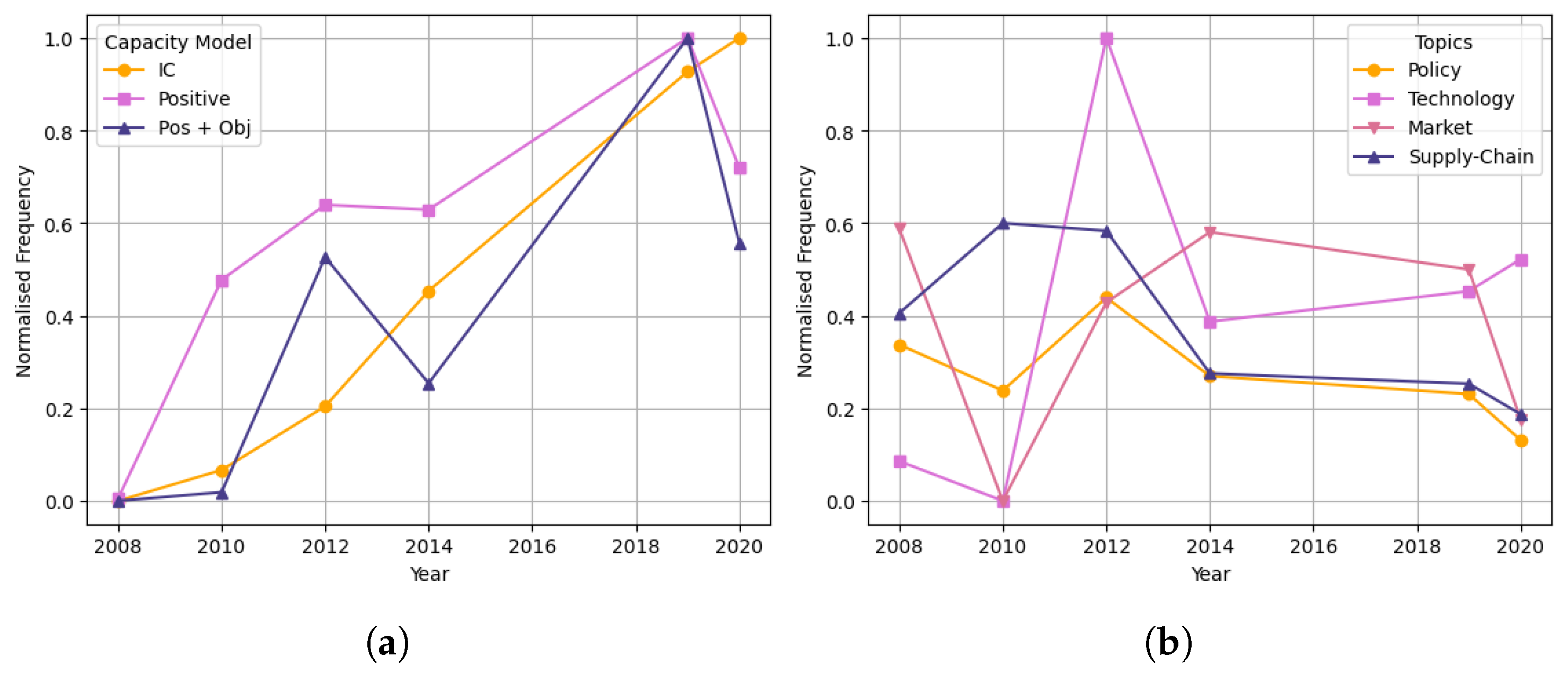

6.4.2. Capacity

6.4.3. Turbine Size

7. Discussion

7.1. EnergyEval in Practice

7.1.1. Extension to Net-Zero Technologies

7.1.2. Extension to Multi-Modal LLMs

7.1.3. Comparison to Existent Methods

7.2. Limitations of EnergyEval

7.2.1. Gathering Reliable Energy Data

7.2.2. Extracting Broader Sentiment Information

7.2.3. Increasing the Number of Debate Rounds

7.2.4. Using Smaller, Energy-Efficient LLMs

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A. Prompt Engineering

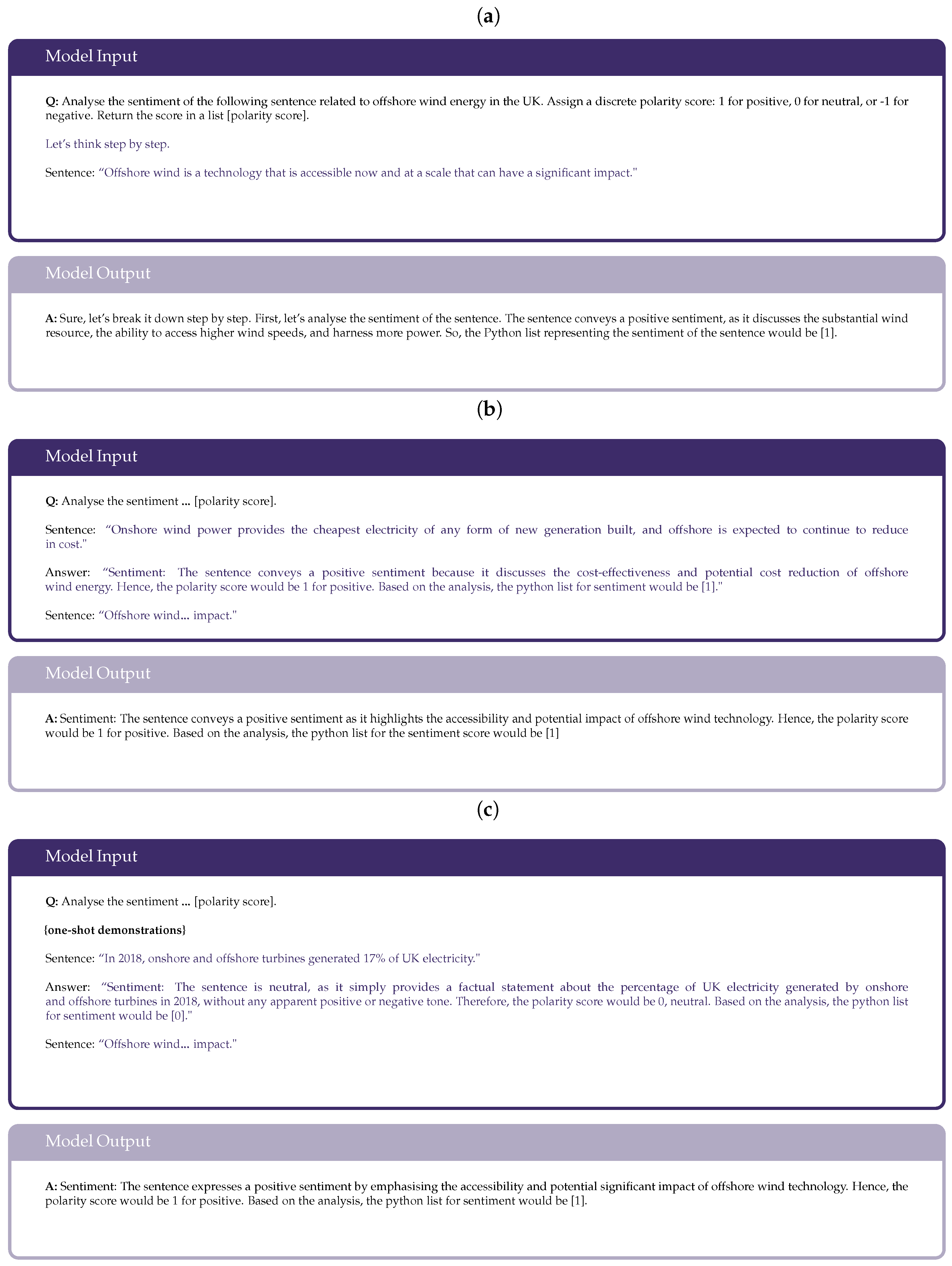

- Comprehensive Description: A detailed definition of the desired task is necessary in prompting, because a basic instruction admits a number of possible interpretations, whereas more descriptive prompts generate more precise results [46]. Under the latent concept theory, this may be because increasing the number of in-context concepts raises the probability of a particular output given the prompt, narrowing the response to a more relevant answer.

- Few-shot Prompting: This is when a number of examples are given in the prompt, alongside a description of the task. The number of demonstrations needed has been found to vary from task to task: for simpler tasks, one-shot prompting is typically sufficient, whereas few-shot prompts tend to improve performance on more novel tasks [46]. Why this is so depends on whether few-shot prompting teaches new latent concepts or helps the model recall existing ones. Despite evidence of the potential of few-shot prompting [10], zero-shot prompts have been shown to outperform few-shot prompts in certain scenarios [47].

- Chain of Thought: CoT prompts the model to provide intermediate reasoning steps that guide its response [46]. This approach has produced substantial accuracy gains, such as an increase from 17% to 78.7% on zero-shot arithmetic tasks [48].

  - (a) Zero-shot CoT: This is an instance of CoT in which the task instruction is followed by ‘Let’s think step by step’; ‘zero-shot’ refers to the absence of example reasoning steps.

  - (b) Few-shot CoT: As in few-shot prompting, few-shot CoT contains a number of manual demonstrations, each with its reasoning included in the answer. The disadvantage is the effort of writing these examples, each of which requires correct manual reasoning.

- Self-Consistency: The self-consistency prompting method aims to make the model’s responses consistent with each other by replacing the naive greedy decoding used in chain-of-thought prompting with the sampling of multiple reasoning paths [49]. It follows a three-stage process: first, the language model is prompted using chain of thought; second, multiple diverse responses are sampled for the same prompt; third, the most consistent answer is selected by majority vote over all reasoning paths.

- Generated Knowledge: This approach prompts the model to generate potentially useful information prior to the task instruction [46], leveraging the pre-trained knowledge within LLMs to provide additional context. This method can be interpreted as increasing the output probability by adding more latent concepts to the context from the model’s pre-trained ‘subconscious’.

- Least-to-Most: Least-to-most prompting consists of a series of prompts that build upon each other, each representing a fundamental step towards the desired task, which reduces ‘cognitive overload’.

- Tree of Thoughts: ToT follows a problem-solving process: an initial input prompts the model to outline the steps needed to solve the problem, and subsequent prompts let the model work through the problem step by step. Unlike least-to-most, this methodology is based on the model’s thought process prior to the task; hence, it is more versatile and less specific to a particular problem.

- Retrieval Augmentation: This incorporates up-to-date external information into the prompt, treated as foundational knowledge for the model [46]. The aim of this method is to reduce hallucinations.

- Role Prompting: This method involves assigning the model a specific role to play, which has been shown to be effective in aligning to a desired output [46].
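The self-consistency procedure described above can be sketched as follows. `sample` stands in for a single LLM call made with a non-zero sampling temperature, and the "Answer:" reply format and the `final_answer` parser are assumptions for illustration; the essential part of the technique is the majority vote over the final answers of several sampled reasoning paths.

```python
from collections import Counter
from typing import Callable, Optional

# Hypothetical sampler: one LLM call at temperature > 0, so replies can differ.
Sampler = Callable[[str], str]


def final_answer(reply: str) -> Optional[str]:
    """Extract the text after the last 'Answer:' marker (assumed reply format)."""
    if "Answer:" not in reply:
        return None
    return reply.rsplit("Answer:", 1)[1].strip().lower() or None


def self_consistency(sample: Sampler, cot_prompt: str, n_samples: int = 5) -> Optional[str]:
    # Stages 1 and 2: sample several chain-of-thought reasoning paths for one prompt.
    answers = [final_answer(sample(cot_prompt)) for _ in range(n_samples)]
    # Stage 3: majority vote over the parsed final answers (ties go to the first seen).
    votes = Counter(a for a in answers if a is not None)
    return votes.most_common(1)[0][0] if votes else None


replies = iter([
    "Costs fell, which is good news. Answer: positive",
    "The tone is factual. Answer: neutral",
    "Cheaper projects help deployment. Answer: Positive",
])
print(self_consistency(lambda _: next(replies), "Let's think step by step.", n_samples=3))  # positive
```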
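A least-to-most chain can be sketched as a loop in which each sub-prompt sees the answers to the earlier ones. The three sub-questions below are hypothetical examples for a polarity task, not the prompts used in this work, the decomposition is supplied by hand rather than generated by the model, and `model` is a stand-in for an LLM call.

```python
from typing import Callable

# Hypothetical stand-in for an LLM call: prompt in, reply out.
Model = Callable[[str], str]


def least_to_most(model: Model, context: str, subquestions: list[str]) -> list[str]:
    """Answer sub-questions in order, feeding each answer into the next prompt."""
    transcript = context
    answers = []
    for question in subquestions:
        prompt = f"{transcript}\nQ: {question}\nA:"
        answer = model(prompt)
        answers.append(answer)
        transcript = f"{prompt} {answer}"  # later steps build on earlier answers
    return answers


steps = [
    "Which offshore wind aspect does the sentence discuss?",
    "Is the statement an opinion or a fact?",
    "What is the polarity of the sentence towards that aspect?",
]
# A dummy model that reports how many questions it can see in its prompt.
result = least_to_most(lambda p: str(p.count("Q:")), "Sentence: Costs fell by 10-21%.", steps)
print(result)  # ['1', '2', '3']
```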

References

1. Poynting, M. Climate Change: Is the UK on Track to Meet Its Net Zero Targets? May 2024. Available online: https://www.bbc.co.uk/news/58160547 (accessed on 3 April 2024).

2. McNally, P. An Efficient Energy Transition: Lessons from the UK’s Offshore Wind Rollout. February 2022. Available online: https://www.institute.global/insights/climate-and-energy/efficient-energy-transition-lessons-uks-offshore-wind-rollout (accessed on 1 January 2024).

3. OpenAI. GPT-3.5 Turbo. Available online: https://chatgpt.com/pricing (accessed on 14 February 2024).

4. Bloomberg. Introducing BloombergGPT. March 2023. Available online: https://www.bloomberg.com/company/press/bloomberggpt-50-billion-parameter-llm-tuned-finance/ (accessed on 4 April 2024).

5. Google Research. Med-PaLM. March 2023. Available online: https://sites.research.google/med-palm/ (accessed on 3 April 2024).

6. Gemini Team, Google. Gemini: A Family of Highly Capable Multimodal Models. arXiv 2024, arXiv:2312.11805.

7. Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Rozière, N.; Goyal, N.; Hambro, E.; Azhar, A.; Rodriguez, A.J.; et al. LLaMA: Open and Efficient Foundation Language Models. arXiv 2023, arXiv:2302.13971.

8. Luk, M. Generative AI: Overview, Economic Impact, and Applications in Asset Management; SSRN. September 2023. Available online: https://ssrn.com/abstract=4574814 (accessed on 1 December 2023).

9. Kojima, T.; Gu, S.S.; Reid, M.; Matsuo, Y.; Iwasawa, Y. Large Language Models are Zero-Shot Reasoners. arXiv 2023, arXiv:2205.11916.

10. Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models are Few-Shot Learners. arXiv 2020, arXiv:2005.14165.

11. Zhang, Z.; Liu, M.; Sun, M.; Deng, R.; Cheng, P.; Niyato, D.; Chow, M.Y.; Chen, J. Vulnerability of Machine Learning Approaches Applied in IoT-based Smart Grid: A Review. arXiv 2023, arXiv:2308.15736v3.

12. Zhang, Z.; Yang, Z.; Yau, D.K.Y.; Tian, Y.; Ma, J. Data security of machine learning applied in low-carbon smart grid: A formal model for the physics-constrained robustness. Appl. Energy 2023, 347, 121405.

13. Poynting, M. What are fossil fuels? Where does the UK get its energy from? BBC News, 12 March 2024.

14. Department for Energy Security & Net Zero. UK Energy in Brief 2023; National Statistics: Newport, UK, 2023.

15. Department for Energy Security & Net Zero. The Ten Point Plan for a Green Industrial Revolution. November 2020. Available online: https://www.gov.uk/government/publications/the-ten-point-plan-for-a-green-industrial-revolution/title (accessed on 29 April 2024).

16. Ploszajski, A. The UK’s Future Energy Mix: What Will It Look Like If We’re to Reach Net Zero? Available online: https://www.imperial.ac.uk/Stories/future-energy (accessed on 29 April 2024).

17. UK Offshore Wind History. Available online: https://guidetoanoffshorewindfarm.com/uk-offshore-wind-history/ (accessed on 1 May 2024).

18. Developments in Wind Power; Houses of Parliament Parliamentary Office of Science and Technology: London, UK, 2019.

19. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. arXiv 2017, arXiv:1706.03762.

20. Radford, A.; Narasimhan, K.; Salimans, T.; Sutskever, I. Improving Language Understanding by Generative Pre-Training. Technical Report, OpenAI. 2018. Available online: https://cdn.openai.com/research-covers/language-unsupervised/language_understanding_paper.pdf (accessed on 1 December 2023).

21. Xie, S.M.; Raghunathan, A.; Liang, P.; Ma, T. An Explanation of In-context Learning as Implicit Bayesian Inference. arXiv 2022, arXiv:2111.02080.

22. von Oswald, J.; Niklasson, E.; Randazzo, E.; Sacramento, J.; Mordvintsev, A.; Zhmoginov, A.; Vladymyrov, M. Transformers Learn In-Context by Gradient Descent. arXiv 2023, arXiv:2212.07677.

23. Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, Virtual, 3–10 March 2021; pp. 610–623.

24. Kalai, A.T.; Vempala, S.; Nachum, O.; Zhang, E. Why Language Models Hallucinate. arXiv 2025, arXiv:2509.04664.

25. Bailey, N. The Carbon Footprint of LLMs—A Disaster in Waiting. September 2024. Available online: https://nathanbaileyw.medium.com/the-carbon-footprint-of-llms-a-disaster-in-waiting-6fc666235cd0 (accessed on 27 September 2024).

26. Liu, B. Sentiment Analysis and Opinion Mining; Springer: Berlin/Heidelberg, Germany, 2012.

27. Zhang, W.; Deng, Y.; Liu, B.; Pan, S.J.; Bing, L. Sentiment Analysis in the Era of Large Language Models: A Reality Check. arXiv 2023, arXiv:2305.15005.

28. Fatemi, S.; Hu, Y. A Comparative Analysis of Fine-Tuned LLMs and Few-Shot Learning of LLMs for Financial Sentiment Analysis. arXiv 2023, arXiv:2312.08725.

29. Xing, F. Designing Heterogeneous LLM Agents for Financial Sentiment Analysis. arXiv 2024, arXiv:2401.05799.

30. Du, Y.; Li, S.; Torralba, A.; Tenenbaum, J.B.; Mordatch, I. Improving Factuality and Reasoning in Language Models through Multiagent Debate. arXiv 2023, arXiv:2305.14325.

31. Chan, C.M.; Chen, W.; Su, Y.; Yu, J.; Xue, W.; Zhang, S.; Fu, J.; Liu, Z. ChatEval: Towards Better LLM-Based Evaluators Through Multi-Agent Debate. arXiv 2023, arXiv:2308.07201.

32. Zhang, J.; Xu, X.; Zhang, N.; Liu, R.; Hooi, B.; Deng, S. Exploring Collaboration Mechanisms for LLM Agents: A Social Psychology View. arXiv 2024, arXiv:2310.02124.

33. Sun, X.; Li, X.; Zhang, S.; Wang, S.; Wu, F.; Li, J.; Zhang, T.; Wang, G. Sentiment Analysis through LLM Negotiations. arXiv 2023, arXiv:2311.01876.

34. Jennings, T.; Tipper, H.; Daglish, J.; Grubb, M.; Drummond, P. Policy, Innovation and Cost Reduction in UK Offshore Wind; Technical Report; The Carbon Trust: London, UK, 2020.

35. Whitmarsh, M.; Canning, C.; Ellson, T.; Sinclair, V.; Thorogood, M. The UK Offshore Wind Industry: Supply Chain Review. 2019. Available online: https://safety4sea.com/wp-content/uploads/2019/02/OWIC-The-UK-Offshore-Wind-Industry-Supply-Chain-Review-2019_02.pdf (accessed on 27 September 2024).

36. Trewby, J.; Kemp, R.; Green, R.; Harrison, G.; Gross, R.; Smith, R.; Williamson, S. Wind Energy: Implications of Large-Scale Deployment on the GB Electricity System; Royal Academy of Engineering: London, UK, 2014.

37. Spencer, M.; Tipper, W.A.; Coats, E. UK Offshore Wind in the 2020s: Creating the Conditions for Cost Effective Decarbonisation; Green Alliance: London, UK, 2014.

38. Chinn, M. The UK Offshore Wind Supply Chain: A Review of Opportunities and Barriers; Offshore Wind Industry Council: London, UK, 2014.

39. Wind Energy in the UK: State of Industry Report; Renewable UK: London, UK, 2012.

40. Greenacre, P.; Gross, R.; Heptonstall, P. Great Expectations: The Cost of Offshore Wind in UK Waters—Understanding the Past and Projecting the Future; UK Energy Research Centre: London, UK, 2010.

41. Jennings, T.; Delay, T. Offshore Wind Power: Big Challenge, Big Opportunity; The Carbon Trust: London, UK, 2008.

42. OpenAI. GPT-4 Turbo. Available online: https://chatgpt.com/pricing (accessed on 14 February 2024).

43. Touvron, H.; Martin, L.; Stone, K.; Albert, P.; Almahairi, A.; Babaei, Y.; Bashlykov, N.; Batra, S.; Bhargava, P.; Bhosale, S.; et al. Llama 2: Open Foundation and Fine-Tuned Chat Models. arXiv 2023, arXiv:2307.09288.

44. Jiang, A.; Sablayrolles, A.; Roux, A.; Mensch, A.; Savary, B.; Bamford, C.; Chaplot, D.S.; de las Casas, D.; Hanna, E.B.; Bressand, F.; et al. Mixtral-8x7B-Instruct. 2023. Available online: https://huggingface.co/mistralai/Mixtral-8x7B-Instruct-v0.1 (accessed on 14 February 2024).

45. Falcon. Falcon-7b-Instruct. Available online: https://huggingface.co/tiiuae/falcon-7b-instruct (accessed on 14 February 2024).

46. Chen, F.; Zhang, Z. Unleashing the potential of prompt engineering in Large Language Models: A comprehensive review. arXiv 2023, arXiv:2310.14735.

47. Reynolds, L.; McDonell, K. Prompt programming for large language models: Beyond the few-shot paradigm. In Proceedings of the Extended Abstracts of the 2021 CHI Conference on Human Factors in Computing Systems, Yokohama, Japan, 8–13 May 2021; pp. 1–7.

48. Mu, J. Stanford CS224N | 2023 | Lecture 10—Prompting, Reinforcement Learning from Human Feedback. 2023. Available online: https://www.youtube.com/watch?v=SXpJ9EmG3s4&ab_channel=StanfordOnline (accessed on 29 January 2024).

49. Wang, X.; Wei, J.; Schuurmans, D.; Le, Q.; Chi, E.; Narang, S.; Chowdhery, A.; Zhou, D. Self-Consistency Improves Chain of Thought Reasoning in Language Models. arXiv 2023, arXiv:2203.11171.

| Year | Document | Organisation | Tokens |
|---|---|---|---|
| 2020 | Policy, Innovation and Cost Reduction in UK Offshore Wind [34] | Carbon Trust, UCL | 560 |
| 2019 | Developments in Wind Power [18] | HM Government | 145 |
| 2019 | The UK Offshore Wind Industry: Supply Chain Review [35] | Offshore Wind Industry Council | 601 |
| 2014 | Wind Energy: Implications of Large-Scale Deployment on the GB Electricity System [36] | Royal Academy of Engineering | 847 |
| 2014 | UK Offshore Wind in the 2020s: Creating the Conditions for Cost Effective Decarbonisation [37] | Green Alliance | 269 |
| 2014 | The UK Offshore Wind Supply Chain: A Review of Opportunities and Barriers [38] | Offshore Wind Industry Council | 584 |
| 2012 | Wind Energy in the UK: State of the Industry Report [39] | Renewable UK | 345 |
| 2010 | Great Expectations: The Cost of Offshore Wind in UK Waters [40] | UK Energy Research Centre | 668 |
| 2008 | Offshore Wind Power: Big Challenge, Big Opportunity [41] | Carbon Trust | 1014 |

| Model Name | Provider | Parameters | Training Tokens | Fine-Tuned? | Pricing |
|---|---|---|---|---|---|
| GPT-3.5-Turbo-1106 [3] | OpenAI | 175B | - | No | $2/1M tokens |
| GPT-4-Turbo-Preview [42] | OpenAI | 1.8T | 13T | No | $40/1M tokens |
| Llama-2-7b-Chat [43] | Meta | 6.74B | 2T | Yes | Free |
| Mixtral-8x7b-Instruct [44] | Mistralai | 46.7B | - | Yes | Free |
| Falcon-7b-Instruct [45] | TII | 7B | 1.5T | Yes | Free |

| Model | Polarity Accuracy | Subjectivity Accuracy |
|---|---|---|
| GPT-3.5 | 0.79 ± 0.01 | 0.61 ± 0.03 |
| GPT-4 | 0.69 ± 0.01 | 0.65 ± 0.06 |
| Llama 2 | 0.61 ± 0.02 | 0.47 ± 0.01 |
| Mixtral | 0.73 ± 0.09 | 0.50 ± 0.02 |
| Falcon | 0.71 ± 0.01 | 0.21 ± 0.05 |

| Metric | Description |
|---|---|
| Accuracy (ACC) | Percentage of correctly answered predictions |
| Answers Changed (AC) | Percentage of predictions that changed from the previous debate round |
| Answers Changed When Correctly Parsed (ACCP) | Percentage of predictions that changed from the previous debate round, given correctly parsed answers |

| Model | Polarity ACC ↑ | Polarity AC | Polarity ACCP ↓ | Subjectivity ACC ↑ | Subjectivity AC | Subjectivity ACCP ↓ |
|---|---|---|---|---|---|---|
| GPT-3.5 | 0.77 ± 0.01 | 0.10 ± 0.06 | 0.47 ± 0.06 | 0.66 ± 0.02 | 0.52 ± 0.04 | 0.31 ± 0.03 |
| GPT-4 | 0.75 ± 0.03 | 0.13 ± 0.06 | 0.61 ± 0.18 | 0.70 ± 0.07 | 0.31 ± 0.05 | 0.11 ± 0.14 |
| Mixtral | 0.67 ± 0.01 | 0.11 ± 0.02 | 0.90 ± 0.16 | 0.47 ± 0.01 | 0.15 ± 0.01 | 0.41 ± 0.03 |

| Prompt Strategy | SC GPT-3.5 | SC Mixtral | MAD (Mixtral + GPT-3.5) |
|---|---|---|---|
| Polarity Accuracy | 0.79 ± 0.01 | 0.73 ± 0.09 | 0.77 ± 0.01 |
| Subjectivity Accuracy | 0.61 ± 0.03 | 0.50 ± 0.02 | 0.66 ± 0.02 |
| Polarity Cost (per 1M tokens) | $6 | $0 | $2 |
| Subjectivity Cost (per 1M tokens) | $6 | $0 | $2 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Freed, H.; Vogiatzaki, K.; Roberts, S. Evaluating Sentiment and Factuality of Offshore Wind Technological Trends Using Large Language Models. Energies 2025, 18, 5816. https://doi.org/10.3390/en18215816
