Abstract

This paper presents the design and implementation of an Intelligent Home Energy Management System in a smart home. The system is based on an economically decentralized hybrid concept that includes photovoltaic technology, a proton exchange membrane fuel cell, and a hydrogen refueling station, which together provide a reliable, secure, and clean power supply for smart homes. The proposed design enables power transfer between Vehicle-to-Home (V2H) and Home-to-Vehicle (H2V) systems, allowing electric vehicles to function as mobile energy storage devices at the grid level, facilitating a more adaptable and autonomous network. Our approach employs Double Deep Q-networks for adaptive control and forecasting. A Multi-Agent System coordinates actions between home appliances, energy storage systems, electric vehicles, and hydrogen power devices to ensure effective and cost-saving energy distribution for users of the smart grid. The design validation is carried out through MATLAB/Simulink-based simulations using meteorological data from Tunis. Ultimately, the V2H/H2V system enhances the utilization, reliability, and cost-effectiveness of residential energy systems compared with other management systems and conventional networks.

1. Introduction

The world faces a general energy crisis owing to the growing demand for electricity and the depletion of traditional fossil fuels like coal, natural gas, and oil. These conventional fuel sources are susceptible to depletion and significantly contribute to greenhouse gas emissions, which are detrimental to the environment. Consequently, solar and wind energy have emerged as substantial alternatives to address these challenges. Solar energy has attracted particular interest because of its sustainability, availability, and wide range of applications in the industrial and domestic sectors [1,2]. However, solar systems are inherently intermittent because their power output cannot be consistently sustained at night or during overcast weather [3].

As new renewable energy technologies are developed and adopted, there needs to be a way to reliably store and transport the harvested energy. To balance supply and demand, scientists have investigated numerous energy storage methods, including electrolyzers, batteries, hydrogen storage tanks, and supercapacitors [4]. One such environmentally friendly and efficient technology is the proton exchange membrane fuel cell (PEMFC), which is essentially a portable hydrogen energy system. Hydrogen is the closest thing we have to an ideal energy source when there are low levels of renewable energy and high demand, owing to its high energy density and storage capacity. Hydrogen technology is versatile and adaptable to various applications. For example, PEMFCs can be applied to hydrogen refueling stations (HRSs), flexible solar panels, and mobile devices. Ultimately, a single household can independently store and produce a sustainable amount of energy [5,6].

Nonetheless, because the energy supply is not constant or consistent, standalone installations of these renewable energy technologies cannot respond to rapid and dynamic variations in energy demand. Notably, vehicle-to-grid technology and hydrogen cars enable transport and sharing among individual energy producers/consumers [7]. Thus, smart houses and electric vehicles (EVs) can potentially cooperate, forming a smart grid as an extension of the standard electricity grid or as an independent unified network. As needed, an electric car can feed power to a Vehicle-to-Home (V2H) system or draw power from the home to use later (Home-to-Vehicle, H2V). This technology can make life simpler and enable more effective energy use in the home [8].

Moreover, current research indicates that smart home apps facilitate users’ production, storage, and consumption of energy. Advanced energy management systems are crucial for integrating smart home components with renewable energy sources, energy storage systems (ESSs), EVs, and communication functionalities [9,10]. Various regulatory techniques have been proposed, including real-time load scheduling and the management of certain appliances, although most existing systems lack the requisite flexibility and speed to respond to unforeseen events in real time. The concept of using hydrogen, regarding the energy exchange between EVs and smart home management systems, has not been fully developed or explored [11,12].

We seek to introduce an innovative approach for balancing energy supply and demand in smart homes by leveraging renewable energy sources, such as hydrogen, and enabling energy exchange for EV owners. Our approach is expected to enable flexible and independent energy management by integrating solar photovoltaics (PVs), PEMFCs (particularly those with hydrogen refueling capabilities), and bidirectional V2H/H2V power exchange. The system employs a hybrid Double Deep Learning (DDL) algorithm integrating Double Deep Q-networks (DDQNs) and a Multi-Agent System (MAS) to facilitate the efficient collaboration of all energy components. The main goal is to create household energy systems that are more resilient, adaptive, and economical in response to variations in demand and supply. We accomplish this by thoroughly examining the proposed system utilizing real-world data, following standard assessment methodologies.

1.1. Literature Review and Contributions

In Ref. [13], the authors underlined the importance of hydrogen energy systems and PEMFCs as efficient long-term storage solutions for integrating renewable energy. Nevertheless, the primary aim was to employ them in industrial environments rather than homes. The present study leverages a Hydrogen-Integrated Management Approach (HIMA) to tackle this problem on the domestic level, advancing the integration of hydrogen refueling stations and PEMFCs as reliable backup power sources for smart homes.

In Ref. [14], the authors investigated solar and wind power integration in residential energy systems. The present paper also focuses on handling intermittent renewable energy sources and the mismatch between generation and demand. A Hybrid Systems Approach (HSA), which couples solar PV technology with hydrogen storage and a backup PEMFC, is adopted. This technique is designed to deliver consistent power output and employs a hybrid operating system developed for smart homes.

In Ref. [15], the authors reviewed different battery, ultracapacitor, and hydrogen storage technologies, showing that the interaction between these systems is insufficient for real-time energy management. The present paper implements the Smart Storage Coordination Approach (SSCA) and an MAS that addresses this limitation. Each storage unit operates autonomously, but they also act collectively to regulate energy usage.

In Ref. [16], the authors presented V2H and H2V technologies as flexible energy exchange mechanisms but did not fully integrate them into hybrid systems. To address this issue, the present study introduces the Bidirectional Vehicle Energy Exchange Approach (BVEEA), which allows EVs to act as active storage components within the home energy management system, supporting energy supply during peak demand and energy absorption during periods of surplus production.

In Ref. [17], the authors introduced an MAS architecture as a practical tool for managing distributed energy resources. However, the interactions among hydrogen storage, EV energy exchange, and renewable generation in a smart home context were not studied therein. The present paper proposes the Cooperative Energy Management Approach (CEMA), where the MAS actively manages the cooperation between hydrogen systems, EVs, PV generation, and home appliances for optimal resource allocation.

In Ref. [18], the authors implemented reinforcement learning algorithms to enhance energy planning but encountered delayed convergence and scalability issues in hybrid systems. Based on an experimental database, the Adaptive Scheduling Learning Approach (ASLA) has been created and deployed herein to boost flexibility and learning speed. Specifically, an advanced DDQN is employed to enhance energy scheduling decisions among various power sources and storage systems.

In Ref. [19], the authors adopted Deep Q-Learning for energy management optimization; however, they did not apply it to hydrogen-integrated or bidirectional EV systems using real data. We present the Predictive Energy Learning Approach (PELA), which utilizes DDL with DDQN for intelligent real-time decision-making throughout the hybrid smart home system.

In Ref. [20], the authors concluded that no comprehensive solution integrates hydrogen refueling stations, V2H/H2V energy exchange, MAS coordination, and artificial intelligence (AI)-based scheduling into a unified energy management framework. The current paper addresses this research gap through the Integrated Smart Energy Management Approach, which combines all these technologies into a Real-Time Embedded Smart Energy Management System, providing scalability, adaptability, and sustainable operation for the next generation of smart homes. Table 1 compares the proposed Integrated Home Energy Management System (IHEMS) with existing approaches and shows that only the IHEMS fully integrates hydrogen storage, V2H/H2V, MAS coordination, DDQN learning, and real-time smart home energy management control.

Table 1.

Comparison of related work with the proposed IHEMS.

In Ref. [21], the authors proposed a decentralized multi-agent-based control method for two interconnected microgrids, including PV, wind, diesel generators, and battery ESSs (BESSs). The technology constantly adapts to load scheduling and environmental uncertainties and allows power exchange via a network-controlled inverter. Simulation results demonstrate enhanced resilience and effective energy use under optimal, sunny, cloudy, and blackout circumstances. The system achieves peak outputs of 95 kW (PV) and 60 kW (wind) while effectively redistributing extra energy between different areas, thus supporting essential infrastructure like hospitals and industrial facilities.

In Ref. [22], the authors proposed an optimal planning technique for hybrid AC/DC microgrids, which simultaneously considers the ideal sizing of interlink converters and BESSs. The method uses the Arrhenius equation to calculate the cost of interlink converter degradation and incorporates an energy throughput constraint to reduce cyclic aging of the BESS. Real-world data from Griffith University and the Australian National Electricity Market confirm the model’s scalability and applicability in many circumstances.

In Ref. [23], the authors introduced a transformer-based multi-agent reinforcement learning (MARL) approach for long-term energy management in Multi-Station Collaborative EV Charging Systems. The model addresses coordination problems across several charging stations by leveraging the attention mechanism of transformers to enhance agent decision-making. By optimizing charging procedures across extended time horizons, the technology lowers peak loads, boosts efficiency, and guarantees systemwide collaboration. Simulation findings demonstrate better load balancing and energy cost reduction performance than conventional MARL approaches.

In Ref. [24], the authors proposed an asynchronous, decentralized load restoration technique for recovering resilient electricity-transportation networks following a disaster. The study integrates building thermal dynamics and e-bus routing to maximize transportation efficiency and ensure a continuous energy supply by utilizing the flexibility of air conditioning systems and e-bus schedules. Their proposed method guarantees parallel processing while maintaining anonymity. The outcomes demonstrate improved critical load restoration, increased computing performance, and enhanced user comfort.

In Ref. [25], the authors presented a Coordinated Planning of Electric and Heating model that considers the seasonal reconfiguration of district heating networks to enhance wind energy penetration. The model is structured as a mixed-integer linear programming problem and is resolved via a scenario-oriented generalized Benders decomposition method, enhancing computing efficiency. Simulations, including data from a case study from Jilin, China, demonstrate the benefits of seasonal district heating network flexibility in enhancing energy plant planning and facilitating renewable integration.

1.2. Contributions

We aimed to develop a novel system that provides smart homes with clean, reliable, and sustainable energy by integrating a hybrid system utilizing PVs, PEMFCs, and HRSs into a singular network. The V2H and H2V operations facilitate energy transfer between EVs. Power sources, storage systems, EVs, and domestic appliances within the MAS framework can operate collaboratively in real time. The DDQN enhances schedule management, rendering the framework more adaptable and efficient. Actual meteorological data from Tunisia are utilized in MATLAB/Simulink simulations to demonstrate the system’s validity (https://ww2.mathworks.cn/products/matlab.html, https://ww2.mathworks.cn/products/simulink.html). The proposed IHEMS fully complies with the requirements of intelligent home energy management systems regarding energy efficiency, dependability, and load shedding.

1.3. Outline of the Paper

The remainder of the paper is organized as follows: Section 2 outlines the IHEMS by detailing the interaction of the PV systems, PEMFCs, HRSs, dual ESSs, and MAS utilized in decentralized control. Section 3 discusses the intelligent decision-making system, which is based on a Markov decision process (MDP) and augmented with DDQN learning. We describe the architecture of the neural network, the control method, and the roles of all agents, as well as the grid configuration, the simulation platform, and the climate data used to assess the system. Section 4 presents the findings, and Section 5 summarizes the major conclusions and future work.

2. Autonomous Hybrid System for Smart Home Energy

This study presents an autonomous IHEMS that integrates a PV system, a PEMFC, and a hydrogen refueling station to provide intelligent home applications with a dependable, stable, and efficient electrical energy source. The system accounts for the fact that solar electricity is not consistently accessible, especially at night, on overcast days, or when sunlight is diminished. It does so by integrating renewable power with hydrogen-based energy recovery while controlling flexibility and storage options. In the proposed setup, the PV system is the primary energy source, consistently operating at its Maximum Power Point (MPP) to maximize energy output. The PEMFC draws hydrogen from the HRS and acts as a peak power source, assisting when demand surpasses PV generation and meeting residential requirements during periods of high demand. This hybrid methodology supports a continuous power supply and enhances the resilience of the smart home energy system. Energy surplus and deficit are managed using two storage layers. The first is a long-term ESS, which provides extended backup during periods of low energy generation. The second is a short-term Ultracapacitor Storage System (USS), designed to respond quickly to changes in load power demand. This dual storage solution enhances system responsiveness and offers greater operational flexibility. An MAS-based real-time embedded energy management system governs the control and coordination of these hybrid components [26].

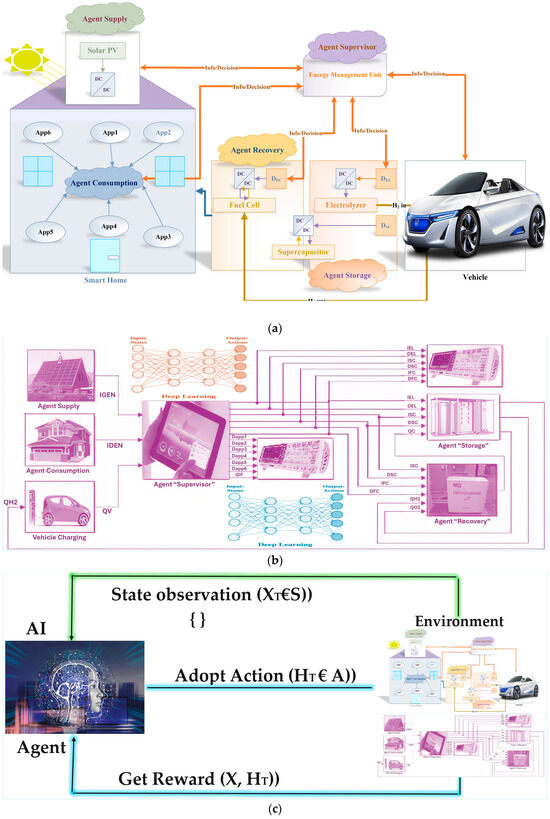

Figure 1 illustrates the IHEMS framework, comprising a PV system, PEMFC, HRS, and V2H/H2V systems. A DDQN-based AI learning MAS manages the system, facilitating real-time energy scheduling. The MAS allows decentralized and intelligent decision-making, using an individual agent for each energy system, storage unit, and home appliance. The supervisor agent collects system state data, measures the energy flow, and manages interactions among all agents in the architecture hierarchy to optimize the power distribution for the given energy demand and supply. The MAS framework, combined with DDL and DDQN technology, ties together the eco-friendly power generation, hydrogen storage, V2H communication, and AI system. The agents work in a decentralized way, and the central supervisor agent coordinates them; that is, it minimizes the global cost of the system and makes decisions in real time. Every agent under the supervisor is assigned a specific role and interacts with other agents in the MAS. This AI-driven coordination allows the system to adapt autonomously, improving its efficiency and resilience over time based on continuous system analysis.

Figure 1.

(a) PV system, PEMFC, HRS, and V2H/H2V systems. (b) DDQN-based AI learning MAS manages the system, facilitating real-time energy scheduling. (c) Autonomous hybrid smart home energy management architecture.

2.1. Problem Statement

An increasing number of smart homes use renewable energy sources to reduce CO2 emissions and diminish the dependence on fossil fuels. However, providing a dependable, economical, and efficient energy source is hampered by the irregular and intermittent nature of solar electricity, changing household energy demands, and an unstable infrastructure. Modern energy management systems typically do not possess the necessary flexibility, real-time intelligence, and integrated control to manage various energy sources, such as solar PVs, hydrogen fuel cells, and EVs, all within one household. Moreover, conventional scheduling methods may not fully use the bidirectional V2H and H2V modes and lack advanced learning algorithms for autonomous control. This highlights the necessity for a cohesive, intelligent energy management system capable of proactively optimizing the interplay between diverse energy sources, storage systems, and home demands, particularly in response to abrupt weather fluctuations and load changes. The proposed IHEMS design addresses this gap by utilizing MAS and DDQN technology to make intelligent, scalable, real-time decisions regarding solar systems, PEM fuel cells, hydrogen refueling, and V2H/H2V technologies.

2.2. Agent-Based Energy Management System Design

The IHEMS is modeled as an MDP, where each agent represents a decision unit using DDL over DDQN. The system dynamically optimizes energy generation, storage, consumption, and trading decisions (see Equation (1)) [27].

In this context, S denotes the set of system states, which include the PV output, state of charge (SOC), hydrogen level, and EV state. A denotes the set of actions, i.e., control decisions such as activating or deactivating a device, altering its current state, or selecting an operating mode. P(s_t, a_t) represents the transition probabilities, which are determined by the operational dynamics of the physical system. R(s_t, a_t) represents the reward function, which accounts for efficiency, cost, and reliability, and γ denotes the discount factor for future rewards.
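As a hedged illustration of the symbols defined above, the textbook MDP tuple and discounted-return objective can be written as follows. The policy symbol π and the infinite-horizon form are assumptions; this is the standard formulation and is not necessarily identical to the authors' Equation (1).

```latex
\mathcal{M} = (S, A, P, R, \gamma), \qquad
P\left(s_{t+1} \mid s_t, a_t\right), \qquad
\pi^{*} = \arg\max_{\pi} \; \mathbb{E}_{\pi}\!\left[ \sum_{t=0}^{\infty} \gamma^{t} \, R(s_t, a_t) \right], \qquad 0 \le \gamma < 1
```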

2.3. PV Agent

The power supply agent manages the PV system that produces electricity. This agent functions at the MPP to guarantee that the PV modules consistently produce optimal power, irrespective of variations in solar radiation levels. The agent uses DDQN-based learning to evaluate solar power availability and choose the ideal operational point. The agent informs the supervisory agent of the present generating capacity and makes modifications based on expected environmental changes. This method enables anticipatory energy planning (see Equation (2)) [28].

2.4. Home Agent

The home agent computes residential energy consumption for heating, cooling, lighting, and EV charging. The supervisor evaluates the expected load over the consumption period. Consumption patterns and peak periods are also examined. With this agent in place, the supervisor can enhance demand management by suggesting load shifts or establishing operational priorities. This strategy enhances system reliability and reduces costs (see Equation (3)) [29].

2.5. Hydrogen Production Agent

The hydrogen production agent ensures that the electrolyzer operates efficiently, converting excess solar energy into hydrogen for long-term storage. Combining Faraday’s law of electrolysis with DDQN scheduling, the agent determines when and how much hydrogen to produce based on the expected energy surplus, the hydrogen level in the tanks, or anticipated demand. This proactive approach can reduce energy loss and make the system more autonomous (see Equation (4)) [30].
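As an illustrative sketch of the Faraday relation mentioned above, the hydrogen production rate of an electrolyzer stack can be estimated from the stack current. The function names, the cell count, and the Faraday efficiency parameter are assumptions made for illustration; they are not taken from the paper's Equation (4).

```python
FARADAY_CONSTANT = 96485.33212  # C/mol
H2_MOLAR_MASS_KG = 2.01588e-3   # kg/mol
ELECTRONS_PER_H2 = 2            # electrons transferred per H2 molecule


def hydrogen_rate_mol_per_s(current_a, n_cells=1, faraday_efficiency=1.0):
    """Molar H2 production rate from Faraday's law of electrolysis.

    current_a:          stack current in amperes (cells assumed in series)
    n_cells:            number of series cells (hypothetical parameter)
    faraday_efficiency: fraction of current that yields H2 (hypothetical parameter)
    """
    return faraday_efficiency * n_cells * current_a / (
        ELECTRONS_PER_H2 * FARADAY_CONSTANT
    )


def hydrogen_rate_kg_per_h(current_a, n_cells=1, faraday_efficiency=1.0):
    """Same rate converted to kilograms of H2 per hour."""
    mol_per_s = hydrogen_rate_mol_per_s(current_a, n_cells, faraday_efficiency)
    return mol_per_s * H2_MOLAR_MASS_KG * 3600.0
```

A scheduling agent could call such a function to translate a planned electrolyzer current into an expected change in tank level before committing to a production slot.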

2.6. Hydrogen Recovery Agent

The hydrogen recovery agent manages the operation of the PEMFC and USS. Its function is to recover stored hydrogen energy during periods of solar power shortage and to stabilize the system using supercapacitors. This agent uses a DDQN-based decision-making mechanism to determine optimal fuel cell operation periods, avoid unnecessary PEMFC cycles, extend component life, and ensure a continuous power supply during critical periods (see Equation (5)) [31].

2.7. V2H/H2V Agent

The hydrogen recovery agent oversees the functioning of the PEMFC and ultracapacitor. Its function is to retrieve stored hydrogen energy during solar power deficits and stabilize the system with rapid-response supercapacitors. This agent employs a DDQN-based decision-making framework to determine optimal operating times for the fuel cell, mitigate superfluous PEMFC cycles, prolong component longevity, and guarantee uninterrupted power delivery throughout key phases (see Equation (6)) [32].

2.8. Storage Status Agent

The storage status agent monitors the SOC of the storage units and the state of the hydrogen tank. It also oversees the pressure and the charging and discharging rates to ensure safe operation. The agent uses a predictive control system based on the DDL model to regulate storage usage, prevent over-discharging, and maintain optimal charging levels for the supercapacitors. The supervisor receives continuous reports on the available storage capacity, enabling it to determine the operating schedule (see Equation (7)) [33].

2.9. Hydrogen Station Agent

The hydrogen station agent operates the HRS, manages hydrogen production and refueling cycles, and regulates tank pressure. By utilizing DDQN-based predictions, this agent coordinates refueling tasks, optimizes the timing for hydrogen production, and maintains safe pressure levels in the storage tanks. This agent contributes to the seamless integration of hydrogen production, storage, and consumption processes (see Equation (8)).

2.10. Supervisor Agent

The supervisor agent acts as a global decision-maker, coordinating the actions of all other agents using DDQN. Its primary function is to balance energy generation, storage, and consumption in real time while reducing operating expenses and enhancing system reliability. The supervisor continuously monitors system performance and selects the best operating mode (e.g., using only solar power or combining solar power with other sources). Additionally, it supports energy storage and management for V2H and H2V applications, adjusting its decision rules based on rewards related to efficiency, cost, and stability (see Equation (9)) [34].

3. Intelligent Home Energy Management System

3.1. DDQN-Based Decision-Making

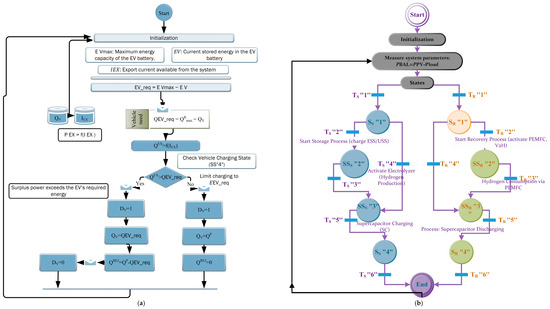

The proposed IHEMS, which integrates MAS, DDL, DDQN, and MDP, ensures that the renewable energy generation, storage system, and residential loads can collaborate in real time. The control strategy consists of two fundamental flowcharts (Figure 2). Figure 2a illustrates the global coordination performed by the supervisor agent. The supervisor monitors the system’s status and the choices made by the DDQN, coordinates tasks among the agents (PV, PEMFC, HRS, EV, and ESS), and adjusts learning rules using feedback and Q-values. Figure 2b illustrates the behavior of each agent. Every agent observes its state, selects an action with the ε-greedy policy of the DDQN, performs the control action (e.g., dispatching the electrolyzer or EV charging), and reports the outcome to the supervisor. The decision-making process uses a state machine model with specific state changes (e.g., starting storage, recovering, managing hydrogen storage/usage, managing supercapacitors, and charging vehicles in SS4). This allows the system to redirect energy flows dynamically and ensures that home energy management is stable and efficient.

Figure 2.

(a) State-based decision process using DDQN, MDP, and TS/TR transitions. (b) Adaptive vehicle charging using export power, energy requirement calculation, and dynamic current adjustment.
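The ε-greedy action selection that each agent performs can be sketched as follows. This is a minimal, generic illustration; the function name and the representation of Q-values as a plain list are assumptions and do not reflect the authors' MATLAB/Simulink implementation.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick an action index from a list of Q-values.

    With probability epsilon a random action is explored; otherwise the
    action with the highest Q-value is exploited (first index wins ties).
    """
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)
```

In practice, ε is usually decayed over training so that agents explore early and exploit the learned policy later; the decay schedule is a design choice not specified here.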

The IHEMS operation is described by a structured set of conditions and transitions that control the energy flow between production, storage, hydrogen generation, and the grid. These conditions are defined by system states (SSs) and recovery states (SRs). The operational parameters are the energy balance, hydrogen level, supercapacitor state of charge (SoC), grid availability, and the TS/TR transitions driven by electricity pricing. The transitions are summarized in Table 2, which indicates whether the system stores energy, spends energy, controls H2 and supercapacitor usage, or manages grid sharing based on cost and availability. State 5 (Grid Management) and State 6 (Price-Aware Energy Optimization) increase flexibility by accounting for instantaneous energy market effects, which makes the system more economical and secure.

Table 2.

Check conditions and apply TS/TR transitions.

Algorithm 1 outlines the management of the IHEMS using the MDP and DDQN, coordinating several agents, employing state machine logic, and learning adaptively. The algorithm tracks the hydrogen level, energy generation, energy consumption, energy storage, EV energy state, grid status, and energy pricing. It then identifies the most efficient use of energy: whether to store it, retrieve it, produce hydrogen, charge the vehicle at home, run appliances such as the washing machine or dishwasher, or delay these activities. The resulting control scheme operates continuously (SS/SR states with TS/TR logic) and uses real-time performance feedback for learning, supporting efficient energy use, low costs, and reliable operation in smart homes.

| Algorithm 1: Unified Algorithm for IHEMS | |
| --- | --- |
| 1 | Initialize system parameters (P_PV, P_load, SOC_H2, SOC_SC, EV, EV_max, Grid Status, Energy Price), DDQN networks, replay buffer, and learning rates; define agents (Supply, Consumption, Storage, Recovery, Hydrogen Station, V2H/H2V, Supervisor). |
| 2 | LOOP while the system is active: measure power balance P_BAL = P_PV − P_load; Supervisor collects current state s_t = {P_BAL, SOC_H2, SOC_SC, EV, GridStatus, EnergyPrice, AppStatus}. |
| 3 | IF P_BAL > 0 THEN set State = SS1, apply TS1, start Storage Process (charge ESS/USS); ELSE IF P_BAL < 0 THEN set State = SR1, apply TR1, start Recovery Process (activate PEMFC, V2H); END IF |
| 4 | IF SOC_H2 < 1 THEN set State = SS2, apply TS2, activate Electrolyzer for Hydrogen Production; ELSE IF SOC_H2 > 0 THEN set State = SR2, apply TR2, consume Hydrogen via PEMFC; END IF |
| 5 | IF SOC_H2 > 1 OR P_BAL < I_NEL THEN set State = SS3, apply TS3/TS4, charge Supercapacitor; ELSE IF SOC_H2 = 0 OR P_BAL < I_NFC THEN set State = SR3, apply TR3/TR4, discharge Supercapacitor; END IF |
| 6 | IF SOC_SC > 1 THEN set State = SS4, apply TS5, prioritize Vehicle Charging (H2V); ELSE IF SOC_SC = 0 THEN set State = SR4, apply TR5, enter Appliance Load Scheduling Mode; END IF |
| 7 | IF Grid Status = TRUE THEN set State = SS5, apply TS6, enable Grid-Assisted Recovery; ELSE set State = SR5, apply TR6, operate in Self-Supply Mode; END IF |
| 8 | IF Energy Price < Threshold THEN set State = SS6, apply TS7, optimize cost by charging EV/Battery from Grid; ELSE set State = SR6, apply TR7, avoid Grid Charging, use Renewable/Stored Power; END IF |
| 9 | IF State = SR4 THEN set active home appliances; check deficit and recovery rate via Recovery Agent; WHILE P_BAL < 0: turn OFF non-critical appliances based on priority; END WHILE; END IF |
| 10 | Supervisor selects optimal action a_t = argmax_a Q(s_t, a) using DDQN policy; execute selected action through the corresponding agent; compute reward r_t. |
| 11 | Store experience (s_t, a_t, r_t, s_{t+1}) in replay buffer; update Q-values; periodically update target Q-network. |
| 12 | Continue to the next monitoring cycle; END LOOP |
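The rule-based part of Algorithm 1 can be sketched in code. The example below covers only steps 3, 7, and 8 (power balance, grid status, and price threshold) and returns the state and transition labels that would be triggered in one monitoring cycle. The function name, argument names, and the tuple representation of labels are hypothetical; the thresholds and remaining steps are left out rather than guessed.

```python
def classify_states(p_pv, p_load, grid_available, energy_price, price_threshold):
    """Return the (state, transition) labels triggered in one monitoring cycle.

    Only steps 3, 7, and 8 of Algorithm 1 are sketched here.
    """
    labels = []

    # Step 3: surplus starts the storage process, deficit starts recovery.
    p_bal = p_pv - p_load
    if p_bal > 0:
        labels.append(("SS1", "TS1"))
    elif p_bal < 0:
        labels.append(("SR1", "TR1"))

    # Step 7: grid-assisted recovery versus self-supply mode.
    if grid_available:
        labels.append(("SS5", "TS6"))
    else:
        labels.append(("SR5", "TR6"))

    # Step 8: charge from the grid only when the price is below the threshold.
    if energy_price < price_threshold:
        labels.append(("SS6", "TS7"))
    else:
        labels.append(("SR6", "TR7"))

    return labels
```

Keeping the rules in a pure function like this makes them easy to test separately from the learning component, which then only has to choose among the actions that the active states permit.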

3.2. DDQN Neural Network Input–Output Configuration for IHEMS

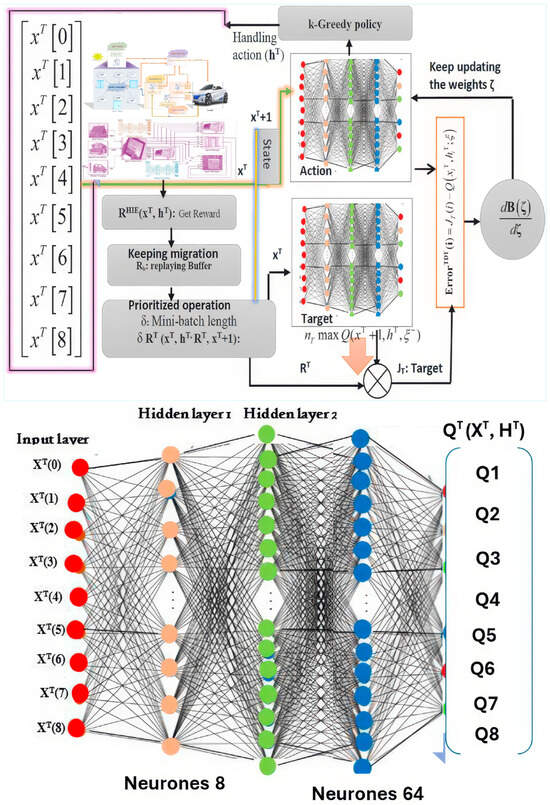

The proposed DDQN-based learning architecture for the IHEMS aims to accurately map environmental system states with optimal control actions.

The input layer of the neural network includes state-related system properties, such as the power balance (P_BAL), hydrogen tank SoC (SoC_H2), supercapacitor SoC (SoC_SC), EV (representing the battery level of the EV), grid availability, energy pricing, and device status. These observations allow the agent to perceive and communicate the system’s current state. A vector of potential actions is generated in the output layer, covering actions such as controlling devices, operating the electrolyzer, activating the PEMFC, exchanging V2H or H2V, charging/discharging the supercapacitor, and using or not using the grid. Table 3 summarizes the network architecture, including the number of neurons in each layer. The number of neurons in the input layer corresponds to the seven crucial system state variables. Two hidden layers (64 neurons in each layer) with ReLU activation functions capture the nonlinear relationship between inputs and outputs.

Table 3.

DDQN neural network configuration for the IHEMS.

The output layer includes neurons for the eight possible actions. Figure 3 illustrates the DDQN network developed for the IHEMS. The architecture consists of seven input neurons representing the state, two hidden layers for processing, and eight neurons in the output layer to perform power management actions. This approach involves continuous monitoring of the system operating conditions, using a DDL algorithm to determine the best actions and adaptive control of power flows to conserve resources and ensure their stability and optimal utilization.

Figure 3.

DDQN-based intelligent energy management framework developed for the IHEMS.
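The 7-64-64-8 layout described above can be illustrated with a small forward pass written in plain Python. This is a sketch under stated assumptions: the weight initialization, the zero biases, and the linear output layer (one Q-value per action) are common choices that the paper does not specify, and the function names are hypothetical.

```python
import random

LAYER_SIZES = [7, 64, 64, 8]  # seven state inputs, two hidden layers, eight actions


def init_network(sizes, seed=0):
    """Create (weights, biases) per layer with He-style random weights (assumed)."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        scale = (2.0 / n_in) ** 0.5
        weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(layers, state):
    """Map a state vector to one Q-value per action."""
    x = list(state)
    for index, (weights, biases) in enumerate(layers):
        x = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
        if index < len(layers) - 1:
            x = [max(0.0, v) for v in x]  # ReLU on hidden layers only
    return x
```

Calling `forward(init_network(LAYER_SIZES), state)` with a seven-element state returns eight Q-values, and the index of the largest one is the greedy action.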

3.3. Performance Evaluation Metrics and Methodology

To assess the effectiveness of the proposed real-time energy management system, we conducted simulations based on realistic operating conditions using MATLAB/Simulink. The system model includes PV generation, battery storage units (BSUs), supercapacitors (SCs), and bidirectional energy flow between EVs and the home using V2H and H2V capabilities. The simulation environment uses real-world meteorological and household load data from Tunisia, allowing for an accurate representation of solar variability and residential energy demand patterns.

Although hardware-in-the-loop testing is planned for future implementation, the current simulation framework enables a detailed performance analysis of the control strategies in a digital environment. The control algorithm is modeled as an MDP and optimized using a DQN, allowing for adaptive scheduling based on dynamic inputs such as PV output, load demand, energy pricing, and SoC.

The system’s performance is quantified using the following Key Performance Indicators (KPIs): Electricity Cost Reduction, which assesses the cost efficiency achieved through intelligent scheduling; Self-Sufficiency Ratio, which measures the proportion of local energy supply used to meet demand; Grid Dependency Reduction, which indicates how effectively the system minimizes reliance on the utility grid; Storage Utilization Efficiency, which evaluates the operational effectiveness of BSUs and SCs; and Control Response Time (ms), which reflects the real-time responsiveness of the DQN controller. These KPIs are used to compare different control strategies, including open-loop, closed-loop, model predictive control (MPC), and DQN-based optimization. The evaluation confirms the proposed system’s ability to achieve significant cost savings, improved flexibility, and greater energy autonomy in smart residential microgrids.
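Two of these KPIs can be illustrated with a short sketch. The formulas below are common working definitions and are assumptions, since the paper does not state its exact expressions: the self-sufficiency ratio is taken as the share of load not covered by grid imports, and the cost reduction is taken relative to a baseline strategy. The function and argument names are hypothetical.

```python
def self_sufficiency_ratio(load_kwh, grid_import_kwh):
    """Share of total load met by local supply (assumed definition).

    Both arguments are sequences of per-interval energies in kWh.
    """
    total_load = sum(load_kwh)
    if total_load == 0:
        return 1.0  # no demand, so nothing had to be imported
    return 1.0 - sum(grid_import_kwh) / total_load


def cost_reduction(baseline_cost, managed_cost):
    """Fractional cost saving of the managed system relative to a baseline."""
    if baseline_cost == 0:
        raise ValueError("baseline_cost must be non-zero")
    return (baseline_cost - managed_cost) / baseline_cost
```

Computing the KPIs from the same per-interval logs for every control strategy keeps the comparison between strategies consistent.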

3.4. Roles of MPC, DDQN, and MAS in Intelligent Energy Management

The proposed IHEMS integrates MPC, DDQN, and a MAS to achieve intelligent, real-time decision-making. MPC serves as a forecasting tool that anticipates future energy demands and constraints (e.g., load, solar output, EV availability), enabling the system to pre-emptively guide energy scheduling. DDQN serves as the core learning algorithm, leveraging reinforcement learning to optimize energy flow decisions based on a reward structure that prioritizes cost efficiency, renewable usage, and reliability. It continuously updates its policy through experience replay and target network stabilization. MAS decomposes the system into distributed agents—representing PV, storage, hydrogen, EV, and load components—that act semi-autonomously under a centralized supervisor agent. This supervisor coordinates decisions using the DDQN policy and MPC forecasts, ensuring decentralized execution with global optimization. Together, these components form a cohesive control hierarchy that enables adaptive, scalable, and real-time energy management in smart homes.
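The target network stabilization mentioned above can be illustrated with the standard Double DQN target, in which the online network selects the next action and the target network evaluates it. The sketch below contrasts it with the single-network DQN target; the discount factor and the Q-value lists are hypothetical and are not taken from the study.

```python
def ddqn_target(reward, next_q_online, next_q_target, gamma=0.99, done=False):
    """Double DQN target: the online network picks the action,
    the target network supplies its value."""
    if done:
        return reward
    best = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    return reward + gamma * next_q_target[best]

def dqn_target(reward, next_q_target, gamma=0.99, done=False):
    """Standard DQN target for comparison: max over the target network's estimates."""
    return reward if done else reward + gamma * max(next_q_target)

# Hypothetical next-state Q-value estimates for three actions
online = [1.0, 2.5, 2.0]
target = [1.2, 1.8, 3.0]
y_double = ddqn_target(1.0, online, target, gamma=0.9)  # evaluates target[1]
y_single = dqn_target(1.0, target, gamma=0.9)           # evaluates max(target)
```

Because action selection and evaluation are decoupled, the Double DQN target never exceeds the single-network target computed from the same target-network estimates, which is the mechanism that limits overestimation.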

4. Results

This section covers the modeling and validation results. The findings, obtained with real Tunisian meteorological data, indicate that the proposed control algorithms facilitate adaptive energy management, optimize hydrogen utilization, enhance household self-sufficiency, reduce grid dependence, and yield financial benefits.

The proposed DDQN-augmented MAS designed for managing energy in smart homes is stable and can respond in real time.

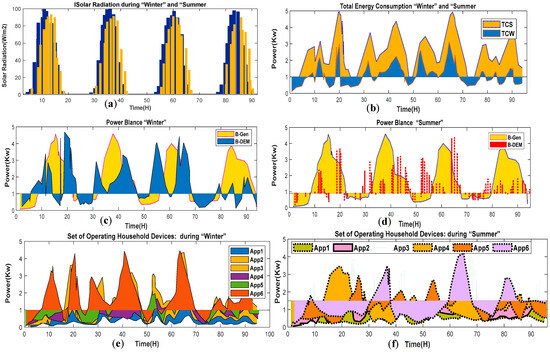

4.1. Simulation Setup and Parameters

The validity of the proposed IHEMS was tested using actual meteorological data from Tunisia in MATLAB/Simulink simulations. The inputs consist of solar irradiance, temperature, and residential energy use histories from four consecutive days in winter and summer (Figure 4). Thus, the system’s performance is assessed under dynamic real-life conditions, as shown in Table 4.

Figure 4.

Simulation input parameters: (a) solar radiation; (b) total energy demand; (c,d) seasonal power balance analysis; (e,f) seasonal operation of household devices.

Table 4.

Daily energy flow metrics under Tunisian seasonal conditions.

Figure 4a shows the daily solar irradiance profiles for winter and summer. As anticipated, insolation is significantly higher in summer, which implies a greater PV generation capability. This seasonal shift substantially influences the energy available to the home and its storage systems. Figure 4b shows the total energy consumption. The observed variations reveal that demand frequently exceeds the generation capacity, particularly in winter, which illustrates the importance of storage and efficient load planning in avoiding supply shortfalls. Figure 4c,d shows the calculated power balance (PBAL), that is, the difference between produced and consumed energy throughout the simulation. Positive PBAL values in summer signify a surplus of electricity that is available for storage. Negative values in winter indicate a deficit and a significant need for hydrogen infrastructure and advanced control systems. Figure 4e,f shows that household energy use is higher in winter, when homes must be heated and certain appliances are used more frequently. The increased demand combined with limited sunlight highlights the need to manage energy consumption and to integrate hydrogen energy storage into the home energy management system.
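The power balance can be written out directly from the nomenclature definition, PBAL = PV + storage − load. The following sketch classifies two operating points; the PV and storage values are hypothetical, while the 1.5 kW and 4.0 kW loads correspond to the average and peak household loads listed in the nomenclature.

```python
def power_balance(p_pv_kw, p_storage_kw, p_load_kw):
    """PBAL = PV + storage - load (kW), following the nomenclature table."""
    return p_pv_kw + p_storage_kw - p_load_kw

def classify(pbal_kw, tol=1e-6):
    """Label an operating point as surplus, deficit, or balanced."""
    if pbal_kw > tol:
        return "surplus"
    if pbal_kw < -tol:
        return "deficit"
    return "balanced"

# Hypothetical operating points (kW)
summer_noon = power_balance(p_pv_kw=2.5, p_storage_kw=0.0, p_load_kw=1.5)
winter_evening = power_balance(p_pv_kw=0.0, p_storage_kw=0.5, p_load_kw=4.0)
labels = (classify(summer_noon), classify(winter_evening))
```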

4.2. Energy Flow Management Behavior

To analyze the dynamic operation of the IHEMS, this section examines how power is coordinated between PV generation, PEMFC, hydrogen storage, and EVs using bidirectional V2H and H2V capabilities. Using DDQN learning within an MDP, the supervisory agent manages all state transitions (SS/SR) and conditions (TS/TR), as shown in Table 5.

Table 5.

System states and transitions for IHEMS.

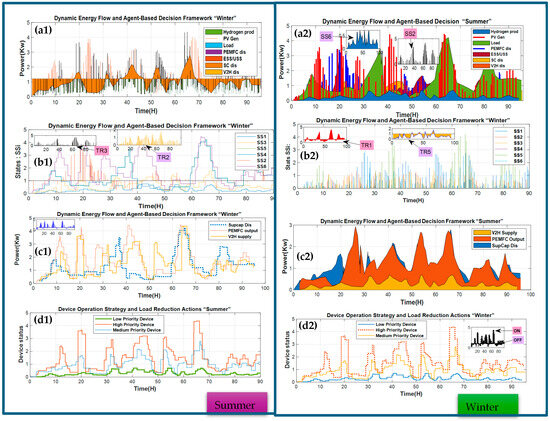

Figure 5a1,a2 shows PV generation versus household demand over 24 h. The overlap shows periods of excess power (midday) and shortages (early morning and evening), which form the basis for storage and recovery operations. The ESS and USS are switched on when excess power is detected. The charging cycles occur mainly during periods of excess PV power in the middle of the day, allowing for later discharge during peak demand. Hydrogen production by electrolysis begins when excess PV power exceeds the electrolysis system’s capacity. During the periods when the electrolyzer is active, it converts excess electricity into hydrogen for later use by the PEMFC unit. Accordingly, recovery events are observed for the PEMFC, supercapacitor, and V2H discharges. These resources are activated during high-demand periods, helping maintain the energy balance and reduce reliance on the grid. When demand exceeds production (SR1, TR1), the PEMFC unit is activated to convert the stored hydrogen into electricity (SR2, TR2). The hydrogen recovery agent also monitors the supercapacitor discharges (SR3, TR3, TR4) to respond quickly to load changes.
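The storage and recovery sequence described above can be sketched as a fixed-priority allocation: a surplus is routed to storage first and then to the electrolyzer, while a deficit is covered by the PEMFC, the supercapacitor, and V2H before the grid. The priority order, the device names, and the power limits below are assumptions for illustration; in the proposed system the actual transitions follow Table 5 and the learned DDQN policy.

```python
SURPLUS_ORDER = ["ess_charge", "uss_charge", "electrolyzer", "grid_export"]
DEFICIT_ORDER = ["pemfc", "supercapacitor", "v2h", "grid_import"]

def dispatch(pbal_kw, limits_kw):
    """Allocate a surplus (PBAL > 0) or deficit (PBAL < 0) across devices.

    limits_kw maps a device name to the power it can absorb or deliver now.
    Returns a dict of device -> allocated power in kW (positive values).
    """
    order = SURPLUS_ORDER if pbal_kw >= 0 else DEFICIT_ORDER
    remaining = abs(pbal_kw)
    plan = {}
    for device in order:
        share = min(remaining, limits_kw.get(device, 0.0))
        if share > 0:
            plan[device] = share
            remaining -= share
    if remaining > 1e-9:
        plan["unallocated"] = remaining  # power that no device could take or supply
    return plan

# Hypothetical instantaneous limits (kW)
limits = {"ess_charge": 1.0, "uss_charge": 0.5, "electrolyzer": 2.0, "grid_export": 10.0,
          "pemfc": 1.5, "supercapacitor": 0.5, "v2h": 3.3, "grid_import": 10.0}
midday_plan = dispatch(2.0, limits)
evening_plan = dispatch(-4.0, limits)
```

With these example limits, a 2 kW surplus fills the two storage units before reaching the electrolyzer, and a 4 kW deficit is met without any grid import.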

Figure 5.

Overview of the 24 h power flow in the IHEMS: (a1,a2) charging behavior of the ESS/USS; (b1,b2) state transition mechanism of the IHEMS (SS/TR table visualization); (c1,c2) dynamic energy flow and multi-agent decision-making mechanism; (d1,d2) appliance operation strategy and load reduction procedures for peak demand discharges.

Figure 5b1,b2 shows the IHEMS state space as a state transition diagram. The system switches between operational modes (e.g., store, recover, produce, and dispose) based on specific rule variables (e.g., PBAL, SoCH2, SoCSC) and the DDQN policy derived from the Markov decision process. Figure 5c1,c2 shows how the DDQN method manages the energy units in real time (e.g., PV, battery, hydrogen storage as a backup, EVs, and domestic appliances). The energy routing path changes according to the system’s state and the signals from the grid, indicating that the framework selects appropriate routes. Figure 5d1,d2 shows how much power the PEMFC, supercapacitor, and V2H units can deliver when demand peaks. The different energy flows are regulated to stabilize the system and to avoid overloading or relying on expensive utility power. The graph also shows how much energy domestic appliances such as washing machines, dishwashers, and EV chargers use during the day. The underlying agents decide how many appliances to run depending on load forecasts, energy price signals, and the SoC. When power is insufficient, non-essential loads are turned off, while the appliances that remain activated continue to run simultaneously.
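The load reduction step can be illustrated with a simple priority-based shedding rule that uses the three priority levels listed in the nomenclature. The appliance list, power ratings, and priority assignments below are hypothetical, and the greedy rule is only one possible way to realize the behavior described above.

```python
from dataclasses import dataclass

@dataclass
class Appliance:
    name: str
    power_kw: float
    priority: int  # 1 = high, 2 = medium, 3 = low

def shed_loads(appliances, available_kw):
    """Keep appliances in priority order while they fit within the available power.

    Returns (kept, shed) as lists of appliance names.
    """
    kept, shed, used = [], [], 0.0
    for app in sorted(appliances, key=lambda a: (a.priority, a.power_kw)):
        if used + app.power_kw <= available_kw:
            kept.append(app.name)
            used += app.power_kw
        else:
            shed.append(app.name)
    return kept, shed

# Hypothetical appliance set (ratings are illustrative)
home = [
    Appliance("ev_charger", 3.3, 3),
    Appliance("dishwasher", 1.2, 3),
    Appliance("washing_machine", 1.0, 2),
    Appliance("refrigerator", 0.2, 1),
]
kept, shed = shed_loads(home, available_kw=2.0)
```

With 2.0 kW available, the high- and medium-priority loads stay on and the two low-priority loads are deferred.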

4.3. Adaptive Energy Management, Hydrogen Utilization, and V2H Exchange for Grid-Independent Smart Homes

This section discusses the dynamic operation of the IHEMS, demonstrating its ability to adaptively manage PV power generation, hydrogen production and consumption, EV/home interactions, and smart device scheduling. The analysis covers the system stability under varying energy demands, efficient V2H integration, and strategies for reducing grid dependency and lowering energy costs. Figure 6 provides insight into different operational aspects, including real-time power flow, agent-based state transitions, energy loss during peak load periods, and specific device control under limited power conditions.

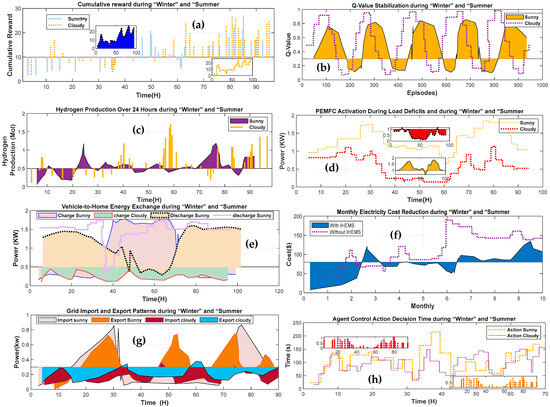

Figure 6.

Performance evaluation and intelligent decision-making in IHEMS: (a) DDQN reward convergence; (b) Q-value stabilization; (c) hydrogen production over 24 h; (d) PEMFC activation during load deficits; (e) V2H energy exchange; (f) monthly electricity cost reduction; (g) grid import and export patterns; (h) agent control action decision time.

4.3.1. System Stability, Adaptation, and Evaluation of Hydrogen Management

Figure 6a shows that the cumulative reward of the DDQN agent converges over multiple iterations. The system is self-adaptive, learning from past errors to optimize its control strategies for efficiency. Figure 6b shows the Q-values reaching a plateau and stabilizing. This pattern indicates that the agent’s performance improves and becomes more consistent over time.

Figure 6c shows that the electrolyzer produces the greatest amount of hydrogen when it receives surplus solar energy, meaning that hydrogen production is directly proportional to the excess energy received, especially at 52 °C (TS2). This demonstrates the ability to store excess renewable energy for long periods. Figure 6d shows the effect of the hydrogen tank SoC on the PEMFC operating frequency during active and inactive states. Additionally, the PEMFC operates continuously in SR1 and SR2 to produce electricity when generation is insufficient, improving system reliability. The results indicate that the IHEMS can effectively align energy requirements with fluctuating solar generation and electricity prices while efficiently overseeing hydrogen generation, storage, and use.
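The proportionality between electrolyzer input and hydrogen output follows from Faraday's law, using the constants listed in the nomenclature (F = 96,485 C/mol, n = 2, molar mass 2.016 g/mol). The sketch below is a minimal illustration; the number of cells and the Faraday efficiency are assumed parameters that are not specified in the text.

```python
FARADAY = 96485.0   # C/mol, from the nomenclature table
N_ELECTRONS = 2     # electrons transferred per H2 molecule
M_H2 = 2.016        # g/mol, hydrogen molar mass

def h2_rate_mol_s(current_a, n_cells=1, faraday_eff=1.0):
    """Hydrogen production rate in mol/s: eta_F * N_cells * I / (n * F).

    n_cells and faraday_eff are assumed parameters for illustration.
    """
    return faraday_eff * n_cells * current_a / (N_ELECTRONS * FARADAY)

def h2_mass_g(current_a, duration_s, n_cells=1, faraday_eff=1.0):
    """Mass of hydrogen (g) produced at constant current over duration_s seconds."""
    return h2_rate_mol_s(current_a, n_cells, faraday_eff) * duration_s * M_H2

# Doubling the electrolyzer current doubles the production rate
rate_10a = h2_rate_mol_s(10.0)
rate_20a = h2_rate_mol_s(20.0)
```

At a fixed stack voltage, the electrolyzer current scales with the surplus power it absorbs, which is why production tracks the excess energy.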

4.3.2. Vehicle-to-Home Energy Exchange Evaluation

This section analyses the role of EVs in the IHEMS, focusing on V2H/H2V interactions. Figure 6e shows the variation in EV charging and discharging patterns throughout the day, depending on energy availability and demand. The controller transfers power from the EV to the home during high demand and recharges the vehicle when surplus or low-cost energy is available. This bidirectional power flow enhances the system’s adaptability and maintains the home’s energy balance. It also facilitates off-peak charging of EVs, resulting in cost and space savings.
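A rule-based version of this bidirectional exchange can be sketched with the vehicle parameters from the nomenclature (minimum SoC of 0.20, maximum SoC of 1.00, roughly 90% charging and discharging efficiency). The 3.3 kW limit is the lower end of the listed 3.3 to 7.2 kW range, and the decision rule itself is an assumption for illustration; in the proposed system this choice is made by the DDQN policy.

```python
SOC_MIN, SOC_MAX = 0.20, 1.00  # from the nomenclature table
P_LIMIT_KW = 3.3               # lower end of the 3.3-7.2 kW V2H/H2V range
EFF = 0.90                     # approximate charging/discharging efficiency

def ev_power_kw(pbal_kw, soc, available, cheap_tariff):
    """EV power exchanged with the home.

    Positive = V2H (EV discharges to the home), negative = H2V (EV charges).
    """
    if not available:
        return 0.0
    if pbal_kw < 0 and soc > SOC_MIN:
        return min(-pbal_kw, P_LIMIT_KW)  # cover the deficit up to the limit
    if (pbal_kw > 0 or cheap_tariff) and soc < SOC_MAX:
        charge = min(pbal_kw, P_LIMIT_KW) if pbal_kw > 0 else P_LIMIT_KW
        return -charge                    # absorb surplus or charge off-peak
    return 0.0

def update_soc(soc, power_kw, dt_h, capacity_kwh):
    """Advance the EV SoC over dt_h hours, accounting for conversion losses."""
    if power_kw >= 0:
        delta = -(power_kw / EFF) * dt_h / capacity_kwh  # discharge draws extra energy
    else:
        delta = (-power_kw * EFF) * dt_h / capacity_kwh  # charging stores less than drawn
    return min(SOC_MAX, max(0.0, soc + delta))

v2h = ev_power_kw(pbal_kw=-2.0, soc=0.5, available=True, cheap_tariff=False)
h2v = ev_power_kw(pbal_kw=1.0, soc=0.5, available=True, cheap_tariff=False)
soc_after = update_soc(0.5, v2h, dt_h=1.0, capacity_kwh=40.0)
```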

4.3.3. Optimizing Energy Costs and Reducing Grid Dependency

The proposed IHEMS reduces residential electricity costs and enhances resilience to power interruptions. Figure 6f illustrates the changes in the monthly electricity bill resulting from the IHEMS. The strategy yields substantial savings by maximizing the use of renewable energy, prioritizing energy storage, and charging EVs at off-peak rates. The grid’s role in energy import and export is illustrated in Figure 6g. When the system generates surplus solar electricity, it exports the excess power to the grid. This behavior indicates improved operational efficiency, which results in reduced import requirements.

To assess robustness, various scenarios involving EVs and expanded hydrogen storage were examined. The system continued to function, and the agents continued to collaborate even when their coordination was less effective. Figure 6h shows the agents’ real-time decision-making response time: control actions are determined within approximately 200 ms. The IHEMS architecture effectively supports the real-time coordination and synchronization of several agents amid rapid changes. Table 6 presents the primary performance metrics: self-sufficiency rate, average monthly grid import reduction, and cost savings.

Table 6.

Key performance metrics.

4.4. Discussion

The simulation results indicate that the proposed IHEMS operates as expected. It combines hydrogen energy technology, bidirectional EV energy flow, and online decision-making based on MAS and DDQN. The IHEMS uses an MDP-based control approach to address challenges related to unpredictable home loads, the limited availability of local renewable energy, and reliance on the grid. Hydrogen is produced by electrolysis from surplus renewable power and converted back into electricity by the PEMFC, which allows the surplus to be managed. Notably, hydrogen production fluctuates with the alternation between surplus and deficit periods. V2H/H2V operations provide flexible energy exchange in response to the electricity price or the grid’s demand level. The Q-values eventually converge, at which point the agents settle on a consistent action policy. The learning approach adapted successfully to environmental changes, including load, solar radiation, and power demand patterns, and even a new tariff schedule. With the IHEMS, the electricity cost decreased by 28.43%, and 22% of the energy-related decisions were managed in-house, which is beneficial for businesses and general operations. The modeling suggests that hydrogen storage and V2H/H2V operations work well at scale, especially considering that the agents can respond within 200 ms. The proposed approach can be used in standard smart homes to control energy flows effectively and efficiently.

4.5. Performance Evaluation and Multi-Scenario Assessment

This section provides a comprehensive comparative analysis of the Intelligent Home Energy Management System (IHEMS) under varying environmental and operational conditions. The evaluation considered fluctuations in solar radiation, household electricity demand, and time-varying energy prices. To capture both seasonal and daily variability, simulations were conducted over eight representative days, including four winter and four summer days. This experimental design ensured that the responsiveness and robustness of the proposed DDQN-based multi-agent control framework were tested under diverse operating scenarios.

Performance Improvements. The results summarized in Table 6 and illustrated in Figure 6f–h highlight the substantial advantages of the DDQN approach compared with the baseline system. The proposed framework achieved an average household energy self-sufficiency of 84%, indicating a significant increase in the utilization of locally generated renewable energy. Furthermore, monthly grid imports decreased by 43.9%, demonstrating the system’s ability to minimize reliance on external supply. In economic terms, electricity costs were reduced by 28.43%, confirming that the framework not only improves technical performance but also delivers tangible financial benefits to end users.

System Integration and Flexibility. The IHEMS architecture integrates multiple distributed energy resources (DERs), including supercapacitors, a proton exchange membrane fuel cell (PEMFC), hydrogen storage, and bidirectional Vehicle-to-Home (V2H) and Home-to-Vehicle (H2V) energy exchange. This integration enhances resilience and adaptability by enabling the system to coordinate short-term energy balancing, long-term storage strategies, and flexible demand-side scheduling. The DDQN controller dynamically schedules appliance operation and vehicle charging in response to both renewable generation levels and prevailing electricity tariffs. Moreover, the system can perform smart load shedding and prioritize critical household loads, thereby enhancing both grid autonomy and household reliability.

Scalability and Practical Viability. Taken together, these results demonstrate that the DDQN-based IHEMS is scalable and effective across a wide range of conditions, including both sunny and cloudy scenarios. The framework’s ability to combine renewable energy integration, advanced storage management, and real-time adaptive decision-making validates the viability of agent-based reinforcement learning approaches for contemporary smart homes. By simultaneously improving self-sufficiency, reducing costs, and enhancing system resilience, the proposed solution represents a significant step toward achieving sustainable, autonomous, and economically efficient residential energy management.

4.6. Critical Analysis of Results and Figure Consistency

Seasonal fluctuations in solar irradiation and load demand significantly impact energy production and consumption (see Figure 4a,b). Figure 4c,d reveals the difference between generation and demand. In response to these changes, the DDQN agent adapts its policy and triggers state transitions.

The stability of the Q-values in Figure 6b illustrates how the convergence of the reinforcement learning process supports reliable decision-making across the tested scenarios. The hydrogen subsystem’s response to consumption and storage signals is depicted in Figure 6c, which displays the hydrogen generation profiles, and Figure 6d, which displays the PEMFC’s discharge patterns. Figure 6e illustrates the bidirectional nature of EV integration through V2H operations, especially during times of high demand and low generation.

4.7. Monte Carlo Simulation and Statistical Analysis

To evaluate the long-term robustness of the proposed IHEMS, a Monte Carlo simulation was conducted over four seasons using real-world irradiance and load variations from Tunisian datasets. For each season, 100 simulation runs with randomized household consumption profiles were conducted. The derived statistical distributions for electricity cost and energy self-sufficiency are summarized in Table 7. The low standard deviations indicate consistent system performance across seasonal uncertainties. Overall, the DDQN-based control maintains adequate energy autonomy and cost efficiency under variable conditions.
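The structure of such a study, 100 randomized runs per season summarized by a mean and a standard deviation, can be sketched as follows. The daily model here is a deliberately simple placeholder (triangular PV profile, Gaussian load around the 1.5 kW average, no storage or control), and the seasonal PV peaks are assumed values; it does not reproduce the Simulink model or the results in Table 7.

```python
import random
import statistics

def simulate_day(rng, pv_peak_kw):
    """Toy daily run returning the self-sufficiency for one random load profile.

    Placeholder model: triangular PV curve, Gaussian hourly load, no storage.
    """
    pv = [pv_peak_kw * max(0.0, 1.0 - abs(h - 12) / 6.0) for h in range(24)]
    load = [max(0.1, rng.gauss(1.5, 0.4)) for _ in range(24)]
    imports = sum(max(0.0, l - p) for l, p in zip(load, pv))
    return 1.0 - imports / sum(load)

def monte_carlo(pv_peak_kw, runs=100, seed=0):
    """Run the toy model `runs` times and return (mean, standard deviation)."""
    rng = random.Random(seed)
    samples = [simulate_day(rng, pv_peak_kw) for _ in range(runs)]
    return statistics.mean(samples), statistics.stdev(samples)

# Assumed seasonal PV peaks in kW (illustrative only)
seasons = {"winter": 1.5, "spring": 2.5, "summer": 3.0, "autumn": 2.0}
results = {name: monte_carlo(peak) for name, peak in seasons.items()}
```

Fixing the seed per season makes the runs reproducible, so differences between seasons reflect the PV input rather than sampling noise.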

Table 7.

Monte Carlo simulation results.

4.8. Examination of the Stability and Convergence of DDQN Learning

The training of the DDQN agent over 2000 episodes exhibited strong evidence of stability and convergence.

Loss Function. The temporal-difference loss steadily decreased during training, indicating consistent error reduction and stable optimization without divergence or oscillation.

Q-Values. After approximately 1000 training instances, Q-value estimates stabilized, signaling convergence of the learned action-value function toward a consistent policy. This behavior suggests effective mitigation of the overestimation bias observed in standard DQN methods.

Replay Buffer. Sampling activity within the replay buffer remained uniformly distributed across the training process. This stability ensured that the agent consistently learned from a diverse and representative set of experiences, reducing the risk of overfitting or catastrophic forgetting.
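Uniform sampling from a replay buffer can be illustrated with a minimal fixed-capacity buffer. The capacity, the batch size, and the transition layout below are assumptions for illustration and are not taken from the training setup in Table 8.

```python
import random
from collections import deque

class ReplayBuffer:
    """Fixed-size buffer with uniform random sampling of stored transitions."""

    def __init__(self, capacity, seed=None):
        self.buffer = deque(maxlen=capacity)  # oldest transitions are dropped first
        self.rng = random.Random(seed)

    def push(self, state, action, reward, next_state, done):
        """Store one transition tuple."""
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        """Draw a minibatch without replacement; every stored transition is equally likely."""
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)

# Hypothetical usage: integer states stand in for the 7-dimensional state vector
buf = ReplayBuffer(capacity=5, seed=0)
for t in range(8):
    buf.push(state=t, action=t % 8, reward=0.0, next_state=t + 1, done=False)
batch = buf.sample(3)
```

Sampling uniformly from a window of recent experience breaks the temporal correlation between consecutive transitions, which is the property the stability argument above relies on.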

Overall Stability. Taken together, the reduction in loss, stabilization of Q-values, and balanced replay buffer usage confirm that the DDQN model achieved robust learning dynamics. The metrics summarized in Table 8 provide quantitative evidence of stable convergence across diverse training conditions.

Table 8.

Summary of the DDQN learning metrics.

5. Conclusions and Future Work

This work describes the design, implementation, and simulation-based testing of the IHEMS, which is based on MAS and DDQN learning within an MDP framework. We focus on an IHEMS framework that integrates solar PV technology, kilowatt-hour-scale hydrogen storage, and EVs in a V2H/H2V context. The comparative MATLAB/Simulink simulations demonstrate that the proposed model can operate in real time, effectively regulate power usage, and use real-world meteorological data for decision-making. Energy dependence and operational costs were significantly reduced compared with the baseline. The DDQN controller updated the system states based on the observed energy consumption of the system components. The system’s reliance on the grid diminished, and its response to disturbances improved owing to price-based strategies and the flexibility afforded by bidirectional plug-in EVs.

A real-time hardware-in-the-loop experiment of the IHEMS is necessary to validate the simulation results. The system should also be extended to the energy management of an entire neighborhood or cluster of residences.

Moreover, blockchain technology may be considered to provide transparent and secure consumer energy transactions. We intend to incorporate additional renewable energy sources, such as small-scale wind energy, to enhance the operational reliability and sustainability. We plan to employ cutting-edge forecasting to improve the precision of the scheduling feature, alongside AI, to anticipate maintenance requirements for hydrogen and storage system components.

Author Contributions

Conceptualization, M.A.; Methodology, A.A. and B.L.; Software, B.L.; Formal analysis, A.A.; Resources, A.A.; Data curation, B.L.; Writing—original draft, M.A.; Writing—review & editing, B.L.; Supervision, M.A. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The original contributions presented in the study are included in the article; further inquiries can be directed to the corresponding author.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations and Nomenclature

The following abbreviations are used in this manuscript:

Nomenclature Table

| Parameters | Symbol | Value | Unit | Description |
|---|---|---|---|---|
| PV System Parameters | | | | |
| Maximum Power Point voltage | VMPP | 36.0 | V | Voltage at which PV module delivers maximum power |
| Maximum Power Point current | IMPP | 8.5 | A | Current at Maximum Power Point |
| Short circuit current | ISC | 9.0 | A | Current when output terminals are shorted |
| Open circuit voltage | VOC | 44.5 | V | Voltage when output terminals are open |
| Rated power output (1 module) | PPV | 300 | W | Rated electrical output per PV module |
| PV system efficiency | ηPV | 18.0 | % | Conversion efficiency of solar to electric power |
| Number of PV modules | – | 10 | – | Total number of PV panels in the array |
| Solar irradiance (peak) | G | 1000 | W/m² | Standard test irradiance level |
| Temperature coefficient (voltage) | – | −0.3 | %/°C | Voltage reduction per °C rise in temperature |
| Hydrogen System Parameters | | | | |
| Electrolyzer current | Iel | Variable | A | Current used to produce hydrogen |
| PEMFC current | Ifc | Variable | A | Current output from PEM fuel cell |
| Hydrogen molar mass | MH2 | 2.016 | g/mol | Molar mass of hydrogen |
| Faraday constant | F | 96,485 | C/mol | Charge per mole of electrons |
| Number of electrons per mole (H2 reaction) | n | 2 | – | Used in Faraday’s law for hydrogen production |
| Hydrogen tank capacity | – | Variable | mol | Total hydrogen storage capacity |
| Hydrogen storage SOC range | SOCH2 | 0–1 | – | State of charge of hydrogen tank |
| Hydrogen tank pressure range | – | 0–700 | bar | Operational pressure for hydrogen storage |
| PEMFC efficiency | η | ~50–60 | % | Conversion efficiency of hydrogen to electricity |
| Vehicle Parameters | | | | |
| Electric vehicle voltage | VEV | 360 | V | Nominal operating voltage of the EV battery |
| Electric vehicle current | IEV | Variable | A | Charging or discharging current of the EV |
| Vehicle battery capacity | – | 40–100 | kWh | Total energy storage capacity of the EV battery |
| Minimum State of Charge (SoC) | – | 0.20 | – | Minimum allowable SoC for V2H to avoid deep discharge |
| Maximum State of Charge (SoC) | – | 1.00 | – | Maximum SoC representing a fully charged battery |
| Charging efficiency | – | ~90 | % | Energy conversion efficiency during charging |
| Discharging efficiency | – | ~90 | % | Energy conversion efficiency during V2H discharging |
| V2H/H2V power limit | – | 3.3–7.2 | kW | Maximum bidirectional power exchange with the home |
| Vehicle availability pattern | – | Time-dependent | – | EV availability schedule for charging/discharging |
| Charging connector standard | – | Type 2/CCS | – | Standard for EV charging interface |
| Storage System Parameters | | | | |
| ESS capacity | CESS | 10 | kWh | ESS capacity |
| ESS voltage | VESS | 48 | V | ESS voltage |
| ESS max charge current | ICHESS | 20 | A | ESS max charge current |
| ESS max discharge current | IDISESS | 20 | A | ESS max discharge current |
| USS capacity | CUSS | 5 | kWh | USS capacity |
| USS voltage | VUSS | 48 | V | USS voltage |
| USS max charge current | ICHUSS | 50 | A | USS max charge current |
| USS max discharge current | IDISUSS | 50 | A | USS max discharge current |
| Supercapacitor efficiency | ηSC | 95 | % | Supercapacitor efficiency |
| Load and Balance Parameters | | | | |
| Average household load | PLoad | 1.5 | kW | Average power demand of household |
| Peak household load | PLoad_peak | 4.0 | kW | Peak load during high-demand periods |
| Power balance | PBAL | – | kW | Difference: PV + storage − load |
| Load forecasting interval | – | 15 | min | Interval for real-time demand prediction |
| Load scheduling resolution | – | 1 | s | Control resolution for appliance scheduling |
| Minimum load operating threshold | Pmin | 0.2 | kW | Threshold to trigger low-power mode |
| Load priority levels | – | 3 | Levels | High, medium, low |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).