1. Introduction

The efficient operation of a microgrid requires accurate energy forecasting and effective optimisation. This is referred to as the “predict + optimise” problem [

1].

The same principles apply to the forecasting and optimisation of larger grids. New microgrids often operate entirely on renewable energy, and the modelling of such grids provides important lessons for the operation of national grids where rapid decarbonisation must occur. In this paper, we study a particular microgrid with a collection of buildings, associated solar panels and batteries.

Studying such a problem deeply and investigating which approaches worked best, and conducting a review of possible data sources can help many parties, such as forecasters, grid operators, and future competition organizers. In the last decade, several competitions have been organized, covering both the narrow forecasting-only problem and the larger “predict + optimise” problem.

Here, we describe the approach used in a competition to model the Monash University Microgrid, focussing on the forecasting aspect of the problem. In 2021, the IEEE Computational Intelligence Society ran a “predict + optimise” competition from 1 July to 3 November online [

2].

The geographical context of the competition was in the city of Melbourne, in the state of Victoria, Australia. In this microgrid, the electricity demand at a set of six buildings is met by a set of six solar installations, while a set of batteries with differing capacities and efficiency rates may be charged or discharged to meet requirements. A related optimisation problem in the competition concerned how the energy requirements could be met at lowest cost using the batteries and foreknowledge of electricity prices.

The competition ran in two phases. In Phase 1 from 1 July to 11 October 2021, competitors were able to upload forecasts to a public “leaderboard” which would calculate the Mean Absolute Scaled Error (MASE) [

3] of each the 12 time series for October 2020. The mean of these 12 MASE values would then be displayed on the leaderboard. At the end of Phase 1, the load and solar data of October 2020 (the 12 time series) was made public, and Phase 2, based on forecasting the month of November 2020, began.

From 13 October to 3 November 2021, competitors could upload forecasts, but the leaderboard only provided an indication of whether forecasts were better, worse, or the same as a reference forecast of all zero values. On 3 November the MASE and energy cost figures were released, while the energy data were released on 6 December 2021.

In this paper, we present the approach used to win the forecasting section of the competition, and describe the novel contributions (for example, thresholding and combining daily and hourly input data) which led to the model outperformance. We explain how the competition fits in the context of energy-forecasting competitions, in particular with reference to COVID-19 effects, and investigate how other data sources could have improved the results.

We review the recent literature relevant to the competition in

Section 2.

Section 3 describes the data available in the competition, a naive forecast, and the general approach used;

Section 4 examines the specific case study of the competition, and

Section 5 provides the results.

Section 6 is a “post-mortem” discussion of what other predictor variables may be useful in this kind of forecasting, with implications, and

Section 7 concludes the paper.

2. Literature Review

We consider literature from well-known energy forecasting competitions (largely the GEFCOM series and Kaggle-based competitions), other energy-forecasting ideas from the literature, and concerning energy usage and forecasting during COVID-19. These competitions are relevant as techniques specific to some of them could be applied to the competition described here.

2.1. Energy-Forecasting Competitions and Principles

To develop an accurate forecast for a specific case such as the microgrid under consideration, it is important to review past competitions and derive lessons from them. The Global Energy-Forecasting Competition (GEFCOM) has been run three times (2012, 2014 and 2017) with themes of forecasting electricity load, solar, wind and price, with both point and quantile forecasting.

The 2014 and 2017 iterations of GEFCOM [

4,

5] provided strong evidence that random forests were one of the most successful techniques in energy forecasting (or at least in related competitions). For example, in the solar track of GEFCOM 2014, three of the top five entries used quantile regression forests or random forests as part of the model. In the GEFCOM 2017 competition for hierarchical load forecasting, three of the top seven entries used quantile regression-based models. For the sake of saving time, we used quantile regression forests for both building and solar modelling.

Concerning GEFCOM 2012, Table 2 of [

6] summarised the methods used in the hierarchical load forecasting track plus a “Vanilla Benchmark” using multiple linear regression (MLR). Linear regression is a simple method for relating weather and temporal variables to the output variable (load). Clearly, the relationship between input weather variables such as temperature and load is non-linear requiring transformation such as the use of heating and cooling degree days; and similarly for the time of day/Julian date temporal variables.

Ten methods were given, of which three used MLR or ensembles featuring MLR, three used neural networks, and one (Chaotic Experiments) used random forests. Data cleaning was a key challenge of the competition.

The winning team [

7], used multiple linear regression, with key steps of combining models from multiple weather stations, outlier removal and public holiday treatments. A quadratic function was used to account for the non-linear relationship of temperature with load. A separate model was built for each zone and hour of the day. Then the temperature for each zone was modelled in terms of various weather station inputs and some holidays are treated as weekend days. Two tracks of the GEFCOM 2014 competition were particularly relevant, as they covered load and solar forecasting (GEFCom2014-L and GEFCom2014-S). The competition assessed probabilistic, rather than point forecasting. Additionally, the weather data provided was from ECMWF, as in the competition described in this paper.

The winning team in the load track [

8] used a quantile generalized additive model to win the load and price forecasting tracks after testing random forests, gradient boosting machines and generalized additive models.

We considered generalized additive models for our work, but random forest models achieved better performance with little training required.

A quantile regression forest model was used by [

9] as part of their approach; using all the ECMWF ERA5 variables provided, plus temporal variables: hour, day of year, and month.

The 2017 competition focussed on hierarchical probabilistic load forecasting; as in previous GEFCOM instances, quantile regression forests, gradient boosting machine, neural networks, multiple linear regression and various ensembles were used. However, the weather variables were not provided by ECMWF and so lessons cannot be applied directly here as for the 2014 instance. Of the solution papers, ref. [

10] provided labelling ideas for each time series, which proved to be a useful concept for the IEEE challenge.

Another competition run on Kaggle was described in [

11] using machine learning for solar forecasting. This was an early example of a competition using NWP data in the US (specifically, Oklahoma) for solar forecasting; gradient boosting regression trees were found to outperform other methods.

Other papers have focussed on forecasting day-ahead load and solar generation in large electricity markets using weather forecast data.

In the context of forecasting the Australian National Electricity Market [

12] wrote about forecasting day-ahead electricity load. They used a heating/cooling degree day approach, with lagged and leading variables. Linear regression and piecewise linear modelling of temperature effects were used for a regional electricity forecast in Australia. The approach was refined in [

13] to forecast quantiles. They noted that important factors for forecasting were the seasonality of load, temperature and special day effects. Lagged load effects were noted to be critically dependent on the day of the week.

A paper on solar forecasting [

14] noted that the commonly used ensemble technique in wind and solar power forecasting was to blend weather data from different sources. This was important in the competition studied here, with some data hourly and other data available on a daily resolution.

Since the IEEE-CIS competition, papers from winners have appeared describing their forecasting and optimisation approaches [

15,

16,

17,

18,

19,

20,

21]. Two competitors used LightGBM (gradient boosting machines), two used random forests or quantile regression forests, one used ResNet/Refined Motif, and two used ensemble approaches (one involved neural networks and the other random forests, gradient boosting machines, and regression). Further details may be found in [

2] while the effect of different error metrics is examined in [

22].

For the design of a forecasting competition, it is important to choose a correct error metric (in this competition, MASE or Mean Absolute Scaled Error) and to avoid using training data in the evaluation phase.

Forecast evaluation methods were examined in [

23]. The authors discussed data leakage, where training data are used in the test or evaluation set. In the context of this competition, data partitioning was applied to retain the temporal order of the time series, splitting it across phases. Thus, the month of October 2020 was used in Phase 1 to allow competitors to refine their models while November 2020 was used in Phase 2 for the evaluation. A possible issue with non-stationarity occurred; that is, the “return to campus” effect observed in November 2020 had not been seen since the March 2020 lockdown.

In a similar manner to leakage, we discuss extra sources of data in

Section 5 which could have been used as input data by the competition designers or by unethical competitors. In the GEFCOM competition run on Kaggle, the team which initially achieved the lowest score exploited “some external utility data” in their final submission [

24].

The authors cite [

25,

26] as arguing that point forecast evaluation alone is insufficient for all end use cases. The second paper proposes assessing a range of point forecast error measures in competitions, in the context of discussing the results of the “M4” competition [

27].

Figure 11 and Table 1 of the paper provide a large flowchart for point forecasting. For example, RMSE or “root mean squared error” and MAE/MASE or “mean absolute (scaled) error” are discussed. MAPE is inappropriate for time series containing zero values, such as solar time series used in the competition. Thus, it was appropriate for the multiple time series containing non-stationarities of the seasonality type, to choose MASE as a forecast metric.

MASE is calculated as defined in [

3], as follows. Let

,

be the observations at time

t and

be the forecast at time

t, with the forecast error

. Then calculate the scaled forecast errors

as

with MASE = mean(

).

A detailed study on the theory and practice of forecasting was published in [

28]. In particular, one subsection (3.4.3) gives a summary of recent references based on the time horizon of the forecasts. It is noted that 15 min forecasts (as seen in the challenge) are classified as “VSTLF” (i.e., Very Short-Term Load Forecast) while in MTLF (medium-term load forecasts) one may forecast electrical peak load, which is relevant for the optimisation phase of the challenge. VSTLF is stated to require meteorological data and day type identification codes.

Recent systematic reviews of electricity load forecasting models have been provided in [

29,

30,

31,

32]. The paper [

32] recommended extreme gradient boosting as a technique, studying Panama’s electricity load over the last 5.5 years, using temperature, humidity and temporal variables. Various other single approaches have been examined in other papers [

33,

34,

35,

36,

37,

38]. For example, deep neural networks, deep learning, combinations of stationary wavelet transforms, neural networks, hybrid models, PSO (particle swarm optimisation) and ensemble empirical mode decomposition were examined. An extensive review of ML methods for solar forecasting was given in [

39], which noted that SVM, regression trees and random forests gave similar results and ensemble methods always outperformed simple predictors.

2.2. COVID-19 Energy Usage

During the period of COVID-19 since December 2019, there have been many papers published about the effect of “lockdowns” on energy use in buildings. More specifically, several papers have been published on energy use in universities in the UK, Spain, Malaysia and Australia explaining the effect of full and partial lockdowns and reopenings, and what kind of loads are running irrespective of whether a lockdown is in place. For a more general survey of how COVID-19 affected household energy usage in an Australian context, see [

40].

One study [

41] looked at 122 buildings in Griffith University, Australia. The buildings were across five campuses in the Gold Coast, Australia and compared the “COVID-19” year February 2020 to February 2021 to the “pre-COVID” year February 2019–February 2020. Learning and administration moved off campus while research remained on campus, saving 16% of energy year on year. Air-conditioning reduction accounted for half of the energy-use change. In agreement with [

42] it was found that research buildings have highest energy-use intensity and academic offices the least. They noted that it was important to assess the special occupancy conditions of these buildings and not model them as regular commercial or residential buildings.

Some researchers required specialised equipment and could not work from home. The energy savings may have been caused by reduced hours of operating building equipment, such as HVAC (heating ventilation and air conditioning) which was shut down in some types of buildings. In the location of the university (with a sub-tropical climate) there was high demand for cooling in summer but little demand for heating in winter.

Examining the University of Almeria in Spain, ref. [

43] found that the library category was most influenced by shutdowns and the research category was least influences. University campuses were noted to use energy in a different way from residential buildings, with differing occupancy rates, HVAC, lighting, computers and so on. UPS, fridges and freezers, security systems, exterior lighting and telecommunications could not be turned off and were characterized as “heavy plug load”. Thus, building by building modelling is appropriate, which was seen in the competition. Library, sport, and restaurant buildings were affected strongly at different times.

At the BarcelonaTech university campus [

44] studied 83 buildings, identified the main use case for each, calculated heating and cooling temperature effects, and showed estimated occupancy. They again noted that research buildings experienced the least proportion of avoided energy use during lockdown. Further savings were not possible due to some buildings having a low level of centralised controls.

The study [

45] examined a 7-level research complex building in Malaysia. They noted a strong weekend/weekday usage pattern with centralised air conditioning during work hours and studied the effects of partial and complete lockdowns.

In Scotland, ref. [

46] examined public buildings during 2020 and 2021 in the context of “lockdown fatigue”. Library buildings were noted as offering higher EU (electricity use) and EUI (electricity use intensity) reduction potential.

In summary, the research on universities could not be directly applied to the competition as it was unknown which classification the buildings fell into. However, the choice of input data to the model (that is, the starting point chosen for each building’s data) had a large effect on the performance of the model.

A competition was organized by IEEE [

47] requiring competitors to forecast day-ahead electricity use in March and April 2021 from a “metropolitan electric utility”. Four years of data and weather variables were supplied (historical and forecast). Out of 20 teams that finished the competition, only nine had a “significantly lower MAE” compared to the persistence-based benchmark load forecast. The persistence forecast set Sunday and Saturday load to the previous Sunday or Saturday; Monday and Tuesday to the previous Friday, and Wednesday to Friday from two days prior. The top teams used ensembles of models which were weighted based on the recent performance of individual models.

The competition organizers noted that multi-model approaches were dominant, and this dominance had also been noted in the recent M5 competition [

48]. They concluded that the winning models were adaptable to sudden changes in conditions such as lockdowns, but that it was not clear if the models were robust or could be generalized to other circumstances.

The winning team published details of their approach in [

49]. They used an ensemble of 72 models including linear regression, GAMs, random forests, and MLPs, using an “online robust aggregation of experts”. The aggregation method is more fully described at [

50] in the context of lockdowns in France.

The third placed team [

51] explained their approach involving cleaning of weather data using linear interpolation, lagged load effects, outlier detection and adjustment for holidays. Their ensemble of 674 models incorporated STL decomposition with exponential smoothing, an AR(P) time series model, GAMs, and regression models.

The organizers stressed that publication of the methods was critical to maximize benefit to society, in contrast with commercial data science competitions.

3. Materials and Methods

In this section, we describe the data provided by external sources and by the competition organizers. The external data consists of weather data from two different meteorological organizations and electricity price data from the grid operator in Australia.

We then provide a general description of the random forest algorithm, and give a baseline error rate based on various “naive” forecast approaches.

3.1. Prediction Data

Competitors in the challenge were permitted to use external data from two sources: the Australian Bureau of Meteorology (BOM) weather data available through manual download from Climate Data Online [

52] and the European Centre for Medium-Range Weather Forecasts (ECMWF) [

53,

54] data provided by OikoLab [

55]. Thus, the competitors were allowed to use “perfect forecast” weather data. From the BOM data, we used only the “daily global solar exposure” data, although daily minimum and maximum temperatures and daily rainfall data were available. The European Centre model is known as ERA5 and provides hourly historical weather (reanalysis) data.

The BOM “daily global solar exposure” data is measured from midnight to midnight each day, and is the total solar energy for a day falling on a horizontal surface. For the stations used in this study, values ranged from 1.3 to 32.3 MJ per square metre in 2019 and 2020. For the competition, these data were available at three nearby sites—Oakleigh, Olympic Park, and Moorabbin (BOM sites 86077, 86088 and 86338, respectively).

Table 1 lists and describes the variables used to model solar output in several recent papers and this competition: GEFCOM [

4,

56,

57], PVLIB [

58], and PVCompare [

59]. The descriptions come from the ECMWF “GRIB Parameter Database” [

60]. Their use in competitions is shown in

Table 2.

One study [

61] used the higher-resolution ERA5-Land data, which provides 0.1 degree grid resolution coverage of the Earth, and four of the “PVLIB” variables i.e., wind speed (

u and

v), surface solar radiation downward, and 2 m temperature.

The PVLIB source code [

62] which derives values from the European Commission’s Photovoltaic Geographical Information System (PVGIS) [

63] takes into account global horizontal irradiance, direct normal irradiance, diffuse horizontal irradiance, the plane of array irradiance (global, direct, sky diffuse, ground diffuse), solar elevation, air temperature, relative humidity, pressure, wind speed and direction. The PVGIS data is itself partially dependent on ECMWF data and the variables chosen are similar to those listed in

Table 2.

3.2. Building and Solar Data

3.2.1. Solar

As a rule of thumb, a 4 kW system in Melbourne produced 14.4 kWh of solar energy daily, according to an estimate by Australia’s Clean Energy Council [

64]. Equivalently, this can be expressed as a “capacity factor” of 15.0% relative to the size of the system. In the centre of Australia in Alice Springs, such systems can produce 20.0 kWh per year; that is, a capacity factor of 20.8%. This can also be expressed in a “kWh/kW” form—that is, 3.6 kWh/kW in Melbourne and 5 kWh/kW in Alice Springs.

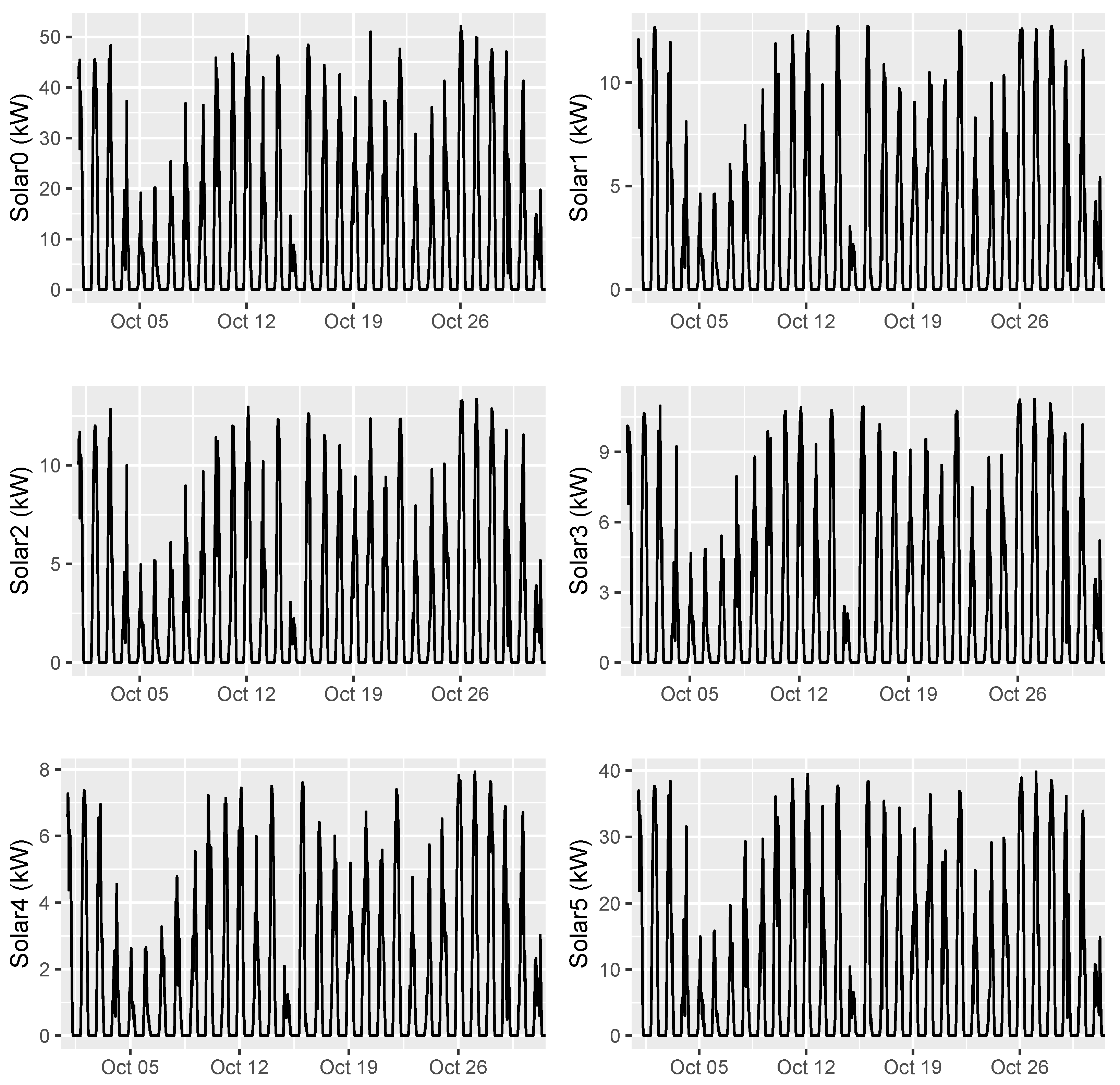

The solar installations, named Solar0 to Solar5, appeared to be of sizes of approximately 8 to 50 kW. The data for Solar0 only began in April 2020, but the estimated capacity factors of each installation (using data up to and including October 2020) ranged from 15.9% (Solar5, max 40.4 kW) to 23.5% (Solar1, max 12.7 kW).

For Solar0, 86% of the time-series values are non-zero; for Solar5, this proportion is 30%; while for the others, the value ranges from 38% to 48%. It seemed that some kind of cleaning or thresholding approach could be used to improve performance for forecasting Solar0 and Solar5 values and this proved to be the case.

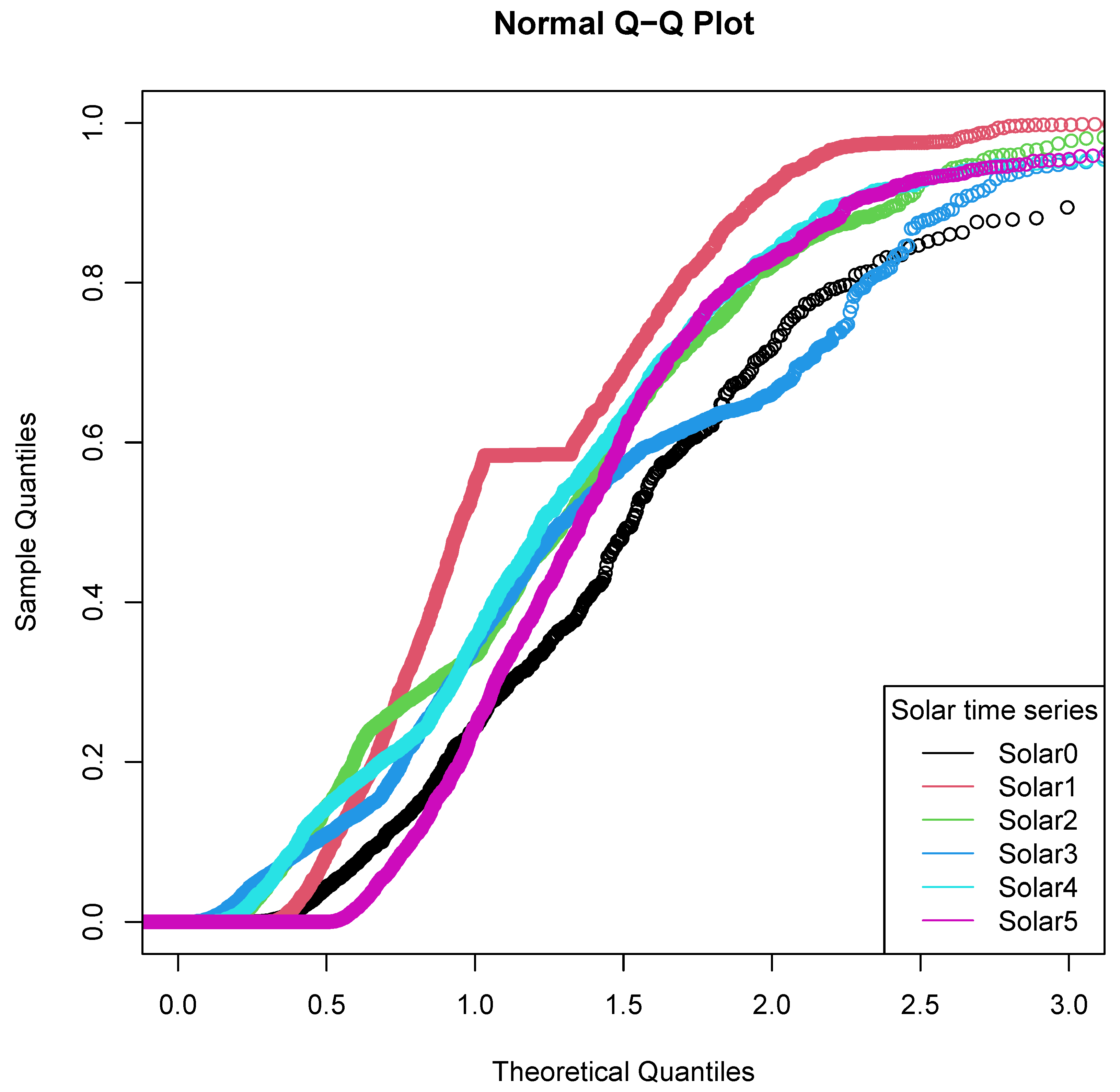

A Q-Q plot of the six solar installations, using all available data, is shown in

Figure 1. The red line represents Solar1. On closer examination, the kink in this line was found to be caused by cumulative data in the Solar1 time series in late 2020. Thus, not all the data from Solar1 could be trusted or used without cleaning. We thresholded the data from Solar1, Solar2 and Solar3 time series to begin from 22 May 2020. All of the Solar0, Solar4 and Solar5 time series were used in training, although only hours where at least one period of generation (of four) was greater than 0.05 kW were used for Solar0 and Solar5 training.

3.2.2. Buildings

The provided data for the buildings and solar installations began at various dates, with the earliest data available from “Building 3” on 1 March 2016. The data for some buildings was very spotty; for example, Building 4 had 46,733 values but 18,946 of the values were unavailable. The modal and median value for Building 4 (19,621 occurrences) was 1 kW and all the other values were 2, 3, 4 or 5 kW. A perfect approach for data such as Building 4 would forecast one of these discrete values as the MASE of every series was significant for the competition, regardless of size.

We omitted one day in October 2020 from the building training data due to a state public holiday effect in the data (Friday before Grand Final). It was unclear whether the public holiday was influencing this day, while November 2020 (the evaluation month) had no significant public holidays.

3.3. Naive Forecasts

It is instructive to consider the performance of naive forecasts (for example, persistence-based or those based on median of each time series for the most recent month).

To develop a persistence-based forecast, we examined the building and solar traces for November 2019 versus previous data. (We also checked the time series for solar against previous time series reversed in case seasonality effects were useful). It was quickly determined that for November 2019, for a “month-ahead” forecast, the best lag period was 30 days (720 h) for the solar and 35 days (840 h) for the buildings, which preserved the weekend patterns. This was the lag period that generally minimized the sum of squares of differences, comparing November 2019 to previous time periods of the same length. The organizers explained that for computing the MASE, they used a 28-day-ahead seasonal naive forecast (i.e., 2688 15-min periods).

Applying this to the November 2020 forecast, we achieved a mean MASE over the 12 time series of 1.1754. The final leaderboard for Phase 2 contained 14 different forecasts for Phase 2, of which 10 had a lower MASE than this naive forecast.

Classifying each hour in the data by weekday/weekend type and by hour (i.e., 48 classifications) and setting each value for November 2020 to the median of the corresponding hourly/day type value for the last 30 days of October 2020 gave a mean MASE of 1.0754, while including the day of the week value (i.e., 168 classifications) reduced this to 1.0630. Nine entries beat this mean MASE.

For the training phase, Phase 1, requiring the prediction of October 2020, the same approaches give a mean MASE of 1.1504 (persistence forecast), 0.9313 (weekend/weekday and hour), and 0.9211 (day of week and hour). Of 22 different forecasts on the final leaderboard for Phase 1, 14 beat the persistence forecast while nine beat the hourly based forecasts.

3.4. Random Forests and Quantile Regression Forests

The initial random forest model was described in [

65] as a generalization of tree-based prediction or classification algorithms. Later, [

66] provided an extension of the concept to carry out regression and provide quantiles as output, rather than a single point.

Random forests grow a large number of trees (for instance, the default in the “randomForest” R package is 500 trees) splitting each tree at each node in a random way. At each node, a random selection of predictor variables (of size known as the “mtry” parameter) is chosen.

The quantile regression forest paper [

66] defines a pair of variables

X and

Y, a covariate or predictor variable and a real-valued response variable, respectively. Standard regression analysis develops an estimate

of the conditional mean of the response variable

Y given a particular value

.

The original random forest then provides a point forecast for

where

are the original observations,

k single trees are in use, and

is the random parameter vector determining how to split the tree at each node.

is the average of a weight vector defined over

calculated for the new data point

as follows:

where

is a weight vector defined for the

k trees, 1, ...,

t. Further details may be found in [

66].

Quantile regression forests then develop a conditional distribution function of Y given , using these weights .

4. Case Study

In this section, we investigate the competition data in detail, and provide pseudocode for our specific approach, showing the sequential improvement of the error rate over the period of the competition.

4.1. Initial Investigation

We began by using the generalized additive model as seen in [

67], which has also been used in a similar model for bike-sharing demand using weather and temporal variables [

68]. This was to develop an initial feel for how temperature and solar variables in the ECMWF (ERA5) data set affected each building and solar installation, along with temporal variables (weekend, time of day, and day of year).

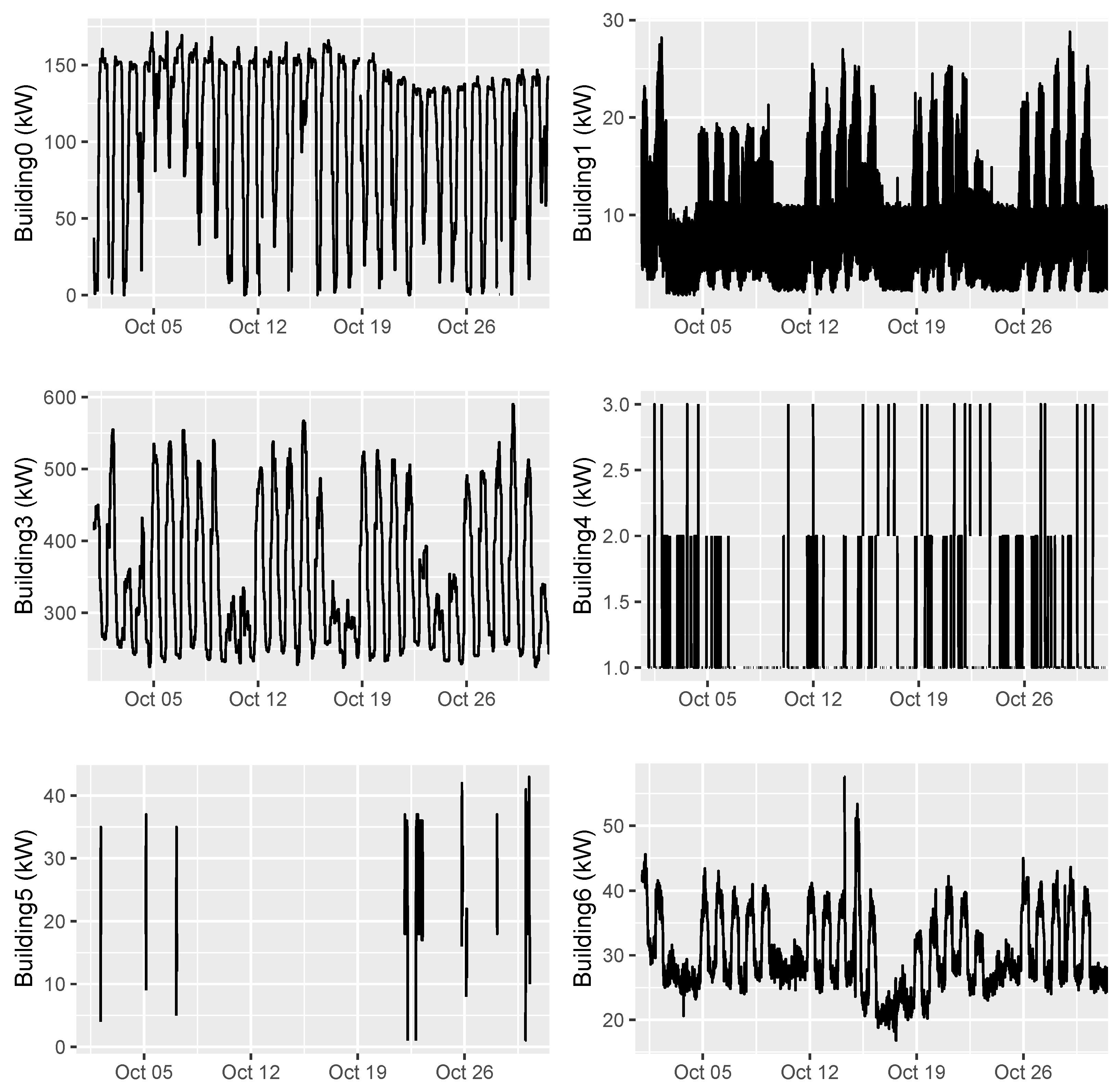

We noticed that the buildings were very different in terms of load on weekend and public holidays (see Building 1, 3 and 6 in

Figure 2), and that temperature and solar (leading and lagging) were the most critical predictor variables in the models for the buildings and solar output.

We quickly switched to a random forest model as the focus of the competition was purely the lowest error rate, rather than explainability or visualization. This also enabled us to build on the previous experience of [

69]. The

ranger package [

70] in R has provided multi-threaded random forests with an extension of options over those seen in the original

quantregForest package of [

66].

A plot of the building and solar traces for October 2020 (the data held out for Phase 1) is shown in

Figure 2 and

Figure 3.

The solar traces seemed to be genuine 15 min readings, while Buildings 0 and 3 were series of 15 min values repeated four times each. It appeared that Building 4 and Building 5 readings were uncorrelated or poorly correlated with any weather or temporal variables we were provided. Additionally, these time series were missing large numbers of values, with Building 5 in particular containing only 133 values, of which 76 are the values 18, 19, 36 or 37 kW. Thus, for the November 2020 forecast, for Buildings 4 and 5, we simply repeated the median value from October 2020 (i.e., “manual optimisation”); 1 kW and 19 kW, respectively. This observation saved time in the prediction development and iterative process as the observations for only the other four, rather than all six, buildings were used in the combined training data.

We thresholded values from Building 0 and 3 as some of them appeared to be large outliers. Building 0 and 3 upper bounds were set to 606.5 and 2264 kW while the Building 3 lower bound was set to 193 kW.

A “maximal” approach was used; that is, for each building time series, the training data start date decreased month by month as far as possible until the error rate started increasing. For the building time series, this was the months of June, February, May, and January 2020 for Buildings 0, 1, 3 and 6, respectively, i.e., for Building 0, the training data for Phase 1 consisted of June to September 2020 inclusive, while, for Phase 2, the training data were from June to October 2020 inclusive. It was assumed that all the most recent data should be included in training. We attempted to add a recency bias for newer data following [

71] using exponential decay in the

ranger training, but this was unsuccessful.

The approach chosen here was the most difficult and most purely subjective choice made by us in the competition. It was assumed that a full reopening after COVID-19 restrictions were lifted (in October 2020) would not result in a return to pre-2020 levels of building energy use. For example, the maximum Building 3 energy use observed was 683 kW after May 2020 but there were observations of over 1000 kW on 19 March 2020. The last observation over 700 kW was on 27 March 2020 after which it was assumed stricter COVID-19 lockdowns commenced.

4.2. Forecast Code

The forecast code was built in R due the availability of useful packages such as ranger, xts, and lubridate.

The following Algorithm shows the pseudocode loop we used for the model development in Phase 1. The same R code was used for Phase 1 (October) and Phase 2 (November) with only the phase parameter changed.

Feature selection was generally performed manually using our knowledge of other competitions and experience in solar and energy forecasting, rather than an automated step-wise process of addition and subtraction of variables. This was motivated by time pressure of the competition. Initially all groups of buildings and solar installations were trained together, and the highest variables in terms of importance across all the group were extracted. The idea was to avoid overfitting and save time by choosing only directly relevant variables and testing only against a proxy for the evaluation metric—the MASE of Phase 1. In a production environment the validity of these assumptions should be verified through cross-validation.

5. Results

The evaluation metric for the competition was the MASE error rate; in this section, we show how we were able to reduce this during the competition by the approaches described, in both the evaluation and training phases.

After performing feature selection for each building and setting the value of Building 4 to be 1 kW for the whole month of October, we achieved an error rate (MASE) for Phase 1 of 0.6528. This required the selection of start months for the buildings and solar data.

By the end of Phase 1, we had lowered this to 0.6320 by incorporating median forecasting of the time series (that is, the 50th percentile in quantile regression) and adding in BOM solar data.

On 13 October, the competition organizers released individual Phase 1 time series and we began investigating how the MASE value was derived using the provided data and R program.

During this improvement process, we observed that forecasting Solar0 and Solar5 as linear combinations of the other solar variables was working better than the actual Solar0 and Solar5 prediction, that some pairs of solar series were much more highly correlated than other pairs, and that buildings 3/6 were also highly correlated. This caused us to attempt various groupings of solar and building training data as explained in Algorithm 1.

We added the following improvements sequentially through experimentation as each change seemed reasonable, as we considered each change would improve the error rate for Phase 2 (November 2020) as well. The possibility of overfitting seemed minimal, as each of the changes could be justified with reference to the closest month of October 2020.

Added cloud cover variables ± 3 h. The effect may be seen in

Figure 4 with the predicted solar (black line) beginning to closely match the actual solar (red line) during the day. (MASE 0.6243, 16 October)

Selected solar data from beginning of 2020 instead of from day 142 (22 May). (MASE 0.6063, 17 October)

Selected start month (0–8) for each of four building series from 2020, added all possible weather variables, set Building 5 equal to median training value 19 kW (MASE 0.5685, 18 October)

Fixed up Solar5 data by filtering out values less than 0.05 kW. (MASE 0.5387, 24 October)

Trained all solar and building data together following [

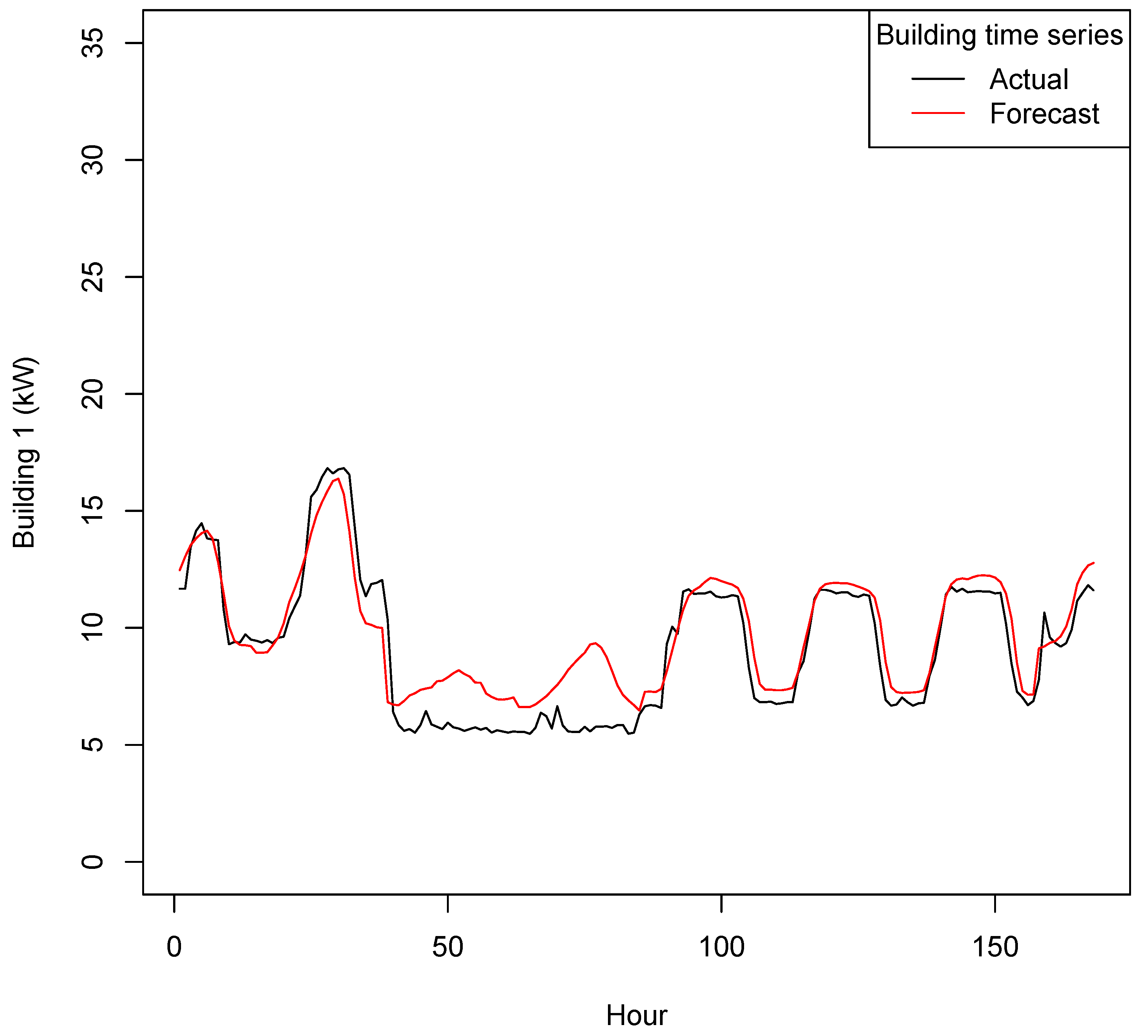

10]. A building forecast with averaged hourly values may be seen in

Figure 5; the weekend/weekday pattern is clear (1 October is a Thursday). (MASE 0.5220, 30 October)

Fixed up Solar0 data by same filtering as for Solar5. (MASE 0.5207, 31 October)

Added in separate binary decision variables for each day of the week. (MASE 0.5166, 2 November)

| Algorithm 1: Model development for Phase 1. |

Select a time series or group of time series from the 12 series Perform adjustment:

- (a)

Adjust start and end dates of training data - (b)

Perform thresholding of energy values (effective for Solar 0 and Solar 5 series) - (c)

Add or remove predictor variables (e.g., leading and lagging weather variables, BOM variables) - (d)

Adjust grouping of solar or buildings - (e)

Adjust random forest parameters (ntrees and mtry)

If the adjustment decreases the MASE for Phase 1, then retain it; else discard it Go to Step 1.

|

Figure 4.

Solar 0 Forecast vs Actual (kW)—Phase 1 (1–7 October 2020).

Figure 4.

Solar 0 Forecast vs Actual (kW)—Phase 1 (1–7 October 2020).

Figure 5.

Building 1 Forecast vs Actual (kW)—Phase 1 (1–7 October 2020).

Figure 5.

Building 1 Forecast vs Actual (kW)—Phase 1 (1–7 October 2020).

We were expecting a similar MASE for Phase 2; however, the “reopening” effects of lockdown appeared to result in a reversion to historic usage patterns in some of the buildings, which diverged from our forecast.

Naturally this “reopening” affected all the results of all competitors. In an actual production environment, instead of having a “month-ahead” forecast, the forecasts would be day-ahead and able to rapidly adjust to reopening effects.

Although we do not know the types of the six buildings used in the competition, we surmise that in November 2020 the air-conditioning use of some of them began to revert to the long-term mean. Thus, our approach of choosing different starting months for each building to minimize MASE vis-a-vis October 2020 led to model outperformance.

Model Description

We summarise the predictor variables used in the building and solar modelling and discuss their relative importance.

A list of the 74 predictor variables used in the model for Buildings 0, 1, 3 and 6 is in

Table 3. Example values are given for each variable. Similarly, the 39 predictor variables used in the Solar model are shown in

Table 4.

These variables are being used to predict the quarter-hour energy usage for each of the buildings; that is, a different model is used for each quarter-hour offset (:00, :15, :30 and :45 of each hour). In the final model, all the solar and building variables (for Buildings 0, 1, 3 and 6) were normalized using the maximum value found in the training data.

The other weather variables (t2m, d2m, wind, MSLP, R, SSRD, STRD and TCC) are the variables provided by OikoLab via the ERA5 model: 2 m temperature, 2 m dewpoint temperature, wind speed, mean sea level pressure, relative humidity, surface solar radiation downward, and surface thermal radiation downward (as seen in

Table 1 and

Table 2).

The “wh” variable identifies the building being predicted, analogously to the same variable in [

10]. Variable name postfixes refer to leading and lagging variables one, two or three hours from the period. Postfixes of 24, 72 and 48 are used for building training—the temperatures 24, 72 and 48 h ago based on the market demand modelling of [

12]. These proved to be significant variables in the building energy forecasting.

The variables labelled Moorabbin, Oakleigh and Olympic are repeated values for the BOM daily solar global exposure variables at three sites, i.e., in each quarter hour, the variable is assigned the daily solar global exposure for that day, as the value is measured from midnight to midnight.

The variables “

sin_

hr” and “

sin_

day” refer to the Fourier terms related to the hour of the day and the day of the year (Julian date). Thus,

and

and similarly for the cosine term. These terms model the diurnal and annual cycle in the building energy usage and solar generation. Including these temporal terms, plus the weekday/weekend Boolean variable for the buildings, gives a good first approximation to the building energy usage and solar generation. Competition winning entries such as [

72], from the 2014 Global Energy-Forecasting competition, used these terms in both the solar and wind forecasting tracks.

Other variables are binary variables for weekend (“wd”), Monday/Friday (“wd1”), Tuesday/Wednesday/Thursday (“wd2”) and named variables for each day of the week.

For the random forest parameters, each forest was trained with 2000 trees (increased from the default ranger value of 500). The mtry parameter was set to 43 for the forests training buildings 0, 1, 3 and 6 together; to 14 for the forests for Building 1 only; 19 for the forests for Building 6; and 13 for the forests for the solar installations. In ranger, the default mtry parameter is the (floored) square root of the number of independent variables. The variable count is 74 for buildings and 39 for solar; thus, the default mtry parameters would be 8 and 6, respectively, significantly differing from the competition values.

Out of the individuals/teams who made submissions to the evaluation Phase, our entry had the lowest MASE for Phase 2 of 0.6460.

The outperformance is due to many factors including the fine-tuning described above in

Section 4.2. We believe that relative to other competitors the approaches of thresholding each building input data set differently, modelling all solar time series together, and including both daily and hourly weather data in the model led to its strong outperformance.

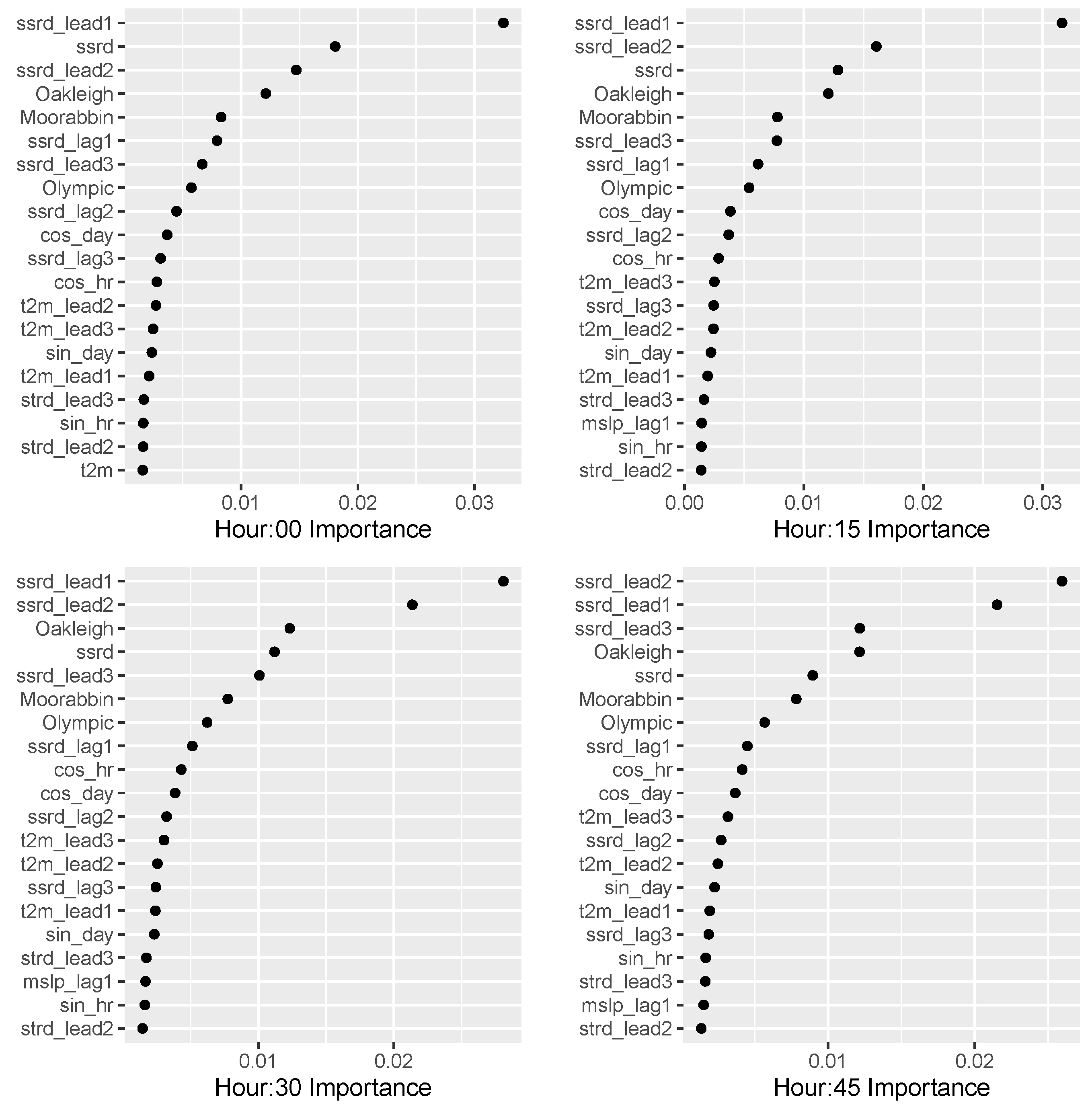

It is difficult to pinpoint the relative contribution of each change, as undoing each change and recalculating the MASE, or even calculating “drop-column importance” for each variable would be very time consuming. Instead, we plot the importance (calculating using permutations in

ranger) of the top 20 variables. The plots for the model, for each 15 min offset in an hour, built for Buildings 0, 1, 3 and 6 and the solar model are shown in

Figure 6 and

Figure 7.

The distance from the Monash campus in a straight line is 3.2 km to Oakleigh, 8.3 km to Moorabbin, and 16.1 km to Olympic Park; this ordering is reflected in the descending order of variable importance in the building and solar models.

For the buildings, the weekday/weekend Boolean variable, followed by the “ssrd” solar variables, the Fourier terms for the hour and Julian date, and the temperature variables are the most important.

For the solar installations, the “ssrd” variables (lagging and leading) followed by the daily BOM solar variables, following by Fourier terms for hour, day and then temperature variables were most important. However, cloud cover, pressure and surface thermal radiation variables (lagging and leading) were still included in the solar model as they improved the performance for Phase 1.

A curious feature of the relative variable importance in the models is seen in the “ssrd” variables; for three of the Building models (:15, :30, and :45), “ssrd” itself is the most important solar variable. But in the four solar models, the important “ssrd” variables are all leading variables and the most important temperature “t2m” variables are also leading variables, often two or three hours into the future.

6. Discussion

After the competition finished, we investigated whether the forecast could have been improved by using extra data that was not mentioned or provided explicitly by the organizers. This could increase the usefulness and generalisability of our model for other applications.

For example, competitors were instructed to download a file of Victorian half-hourly electricity grid pool prices from the Australian Energy Market Operator (AEMO) website for the months of October and November 2020, for use with the optimisation section of the competition. The file also contained electricity demand for the region of Victoria. Changing the URL allowed competitors to access price and demand for all other months.

Elsewhere on the AEMO website, files containing five-minute price and demand (that is, “dispatch period” rather than 30 min “trading period”) data were available. As of 1 July 2021 (after the competition forecast period), the Australian electricity grid has been operated on a “five-minute settlement” basis [

73]. This means that generators are paid for their output based on a pool price which changes every five minutes, including in the Victoria region.

Microgrids can be run as direct market participants; that is, it could be envisaged that building air conditioning, for example, could be ramped up or down depending on the real-time pool price. It is not known if the competition microgrid was being operated in this way. The microgrid could be operated based on half-hourly pool prices, although market participants can also see the five-minute pool prices.

The Australian Bureau of Meteorology provides one-minute solar data from the nearby Tullamarine Airport [

74] while the City of Melbourne provides 15 min microclimate sensor readings [

75].

Other numerical weather prediction (NWP) data are available. As noted above, other published solar models use different variables from the ERA5 data set. The variables at the exact site location were obtained by OikoLab through the process of bilinearly interpolating the nearest four ERA5 data points. Using only the magnitude of the wind rather than its direction removes nuance from the wind data. Inverse distance weighting the four points is another possible technique that could be used to interpolate between the ERA5 data points. The usual exponent used is 2 as in the original paper [

76] but this can be varied; see for example [

77].

From the ECMWF, higher-resolution data known as ERA5-Land [

78] is available, which covers the same variables at a 0.1 × 0.1 degree grid resolution, except for cloud cover. For the competition site, however, only three points on land were available.

The Japanese Meteorological Agency provides JRA-55 historical reanalysis data at a 3-hourly, 0.5625 degree resolution [

79] and the NCEP in the US provides GFS (Global Forecasting System reanalysis data) at a 3-hourly, 0.25 degree resolution [

80]. The JRA-55 reanalysis point was located only 400 m away from the microgrid.

Finally, the US National Aeronautics and Space Administration (NASA) provides data from MERRA-2 [

81] at an hourly, 0.5 latitude × 0.625 longitude degree resolution, examined in [

61]; the model “SWGNT” variable can be used for the solar radiation downwards variable.

The website “pvoutput.org” [

82] allows users who donate to download historical data from photovoltaic installations. Such data can be available at 5 min resolution. These data are used in the Australian Solar Energy Forecasting System (ASEFS) of AEMO [

83] and are available at several sites near the microgrid. Similar data are available on a commercial basis from a different company [

84].

The “Weatherman” paper [

85] described a method for determining the parameters of a solar installation by examining time series data of its output, and then estimating its size, location, angle of inclination, and orientation. A similar approach was implemented to locate a house using PV data in [

86] based on ECMWF data and PV simulation. This approach may also have been useful in this and similar competitions, but could not pursued due to lack of time.

Including AEMO 5-minute Victorian demand reduced the Phase 1 MASE slightly (final average MASE 0.6395) while including AEMO 5-minute price data or MERRA-2 solar data reduced the MASE by similar amounts.

In any case, including AEMO price or demand data (forecasts) in a production model may not be realistic, unless the microgrid trades in the wholesale electricity market. Additionally, MERRA-2 and JRA-55 are purely “reanalysis” data and thus no corresponding forecast is currently available, as for BOM and ECMWF data.

Ultimately, it was determined that only the one-minute BOM solar data and PVOutput.org data provided significant improvements in the competition framework.

Table 5 shows the MASE for six competitors’ entries. The building and solar MASE values are labelled “b” and “s”, respectively. The mean MASE over all 12 time-series values for Phase 2 is shown in the top row. This was the benchmark used for the forecasting prize of the competition.

Our error rates are in the column labelled “1” while those for five other entrants are labelled “2” to “6” for comparison (2, 3 and 4 were ranked second, third and fourth). The last two columns labelled “BOM” and “PVO” show the MASE when one-minute BOM solar data from Tullamarine Airport is included and when the 15-min solar output (from PVOutput.org) of seven houses nearby is added to the model (without adjusting any other model parameters). In the last case, at almost all houses, the 15-min solar data for 2019 and 2020 is missing at least one data point. This limited the number of houses in the vicinity to seven.

For the last “PVO” case, the solar MASEs are all in the range 0.22 to 0.35 (down from 0.38 to 0.60 in the submitted solution). It is noteworthy that the Building 1 MASE also decreases with the addition of these variables.

Adding one-minute solar data from the Tullamarine Airport, which is 37.7 km from the site in great circle distance, has a much smaller effect, with the solar MASEs in the range 0.34 to 0.54.

As including these extra variables lowers the MASE significantly, a practical finding is that competition winners should open-source their code for review not just by competition organizers but the public. This should be communicated from the competition outset. An example from Kaggle showed how hidden code was detected long after prizes were awarded [

87]. The possibility of competitors using these extra variables in an intermediate step before the final solution cannot be excluded.

7. Conclusions

To forecast 15-min energy and solar time-series data, we applied the quantile regression forest of [

66] based on the original random forest idea of [

65] as provided in the R

ranger package [

70]. This was highly effective in conjunction with techniques of thresholding, grouping related buildings and solar installations, combining daily and hourly data from two uncorrelated data sources, and normalization. The quantile regression forest approach was ideal as it required minimal parameter tuning and thus the process of building and testing models was expedited in the time-pressured environment of the competition. Other more complex approaches such as gradient boosting machines may have required more parameter tuning.

In the training phase, the training data for each building and solar installation was extended backwards from the latest available data, month by month, until the error rate for each building or solar time series began to increase. In a production environment as opposed to a competition, this approach should be verified by performing cross-validation. This approach of grouping and thresholding may have captured different building types present on campus and their differing response to reopening, as seen in studies of the effect of COVID-19 lockdowns on energy at university campuses worldwide.

The success of combining daily solar exposure data from the Australian BOM with hourly weather data from the ECMWF appeared to play a large part in the outperformance of our approach versus the other contest entrants.

For real-world forecasting, it is beneficial to take the performance of private PV systems into account; sites such as PVOutput.org can help in this case.

Further research could include studying the relative effect of each change, the potential effect of interpolating weather variables differently, and testing other machine-learning algorithms. In particular, it would be useful to generalize the approach of grouping related buildings and solar installations. For the generalization from this microgrid to, for example, houses in a larger electricity grid, the time-series grouping [

88] could be based on different kinds of houses, residents, and aspects of solar installations, and could be derived automatically rather than manually as in this competition.