A Novel Attention Temporal Convolutional Network for Transmission Line Fault Diagnosis via Comprehensive Feature Extraction

Abstract

1. Introduction

2. Preliminaries

2.1. The Short Circuit Faults of Transmission Line

2.2. The Basic PCA Method

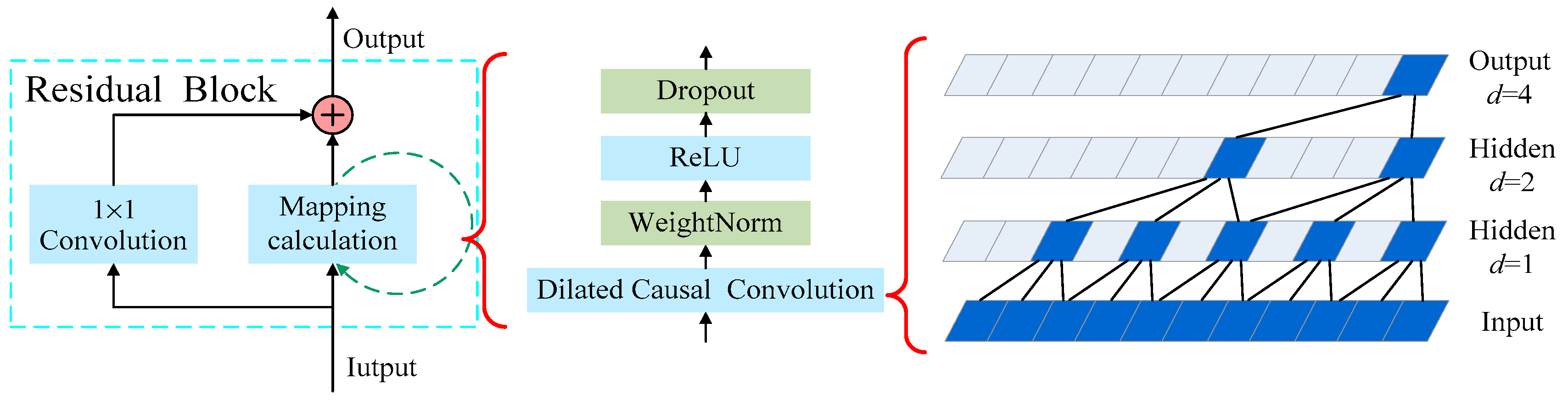

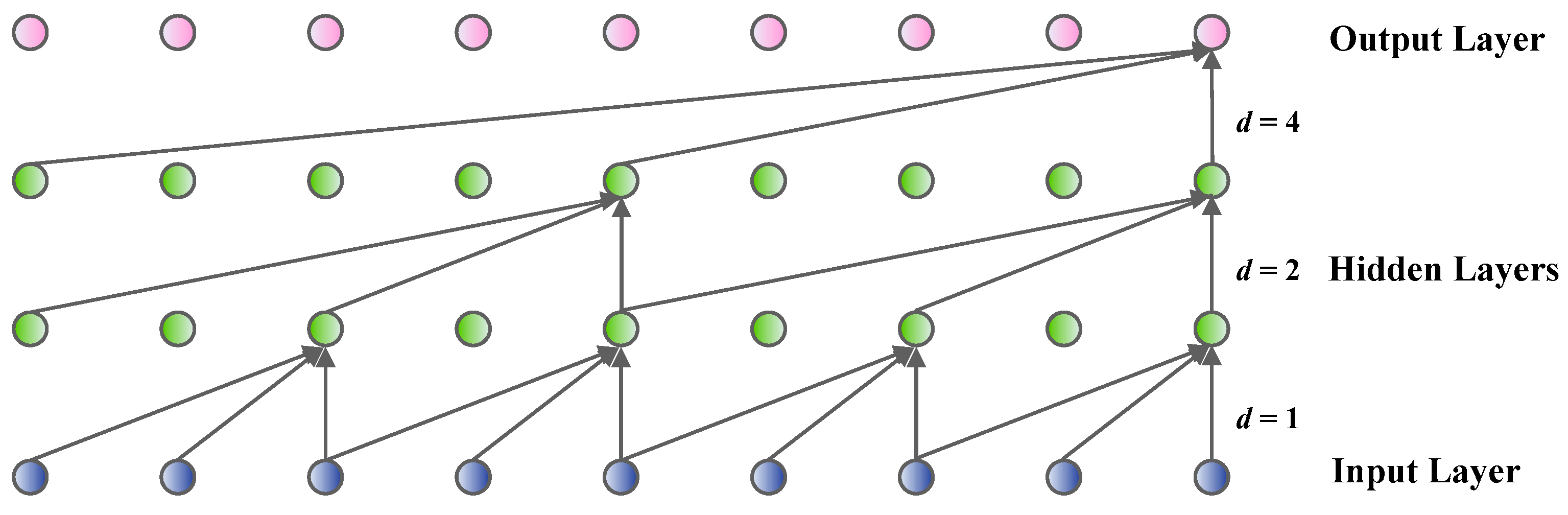
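The basic PCA decomposition named in this section can be sketched with the nomenclature symbols S (covariance), D (diagonal matrix), T (score matrix) and E (residual matrix). This is a minimal NumPy sketch of textbook PCA, not the authors' code. The column-wise z-score standardization, the retained-component count `k` and the function name `pca_decompose` are assumptions.

```python
import numpy as np

def pca_decompose(X: np.ndarray, k: int):
    """Basic PCA of X (n samples x m variables), keeping k leading vectors.

    Assumption: X is z-score standardized column-wise before decomposition.
    Returns the standardized data Xs, score matrix T, loading matrix P,
    diagonal eigenvalue matrix D and residual matrix E (Xs = T P^T + E).
    """
    n = X.shape[0]
    Xs = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    S = Xs.T @ Xs / (n - 1)                    # covariance matrix S
    eigvals, eigvecs = np.linalg.eigh(S)       # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]          # reorder to descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    P = eigvecs[:, :k]                         # loading matrix (k leading vectors)
    D = np.diag(eigvals[:k])                   # retained eigenvalues on the diagonal
    T = Xs @ P                                 # score matrix (principal subspace)
    E = Xs - T @ P.T                           # residual matrix (residual subspace)
    return Xs, T, P, D, E

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))                  # hypothetical data: 200 samples, 5 variables
Xs, T, P, D, E = pca_decompose(X, k=2)
print(T.shape, E.shape)                        # (200, 2) (200, 5)
```

The variance of each score column equals the corresponding retained eigenvalue, and the residual E is orthogonal to the retained loadings.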

2.3. The Basic Temporal Convolutional Network
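The core operation of a TCN is the dilated causal convolution F(n) = sum over i = 0, ..., k-1 of q(i) * x(n - d*i), where q is a filter of size k and d is the dilation coefficient (nomenclature symbols). The sketch below is a plain-loop illustration of that formula, assuming zero padding for indices before the start of the sequence; `dilated_causal_conv` is a hypothetical name.

```python
import numpy as np

def dilated_causal_conv(x, q, d):
    """F(n) = sum_{i=0}^{k-1} q[i] * x[n - d*i], treating x[j] = 0 for j < 0."""
    x = np.asarray(x, dtype=float)
    q = np.asarray(q, dtype=float)
    k = len(q)
    pad = d * (k - 1)
    xp = np.concatenate([np.zeros(pad), x])   # left zero padding keeps the filter causal
    F = np.zeros(len(x))
    for n in range(len(x)):
        for i in range(k):
            F[n] += q[i] * xp[n + pad - d * i]  # xp index n + pad corresponds to x[n]
    return F

# With q = [1, 1] and d = 2, F(n) = x(n) + x(n - 2) -> 1, 2, 4, 6, 8, 10
F = dilated_causal_conv(np.arange(1, 7), q=[1.0, 1.0], d=2)
```

With d = 1 this reduces to an ordinary causal convolution; a larger d lets the same k taps reach further into the past, which is why TCNs typically increase d from layer to layer. Output n never depends on inputs after n.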

3. The Developed CFP-Based Feature Extraction Technique
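The nomenclature indicates that CFP combines the PCA objective with a local structure-preserving (LSP) objective through a tradeoff parameter and keeps the eigenvectors of the d largest eigenvalues. The exact objective is not reproduced in this text, so the sketch below assumes one common global-local form: maximize eta * p^T S p - (1 - eta) * p^T X^T L X p / n, where W is a k-nearest-neighbor heat-kernel similarity matrix and L = D_W - W is its Laplacian. The values of eta, k and t, the normalization, and the names `lsp_similarity` and `cfp_loadings` are illustrative assumptions; the paper's formulation may differ.

```python
import numpy as np

def lsp_similarity(X, k=5, t=1.0):
    """Assumed LSP similarity matrix W: heat kernel on k nearest neighbors."""
    sq = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)  # pairwise squared distances
    W = np.zeros_like(sq)
    for i in range(X.shape[0]):
        nbrs = np.argsort(sq[i])[1:k + 1]       # k nearest neighbors, skipping x_i itself
        W[i, nbrs] = np.exp(-sq[i, nbrs] / t)
    return np.maximum(W, W.T)                   # symmetric neighborhood relationship

def cfp_loadings(X, n_components, eta=0.5, k=5, t=1.0):
    """Assumed CFP: top eigenvectors of eta*S - (1 - eta)*Xc^T L Xc / n."""
    n = X.shape[0]
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / (n - 1)                     # global (PCA) scatter, to be maximized
    W = lsp_similarity(Xc, k, t)
    L = np.diag(W.sum(axis=1)) - W              # graph Laplacian of the LSP
    local = Xc.T @ L @ Xc / n                   # local (LSP) scatter, to be kept small
    M = eta * S - (1.0 - eta) * local
    eigvals, eigvecs = np.linalg.eigh(M)        # M is symmetric
    order = np.argsort(eigvals)[::-1][:n_components]
    return eigvecs[:, order], eigvals[order]

rng = np.random.default_rng(1)
X = rng.normal(size=(100, 4))                   # hypothetical data: 100 samples, 4 variables
P, lam = cfp_loadings(X, n_components=2, eta=0.7)
Xc = X - X.mean(axis=0)
T_cfp = Xc @ P                                  # CFP score matrix
E_cfp = Xc - T_cfp @ P.T                        # CFP residual matrix
```

Setting eta = 1 recovers the PCA subspace, while smaller eta trades global variance for preserving the local neighborhood structure.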

4. The Enhanced Attention TCN-Based Fault Diagnosis Model

4.1. The Established SCA Network

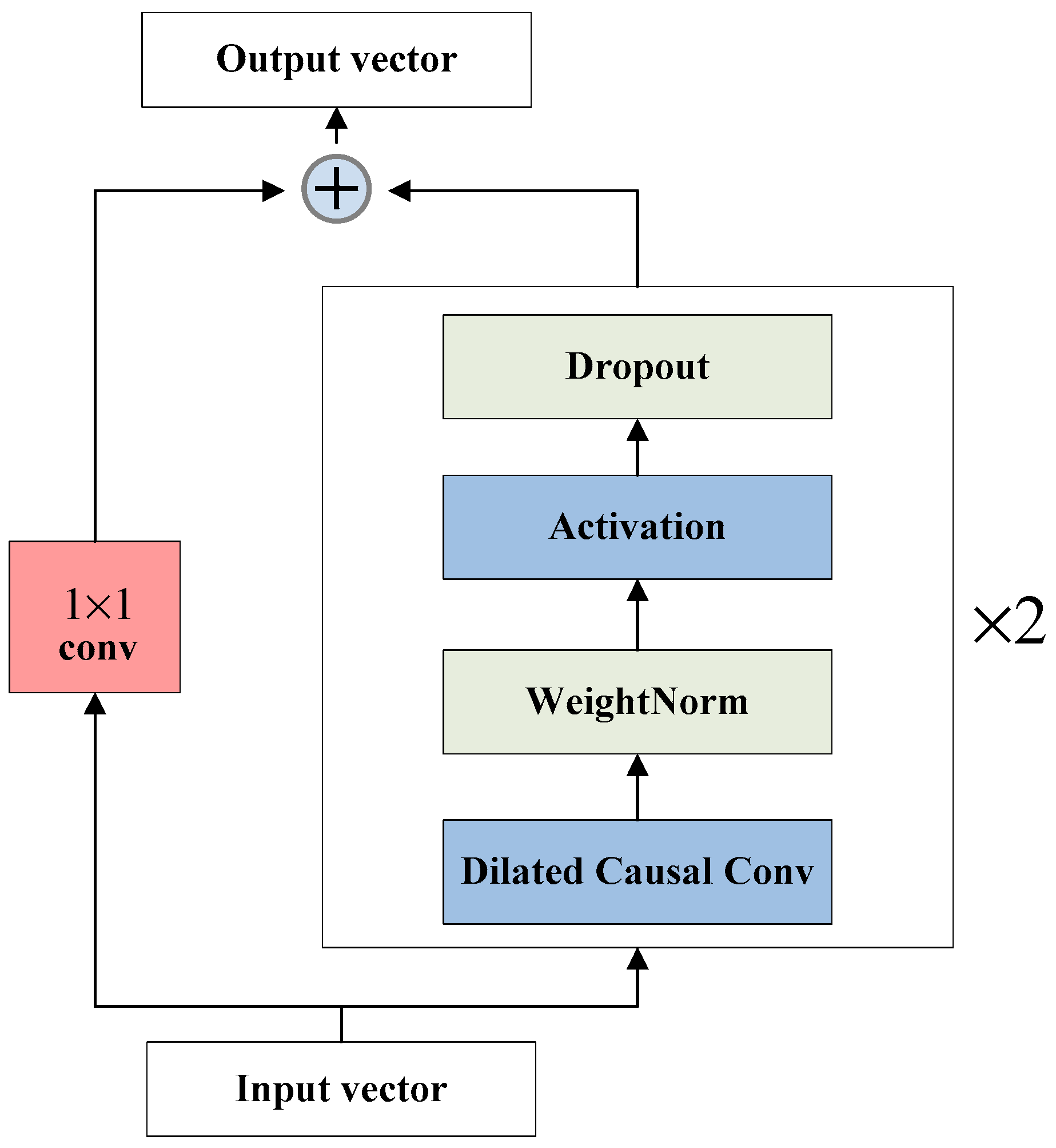
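The SCA network uses query (Q), key (K) and value (V) vectors and attention scores Fscore (nomenclature). Assuming the scaled dot-product attention of Vaswani et al. and reading "skip connection" as adding the attention input back to its output, a minimal sketch looks like this. The projection matrices `Wq`, `Wk`, `Wv` and the function names are hypothetical.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)    # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def skip_connection_attention(H, Wq, Wk, Wv):
    """H: (time steps x channels). Returns (output, Fscore)."""
    Q, K, V = H @ Wq, H @ Wk, H @ Wv           # query, key and value projections
    Fscore = softmax(Q @ K.T / np.sqrt(K.shape[1]))  # attention scores, each row sums to 1
    return Fscore @ V + H, Fscore              # assumed skip connection: add the input back

rng = np.random.default_rng(0)
H = rng.normal(size=(10, 8))                   # hypothetical TCN output: 10 steps, 8 channels
Wq, Wk = rng.normal(size=(8, 4)), rng.normal(size=(8, 4))
Wv = rng.normal(size=(8, 8))                   # value width must equal channels for the add
out, Fscore = skip_connection_attention(H, Wq, Wk, Wv)
print(out.shape, Fscore.shape)                 # (10, 8) (10, 10)
```

The skip path means that if the attention branch contributes nothing (for example, all-zero value weights), the block passes its input through unchanged.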

4.2. The Developed EATCN Fault Diagnosis Model Based on the SCA Network

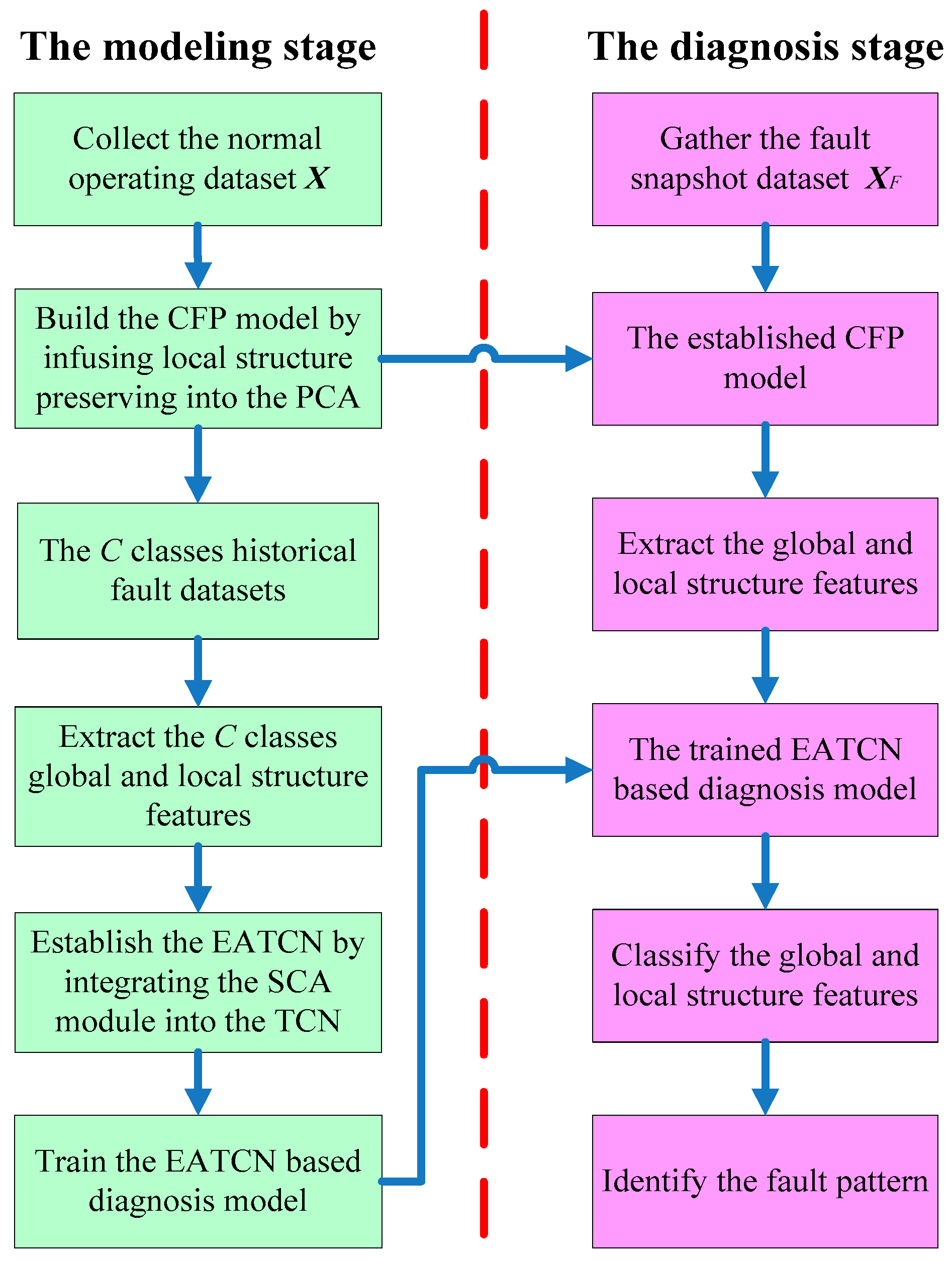
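Because the EATCN is built on the TCN of Section 2.3, the history it can see in the input sequence is set by its stacked dilated convolutions. The layer settings are not given in this text. Assuming the common TCN residual block with two dilated causal convolutions per block and dilations doubling from block to block, the receptive field can be estimated as below. `tcn_receptive_field` and the example configuration are hypothetical.

```python
def tcn_receptive_field(kernel_size, dilations, convs_per_block=2):
    """Each dilated causal conv with kernel size k and dilation d extends the
    history seen by the output by (k - 1) * d samples."""
    return 1 + convs_per_block * (kernel_size - 1) * sum(dilations)

# Hypothetical configuration: kernel size 3, dilations 1, 2, 4, 8 over four blocks
print(tcn_receptive_field(3, [1, 2, 4, 8]))       # 61
print(tcn_receptive_field(3, [1, 2, 4, 8], 1))    # 31 with one conv per block
```

Doubling the dilation makes the receptive field grow exponentially with depth while the parameter count grows only linearly.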

5. The EATCN-Based Fault Diagnosis Scheme for the Transmission Line

6. The Experiments and Comparisons

6.1. Introduction of the Experimental Data

6.2. Compared Approaches and Effectiveness Evaluation Index
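The evaluation index is the fault diagnosis rate (FDR) of each fault pattern and its average over patterns (nomenclature). Assuming the usual definition, the FDR of pattern i is the fraction of test samples of pattern i that are diagnosed as pattern i, and the average FDR is the unweighted mean over the eleven fault patterns. The function names below are hypothetical. The last lines check the averaging against the EATCN column of the eleven-pattern results table.

```python
import numpy as np

def fault_diagnosis_rates(y_true, y_pred, patterns):
    """FDR of each pattern: share of its samples diagnosed as that pattern.
    Assumes every pattern in `patterns` has at least one test sample."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    return {p: float(np.mean(y_pred[y_true == p] == p)) for p in patterns}

def average_fdr(fdr):
    """Unweighted mean of the per-pattern FDRs."""
    return float(np.mean(list(fdr.values())))

# EATCN column of the results table (AG ... ABCG), in percent
eatcn = [93.25, 95.00, 96.25, 94.75, 92.50, 93.75, 94.25, 95.50, 98.75, 93.50, 97.25]
print(round(float(np.mean(eatcn)), 2))   # 94.98
```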

6.3. Comparison of the Fault Diagnosis Results

- (1) Comparison of fault diagnosis results for pattern ABC
- (2) Comparison of fault diagnosis results for pattern BC
- (3) Comparison of fault diagnosis results for pattern ABG
- (4) Comparison of fault diagnosis results for all eleven fault patterns

6.4. Fault Diagnosis Effects of the Proposed EATCN under Different Noise Environments
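The noise tables list variances of 0.1, 0.01, 0.001 and 0.0001. Assuming the noise is additive zero-mean white Gaussian noise applied to the measurement data (this text does not say which signals are corrupted or at which stage), noisy test sets could be generated as follows. `add_gaussian_noise` and the six-channel layout are hypothetical.

```python
import numpy as np

def add_gaussian_noise(X, variance, seed=None):
    """Add zero-mean white Gaussian noise with the given variance to every entry of X."""
    rng = np.random.default_rng(seed)
    return X + rng.normal(loc=0.0, scale=np.sqrt(variance), size=X.shape)

X = np.zeros((20000, 6))   # hypothetical clean signals: 20000 samples x 6 channels
for var in (0.1, 0.01, 0.001, 0.0001):
    noisy = add_gaussian_noise(X, var, seed=0)
    print(var, round(float(noisy.var()), 5))   # sample variance close to the target
```

Note that `scale` in NumPy's normal sampler is the standard deviation, so the variance must be square-rooted.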

7. Conclusions

Author Contributions

Funding

Conflicts of Interest

Nomenclature

| Abbreviation/Symbol | Description |
|---|---|
| PCA | principal component analysis |
| LSP | local structure-preserving |
| CFP | comprehensive feature preserving |
| TCN | temporal convolutional network |
| SCA | skip connection attention |
| EATCN | enhanced feature extraction-based attention TCN |
| CNN | convolutional neural network |
| LSTM | long short-term memory |
| VRF | variable refrigerant flow |
| LG | line to ground |
| LL | double lines (line to line) |
| LLG | double lines to ground |
| LLL | triple lines |
| LLLG | triple lines to ground |
| PC | principal component |
| GRU | gated recurrent unit |
| ReLU | rectified linear unit |
| ATCN | attention temporal convolutional network |
| NF | no fault (normal operation) |
| AG | short-circuit fault of line A to ground |
| BG | short-circuit fault of line B to ground |
| CG | short-circuit fault of line C to ground |
| AB | short-circuit fault of line A to line B |
| BC | short-circuit fault of line B to line C |
| AC | short-circuit fault of line A to line C |
| ABG | short-circuit fault of lines A and B to ground |
| BCG | short-circuit fault of lines B and C to ground |
| ACG | short-circuit fault of lines A and C to ground |
| ABC | short-circuit fault of lines A, B and C |
| ABCG | short-circuit fault of lines A, B and C to ground |
| SVM | support vector machine |
| DBN | deep belief network |
| X | original high-dimensional dataset |
| | the i-th sample of the matrix X |
| | mean value of the samples |
| | nearest neighbors of the |
| | local neighborhood dataset subset of the |
| | number of samples |
| | number of measured variables |
| S | covariance matrix of the dataset in the PCA |
| D | diagonal matrix of the PCA |
| | loading matrix of the PCA |
| T | score matrix of the PCA |
| | the i-th score vector of the matrix T |
| | number of retained leading vectors of the PCA |
| E | residual matrix in the PCA residual space |
| F(n) | convolution result for the n-th element of the input vector |
| | input vector of the TCN |
| q | filter of size k |
| d | dilation coefficient |
| p | loading vector of the CFP |
| W | similarity matrix of the LSP |
| | element of the similarity matrix W |
| | neighborhood relationship between the samples and |
| | Laplacian matrix of the LSP |
| | objective function of the PCA |
| | objective function of the LSP |
| | objective function of the CFP |
| | tradeoff parameter of the CFP |
| | first d largest eigenvalues of the CFP |
| | eigenvectors of related to in the CFP |
| P | loading vector of the CFP |
| | score matrix of the CFP |
| | residual matrix of the CFP |
| | fault sample |
| | latent significant features |
| | snapshot dataset |
| V | value vector of the SCA |
| K | key vector of the SCA |
| Q | query vector of the SCA |
| Fscore | attention scores of the SCA |
| | fault diagnosis rate of the i-th fault pattern |
| | average fault diagnosis rate |


| Number | Fault Pattern | Fault Description |
|---|---|---|
| 0 | NF | No fault (normal operation) |
| 1 | AG | Short-circuit fault of line A to ground |
| 2 | BG | Short-circuit fault of line B to ground |
| 3 | CG | Short-circuit fault of line C to ground |
| 4 | AB | Short-circuit fault of line A to line B |
| 5 | BC | Short-circuit fault of line B to line C |
| 6 | AC | Short-circuit fault of line A to line C |
| 7 | ABG | Short-circuit fault of lines A and B to ground |
| 8 | BCG | Short-circuit fault of lines B and C to ground |
| 9 | ACG | Short-circuit fault of lines A and C to ground |
| 10 | ABC | Short-circuit fault of lines A, B and C |
| 11 | ABCG | Short-circuit fault of lines A, B and C to ground |

| Fault Pattern | SVM | DBN | LSTM | EATCN |
|---|---|---|---|---|
| AG | 75.50% | 82.75% | 86.50% | 93.25% |
| BG | 81.50% | 85.00% | 87.25% | 95.00% |
| CG | 61.75% | 82.00% | 90.75% | 96.25% |
| AB | 70.50% | 79.25% | 85.50% | 94.75% |
| BC | 76.50% | 70.75% | 81.75% | 92.50% |
| AC | 72.25% | 80.00% | 85.50% | 93.75% |
| ABG | 71.50% | 83.50% | 87.25% | 94.25% |
| BCG | 75.75% | 81.75% | 88.75% | 95.50% |
| ACG | 81.00% | 87.25% | 91.50% | 98.75% |
| ABC | 64.25% | 71.75% | 79.00% | 93.50% |
| ABCG | 80.00% | 84.25% | 89.25% | 97.25% |
| FDR_average | 73.68% | 80.75% | 86.64% | 94.98% |

| Fault Pattern | SVM | DBN | LSTM | EATCN |
|---|---|---|---|---|
| AG | 66.96% | 82.34% | 86.50% | 92.33% |
| BG | 74.94% | 79.63% | 89.26% | 96.69% |
| CG | 68.04% | 78.28% | 87.47% | 95.06% |
| AB | 75.40% | 81.28% | 85.71% | 93.81% |
| BC | 72.51% | 77.53% | 86.97% | 99.20% |
| AC | 76.46% | 79.21% | 88.60% | 95.18% |
| ABG | 73.71% | 79.71% | 85.54% | 93.55% |
| BCG | 74.45% | 82.78% | 84.12% | 95.98% |
| ACG | 82.03% | 89.95% | 84.92% | 94.27% |
| ABC | 71.39% | 79.06% | 87.78% | 93.97% |
| ABCG | 74.94% | 78.74% | 86.65% | 95.11% |
| P_average | 73.71% | 80.77% | 86.68% | 95.01% |

| Fault Pattern | Variance (0.1) | Variance (0.01) | Variance (0.001) | Variance (0.0001) |
|---|---|---|---|---|
| AG | 90.50% | 93.25% | 94.50% | 95.25% |
| BG | 91.25% | 95.00% | 97.25% | 98.00% |
| CG | 92.75% | 96.25% | 98.25% | 98.75% |
| AB | 90.75% | 94.75% | 96.00% | 96.75% |
| BC | 91.00% | 92.50% | 93.75% | 94.50% |
| AC | 90.25% | 93.75% | 95.50% | 96.25% |
| ABG | 91.50% | 94.25% | 96.25% | 97.00% |
| BCG | 93.50% | 95.50% | 97.50% | 98.25% |
| ACG | 98.00% | 98.75% | 100.00% | 100.00% |
| ABC | 89.50% | 93.50% | 95.00% | 96.00% |
| ABCG | 95.50% | 97.25% | 98.00% | 98.50% |
| FDR_average | 92.23% | 94.98% | 96.55% | 97.20% |

| Fault Pattern | Variance (0.1) | Variance (0.01) | Variance (0.001) | Variance (0.0001) |
|---|---|---|---|---|
| AG | 89.80% | 92.33% | 93.32% | 94.52% |
| BG | 93.29% | 96.69% | 97.58% | 98.36% |
| CG | 90.97% | 95.06% | 96.79% | 97.53% |
| AB | 92.51% | 93.81% | 95.56% | 96.27% |
| BC | 96.23% | 99.20% | 99.50% | 100.00% |
| AC | 93.07% | 95.18% | 96.74% | 97.68% |
| ABG | 91.83% | 93.55% | 95.98% | 96.39% |
| BCG | 94.42% | 95.98% | 97.70% | 98.48% |
| ACG | 91.46% | 94.27% | 97.26% | 98.36% |
| ABC | 92.08% | 93.97% | 96.53% | 97.38% |
| ABCG | 93.00% | 95.11% | 96.85% | 98.13% |
| P_average | 92.61% | 95.01% | 96.71% | 97.55% |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


E, G.; Gao, H.; Lu, Y.; Zheng, X.; Ding, X.; Yang, Y. A Novel Attention Temporal Convolutional Network for Transmission Line Fault Diagnosis via Comprehensive Feature Extraction. Energies 2023, 16, 7105. https://doi.org/10.3390/en16207105
