4.1. Dynamic State Estimation

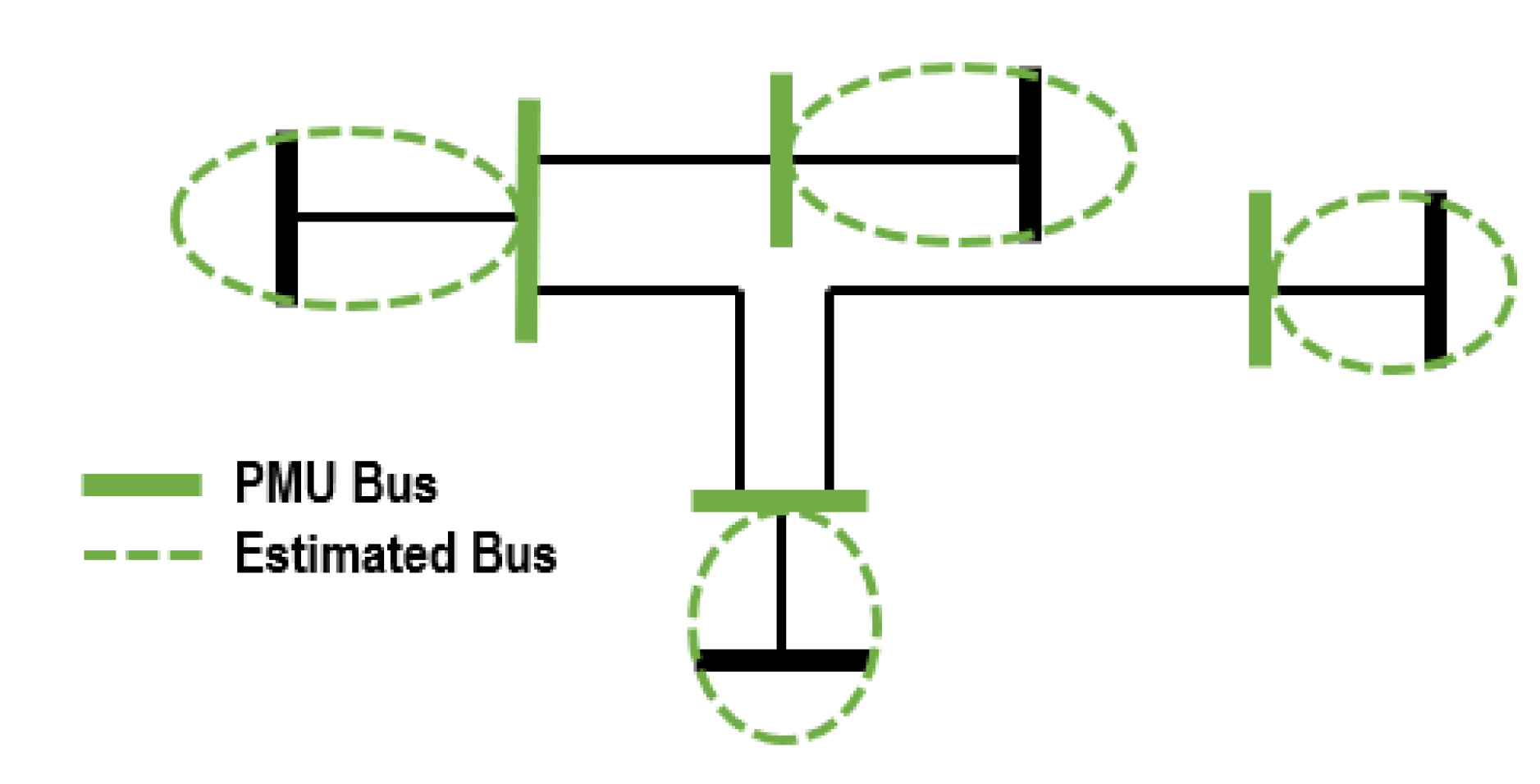

One of the first challenges encountered by researchers in this area was a lack of system observability due to low PMU penetration. By leveraging the electrical relationships of the buses in a network along with techniques originally developed for SSE, PMU-observability can be achieved without having to install PMUs at every bus in a system [8]. These ideas are illustrated in Figure 3, where not every bus is equipped with a PMU, yet the algorithm is capable of providing dynamic estimates for all buses in the system.

PMU-observability can be achieved via algorithms based on graph theory that take into account the electrical relationships, as well as the topology of the system [9,10]. Each year, the typical utility company receives a fixed amount of funding for PMU installation. This results in a fixed number of PMUs being added to the grid annually. These new PMUs must be installed at key locations to maximize technical and financial gains. When constraints such as the cost of installation or the availability of measurements are considered, the PMU placement problem can be approached as an optimization problem [11]. In [12], optimal PMU placement is studied under multiple contingency conditions such as line outages and loss of measurements. Robust PMU placement via mutual information (MI) is investigated in [13]. This approach takes into consideration uncertainties such as a reduced number of states and PMU outages, as well as financial constraints. Financial constraints are once again considered in a Bayesian-estimation-based technique introduced in [14]. The solution presented in [15] attempts to strike a balance between estimation accuracy and cost. Additional constraints, such as redundancy for critical buses, prohibited substations, the handling of existing PMUs, and others, can be integrated into the optimization algorithm in order to meet certain design criteria. For the remainder of this review, all systems will be assumed to be PMU-observable, unless stated otherwise.
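The placement problem described above can be illustrated with a minimal sketch. It is a simple greedy heuristic, not the method of any cited reference, and it assumes a simplified observability rule in which a PMU at a bus observes that bus and every directly connected bus. The 7-bus network and the function name `greedy_pmu_placement` are hypothetical.

```python
def greedy_pmu_placement(adjacency):
    """Greedy heuristic: repeatedly place a PMU at the bus that makes the
    most still-unobserved buses observable.

    Assumption (illustrative rule only): a PMU at a bus observes that bus
    and every bus directly connected to it.
    """
    unobserved = set(adjacency)
    placed = []
    while unobserved:
        # Pick the bus whose neighbourhood covers the most unobserved buses;
        # ties go to the smaller bus number so the result is deterministic.
        best = max(
            sorted(adjacency),
            key=lambda bus: len(({bus} | set(adjacency[bus])) & unobserved),
        )
        placed.append(best)
        unobserved -= {best} | set(adjacency[best])
    return placed


# Hypothetical 7-bus network, given as bus -> list of neighbouring buses.
network = {
    1: [2],
    2: [1, 3, 6, 7],
    3: [2, 4, 6],
    4: [3, 5, 7],
    5: [4],
    6: [2, 3],
    7: [2, 4],
}
placement = greedy_pmu_placement(network)
print(placement)  # [2, 4]
```

A greedy search does not guarantee the minimum number of PMUs in general; cost, redundancy, or prohibited-substation constraints would typically be handled by a formal optimization model instead.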

DSE frameworks share a common foundation: the Kalman filter (KF) [16]. A complete derivation of the KF can be found in [17]. Over time, variations or extensions of the KF have been proposed to overcome the limitations of the original formulation [16]. In [18], a variation of the extended Kalman filter (EKF) is introduced to improve the performance of the filter in cases where a full set of input measurements is not available. An extension developed to improve the ability of the EKF to track transients and disturbances is presented in [19]. An estimator based on the unscented Kalman filter (UKF) is used in [20] to streamline the estimation process, while in [21], a decentralized scheme based on the UKF is investigated.
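For readers unfamiliar with the KF, the predict/update cycle shared by these variants can be sketched for the simplest possible case. The scalar random-walk model, the noise variances, and the measurement values below are assumptions chosen for illustration; they are not taken from any cited work.

```python
def kalman_step(x_est, p_est, z, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    Assumed model (illustrative only): random-walk state x_k = x_{k-1} + w,
    direct measurement z_k = x_k + v, with process-noise variance q and
    measurement-noise variance r.
    """
    # Predict: the state is carried over and its uncertainty grows by q.
    x_pred = x_est
    p_pred = p_est + q
    # Update: blend prediction and measurement using the Kalman gain.
    gain = p_pred / (p_pred + r)
    x_new = x_pred + gain * (z - x_pred)
    p_new = (1.0 - gain) * p_pred
    return x_new, p_new


# Hypothetical per-unit voltage magnitude readings from one PMU channel.
measurements = [1.02, 0.98, 1.01, 0.99, 1.00]
x, p = 0.0, 1.0  # deliberately poor initial guess with a large variance
for z in measurements:
    x, p = kalman_step(x, p, z, q=1e-5, r=1e-2)
print(round(x, 3), round(p, 5))
```

The EKF and UKF replace the linear prediction and measurement models with linearized or sigma-point approximations, but keep the same two-stage structure.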

The work in [22] presents a KF-based technique for systems containing uncertainties due to noise, saturation, or transients. The idea is to utilize a tuning parameter to ensure that the covariance matrix remains positive definite. Simulations produced encouraging results as this technique outperformed other similar KF-based estimation solutions. Being able to deal with uncertainties related to data is a significant contribution that increases the robustness and the flexibility of DSE.

The work presented in [23] tackles the challenges that KF-based DSE encounters when estimating larger power systems. Classical KF-based algorithms struggle to overcome uncertainties and nonlinearities present in the state space model of larger power systems, which can lead these filters to become unstable. The unscented Kalman filter (UKF) is used as the foundation of this method. The UKF is reinforced by improving its numerical stability through modifications that guarantee key matrices remain positive semidefinite even in the presence of nonlinear events. This introduces an algorithm that replaces such a matrix with the nearest symmetric positive semidefinite matrix in Frobenius norm whenever it is no longer positive semidefinite. The new matrix is found through a modified version of Dykstra’s projection algorithm [24]. Positive definiteness is enforced by replacing negative eigenvalues with their positive equivalent. This solution performed well in some of the testing scenarios; unfortunately, it did not maintain a consistent level of performance across a variety of system topologies. It must be noted that this novel approach did not display the adaptability of more established techniques such as [25,26].
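The idea of repairing a matrix that has lost positive semidefiniteness can be sketched in closed form for a symmetric 2x2 matrix. This is not the modified Dykstra iteration of [23,24]. It uses the standard result that setting the negative eigenvalues of a symmetric matrix to zero gives the nearest positive semidefinite matrix in Frobenius norm. The function name and the example matrix are hypothetical.

```python
import math


def nearest_psd_2x2(a, b, c):
    """Project the symmetric matrix [[a, b], [b, c]] onto the PSD cone.

    Setting negative eigenvalues to zero yields the nearest positive
    semidefinite matrix in Frobenius norm. This is a closed-form 2x2
    illustration, not the iterative projection used in the cited work.
    """
    mean = (a + c) / 2.0
    radius = math.hypot((a - c) / 2.0, b)
    eigenvalues = (mean + radius, mean - radius)
    # Rotation angle of the eigenvector belonging to the larger eigenvalue.
    theta = 0.5 * math.atan2(2.0 * b, a - c)
    vectors = ((math.cos(theta), math.sin(theta)),
               (-math.sin(theta), math.cos(theta)))
    result = [[0.0, 0.0], [0.0, 0.0]]
    for lam, (vx, vy) in zip(eigenvalues, vectors):
        lam = max(lam, 0.0)  # clip negative eigenvalues to zero
        result[0][0] += lam * vx * vx
        result[0][1] += lam * vx * vy
        result[1][0] += lam * vy * vx
        result[1][1] += lam * vy * vy
    return result


# Hypothetical indefinite matrix with eigenvalues 3 and -1.
repaired = nearest_psd_2x2(1.0, 2.0, 1.0)
print(repaired)
```

For this input every entry of the repaired matrix is approximately 1.5, since only the component along the eigenvector of eigenvalue 3 survives.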

An important aspect of DSE is the detection of abnormal data. Some of the classical bad-data detection techniques inherited from traditional SSE, such as residual analysis, can be applied to DSE, as seen in [27,28]. However, contemporary solutions are moving in a different direction as new frameworks are being adopted. In [29], a unified PMU placement and bad-data detection solution is proposed. First, PMU locations are optimized, then abnormal data are detected by analyzing the linear independence of the Jacobian matrix. In [30], Lagrange multipliers are used to identify errors in WLS estimations. Erroneous values are identified and removed via mixed-integer programming (MIP) in [31]. An estimator based on the least absolute value (LAV) estimator [32] is presented in [33]. This technique offers advantages in terms of efficiency as LAV can handle both estimation and bad-data detection in a single module. Detrended fluctuation analysis (DFA) is used to develop a novel bad-data detection scheme for dynamic estimation in [34].
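The robustness that makes LAV attractive for bad-data handling can be seen in a deliberately small special case: repeated direct measurements of a single quantity. There, the equal-weight least-squares estimate is the mean and the LAV estimate is the median. The readings below are hypothetical, and the sketch is not the estimator of [33].

```python
import statistics

# Hypothetical repeated measurements of one voltage magnitude (per unit);
# the fourth value is a gross error injected for illustration.
readings = [1.01, 0.99, 1.00, 1.60, 1.02]

# Least squares with equal weights: minimises sum((z - x)**2) -> mean.
ls_estimate = statistics.mean(readings)
# Least absolute value: minimises sum(abs(z - x)) -> median.
lav_estimate = statistics.median(readings)

# Residuals against the LAV estimate expose the corrupted reading.
residuals = [abs(z - lav_estimate) for z in readings]
suspect_index = residuals.index(max(residuals))

print(ls_estimate, lav_estimate, suspect_index)
```

The least-squares estimate is pulled toward the bad reading, while the LAV estimate stays at 1.01 and its largest residual points directly at the corrupted sample (index 3).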

A decentralized solution for abnormal data detection in synchrophasor measurements is developed in [35]. In this approach, several detector types are used in unison. The first is a detector based on linear regression, the second detector is a variation of Chebyshev’s inequality, and the third detector is based on spatial clustering. At the global level, another layer of detection is used, this one based on Bayesian estimation. This technique was shown to be effective during testing, performing reliably across a series of tests. This is a data-driven solution, and therefore benefits from a high degree of generality. Another highlight of this technique is its use of Bayesian estimation and a distributed architecture, two very promising methods that studies have only recently begun to use. Finally, while the scope of [35] is limited to abnormal data detection, it has the potential to be a standalone solution if expanded accordingly.

In [36], a decentralized approach based on graph theory is presented. The system is broken into a series of observable islands. The number of PMUs is found via optimization algorithms while graph theory ensures observability.

Whether it is dynamic, static, or integrated (covered in the following section) estimation, the integrity and the quality of measurements used by the estimators remain a key issue. For these techniques to flourish, their results must be robust in the presence of signal quality issues; this topic is covered in greater detail in [37,38,39,40]. In [41], quality issues stemming from GPS errors, latency, scaling, and noise are investigated and mitigation solutions are presented. Redundancy between PMU measurements and linear estimators is used to identify abnormalities. The rank of the Jacobian matrix is then used to derive correction factors.

The subject of uncertainty in measurements is an important aspect of estimation, and one that has the potential to generate system-wide errors. Uncertainties come from multiple sources, including latency, discrepancies in system parameters, and instrument inaccuracies [42]. In [43], uncertainty propagation theory is used to analyze the spread of errors in WLS estimators and how the results are shaped by these uncertainties. In this method, lower and upper error bounds are established for the outputs of the estimator, and errors are mitigated by adjusting the weights of suspected measurements. The impact of buffering times on estimates is studied in [44]. This work considers a system where PMU measurements are generated at different rates. A solution based on a memory buffer is proposed. At the memory buffer, data are primed before being fed to the estimator. This priming includes the determination of weighting factors. This method is complemented by an optimization routine that is used to find efficient buffer lengths. In [45], fuzzy set theory and Markov models are used to develop reliability indices to identify PMUs and estimates that are vulnerable to uncertainties.

More recently, in [46], techniques for the characterization and quantification of noise in PMU measurements were presented. The goal of these techniques is to facilitate the mitigation of estimation errors due to noise and uncertainties. In [47], π-model approximations are extended to identify and correct estimation errors due to uncertainties in line and transformer parameters. A root mean square error (RMSE) is used to track the performance of the estimator. The validity of uncertainty propagation theory in transmission line estimates is examined in [48]. Results indicate that when both voltage and current measurements corresponding to a node are used, the accuracy of the theoretical models is significantly improved. The widely used Gaussian assumption is studied in [49], with results indicating the assumption might be flawed. The impact of uncertainty on fault location was investigated in [50]. Discrepancies in transmission line parameters are mitigated via stochastic models and maximum likelihood estimators. The performance of PMU placement strategies in the face of uncertainties is studied in [51]. A variation of the nondominated sorting genetic algorithm II (NSGA-II) is presented to alleviate the effects of uncertainty while keeping costs to a minimum. Finally, extensions of the KF have been developed for the purpose of increasing the resilience of dynamic estimators in the presence of uncertainties, as shown in [22,52,53].

Trends: The Kalman filter and its extensions are the most prevalent estimators used in contemporary dynamic estimation. With regard to bad-data detection, there is no clear go-to technique, but the trend appears to be shifting away from traditional residual analysis as more holistic approaches are being investigated. Most techniques include a PMU placement module; in this regard, the trend is to make the system observable with the lowest number of PMUs possible.

Gaps: As mentioned above, PMU-observability is pivotal. Graph theory and optimization tools are being used to address this gap. Other significant obstacles in DSE are the possible limitations in terms of communication network infrastructure. Decentralized and distributed approaches are being evaluated to overcome this challenge. This topic is covered in greater detail in Section 6.4. Key paper contributions in this topic are presented in Table 1.

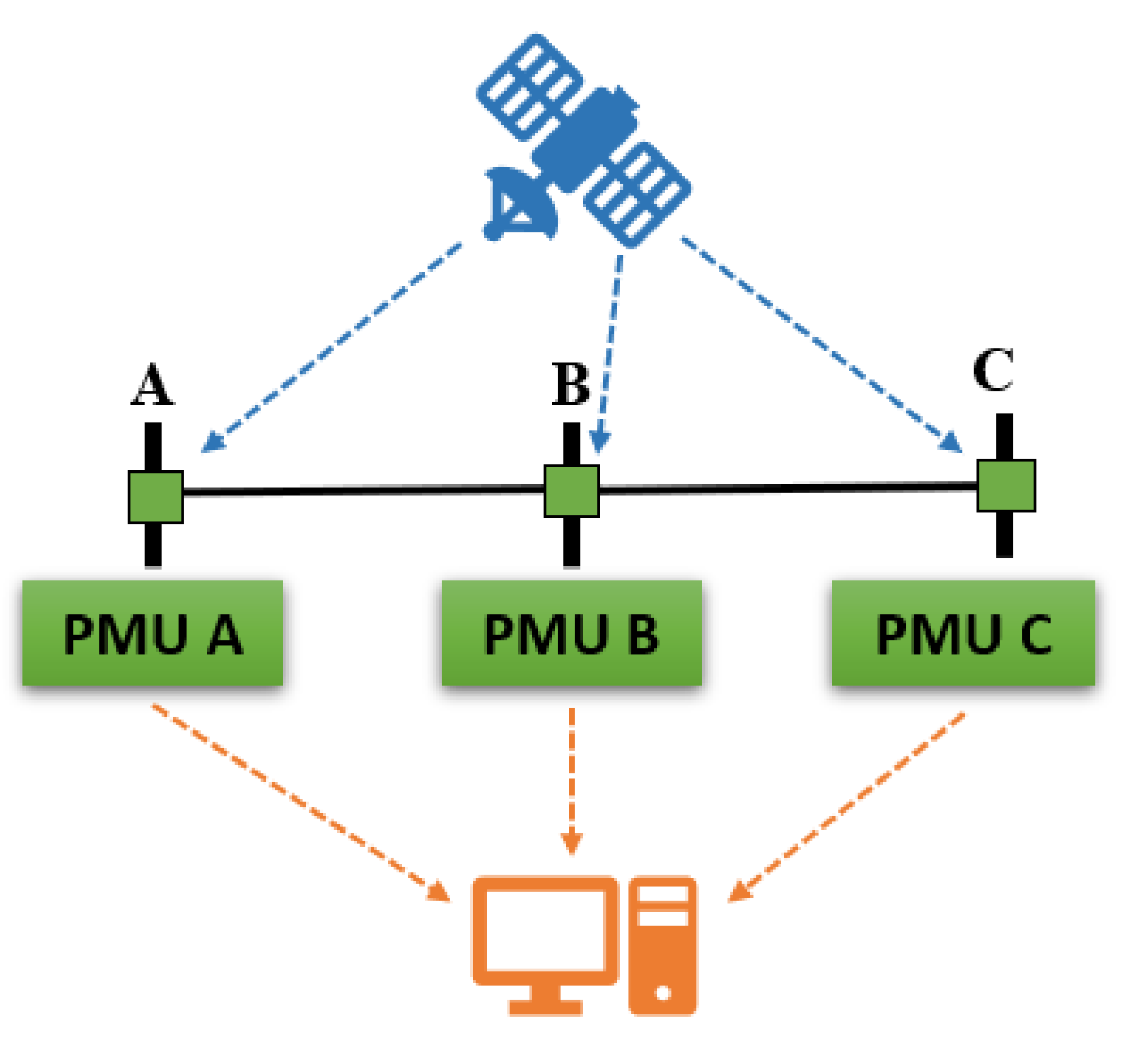

4.2. Integrated Estimation

As previously mentioned, few systems can currently support full DSE. Research has been carried out attempting to leverage the technology currently deployed in the grid to close the gap between SSE and DSE. The techniques presented in this section leverage smart meters (SM), SCADA, and other sources of data in the grid to make sections of the grid PMU-observable. Figure 4 illustrates an arrangement where multiple meter types are used for estimation.

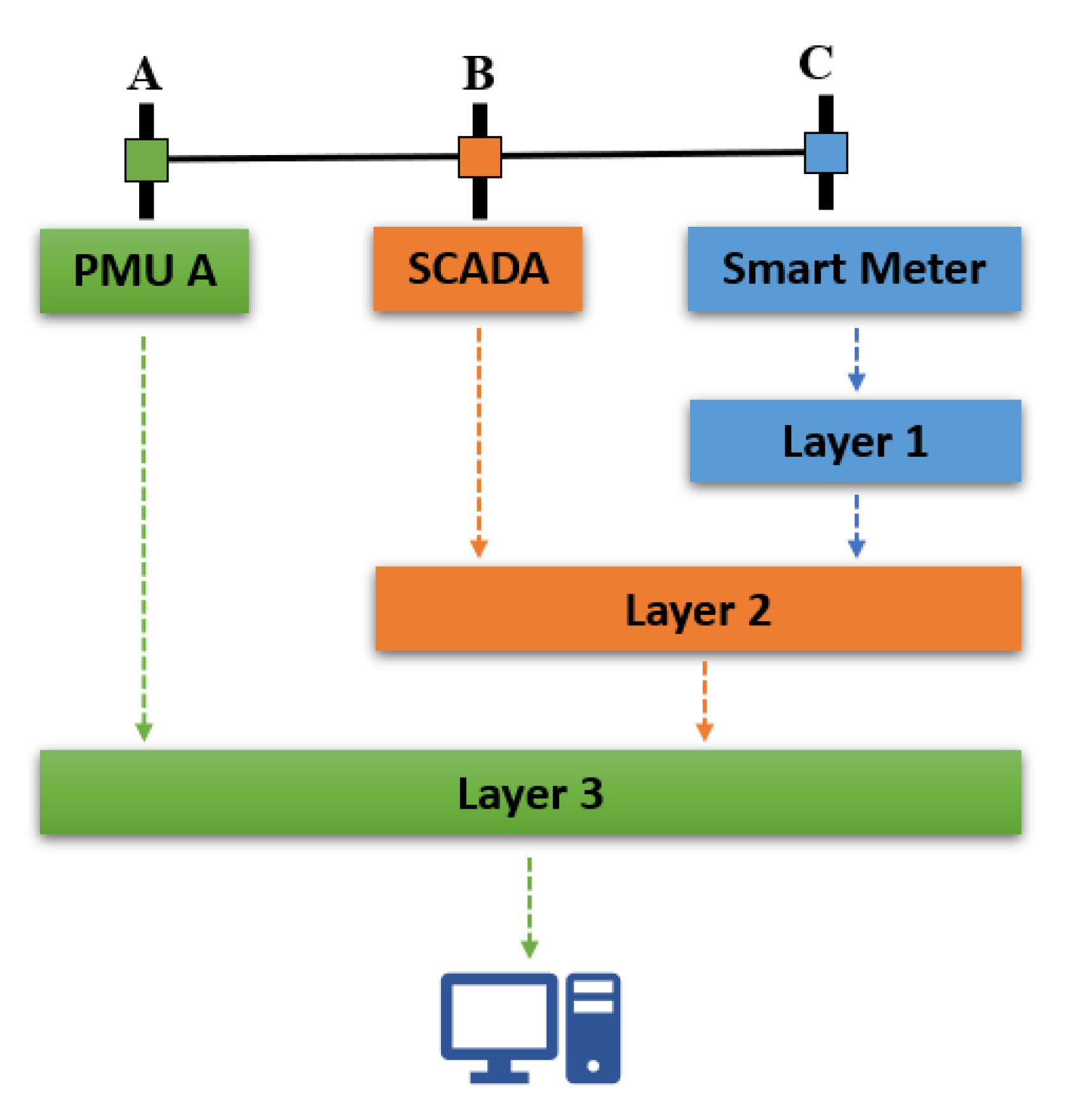

Bayesian estimation is at the core of these techniques, which represents a significant paradigm shift in SE, as algorithms such as WLS and KF-based methods remain the most widely utilized techniques in SSE and DSE, respectively. This work refers to these techniques as integrated state estimation (ISE). These techniques follow a layered approach in which measurements are processed separately based on sampling rate. The outputs of the slower layers are used to augment the PMU layer, which is the fastest layer processing data in real time [54]. This arrangement is illustrated in Figure 5.

In [55], Bayesian inference is employed to estimate the probability distribution of a given set of measurements. This process can have a varying degree of complexity depending on the properties of the dataset and the assumptions that are made. Ref. [55] leverages NNs to carry out the process of retrieving the corresponding probability distributions from historical power-flow measurements. Once the probability distributions are established, another NN is then used to emulate a minimum mean-squared error (MMSE) estimator for real-time estimation. The goal of the technique is to minimize the mean-squared error of the state estimates.
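For reference, the standard textbook form of the MMSE criterion can be written as follows. The symbols are assumed notation rather than that of [55]: x denotes the state vector, z the measurement vector, and x̂(z) the estimator.

```latex
\hat{x}_{\mathrm{MMSE}}(z)
  = \arg\min_{\hat{x}(\cdot)} \;
    \mathbb{E}\!\left[ \lVert x - \hat{x}(z) \rVert_2^2 \right]
  = \mathbb{E}\left[ x \mid z \right]
```

The second equality is the well-known result that the conditional mean of the state given the measurements minimizes the mean-squared error, which is why knowledge of the underlying probability distributions is central to this family of methods.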

Data anomalies are detected using likelihood ratios, and when an anomaly is detected the erroneous measurement is replaced by the mean of the prior distribution. One significant advantage of this approach over other, more popular, estimation procedures is that Bayesian estimation performs well in systems with low or no observability. Probability distributions and Bayesian estimation produce estimates comparable to pseudo measurements. In the case of [55], the technique seemed to outperform classical approaches in terms of accuracy and resolution. The main obstacle of the technique is obtaining the appropriate probability distributions to make the MMSE estimator produce accurate results.

The framework presented in [55] is expanded in [56] to incorporate measurements from meters with different sampling rates. In a layered approach, measurements from meters with lower sampling rates are included in the calculation of the probability distributions. Online estimation, which is performed via an MMSE estimator, utilizes measurements from the meters with the highest sampling rates. A second extension was presented in [57] to enable PMU-observability in an approach that produces quasi-dynamic estimates. The highlight of this technique is that it produces results comparable to the results produced by contemporary state estimators (in some cases outperforming them), while utilizing techniques that are not widely used in SE. While extremely promising, this approach is not the most accessible for the average researcher as it requires specialized knowledge in ML and probability theory. This technique can be improved by modifying the structure of the NNs, the number of neurons, and activation functions. The training and accuracy thresholds can be optimized to meet a particular specification. Additionally, measurements produced by other metering infrastructure could be leveraged to make the solution more robust.

In [58], smart meter and pseudo measurements are processed along with PMU and SCADA measurements as part of a Bayesian estimation solution. The principle behind this work is similar to that of [57]. A Bayesian estimator is once again used, but this time, it is a simpler maximum a posteriori (MAP) estimator, compared to the more complex MMSE in the previous technique.

As per Figure 5, the input from slower metering infrastructure is used to augment the PMU layer of the solution. The state equations developed in this solution resemble those of the Kalman filter, and they establish relationships between the different measurement layers. They involve the state at the current sampling layer, the state at the previous (slower) sampling layer, a term representing the uncertainties between the two layers, the error component at the current layer, and the measurements at the current layer. An objective function for the estimated states is developed and minimized via the modified Newton method.
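One generic Kalman-like form consistent with this description is sketched below. All symbols are assumed notation introduced here for illustration, and the actual equations of [58] may differ: the superscript (l) indexes the current sampling layer and (l-1) the previous (slower) layer, x is the state, w the uncertainty between the two layers, z the measurements, h the measurement function, and e the error component.

```latex
\begin{aligned}
x^{(l)} &= x^{(l-1)} + w^{(l)} \\
z^{(l)} &= h\!\left(x^{(l)}\right) + e^{(l)}
\end{aligned}
```

Under this reading, the slower layer supplies a prior for the faster layer in the same way that a KF prediction supplies a prior for its update step.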

Abnormal data are detected via Chebyshev’s inequality, using a probability based on a credibility interval together with P, the covariance of the posterior state. The solution produced encouraging results relative to KF and hybrid estimation algorithms. This technique emphasizes a low computational burden and the adaptability of the solution to a variety of systems. One assumption that deserves further investigation is that of modeling the probability distributions as Gaussian. Assuming that the distributions were Gaussian allowed [58] to bypass the computationally intensive step of estimating probability distributions using complex techniques such as NNs, as was carried out in [57].
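A Chebyshev-based check of this kind can be sketched for a single state variable. The sketch is a generic application of the inequality, not the detector of [58]; the posterior mean, the standard deviation, the tail probability, and the function name `chebyshev_flags` are all assumptions made for illustration.

```python
import math


def chebyshev_flags(values, mean, std, tail_probability):
    """Flag values farther than k standard deviations from the mean.

    Chebyshev's inequality bounds P(|X - mean| >= k*std) by 1/k**2 for any
    distribution with finite variance, so choosing k = 1/sqrt(p) keeps the
    false-alarm probability at or below p.
    """
    k = 1.0 / math.sqrt(tail_probability)
    return [abs(v - mean) > k * std for v in values]


# Hypothetical posterior mean and standard deviation for one state variable.
posterior_mean, posterior_std = 1.00, 0.01
incoming = [1.005, 0.990, 1.080, 1.012]
flags = chebyshev_flags(incoming, posterior_mean, posterior_std, 0.05)
print(flags)  # [False, False, True, False]
```

Because the bound holds without a distributional assumption, the threshold is conservative: with a tail probability of 0.05 it sits at roughly 4.5 standard deviations, wider than the corresponding Gaussian interval.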

Integrated estimation formulations without the use of Bayesian techniques have also been proposed. As presented in [59,60], SCADA and remote terminal unit (RTU) measurements were used to obtain pseudo measurements, which were then used in linear estimators that process PMU data in real time.

Trends: The simplest form of integrated estimation is the expansion of PMU-observability through the integration of other (slower) measurement sources, such as SCADA. The slower measurements are processed offline to obtain PMU pseudo measurements. Bayesian estimation appears to be experiencing a resurgence, as ML is being used to derive probability distributions that are then used for online estimation. Based on the results produced by works such as [57], ISE appears to have the potential to become a viable and powerful estimation platform in the near future until dynamic estimation becomes a widespread reality.

Gaps: Challenges in ISE can be attributed to the complexity involved in acquiring probability distributions for Bayesian-based ISE.

Key paper contributions in this topic are presented in Table 2.

4.3. Disturbance Detection

Solutions in the disturbance detection group aim to enhance the situational awareness of the grid. Events, such as switching and loading changes, and disturbances, such as faults, are monitored and reported via holistic solutions. For example, in [61], big data is analyzed to detect disturbances at their inception, giving system operators more time to react.

In [62], μ-PMUs are assumed to be located at the ends of a feeder. After an event is detected, changes in voltage and current are calculated at both ends. These deviations are then used to determine the change in impedance at both ends of the feeder. The sign (positive or negative) of the real component of each impedance change relative to the other is used to determine whether the disturbance is internal or external. Once the location of the disturbance has been identified, sweep forward and backward voltage calculations are performed to calculate the change in voltage at every node along the feeder. Discrepancies in nodal voltages are used to pinpoint the exact location of the events. Events such as the switching of capacitor banks, high and low impedance faults, and DERs changing status were identified by this technique. The concept is relatively simple to understand and to implement; however, considerable drops in performance were experienced as more complexities such as error in measurements, marginal stability, and high fault impedances were added to the events. This solution also lacked a well-defined scope and application. Despite a few shortcomings, this technique could serve as the foundation of a more robust solution, as was done in [63], where ML techniques were used to extract additional features from data reported by PMUs. While this technique improved on the work of its predecessors, extensive training of ML algorithms represents an implementation challenge.
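The first stage of the feeder-end comparison in [62] can be sketched as follows. The phasor values and function names are hypothetical, and the sketch only reports whether the real parts of the two impedance changes share a sign; how that outcome maps to an internal or external disturbance is defined in the cited work and is not assumed here.

```python
def impedance_change(v_pre, v_post, i_pre, i_post):
    """Apparent impedance change seen by one meter: delta_V / delta_I."""
    return (v_post - v_pre) / (i_post - i_pre)


def real_parts_agree(dz_a, dz_b):
    """True when the real parts of both impedance changes share a sign."""
    return (dz_a.real > 0) == (dz_b.real > 0)


# Hypothetical pre- and post-event phasors (per unit) at the two feeder ends.
dz_head = impedance_change(
    v_pre=1.00 + 0.00j, v_post=0.95 - 0.02j,
    i_pre=0.50 - 0.10j, i_post=0.80 - 0.25j,
)
dz_tail = impedance_change(
    v_pre=0.98 - 0.03j, v_post=0.94 - 0.05j,
    i_pre=0.40 - 0.08j, i_post=0.35 - 0.06j,
)
signs_agree = real_parts_agree(dz_head, dz_tail)
print(dz_head, dz_tail, signs_agree)
```

A practical implementation would also need to guard against a near-zero current change, which makes the ratio ill-conditioned and is one way measurement error can degrade the method.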

Another data-driven method was introduced in [64]. First, in order to reduce the data burden produced by PMUs, the minimum amount of data required to monitor the system is found by changing the sampling intervals of the measurements until estimates produce errors that violate a criterion. In other words, fewer data are used until the system can no longer be recognized. Once a satisfactory sampling interval has been established, principal component analysis is used to estimate key system parameters. Density-based clustering is then used to identify data outliers and locate them. This approach presented an interesting take on PMU data processing and is an approach that does not rely on sampling at the higher end of current PMU technology. As expected, lower sampling rates had a negative impact on performance, but a balance between the capacity of the PMUs, infrastructure available, and the requirements of the user can be used to optimize the operating parameters of the program.
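The outlier step can be illustrated with a simplified, one-dimensional version of the noise criterion used in density-based clustering: a sample is treated as an outlier when too few other samples lie within a given radius. This is a sketch under assumed parameters (`eps`, `min_neighbors`) and hypothetical data, not the procedure of [64], and it omits the cluster-expansion stage of a full density-based algorithm.

```python
def density_outliers(points, eps, min_neighbors):
    """Return indices of points with fewer than min_neighbors other points
    within distance eps (the 'noise' notion of density-based clustering,
    simplified to one dimension)."""
    outliers = []
    for i, p in enumerate(points):
        neighbors = sum(
            1 for j, q in enumerate(points) if j != i and abs(p - q) <= eps
        )
        if neighbors < min_neighbors:
            outliers.append(i)
    return outliers


# Hypothetical frequency samples in Hz; index 5 is an injected anomaly.
samples = [60.00, 60.01, 59.99, 60.02, 60.00, 60.45, 59.98, 60.01]
outlier_indices = density_outliers(samples, eps=0.05, min_neighbors=2)
print(outlier_indices)  # [5]
```

The pairwise comparison costs quadratic time in the number of samples, which is one reason reducing the sampling interval first, as described above, can matter in practice.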

PMUs and NNs were utilized for load forecasting in [65]. Meanwhile, in [66], NNs were used in a novel capacity to create heatmaps that were used to monitor system stability. The rotor angles of generators connected to a network were monitored and were used as input to the NN. This technique produced interesting results and presented stability information in a novel form. Several steps in this process were of empirical nature, in particular the structure and parameters of the NN. While the solution does not revolutionize the state of the art, it brings new ideas to the table.

Efforts in the area of situational awareness are also delving into system validation and topology. These efforts leverage PMUs to monitor changes in topology in order to deliver a comprehensive view of the grid in real time. Trees were used in [67] to monitor the topology of radial systems. In [68], results from simulations were used to build a database of baseline values, which were then compared against real-time measurements. This method uses the resulting deviations as indicators of changes in topology. In [69], variations in topology were identified via μ-PMUs and power flows.
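The baseline-comparison idea can be sketched as a nearest-match search over simulated voltage profiles. The topology names, voltage values, and the squared-deviation metric are assumptions for illustration and are not taken from [68].

```python
def closest_topology(measured, baselines):
    """Return the name of the simulated baseline closest to the measured
    vector, using the sum of squared deviations as the distance."""
    def distance(name):
        return sum((m - b) ** 2 for m, b in zip(measured, baselines[name]))
    return min(baselines, key=distance)


# Hypothetical baseline bus-voltage magnitudes (per unit) from simulation.
baselines = {
    "all_lines_closed": [1.00, 0.98, 0.97],
    "line_2_3_open": [1.00, 0.99, 0.93],
}
# Hypothetical real-time measurement at the same three buses.
measured = [1.00, 0.99, 0.935]
best_match = closest_topology(measured, baselines)
print(best_match)  # line_2_3_open
```

A deployed scheme would also need a rejection threshold, so that a measurement far from every stored baseline is reported as an unknown condition rather than forced onto the nearest entry.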

Trends: The main trend in situational awareness is the development of holistic solutions that:

Track deviations or changes in the system to uncover issues early.

Facilitate the access to the true status of the system in terms of states, phasors, and topology.

This is carried out to enhance the level of knowledge of operators and the system, so that the decision-making process can be expedited. Given the diversity of the techniques used in situational awareness, it is difficult to identify a method or tool that is being widely used.

Gaps: This area seems to lack focus, or at least a well-defined goal or methodology. While some interesting ideas can be encountered in this area, presently it is hard to see any of them being implemented in the real world. One possible target of situational awareness could be to replace energy management systems (EMS) in the future.

Key paper contributions in this topic are presented in Table 3.