Unmanned Aerial Vehicles (UAV) in Precision Agriculture: Applications and Challenges

Abstract

1. Introduction

2. Precision Agriculture

3. Types of Unmanned Aerial Vehicle (UAV)

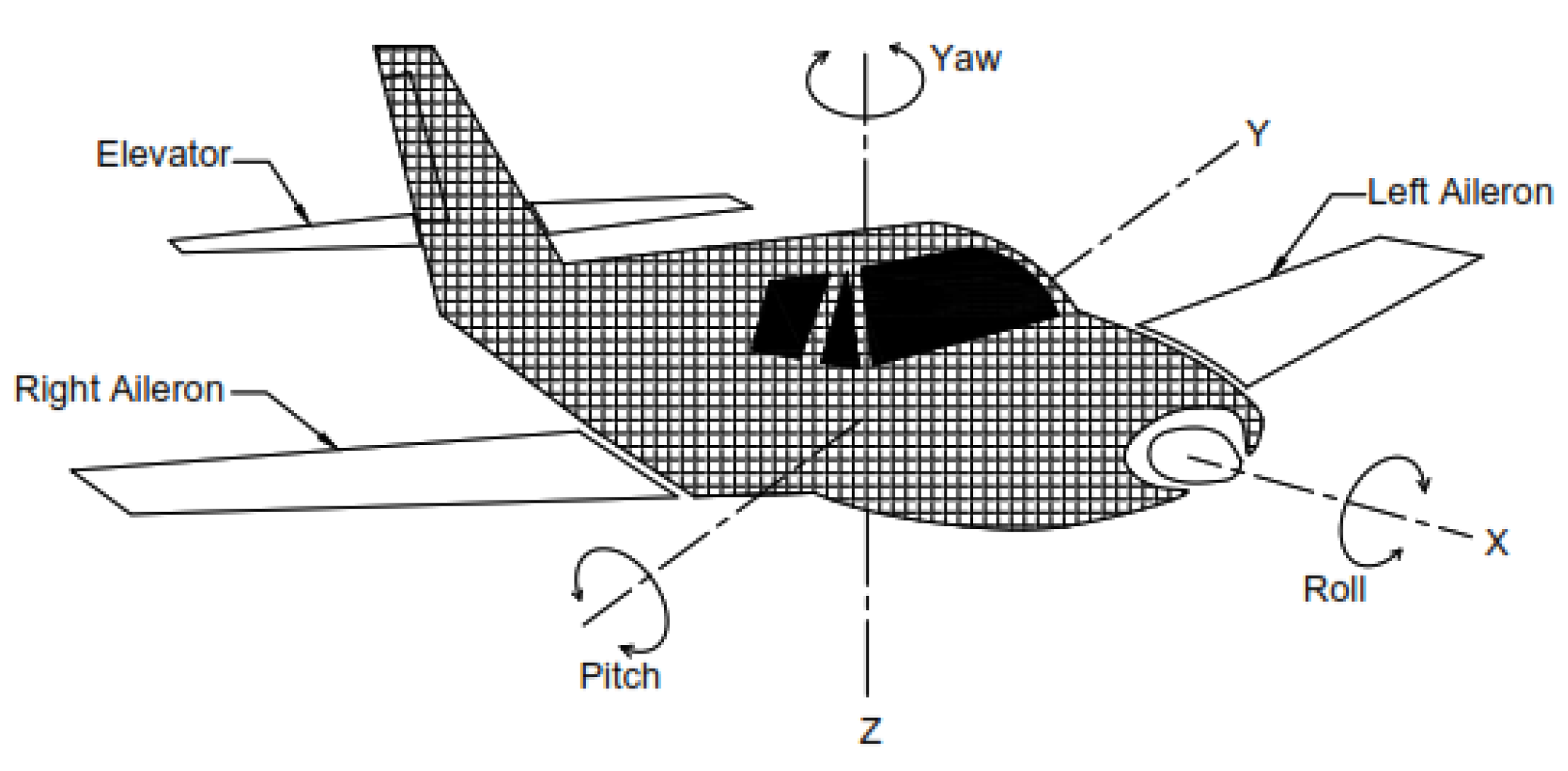

3.1. Fixed Wing

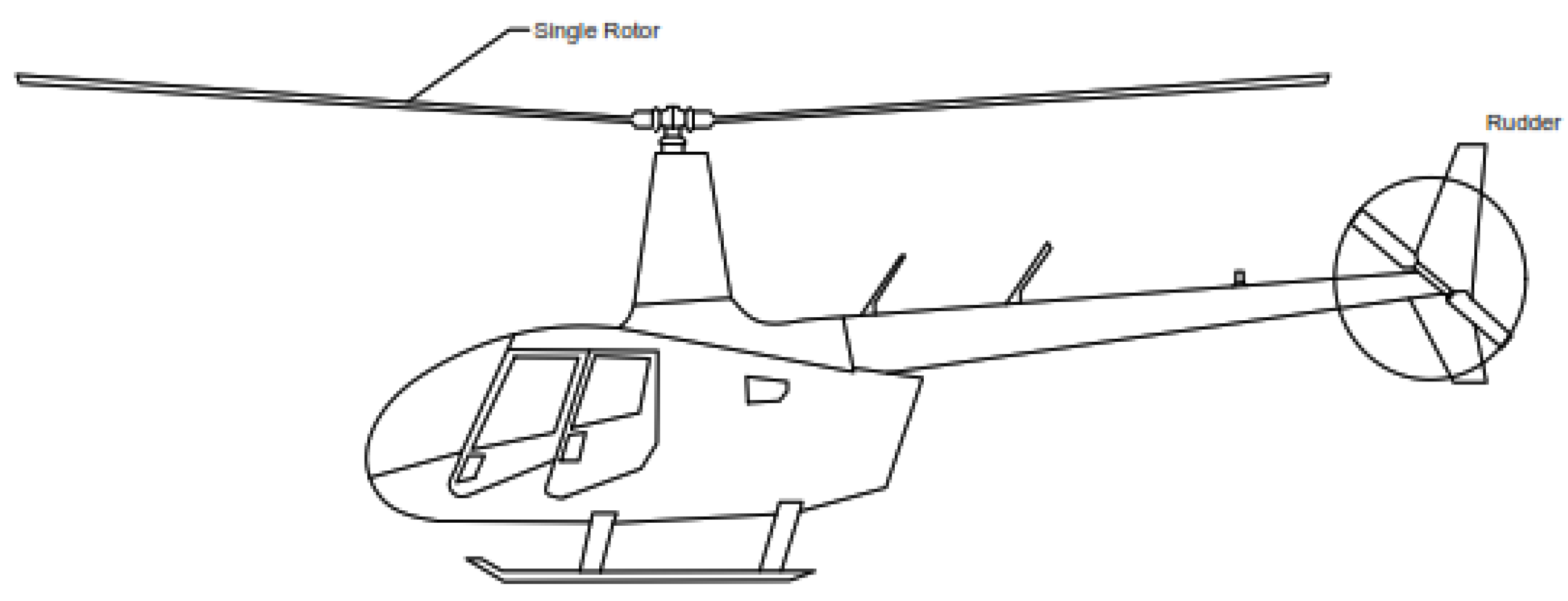

3.2. Single Rotor

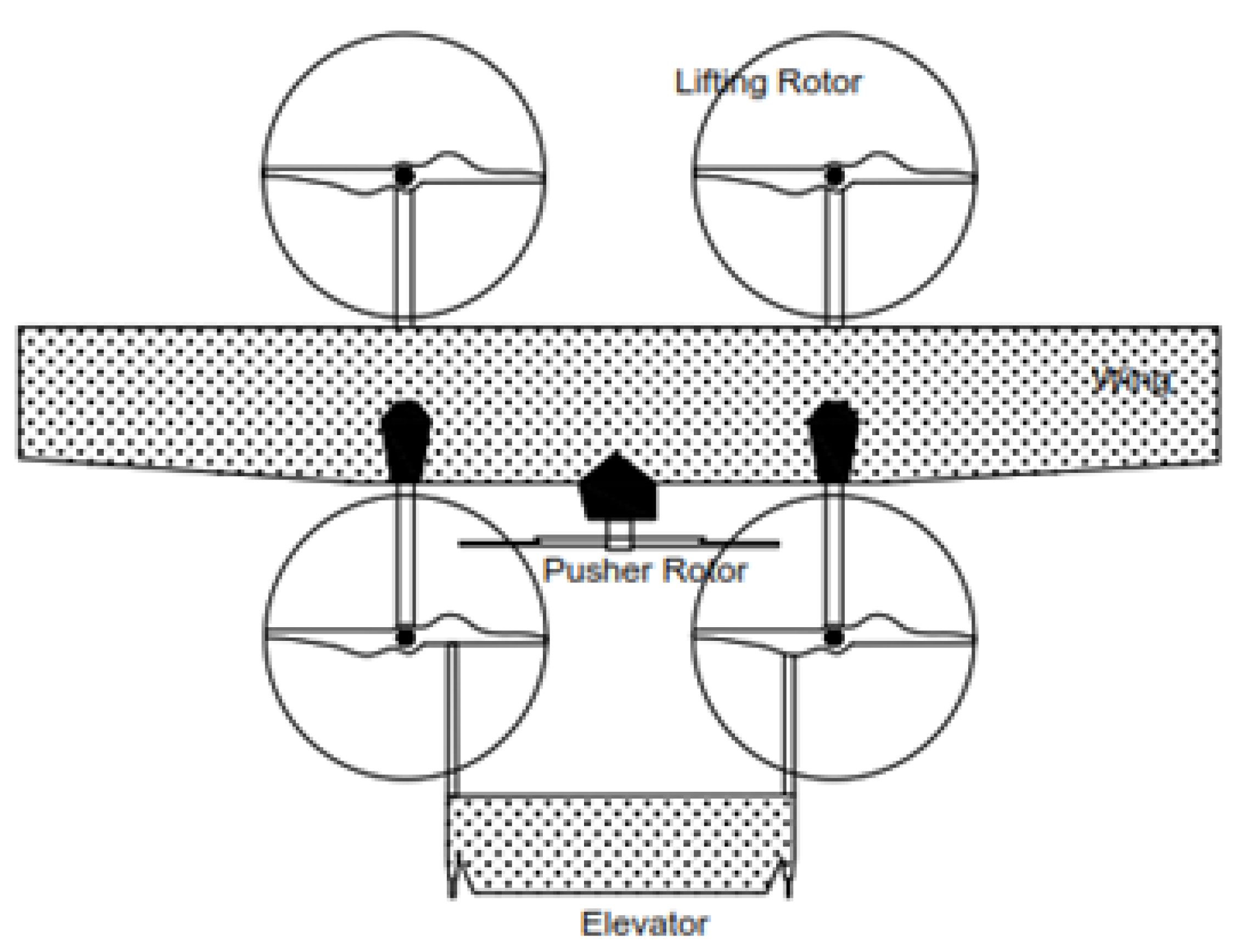

3.3. Hybrid Vertical Take-Off and Landing (VTOL)

3.4. Multi-Rotor

3.4.1. Tricopter

3.4.2. Quadcopter

3.4.3. Hexacopter

3.4.4. Octocopter

4. Role of UAV in Precision Pest Management

- Pressure nozzle;

- Spraying controller;

- Pesticide box;

- Hall-flow sensor;

- Small diaphragm pump;

- Field-map interpretation system.
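
The interaction between the Hall-flow sensor, the spraying controller, and the diaphragm pump listed above can be sketched as follows. This is a minimal illustration, not the design of any system cited here: the function names, the `pulses_per_litre` calibration constant, and the proportional `gain` are all hypothetical and would have to come from the sensor datasheet and field calibration.

```python
def flow_rate_l_per_min(pulse_count: int, interval_s: float,
                        pulses_per_litre: float) -> float:
    """Convert Hall-flow sensor pulses counted over an interval to L/min.

    `pulses_per_litre` is a sensor-specific calibration constant
    (hypothetical here; take it from the datasheet or a bucket test).
    """
    if interval_s <= 0 or pulses_per_litre <= 0:
        raise ValueError("interval_s and pulses_per_litre must be positive")
    litres = pulse_count / pulses_per_litre
    return litres * 60.0 / interval_s


def next_pump_duty(current_duty: float, measured_l_min: float,
                   target_l_min: float, gain: float = 0.1) -> float:
    """One proportional correction step for the pump duty cycle.

    The duty cycle is clamped to the range [0, 1]. `gain` is an
    assumed tuning value, not a recommended setting.
    """
    duty = current_duty + gain * (target_l_min - measured_l_min)
    return min(1.0, max(0.0, duty))


# Example: 450 pulses in 60 s with an assumed 450 pulses per litre.
measured = flow_rate_l_per_min(450, 60.0, 450.0)
print(measured, next_pump_duty(0.5, measured, target_l_min=1.5))
```

A purely proportional step is used only to keep the sketch short; a real spraying controller would likely also account for flight speed and the field map.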

| References | Crop Name | Camera | Pest Name | Observations |
|---|---|---|---|---|
| Xuan Li et al., 2021 [48] | Alfalfa | Multispectral | Empoasca fabae | Damage assessments |
| Bhattarai et al., 2019 [49] | Wheat | Multispectral | Hessian fly | Arthropod counts |
| Backoulou et al., 2018a,b [50,51] | Sorghum | Multispectral | Sugarcane aphid | Damage assessments |
| Backoulou et al., 2016 [52] | Wheat | Multispectral | Greenbug | Arthropod counts or visual inspection |
| Elliott et al., 2015 [53] | Sorghum | Multispectral | Sugarcane aphid | Damage assessments |
| Backoulou et al., 2011a,b, 2013, 2015 [54,55,56] | Wheat | Multispectral | Russian wheat aphid | Visual inspections |
| Mirik et al., 2014 [57] | Wheat | Hyperspectral | Russian wheat aphid | Visual inspection of images |
| Reisig and Godfrey, 2010 [58] | Cotton | Multispectral, Hyperspectral | Cotton aphid | Arthropod counts |
| Elliott et al., 2009 [59] | Wheat | Multispectral | Greenbug | Arthropod counts or visual inspection |
| Carroll, 2008 [60] | Corn | Hyperspectral | European corn borer | Damage assessments |
| Elliott et al., 2007 [61] | Wheat | Multispectral | Russian wheat aphid | Proportion of infested plants |
| Reisig and Godfrey, 2006 [62] | Cotton | Multispectral, Hyperspectral | Spider mite | Arthropod counts |
| Willers et al., 2005 [63] | Cotton | Multispectral | Tarnished plant bug | Sweep net sampling |
| Fitzgerald et al., 2004 [34] | Cotton | Hyperspectral | Strawberry spider mite | Arthropod counts |
| Sudbrink et al., 2003 [64] | Cotton | Multispectral | Beet armyworm | Arthropod counts |
| F. W. Nutter Jr. et al., 2002 [65] | Soybean | Multispectral | Soybean cyst nematode | Visual inspection of images |
| Willers et al., 1999 [66] | Cotton | Multispectral | Tarnished plant bug | Sweep net sampling, drop cloth sampling |
| Lobits et al., 1997 [67] | Grape | Multispectral | Grape phylloxera | Root digging |
| Hart and Meyers, 1968 [68] | Citrus | Multispectral | Brown soft scale | Arthropod counts, sooty mold assessments |
| Everitt et al., 1994 [69] | Citrus | Multispectral | Citrus blackfly | Visual inspections, sooty mold assessments |
| Everitt et al., 1996 [70] | Cotton | Multispectral | Silverleaf whitefly | Visual inspections, sooty mold assessments |
| Hart et al., 1973 [71] | Citrus | Multispectral | Citrus blackfly | Arthropod counts, sooty mold assessments |
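
Several of the multispectral studies listed above, for example Elliott et al., 2015 [53], relied on the normalized difference vegetation index, NDVI = (NIR − Red) / (NIR + Red), to separate stressed from healthy canopy. The sketch below shows that calculation on a toy grid. The function names, the 0.4 threshold, and the reflectance values are illustrative assumptions and are not taken from any of the cited studies.

```python
from typing import Sequence


def ndvi(nir: float, red: float) -> float:
    """Return NDVI = (NIR - Red) / (NIR + Red) for a single pixel."""
    total = nir + red
    if total == 0:
        # Assumption: a pixel with no signal in either band is reported as 0.
        return 0.0
    return (nir - red) / total


def low_ndvi_fraction(
    nir_band: Sequence[Sequence[float]],
    red_band: Sequence[Sequence[float]],
    threshold: float = 0.4,  # hypothetical cut-off; calibrate per crop and sensor
) -> float:
    """Fraction of pixels whose NDVI falls below `threshold`."""
    values = [
        ndvi(n, r)
        for nir_row, red_row in zip(nir_band, red_band)
        for n, r in zip(nir_row, red_row)
    ]
    if not values:
        raise ValueError("bands must contain at least one pixel")
    return sum(v < threshold for v in values) / len(values)


# Toy 2 x 2 reflectance grids (made-up numbers, not data from any cited study).
nir = [[0.6, 0.5], [0.3, 0.2]]
red = [[0.1, 0.1], [0.2, 0.2]]
print(low_ndvi_fraction(nir, red))  # two of the four pixels fall below 0.4
```

A low NDVI fraction only flags candidate areas; the ground observations in the last column of the table (arthropod counts, damage assessments) are still needed to attribute the stress to a particular pest.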

| References | Crop Name | Camera | Pest Name | Observations |
|---|---|---|---|---|
| Marian Adan et al., 2021 [73] | Avocado | Multispectral | Persea mite | Visual inspections |
| Michael Gomez Selvaraj et al., 2020 [74] | Banana | RGB, Multispectral | Yellow sigatoka | Visual inspections |
| Bhattarai et al., 2019 [49] | Wheat | Multispectral | Hessian fly | Arthropod counts |
| Ma et al., 2019 [23] | Wheat | Multispectral | Wheat aphid | Arthropod counts |
| Abdel-Rahman et al., 2017 [75] | Corn | Multispectral | Stem borer | Arthropod counts |
| Zhang et al., 2016 [76] | Corn | Multispectral | Oriental armyworm | Damage assessments |
| Lestina et al., 2016 [77] | Wheat | Multispectral | Wheat stem sawfly | Arthropod counts |
| Luo et al., 2014 [78] | Wheat | Multispectral | Wheat aphid | Arthropod counts, damage assessments |
| Huang et al., 2011 [79] | Wheat | Multispectral | Aphid | Arthropod counts |
| Reisig and Godfrey, 2010 [58] | Cotton | Multispectral | Cotton aphid | Arthropod counts |
| Reisig and Godfrey, 2006 [62] | Cotton | Multispectral | Spider mite | Arthropod counts |

| References | Crop Name | Camera | Pest Name | Observations |
|---|---|---|---|---|
| María Gyomar Gonzalez-Gonzalez et al., 2021 [80] | Citrus | Hyperspectral | Tetranychus urticae | Visual inspection of the leaves |
| Martin and Latheef, 2019 [81] | Corn | Multispectral | Banks grass mite, two-spotted spider mite | Damage assessments |
| Alves et al., 2019, 2013 [82,83] | Soybean | Hyperspectral | Soybean aphid | Arthropod counts |
| Samuel Joalland et al., 2018 [43] | Sugar beet | Multispectral, Hyperspectral | Beet cyst nematode | Visual images |
| Martin and Latheef, 2018 [84] | Pinto bean | Multispectral | Two-spotted spider mite | Controlled infestations |
| Fan et al., 2017 [85] | Rice | Hyperspectral | Striped stem borer | Damage assessments |
| Herrmann et al., 2017 [86] | Bean | Hyperspectral | Two-spotted spider mite | Damage assessments |
| Abdel-Rahman et al., 2013, 2010, 2009 [87,88,89] | Sugarcane | Hyperspectral | Sugarcane thrips | Arthropod counts, damage assessments |
| Mirik et al., 2012 [90] | Wheat | Multispectral | Russian wheat aphid | Visual inspections |
| Zhang et al., 2008 [91], Luedeling et al., 2009 [92] | Peach | Hyperspectral | Spider mite | Arthropod counts, damage assessments |
| Fraulo et al., 2009 [93] | Strawberry | Hyperspectral | Two-spotted spider mite | Arthropod counts |
| Li et al., 2008 [94] | Sorghum | Hyperspectral | Corn leaf aphid | Arthropod counts |
| Xu et al., 2007 [95] | Tomato | Hyperspectral | Leaf miner | Damage assessments |
| F. W. Nutter Jr. et al., 2002 [65] | Soybean | Multispectral | Soybean cyst nematode | Visual inspection of images |
| Everitt et al., 1996 [70] | Cotton | Multispectral | Silverleaf whitefly | Visual inspections |
| Peñuelas et al., 1995 [96] | Apple | Hyperspectral | European red mite | Arthropod counts |

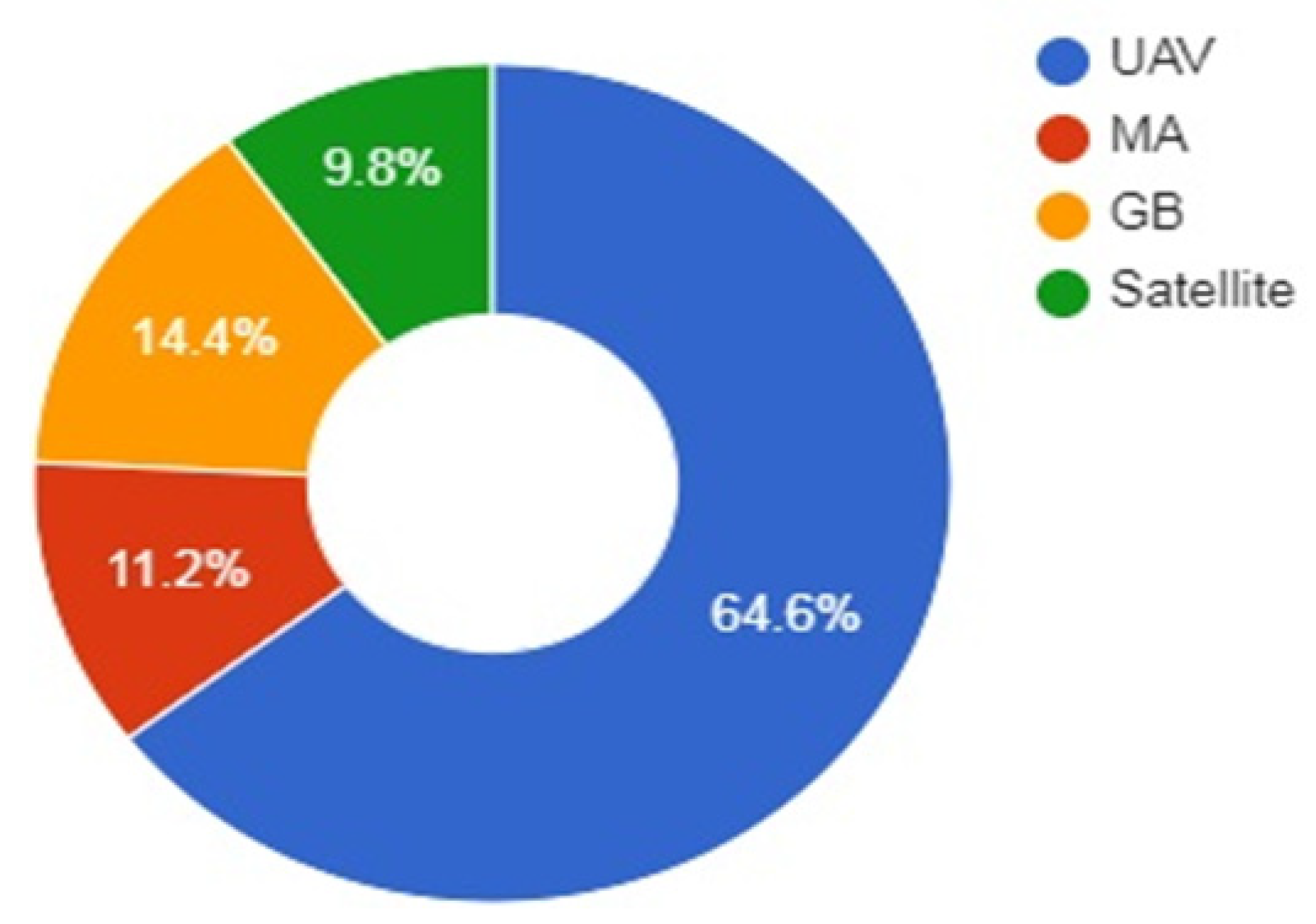

5. Economic Benefits of UAV Technologies

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Colomina, I. Unmanned aerial systems for photogrammetry and remote sensing: A review. ISPRS J. Photogramm. Remote Sens. 2014, 92, 79–97.

- Everaerts, J. The use of unmanned aerial vehicles (UAVs) for remote sensing and mapping. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2008, 37, 1187–1192.

- Natu, A.S.; Kulkarni, S.C. Adoption and Utilization of Drones for Advanced Precision Farming: A Review. Int. J. Recent Innov. Trends Comput. Commun. 2016, 4, 563–565.

- Zhang, C.; Kovacs, J.M. The application of small unmanned aerial systems for precision agriculture: A review. In Precision Agriculture; Springer: Berlin/Heidelberg, Germany, 2012; Volume 13, pp. 693–712.

- Zhang, H.L.; Tian, W.T.; Yin, J. A Review of Unmanned Aerial Vehicle Low-Altitude Remote Sensing (UAV-LARS) Use in Agricultural Monitoring in China. Remote Sens. 2021, 13, 1221.

- Farooq, M.S.; Riaz, S.; Abid, A.; Abid, K.; Naeem, M.A. A Survey on the Role of IoT in Agriculture for the Implementation of Smart Farming. IEEE Access 2019, 7, 156237–156271.

- Swain, M.; Zimon, D.; Singh, R.; Hashmi, M.F.; Rashid, M.; Hakak, S. LoRa-LBO: An Experimental Analysis of LoRa Link Budget Optimization in Custom Build IoT Test Bed for Agriculture 4.0. Agronomy 2021, 11, 820.

- Delavarpour, N.; Cengiz, K.; Nowatzki, N.; Bajwa, S.; Sun, X. A Technical Study on UAV Characteristics for Precision Agriculture Applications and Associated Practical Challenges. Remote Sens. 2021, 13, 1204.

- Rahman, M.F.F.; Fan, S.; Zhang, Y.; Chen, L. A Comparative Study on Application of Unmanned Aerial Vehicle Systems in Agriculture. Agriculture 2021, 11, 22.

- Islam, N.; Rashid, M.M.; Pasandideh, F.; Ray, B.; Moore, S.; Kadel, R. A Review of Applications and Communication Technologies for Internet of Things (IoT) and Unmanned Aerial Vehicle (UAV) based Sustainable Smart Farming. Sustainability 2021, 13, 1821.

- Ziliani, M.; Parkes, S.; Hoteit, I.; McCabe, M. Intra-Season Crop Height Variability at Commercial Farm Scales Using a Fixed-Wing UAV. Remote Sens. 2018, 10, 2007.

- Xinyu, X.; Lan, Y.; Sun, Z.; Chang, C.; Hoffmann, W.C. Develop an unmanned aerial vehicle based automatic aerial spraying system. Comput. Electron. Agric. 2016, 128, 58–66.

- McArthur, D.R.; Chowdhury, A.B.; Cappelleri, D.J. Design of the interacting-boomcopter unmanned aerial vehicle for remote sensor mounting. J. Mech. Robot. 2018, 10, 025001.

- Sharma, R. Review on Application of Drone Systems in Precision Agriculture. J. Adv. Res. Electron. Eng. Technol. 2021, 7, 520137.

- Yallappa, D.; Veerangouda, M.; Maski, D.; Palled, V.; Bheemanna, M. Development and evaluation of drone mounted sprayer for pesticide applications to crops. In Proceedings of the 2017 IEEE Global Humanitarian Technology Conference (GHTC), San Jose, CA, USA, 19–22 October 2017; pp. 1–7.

- Qing, T.; Zhang, R.; Chen, L.; Min, X.; Tongchuan, Y.; Bin, Z. Droplets movement and deposition of an eight-rotor agricultural UAV in downwash flow field. Int. J. Agric. Biol. Eng. 2017, 10, 47–56.

- Bhoi, S.K.; Kumar Jena, K.; Kumar Panda, S.; Long, H.V.; Kumar, P.R.; Bin Jebreen, S.H. An Internet of Things assisted Unmanned Aerial Vehicle based artificial intelligence model for rice pest detection. Microprocess. Microsyst. 2021, 80, 103607.

- Wu, B.; Liang, A.; Zhang, H.; Zhu, T.; Zou, Z.; Yang, D.; Tang, W.; Li, J.; Su, J. Application of conventional UAV-based high-throughput object detection to the early diagnosis of pine wilt disease by deep learning. For. Ecol. Manag. 2021, 486, 118986.

- Ishengoma, F.S.; Rai, I.A.; Said, R.N. Identification of maize leaves infected by fall armyworms using UAV-based imagery and convolutional neural networks. Comput. Electron. Agric. 2021, 184, 106124.

- Saito Moriya, É.A.; Imai, N.N.; Tommaselli, A.M.G.; Berveglieri, A.; Santos, G.H.; Soares, M.A.; Marino, M.; Reis, T.T. Detection and mapping of trees infected with citrus gummosis using UAV hyperspectral data. Comput. Electron. Agric. 2021, 188, 106298.

- An, G.; Xing, M.; He, B.; Kang, H.; Shang, J.; Liao, C.; Huang, X.; Zhang, H. Extraction of Areas of Rice False Smut Infection Using UAV Hyperspectral Data. Remote Sens. 2021, 13, 3185.

- Nguyen, C.; Sagan, V.; Maimaitiyiming, M.; Maimaitijiang, M.; Bhadra, S.; Kwasniewski, M.T. Early Detection of Plant Viral Disease Using Hyperspectral Imaging and Deep Learning. Sensors 2021, 21, 742.

- Ma, H.; Huang, W.; Jing, Y.; Yang, C.; Han, L.; Dong, Y.; Ye, H.; Shi, Y.; Zheng, Q.; Liu, L.; et al. Integrating growth and environmental parameters to discriminate powdery mildew and aphid of winter wheat using bi-temporal Landsat-8 imagery. Remote Sens. 2019, 11, 846.

- Qin, J.; Wang, B.; Wu, Y.; Lu, Q.; Zhu, H. Identifying Pine Wood Nematode Disease Using UAV Images and Deep Learning Algorithms. Remote Sens. 2021, 13, 162.

- Xiao, Y.; Dong, Y.; Huang, W.; Liu, L.; Ma, H. Wheat Fusarium Head Blight Detection Using UAV-Based Spectral and Texture Features in Optimal Window Size. Remote Sens. 2021, 13, 2437.

- Guo, A.; Huang, W.; Dong, Y.; Ye, H.; Ma, H.; Liu, B.; Wu, W.; Ren, Y.; Ruan, C.; Geng, Y. Wheat Yellow Rust Detection Using UAV-Based Hyperspectral Technology. Remote Sens. 2021, 13, 123.

- Castrignanò, A.; Belmonte, A.; Antelmi, I.; Quarto, R.; Quarto, F.; Shaddad, S.; Sion, V.; Muolo, M.R.; Ranieri, N.A.; Gadaleta, G.; et al. A geostatistical fusion approach using UAV data for probabilistic estimation of Xylella fastidiosa subsp. pauca infection in olive trees. Sci. Total Environ. 2020, 752, 141814.

- Francesconi, S.; Harfouche, A.; Maesano, M.; Balestra, G.M. UAV-Based Thermal, RGB Imaging and Gene Expression Analysis Allowed Detection of Fusarium Head Blight and Gave New Insights into the Physiological Responses to the Disease in Durum Wheat. Front. Plant Sci. 2021, 12, 628575.

- Yadav, S.; Sengar, N.; Singh, A.; Singh, A.; Dutta, M.K. Identification of disease using deep learning and evaluation of bacteriosis in peach leaf. Ecol. Inform. 2021, 61, 101247.

- Görlich, F.; Marks, E.; Mahlein, A.-K.; König, K.; Lottes, P.; Stachniss, C. UAV-Based Classification of Cercospora Leaf Spot Using RGB Images. Drones 2021, 5, 34.

- Yu, R.; Ren, L.; Luo, Y. Early detection of pine wilt disease in Pinus tabuliformis in North China using a field portable spectrometer and UAV-based hyperspectral imagery. For. Ecosyst. 2021, 8, 40.

- Yu, R.; Luo, Y.; Zhou, Q.; Zhang, X.; Wu, D.; Ren, L. Early detection of pine wilt disease using deep learning algorithms and UAV-based multispectral imagery. For. Ecol. Manag. 2021, 497, 119493.

- Chivasa, W.; Mutanga, O.; Burgueño, J. UAV-based high-throughput phenotyping to increase prediction and selection accuracy in maize varieties under artificial MSV inoculation. Comput. Electron. Agric. 2021, 184, 106128.

- Fitzgerald, J.G.; Maas, S.J.; Detar, W.R. Spider mite detection and canopy component mapping in cotton using hyperspectral imagery and spectral mixture analysis. Precis. Agric. 2004, 5, 275–289.

- Gao, J.; Westergaard, J.C.; Sundmark, E.H.R.; Bagge, M.; Liljeroth, E.; Alexandersson, E. Automatic late blight lesion recognition and severity quantification based on field imagery of diverse potato genotypes by deep learning. Knowl. Based Syst. 2021, 214, 106723.

- Deng, X.; Zhu, Z.; Yang, J.; Zheng, Z.; Huang, Z.; Yin, X.; Wei, S.; Lan, Y. Detection of Citrus Huanglongbing Based on Multi-Input Neural Network Model of UAV Hyperspectral Remote Sensing. Remote Sens. 2020, 12, 2678.

- Tetila, E.C.; Machado, B.B.; Astolfi, G.; Belete, N.A.D.; Amorim, W.P.; Roel, A.R.; Pistori, H. Detection and classification of soybean pests using deep learning with UAV images. Comput. Electron. Agric. 2020, 179, 105836.

- Calou, V.C.; Teixeira, A.d.S.; Moreira, L.C.; Lima, C.S.; de Oliveira, J.; de Oliveira, M. The use of UAVs in monitoring yellow sigatoka in banana. Biosyst. Eng. 2020, 193, 115–125.

- Del-Campo-Sanchez, A.; Ballesteros, R.; Hernandez-Lopez, D.; Ortega, J.F.; Moreno, M.A. Agroforestry and Cartography Precision Research Group. Quantifying the effect of Jacobiasca lybica pest on vineyards with UAVs by combining geometric and computer vision techniques. PLoS ONE 2019, 14, e0215521.

- Abdulridha, J.; Batuman, O.; Ampatzidis, Y. UAV-Based Remote Sensing Technique to Detect Citrus Canker Disease Utilizing Hyperspectral Imaging and Machine Learning. Remote Sens. 2019, 11, 1373.

- Vanegas, F.; Bratanov, D.; Powell, K.; Weiss, J.; Gonzalez, F. A novel methodology for improving plant pest surveillance in vineyards and crops using UAV-based hyperspectral and spatial data. Sensors 2018, 18, 260.

- Huang, H.; Deng, J.; Lan, Y.; Yang, A.; Deng, X.; Zhang, L.; Wen, S.; Jiang, Y.; Suo, G.; Chen, P. A two-stage classification approach for the detection of spider mite-infested cotton using UAV multispectral imagery. Remote Sens. Lett. 2018, 9, 933–941.

- Joalland, S.; Screpanti, C.; Varella, H.V.; Reuther, M.; Schwind, M.; Lang, C.; Liebisch, A.W.F. Aerial and Ground Based Sensing of Tolerance to Beet Cyst Nematode in Sugar Beet. Remote Sens. 2018, 10, 787.

- Hunt, J.E.R.; Rondon, S.I. Detection of potato beetle damage using remote sensing from small unmanned aircraft systems. J. Appl. Remote Sens. 2017, 11, 026013.

- Stanton, C.; Starek, M.J.; Elliott, N.; Brewer, M.; Maeda, M.M.; Chu, T. Unmanned aircraft system-derived crop height and normalized difference vegetation index metrics for sorghum yield and aphid stress assessment. J. Appl. Remote Sens. 2017, 11, 026035.

- Severtson, D.; Callow, N.; Flower, K.; Neuhaus, A.; Olejnik, M.; Nansen, C. Unmanned aerial vehicle canopy reflectance data detects potassium deficiency and green peach aphid susceptibility in canola. Precis. Agric. 2016, 17, 659–677.

- Nebiker, S.; Lack, N.; Abächerli, M.; Läderach, S. Light-weight multispectral UAV sensors and their capabilities for predicting grain yield and detecting plant diseases. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2016, XLI-B1, 963–970.

- Li, X.; Giles, D.K.; Andaloro, J.T.; Long, R.; Lang, E.B.; Watson, L.J.; Qandah, I. Comparison of UAV and Fixed-Wing Aerial Application for Alfalfa Insect Pest Control: Evaluating Efficacy, Residues, and Spray Quality. Pest Manag. Sci. 2021, 77, 4980–4992.

- Bhattarai, G.P.; Schmid, R.B.; McCornack, B.P. Remote sensing data to detect hessian fly infestation in commercial wheat fields. Sci. Rep. 2019, 9, 6109.

- Backoulou, F.G.; Elliott, N.; Giles, K.; Alves, T.; Brewer, M.; Starek, M. Using multispectral imagery to map spatially variable sugarcane aphid infestations in sorghum. Southwest. Entomol. 2018, 43, 37–44.

- Backoulou, F.G.; Elliott, K.L.; Brewer, G.M.J.; Starek, M. Detecting change in a sorghum field infested by sugarcane aphid. Southwest. Entomol. 2018, 43, 823–832.

- Backoulou, F.G.; Elliott, N.C.; Giles, K.L. Using multispectral imagery to compare the spatial pattern of injury to wheat caused by Russian wheat aphid and greenbug. Southwest. Entomol. 2016, 41, 1–8.

- Elliott, C.N.; Backoulou, G.F.; Brewer, M.J.; Giles, K.L. NDVI to detect sugarcane aphid injury to grain sorghum. J. Econ. Entomol. 2015, 108, 1452–1455.

- Backoulou, F.G.; Elliott, N.C.; Giles, K.; Phoofolo, M.; Catana, V. Development of a method using multispectral imagery and spatial pattern metrics to quantify stress to wheat fields caused by Diuraphis noxia. Comput. Electron. Agric. 2011, 75, 64–70.

- Backoulou, F.G.; Elliott, K.L.; Giles Rao, M.N. Differentiating stress to wheat fields induced by Diuraphis noxia from other stress causing factors. Comput. Electron. Agric. 2013, 90, 47–53.

- Backoulou, F.G.; Elliott, N.C.; Giles, K.L.; Mirik, M. Processed multispectral imagery differentiates wheat crop stress caused by greenbug from other causes. Comput. Electron. Agric. 2015, 115, 34–39.

- Mirik, M.; Ansley, R.J.; Steddom, K.; Rush, C.M.; Michels, G.J.; Workneh, F.; Cui, S.; Elliott, N.C. High spectral and spatial resolution hyperspectral imagery for quantifying Russian wheat aphid infestation in wheat using the constrained energy minimization classifier. J. Appl. Remote Sens. 2014, 8, 083661.

- Reisig, D.D.; Godfrey, L.D. Remotely sensing arthropod and nutrient stressed plants: A case study with nitrogen and cotton aphid (Hemiptera: Aphididae). Environ. Entomol. 2010, 39, 1255–1263.

- Elliott, N.; Mirik, M.; Yang, Z.; Jones, D.; Phoofolo, M.; Catana, V.; Giles, K.; Michels, G.J. Airborne remote sensing to detect greenbug stress to wheat. Southwest. Entomol. 2009, 34, 205–221.

- Carroll, W.M.; Glaser, J.A.; Hellmich, R.L.; Hunt, T.E.; Sappington, T.W.; Calvin, D.; Copenhaver, K.; Fridgen, J. Use of spectral vegetation indices derived from airborne hyperspectral imagery for detection of European corn borer infestation in Iowa corn plots. J. Econ. Entomol. 2008, 101, 1614–1623.

- Elliott, C.N.; Mirik, M.; Yang, Z.; Dvorak, T.; Rao, M.; Michels, J.; Walker, T.; Catana, V.; Phoofolo, M.; Giles, K.L.; et al. Airborne multispectral remote sensing of Russian wheat aphid injury to wheat. Southwest. Entomol. 2007, 32, 213–219.

- Reisig, D.; Godfrey, L. Remote sensing for detection of cotton aphid- (Homoptera: Aphididae) and spider mite- (Acari: Tetranychidae) infested cotton in the San Joaquin Valley. Environ. Entomol. 2006, 35, 1635–1646.

- Willers, J.L.; Jenkins, J.N.; Ladner, W.L.; Gerard, P.D.; Boykin, D.L.; Hood, K.B.; McKibben, P.L.; Samson, S.A.; Bethel, M.M. Site-specific approaches to cotton insect control. Sampling and remote sensing analysis techniques. Precis. Agric. 2005, 6, 431–445.

- Sudbrink, D.; Harris, F.; Robbins, J.; English, P.; Willers, J. Evaluation of remote sensing to identify variability in cotton plant growth and correlation with larval densities of beet armyworm and cabbage looper (Lepidoptera: Noctuidae). Fla. Entomol. 2003, 86, 290–294.

- Nutter, F.W., Jr.; Tylka, G.L.; Guan, J.; Moreira, A.J.D.; Marett, C.C.; Rosburg, T.R.; Basart, J.P.; Chong, C.S. Use of Remote Sensing to Detect Soybean Cyst Nematode-Induced Plant Stress. J. Nematol. 2002, 34, 222–231.

- Willers, L.J.; Seal, M.R.; Luttrell, R.G. Remote sensing, line-intercept sampling for tarnished plant bugs (Heteroptera: Miridae) in midsouth cotton. J. Cotton Sci. 1999, 3, 160–170.

- Lobits, B.; Johnson, L.; Hlavka, C.; Armstrong, R.; Bell, C. Grapevine remote sensing analysis of phylloxera early stress (GRAPES): Remote sensing analysis summary. NASA Tech. Memo. 1997, 112218.

- Hart, W.G.; Meyers, V.I. Infrared aerial color photography for detection of populations of brown soft scale in citrus groves. J. Econ. Entomol. 1968, 61, 617–624.

- Everitt, J.; Escobar, D.; Summy, K.; Davis, M. Using airborne video, global positioning system, and geographical information system technologies for detecting and mapping citrus blackfly infestations. Southwest. Entomol. 1994, 19, 129–138.

- Everitt, J.; Escobar, D.; Summy, K.; Alaniz, M.; Davis, M. Using spatial information technologies for detecting and mapping whitefly and harvester ant infestations in south Texas. Southwest. Entomol. 1996, 21, 421–432.

- Hart, G.W.; Ingle, S.J.; Davis, M.R.; Mangum, C. Aerial photography with infrared color film as a method of surveying for citrus blackfly. J. Econ. Entomol. 1973, 66, 190–194.

- Backoulou, F.G.; Elliott, N.C.; Giles, K.; Phoofolo, M.; Catana, V.; Mirik, M.; Michels, J. Spatially discriminating Russian wheat aphid induced plant stress from other wheat stressing factors. Comput. Electron. Agric. 2011, 78, 123–129.

- Adan, M.; Abdel-Rahman, E.M.; Gachoki, S.; Muriithi, H.B.W.; Lattorff, M.G.; Kerubo, V.; Landmann, T.; Mohamed, S.A.; Tonnang, H.E.Z.; Dubois, T. Use of earth observation satellite data to guide the implementation of integrated pest and pollinator management (IPPM) technologies in an avocado production system. Remote Sens. Appl. Soc. Environ. 2021, 23, 100566.

- Selvaraj, M.G.; Vergara, A.; Montenegro, F.; Ruiz, H.A.; Safari, N.; Raymaekers, D.; Ocimati, W.; Ntamwira, J.; Tits, L.; Blomme, G. Detection of banana plants and their major diseases through aerial images and machine learning methods: A case study in DR Congo and Republic of Benin. ISPRS J. Photogramm. Remote Sens. 2020, 169, 110–124.

- Abdel-Rahman, M.E.; Landmann, T.; Kyalo, R.; Ong’amo, G.; Mwalusepo, S.; Sulieman, S.; Le Ru, B. Predicting stem borer density in maize using RapidEye data and generalized linear models. Int. J. Appl. Earth Obs. Geoinf. 2017, 57, 61–74.

- Zhang, J.; Huang, Y.; Yuan, L.; Yang, G.; Chen, L.; Zhao, C. Using satellite multispectral imagery for damage mapping of armyworm (Spodoptera frugiperda) in maize at a regional scale. Pest Manag. Sci. 2016, 72, 335–348.

- Lestina, J.; Cook, M.; Kumar, S.; Morisette, J.; Ode, P.J.; Peairs, F. MODIS imagery improves pest risk assessment: A case study of wheat stem sawfly (Cephus cinctus, Hymenoptera: Cephidae) in Colorado, USA. Environ. Entomol. 2016, 45, 1343–1351.

- Luo, J.; Huang, W.; Zhao, J.; Zhang, J.; Ma, R.; Huang, M. Predicting the probability of wheat aphid occurrence using satellite remote sensing and meteorological data. Optik 2014, 125, 5660–5665.

- Huang, W.; Luo, J.; Zhao, J.; Zhang, J.; Ma, Z. Predicting wheat aphid using 2-dimensional feature space based on multi-temporal Landsat TM. In IEEE International Geoscience and Remote Sensing Symposium; IEEE: New York, NY, USA, 2011; Volume 24–29, pp. 1830–1833.

- Gonzalez-Gonzalez, M.; Blasco, J.; Cubero, S.; Chueca, P. Automated Detection of Tetranychus urticae Koch in Citrus Leaves Based on Colour and VIS/NIR Hyperspectral Imaging. Agronomy 2021, 11, 1002.

- Martin, D.E.; Latheef, M.A. Aerial application methods control spider mites on corn in Kansas, USA. Exp. Appl. Acarol. 2019, 77, 571–582.

- Alves, M.T.; Moon, R.D.; MacRae, I.V.; Koch, R.L. Optimizing band selection for spectral detection of Aphis glycines Matsumura in soybean. Pest Manag. Sci. 2019, 75, 942–949.

- Alves, M.T.; Macrae, I.V.; Koch, R.L. Soybean aphid (Hemiptera: Aphididae) affects soybean spectral reflectance. J. Econ. Entomol. 2013, 108, 2655–2664.

- Martin, D.E.; Latheef, M.A. Active optical sensor assessment of spider mite damage on greenhouse beans and cotton. Exp. Appl. Acarol. 2018, 74, 147–158.

- Fan, Y.; Wang, T.; Qiu, Z.; Peng, J.; Zhang, C.; He, Y. Fast detection of striped stem-borer (Chilo suppressalis Walker) infested rice seedling based on visible/near-infrared hyperspectral imaging system. Sensors 2017, 17, 2470.

- Herrmann, I.; Berenstein, M.; Paz-Kagan, T.; Sade, A.; Karnieli, A. Spectral assessment of two-spotted spider mite damage levels in the leaves of greenhouse-grown pepper and bean. Biosyst. Eng. 2017, 157, 72–85.

- Abdel-Rahman, M.E.; van den Berg, M.; Way, M.J.; Ahmed, F.B. Hand-held spectrometry for estimating thrips (Fulmekiola serrata) incidence in sugarcane. In IEEE International Geoscience and Remote Sensing Symposium; IEEE: New York, NY, USA, 2009; Volume 12–17, pp. 268–271.

- Abdel-Rahman, M.E.; Ahmed, F.B.; van den Berg, M.; Way, M.J. Potential of spectroscopic data sets for sugarcane thrips (Fulmekiola serrata Kobus) damage detection. Int. J. Remote Sens. 2010, 31, 4199–4216.

- Abdel-Rahman, M.E.; Way, M.; Ahmed, F.; Ismail, R.; Adam, E. Estimation of thrips (Fulmekiola serrata Kobus) density in sugarcane using leaf-level hyperspectral data. S. Afr. J. Plant Soil 2013, 30, 91–96.

- Mirik, M.; Ansley, R.; Michels, G.; Elliott, N. Spectral vegetation indices selected for quantifying Russian wheat aphid (Diuraphis noxia) feeding damage in wheat (Triticum aestivum L.). Precis. Agric. 2012, 13, 501–516.

- Zhang, M.; Hale, A.; Luedeling, E. Feasibility of using remote sensing techniques to detect spider mite damage in stone fruit orchards. In Proceedings of the IEEE International Geoscience and Remote Sensing Symposium, Boston, MA, USA, 6–11 July 2008; Volume 7–11, pp. I323–I326.

- Luedeling, E.; Hale, A.; Zhang, M.; Bentley, W.J.; Dharmasri, L.C. Remote sensing of spider mite damage in California peach orchards. Int. J. Appl. Earth Obs. Geoinf. 2009, 11, 244–255.

- Fraulo, B.A.; Cohen, M.; Liburd, O.E. Visible/near infrared reflectance (VNIR) spectroscopy for detecting twospotted spider mite (Acari: Tetranychidae) damage in strawberries. Environ. Entomol. 2009, 38, 137–142.

- Li, H.; Payne, W.A.; Michels, G.J.; Rush, C.M. Reducing plant abiotic and biotic stress: Drought and attacks of greenbugs, corn leaf aphids and virus disease in dryland sorghum. Environ. Exp. Bot. 2008, 63, 305–316.

- Xu, H.; Ying, Y.; Fu, X.; Zhu, S. Near-infrared spectroscopy in detecting leaf miner damage on tomato leaf. Biosyst. Eng. 2007, 96, 447–454.

- Peñuelas, J.; Filella, I.; Lloret, P.; Munoz, F.; Vilajeliu, M. Reflectance assessment of mite effects on apple trees. Int. J. Remote Sens. 1995, 16, 2727–2733.

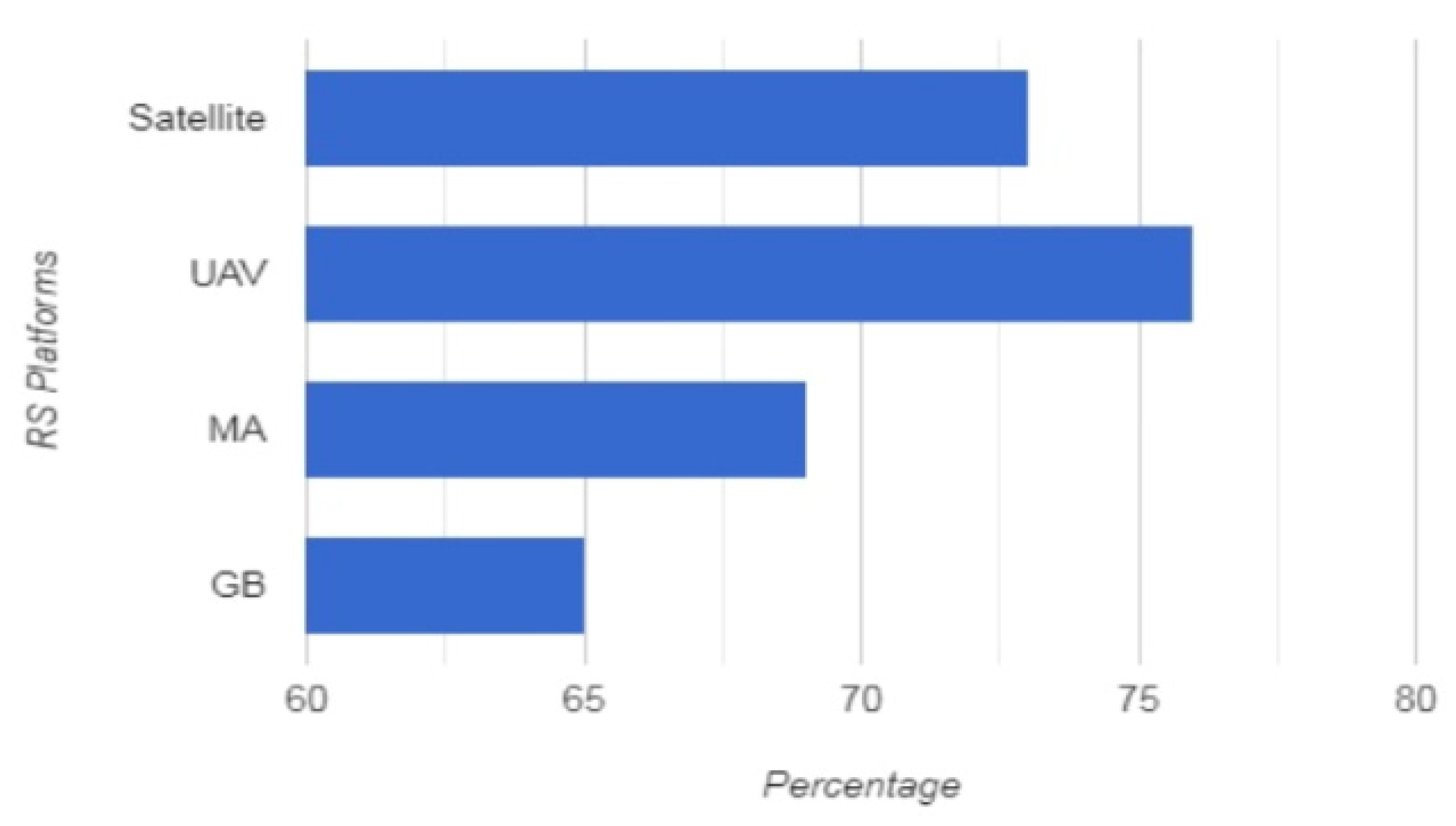

| Quality of Services / Type of RS Platform | UAV | Satellite | Manned Aircraft | Ground Based |
|---|---|---|---|---|
| Flexibility | high | low | low | low |
| Adaptability | high | low | low | low |
| Cost | low | high | high | low |
| Time Consumption | low | low | low | high |
| Risk | low | average | high | low |
| Accuracy | high | low | high | moderate |
| Deployment | easy | difficult | complex | moderate |
| Feasibility | yes | no | no | yes |
| Availability | yes | no | yes | no |
| Operability | easy | complex | complex | easy |
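
The comparison above can also be treated as a small lookup structure when shortlisting a platform for a given survey. The sketch below encodes the table rows exactly as printed and filters platforms by required ratings. The names `QUALITIES`, `RS_PLATFORMS`, and `platforms_matching` are illustrative and not part of any library.

```python
# Quality-of-service rows, in the same order as the comparison table.
QUALITIES = [
    "Flexibility", "Adaptability", "Cost", "Time Consumption", "Risk",
    "Accuracy", "Deployment", "Feasibility", "Availability", "Operability",
]

# One list of ratings per platform, copied column by column from the table.
RS_PLATFORMS = {
    "UAV": ["high", "high", "low", "low", "low",
            "high", "easy", "yes", "yes", "easy"],
    "Satellite": ["low", "low", "high", "low", "average",
                  "low", "difficult", "no", "no", "complex"],
    "Manned Aircraft": ["low", "low", "high", "low", "high",
                        "high", "complex", "no", "yes", "complex"],
    "Ground Based": ["low", "low", "low", "high", "low",
                     "moderate", "moderate", "yes", "no", "easy"],
}


def platforms_matching(requirements: dict) -> list:
    """Return platforms whose ratings equal every required value.

    `requirements` maps a quality name from QUALITIES to the wanted rating,
    e.g. {"Cost": "low"}. Unknown quality names raise KeyError.
    """
    matches = []
    for platform, ratings in RS_PLATFORMS.items():
        profile = dict(zip(QUALITIES, ratings))
        if all(profile[q] == wanted for q, wanted in requirements.items()):
            matches.append(platform)
    return matches


print(platforms_matching({"Cost": "low", "Accuracy": "high"}))
```

With the ratings as given in the table, requiring both low cost and high accuracy leaves only the UAV platform.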

| Parameters / Type of UAV | Fixed Wing | Single Rotor | Multi-Rotor | Hybrid VTOL |
|---|---|---|---|---|
| No. of Rotors | 1 | 1 (one large main rotor plus a small rotor on the tail) | Tricopter: 3; Quadcopter: 4; Hexacopter: 6; Octocopter: 8 | 1 |
| Manufacture and Maintenance | Simple | Complex | Complex | Complex |
| Cost | High | High | Low | High |
| Average Flying Time | 2 h (battery); 16 h (gas engine) | Higher (gas engine) | Limited (20–30 min) | Ability to cover longer distances |
| Endurance | More | More (with gas power) | Limited | More |
| Energy | Battery (no energy is used to stay aloft) or gas engine | Gas power | Battery (energy is used to stay aloft) | Battery |
| Speed | Fast | Limited | Limited | Fast |
| Applications | Long-distance aerial mapping and surveillance | Aerial scanning | Aerial photography, short-distance aerial mapping and surveillance | Mapping and land surveying, mining, surveillance and security |
| Drawbacks | Unsuitable for aerial photography that requires the aircraft to remain motionless in the air | Harder to fly; dangerous to handle | Limited payload | Imperfect hovering; limited payload |
| Training Required in Flying | Required (runway or catapult launcher for take-off; parachute or net for landing) | Not required | Not required | Not required |

| References | Crop Name | Type of UAV | Camera | No. of Rotors | Pest Name | Observations |
|---|---|---|---|---|---|---|
| Sourav Kumar Bhoi et al., 2021 [17] | Rice | Multi-Rotor | RGB, Multispectral | 4 | Leaf hopper | Visual inspection of images |
| Wu, Bizhi et al., 2021 [18] | Pine | Multi-Rotor | Multispectral | 6 | Bursaphelenchus xylophilus | Visual images |
| Ishengoma, Farian Severine et al., 2021 [19] | Maize | Multi-Rotor | Multispectral | 6 | Lepidoptera | Visual images |
| Érika Akemi Saito Moriya et al., 2021 [20] | Lemon | Multi-Rotor | Hyperspectral | 4 | Phytophthora gummosis | Visual inspection of images |
| An, G et al., 2021 [21] | Rice | Multi-Rotor | Hyperspectral | 4 | Ustilaginoidea virens | Damage assessments |
| Nguyen, C et al., 2021 [22] | Grapevine | Multi-Rotor | Hyperspectral | 4 | Grapevine vein-clearing virus | Visual images |
| Ma, H et al., 2021 [23] | Wheat | Multi-Rotor | Hyperspectral | 4 | Fusarium head blight | Visual inspection of images |
| Qin, J et al., 2021 [24] | Pine | Multi-Rotor | Multispectral | 6 | Bursaphelenchus xylophilus | Damage assessments |
| Xiao, Y et al., 2021 [25] | Wheat | Multi-Rotor | Hyperspectral | 4 | Pathogen Fusarium graminearum (Gibberella zeae) | Visual images |
| Guo, A et al., 2021 [26] | Wheat | Multi-Rotor | Hyperspectral | 4 | Puccinia striiformis | Disease monitoring |
| Castrignanò, A et al., 2020 [27] | Olive | Multi-Rotor | Multispectral | 6 | Xylella fastidiosa | Visual images |
| Francesconi, S et al., 2021 [28] | Wheat | Multi-Rotor | Hyperspectral | 4 | Pathogen Fusarium graminearum (Gibberella zeae) | Visual images |
| Saumya Yadav et al., 2021 [29] | Peach | Multi-Rotor | RGB, Multispectral | 4 | Xanthomonas campestris pv. pruni | Visual images |
| Görlich, F et al., 2021 [30] | Sugar beet | Multi-Rotor | Hyperspectral | 4 | Cercospora beticola | Damage assessments |
| Yu, Run et al., 2021 [31] | Pine | Multi-Rotor | Hyperspectral | 4 | Bursaphelenchus xylophilus | Visual images |
| Yue Shi et al., 2021 [32] | Potato | Multi-Rotor | Hyperspectral | 4 | Phytophthora infestans | Visual images |
| Walter Chivasa et al., 2021 [33] | Maize | Multi-Rotor | Multispectral | 6 | Geminivirus | Visual images |
| Anton Louise P. de Ocampo and Elmer P. Dadios, 2021 [34] | Solanum melongena | Multi-Rotor (Quadcopter) | RGB | 4 | Aphis gossypii | Vision-based monitoring |
| Gao, Junfeng et al., 2020 [35] | Potato | Multi-Rotor | Multispectral | 6 | Phytophthora infestans | Visual images, degree of severity |
| Deng, Xiaoling et al., 2020 [36] | Lemon | Multi-Rotor | Hyperspectral | 4 | Candidatus Liberibacter asiaticus | Visual inspection of images |
| Everton Castelão Tetila et al., 2020 [37] | Soya | Multi-Rotor (Quadcopter) | RGB | 4 | Defoliant pests such as insects and mollusks | Pest segmentation and classification |
| Vinícius Bitencourt Campos Calou et al., 2020 [38] | Banana | Multi-Rotor (Quadcopter) | RGB | 4 | Yellow sigatoka | Visual images, degree of severity |
| Del Campo-Sanchez et al., 2019 [39] | Grape | Multi-Rotor | RGB | 4 | Cotton jassid | Visual inspection of images |
| Abdulridha, Jaafar et al., 2019 [40] | Lemon | Multi-Rotor | Hyperspectral | 4 | Xanthomonas citri | Visual inspection of images |
| Vanegas et al., 2018 [41] | Grape | Multi-Rotor | RGB, Multispectral, Hyperspectral | 4 | Grape phylloxera | Ground traps and root digging, visual vigour assessments |
| Huang et al., 2018 [42] | Cotton | Multi-Rotor | Multispectral | 4 | Two-spotted spider mite | Damage assessments |
| Samuel Joalland et al., 2018 [43] | Sugar beet | Multi-Rotor | Hyperspectral | 4 | Beet cyst nematode | Visual images |
| Hunt et al., 2017 [44] | Potato | Multi-Rotor | Multispectral | 6 | Colorado potato beetle | Damage assessments |
| Stanton et al., 2017 [45] | Sorghum | Fixed Wing | Multispectral | 1 | Sugarcane aphid | Arthropod counts |
| Severtson et al., 2016a [46] | Canola | Multi-Rotor | Multispectral | 8 | Green peach aphid | Arthropod counts, soil and plant tissue nutrient analyses |
| Nebiker et al., 2016 [47] | Onion | Fixed Wing | Multispectral | 1 | Thrips | NA |
| Ishengoma et al., 2021 [19] | Wheat | Multi-Rotor | RGB, Multispectral | 4 | Fall armyworm | Outbreak reported by grower |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Velusamy, P.; Rajendran, S.; Mahendran, R.K.; Naseer, S.; Shafiq, M.; Choi, J.-G. Unmanned Aerial Vehicles (UAV) in Precision Agriculture: Applications and Challenges. Energies 2022, 15, 217. https://doi.org/10.3390/en15010217
