Advanced Laboratory Testing Methods Using Real-Time Simulation and Hardware-in-the-Loop Techniques: A Survey of Smart Grid International Research Facility Network Activities

Abstract

1. Introduction

- RTS is a simulation that is executed solely digitally, in real time, on an RTSM.

- Co-simulation is a test setup that combines at least two different software tools executed on one or more computational systems.

- HIL is a test setup that combines a real-time simulated system with a physical hardware component or system, where the interfaces between the physical and simulated systems enable closed-loop interactions.

- Controller HIL or CHIL is a HIL technique where the sensors and actuators of a physical controller are interfaced with a real-time simulation.

- Power HIL or PHIL is a HIL setup where at least one of its bi-directional interfaces exchanges power with real, physical power hardware through a power amplifier.

- Power System-in-the-Loop or PSIL is a novel HIL concept in which more than two domains interface with each other to perform holistic experiments, e.g., by connecting a virtual simulated system (where RTS and co-simulation occur), a controller component (where CHIL occurs), and a physical power system (where PHIL occurs).

1.1. Motivation of the Review

1.2. Review Structure

- Interfacing methods of PHIL, CHIL, and PSIL simulation;

- HIL testing of power system protection and control;

- HIL testing of smart grid/microgrid controllers, energy management systems, and power electronic converters;

- Co-simulation and RTS integration;

- Geographically distributed HIL and RTS;

- Industrial experiences and HIL in standardized testing.

2. Interfacing Methods of PHIL, CHIL, and PSIL Simulation

2.1. Introduction

- Interface Algorithms for Fast Dynamics: The accuracy and stability of several IAs have been analyzed in detail [1,2,7,8,9,10,11,12], including the ideal transformer model (ITM), partial circuit duplication (PCD), transmission line model (TLM), damping impedance method (DIM), time-variant first-order approximation (TFA), and advanced ideal transformer model (AITM). Of these, the ITM and DIM are the most widely used techniques for connecting power equipment to an RTS in PHIL setups.

- PHIL Integration at Slow Time Scales: For application areas where the dynamics of interest are slow (RMS values calculated over tens to hundreds of cycles), the technical requirements of the HIL integration can be relaxed, which reduces hardware cost and improves scalability. Examples of system behaviors and relevant functions at these time scales are power and energy management, active and reactive power balancing, demand-side management, voltage control strategies, and determination of the proximity to operating limits. Using the PSIL concept, this review presents two methods: (a) quasi-dynamic PHIL in Section 2.2.3 and (b) quasi-static PHIL in Section 2.2.4.
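To illustrate why interface stability matters for the ITM mentioned above, the following Python sketch iterates a purely resistive, voltage-type ITM loop with a one-sample delay. It assumes a simulated source `v_source` behind a resistance `r_sim` and a hardware load `r_hw`; the function names and values are hypothetical, and real setups involve frequency-dependent impedances, amplifier dynamics, and longer delays. In this simplified case the loop converges only when |r_sim / r_hw| < 1, which mirrors the commonly cited ITM stability criterion that the simulated-side impedance be smaller than the hardware-side impedance.

```python
def itm_loop(v_source, r_sim, r_hw, steps=50):
    """Iterate a purely resistive, voltage-type ITM loop with a one-sample delay.

    Simulated side (RTSM): ideal source v_source behind r_sim, plus a
    controlled current source that injects the hardware current measured
    in the previous sample.
    Hardware side: the power amplifier imposes the simulated interface
    voltage on the load r_hw, whose current is then measured.
    Returns the interface voltage at every sample.
    """
    i_meas = 0.0                      # measured hardware current (one sample old)
    voltages = []
    for _ in range(steps):
        v_interface = v_source - r_sim * i_meas  # solved in the RTSM
        i_meas = v_interface / r_hw              # measured on the hardware side
        voltages.append(v_interface)
    return voltages


def itm_is_stable(r_sim, r_hw):
    """The iteration above has loop gain -r_sim / r_hw; it converges iff |gain| < 1."""
    return abs(r_sim / r_hw) < 1.0


if __name__ == "__main__":
    print(itm_loop(10.0, 1.0, 4.0)[-1])   # settles near 8.0 (voltage divider)
    print(itm_is_stable(4.0, 1.0))        # False: the same loop diverges
```

With `r_sim = 1` and `r_hw = 4`, the interface voltage settles at the voltage-divider value of 8 V; swapping the two resistances makes the same loop diverge, which is one motivation for alternatives such as the DIM.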

2.2. Reported Experiences and Activities from SIRFN ALTM Members

2.2.1. Power Amplifier Characterization for RTS

2.2.2. Stability and Accuracy Comparison for Different Interfacing Methods

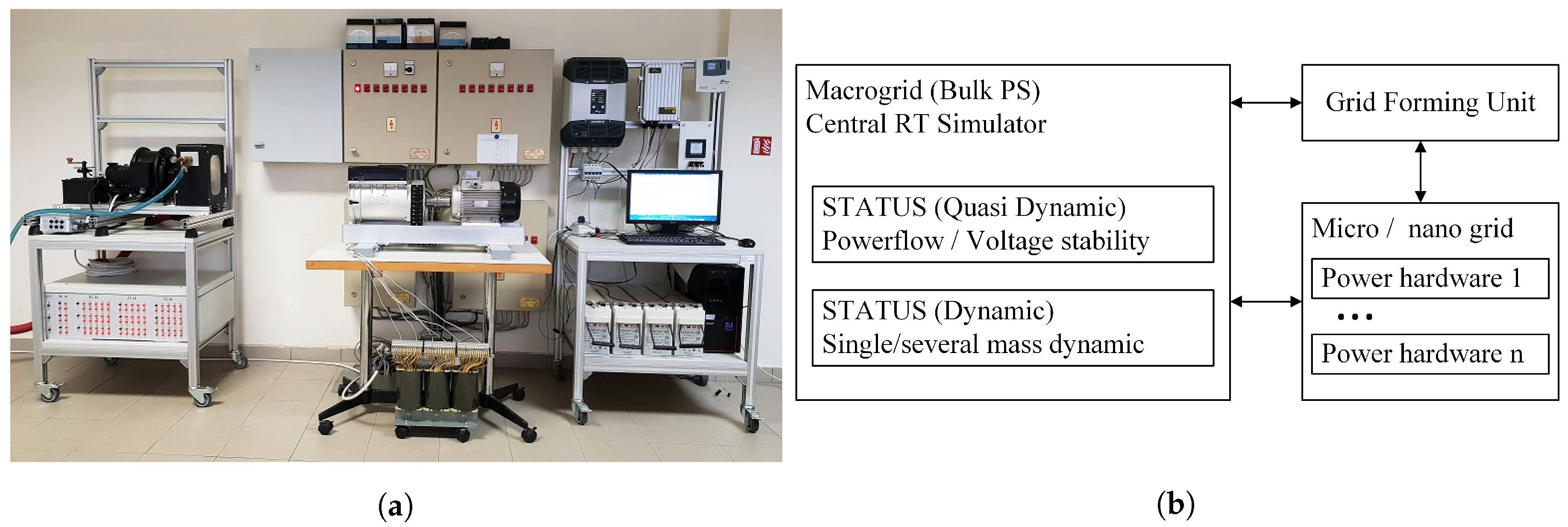

2.2.3. Quasi-Dynamic PHIL

2.2.4. Quasi-Static PHIL

- an RTSM that simulates the relevant high- and/or medium-voltage network elements and an interfacing component;

- a physical interfacing device such as a controllable grid-forming converter which can set frequency and voltage;

- a sufficiently fast RMS measurement at the interface device or its local bus.

- the RTSM sends RMS voltage and frequency setpoints;

- the interface device (the grid-forming component of the lab) realizes these setpoints with some dynamic delay;

- the RMS P and Q values (or, equivalently, the current magnitude I and phase angle φ) are measured at the interface component and transmitted to the RTSM.
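The three-step exchange above can be sketched as a simple per-unit loop in Python. This is a minimal illustration under stated assumptions, not a model of any SIRFN laboratory: the RTSM side is reduced to a hypothetical linearized voltage drop over an equivalent impedance plus a frequency droop, the grid-forming interface device is approximated as a first-order lag (`alpha` is the exchange period divided by its time constant), and the lab hardware is a constant-impedance load. All parameter values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class QuasiStaticPHIL:
    """Hypothetical per-unit model of the quasi-static PHIL exchange loop."""
    r_grid: float = 0.05   # assumed equivalent resistance seen by the RTSM (p.u.)
    x_grid: float = 0.10   # assumed equivalent reactance (p.u.)
    droop: float = 0.02    # assumed frequency droop (p.u. frequency per p.u. power)
    alpha: float = 0.25    # exchange period / time constant of the device lag
    p0: float = 1.0        # lab load active power at 1 p.u. voltage
    q0: float = 0.5        # lab load reactive power at 1 p.u. voltage

    def run(self, steps: int = 200):
        v_dev, f_dev = 1.0, 1.0      # RMS voltage and frequency at the interface
        p_meas, q_meas = 0.0, 0.0    # last RMS P, Q returned to the RTSM
        for _ in range(steps):
            # 1. RTSM: compute RMS setpoints from the last measured P and Q
            v_set = 1.0 - (self.r_grid * p_meas + self.x_grid * q_meas)
            f_set = 1.0 - self.droop * p_meas
            # 2. Grid-forming interface device tracks the setpoints with a lag
            v_dev += self.alpha * (v_set - v_dev)
            f_dev += self.alpha * (f_set - f_dev)
            # 3. Measure RMS P and Q at the interface (constant-impedance load)
            p_meas = self.p0 * v_dev ** 2
            q_meas = self.q0 * v_dev ** 2
        return v_dev, f_dev, p_meas, q_meas


if __name__ == "__main__":
    print(QuasiStaticPHIL().run())
```

In this sketch the loop settles at the operating point where the RTSM voltage setpoint and the load's voltage-dependent consumption are consistent; increasing `x_grid` or the load size moves that point lower.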

3. HIL Testing of Power System Protection and Control

3.1. Introduction

3.2. Reported Experiences and Activities from SIRFN ALTM Members

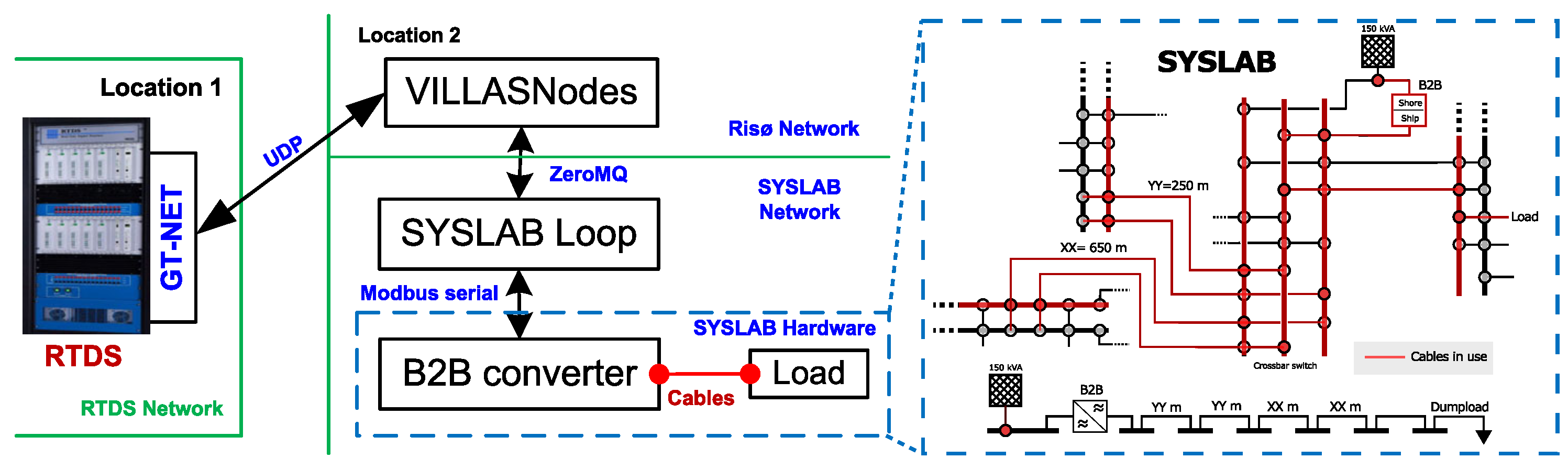

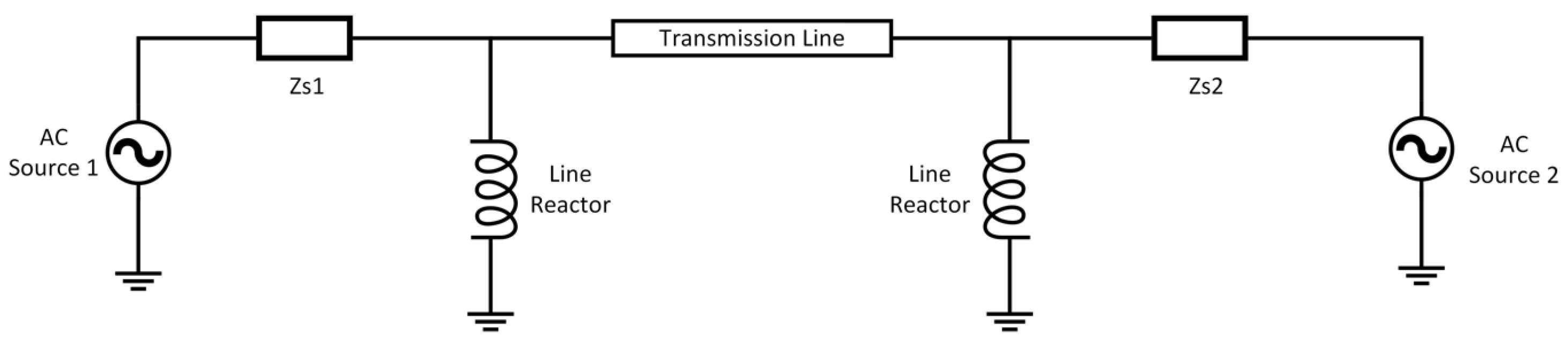

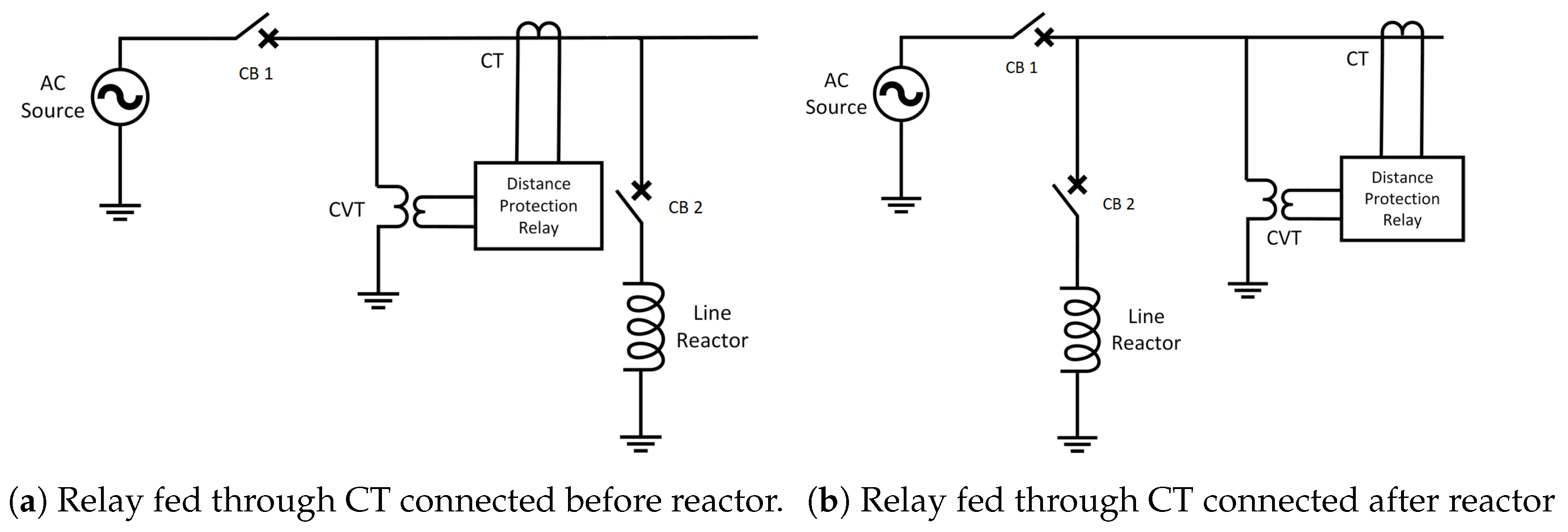

3.2.1. HIL Validation of Fault Locator Accuracy without Line Reactor Current in Distance Protection Scheme

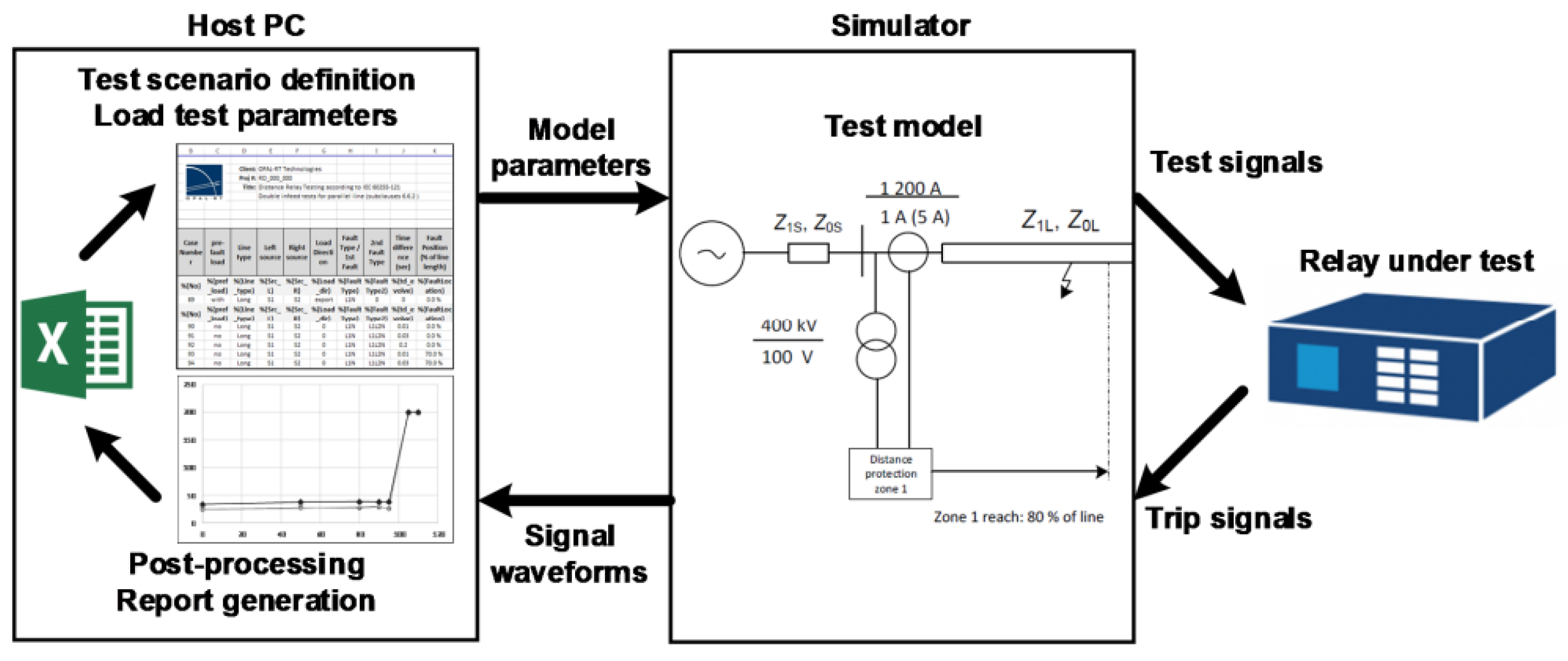

3.2.2. Distance Protection Relay Type Testing Framework

3.2.3. Adaptive Protection with HIL

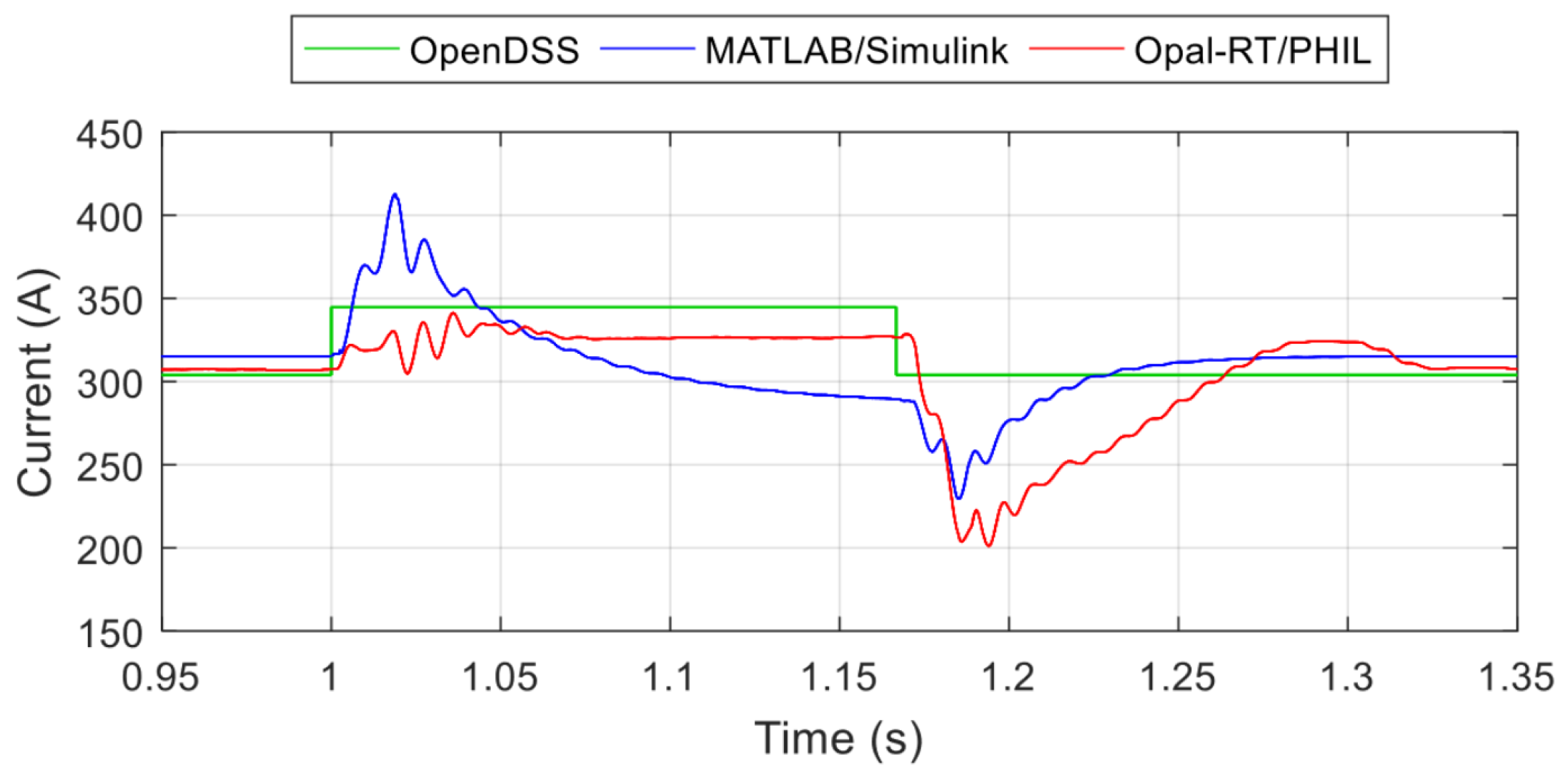

3.2.4. Fault Modeling and Validation Between Simulation Tools

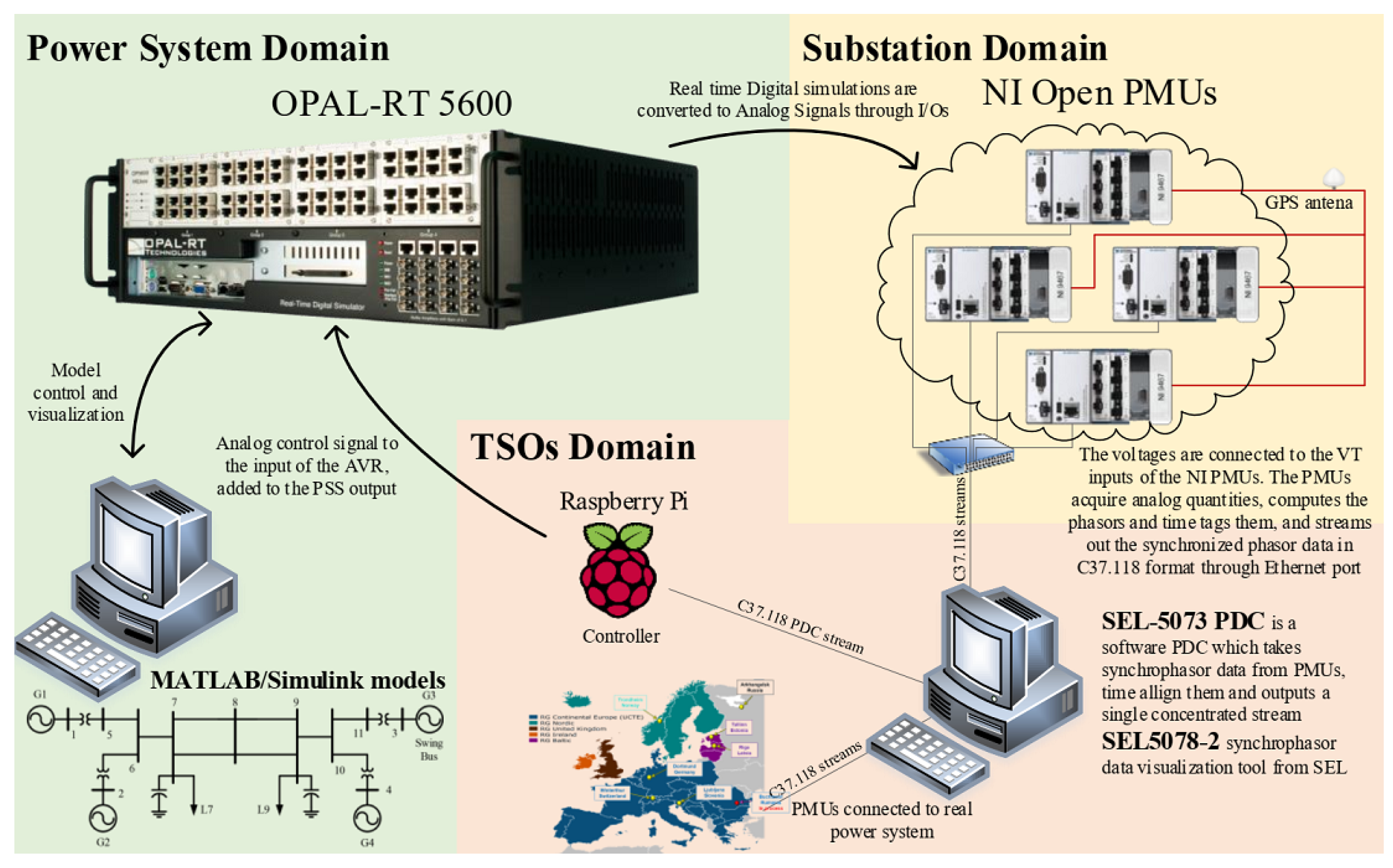

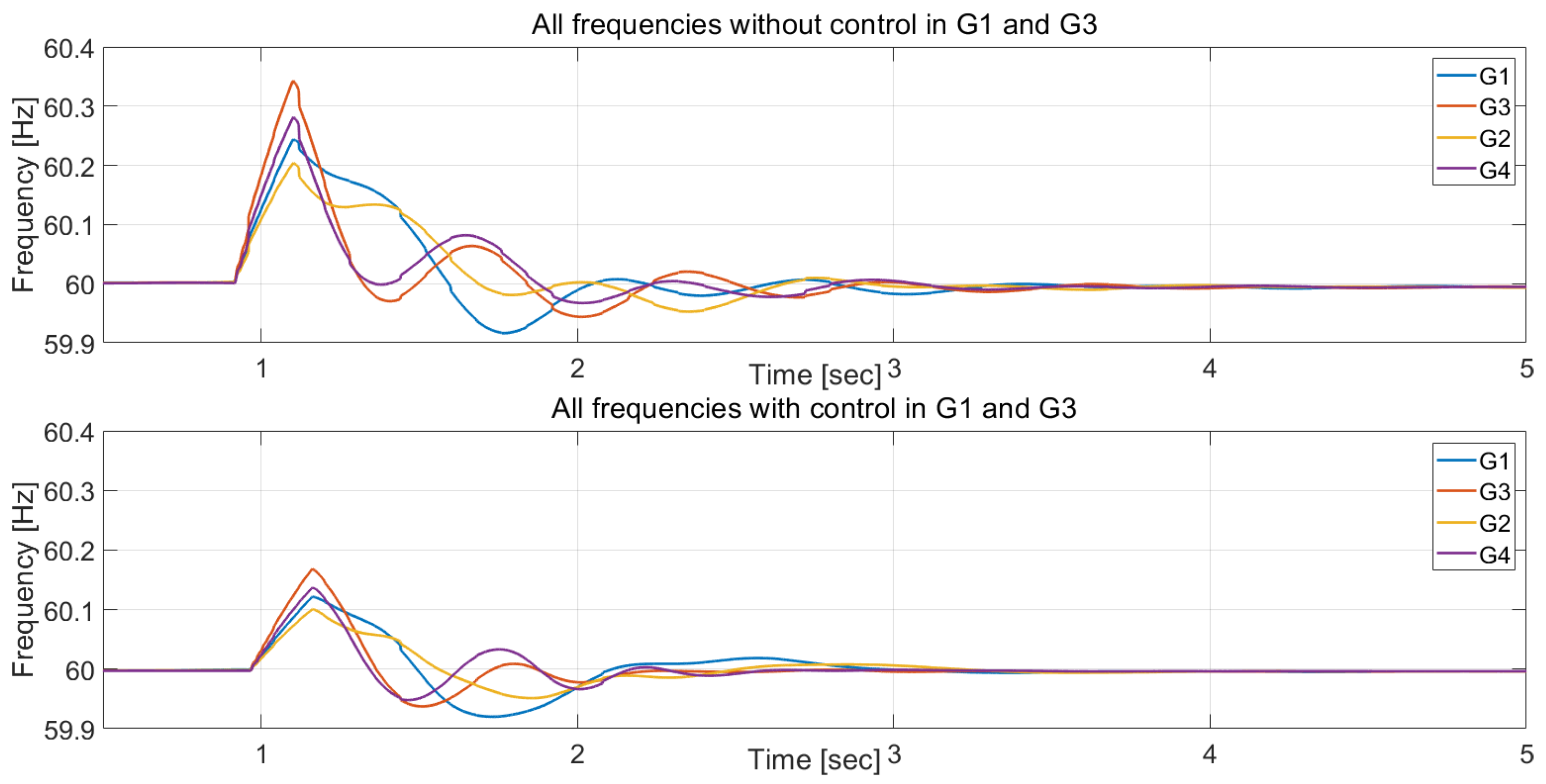

3.2.5. Wide Area Controller HIL Testing for Power Systems Oscillation Damping

4. HIL Testing of Smart Grid/Microgrid Controllers, Energy Management Systems, and Power Electronic Converters

4.1. Introduction

- Microgrid prototyping and validation: Testing AC, DC, and hybrid MGs requires a wide variety of techniques and methodologies, including benchmarks and prototyping platforms [32,33,34,35], testing chains and procedures for centralized and decentralized controllers [36,37,38,39,40,41], and rapid control prototyping (RCP) for quickly developing new control strategies in real hardware environments [42,43,44].

- Microgrid control strategies: Advanced testing methods help in establishing control strategies for loads and generators, reconfiguration equipment, and isolation and re-synchronization actions [28].

- Development of inverter and power electronics functions: DER functions and device interoperability support large, traditional power systems and microgrids alike. These technologies can be evaluated prior to implementation using CHIL and RT simulation techniques [45,46,47,48,49,50,51,52]. The same testing approach can be used for large-scale power electronics integration studies [53,54].
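As an example of a grid-support function that is commonly evaluated with CHIL, the Python sketch below implements a piecewise-linear volt-var characteristic and a pass/fail check of a measured reactive-power response. The curve points, tolerance, and function names are hypothetical illustrations, not values taken from a specific standard or from the experiments surveyed here.

```python
from bisect import bisect_right

# Hypothetical volt-var curve points: (voltage in p.u., reactive power in p.u.
# of rated apparent power). Positive Q = injection, negative Q = absorption.
CURVE = [(0.92, 0.44), (0.98, 0.0), (1.02, 0.0), (1.08, -0.44)]


def volt_var(v_pu, curve=CURVE):
    """Return the reactive-power setpoint for v_pu by linear interpolation,
    holding the end values constant outside the curve's voltage range."""
    volts = [v for v, _ in curve]
    if v_pu <= volts[0]:
        return curve[0][1]
    if v_pu >= volts[-1]:
        return curve[-1][1]
    k = bisect_right(volts, v_pu)        # volts[k-1] <= v_pu < volts[k]
    (v0, q0), (v1, q1) = curve[k - 1], curve[k]
    return q0 + (q1 - q0) * (v_pu - v0) / (v1 - v0)


def within_tolerance(v_pu, q_measured, tol=0.05, curve=CURVE):
    """Hypothetical pass/fail check: is the measured Q within +/- tol of the curve?"""
    return abs(q_measured - volt_var(v_pu, curve)) <= tol


if __name__ == "__main__":
    for v in (0.90, 0.95, 1.00, 1.05, 1.10):
        print(v, volt_var(v))
```

In a CHIL test, a script would step the simulated grid voltage, wait for the inverter controller's response to settle, and call `within_tolerance` with the measured Q at each step.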

4.2. Reported Experiences and Activities from SIRFN ALTM Members

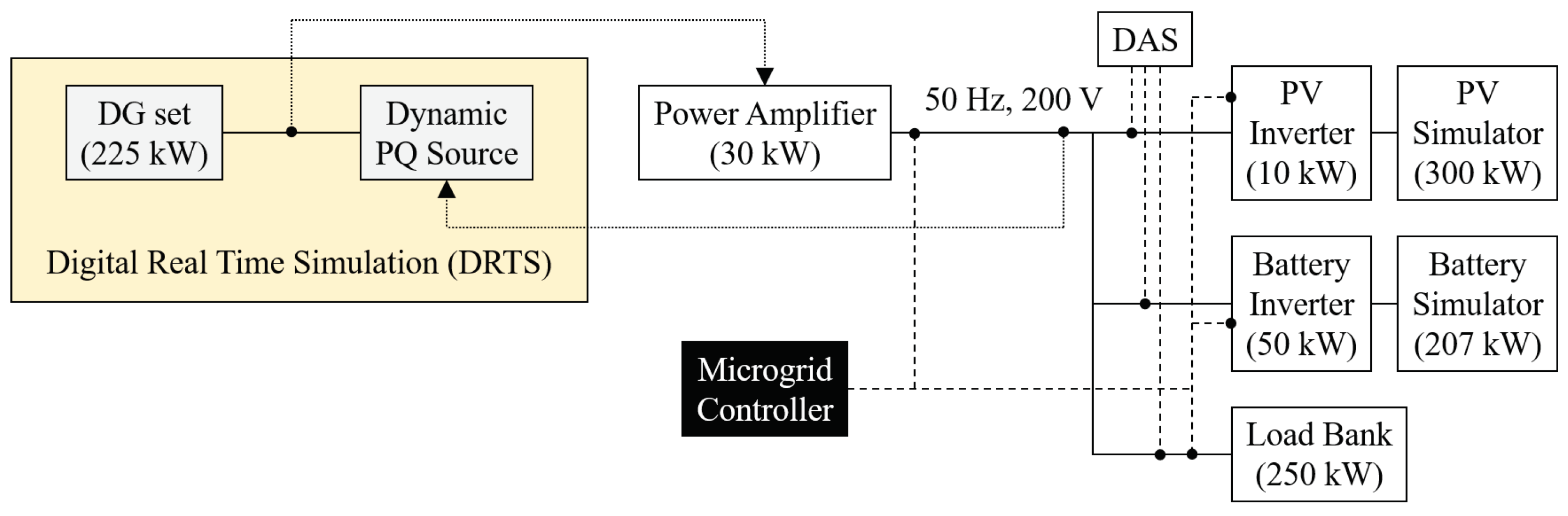

4.2.1. Integrated PHIL and Laboratory Testing for Microgrid Controller

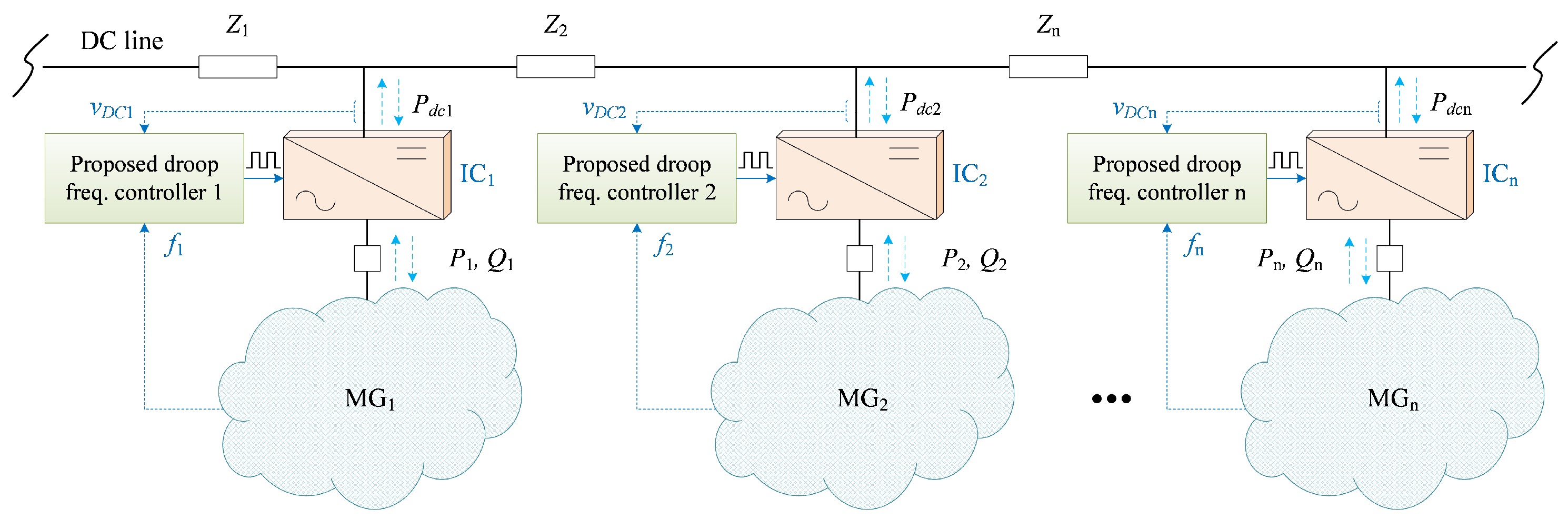

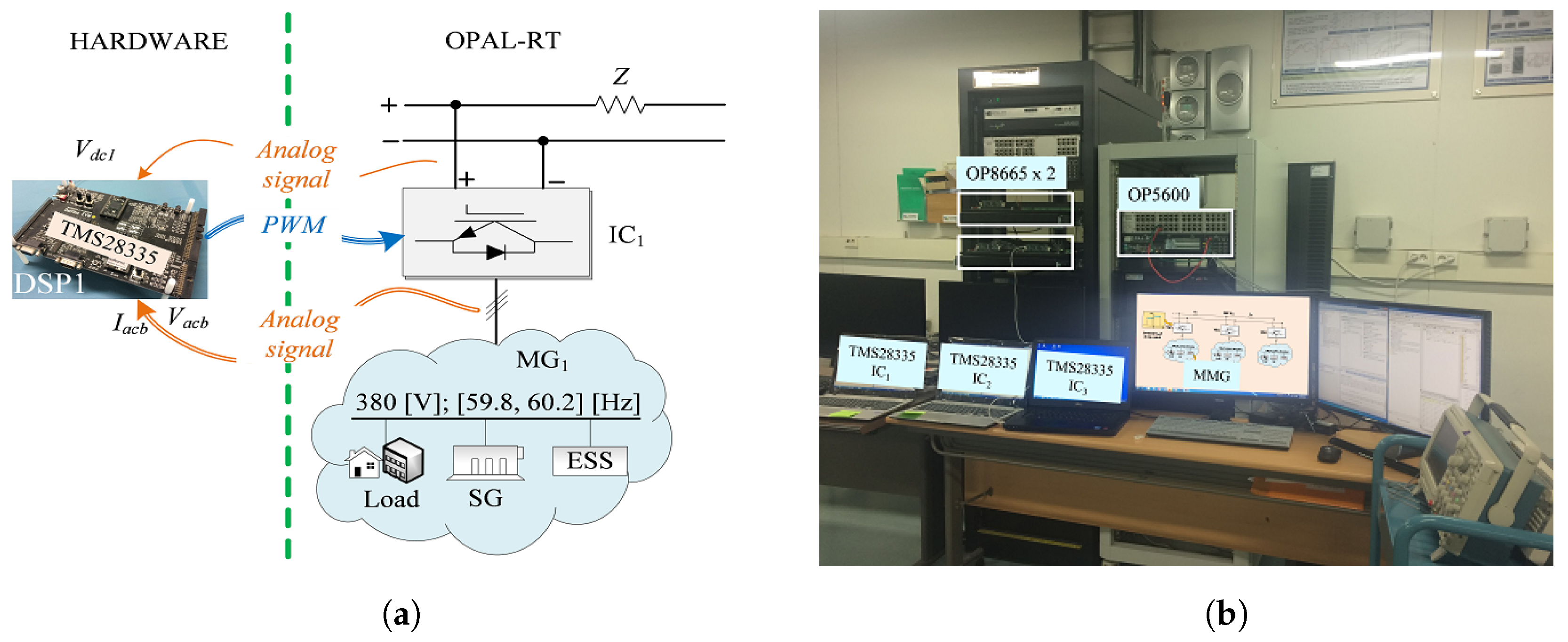

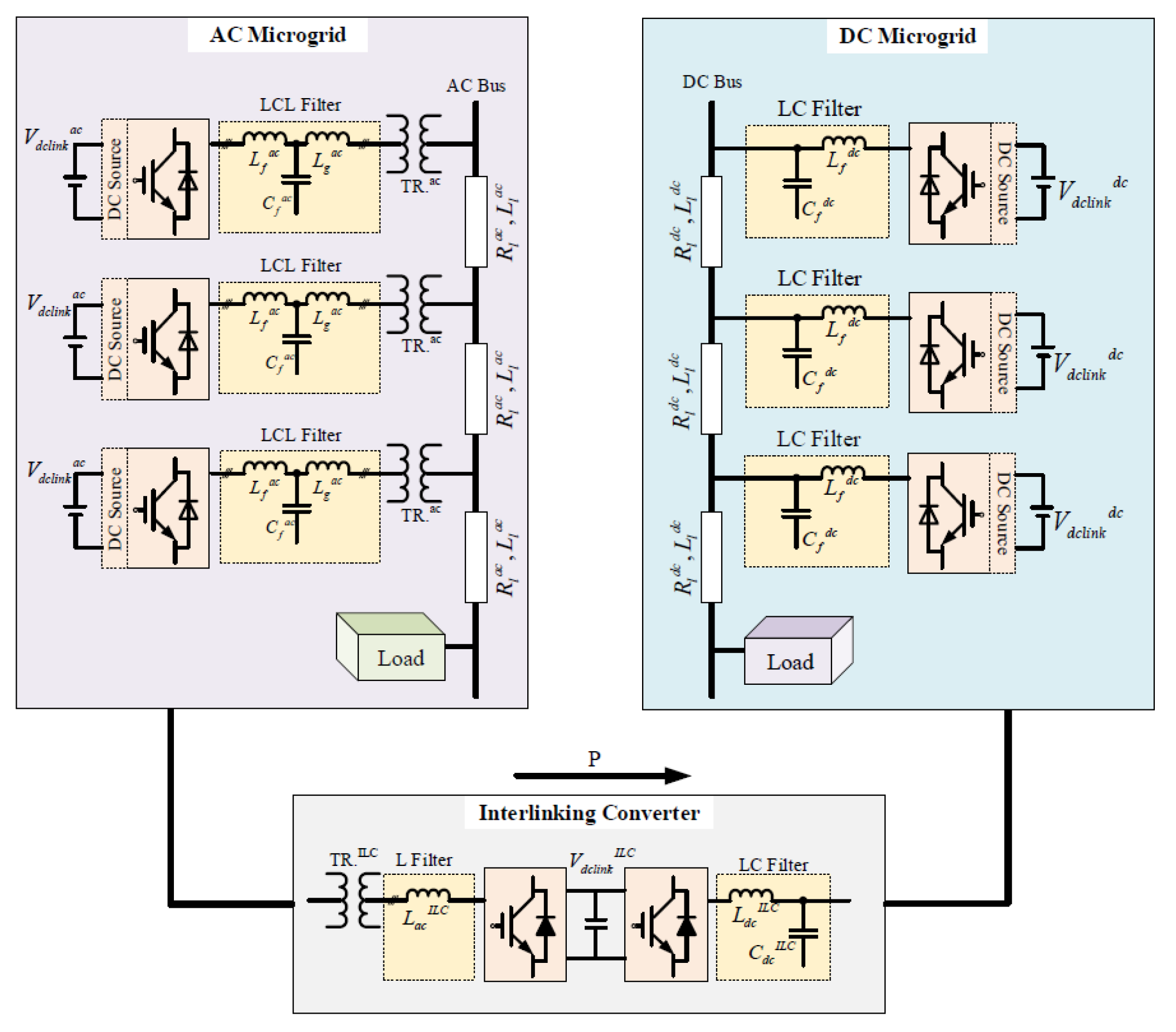

4.2.2. Development of a Droop Frequency Control of Stand-Alone Multi-Microgrid System with HIL

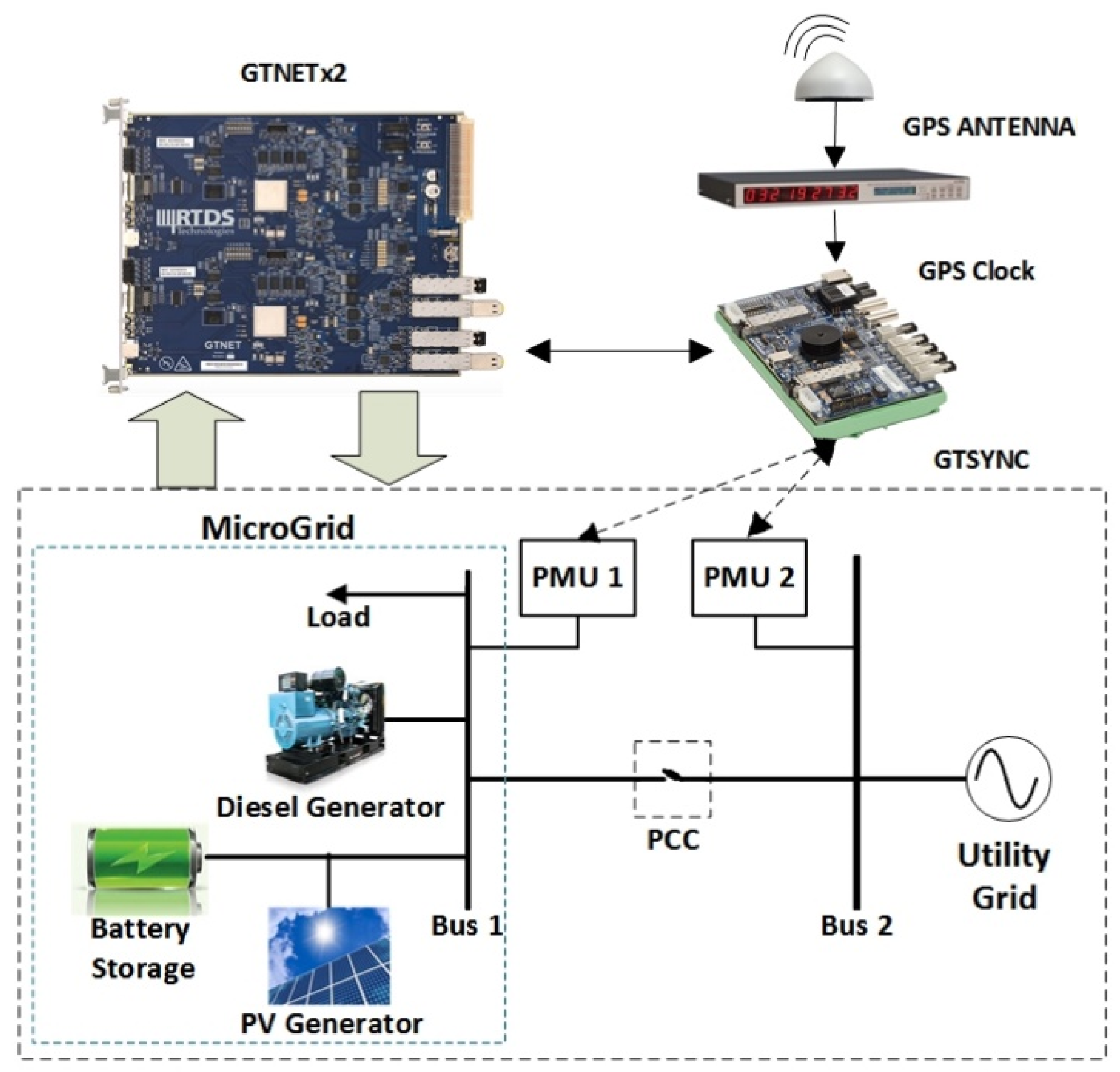

4.2.3. Microgrid Re-Synchronization with PMU Measurements

4.2.4. Distributed Coordination Control in Hybrid AC/DC Microgrid with RCP

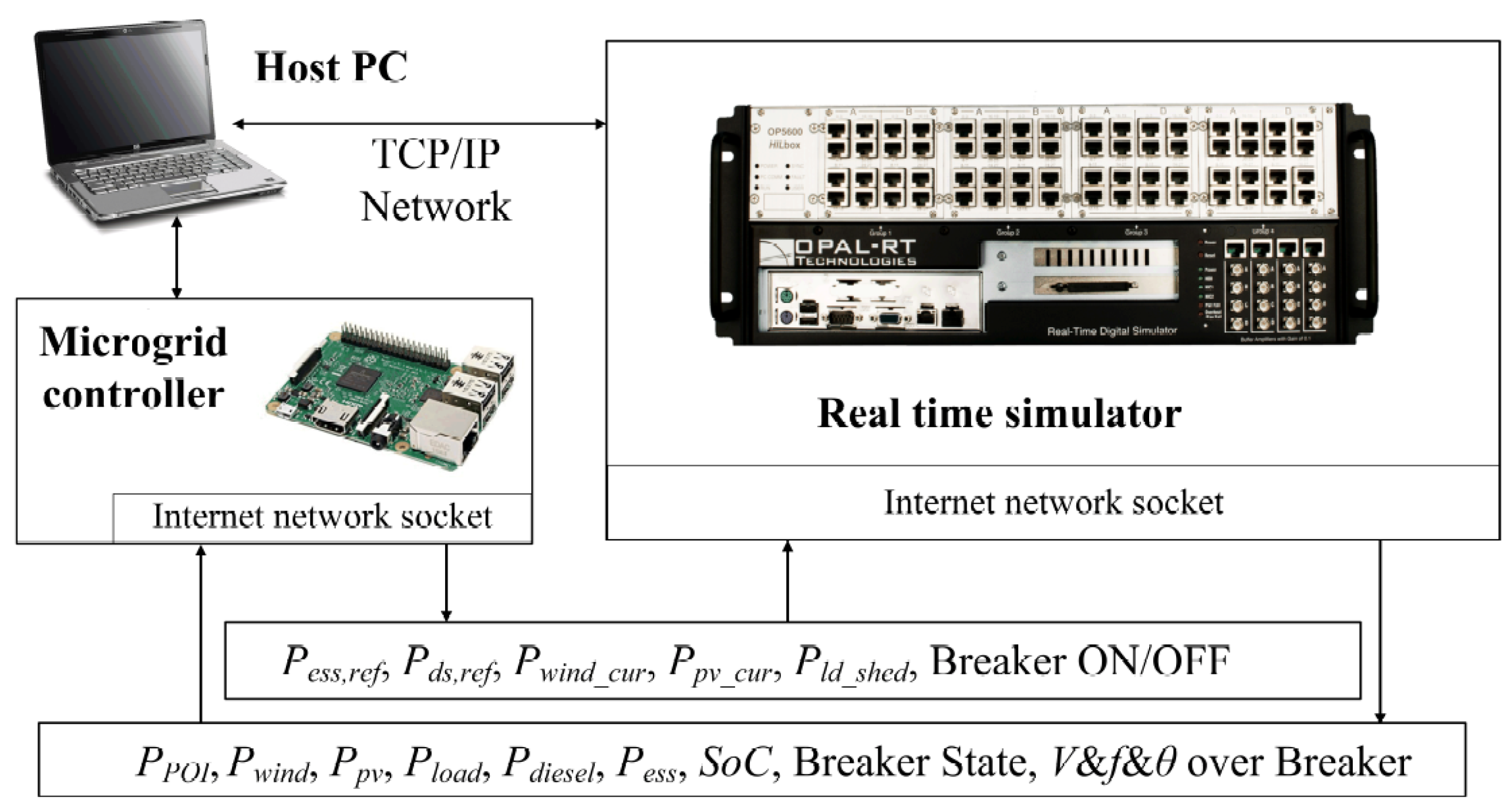

4.2.5. Design and Validation of a Rule-Based Microgrid Controller

4.2.6. Decentralized Microgrid Control Systems

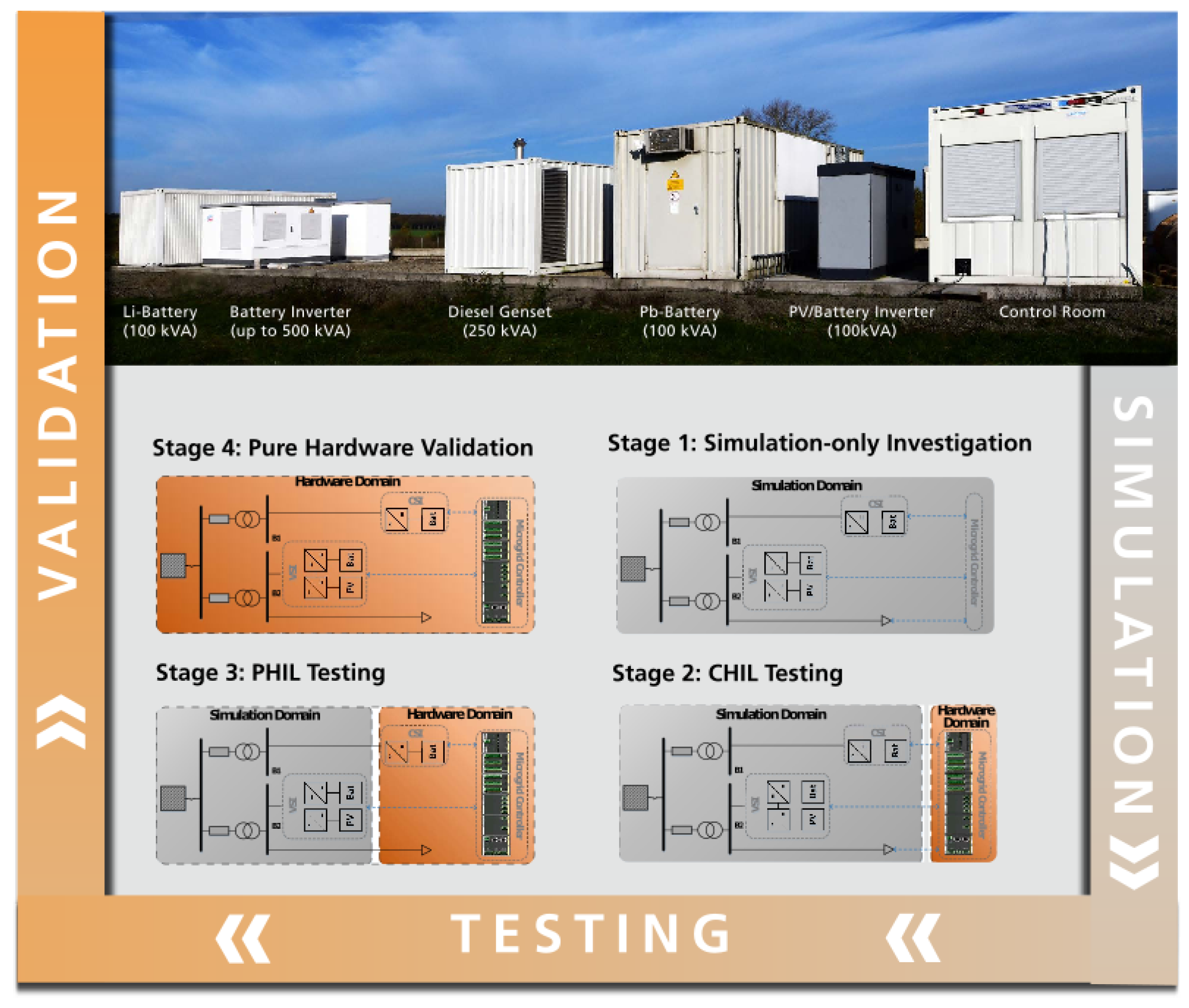

4.2.7. Microgrid Controller Development with an Advanced Testing Chain Methodology

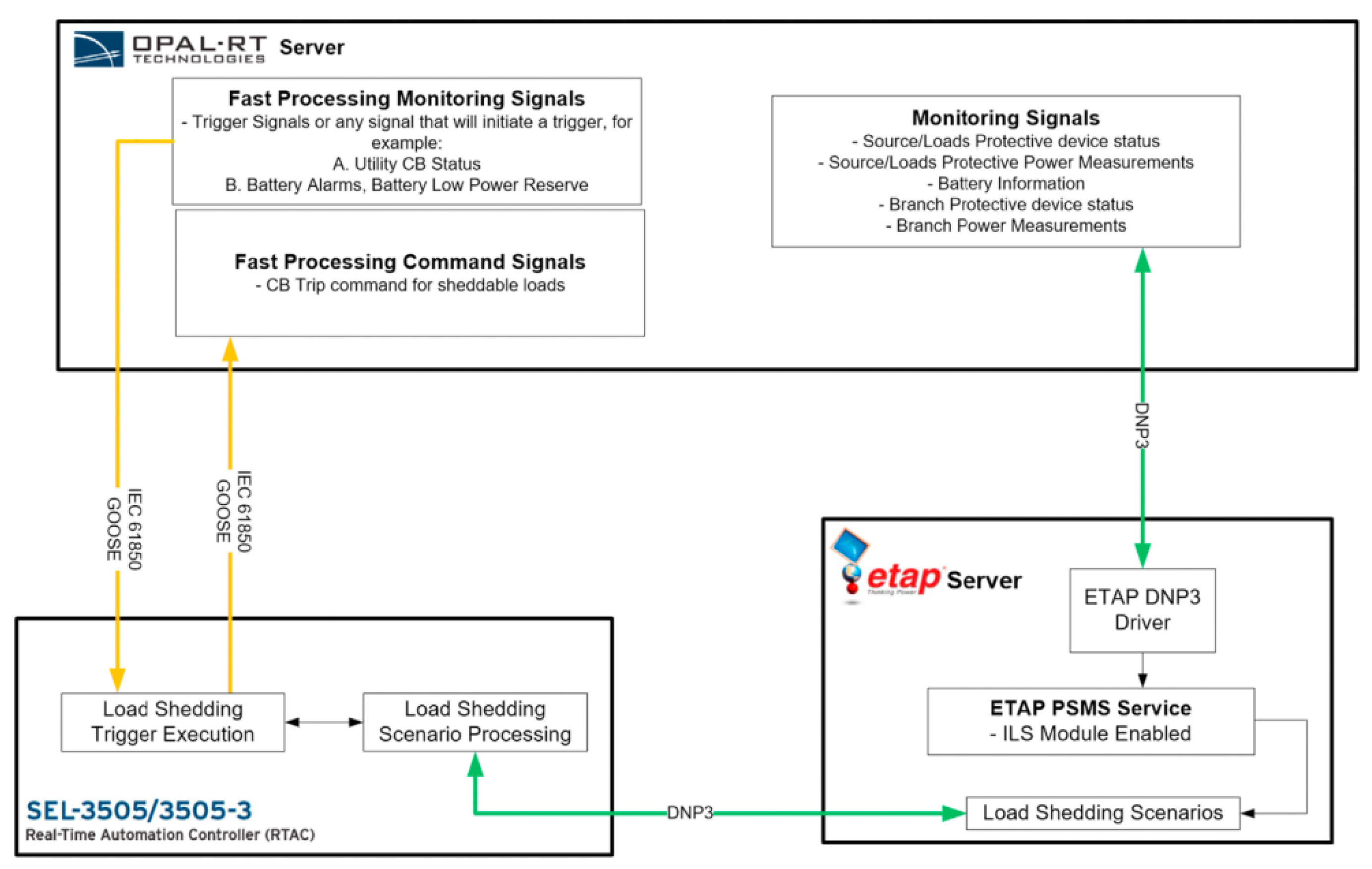

4.2.8. Generic Microgrid Controller Development, Testing, and Validation

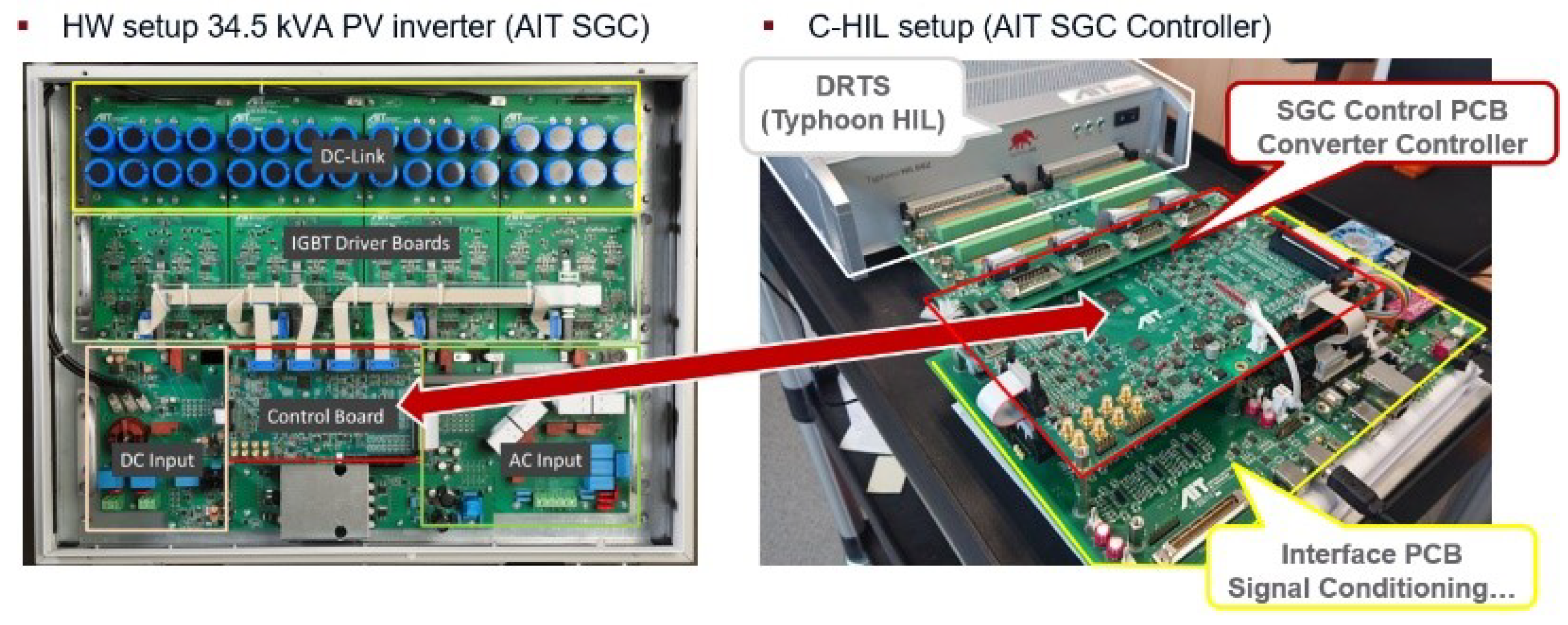

4.2.9. CHIL for Grid-Support Functions of Inverters

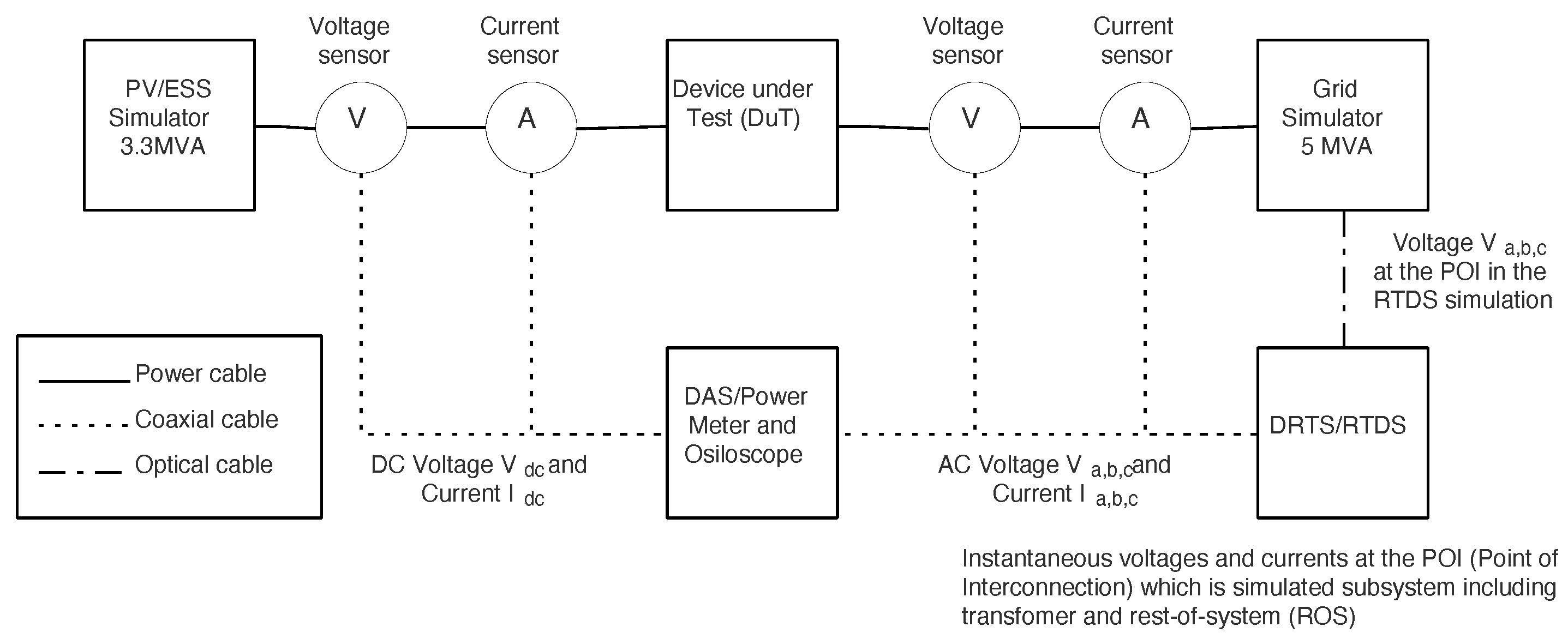

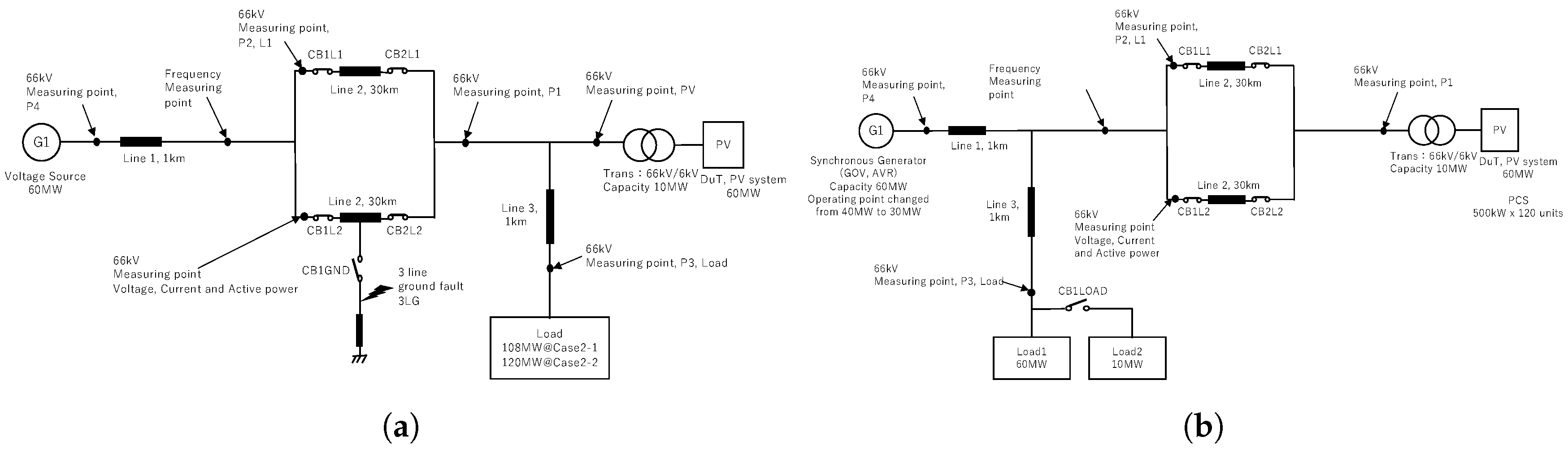

4.2.10. PHIL Smart Inverter Testing with Megawatt Scale Grid Simulator

4.2.11. CHIL for Validation of Unintentional Islanding

5. Co-Simulation and RTS Integration

5.1. Introduction

5.2. Reported Experiences and Activities from SIRFN ALTM Members

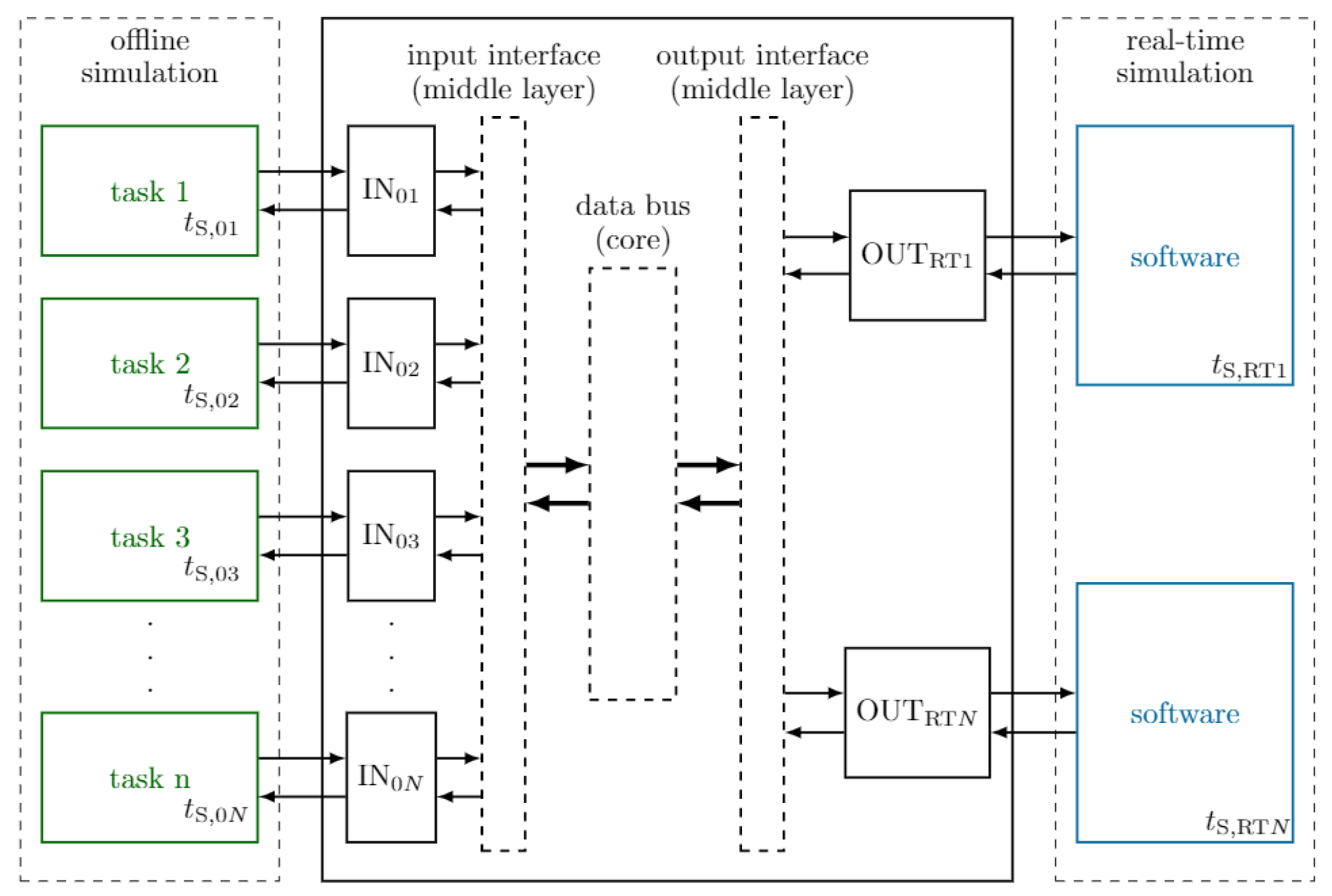

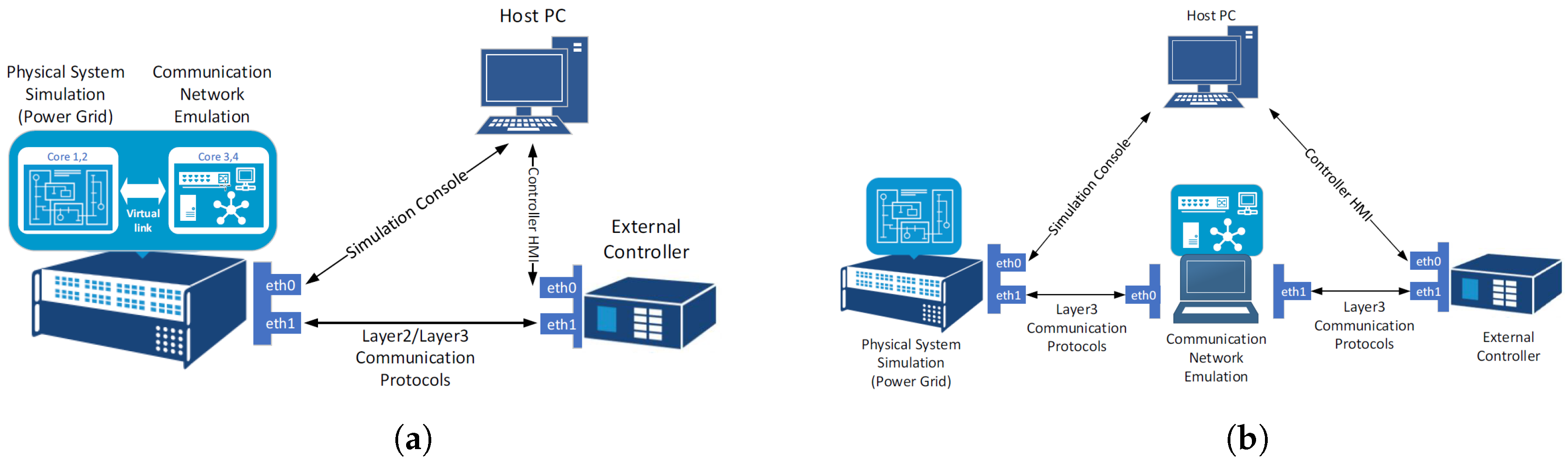

5.2.1. Asynchronous Integration of RTSM with Co-Simulation Platforms

- Lablink: Simulation Message Bus (SMB) based Implementation

- OpSim: Representational State Transfer (REST) based Implementation
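Both implementations couple a fixed-step RTSM with a co-simulation platform that does not run in lockstep with it. One common pattern for such asynchronous coupling, sketched below in Python, is a "latest value" mailbox: the communication side overwrites a shared value whenever a message arrives, and the real-time loop reads whatever value is current at each step without blocking (a zero-order hold). This is a generic, hypothetical illustration; the class and function names are invented here, and it does not reproduce the Lablink or OpSim APIs.

```python
import threading


class LatestValueMailbox:
    """Thread-safe holder for the most recent value from the co-simulation side.

    Hypothetical helper: publish() would be called by a communication thread
    (e.g., a message-bus subscriber or a REST request handler); read() is
    called once per fixed RT step and never waits for new data.
    """

    def __init__(self, initial):
        self._lock = threading.Lock()
        self._value = initial
        self._seq = 0  # counts updates so the RT side can detect stale data

    def publish(self, value):
        with self._lock:
            self._value = value
            self._seq += 1

    def read(self):
        with self._lock:
            return self._value, self._seq


def run_rt_steps(mailbox, n_steps, arrivals=None):
    """Simulate n fixed RT steps. `arrivals` maps a step index to a value that
    the co-simulation publishes just before that step (a deterministic stand-in
    for asynchronous messages). Returns the value held at each step."""
    arrivals = arrivals or {}
    held = []
    for k in range(n_steps):
        if k in arrivals:
            mailbox.publish(arrivals[k])
        value, _seq = mailbox.read()  # zero-order hold of the latest value
        held.append(value)
    return held


if __name__ == "__main__":
    mb = LatestValueMailbox(initial=0.0)
    print(run_rt_steps(mb, 5, arrivals={1: 10.0, 3: 20.0}))
    # [0.0, 10.0, 10.0, 20.0, 20.0]
```

The design choice is that the RT step never waits on the network; the sequence counter lets the RTSM side flag data that has not been refreshed for too many steps.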

5.2.2. Co-Simulation of Cyber–Physical Systems

5.2.3. SCEPTRE: Suite of Tools Providing an ICS Co-Simulation Environment

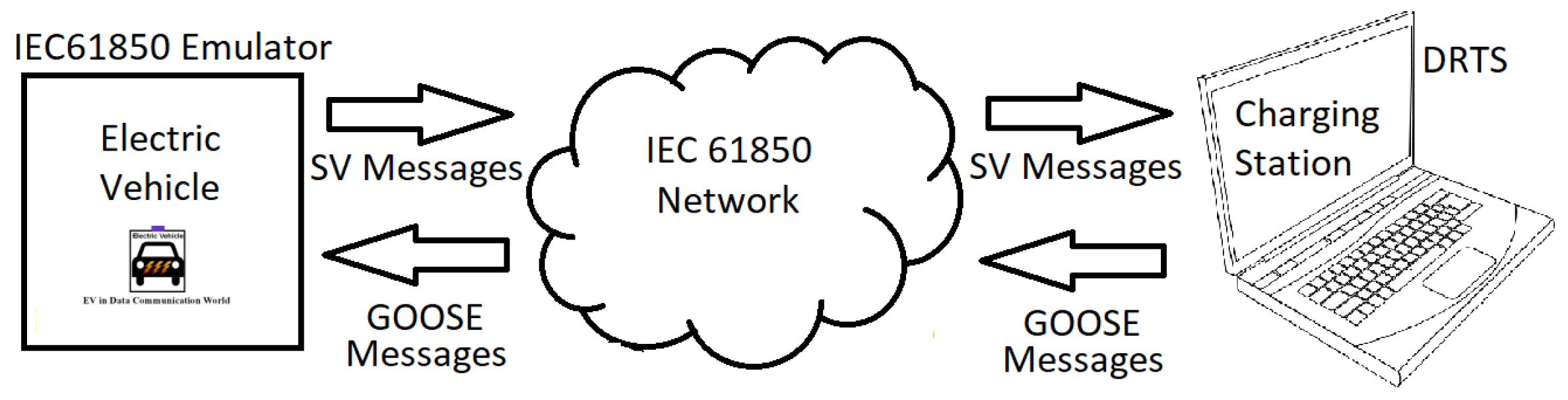

5.2.4. Electric Vehicle/Charging Station Integration Testing

6. Geographically Distributed HIL and RTS

6.1. Introduction

- Geographically distributed RTS: in this group, only the RTSMs at both ends are coupled together. Typically, this is used to handle large, complex simulations that would require more RT resources than are available at a single test site. This has been the most common type of GD simulation reported in the literature [67,68,69,70,71].

- Geographically distributed HIL experiments: this group involves configurations where hardware is coupled remotely to an RTS. This configuration makes it possible to test hardware equipment (controllers or power components) in a system environment even when no RTS is available on the premises. Involving hardware in this group requires careful consideration of the interfacing method and communications [72,73,74,75,76], as discussed in Section 2.

6.1.1. Interfacing GD Simulations

6.1.2. Communications

6.2. Reported Experiences and Activities from SIRFN ALTM Members

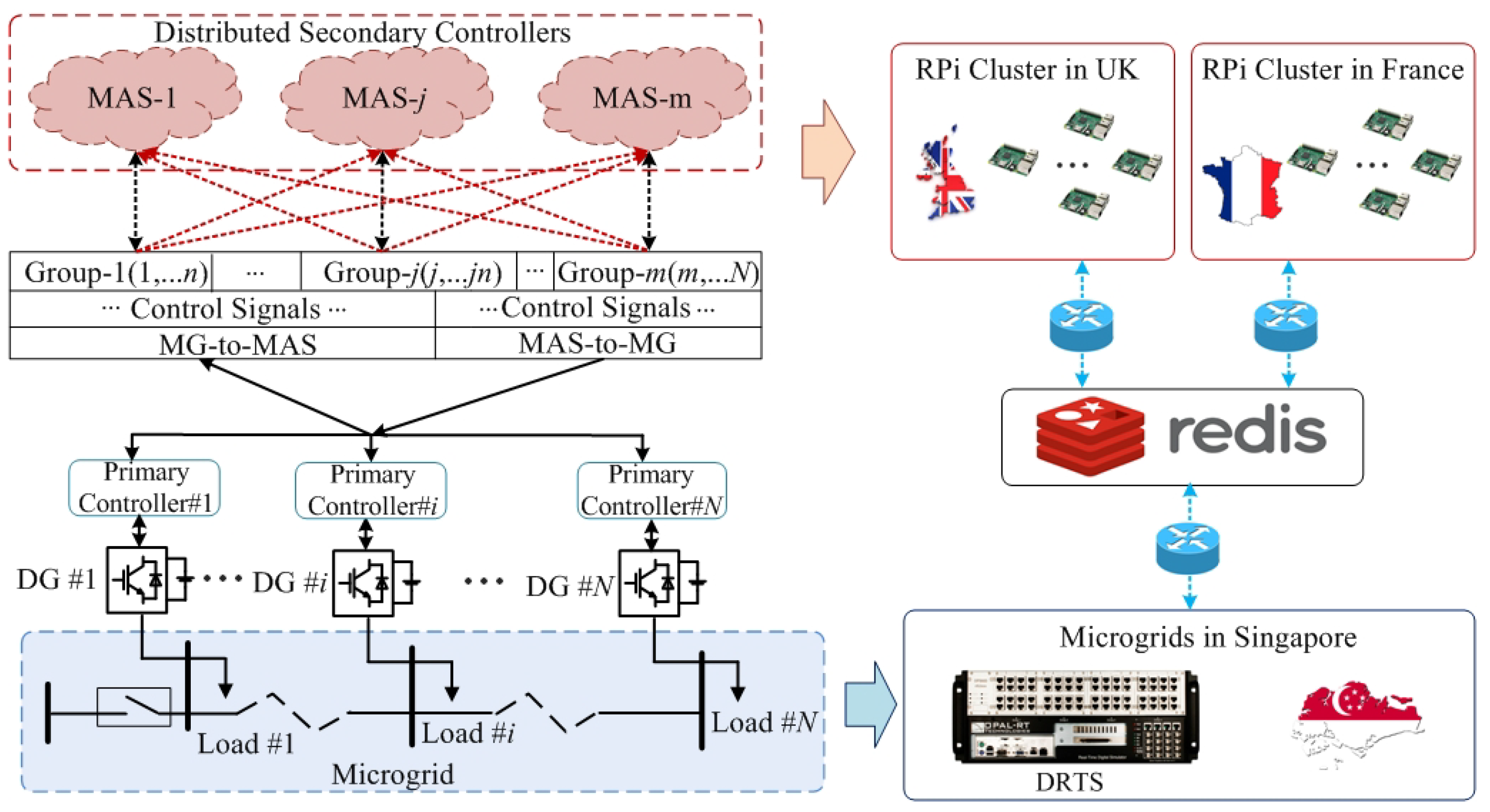

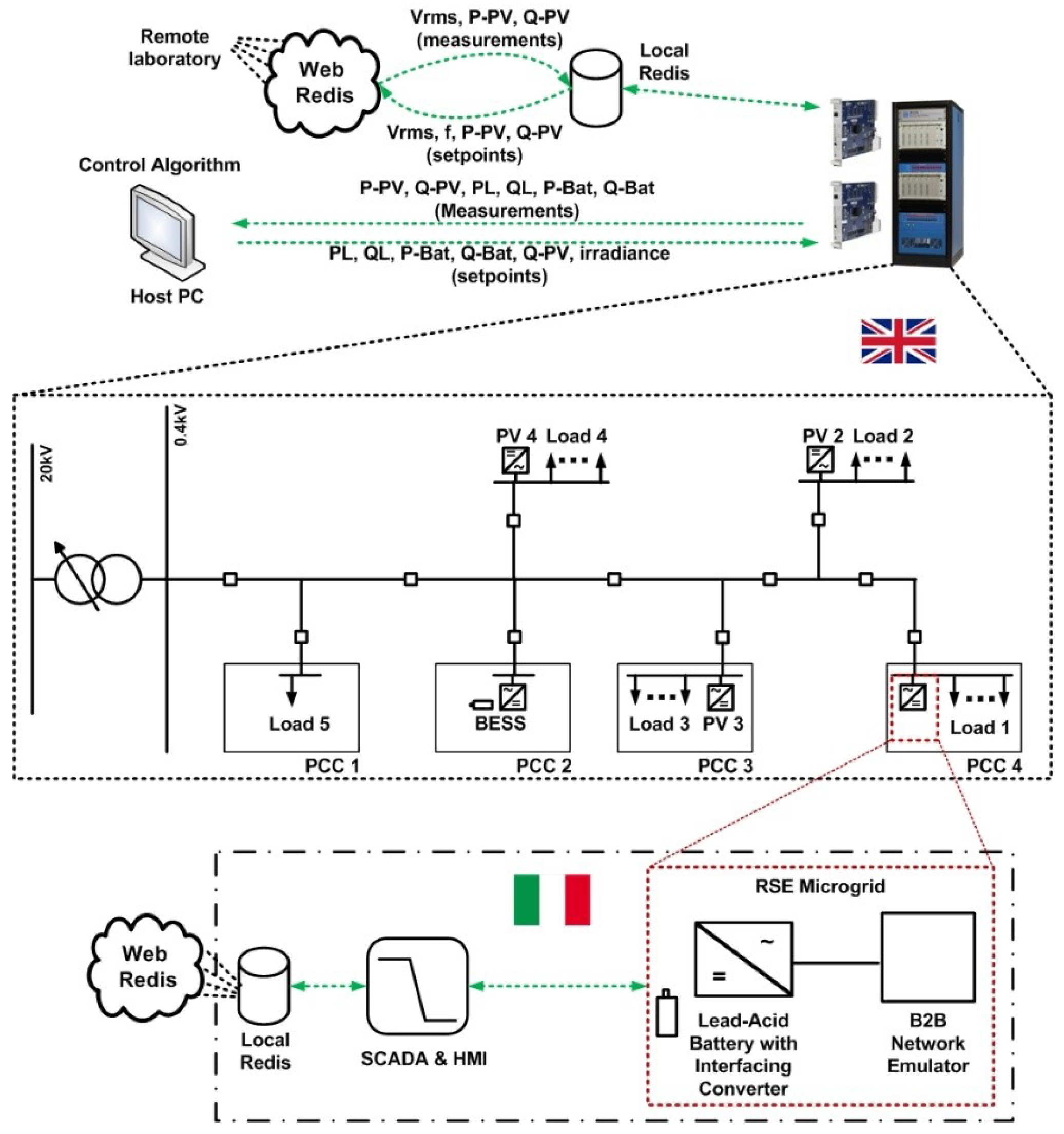

6.2.1. Geographically Distributed CHIL for Advanced Validation of a Distributed Control Algorithm

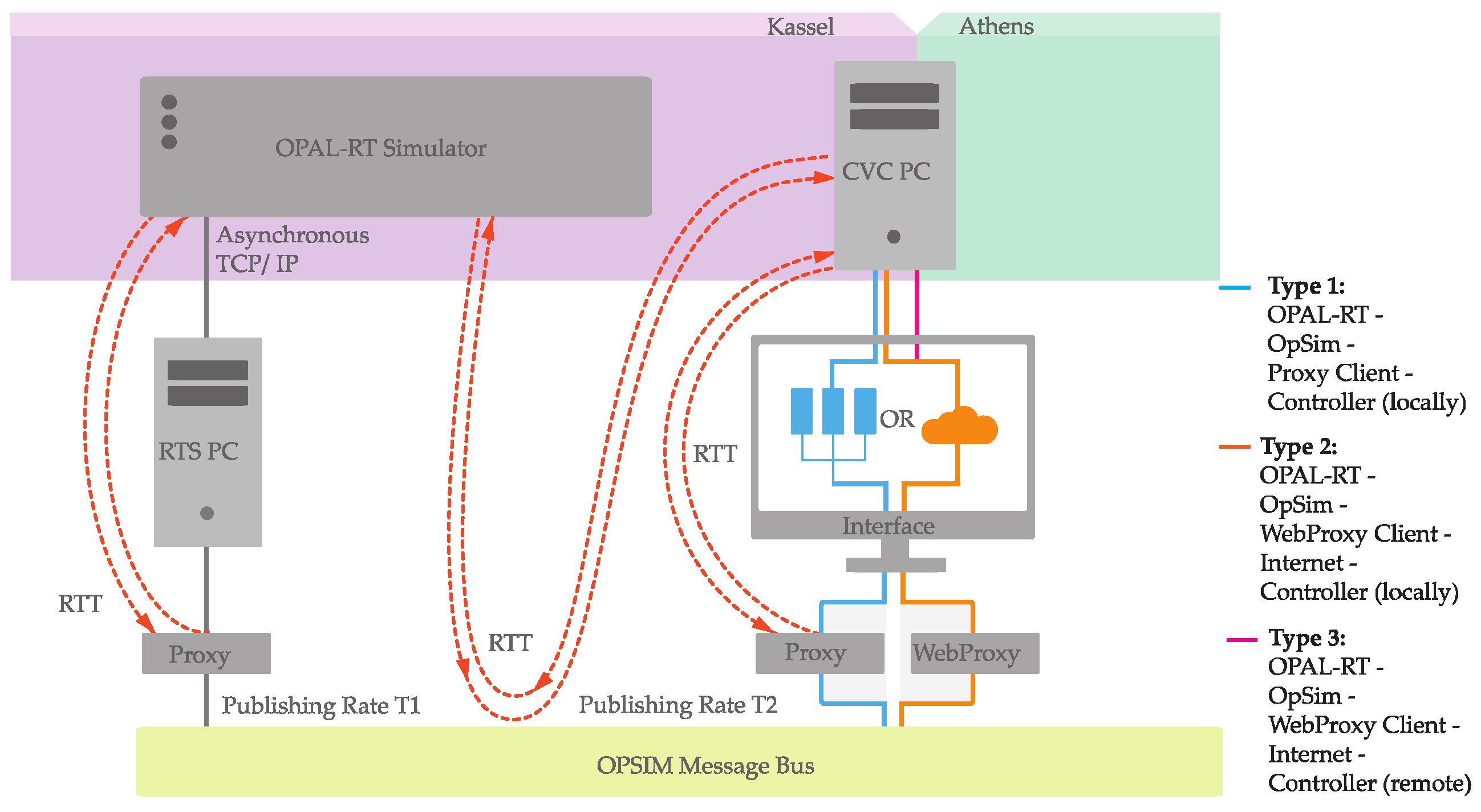

6.2.2. Delay Assessment for Geographically Distributed CHIL Experiment

6.2.3. Geographically Distributed PHIL for Testing of a Voltage Controller

6.2.4. Global RT Super Lab Demonstration

6.3. Future Outlook

- With a number of different interface algorithms, signal transformations, and communication protocols being reported, there is a lack of formal guidance for selecting among them for a given application. Combinations of these need to be appraised for applications such as transient studies, dynamic studies, and steady-state evaluations.

- Recognizing that communication delays are dominated by the non-deterministic characteristics of the Internet, options such as the use of dedicated bandwidth should be explored.

- In contrast to a monolithic PHIL simulation, where a DuT is connected to an RTSM, a GD simulation presents a significant challenge in determining system partitioning. The optimal way to split a system across the sites of a GD simulation requires further assessment.

- As the number of subsystems within one GD simulation is expected to increase, with more than two research infrastructures interconnected, streamlined initialization is required. Considerable work on this has been reported for co-simulation setups, and its applicability to GD simulation needs to be explored.
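Assessing communication delay, as raised in the dedicated-bandwidth point above, typically starts from round-trip measurements between sites. The Python sketch below summarizes round-trip times (RTTs) computed from hypothetical send and echo timestamps recorded on the same clock. Halving the RTT to estimate the one-way delay assumes a symmetric path, which the non-deterministic Internet does not guarantee; the function names and sample values are illustrative only.

```python
import math
import statistics


def rtt_samples(sent, echoed):
    """Round-trip times from send and echo timestamps recorded on the same clock."""
    return [back - out for out, back in zip(sent, echoed)]


def percentile_nearest_rank(data, p):
    """Nearest-rank percentile (p in 0..100) of a non-empty sample."""
    ordered = sorted(data)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def delay_summary(rtts):
    """Summary statistics for a list of RTT samples (any consistent time unit)."""
    mean_rtt = statistics.mean(rtts)
    return {
        "mean": mean_rtt,
        "stdev": statistics.pstdev(rtts),   # spread of the delay (jitter proxy)
        "p95": percentile_nearest_rank(rtts, 95),
        "max": max(rtts),
        # One-way estimate assumes a symmetric path (an assumption, see text)
        "one_way_mean_est": mean_rtt / 2,
    }


if __name__ == "__main__":
    # Hypothetical RTT samples in milliseconds
    print(delay_summary([10, 12, 11, 50, 13]))
```

A tail metric such as the 95th percentile is often more informative than the mean for GD setups, because occasional late packets, not the average delay, determine whether an exchange misses its deadline.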

7. Industrial Experiences and HIL in Standardized Testing

7.1. Introduction

7.2. Reported Experiences and Activities from SIRFN ALTM Members

7.2.1. Compliance Testing of a Hybrid UPS According to JEC2433-2016

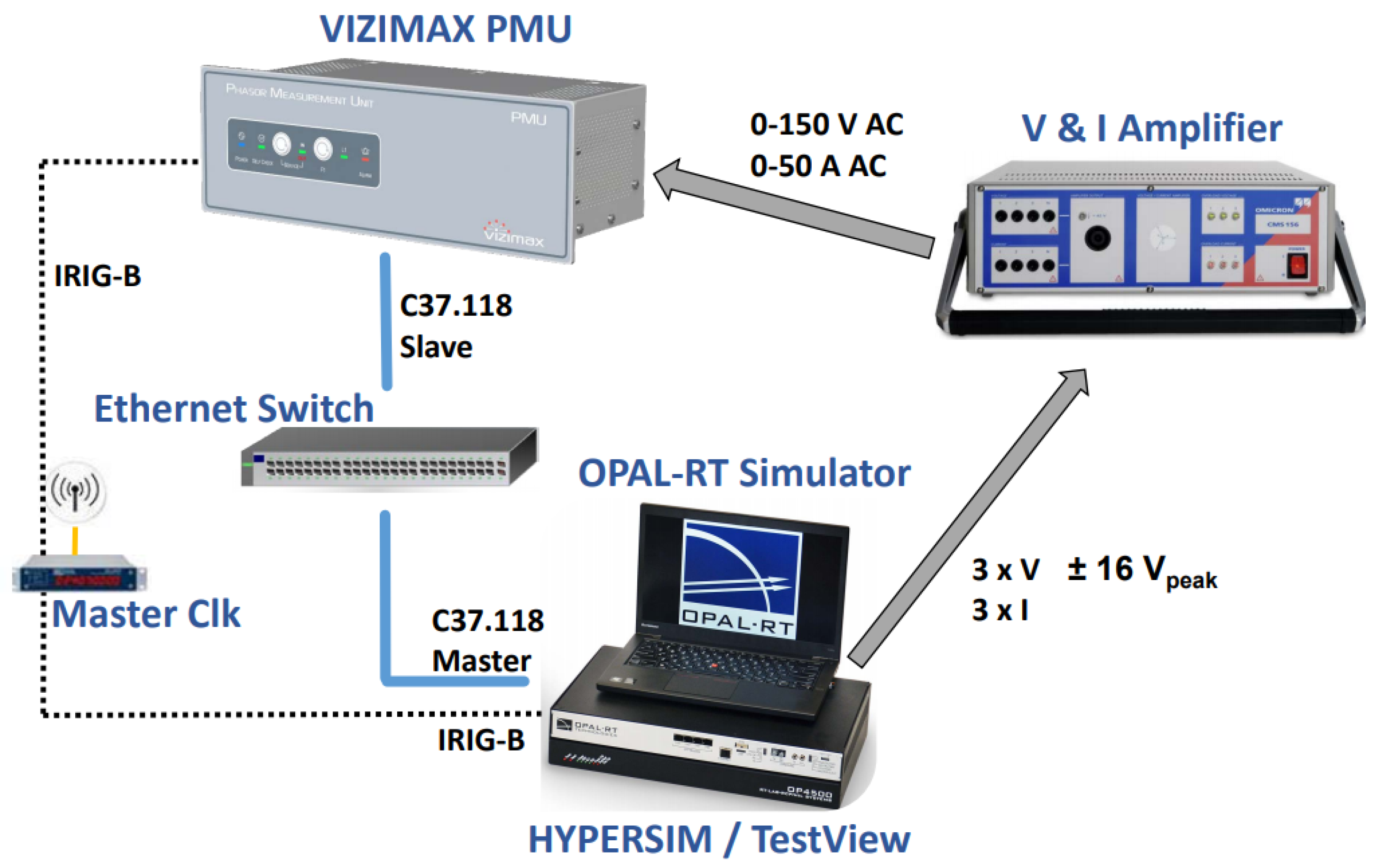

7.2.2. Development of a PMU Pre-Certification Platform

7.2.3. Factory Acceptance Tests Based on HIL Testing

7.2.4. System Validation Platform HIL Based Grid Code Testing Aspects of DER Inverter

7.2.5. HIL for Marine Electrical Power Systems (MEPS)

8. Summary of Testing Methods and Configurations

9. Conclusions and Future Outlook

Author Contributions

Funding

Acknowledgments

- Fraunhofer IEE contributions are supported by the European Community’s Horizon 2020 Program (H2020/2014–2020) under the project “ERIGrid” (Grant Agreement No. 654113), and by the German Ministry for Economic Affairs and Energy (BMWi) and the Projektträger Jülich (PTJ) within the project “Netzregelung 2.0 - Regelung und Stabilität im stromrichter-dominierten Verbundnetz” (FKZ0350023A).

- Sandia National Laboratories is a multimission laboratory managed and operated by National Technology and Engineering Solutions of Sandia, LLC, a wholly owned subsidiary of Honeywell International, Inc., for the U.S. Department of Energy’s National Nuclear Security Administration under contract DE-NA0003525. Its contributions to this work are supported by the U.S. Department of Energy Office of International Affairs.

- National Institute of Advanced Industrial Science and Technology (AIST) contributions to this work are supported by the Ministry of Economy, Trade and Industry (METI).

- CanmetENERGY is a federal research laboratory in Canada; financial support for this research work was provided by Natural Resources Canada (NRCan) through the Program on Energy Research and Development (PERD) in the framework of REN-2 Smart Grid and Microgrid Control for Resilient Power Systems Project.

- Korea Electrotechnology Research Institute (KERI) participation was supported by the Korea Institute of Energy Technology Evaluation and Planning (KETEP) and the Ministry of Trade, Industry & Energy (MOTIE) of Republic of Korea (No.20178530000210).

- University of Strathclyde and Technical University of Denmark (DTU) contributions are supported by the European Community’s Horizon 2020 Program (H2020/2014–2020) under the project “ERIGrid” (Grant Agreement No. 654113).

- Zurich University of Applied Sciences (ZHAW), Institute of Energy Systems and Fluid Engineering (IEFE) contributions are supported by the Swiss Federal Office of Energy (SFOE) and activities of the Swiss Centre for Competence in Energy Research on the Future Swiss Electrical Infrastructure (SCCER-FURIES), which is financially supported by the Swiss Innovation Agency (Innosuisse - SCCER program).

- The participation of AIT within ISGAN-SIRFN is funded in the frame of the IEA Research Cooperation program by the Austrian Federal Ministry for Climate Action, Environment, Energy, Mobility, Innovation and Technology (FFG no. 870646). The development of the AIT SGC was supported by the Austrian Ministry for Transport, Innovation and Technology (bmvit) and the Austrian Research Promotion Agency (FFG) under the “Energy Research Program 2015” in the SPONGE project (FFG no.848915).

- Contributions from Power Grid Corporation of India Limited are part of the research work carried out at the POWERGRID Advanced Research & Technology Centre (PARTeC) located in Manesar. PARTeC is the R&D establishment of Power Grid Corporation of India Ltd.

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AC | Alternating Current |
| ALTM | Advanced Laboratory Testing Methods |
| CHIL | Controller Hardware-in-the-Loop |
| DAS | Data Acquisition System |
| DC | Direct Current |
| DER | Distributed Energy Resources |
| DIM | Damping Impedance Method |
| DuT | Device Under Test |
| EMT | Electromagnetic Transients |
| GD | Geographically Distributed |
| HIL | Hardware-in-the-Loop |
| IA | Interface Algorithm |
| ITM | Ideal Transformer Method |
| MG | Microgrid |
| MGC | Microgrid Controller |
| PA | Power Amplifier |
| PDC | Phasor Data Concentrator |
| PHIL | Power Hardware-in-the-Loop |
| PMU | Phasor Measurement Unit |
| PSIL | Power System-in-the-Loop |
| RCP | Rapid Control Prototyping |
| RMS | Root Mean Square |
| RT | Real-time |
| RTS | Real-Time Simulation |
| RTSM | Real-Time Simulation Machine |
| WAC | Wide Area Control |

References

- Brandl, R. Operational range of several interface algorithms for different power hardware-in-the-loop setups. Energies 2017, 10, 1946.

- Lauss, G.F. Interfacing challenges in PHIL simulations for investigations on P-Q controls of grid connected generation units in electric power systems. IFAC-PapersOnLine 2017, 50, 10964–10970.

- Brandl, R.; Montoya, J.; Strauss-Mincu, D.; Calin, M. Power System-in-the-Loop testing concept for holistic system investigations. In Proceedings of the 2018 IEEE International Conference on Industrial Electronics for Sustainable Energy Systems (IESES), Hamilton, New Zealand, 31 January–2 February 2018; pp. 560–565.

- Ebe, F.; Idlbi, B.; Stakic, D.E.; Chen, S.; Kondzialka, C.; Casel, M.; Heilscher, G.; Seitl, C.; Bründlinger, R.; Strasser, T.I. Comparison of power hardware-in-the-loop approaches for the testing of smart grid controls. Energies 2018, 11, 3381.

- Stanev, R.; Krusteva, A.; Tornelli, C.; Sandroni, C. A quasi-dynamic approach for slow dynamics time domain analysis of electrical networks with distributed energy ressources. Proc. Tech. Univ. Sofia 2013, 63, 273–281.

- Andrén, F.; Lehfuss, F.; Jonke, P.; Strasser, T.; Rikos, E.; Kotsampopoulos, P.; Moutis, P.; Belloni, F.; Tornelli, C.; Sandroni, C.; et al. DERri Common Reference Model for Distributed Energy Resources—modeling scheme, reference implementations and validation of results. Elektrotechnik Inf. 2014, 121, 378–385.

- Langston, J.; Schoder, K.; Steurer, M.; Edrington, C.; Roberts, R.G. Analysis of Linear Interface Algorithms for Power Hardware-in-the-Loop Simulation. In Proceedings of the IECON 2018—44th Annual Conference of the IEEE Industrial Electronics Society, Washington, DC, USA, 21–23 October 2018; pp. 4005–4012.

- Paran, S.; Edrington, C.S. Improved power hardware in the loop interface methods via impedance matching. In Proceedings of the 2013 IEEE Electric Ship Technologies Symposium (ESTS), Arlington, VA, USA, 22–24 April 2013; pp. 342–346.

- Ren, W.; Steurer, M.; Baldwin, T.L. Improve the Stability and the Accuracy of Power Hardware-in-the-Loop Simulation by Selecting Appropriate Interface Algorithms. IEEE Trans. Ind. Appl. 2007, 44, 1286–1294.

- Paran, S. Utilization of Impedance Matching to Improve Damping Impedance Method-Based Phil Interface. Master’s Thesis, Florida State University, Tallahassee, FL, USA, 2013.

- Crăciun, B.; Kerekes, T.; Séra, D.; Teodorescu, R.; Brandl, R.; Degner, T.; Geibel, D.; Hernandez, H. Grid integration of PV power based on PHIL testing using different interface algorithms. In Proceedings of the IECON 2013—39th Annual Conference of the IEEE Industrial Electronics Society, Vienna, Austria, 10–13 November 2013; pp. 5380–5385.

- Summers, A.; Hernandez-Alvidrez, J.; Darbali-Zamora, R.; Reno, M.J.; Johnson, J.; Gurule, N.S. Comparison of Ideal Transformer Method and Damping Impedance Method for PV Power-Hardware-In-The-Loop Experiments. In Proceedings of the 2019 IEEE 46th Photovoltaic Specialists Conference (PVSC), Chicago, IL, USA, 16–21 June 2019; pp. 2989–2996.

- Stanev, R. A control strategy and operation paradigm for electrical power systems with electric vehicles and distributed energy ressources. In Proceedings of the 2016 19th International Symposium on Electrical Apparatus and Technologies (SIELA), Bourgas, Bulgaria, 29 May–1 June 2016; pp. 1–4.

- Strasser, T.; de Jong, E.C.W.; Sosnina, M. (Eds.) European Guide to Power System Testing: The ERIGrid Holistic Approach for Evaluating Complex Smart Grid Configurations, 1st ed.; Springer International Publishing AG: Basel, Switzerland, 2020.

- Strasser, T.I.; Babazadeh, D.; Heussen, K.; Pelegrino, L.; Arnold, G.; Nguyen, V.H.; Palensky, P.; Kotsampopoulos, P.; Kontou, A.; Hatziargyriou, N.; et al. Virtual ERIGrid Final Conference. 2020. Available online: https://zenodo.org/record/3769631#.XvBJEXERXIU (accessed on 27 April 2020).

- Vogel, S.; Stevic, M.; Nguyen, H.T.; Jensen, T.V.; Heussen, K.; Rajkumar, V.S.; Monti, A. Distributed Power Hardware-in-the-Loop Testing using a Grid-forming Converter as Power Interface. Energies 2020, 1–22, submitted.

- Papaspiliotopoulos, V.A.; Korres, G.N.; Kleftakis, V.A.; Hatziargyriou, N.D. Hardware-In-the-Loop Design and Optimal Setting of Adaptive Protection Schemes for Distribution Systems With Distributed Generation. IEEE Trans. Power Deliv. 2017, 32, 393–400.

- Jennett, K.I.; Booth, C.D.; Coffele, F.; Roscoe, A.J. Investigation of the sympathetic tripping problem in power systems with large penetrations of distributed generation. IET Gener. Transm. Distrib. 2015, 9, 379–385.

- Coffele, F.; Booth, C.; Dyśko, A.; Burt, G. Quantitative analysis of network protection blinding for systems incorporating distributed generation. IET Gener. Transm. Distrib. 2012, 6, 1218–1224.

- Montoya, L.A.; Montenegro, D.; Ramos, G. Adaptive protection testbed using real time and hardware-in-the-loop simulation. In Proceedings of the 2013 IEEE Grenoble Conference, Grenoble, France, 16–20 June 2013; pp. 1–4.

- Mishra, P.; Pradhan, A.K.; Bajpai, P. Adaptive Relay Setting for Protection of Distribution System with Solar PV. In Proceedings of the 2018 20th National Power Systems Conference (NPSC), Tiruchirappalli, India, 14–16 December 2018; pp. 1–5.

- Jain, R.; Lubkeman, D.L.; Lukic, S.M. Dynamic Adaptive Protection for Distribution Systems in Grid-Connected and Islanded Modes. IEEE Trans. Power Deliv. 2019, 34, 281–289.

- Safari-Shad, N.; Franklin, R.; Negahdari, A.; Toliyat, H.A. Adaptive 100% Injection-Based Generator Stator Ground Fault Protection with Real-Time Fault Location Capability. IEEE Trans. Power Deliv. 2018, 33, 2364–2372.

- Darbali-Zamora, R.; Quiroz, J.E.; Hernández-Alvidrez, J.; Johnson, J.; Ortiz-Rivera, E.I. Validation of a Real-Time Power Hardware-in-the-Loop Distribution Circuit Simulation with Renewable Energy Sources. In Proceedings of the 2018 IEEE 7th World Conference on Photovoltaic Energy Conversion (WCPEC) (A Joint Conference of 45th IEEE PVSC, 28th PVSEC & 34th EU PVSEC), Waikoloa Village, HI, USA, 10–15 June 2018; pp. 1380–1385.

- Darbali-Zamora, R.; Hernandez-Alvidrez, J.; Summers, A.; Gurule, N.S.; Reno, M.J.; Johnson, J. Distribution Feeder Fault Comparison Utilizing a Real-Time Power Hardware-in-the-Loop Approach for Photovoltaic System Applications. In Proceedings of the 2019 IEEE 46th Photovoltaic Specialists Conference (PVSC), Chicago, IL, USA, 16–21 June 2019; pp. 2916–2922.

- Li, S.; Hilbrich, D.; Bonetti, A.; Paquin, J.N. Evolution to model-based testing of protection systems and the publication of the IEC 60255-121 standard. In Proceedings of the 8th PAC World Conference, Wroclaw, Poland, 26–29 June 2017.

- Stifter, M.; Cordova, J.; Kazmi, J.; Arghandeh, R. Real-time simulation and hardware-in-the-loop testbed for distribution synchrophasor applications. Energies 2018, 11, 876.

- Aleem, S.K.A.; Aftab, M.A.; Hussain, S.M.S.; Ali, I.; Ganesh, V.; Ustun, T.S. Real-Time Microgrid Synchronization using Phasor Measurement Units. In Proceedings of the 2019 IEEE International Conference on Intelligent Systems and Green Technology (ICISGT), Visakhapatnam, India, 29–30 June 2019; pp. 19–193.

- IEEE. IEEE Standard for Interconnecting Distributed Resources with Electric Power Systems; IEEE Std 1547-2003; IEEE: Piscataway, NJ, USA, 28 July 2003; pp. 1–28.

- Baltensperger, D.; Dobrowolski, J.; Obushevs, A.; Segundo Sevilla, F.; Korba, P. Scaling Version of Kundur’s Two-Areas System for Electromechanical Oscillations Representation. In Proceedings of the 2020 International Symposium on Power Electronics, Electrical Drives, Automation and Motions (SPEEDAM), Sorrento, Italy, 24–26 June 2020; pp. 1–7.

- Kundur, P.; Balu, N.J.; Lauby, M.G. Power System Stability and Control; McGraw-Hill: New York, NY, USA, 1994; Volume 7.

- Salcedo, R.; Corbett, E.; Smith, C.; Limpaecher, E.; Rekha, R.; Nowocin, J.; Lauss, G.; Fonkwe, E.; Almeida, M.; Gartner, P.; et al. Banshee distribution network benchmark and prototyping platform for hardware-in-the-loop integration of microgrid and device controllers. J. Eng. 2019, 2019, 5365–5373.

- Kikusato, H.; Ustun, T.S.; Suzuki, M.; Sugahara, S.; Hashimoto, J.; Otani, K.; Shirakawa, K.; Yabuki, R.; Watanabe, K.; Shimizu, T. Microgrid Controller Testing Using Power Hardware-in-the-Loop. Energies 2020, 13, 2044.

- Kikusato, H.; Ustun, T.S.; Suzuki, M.; Sugahara, S.; Hashimoto, J.; Otani, K.; Shirakawa, K.; Yabuki, R.; Watanabe, K.; Shimizu, T. Integrated Power Hardware-in-the-Loop and Lab Testing for Microgrid Controller. In Proceedings of the 2019 IEEE Innovative Smart Grid Technologies—Asia (ISGT Asia), Chengdu, China, 21–24 May 2019; pp. 2743–2747.

- Ustun, T.S.; Konishi, H.; Hashimoto, J.; Otani, K. Hardware-in-the-loop simulation based testing of power conditioning systems. In Proceedings of the 2018 IEEE International Conference on Industrial Electronics for Sustainable Energy Systems (IESES), Hamilton, New Zealand, 31 January–2 February 2018; pp. 546–551.

- Brandl, R.; Kotsampopoulos, P.; Lauss, G.; Maniatopoulos, M.; Nuschke, M.; Montoya, J.; Strasser, T.I.; Strauss-Mincu, D. Advanced Testing Chain Supporting the Validation of Smart Grid Systems and Technologies. In Proceedings of the 2018 IEEE Workshop on Complexity in Engineering (COMPENG), Florence, Italy, 10–12 October 2018; pp. 1–6.

- Sun, C.; Joos, G.; Ali, S.Q.; Paquin, J.N.; Rangel, C.M.; Jajeh, F.A.; Novickij, I.; Bouffard, F. Design and Real-time Implementation of a Centralized Microgrid Control System with Rule-based Dispatch and Seamless Transition Function. IEEE Trans. Ind. Appl. 2020, 56, 3168–3177.

- Al Jajeh, M.F.; Qaseem Ali, S.; Joos, G.; Novickij, I. Islanding of a Microgrid Using a Distributed Multi-agent Control System. In Proceedings of the 2019 IEEE Energy Conversion Congress and Exposition (ECCE), Baltimore, MD, USA, 29 September–3 October 2019; IEEE: Baltimore, MD, USA, 2019; pp. 6286–6293.

- Du, Y.; Tu, H.; Lukic, S.; Lubkeman, D.; Dubey, A.; Karsai, G. Development of a Controller Hardware-in-the-Loop Platform for Microgrid Distributed Control Applications. In Proceedings of the 2018 IEEE Electronic Power Grid (eGrid), Charleston, SC, USA, 12–14 November 2018; IEEE: Charleston, SC, USA, 2018; pp. 1–6.

- Tu, H.; Du, Y.; Yu, H.; Dubey, A.; Lukic, S.; Karsai, G. Resilient Information Architecture Platform for the Smart Grid (RIAPS): A Novel Open-Source Platform for Microgrid Control. IEEE Trans. Ind. Electron. 2019.

- Razeghi, G.; Gu, F.; Neal, R.; Samuelsen, S. A generic microgrid controller: Concept, testing, and insights. Appl. Energy 2018, 229, 660–671.

- Bagudai, S.K.; Ray, O.; Samantaray, S.R. Evaluation of Control Strategies within Hybrid DC/AC Microgrids using Typhoon HIL. In Proceedings of the 2019 8th International Conference on Power Systems (ICPS), Jaipur, India, 20–22 December 2019; pp. 1–6.

- Nguyen, T.T.; Yoo, H.J.; Kim, H.M. A Droop Frequency Control for Maintaining Different Frequency Qualities in a Stand-Alone Multimicrogrid System. IEEE Trans. Sustain. Energy 2018, 9, 599–609.

- Yoo, H.J.; Nguyen, T.T.; Kim, H.M. Consensus-Based Distributed Coordination Control of Hybrid AC/DC Microgrids. IEEE Trans. Sustain. Energy 2020, 11, 629–639.

- Johnson, J.; Ablinger, R.; Bründlinger, R.; Fox, B.; Flicker, J. Design and Evaluation of SunSpec-Compliant Smart Grid Controller with an Automated Hardware-in-the-Loop Testbed. Technol. Econ. Smart Grids Sustain. Energy 2017, 2, 16.

- Johnson, J.; Ablinger, R.; Bruendlinger, R.; Fox, B.; Flicker, J. Interconnection Standard Grid-Support Function Evaluations Using an Automated Hardware-in-the-Loop Testbed. IEEE J. Photovolt. 2018, 8, 565–571.

- Ninad, N.; Apablaza-Arancibia, E.; Bui, M.; Johnson, J.; Gonzalez, S.; Moore, T. Development and Evaluation of Open-Source IEEE 1547.1 Test Scripts for Improved Solar Integration. In Proceedings of the EU PVSEC 2019: 36th European Photovoltaic Solar Energy Conference and Exhibition, Marseille, France, 9–13 September 2019.

- Bründlinger, R.; Stöckl, J.; Miletic, Z.; Ablinger, R.; Leimgruber, F.; Johnson, J.; Shi, J. Pre-certification of Grid-Code Compliance for Solar Inverters with an Automated Controller-Hardware-In-The-Loop Test Environment. In Proceedings of the 8th Solar Integration Workshop, Stockholm, Sweden, 16–17 October 2018.

- Stöckl, J.; Miletic, Z.; Bründlinger, R.; Schulz, J.; Ablinger, R.; Tremmel, W.; Johnson, J. Pre-Evaluation of Grid Code Compliance for Power Electronics Inverter Systems in Low-Voltage Smart Grids. In Proceedings of the 20th European Conference on Power Electronics and Applications (EPE’18 ECCE Europe), Riga, Latvia, 17–21 September 2018.

- EPRI. Performance Assessment of Inverter on-Board Islanding Detection with Multiple Testing Platforms; Final Project Report, Product Id: 3002014051; Technical Report of Electric Power Research Institute: Palo Alto, CA, USA, 2020.

- EPRI. Risk of Islanding Study Utilizing Hardware in the Loop; Technical Update Report, Product Id: 3002017225; Technical Report of Electric Power Research Institute: Palo Alto, CA, USA, 2020.

- EPRI. Inverter On-board Detection Methods to Prevent Unintended Islanding: Generic Response Models; Technical Update Report, Product Id: 3002014049; Technical Report of Electric Power Research Institute: Palo Alto, CA, USA, 2018.

- Steurer, M.M.; Schoder, K.; Faruque, O.; Soto, D.; Bosworth, M.; Sloderbeck, M.; Bogdan, F.; Hauer, J.; Winkelnkemper, M.; Schwager, L.; et al. Multifunctional megawatt-scale medium voltage DC test bed based on modular multilevel converter technology. IEEE Trans. Transp. Electrif. 2016, 2, 597–606.

- Langston, J.; Schoder, K.; Steurer, M.; Faruque, O.; Hauer, J.; Bogdan, F.; Bravo, R.; Mather, B.; Katiraei, F. Power hardware-in-the-loop testing of a 500 kW photovoltaic array inverter. In Proceedings of the IECON 2012—38th Annual Conference on IEEE Industrial Electronics Society, Montreal, QC, Canada, 25–28 October 2012; pp. 4797–4802.

- Nuschke, M.; Brandl, R.; Montoya, J. Entwicklung und Test eines Microgrid Controllers. RET.Con 2018: 1. Regenerative Energietechnik-Konferenz. 2018, pp. 172–180. Available online: https://www.hs-nordhausen.de/fileadmin/daten/aktuelles/veranstaltungen/ret.con/tagungsband_retcon_2018_web.pdf (accessed on 20 April 2020).

- IEEE. IEEE Standard for the Testing of Microgrid Controllers; IEEE Std 2030.8-2018; IEEE: Piscataway, NJ, USA, 24 August 2018; pp. 1–42.

- Hashimoto, J.; Ustun, T.S.; Otani, K. Smart Inverter Functionality Testing for Battery Energy Storage Systems. Smart Grid Renew. Energy 2017, 8, 337–350.

- Vogt, M.; Marten, F.; Braun, M. A survey and statistical analysis of smart grid co-simulations. Appl. Energy 2018, 222, 67–78.

- Gavriluta, C.; Lauss, G.; Strasser, T.I.; Montoya, J.; Brandl, R.; Kotsampopoulos, P. Asynchronous Integration of Real-Time Simulators for HIL-based Validation of Smart Grids. In Proceedings of the IECON 2019—45th Annual Conference of the IEEE Industrial Electronics Society, Lisbon, Portugal, 14–17 October 2019; Volume 1, pp. 6425–6431.

- Vogt, M.; Marten, F.; Montoya, J.; Töbermann, C.; Braun, M. A REST based co-simulation interface for distributed simulations. In Proceedings of the 2019 IEEE Milan PowerTech, Milan, Italy, 23–27 June 2019; pp. 1–6.

- Zhang, L.; Li, S.; Wihl, L.; Kazemtabrizi, M.; Ali, S.Q.; Paquin, J.N.; Labbé, S. Cybersecurity Study of Power System Utilizing Advanced CPS Simulation Tools. In Proceedings of the 2019 PAC World Americas Conference, Raleigh, NC, USA, 19–22 August 2019; p. 13.

- Bian, D.; Kuzlu, M.; Pipattanasomporn, M.; Rahman, S.; Wu, Y. Real-time co-simulation platform using OPAL-RT and OPNET for analyzing smart grid performance. In Proceedings of the 2015 IEEE Power & Energy Society General Meeting, Denver, CO, USA, 26–30 July 2015; IEEE: Denver, CO, USA, 2015; pp. 1–5.

- Armendariz, M.; Chenine, M.; Nordstrom, L.; Al-Hammouri, A. A co-simulation platform for medium/low voltage monitoring and control applications. In Proceedings of the ISGT 2014, Washington, DC, USA, 19–22 February 2014; IEEE: Washington, DC, USA, 2014; pp. 1–5.

- Johnson, J.; Onunkwo, I.; Cordeiro, P.; Wright, B.; Jacobs, N.; Lai, C. Assessing DER Network Cybersecurity Defenses in a Power-Communication Co-Simulation Environment. IET Cyber-Phys. Syst. Theory Appl. 2020.

- Onunkwo, I.; Wright, B.; Cordeiro, P.; Jacobs, N.; Lai, C.; Johnson, J.; Hutchins, T.; Stout, W.; Chavez, A.; Richardson, B.T.; et al. Cybersecurity Assessments on Emulated DER Communication Networks; Technical Report SAND2019-2406; Sandia National Laboratories: Albuquerque, NM, USA, 2019.

- Ustun, T.S.; Hussain, S.M.S. Implementation of IEC 61850 Based Integrated EV Charging Management in Smart Grids. In Proceedings of the 2019 IEEE Vehicle Power and Propulsion Conference (VPPC), Hanoi, Vietnam, 14–17 October 2019; pp. 1–5.

- Faruque, M.O.; Dinavahi, V.; Sloderbeck, M.; Steurer, M. Geographically distributed thermo-electric co-simulation of all-electric ship. In Proceedings of the 2009 IEEE Electric Ship Technologies Symposium, Baltimore, MD, USA, 20–22 April 2009; pp. 36–43.

- Vogel, S.; Stevic, M.; Kadavil, R.; Mohanpurkar, M.; Koralewicz, P.; Gevorgian, V.; Hovsapian, R.; Monti, A. Distributed Real-Time Simulation and its Applications to Wind Energy Research. In Proceedings of the 2018 IEEE International Conference on Probabilistic Methods Applied to Power Systems (PMAPS), Boise, ID, USA, 24–28 June 2018; pp. 1–6.

- Vogel, S.; Rajkumar, V.S.; Nguyen, H.T.; Stevic, M.; Bhandia, R.; Heussen, K.; Palensky, P.; Monti, A. Improvements to the Co-simulation Interface for Geographically Distributed Real-time Simulation. In Proceedings of the IECON 2019—45th Annual Conference of the IEEE Industrial Electronics Society, Lisbon, Portugal, 14–17 October 2019; Volume 1, pp. 6655–6662.

- Ravikumar, K.G.; Schulz, N.N.; Srivastava, A.K. Distributed simulation of power systems using real-time digital simulator. In Proceedings of the 2009 IEEE/PES Power Systems Conference and Exposition, Seattle, WA, USA, 15–18 March 2009; pp. 1–6.

- Stevic, M.; Estebsari, A.; Vogel, S.; Pons, E.; Bompard, E.; Masera, M.; Monti, A. Multi-site European framework for real-time co-simulation of power systems. IET Gener. Transm. Distrib. 2017, 11, 4126–4135.

- Wang, Y.; Nguyen, T.L.; Syed, M.H.; Xu, Y.; Nguyen, V.H.; Guillo-Sansano, E.; Burt, G.; Tran, Q.T.; Caire, R. A Distributed Control Scheme of Microgrids in Energy Internet and Its Multi-Site Implementation. IEEE Trans. Ind. Inform. 2020. [Google Scholar] [CrossRef]

- Lundstrom, B.; Palmintier, B.; Rowe, D.; Ward, J.; Moore, T. Trans-oceanic remote power hardware-in-the-loop: Multi-site hardware, integrated controller, and electric network co-simulation. IET Gener. Transm. Distrib. 2017, 11, 4688–4701. [Google Scholar] [CrossRef]

- Palmintier, B.; Lundstrom, B.; Chakraborty, S.; Williams, T.; Schneider, K.; Chassin, D. A Power Hardware-in-the-Loop Platform with Remote Distribution Circuit Cosimulation. IEEE Trans. Ind. Electron. 2015, 62, 2236–2245. [Google Scholar] [CrossRef]

- Montoya, J.; Brandl, R.; Vogt, M.; Marten, F.; Maniatopoulos, M.; Fabian, A. Asynchronous Integration of a Real-Time Simulator to a Geographically Distributed Controller Through a Co-Simulation Environment. In Proceedings of the IECON 2018—44th Annual Conference of the IEEE Industrial Electronics Society, Washington, DC, USA, 21–23 October 2018; pp. 4013–4018. [Google Scholar] [CrossRef]

- Monti, A.; Stevic, M.; Vogel, S.; De Doncker, R.W.; Bompard, E.; Estebsari, A.; Profumo, F.; Hovsapian, R.; Mohanpurkar, M.; Flicker, J.D.; et al. A Global Real-Time Superlab: Enabling High Penetration of Power Electronics in the Electric Grid. IEEE Power Electron. Mag. 2018, 5, 35–44. [Google Scholar] [CrossRef]

- Guillo-Sansano, E.; Roscoe, A.J.; Burt, G.M. Harmonic-by-harmonic time delay compensation method for PHIL simulation of low impedance power systems. In Proceedings of the 2015 International Symposium on Smart Electric Distribution Systems and Technologies (EDST), Vienna, Austria, 8–11 September 2015; pp. 560–565. [Google Scholar]

- Guillo-Sansano, E.; Syed, M.; Roscoe, A.J.; Burt, G.; Coffele, F. Characterization of Time Delay in Power Hardware in the Loop Setups. IEEE Trans. Ind. Electron. 2020. [Google Scholar] [CrossRef]

- Wiezorek, C.; Parisio, A.; Kyntäjä, T.; Elo, J.; Gronau, M.; Johansson, K.H.; Strunz, K. Multi-location virtual smart grid laboratory with testbed for analysis of secure communication and remote co-simulation: Concept and application to integration of Berlin, Stockholm, Helsinki. IET Gener. Transm. Distrib. 2017, 11, 3134–3143.

- Strasser, T.I.; Pellegrino, L.; Degefa, M.Z.; Lagos, D.; Syed, M. Demonstration of Multi Research Infrastructure Integration Tests. 2019. ERIGrid Webinar. Available online: https://doi.org/10.5281/zenodo.3553979 (accessed on 22 April 2020).

- IEEE. IEEE Approved Draft Standard Conformance Test Procedures for Equipment Interconnecting Distributed Energy Resources with Electric Power Systems and Associated Interfaces; IEEE P1547.1/D9.9; IEEE: Piscataway, NJ, USA, 2020; pp. 1–283.

- Paquin, J.N. Synchrophasor Application Studies using Real-Time Simulators. In Proceedings of the 1st International Synchrophasor Symposium, Atlanta, GA, USA, 22–24 March 2016.

- Sunspec System Validation Platform (SVP). 2020. Available online: https://sunspec.org/svp/ (accessed on 1 May 2020).

- Johnson, J.; Apablaza-Arancibia, E.; Ninad, N.; Turcotte, D.; Prieur, A.; Ablinger, R.; Bründlinger, R.; Moore, T.; Heidari, R.; Hashimoto, J.; et al. International Development of a Distributed Energy Resource Test Platform for Electrical and Interoperability Certification. In Proceedings of the 2018 IEEE 7th World Conference on Photovoltaic Energy Conversion (WCPEC) (A Joint Conference of 45th IEEE PVSC, 28th PVSEC & 34th EU PVSEC), Waikoloa Village, HI, USA, 10–15 June 2018; pp. 2492–2497.

- Qiu, D.; Paquin, J.N.; Uda, S.; Kashihara, H.; Fukuda, Y.; Okazaki, N.; Nishimura, S.; Kawasaki, Y.; Gao, F.; Ali, S.Q. Design and HIL Testing of a Hybrid UPS with Seamless Transition. In Proceedings of the 2019 IEEE Power & Energy Society General Meeting (PESGM), Atlanta, GA, USA, 4–8 August 2019; IEEE: Atlanta, GA, USA, 2019; pp. 1–5.

- Paré, D.; Turmel, G.; Marcoux, B.; McNabb, D. Validation Tests of The Hypersim Digital Real Time Simulator with a Large AC-DC Network. In Proceedings of the International Conference on Power Systems Transients (IPST2003), New Orleans, LA, USA, 28 September–2 October 2003; p. 6.

- Guay, F.; Chiasson, P.A.; Verville, N.; Tremblay, S.; Askvid, P. New Hydro-Québec Real-Time Simulation Interface for HVDC Commissioning Studies. In Proceedings of the International Conference on Power Systems Transients (IPST2017), Seoul, Korea, 26–29 June 2017; p. 8.

- Vernay, Y.; Martin, C.; Petesch, D.; Dennetière, S. Hardware in the loop simulations to test SVC performances on the French Grid. In Proceedings of the International Conference on Power Systems Transients (IPST2015), Cavtat, Croatia, 15–18 June 2015; p. 8.

- Li, G.; Dong, Y.; Tian, J.; Wang, W.; Li, W.; Belanger, J. Factory acceptance test of a five-terminal MMC control and protection system using hardware-in-the-loop method. In Proceedings of the 2015 IEEE Power & Energy Society General Meeting, Denver, CO, USA, 26–30 July 2015; IEEE: Denver, CO, USA, 2015; pp. 1–5.

- Syed, M.H.; Guillo-Sansano, E.; Avras, A.; Downie, A.; Jennett, K.; Burt, G.M.; Coffele, F.; Rudd, A.; Bright, C. The Role of Experimental Test Beds for the Systems Testing of Future Marine Electrical Power Systems. In Proceedings of the 2019 IEEE Electric Ship Technologies Symposium (ESTS), Washington, DC, USA, 14–16 August 2019; pp. 141–148.

- Langston, J.; Steurer, M.; Schoder, K.; Borraccini, J.; Dalessandro, D.; Rumney, T.; Fikse, T. Power hardware-in-the-loop simulation testing of a flywheel energy storage system for shipboard applications. In Proceedings of the 2017 IEEE Electric Ship Technologies Symposium (ESTS), Arlington, VA, USA, 14–17 August 2017; pp. 305–311.

- Sanchez, J.; Wetz, D.; Dong, Q.; Heinzel, J. Integration and study of hardware in the loop diesel generator with a hybrid energy storage module for naval applications. In Proceedings of the 2017 IEEE Electric Ship Technologies Symposium (ESTS), Arlington, VA, USA, 14–17 August 2017; pp. 580–585.

- Strank, S.; Feng, X.; Gattozzi, A.; Wardell, D.; Pish, S.; Herbst, J.; Hebner, R. Experimental test bed to de-risk the navy advanced development model. In Proceedings of the 2017 IEEE Electric Ship Technologies Symposium (ESTS), Arlington, VA, USA, 14–17 August 2017; pp. 352–358.

- UL 1741. Inverters, Converters, Controllers and Interconnection System Equipment for Use with Distributed Energy Resources, 2nd ed.; UL Standard: Northbrook, IL, USA, 2018.

- Nuschke, M. Development of a microgrid controller for black start procedure and islanding operation. In Proceedings of the 2017 IEEE 15th International Conference on Industrial Informatics (INDIN), Emden, Germany, 24–26 July 2017; pp. 439–444.

| Method | Average Error (%) | Execution Cycle (%) | Major Computation Time (%) |
|---|---|---|---|
| DIM LPF | 27.755 | 62.21 | 49.19 |
| DIM LPF LD | 3.303 | 65.99 | 52.16 |
| ITM LPF | 14.940 | 49.20 | 42.71 |
| ITM LPF LD | 4.507 | 49.46 | 42.99 |
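The comparison above lists ITM and DIM variants with an "LPF" suffix. Assuming LPF denotes a first-order low-pass filter in the feedback path (a common way to stabilize PHIL interfaces), the minimal sketch below illustrates why such a filter matters for the ITM. It is a quasi-static, purely resistive toy model with a one-step loop delay and an ideal power amplifier; the function names, parameters, and filter form are illustrative assumptions, not the setup used to obtain the tabulated results.

```python
# Toy, quasi-static model of a voltage-type ITM PHIL loop (illustrative assumptions only):
# simulated side = ideal source v_s behind resistance r_s; hardware = resistor r_h;
# ideal power amplifier; the measured current reaches the simulation one step late,
# after passing through a first-order discrete low-pass filter with coefficient alpha.


def itm_lpf_loop(v_s, r_s, r_h, alpha, steps=200):
    """Return the filtered feedback current at every time step (alpha = 0 disables the LPF)."""
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must lie in [0, 1)")
    i_fb = 0.0  # current fed back into the simulation (initially zero)
    history = []
    for _ in range(steps):
        v_ref = v_s - r_s * i_fb                      # interface voltage computed by the simulator
        i_meas = v_ref / r_h                          # hardware current with an ideal amplifier
        i_fb = alpha * i_fb + (1.0 - alpha) * i_meas  # filtered value used at the next step
        history.append(i_fb)
    return history


def loop_pole(r_s, r_h, alpha):
    """Pole p of i_fb[k+1] = p * i_fb[k] + const; converges from any start iff |p| < 1."""
    return alpha - (1.0 - alpha) * r_s / r_h


if __name__ == "__main__":
    # r_s > r_h: the unfiltered ITM diverges, while alpha = 0.5 restores convergence.
    for a in (0.0, 0.5):
        print(f"alpha={a}: pole={loop_pole(2.0, 1.0, a):+.2f}, "
              f"final current={itm_lpf_loop(3.0, 2.0, 1.0, a)[-1]:.3g} A")
```

Without filtering (`alpha = 0`) the pole is -r_s/r_h, which reproduces the familiar ITM stability condition r_s < r_h. With filtering, the loop stays stable whenever alpha > (r - 1)/(r + 1) with r = r_s/r_h, and because the filter has unity DC gain the steady-state current is still v_s/(r_s + r_h); the price is slower convergence, which is one reason filtered interfaces trade accuracy against stability.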

| Fault Position (% of Line Length) | Fault Position (km) | Case 1 (Bus-Side CT): Fault Locator Reading (km) | Case 1: Error (%) | Case 2 (Line-Side CT): Fault Locator Reading (km) | Case 2: Error (%) |
|---|---|---|---|---|---|
| 5 | 15 | 14.90 | 0.67 | 14.92 | 0.53 |
| 15 | 45 | 44.62 | 0.84 | 44.64 | 0.80 |
| 25 | 75 | 74.17 | 1.11 | 74.68 | 0.43 |
| 35 | 105 | 104.03 | 0.92 | 104.82 | 0.17 |
| 45 | 135 | 133.61 | 1.03 | 135.05 | 0.04 |
| 55 | 165 | 163.06 | 1.18 | 165.64 | 0.39 |
| 65 | 195 | 193.77 | 0.63 | 196.90 | 0.97 |
| 75 | 225 | 223.78 | 0.54 | 228.32 | 1.48 |
| 85 | 255 | 254.02 | 0.38 | 259.89 | 1.92 |
| 95 | 285 | 285.04 | 0.01 | 292.46 | 2.62 |
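The error columns in the fault-locator table above can be re-derived from the readings. The sketch below does so under two assumptions inferred from the table itself rather than stated in the text: the protected line is 300 km long (5% of the line corresponds to 15 km), and the error is the absolute locator deviation normalized by the actual fault distance, not by the line length. The names `LINE_LENGTH_KM`, `percent_error`, `ROWS`, and `recompute` are hypothetical.

```python
# Hypothetical helper for re-deriving the error columns of the fault-locator table.
LINE_LENGTH_KM = 300.0  # inferred from the table: 5 % of the line = 15 km


def percent_error(reading_km: float, actual_km: float) -> float:
    """Absolute locator error as a percentage of the true fault distance."""
    return abs(reading_km - actual_km) / actual_km * 100.0


# (fault position in % of line, Case 1 reading, Case 1 error %, Case 2 reading, Case 2 error %)
ROWS = [
    (5, 14.90, 0.67, 14.92, 0.53),
    (15, 44.62, 0.84, 44.64, 0.80),
    (25, 74.17, 1.11, 74.68, 0.43),
    (35, 104.03, 0.92, 104.82, 0.17),
    (45, 133.61, 1.03, 135.05, 0.04),
    (55, 163.06, 1.18, 165.64, 0.39),
    (65, 193.77, 0.63, 196.90, 0.97),
    (75, 223.78, 0.54, 228.32, 1.48),
    (85, 254.02, 0.38, 259.89, 1.92),
    (95, 285.04, 0.01, 292.46, 2.62),
]


def recompute(rows=ROWS):
    """Return (position %, recomputed Case 1 error, recomputed Case 2 error), rounded to 2 dp."""
    out = []
    for pct, reading_1, _, reading_2, _ in rows:
        actual_km = LINE_LENGTH_KM * pct / 100.0
        out.append((pct,
                    round(percent_error(reading_1, actual_km), 2),
                    round(percent_error(reading_2, actual_km), 2)))
    return out


if __name__ == "__main__":
    for pct, err_1, err_2 in recompute():
        print(f"{pct:>2} %: Case 1 {err_1:.2f} %, Case 2 {err_2:.2f} %")
```

The recomputed values agree with the tabulated ones to two decimal places. Normalizing by the full 300 km line length instead would shrink each error by a factor of 100/p for a fault at p percent of the line, which does not match the table.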

| Section | Topic | RTS/HIL Type | Interfaces and Protocols | Hardware and Equipment |
|---|---|---|---|---|
| Section 2.2.1 | Power amplifier characterization for RTS | PHIL | Analog I/O | RTSM: OPAL OP5700; PA: AMETEK |
| Section 2.2.2 | Stability and accuracy comparison for different interfacing methods | PHIL [12] | Analog I/O | RTSM: OPAL OP5600; PA: AMETEK RS90 |
| Section 2.2.3 | Quasi-dynamic PHIL | PHIL/PSIL * | IA: ITM; Digital/Soft I/O | RTSM: Workstation; PA: Studer XTM 4000, Electroinvent ELDI |
| Section 2.2.4 | Quasi-static PHIL | PHIL/PSIL * [16] | IA: ITM; Digital/Soft I/O | RTSM: RTDS; PA: ABB PCS100 SFC |
| Section 3.2.1 | HIL validation of fault locator accuracy in distance protection scheme | CHIL | Analog I/O; IEC 61850-9-2 SV | RTSM: RTDS; Signal Amp: Omicron |
| Section 3.2.2 | Distance protection relay type testing framework | CHIL [26] | Analog I/O; Digital I/O; IEC 61850; IEC 60255-121 | RTSM: OPAL OP5600 |
| Section 3.2.3 | Adaptive protection with HIL | PHIL [20,21] | Analog I/O; IEC 61850 SV; C37.118; GOOSE | RTSM: OPAL OP5600; PA: AMETEK RS90 |
| Section 3.2.4 | Fault modeling and validation between simulation tools | PHIL [25] | IA: ITM, DIM; Analog I/O; OpenDSS | RTSM: OPAL OP5600; PA: AMETEK RS90 |
| Section 3.2.5 | Wide Area Controller HIL testing for Power System Oscillation Damping | CHIL [30] | C37.118; GPS; Analog I/O | WAC: Raspberry Pi; PMU: NI cRIO Open PMU, NI9467; PDC: SEL-5073 PDC |
| Section 4.2.1 | Integrated PHIL and laboratory testing for microgrid controller | PHIL [33,34] | IA: ITM; Analog I/O | RTSM: RTDS NovaCore; PA: SanRex 500 kVA; DAS: Yokogawa WT3000E/WT1800; DuT: NK-EMS; Load: SanRex RLC bank |
| Section 4.2.2 | Droop frequency control of stand-alone multi-microgrid system with HIL | CHIL [43] | Analog I/O; Digital I/O | RTSM: OPAL OP5600; Control unit: OP8665 |
| Section 4.2.3 | Microgrid re-synch with PMU measurements | CHIL | C37.118; GPS | RTSM: RTDS/GTNET |
| Section 4.2.4 | Distributed coordination control in hybrid AC/DC MG with RCP | PHIL [44] | Analog I/O; Digital I/O; Modbus TCP/IP | RTSM: OPAL OP4510 |
| Section 4.2.5 | Design and validation of a rule-based microgrid controller | CHIL [37] | IEC 61850 | RTSM: OPAL OP4510; MGC: SEL 3360 |
| Section 4.2.6 | Decentralized microgrid control systems | CHIL [38] | IEC 61850 GOOSE | RTSM: OPAL OP5600; Raspberry Pi |
| | | CHIL [39,40] | Modbus; C37.118 | RTSM: OPAL OP031 + OPAL OP5607; TI F28377S, BeagleBone Black boards |
| Section 4.2.7 | Microgrid controller development with an Advanced Testing Chain methodology | CHIL/PHIL/PSIL * [55] | Analog I/O; Modbus TCP/IP; IA: DIM | RTSM: OPAL OP5600; PA: AMETEK RS90; DAS: DEWETRON 800; Current source inverter: SMA SCS500 |
| Section 4.2.8 | Generic microgrid controller development, testing, and validation | CHIL [41] | IEC 61850 GOOSE; DNP3 | RTSM: OPAL OP5600; Load control: SEL 3505 RTAC; MGC: ETAP |
| Section 4.2.9 | CHIL for grid-support functions of inverters | CHIL [45,48,49] | Analog I/O; Modbus TCP/IP | RTSM: Typhoon HIL602; DuT: AIT SGC |
| Section 4.2.10 | PHIL smart inverter testing with megawatt-scale grid simulator | PHIL | IA: ITM; Analog I/O | RTSM: RTDS NovaCore; PA: SanRex 5 MVA; DAS: HIOKI PW6001, MR8827 |
| Section 4.2.11 | CHIL for validation of unintentional islanding | CHIL [50,51] | Analog I/O; Modbus TCP/IP | RTSM: Typhoon HIL602; DuT: AIT SGC |
| Section 5.2.1 | Asynchronous integration of RTSM with co-simulation platforms | CHIL [59] | Co-Sim: Lablink; UDP | RTSM: OPAL OP5600; Gateway: Raspberry Pi |
| | | CHIL [60] | Co-Sim: OpSim; async. TCP/IP | RTSM: OPAL OP5600 |
| Section 5.2.2 | Co-simulation of cyber-physical systems | CHIL [61] | IEC 61850; Co-Sim: TCP/IP virtual link with EXata CPS | RTSM: OPAL OP4510 |
| | | CHIL [62,63] | IEC 61850; Co-Sim: Ethernet with OPNET | RTSM: OPAL OP4510 |
| Section 5.2.3 | SCEPTRE: suite of tools providing an ICS co-simulation environment | CHIL/PHIL [64,65] | Real TCP/IP packets running over a simulated network; physical interfaces to the network can be presented to users/equipment | RTSM: custom power simulation running in PowerWorld Dynamics Studio; PA: AMETEK RS180 |
| Section 5.2.4 | Electric vehicle/charging station integration testing | CHIL [66] | Co-Sim: MATLAB and IEC 61850 SV sender (commercial software) | RTSM: RTDS/GTNET |
| Section 6.2.1 | GD-CHIL for advanced validation of a distributed control algorithm | GD-CHIL [72] | UDP; TCP/IP | RTSM: OPAL OP5600; Controller: Raspberry Pi |
| Section 6.2.2 | Delay assessment for geographically distributed CHIL experiment | GD-CHIL [75] | Co-Sim: OpSim message bus architecture; TCP/IP | RTSM: OPAL OP5600; Controller: coordinated voltage control in MATLAB |
| Section 6.2.3 | GD-PHIL for testing of a voltage controller | GD-PHIL/PSIL * [80] | IA: ITM; UDP | RTSM: RTDS; DuT: lead-acid battery |
| Section 6.2.4 | Global RT SuperLab | GD-PHIL/GD-CHIL/PSIL * [76] | IA: multiple; Comm. protocol: VILLASnode | RTSM: OPAL OP5600, Typhoon HIL, RTDS; RS: multiple |
| Section 7.2.1 | Compliance testing of a hybrid UPS according to JEC2433-2016 | CHIL [85] | Analog I/O; Digital I/O; JEC2433-2016 | RTSM: OPAL OP5600; RCP: custom |
| Section 7.2.2 | Development of a PMU pre-certification platform | CHIL [82] | Analog I/O; Digital I/O | PMU: Vizimax PMU; Signal Amp: Omicron |
| Section 7.2.3 | Factory acceptance tests based on HIL testing | CHIL [86,87] | Analog I/O; Digital I/O | RTSM: SGI Altix UV300s parallel computers; Controller: ABB HVDC controller |
| | | CHIL [88] | Analog I/O; Digital I/O | RTSM: SGI UV100 parallel computers; Controller: static var compensator controllers |
| | | CHIL [89] | Analog I/O; Digital I/O | RTSM: OPAL OP5600 + OP7020; Controller: HVDC MMC controller |
| Section 7.2.4 | System Validation Platform HIL-based grid code testing aspects of DER inverter | PHIL [47] | IA: ITM, DIM; Analog I/O | RTSM: OPAL OP5700; PA: AMETEK |
| | | CHIL [47] | Analog I/O; Digital I/O | RTSM: Typhoon HIL602; DuT: AIT SGC |
| Section 7.2.5 | HIL for marine electrical power systems (MEPS) | PHIL [90] | IA: ITM, DIM; Analog I/O | RTSM: RTDS; PA: Triphase PM90 |

| Section | Topic | Reports in Literature |
|---|---|---|
| Section 2 | Interfacing methods of PHIL, CHIL, and PSIL simulation | [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15] |
| Section 3 | HIL testing of power system protection and control | [17,18,19,20,21,22,23,24,25,26,27,28,29,30,31] |
| Section 4 | HIL testing of smart grid/microgrid controllers, energy management systems, and power electronic converters | [28,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46,47,48,49,50,51,52,53,54,55,56,95] |
| Section 5 | HIL co-simulation and CPES | [58,59,60,61,62,63,64,65,66] |
| Section 6 | Geographically distributed HIL and RTS | [14,59,67,68,69,70,71,72,73,74,75,76,77,78,79,80] |
| Section 7 | Industrial experiences and HIL in standardized testing | [14,47,56,81,82,83,84,85,86,87,88,89,90,91,92,93,94] |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Montoya, J.; Brandl, R.; Vishwanath, K.; Johnson, J.; Darbali-Zamora, R.; Summers, A.; Hashimoto, J.; Kikusato, H.; Ustun, T.S.; Ninad, N.; Apablaza-Arancibia, E.; Bérard, J.-P.; Rivard, M.; Ali, S.Q.; Obushevs, A.; Heussen, K.; Stanev, R.; Guillo-Sansano, E.; Syed, M.H.; ...; Bründlinger, R. Advanced Laboratory Testing Methods Using Real-Time Simulation and Hardware-in-the-Loop Techniques: A Survey of Smart Grid International Research Facility Network Activities. Energies 2020, 13, 3267. https://doi.org/10.3390/en13123267