Data-Driven Decentralized Algorithm for Wind Farm Control with Population-Games Assistance

Abstract

1. Introduction

- the proposed algorithm uses historical information on the system evolution to compute multiple gradient-estimation directions in a decentralized fashion for each individual wind turbine, i.e., the turbines do not need to share information with one another, and

- the proposed approach produces global solutions because the total generated power is available.

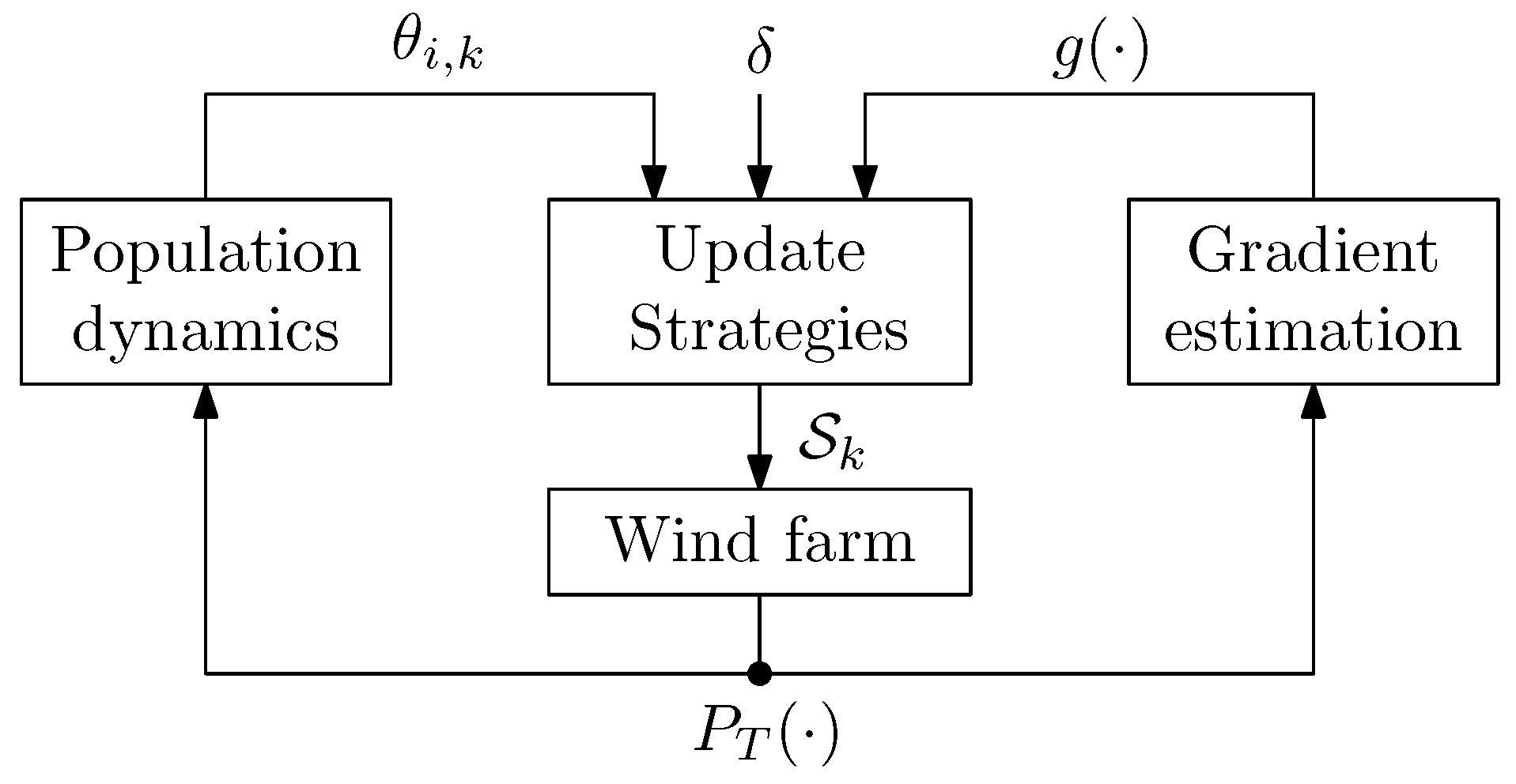

Notation

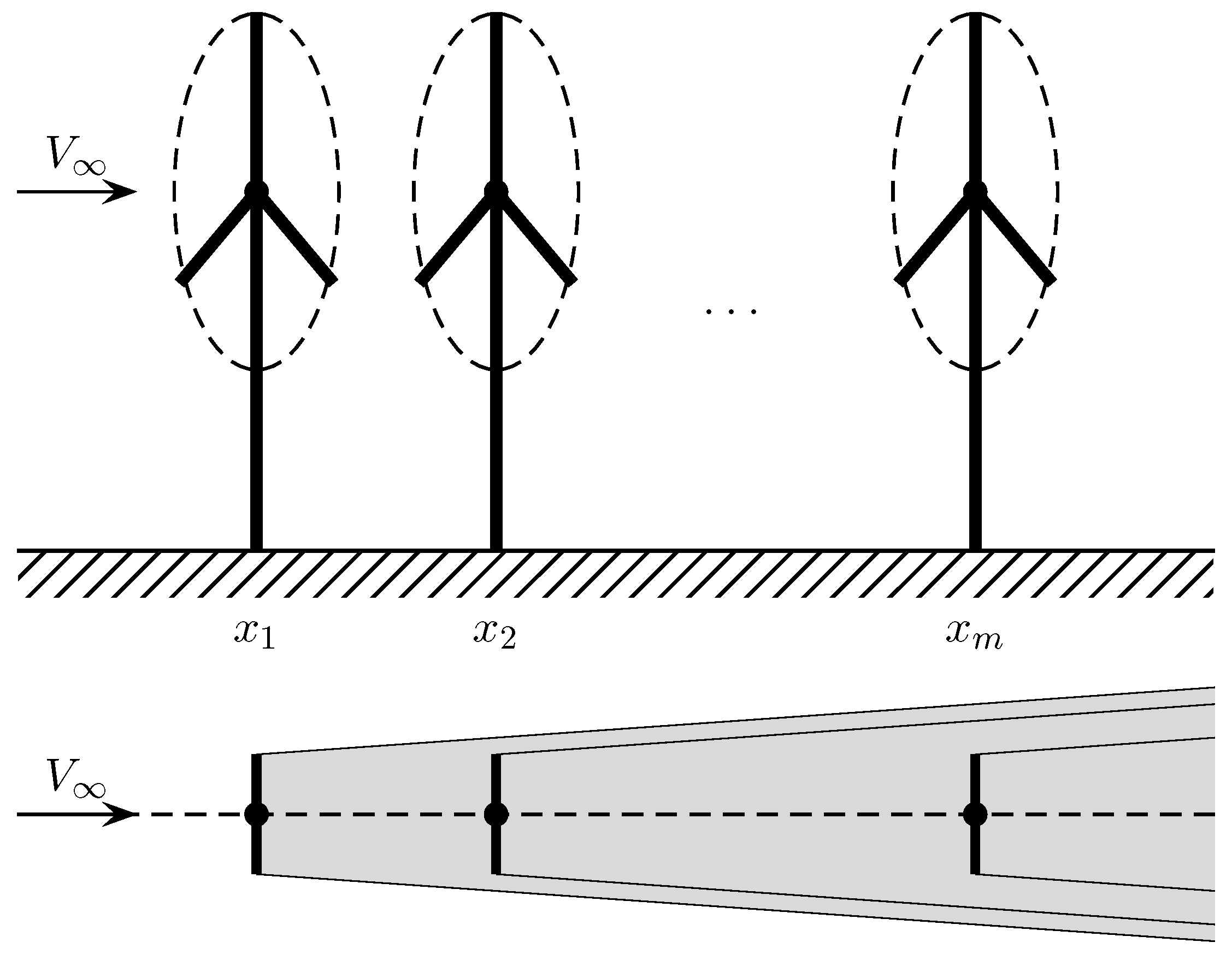

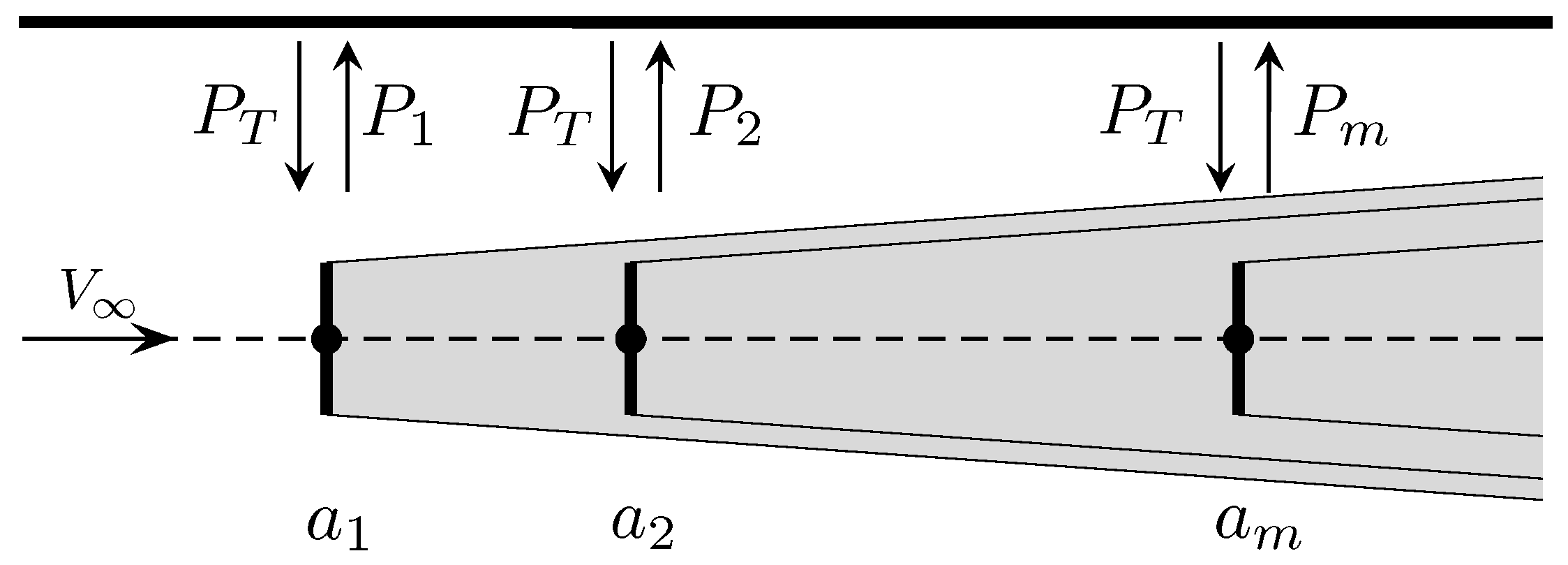

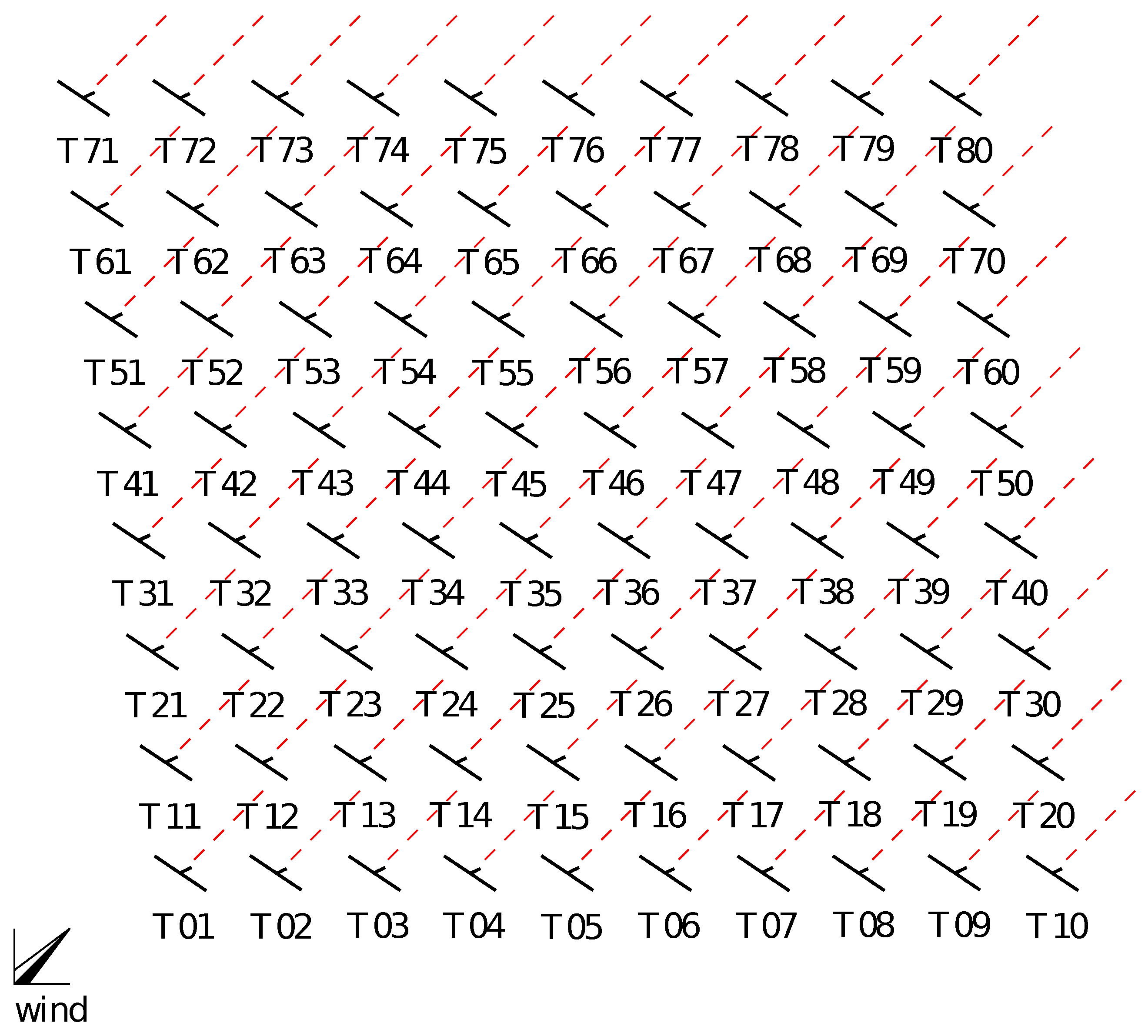

2. Problem Statement

3. Preliminary Concepts

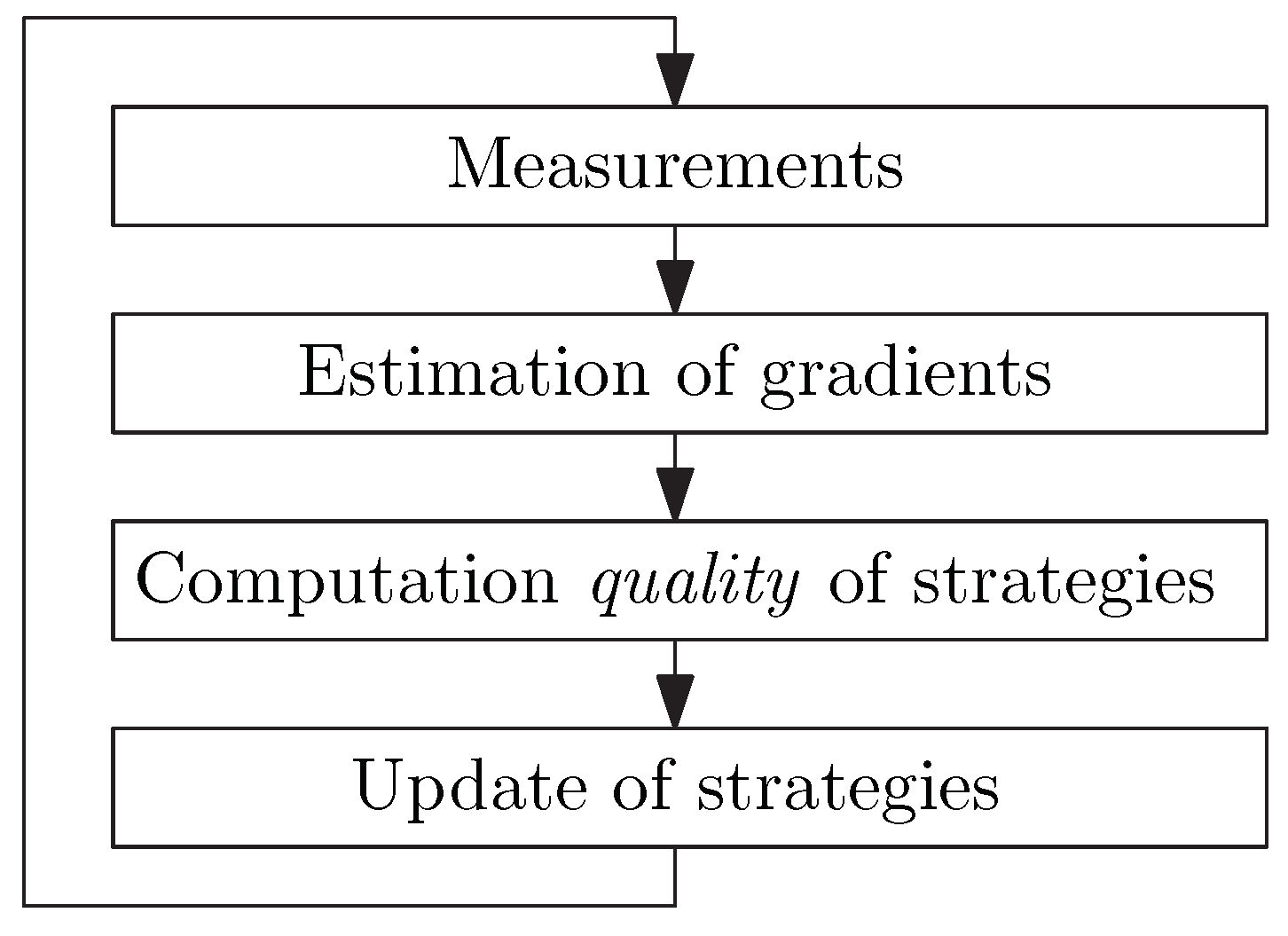

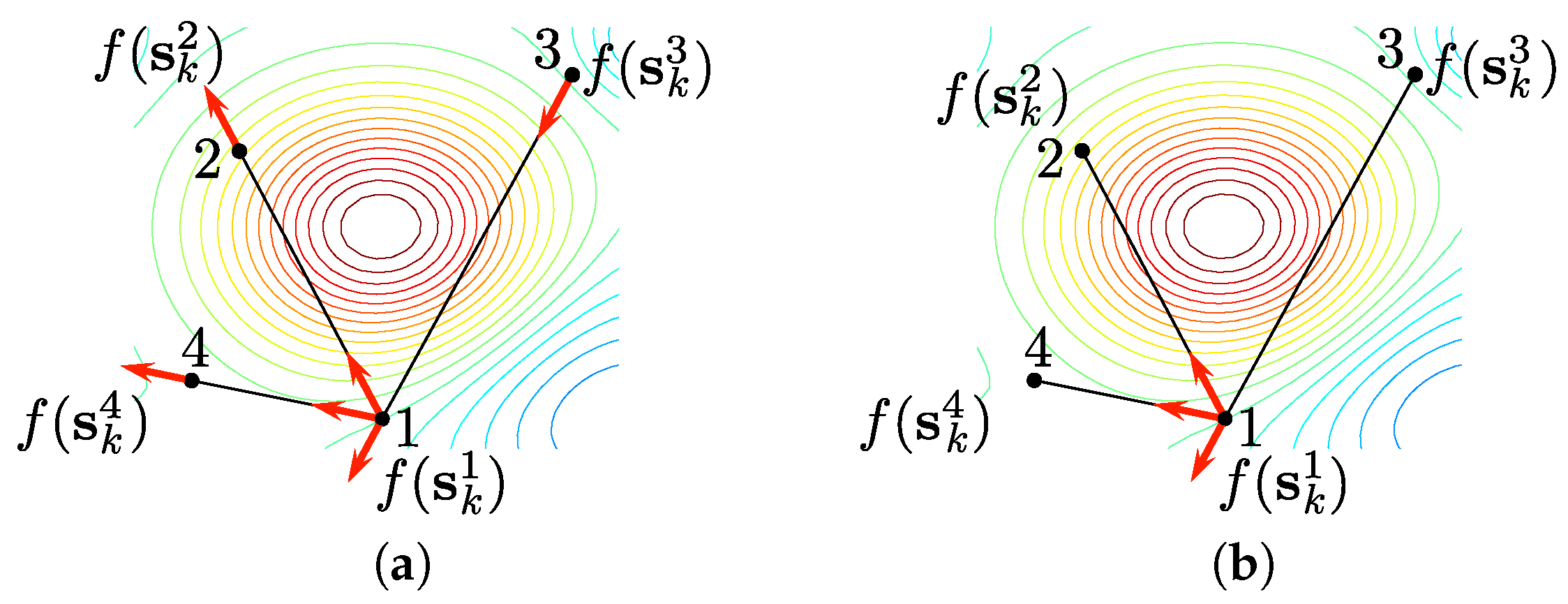

3.1. Gradient Estimation

1. to be able to capture measurements of the unknown function f, and

2. to know the correspondence between each measurement and the element in the domain of f, i.e., for every measurement, the corresponding element in the domain of f is known.
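The two requirements above can be illustrated with a minimal sketch. It is not the estimator used in this paper; it is a generic secant-style estimate that assumes n + 1 measurements of f are available together with the points of the domain at which they were taken. The function names `solve_linear` and `estimate_gradient` are hypothetical and introduced only for illustration.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix, copied so that the inputs are left untouched.
    m = [[float(v) for v in row] + [float(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("measurement points do not span the domain")
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(col + 1, n):
            factor = m[row][col] / m[col][col]
            for k in range(col, n + 1):
                m[row][k] -= factor * m[col][k]
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        tail = sum(m[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (m[row][n] - tail) / m[row][row]
    return x


def estimate_gradient(points, values):
    """Estimate the gradient of an unknown f near points[0].

    points: n + 1 elements of the domain of f (each of dimension n).
    values: the measurement of f taken at each of those points.
    Assumption: f is approximately affine over the sampled region, so
    (x_i - x_0) . g = f(x_i) - f(x_0) holds for every measurement i.
    """
    x0, f0 = points[0], values[0]
    n = len(x0)
    if len(points) != n + 1 or len(values) != n + 1:
        raise ValueError("need exactly n + 1 measurements")
    dx = [[xi - x0i for xi, x0i in zip(p, x0)] for p in points[1:]]
    df = [v - f0 for v in values[1:]]
    return solve_linear(dx, df)


# Measurements of f(x) = 3*x1 - 2*x2 + 1, treated as unknown by the estimator.
points = [(0.0, 0.0), (0.1, 0.0), (0.05, 0.1)]
values = [1.0, 1.3, 0.95]
gradient = estimate_gradient(points, values)  # approximately [3.0, -2.0]
```

Without the second requirement the differences `dx` could not be formed, which is why each measurement must be paired with its element in the domain of f.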

3.2. Population-Game Role

4. Algorithms According to Information Availability

4.1. Using Multiple Measurements at Each Iteration

4.2. Using a Single Measurement at Each Iteration

5. Data-Driven Decentralized Control of Wind Farms

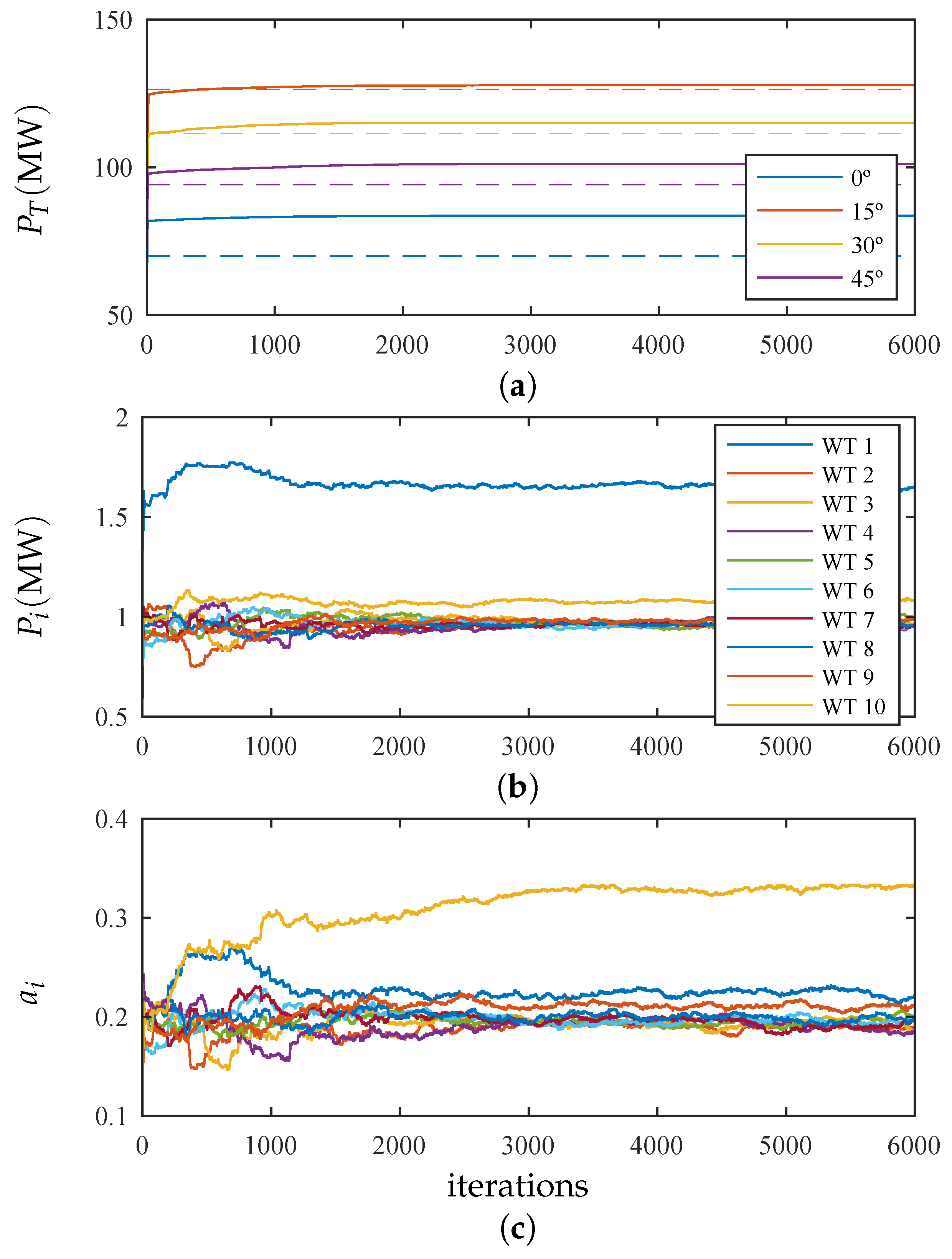

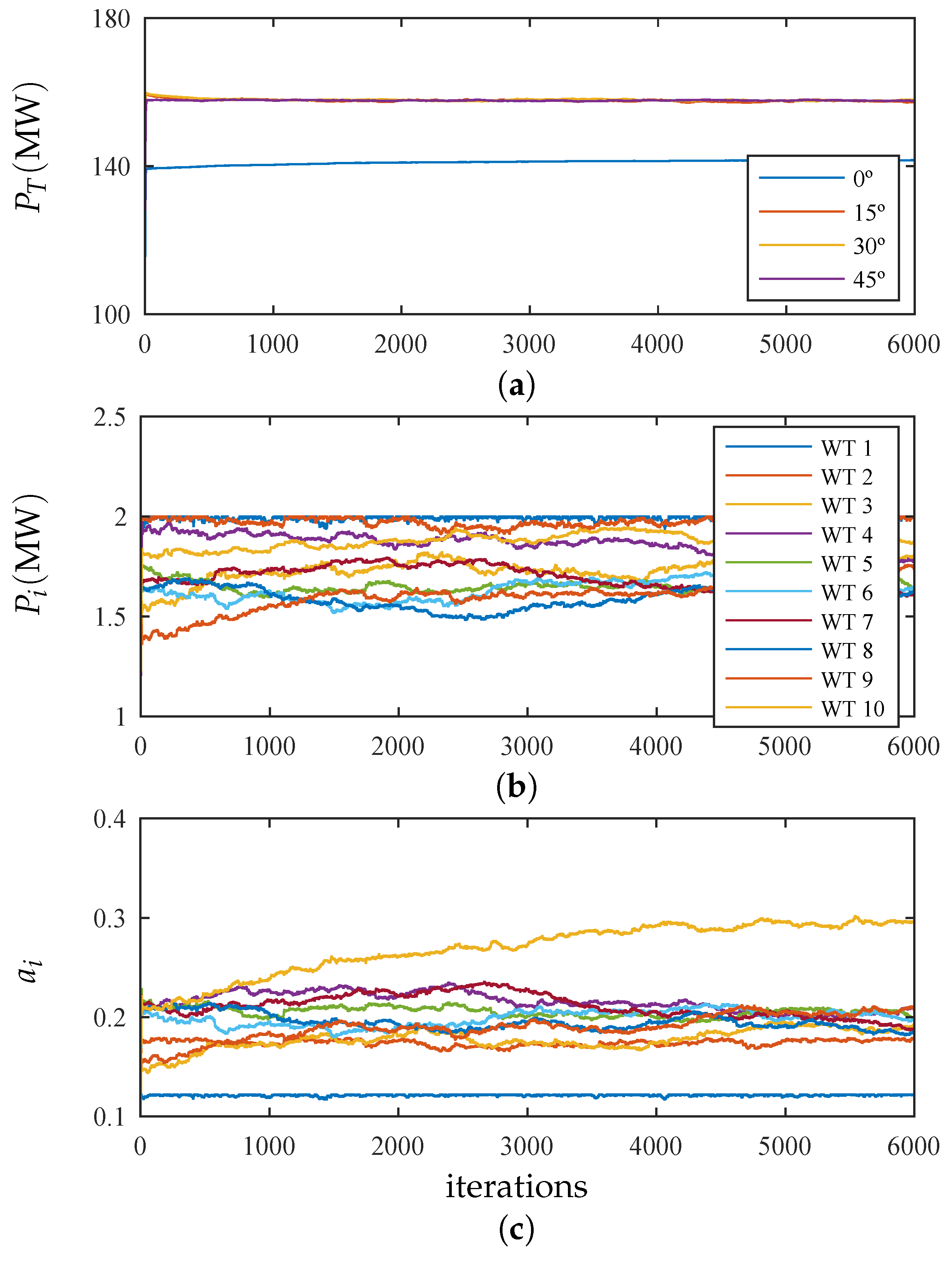

6. Case Study and Simulation Results

- Scenario 1: the free-stream wind speed was below the rated value and all wind turbines were operating to maximize energy capture.

- Scenario 2: the free-stream wind speed was above the rated value and some turbines were operating in power limitation (at 2 MW).
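The difference between the two scenarios can be sketched with the standard aerodynamic power expression, saturated at the 2 MW limit stated above. Only the 2 MW value comes from the scenario description; the air density, rotor radius, power coefficient, and the local wind speeds below are assumed values chosen for illustration, and the function names are hypothetical.

```python
import math

RATED_POWER_W = 2.0e6    # 2 MW limit, as stated in Scenario 2
AIR_DENSITY = 1.225      # kg/m^3 (assumed)
ROTOR_RADIUS_M = 40.0    # m (assumed)
POWER_COEFF = 0.45       # dimensionless (assumed)


def turbine_power(wind_speed):
    """Power in watts: 0.5 * rho * A * Cp * v^3, capped at the rated power."""
    area = math.pi * ROTOR_RADIUS_M ** 2
    available = 0.5 * AIR_DENSITY * area * POWER_COEFF * wind_speed ** 3
    return min(available, RATED_POWER_W)


def limited_turbines(local_wind_speeds):
    """Indices of the turbines that operate in power limitation."""
    return [i for i, v in enumerate(local_wind_speeds)
            if turbine_power(v) >= RATED_POWER_W]


# Hypothetical local wind speeds (m/s); downstream turbines see lower
# speeds because of wake effects.
below_rated = [8.0, 7.0, 6.5]
above_rated = [12.0, 10.0, 8.0]

scenario_1_limited = limited_turbines(below_rated)  # no turbine is limited
scenario_2_limited = limited_turbines(above_rated)  # only the first turbine
```

With these assumed parameters only the most upstream turbine reaches the limit in the second case, which matches the statement that some, but not all, turbines operate in power limitation.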

7. Conclusions

Author Contributions

Funding

Conflicts of Interest


© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Barreiro-Gomez, J.; Ocampo-Martinez, C.; Bianchi, F.D.; Quijano, N. Data-Driven Decentralized Algorithm for Wind Farm Control with Population-Games Assistance. Energies 2019, 12, 1164. https://doi.org/10.3390/en12061164