Abstract

To address the tendency of velocity ideal trajectory profile optimization algorithms for automatic train operation (ATO) to fall into local convergence, an improved multi-objective hybrid optimization algorithm using a comprehensive learning strategy (ICLHOA) is proposed. Firstly, an improved particle swarm optimization algorithm that adopts multiple particle optimization models is proposed to avoid the loss of population diversity caused by a single optimization model. Secondly, to avoid random and blind searching during iterative computation, chaotic mapping and a reverse learning mechanism are introduced into the improved whale optimization algorithm. Thirdly, an improved archive mechanism is used to store the non-dominated solutions found during optimization, and a fusion distance is used to maintain the diversity of the elite set. Fourthly, a dual-population evolutionary mechanism that uses the archive as an information communication medium is designed to enhance the global convergence of the hybrid optimization algorithm. Finally, the optimization results on benchmark functions show that ICLHOA significantly outperforms the other algorithms used for comparison. Furthermore, the ATO Matlab/Simulink simulation and hardware-in-the-loop simulation (HILS) results show that ICLHOA achieves a better optimization effect than the traditional optimization algorithms and other improved algorithms.

1. Introduction

Rail transit offers large transport volume, energy saving, environmental protection, comfort, safety, punctuality, reliability, speed and convenience. Vigorously developing rail transit is therefore important for improving people’s living standards. The application of an automatic train operation (ATO) system can greatly improve the operating efficiency of rail transit. Automatic velocity adjustment during train operation keeps the train in its optimal working state as much as possible. Velocity adjustment is therefore the core unit of ATO and an important component of the train optimization and control system [1,2]. The ATO system has a function module for target velocity trajectory profile optimization, which depends on multi-objective optimization of the train operation process. It produces a target velocity trajectory profile that satisfies the decision maker while taking into account optimization indexes such as energy consumption, comfort, parking accuracy and running time.

An ATO control system that can give a precise control sequence for any given train parameters, line conditions, constraints and optimization objectives has been the goal of researchers for many years. However, because the optimization of the train operation process is multi-objective and nonlinear, with large lag and a large search space, an ATO control system using a traditional optimization algorithm has difficulty finding the optimal control sequence among the numerous candidate sequences. Various optimized control schemes have been proposed in recent work on the ATO control strategy. In [3], a method is proposed to design robust and efficient speed profiles to be programmed in the ATO equipment of a metro line. This design method takes both running time and energy consumption into account, and the experimental results show that it is more robust than the prior optimization technique. The main contributions of [4] are a method for the optimal design of automatic train driving that considers the energy regenerated by the trains to the electrical network, and an accurate assessment of the energy savings associated with investments in equipment to improve the use of regenerative energy. In [5], a nonlinear programming method is proposed for optimizing the energy-saving speed trajectory of the following train, and the simulation results show that the new method is efficient in saving energy. In [6], a new energy-efficient train operation model based on real-time traffic information is proposed from a geometric viewpoint. In contrast to most existing methods, the proposed model turns out to be a small-scale problem, and some complex computational processes can be avoided. 
The novelty of [7] lies in the establishment of a multiple-model-based switching optimization framework that reduces energy consumption while guaranteeing punctuality during train tracking operation. In [8], a fuzzy predictive control (FPC) approach is designed to reduce locomotive energy consumption by continuously providing locomotive operation instructions for Chinese mainline railways; the method is applied to an on-board auxiliary driving system that assists drivers. The auxiliary system was tested on the Ning’xi line in China, where locomotive energy consumption declined by 4%. A robust control algorithm with adaptive control rate is proposed, which can estimate unknown parameters of the closed-loop system online. To cope with actuator saturation, another robust adaptive control is proposed for the ATO system. The simulation results show the effectiveness of the two control algorithms [9]. Building on ATO research into optimizing an energy-efficient speed profile and designing control algorithms to track it, two intelligent train operation (ITO) algorithms that use neither precise train model information nor offline optimized speed profiles are proposed [10]. A predictive train rescheduling model is proposed that incorporates the model predictive control (MPC) mechanism and a non-analytical prediction model. Numerical experiments demonstrate the efficiency of the proposed methodology for synergistic, safe and efficient train operation [11]. The multi-objective optimization feature information is first transformed into an association function, and matter-element theory is then introduced to model the speed trajectory, so that the multi-objective optimization remains compatible with knowledge-based safety constraints. 
Taking Shanghai Railway Transit equipment in China as a case study, the experimental results show that the improved algorithm is superior to the traditional algorithms on optimization indicators such as comfort, stability, energy saving and precise parking [12]. A new optimal strategy for the train operation process is proposed, which consists of offline global optimization and online local optimization. The performance of the algorithm is tested with CRH-3 on a high-speed railway. Under the same conditions, the actual speed deviation can be corrected in a timely manner with the proposed method [13]. A speed trajectory profile optimization method for ATO of the Chinese Train Control System (CTCS) is designed. To obtain the Pareto frontier, a hybrid evolutionary algorithm based on the differential evolution and simulated annealing algorithms is designed and applied to solve the model [14].

As the literature listed above shows, many important contributions have already been made. However, the application of some research findings is often restricted to a specific ATO scenario, which has limited the popularization and use of those results. Furthermore, some research results show that the actual optimization effect of train operation often falls short of the ideal expectation obtained from the train operation simulation model [15]. In the optimal design of the ideal train speed trajectory profile, three important factors should not be ignored. First, the multi-objective optimization of the train operation process is an extremely complex nonlinear optimization problem that is affected by many constraints and parameters. Multiple optimization objectives must be taken into account in ATO, but these objectives conflict with one another and are not measured on a common scale. The solution set of the problem contains an infinite number of feasible solutions, which requires an intelligent optimization algorithm with excellent global convergence performance. Secondly, there is an obvious difference between the train operation simulation model and the actual situation. In actual operation there are many factors such as inaccurate sensor data collection, transmission delay and limited tracking control, but these factors are either completely ignored or only partially considered in simulation. It is therefore necessary to provide an optimization simulation platform that truly reflects the actual running process of the train. Thirdly, due to restricted research conditions, many of the findings obtained so far are based on a specific ATO scenario. General-purpose optimization design algorithms for the ATO velocity ideal trajectory profile are rarely considered.

In response to the problem that existing intelligent optimization algorithms and their improved variants easily fall into local convergence, a hybrid optimization algorithm based on a comprehensive learning strategy is proposed in this paper. Improved particle swarm optimization using a comprehensive learning strategy (CLPSO) and the whale optimization algorithm (WOA) have attracted considerable attention from scholars because of their strong optimization performance, and considerable research results have been obtained so far. A PCLPSO (parallel comprehensive learning particle swarm optimizer) is proposed in [16]. By changing the topological structure of CLPSO, the population is divided into several subgroups for parallel calculation, which gives fast convergence and high computational efficiency. A MOCLPSO (multi-objective comprehensive learning particle swarm optimizer), which combines the Pareto dominance mechanism with the CLPSO algorithm, is proposed in [17]; its external archive of non-dominated solutions is used to improve the optimization performance of the algorithm. An improved LWOA (Lévy flight trajectory-based whale optimization algorithm) is proposed in [18]. The Lévy flight trajectory helps maintain the population diversity of the algorithm and thus enhances its ability to avoid local convergence. A chaos whale optimization algorithm (CWOA) is proposed to optimize the Elman neural network; it is an improved WOA that uses chaotic features to improve the diversity of the population [19]. WOA is introduced and applied to solve a practical problem in [20].

To address the problem that existing operation simulation models reflect actual operation only to a low degree, an automatic train operation hardware-in-the-loop simulation that includes an optimization loop and a control loop is used in this paper to verify the optimization performance. Since the hardware-in-the-loop simulation (HILS) platform contains the core hardware and physical devices of the real scene, it can provide a simulation environment close to the real scene. It is extremely difficult to replace with other simulation platforms, so it is highly valued by researchers and developers, whose work on HILS-related products and technologies has produced numerous research results [21,22,23]. The improved multi-objective hybrid optimization algorithm using a comprehensive learning strategy (ICLHOA) proposed in this paper is a hybrid optimization algorithm for parallel computing that combines two improved algorithms based on the comprehensive learning strategy: an improved particle swarm optimization (ICLPSO) and an improved whale optimization algorithm (ICLWOA). The improved elite archiving mechanism serves as the medium through which the two improved algorithms exchange information. Their advantages are as follows:

(1) ICLPSO contains a variety of optimization modes, which can, to a certain extent, mitigate the loss of population diversity caused by PSO’s single optimization mode, in which particles learn from very few individuals.

(2) ICLWOA can enhance WOA’s global optimization performance to some extent by introducing chaotic mapping and a reverse learning mechanism.

(3) ICLHOA uses an elite archiving mechanism to enhance the global convergence of the hybrid optimization algorithm, with the elite archive set serving as the information communication medium. The elite archiving mechanism uses a fusion distance (fusing the Mahalanobis distance and the Euclidean distance) as the distance measure, which effectively prevents individuals from aggregating within the population and thus maintains population diversity, providing better guidance toward global convergence.

In order to verify the performance of ICLHOA, the proposed algorithm and other intelligent optimization algorithms are applied to benchmark functions and used to optimize the ideal train speed trajectory in three settings: Matlab/Simulink, HILS isolating the ‘control loop’ that contains physical hardware devices (ICL-HILS), and HILS retaining the ‘control loop’ that contains physical hardware devices (RCL-HILS). The optimization results show that the proposed algorithm finds better solutions and has better global optimization performance.

2. Optimization Model for Train Operation Process

2.1. Constraints for Train Operation Process

In order to ensure the train operation process is safe and stable, many constraints such as the dynamic equation of train motion, force characteristics, velocity limitation, etc. should be taken into account in the train operation process [24,25,26].

2.1.1. Train Dynamical Model

The dynamic equation of train operation is as follows:

where t represents the actual running time of the train; s denotes the actual position of the train; M is the mass of the train, , represents the rotating mass factor, represents the weight of the train; and are tractive and braking force of the train; is the additional resistance of the train; u represents the train control mode sequence. The control modes include maximum traction, partial traction, idle running, partial braking and maximum braking, which are represented by .

2.1.2. Boundary Constraint

The boundary constraint of train operation is as follows:

where and represents the velocity and distance in the initial state ; and represents the velocity and distance in the terminal state ; stands for the total distance between and ; represents the total time.

2.1.3. Position Variable Constraint

The switching positions corresponding to successive working conditions should be in increasing order.

where represents the j-th inflection position of train control sequence; k represents the number of inflection point for control sequence.

2.1.4. Velocity Limit Constraint

In order to ensure the safety of train operation and prevent accidents such as derailment, the real-time velocity limit is needed.

where represents the maximum running velocity allowed by each subinterval; is inflexion point of subinterval related to the line, it represents the starting point of the -th subinterval or the terminal point of the j-th subinterval.

2.1.5. Characteristic Constraints of Traction and Braking Forces

In general, the traction and braking force characteristic curve of an urban rail vehicle is partitioned into three regions for design: the constant torque region, the constant power region, and the power reduction region (natural characteristic region). In the constant torque region, the traction power (braking power) of the vehicle is proportional to the velocity, and the traction force (braking force) is maximum and constant. In the constant power region, the traction power (braking power) is maximum and constant, and the traction force (braking force) is inversely proportional to the instantaneous velocity. In the power reduction region, the traction force (braking force) is inversely proportional to the square of the instantaneous velocity. In addition, in the braking start-up region, the braking force is proportional to the velocity. The traction force (braking force) is set manually according to the requirements of urban rail transit, and in theory the actual traction force should equal the designed value. In practice, due to the ageing of the traction motor, the dry running line, the wear and tear of wheels and so on, the actual dynamic characteristic curve still differs from the designed curve.

where and represent the actual instantaneous traction force and braking force at the train speed v; and represent the designed instantaneous traction force and braking force at the train speed v; and represents the maximum designed traction force and braking force at the train speed v; and represent the maximum designed traction power and braking power at the train speed v; and respectively represent the inflexion velocities of the train in the constant power region; and represent the inflexion velocities of the train in the power reduction region; represents the maximum train velocity; represents the inflexion velocity of the train in constant torque region.
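The three design regions described above can be illustrated with a minimal sketch. The function name, the argument names `v1` and `v2` (the speeds at which the constant torque and constant power regions end), and the choice to make the curve continuous at both transition speeds are assumptions made for illustration; they are not taken from the paper's constraint formula.

```python
def designed_traction_force(v, f_max, v1, v2):
    """Designed traction force at speed v for a three-region characteristic.

    f_max: maximum force in the constant torque region (hypothetical name)
    v1:    speed where the constant torque region ends (hypothetical name)
    v2:    speed where the constant power region ends (hypothetical name)
    """
    if v < 0 or not (0 < v1 <= v2):
        raise ValueError("require v >= 0 and 0 < v1 <= v2")
    if v <= v1:
        # Constant torque region: force is maximum and constant.
        return f_max
    p_max = f_max * v1  # power reached at the end of the constant torque region
    if v <= v2:
        # Constant power region: force is inversely proportional to speed.
        return p_max / v
    # Power reduction region: force is inversely proportional to speed squared,
    # scaled so that the curve is continuous at v2 (an assumption).
    return p_max * v2 / (v * v)
```

The same shape can be reused for the braking characteristic by passing the maximum braking force instead of `f_max`.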

2.1.6. Constraints of Running Resistance

The running resistance of trains can be divided into basic resistance and additional resistance. In general, unit resistance is used to measure the resistance, which is the resistance of a vehicle per unit weight. The basic resistance is related to the instantaneous velocity in the train operation process. Bearing resistance accounts for a large proportion at low velocity. The proportion of sliding resistance between wheel and rail, impact vibration resistance and air resistance gradually increase with the increase of velocity. At present, unit basic resistance can be expressed in the form of quadratic function of train velocity.

where a, b, c are some parameters related to vehicle type; , , .

Additional resistance is the resistance produced under specific conditions in train operation process. Additional resistance mainly depends on line conditions. Additional resistance includes slope additional resistance, curve additional resistance, tunnel additional resistance and other resistances. These additional resistances exist alone or coexist in a variety of ways.

The component of gravity along the track direction is the additional resistance of the ramp in the train operation process. The unit ramp resistance is approximately equal in numerical value to the gradient i of the corresponding slope.

where d is a parameter related to the line. In general, .

The resistance caused by the increase in friction when the train enters a curved track is called curve additional resistance. The curve additional resistance is inversely proportional to the curve radius.

where R is the curve radius, e is a parameter related to the line. In general, .
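As a rough illustration of the resistance terms described in this subsection, the sketch below evaluates a quadratic unit basic resistance and a unit additional resistance made up of a ramp term and a curve term. The linear form `d * slope` for the ramp term is an assumption (the text only states that the ramp resistance is close to the slope value and that d is a line parameter), and all coefficients must be supplied by the caller; no values from the paper are reproduced here.

```python
def unit_basic_resistance(v, a, b, c):
    """Unit basic resistance as a quadratic function of train velocity v."""
    return a + b * v + c * v * v


def unit_additional_resistance(slope, curve_radius, d, e):
    """Unit additional resistance from ramp and curve (tunnel term omitted).

    slope:        gradient i of the current ramp (negative when descending)
    curve_radius: radius R of the current curve, or None on straight track
    d, e:         line-related parameters, supplied by the caller
    """
    ramp = d * slope  # assumed linear in the gradient
    curve = e / curve_radius if curve_radius else 0.0  # inversely proportional to R
    return ramp + curve


def unit_total_resistance(v, slope, curve_radius, a, b, c, d, e):
    """Sum of the unit basic resistance and the unit additional resistance."""
    return unit_basic_resistance(v, a, b, c) + unit_additional_resistance(
        slope, curve_radius, d, e
    )
```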

2.2. Multi-Objective Optimization Model for Train Operation Process

The optimization of train operation process is a complex nonlinear problem, which has many input and output variables. So, taking the energy consumption, comfort, punctuality and parking accuracy as the optimization objectives, and combining the dynamic equation and other constraints, the multi-objective optimization model of train operation process is established as follows:

where K represents the comprehensive performance index by the traction force during the train operation; represents the energy consumed by the traction force during the train operation, represents the resistance of the i-th condition; represents the position of the i-th condition; represents the acceleration of of the -th condition; represents comfort level of passengers; represents the absolute value of the difference between the actual running time and the prescribed running time; represents the actual running time; represents the prescribed running time; represents the cumulative absolute value sum of the difference between the actual running distance and the prescribed running distance of all stations; represents the actual running distance between the -th station and -th station; represents the actual running time between the -th station and -th station; represents the distance between the -th station and -th station, that is, the prescribed running distance; represents number of stations; p represents the inflection points’ position of train control sequence u.
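To make the four optimization indexes concrete, the following sketch evaluates them for a trajectory that has already been split into operating conditions. The exact expressions are assumptions chosen to match the verbal definitions above (energy as traction force times distance per condition, comfort as the accumulated change in acceleration between consecutive conditions, and absolute time and distance errors); the paper's own formulas may weight or normalize these terms differently.

```python
def operation_indices(positions, traction_forces, accelerations,
                      t_actual, t_prescribed, station_distances):
    """Return (energy, comfort, time_error, parking_error) for one run.

    positions:         start position of each condition plus the final position
    traction_forces:   traction force applied during each condition
    accelerations:     acceleration of each condition
    station_distances: (actual, prescribed) running distance per station pair
    """
    # Energy: work done by the traction force over each condition's distance.
    energy = sum(f * (s1 - s0)
                 for f, s0, s1 in zip(traction_forces, positions, positions[1:]))
    # Comfort: accumulated absolute change in acceleration (smaller is better).
    comfort = sum(abs(a1 - a0)
                  for a0, a1 in zip(accelerations, accelerations[1:]))
    # Punctuality: deviation from the prescribed running time.
    time_error = abs(t_actual - t_prescribed)
    # Parking accuracy: accumulated stopping error over all station pairs.
    parking_error = sum(abs(actual - prescribed)
                        for actual, prescribed in station_distances)
    return energy, comfort, time_error, parking_error
```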

2.3. Coding Design for Train Operation Process

The coding is used to transform the solution space of the problem into the searching space that the intelligent algorithm can handle. Real number coding is very intuitive and can save coding and decoding operations, so real number coding is adopted in this paper. The number of variables of the solution should be determined before coding. If there are too many variables to be solved, the search space will be too large. At this time, the algorithm will take a long time and the optimal solution will not be easily found. Therefore, the following coding mechanism is used to solve the above problem.

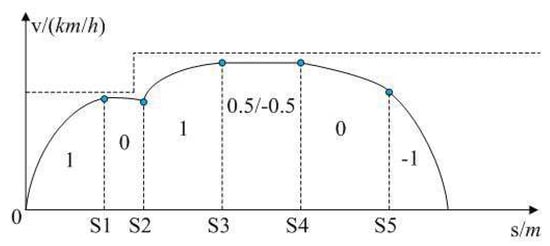

(1) There is no static speed limit falling interval and the corresponding diagram of train operation mode (recorded as train operation mode 1) is shown in Figure 1.

Figure 1.

Diagram of train operation mode 1.

Trains leave the station with maximum traction, so the maximum traction mode is omitted from the solution, which is set as shown below:

The second part of solution X represents the inflection position of the train control condition, and the first part of that is the working mode (control condition) of the corresponding position.

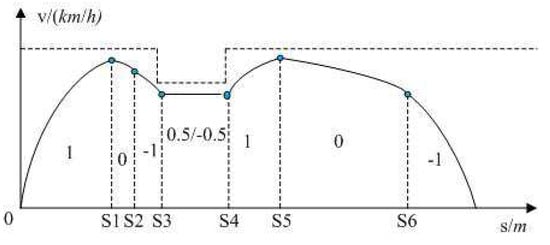

(2) There is a static speed limit falling interval and the corresponding diagram of train operation mode (recorded as train operation mode 2) is shown in Figure 2.

Figure 2.

Diagram of train operation mode 2.

The solution is set as shown below:

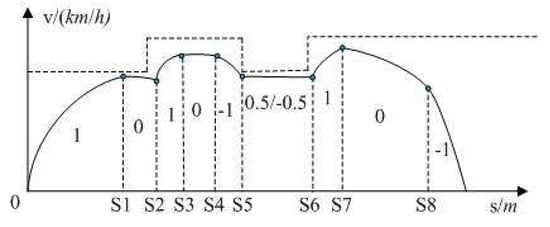

(3) There are static speed limit falling and rising intervals and the corresponding diagram of train operation mode (recorded as train operation mode 3) is shown in Figure 3.

Figure 3.

Diagram of train operation mode 3.

The solution is set as shown below:

Any complex speed limit interval can be composed of the above three basic speed limit intervals, and the coding mechanisms of three basic speed intervals are also applicable to any complex speed limit interval. Based on previous research results, the following conclusion can be obtained. When the train keeps an idle running condition or a constant speed running condition as much as possible, the energy consumed by train is the least.
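The real-number coding described in this section, in which the first part of a solution holds working modes and the second part holds the corresponding inflection positions, can be decoded with a short sketch such as the one below. The equal split of the vector into two halves is an assumption about the layout, and the function name is hypothetical; the increasing-order check follows the position variable constraint of Section 2.1.3.

```python
def decode_solution(x):
    """Split a real-coded solution into (position, mode) switching points.

    Assumes x = [mode_1, ..., mode_k, position_1, ..., position_k],
    where each mode is a numeric code for a control condition.
    """
    if len(x) % 2 != 0:
        raise ValueError("solution must hold one mode per inflection position")
    k = len(x) // 2
    modes, positions = x[:k], x[k:]
    # Position variable constraint: inflection positions must keep increasing.
    if any(p1 <= p0 for p0, p1 in zip(positions, positions[1:])):
        raise ValueError("inflection positions must be strictly increasing")
    return list(zip(positions, modes))
```

A candidate that violates the ordering can be repaired (for example by sorting the position half) or rejected before its objective values are evaluated; which option suits the optimizer is a design choice not fixed by the text.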

3. Decomposition and Basic Algorithm

3.1. Decomposition

The aggregate function based on Tchebycheff decomposition is as follows [27]:

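$$g^{te}(x \mid \lambda, z^*) = \max_{1 \le j \le m} \lambda_j \left| f_j(x) - z_j^* \right|$$

where m is the number of optimization indexes; $z^* = (z_1^*, \ldots, z_m^*)$ is the reference point, whose component $z_j^*$ is the best value of the j-th objective function found so far; $\lambda = (\lambda_1, \ldots, \lambda_m)$ is the weight vector of the i-th individual, with $\lambda_j \ge 0$ and $\sum_{j=1}^{m} \lambda_j = 1$.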
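The Tchebycheff aggregate value is simple to compute once the objective vector, weight vector and reference point are available. The sketch below uses the commonly published form of the Tchebycheff approach for minimization problems; the function and argument names are illustrative.

```python
def tchebycheff(objectives, weights, reference):
    """Tchebycheff aggregate value: max_j weights[j] * |objectives[j] - reference[j]|."""
    if not (len(objectives) == len(weights) == len(reference)):
        raise ValueError("all three vectors must have the same length")
    return max(w * abs(f - z) for f, w, z in zip(objectives, weights, reference))


def update_reference(reference, objectives):
    """Keep the best (smallest) value seen so far for each objective."""
    return [min(z, f) for z, f in zip(reference, objectives)]
```

A smaller aggregate value means the individual is closer to the reference point along the direction set by its weight vector, which is how two individuals can be compared under the same weights.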

3.2. Particle Swarm Optimization

Particle swarm optimization (PSO) is a heuristic algorithm for optimization problems. In PSO, each particle $x_i$ is an individual, and N individuals form a population. The population evolves by updating the velocity and the position of each particle as follows:

$$v_i^d(t+1) = w\,v_i^d(t) + c_1 r_1^d \left( pbest_i^d - x_i^d(t) \right) + c_2 r_2^d \left( gbest^d - x_i^d(t) \right)$$

$$x_i^d(t+1) = x_i^d(t) + v_i^d(t+1)$$

where w is the inertia weight coefficient, which represents the influence of the previous velocity on the next velocity; $c_1$ and $c_2$ are the learning factors, which allow the particles to learn from their own historical experience and from the best individual in the group, so that each particle moves toward the best particle in the group or neighborhood while balancing local search and global search; $r_1^d$ and $r_2^d$ are random numbers in $[0, 1]$; $pbest_i$ is the best position of particle i so far; $gbest$ is the global optimal particle; d indexes the dimensions of particle i, $d = 1, 2, \ldots, D$.
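The velocity and position update described here can be sketched for a single particle as follows. This follows the standard textbook form of PSO; the default values of `w`, `c1` and `c2` are placeholders for illustration, not settings reported in the paper.

```python
import random


def pso_step(x, v, pbest, gbest, w=0.7, c1=1.5, c2=1.5, rng=random):
    """One PSO update of a particle; returns (new_position, new_velocity)."""
    new_x, new_v = [], []
    for d in range(len(x)):
        r1, r2 = rng.random(), rng.random()  # fresh random numbers per dimension
        vd = (w * v[d]
              + c1 * r1 * (pbest[d] - x[d])   # pull toward the particle's own best
              + c2 * r2 * (gbest[d] - x[d]))  # pull toward the global best
        new_v.append(vd)
        new_x.append(x[d] + vd)
    return new_x, new_v
```

When a particle already sits on both its personal best and the global best, only the inertia term remains, which is one way to see why a swarm that collapses onto gbest loses its ability to explore.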

3.3. Whale Optimization Algorithm

Whale optimization algorithm (WOA) is a new heuristic optimization algorithm proposed to imitate the hunting behavior of humpback whales. The algorithm mainly includes three stages: surrounding prey, bubble hunting and searching for prey [28]. The humpback whale hunting behavior is shown in Figure 4.

Figure 4.

Diagram of humpback whale hunting behavior.

The mathematical model of surrounding prey is as follows:

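$$D = \left| C \cdot X^*(t) - X(t) \right|$$

$$X(t+1) = X^*(t) - A \cdot D$$

where t is the iteration number; A and C are coefficients; $X(t)$ is the current position vector of the whale; $X^*(t)$ is the best position vector found over all iterations to date. A and C are obtained from the following formulas: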

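$$A = 2a \cdot r_1 - a, \qquad C = 2 \cdot r_2, \qquad a = 2 - \frac{2t}{t_{\max}}$$

where $r_1$ and $r_2$ are random numbers in $[0, 1]$; the value of a decreases linearly from 2 to 0; $t_{\max}$ is the maximum number of iterations.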

The mathematical model of bubble hunting is as follows:

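$$X(t+1) = D' \cdot e^{bl} \cos(2\pi l) + X^*(t), \qquad D' = \left| X^*(t) - X(t) \right|$$

where $D'$ is the distance between the whale and its prey; b is the constant that defines the shape of the spiral, with a value of 1; l is a random number in $[-1, 1]$.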

It is worth noting that whales swim toward the prey along a spiral trajectory while simultaneously shrinking their encirclement. To model this synchronous behavior, it is assumed that the shrinking encirclement mechanism and the spiral model are each selected with a probability of 0.5 to update the whale’s position. Therefore, the position updating formula of the basic whale optimization algorithm can be described as:

$$X(t+1) = \begin{cases} X^*(t) - A \cdot D, & p < 0.5 \\ D' \cdot e^{bl} \cos(2\pi l) + X^*(t), & p \ge 0.5 \end{cases}$$

where D and $D'$ are the distances used in the encirclement and spiral models, respectively; p is a random number in $[0, 1]$; A is a random value in $[-a, a]$. If the value of A is in the interval $[-1, 1]$, the whale’s next position is somewhere between its present position and the position of its prey.

The mathematical model of searching for prey is as follows:

$$D = \left| C \cdot X_{rand}(t) - X(t) \right|$$

$$X(t+1) = X_{rand}(t) - A \cdot D$$

where $X_{rand}$ is a randomly selected position vector of a whale. When $|A| > 1$, the algorithm randomly selects a search leader and updates the positions of the other whales according to the location of that leader, which steers the whale away from its current prey to find a more suitable one.
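The three stages of the basic WOA (surrounding prey, bubble hunting and searching for prey) can be combined into one position update. The sketch below follows the commonly published form of the algorithm [28], with the equal 0.5 selection probability and the linear decrease of a from 2 to 0 taken from that standard form. For brevity, one scalar A and one scalar C are drawn per whale rather than per dimension, which is a simplification; the function name and arguments are illustrative.

```python
import math
import random


def woa_update(x, best, population, t, t_max, b=1.0, rng=random):
    """Return the next position of whale x under the basic WOA rules."""
    a = 2.0 - 2.0 * t / t_max           # decreases linearly from 2 to 0
    A = 2.0 * a * rng.random() - a      # random value in [-a, a]
    C = 2.0 * rng.random()
    if rng.random() < 0.5:
        # |A| < 1: shrink toward the best whale; otherwise follow a random leader.
        leader = best if abs(A) < 1.0 else rng.choice(population)
        return [ld - A * abs(C * ld - xd) for ld, xd in zip(leader, x)]
    # Spiral (bubble hunting) move around the best whale.
    l = rng.uniform(-1.0, 1.0)
    spiral = math.exp(b * l) * math.cos(2.0 * math.pi * l)
    return [abs(bd - xd) * spiral + bd for bd, xd in zip(best, x)]
```

Early in the run a is close to 2, so |A| often exceeds 1 and the random-leader branch encourages exploration; as a approaches 0, every move contracts toward the best position found so far.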

4. Hybrid Optimization Algorithm Using a Comprehensive Learning Strategy

4.1. Improved CLPSO

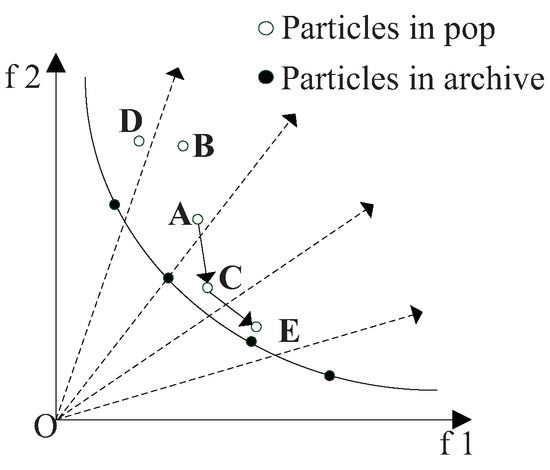

CLPSO is an improved variant of PSO that can more easily escape local optima. In traditional PSO, gbest plays a key role in the evolution, so if gbest is trapped in a local optimum, the entire population is trapped too. CLPSO therefore updates particle velocities mainly by learning from pbest rather than gbest, as shown in Figure 5. The particle velocity update formula of CLPSO is as follows [29]:

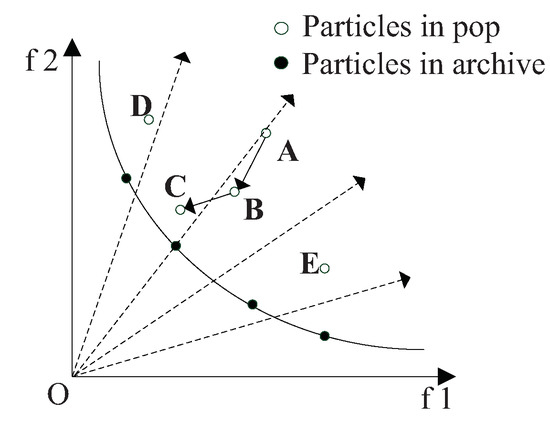

$$v_i^d \leftarrow w\,v_i^d + c \cdot r_i^d \left( pbest_{f_i(d)}^d - x_i^d \right)$$

where $f_i(d)$ is the template chosen from the population, which has been sorted by the aggregate function based on Tchebycheff decomposition, and $pbest_{f_i(d)}^d$ is the best value of the chosen particle in dimension d. In the ideal state, the decomposition method generates N direction vectors uniformly in the multi-objective solution space, dividing the problem into N subproblems, and each particle is updated based on its direction vector.

In Figure 5 and Figure 6, the dotted lines represent the direction vectors that partition the objective space. The solid lines represent the true frontier of the function. The solid dots represent the particles of the elite archive, and the hollow dots represent the particles of the population. In the update process, A is the position at the previous moment, B is the position at the current moment, and C is the position at the next moment. The individual optimal value update mode of CLPSO is shown in Figure 5, where the optimal individual particle guides the search of the whole population. To update the individual optimal value, a decomposition method must be adopted to scalarize the multi-objective problem; the Tchebycheff method is used to construct the aggregation function in this paper. The aggregate value of each particle is calculated, and the particle with the better aggregate value is retained through a binary tournament. The global optimal value update method of traditional PSO is shown in Figure 6. In Figure 6, the global optimal value of the swarm is used to guide the movement of the particles, and it is updated using the information of the neighborhood particles.

Figure 5.

Diagram of particle update by particle swarm optimization using a comprehensive learning strategy (CLPSO).

Figure 6.

Diagram of particle update by traditional particle swarm optimization (PSO).

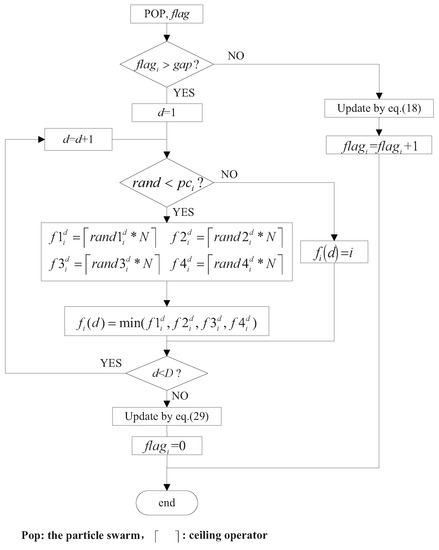

Similar to traditional PSO, CLPSO tends to converge locally to an individual extreme value, because the diversity of the population is clearly damaged by its single learning optimization model. In this paper, an improved particle swarm optimization algorithm based on the comprehensive learning strategy is proposed, which is denoted as ICLPSO. The flow diagram of its particle update is shown in Figure 7.

Figure 7.

Flow chart of Improved CLPSO particles’ update.

In Figure 7, $P_c$ is the learning probability, which determines whether a particle is updated based on template particles. As the number of iterations increases, the probability of the swarm falling into a local optimum gradually increases. To counter this, the learning probability should also increase gradually. The formula of the learning probability is as follows:

If the i-th particle cannot be effectively improved over consecutive iterations (the refresh interval is generally 2), the particle learns by using formula 29 (CLPSO’s individual optimal value updating method). If $rand > P_c$, where rand is a random number in $[0, 1]$, the corresponding dimension learns from the particle’s own pbest; otherwise, it learns from the pbest of a template particle. To obtain better diversity when handling complex multi-objective problems (MOPs), the best of four particles randomly chosen from the template is used as the template pbest.
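The per-dimension choice between a particle's own pbest and a template pbest can be sketched as below. The learning probability is passed in as `pc` because its formula is not reproduced here, and `pbest_scores` is assumed to hold each particle's aggregate (Tchebycheff) value, with smaller values being better. The function name and arguments are illustrative.

```python
import random


def choose_exemplars(i, pbest_scores, pc, dim, tournament=4, rng=random):
    """For each dimension, return the index of the particle whose pbest
    particle i learns from."""
    n = len(pbest_scores)
    exemplars = []
    for _ in range(dim):
        if rng.random() > pc:
            # Learn from the particle's own pbest in this dimension.
            exemplars.append(i)
        else:
            # Pick the best of several randomly drawn template particles.
            candidates = rng.sample(range(n), min(tournament, n))
            exemplars.append(min(candidates, key=lambda j: pbest_scores[j]))
    return exemplars
```

The returned indices play the role of the template in the CLPSO velocity update: dimension d of particle i is pulled toward dimension d of the selected particle's pbest.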

4.2. Improved CLWOA

The whale optimization algorithm, which simulates whale foraging, is an efficient optimization algorithm, but it has the disadvantage of easily falling into local convergence in later iterations [30]. Therefore, this paper proposes an improved WOA (ICLWOA) that incorporates two learning strategies. The specific improvements are described below.

(1) Parameter adjustment based on chaos mapping

In the whale optimization algorithm, A and C are important parameters that affect the searching ability of the algorithm to some extent. In the traditional whale optimization algorithm, these parameters are generated in an excessively random manner, which reduces the convergence speed of the algorithm and makes parts of the search area ineffective in the later period of operation.

Chaos is a common phenomenon in nonlinear systems. Its evolution appears disordered, but it in fact has inherent regularity and can traverse all states within a certain range according to its own law. The behavior of a chaotic map is complex and resembles random motion. Compared with random motion, however, it is ergodic, which can make up for the shortcomings of random motion in achieving global optimization. Therefore, chaos theory is applied to WOA in this paper to improve WOA’s ability to explore and create new individuals [31].

By using chaos mapping to adjust the parameters, the range of random search of the parameters is reduced and their regularity is increased. In this way, the algorithm avoids falling into local optima during the search while also avoiding the slow convergence and invalid search areas caused by random blind search.
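One way to realize chaotic parameter adjustment is to replace the uniform random numbers used for A and C with successive values of a chaotic sequence. The sketch below uses the logistic map purely as an example; the choice of map, the function names and the state-passing style are assumptions, since this section does not specify them.

```python
def logistic_map(z, mu=4.0):
    """One iteration of the logistic map; fully chaotic on (0, 1) for mu = 4."""
    return mu * z * (1.0 - z)


def chaotic_coefficients(z1, z2, a):
    """Advance two chaotic states and derive the WOA coefficients from them.

    Returns (A, C, z1_next, z2_next). The seeds must avoid values such as
    0, 0.25, 0.5, 0.75 and 1, which lead to fixed points of the map.
    """
    z1, z2 = logistic_map(z1), logistic_map(z2)
    A = 2.0 * a * z1 - a   # same form as A = 2a*r1 - a, with r1 replaced by z1
    C = 2.0 * z2           # same form as C = 2*r2, with r2 replaced by z2
    return A, C, z1, z2
```

Because the sequence is deterministic yet ergodic on (0, 1), A still covers the interval [-a, a] over time, but without the clustering that independent random draws can produce over a short run.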

(2) Boundary processing measures based on reverse learning

During optimization, an individual may ‘overflow’ the search scope when it is updated. The usual remedy is to randomly regenerate the individuals that cross the boundary. However, if a large number of individuals exceed the boundary, this wastes search resources. Moreover, if the position of an out-of-bounds individual is randomly reset, the beneficial information obtained in its previous iterations is lost. A reverse learning strategy can generate a reverse individual far away from the local optimum. Therefore, this paper uses the reverse learning strategy to guide the whale quickly back into the decision space that needs to be searched.

In the calculation process, if the i-th individual is over the boundary, then the reverse learning strategy is adopted for the previous individual . The specific formula is as follows:

where is the reverse solution of the previous individual ; and are respectively the maximum and minimum value of solution; , as a generalization coefficient, which can prevent individuals from excessive escape.
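The boundary handling described above can be sketched as follows. This is a minimal illustration that assumes the generalized opposition form x_rev = k·(x_max + x_min) − x_prev, with a final clamp to the bounds; the coefficient name `k` and the function name are hypothetical, and the exact expression is the one given by the paper's formula.

```python
def reverse_learning_repair(prev_position, new_position, lower, upper, k=1.0):
    """Return a feasible position for an individual after an update.

    If the updated position is inside the bounds it is kept. Otherwise the
    reverse (opposite) point of the previous position is returned, so the
    information carried by the previous iterate is not discarded.
    """
    in_bounds = all(lo <= x <= hi
                    for x, lo, hi in zip(new_position, lower, upper))
    if in_bounds:
        return list(new_position)
    # Assumed generalized opposition: k scales the centre of reflection.
    reverse = [k * (lo + hi) - x
               for x, lo, hi in zip(prev_position, lower, upper)]
    # Clamp in case the chosen k pushes the reverse point outside the bounds.
    return [min(max(x, lo), hi) for x, lo, hi in zip(reverse, lower, upper)]


repaired = reverse_learning_repair(
    prev_position=[1.0, 4.0], new_position=[6.5, 4.2],
    lower=[0.0, 0.0], upper=[5.0, 5.0])
```

In this example the update leaves the box [0, 5] × [0, 5] in the first coordinate, so the individual is replaced by the reflection of its previous position about the centre of the box.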

4.3. Improved Archive Mechanism

In a multi-objective optimization problem, the quality of two individuals cannot be compared directly. In this paper, the Tchebycheff decomposition method is selected to scalarize the multi-objective problem, and the aggregation function is used to compare individuals. For a multi-objective optimization algorithm based on decomposition, the reference point (elite individual) in the aggregation function plays a significant role in guiding the convergence direction of the population. To make better use of the information from each generation, an external elite archive set, recorded as Archive, is used to store the beneficial information of the population after each update. Most of the existing literature adds the updated non-dominated individuals of each generation to the archive, and so does this paper. As the number of iterations increases, more and more non-dominated individuals are obtained. If all of them were kept in the archive, the computational burden of the algorithm would increase greatly [32]. Therefore, the elite archive set needs to be kept within a certain size. To maintain the diversity of the elite archive set, this paper deletes the individuals in denser regions by calculating the distance among the individuals in the archive. Traditional optimization algorithms generally adopt the Euclidean distance, which is simple to define and easy to calculate, as the distance measure. However, the Euclidean distance has two weaknesses. First, it depends on the dimensions (units) of the variables, so its actual meaning is difficult to interpret. Second, it does not take the distribution of the samples into account, so the correlation between variables cannot be measured. It is simply the straight-line distance between samples, which is insufficient for multivariate data analysis. Therefore, this paper uses a fusion distance, which fuses the Mahalanobis distance and the Euclidean distance, as the distance measure for maintaining the archive size. When the archive exceeds the predetermined size, the fusion distance of each individual is calculated and the individual with the smallest fusion distance is deleted, until the archive size returns to the predetermined value. The fusion distance is calculated as follows:

where represents fusion distance; represents Mahalanobis distance; represents the correlation coefficient matrix of sample set Y; n represents the number of samples in Y; represents the samples in Y; represents the correlation coefficient. Since the Mahalanobis distance takes into account the correlation between variables, it uses the weight with relevant information to fuse, while the Euclidean distance uses to fuse. Therefore, the fusion distance takes into account both the correlation between variables and the independence between variables [33].
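As a rough illustration of how a Mahalanobis distance and a Euclidean distance might be fused, the sketch below forms a convex combination weighted by the mean absolute correlation coefficient of the sample set. Both the weighting rule and the use of the sample covariance matrix are assumptions made for this example, not necessarily the paper's formula, and all function names are hypothetical. The sample covariance matrix is assumed to be non-singular.

```python
import math


def covariance_matrix(samples):
    """Unbiased sample covariance matrix of a list of equal-length vectors."""
    n = len(samples)
    mean = [sum(col) / n for col in zip(*samples)]
    dim = len(mean)
    cov = [[0.0] * dim for _ in range(dim)]
    for row in samples:
        for i in range(dim):
            for j in range(dim):
                cov[i][j] += (row[i] - mean[i]) * (row[j] - mean[j]) / (n - 1)
    return cov


def solve(matrix, rhs):
    """Solve matrix * z = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(matrix[i]) + [rhs[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * z[j] for j in range(i + 1, n))
        z[i] = (aug[i][n] - tail) / aug[i][i]
    return z


def euclidean(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def mahalanobis(x, y, cov):
    """sqrt((x - y)^T cov^{-1} (x - y)), computed without an explicit inverse."""
    diff = [a - b for a, b in zip(x, y)]
    z = solve(cov, diff)
    return math.sqrt(max(0.0, sum(d * v for d, v in zip(diff, z))))


def correlation_weight(cov):
    """Mean absolute off-diagonal correlation coefficient (assumed weight)."""
    dim = len(cov)
    if dim < 2:
        return 0.0
    total = sum(abs(cov[i][j]) / math.sqrt(cov[i][i] * cov[j][j])
                for i in range(dim) for j in range(dim) if i != j)
    return total / (dim * (dim - 1))


def fusion_distance(x, y, samples):
    """Assumed fusion: w * Mahalanobis + (1 - w) * Euclidean."""
    cov = covariance_matrix(samples)
    w = correlation_weight(cov)
    return w * mahalanobis(x, y, cov) + (1.0 - w) * euclidean(x, y)
```

With this weighting, uncorrelated samples give w = 0 and the measure reduces to the Euclidean distance, while strongly correlated samples shift the weight towards the Mahalanobis term.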

Both PSO and WOA are optimization algorithms in which the population learns mainly from the optimal individual or a few elite individuals. This model of directional learning always has a relatively fixed evolutionary direction, which is not conducive to maintaining population diversity. The genetic algorithm has obvious advantages over PSO and WOA in maintaining population diversity, because it produces a large number of widely differing new solutions through selection, crossover and mutation. Therefore, this paper introduces the genetic evolution mechanism into the optimization algorithm to better maintain its population diversity. However, if the genetic algorithm is introduced blindly and excessively, it will destroy, on a large scale, the favorable information that individuals have obtained through long-term iterative learning, which is not conducive to optimization. Based on the genetic algorithm and the elite archive set, this paper proposes the following improvement strategy to prevent individuals from aggregating and thus maintain the diversity of the population; it is denoted as the improved elite archiving set mechanism (IESM). The specific steps are as follows:

Step 1: add the updated non-dominated individuals in each generation to the elite archive set;

Step 2: determine whether the size of the archive exceeds the predetermined value . If the condition is not true, skip step 3 and perform step 4.

Step 3: calculate the fusion distance between each individual to the archive (formula 34), and delete the individual with a smaller fusion distance until the size of the archive is maintained at a predetermined value;

Step 4: calculate the fusion distance radius of the elite individuals. The radius is the maximum distance between each individual in the elite archive set and the archive;

Step 5: test whether the number of individuals in the archive range in the current population is greater than the threshold value . If the condition is not established, it indicates that there is no phenomenon of individuals gathering in the archive range in the population at this time. The fusion distance between the individual and the archive is less than the radius of the archive , which indicates that the individual is within the range of the elite archive set.

Step 6: use the selection, crossover and mutation operations of the genetic algorithm to reset the individuals that gather within the range of the elite archive set, until no individuals in the population gather within the archive range.
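Steps 2 to 5 can be sketched roughly as below. The sketch makes several assumptions that the text does not fix: crowding is measured by the distance to the nearest other archive member, the "distance between an individual and the archive" is taken as the distance to the archive centroid, and the Euclidean distance stands in for the fusion distance through the pluggable `dist` argument. All names are hypothetical, `max_size` is assumed to be at least 1, and the genetic reset of Step 6 is left out.

```python
import math


def euclidean(x, y):
    """Stand-in distance; a fusion distance could be passed in instead."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def prune_archive(archive, max_size, dist=euclidean):
    """Steps 2-3: remove the most crowded member until the archive fits."""
    archive = [list(p) for p in archive]
    while len(archive) > max_size:
        nearest = [min(dist(p, q) for j, q in enumerate(archive) if j != i)
                   for i, p in enumerate(archive)]
        archive.pop(nearest.index(min(nearest)))
    return archive


def centroid(points):
    return [sum(col) / len(points) for col in zip(*points)]


def archive_radius(archive, dist=euclidean):
    """Step 4: largest distance from an archive member to the centroid."""
    c = centroid(archive)
    return max(dist(p, c) for p in archive)


def crowded_individuals(population, archive, threshold, dist=euclidean):
    """Step 5: indices of individuals inside the archive range.

    Returns an empty list when the count does not exceed the threshold,
    meaning that no reset (Step 6) is needed.
    """
    c = centroid(archive)
    r = archive_radius(archive, dist)
    inside = [i for i, p in enumerate(population) if dist(p, c) < r]
    return inside if len(inside) > threshold else []


pruned = prune_archive([[0.0, 0.0], [0.1, 0.0], [1.0, 0.0], [2.0, 0.0]], max_size=3)
to_reset = crowded_individuals([[1.0, 0.0], [1.2, 0.0], [5.0, 5.0]], pruned, threshold=1)
```

The indices returned by `crowded_individuals` are the candidates that a genetic reset would act on in Step 6.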

Obviously, this mechanism has the following advantages:

(1) Keep the size of the archive no larger than the predetermined value ().

(2) Ensure that elite individuals are evenly distributed in the elite archive set.

(3) Suppress the archive ‘domination’ of the entire population by restricting the concentration of individuals in the archive () to enhance the global convergence performance of the hybrid optimization algorithm.

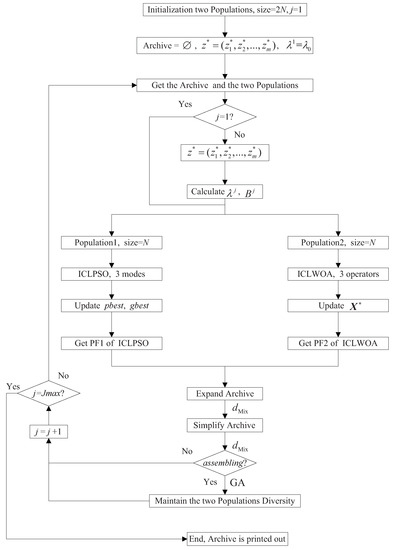

4.4. Design of Hybrid Optimization Algorithm

PSO and WOA have a fixed optimization mode, and this optimization mode with certain optimization direction will damage the diversity of the population to a certain extent. Therefore, this paper designs a hybrid optimization algorithm which uses the elite archive as the medium of information exchange. The intelligent evolution process of its hybrid optimization algorithm is denoted as IEP, and the specific IEP flow chart is shown in Figure 8.

Figure 8.

Flow chart of improved multi-objective hybrid optimization algorithm using a comprehensive learning strategy (ICLHOA).

As shown in Figure 8, the steps of the ICLHOA IEP are as follows:

Step 1:

(1) Initialize particle swarm (the size is N) and whale swarm (the size is N), including the velocity and position of each particle in the particle swarm, the Tchebycheff aggregation function value, and the individual optimal value, global optimal value of particle swarm, the Tchebycheff aggregation function value of each whale position in the whale group, the optimal whale position of the whale group . The current iteration number is 1, and the number of weight vectors in each neighborhood is T.

(2) To initialize the common elite archive set of particle swarm and whale swarm, let archive = ∅ and initialize the reference point , where , and m is the number of optimization objectives. A uniformly distributed weight vector set is generated and used in the initial iteration calculation of ICLPSO and ICLWOA. let , the elements in each row and column of the matrix are uniformly distributed.

Step 2:

(1) Archive and two populations (particle swarm and whale swarm) are obtained.

(2) If the current iteration number is greater than 1, recalculate the reference points , and . In the j-th iteration, the weight of the k-th optimization index of the i-th individual in the population is , , , and its calculation formula is as follows [34].

For any Pareto solution target in a continuous Pareto front, the weight vector is obtained according to the formula . The optimal solution of the single objective subproblem corresponding to the weight vector is the Pareto solution target . Because Pareto front is not easily available, it is replaced by the nearest solution target to in the archive. The angles between and the weight vectors of other individuals are calculated (), then take the smallest T weight vectors in to form the neighbor of .

(3) For each particle in the particle swarm, the particle velocity update process (Section 3.1) of ICLPSO is used to calculate the particle velocity and update the particle position. For each whale in the whale population, the whale location is updated according to the three stages of ICLWOA, and three additional learning strategies are adopted in the update process (Section 3.2).

(4) Update individual value and global optimal value based on the aggregate function’s value. If ( could be , or ), the and will be replaced by and .

(5) Obtain the Pareto front of the current particle population and the Pareto front of the whale population.

Step 3: information exchange based on the archive

(1) Expand the scale of the archive: extend the Pareto front of the current particle swarm and whale swarm to the elite archive. If there are the same individuals or dominated individuals, delete them until any two individuals in the elite archive are different and there is no dominated relationship.

(2) Maintain the size of the archive: if the size of the elite archive set exceeds the preset value, calculate the crowding distance between the elite solutions and delete the particle with the smallest crowding distance. If the size of the elite archive set still exceeds the preset value, continue to perform that until the size remains at the preset value. Fusion distance is used as the index of distance measurement.

(3) Restrict the concentration of the population in the archive area: if individuals in the population are clustered within the range of the archive, those individuals are partially reset by the selection, crossover and mutation operations of the genetic algorithm until no individuals are clustered within the range of the archive. To better retain the favorable information obtained by the individual's previous iterations, the partner for the crossover operation of the i-th individual in the j-th iteration is selected from the set of individuals corresponding to the neighbors of its weight vector. As in item (2), the fusion distance is adopted as the distance measure.

Step 4: if the maximum iteration number (or the maximum evaluation number) is reached, terminate the algorithm and output the archive set; otherwise return to Step 2.
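Two building blocks of the procedure above lend themselves to a short sketch: the Tchebycheff aggregation value used to compare individuals in Step 2, and the archive expansion of Step 3 (1), which merges the two Pareto fronts and removes duplicates and dominated individuals. The sketch assumes minimization and uses the standard weighted Tchebycheff form max_k w_k·|f_k − z_k|; the function names are hypothetical.

```python
def tchebycheff(objectives, weights, reference):
    """Weighted Tchebycheff aggregation value (smaller is better)."""
    return max(w * abs(f - z)
               for f, w, z in zip(objectives, weights, reference))


def dominates(f_a, f_b):
    """True if objective vector f_a Pareto-dominates f_b (minimization)."""
    no_worse = all(a <= b for a, b in zip(f_a, f_b))
    strictly_better = any(a < b for a, b in zip(f_a, f_b))
    return no_worse and strictly_better


def merge_into_archive(archive, candidates):
    """Union of archive and candidates without duplicates or dominated points."""
    pool = []
    for f in list(archive) + list(candidates):
        f = tuple(f)
        if f not in pool:  # drop identical individuals
            pool.append(f)
    return [f for f in pool if not any(dominates(g, f) for g in pool)]


archive = merge_into_archive(
    archive=[(1.0, 4.0), (3.0, 3.0)],
    candidates=[(2.0, 2.0), (1.0, 4.0), (4.0, 1.0), (3.0, 5.0)])
score = tchebycheff((2.0, 2.0), weights=(0.5, 0.5), reference=(1.0, 1.0))
```

After such a merge, the size control of Step 3 (2) would be applied to the returned list.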

5. The Experimental Simulation

5.1. Optimization Performance Analysis based on Standard Test Functions

In order to evaluate the performance of the proposed algorithm, six test functions were used in this paper [35,36]: the two-objective ZDT series (ZDT1, ZDT2 and ZDT3) and the three-objective DTLZ series (DTLZ1, DTLZ2 and DTLZ7). ICLHOA (the improved algorithm proposed in this paper) was compared with ICLWOA, ICLPSO, dMOPSO (multi-objective particle swarm optimization based on decomposition) [37], NSGAII (non-dominated sorting genetic algorithm II) [38] and MOEA/D (multi-objective evolutionary algorithm based on decomposition) [39]. ZDT1, ZDT2 and ZDT3 used 30 decision variables, and DTLZ1, DTLZ2 and DTLZ7 used seven, 12 and 22 decision variables, respectively. The specific parameters of the algorithm are as follows.

(1) ZDT function: the neighborhood size T was 10; the number m of targets was two; the number N of individuals was 100; the maximum number of evaluations was 30,000.

(2) DTLZ function: the neighborhood size T was 10; the number m of targets was three; the number N of individuals was 200; the maximum number of evaluations was 20,000.

In this paper, the inverse generation distance (IGD), which can represent the convergence and diversity of the algorithm, is used as the evaluation index. The calculation formula is shown in Formula (33).

where is the set of uniform sampling points of the real Pareto front; is the approximate Pareto solution set obtained by the algorithm to be tested; is the size of set ; is the minimum distance between the i-th Pareto sampling point and the Pareto solution set [40].
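The IGD indicator as described, namely the average over the sampled true-front points of the minimum distance to the obtained solution set, can be computed with a few lines. The sketch assumes the Euclidean distance in objective space; the function and argument names are hypothetical.

```python
import math


def igd(reference_front, approximation):
    """Mean distance from each true-front sample to its nearest obtained solution.

    reference_front: points sampled uniformly from the real Pareto front.
    approximation:   objective vectors found by the algorithm under test.
    Smaller values indicate better convergence and diversity.
    """
    total = 0.0
    for v in reference_front:
        total += min(math.dist(v, p) for p in approximation)
    return total / len(reference_front)


value = igd(reference_front=[(0.0, 1.0), (1.0, 0.0)],
            approximation=[(0.0, 1.0), (1.0, 1.0)])
```

In the small example the first reference point is matched exactly and the second is at distance 1 from its nearest solution, so the indicator is 0.5.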

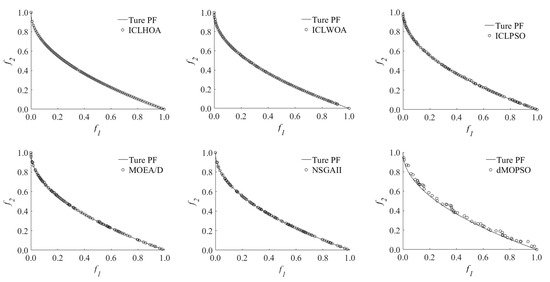

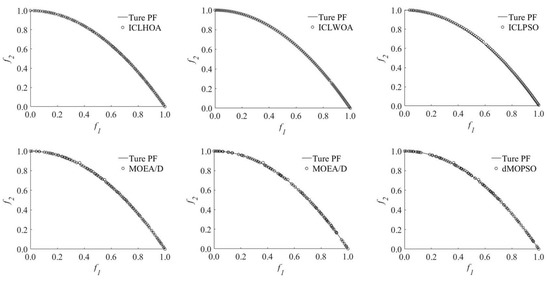

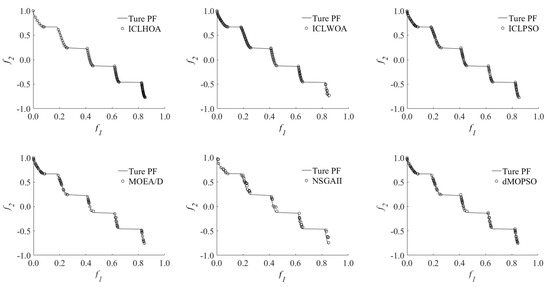

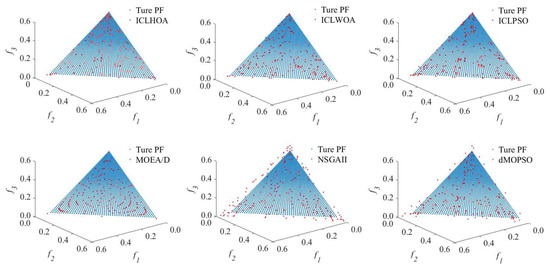

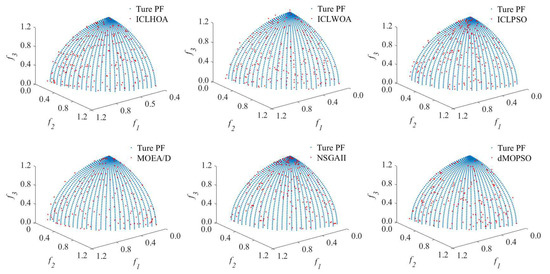

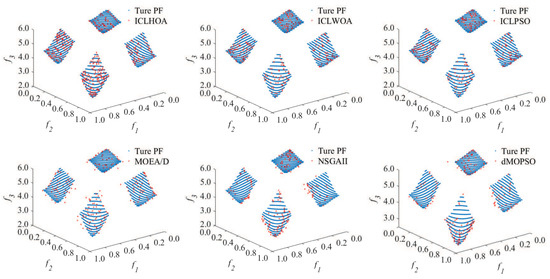

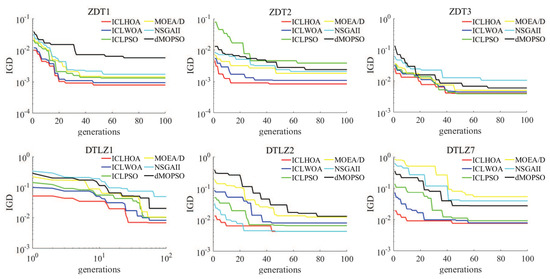

The specific IGD values of each optimization algorithm are shown in Table 1, the PF (real Pareto front and approximate Pareto solution set) of each optimization algorithm is shown in Figure 9, Figure 10, Figure 11, Figure 12, Figure 13 and Figure 14, and the iterative convergence curves of IGD values of each optimization algorithm are shown in Figure 15.

Table 1.

Inverse generation distance (IGD) value of each optimization algorithm.

Figure 9.

ZDT1 PF of each optimization algorithm.

Figure 10.

ZDT2 PF of each optimization algorithm.

Figure 11.

ZDT3 PF of each optimization algorithm.

Figure 12.

DTLZ1 PF of each optimization algorithm.

Figure 13.

DTLZ2 PF of each optimization algorithm.

Figure 14.

DTLZ7 PF of each optimization algorithm.

Figure 15.

The iterative convergence curve of inverse generation distance (IGD) values of each optimization algorithm.

It can be seen from Table 1 that, compared with the traditional algorithms and their improved variants, ICLHOA had significant advantages on the ZDT series of two-objective test functions and the DTLZ series of three-objective test functions; only on ZDT3 was ICLHOA slightly inferior to ICLPSO, and on DTLZ2 it was slightly inferior to NSGAII. According to Figure 9, Figure 10, Figure 11, Figure 12, Figure 13 and Figure 14, the optimization results obtained by ICLHOA were closer to the real front of ZDT1, ZDT3, DTLZ2 and DTLZ7, and were distributed more evenly. Only in ZDT3 and DTLZ2 did the optimization results of ICLHOA have no obvious advantages over ICLWOA and ICLPSO. According to Figure 15, compared with the other optimization algorithms, ICLHOA not only found a better IGD value, but also had a significantly faster convergence rate. Only on DTLZ2 was the convergence rate of ICLHOA slightly lower than that of NSGAII. In conclusion, ICLHOA had better optimization performance than the other optimization algorithms on the ZDT and DTLZ series test functions.

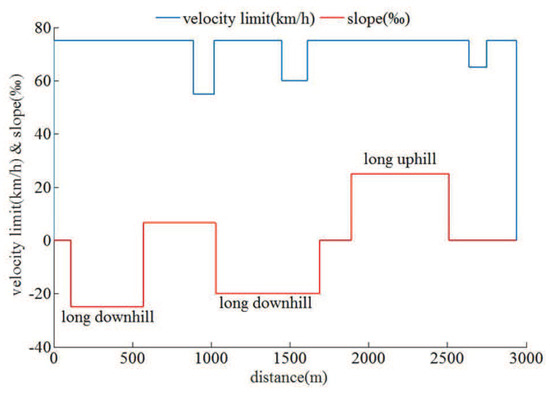

Figure 16.

The schematic diagram of ramp parameters and train speed limit.

5.2. The Relevant Data and Simulation Platform of the Practical Example of Multi-Objective ATO

This paper selects the station area between new port in Lvshun and Tieshan town of Dalian rail transit line number 12 as the research object. The running section length was 2.94 km, with two long lower ramps and one long upper ramp. Dalian rail transit line number 12 is an urban rail transit line extending from Hekou station to new port in Lvshun, which has eight stations and seven operating zones. The specific basic train attributes are shown in Table 2, and the ramp parameters and train speed limit are shown in Figure 16.

Table 2.

Characteristics of the train.

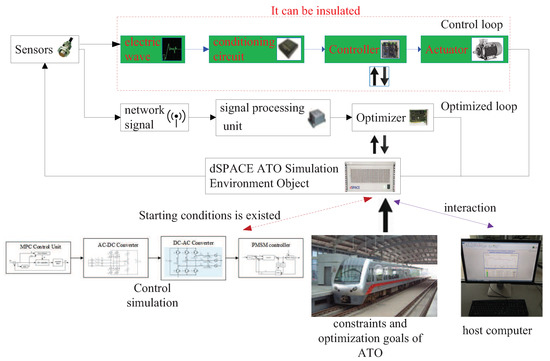

Most research on ATO using traditional algorithms relies on an offline simulation platform based on Matlab/Simulink. This kind of platform has serious defects. Because it contains no real device or real controlled object, the virtual simulation environment is highly idealized and differs greatly from the actual real-time, online ATO operation control environment. To close this gap between the simulation environment and the actual situation, the German company dSPACE developed a semi-physical real-time simulation platform based on Matlab/Simulink. The dSPACE real-time simulation system contains hardware objects such as controllers, and this approach is known as hardware-in-the-loop simulation (HILS). Because HILS can safely and efficiently verify the optimization or control performance of electrical and control products, it can accelerate breakthroughs in core algorithms, improve test efficiency, reduce development risk, shorten the time needed to go online and save development cost. After decades of development, HILS has been widely adopted by research institutions and manufacturers all over the world. In China, many manufacturers (CRRC, China’s State Grid, China First Automobile) and universities (Shanghai Jiaotong University, National University of Defense Technology, Harbin Institute of Technology) have carried out research in related fields based on HILS and have achieved fruitful results [41,42,43,44]. ATO has both optimization and control functions: the main processor unit (MPU) uses an optimization algorithm to optimize the train’s ideal target speed distance curve based on constraints such as line conditions and optimization objectives such as expected running time. 
The traction control unit (TCU) uses a proper control algorithm to enable the train to track the target speed curve in real time. Therefore, the HILS platform contains two simulation parts: the upper optimization and the lower control. The upper optimization simulation aims to verify the optimization performance of optimizer (MPU), while the lower control simulation aims to verify the controllability of controller (TCU). The structure diagram of ATO HILS platform and the physical diagram of the simulation cabinet are shown in Figure 17 and Figure 18.

Figure 17.

The structure diagram of automatic train operation (ATO) hardware-in-the-loop simulation (HILS) platform.

Figure 18.

The physical diagram of the simulation cabinet.

The automatic train operation HILS platform, as shown in Figure 17, contains five kinds of actual hardware necessary for ATO and two kinds of simulation hardware that stand in for actual hardware. The actual hardware comprises the sensors, conditioning circuit, signal processing unit, controller and optimizer. Among these, the controller (TCU) and the optimizer (MPU) contain the dSPACE boards onto which the control algorithm and the optimization algorithm are written, respectively, and they are the core equipment of the ‘control loop’ and the ‘optimized loop’; the sensors, conditioning circuit and signal processing unit connect the different pieces of equipment and collect and process the different data. Through them, the ‘control loop’ and the ‘optimized loop’ maintain real-time communication with the ‘dSPACE ATO simulation environment object’, using MVB (multifunction vehicle bus) as the communication protocol. The emulator and the actuators are the two kinds of simulation hardware of the ATO HILS platform, which virtually replace the real environment or components that are not easily obtainable in a real ATO system, for example, the running route and the traction power collection network. The emulator provides the ATO simulation environment, for example, using a rheostat to simulate the actual line. The actuators are the actuating mechanisms in ATO, mainly including the inverter, rectifier and traction motors. Generally, their rated powers are , which is the actuator simulation proportionality coefficient in a real circumstance and it is 1/2000 in this paper. In the automatic train operation HILS platform, the control loop with the controller (TCU) as its core can be isolated. When the ‘control loop’ is isolated, the corresponding control simulation module in the ‘dSPACE ATO simulation environment object’ can be activated in place of the corresponding physical components, so as to virtualize the practical ATO control. 
In this paper, the HILS platform isolating the ‘control loop’ that contains the physical hardware component, is marked as ICL-HILS, and the HILS platform retaining the ‘control loop’ that contains the physical hardware components as RCL-HILS.

In Figure 18, the simulation cabinet contains four kinds of hardware devices of HILS platform: ‘emulator’ provides ‘dSPACE ATO simulation environment object’ to the HILS platform, which includes various related models, such as the vehicle dynamics model, wheel and rail model, line model, accurate braking model, traction transformer model, traction rectifier model, traction inverter model, traction motor model, etc; ‘conditioning circuit’ can regulate electrical signals appropriately; ‘signal processing unit’ can adjust the network signal accordingly; ‘controller (TCU)’ can apply ATO real-time control instructions according to the actual situation. The HILS platform also has many external service devices, such as optimizer (MPU), speed sensor, current sensor, permanent magnet synchronous motor (PMSM), AC–DC converter, DC–AC converter, etc.

As the HILS platform contains a large amount of real train-borne equipment, it can faithfully reflect the real-time data interaction, conversion and processing in actual ATO. Therefore, the HILS environment overcomes the defects of Matlab/Simulink simulation, which cannot consider the sensor sampling accuracy, ignores the disturbance imposed by signal transmission delay and applies overly idealized disturbances. The specific differences among Matlab/Simulink, ICL-HILS and RCL-HILS are shown in Table 3.

Table 3.

Differences of three different simulation platforms.

5.3. The Optimization Result of Multi-Objective ATO Actual Example

Based on the metro train of Dalian rail transit line number 12 and the running line between the new port station in Lvshun and the Tieshan town station, Matlab/Simulink, ICL-HILS and RCL-HILS are adopted, and ICLHOA, ICLWOA, ICLPSO, dMOPSO [37], NSGAII [38] and MOEA/D [39] are each used to find optimal solutions. The algorithms are written into the optimization model of Matlab/Simulink and into the chips of the optimizer (MPU) of ICL-HILS and RCL-HILS. The simulation environment is the same for every algorithm, including the basic algorithm parameter settings and the software and hardware configuration, as follows: the population size is 100; the number of iterations is 150; the Matlab/Simulink platform configuration is the same (Matlab GUI 2016a, CPU Core i7, Windows 10); the HILS platform configuration is the same (the core chip of the controller is ‘TMS320F28335’, the processor of the emulator is ‘DS1006’). The controller of the HILS platform runs identical control algorithms in every case, using either fuzzy PID (fuzzy proportional-integral-derivative) control or model predictive control (MPC), both of which offer good control performance and broad applicability. The optimal solution must satisfy the following conditions: the train’s instantaneous speed must not exceed the speed limit; the train must complete the journey; the running time error must be less than 0.2 s. When the planned running time is 180 s, the velocity ideal trajectory profiles, control sequence distance curves and optimization results obtained by the different algorithms are shown in Figure 19, Figure 20, Figure 21, Figure 22, Figure 23 and Figure 24 and Table 4, Table 5 and Table 6.
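The three acceptance conditions on a candidate solution can be expressed as a simple feasibility check. The sketch below assumes the profile is sampled at matching positions for speed and speed limit, and it interprets "completing the journey" as covering at least the section length; the function and argument names are hypothetical.

```python
def is_feasible(speeds, speed_limits, distance_covered, section_length,
                run_time, planned_time, time_tolerance=0.2):
    """Check the three acceptance conditions for a candidate speed profile.

    speeds / speed_limits: values sampled at the same positions (same units).
    distance_covered / section_length: in metres.
    run_time / planned_time / time_tolerance: in seconds.
    """
    within_limit = all(v <= lim for v, lim in zip(speeds, speed_limits))
    completes_journey = distance_covered >= section_length  # assumed meaning
    punctual = abs(run_time - planned_time) < time_tolerance
    return within_limit and completes_journey and punctual


ok = is_feasible(speeds=[10.0, 15.0], speed_limits=[20.0, 20.0],
                 distance_covered=2940.0, section_length=2940.0,
                 run_time=180.1, planned_time=180.0)
```

A check of this kind would typically be applied to each archive member before it is reported as an optimal solution.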

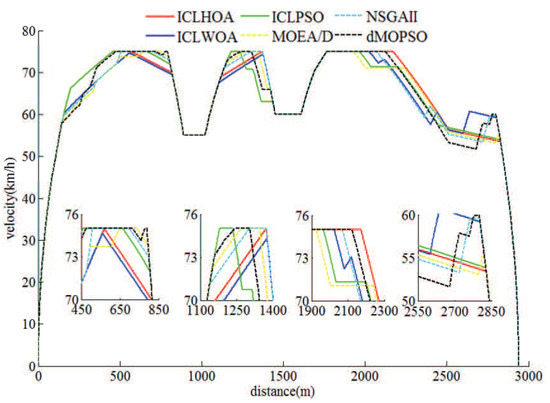

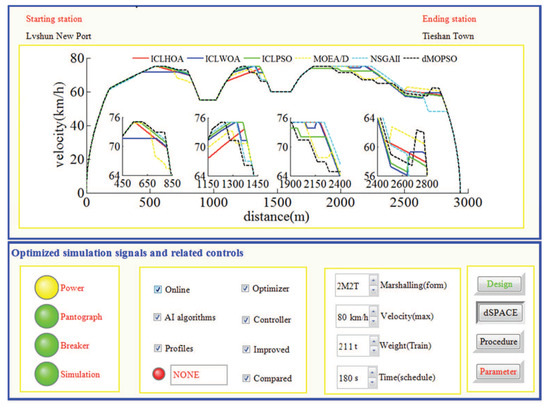

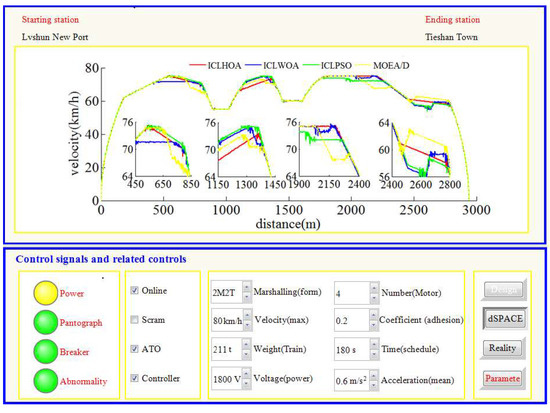

Figure 19.

The velocity ideal trajectory profile obtained by different algorithms in Matlab/simulink.

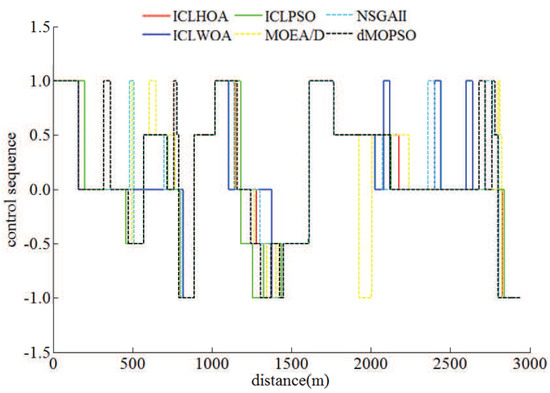

Figure 20.

The control sequence distance curve obtained by different algorithms in Matlab/simulink.

Figure 21.

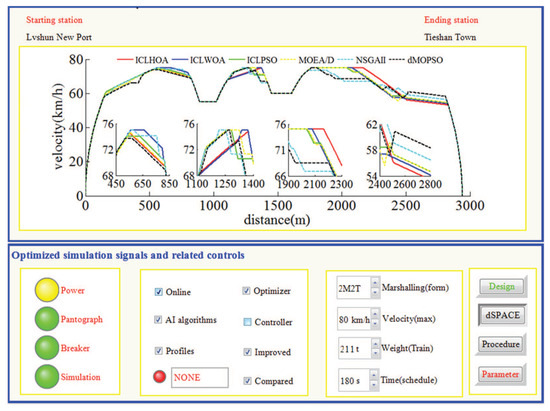

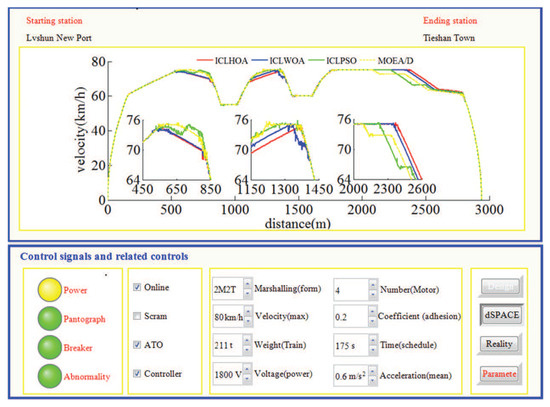

The velocity ideal trajectory profile obtained by different algorithms in isolating ‘control loop’ containing physical hardware devices (ICL)-HILS.

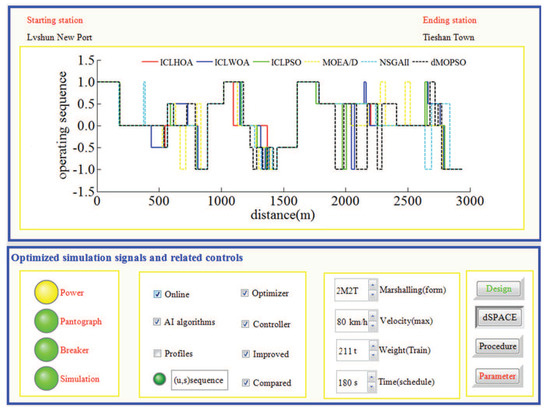

Figure 22.

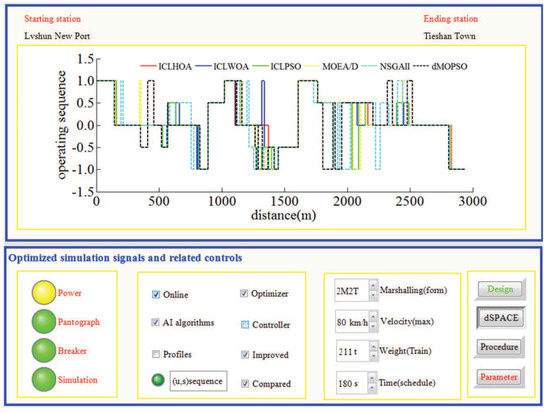

The control sequence distance curve obtained by different algorithms in ICL-HILS.

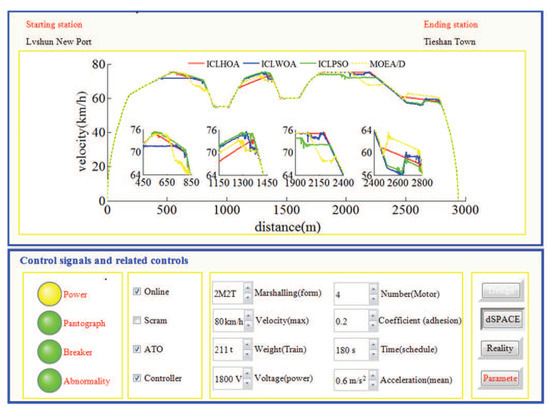

Figure 23.

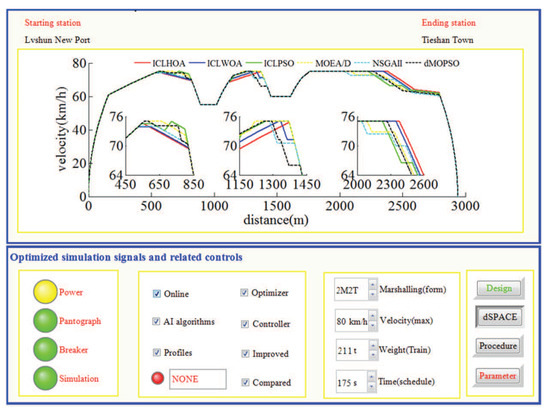

The velocity ideal trajectory profile obtained by different algorithms in retaining ‘control loop’ containing physical hardware devices (RCL)-HILS.

Figure 24.

The control sequence distance curve obtained by different algorithms in RCL-HILS.

Table 4.

Optimization results of velocity ideal trajectory profile obtained by different algorithms in Matlab/simulink.

Table 5.

Optimization results of velocity ideal trajectory profile obtained by different algorithms in isolating ‘control loop’ containing physical hardware devices (ICL)-hardware-in-the-loop simulation (HILS).

Table 6.

Optimization results of velocity ideal trajectory profile obtained by different algorithms in retaining ‘control loop’ containing physical hardware devices (RCL)-HILS.

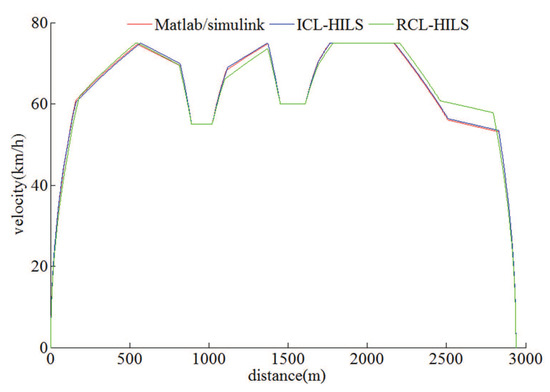

Figure 21, Figure 22, Figure 23 and Figure 24 show that in the real-time simulation of ICL-HILS and RCL-HILS, the power was switched on, the pantograph was raised, and the circuit breaker was normally closed. At the same time, the dSPACE simulator was in the working state (the dSPACE button was pressed and the procedure button was waiting to be pressed), the human–computer interaction signal was normal (the design button was green), and the parameters could not be changed (the parameters button was red). Table 4, Table 5 and Table 6 show that, on Matlab/Simulink, ICL-HILS and RCL-HILS, when the planned running time was 180 s and the simulation environment was the same, the optimal solution obtained by ICLHOA was superior to those of the other optimization algorithms, and the three indicators of energy saving, punctuality and comfort were improved to a considerable extent. The running line selected in this paper is located in the hilly area of the economic and technological development zone in Lvshun, Dalian, and hills are the typical geomorphological feature of Dalian. In such terrain, the control sequence needs to be concise and to use the long downhill slopes for acceleration and the long uphill slopes for deceleration as much as possible, in order to reduce jolting, save energy and improve punctuality. As can be seen from Figure 25, ICLHOA obtained an extremely smooth target velocity distance curve on all three simulation platforms and made maximum use of the long uphill and downhill slopes. As can be seen from Figure 20, Figure 22 and Figure 24, the control sequence obtained by ICLHOA was the most concise, which avoids unnecessary manipulation to the greatest extent. As can be seen from Figure 19, Figure 21 and Figure 23, the target speed distance curve obtained by ICLHOA was the smoothest of all the algorithms, which enables the train to maintain an appropriate speed more smoothly. 
This advantage was particularly evident in the 12 enlarged areas of the three figures. The control algorithm (fuzzy PID or model predictive control) used is written in the kernel chip of controller (TCU) of ‘control loop’ in RCL-HILS. Under RCL-HILS, the target velocity distance curve obtained by partial optimization algorithm can be tracked and controlled by the controller. When the planned running time was 180 s, the actual velocity distance curve and corresponding control results obtained by partial tracking control algorithms under RCL-HILS are shown in Table 7 and Table 8 and Figure 26 and Figure 27.

Figure 25.

The velocity ideal trajectory profile obtained by improved multi-objective hybrid optimization algorithm using a comprehensive learning strategy (ICLHOA) in each simulation platform.

Table 7.

The tracking model predictive control (MPC) results of the velocity ideal trajectory profile obtained by partial algorithms under RCL-HILS.

Table 8.

The tracking fuzzy PID results of the velocity ideal trajectory profile obtained by partial algorithms under RCL-HILS.

Figure 26.

The actual velocity model predictive control (MPC) tracking trajectory profile obtained by different algorithms in RCL-HILS.

Figure 27.

The actual velocity fuzzy PID tracking trajectory profile obtained by different algorithms in RCL-HILS (peak time period).

As can be seen from Figure 26 and Figure 27, whichever control algorithm is adopted (MPC or fuzzy PID), when RCL-HILS real-time tracking control is applied, the power supply was switched on, the pantograph was raised, and the circuit breaker is normally closed. At the same time, the dSPACE simulator was in the simulation state (the ‘dSPACE’ button is pressed and the ‘reality’ button was waiting to be pressed), the design parameters cannot be changed in the tracking control state (the ‘design’ button was white) and the given parameters cannot be changed (the ‘parameters’ button was red). As can be seen from the four enlarged areas in Figure 26 and Figure 27, whichever control algorithm was adopted (MPC or fuzzy PID), the tracking control speed curve corresponding to ICLHOA was optimal compared with other algorithms. Because the ideal curve obtained by ICLHOA was relatively smooth, it was easier to avoid the phenomenon of unstable speed control caused by signal transmission delay, limited control performance and uncertain disturbance in the simulation process. The reason is that the ICLHOA optimizer (MPU) kernel chip has more powerful optimization ability to find a more optimized target speed distance curve, and the target speed distance curve is smoother, which is easy to be tracked by a tracking control algorithm. It can be seen from Table 6 that, in terms of the actual running time, ICLWOA and ICLPSO algorithms barely reached the standard under RCL-HILS, and the running time error was about 0.2 s; NSGAII and dMOPSO algorithms can reach the standard under ICL-HILS, but they cannot be tracked and controlled under RCL-HILS due to insufficient margin, and the running time errors were 0.1912 s and 0.1797 s, respectively. If the comfort level was limited below 175 (m/s, the target velocity trajectory obtained by MOEA/D algorithm cannot be tracked and controlled under the current conditions. 
To sum up, ICLHOA was more suitable for solving the ATO multi-objective train operation process optimization problem, and the actual train operation results met the expected assumptions even under RCL-HILS. Furthermore, many control algorithms (such as MPC and fuzzy PID) are suitable for use as tracking control algorithms for ATO. However, control algorithms with good tracking performance (such as MPC) are recommended. Compared with fuzzy PID, MPC obtained better tracking results (as shown in Figure 26 and Figure 27 and Table 7 and Table 8) and restrained the velocity fluctuation more effectively (as shown in the four enlarged areas in Figure 26 and Figure 27, especially the enlarged area covering the distance interval [2400,2800]).

In the running time plan, the planned running time of the simulated line is 180 s in the off-peak period (when traffic is relatively light, such as 9:00–11:00 on weekday mornings) and 175 s in the peak period (when traffic is relatively heavy, such as 6:00–8:00 on weekday mornings). When the planned running time was 175 s, similar simulation conclusions were obtained under the same calculation conditions, which further indicates that ICLHOA is more suitable for solving the ATO multi-objective train running process optimization problem. Under RCL-HILS, the optimal target velocity distance curves obtained by the different algorithms with their optimization results, and the actual velocity distance curves obtained by some of the algorithms through MPC tracking with their control results, are shown in Figure 28 and Figure 29 and Table 9 and Table 10.

Figure 28.

The actual velocity MPC tracking trajectory profile obtained by different algorithms in RCL-HILS (peak time period).

Figure 29.

The velocity ideal trajectory profile obtained by different algorithms in RCL-HILS (peak time period).

Table 9.

The tracking MPC results of the velocity ideal trajectory profile obtained by partial algorithms under RCL-HILS (peak time period).

Table 10.

Optimization results of velocity ideal trajectory profile obtained by different algorithms in RCL-HILS (peak time period).

In order to verify that the improved algorithm (ICLHOA) in this paper has good universality and a better practical optimization effect, multiple ATO scenarios were used as the optimization object for ICLHOA and the comparison algorithms. The optimization results are shown in Table 4 through Table 10 and Figure 19 through Figure 29. Across these different types of ATO scenarios (different simulation environments, different control algorithms, different ATO requirements), ICLHOA obtained more ideal optimization results than the comparison algorithms (ICLWOA, ICLPSO, dMOPSO, NSGAII and MOEA/D). This shows that ICLHOA is a general-purpose algorithm with a good practical optimization effect for the ATO velocity ideal trajectory profile optimization design problem.

6. Conclusions

The ATO velocity ideal trajectory profile optimization design problem is a complex optimization problem that must take into account energy consumption, running time, comfort and parking accuracy, so an ideal solution is not easy to obtain. To obtain an ATO velocity ideal trajectory profile with excellent overall performance under any circumstances, researchers need an optimization algorithm that suits most ATO velocity ideal trajectory profile optimization design problems and performs very well on them. However, as far as the status quo is concerned, three types of problems urgently need to be solved.

(1) The optimization algorithm used does not perform well enough: it either cannot find an ATO velocity ideal trajectory profile with better comprehensive performance indicators, or it finds one that is not easy to track and control. There are two possible causes: the design of the optimization algorithm itself is deficient, or the algorithm is not suited to finding an excellent ATO velocity ideal trajectory profile.

(2) The optimization algorithm used is strongly restrictive: it can only design ATO velocity ideal trajectory profiles with special characteristics. For example, the mileage of the route cannot exceed a threshold, the algorithm is limited to low-speed light rail or metro, the route cannot contain too many long, steep slope sections, or the algorithm is unsuitable for routes that are prone to congestion.

(3) The optimization algorithm used performs poorly in practical application. An actual ATO system has to cope with sampling accuracy, signal transmission delay or packet loss, and various unstable factors in the course of control. However, a simplified simulated ATO model cannot truly reflect these complicated circumstances; it is only a special case in which the real ATO system has been simplified. As a result, some designed and improved optimization algorithms do not achieve the expected optimization effect in practical application.

In order to solve the above three kinds of problems more effectively, the optimization algorithm itself has to be thoroughly improved for the ATO velocity ideal trajectory profile optimization design problem, so that both the actual optimization performance and the application scope are considered. Therefore, the ICLHOA proposed in this paper is a multi-objective hybrid optimization algorithm with excellent global optimization performance. ICLHOA combines the improved WOA and the improved PSO (ICLWOA and ICLPSO), both of which adopt the comprehensive learning strategy, and also uses the improved elite archive set mechanism and an intelligent evolution process.

The advantages of ICLHOA are as follows:

(1) ICLPSO adopts a variety of particle optimization models to avoid the destruction of population diversity caused by a single model.

(2) In ICLWOA, a chaotic mapping operation is introduced to avoid random and blind search in the process of iterative calculation. Reverse learning is carried out for the individuals that cross the boundary, so as to better retain the beneficial information in the iterative process.

(3) An improved elite archive set mechanism is used to store the non-dominated solutions found in the optimization process, and the fusion distance is used to maintain the diversity of the elite archive set.

(4) The dual-population parallel evolution process, which uses the elite archive set as the information communication medium, can greatly improve both the computational efficiency and the global convergence performance of ICLHOA.
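Two of the ingredients listed above, chaotic initialization and the non-dominated elite archive, can be illustrated with a minimal Python sketch. It is not the authors' implementation. The logistic map is assumed here only as one common choice of chaotic map, all function names are hypothetical, all objectives are assumed to be minimized, and the fusion-distance pruning of the archive is omitted because its formula is not given in this section.

```python
def logistic_map(x, mu=4.0):
    """One step of the logistic chaotic map (assumed choice of chaotic map)."""
    return mu * x * (1.0 - x)


def chaotic_population(size, lower, upper, seed=0.37):
    """Build an initial population from a chaotic sequence instead of uniform noise.

    `lower` and `upper` are per-dimension bounds; `seed` is the starting state
    of the map and should avoid fixed points such as 0, 0.25, 0.5, 0.75 and 1.
    """
    state = seed
    population = []
    for _ in range(size):
        individual = []
        for lo, hi in zip(lower, upper):
            state = logistic_map(state)                 # next chaotic value in [0, 1]
            individual.append(lo + state * (hi - lo))   # scale into the search bounds
        population.append(individual)
    return population


def dominates(a, b):
    """True if objective vector `a` Pareto-dominates `b` (minimization assumed)."""
    no_worse = all(ai <= bi for ai, bi in zip(a, b))
    strictly_better = any(ai < bi for ai, bi in zip(a, b))
    return no_worse and strictly_better


def update_archive(archive, candidate):
    """Return a new archive after offering `candidate` (an objective vector).

    The candidate is rejected if an archive member dominates or equals it;
    otherwise it is added and every member it dominates is removed.
    Capacity control (fusion distance in the paper) is deliberately left out.
    """
    if any(dominates(member, candidate) or member == candidate for member in archive):
        return list(archive)
    survivors = [member for member in archive if not dominates(candidate, member)]
    survivors.append(candidate)
    return survivors


if __name__ == "__main__":
    archive = []
    # Hypothetical (energy, time-error) objective pairs, for illustration only.
    for point in [(2.0, 3.0), (1.0, 4.0), (1.0, 2.0), (3.0, 3.0)]:
        archive = update_archive(archive, point)
    print(archive)
    print(chaotic_population(3, [0.0, -1.0], [10.0, 1.0]))
```

In a dual-population scheme of the kind described in item (4), both sub-populations would offer their solutions to the same archive through a function like `update_archive` and draw their guides from it, which is how the archive can act as the shared communication medium.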

According to the Matlab/Simulink results and the ATO HILS (hardware-in-the-loop simulation) results, compared with the traditional intelligent optimization algorithms and their improved variants, ICLHOA has better global convergence performance and therefore obtains more ideal optimization results. In this paper, two different ATO scenarios (with planned running times of 180 s and 175 s) are considered; in both, ICLHOA obtained a more ideal target velocity trajectory, and the tracking control effect under RCL-HILS was very good. This shows that ICLHOA is able to find a more ideal solution for complex practical ATO problems than the traditional optimization algorithms and their improved variants, and thus has stronger applicability.

Author Contributions

The work presented here was performed in collaboration among all authors. L.W. designed, analyzed, and wrote the paper and completed the simulation experiment. X.W. guided the full text and provided the simulation conditions. K.L. conceived the idea and was involved in the simulation experiment. Z.S. was involved in the simulation experiment and analyzed the data. All authors have contributed to and approved the manuscript.

Funding

This research was funded by the “Natural Science Foundation of China” (grant numbers 51609033 and 61773049).

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| ATO | automatic train operation |
| ICLHOA | improved multi-objective hybrid optimization algorithm using a comprehensive learning strategy |
| ICLPSO | improved particle swarm optimization using a comprehensive learning strategy |
| ICLWOA | improved whale optimization algorithm using a comprehensive learning strategy |
| HILS | hardware-in-the-loop simulation |
| ICL | isolating ‘control loop’ containing physical hardware devices |
| RCL | retaining ‘control loop’ containing physical hardware devices |

References

- Chang, C.S.; Xu, D.Y. Differential evolution based tuning of fuzzy automatic train operation for mass rapid transit system. IEEE Proc. Electr. Power Appl. 2000, 147, 206–212.

- Cai, B.; Sheng, Z.; Shang, G.W.; Sun, J. Train trajectory optimization with dynamic headway. In Proceedings of the 36th Chinese Control Conference, Dalian, China, 26–28 July 2017; pp. 9920–9925.

- Adrián, F.-R.; Antonio, F.-C.; Asunción, P.C.; María, D.; Tad, G. Design of Robust and Energy-Efficient ATO Speed Profiles of Metropolitan Lines Considering Train Load Variations and Delays. IEEE Trans. Automat. Sci. Eng. 2015, 16, 2061–2071.

- María, D.; Antonio, F.-C.; Asunción, P.C.; Ramón, R.P. Energy Savings in Metropolitan Railway Substations Through Regenerative Energy Recovery and Optimal Design of ATO Speed Profiles. IEEE Trans. Automat. Sci. Eng. 2012, 9, 496–504.

- Gu, Q.; Meng, Y.; Ma, F. Energy saving for automatic train control in moving block signaling system. China Commun. Suppl. 2014, 11, 12–22.

- Gu, Q.; Tang, T.; Cao, F.; Song, Y. Energy-Efficient Train Operation in Urban Rail Transit Using Real-Time Traffic Information. IEEE Trans. Intell. Transp. Syst. 2014, 15, 1216–1233.

- Gu, Q.; Tang, T.; Ma, F. Energy-Efficient Train Tracking Operation Based on Multiple Optimization Models. IEEE Trans. Intell. Transp. Syst. 2016, 17, 882–892.

- Bai, Y.; Tin, K.H.; Mao, B.; Ding, Y.; Chen, S. Energy-Efficient Locomotive Operation for Chinese Mainline Railways by Fuzzy Predictive Control. IEEE Trans. Intell. Transp. Syst. 2014, 15, 938–948.

- Gao, S.; Dong, H.; Chen, Y.; Ning, B.; Chen, G. Approximation-Based Robust Adaptive Automatic Train Control: An Approach for Actuator Saturation. IEEE Trans. Intell. Transp. Syst. 2013, 14, 1733–1742.

- Jiateng, Y.; Chen, D.; Li, L. Intelligent Train Operation Algorithms for Subway by Expert System and Reinforcement Learning. IEEE Trans. Intell. Transp. Syst. 2014, 15, 2561–2571.

- Zhou, Y.; Tao, X. Robust Safety Monitoring and Synergistic Operation Planning Between Time and Energy-Efficient Movements of High-Speed Trains Based on MPC. IEEE Access 2018, 6, 17377–17390.

- Meng, J.; Xu, R.; Li, D.; Chen, X. Combining the Matter-Element Model With the Associated Function of Performance Indices for Automatic Train Operation Algorithm. IEEE Trans. Intell. Transp. Syst. 2019, 20, 253–263.

- Song, Y.; Song, W. A Novel Dual Speed-Curve Optimization Based Approach for Energy-Saving Operation of High-Speed Trains. IEEE Trans. Intell. Transp. Syst. 2016, 17, 1564–1575.

- Shangguan, W.; Yan, X.; Cai, B.; Wang, J. Multiobjective Optimization for Train Speed Trajectory in CTCS High-Speed Railway With Hybrid Evolutionary Algorithm. IEEE Trans. Intell. Transp. Syst. 2015, 16, 2215–2225.

- Yang, X.; Li, X.; Ning, B.; Tang, T. A Survey on Energy-Efficient Train Operation for Urban Rail Transit. IEEE Trans. Intell. Transp. Syst. 2015, 17, 2–13.

- Saban, G.; Halife, K. A novel parallel multi-swarm algorithm based on comprehensive learning particle swarm optimization. Eng. Appl. Artif. Intell. 2015, 45, 33–45.

- Huang, V.L.; Suganthan, P.N.; Liang, J.J. Comprehensive learning particle swarm optimizer for solving multiobjective optimization problems: Research Articles. Int. J. Intell. Syst. 2006, 21, 209–226.

- Ling, Y.; Zhou, Y.; Luo, Q. Lévy Flight Trajectory-Based Whale Optimization Algorithm for Global Optimization. IEEE Access 2017, 5, 6168–6186.

- Sun, W.; Wang, J. Elman Neural network Soft-sensor Model of Conversion Velocity in Polymerization Process Optimized by Chaos Whale Optimization Algorithm. IEEE Access 2017, 5, 13062–13076.

- Zhang, C.; Fu, X.; Leo, P.L.; Peng, S.; Xie, M. Synthesis of Broadside Linear Aperiodic Arrays With Sidelobe Suppression and Null Steering Using Whale Optimization Algorithm. IEEE Antennas Wirel. Propag. Lett. 2018, 17, 347–350.

- Cale, J.; Johnson, B.; Dall’Anese, E.; Young, P.; Duggan, G.; Bedge, P.; Zimmerle, D.; Holton, L. Mitigating Communication Delays in Remotely Connected Hardware-in-the-loop Experiments. IEEE Trans. Ind. Electron. 2018, 65, 9739–9748.

- Hasanzadeh, A.; Edrington, C.S.; Stroupe, N.; Bevis, T. Real-Time Emulation of a High-Speed Microturbine Permanent-Magnet Synchronous Generator Using Multiplatform Hardware-in-the-Loop Realization. IEEE Trans. Ind. Electron. 2013, 61, 3109–3118.

- Riad, A.; Toufik, R.; Djamila, R.; Abdelmounaïm, T. Robust nonlinear predictive control of permanent magnet synchronous generator turbine using Dspace hardware. Int. J. Hydrog. Energy 2016, 41, 21047–21056.

- Yu, M.; Tang, X.; Lin, Y.; Wang, X. Diesel engine modeling based on recurrent neural networks for a hardware-in-the-loop simulation system of diesel generator sets. Neurocomputing 2018, 283, 9–19.

- Cheng, J.; Howlett, P. Application of critical velocities to the minimisation of fuel consumption in the control of trains. Automatica 1992, 28, 165–169.

- Howlett, P.; Cheng, J. Optimal driving strategies for a train on a track with continuously varying gradient. J. Aust. Math. Soc. 1997, 38, 388–410.

- Lu, H.; Zhang, M.; Fei, Z.; Mao, K. Multi-Objective Energy Consumption Scheduling in Smart Grid Based on Tchebycheff Decomposition. IEEE Trans. Smart Grid 2015, 6, 2869–2883.

- Mirjalili, S.; Lewis, A. The Whale Optimization Algorithm. Adv. Eng. Softw. 2016, 95, 51–67.

- Gao, L.; Hailu, A. Comprehensive Learning Particle Swarm Optimizer for Constrained Mixed-Variable Optimization Problems. Int. J. Comput. Int. Sys. 2010, 6, 832–842.

- Kaur, G.; Arora, S. Chaotic Whale Optimization Algorithm. J. Comput. Des. Eng. 2018, 5, 275–284.

- Dumitriu, T. A novel hybrid approach of Evolutionary Algorithm based on Imperialist Competitive Algorithm. In Proceedings of the 19th International Conference on System Theory, Control and Computing, Cheile Gradistei, Romania, 14–16 October 2015; pp. 140–146.

- Dharmbir, P.; Aparajita, M.; Gauri, S.; Vivekananda, M. Application of chaotic whale optimisation algorithm for transient stability constrained optimal power flow. IET Sci. Meas. Technol. 2006, 24, 83–88.

- Liu, G.; Wang, X. Fault diagnosis of diesel engine based on fusion distance calculation. In Proceedings of the Advanced Information Management, Communicates, Electronic & Automation Control Conference, Chongqing, China, 24–26 March 2017; pp. 1621–1627.

- Miettinen, K. Nonlinear Multiobjective Optimization; Kluwer Academic Publishers: Norwell, MA, USA, 2017.

- Zitzler, E.; Deb, K.; Thiele, L. Comparison of Multiobjective Evolutionary Algorithms: Empirical Results. Evolut. Comput. 2000, 8, 173–195.