Multi-Step Short-Term Power Consumption Forecasting with a Hybrid Deep Learning Strategy

Abstract

1. Introduction

- High prediction accuracy. The power consumption of a single household is highly volatile because of irregular human behaviour. Moreover, the source data is usually univariate, consisting only of power consumption records in kilowatts (kW), which makes accurate power consumption forecasting more difficult.

- Multi-step forecasting. Most existing load forecasting works focus on one-step forecasting solutions. A longer forecasting horizon is required for real-world applications, such as dynamic electricity market bidding system design.

1.1. Related Works

1.2. Contributions

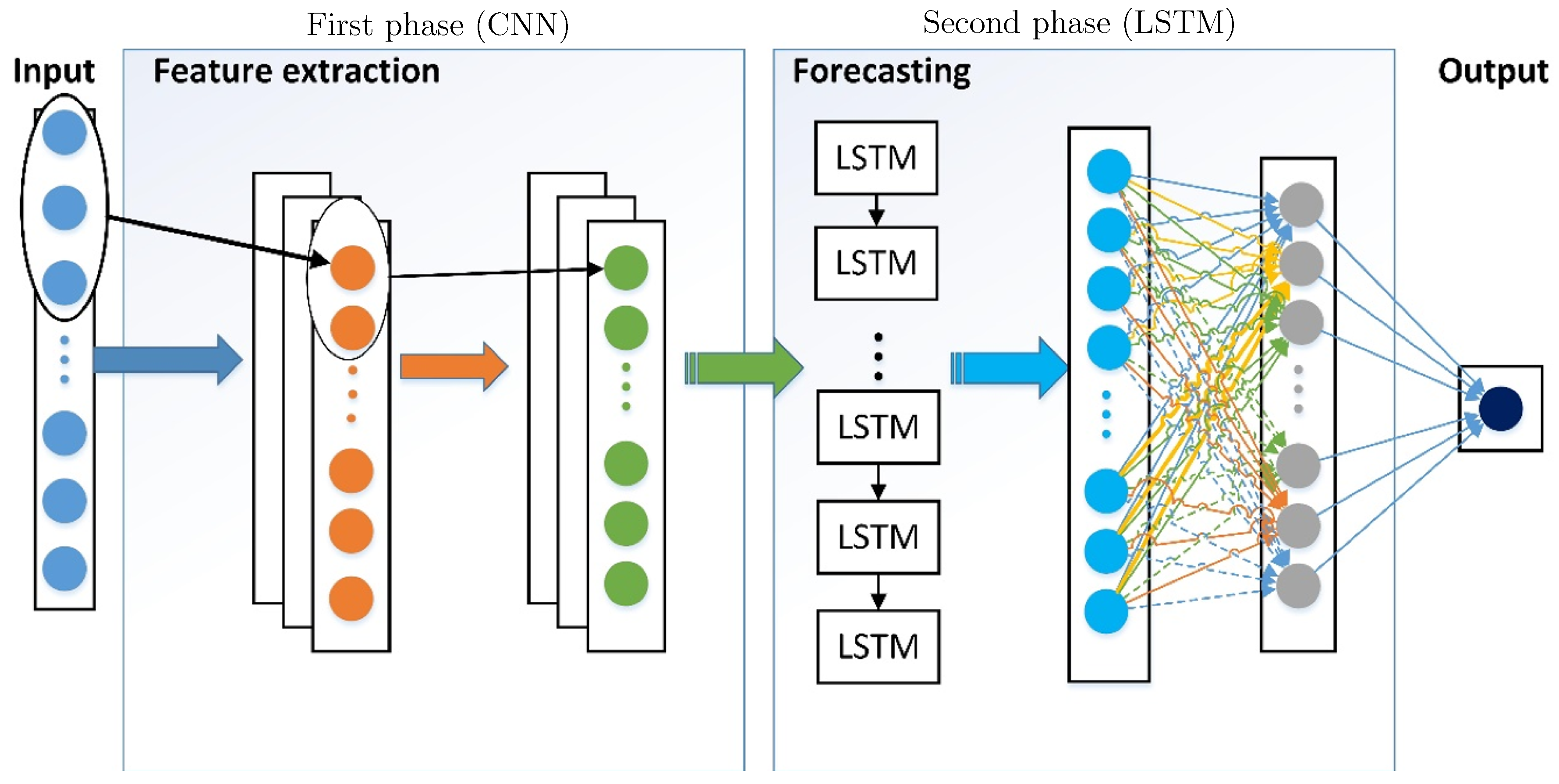

- A 1-D convolutional neural network is introduced to pre-process the univariate dataset and convert the original data into multi-dimensional features after two layers of temporal convolution operations.

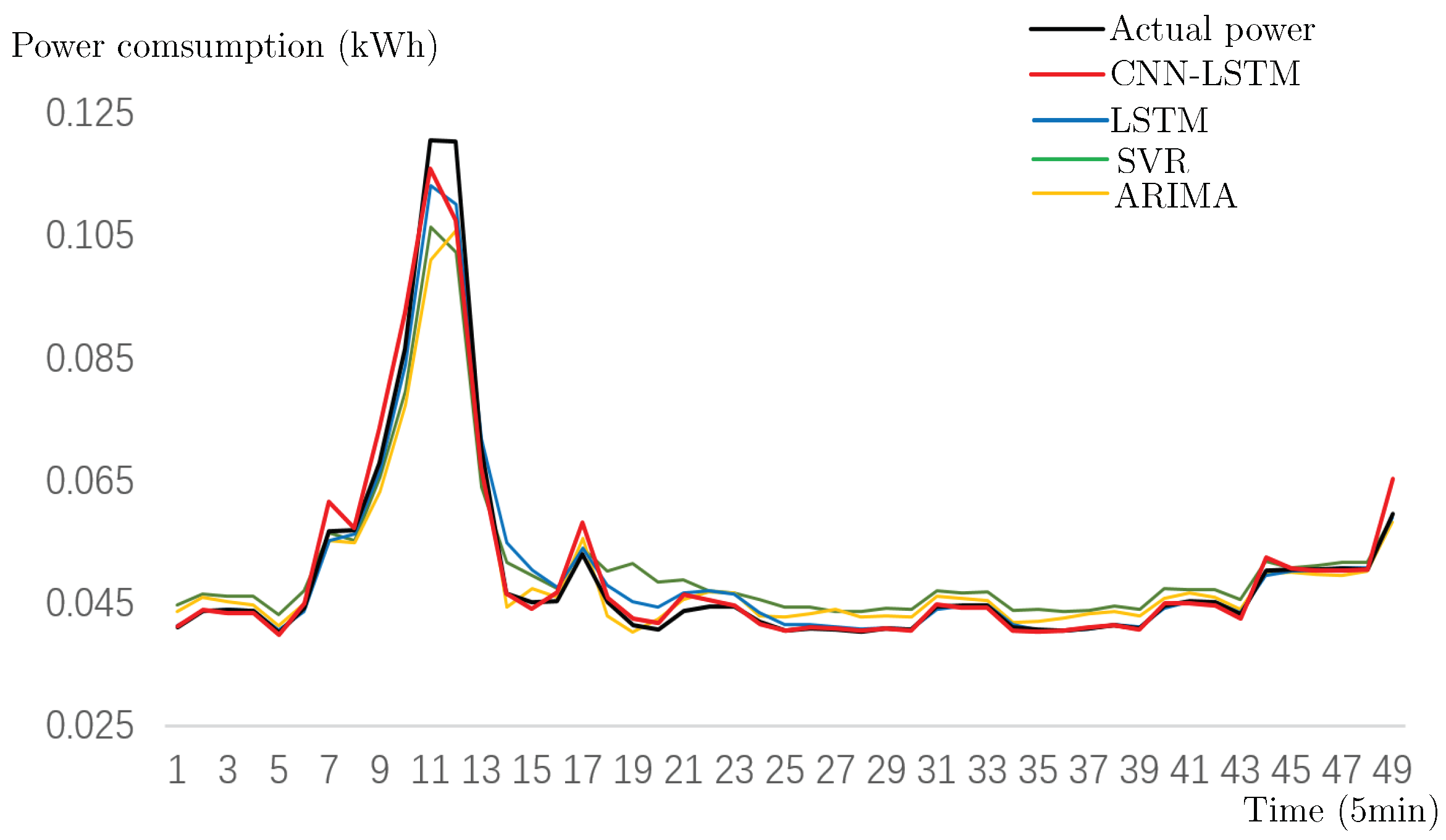

- A hybrid deep neural network is designed to forecast power consumption for individual households. Experimental results show that the proposed framework outperforms most of the existing approaches, including ARIMA, SVR and LSTM.

- A k-step forecasting strategy is designed to produce k forecast points/values simultaneously. The value of k is kept less than or equal to the number of cores/threads to maintain efficiency. The actual forecasting period/response time depends on the power consumption recording interval and the value of k. Compared with traditional one-step forecasting strategies, the k-step forecasting solution provides more response time for dynamic electricity market bidding.
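
The first contribution above, converting a univariate series into multi-dimensional features through two temporal convolution layers, can be illustrated with a minimal pure-Python sketch. The filter weights, the kernel size of 2, the filter counts, and the ReLU activation are illustrative assumptions rather than the configuration used in the paper; in a trained network the weights would be learned.

```python
from typing import List

Matrix = List[List[float]]  # time steps x channels


def conv1d_relu(x: Matrix, kernels: List[Matrix]) -> Matrix:
    """Valid 1-D temporal convolution followed by ReLU.

    x:       one row per time step, one column per input channel
    kernels: one kernel per output channel, each kernel_size x in_channels
    """
    kernel_size = len(kernels[0])
    in_channels = len(x[0])
    out: Matrix = []
    for t in range(len(x) - kernel_size + 1):
        row = []
        for kernel in kernels:
            s = sum(
                kernel[i][c] * x[t + i][c]
                for i in range(kernel_size)
                for c in range(in_channels)
            )
            row.append(max(0.0, s))  # ReLU
        out.append(row)
    return out


# A univariate window of 8 power readings (hypothetical values).
series = [0.2, 0.3, 0.5, 0.4, 0.1, 0.0, 0.6, 0.9]
x0 = [[v] for v in series]  # shape (8, 1)

# Layer 1: two hand-picked filters (moving average, first difference).
layer1 = [[[0.5], [0.5]], [[-1.0], [1.0]]]
# Layer 2: three hand-picked filters over the two channels of layer 1.
layer2 = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.5, 0.5], [0.5, -0.5]],
    [[0.0, 1.0], [1.0, 0.0]],
]

h1 = conv1d_relu(x0, layer1)  # shape (7, 2)
h2 = conv1d_relu(h1, layer2)  # shape (6, 3)
print(len(h2), len(h2[0]))
```

Each time step that started as a single reading ends up described by three feature channels, which is the kind of multi-dimensional input a recurrent layer can then consume.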

2. Materials and Methods

2.1. Data Description

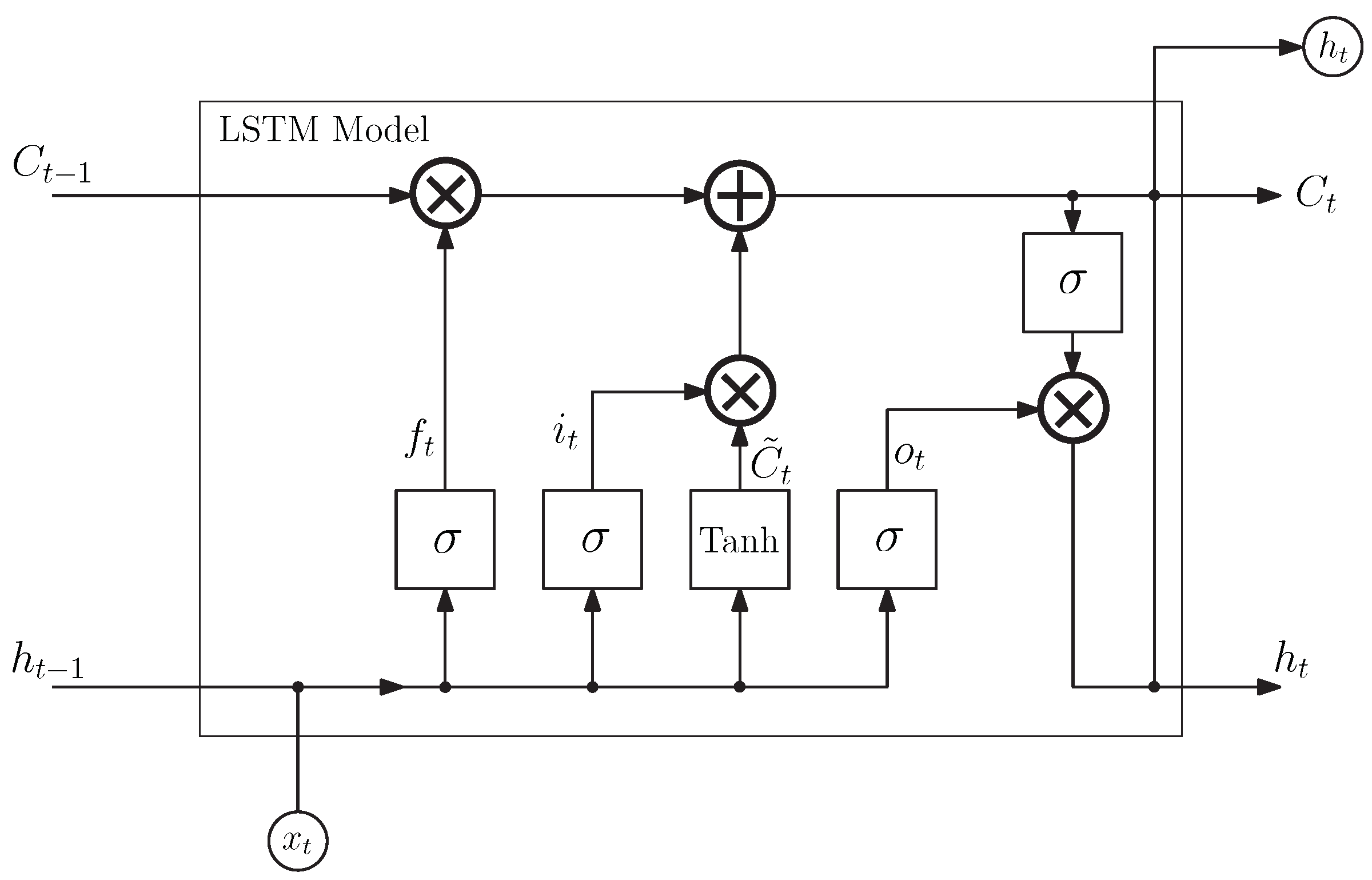

2.2. Long Short Term Memory based Recurrent Neural Network

2.3. Temporal Convolutional Neural Network

2.4. CNN-LSTM Forecasting Framework

2.5. A k-Step Power Consumption Forecasting Strategy

Algorithm 1. A k-step power consumption forecasting strategy

- Input: The UK-DALE dataset.
- Output: Data points at 5 min, 10 min, … 5k min.
- Initialization: Re-organize the original data into k different datasets according to specified step sizes.
- While there are unassigned datasets and there are free threads/cores:
  - Assign any unassigned dataset to a free thread/core.
  - Apply the proposed CNN-LSTM framework to the specific dataset and obtain a one-step forecasting result.
- End while.
- Combine all one-step forecasting results to obtain a k-step power consumption forecasting result.
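
The control flow of Algorithm 1 can be sketched as follows. This is only an illustration under stated assumptions: the re-organization is taken to mean subsampling the series with a stride equal to the step size, `linear_extrapolation` is a hypothetical placeholder for the trained CNN-LSTM one-step forecaster, and the function names and the `workers` parameter are not from the paper.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def strided_dataset(series: Sequence[float], step: int) -> List[float]:
    """Keep every `step`-th reading, aligned so the newest reading stays last."""
    return list(series[::-1][::step])[::-1]


def linear_extrapolation(data: Sequence[float]) -> float:
    """Hypothetical one-step forecaster standing in for the CNN-LSTM model."""
    return 2 * data[-1] - data[-2]


def k_step_forecast(
    series: Sequence[float],
    k: int,
    workers: int,
    one_step: Callable[[Sequence[float]], float] = linear_extrapolation,
) -> List[float]:
    """Return forecasts for 1, 2, ..., k recording intervals ahead."""
    if k > workers:
        raise ValueError("k must not exceed the number of threads/cores")
    # Initialization: one re-organized dataset per step size.
    datasets = [strided_dataset(series, step) for step in range(1, k + 1)]
    # Each dataset is handled by a worker; map() keeps results in step order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(one_step, datasets))


readings = [float(v) for v in range(12)]  # toy ramp, one reading per 5 min
forecasts = k_step_forecast(readings, k=3, workers=4)
print(forecasts)
```

On the toy ramp, a one-step forecast on the dataset with stride j lands j recording intervals ahead, so combining the k results gives the 5 min, 10 min, and 15 min points in one pass.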

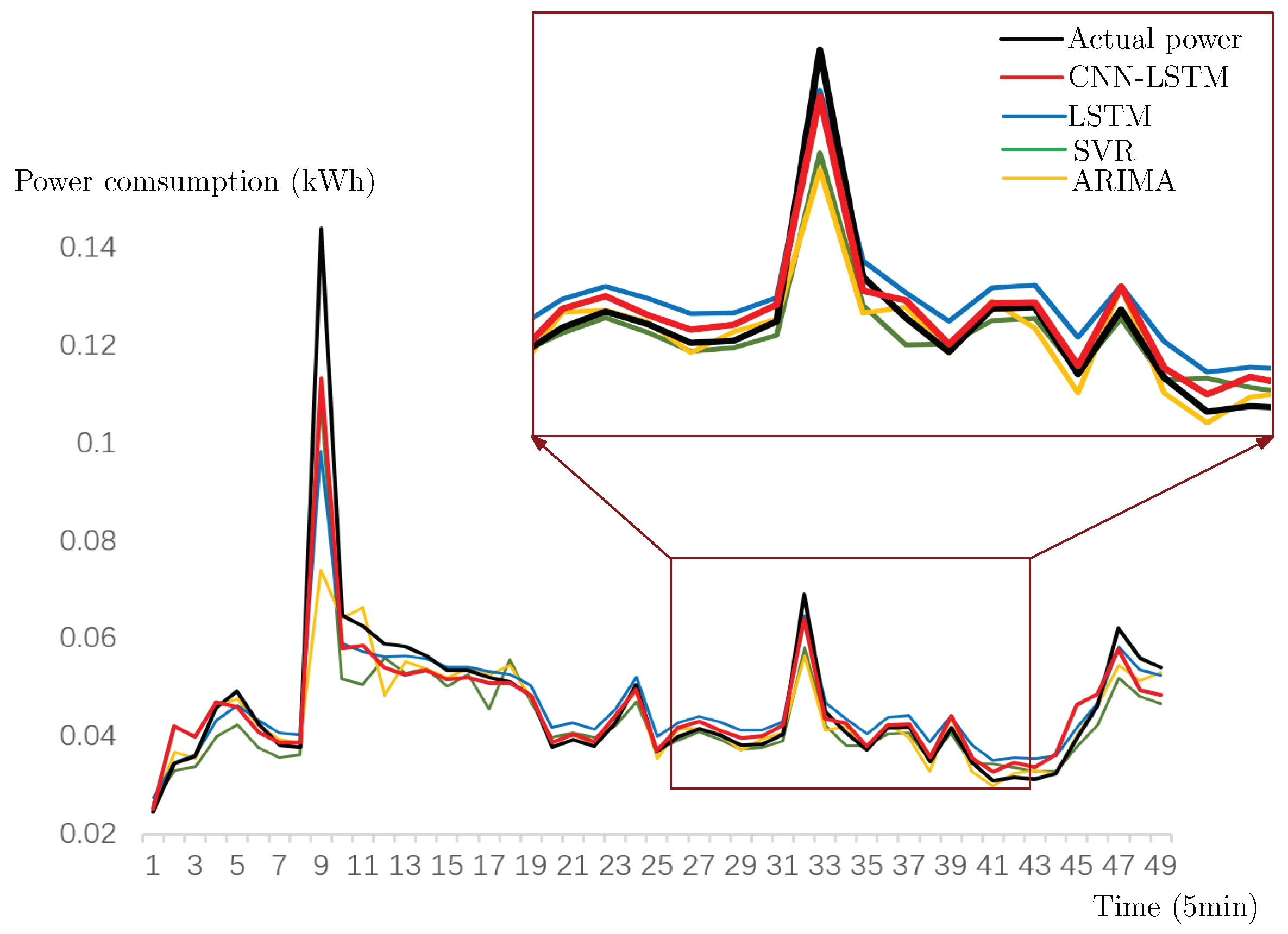

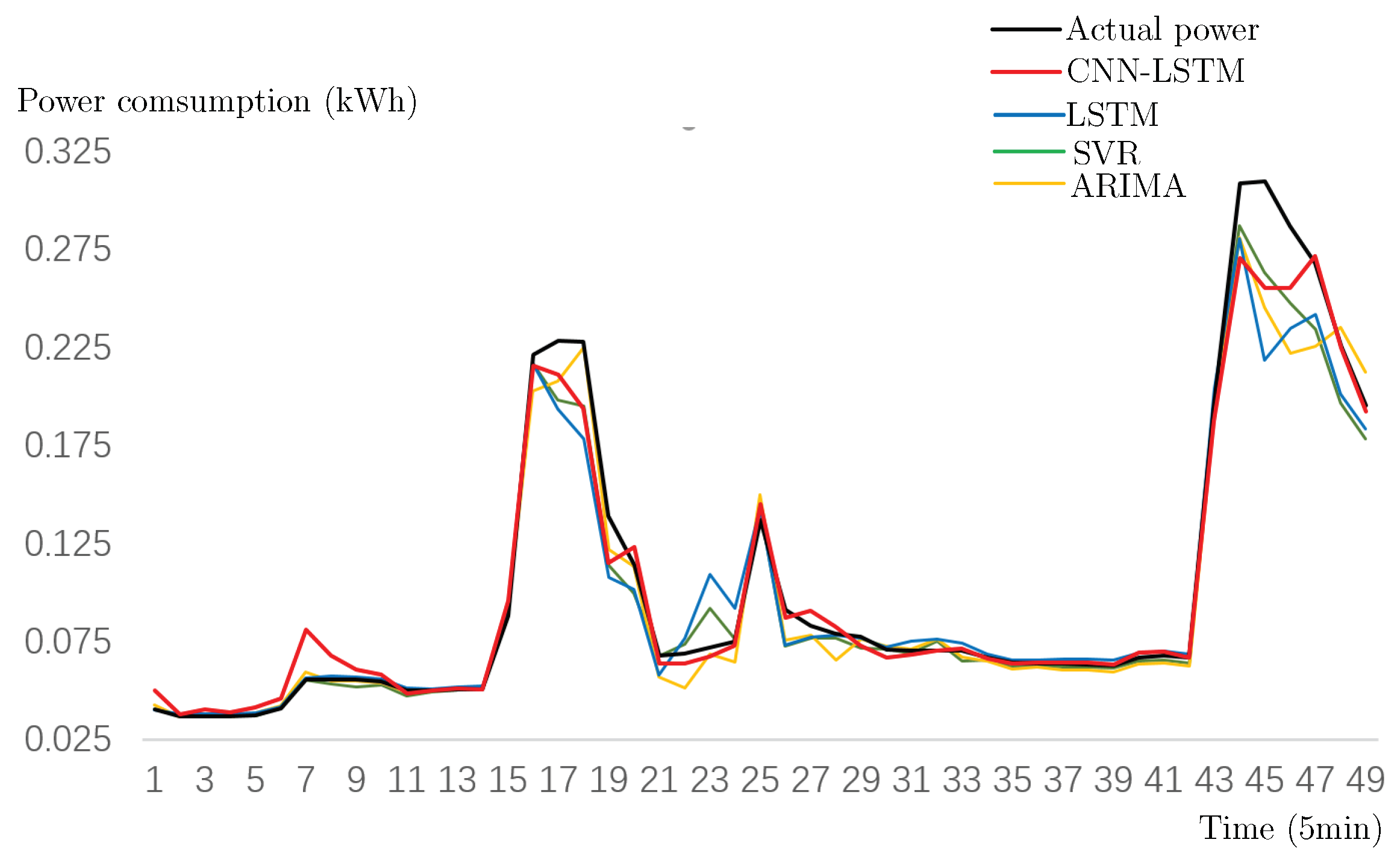

3. Results

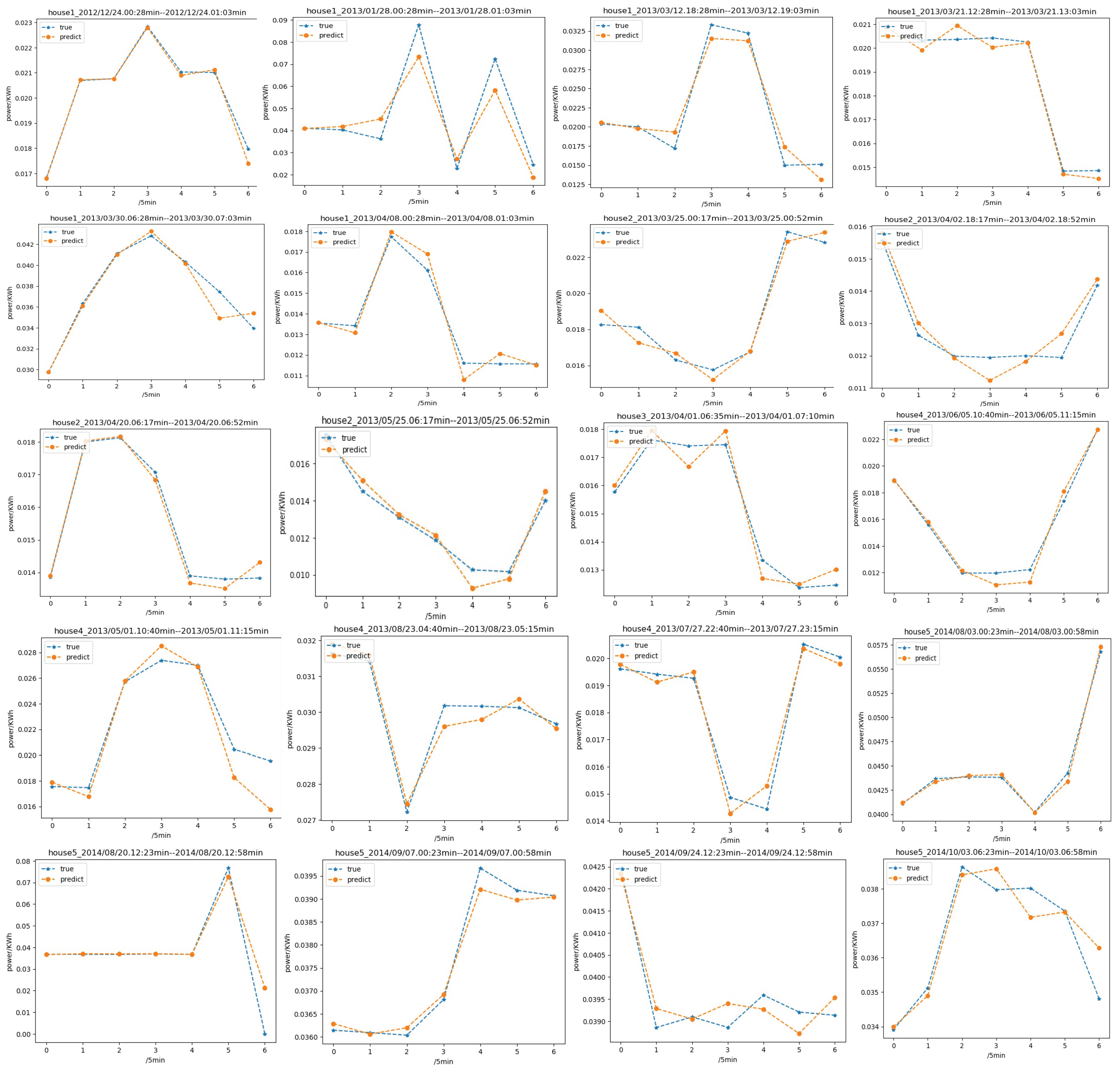

- First, the 5 × 6 = 30 min response time can be extended with a larger k value. To keep the computation real-time, we force the value of k to be less than or equal to the number of cores/threads, so the response time can be extended with a more powerful CPU.

- Second, the 5 × 6 = 30 min response time can also be extended by using a coarser time interval, e.g., 15 min resolution instead of 5 min. With a 15 min interval and k = 6, the proposed k-step forecasting algorithm provides a one-and-a-half-hour (15 × 6 = 90 min) response time for market bidding.
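
The arithmetic behind both options is the product of the recording interval and k. A small sketch, with hypothetical function names, makes the two cases explicit:

```python
from typing import List


def forecast_horizons(interval_min: int, k: int) -> List[int]:
    """Minutes ahead covered by each of the k forecast points."""
    return [interval_min * step for step in range(1, k + 1)]


def response_time(interval_min: int, k: int) -> int:
    """Response time in minutes: the horizon of the k-th forecast point."""
    return interval_min * k


print(forecast_horizons(5, 6))   # horizons at 5 min resolution
print(response_time(5, 6))       # 5 min interval, k = 6
print(response_time(15, 6))      # 15 min interval, k = 6
```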

4. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest


| Dataset | RMSE | MAE | MAPE | ||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| ARIMA | SVR | LSTM | Persis. | Propos. | ARIMA | SVR | LSTM | Persis. | Propos. | ARIMA | SVR | LSTM | Persis. | Propos. | |

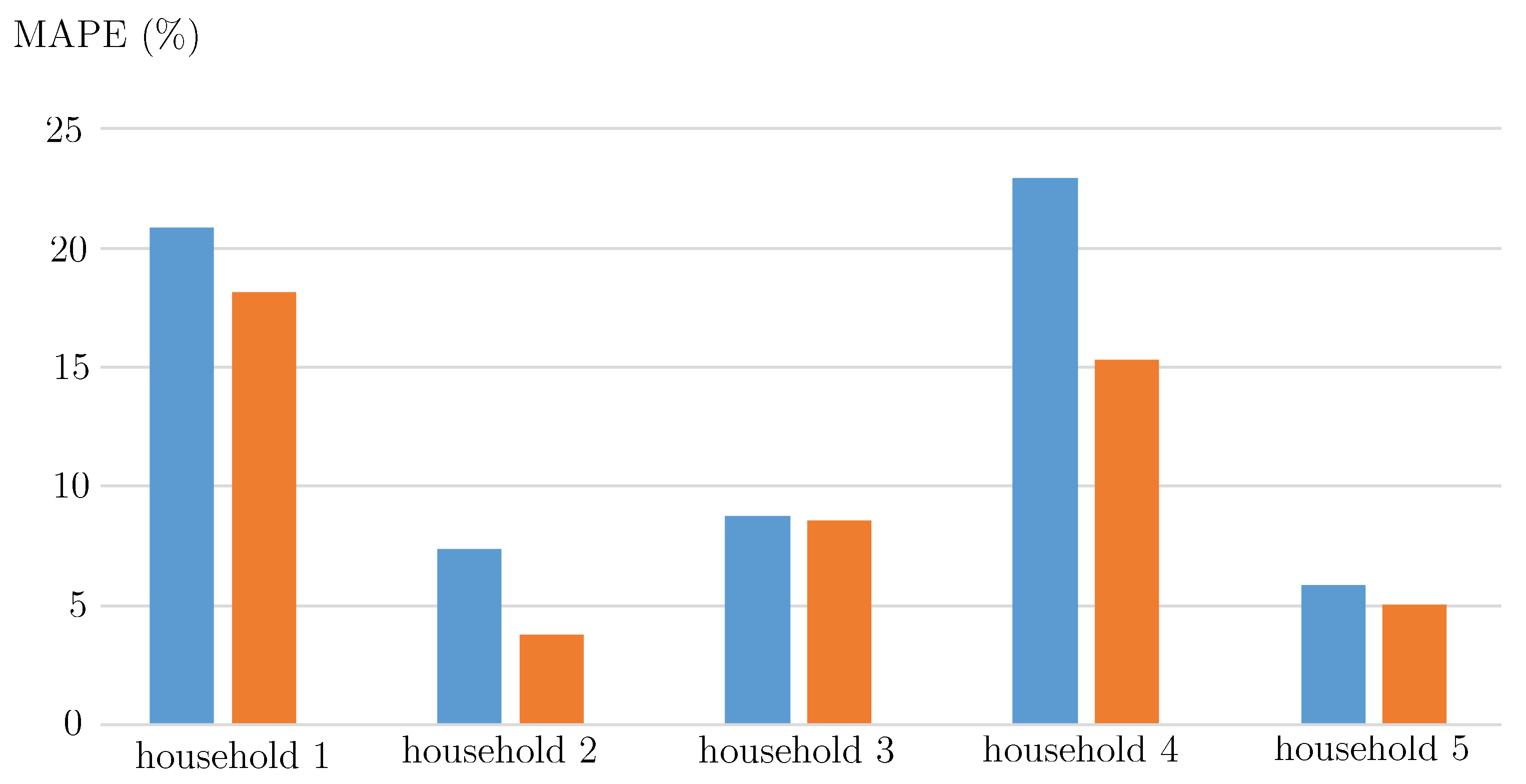

| Hse 1 | 0.0305 | 0.034 | 0.0299 | 0.0335 | 0.0304 | 0.0151 | 0.0156 | 0.0149 | 0.0153 | 0.0140 | 20.8196 | 18.3178 | 20.8594 | 21.3442 | 18.1268 |

| Hse 2 | 0.0027 | 0.0014 | 0.0023 | 0.0038 | 0.0016 | 0.0024 | 0.0011 | 0.0018 | 0.0018 | 0.0010 | 12.5666 | 5.2907 | 7.3485 | 7.7049 | 3.7647 |

| Hse 3 | 0.0122 | 0.0144 | 0.0168 | 0.0356 | 0.0117 | 0.0072 | 0.0078 | 0.0090 | 0.0168 | 0.0069 | 8.7881 | 8.6152 | 8.7619 | 16.9004 | 8.5512 |

| Hse 4 | 0.0075 | 0.0058 | 0.0070 | 0.0072 | 0.0064 | 0.0054 | 0.0036 | 0.0050 | 0.0046 | 0.0042 | 27.1986 | 14.2533 | 22.9484 | 17.5189 | 15.3256 |

| Hse 5 | 0.0070 | 0.0073 | 0.0069 | 0.0071 | 0.0060 | 0.0043 | 0.0037 | 0.0032 | 0.0028 | 0.0028 | 8.4620 | 6.7788 | 5.8760 | 5.0290 | 5.0226 |

| Average | 0.0120 | 0.0127 | 0.0126 | 0.0175 | 0.0112 | 0.0069 | 0.0064 | 0.0068 | 0.0083 | 0.0058 | 15.5670 | 10.6512 | 13.1589 | 13.6995 | 10.1582 |
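
For readers who want to reproduce this kind of comparison, the three error metrics can be computed as in the sketch below. It uses the standard textbook definitions and assumes MAPE is reported as a percentage; the exact formulas used in the paper are not shown in this section, and the sample values are hypothetical.

```python
import math
from typing import Sequence


def rmse(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Root mean squared error."""
    n = len(actual)
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / n)


def mae(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute error."""
    n = len(actual)
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / n


def mape(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute percentage error, in percent (actual values must be non-zero)."""
    n = len(actual)
    return 100.0 * sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / n


# Hypothetical readings and forecasts, not data from the paper.
actual = [1.0, 2.0, 4.0]
predicted = [1.0, 1.0, 2.0]
print(rmse(actual, predicted), mae(actual, predicted), mape(actual, predicted))
```

Because MAPE divides by the actual value, it is sensitive to readings near zero, which is worth keeping in mind when comparing households with very different baseline loads.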

| Approach | House 1 | House 2 | House 3 | House 4 | House 5 | Average |
|---|---|---|---|---|---|---|
| CNN-LSTM | 0.0652 | 0.0631 | 0.0591 | 0.0631 | 0.0656 | 0.0632 |
| LSTM | 0.0059 | 0.0036 | 0.0044 | 0.0044 | 0.0038 | 0.0021 |
| SVR | 0.0075 | 0.0065 | 0.0045 | 0.0095 | 0.005 | 0.0066 |
| ARIMA | 0.7493 | 0.6898 | 0.6918 | 0.7109 | 0.8783 | 0.7440 |

| Dataset | RMSE (k = 2) | RMSE (k = 3) | RMSE (k = 4) | RMSE (k = 5) | RMSE (k = 6) | MAPE (k = 2) | MAPE (k = 3) | MAPE (k = 4) | MAPE (k = 5) | MAPE (k = 6) |
|---|---|---|---|---|---|---|---|---|---|---|
| House 1 | 0.0341 | 0.0339 | 0.0478 | 0.0508 | 0.0577 | 18.42 | 18.98 | 19.66 | 19.89 | 20.15 |
| House 2 | 0.0017 | 0.0021 | 0.0024 | 0.0026 | 0.0025 | 3.91 | 4.33 | 4.64 | 4.90 | 4.85 |
| House 3 | 0.0120 | 0.0274 | 0.0284 | 0.0236 | 0.0256 | 9.24 | 10.31 | 10.50 | 11.98 | 10.98 |
| House 4 | 0.0068 | 0.0069 | 0.0072 | 0.0070 | 0.0071 | 15.41 | 15.25 | 16.38 | 15.79 | 16.08 |
| House 5 | 0.0067 | 0.0068 | 0.0079 | 0.0879 | 0.0100 | 5.53 | 5.78 | 6.03 | 6.41 | 6.64 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Yan, K.; Wang, X.; Du, Y.; Jin, N.; Huang, H.; Zhou, H. Multi-Step Short-Term Power Consumption Forecasting with a Hybrid Deep Learning Strategy. Energies 2018, 11, 3089. https://doi.org/10.3390/en11113089
