Surviving Meltdowns That Cannot Be Prevented: Review of Gaps in Managing Uncertainty and Addressing Existential Vulnerabilities

Abstract

1. Introduction

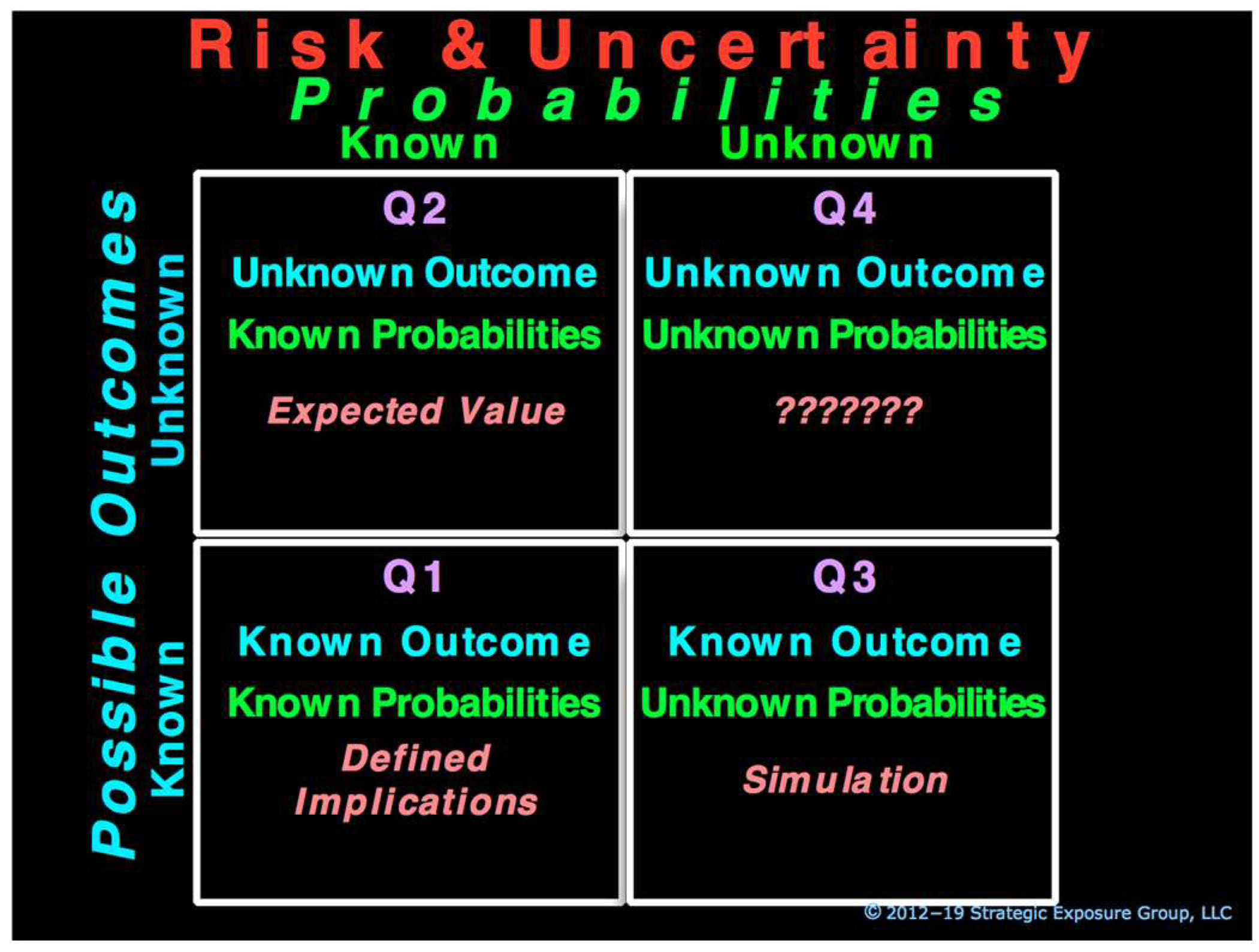

2. Current Approach in Managing Risk

2.1. Prevention Based Approach & Vulnerability to the Unknown

2.2. Challenges Related to the Unknown of Climate Change

2.3. Two Types of Unknown Create Vulnerabilities

2.4. Distinction between the Exposure from the Known and from the Unknown

3. Conceptual Analytic Framework

4. Exposure from the Known

4.1. Known-Known

4.2. Unknown-Known

- A critical component in manufacturing a product is unexpectedly out of stock, which can be prevented by maintaining excess inventory.

- The market value of a financial asset drops unexpectedly, the impact of which can be mitigated by a diversified portfolio.

- An unexpected imbalance occurs between an electricity grid’s active capacity and the current demand, which can be mitigated by multiple standby sources of power, such as peaking plants or a plan to shed loads.

4.3. Known-Unknown

- The supplier of a critical component cannot supply it for an extended period, which can be prevented by maintaining standby suppliers for the critical component.

- The market value of several financial assets drops precipitously, the impact of which can be mitigated by maintaining a cushion of capital to absorb losses.

- All power plants supplying an electricity grid shut down, which can be prevented by installing standby generators called “black starts” or by providing spinning capacity.

4.4. Primary Focus in Addressing the Known: Event-Centric Prevention

4.5. Scholarly Evolution

To preserve the distinction … between the measurable uncertainty and an unmeasurable one we may use the term “risk” to designate the former and the term “uncertainty” for the latter …. The practical difference between the two categories, risk and uncertainty, is that in the former the distribution of the outcome in a group of instances is known …, while in the case of uncertainty this is not true, the reason being in general that it is impossible to form a group of instances, because the situation dealt with is in a high degree unique.

In Knight’s view, uncertainty arises out of our partial knowledge …. The key issue, however, is to understand what the partialness is about …. Knight’s use of partial knowledge, we argue, reveals that his distinction between risk and uncertainty has more to do with the initial classification of random outcomes than with the assignment of probabilities to the outcomes …. The point is not so much that we do not know probabilities as that we do not know the classification of outcomes … uncertainty as Knight understood it arises from the impossibility of exhaustive classification of states …. When a decision maker faces uncertainty … he or she would have first to “estimate” the possible outcomes to be able to “estimate” the probabilities of occurrence of each.

At the heart of Shackle’s theory of choice is the idea that, when people consider the possible consequences of taking a decision, they give their attention only to those outcomes that they (a) imagine and (b) deem, to some degree, to be possible. This set of outcomes is not guaranteed to include what actually happens, which may be an event they had not even imagined and which comes as a complete surprise to them.

The financial crisis of 2008 reminds us that we live in a world of risk and uncertainty. Knight and Keynes developed the conceptual distinction between risk and uncertainty ninety years ago and it remains fundamentally important today. In risky environments, sorting events into different classes poses no special challenge for sophisticated decision makers. We cannot be sure what tomorrow will bring, but we can rest assured that unforeseen events will be drawn from known probability distributions “with fixed mean and variance”. In the world of risk the assumption that agents follow consistent, rational, instrumental decision rules is plausible. But that assumption becomes untenable when parameters are too unstable to quantify the prospects for events that may or may not happen in the future.

5. Exposure from the Unknown

5.1. Current Approach to Address the Unknown

- Less than full appreciation of the distinct characteristics of Q4 exposure.

- The hindsight bias that leads individuals to believe that they had previously predicted or thought of an adverse event before it happened and thus they can predict other future events (Kahneman et al. 1982).

- Another bias that leads individuals to exaggerate the importance of a specific factor they picked as the cause of an adverse event while overlooking the numerous other factors some of which may have been unknown and had a greater impact and thus believing they can identify factors that may contribute to future adversities (Gilbert 2006).

5.2. Can Materialize Anytime Anywhere and Cannot Be Envisioned, Simulated or Prevented

Tsunamis Begin with Almost Undetectable Small Wavelets

There was nothing out of the ordinary in Albuquerque, NM on this Friday. As the day ended, thunderstorms came rolling in. At their peak, just before 8 P.M., a huge lightning bolt struck an electric line causing large power fluctuations, creating a blaze in Fabricator No. 22 at a nearby Philips plant. A combination of sprinklers and employee efforts put out the fire in under 10 min.

Fabricator No. 22 was a plant churning out millions of semiconductors with complex circuits on chips barely half an inch square. Precision was critical, and any air-borne foreign matter on or around the chips, even particles too small to be visible to the human eye, could ruin these semiconductors. Such fabricators were referred to as “the clean rooms”.

The fire was minor. But the damage from water, smoke and soot was extensive, and wasn’t limited to the work-in-progress chips exposed to the air. The fabricator was also not “clean” anymore, making the plant useless for fabrication.

On Monday morning, as the process of informing customers began, one of the first calls went to Espoo, Finland, about 5000 miles away, explaining the incident, the number of chips lost and the expected plant recovery time of one week to the chief purchasing manager of Nokia, the world’s leading electronics company with almost US$20 billion in revenues. A one-week delay in supply chains was no big deal, as experienced managers know that supply disruptions are more the rule than the exception. The purchasing manager shared the news with all members of the Nokia team. The affected items were placed on “special monitor” and a routine of daily calls to Philips was initiated.

About two weeks later, Philips realized the full scope of the damage and estimated that it would take weeks, maybe months, to restore the clean rooms and restart production.

Of the indispensable Albuquerque components purchased by Nokia, one, known as the Asic chip, was made only by Philips. Nokia estimated that, in the absence of this chip, it would be 4 million handsets short during the holiday season, the strongest cell-phone sale period. That amounted to 5% of the company’s total sales. With the cell-phone market booming, running short of handsets would be a major problem and could impact the company’s market share significantly.

Nokia pulled out all the stops, internally and externally. The problem was elevated to the Nokia CEO, who obtained a commitment from the Philips CEO to dedicate all excess capacity to Nokia until the Albuquerque plant was restored. Through such collaboration Nokia avoided disruption and handsets kept rolling off the assembly line to reach store shelves.

The second of the two major customers of the Albuquerque plant was Ericsson, Sweden’s largest company with US$29 billion in sales. When Ericsson received the call on the Monday following the fire, it was, as at Nokia, treated as no big deal. However, it was handled as one technician talking to another technician, and the news was not shared widely within the company. Even two weeks later, the delay was estimated by Ericsson to be a few weeks and the news was not widely disseminated internally.

A week later the full impact of the delay was realized. Major efforts were initiated at Ericsson to obtain the critical semiconductors, including Asic chips. Philips had no spare capacity to alleviate the problem, and no other manufacturer could supply them in time for the upcoming holiday season. With a significant delay in getting the new model to the market, Ericsson missed the holiday season.

The end result was a favorable marketplace reception for Nokia cell phones, and its market share increased from 27% to 30%. Ericsson, on the other hand, found itself without a new model in the high-end cell-phone market and its market share went down from 12% to 9% in six months.

Eighteen months later, the WSJ reported: “Ericsson said a slew of component shortages, a wrong product mix and marketing problems sparked a loss of 16.2 billion kronor (US$1.68 billion) last year for the company’s mobile phone division … The company will take an additional restructuring charge of eight billion kronor to finance further restructuring of its mobile-phone unit. The news sent Ericsson shares falling 13.5% [last Friday] and shook other high-tech stocks around the world. … When the company revealed the damage from the fire for the first time publicly last July, its shares tumbled 14% in just hours. Since then, the shares have continued to fall along with the declining fortunes of many global telecommunications stocks. Ericsson shares are trading around 50% below where they were before the fire”.

Less than 24 months after the fire, the wavelets that began on that Friday evening almost 5000 miles away had turned into a tsunami and forced Ericsson to leave the handset-manufacturing business, with an almost 80% drop in its investor value over this period.

This case is based upon the events as discussed in The Spider’s Strategy (Mukherjee 2008) and reported in the press.

5.3. Early Warning Mechanisms and Metrics Not Useful in Predicting and Preventing

Traditional Metrics Can Create a False Sense of Security

Memoirs of a Senior Global-Bank Executive Who Managed Client Relationship With AIG.

First Quarter 2007

The company was well capitalized. There was a tremendous amount of experience in all forms of insurance products and a very strong culture of sales and marketing because of CEO Sullivan’s successful experience. An entrepreneurial atmosphere permeated the company’s four major business segments. The AIG Financial Products (AIGFP) business was growing and contributing major profits to corporate earnings. It was a leader in structured swaps and credit default swap (CDS) transactions … One of the biggest corporate challenges at this point seemed to be cash management, as the company was flush with liquidity.

What could be better! Writing insurance for these financial products raked in huge profits, with no significant capital allocations, and no cash calls to post collateral. All the securities, regardless of their fair-market value designation of level 1, 2, or 3, were listed at par, and many were rated AAA by rating agencies. It was what any business would like to have!

September 2007

AIG reported a staggering US$352 million loss in CDS in the financial products business.

This caused a rush of adrenaline at our institution as loan agreements were pulled out for review. Our institution’s exposure to AIG ran into billions through direct loans, CDS protection purchased from AIG, and other “CDS wrapped” transactions. A downgrade would trigger a huge collateral call on AIG. The numbers were quite sobering. But I remember the general sentiment: “Hey, this is AIG!” The net assessment was that the downgrade was highly unlikely. AIG had just raised US$20 billion through a series of capital transactions. Their shareholders’ equity stood at over US$78 billion, with total assets of over US$1 trillion, as of 30 June 2007.

In addition, a review of traditional risk-management indicators suggested ample capital, cash, and securities to cover any collateral call that AIG may face in a hypothetically extreme scenario. The concern about the lack of transparency had grown significantly as the question kept coming up: “How much counter-party exposure to how many parties?” But discussions always concluded that AIG had sufficient resources to cover its obligations.

15 June 2008

Just weeks after J.P. Morgan Chase had completed the acquisition of Bear Stearns, AIG reported a shocking US$5.6 billion loss attributable to AIGFP’s CDS portfolio, along with US$20 billion in write-downs on CDS guarantees and an additional US$18 billion write-down on mortgage- and asset-backed securities during the previous three quarters.

Early September 2008

… we reviewed and discussed AIG’s credit. At that time, AIG seemed well capitalized, with significant global assets. There was some concern about the lack of complete transparency of AIG’s exposure to other counter parties, including the number of parties involved. But on that day, any concern about AIG almost seemed to be a side issue.

15 September 2008

The downgrade this evening from AA to A- prompted a collateral call for a staggering amount of US$14.5 billion. Since the Lehman Brothers’ bankruptcy this morning, no one could be sure of what is to come next. The subsequent panic has frozen the market; because of a lack of buyers, it is not clear where the market prices of assets are.

There was a full-blown panic at our institution as rumors began swirling around this morning. If Lehman wasn’t rescued, who could be next? Who will come to AIG’s rescue? What happens if there is no rescue?

5.3.1. Correlations Determine Outcomes

5.3.2. Nonlinear Correlations Make It Impossible to Envision, Simulate and Predict

5.4. Nonlinear Correlations Cannot Be Recognized Ex-Ante

Correlating Factors Are Easily Missed in Real Time Even When Developing Over Years

Things were looking good. The company, the world’s largest private-sector producer and distributor in its industry, was named to Fortune Magazine’s 2008 list of America’s Most Admired Companies. Revenues had been growing consistently year after year. Its stock price reached an all-time high earlier in the year. Following record third quarter results, the announcement said: “We continue to differentiate ourselves with a very strong financial and operating performance, rising cash flows, major contract backlog and rapidly growing international contributions. In the face of current economic conditions, we are pleased to be raising our outlook”.

“We are seeing the first full quarter of benefits from our multi-year capital investment program”, said the CFO.

Also, Standard & Poor’s had just upgraded its corporate credit rating to BB+ based on the “strengthened credit measures due to the company’s financial performance and favorable outlook”.

The company, Peabody Energy, based in St. Louis, Missouri in the US, was one of the world’s leading pure-play coal companies and operated through the entire value chain, from mining the coal to selling and distributing it for electricity production and steelmaking. Prospects for price increases looked good as global coal demand was growing. Peabody was well positioned to benefit from this.

The company said in 2010 that global demand for coal was entering a multi-year growth period: “We’re in the early stages of a 30-year supercycle in global coal markets”, and reiterated in 2011 that “the coal supercycle is just getting underway”.

Prospects remained positive through 2011. Peabody received CEO of the Year and Energy Company of the Year Honors at Global Energy Awards and S&P affirmed its BB+ credit rating. However, little attention was paid by the company or the marketplace to certain factors that had been developing over several years.

Pioneered by Halliburton Company, natural gas extraction through fracking dates to the 1940s but its cost, while declining over the years, remained high relative to coal until as recently as 2000. Coal used to be the cheapest source of electricity. But the cost of meeting new environmental standards raised its cost while the spurt in the number of fracking wells dropped natural gas prices. By 2012, coal’s significant cost advantage over natural gas was almost gone.

Historically, environmentalists, without much funding or disciplined organizations, were viewed more as a nuisance than as game changers. However, beginning in the late 2000s the Sierra Club in the US changed its modus operandi. Encouraged by the White House and by Michael Bloomberg’s commitment of tens of millions of dollars to launch an organization with strategy-based plans in 45 states, it began a disciplined “war on coal” at state and local levels that killed on average one coal-fired power plant every 10 days between 2010 and 2015. By 2012, such groups represented existential threats to the coal industry in the US.

Peabody reported record operating and net profit margins, return on equity, and net income of almost US$1 billion in 2011.

That was the last profitable year at Peabody: starting in the early 2010s, the US coal industry began imploding and the company began closing mines rapidly. Saddled with debt and the outlook growing bleaker by the day, Peabody Energy filed for bankruptcy on 13 April 2016.

This case is based upon information sourced by links inserted above as well as events as reported in Politico.

5.4.1. Climate Change Is an Unprecedented Challenge

5.4.2. Climate Change Exposure Requires Urgent Focus on the Unknown

- They can materialize anytime, anywhere.

- They cannot be envisioned ex ante, and thus they can neither be simulated, nor prevented.

- Traditional metrics are useless in gauging the strength of an entity to withstand adversity from such threats, can be misleading, and thus can cultivate a false sense of security.

- Correlations that give rise to them cannot be recognized ex ante even when they develop over a long period of time and are easily missed.

- Their adverse impact can be so huge that it cannot be absorbed in the normal course of business and can have existential consequences.

- How do you ensure the next market crash will not devolve into an unanticipated meltdown for businesses, such as financial institutions?

- How do you ensure survival when unanticipated longer-term trends redefine the marketplace for businesses, such as consumer products and services?

- How do you protect shareholder value when expected payoffs from significant upfront investment are distributed over decades when political, regulatory, environmental, or technological changes that cannot be envisioned ex ante may render the operating model obsolete, such as for infrastructure businesses?

- How do you ensure survival if any component of or link in the operating process fails with catastrophic consequences from an event that cannot be envisioned, such as for supply chains, or city, state and national infrastructure and services?

6. Practical Solutions

6.1. Primary Focus in Addressing the Unknown: Survival

6.2. Defining Possible Worst Case Is Key

6.3. Preventing Adversity and Surviving Adversity: Two Distinct Objectives

Three-part Framework for Living with the Unknown

Walter Wriston, chairman of Citicorp, was frustrated. Once again, Citicorp’s net interest revenue had declined in consecutive months. Until recently there had been an assumption that when interest rates increased, Citicorp’s net interest revenue would go up. Yet, in the second half of 1979 and early 1980, the opposite was happening. Sure, net interest revenue declined because interest expenses increased but so did interest income, albeit by a smaller amount. However, knowing that alone was not very helpful as it didn’t quite enable identifying specific actions that could stem the decline in net interest margin from rising interest rates. And there was no near-term relief in sight from the increase in interest rates.

No one could be sure of how bad things could get. There had to be a way to turn the net interest margin dynamics to address the problem. It was clear that the company had too much exposure to interest rate changes, but how could this be addressed going forward … particularly if things got really bad?

Paul Collins, senior vice president, asked an analyst to look at the problem. The analyst created a framework to quantify interest rate exposure, by defining the difference between the amount of assets re-pricing and the amount of liabilities re-pricing in a given period as “interest rate gap”. He showed in a simplistic way that by managing the structure of this gap, the interest rate exposure to the net interest margin could be addressed proactively.

Policy Framework

Until it was defined, the finance committee of Citicorp was not aware of the worst-case exposure and therefore had not taken proactive actions to address the Unknown: how bad could it get? Once the company’s dynamics of interest rate gap were internalized, the analyst provided a startling perspective to the committee, showing that most of the company’s earnings would be wiped out if interest rates climbed by the highest 30-year historical increase in any 90-day period. This became a proxy for the worst-case exposure. There was a sudden rush as the committee felt such large exposure was unacceptable.

So, the committee established an acceptable level of exposure that would provide for earnings growth and yet be prudent to ensure that the worst-case exposure would not be catastrophic. This turned into an interest-rate-gap-management policy that provided a framework to address and manage the company’s interest rate exposure.

The committee also devised a governance guideline to split the interest-rate gap into a structural gap to be managed by the committee and a short-term/treasury gap to be managed by operating units.

Strategy Framework

Using the policy framework, Paul Collins and the treasurer developed a strategy to restructure the balance sheet and for the funding of incremental assets. They also established structural and short-term/treasury interest rate gap limits for the company.

Implementation Framework

The new strategy translated into implementation on two fronts. A program was created to reduce fixed-rate assets through sale of certain assets; raising fixed-rate funds, albeit at the then existing higher rates; and restructuring certain business units to provide relief from external and regulatory constraints. The second part of the implementation included allocating the short-term/treasury gap limit among operating units with controls in place to monitor compliance and exposure.

Years later, Walter Wriston recalled (paraphrased): “… the late 70s and early 80s were perilous times for the industry as banks were being squeezed in a vise between usury limits, regulatory restrictions and a hyper-inflationary environment not envisioned previously … the bank’s net interest margin was in what seemed like a free fall … no one seemed to know where the bottom was … but by defining and getting a handle on the worst-case bottom, we were able to develop and implement a strategy to continue our growth while avoiding what could have been disastrous for the bank”.

This case is based upon the first-hand experience of the author as secretary of Citicorp’s finance committee. Portions of this case have been excerpted from (Paul 2013, pp. 50–52).
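The interest rate gap arithmetic described in the Citicorp case above can be illustrated with a short Python sketch. It is a minimal illustration under stated assumptions, not the method Citicorp actually used: the class and function names, the balance figures, the rate history, and the linear earnings approximation (gap × rate change × fraction of a year) are all hypothetical. Only the gap definition (assets re-pricing minus liabilities re-pricing) and the idea of using the largest historical rate increase within a window as a worst-case proxy come from the case.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class RepricingBucket:
    """Balances that re-price within one period (hypothetical units: US$ millions)."""
    label: str
    assets_repricing: float
    liabilities_repricing: float

    @property
    def gap(self) -> float:
        # Gap as defined in the case: assets re-pricing minus liabilities re-pricing.
        return self.assets_repricing - self.liabilities_repricing


def worst_historical_rise(rates: Sequence[float], window: int) -> float:
    """Largest rate increase between any two observations at most `window` steps apart.

    Returns 0.0 if rates never rose within the window.
    """
    worst = 0.0
    for i in range(len(rates)):
        last = min(i + window, len(rates) - 1)
        for j in range(i + 1, last + 1):
            worst = max(worst, rates[j] - rates[i])
    return worst


def earnings_impact(bucket: RepricingBucket, rate_change: float, year_fraction: float) -> float:
    """Assumed linear approximation of the change in net interest revenue."""
    return bucket.gap * rate_change * year_fraction


def within_gap_limit(bucket: RepricingBucket, limit: float) -> bool:
    """Check the gap against a policy limit (the limit value is hypothetical)."""
    return abs(bucket.gap) <= limit


# Hypothetical figures: more liabilities than assets re-price within 90 days.
bucket = RepricingBucket("0-90 days", assets_repricing=30_000.0, liabilities_repricing=42_000.0)
rate_history = [0.10, 0.11, 0.09, 0.13, 0.12]  # made-up observations, as decimals

shock = worst_historical_rise(rate_history, window=2)
impact = earnings_impact(bucket, shock, year_fraction=0.25)
print(f"gap={bucket.gap:.0f}, worst-case rise={shock:.2%}, earnings impact={impact:.1f}")
```

With a negative gap, a rate increase lowers net interest revenue in this approximation, which matches the direction of the squeeze described in the case. A policy limit on the gap then caps how large that worst-case loss can become.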

Reducing Going Concern Vulnerabilities to Maximize Operating Model Potential

The two-day offsite board meeting was viewed as successful. It covered a lot of ground for all major areas of the company, and board members seemed satisfied with the company’s performance and prospects. But one question could not be answered and left some members concerned.

Founded in the early 1990s, the company is a large manufacturer of a line of electronic devices. With a 23% market share, it is the second largest producer of such devices, and has a record of steadily growing sales with stable, profitable margins. It maintains a strong distribution network, with close relationships with two of the largest distributors, who serve all customer segments in the marketplace. The company is consistent and diligent about investing in product development to maintain its market position and monitors new technology and applications closely. It is led by a respected CEO and an aggressive operating management team with a balanced focus on strategy development. It has strong operational and financial controls in treasury, credit, manufacturing, and distribution.

At the offsite meeting, the board was pleased with product development updates. However, following a discussion of extreme risk late on the first day of the meeting, there was growing concern as it became evident that what the company viewed as its strong market position also had a flip side. The company derived all its sales from consumers. Although there were no visible ripples in the marketplace, questions were raised, asking what would happen to the company if its product line became obsolete suddenly due to the introduction of new technology … like what happened to Kodak or Nokia and Blackberry. During the wrap-up session on the second day the concern was raised again, and the VP of strategy development was asked to address it.

Following a strategic review, the company concluded that the worst-case exposure from technology obsolescence could not be reduced any further than it already had been. The company monitored marketplace activity very diligently, invested in market research as well as in product and market development, and relied on external advisers for updates on technology development. Increased investment in any of these areas would not address the new concern, and an alternative solution would be needed.

Following the CEO’s recommendation, the board established an objective with a policy to minimize existential vulnerability without impacting its current product line or market position. A new strategy was adopted to reduce its 100% reliance on the consumer segment, with a goal to derive 30% of its sales from non-consumer segments by the end of year 5 of the new segments’ operation.

An operating plan was put into place that emphasized modifying its product line with applications for the corporate segment of the market. It was emphasized that these modified applications in the corporate market would not be impacted if new technology made its consumer devices obsolete.

A new division was created with outsiders hired as GM, chief marketing officer and VP of sales. A strategic review ruled out a major acquisition to diversify sales and distribution, and instead it was decided to leverage its relationship with a current large distributor who also had a strong presence in the corporate market.

Four years after the offsite board meeting, the company derived 14% of its sales from the corporate segment in the third year of this new division’s operations and is optimistic about achieving the 30% diversification goal by the end of 5 years.

This case is based upon the first-hand experience and observations by the author. The company in this case wishes to remain anonymous. Certain details have been modified to maintain this anonymity.
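The effect of the diversification goal in the case above on worst-case exposure can be sketched with simple arithmetic. The Python snippet below is only an illustration: the function name and stage labels are hypothetical, and it relies on the case’s own stated assumption that corporate-segment sales would be unaffected if new technology made the consumer devices obsolete. The 14% and 30% shares are the figures reported in the case.

```python
from typing import Dict, Iterable


def surviving_sales_share(segment_shares: Dict[str, float], obsolete: Iterable[str]) -> float:
    """Share of total sales that remains if the listed segments are wiped out.

    Assumes sales in the remaining segments are unaffected (the case's assumption).
    """
    total = sum(segment_shares.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError("segment shares must sum to 1.0")
    lost = set(obsolete)
    return sum((share for name, share in segment_shares.items() if name not in lost), 0.0)


# Sales mix at three points in time, using the shares reported in the case.
stages = {
    "at the offsite": {"consumer": 1.00},
    "year 3 of new division": {"consumer": 0.86, "corporate": 0.14},
    "year 5 target": {"consumer": 0.70, "corporate": 0.30},
}

for label, shares in stages.items():
    kept = surviving_sales_share(shares, obsolete=["consumer"])
    print(f"{label}: {kept:.0%} of sales would survive consumer-line obsolescence")
```

The point of the sketch is that the strategy does not make obsolescence less likely; it changes how much of the business would remain if obsolescence occurred.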

7. Conclusions

7.1. Risk Arising from the Unknown Needs Urgent Attention

7.2. Risk from the Unknown Can Be Addressed & Requires High-Level Commitment in Organizations

Funding

Acknowledgments

Conflicts of Interest

References

- Arrow, Kenneth J. 1951. Alternative Approaches to The Theory of Choice in Risk Taking Situations. Econometrica 19: 404–37.

- BBC. 2022. Energy Bills: Tens of Thousands of Firms ‘Face Collapse’ without Help. Available online: https://www.bbc.com/news/business-62813782 (accessed on 10 September 2022).

- Carney, Mark. 2015. Breaking the Tragedy of the Horizon—Climate Change and Financial Stability. Speech at Lloyd’s of London. September 29. Available online: https://www.bankofengland.co.uk/-/media/boe/files/speech/2015/breaking-the-tragedy-of-the-horizon-climate-change-and-financial-stability.pdf?la=en&hash=7C67E785651862457D99511147C7424FF5EA0C1A (accessed on 1 September 2022).

- Clarke, Arthur C. 1962. Profiles of the Future: An Inquiry into the Limits of the Possible. London: Victor Gollancz Ltd., p. 19.

- De Groot, Kristel, and Roy Thurik. 2018. Disentangling Risk and Uncertainty: When Risk-Taking Measures Are Not About Risk. Frontiers in Psychology 9: 2194.

- Dizikes, Peter. 2010. Explained: Knightian uncertainty. MIT News. June 2. Available online: https://news.mit.edu/2010/explained-knightian-0602%20 (accessed on 1 September 2022).

- Fink, Larry. 2022. The Power of Capitalism. Letter to CEOs 2022. Available online: https://www.blackrock.com/corporate/investor-relations/larry-fink-ceo-letter (accessed on 1 September 2022).

- Frowen, Stephen F. 2004. Economists in Discussion: The Correspondence Between G.L.S. Shackle and Stephen F. Frowen, 1951–1992. New York: Palgrave Macmillan.

- Gilbert, Daniel. 2006. Stumbling on Happiness. New York: Knopf.

- Grunwald, Michael. 2015. Inside the War on Coal. Politico. May 26. Available online: https://www.politico.com/agenda/story/2015/05/inside-war-on-coal-000002/ (accessed on 1 September 2022).

- Guerron-Quintana, Pablo. 2012. Risk and Uncertainty. Business Review 2012 Federal Reserve Bank of Philadelphia. Available online: https://www.philadelphiafed.org/-/media/frbp/assets/economy/articles/business-review/2012/q1/brq112_risk-and-uncertainty.pdf (accessed on 1 September 2022).

- Haldane, Andrew G., and Vasileios Madouros. 2012. The Dog and the Frisbee. Speech at the Federal Reserve Bank of Kansas City’s Economic Policy Symposium, “The Changing Policy Landscape”, Jackson Hole, WY, USA, August 31; Available online: https://www.bis.org/review/r120905a.pdf (accessed on 1 September 2022).

- Ingram, B. Lynn. 2013. California Megaflood: Lessons from a Forgotten Catastrophe. Scientific American. January 1. Available online: https://www.scientificamerican.com/article/atmospheric-rivers-california-megaflood-lessons-from-forgotten-catastrophe/ (accessed on 1 September 2022).

- Kahneman, Daniel, Paul Slovic, and Amos Tversky. 1982. Judgment under Uncertainty: Heuristics and Biases. Cambridge: Cambridge University Press.

- Kiel, L. Douglas. 1996. Lessons for Managing Periods of Extreme Instability. In California Research Bureau. CRB-96-005. Sacramento: California State Library.

- Knight, Frank. 1921. Risk, Uncertainty and Profit. Part III Ch VII and Part III Ch VIII. pp. 224–25, 233. Available online: https://oll.libertyfund.org/title/knight-risk-uncertainty-and-profit (accessed on 1 September 2022).

- Koonin, Steven E. 2021. Unsettled: What Climate Science Tells Us, What It Doesn’t, and Why It Matters. Dallas: Ben Bella Books.

- Langlois, Richard N., and Metin M. Cosgel. 1993. Frank Knight on Risk, Uncertainty, And the Firm: A New Interpretation. Western Economic Association International XXXI: 459–60.

- Latour, Almar. 2001. A Fire in Albuquerque Sparks Crisis For European Cell-Phone Giants. The Wall Street Journal. January 29. Available online: https://www.wsj.com/articles/SB980720939804883010 (accessed on 1 September 2022).

- Levin, Jonathan, and Paul Milgrom. 2004. Introduction to Choice Theory. Available online: https://web.stanford.edu/~jdlevin/Econ%20202/Choice%20Theory.pdf (accessed on 1 September 2022).

- Luft, Joseph, and Harry Ingham. 1961. The Johari Window: A graphic model of awareness in interpersonal relations. Human Relations Training News 5: 6–7.

- Macaskill, William. 2022. The Beginning of History: Surviving the Era of Catastrophic Risk. Foreign Affairs, September/October. Volume 101, Number 5.

- Mukherjee, Amit S. 2008. The Spider’s Strategy: Creating Networks to Avert Crisis, Create Change, and Really Get Ahead. Upper Saddle River: FT Press.

- Nelson, Stephen C., and Peter J. Katzenstein. 2014. Uncertainty, Risk and the Financial Crisis of 2008. International Organization 68: 361–92.

- Nishiguchi, Toshihiro, and Alexandre Beaudet. 1998. Case Study: The Toyota Group and the Aisin Fire. Sloan Management Review. Available online: https://www.academia.edu/784006/The_Toyota_group_and_the_Aisin_fire (accessed on 1 September 2022).

- Park, K. Francis, and Zur Shapira. 2017. Risk and Uncertainty. In The Palgrave Encyclopedia of Strategic Management. Edited by Mie Augier and David J. Teece. New York: Palgrave Macmillan.

- Parker, Mario, and Noah Buhayar. 2010. Peabody Says Coal in Early Phase of ‘Super Cycle’. Bloomberg Markets. June 24. Available online: https://www.bloomberg.com/news/articles/2010-06-24/peabody-energy-sees-global-coal-demand-at-the-beginning-of-a-super-cycle- (accessed on 1 September 2022).

- Paul, Karamjeet. 2013. Managing Extreme Financial Risk: Strategies and Tactics for Going Concerns. New York: Academic Press, pp. 50–52, 113–15.

- Restuccia, Andrew. 2015. Michael Bloomberg’s War on Coal. Politico. April 8. Available online: https://www.politico.com/story/2015/04/michael-bloomberg-environment-coal-sierra-club-116793 (accessed on 1 September 2022).

- Taleb, Nassim Nicholas. 2004. Fooled by Randomness: The Hidden Role of Chance in Life and in the Markets. New York: Random House.

- Taleb, Nassim Nicholas. 2007. The Black Swan. New York: Random House.

- United States Geological Survey. 2011. Overview of the ARkStorm Scenario; pp. 171–72. Available online: https://pubs.er.usgs.gov/publication/ofr20101312 (accessed on 1 September 2022).

- Wikipedia. 2022. Nord Stream 2. Available online: https://en.wikipedia.org/wiki/Nord_Stream_2 (accessed on 1 September 2022).

- Wladawsky-Berger, Irving. 2019. The Business Value of Resilience. The Wall Street Journal/CIO Blog. February 15. Available online: https://www.wsj.com/articles/the-business-value-of-resilience-01550253219 (accessed on 1 September 2022).

- Yaari, Menahem. 2017. Kenneth J. Arrow’s Work on Coping with Risk and Uncertainty. The Econometric Society: In Remembrance. Available online: https://www.econometricsociety.org/sites/default/files/inmemoriam/arrow_yaari.pdf (accessed on 1 September 2022).

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Paul, K.S. Surviving Meltdowns That Cannot Be Prevented: Review of Gaps in Managing Uncertainty and Addressing Existential Vulnerabilities. J. Risk Financial Manag. 2022, 15, 449. https://doi.org/10.3390/jrfm15100449
