1. Introduction

Anti-cancer therapies are a significant financial burden on both patients and healthcare systems. As the incidence of cancer continues to rise and new therapies are rapidly introduced, public drug plans with limited budgets continue to face difficult resource allocation decisions [1]. Assessing cancer drugs for public reimbursement recommendation is a complex, evidence-based process. In Canada, the pan-Canadian Oncology Drug Review (pCODR), originally established in 2011 and now part of the Canadian Agency for Drugs and Technologies in Health (CADTH), is a cancer-specific health technology assessment (HTA) process created to make funding recommendations to federal, territorial, and provincial drug plans (except for the province of Quebec) [2]. The pCODR Expert Review Committee (pERC) reviews submissions from drug manufacturers and makes non-binding recommendations, integrating the overall clinical benefit, patient perspectives, cost-effectiveness, and feasibility of adopting the drug into the health system [3].

Because of limited healthcare resources, evidence of cost-effectiveness is a critical component of drug funding decisions [4]. Novel therapies are considered “dominant” and are preferred if they are less costly and more effective than the alternative [5]. However, in almost all cases, new treatments that are more effective than existing therapies are also costlier. When evaluating these “non-dominant” drugs, the calculated incremental cost-effectiveness ratio (ICER) can be compared to a willingness-to-pay (WTP) threshold, commonly cited as being between $20,000 and $100,000 CAD per quality-adjusted life year (QALY) in Canada, although this range is potentially controversial and arbitrary [5,6,7]. A drug is considered cost-effective if its calculated ICER falls below the WTP threshold [6]. It should be noted that, unlike some other countries and HTA bodies, Canada and pERC have not adopted an explicit WTP threshold.
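The decision rule described above can be sketched in a few lines of Python. This is an illustrative sketch only, not a method used by pCODR or the EGP: the function names `icer` and `classify`, the example figures, and the handling of cases other than "dominant" and "more effective but costlier" are assumptions made for illustration. The threshold is passed in as a parameter because no explicit WTP threshold has been adopted in Canada.

```python
from typing import Optional


def icer(delta_cost: float, delta_effect: float) -> Optional[float]:
    """Incremental cost-effectiveness ratio: incremental cost / incremental QALYs.

    Returns None when the incremental effect is zero, where the ratio is undefined.
    """
    if delta_effect == 0:
        return None
    return delta_cost / delta_effect


def classify(delta_cost: float, delta_effect: float, wtp_threshold: float) -> str:
    """Classify a new therapy against its comparator (hypothetical helper)."""
    if delta_effect > 0 and delta_cost < 0:
        # Less costly and more effective than the alternative.
        return "dominant"
    if delta_effect > 0:
        # More effective and not cheaper: compare the ICER with the WTP threshold.
        ratio = delta_cost / delta_effect
        return "cost-effective" if ratio < wtp_threshold else "not cost-effective"
    # Therapies that are not more effective are outside the scope of this sketch.
    return "not covered by this sketch"


if __name__ == "__main__":
    # Hypothetical drug: $30,000 CAD more costly, 0.5 QALYs gained.
    print(icer(30_000, 0.5))               # cost per QALY gained
    print(classify(30_000, 0.5, 100_000))  # judged at the upper cited threshold
    print(classify(30_000, 0.5, 50_000))   # same drug, stricter threshold
```

The same drug can be classified differently depending on which threshold in the cited range is applied, which is one reason the choice of threshold is considered controversial.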

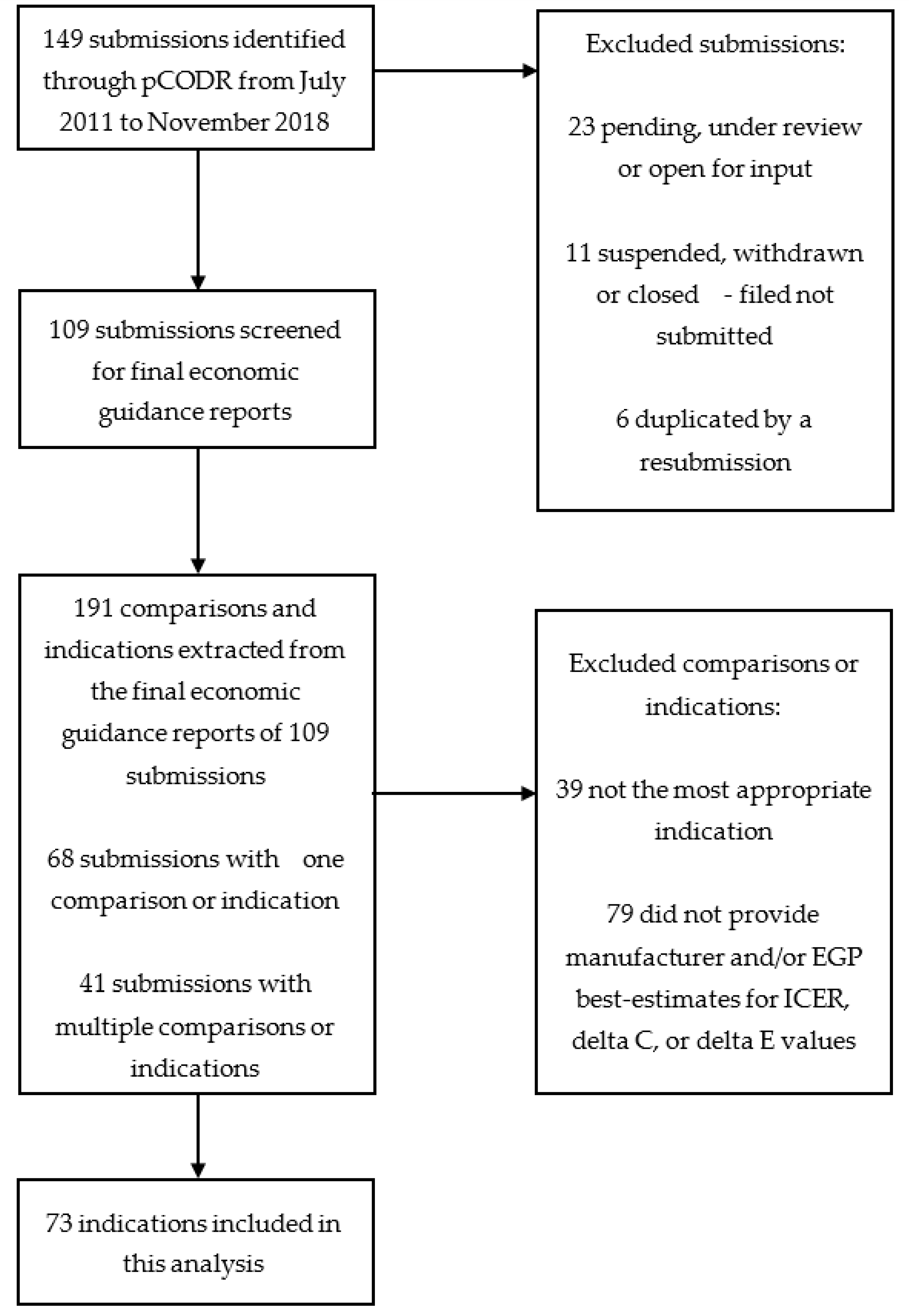

In the pCODR review process, the cost-effectiveness data used in pERC’s deliberations are derived through a two-step submission process. Manufacturers first submit their economic models to pCODR, specifying their best estimates of the drug’s ICER, incremental cost (ΔC), and incremental effectiveness (ΔE). pCODR subsequently convenes an economic guidance panel (EGP), consisting of independent health economists or HTA-experienced panel members [3,8]. The EGP reanalyzes the manufacturer’s model, revising components that contribute to potential uncertainties and limitations. This revision process allows the EGP to calculate its best upper and lower estimates of the ICER, ΔC, and ΔE. Manufacturers are permitted to review and provide feedback on the economic guidance reports before pERC makes its final funding recommendation [3].

Previous studies have examined the methodological issues in manufacturer-submitted economic models to pCODR, the components commonly revised by the EGP, and their potential correlation with final pERC recommendations [9,10,11]. Masucci et al. described the most frequent methodological issues identified and revised by EGPs, which involved costing (59%), time horizon (56%), and model structure (36%) [11]. None of these issues, however, had a statistically significant relationship with pERC’s final recommendations [11]. The final recommendations are more dependent on clinical evidence and patient values, while subsequent pricing negotiations may be more dependent on cost-effectiveness [7]. Two other studies evaluating methodological issues in manufacturer-submitted economic models found that the most common revision by the EGP was the shortening of the time horizon [9,10].

Identifying the recurring problems faced by economic models submitted to HTA review committees is an important step in the development of more suitable models in the future. While previous studies have examined some of these methodological issues, to our knowledge, a direct comparison of manufacturer-calculated and independently reanalyzed ICERs has not been formally conducted in the Canadian context. Therefore, in this study, we investigate whether manufacturer-submitted economic models generate ICERs that are more optimistic than pCODR-generated ICERs. The objectives of this study are to determine the magnitude of difference between manufacturer and pCODR ICERs, ΔC, and ΔE; to examine whether there is a significant difference in the proportion of ICERs deemed to be cost-effective; and to evaluate the trends in the ICERs over time. To further explore why manufacturer-submitted and EGP-reanalyzed ICERs may differ, we also present more recent data on the common methodological issues revised by the EGP.

4. Discussion

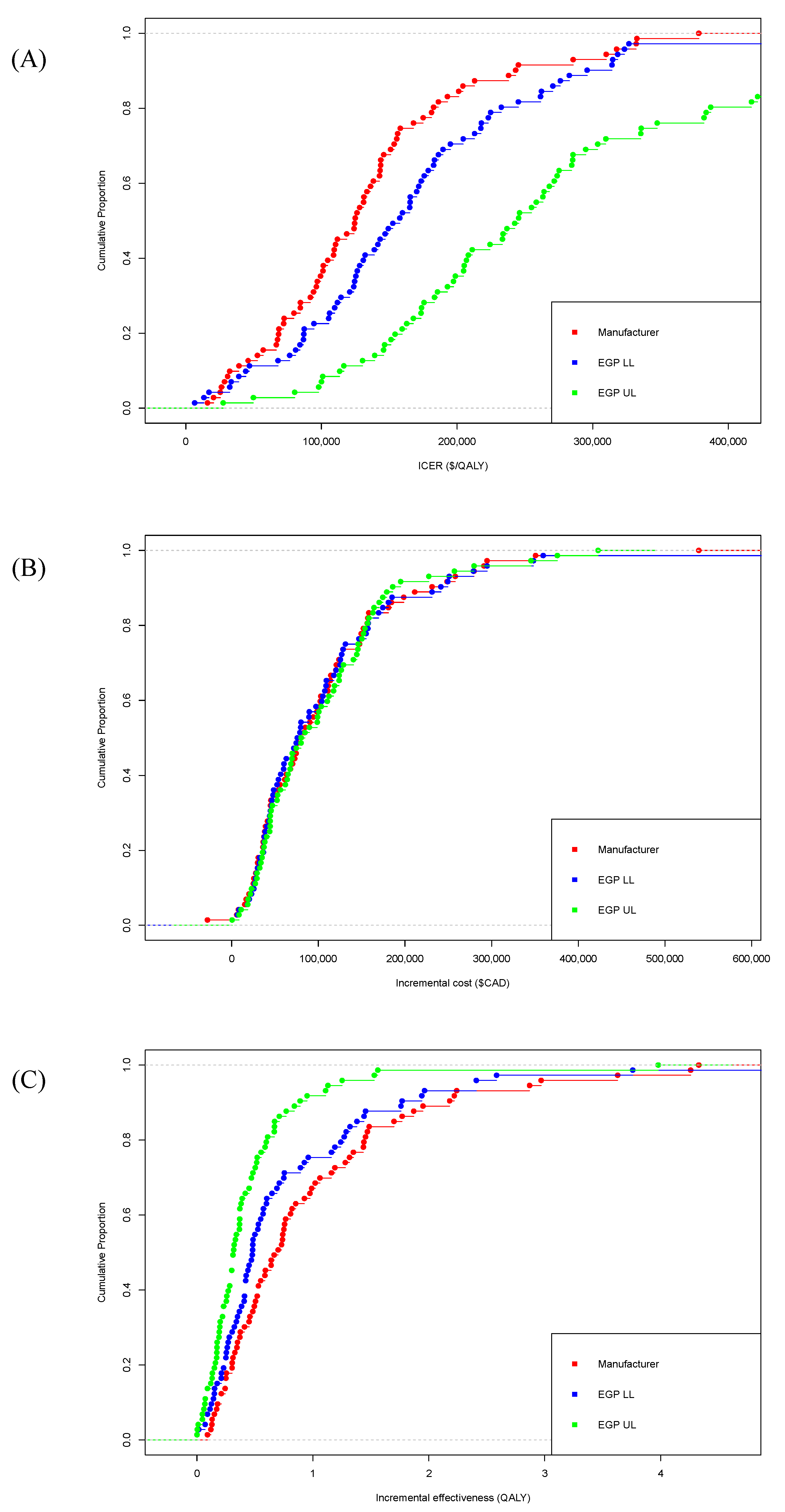

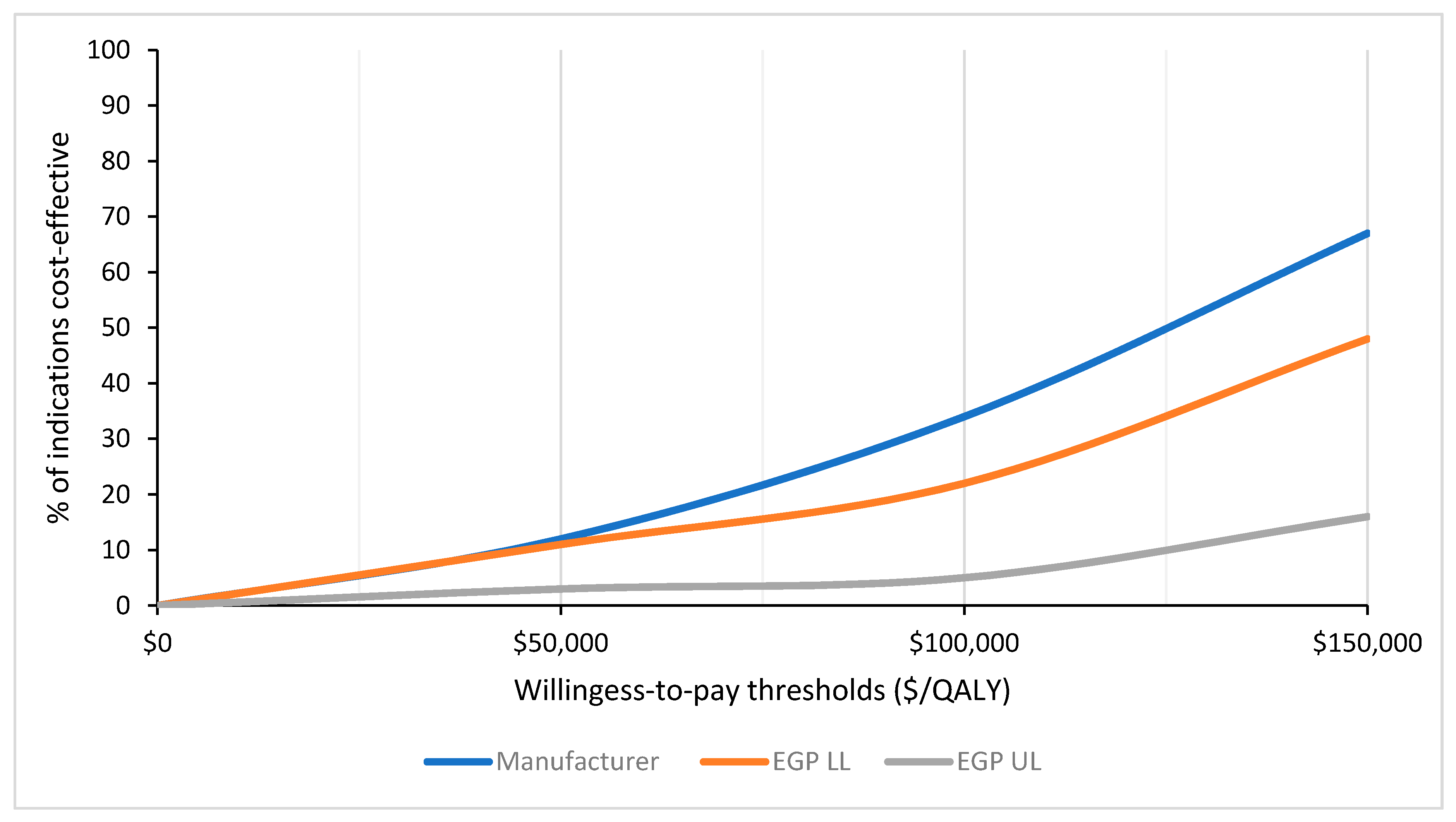

Manufacturer-submitted ICERs were consistently lower than EGP low and high limit estimates, suggesting that manufacturers may be overestimating the cost-effectiveness of their drugs. While these findings could also be potentially interpreted as EGP under-estimating the cost-effectiveness of those drugs, given that the EGP for each submission is comprised of academic researchers independent of manufacturers and payers, and different researchers are involved across the different submissions, it is unlikely that the pCODR process would produce biased estimates of ICERs that consistently underestimate cost-effectiveness. Furthermore, concerns about the accuracy of manufacturer-submitted economic models have been ongoing, and were also raised previously by the Joint Oncology Drug Review (JODR) review committee, the precursor to pCODR. A previous study that examined issues encountered by JODR when evaluating manufacturer-submitted economic models reported an uncertainty of comparative clinical benefits, costing assumptions that favored manufacturers and a lack of robustness for the submitted analyses to be the most common concerns [

16]. Manufacturer-submitted models also considered a higher proportion of cancer drugs to be cost-effective based on a variety of WTP thresholds when compared to the EGP reanalyzed estimates. This incongruity between manufacturer-submitted models and the EGP reanalysis was also highlighted by Raymakers et al., who found only 11 of the included 43 (26%) pCODR submissions had manufacturer-submitted ICERs that fell within the EGP calculated range [

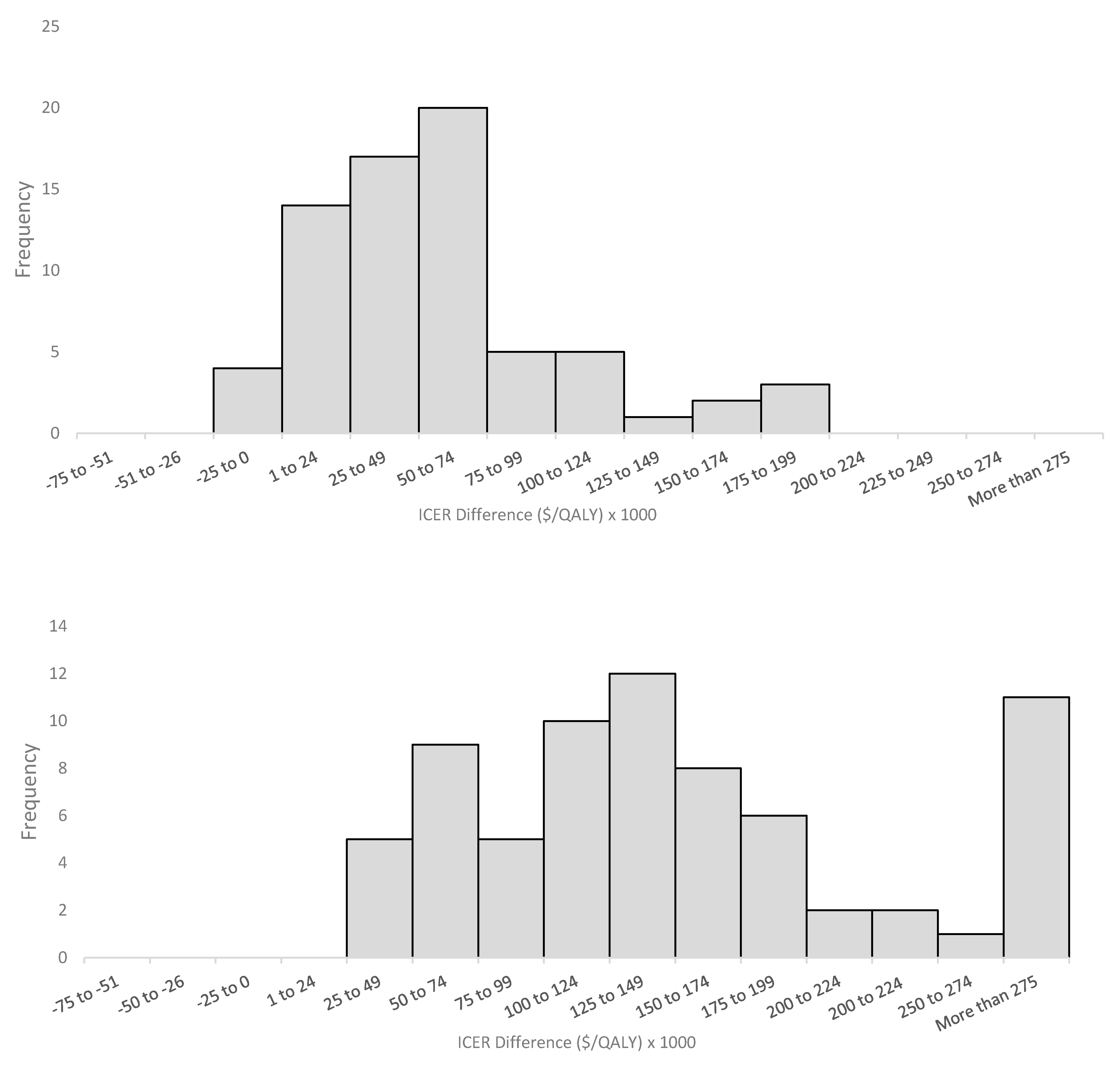

17]. While this study included a limited sample size, our analysis of 73 submissions further confirms this discrepancy between the manufacturer-submitted and EGP reanalyzed ICERs. Similar to Raymaker et al., only 19 (26%) manufacturer-submitted ICERs in our study fell within the EGP reanalyzed range. However, in contrast to Raymakers et al., who cited drug prices as the primary driver of the manufacturer ICERs, we found incremental gains in QALYs have a larger influence. While incremental cost values were similar in manufacturer-submitted and EGP re-analyzed models, manufacturers tend to incorporate higher incremental effectiveness. Thus, the improved cost-effectiveness reported by manufacturers is likely due to an overestimation or optimistic extrapolation of clinical effectiveness, rather than an underestimation of cost. These findings are similar to Cressman et al., who found only 25% of manufacturer-submitted models had higher rates of ΔC, while 72% reported higher rates of ΔE compared with the National Institute for Health and Clinical Excellence’s (NICE) Assessment Group estimates in the U.K. [

18].
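The range comparison discussed above (whether a manufacturer-submitted ICER falls within the EGP's lower and upper estimates) can be illustrated with a short Python sketch. The `Submission` class, its field names, and the four example records are hypothetical and are not data from this study; the sketch only shows the form of the calculation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Submission:
    """One submission's ICERs in CAD per QALY (hypothetical structure)."""
    manufacturer_icer: float
    egp_lower: float
    egp_upper: float


def within_egp_range(s: Submission) -> bool:
    """True if the manufacturer ICER lies inside the EGP's reanalyzed range."""
    return s.egp_lower <= s.manufacturer_icer <= s.egp_upper


def share_within_range(submissions: List[Submission]) -> float:
    """Proportion of submissions whose manufacturer ICER is within the EGP range."""
    if not submissions:
        raise ValueError("at least one submission is required")
    return sum(within_egp_range(s) for s in submissions) / len(submissions)


if __name__ == "__main__":
    # Made-up values for illustration only.
    examples = [
        Submission(90_000, 120_000, 180_000),   # below the EGP range
        Submission(150_000, 140_000, 200_000),  # within the EGP range
        Submission(60_000, 95_000, 130_000),    # below the EGP range
        Submission(110_000, 125_000, 250_000),  # below the EGP range
    ]
    print(f"{share_within_range(examples):.0%} within the EGP range")
```

Applied to the counts reported in the text, 19 of 73 submissions and 11 of 43 submissions both round to 26%.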

Over the six-year period analyzed, the trend in cost-effectiveness was similar between the manufacturer submissions and the EGP lower-limit re-analyses, with ICERs not changing significantly over time. However, the EGP upper-limit re-analyses did show decreasing cost-effectiveness (increasing ICERs) over this period. Previous research has shown that the cost-effectiveness of novel anticancer drugs is decreasing over time, as the cost of these therapies has been increasing dramatically without a proportional increase in clinical effectiveness [18,19]. These trends are congruent with the EGP’s upper-limit re-analyses, but not with the cost-effectiveness trends seen in the EGP lower-limit and manufacturer-submitted data.

In terms of the methodological issues with manufacturer-submitted economic models, time horizon issues were the most commonly identified and revised by the EGP. The EGP commonly re-computed the ICERs using a different (lifetime) time horizon, especially in cancer settings where the models projected clinically implausible survival tails of 30 to 40 years. These “long and thick” extrapolated survival tails are not properly validated by data, but are created by the submitting modeler under the misguided defense of a “lifetime horizon”. In cases where submitted models project a substantial proportion of patients surviving many years beyond the expected survival based on data and clinical experience in these cancer settings, EGPs will adjust the model to a different lifetime time horizon in order to minimize the inappropriate effects of overestimating long-term survivors on the cost-effectiveness results. Overall, submitted models that incorporate inappropriately long time horizons may overestimate long-term survival benefits. This is consistent with the findings of Masucci et al., suggesting that manufacturers continue to overestimate survival when submitting their drugs to pCODR [11]. Issues with survival estimation were also highlighted by a study of 45 HTAs undertaken for NICE in the U.K., which found that survival analysis is not conducted in a systematic way and that inappropriate survival models are frequently used in submitted HTAs [20]. Similarly, studies from both France and Australia evaluating submitted economic evidence highlighted common issues related to clinical efficacy and extrapolation beyond the time horizon [21,22]. Costing and utility estimation issues are also frequently present in economic models submitted by manufacturers to pCODR. Prior research has similarly documented concerns that manufacturers tend to make more optimistic assumptions in their model inputs with respect to costing and utility estimates [11]. Raymakers et al. reported that manufacturers do not consistently collect health-related quality of life data in their clinical trials in order to calculate QALYs, but tend to rely on lower-quality evidence from previous studies [17]. Thus, the utility estimates or data used to calculate QALYs in submitted economic models remain an ongoing concern. Issues related to model structure were mentioned less commonly in more recent submissions. While time horizon, costing, and utility estimation issues still exist, these model inputs can be more easily revised by reviewers through sensitivity analyses, unlike issues related to the model structure. Recognizing the need for more transparency in order to address the more difficult methodological issues such as model structure, CADTH continues to publish revised guidelines for the economic evaluation of health technologies that submitters are advised to consult [23]. In contrast to Masucci et al., who grouped drug wastage with other costing issues such as dosing and pricing structure, administration, and test costs, we accounted for drug wastage as a separate methodological issue, with 13 (18%) manufacturer submissions failing to report any drug wastage data. Drug wastage can have a considerable impact on economic evaluations, with the potential to significantly increase ICERs [24]. Our results are congruent with previous research showing that drug wastage is still not uniformly considered in economic models.
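To illustrate why the time horizon discussed above matters, the following Python sketch shows how lengthening the horizon over which an extrapolated survival curve is integrated increases ΔE and lowers the ICER. It is a deliberately simplified toy model and does not reproduce any submitted or EGP model: it assumes exponential survival in both arms, no discounting, a utility weight of 1 (so QALYs equal life years), and a fixed ΔC. The hazard rates, the ΔC value, and all function names are hypothetical.

```python
import math


def life_years(hazard: float, horizon_years: float) -> float:
    """Mean life years accrued up to the horizon under exponential survival.

    This is the area under S(t) = exp(-hazard * t) from 0 to horizon_years.
    """
    return (1.0 - math.exp(-hazard * horizon_years)) / hazard


def delta_effect(hazard_new: float, hazard_old: float, horizon_years: float) -> float:
    """Incremental life years of the new therapy over the comparator."""
    return life_years(hazard_new, horizon_years) - life_years(hazard_old, horizon_years)


def icer_at_horizon(delta_cost: float, hazard_new: float, hazard_old: float,
                    horizon_years: float) -> float:
    """ICER for a fixed incremental cost (a simplifying assumption)."""
    return delta_cost / delta_effect(hazard_new, hazard_old, horizon_years)


if __name__ == "__main__":
    # Assumed annual hazards: 0.3 (new therapy) versus 0.5 (comparator).
    for horizon in (5, 10, 40):
        gain = delta_effect(0.3, 0.5, horizon)
        ratio = icer_at_horizon(100_000, 0.3, 0.5, horizon)
        print(f"horizon {horizon:>2} y: incremental life years {gain:.2f}, ICER {ratio:,.0f}")
```

In this toy setting, much of the incremental benefit accrues in the extrapolated tail, so shortening the horizon removes that unproven benefit and raises the ICER. In real models costs also change with the horizon, which this sketch ignores.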

Our study is not without limitations. Firstly, our analysis included 109 economic guidance reports with 73 indications due to the exclusion of submissions that did not publish all of their ICER data. Incorporating these additional indications might impact our findings, and it is unclear whether the excluded reviews are methodologically similar to those included. Nevertheless, there are insufficient publicly available data for these excluded submissions to allow for further in-depth analysis. This highlights the importance of transparency in the reporting of economic models that inform public-funding decisions in order to enhance accountability and public scrutiny. Secondly, the categories used to classify commonly identified issues in our study were chosen subjectively based on how frequently they were reported in the economic guidance reports. Therefore, any issues discussed by the EGP and manufacturers (e.g., through verbal communication) that were not published in these reports were not captured. Another limitation is that the EGP provides upper and lower limit estimates of ICERs, without providing a best point estimate in many cases. In certain indications, the difference between the EGP upper and lower estimates can vary widely, making the comparison between the EGP and manufacturer ICERs less precise. Furthermore, there is no evidence to show that EGP re-analyses are necessarily the gold standard for comparison. However, the consistent finding of higher EGP ICERs, derived by multiple health economists and academic researchers independent of manufacturers and payers across most submissions, suggests that these ICERs were less likely to be subject to perceived or actual influence from any stakeholders. For instance, there is still controversy regarding the appropriateness of adjusting the time horizon in the submitted economic models. Ideally, the time horizon should represent a lifetime horizon, but two main issues emerge: (1) there is no consensus on the correct lifetime horizon for different cancer settings, and (2) most randomized controlled trials have a short-term follow-up, limiting the extent to which clinical benefits can be extrapolated. In revising the economic models, the EGP may reduce the time horizons to remove future projected benefits that are unproven and subject to considerable uncertainty. However, there is no clear consensus on whether this approach is the ideal way to explore uncertainty in the over-extrapolation of potential long-term benefits when only short-term data exist. Finally, economic studies for oncology drugs conducted in the province of Quebec were not included in this analysis, as pCODR does not provide drug funding recommendations to Quebec. Future studies could expand this analysis to include economic reports from Quebec and compare them with our current results. As the number of submissions to pCODR increases and the sample size becomes larger, other areas of future research include evaluating methodological issues in submitted economic models based on specific disease areas or drug types (e.g., evaluating model issues of newer therapeutics such as immunotherapies).

With the increasing cost of oncology drugs, manufacturer-submitted economic models are scrutinized by the EGP before pERC makes its final funding recommendations. When compared with EGP-calculated estimates, manufacturers tend to overestimate the cost-effectiveness of their therapies when submitting economic models to pCODR. Although certain methodological issues are still common in manufacturer-submitted models, revision rates are high for most issues raised by the EGP. Given the ongoing methodological issues identified in this analysis and in previous studies, one natural option to consider is whether payers, such as pCODR, should directly construct economic models instead of modifying manufacturer submissions. However, this would require manufacturers to provide pCODR with access to proprietary data, which is unlikely. Furthermore, pCODR would require greater resources to construct economic models for every drug submission. Because of resource limitations, it is currently more feasible and convenient for pCODR to revise existing models from manufacturers than to build its own. Therefore, manufacturers should continue submitting economic models in accordance with the published guidelines. To improve the quality of these submitted models, and to better align EGP and manufacturer values, pCODR should continue revising its submission guidelines, highlighting and proposing solutions to commonly found methodological issues in submitted economic models. While manufacturers tend to follow these pCODR guidelines, there is still room for interpretation, which can result in the model being revised by the EGP. For instance, the lack of consensus on what is considered a lifetime horizon for specific cancers may currently result in model projections that do not reflect the clinical reality. This extrapolation of the time horizon is a recurring issue identified in this analysis and in other literature. As manufacturers increasingly use surrogate endpoints and shorter follow-up times in clinical trials, potentially to gain early regulatory approval, correcting the time horizon issue remains an ongoing challenge. A potential solution is to implement a process for lifecycle re-evaluation. For instance, when clinical trial data mature, manufacturers should be encouraged to re-evaluate their initial model projections to see whether they fit the current data.