Examining the Suitability of the List of Indicators Describing Age-Related Typical Support Needs

Abstract

1. Introduction

- Can the indicators in the list be considered valid sources for accurately describing age-related typical support needs, for the same activities, domains, and age cohorts as those used in the SIS-C in Spain?

- Is the list of indicators an effective survey for collecting teachers’ subjective impressions of age-related typical support needs in the Spanish context (i.e., can the appropriateness of the indicators be ascertained after analyzing how the information used to determine their content validity has been gathered)?

2. Materials and Methods

2.1. Participants

2.2. Instrument

2.3. Procedure

2.4. Data Analysis

2.4.1. Research Question 1—Content Validity Analysis

2.4.2. Research Question 2—Rating Scale Assessment

3. Results

3.1. Research Question 1—Content Validity Analyses

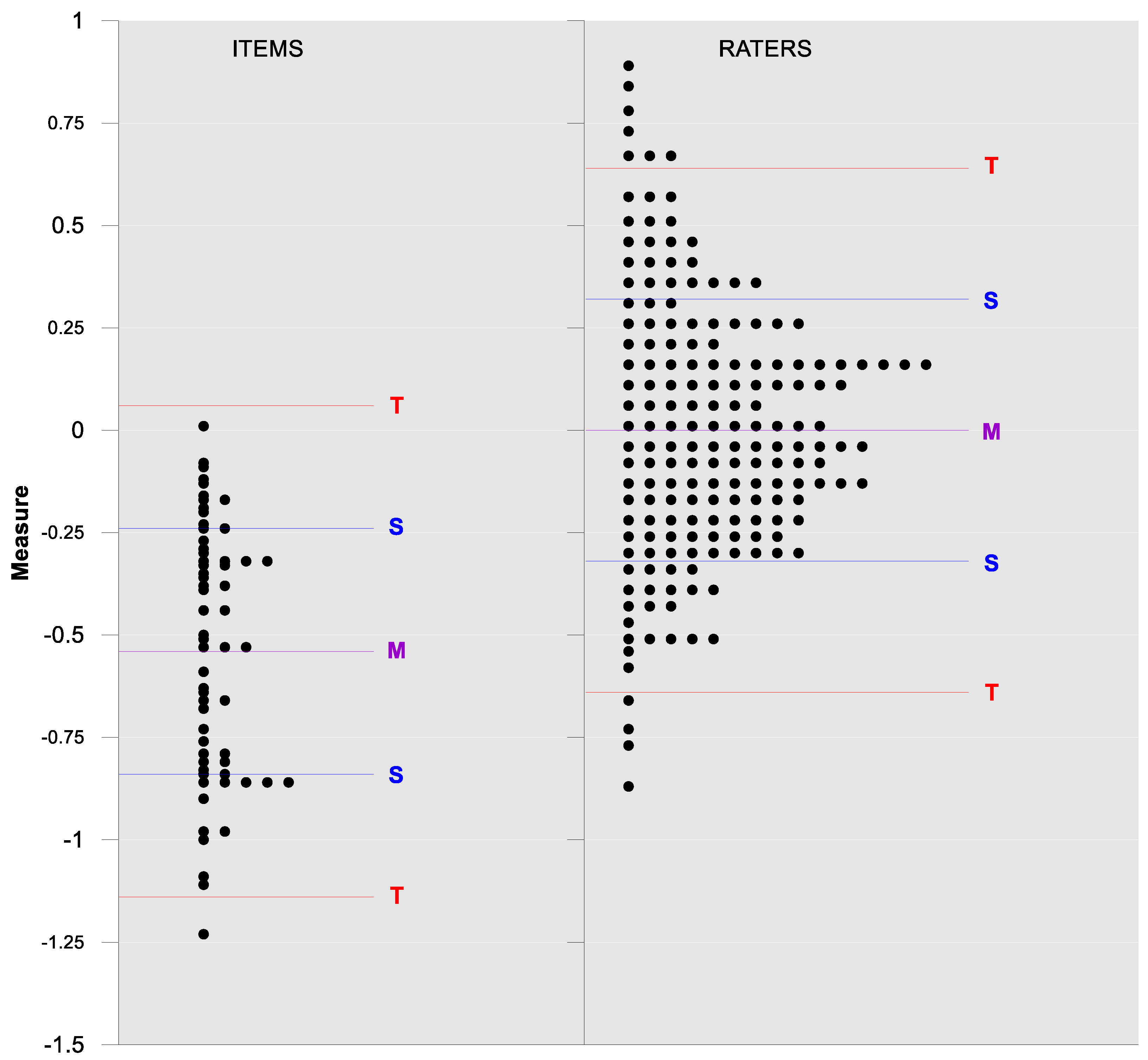

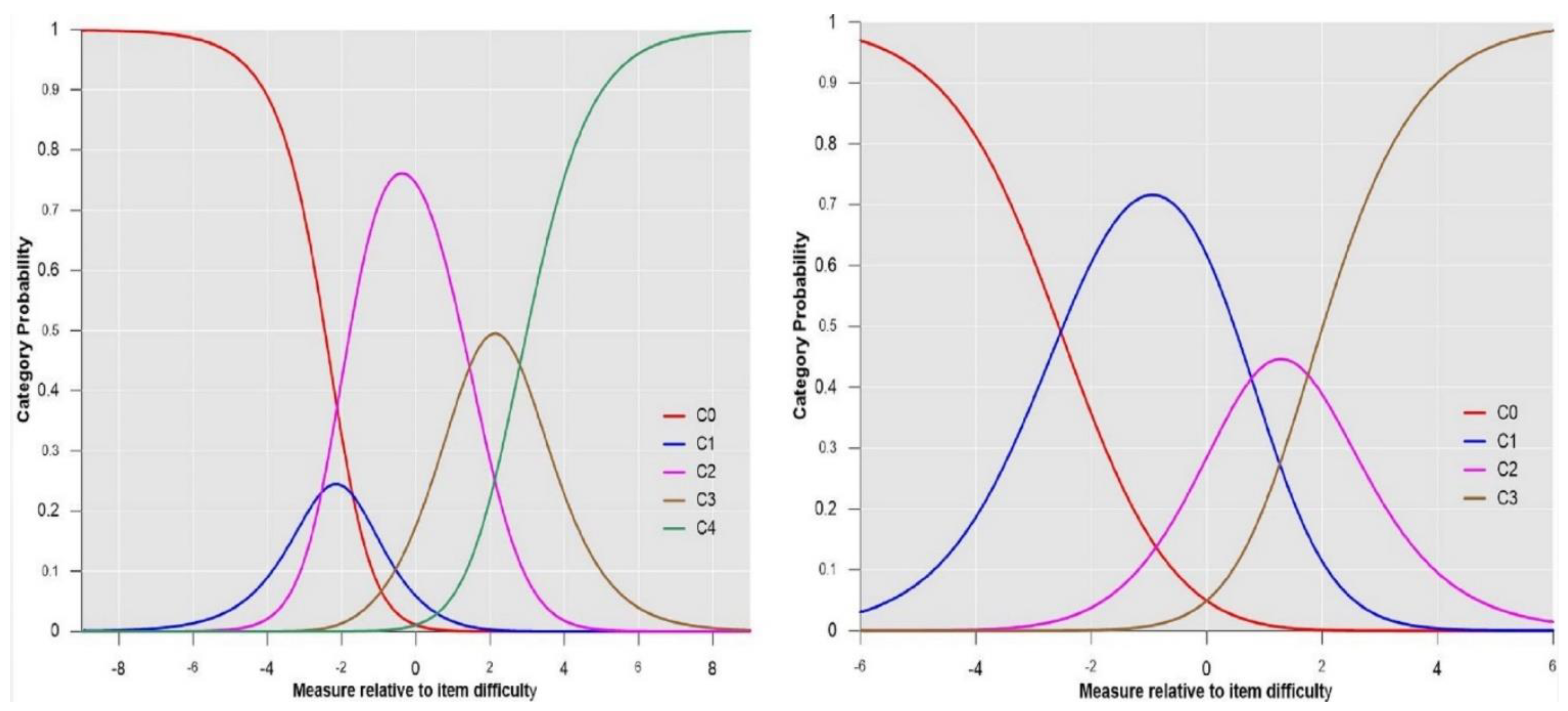

3.2. Research Question 2—Rating Scale Analyses

3.2.1. Iteration 1

3.2.2. Iterations 2 and 3

3.2.3. Iteration 4

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Acknowledgments

Conflicts of Interest


| Variable | n | % | Variable | n | % |
|---|---|---|---|---|---|
| Gender | | | Schooling | | |
| Male | 69 | 31.1 | Private school | 115 | 51.8 |
| Female | 147 | 66.22 | Public school | 107 | 48.2 |
| Missing | 6 | 2.68 | | | |
| Age Cohort | | | Autonomous community | | |
| 5–6 | 37 | 16.67 | Castile and Leon | 88 | 39.64 |
| 7–8 | 35 | 15.76 | Extremadura | 102 | 45.95 |
| 9–10 | 35 | 15.76 | Castile-La Mancha | 3 | 1.35 |
| 11–12 | 37 | 16.67 | Community of Valencia | 28 | 12.61 |
| 13–14 | 43 | 19.38 | Cantabria | 1 | 0.45 |
| 15–16 | 35 | 15.76 | | | |

| School Participation Activities: Item 2 (7–8-Year-Olds Version) | Indicator Describing the Typical Age-Related Support Needs That 7–8-Year-Old Typically Developing Children Might Present |
|---|---|
| Participating in activities in common school areas (e.g., playground, hallways, cafeteria) | Most 7–8-year-old typically developing children need verbal or visual support from one or two educators who provide prompts to groups of children outside the classroom, such as in hallways, playgrounds, and cafeterias. Once they are familiar with procedures and routines, typically developing children only occasionally need personalized instructions and hardly ever need support in social interactions with others. Individual support is minimal: less than 30 min a day. |
| Please indicate your agreement with the indicator’s description by choosing one of the following options: | |
| □ 0 = strongly disagree; students need far less support than described □ 1 = disagree; students need less support than described □ 2 = agree □ 3 = disagree; students need more support than described □ 4 = strongly disagree; students need far more support than described | |

| Age Cohort / BWN Range (% of Indicators) | Poor (0.000–0.200) | Weak (0.201–0.400) | Moderate (0.401–0.600) | Good (0.601–0.800) | Very Good (0.801–1) |
|---|---|---|---|---|---|
| 5–6 | 0 | 0 | 4.92 | 14.75 | 80.33 |
| 7–8 | 0 | 1.64 | 3.28 | 24.59 | 70.49 |
| 9–10 | 0 | 0 | 0 | 44.26 | 55.74 |
| 11–12 | 0 | 0 | 3.27 | 36.07 | 60.66 |
| 13–14 | 0 | 3.28 | 11.47 | 21.31 | 63.94 |
| 15–16 | 0 | 1.64 | 3.28 | 36.07 | 59.01 |
| General | 0 | 1.09 | 4.37 | 29.51 | 65.03 |
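The banding used in the table above can be sketched as a small lookup. This is only an illustration: the band edges and labels are taken from the table header, while the function name `classify_bwn` and the choice to treat each upper edge as inclusive are assumptions made here, not part of the original analysis.

```python
# Upper edges and labels copied from the table header above.
# Treating each upper edge as inclusive is an assumption of this sketch.
BWN_BANDS = [
    (0.200, "Poor"),
    (0.400, "Weak"),
    (0.600, "Moderate"),
    (0.800, "Good"),
    (1.000, "Very Good"),
]


def classify_bwn(bwn: float) -> str:
    """Return the agreement band for a BWN value between 0 and 1."""
    if not 0.0 <= bwn <= 1.0:
        raise ValueError(f"BWN must lie in [0, 1], got {bwn}")
    for upper, label in BWN_BANDS:
        if bwn <= upper:
            return label
    # Unreachable because the last band ends at 1.0.
    raise AssertionError("no band matched")


if __name__ == "__main__":
    for value in (0.22, 0.45, 0.68, 0.87):
        print(value, classify_bwn(value))
```

Counting how many indicators of a cohort fall into each band and dividing by the number of indicators would give percentages of the kind reported in the table.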

| Domain (BWN by Age Cohort) | 5–6 Min | 5–6 Max | 5–6 Mean | 7–8 Min | 7–8 Max | 7–8 Mean | 9–10 Min | 9–10 Max | 9–10 Mean | 11–12 Min | 11–12 Max | 11–12 Mean | 13–14 Min | 13–14 Max | 13–14 Mean | 15–16 Min | 15–16 Max | 15–16 Mean |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| HLA | 0.75 | 0.92 | 0.86 | 0.49 | 0.89 | 0.81 | 0.64 | 1 | 0.77 | 0.65 | 0.89 | 0.78 | 0.44 | 0.96 | 0.82 | 0.54 | 0.95 | 0.84 |
| CNA | 0.84 | 0.92 | 0.88 | 0.65 | 0.87 | 0.81 | 0.64 | 0.83 | 0.77 | 0.58 | 0.85 | 0.76 | 0.71 | 0.95 | 0.87 | 0.76 | 0.87 | 0.79 |
| SPA | 0.72 | 0.95 | 0.83 | 0.79 | 0.88 | 0.84 | 0.73 | 0.91 | 0.81 | 0.76 | 0.94 | 0.84 | 0.73 | 0.92 | 0.77 | 0.57 | 0.92 | 0.84 |
| SLA | 0.82 | 0.92 | 0.87 | 0.39 | 0.92 | 0.77 | 0.74 | 0.89 | 0.83 | 0.76 | 0.91 | 0.82 | 0.31 | 0.93 | 0.80 | 0.70 | 1 | 0.84 |
| HSA | 0.45 | 0.97 | 0.71 | 0.50 | 0.97 | 0.83 | 0.65 | 0.91 | 0.84 | 0.49 | 0.90 | 0.73 | 0.31 | 0.88 | 0.67 | 0.22 | 0.89 | 0.71 |
| SA | 0.72 | 0.94 | 0.87 | 0.62 | 0.95 | 0.84 | 0.70 | 0.95 | 0.85 | 0.82 | 0.87 | 0.85 | 0.52 | 0.89 | 0.75 | 0.71 | 0.86 | 0.79 |
| AA | 0.45 | 0.96 | 0.80 | 0.62 | 0.96 | 0.85 | 0.76 | 0.89 | 0.81 | 0.76 | 0.92 | 0.85 | 0.57 | 0.91 | 0.81 | 0.66 | 0.87 | 0.79 |

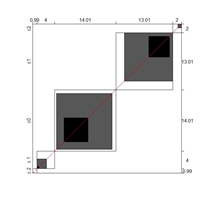

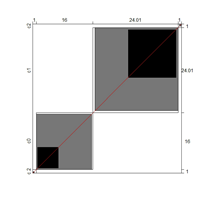

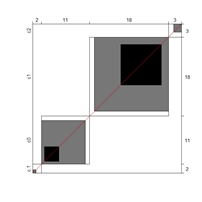

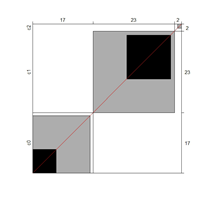

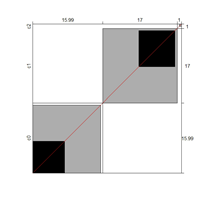

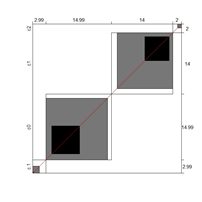

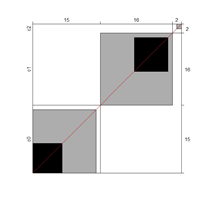

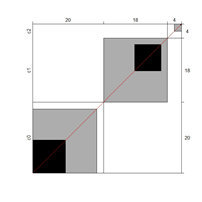

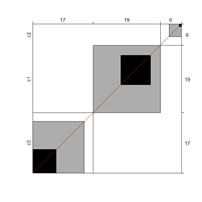

| Domain/Indicator (Age Cohort) | BWN | Chart | Domain/Indicator (Age Cohort) | BWN | Chart |
|---|---|---|---|---|---|
| SLA/04 (7–8) | 0.72 | | HSA/07 (13–14) | 0.68 | |
| CNA/07 (9–10) | 0.71 | | HSA/08 (13–14) | 0.87 | |
| SLA/03 (9–10) | 0.78 | | AA/03 (13–14) | 0.78 | |
| AA/02 (9–10) | 0.78 | | HSA/08 (15–16) | 0.22 | |
| AA/09 (9–10) | 0.76 | | SA/02 (15–16) | 0.75 | |
| CNA/05 (13–14) | 0.71 | | AA/04 (15–16) | 0.85 | |
| HSA/06 (13–14) | 0.68 | | | | |

| Judge | Logit (SE) Severity/Leniency | Outfit (ZStd) | Judge | Logit (SE) Severity/Leniency | Outfit (ZStd) |
|---|---|---|---|---|---|
| 221 | −1.21 (0.20) | 5.7 | 032 | 0.29 (0.22) | −5.5 |
| 008 | −1.17 (0.20) | 3.5 | 052 | 0.29 (0.22) | −4.8 |
| 022 | −1.13 (0.20) | 3.2 | 157 | 0.29 (0.22) | −4.3 |
| 140 | −1.09 (0.20) | 3.3 | 018 | 0.34 (0.22) | −6.6 |
| 219 | −1.05 (0.20) | 4.2 | 016 | 0.38 (0.21) | −6.0 |
| 056 | −1.01 (0.20) | 5.8 | 019 | 0.43 (0.21) | −5.6 |
| 220 | −1.01 (0.20) | 3.9 | 020 | 0.43 (0.21) | −4.4 |
| 207 | −0.89 (0.20) | 4.2 | 217 | 0.43 (0.21) | −4.1 |
| 097 | −0.72 (0.21) | 3.4 | 033 | 0.47 (0.21) | −4.9 |
| 096 | −0.67 (0.21) | 3.1 | 036 | 0.47 (0.21) | −2.5 |
| 208 | −0.67 (0.21) | 3.7 | 072 | 0.47 (0.21) | −3.2 |
| 201 | −0.39 (0.22) | 3.3 | 069 | 0.52 (0.21) | −4.9 |
| 172 | −0.25 (0.22) | 3.1 | 035 | 0.56 (0.21) | −4.6 |
| 118 | 0.00 (0.22) | 3.1 | 062 | 1.48 (0.16) | 4.00 |
| 039 | 0.05 (0.22) | 6.9 | 004 | 1.73 (0.15) | 3.7 |
| 030 | 0.10 (0.22) | −3.4 | 109 | 1.78 (0.15) | 3.2 |
| 013 | 0.20 (0.22) | −3.9 | 206 | 2.71 (0.17) | 6.2 |
| 049 | 0.20 (0.22) | −3.5 | 210 | 2.83 (0.17) | 7.1 |
| 121 | 0.20 (0.22) | −3.7 | | | |

| | Items: SD | Items: Sep. | Items: Strat. | Items: Rel. | Judges: SD | Judges: Sep. | Judges: Strat. | Judges: Rel. | Maladjusted Judge | Logit (SE) Severity/Leniency | Outfit (ZStd) | Rating Scale’s Categories: Avg. Meas. | Rating Scale’s Categories: τ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Iteration 1 | 0.44 | 3.54 | 5.05 | 0.93 | 0.59 | 2.70 | 3.94 | 0.88 | 174 | −0.44 (0.22) | 3.3 | 0 = −0.97; 1 = −0.58; 2 = −0.02; 3 = 0.50; 4 = 0.85 | τ1 = −2.02; τ2 = −2.49; τ3 = 1.46; τ4 = 3.04 |
| | | | | | | | | | 095 | −0.34 (0.23) | 3.2 | | |
| | | | | | | | | | 101 | −0.23 (0.23) | 3.3 | | |
| | | | | | | | | | 139 | 1.08 (0.19) | 3.1 | | |
| Iteration 3 | 0.44 | 3.47 | 4.96 | 0.92 | 0.61 | 2.73 | 3.98 | 0.88 | None | | | 0 = −1.00; 1 = −0.60; 2 = −0.01; 3 = 0.52; 4 = 0.87 | τ1 = −2.07; τ2 = −2.50; τ3 = 1.49; τ4 = 3.08 |

| | Items: SD | Items: Separation | Items: Strata | Items: Reliability | Judges: SD | Judges: Separation | Judges: Strata | Judges: Reliability | Rating Scale’s Categories: Average Measure | Rating Scale’s Categories: τ |
|---|---|---|---|---|---|---|---|---|---|---|
| Without extreme judges | 0.28 | 2.22 | 3.29 | 0.83 | 0.23 | 1.08 | 1.77 | 0.54 | 0 = −0.80; 1 = −0.55; 2 = −0.40; 3 = −0.28 | τ1 = −2.53; τ2 = 0.77; τ3 = 1.75 |
| All judges | 0.30 | 2.60 | 3.80 | 0.87 | 0.22 | 1.02 | 1.70 | 0.51 | 0 = −0.84; 1 = −0.57; 2 = −0.42; 3 = −0.31 | τ1 = −2.55; τ2 = 0.75; τ3 = 1.80 |
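The separation, strata, and reliability columns in the tables above are related. A minimal sketch, assuming the conventional Rasch relations strata = (4 × separation + 1) / 3 and reliability = separation² / (1 + separation²), reproduces the reported values to within rounding. The source does not state how these columns were computed, so the formulas and the function names `strata` and `reliability` are assumptions of this illustration.

```python
def strata(separation: float) -> float:
    """Number of statistically distinct levels implied by a separation index."""
    return (4.0 * separation + 1.0) / 3.0


def reliability(separation: float) -> float:
    """Separation reliability implied by a separation index."""
    return separation ** 2 / (1.0 + separation ** 2)


if __name__ == "__main__":
    # Separation values copied from the tables above (items and judges).
    for sep in (3.54, 2.70, 2.22, 1.08):
        print(f"sep={sep:.2f} strata={strata(sep):.2f} rel={reliability(sep):.2f}")
```

Read this way, the drop in judge reliability from 0.88 to about 0.5 between the earlier iterations and the final one follows directly from the drop in judge separation from roughly 2.7 to roughly 1.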

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Amor, A.M.; Verdugo, M.A.; Arias, B.; Fernández, M.; Aza, A. Examining the Suitability of the List of Indicators Describing Age-Related Typical Support Needs. Int. J. Environ. Res. Public Health 2021, 18, 764. https://doi.org/10.3390/ijerph18020764
