Exploring the Impact of Linguistic Signals Transmission on Patients’ Health Consultation Choice: Web Mining of Online Reviews

Abstract

1. Introduction

2. Theoretical Background and Research Hypothesis

2.1. Linguistic Patterns

2.2. Signaling Theory

2.3. Hypotheses Development

2.3.1. Affective Signals and Patients’ Treatment Choice

2.3.2. Informative Signals and Patients’ Treatment Choice

2.3.3. Affective Signals and Information Helpfulness

2.3.4. Informative Signals and Information Helpfulness

3. Methods

3.1. Sample and Data Collection

3.2. Measurements

3.2.1. Dependent Variable

3.2.2. Independent and Mediating Variables

3.2.3. Control Variables

3.3. Machine Learning Sentiment Analysis

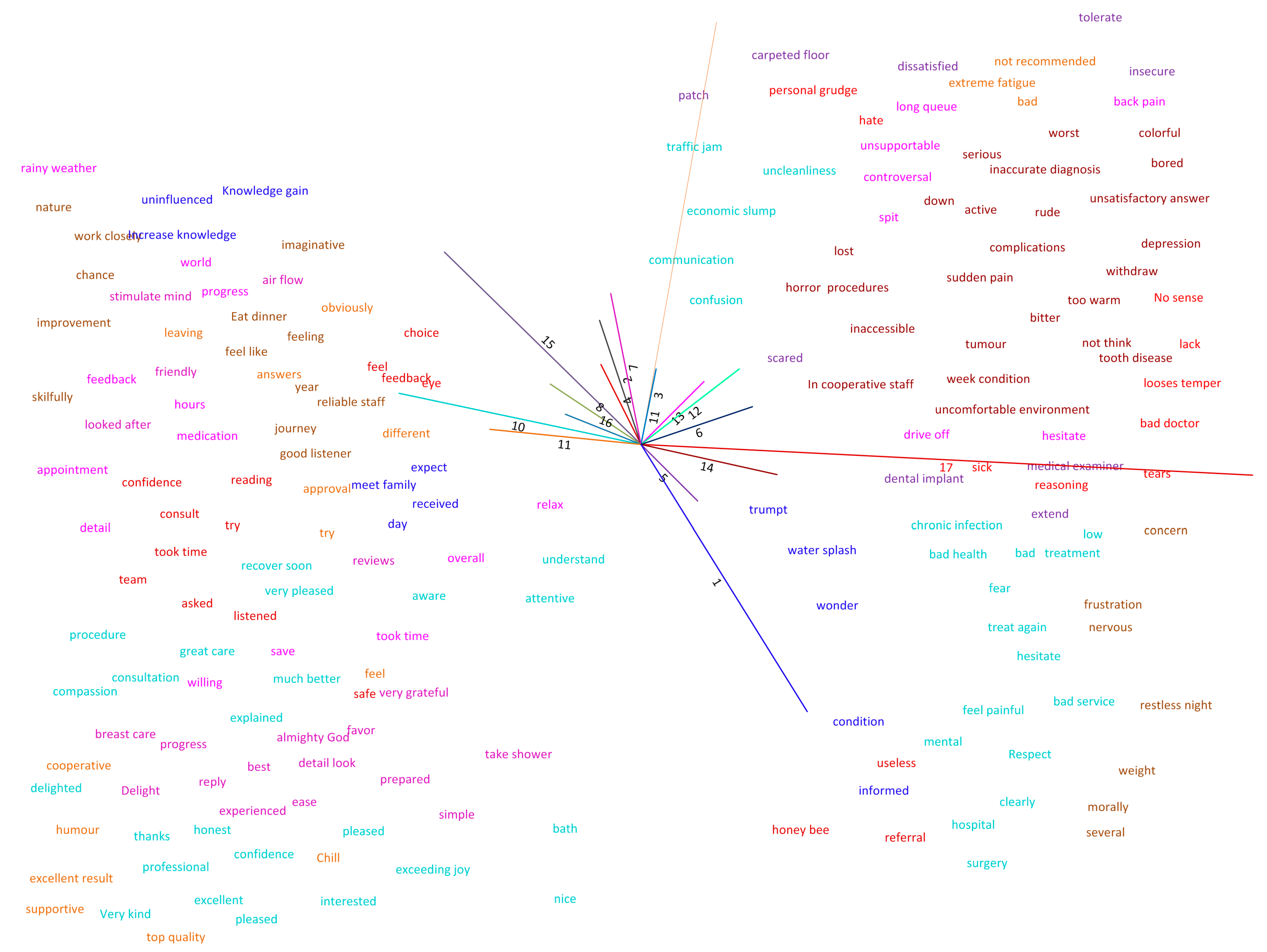

3.4. Pre-Processing of Online Reviews and Concept Mining

3.5. Empirical Model

4. Results

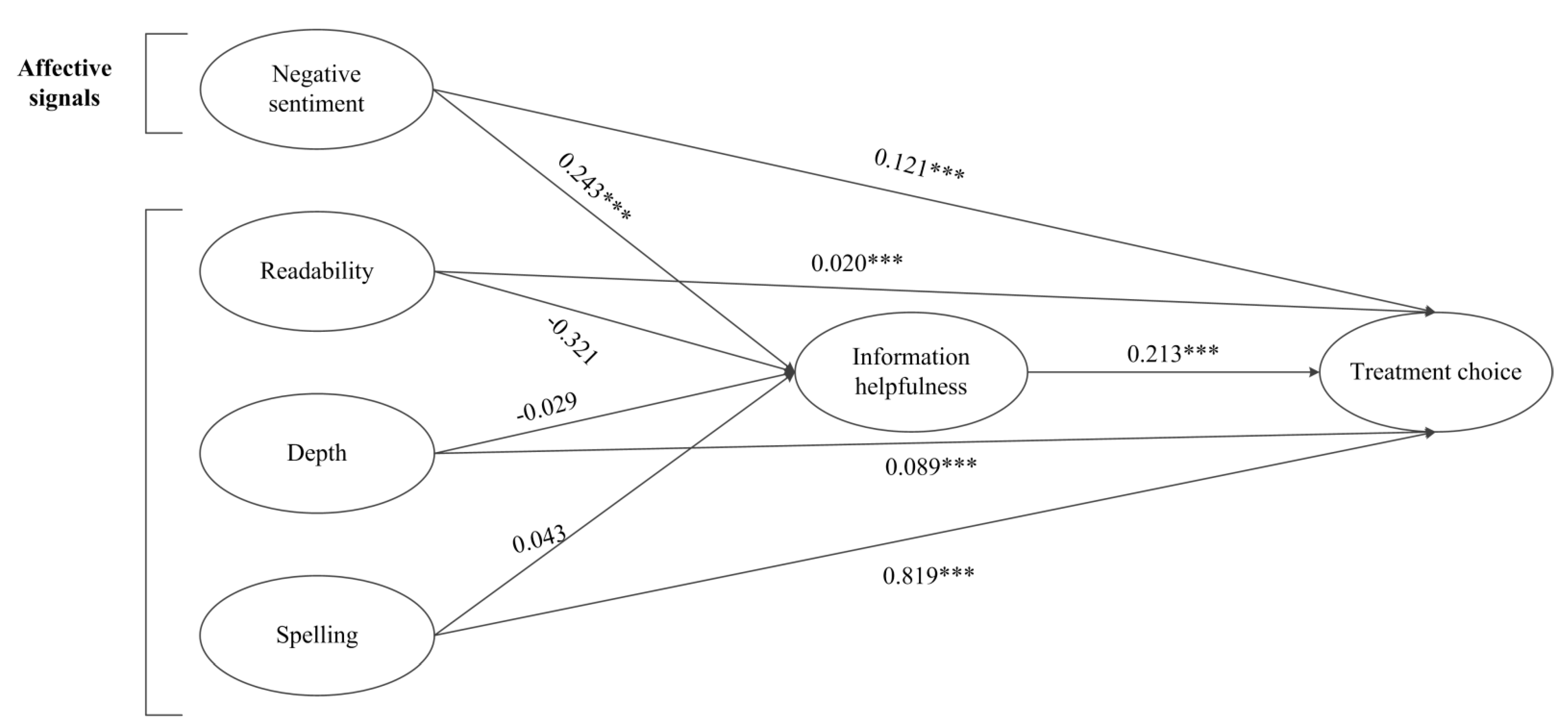

4.1. Analysis Results for Direct Effects

4.2. Results for Mediation Analysis

5. Discussion

5.1. Discussion of the Results

5.2. Theoretical Contributions

5.3. Practical Implications

5.4. Limitations and Future Research

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest


| Variables | Definition | Analytical Method | Mean | Std. | Min. | Max. |
|---|---|---|---|---|---|---|
| Dependent variable | | | | | | |
| Treatment choice | Rating: Physician quality ratings (Negative = 1–2, Neutral = 3, Positive = 4–5) | | 4.23 | 0.55 | 1 | 5 |
| | Blogs: The number of blogs initiated by a physician (logarithmic value) | | 3.35 | 20.4 | 0 | 25 |
| | Articles: The number of articles published by a physician (logarithmic value) | | 0.14 | 10.05 | 0 | 45 |
| | Replies: The number of replies to patients by a physician | | 5.3 | 112.6 | 0 | 48 |
| Independent variables | | | | | | |
| Negative sentiment | Score: Sentiment score of a review (in the range [−1, +1], where −1 is the strongest negative opinion) | Sentiment analysis | −0.34 | 1.22 | −1 | +1 |
| Review readability | Readability: The ease-of-reading score of a review | FKRE | 0.84 | 0.22 | – | – |
| Review depth | Depth: The number of words in the review | LIWC | 67.13 | 156.13 | – | – |
| Review spelling | Spelling: The level of spelling of the review (posted version vs. corrected version) | Spell checker software | 98.15 | 112.12 | – | – |
| Mediating variable | | | | | | |
| Information helpfulness | IH: Ratio of helpful/useful votes to the total votes | | 0.92 | 0.07 | 0 | 1 |
| Control variables | | | | | | |
| Physician title | Title: Physician title in offline hospital, “1” if medical doctor, “0” otherwise | | 0.91 | 0.51 | 0 | 1 |
| Practical experience | Experience: How long a physician has provided professional service, coded “0” for 0–10 years of experience, “1” for 11–20 years, and “2” for more than 20 years | | 1.34 | 0.43 | 0 | 2 |
| Physician gender | Gender: Coded “0” for male and “1” for female | | 0.89 | 0.49 | 0 | 1 |
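
The variable definitions in the table above can be illustrated with a short sketch. This is a hedged example, not the authors' pipeline: the `Review` record and its field names are hypothetical, and review depth is approximated by a plain whitespace word count, whereas the table lists LIWC as the tool actually used.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Review:
    """Hypothetical review record; field names are illustrative assumptions."""
    text: str
    rating: int          # star rating on a 1-5 scale
    helpful_votes: int   # votes marking the review as helpful/useful
    total_votes: int     # all votes cast on the review


def review_depth(text: str) -> int:
    """Depth: number of words in the review (whitespace split, an assumption)."""
    return len(text.split())


def information_helpfulness(helpful_votes: int, total_votes: int) -> Optional[float]:
    """IH: ratio of helpful votes to total votes; None when no votes exist."""
    if total_votes == 0:
        return None
    return helpful_votes / total_votes


def rating_category(rating: int) -> str:
    """Map a 1-5 rating to Negative (1-2), Neutral (3), or Positive (4-5)."""
    if rating not in (1, 2, 3, 4, 5):
        raise ValueError("rating must be an integer from 1 to 5")
    if rating <= 2:
        return "Negative"
    if rating == 3:
        return "Neutral"
    return "Positive"


review = Review("The doctor explained everything clearly", 5, 9, 10)
print(review_depth(review.text))
print(information_helpfulness(review.helpful_votes, review.total_votes))
print(rating_category(review.rating))
```

Returning `None` for reviews without votes is a design choice made here so that unvoted reviews are not silently treated as unhelpful; how the study handled that case is not stated in the table.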

| Variables | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| 1. Treatment choice | 1.00 | | | | | | | | | |
| 2. Score | 0.25 | 1.00 | | | | | | | | |
| 3. Readability | 0.01 | 0.02 | 1.00 | | | | | | | |
| 4. Depth | 0.12 | 0.15 | 0.21 | 1.00 | | | | | | |
| 5. Spelling | −0.03 | −0.02 | −0.04 | −0.05 | 1.00 | | | | | |
| 6. IH | 0.32 | 0.41 | 0.25 | 0.21 | 0.23 | 1.00 | | | | |
| 7. Title | 0.19 | 0.24 | 0.19 | 0.08 | 0.12 | 0.18 | 1.00 | | | |
| 8. Experience | 0.32 | 0.28 | 0.32 | 0.02 | 0.12 | 0.13 | 0.10 | 1.00 | | |
| 9. Gender | 0.12 | 0.14 | 0.21 | 0.23 | 0.20 | 0.29 | 0.32 | 0.28 | 1.00 | |
| 10. Title | 0.13 | 0.151 | 0.114 | 0.21 | 0.19 | 0.22 | 0.24 | 0.25 | 0.23 | 1.00 |

| Variables | Model 1 | Model 2 |
|---|---|---|
| Constant | 0.121 (0.011) | 0.111 (0.015) |
| Title | 0.146 *** (0.004) | 0.041 *** (0.003) |
| Experience | 0.241 ** (0.028) | 0.261 ** (0.048) |
| Gender | 0.010 (0.015) | 0.016 (0.027) |
| Score | 0.121 *** (0.004) | |
| Readability | 0.020 *** (0.001) | |
| Depth | 0.089 *** (0.007) | |
| Spelling | 0.819 *** (0.145) | |
| Log (IH) | 0.213 *** (0.012) | |
| Adjusted R² | 0.208 | 0.217 |
| Log-likelihood ratio | 429.631 | 419.765 |
| F | 76.683 *** | 7.174 *** |
| n | 52,340 | 52,340 |

| Goodness-of-fit index | Value | Hypothesis | Relationship | β | t | Result |
|---|---|---|---|---|---|---|
| χ²/df | 2.441 | H5 | Sentiment → IH | 0.243 *** | 4.021 | Supported |
| NFI | 0.916 | H6 | Readability → IH | −0.312 | −1.432 | Not supported |
| TLI | 0.925 | H7 | Depth → IH | −0.029 | −0.543 | Not supported |
| CFI | 0.939 | H8 | Spelling → IH | 0.043 | 1.243 | Not supported |
| RMSEA | 0.051 | | | | | |

| Hypothesis | Direct Effect without Mediator | Direct Effect with Mediator | Indirect Effect 95% CI, Upper Bound | Indirect Effect 95% CI, Lower Bound | Mediation Category |
|---|---|---|---|---|---|
| Score → IH → treatment choice | −0.013 | −0.034 | 0.492 | 0.251 | Indirect mediation |
| Readability → IH → treatment choice | −0.040 | −0.034 | 0.035 | −0.642 | Insignificant |
| Depth → IH → treatment choice | 0.197 * | 0.156 * | 0.211 | 0.069 | Partial mediation |
| Spelling → IH → treatment choice | 0.265 * | 0.203 * | 0.089 | −0.007 | Insignificant |
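
The mediation categories in the table above are consistent with a common bootstrap convention: an indirect effect is treated as significant when its 95% confidence interval excludes zero, and the mediation is called partial when the direct effect with the mediator is also significant. The sketch below applies that rule to the tabulated bounds. It assumes this is the rule the authors followed, which the table itself does not state; the function name and the significance flags (read from the `*` markers) are illustrative.

```python
def classify_mediation(ci_lower: float, ci_upper: float,
                       direct_significant: bool) -> str:
    """Classify mediation from a bootstrap CI of the indirect effect.

    Assumed rule: the indirect effect is significant when the interval
    [ci_lower, ci_upper] does not contain zero.
    """
    indirect_significant = ci_lower > 0 or ci_upper < 0
    if not indirect_significant:
        return "Insignificant"
    # Significant indirect effect: the type depends on the direct effect.
    return "Partial mediation" if direct_significant else "Indirect mediation"


# (lower bound, upper bound, direct effect with mediator significant?)
# Values are copied from the table; the flag reflects the '*' marker.
paths = {
    "Score": (0.251, 0.492, False),
    "Readability": (-0.642, 0.035, False),
    "Depth": (0.069, 0.211, True),
    "Spelling": (-0.007, 0.089, True),
}

results = {name: classify_mediation(*row) for name, row in paths.items()}
for name, category in results.items():
    print(f"{name} -> IH -> treatment choice: {category}")
```

Under this rule the Spelling path is classified as insignificant even though its direct effect is significant, because its interval spans zero.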

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Shah, A.M.; Ali, M.; Qayyum, A.; Begum, A.; Han, H.; Ariza-Montes, A.; Araya-Castillo, L. Exploring the Impact of Linguistic Signals Transmission on Patients’ Health Consultation Choice: Web Mining of Online Reviews. Int. J. Environ. Res. Public Health 2021, 18, 9969. https://doi.org/10.3390/ijerph18199969
