Telemonitoring in Chronic Pain Management Using Smartphone Apps: A Randomized Controlled Trial Comparing Usual Assessment against App-Based Monitoring with and without Clinical Alarms

Abstract

1. Introduction

Chronic Pain: A Major Public Health Challenge

2. Materials and Methods

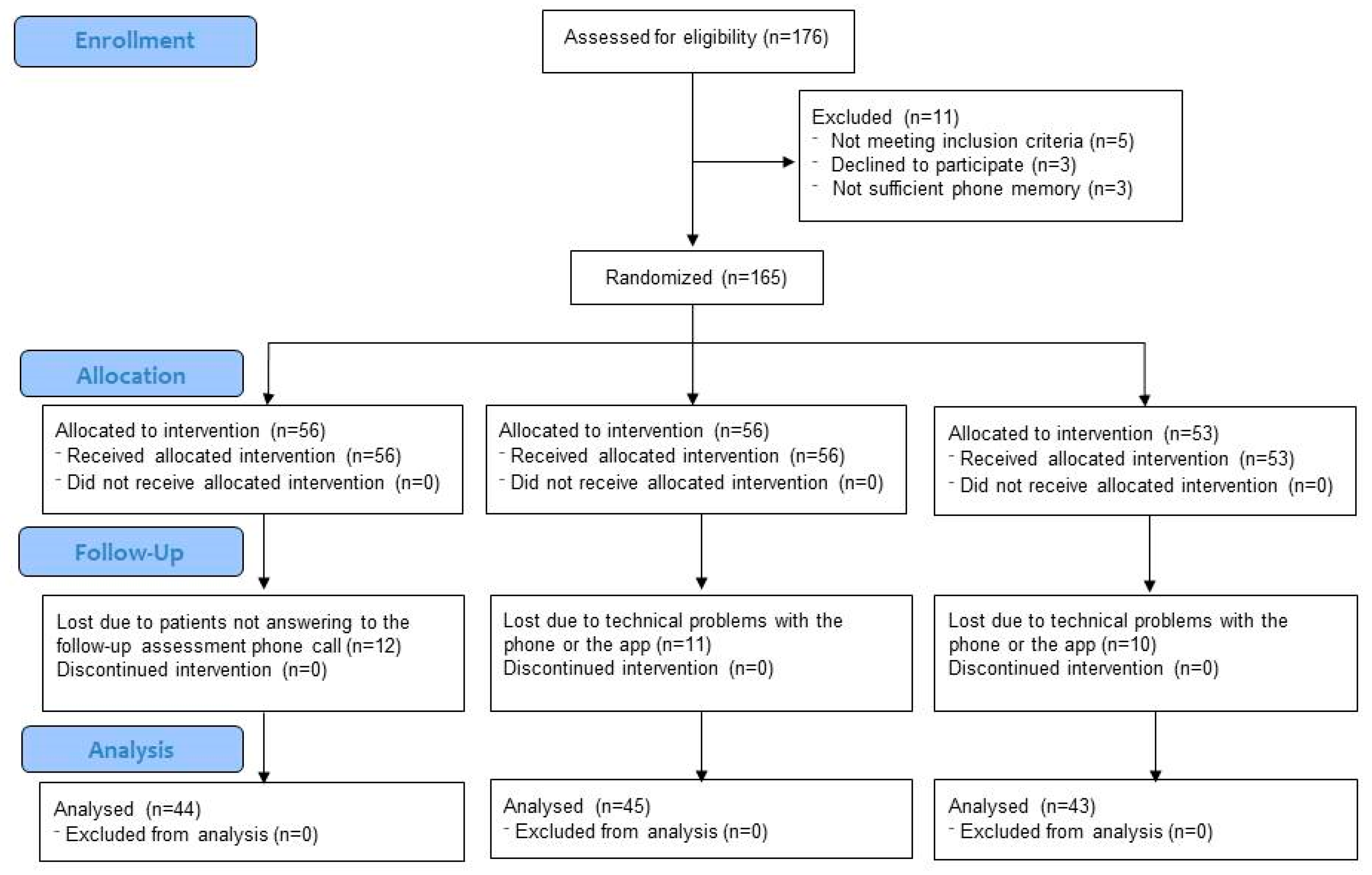

2.1. Design

2.2. Participants

2.3. Interventions

2.4. Pain Monitor App

2.5. Data Analysis

2.6. Trial Registration and Ethics

3. Results

3.1. Sample Characteristics

3.2. Baseline Differences in Study Variables

3.3. Changes in Pain Severity, Pain Interference, Fatigue and Mood across Treatment Conditions

3.4. Differences in Frequency of Experienced Physical Symptoms

3.5. Professionals’ Experience with the App

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

Appendix A

- Morning pain > 7 for 5 consecutive days

- Evening pain > 7 for 5 consecutive days

- Morning sadness > 7 for 5 consecutive days

- Evening sadness > 7 for 5 consecutive days

- Morning anxiety > 7 for 5 consecutive days

- Evening anxiety > 7 for 5 consecutive days

- Vomiting for 2 consecutive days

- Tachycardia for 2 consecutive days

- Blurred vision for 2 consecutive days

- Headache for 2 consecutive days

- Dry mouth for 2 consecutive days

- Constipation for 5 consecutive days

- Drowsiness for 5 consecutive days

- Nausea for 3 consecutive days

- Itching for 3 consecutive days

- Diarrhea for 2 consecutive days

- Fever for 2 consecutive days

- Facial redness for 2 consecutive days

- Urine retention for 2 consecutive days

- Unsteady walking for 3 consecutive days

- Excessive sweating for 7 consecutive days

- Dizziness for 3 consecutive days

- Medication not taken and no intention to take it

- Number of rescue medication intakes > 3

- Sleep interference > 7

- Missing data for 2 consecutive days
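The conditions listed in Appendix A share one shape: an item stays above a cut-off, or a symptom stays present, for a fixed number of consecutive days. The following is a minimal sketch of how such rules could be expressed and checked. It is not the Pain Monitor implementation. The class name, the item keys, and the convention that a missing day is stored as `None` are all assumptions made for illustration. The single-day conditions, for which Appendix A states no duration, are left out.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Union

Value = Union[bool, float, None]  # None marks a day without a response (assumed convention)


@dataclass(frozen=True)
class ConsecutiveRule:
    """Hypothetical alarm rule: condition must hold on `days` consecutive days."""
    item: str                          # illustrative item key, e.g. "morning_pain"
    days: int                          # required run length in days
    threshold: Optional[float] = None  # None means a yes/no symptom item


# A few Appendix A conditions transcribed as data (item keys are made up).
RULES = [
    ConsecutiveRule("morning_pain", days=5, threshold=7),
    ConsecutiveRule("vomiting", days=2),
    ConsecutiveRule("nausea", days=3),
    ConsecutiveRule("excessive_sweating", days=7),
]


def is_triggered(rule: ConsecutiveRule, history: Sequence[Value]) -> bool:
    """Return True if the last `rule.days` entries all satisfy the rule.

    `history` holds one entry per day, ordered oldest to newest.
    A missing day (None) never counts towards a run.
    """
    if len(history) < rule.days:
        return False
    recent = history[-rule.days:]
    if rule.threshold is None:
        # Symptom items: every recent day must report the symptom.
        return all(v is True for v in recent)
    # Rated items: every recent day must be strictly above the cut-off.
    return all(v is not None and v > rule.threshold for v in recent)


def missing_data_alarm(history: Sequence[Value], days: int = 2) -> bool:
    """Return True if the last `days` entries are all missing."""
    if len(history) < days:
        return False
    return all(v is None for v in history[-days:])
```

Note that the comparison is strict, following the "> 7" wording: a rating of exactly 7 breaks the run.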

Appendix B

Appendix B.1

- Please indicate your date of birth (DD/MM/YYYY)

- What type of user are you?
  - I am a person with chronic pain
  - I do not have chronic pain, but I want to see the app

- Please indicate your gender:
  - Male
  - Female

- Please indicate your type of pain. You may select more than one option:
  - Fibromyalgia
  - Low back pain
  - Cervical pain
  - Rheumatoid arthritis
  - Osteoarthritis
  - Headache
  - Neuropathic pain
  - Cancer pain
  - None of the above

- If you selected “None of the above”, please indicate your type of pain. Otherwise, leave this question blank. Press OK to continue.

- Please indicate the location where your pain is most intense:
  - Head
  - Shoulder
  - Neck
  - Upper back
  - Lower back
  - Arm
  - Elbow
  - Wrist
  - Hand
  - Abdomen
  - Chest
  - Buttock
  - Hip
  - Leg
  - Knee
  - Foot
  - Whole body
  - Somewhere not listed

- Who is currently treating your pain? You may select more than one option:
  - General practitioner
  - Rheumatologist
  - Orthopedic specialist
  - Rehabilitation physician
  - Psychiatrist
  - Pain Unit
  - Neurosurgeon
  - Neurologist
  - Oncologist
  - Another professional

- When did your current pain start?
  - Less than one year ago
  - Between 1 and 5 years ago
  - Between 5 and 10 years ago
  - More than 10 years ago

- What is your current treatment for pain? You may select more than one option:
  - Physiotherapy
  - Pharmacotherapy
  - Infiltrations
  - Psychological treatment
  - Natural / alternative treatments
  - My pain is not being treated

- Did you start a new treatment for pain in the last month?
  - Yes
  - No

- Please select the treatment/s you started in the last month. You may select more than one option:
  - Physiotherapy
  - Pharmacotherapy
  - Infiltrations
  - Psychological treatment
  - Natural / alternative treatments
  - I have not started a new treatment

- What is your marital status?
  - Single
  - Married
  - In a relationship
  - Divorced
  - Separated
  - Widowed

- What is your job status?
  - Active worker
  - Sick leave
  - Permanent disability
  - Unemployed
  - Homemaker
  - Retired
  - Student

- What is the highest level of education you have completed?
  - No studies
  - Less than high school
  - High school graduate
  - Technical training
  - University degree

- Do you currently have a diagnosis of depression by a physician or a psychologist?
  - Yes
  - No

- Do you currently have a diagnosis of anxiety by a physician or a psychologist?
  - Yes
  - No

Appendix B.2

- Please indicate the intensity of your CURRENT PAIN: 0 (No pain) to 10 (Extreme pain)

- Please indicate the intensity of your CURRENT FATIGUE: 0 (No fatigue) to 10 (Extreme fatigue)

- Please indicate the intensity of your CURRENT SADNESS: 0 (No sadness) to 10 (Extremely sad)

- Please indicate the intensity of your CURRENT ANXIETY: 0 (No anxiety) to 10 (Extremely anxious)

- Please indicate the intensity of your CURRENT ANGER: 0 (No anger) to 10 (Extremely angry)

Appendix B.3

- Did your PAIN interfere with the quality of your SLEEP LAST NIGHT? 0 (No interference) to 10 (Maximum interference)

- Did your PAIN interfere with your SOCIAL INTERACTIONS TODAY? 0 (No interference) to 10 (Maximum interference)

- Did your PAIN interfere with the quality of your LEISURE ACTIVITIES TODAY? 0 (No interference) to 10 (Maximum interference)

- Did your PAIN interfere with your USUAL WORK or HOUSEWORK TODAY? 0 (No interference) to 10 (Maximum interference)

- Did you experience any of these symptoms TODAY? You may select more than one option. Only select symptoms that were not present before pain treatment onset:
  - Nausea
  - Vomiting
  - Tachycardia
  - Constipation
  - Drowsiness / sedation
  - Blurred vision
  - Dry mouth
  - Headache
  - None of the above

- Did you experience any of these symptoms TODAY? You may select more than one option. Only select symptoms that were not present before pain treatment onset:
  - Dizziness
  - Itching
  - Diarrhea
  - Gait instability
  - Excessive sweating
  - Fever
  - Urine retention
  - Facial redness
  - A different symptom
  - None of the above

- Did you take your prescribed medication TODAY?
  - Yes
  - No, but I will do it later
  - No and I do not plan to take it
  - I have not been prescribed a pain medication

- How many times did you take a rescue medication TODAY?
  - 0
  - 1
  - 2
  - 3
  - 4
  - 5
  - 6
  - 7
  - 8
  - 9
  - 10
  - More than 10


| Outcomes | TAU: Baseline Mean (SD) | TAU: Follow-Up Mean (SD) | TAU: Friedman Z | TAU + App: Baseline Mean (SD) | TAU + App: Follow-Up Mean (SD) | TAU + App: Friedman Z | TAU + App + Alarm: Baseline Mean (SD) | TAU + App + Alarm: Follow-Up Mean (SD) | TAU + App + Alarm: Friedman Z | Kruskal–Wallis Test: Chi-Squared |
|---|---|---|---|---|---|---|---|---|---|---|
| Pain severity | 6.28 (0.34) | 5.90 (0.37) | −1.25 | 5.56 (0.33) | 5.50 (0.36) | −0.22 | 5.58 (0.34) | 5.14 (0.37) | −1.22 | 1.60 |
| Pain interference | 5.81 (0.37) | 5.42 (0.38) | −0.83 | 4.94 (0.37) | 4.75 (0.38) | −0.30 | 5.13 (0.37) | 4.28 (0.38) | −3.32 ** | 4.61 |
| Fatigue | 6.41 (0.37) | 5.85 (0.39) | −1.94 | 4.94 (0.36) | 4.65 (0.37) | −1.07 | 5.44 (0.37) | 5.14 (0.39) | −2.05 | 1.19 |
| Sadness | 4.26 (0.43) | 4.13 (0.42) | −0.25 | 3.94 (0.41) | 3.69 (0.40) | −0.78 | 4.11 (0.43) | 3.47 (0.42) | −1.76 | 1.79 |
| Anxiety | 3.89 (0.48) | 5.03 (0.45) | −2.07 | 3.20 (0.45) | 3.50 (0.42) | −0.63 | 3.70 (0.47) | 3.35 (0.44) | −0.75 | 6.55 |
| Anger | 2.73 (0.43) | 3.60 (0.46) | −1.29 | 2.57 (0.42) | 3.29 (0.45) | −1.65 | 2.51 (0.43) | 2.60 (0.46) | −0.19 | 0.55 |
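To read the baseline and follow-up columns side by side, it can help to compute the difference in group means for each condition. The sketch below does this for three outcomes using the means copied from the table above. It is purely descriptive arithmetic on the reported means, with variable and function names chosen for illustration. It does not reproduce the Friedman or Kruskal–Wallis tests, which need the individual patient data.

```python
# (baseline mean, follow-up mean) per condition, copied from the table above.
MEANS = {
    "Pain severity": {
        "TAU": (6.28, 5.90),
        "TAU + App": (5.56, 5.50),
        "TAU + App + Alarm": (5.58, 5.14),
    },
    "Pain interference": {
        "TAU": (5.81, 5.42),
        "TAU + App": (4.94, 4.75),
        "TAU + App + Alarm": (5.13, 4.28),
    },
    "Anxiety": {
        "TAU": (3.89, 5.03),
        "TAU + App": (3.20, 3.50),
        "TAU + App + Alarm": (3.70, 3.35),
    },
}


def mean_change(baseline: float, follow_up: float) -> float:
    """Follow-up minus baseline; negative values mean the group mean went down."""
    return round(follow_up - baseline, 2)


# Nested dict: outcome -> condition -> change in group mean.
changes = {
    outcome: {cond: mean_change(*pair) for cond, pair in conds.items()}
    for outcome, conds in MEANS.items()
}

for outcome, conds in changes.items():
    summary = ", ".join(f"{cond}: {delta:+.2f}" for cond, delta in conds.items())
    print(f"{outcome}: {summary}")
```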

| Outcomes | TAU | TAU + App | TAU + App + Alarm | χ2 | p |
|---|---|---|---|---|---|
| Pain severity | 15.4 | 16.7 | 33.3 | 4.65 | 0.098 |
| Pain interference | 17.5 | 20.0 | 38.5 | 5.27 | 0.072 |
| Fatigue | 17.9 | 14.3 | 25.6 | 1.74 | 0.419 |
| Sadness | 26.3 | 28.6 | 43.6 | 3.13 | 0.209 |
| Anxiety | 16.2 | 21.4 | 30.8 | 2.36 | 0.308 |
| Anger | 25.0 | 14.3 | 23.7 | 1.70 | 0.427 |

| To What Extent the App … | Completely Disagree | Slightly Disagree | Neither Agree Nor Disagree | Slightly Agree | Completely Agree |
|---|---|---|---|---|---|
| Was useful for pain management | 0 | 0 | 0 | 3 | 3 |
| Increases treatment safety | 0 | 0 | 0 | 4 | 2 |
| Increases treatment effectiveness | 0 | 0 | 1 | 5 | 0 |
| Gives me comfort | 0 | 1 | 1 | 3 | 1 |
| Is useful for me as a professional | 0 | 0 | 0 | 4 | 2 |
| Can be useful for patients | 0 | 0 | 0 | 2 | 4 |
| With alarms is something I want to use in the future | 0 | 0 | 0 | 4 | 2 |
| Without alarms is something I want to use in the future | 0 | 2 | 1 | 2 | 1 |
| Alarms impact daily job burden | 0 | 4 | 1 | 0 | 1 |
| Has an impact on burden (help patient downloading the app) | 2 | 2 | 1 | 1 | 0 |
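The response counts in the table above can be collapsed into a single share of professionals who agreed with each statement. The sketch below sums the "Slightly Agree" and "Completely Agree" columns for a few rows. Treating those two columns together as "agreement" is an assumption made here for illustration, not a summary reported in the article, and the function name is hypothetical.

```python
# Counts per row in table order: completely disagree, slightly disagree,
# neither agree nor disagree, slightly agree, completely agree.
RATINGS = {
    "Was useful for pain management": (0, 0, 0, 3, 3),
    "With alarms is something I want to use in the future": (0, 0, 0, 4, 2),
    "Without alarms is something I want to use in the future": (0, 2, 1, 2, 1),
    "Alarms impact daily job burden": (0, 4, 1, 0, 1),
}


def agreement_share(counts: tuple) -> float:
    """Fraction of respondents who chose one of the two 'agree' options."""
    total = sum(counts)
    if total == 0:
        raise ValueError("no responses recorded for this item")
    return (counts[3] + counts[4]) / total


for statement, counts in RATINGS.items():
    print(f"{statement}: {agreement_share(counts):.0%} agree (n = {sum(counts)})")
```

With only a handful of respondents per row, a single answer shifts the share considerably, so these fractions are best read next to the raw counts.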

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Suso-Ribera, C.; Castilla, D.; Zaragozá, I.; Mesas, Á.; Server, A.; Medel, J.; García-Palacios, A. Telemonitoring in Chronic Pain Management Using Smartphone Apps: A Randomized Controlled Trial Comparing Usual Assessment against App-Based Monitoring with and without Clinical Alarms. Int. J. Environ. Res. Public Health 2020, 17, 6568. https://doi.org/10.3390/ijerph17186568
