Artificial Intelligence and Suicide Prevention: A Systematic Review of Machine Learning Investigations

Abstract

1. Introduction

2. Methods

2.1. Search Strategy

- (A) PubMed: ("Artificial Intelligence"[Mesh] OR machine learning[tw] OR natural language processing[tw] OR artificial intelligence[tw]) AND ("Suicide"[Mesh] OR suicid*[tw])

- (B) EMBASE: ('artificial intelligence':ti,ab,kw OR 'machine learning':ti,ab,kw OR 'natural language processing':ti,ab,kw) AND ('mood disorder':ti,ab,kw OR 'depression':ti,ab,kw OR 'bipolar disorder':ti,ab,kw OR 'suicidal behavior':ti,ab,kw OR 'suicide':ti,ab,kw OR suicid*)

- (C) Web of Science: TS = ("artificial intelligence" OR "machine learning" OR "natural language processing") AND TS = ("mood disorder" OR depress* OR bipolar OR suicid*)

- (D) PsycINFO: (("artificial intelligence" or "machine learning" or "natural language processing") and ("mood disorder" or bipolar or depress* or suicid*)).ab,hw,id,ot,ti.
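Each search string above follows the same pattern: a block of artificial intelligence terms joined with OR, combined by AND with a block of outcome terms. As a minimal sketch of that pattern, the Python below assembles the PubMed string from two term lists. The helper names `or_block` and `build_query` are hypothetical and chosen for illustration only; the review does not describe how its queries were constructed, and the sketch only builds the text of the query without submitting it to any database.

```python
def or_block(terms):
    """Join search terms with OR and wrap the group in parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_query(*concept_blocks):
    """Combine one parenthesised OR-group per concept with AND."""
    return " AND ".join(or_block(block) for block in concept_blocks)


# Terms copied from the PubMed search string listed above.
ai_terms = [
    '"Artificial Intelligence"[Mesh]',
    "machine learning[tw]",
    "natural language processing[tw]",
    "artificial intelligence[tw]",
]
suicide_terms = ['"Suicide"[Mesh]', "suicid*[tw]"]

pubmed_query = build_query(ai_terms, suicide_terms)
print(pubmed_query)
```

Keeping the concept blocks as separate lists makes it easier to see which terms differ between databases, for example the broader mood disorder terms that appear in the EMBASE, Web of Science, and PsycINFO strings.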

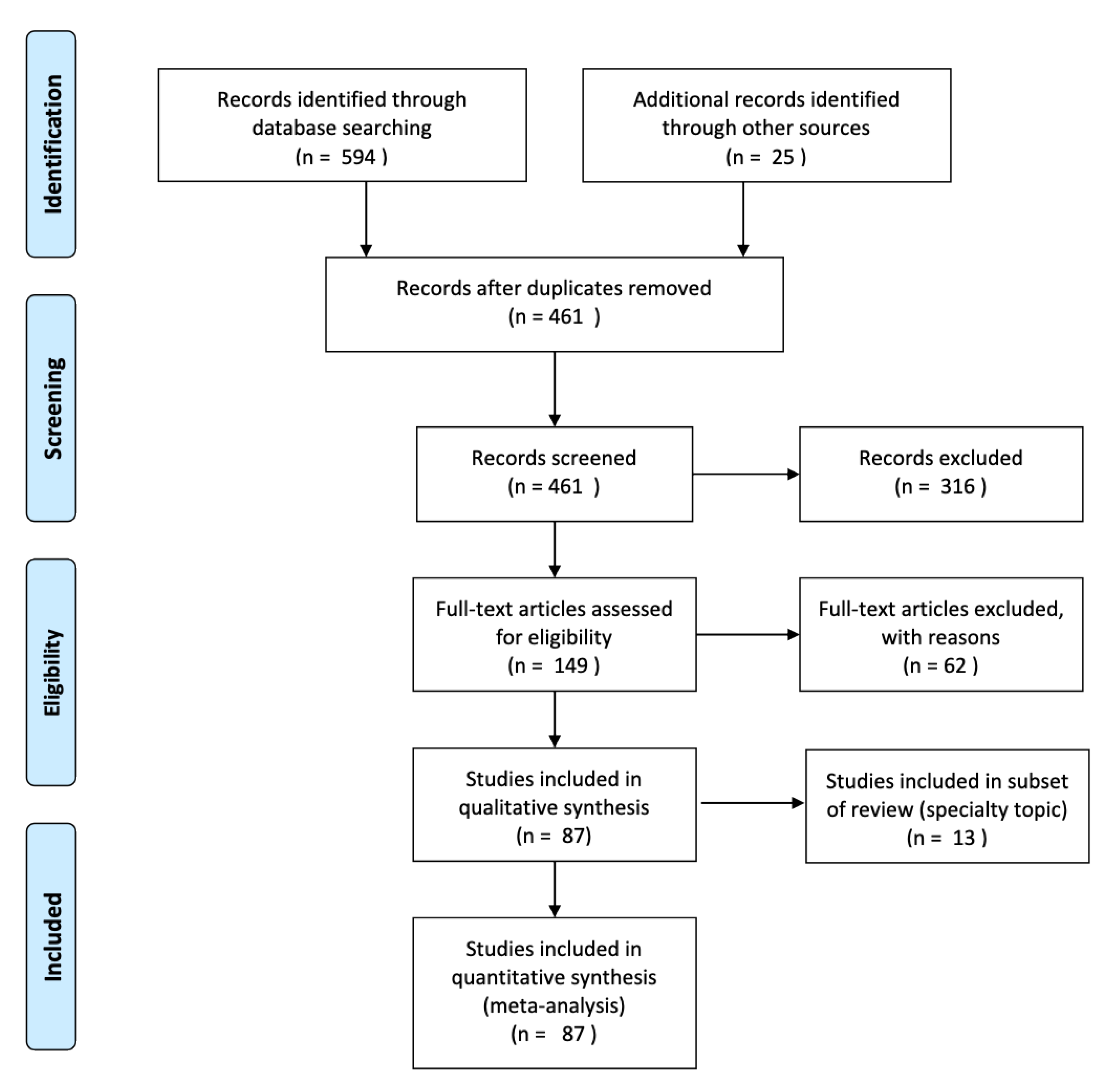

2.2. Study Selection

2.3. Data Collection Process

2.4. Data Analysis

3. Results

3.1. Data Extraction

3.2. Broad Outcome Groupings

3.3. ML Techniques and Learning Methods

3.4. Design Characteristics

3.5. Sample Size and High Dimensional Data

3.6. Sample Characteristics

3.7. Natural Language Processing and Biological Markers of Risk

3.8. Timeframe of Assessment and Predictive Modeling

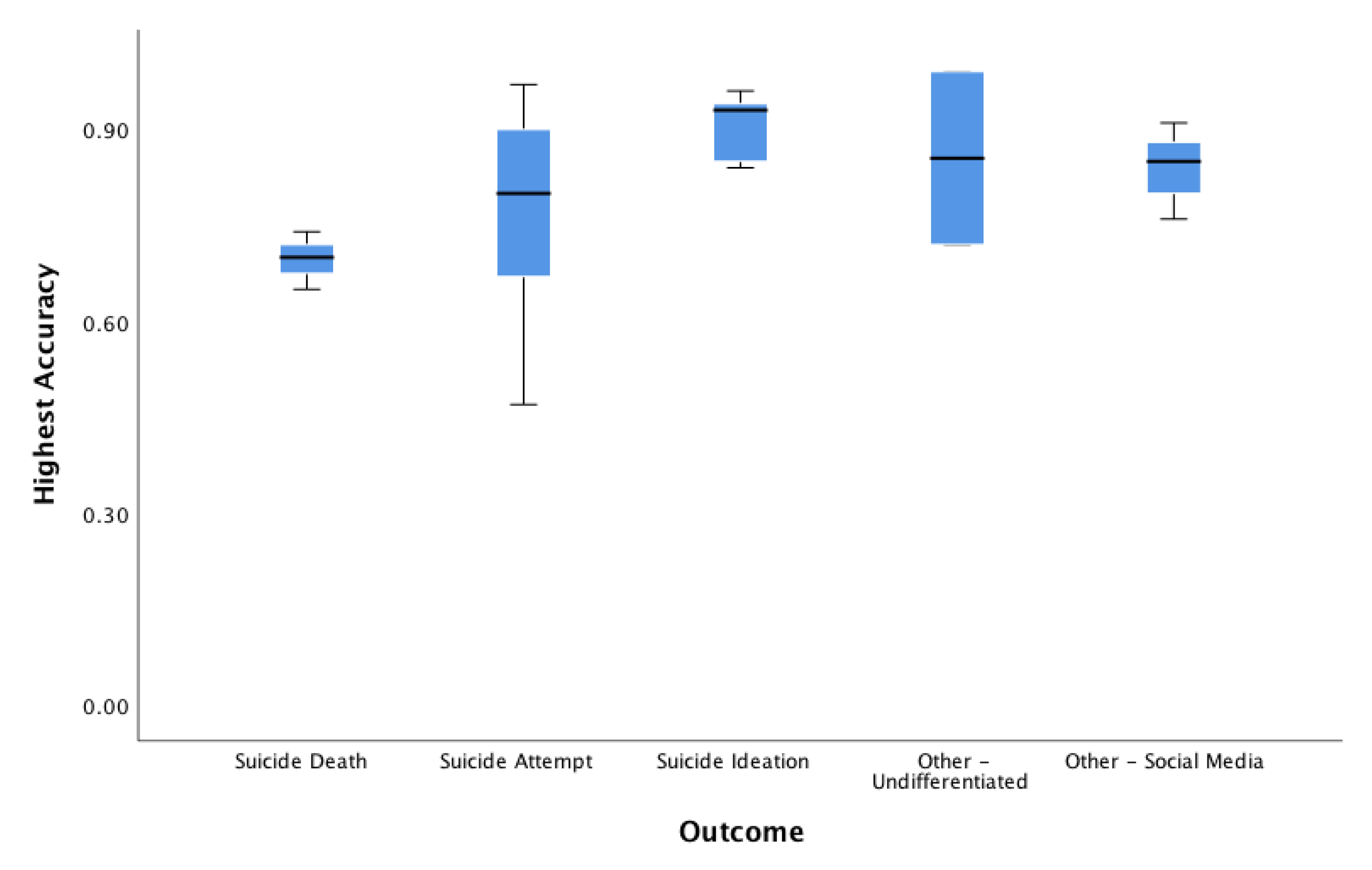

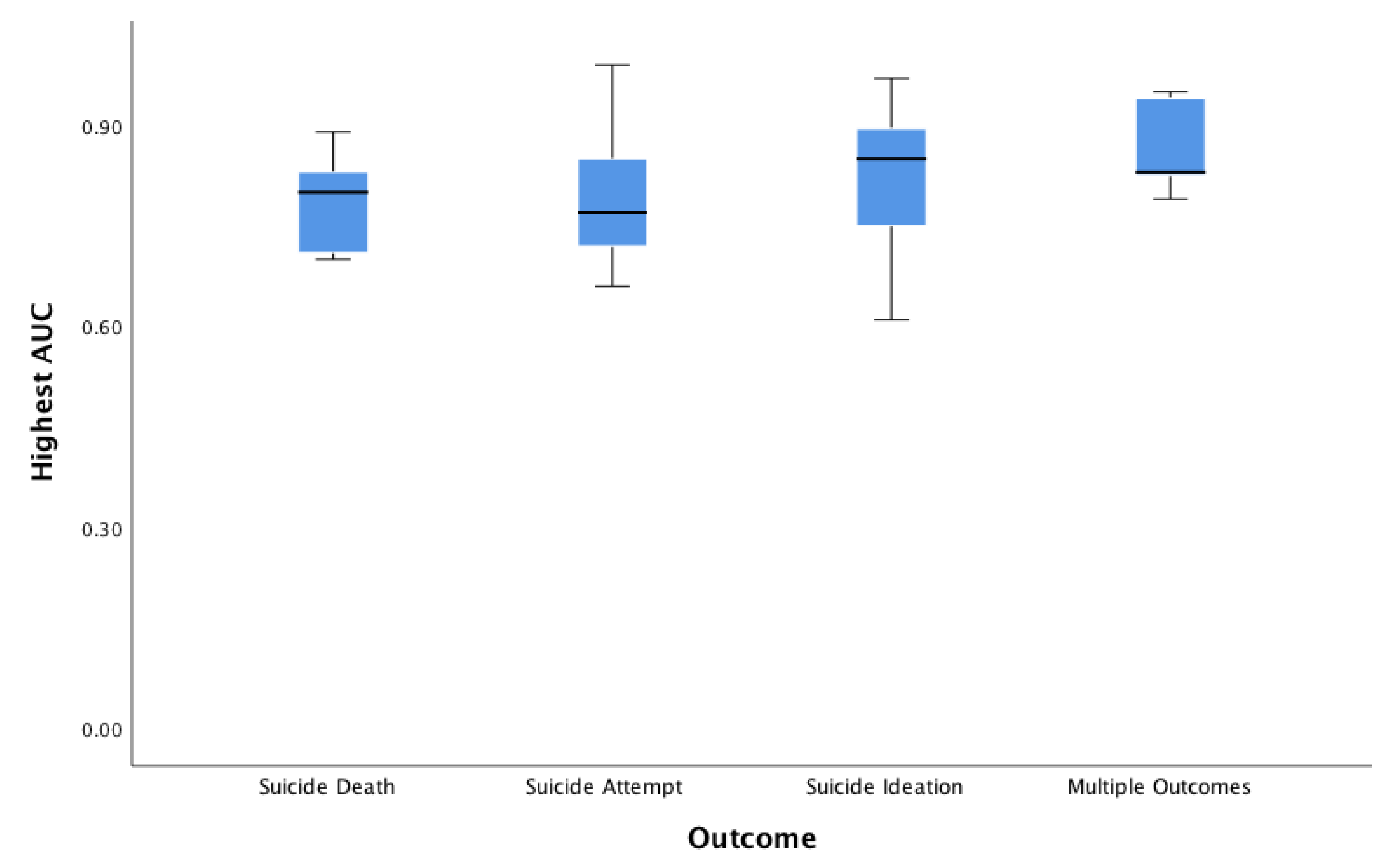

3.9. Accuracy, Area Under the Curve, Positive Predictive Value, Sensitivity/Specificity

4. Discussion

Critical Challenges and Future Directions

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- National Center for Injury Prevention and Control, CDC. Web-Based Injury Statistics Query and Reporting System (WISQARS), Fatal Injury and Violence Data. Available online: http://www.cdc.gov/injury/wisqars/index.html (accessed on 30 November 2018).

- Rockett, I.R.; Regier, M.D.; Kapusta, N.D.; Coben, J.H.; Miller, T.R.; Hanzlick, R.L.; Todd, K.H.; Sattin, R.W.; Kennedy, L.W.; Kleinig, J.; et al. Leading causes of unintentional and intentional injury mortality: United States, 2000–2009. Am. J. Public Health 2012, 102, e84–e92. [Google Scholar] [CrossRef]

- Shepard, D.S.; Gurewich, D.; Lwin, A.K.; Reed, G.A., Jr.; Silverman, M.M. Suicide and suicidal attempts in the United States: Costs and policy implications. Suicide Life Threat. Behav. 2016, 46, 352–362. [Google Scholar] [CrossRef]

- Goldsmith, S.; Pellmar, T.; Kleinman, A.; Bunney, W. Reducing suicide: A national imperative (Committee on Pathophysiology and Prevention of Adolescent and Adult Suicide, Board on Neuroscience and Behavioral Health, Institute of Medicine of the National Academies). Proc. Natl. Acad. Sci. USA 2002. [Google Scholar] [CrossRef]

- World Health Organization. Preventing Suicide: A Global Imperative; World Health Organization: Geneva, Switzerland, 2014. [Google Scholar]

- U.S. Department of Health and Human Services (HHS) Office of the Surgeon General and National Action Alliance for Suicide Prevention. 2012 National Strategy for Suicide Prevention: Goals and Objectives for Action; HHS: Washington, DC, USA, 2012.

- Curtin, S.C.; Warner, M.; Hedegaard, H. Increase in suicide in the United States, 1999–2014. NCHS Data Brief 2016, 241, 1–8. [Google Scholar]

- Juurlink, D.N.; Herrmann, N.; Szalai, J.P.; Kopp, A.; Redelmeier, D.A. Medical illness and the risk of suicide in the elderly. Arch. Intern. Med. 2004, 164, 1179–1184. [Google Scholar] [CrossRef]

- Smith, E.G.; Kim, H.M.; Ganoczy, D.; Stano, C.; Pfeiffer, P.N.; Valenstein, M. Suicide risk assessment received prior to suicide death by Veterans Health Administration patients with a history of depression. J. Clin. Psychiatry 2013, 74, 226–232. [Google Scholar] [CrossRef]

- Van Heeringen, K.; Mann, J.J. The neurobiology of suicide. Lancet Psychiatry 2014, 1, 63–72. [Google Scholar] [CrossRef]

- Franklin, J.C.; Ribeiro, J.D.; Fox, K.R.; Bentley, K.H.; Kleiman, E.M.; Huang, X.; Musacchio, K.M.; Jaroszewski, A.C.; Chang, B.P.; Nock, M.K. Risk factors for suicidal thoughts and behaviors: A meta-analysis of 50 years of research. Psychol. Bull. 2017, 143, 187–232. [Google Scholar] [CrossRef]

- Pourman, A.; Roberson, J.; Caggiula, A.; Monslave, N.; Rahimi, M.; Torres-Llenza, V. Telemedicine and e-Health. J. Cit. Rep. 2019, 26, 880–888. [Google Scholar]

- Ivey-Stephenson, A.Z. Suicide trends among and within urbanization levels by sex, race/ethnicity, age group, and mechanism of death—United States, 2001–2015. MMWR Surveill. Summ. 2017, 66, 1–16. [Google Scholar] [CrossRef]

- Miller, M.; Azrael, D.; Barber, C. Suicide mortality in the United States: The importance of attending to method in understanding population-level disparities in the burden of suicide. Annu. Rev. Public Health 2012, 33, 393–408. [Google Scholar] [CrossRef]

- Kessler, R.C.; Warner, C.H.; Ivany, C.; Petukhova, M.V.; Rose, S.; Bromet, E.J.; Brown, M.; Cai, T.; Colpe, L.J.; Cox, K.L.; et al. Predicting suicides after psychiatric hospitalization in US Army soldiers: The Army Study To Assess Risk and resilience in Servicemembers (Army STARRS). JAMA Psychiatry 2015, 72, 49–57. [Google Scholar] [CrossRef]

- Delgado-Rodríguez, M.; Llorca, J. Bias. J. Epidemiol. Community Health 2004, 58, 635–641. [Google Scholar] [CrossRef]

- Weng, C.; Li, Y.; Ryan, P.; Zhang, Y.; Liu, F.; Gao, J.; Bigger, J.T.; Hripcsak, G.A. distribution-based method for assessing the differences between clinical trial target populations and patient populations in electronic health records. Appl. Clin. Inform. 2014, 5, 463–479. [Google Scholar]

- Casey, J.A.; Schwartz, B.S.; Stewart, W.F.; Adler, N. Using electronic health records for population health Research: A review of methods and applications. Annu. Rev. Public Health 2016, 37, 61–81. [Google Scholar] [CrossRef]

- Goldstein, B.A.; Navar, A.M.; Pencina, M.J.; A Ioannidis, J.P. Opportunities and challenges in developing risk prediction models with electronic health records data: A systematic review. J. Am. Med. Inform. Assoc. 2016, 24, 198–208. [Google Scholar] [CrossRef]

- Saria, S.; Rajani, A.K.; Gould, J.; Koller, D.; A Penn, A. Integration of early physiological responses predicts later illness severity in preterm infants. Sci. Transl. Med. 2010, 2, 48–65. [Google Scholar] [CrossRef]

- Henry, K.E.; Hager, D.N.; Pronovost, P.J.; Saria, S. A targeted real-time early warning score (TREWScore) for septic shock. Sci. Transl. Med. 2015, 7, 299ra122. [Google Scholar] [CrossRef]

- Huang, S.H.; LePendu, P.; Iyer, S.V.; Tai-Seale, M.; Carrell, D.; Shah, N.H. Toward personalizing treatment for depression: Predicting diagnosis and severity. J. Am. Med. Inform. Assoc. 2014, 21, 1069–1075. [Google Scholar] [CrossRef]

- Peck, J.S.; Benneyan, J.C.; Nightingale, D.J.; Gaehde, S.A. Predicting emergency department inpatient admissions to improve same-day patient flow. Acad. Emerg. Med. 2012, 19, E1045–E1054. [Google Scholar] [CrossRef]

- Tiwari, V.; Furman, W.R.; Sandberg, W.S. Predicting case volume from the accumulating elective operating room schedule facilitates staffing improvements. Anesthesiology 2014, 121, 171–183. [Google Scholar] [CrossRef]

- Callahan, A.; Shah, N.H. Machine learning in healthcare. In Key Advances in Clinical Informatics, 1st Edition: Transforming Health Care Through Health Information Technology; Sheikh, A., Bates, D., Wright, A., Cresswell, K.K., Eds.; Academic Press: Cambridge, MA, USA, 2017; pp. 279–291. [Google Scholar]

- Pestian, J.P.; Grupp-Phelan, J.; Bretonnel Cohen, K.; Meyers, G.; Richey, L.A.; Matykiewicz, P. A controlled trial using natural language processing to examine the language of suicidal adolescents in the emergency department. Suicide Life Threat. Behav. 2016, 46, 154–159. [Google Scholar] [CrossRef]

- McCoy, T.H., Jr.; Castro, V.M.; Roberson, A.M.; Snapper, L.A.; Perlis, R.H. Improving prediction of suicide and accidental death after discharge from general hospitals with natural language processing. JAMA Psychiatry 2016, 73, 1064–1071. [Google Scholar] [CrossRef]

- Sanderson, M.; Bulloch, A.G.; Wang, J.; Williams, K.G.; Williamson, T.; Patten, S.B. Predicting death by suicide following an emergency department visit for parasuicide with administrative health care system data and machine learning. EClinicalMedicine 2020, 20, 100281. [Google Scholar] [CrossRef]

- Roy, A.; Nikolitch, K.; McGinn, R.; Jinah, S.; Klement, W.; Kaminsky, Z.A. A machine learning approach predicts future risk to suicidal ideation from social media data. NPJ Digit. Med. 2020, 3, 1–12. [Google Scholar] [CrossRef]

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; Group, P. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. Ann. Intern. Med. 2009, 151, 264–269. [Google Scholar] [CrossRef]

- Crosby, A.; Ortega, L.; Melanson, C. Self-directed Violence Surveillance: Uniform Definitions and Recommended Data Elements; version 1.0; National Center for Injury Prevention and Control (CDC): Atlanta, GA, USA, 2011.

- Howick, J.; Chalmers, I.; Glasziou, P.; Greenhalgh, T.; Heneghan, C.; Liberati, A.; Moschetti, I.; Phillips, B.; Thornton, H. Explanation of the 2011 Oxford Centre for Evidence-Based Medicine (OCEBM) Levels of Evidence (Background Document). Oxford Centre for Evidence-Based Medicine. Available online: https://www.cebm.net/index.aspx?o=5653 (accessed on 31 July 2020).

- Kessler, R.C.; Hwang, I.; Hoffmire, C.A.; McCarthy, J.F.; Petukhova, M.V.; Rosellini, A.J.; Sampson, N.A.; Schneider, A.L.; Bradley, P.A.; Katz, I.R.; et al. Developing a practical suicide risk prediction model for targeting high-risk patients in the Veterans Health Administration. Int. J. Methods Psychiatr. Res. 2017, 26. [Google Scholar] [CrossRef]

- Kessler, R.C.; Stein, M.B.; Petukhova, M.V.; Bliese, P.; Bossarte, R.M.; Bromet, E.J.; Fullerton, C.S.; Gilman, S.E.; Ivany, C.; Lewandowski-Romps, L.; et al. Predicting suicides after outpatient mental health visits in the Army study to assess risk and resilience in servicemembers (Army STARRS). Mol. Psychiatry 2017, 22, 544–551. [Google Scholar] [CrossRef]

- Poulin, C.; Shiner, B.; Thompson, P.; Vepstas, L.; Young-Xu, Y.; Goertzel, B.; Watts, B.; Flashman, L.; McAllister, T. Predicting the risk of suicide by analyzing the text of clinical notes. PLoS ONE 2014, 9, e85733. [Google Scholar] [CrossRef]

- Pestian, J.P.; Matykiewicz, P.; Grupp-Phelan, J.; Lavanier, S.A.; Combs, J.; Kowatch, r. Using natural language processing to classify suicide notes. AMIA Annu. Symp. Proc. 2008, 1091. [Google Scholar]

- Pamer, C.; Serpi, T.; Finkelstein, J. Analysis of Maryland poisoning deaths using classification and regression tree (CART) analysis. AMIA Annu. Symp. Proc. 2008, 550–554. [Google Scholar]

- Haerian, K.; Salmasian, H.; Friedman, C. Methods for identifying suicide or suicidal ideation in EHRs. AMIA Annu Symp Proc. 2012, 2012, 1244–1253. [Google Scholar] [PubMed]

- Ilgen, M.A.; Downing, K.; Zivin, K. Exploratory data mining analysis identifying subgroups of patients with depression who are at high risk for suicide. J. Clin. Psychiatry 2009, 70, 1495–1500. [Google Scholar] [CrossRef] [PubMed]

- Adamou, M.; Antoniou, G.; Greasidou, E.; Lagani, V.; Charonyktakis, P.; Tsamardinos, I.; Doyle, M. Toward automatic risk assessment to support suicide prevention. Crisis 2019, 40, 249–256. [Google Scholar] [CrossRef]

- Rosellini, A.J.; Stein, M.B.; Benedek, D.M.; Bliese, P.D.; Chiu, W.T.; Hwang, I.; Monahan, J.; Nock, M.K.; Sampson, N.A.; Street, A.E.; et al. Predeployment predictors of psychiatric disorder-symptoms and interpersonal violence during combat deployment. Depress Anxiety 2018, 35, 1073–1080. [Google Scholar] [CrossRef]

- De Ávila Berni, G.; Rabelo-da-Ponte, F.D.; Librenza-Garcia, D.V.; Boeira, M.; Kauer-Sant’Anna, M. Potential use of text classification tools as signatures of suicidal behavior: A proof-of-concept study using Virginia Woolf’s personal writings. PLoS ONE 2018, 13, e0207963. [Google Scholar]

- Metzger, M.H.; Tvardik, N.; Gicquel, Q.; Bouvry, C.; Poulet, E.; Potinet-Pagliaroli, V. Use of emergency department electronic medical records for automated epidemiological surveillance of suicide attempts: A French pilot study. Int. J. Methods Psychiatr Res. 2017, 26, e1522. [Google Scholar] [CrossRef]

- Passos, I.C.; Mwangi, B.; Cao, B.; Hamilton, J.E.; Wu, M.J.; Zhang, X.Y.; Zunta-Soares, G.B.; Quevedo, J.; Kauer-Sant’Anna, M.; Kapczinski, F.; et al. Identifying a clinical signature of suicidality among patients with mood disorders: A pilot study using a machine learning approach. J. Affect Disord. 2016, 193, 109–116. [Google Scholar] [CrossRef]

- Kessler, R.C.; Van Loo, H.M.; Wardenaar, K.J.; Bossarte, R.M.; Brenner, L.A.; Cai, T.; Ebert, D.D.; Hwang, I.; Li, J.; De Jonge, P.; et al. Testing a machine-learning algorithm to predict the persistence and severity of major depressive disorder from baseline self-reports. Mol. Psychiatry 2016, 21, 1366–1371. [Google Scholar] [CrossRef]

- Modai, I.; Kuperman, J.; Goldberg, I.; Goldish, M.; Mendel, S. Fuzzy logic detection of medically serious suicide attempt records in major psychiatric disorders. J. Nerv. Ment. Dis. 2004, 192, 708–710. [Google Scholar] [CrossRef]

- Modai, I.; Kurs, R.; Ritsner, M.; Oklander, S.; Silver, H.; Segal, A.; Goldberg, I.; Mendel, S. Neural network identification of high-risk suicide patients. Med. Inform. Internet Med. 2002, 27, 39–47. [Google Scholar] [CrossRef]

- Modai, I.; Valevski, A.; Solomish, A.; Kurs, R.; Hines, I.L.; Ritsner, M.; Mendel, S. Neural network detection of files of suicidal patients and suicidal profiles. Med. Inform. Internet Med. 1999, 24, 249–256. [Google Scholar]

- Modai, I.; Hirschmann, S.; Hadjez, J.; Bernat, C.; Gelber, D.; Ratner, Y.; Rivkin, O.; Kurs, R.; Ponizovsky, A.; Ritsner, M. Clinical evaluation of prior suicide attempts and suicide risk in psychiatric inpatients. Crisis 2002, 23, 47–54. [Google Scholar] [CrossRef]

- Modai, S.; Greenstain, A.; Weizman, A.; Mendel, S. Backpropagation and adaptive resonance theory in predicting suicidal risk. Med. Inform. 1998, 23, 325–330. [Google Scholar] [CrossRef]

- Hettige, N.C.; Nguyen, T.B.; Yuan, C.; Rajakulendran, T.; Baddour, J.; Bhagwat, N.; Bani-Fatemi, A.; Voineskos, A.N.; Chakravarty, M.M.; De Luca, V. Classification of suicide attempters in schizophrenia using sociocultural and clinical features: A machine learning approach. Gen. Hosp. Psychiatry 2017, 47, 20–28. [Google Scholar] [CrossRef]

- Walsh, C.G.; Ribeiro, J.D.; Franklin, J.C. Predicting risk of suicide attempts over time through machine learning. Clin. Psychol. Sci. 2017, 5, 457–469. [Google Scholar] [CrossRef]

- Venek, V.; Scherer, S.; Morency, L.P.; Rizzo, A.; Pestian, J. Adolescent suicidal risk assessment in clinician-patient interaction. IEEE Trans. Affect. Comput. 2017, 8, 204–215. [Google Scholar] [CrossRef]

- Baca-Garcia, E.; Perez-Rodriguez, M.M.; Saiz-Gonzalez, D.; Basurte-Villamor, I.; Saiz-Ruiz, J.; Leiva-Murillo, J.M.; De Prado-Cumplido, M.; Santiago-Mozos, R.; Artés-Rodríguez, A.; De Leon, J. Variables associated with familial suicide attempts in a sample of suicide attempters. Prog. Neuropsychopharmacol. Biol. Psychiatry 2007, 31, 1312–1316. [Google Scholar] [CrossRef]

- Tiet, Q.Q.; Ilgen, M.A.; Byrnes, H.F.; Moos, R.H. Suicide attempts among substance use disorder patients: An initial step toward a decision tree for suicide management. Alcohol Clin. Exp. Res. 2006, 30, 998–1005. [Google Scholar] [CrossRef]

- Baca-Garcia, E.; Vaquero-Lorenzo, C.; Perez-Rodriguez, M.M.; Gratacòs, M.; Bayés, M.; Santiago-Mozos, R.; Leiva-Murillo, J.M.; De Prado‐Cumplido, M.; Artes‐Rodriguez, A.; Ceverino, M.M.; et al. Nucleotide variation in central nervous system genes among male suicide attempters. Am. J. Med. Genet. B. Neuropsychiatr. Genet. 2010, 153, 208–213. [Google Scholar] [CrossRef]

- Lopez-Castroman, J.; De las Mercedes Perez-Rodriguez, M.; Jaussent, I.; Alegria, A.A.; Artes-Rodriguez, A.; Freed, P.; Guillaume, S.; Jollant, F.; Leiva-Murillo, J.M.; Malafosse, A.; et al. Distinguishing the relevant features of frequent suicide attempters. J. Psychiatry Res. 2010, 45, 619–625. [Google Scholar] [CrossRef] [PubMed]

- Modai, I.; Kuperman, J.; Goldberg, I.; Goldish, M.; Mendel, S. Suicide risk factors and suicide vulnerability in various major psychiatric disorders. Med. Inform. Internet Med. 2004, 29, 65–74. [Google Scholar] [CrossRef] [PubMed]

- Mann, J.J.; Ellis, S.P.; Waternaux, C.M.; Liu, X.; Oquendo, M.A.; Malone, K.M.; Brodsky, B.S.; Haas, G.L.; Currier, D. Classification trees distinguish suicide attempters in major psychiatric disorders: A model of clinical decision making. J. Clin. Psychiatry 2008, 69, 23. [Google Scholar] [CrossRef] [PubMed]

- Bae, S.M.; Lee, S.A.; Lee, S.H. Prediction by data mining, of suicide attempts in Korean adolescents: A national study. Neuropsychiatr. Dis. Treat. 2015, 11, 2367–2375. [Google Scholar] [CrossRef]

- Choo, C.; Diederich, J.; Song, I.; Ho, R. Cluster analysis reveals risk factors for repeated suicide attempts in a multi-ethnic Asian population. Asian J. Psychiatr. 2014, 8, 38–42. [Google Scholar] [CrossRef]

- Oh, J.; Yun, K.; Hwang, J.H.; Chae, J.H. Classification of suicide attempts through a machine learning algorithm based on multiple systemic psychiatric scales. Front Psychiatry 2017, 8, 192. [Google Scholar] [CrossRef]

- Benton, A.; Mitchell, M.; Hovy, D. Multi-task learning for mental health using social media text. arXiv preprint 2017, arXiv:171203538. [Google Scholar]

- Ruderfer, D.M.; Walsh, C.G.; Aguirre, M.W.; Tanigawa, Y.; Ribeiro, J.D.; Franklin, J.C.; Rivas, M.A. Significant shared heritability underlies suicide attempt and clinically predicted probability of attempting suicide. Mol. Psychiatry 2019. [Google Scholar] [CrossRef]

- Lyu, J.; Zhang, J. BP neural network prediction model for suicide attempt among Chinese rural residents. J. Affect. Disord. 2019, 246, 465–473. [Google Scholar] [CrossRef]

- Coppersmith, G.; Leary, R.; Crutchley, P.; Fine, A. NLP of social media as screening for suicide risk. Biomed. Inform. Insights 2018, 10, 1–11. [Google Scholar] [CrossRef]

- Dargél, A.A.; Roussel, F.; Volant, S.; Etain, B.; Grant, R.; Azorin, J.M.; M’Bailara, K.; Bellivier, F.; Bougerol, T.; Kahn, J.P.; et al. Emotional hyper-reactivity and cardiometabolic risk in remitted bipolar patients: A machine learning approach. Acta Psychiatr. Scand. 2018, 138, 348–359. [Google Scholar] [CrossRef] [PubMed]

- Jordan, J.T.; McNeil, D.G. Characteristics of a suicide attempt predict who makes another attempt after hospital discharge: A decision-tree investigation. Psychiatry Res. 2018, 268, 317–322. [Google Scholar] [CrossRef]

- Setoyama, D.; Kato, T.A.; Hashimoto, R.; Kunugi, H.; Hattori, K.; Hayakawa, K.; Sato-Kasai, M.; Shimokawa, N.; Kaneko, S.; Yoshida, S.; et al. Plasma metabolites predict severity of depression and suicidal ideation in psychiatric patients - a multicenter pilot analysis. PLoS ONE 2016, 11, e0165267. [Google Scholar] [CrossRef] [PubMed]

- Pestian, J.P.; Sorter, M.; Connolly, B.; Bretonnel Cohen, K.; McCullumsmith, C.; Gee, J.T.; Morency, L.P.; Scherer, S.; Rohlfs, L.; STM Research Group. A machine learning approach to identifying the thought markers of suicidal subjects: A prospective multicenter trial. Suicide Life Threat. Behav. 2017, 47, 112–121. [Google Scholar] [CrossRef] [PubMed]

- Cook, B.L.; Progovac, A.M.; Chen, P.; Mullin, B.; Hou, S.; Baca-Garcia, E. Novel use of natural language processing (NLP) to predict suicidal ideation and psychiatric symptoms in a text-based mental health intervention in Madrid. Comput. Math Methods Med. 2016, 2016, 8708434. [Google Scholar] [CrossRef] [PubMed]

- Gradus, J.L.; King, M.W.; Galatzer-Levy, I.; Street, A.E. Gender differences in machine learning models of trauma and suicidal ideation in veterans of the Iraq and Afghanistan Wars. J. Trauma Stress. 2017, 30, 362–371. [Google Scholar] [CrossRef]

- Birjali, M.; Beni-Hssane, A.; Erritali, M. Machine learning and semantic sentiment analysis based algorithms for suicide sentiment prediction in social networks. Proc. Comput. Sci. 2017, 113, 65–72. [Google Scholar] [CrossRef]

- Just, M.A.; Pan, L.; Cherkassky, V.L. Machine learning of neural representations of suicide and emotion concepts identifies suicidal youth. Nat. Hum. Behav. 2017, 1, 911–919. [Google Scholar] [CrossRef]

- Ryu, S.; Lee, H.; Lee, D.K.; Park, K. Use of a machine learning algorithm to predict individuals with suicidal ideation in the general population. Psychiatry Investig. 2018, 15, 1030–1036. [Google Scholar] [CrossRef]

- Desjardins, I.; Cats-Baril, W.; Maruti, S.; Freeman, K.; Althoff, R. Suicide risk assessment in hospitals: An expert system-based triage tool. J. Clin. Psychiatry 2016, 77, e874–e882. [Google Scholar] [CrossRef]

- Tzeng, H.M. Forecasting: Adopting the methodology of support vector machines to nursing research. Worldviews Evid. Based Nurs. 2006, 3, 124–128. [Google Scholar] [CrossRef] [PubMed]

- Baca-García, E.; Perez-Rodriguez, M.M.; Basurte-Villamor, I.; Saiz-Ruiz, J.; Leiva-Murillo, J.M.; De Prado-Cumplido, M.; Santiago-Mozos, R.; Artés-Rodríguez, A.; De Leon, J. Using data mining to explore complex clinical decisions: A study of hospitalization after a suicide attempt. J. Clin. Psychiatry 2006, 67, 1124–1132. [Google Scholar] [CrossRef] [PubMed]

- Quan, C.; Wang, M.; Ren, F. An unsupervised text mining method for relation extraction from biomedical literature. PLoS ONE 2014, 9, e102039. [Google Scholar] [CrossRef] [PubMed]

- Litvinova, T.; Seredin, P.V.; Litvinov, O.A.; Romanchenko, O.V. Identification of suicidal tendencies of individuals based on the quantitative analysis of their internet texts. Computación y Sistemas. 2017, 21, 243–252. [Google Scholar] [CrossRef][Green Version]

- Zhang, Y.; Zhang, O.; Li, R.; Flores, A.; Selek, S.; Zhang, X.Y.; Xu, H. Psychiatric stressor recognition from clinical notes to reveal and association with suicide. Health Informatics J. 2019, 4, 1846–1862. [Google Scholar] [CrossRef]

- McKernan, L.C.; Lenert, M.C.; Crofford, L.J.; Walsh, C.G. Outpatient engagement and predicted risk of suicide attempts in fibromyalgia. Arthritis Care Research. 2019, 9, 1255–1263. [Google Scholar] [CrossRef]

- DelPozo-Banos, M.; John, A.; Petkov, N.; Berridge, D.M.; Southern, K.; LLoyd, K.; Jones, C.; Spencer, S.; Travieso, C.M. Using neural networks with routine health records to identify suicide risk: Feasibility study. JMIR Ment. Health 2018, 5, e10144. [Google Scholar] [CrossRef]

- Burke, T.A.; Jacobucci, R.; Ammerman, B.A.; Piccirillo, M.; McCloskey, M.S.; Heimberg, R.G.; Alloy, L.B. Identifying the relative importance of non-suicidal self-injury features in classifying suicidal ideation plans and behaviour using exploratory data mining. Psychiatry Res. 2018, 262, 175–183. [Google Scholar] [CrossRef]

- Cheng, Q.; Li, T.M.; Kwok, C.L.; Zhu, T.; Yip, P.S. Assessing suicide risk and emotional distress in Chinese social media: A text mining and machine learning study. J. Med. Internet Res. 2017, 19, e243. [Google Scholar] [CrossRef]

- Braithwaite, S.R.; Giraud-Carrier, C.; West, J.; Barnes, M.D.; Hanson, C.L. Validating machine learning algorithms for Twitter data against established measures of suicidality. JMIR Ment. Health 2016, 3, e21. [Google Scholar] [CrossRef]

- Guan, L.; Hao, B.; Cheng, Q.; Yip, P.S.; Zhu, T. Identifying Chinese microblog users with high suicide probability using Internet-based profile and linguistic features: Classification model. JMIR Ment. Health 2015, 2, e17. [Google Scholar] [CrossRef] [PubMed]

- Woo, H.; Cho, Y.; Shim, E.; Lee, K.; Song, G. Public trauma after the Sewol Ferry Disaster: The role of social media in understanding the public mood. Int. J Environ. Res. Public Health 2015, 12, 10974–10983. [Google Scholar] [CrossRef] [PubMed]

- O’Dea, B.; Wan, S.; Batterham, P.J.; Calear, A.L.; Paris, C.; Christensen, H. Detecting suicidality on Twitter. Internet Interv. 2015, 2, 183–188. [Google Scholar] [CrossRef]

- Nguyen, T.; O’Dea, B.; Larsen, M.; Phung, D.; Venkatesh, S.; Christensen, H. Using linguistic and topic analysis to classify sub-groups of online depression communities. Multimed. Tools Appl. 2017, 76, 10653–10676. [Google Scholar] [CrossRef]

- Burnap, P.; Colombo, G.; Amery, R.; Hodorog, A.; Scourfield, J. Multi-class machine classification of suicide-related communication on Twitter. Online Soc. Netw. Media 2017, 2, 32–44. [Google Scholar] [CrossRef]

- Vioulès, M.J.; Moulahi, B.; Azé, J.; Bringay, S. Detection of suicide-related posts in Twitter data streams. IBM J. Res. Dev. 2018, 62, 7–12. [Google Scholar]

- Zalar, B.; Plesnicar, B.K.; Zalar, I.; Mertik, M. Suicide and suicide attempt descriptions by multimethod approach. Psychiatria Danuba. 2018, 30, 317–322. [Google Scholar] [CrossRef]

- Tran, T.; Nguyen, T.D.; Phung, D.; Venkatesh, S. Learning vector representation of medical objects via EMR-driven nonnegative restricted Boltzmann machines (eNRBM). J. Biomed. Inform. 2015, 54, 96–105. [Google Scholar] [CrossRef]

- Leiva-Murillo, J.M.; Lopez-Castroman, J.; Baca-Garcia, E. EURECA Consortium. Characterization of suicidal behaviour with self-organizing maps. Comput. Math. Methods Med. 2013, 2013, 136743. [Google Scholar] [CrossRef]

- Tran, T.; Luo, W.; Phung, D.; Harvey, R.; Berk, M.; Kennedy, R.L.; Venkatesh, S. Risk stratification using data from electronic medical records better predicts suicide risks than clinician assessments. BMC Psychiatry. 2014, 14, 76. [Google Scholar] [CrossRef]

- Bernecker, S.L.; Zuromski, K.L.; Gutierrez, P.M.; Joiner, T.E.; King, A.J.; Liu, H.; Nock, M.K.; Sampson, N.A.; Zaslavsky, A.M.; Stein, M.B.; et al. Predicting suicide attempts among soldiers who deny suicidal ideation in the Army Study to Assess Risk and Resilience in Service Members (Army STARRS). Behav. Res. Ther. 2019, 120, 103350. [Google Scholar] [CrossRef] [PubMed]

- Zhong, Q.Y.; Mittal, L.P.; Nathan, M.D.; Brown, K.M.; González, D.K.; Cai, T.; Finan, S.; Gelaye, B.; Avillach, P.; Smoller, J.W.; et al. Use of natural language processing in electronic medical records to identify pregnant women with suicidal behavior: Towards a solution to the complex classification problem. Eur. J. Epidemiol. 2018, 34, 153–162. [Google Scholar] [CrossRef] [PubMed]

- Morales, S.; Barros, J.; Echávarri, O.; García, F.; Osses, A.; Moya, C.; Maino, M.P.; Fischman, R.; Núñez, C.; Szmulewicz, T.; et al. Acute mental discomfort associated with suicide behavior in a clinical sample of patients with affective disorders: Ascertaining critical variables using artificial intelligence tools. Front. Psychiatry 2017, 8, 7. [Google Scholar] [CrossRef]

- Barros, J.; Morales, S.; Echávarri, O.; García, A.; Ortega, J.; Asahi, T.; Moya, C.; Fischman, R.; Maino, M.P.; Núnez, C. Suicide detection in Chile: Proposing a predictive model for suicide risk in a clinical sample of patients with mood disorders. Braz. J. Psychiatry 2017, 39, 1–11. [Google Scholar] [CrossRef]

- Kuroki, Y. Risk factors for suicidal behaviors among Filipino Americans: A data mining approach. Am. J. Orthopsychiatry 2015, 85, 34. [Google Scholar] [CrossRef] [PubMed]

- Anderson, H.D.; Pace, W.D.; Brandt, E.; Nielsen, R.D.; Allen, R.R.; Libby, A.M.; West, D.R.; Valuck, R.J. Monitoring suicidal patients in primary care using electronic health records. J. Am. Board Fam. Med. 2015, 28, 65–71. [Google Scholar] [CrossRef] [PubMed]

- Levey, D.F.; Niculescu, E.M.; Le-Niculescu, H.; Dainton, H.L.; Phalen, P.L.; Ladd, T.B.; Weber, H.; Belanger, E.; Graham, D.L.; Khan, F.N.; et al. Towards understanding and predicting suicidality in women: Biomarkers and clinical risk assessment. Mol. Psychiatry 2016, 21, 768–785. [Google Scholar] [CrossRef]

- Colic, S.; Richardson, D.J.; Reilly, J.P.; Hasey, G. Using machine learning algorithms to enhance the management of suicide ideation. Conf. Proc. IEEE Eng. Med. Biol. Soc. 2018, 4936–4939. [Google Scholar]

- Aladağ, A.E.; Muderrisoglu, S.; Akbas, N.B.; Zahmacioglu, O.; Bingol, H.O. Detecting suicidal ideation on forums: Proof-of-concept study. J. Med. Internet Res. 2018, 20, e215. [Google Scholar] [CrossRef]

- Choi, S.B.; Wanhyung, L.; Jin-Ha, Y.; Wan, J.H.; Kim, D.W. Ten year prediction of suicide death using Cox regression and ML in a nationwide retrospective cohort study in South Korea. S. Korea J. Affect. Disord. 2018, 231, 8–14. [Google Scholar] [CrossRef]

- Downs, J.; Velupillai, S.; George, G.; Holden, R.; Kikoler, M.; Dean, H.; Fernandes, A.; Dutta, R. Detection of suicidality in adolescents with autism spectrum disorders: Developing a natural language processing approach for use in electronic health records. AMIA Annu. Symp. Proc. 2018, 641–649. [Google Scholar]

- Fahey, R.A.; Matsubayashi, T.; Ueda, M. Tracking the Werther effect on social media: Emotional responses to prominent suicide deaths on Twitter and subsequent increases in suicide. Soc. Sci. Med. 2018, 219, 19–29. [Google Scholar] [CrossRef] [PubMed]

- Zhong, Q.Y.; Karlson, E.W.; Gelaye, B.; Finan, S.; Avillach, P.; Smoller, J.W.; Cai, T.; Williams, M.A. Screening pregnant women for suicidal behavior in electronic medical records: Diagnostic codes vs. clinical notes processed by natural language processing. BMC Med. Inform. Decis. Mak. 2018, 18, 30. [Google Scholar] [CrossRef] [PubMed]

- Fernandes, A.C.; Dutta, R.; Velupillai, S.; Sanyal, J.; Stewart, R.; Chandran, D. Identifying suicide ideation and suicidal attempts in a psychiatric clinical research database using natural language processing. Sci. Rep. 2018, 8, 7426. [Google Scholar] [CrossRef]

- Jordan, P.; Sheddan-Mara, M.; Lowe, B. Predicting suicidal ideation in primary care: An approach to identity easily accessible key variables. Gen. Hosp. Psych. 2018, 51, 106–111. [Google Scholar] [CrossRef]

- Carson, N.J.; Mullin, B.; Sanchez, M.J.; Lu, F.; Yang, K.; Menezes, M. Identification of suicidal behavior among psychiatrically hospitalized adolescents using natural language processing and machine learning of electronic health records. PLoS ONE 2019, 14, e0211116. [Google Scholar] [CrossRef]

- McCoy, T.H.; Pellegrini, A.M.; Perlis, R.H. Research Domain Criteria scores estimated through natural language processing are associated with risk for suicide and accidental death. Depress. Anxiety 2019, 36, 392–399. [Google Scholar] [CrossRef]

- Connolly, B.; Cohen, K.B.; Bayram, U.; Pestian, J. A nonparametric Bayesian method of translating machine learning scores to probabilities in clinical decision support. BMC Bioinform. 2017, 18, 361. [Google Scholar] [CrossRef][Green Version]

- Modai, I.; Ritsner, M.; Kurs, R.; Shalom, M.; Ponizovsky, A. Validation of the Computerized Suicide Risk Scale - A backpropagation neural network instrument (CSRS-BP). Euro. Psychiatry 2002, 17, 75–81. [Google Scholar] [CrossRef]

- Rosellini, A.J.; Stein, M.B.; Benedek, D.M.; Bliese, P.D.; Chiu, W.T.; Hwang, I.; Monahan, J.; Nock, M.K.; Petukhova, M.V.; Sampson, N.A.; et al. Using self-report surveys at the beginning of service to develop multi-outcome risk models for new soldiers in the U.S. Army. Psychol. Med. 2017, 47, 2275–2287. [Google Scholar] [CrossRef]

- Liakata, M.; Kim, J.H.; Saha, S.; Hastings, J.; Rebholz-Schuhmann, D. Three hybrid classifiers for the detection of emotions in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8967. [Google Scholar] [CrossRef]

- Nikfarjam, A.; Emadzadeh, E.; Gonzalez, G. A hybrid system for emotion extraction from suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8981. [Google Scholar] [CrossRef] [PubMed]

- Yeh, E.; Jarrold, W.; Jordan, J. Leveraging psycholinguistic resources and emotional sequence models for suicide note emotion annotation. Biomed. Inform. Insights 2012, 5, BII-S8979. [Google Scholar] [CrossRef] [PubMed]

- Cherry, C.; Mohammad, S.M.; De Bruijn, B. Binary classifiers and latent sequence models for emotion detection in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8933. [Google Scholar] [CrossRef] [PubMed]

- Wang, W.; Chen, L.; Tan, M.; Wang, S.; Sheth, A.P. Discovering fine-grained sentiment in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8963.

- Desmet, B.; Hoste, V. Combining lexico-semantic features for emotion classification in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8960.

- Kovačević, A.; Dehghan, A.; Keane, J.A.; Nenadic, G. Topic categorisation of statements in suicide notes with integrated rules and machine learning. Biomed. Inform. Insights 2012, 5, BII-S8978.

- Pak, A.; Bernhard, D.; Paroubek, P.; Grouin, C. A combined approach to emotion detection in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8969.

- Spasić, I.; Burnap, P.; Greenwood, M.; Arribas-Ayllon, M. A naïve Bayes approach to classifying topics in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8945.

- McCarthy, J.A.; Finch, D.K.; Jarman, J. Using ensemble models to classify the sentiment expressed in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8931.

- Wicentowski, R.; Sydes, M.R. Emotion detection in suicide notes using maximum entropy classification. Biomed. Inform. Insights 2012, 5, BII-S8972.

- Sohn, S.; Torii, M.; Li, D.; Wagholikar, K.; Wu, S.; Liu, H. A hybrid approach to sentiment sentence classification in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8961.

- Yang, H.; Willis, A.; De Roeck, A.; Nuseibeh, B. A hybrid model for automatic emotion recognition in suicide notes. Biomed. Inform. Insights 2012, 5, BII-S8948.

- The White House Office of Science and Technology Policy, The National Institutes of Health, The U.S. Department of Veterans Affairs. Open Data and Innovation for Suicide Prevention: #MentalHealthHackathon; The White House Office of Science and Technology Policy, The National Institutes of Health, The U.S. Department of Veterans Affairs: Boston, MA, USA; Chicago, IL, USA; San Francisco, CA, USA; New York, NY, USA; Washington, DC, USA, 12 December 2015.

- Chung, D.T.; Ryan, C.J.; Hadzi-Pavlovic, D.; Singh, S.P.; Stanton, C.; Large, M.M. Suicide rates after discharge from psychiatric facilities: A systematic review and meta-analysis. JAMA Psychiatry 2017, 74, 694–702.

- Hom, M.A.; Joiner, T.E.; Bernert, R.A. Limitations of a single-item assessment of suicide attempt history: Implications for standardized suicide risk assessment. Psychol. Assess. 2016, 28, 1026–1030.

- Millner, A.J.; Lee, M.D.; Nock, M.K. Single-item measurement of suicidal behaviors: Validity and consequences of misclassification. PLoS ONE 2015, 10, e0141606.

- Carter, G.L.; Clover, K.; Whyte, I.M.; Dawson, A.H.; D’Este, C. Postcards from the EDge: 5-year outcomes of a randomised controlled trial for hospital-treated self-poisoning. Br. J. Psychiatry 2013, 202, 372–380.

- Miller, I.W.; Camargo, C.A., Jr.; Arias, S.A.; Sullivan, A.F.; Allen, M.H.; Goldstein, A.B.; Manton, A.P.; Espinola, J.A.; Jones, R.; Hasegawa, K.; et al. Suicide prevention in an emergency department population: The ED-SAFE study. JAMA Psychiatry 2017, 74, 563–570.

- Denchev, P.; Pearson, J.L.; Allen, M.H.; Claassen, C.A.; Currier, G.W.; Zatzick, D.F.; Schoenbaum, M.; Claassen, C. Modeling the cost-effectiveness of interventions to reduce suicide risk among hospital emergency department patients. Psychiatr. Serv. 2018, 69, 23–31.

- Brent, D.A.; McMakin, D.L.; Kennard, B.D.; Goldstein, T.R.; Mayes, T.L.; Douaihy, A.B. Protecting adolescents from self-harm: A critical review of intervention studies. J. Am. Acad. Child Adolesc. Psychiatry 2013, 52, 1260–1271.

- Mann, J.J.; Apter, A.; Bertolote, J.; Beautrais, A.; Currier, D.; Haas, A.; Hegerl, U.; Lönnqvist, J.; Malone, K.M.; Marusic, A.; et al. Suicide prevention strategies: A systematic review. JAMA 2005, 294, 2064–2074.

- Hersh, W.R.; Weiner, M.G.; Embi, P.J.; Logan, J.R.; Payne, P.R.; Bernstam, E.V.; Lehmann, H.P.; Hripcsak, G.; Hartzog, T.H.; Cimino, J.J.; et al. Caveats for the use of operational electronic health record data in comparative effectiveness research. Med. Care 2013, 51, S30–S37.

- Iezzoni, L.I. Statistically derived predictive models: Caveat emptor. J. Gen. Intern. Med. 1999, 14, 388–389.

- Fox, K.R.; Huang, X.; Linthicum, K.P.; Wang, S.B.; Franklin, J.C.; Ribeiro, J.D. Model complexity improves the prediction of nonsuicidal self-injury. J. Consult. Clin. Psychol. 2019, 87, 684–692.

- Siddaway, A.P.; Quinlivan, L.; Kapur, N.; O’Connor, R.C.; De Beurs, D. Cautions, concerns, and future directions for using machine learning in relation to mental health problems and clinical and forensic risks: A brief comment on “Model complexity improves the prediction of nonsuicidal self-injury” (Fox et al., 2019). J. Consult. Clin. Psychol. 2020, 88, 384–387.

| Author | Year | Journal | Quality Rating | Clinical Sample | Outcome | Biomarker | NLP a | Classification | Specificity | Sensitivity | Accuracy | AUC b | N | ||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Kessler et al. [33] | 2017 | Int. J. Methods Psychiatr. Res. | 2 | x | 1 | x | 0.28 | 6360 | |||||||

| McCoy et al. [27] | 2016 | JAMA Psychiatry | 3 | x | 1 | x | x | 458,053 | |||||||

| Kessler et al. [34] | 2017 | Mol. Psychiatry | 2 | x | 1 | x | 0.95 | 0.46 | 0.70 | 0.70 | 975,057 | ||||

| Kessler et al. [15] | 2015 | JAMA Psychiatry | 3 | x | 1 | 0.89 | 53,769 | ||||||||

| Poulin et al. [35] | 2014 | PLoS One | 4 | x | 1 | x | x | x | 0.65 | 210 | |||||

| Pestian et al. [36] | 2008 | AMIA Annu. Symp. Proc. | 3 | 1 | x | x | 0.74 | 66 | |||||||

| Pamer et al. [37] | 2008 | AMIA Annu. Symp. Proc. | 3 | 1 | x | 1204 | |||||||||

| Haerian et al. [38] | 2012 | AMIA Annu. Symp. Proc. | 3 | x | 1 | x | x | 280 | |||||||

| Ilgen et al. [39] | 2009 | J Clin. Psychiatry | 3 | x | 1 | 887,859 | |||||||||

| Adamou et al. [40] | 2019 | Crisis | 3 | x | 1 | x | x | 0.70 | 130 | ||||||

| Rosellini [41] | 2018 | Depress. Anxiety | 3 | x | 1 | x | 0.86 | 9488 | |||||||

| De Avila Berni [42] | 2018 | PLoS One | 5 | 1 | x | 0.91 | 0.69 | 0.80 | |||||||

| Metzger et al. [43] | 2017 | Int. J. Methods Psychiatr. Res. | 3 | 2 | x | x | 444 | ||||||||

| Passos et al. [44] | 2016 | J. Affect. Disord. | 4 | x | 2 | 0.71 | 0.72 | 0.77 | 144 | ||||||

| Kessler et al. [45] | 2016 | Mol. Psychiatry | 2 | 2 | x | 0.70 | 0.76 | 1056 | |||||||

| Modai et al. [46] | 2004 | J. Nerv. Ment. Dis. | 2 | x | 2 | 0.85 | 0.94 | 987 | |||||||

| Modai et al. [47] | 2002 | JMIR Med. Inform. | 3 | x | 2 | 0.85 | 1.00 | 0.82 | 197 | ||||||

| Modai et al. [48] | 1999 | Med. Inform. Internet Med. | 3 | 2 | 0.94 | 0.94 | 0.94 | 198 | |||||||

| Modai et al. [49] | 2002 | Crisis | 2 | x | 2 | 250 | |||||||||

| Modai et al. [50] | 1998 | Med. Inform. | 4 | x | 2 | x | 0.97 | 0.83 | 161 | ||||||

| Hettige et al. [51] | 2017 | Gen. Hosp. Psychiatry | 3 | x | 2 | x | x | 0.8 | 0.65 | 0.67 | 0.71 | 345 | |||

| Walsh et al. [52] | 2017 | Clin. Psychol. Sci. | 3 | x | 2 | x | 0.96 | 0.84 | 5167 | ||||||

| Venek et al. [53] | 2017 | IEEE Trans. Affect. Comput. | 3 | x | 2 | x | 0.90 | 60 | |||||||

| Baca-Garcia et al. [54] | 2007 | Prog. Neuropsychopharmacol. Biol. Psychiatry | 4 | x | 2 | x | x | 0.99 | 0.99 | 0.97 | 0.99 | 539 | |||

| Tiet et al. [55] | 2006 | Alcohol Clin. Exp. Res. | 3 | x | 2 | x | x | x | 0.87 | 0.89 | 0.88 | 34,251 | |||

| Baca-Garcia et al. [56] | 2010 | Am. J. Med. Genet. B Genet. | 3 | x | 2 | x | x | 0.82 | 0.50 | 0.67 | 0.66 | 277 | |||

| Lopez-Castroman et al. [57] | 2011 | J. Psychiatry Res. | 4 | x | 2 | x | x | 0.97 | 0.76 | 0.71 | 1349 | ||||

| Modai et al. [58] | 2004 | Med. Inform. Internet Med. | 3 | x | 2 | 0.70 | 0.83 | 0.77 | 612 | ||||||

| Mann et al. [59] | 2008 | J. Clin. Psychiatry | 3 | x | 2 | x | 0.92 | 0.89 | 0.80 | 408 | |||||

| Bae et al. [60] | 2015 | Neuropsychiatr. Dis. Treat. | 3 | 2 | x | 2754 | |||||||||

| Choo et al. [61] | 2014 | Asian J. Psychiatr. | 3 | x | 2 | 0.90 | 418 | ||||||||

| Oh et al. [62] | 2017 | Front. Psychol. | 3 | x | 2 | x | 0.99 | 0.78 | 0.97 | 573 | |||||

| Benton et al. [63] | 2017 | Proc. 15th Conf. EACL | 3 | x | 2 | x | 9611 | ||||||||

| Ruderfer et al. [64] | 2019 | Mol. Psychiatry | 3 | 2 | x | 0.82 | 0.92 | 0.94 | 512,639 | ||||||

| Lyu and Zhang [65] | 2019 | J. Affect. Disord. | 3 | x | 2 | 0.94 | 0.68 | 0.85 | 1318 | ||||||

| Coppersmith et al. [66] | 2018 | Biomed. Inform. Insights | 3 | 2,6 | x | x | 0.94 | 418 | |||||||

| Dargel et al. [67] | 2018 | Acta Psychiatr. Scand. | 3 | x | 2 | x | 0.84 | 635 | |||||||

| Jordan et al. [68] | 2018 | Psychiatry Res. | 3 | x | 2 | x | 0.69 | 0.79 | 0.72 | 218 | |||||

| Setoyama et al. [69] | 2016 | PLoS One | 2 | x | 3 | x | x | 0.70 | 90 | ||||||

| Pestian et al. [70] | 2017 | Suicide Life Threat. Behav. | 2 | x | 3 | x | x | 0.93 | 0.85 | 379 | |||||

| Cook et al. [71] | 2016 | Comput. Math Meth. Med. | 2 | x | 3 | x | x | 0.57 | 0.56 | 0.85 | 0.61 | 1453 | |||

| Pestian et al. [26] | 2016 | Suicide Life Threat. Behav. | 2 | x | 3 | x | x | 0.96 | 0.97 | 60 | |||||

| Gradus et al. [72] | 2017 | J. Trauma Stress | 4 | x | 3 | x | 0.92 | 2240 | |||||||

| Birjali et al. [73] | 2017 | Procedia Comput. Sci. | 3 | 3 | x | x | |||||||||

| Just et al. [74] | 2017 | Nat. Hum. Behav. | 3 | x | 3 | x | x | 0.94 | 79 | ||||||

| Ryu et al. [75] | 2018 | Psychiatric Invest. | 3 | 3 | x | 0.81 | 0.84 | 0.80 | 11,628 | ||||||

| Desjardins et al. [76] | 2016 | J. Clin. Psychiatry | 4 | x | 4 | x | 0.93 | 879 | |||||||

| Tzeng [77] | 2006 | Worldview Evid. Base. Nurs. | 2 | x | 4 | x | 63 | ||||||||

| Baca-García et al. [78] | 2006 | J. Clin. Psychiatry | 3 | x | 4 | x | 1.00 | 0.99 | 509 | ||||||

| Quan et al. [79] | 2014 | PLoS One | 2 | 4 | x | ||||||||||

| Litvinova et al. [80] | 2017 | Comput. y Sistemas | 3 | x | 4 | x | x | 0.72 | 1000 | ||||||

| Zhang et al. [81] | 2019 | Health Inform. J. | 3 | x | 4 | x | x | 409 | |||||||

| McKernan et al. [82] | 2019 | Arthritis Care Res. | 3 | x | 2,3 | x | 1.00 | 1.00 | 0.82 | 8879 | |||||

| DelPozo-Banos et al. [83] | 2018 | JMIR Ment. Health | 3 | x | 1 | x | 0.85 | 0.65 | 0.80 | 2604 | |||||

| Burke et al. [84] | 2018 | Psychiatry Res. | 3 | 4 | x | 0.89 | 359 | ||||||||

| Cheng et al. [85] | 2017 | J. Med. Internet Res. | 4 | 5 | x | x | 0.61 | 974 | |||||||

| Braithwaite et al. [86] | 2016 | JMIR Ment. Health | 4 | 5 | x | 0.97 | 0.53 | 0.91 | 135 | ||||||

| Guan et al. [87] | 2015 | JMIR Ment. Health | 4 | 5 | x | 909 | |||||||||

| Woo et al. [88] | 2015 | Int. J. Environ. Res. Public Health | 4 | 5 | x | ||||||||||

| O’Dea et al. [89] | 2015 | Internet Interv. | 3 | 5 | x | 0.76 | 14,701 | ||||||||

| Nguyen et al. [90] | 2017 | Multimed. Tools Appl. | 3 | x | 5 | x | 0.88 | ||||||||

| Burnap et al. [91] | 2017 | Online Soc. Netw. Media | 3 | 5 | x | x | 0.85 | ||||||||

| Vioules et al. [92] | 2018 | IBM J. Res. Dev. | 3 | 5 | x | x | 120 | ||||||||

| Zalar et al. [93] | 2018 | Psychiatr. Danub. | 3 | 1, 2 | x | 0.91 | 78,625 | ||||||||

| Tran et al. [94] | 2015 | J. Biomed. Inform. | 3 | x | 1,2 | x | 7578 | ||||||||

| Leiva-Murillo et al. [95] | 2013 | Comput. Math. Methods Med. | 3 | x | 1,2 | x | 8699 | ||||||||

| Tran et al. [96] | 2014 | BMC Psychiatry | 3 | x | 1,2 | 0.97 | 0.79 | 7399 | |||||||

| Bernecker et al. [97] | 2019 | Behav. Res. Ther. | 3 | x | 2, 3 | x | 0.83 | 27,501 | |||||||

| Zhong et al. [98] | 2019 | Euro. J. Epidemiol. | 3 | 2, 3 | x | x | 0.96 | 0.34 | 0.83 | 275,843 | |||||

| Morales et al. [99] | 2017 | Front. Psychiatry | 4 | x | 2,3 | x | 0.79 | 0.71 | 707 | ||||||

| Barros et al. [100] | 2017 | Braz. J. Psychiatry | 4 | x | 2,3 | x | 0.79 | 0.77 | 0.78 | 707 | |||||

| Kuroki [101] | 2015 | Am. J. Orthopsychiatry | 3 | 2,3 | x | x | 624 | ||||||||

| Anderson et al. [102] | 2015 | J. Am. Board Fam. Med. | 3 | x | 2,3,4 | x | x | 0.96 | 0.94 | 0.95 | 15,761 | ||||

| Levey et al. [103] | 2016 | Mol. Psychiatry | 3 | x | 1,3,4 | x | 0.94 | 114 | |||||||

| Colic et al. [104] | 2018 | Conf. Proc. IEEE Eng. Med. Biol. Soc. | 3 | x | 3 | 0.84 | 738 | ||||||||

| Aladag et al. [105] | 2018 | J. Med. Internet Res. | 3 | 5 | x | x | 0.92 | 785 | |||||||

| Choi et al. [106] | 2018 | S. Korean J. Affect. Disord. | 3 | 1 | x | 0.72 | 819,951 | ||||||||

| Downs et al. [107] | 2018 | AMIA Annu. Symp. Proc. | 3 | x | 4 | x | x | 1906 | |||||||

| Fahey [108] | 2018 | Soc. Sci. Med. | 3 | 5 | x | x | 0.80 | 974,891 | |||||||

| Zhong et al. [109] | 2018 | BMC Med. Inform. Decis. Mak. | 3 | x | 2-4 | x | x | 275,843 | |||||||

| Fernandes et al. [110] | 2018 | Sci. Rep. | 3 | 2,3 | x | x | 0.87 | ||||||||

| Jordan et al. [111] | 2018 | Gen. Hosp. Psychiatry | 3 | x | 3 | x | 0.83 | 0.87 | 6805 | ||||||

| Carson et al. [112] | 2019 | PLoS One | 3 | x | 2 | x | x | 0.22 | 0.83 | 0.47 | 0.68 | 73 | |||

| McCoy et al. [113] | 2018 | Depress. Anxiety | 3 | 1 | x | 444,317 | |||||||||

| Connolly et al. [114] | 2017 | BMC Bioinform. | 3 | x | 3 | x | 314 | ||||||||

| Modai et al. [115] | 2002 | Euro. Psychiatry | 4 | x | 2 | 0.73 | 0.65 | 250 | |||||||

| Rosellini et al. [116] | 2017 | Psychol. Med. | 3 | 2 | x | 0.74 | 21,832 | ||||||||

| Citation | Journal | Outcome | Precision | Quality Rating |
|---|---|---|---|---|
| Liakata et al. 2012 [117] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.60 | 3 |
| Nikfarjam et al. 2012 [118] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.60 | 3 |
| Yeh et al. 2012 [119] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.77 | 3 |
| Cherry et al. 2012 [120] | Biomed Inform Insights | Death/NLP of Suicide Notes | 1.00 | 3 |
| Wang et al. 2012 [121] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.67 | 3 |
| Desmet et al. 2012 [122] | Biomed Inform Insights | Death/NLP of Suicide Notes | NR | 3 |
| Kovačević et al. 2012 [123] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.67 | 3 |
| Pak et al. 2012 [124] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.62 | 3 |
| Spasić et al. 2012 [125] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.55 | 3 |
| McCarthy et al. 2012 [126] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.57 | 3 |
| Wicentowski et al. 2012 [127] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.69 | 3 |
| Sohn et al. 2012 [128] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.61 | 3 |
| Yang et al. 2012 [129] | Biomed Inform Insights | Death/NLP of Suicide Notes | 0.58 | 3 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Bernert, R.A.; Hilberg, A.M.; Melia, R.; Kim, J.P.; Shah, N.H.; Abnousi, F. Artificial Intelligence and Suicide Prevention: A Systematic Review of Machine Learning Investigations. Int. J. Environ. Res. Public Health 2020, 17, 5929. https://doi.org/10.3390/ijerph17165929
