Effectiveness of Using Voice Assistants in Learning: A Study at the Time of COVID-19

Abstract

1. Introduction

1.1. Self-Regulation Learning and Advanced Learning Technologies

1.2. Advanced Learning Technologies and Intelligent Personal Assistant

1.3. The Use of Voice Assistants: Applicability in Prevention of Learning Difficulties

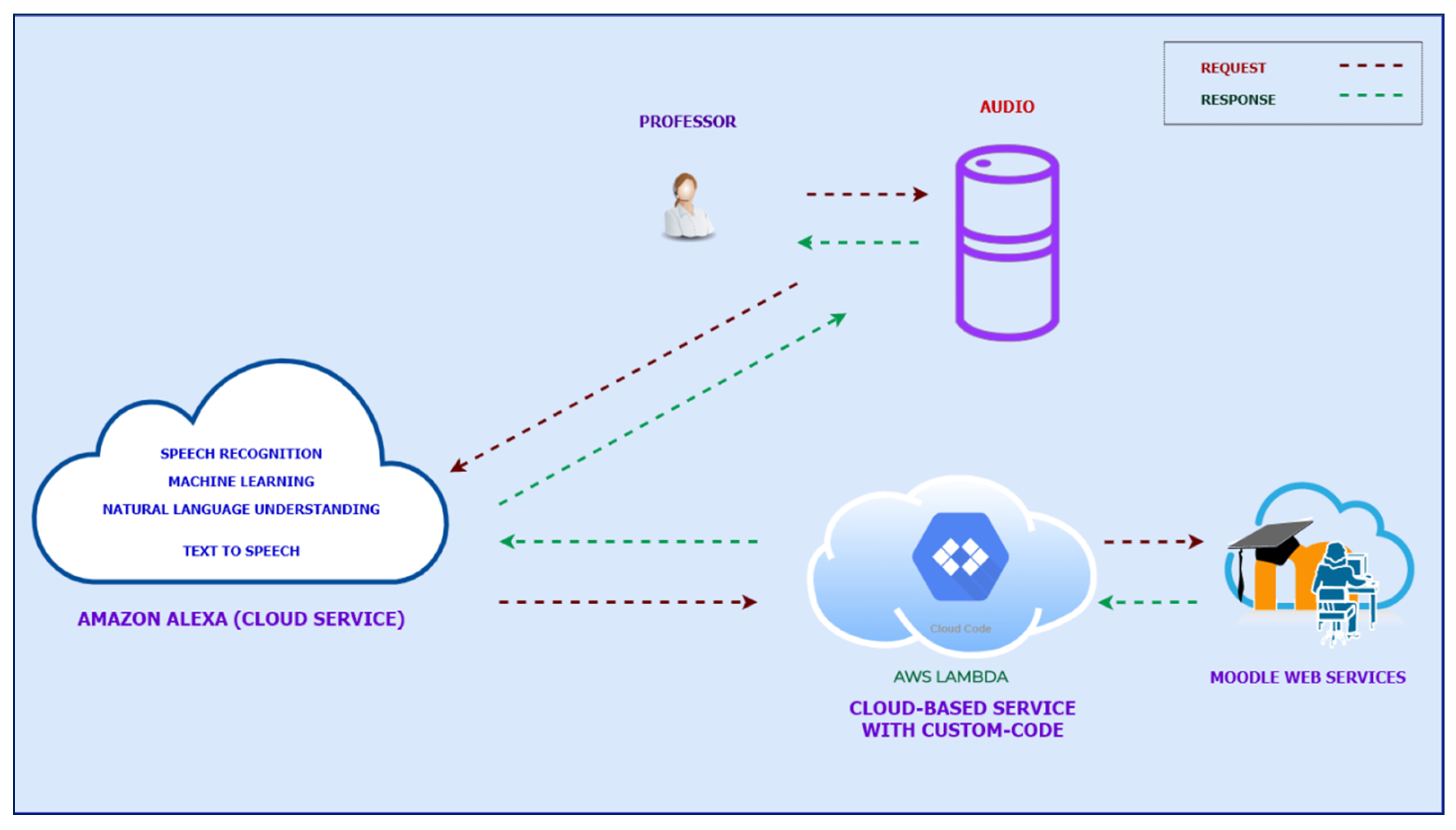

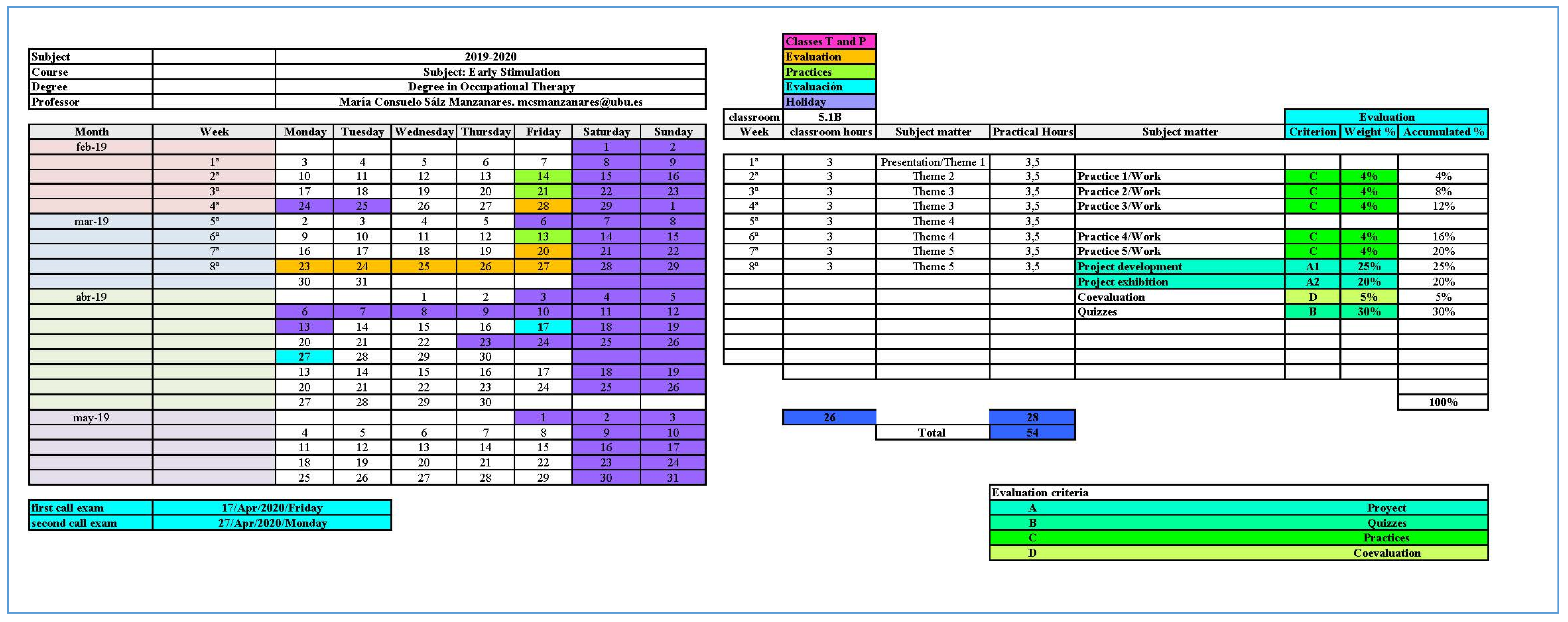

2. Materials and Methods

2.1. Participants

2.2. Instruments

2.3. Procedure

2.4. Design and Data Analysis

2.5. Ethical Approval

3. Results

3.1. Previous Statistical Analyses

3.2. Research Question 1

3.3. Research Question 2

3.4. Research Question 3

3.5. Research Question 4

4. Discussion

5. Conclusions

6. Patents

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Scale of Metacognitive Strategies ACRAr [58] | Mann-Whitney U | p |
|---|---|---|
| 1. I am aware of strategies (exploration, underlining, headings) that help me concentrate. | 85.5 | 0.62 |
| 2. I am aware of learning strategies that help me to memorize (repetition, mnemonic rules, etc.). | 61 | 0.10 |
| 3. I am aware of the strategies that help me to elaborate the information (drawings or graphs, mental images, etc.). | 75.5 | 0.35 |
| 4. I am aware of the importance of organizing information by making outlines, sequences, diagrams, maps, etc. | 95.5 | 0.98 |
| 5. When I need to remember information for an exam, work, etc., I use mnemonic strategies, drawings, concept maps, etc. | 92 | 0.85 |
| 6. I am aware that in order to remember information on an exam, it is useful for me to make mental connections with this information. | 86 | 0.63 |
| 7. When I prepare for an exam, I use strategies to order the information (scripts, diagrams...). | 70 | 0.23 |
| 8. I plan the study by selecting the strategies that I think will be most effective. | 84.5 | 0.59 |
| 9. Before I answer the questions on a test, I use strategies that help me remember the information. | 77 | 0.36 |
| 10. Before I start studying, I distribute the time between all the subjects I have to learn. | 92.5 | 0.87 |
| 11. I take note of the tasks I have to perform in each subject. | 94 | 0.93 |
| 12. When the exams come up, I make a work plan establishing the time to be devoted to each topic. | 87.5 | 0.70 |
| 13. I dedicate a time to each part of the material to study that is proportional to its importance or difficulty. | 68 | 0.19 |
| 14. When I study, I check the strategies that work best for me. | 93 | 0.89 |
| 15. At the end of an exam, I check the answers recalling the information studied. | 87 | 0.68 |
| 16. If the strategies I use to “learn” are not effective, I look for others. | 96 | 1.00 |
| 17. I keep the strategies working for me to remember information. | 88 | 0.70 |
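
The U values in the table above come from Mann-Whitney tests comparing two groups on each item. As a rough illustration of how such a statistic is obtained, the sketch below ranks the pooled responses (ties share the mean rank) and converts the rank sum of one group into U. The function names and the Likert-style responses are hypothetical; the study's raw data are not reproduced here, and reporting the smaller of the two U values is an assumed convention, not something the table states.

```python
from typing import Sequence


def average_ranks(values: Sequence[float]) -> list[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the window over every value tied with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # positions are 0-based, ranks 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def mann_whitney_u(group_a: Sequence[float], group_b: Sequence[float]) -> float:
    """Return the smaller of the two Mann-Whitney U values (assumed convention)."""
    n_a, n_b = len(group_a), len(group_b)
    ranks = average_ranks(list(group_a) + list(group_b))
    rank_sum_a = sum(ranks[:n_a])
    u_a = rank_sum_a - n_a * (n_a + 1) / 2
    u_b = n_a * n_b - u_a
    return min(u_a, u_b)


# Hypothetical 1-4 Likert responses for one item in two groups.
group_1 = [3, 4, 4, 2]
group_2 = [3, 3, 4, 1]
print(mann_whitney_u(group_1, group_2))  # → 6.0
```

A U close to half the product of the two group sizes indicates that the rank distributions of the groups overlap almost completely, which is consistent with the large p values reported for most items.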

| Scale of Metacognitive Strategies ACRAr [58] | Range | Min | Max | M | SD | Skewness (S) | Skewness (N) | Kurtosis (S) | Kurtosis (N) |
|---|---|---|---|---|---|---|---|---|---|
| 1. I am aware of strategies (exploration, underlining, headings) that help me concentrate. | 2.00 | 2.00 | 4.00 | 3.17 | 0.63 | −0.17 | 0.41 | −0.32 | 0.81 |
| 2. I am aware of learning strategies that help me to memorize (repetition, mnemonic rules, etc.). | 2.00 | 2.00 | 4.00 | 3.30 | 0.63 | −0.40 | 0.41 | −0.44 | 0.81 |
| 3. I am aware of the strategies that help me to elaborate the information (drawings or graphs, mental images, etc.). | 3.00 | 1.00 | 4.00 | 3.17 | 0.85 | −0.70 | 0.41 | −0.29 | 0.81 |
| 4. I am aware of the importance of organizing information by making outlines, sequences, diagrams, maps, etc. | 2.00 | 2.00 | 4.00 | 3.43 | 0.66 | −0.83 | 0.41 | −0.22 | 0.81 |
| 5. When I need to remember information for an exam, work, etc., I use mnemonic strategies, drawings, concept maps, etc. | 3.00 | 1.00 | 4.00 | 3.30 | 0.77 | −1.08 | 0.41 | 1.18 | 0.81 |
| 6. I am aware that in order to remember information on an exam, it is useful for me to make mental connections with this information. | 3.00 | 1.00 | 4.00 | 3.40 | 0.65 | −1.46 | 0.41 | 4.33 | 0.81 |
| 7. When I prepare for an exam, I use strategies to order the information (scripts, diagrams...). | 3.00 | 1.00 | 4.00 | 3.20 | 0.86 | −1.09 | 0.41 | 0.91 | 0.81 |
| 8. I plan the study by selecting the strategies that I think will be most effective. | 2.00 | 2.00 | 4.00 | 3.00 | 0.76 | 0.00 | 0.41 | −1.22 | 0.81 |
| 9. Before I answer the questions on a test, I use strategies that help me remember the information. | 3.00 | 1.00 | 4.00 | 3.13 | 0.71 | −0.81 | 0.41 | 1.52 | 0.81 |
| 10. Before I start studying, I distribute the time between all the subjects I have to learn. | 3.00 | 1.00 | 4.00 | 3.13 | 0.83 | −0.64 | 0.41 | −0.26 | 0.81 |
| 11. I take note of the tasks I have to perform in each subject. | 2.00 | 2.00 | 4.00 | 3.10 | 0.73 | −0.18 | 0.41 | −1.05 | 0.81 |
| 12. When the exams come up, I make a work plan establishing the time to be devoted to each topic. | 3.00 | 1.00 | 4.00 | 3.07 | 0.88 | −0.75 | 0.41 | 0.08 | 0.81 |
| 13. I dedicate a time to each part of the material to study that is proportional to its importance or difficulty. | 3.00 | 1.00 | 4.00 | 2.93 | 0.84 | −0.89 | 0.41 | 0.86 | 0.81 |
| 14. When I study, I check the strategies that work best for me. | 3.00 | 1.00 | 4.00 | 3.29 | 0.67 | −1.22 | 0.41 | 3.29 | 0.81 |
| 15. At the end of an exam, I check the answers recalling the information studied. | 3.00 | 1.00 | 4.00 | 3.21 | 0.89 | −1.07 | 0.41 | 0.51 | 0.81 |
| 16. If the strategies I use to “learn” are not effective, I look for others. | 3.00 | 1.00 | 4.00 | 3.37 | 0.73 | −1.40 | 0.41 | 2.50 | 0.81 |
| 17. I keep the strategies working for me to remember information. | 2.00 | 2.00 | 4.00 | 3.50 | 0.42 | −1.38 | 0.41 | 3.99 | 0.81 |

| Item | G1 (N = 57), M (SD) | G2 (N = 40), M (SD) | F(1,97) | p | η² |
|---|---|---|---|---|---|
| 1. When you started the course your previous knowledge was at one level. | 3.86 (0.58) | 4.05 (0.22) | 3.89 | 0.05 * | 0.04 |
| 2. At the end of the course your knowledge is at one level. | 4.00 (0.50) | 4.20 (0.41) | 4.38 | 0.04 * | 0.04 |
| 3. In your opinion, the objectives of the course have been clear. | 4.00 (0.53) | 4.23 (0.48) | 4.53 | 0.04 * | 0.05 |
| 4. In your opinion, the concepts worked on in the course have been clear. | 4.93 (0.32) | 4.85 (0.48) | 0.96 | 0.33 | 0.01 |
| 5. In your opinion, the practices have helped to understand the theoretical concepts. | 4.79 (0.73) | 4.93 (0.30) | 1.27 | 0.26 | 0.01 |
| 6. Feedback from the teacher has been quick and accurate. | 4.88 (0.47) | 4.93 (0.30) | 0.34 | 0.56 | 0.00 |
| 7. In your opinion, group work has been facilitated. | 3.95 (0.51) | 4.90 (0.40) | 109.88 | 0.00 * | 0.54 |
| 8. In your opinion, all the contents explained in the teaching guide have been addressed. | 4.00 (0.40) | 4.15 (0.50) | 2.94 | 0.09 | 0.03 |
| 9. In your opinion, the skills you have developed in this subject can increase your chances of finding work. | 4.89 (0.40) | 4.68 (0.50) | 5.35 | 0.02 * | 0.05 |
| 10. The expectations you had when you enrolled in this course have been met. | 4.88 (0.50) | 4.88 (0.40) | 0.00 | 0.98 | 0.00 |
| 11. In your opinion, the procedure and the evaluation criteria were clearly explained | 4.88 (0.43) | 4.83 (0.44) | 0.34 | 0.56 | 0.00 |
| 12. In your opinion, the various assessment tests (practical, project-based learning) facilitated learning | 4.89 (0.45) | 4.83 (0.45) | 0.57 | 0.45 | 0.01 |
| 13. In your opinion, the use of UBUVirtual as an online teaching platform has been | 4.77 (0.50) | 4.87 (0.45) | 0.71 | 0.40 | 0.01 |
| 14. In your opinion, the use of questionnaires to evaluate the development of each unit has facilitated the understanding of it. | 3.98 (0.44) | 3.80 (0.61) | 2.93 | 0.09 | 0.03 |
| 15. In your opinion, the difficulty of the subject is at one level. | 4.79 (0.60) | 4.90 (0.30) | 1.18 | 0.28 | 0.01 |
| 16. Your level of satisfaction with the development of the practical activities has been | 4.72 (0.73) | 4.88 (0.40) | 1.51 | 0.22 | 0.02 |
| 17. Your level of satisfaction with the development of the subject has been. | 4.75 (0.69) | 4.78 (0.53) | 0.03 | 0.87 | 0.00 |
| 18. In your opinion, with respect to the rest of the subjects taken in the degree, you value this subject. | 4.90 (0.37) | 4.90 (0.34) | 0.00 | 0.96 | 0.00 |

| Response | Question 1 (n = 19): F | Question 1: % | Question 2 (n = 24): F | Question 2: % | Question 3 (n = 9): F | Question 3: % | Question 4 (n = 6): F | Question 4: % | Question 1 COVID-19 (n = 8): F | Question 1 COVID-19: % | Question 2 COVID-19 (n = 6): F | Question 2 COVID-19: % | Question 3 COVID-19 (n = 3): F | Question 3 COVID-19: % | Question 4 COVID-19 (n = 2): F | Question 4 COVID-19: % | Question 5 COVID-19 (n = 2): F | Question 5 COVID-19: % |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Group 1: Development has been good | 2 | 10.53 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Further explanations of the content of the practices | 1 | 5.26 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Increase practices | 1 | 5.26 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Many deliveries during state of alarm | 1 | 5.26 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Not | 1 | 5.26 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Suitable | 1 | 5.26 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Difficult | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 2 | 25.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: I don’t think anything | 0 | 0.00 | 0 | 0.00 | 1 | 10.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Increasing the explanations by videoconference | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 16.67 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Nothing | 0 | 0.00 | 9 | 37.50 | 1 | 10.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 33.33 | 1 | 50.00 |
| Group 1: Nothing has gone very well | 0 | 0.00 | 0 | 0.00 | 1 | 10.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: The development has been very good | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 16.67 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Very good | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 16.67 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Nothing has been taught correctly | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 33.33 | 0 | 0.00 | 0 | 0.00 |
| Group 1: Nothing has gone very well | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 33.33 | 0 | 0.00 | 0 | 0.00 |
| Group 2: Increasing practical classes | 0 | 0.00 | 1 | 4.17 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 2: Increasing the number of theoretical class | 1 | 5.26 | 1 | 4.17 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 2: Nothing | 0 | 0.00 | 13 | 54.17 | 7 | 70.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 2 | 66.67 | 1 | 50.00 |
| Group 2: There is no need to change anything | 11 | 57.89 | 0 | 0.00 | 0 | 0.00 | 4 | 66.67 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 2: To practice in real centres | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 16.67 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 2: Very good | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 6 | 75.00 | 4 | 66.67 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 |
| Group 2: Nothing this type of methodology has facilitated the continuation of the course | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 0 | 0.00 | 1 | 33.33 | 0 | 0.00 | 0 | 0.00 |
| Totals | 19 | 100 | 24 | 100 | 10 | 100 | 6 | 100 | 8 | 100 | 6 | 100 | 3 | 100 | 3 | 100 | 2 | 100 |

References

1. Taub, M.; Sawyer, R.; Lester, J.; Azevedo, R. The Impact of Contextualized Emotions on Self-Regulated Learning and Scientific Reasoning during Learning with a Game-Based Learning Environment. Int. J. Artif. Intell. Educ. 2020, 30, 97–120.

2. Sáiz-Manzanares, M.C.; García Osorio, C.I.; Díez-Pastor, J.F.; Martín Antón, L.J. Will personalized e-Learning increase deep learning in higher education? Inf. Discov. Deliv. 2019, 47, 53–63.

3. Zimmerman, B.J.; Schunk, D.H. Self-regulated learning and performance: An introduction and an overview. In Handbook of Self-Regulation of Learning and Performance; Routledge/Taylor & Francis Group: New York, NY, USA, 2011; pp. 1–12.

4. Noroozi, O.; Järvelä, S.; Kirschner, P.A. Multidisciplinary innovations and technologies for facilitation of self-regulated learning. Comput. Hum. Behav. 2019, 100, 295–297.

5. Sáiz-Manzanares, M.C.; García-Osorio, C.I.; Díez-Pastor, J.F. Differential efficacy of the resources used in B-learning environments. Psicothema 2019, 31, 170–178.

6. Hull, D.M.; Lowry, P.B.; Gaskin, J.E.; Mirkovski, K. A storyteller’s guide to problem-based learning for information systems management education. Inf. Syst. J. 2019, 29, 1040–1057.

7. Malmberg, J.; Järvelä, S.; Holappa, J.; Haataja, E.; Huang, X.; Siipo, A. Going beyond what is visible: What multichannel data can reveal about interaction in the context of collaborative learning? Comput. Hum. Behav. 2019, 96, 235–245.

8. Azevedo, R.; Gašević, D. Analyzing Multimodal Multichannel Data about Self-Regulated Learning with Advanced Learning Technologies: Issues and Challenges. Comput. Hum. Behav. 2019, 96, 207–210.

9. Sáiz-Manzanares, M.C.; Rodríguez-Díez, J.J.; Marticorena-Sánchez, R.; Zaparaín-Yáñez, M.J.; Cerezo-Menéndez, R. Lifelong learning from sustainable education: An analysis with eye tracking and data mining techniques. Sustainability 2020, 12, 1970.

10. Evans, T.L. Competencies and pedagogies for sustainability education: A roadmap for sustainability studies program development in colleges and universities. Sustainability 2019, 11, 5526.

11. Fjællingsdal, K.S.; Klöckner, C.A. Gaming Green: The Educational Potential of Eco—A Digital Simulated Ecosystem. Front. Psychol. 2019, 10, 2846.

12. Knutzen, B.; Kennedy, D. The global classroom project: Learning a second language in a virtual environment. Electron. J. E Learn. 2012, 10, 90–106.

13. Wisniewski, B.; Zierer, K.; Hattie, J. The Power of Feedback Revisited: A Meta-Analysis of Educational Feedback Research. Front. Psychol. 2020, 10, 3087.

14. Shyr, W.J.; Chen, C.H. Designing a technology-enhanced flipped learning system to facilitate students’ self-regulation and performance. J. Comput. Assist. Learn. 2018, 34, 53–62.

15. Laeeq, K.; Memon, Z.A. Scavenge: An intelligent multi-agent based voice-enabled virtual assistant for LMS. Interact. Learn. Environ. 2019, 1–19.

16. De Barcelos Silva, A.; Gomes, M.M.; Da Costa, C.A.; Da Rosa Righi, R.; Barbosa, J.L.V.; Pessin, G.; Doncker, G.D.; Federizzi, G. Intelligent personal assistants: A systematic literature review. Expert Syst. Appl. 2020, 147, 113193.

17. Kita, T.; Nagaoka, C.; Hiraoka, N.; Suzuki, K.; Dougiamas, M. A discussion on effective implementation and prototyping of voice user interfaces for learning activities on moodle. In Proceedings of the 10th International Conference on Computer Supported Education, Madeira, Portugal, 15–17 March 2018; McLaren, B.M., Reilly, R., Zvacek, S., Uhomoibhi, J., Eds.; SciTePress: Setúbal, Portugal, 2018; pp. 398–404.

18. Kita, T.; Nagaoka, C.; Hiraoka, N.; Dougiamas, M. Implementation of Voice User Interfaces to Enhance Users’ Activities on Moodle. In Proceedings of the 4th International Conference on Information Technology (InCIT), Bangkok, Thailand, 24–25 October 2019; pp. 104–107.

19. Li, X.; Cui, H.; Rizzo, J.-R.; Wong, E.; Fang, Y. Cross-Safe: A Computer Vision-Based Approach to Make All Intersection-Related Pedestrian Signals Accessible for the Visually Impaired. Adv. Intell. Syst. Comput. 2020, 944, 132–146.

20. Grujić, D.D.; Milić, A.R.; Dadić, J.V.; Despotović-Zrakić, M.S. Application of IVR in E-learning system. In Proceedings of the 20th Telecommunications Forum, Belgrade, Serbia, 20–22 November 2012; Institute of Electrical and Electronics Engineers: Piscataway Township, NJ, USA, 2012; pp. 1472–1475.

21. Kawamura, Y.; Chen, C.X.; Hou, R. Implementation of Voice Recognition and Synthesis Module in Moodle System. In Proceedings of the 9th International Conference on Information and Communication Technology Convergence, Jeju Island, Korea, 17–19 October 2018; pp. 757–759.

22. Zimmerman, B.J.; Tsikalas, K.E. Can Computer-Based Learning Environments (CBLEs) Be Used as Self-Regulatory Tools to Enhance Learning? Educ. Psychol. 2005, 40, 267–271.

23. Abdolrahmani, A.; Storer, K.M.; Roy, A.R.M.; Kuber, R.; Branham, S.M. Blind leading the sighted: Drawing Design Insights from Blind Users towards More Productivity-oriented Voice Interfaces. ACM Trans. Access. Comput. 2020, 12, 1–35.

24. Li, C.; Zhou, H. Enhancing the efficiency of massive online learning by integrating intelligent analysis into MOOCs with an Application to Education of Sustainability. Sustainability 2018, 10, 468.

25. Schmidt, S.; Bruder, G.; Steinicke, F. Effects of virtual agent and object representation on experiencing exhibited artifacts. Comput. Graph. 2019, 83, 1–10.

26. Cerezo, R.; Calderón, V.; Romero, C. A holographic mobile-based application for practicing pronunciation of basic English vocabulary for Spanish speaking children. Int. J. Hum. Comput. Stud. 2019, 124, 13–25.

27. Cerezo, R.; Sánchez-Santillan, M.; Paule-Ruiz, M.P.; Núñez, J.C. Students’ LMS interaction patterns and their relationship with achievement: A case study in higher education. Comput. Educ. 2016, 96, 42–54.

28. Bernard, D.; Arnold, A. Cognitive interaction with virtual assistants: From philosophical foundations to illustrative examples in aeronautics. Comput. Ind. 2019, 107, 33–49.

29. Bates, M. Health Care Chatbots Are Here to Help. IEEE Pulse 2019, 10, 12–14.

30. Klašnja-Milićević, A.; Vesin, B.; Ivanović, M.; Budimac, Z.; Jain, L.C. E-Learning Systems: Intelligent Techniques for Personalization; Intelligent Systems; Springer: Cham, Switzerland, 2017; Volume 112.

31. Theobald, E.J.; Hill, M.J.; Tran, E.; Agrawal, S.; Nicole Arroyo, E.; Behling, S.; Chambwe, N.; Cintrón, D.L.; Cooper, J.D.; Dunster, G.; et al. Active learning narrows achievement gaps for underrepresented students in undergraduate science, technology, engineering, and math. PNAS 2020, 117, 6476–6483.

32. García, S.; Luengo, J.; Herrera, F. Data Preprocessing in Data Mining; Intelligent Systems Reference Library; Springer: New York, NY, USA, 2015; Volume 72.

33. Romero, C.; Ventura, S. Educational data mining: A review of the state of the art. IEEE Trans. Syst. Man Cybern. Part C Appl. Rev. 2010, 40, 601–618.

34. Webster, R.; Blatchford, P. Making sense of ‘teaching’, ‘support’ and ‘differentiation’: The educational experiences of pupils with Education, Health and Care Plans and Statements in mainstream secondary schools. Eur. J. Spec. Needs Educ. 2019, 34, 98–113.

35. Lopatovska, I.; Griffin, A.L.; Gallagher, K.; Ballingall, C.; Rock, C.; Velazquez, M. User recommendations for intelligent personal assistants. J. Libr. Inf. Sci. 2019, 52, 1–15.

36. Braiek, H.B.; Khomh, F. On testing machine learning programs. J. Syst. Softw. 2020, 164, 110542.

37. Koon, L.; McGlynn, S.A.; Blocker, K.A.; Rogers, W.A. Perceptions of Digital Assistants From Early Adopters Aged 55+. Ergon. Des. 2020, 28, 16–23.

38. Park, J.; Son, H.; Lee, J.; Choi, J. Driving Assistant Companion with Voice Interface Using Long Short-Term Memory Networks. IEEE Trans. Ind. Inform. 2019, 15, 582–590.

39. O’Brien, K.; Liggett, A.; Ramirez-Zohfeld, V.; Sunkara, P.; Lindquist, L.A. Voice-Controlled Intelligent Personal Assistants to Support Aging in Place. J. Am. Geriatr. Soc. 2020, 68, 176–179.

40. Moussalli, S.; Cardoso, W. Intelligent personal assistants: Can they understand and be understood by accented L2 learners? Comput. Assist. Lang. Learn. 2019, 1–26.

41. Gayathri, M.; Malathy, C.; Singh, S. MARK42: The Secured Personal Assistant Using Biometric Traits Integrated with Green IOT. J. Green Eng. 2020, 10, 255–267.

42. Chen, M.; Decary, M. Artificial intelligence in healthcare: An essential guide for health leaders. Healthc. Manag. Forum 2020, 33, 10–18.

43. Gutiérez-Braojos, C.; Montejo-Gamez, J.; Marin-Jimenez, A.M.; Campaña, J. Hybrid learning environment: Collaborative or competitive learning? Virtual Reality. 2019, 23, 411–423.

44. Buenaventura, K.C.; Ablaza-Cruz, M.M. Impact assessment of classroom-based artificial intelligence in Bulacan agricultural state college. Int. J. Recent Technol. Eng. 2019, 8, 2777–2781.

45. Tiwari, A.; Paul, A.; Suganya, P. A smart bot as an interactive medical assistant with voice-based system using natural language processing. Int. J. Eng. Adv. Technol. 2019, 8, 164–166.

46. Vijayalakshmi, J.; Pandimeena, K. Agriculture talkbot using AI. Int. J. Recent Technol. Eng. 2019, 8, 186–190.

47. Sobitha Ahila, S.; Sivakumar, D.; Suganthan, A.; ManishKumar, S. BOT-O-PEDIA—Learning simplified. Int. J. Recent Technol. Eng. 2019, 7, 1877–1884.

48. McKendrick, A.M.; Zeman, A.; Liu, P.; Aktepe, D.; Aden, I.; Bhagat, D.; Do, K.; Nguyen, H.D.; Turpin, A. Robot assistants for perimetry: A study of patient experience and performance. Transl. Vis. Sci. Technol. 2019, 8, 1–10.

49. Vtyurina, A.; Fourney, A.; Morris, M.R.; Findlater, L.; White, R.W. Bridging screen readers and voice assistants for enhanced eyes-free web search. In Proceedings of the World Wide Web Conference, San Francisco, CA, USA, 13–17 May 2019; Liu, L., White, R., Eds.; Association for Computing Machinery: New York, NY, USA, 2019; pp. 3590–3594.

50. Norval, C.; Singh, J. Explaining automated environments: Interrogating scripts, logs, and provenance using voice-assistants. In Proceedings of the 2019 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proceedings of the 2019 ACM International Symposium on Wearable, London, UK, 9–13 September 2019; pp. 332–335.

51. Rzepka, C. Examining the use of voice assistants: A value-focused thinking approach. In Proceedings of the 25th Americas Conference on Information Systems, AMCIS 2019, Cancun, Mexico, 15–17 August 2019; Association for Information Systems: Atlanta, GA, USA, 2019; pp. 1–10. Available online: https://www.researchgate.net/publication/335557789_Examining_the_Use_of_Voice_Assistants_A_Value-Focused_Thinking_Approach (accessed on 3 August 2020).

52. De Cock, C.; Milne-Ives, M.; Van Velthoven, M.H.; Alturkistani, A.; Lam, C.; Meinert, E. Effectiveness of Conversational Agents (Virtual Assistants) in Health Care: Protocol for a Systematic Review. JMIR Res. Protoc. 2020, 9, 1–6.

53. Yang, H.; Lee, H. Understanding user behavior of virtual personal assistant devices. Inf. Syst. E Bus Manag. 2019, 17, 65–87.

54. Uskov, V.L.; Bakken, J.P.; Howlett, R.; Jain, L.C. Smart Universities: Concepts, Systems and Technologies; Springer: Cham, Switzerland, 2018.

55. Uskov, V.L.; Howlett, R.J.; Jain, L.C. Smart Education and e-Learning 2016; Springer: Cham, Switzerland, 2016.

56. Sáiz-Manzanares, M.C.; Marticorena-Sánchez, R.; García-Osorio, C.I.; Díez-Pastor, J.F. How do B-learning and learning patterns influence learning outcomes? Front. Psychol. 2017, 8, 745.

57. Sáiz-Manzanares, M.C.; Marticorena-Sánchez, R.; Díez-Pastor, J.F.; García-Osorio, C.I. Does the use of learning management systems with hypermedia mean improved student learning outcomes? Front. Psychol. 2019, 10, 88.

58. Román, J.M.; Poggioli, L. ACRA (r): Learning Strategies Scales; Publicaciones UCAB (Postgraduate: Doctorate in Education): Caracas, Venezuela, 2013.

59. Carbonero, M.A.; Román, J.M.; Ferrer, M. Programme for “strategic learning” with university students: Design and experimental validation. Ann. Psychol. 2013, 29, 876–885.

60. Ochoa-Orihuel, J.; Marticorena-Sánchez, R.; Sáiz-Manzanares, M.C. UBU Voice Assistant Computer Application Software; BU-69-20; General Registry of Intellectual Property: Madrid, Spain, 29 July 2020.

61. Sáiz-Manzanares, M.C.; Marticorena-Sánchez, R.; Díez-Pastor, J.F.; García-Osorio, C.I. Observation of Metacognitive Skills in Natural Environments: A Longitudinal Study with Mixed Methods. Front. Psychol. 2019, 10, 2398.

62. Sáiz-Manzanares, M.C.; Marticorena-Sánchez, M.C.; Arnaiz-González, Á.; Escolar, M.C.; Queiruga, M.Á. Detection of at-risk students with Learning Analytics Techniques. Eur J. Investig Heal. Psychol Educ 2018, 8, 129–142.

63. Sáiz-Manzanares, M.C.; Escolar, M.C.; Arnaiz-González, Á. Effectiveness of Blended Learning in Nursing Education. Int. J. Environ. Res. Public Health 2020, 17, 1589.

64. Sáiz-Manzanares, M.C.; Marticorena-Sánchez, M.C.; Escolar-Llamazares, M.C. eOrientation Computer Software for Moodle. Detection of the student at academic risk at University; 00/2020/588; General Registry of Intellectual Property: Madrid, Spain, 16 January 2020.

65. Sáiz-Manzanares, M.C.; Marticorena-Sánchez, M.C.; García-Osorio, C.I. Monitoring Students at the University: Design and Application of a Moodle Plugin. Appl. Sci. 2020, 10, 3469.

66. IBM Corp. SPSS Statistical Package for the Social Sciences (SPSS); Version 24; IBM: Madrid, Spain, 2016.

| Participant type | N | Men: n | Men: M age | Men: SD age | Women: n | Women: M age | Women: SD age |
|---|---|---|---|---|---|---|---|
| Group 1 (Nursing Degree) | 61 | 5 | 21.40 | 0.90 | 56 | 23.54 | 6.30 |
| Group 2 (Occupational Therapy Degree) | 48 | 7 | 21.71 | 1.90 | 41 | 22.37 | 2.19 |
| Total | 109 | 12 | 21.58 | 1.50 | 97 | 23.04 | 5.01 |

| Variable | G1 (N = 61), M (SD) | G2 (N = 48), M (SD) | F(1,107) | p | η² |
|---|---|---|---|---|---|
| Access to practical information | 11.48 (4.21) | 68.85 (4.74) | 81.97 | 0.00 * | 0.43 |
| Access to information on the quiz-tests | 211.48 (8.30) | 76.71 (9.35) | 116.25 | 0.00 * | 0.52 |
| Access to project information | 30.11 (2.72) | 30.12 (3.07) | 0.00 | 0.99 | 0.00 |
| Access to co-evaluation information | 26.64 (2.37) | 21.30 (2.67) | 2.30 | 0.13 | 0.02 |
| Access to total information | 279.71 (11.77) | 196.92 (13.26) | 21.81 | 0.00 * | 0.17 |
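
The effect sizes in the table above can be related to the F statistics with a short calculation. The sketch below assumes the reported η² is the usual one-way ANOVA ratio of between-group to total sum of squares, which can be recovered from F and its degrees of freedom as F·df1 / (F·df1 + df2). The function name is illustrative, and the F values are copied from the table.

```python
def eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Eta squared for a one-way ANOVA, recovered from F and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F(1,107) values copied from the access table above.
f_values = {
    "Access to practical information": 81.97,
    "Access to information on the quiz-tests": 116.25,
    "Access to project information": 0.00,
    "Access to co-evaluation information": 2.30,
    "Access to total information": 21.81,
}

for label, f_value in f_values.items():
    # Degrees of freedom (1, 107) as stated in the table header.
    print(f"{label}: eta squared = {eta_squared(f_value, 1, 107):.2f}")
```

Under this assumption the computed values round to 0.43, 0.52, 0.00, 0.02, and 0.17, matching the η² column.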

| Variable | G1 (N = 60), M (SD) | G2 (N = 45), M (SD) | F(1,32) | p | η² |
|---|---|---|---|---|---|
| Learning outcomes in practices | 1.99 (0.04) | 1.96 (0.10) | 6.02 | 0.02 * | 0.06 |
| Learning outcomes in questionnaires | 2.53 (0.38) | 2.61 (0.46) | 1.14 | 0.29 | 0.01 |
| Learning outcomes in project development | 2.17 (0.24) | 2.14 (0.37) | 0.35 | 0.55 | 0.003 |
| Learning outcomes in defence project | 1.82 (0.10) | 1.85 (0.30) | 0.60 | 0.44 | 0.01 |
| Learning outcomes in co-evaluation | 0.19 (0.14) | 0.19 (0.14) | 0.01 | 0.94 | 0.00 |
| Learning outcomes Total | 8.70 (0.56) | 8.70 (1.17) | 0.00 | 0.99 | 0.00 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sáiz-Manzanares, M.C.; Marticorena-Sánchez, R.; Ochoa-Orihuel, J. Effectiveness of Using Voice Assistants in Learning: A Study at the Time of COVID-19. Int. J. Environ. Res. Public Health 2020, 17, 5618. https://doi.org/10.3390/ijerph17155618
