Towards Robust Decision-Making for Autonomous Highway Driving Based on Safe Reinforcement Learning

Abstract

1. Introduction

2. Related Works

2.1. Rule Based and Optimization Computation Based for Autonomous Driving Decision Planning

2.2. Machine-Learning-Based Methods for Autonomous Driving Decision Planning

2.3. Research on Safe Reinforcement Learning

3. Problem Statement and Method Framework

3.1. System Model and Problem Statement

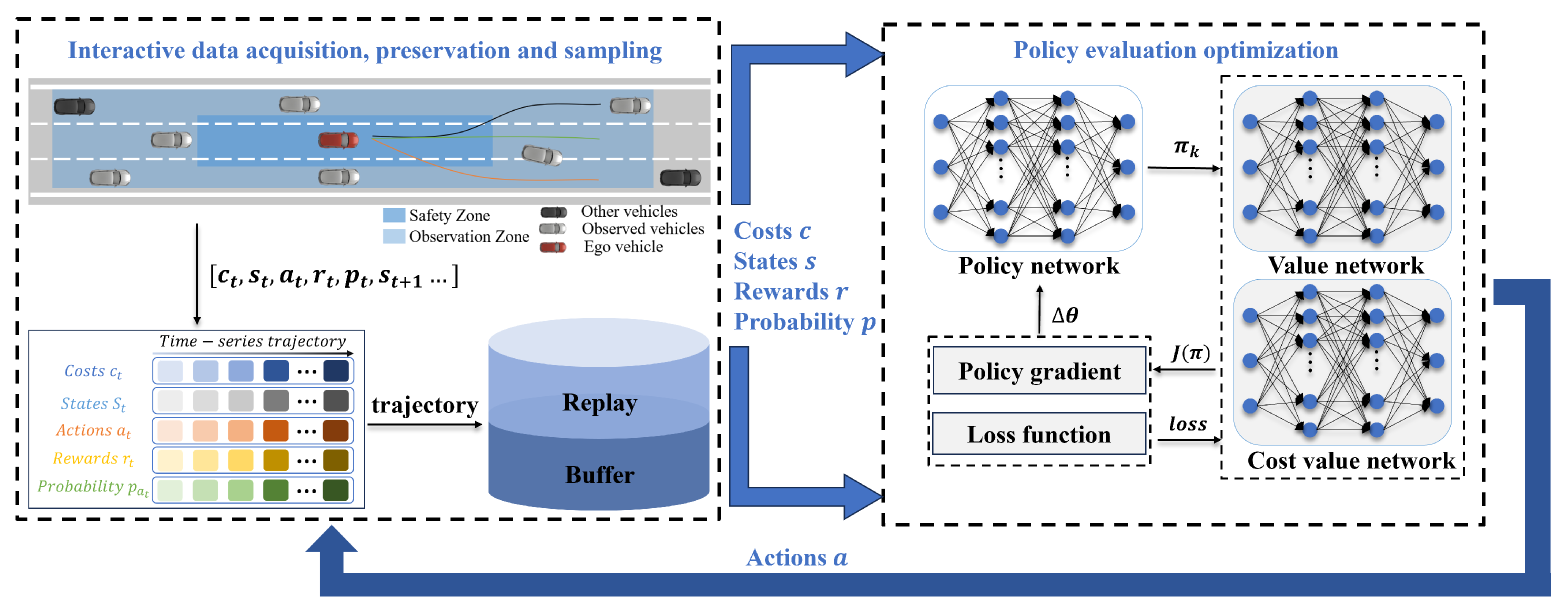

3.2. Framework for RECPO-Based Decision-Making in Autonomous Highway Driving

4. Highway Vehicle Control Transformed into Safe Reinforcement Learning

4.1. Constrained Markov Decision Process

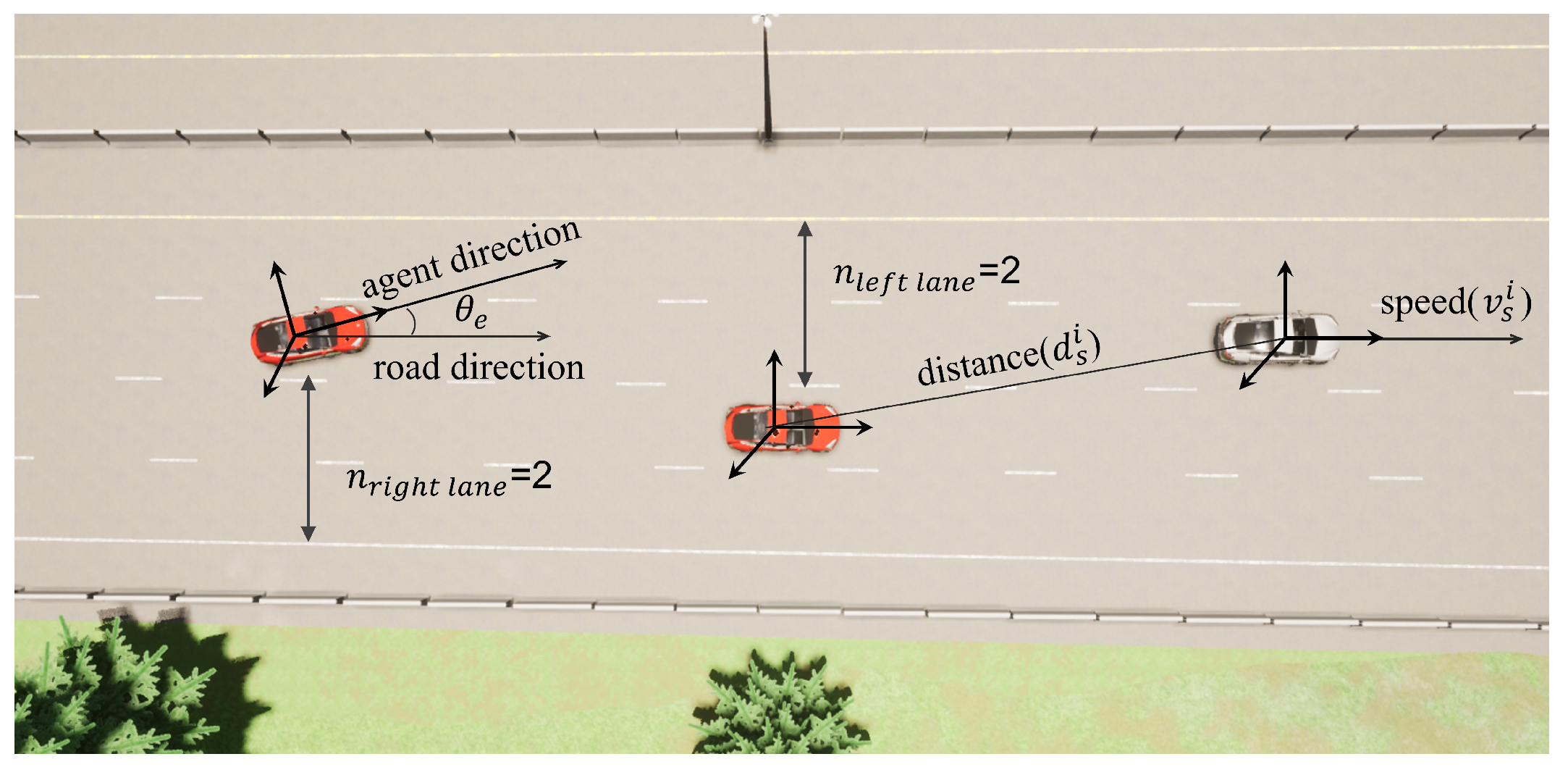

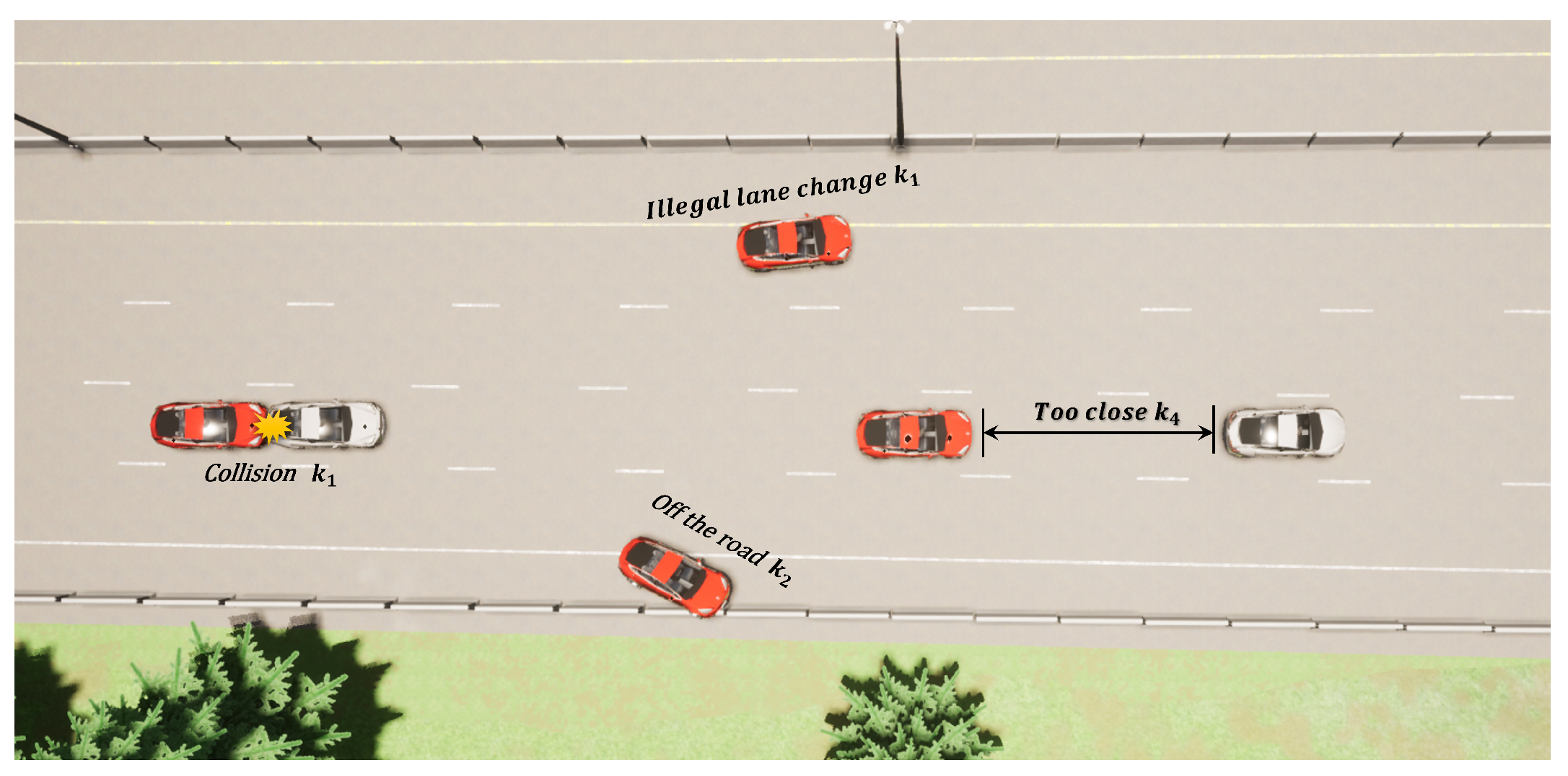

4.2. Convert Decision-Making Problem for Autonomous Highway Driving into a Constrained Markov Decision Problem

5. Autonomous Highway Driving Based on Safe Reinforcement Learning

5.1. Importance Sampling

5.2. Evaluation and Optimization of Policy Networks

5.3. Value Network Parameter Update

5.4. Highway Automatic Driving Algorithm Based on RECPO

| Algorithm 1 Replay Buffer Constrained Policy Optimization |

| Input: Initialize , set for epoch do for done is True do Collect when interacting with the environment in IDAPS Collect , get the feedback reward , cost , done and the probability distribution of the policy network for Obtain trajectory Replay Buff end for for Sampling the trajectory D from the Replay Buffer Calculate importance weight w Calculate advantage function of reward and cost function: Calculate : if or then update policy network as: //see the Equation (28) else if then solve convex dual problem, get solve by backtracking line search, update policy network as: // see the Equation (29) else if then update policy network as: // see the Equation (32) end if Update as: end for end for |

6. Experiment

6.1. Experimental Setup

6.2. Experimental Training Process

6.3. Performance Comparison of RECPO, CPO, DDPG and IDM + MOBIL after Deployment

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Cui, G.; Zhang, W.; Xiao, Y.; Yao, L.; Fang, Z. Cooperative Perception Technology of Autonomous Driving in the Internet of Vehicles Environment: A Review. Sensors 2022, 22, 5535. [Google Scholar] [CrossRef]

- Shan, M.; Narula, K.; Wong, Y.F.; Worrall, S.; Khan, M.; Alexander, P.; Nebot, E. Demonstrations of Cooperative Perception: Safety and Robustness in Connected and Automated Vehicle Operations. Sensors 2021, 21, 200. [Google Scholar] [CrossRef] [PubMed]

- Schiegg, F.A.; Llatser, I.; Bischoff, D.; Volk, G. Collective Perception: A Safety Perspective. Sensors 2021, 21, 159. [Google Scholar] [CrossRef] [PubMed]

- Xiao, W.; Mehdipour, N.; Collin, A.; Bin-Nun, A.; Frazzoli, E.; Duintjer Tebbens, R.; Belta, C. Rule-based Optimal Control for Autonomous Driving. arXiv 2021, arXiv:2101.05709. [Google Scholar]

- Collin, A.; Bilka, A.; Pendleton, S.; Duintjer Tebbens, R. Safety of the Intended Driving Behavior Using Rulebooks. arXiv 2021, arXiv:2105.04472. [Google Scholar]

- Chen, Y.; Bian, Y. Tube-based Event-triggered Path Tracking for AUV against Disturbances and Parametric Uncertainties. Electronics 2023, 12, 4248. [Google Scholar] [CrossRef]

- Seccamonte, F.; Kabzan, J.; Frazzoli, E. On Maximizing Lateral Clearance of an Autonomous Vehicle in Urban Environments. arXiv 2019, arXiv:1909.00342. [Google Scholar]

- Zheng, L.; Yang, R.; Peng, Z.; Liu, H.; Wang, M.Y.; Ma, J. Real-Time Parallel Trajectory Optimization with Spatiotemporal Safety Constraints for Autonomous Driving in Congested Traffic. arXiv 2023, arXiv:2309.05298. [Google Scholar]

- Yang, K.; Tang, X.; Qiu, S.; Jin, S.; Wei, Z.; Wang, H. Towards Robust Decision-Making for Autonomous Driving on Highway. IEEE Trans. Veh. Technol. 2023, 72, 11251–11263. [Google Scholar] [CrossRef]

- Sutton, R.S. Learning to Predict by the Methods of Temporal Differences. Mach. Learn. 1988, 3, 9–44. [Google Scholar] [CrossRef]

- Mnih, V.; Kavukcuoglu, K.; Silver, D.; Graves, A.; Antonoglou, I.; Wierstra, D.; Riedmiller, M. Playing Atari with Deep Reinforcement Learning. arXiv 2013, arXiv:1312.5602. [Google Scholar]

- Lillicrap, T.P.; Hunt, J.J.; Pritzel, A.; Heess, N.; Erez, T.; Tassa, Y.; Silver, D.; Wierstra, D. Continuous Control with Deep Reinforcement Learning. arXiv 2015, arXiv:1509.02971. [Google Scholar]

- Li, Y.; Li, Y.; Poh, L. Deep Reinforcement Learning for Autonomous Driving. arXiv 2018, arXiv:1811.11329. [Google Scholar]

- Kiran, B.R.; Sobh, I.; Talpaert, V.; Mannion, P.; Al Sallab, A.A.; Yogamani, S.; Pérez, P. Deep Reinforcement Learning for Autonomous Driving: A Survey. arXiv 2020, arXiv:2002.00444. [Google Scholar] [CrossRef]

- Maramotti, P.; Capasso, A.P.; Bacchiani, G.; Broggi, A. Tackling real-world autonomous driving using deep reinforcement learning. In Proceedings of the 2022 IEEE Intelligent Vehicles Symposium (IV), Aachen, Germany, 4–9 June 2022. [Google Scholar]

- Zhu, W.; Miao, J.; Hu, J.; Qing, L. An Empirical Study of DDPG and PPO-Based Reinforcement Learning Algorithms for Autonomous Driving. IEEE Access 2020, 11, 125094–125108. [Google Scholar]

- Fu, Y.; Li, C.; Yu, F.R.; Luan, T.H.; Zhang, Y. A decision-making strategy for vehicle autonomous braking in emergency via deep reinforcement learning. IEEE Trans. Veh. Technol. 2020, 69, 5876–5888. [Google Scholar] [CrossRef]

- Hoel, C.-J.; Wolff, K.; Laine, L. Automated speed and lane change decision making using deep reinforcement learning. In Proceedings of the 21st International Conference on Intelligent Transportation Systems (ITSC), Maui, HI, USA, 4–7 November 2018; pp. 2148–2155. [Google Scholar]

- Ho, S.S. Complementary and competitive framing of driverless cars: Framing effects, attitude volatility, or attitude resistance? Int. J. Public Opin. Res. 2021, 33, 512–531. [Google Scholar] [CrossRef]

- Ju, Z.; Zhang, H.; Li, X.; Chen, X.; Han, J.; Yang, M. A survey on attack detection and resilience for connected and automated vehicles: From vehicle dynamics and control perspective. IEEE Trans. Intell. Veh. 2022, 7, 815–837. [Google Scholar] [CrossRef]

- Tamar, A.; Xu, H.; Mannor, S. Scaling up robust mdps by reinforcement learning. arXiv 2013, arXiv:1306.6189. [Google Scholar]

- Geibel, P.; Wysotzki, F. Risk-sensitive reinforcement learning applied to control under constraints. J. Artif. Intell. Res. 2005, 24, 81–108. [Google Scholar] [CrossRef]

- Moldovan, T.M.; Abbeel, P. Safe exploration in markov decision processes. arXiv 2012, arXiv:1205.4810. [Google Scholar]

- Zhao, T.; Yurtsever, E.; Paulson, J.A.; Rizzoni, G. Formal certification methods for automated vehicle safety assessment. IEEE Trans. Intell. Veh. 2023, 8, 232–249. [Google Scholar] [CrossRef]

- Tang, X.; Zhang, Z.; Qin, Y. On-road object detection and tracking based on radar and vision fusion: A review. IEEE Intell. Transp. Syst. Mag. 2021, 14, 103–128. [Google Scholar] [CrossRef]

- Chen, J.; Shuai, Z.; Zhang, H.; Zhao, W. Path following control of autonomous four-wheel-independent-drive electric vehicles via second-order sliding mode and nonlinear disturbance observer techniques. IEEE Trans. Ind. Electron. 2021, 68, 2460–2469. [Google Scholar] [CrossRef]

- Achiam, J.; Held, D.; Tamar, A.; Abbeel, P. Constrained Policy Optimization. arXiv 2017, arXiv:1705.10528. [Google Scholar]

- Altman, E. Constrained Markov Decision Processes: Stochastic Modeling; Routledge: London, UK, 1999. [Google Scholar]

- Hu, X.; Chen, P.; Wen, Y.; Tang, B.; Chen, L. Long and Short-Term Constraints Driven Safe Reinforcement Learning for Autonomous Driving. arXiv 2024, arXiv:2403.18209. [Google Scholar]

- Dulac-Arnold, G.; Mankowitz, D.J.; Hester, T. Challenges of Real-World Reinforcement Learning. arXiv 2019, arXiv:1904.12901. [Google Scholar]

- Levine, S.; Kumar, V.; Tucker, G.; Fu, J. Open Problems and Fundamental Limitations of Reinforcement Learning from Human Feedback. arXiv 2023, arXiv:2307.15217. [Google Scholar]

- Bae, S.H.; Joo, S.H.; Pyo, J.W.; Yoon, J.S.; Lee, K.; Kuc, T.Y. Finite State Machine based Vehicle System for Autonomous Driving in Urban Environments. In Proceedings of the 2020 20th International Conference on Control, Automation and Systems (ICCAS), Busan, Republic of Korea, 13–16 October 2020; pp. 1181–1186. [Google Scholar] [CrossRef]

- Fan, H.; Zhu, F.; Liu, C.; Shen, L. Baidu Apollo EM Motion Planner for Autonomous Driving: Principles, Algorithms, and Performance. IEEE Intell. Transp. Syst. Mag. 2020, 12, 124–138. [Google Scholar]

- Urmson, C.; Anhalt, J.; Bagnell, D.; Baker, C.; Bittner, R.; Clark, M.N.; Dolan, J.; Duggins, D.; Galatali, T.; Geyer, C.; et al. Autonomous driving in urban environments: Boss and the Urban Challenge. J. Field Robot. 2008, 25, 425–466. [Google Scholar] [CrossRef]

- Treiber, M.; Kesting, A. Traffic Flow Dynamics: Data, Models and Simulation; Springer: Berlin/Heidelberg, Germany, 2013. [Google Scholar]

- Vanholme, B.; De Winter, J.; Egardt, B. Integrating autonomous and assisted driving through a flexible haptic interface. IEEE Intell. Transp. Syst. Mag. 2013, 5, 42–54. [Google Scholar]

- Ferguson, D.; Stentz, A. Using interpolation to improve path planning: The Field D* algorithm. J. Field Robot. 2008, 23, 79–101. [Google Scholar] [CrossRef]

- Paden, B.; Čáp, M.; Yong, S.Z.; Yershov, D.; Frazzoli, E. A survey of motion planning and control techniques for self-driving urban vehicles. IEEE Trans. Intell. Veh. 2016, 1, 33–55. [Google Scholar] [CrossRef]

- Liu, C.; Lee, S.; Varnhagen, S.; Tseng, H.E. Path planning for autonomous vehicles using model predictive control. In Proceedings of the 2017 IEEE Intelligent Vehicles Symposium (IV), Los Angeles, CA, USA, 11–14 June 2017; pp. 174–179. [Google Scholar] [CrossRef]

- Thrun, S.; Montemerlo, M.; Dahlkamp, H.; Stavens, D.; Aron, A.; Diebel, J.; Fong, P.; Gale, J.; Halpenny, M.; Hoffmann, G.; et al. Stanley: The robot that won the DARPA Grand Challenge. J. Field Robot. 2006, 23, 661–692. [Google Scholar] [CrossRef]

- Tang, X.; Huang, B.; Liu, T.; Lin, X. Highway decision-making and motion planning for autonomous driving via soft actor-critic. IEEE Trans. Veh. Technol. 2022, 71, 4706–4717. [Google Scholar] [CrossRef]

- Dosovitskiy, A.; Ros, G.; Codevilla, F.; Lopez, A.; Koltun, V. CARLA: An Open Urban Driving Simulator. In Proceedings of the 1st Annual Conference on Robot Learning, Mountain View, CA, USA, 13–15 November 2017. [Google Scholar]

- Shalev-Shwartz, S.; Shammah, S.; Shashua, A. Safe, Multi-Agent, Reinforcement Learning for Autonomous Driving. arXiv 2016, arXiv:1610.03295. [Google Scholar]

- Pan, Y.; Cheng, C.-A.; Saigol, K.; Lee, K.; Yan, X.; Theodorou, E.; Boots, B. Agile Autonomous Driving using End-to-End Deep Imitation Learning. arXiv 2017, arXiv:1709.07174. [Google Scholar]

- Fulton, N.; Platzer, A. Safe Reinforcement Learning via Formal Methods: Toward Safe Control Through Proof and Learning. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018; Volume 32, pp. 6485–6492. [Google Scholar] [CrossRef]

- Cao, Z.; Xu, S.; Peng, H.; Yang, D.; Zidek, R. Confidence-Aware Reinforcement Learning for Self-Driving Cars. IEEE Trans. Intell. Transp. Syst. 2022, 23, 7419–7430. [Google Scholar] [CrossRef]

- Tian, R.; Sun, L.; Bajcsy, A.; Tomizuka, M.; Dragan, A.D. Safety assurances for human–robot interaction via confidence-aware game-theoretic human models. In Proceedings of the 2022 International Conference on Robotics and Automation (ICRA), Philadelphia, PA, USA, 23–27 May 2022; pp. 11229–11235. [Google Scholar]

- Wen, L.; Duan, J.; Li, S.E.; Xu, S.; Peng, H. Safe Reinforcement Learning for Autonomous Vehicles through Parallel Constrained Policy Optimization. arXiv 2020, arXiv:2003.01303. [Google Scholar]

- Xu, H.; Zhan, X.; Zhu, X. Constraints Penalized Q-learning for Safe Offline Reinforcement Learning. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 22 February–1 March 2022; Volume 36, pp. 8753–8760. [Google Scholar] [CrossRef]

- Zhang, Q.; Zhang, L.; Xu, H.; Shen, L.; Wang, B.; Chang, Y.; Wang, X.; Yuan, B.; Tao, D. SaFormer: A Conditional Sequence Modeling Approach to Offline Safe Reinforcement Learning. arXiv 2023, arXiv:2301.12203. [Google Scholar]

- Treiber, M.; Hennecke, A.; Helbing, D. Congested Traffic States in Empirical Observations and Microscopic Simulations. Phys. Rev. E 2000, 62, 1805–1824. [Google Scholar] [CrossRef] [PubMed]

- Treiber, M.; Kesting, A. Modeling lane-changing decisions with MOBIL. In Traffic and Granular Flow’07; Springer: Berlin/Heidelberg, Germany, 2009; pp. 211–221. [Google Scholar]

| Parameters | Value |

|---|---|

| CARLA Simulator | - |

| Time step | 0.05 s |

| Maximum number of training epochs and tiem steps | 5000, 100 |

| Total length of road and simulated road length | 10 km, 1 km |

| Speed limit | [17 m/s, 30 m/s] |

| Acceleration limit | <3 m/s2 |

| Lane width | 3.5 m |

| Number of lanes and vehicles | 3, 24 |

| RECPO & CPO | - |

| Discount factor and | 0.9, 0.97 |

| lr for reward and cost | 1 × →0 |

| Number of hidden layer and hidden layer neuron | 2, 128 |

| Distance of safe , observation and target waypoint | 30 m, 50 m, 2 m |

| Weight of cost function | 45, 50, 5, 5 |

| Weight of reward function | 2, 1, 50 |

| Replay Buffer size | 20,480 |

| DDPG | - |

| lr for actor and critic | 1 × →0 |

| Optimizer of actor and critic | Adam |

| Number of hidden layer | 2 |

| Number of hidden layer1 and layer2 neuron | 400, 300 |

| Discount factor | 0.99 |

| Replay Buffer size | 1,000,000 |

| Methods | Average Speed | Speed Standard Deviation |

|---|---|---|

| IDM + MOBIL | 24.81 | 5.95 |

| RECPO | 27.52 | 0.88 |

| CPO | 27.28 | 1.73 |

| DDPG | 44.57 | 19.41 |

| Methods | Average Acceleration | Acceleration Standard Deviation | Average Jerk |

|---|---|---|---|

| IDM + MOBIL | −0.0321 | 3.25 | −0.171 |

| RECPO | 0.084 | 0.75 | −0.098 |

| CPO | 0.21 | 0.85 | −0.058 |

| DDPG | 3.14 | 0.98 | −0.7 |

| Methods | Success Rate | Safe Distance Trigger | Average Front Vehicle Distance |

|---|---|---|---|

| IDM + MOBIL | 100% | 48 | 17.73 |

| RECPO | 100% | 0 | 58.85 |

| CPO | 100% | 0 | 48.53 |

| DDPG | 4% | 1 | 32.35 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zhao, R.; Chen, Z.; Fan, Y.; Li, Y.; Gao, F. Towards Robust Decision-Making for Autonomous Highway Driving Based on Safe Reinforcement Learning. Sensors 2024, 24, 4140. https://doi.org/10.3390/s24134140

Zhao R, Chen Z, Fan Y, Li Y, Gao F. Towards Robust Decision-Making for Autonomous Highway Driving Based on Safe Reinforcement Learning. Sensors. 2024; 24(13):4140. https://doi.org/10.3390/s24134140

Chicago/Turabian StyleZhao, Rui, Ziguo Chen, Yuze Fan, Yun Li, and Fei Gao. 2024. "Towards Robust Decision-Making for Autonomous Highway Driving Based on Safe Reinforcement Learning" Sensors 24, no. 13: 4140. https://doi.org/10.3390/s24134140

APA StyleZhao, R., Chen, Z., Fan, Y., Li, Y., & Gao, F. (2024). Towards Robust Decision-Making for Autonomous Highway Driving Based on Safe Reinforcement Learning. Sensors, 24(13), 4140. https://doi.org/10.3390/s24134140