The LIBRA NeuroLimb: Hybrid Real-Time Control and Mechatronic Design for Affordable Prosthetics in Developing Regions

Abstract

1. Introduction

2. Materials and Methods

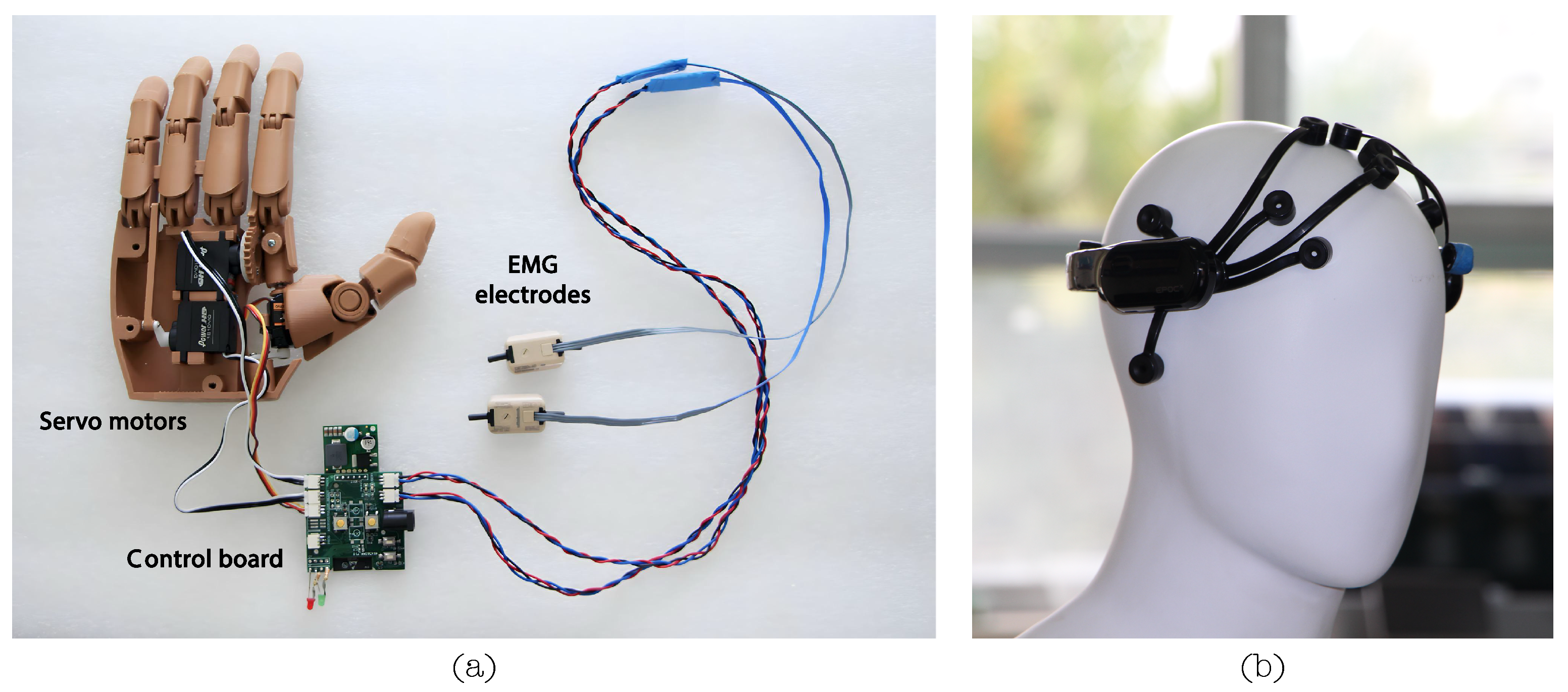

2.1. Mechatronic Prosthetic Design

2.1.1. Design Objectives and Requirements

2.1.2. Enabling Technologies and Design Decisions

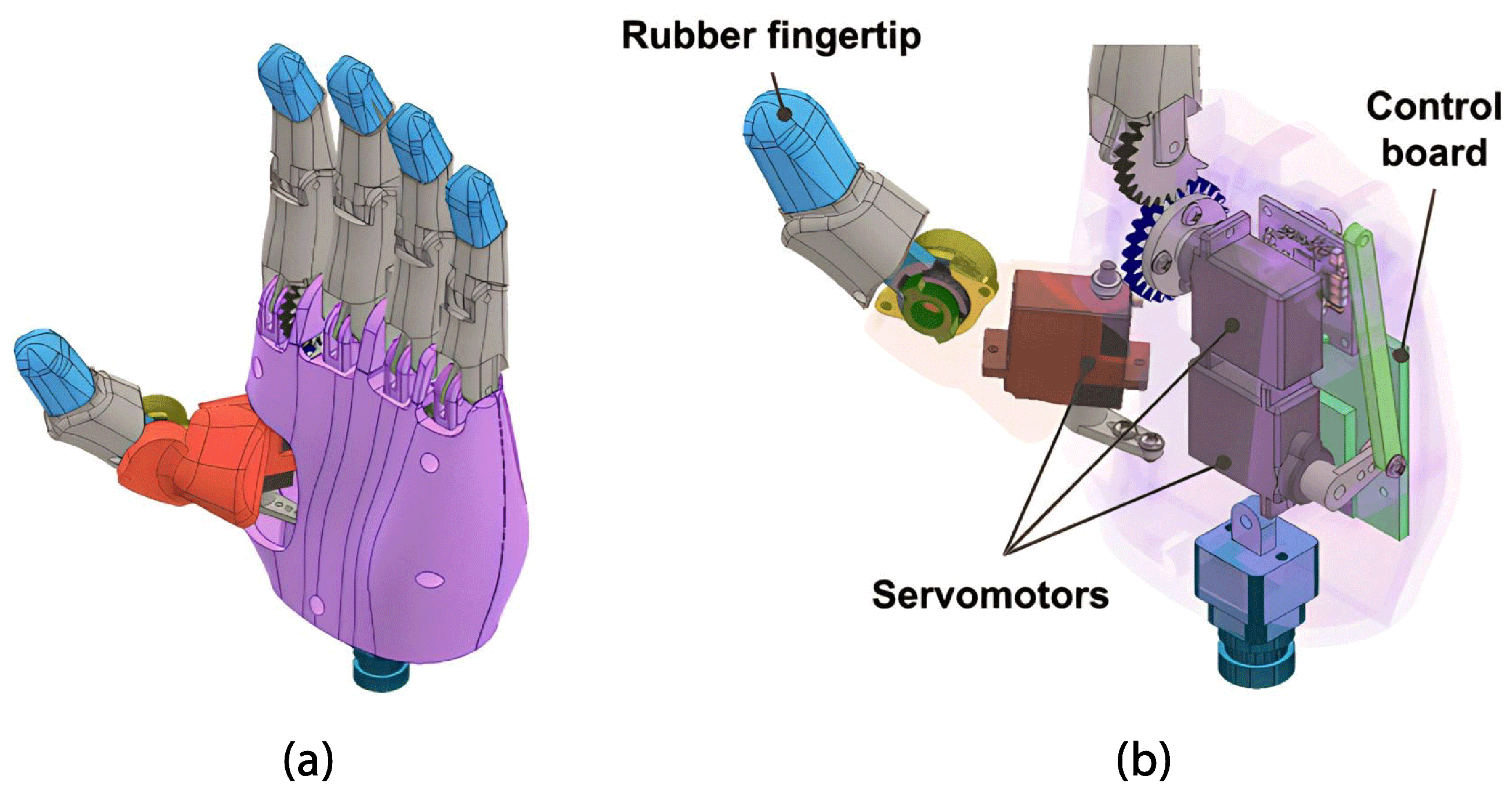

2.1.3. Hand Module

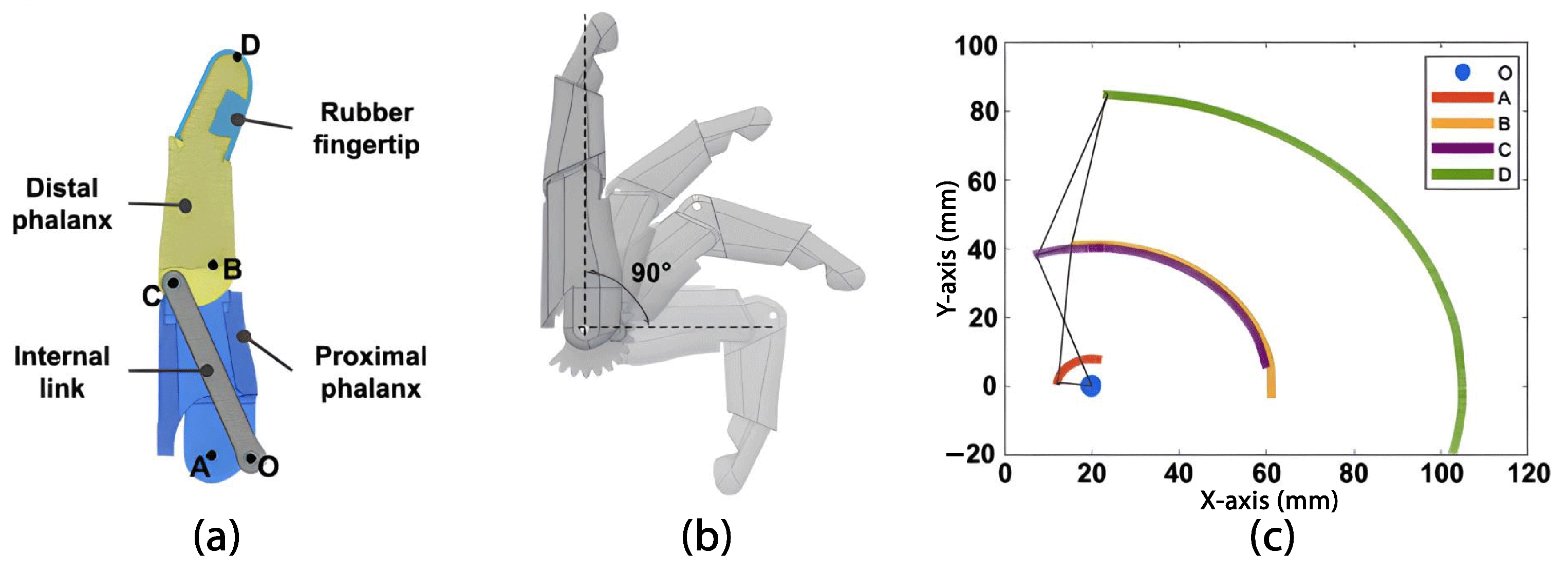

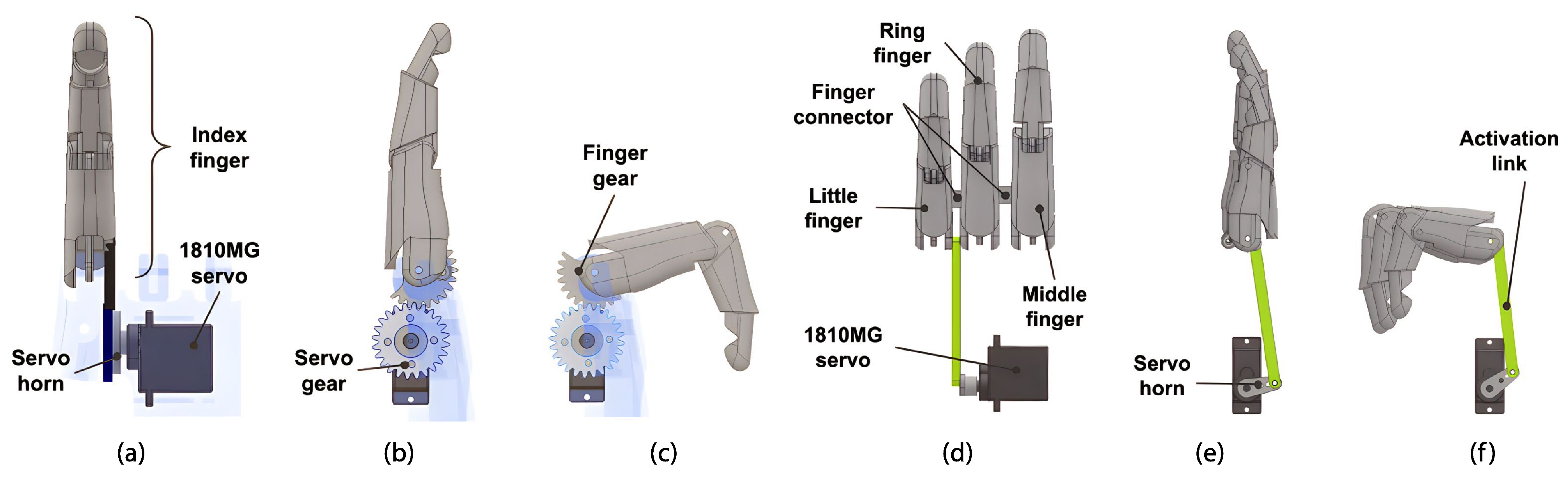

Fingers

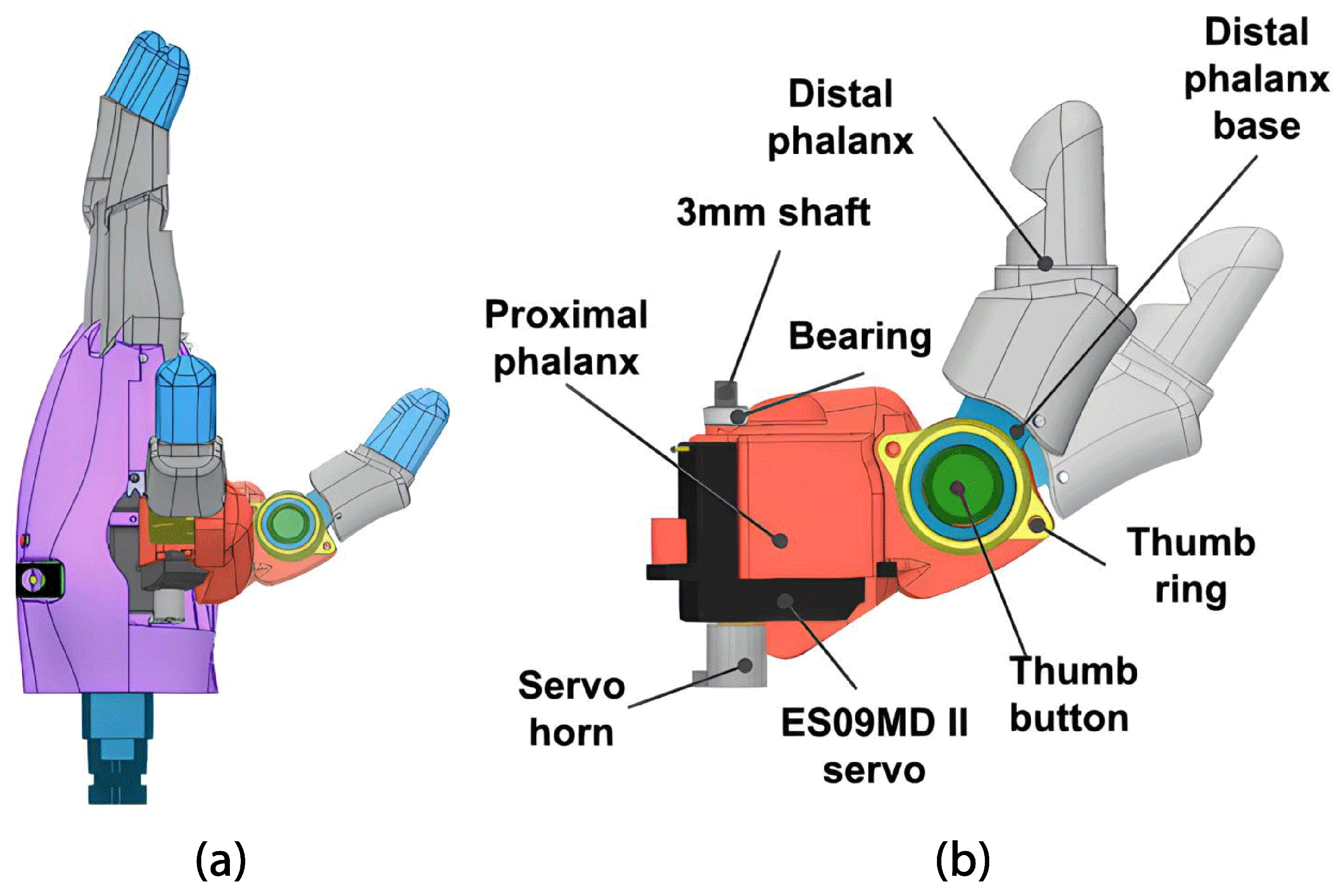

Thumb

Palm

2.1.4. Forearm Module

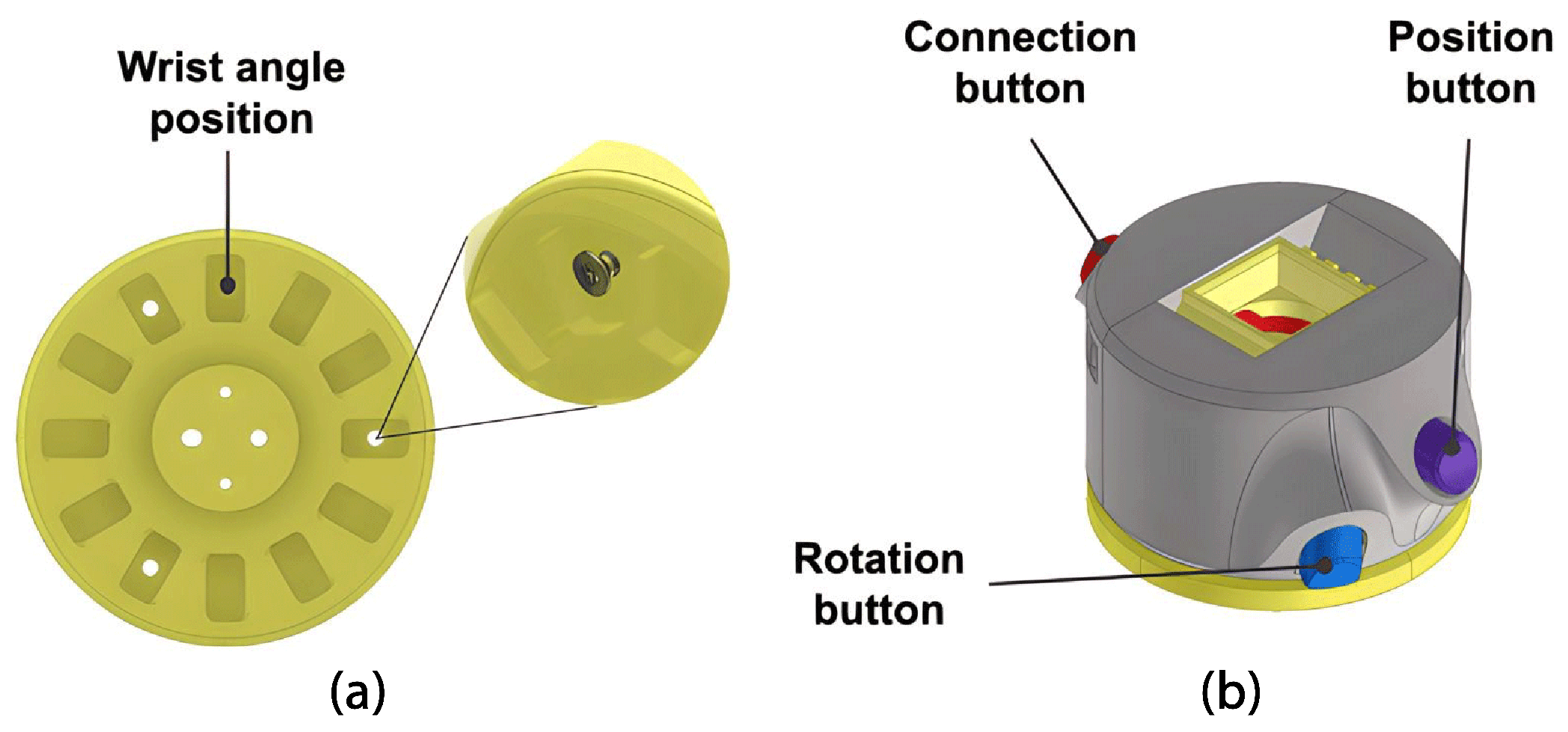

Wrist

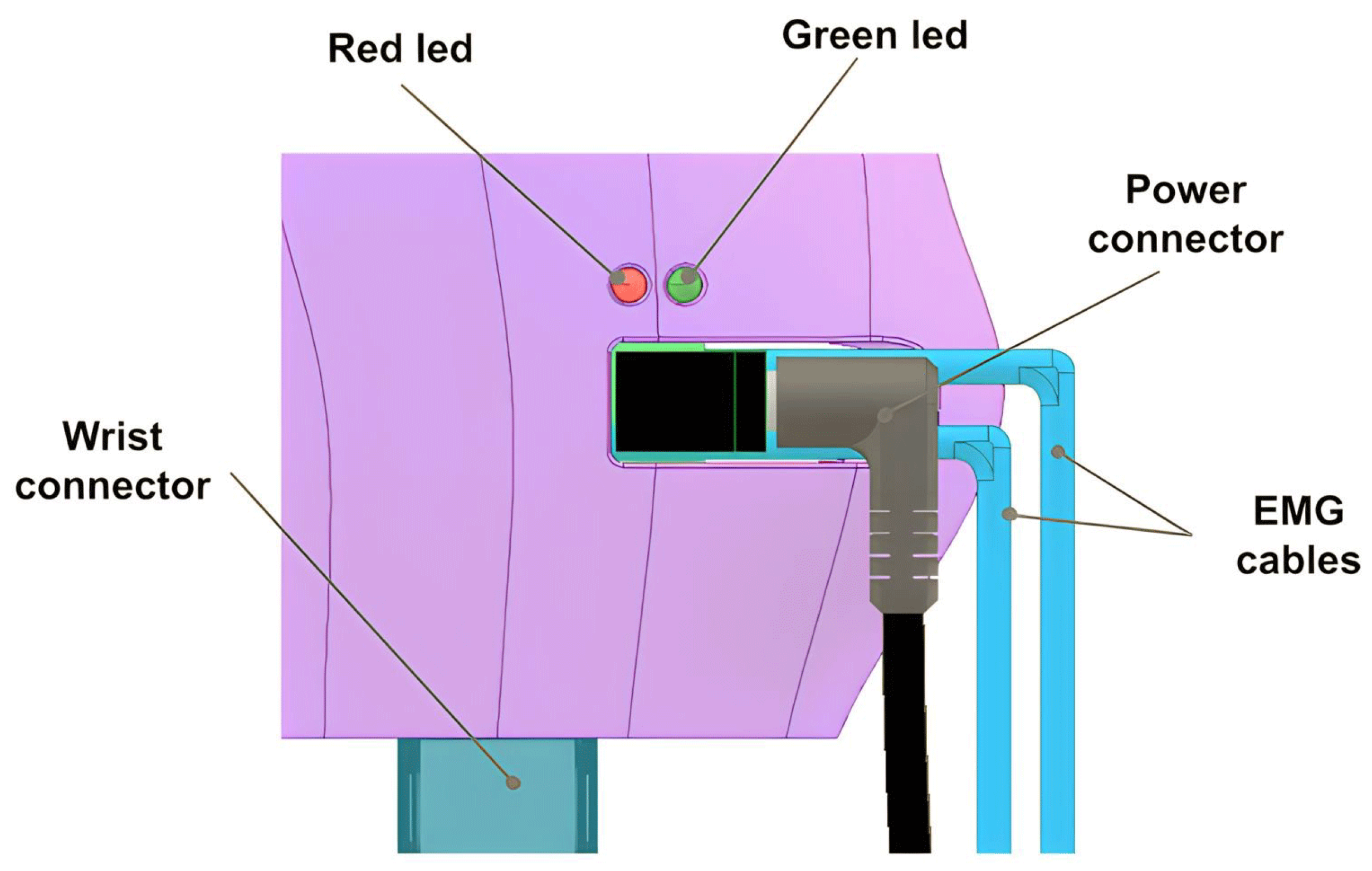

Forearm

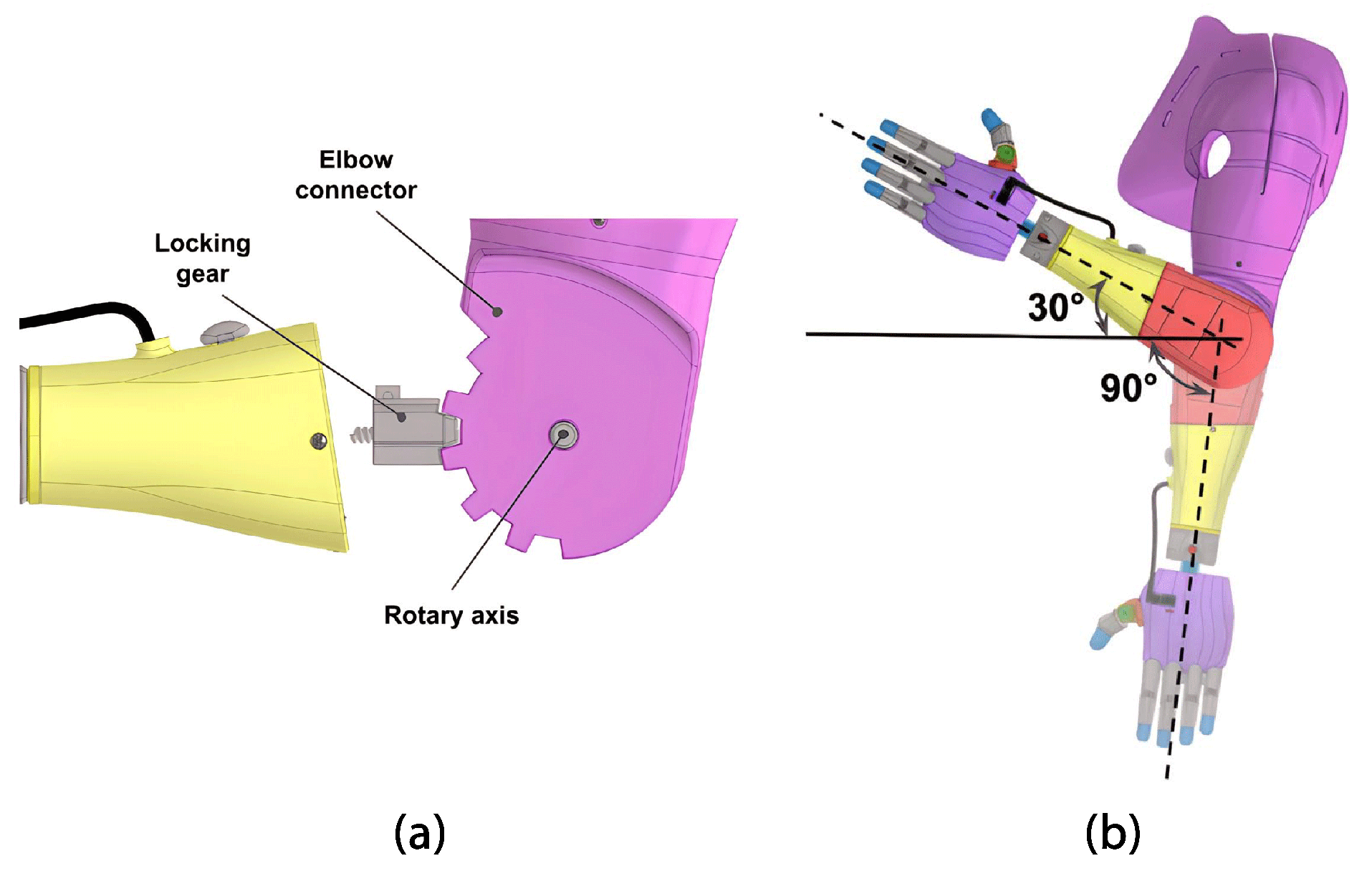

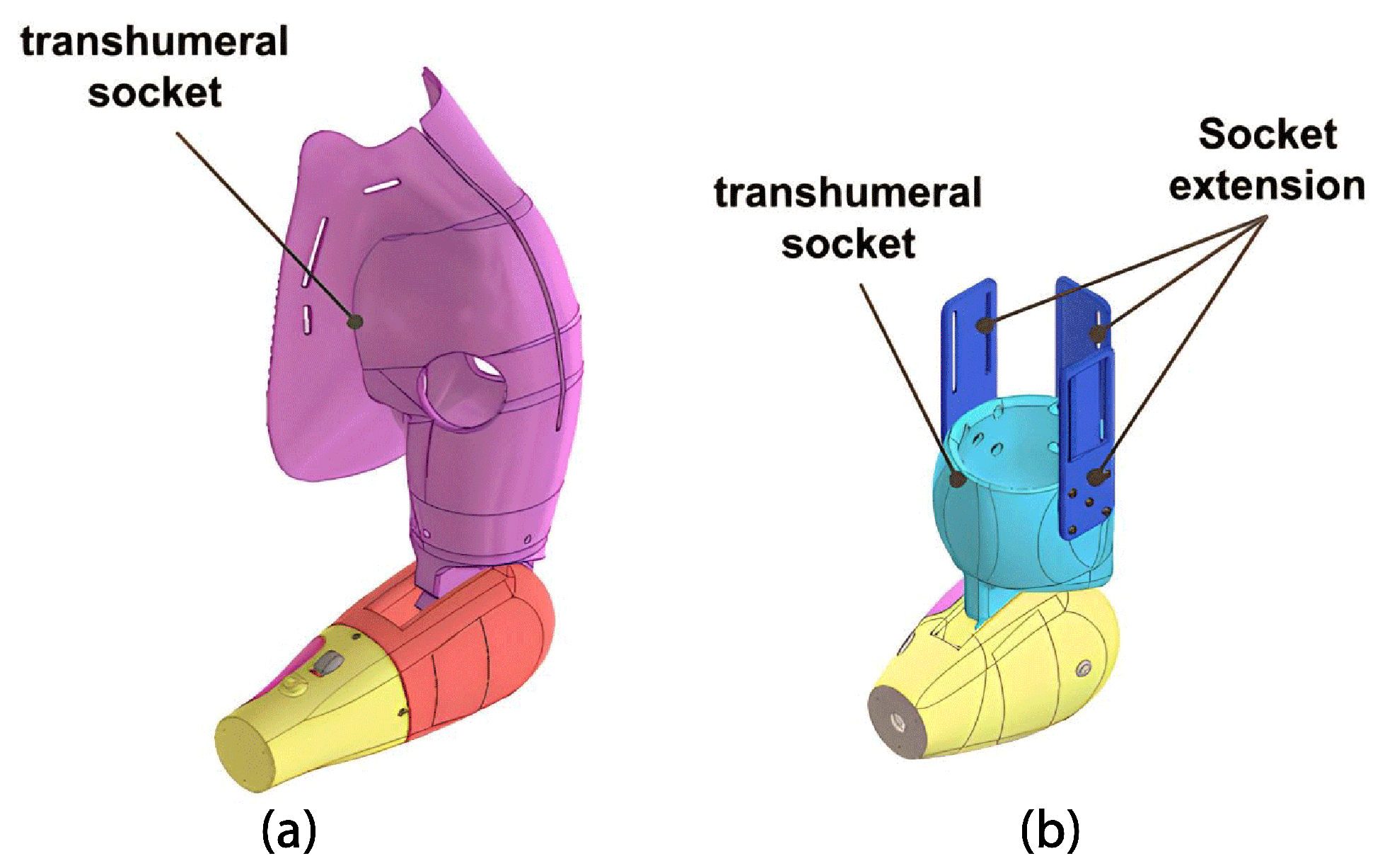

2.1.5. Arm Module

2.2. System Architecture

2.2.1. Electrical System

2.2.2. Control System

- Threading: The ‘threading’ library manages concurrent real-time data handling and communication tasks;

- Multiprocessing: The ‘multiprocessing’ library runs tasks in parallel processes, which is crucial for coordinating real-time data acquisition and control;

- Socket: The ‘socket’ library provides network communication for data exchange and integration with external devices;

- NumPy and Pandas: These libraries handle multidimensional data operations, manipulation, and processing, forming the foundation of efficient data handling;

- SciPy: ‘SciPy’ provides the statistical and signal processing operations used for data analysis and signal manipulation;

- pythonosc and argparse: ‘pythonosc’ enables communication via OSC with external systems and devices, and ‘argparse’ parses command-line arguments;

- Scikit-Learn (sklearn): ‘Scikit-Learn’ performs the classification and data processing tasks, including real-time classification.
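As a rough illustration of how the ‘threading’ library can decouple acquisition from processing, the following minimal sketch passes samples from a producer thread to a consumer thread through a queue. It is not the authors' implementation: the function names, the window length `WINDOW`, and the use of a window mean as the "processing" step are all assumptions made for illustration.

```python
import queue
import threading

WINDOW = 5  # samples per window (illustrative value, not from the paper)


def acquire(samples, out_q):
    """Producer: stands in for a thread that reads sensor samples."""
    for s in samples:
        out_q.put(s)
    out_q.put(None)  # sentinel: signals that no more data will arrive


def process(in_q, results):
    """Consumer: groups samples into fixed-size windows and stores each mean."""
    window = []
    while True:
        s = in_q.get()
        if s is None:
            break
        window.append(s)
        if len(window) == WINDOW:
            results.append(sum(window) / WINDOW)
            window.clear()


def run_pipeline(samples):
    """Run acquisition and processing concurrently and return the window means."""
    q = queue.Queue()
    results = []
    producer = threading.Thread(target=acquire, args=(samples, q))
    consumer = threading.Thread(target=process, args=(q, results))
    producer.start()
    consumer.start()
    producer.join()
    consumer.join()
    return results


print(run_pipeline(list(range(10))))
```

The queue keeps the two threads loosely coupled: the acquisition side never waits on the processing step, which is the usual motivation for this pattern in real-time signal handling.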

2.3. Hybrid Classification System

2.3.1. Data Acquisition Protocol

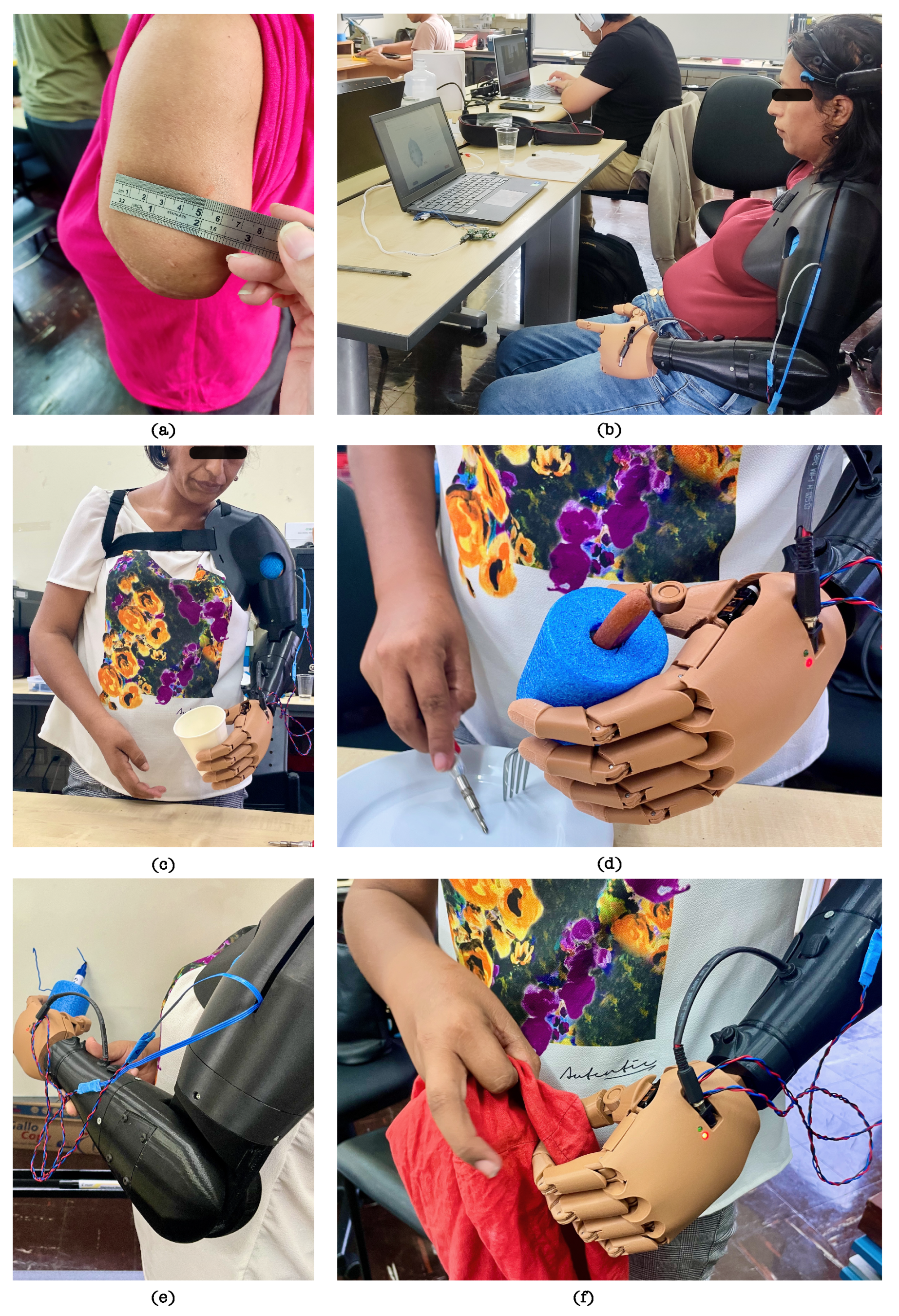

Participant Description

Session Setup and Sensor Placement

Experimental Procedure

2.3.2. Hybrid Classification Model

Preprocessing

Feature Extraction and Feature Selection

Classification Model and Feature Selection

2.4. Prosthesis Functional Assessment Protocol

- Completion of sub-tasks: Grades the extent of completion of all sub-tasks of the activity. A score of 0 points signifies that the user was unable to complete the sub-task;

- Speed of completion: Grades the speed of performance of the entire activity compared to performance with a sound limb;

- Movement quality: Grades the amount of awkwardness or compensatory movement resulting from lack of prepositioning, device limitations, lack of skilled use, or any other reason;

- Skillfulness of prosthesis use: Grades the type of use (no active use, use as a stabilizer, assist, or prime mover) and the control of voluntary grip functions;

- Independence: Grades the use of assistive devices or adaptive equipment.
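The five criteria above are each graded from 0 to 4. A minimal sketch of how such grades could be combined into one score is given below. It assumes the commonly described AM-ULA rule, in which an activity receives the lowest of its five criterion grades and the total is the mean activity score multiplied by 10. This section does not state the aggregation rule, so treat that rule, the function names, and the example grades as assumptions.

```python
CRITERIA = ("completion", "speed", "quality", "skill", "independence")


def item_score(grades):
    """Return the activity score as the lowest of the five criterion grades.

    Assumption: taking the minimum follows the published AM-ULA scoring rule;
    the aggregation is not stated in this section.
    """
    for name in CRITERIA:
        if not 0 <= grades[name] <= 4:
            raise ValueError(f"{name} grade must be between 0 and 4")
    return min(grades[name] for name in CRITERIA)


def total_score(activities):
    """Average the activity scores and scale by 10 (range 0 to 40)."""
    return 10 * sum(item_score(g) for g in activities) / len(activities)


# Hypothetical grades for two activities (not taken from the study data).
example = [
    dict(completion=3, speed=3, quality=2, skill=2, independence=2),
    dict(completion=1, speed=1, quality=2, skill=1, independence=1),
]
print([item_score(g) for g in example])
print(total_score(example))
```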

- a. Mental command: switch from deactivated to activated mode.

- b. Muscular command: selection of the gesture for a cylindrical-type grip.

- c. Bring the extremity of the arm closer so that the hand is positioned around the cup.

- d. Muscular command: first flexion of the biceps. This flexion activates the grip.

- e. Mental command: switch from activated mode to deactivated mode. The grasping gesture is maintained.

- f. Activate the passive DOF at the wrist and elbow to achieve the desired position.

- g. Bring the arm towards the face to drink from the cup.

- h. Place the empty cup back on the table by activating the passive DOF at the wrist and elbow.

- i. Mental command: switch from deactivated mode to activated mode.

- j. Muscular command: second flexion of the biceps. This flexion activates the extension of the fingers.

- k. Mental command: switch to deactivated mode.
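The command sequence above can be read as a small state machine in which the mental command gates the muscular commands. The sketch below is one possible reading, not the authors' controller. The class name, method names, and the rule that muscular commands are ignored while deactivated are assumptions inferred from the steps, in particular from the grasp being maintained after deactivation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HybridController:
    """Toy model of the hybrid mental/muscular command logic (illustrative)."""

    active: bool = False           # toggled by the mental command
    gesture: Optional[str] = None  # selected by a muscular command
    grip_closed: bool = False      # toggled by a biceps flexion

    def mental_command(self):
        """Switch between deactivated and activated mode."""
        self.active = not self.active

    def select_gesture(self, name):
        """Select a grip gesture; ignored while deactivated (assumption)."""
        if not self.active:
            return False
        self.gesture = name
        return True

    def biceps_flexion(self):
        """Close the grip on one flexion and open it on the next.

        Ignored while deactivated or before a gesture is selected, so the
        current grasp is maintained (assumption).
        """
        if not self.active or self.gesture is None:
            return False
        self.grip_closed = not self.grip_closed
        return True


ctrl = HybridController()
ctrl.mental_command()               # a. activate
ctrl.select_gesture("cylindrical")  # b. choose the grip
ctrl.biceps_flexion()               # d. close the hand around the cup
ctrl.mental_command()               # e. deactivate; the grasp is kept
ignored = not ctrl.biceps_flexion() # a flexion while drinking has no effect
ctrl.mental_command()               # i. activate again
ctrl.biceps_flexion()               # j. open the hand
ctrl.mental_command()               # k. deactivate
print(ctrl)
```

Gating the muscular channel with the mental channel means that biceps activity during the passive repositioning steps (f to h) cannot release the cup by accident.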

3. Results

3.1. Mechatronic Prosthetic Performance

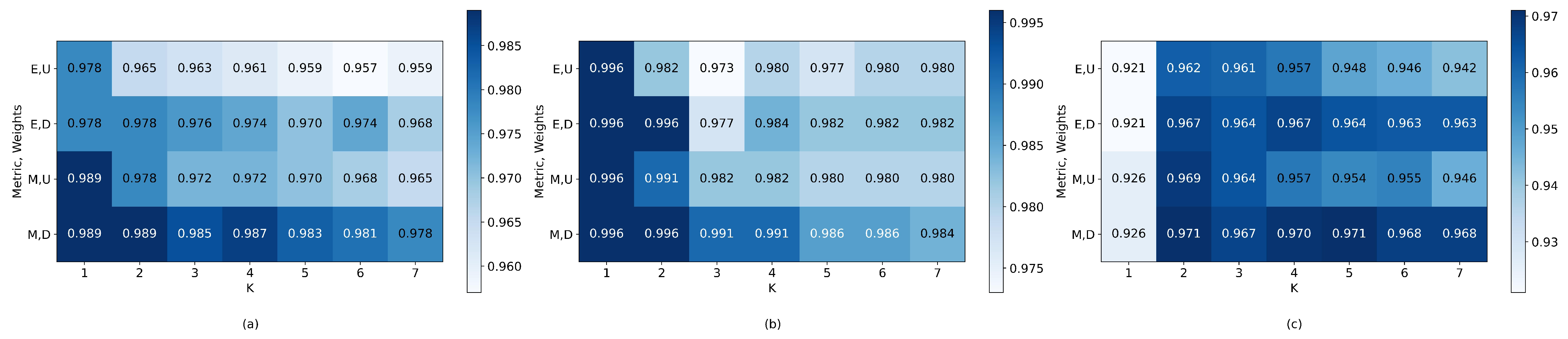

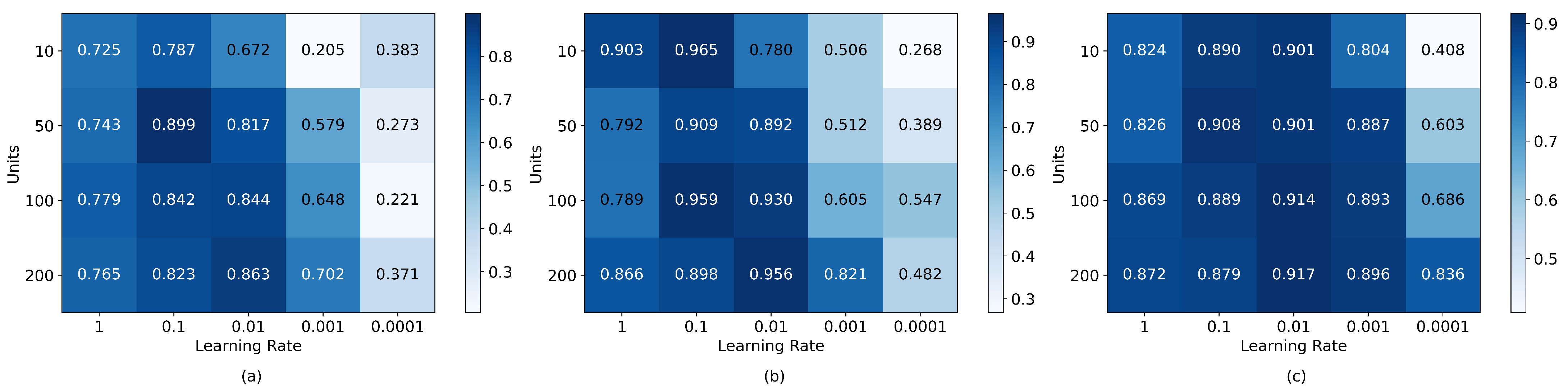

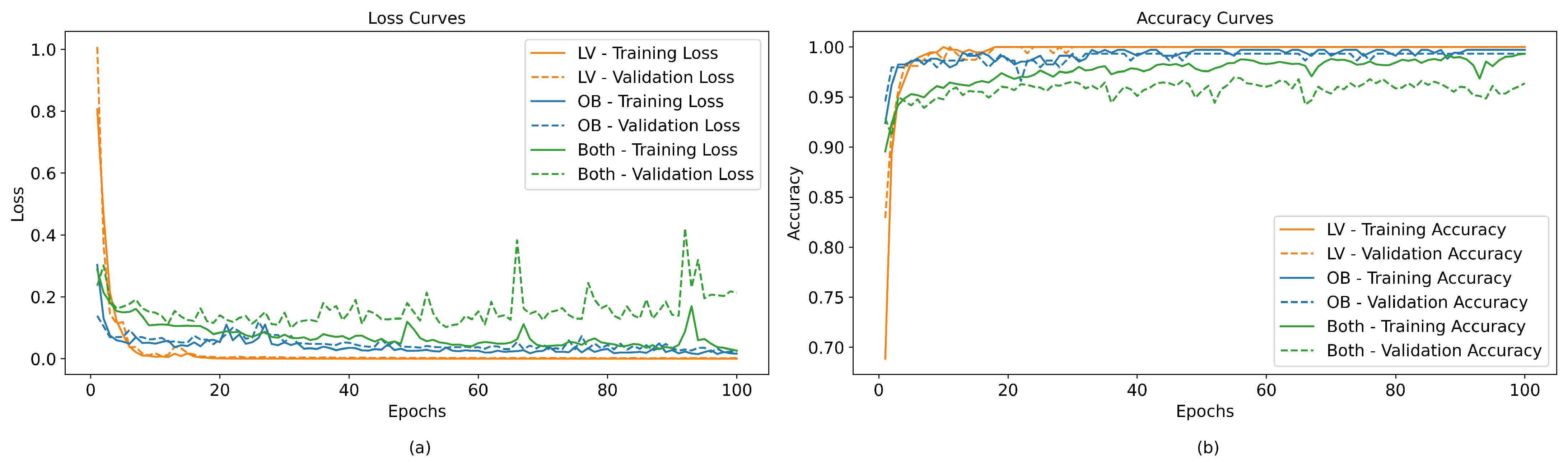

3.2. Offline Hybrid Classification System Performance

3.3. Experimental Validation Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| AM-ULA | Activities Measure for Upper Limb Amputees |

| BCI | Brain–Computer Interface |

| DOF | Degree(s) of Freedom |

| EEG | Electroencephalography |

| EMG | Electromyography |

| FDM | Fused Deposition Modeling |

| IoT | Internet of Things |

| OSC | Open Sound Control |

| PLA | Polylactic acid |

| sEMG | Surface Electromyography |

| SVM | Support Vector Machine |

| TRL | Technology Readiness Level |

| UDP | User Datagram Protocol |

References

- World Health Organization; United Nations Children’s Fund. Global Report on Assistive Technology; World Health Organization: Genève, Switzerland, 2022.

- Holland, A.C.; Hummel, C. Informalities: An Index Approach to Informal Work and Its Consequences. Lat. Am. Politics Soc. 2022, 64, 1–20.

- Diaz Dumont, J.R.; Suarez Mansilla, S.L.; Santiago Martinez, R.N.; Bizarro Huaman, E.M. Accidentes laborales en el Perú: Análisis de la realidad a partir de datos estadísticos. Rev. Venez. Gerenc. 2020, 25, 312–329.

- Inkellis, E.; Low, E.E.; Langhammer, C.; Morshed, S. Incidence and characterization of major upper-extremity amputations in the National Trauma Data Bank. JB JS Open Access 2018, 3, e0038.

- Fitzgibbons, P.; Medvedev, G. Functional and clinical outcomes of upper extremity amputation. J. Am. Acad. Orthop. Surg. 2015, 23, 751–760.

- Resnik, L.; Borgia, M.; Clark, M.A. Contralateral limb pain is prevalent, persistent, and impacts quality of life of veterans with unilateral upper-limb amputation. J. Prosthet. Orthot. 2023, 35, 3–11.

- Abbady, H.E.M.A.; Klinkenberg, E.T.M.; de Moel, L.; Nicolai, N.; van der Stelt, M.; Verhulst, A.C.; Maal, T.J.J.; Brouwers, L. 3D-printed prostheses in developing countries: A systematic review. Prosthet. Orthot. Int. 2022, 46, 19–30.

- Engdahl, S.M.; Lee, C.; Gates, D.H. A comparison of compensatory movements between body-powered and myoelectric prosthesis users during activities of daily living. Clin. Biomech. 2022, 97, 105713.

- Timm, L.; Etuket, M.; Sivarasu, S. Design and Development of an Open-Source ADL-Compliant Prosthetic Arm for Trans-Radial Amputees. In Proceedings of the Design of Medical Devices Conference, Minneapolis, MN, USA, 11–14 April 2022.

- Cura, V.O.D.; Cunha, F.L.; Aguiar, M.L.; Cliquet, A., Jr. Study of the different types of actuators and mechanisms for upper limb prostheses. Artif. Organs 2003, 27, 507–516.

- Marinelli, A.; Boccardo, N.; Tessari, F.; Di Domenico, D.; Caserta, G.; Canepa, M.; Gini, G.; Barresi, G.; Laffranchi, M.; De Michieli, L.; et al. Active upper limb prostheses: A review on current state and upcoming breakthroughs. Prog. Biomed. Eng. 2023, 5, 012001.

- Ulubas, M.K.; Akpinar, A.; Coskun, O.; Kahriman, M. EMG Controlled Artificial Hand and Arm Design. WSEAS Trans. Biol. Biomed. 2022, 19, 41–46.

- Kansal, S.; Garg, D.; Upadhyay, A.; Mittal, S.; Talwar, G.S. A novel deep learning approach to predict subject arm movements from EEG-based signals. Neural Comput. Appl. 2023, 35, 11669–11679.

- Kansal, S.; Garg, D.; Upadhyay, A.; Mittal, S.; Talwar, G.S. DL-AMPUT-EEG: Design and development of the low-cost prosthesis for rehabilitation of upper limb amputees using deep-learning-based techniques. Eng. Appl. Artif. Intell. 2023, 126, 106990.

- Ihab, A.S. A comprehensive study of EEG-based control of artificial arms. Vojnoteh. Glas. 2023, 71, 9–41.

- Das, T.; Gohain, L.; Kakoty, N.M.; Malarvili, M.; Widiyanti, P.; Kumar, G. Hierarchical approach for fusion of electroencephalography and electromyography for predicting finger movements and kinematics using deep learning. Neurocomputing 2023, 527, 184–195.

- Rada, H.; Abdul Hassan, A.; Al-Timemy, A. Recognition of Upper Limb Movements Based on Hybrid EEG and EMG Signals for Human-Robot Interaction. Iraqi J. Comput. Commun. Control. Syst. Eng. 2023, 23, 176–191.

- Capsi-Morales, P.; Piazza, C.; Grioli, G.; Bicchi, A.; Catalano, M.G. The SoftHand Pro platform: A flexible prosthesis with a user-centered approach. J. Neuroeng. Rehabil. 2023, 20, 20.

- Huang, J.; Li, G.; Su, H.; Li, Z. Development and Continuous Control of an Intelligent Upper-Limb Neuroprosthesis for Reach and Grasp Motions Using Biological Signals. IEEE Trans. Syst. Man, Cybern. Syst. 2022, 52, 3431–3441.

- Ruhunage, I.; Mallikarachchi, S.; Chinthaka, D.; Sandaruwan, J.; Lalitharatne, T.D. Hybrid EEG-EMG Signals Based Approach for Control of Hand Motions of a Transhumeral Prosthesis. In Proceedings of the 2019 IEEE 1st Global Conference on Life Sciences and Technologies (LifeTech), Osaka, Japan, 12–14 March 2019; pp. 50–53.

- DynamicArm. Available online: https://shop.ottobock.us/Prosthetics/Upper-Limb-Prosthetics/Myoelectric-Elbows/DynamicArm-Elbow/DynamicArm/p/12K100N~550-2 (accessed on 5 December 2023).

- ErgoArm Electronic Plus. Available online: https://shop.ottobock.ca/en/Prosthetics/Upper-Limb-Prosthetics/Myoelectric-Elbows/ErgoArm-Electronic-plus/p/12K50#product-references-accessories (accessed on 5 December 2023).

- U3 Comparison—Utah Arm. Available online: https://www.utaharm.com/u3-comparison/ (accessed on 5 December 2023).

- George, J.A.; Davis, T.S.; Brinton, M.R.; Clark, G.A. Intuitive neuromyoelectric control of a dexterous bionic arm using a modified Kalman filter. J. Neurosci. Methods 2020, 330, 108462.

- Biddiss, E.A.; Chau, T.T. Upper limb prosthesis use and abandonment: A survey of the last 25 years. Prosthet. Orthot. Int. 2007, 31, 236–257.

- Rohwerder, B. Assistive Technologies in Developing Countries; Institute of Development Studies: Brighton, UK, 2018.

- Rios Ferrari, G.A. Informal Economy in Peru and Its Impact on GDP. Bachelor’s Thesis, LUISS Guido Carli, Rome, Italy, 2022.

- Capsi-Morales, P.; Piazza, C.; Sjoberg, L.; Catalano, M.G.; Grioli, G.; Bicchi, A.; Hermansson, L.M. Functional assessment of current upper limb prostheses: An integrated clinical and technological perspective. PLoS ONE 2023, 18, e0289978.

- Fajardo, J.; Ferman, V.; Cardona, D.; Maldonado, G.; Lemus, A.; Rohmer, E. Galileo Hand: An Anthropomorphic and Affordable Upper-Limb Prosthesis. IEEE Access 2020, 8, 81365–81377.

- Leal–José, A.; Torres San Miguel, C.; Manuel, F.; Luis, M. Structural numerical analysis of a three fingers prosthetic hand prototype. Int. J. Phys. Sci. 2013, 8, 526–536.

- Johannes, M.; Bigelow, J.; Burck, J.; Harshbarger, S.; Kozlowski, M.; Doren, T. An Overview of the Developmental Process for the Modular Prosthetic Limb. Johns Hopkins APL Tech. Dig. 2011, 30, 207–216.

- Resnik, L.; Acluche, F.; Lieberman Klinger, S.; Borgia, M. Does the DEKA Arm substitute for or supplement conventional prostheses. Prosthet. Orthot. Int. 2018, 42, 534–543.

- He, L.; Xiong, C.; Zhang, K. Mechatronic design of an upper limb prosthesis with a hand. In Intelligent Robotics and Applications; Lecture Notes in Computer Science; Springer International Publishing: Cham, Switzerland, 2014; pp. 56–66.

- Bustamente, M.; Vega-Centeno, R.; Sanchez, M.; Mio, R. A Parametric 3D-Printed Body-Powered Hand Prosthesis Based on the Four-Bar Linkage Mechanism. In Proceedings of the 2018 IEEE 18th International Conference on Bioinformatics and Bioengineering (BIBE), Taichung, Taiwan, 29–31 October 2018; pp. 79–85.

- Mio, R.; Sebastian, C.; Vega-Centeno, R.; Bustamente, M. One-To-Many Actuation Mechanism for a 3D-printed Anthropomorphic Hand Prosthesis. In Proceedings of the IX Latin American Congress on Biomedical Engineering and XXVIII Brazilian Congress on Biomedical Engineering, Florianópolis, Brazil, 24–28 October 2022.

- Leddy, M.T.; Dollar, A.M. Preliminary Design and Evaluation of a Single-Actuator Anthropomorphic Prosthetic Hand with Multiple Distinct Grasp Types. In Proceedings of the 2018 7th IEEE International Conference on Biomedical Robotics and Biomechatronics (Biorob), Enschede, The Netherlands, 26–29 August 2018; pp. 1062–1069.

- Wattanasiri, P.; Tangpornprasert, P.; Virulsri, C. Design of multi-grip patterns prosthetic hand with single actuator. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 1188–1198.

- Tigrini, A.; Al-Timemy, A.H.; Verdini, F.; Fioretti, S.; Morettini, M.; Burattini, L.; Mengarelli, A. Decoding transient sEMG data for intent motion recognition in transhumeral amputees. Biomed. Signal Process. Control 2023, 85, 104936.

- Jarrassé, N.; Nicol, C.; Touillet, A.; Richer, F.; Martinet, N.; Paysant, J.; de Graaf, J.B. Classification of Phantom Finger, Hand, Wrist, and Elbow Voluntary Gestures in Transhumeral Amputees With sEMG. IEEE Trans. Neural Syst. Rehabil. Eng. 2017, 25, 71–80.

- Sultana, A.; Ahmed, F.; Alam, M.S. A systematic review on surface electromyography-based classification system for identifying hand and finger movements. Healthc. Anal. 2023, 3, 100126.

- Sattar, N.Y.; Kausar, Z.; Usama, S.A.; Naseer, N.; Farooq, U.; Abdullah, A.; Hussain, S.Z.; Khan, U.S.; Khan, H.; Mirtaheri, P. Enhancing Classification Accuracy of Transhumeral Prosthesis: A Hybrid sEMG and fNIRS Approach. IEEE Access 2021, 9, 113246–113257.

- Guo, W.; Sheng, X.; Liu, H.; Zhu, X. Toward an Enhanced Human–Machine Interface for Upper-Limb Prosthesis Control With Combined EMG and NIRS Signals. IEEE Trans. Hum. Mach. Syst. 2017, 47, 564–575.

- Davarinia, F.; Maleki, A. SSVEP-gated EMG-based decoding of elbow angle during goal-directed reaching movement. Biomed. Signal Process. Control 2022, 71, 103222.

- Kim, S.; Shin, D.Y.; Kim, T.; Lee, S.; Hyun, J.K.; Park, S.M. Enhanced Recognition of Amputated Wrist and Hand Movements by Deep Learning Method Using Multimodal Fusion of Electromyography and Electroencephalography. Sensors 2022, 22, 680.

- Li, X.; Samuel, O.W.; Zhang, X.; Wang, H.; Fang, P.; Li, G. A motion-classification strategy based on sEMG-EEG signal combination for upper-limb amputees. J. Neuroeng. Rehabil. 2017, 14, 2.

- Tepe, C.; Demir, M.C. Real-Time Classification of EMG Myo Armband Data Using Support Vector Machine. IRBM 2022, 43, 300–308.

- Prabhavathy, T.; Elumalai, V.K.; Balaji, E. Hand gesture classification framework leveraging the entropy features from sEMG signals and VMD augmented multi-class SVM. Expert Syst. Appl. 2024, 238, 121972.

- Asghari Oskoei, M.; Hu, H. Myoelectric control systems—A survey. Biomed. Signal Process. Control 2007, 2, 275–294.

- Yang, J.; Pan, J.; Li, J. sEMG-based continuous hand gesture recognition using GMM-HMM and threshold model. In Proceedings of the 2017 IEEE International Conference on Robotics and Biomimetics (ROBIO), Macau, China, 5–8 December 2017; pp. 1509–1514.

- Hassan, H.F.; Abou-Loukh, S.J.; Ibraheem, I.K. Teleoperated robotic arm movement using electromyography signal with wearable Myo armband. J. King Saud Univ. Eng. Sci. 2020, 32, 378–387.

- Phinyomark, A.; Scheme, E. A feature extraction issue for myoelectric control based on wearable EMG sensors. In Proceedings of the 2018 IEEE Sensors Applications Symposium (SAS), Seoul, Republic of Korea, 12–14 March 2018; pp. 1–6.

- Li, K.; Zhang, J.; Wang, L.; Zhang, M.; Li, J.; Bao, S. A review of the key technologies for sEMG-based human-robot interaction systems. Biomed. Signal Process. Control 2020, 62, 102074.

- Gaudet, G.; Raison, M.; Achiche, S. Classification of Upper limb phantom movements in transhumeral amputees using electromyographic and kinematic features. Eng. Appl. Artif. Intell. 2018, 68, 153–164.

- Shen, S.; Gu, K.; Chen, X.R.; Yang, M.; Wang, R.C. Movements Classification of Multi-Channel sEMG Based on CNN and Stacking Ensemble Learning. IEEE Access 2019, 7, 137489–137500.

- Phukpattaranont, P.; Thongpanja, S.; Anam, K.; Al-Jumaily, A.; Limsakul, C. Evaluation of feature extraction techniques and classifiers for finger movement recognition using surface electromyography signal. Med. Biol. Eng. Comput. 2018, 56, 2259–2271.

- Chen, W.; Zhang, Z. Hand Gesture Recognition using sEMG Signals Based on Support Vector Machine. In Proceedings of the 2019 IEEE 8th Joint International Information Technology and Artificial Intelligence Conference (ITAIC), Chongqing, China, 24–26 May 2019; pp. 230–234.

- Said, S.; Boulkaibet, I.; Sheikh, M.; Karar, A.S.; Alkork, S.; Nait-ali, A. Machine-Learning-Based Muscle Control of a 3D-Printed Bionic Arm. Sensors 2020, 20, 3144.

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

- Chang, C.C.; Lin, C.J. LIBSVM. ACM Trans. Intell. Syst. Technol. 2011, 2, 1–27.

- Knerr, S.; Personnaz, L.; Dreyfus, G. Single-layer learning revisited: A stepwise procedure for building and training a neural network. In Neurocomputing; Soulié, F.F., Hérault, J., Eds.; Springer: Berlin/Heidelberg, Germany, 1990; pp. 41–50.

- Classification Learner | MathWorks. Available online: https://la.mathworks.com/help/stats/classificationlearner-app (accessed on 26 October 2023).

- Ding, C.; Peng, H. Minimum redundancy feature selection from microarray gene expression data. J. Bioinform. Comput. Biol. 2005, 3, 185–205.

- Yang, W.; Wang, K.; Zuo, W. Neighborhood component feature selection for high-dimensional data. J. Comput. 2012, 7, 161–168.

- Kononenko, I.; Šimec, E.; Robnik-Šikonja, M. Overcoming the Myopia of Inductive Learning Algorithms with RELIEFF. Appl. Intell. 1997, 7, 39–55.

- Resnik, L.; Adams, L.; Borgia, M.; Delikat, J.; Disla, R.; Ebner, C.; Walters, L.S. Development and evaluation of the activities measure for upper limb amputees. Arch. Phys. Med. Rehabil. 2013, 94, 488–494.e4.

- Li, Y.; Zhang, S.; Yin, Y.; Xiao, W.; Zhang, J. Parallel one-class extreme learning machine for imbalance learning based on Bayesian approach. J. Ambient Intell. Humaniz. Comput. 2018, 1, 1–18.

- Jarrassé, N.; de Montalivet, E.; Richer, F.; Nicol, C.; Touillet, A.; Martinet, N.; Paysant, J.; de Graaf, J.B. Phantom-Mobility-Based Prosthesis Control in Transhumeral Amputees Without Surgical Reinnervation: A Preliminary Study. Front. Bioeng. Biotechnol. 2018, 6, 164.

- Toro-Osaba, A.; Tejada, J.; Rúa, S.; López-González, A. A Proposal of Bioinspired Soft Active Hand Prosthesis. Biomimetics 2023, 28, 29.

- Lenzi, T.; Lipsey, J.; Sensinger, J. The RIC Arm—A Small Anthropomorphic Transhumeral Prosthesis. IEEE/ASME Trans. Mechatronics 2016, 21, 2660–2671.

- Resnik, L.; Borgia, M.; Cancio, J.; Heckman, J.; Highsmith, J.; Levy, C.; Phillips, S.; Webster, J. Dexterity, activity performance, disability, quality of life, and independence in upper limb Veteran prosthesis users: A normative study. Disabil. Rehabil. 2020, 44, 2470–2481.

- Asogbon, M.G.; Samuel, O.W.; Geng, Y.; Oluwagbemi, O.; Ning, J.; Chen, S.; Ganesh, N.; Feng, P.; Li, G. Towards resolving the co-existing impacts of multiple dynamic factors on the performance of EMG-pattern recognition based prostheses. Comput. Methods Programs Biomed. 2020, 184, 105278.

- Geng, Y.; Zhou, P.; Li, G. Toward attenuating the impact of arm positions on electromyography pattern-recognition based motion classification in transradial amputees. J. Neuroeng. Rehabil. 2012, 9, 74.

| Aspect | LUKE Arm [24] | Utah Arm [23] | Dynamic Arm [21] | ErgoArm Electronic Plus [22] |

|---|---|---|---|---|

| Country of origin | US | US | Germany | Germany |

| Cost (USD) | 50 K–100 K | 20 K | 76 K | 27 K |

| Control mode | sEMG | sEMG | sEMG | sEMG or buttons |

| Compatibility | - | Ottobock | Ottobock, Bebionic | Ottobock, Bebionic |

| Active DOF | 3 | 3 | 3 | 1 |

| Weight | 3.5 kg | 913 g * | 1 kg * | 550–710 g * |

| Battery | Li-ion | Ni-MH | Li-ion | - |

| Customization | Socket only ** | Socket only ** | Socket only ** | Socket only ** |

| N° | Aspect | Requirements |

|---|---|---|

| 1 | Design | Compact and organic design using the anthropometry of a real user. Ensure materials that increase friction to prevent objects from slipping. |

| 2 | Kinematics | Maximum hand closing time of 1.5 s. Use self-locking mechanisms for passive systems. |

| 3 | Power | Ability to remove the power source. Not to exceed 10 mA of current in the sensors and 1000 mA in the actuators. |

| 4 | Materials | Resilient and corrosion-resistant. Hypoallergenic material for the prosthetic socket. |

| 5 | Signals | Power system operating at commercially available voltage levels. Non-invasive acquisition of physiological signals. |

| 6 | Safety | Prevent the ingress of liquids into the system. Manual disconnection of the actuator power source is possible. |

| 7 | Ergonomics | Maximum hand weight of 0.5 kg. Preventing excessive sweating. |

| 8 | Manufacturing | Selection of commercially available components. |

| 9 | Assembly | Prosthetic socket must avoid movement relative to the residual limb. |

| Characteristics | Power HD 1810MG | EMAX ES09MD II |

|---|---|---|

| Operating voltage (V) | 6 | 6 |

| Stall Torque (kgf-cm) | 3.9 | 2.6 |

| Speed (s/60°) | 0.13 | 0.08 |

| Weight (g) | 15.8 | 14.8 |

| Dimensions (mm) | 22.8 × 12.0 × 29.4 | 23.0 × 12.0 × 24.5 |

| Gear Type | Copper | Metal Gear |

| Algorithm | Abbreviation | Parameters |

|---|---|---|

| Linear SVM | L-SVM | Kernel function: Linear; Kernel scale: Automatic; Box constraint level: 1; Multiclass method: One-vs-One |

| Cubic SVM | C-SVM | Kernel function: Cubic; Kernel scale: Automatic; Box constraint level: 1; Multiclass method: One-vs-One |

| Medium Gaussian SVM | M-SVM | Kernel function: Gaussian; Kernel scale: 4.7; Box constraint level: 1; Multiclass method: One-vs-One |

| Fine Decision Tree | F-TREE | Maximum number of splits: 100; Split criterion: Gini’s diversity index; Surrogate decision splits: Off |

| Medium Decision Tree | M-TREE | Maximum number of splits: 20; Split criterion: Gini’s diversity index; Surrogate decision splits: Off |

| Linear Discriminant Analysis | LDA | Covariance structure: Full |

| Quadratic Discriminant Analysis | QDA | Covariance structure: Full |

| Fine KNN | F-KNN | Number of neighbors: 1; Distance metric: Euclidean; Distance weight: Equal |

| Medium KNN | M-KNN | Number of neighbors: 10; Distance metric: Euclidean; Distance weight: Equal |

| Cubic KNN | C-KNN | Number of neighbors: 10; Distance metric: Minkowski; Distance weight: Equal |

| Kernel Logistic Regression | KLR | Learner: Logistic Regression; Number of expansion dimensions: Auto; Regularization strength (Lambda): Auto; Kernel scale: Auto; Multiclass method: One-vs-One; Iteration limit: 1000 |

| Narrow Neural Network | N-NN | Number of fully connected layers: 1; Layer size: 10; Activation: ReLU; Iteration limit: 1000; Regularization strength (Lambda): 0 |

| Medium Neural Network | M-NN | Number of fully connected layers: 1; Layer size: 25; Activation: ReLU; Iteration limit: 1000; Regularization strength (Lambda): 0 |

| Wide Neural Network | W-NN | Number of fully connected layers: 1; Layer size: 100; Activation: ReLU; Iteration limit: 1000; Regularization strength (Lambda): 0 |

| Bilayered Neural Network | B-NN | Number of fully connected layers: 2; Layer sizes: 10; Activation: ReLU; Iteration limit: 1000; Regularization strength (Lambda): 0 |

| Trilayered Neural Network | T-NN | Number of fully connected layers: 3; Layer sizes: 10; Activation: ReLU; Iteration limit: 1000; Regularization strength (Lambda): 0 |
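To make one row of the table above concrete, the following sketch implements the "Fine KNN" configuration (one neighbor, Euclidean distance, equal weights) in plain Python. It illustrates the technique only and is not the toolbox implementation used in the study. The two-dimensional feature vectors and the gesture labels are hypothetical.

```python
import math


def predict_1nn(train_X, train_y, x):
    """Return the label of the training sample closest to x (Euclidean)."""
    nearest = min(range(len(train_X)), key=lambda i: math.dist(train_X[i], x))
    return train_y[nearest]


# Hypothetical two-feature training set with made-up gesture labels.
train_X = [(0.1, 0.2), (0.2, 0.1), (0.9, 0.8), (0.8, 0.9)]
train_y = ["rest", "rest", "flexion", "flexion"]

print(predict_1nn(train_X, train_y, (0.15, 0.15)))
print(predict_1nn(train_X, train_y, (0.85, 0.95)))
```

With a single neighbor, the distance weighting option has no effect on the prediction, since only one training sample votes.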

| Features | L-SVM | C-SVM | M-SVM | F-TREE | M-TREE | LDA | QDA | F-KNN | M-KNN | C-KNN | KLR | N-NN | M-NN | W-NN | B-NN | T-NN |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1-18 | 92.1 | 96.9 | 93.3 | 96.6 | 93.7 | 91.5 | 91.8 | 98.4 | 95.2 | 95 | 93.5 | 97.1 | 98.4 | 98.1 | 97.1 | 96.8 |

| 2, 4, 5, 7, 8, 12, 13, 15, 17 | 91.8 | 96.8 | 93.4 | 96.6 | 92.7 | 89.7 | 84.5 | 98.4 | 94.7 | 94.7 | 92.7 | 95.7 | 97.5 | 98.1 | 96.5 | 96.6 |

| 2, 4, 5, 7, 8, 12, 15, 17 | 91.9 | 96.8 | 93.6 | 96.5 | 92.8 | 89.6 | 83.7 | 98.3 | 94.7 | 94.6 | 92.7 | 95 | 97.2 | 98.4 | 95.7 | 95.9 |

| 2, 4, 7, 8, 12, 15, 17 | 91.5 | 96.8 | 93.5 | 96.6 | 92.5 | 89.3 | 87.7 | 98.3 | 94.7 | 94.6 | 92.3 | 95.1 | 96.8 | 97.7 | 96.1 | 96.3 |

| 2, 7, 8, 12, 15, 17 | 91.6 | 96.3 | 91.7 | 95.6 | 92.6 | 89.3 | 88.5 | 97.5 | 93.9 | 93.5 | 90.2 | 94.5 | 96.5 | 97.6 | 95.4 | 95.2 |

| 2, 7, 12, 15, 17 | 91.8 | 95.7 | 91.5 | 95.8 | 92.6 | 89.3 | 90.0 | 97.6 | 94.2 | 93.9 | 90.0 | 94.5 | 95.8 | 97.5 | 95.2 | 95.4 |

| Mean | 91.78 | 96.55 | 92.83 | 96.28 | 92.82 | 89.78 | 87.70 | 98.08 | 94.57 | 94.38 | 91.90 | 95.32 | 97.03 | 97.90 | 96.00 | 96.03 |

| MAX | 92.10 | 96.90 | 93.60 | 96.60 | 93.70 | 91.50 | 91.80 | 98.40 | 95.20 | 95.00 | 93.50 | 97.10 | 98.40 | 98.40 | 97.10 | 96.80 |
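The two summary rows of the table above are the column-wise mean and maximum of the six feature-subset rows. As a quick check, the sketch below recomputes them for the L-SVM and F-KNN columns, using the accuracies copied from the table. The variable and function names are illustrative.

```python
from statistics import fmean

# Accuracies (%) for the six feature subsets, copied from the table.
L_SVM = [92.1, 91.8, 91.9, 91.5, 91.6, 91.8]
F_KNN = [98.4, 98.4, 98.3, 98.3, 97.5, 97.6]


def summarize(accuracies):
    """Return (mean rounded to two decimals, maximum) for one classifier."""
    return round(fmean(accuracies), 2), max(accuracies)


print(summarize(L_SVM))
print(summarize(F_KNN))
```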

| N° | Sub-Tasks | Suggested Execution Order | N° | Sub-Tasks | Suggested Execution Order |

|---|---|---|---|---|---|

| 1 | Put on a shirt | (1) take the clothing (2) put it on the back (3) put the arms through (4) get comfortable | 7 | Use cutlery | (1) take the cutlery (2) bring the cutlery close to the mouth (3) move the cutlery away from the mouth (4) release |

| 2 | Remove shirt | (1) take the garment (2) remove arms (3) place the garment on the table (4) loosen the garment | 8 | Pour liquid | (1) take an empty glass (2) pour some water from another glass (3) leave glass that was emptied on table (4) drop the other glass that has been filled |

| 3 | Buttons | (1) get into position (2) push button through (3) pull the button (4) complete all buttons | 9 | Write initials | (1) take a marker (2) write your initials on the board (3) put the pen down |

| 4 | Zipper | (1) pick up the zipper (2) get comfortable (3) take the zipper to the other end (4) release | 10 | Dial phone | (1) take the cell phone (2) with the other hand dial a number (3) communicate on speaker phone (4) end call (5) leave the cell phone |

| 5 | Shoelace | (1) take a shoestring with prosthesis (2) take another shoestring with other arm (3) tie knot (4) tighten and loosen | 11 | Fold clothes | (1) take both ends (2) make a first fold (3) make a second fold (4) leave the folded garment on the table |

| 6 | Drink water | (1) hold the glass (2) bring the glass to the mouth (3) tilt the glass and simulate drinking (4) return glass to the table (5) release | 12 | Take object on shelf | (1) raise arm (2) take the object (3) lower arm with object in hand |

| Grade | Speed of Completion | Movement Quality | Skillfulness of Prosthesis Use | Independence |

|---|---|---|---|---|

| Unable (0) | N/A | N/A | No prosthetic use | N/A |

| Poor (1) | Very slow to slow. | Very awkward, many compensatory movements. | Inappropriate choice of grip for the task (if choice is available). Loses grip multiple times during task, lack of proportional control (if available). Multiple unintentional activations of a control. | May or may not use an assistive device. |

| Fair (2) | Slow to medium. | Some awkwardness or compensatory movement. | Sub-optimal choice of grip for the task (if choice is available). Use of prosthesis to assist bimanual or prime mover unilateral activities. Loses grip once during the task. More than one attempt is needed to pre-position the object within grasp and more than minimal awkwardness in positioning the object. One incidence of unintentional activation of a control. | May or may not use an assistive device. |

| Good (3) | Medium-fast to normal. | Minimal to no awkwardness or compensatory movement. | Skilled use of prosthesis as an assist for bimanual activities or as a prime mover for unilateral activities. Quick and easy pre-positioning of the object within grasp. No unintentional loss of grip. | May or may not make use of the assistive device. |

| Excellent (4) | Equivalent to non-disabled. | Excellent movement quality, no awkwardness or compensatory movement. | No unintentional loss of grip or unwanted movement. Optimal choice of grip for the task (if the choice is available). | May or may not make use of the assistive device. |

| Parameter | Index Finger | Middle Finger | Thumb |

|---|---|---|---|

| force (N) | 21.26 | 11.12 | 16.22 |

| current (A) | 1.07 | 1.07 | 0.67 |

| Value | Index Finger | Middle Finger | Thumb |

|---|---|---|---|

| mean | 0.20 | 0.21 | 0.23 |

| maximum | 0.27 | 0.23 | 0.27 |

| minimum | 0.13 | 0.18 | 0.20 |

| SD | 0.04 | 0.02 | 0.03 |

| Data | Algorithm | Parameters | Accuracy | Precision | Recall | F1-Score | Sensitivity | Specificity | Speed * |

|---|---|---|---|---|---|---|---|---|---|

| LV | Cubic SVM | C = 1000, Gamma = 0.1 | 0.977 | 0.979 | 0.974 | 0.976 | 0.964 | 0.989 | 22.9 |

| OB | Cubic SVM | C ≥ 1, Gamma = 1 | 0.992 | 0.994 | 0.990 | 0.992 | 0.990 | 0.995 | 22.9 |

| Both | Cubic SVM | C = 100, Gamma = 0.1 | 0.949 | 0.935 | 0.946 | 0.940 | 0.946 | 0.974 | 1.75 |

| LV | k-Nearest Neighbors | k = 1, metric = manhattan, weights = distance | 0.998 | 0.998 | 0.998 | 0.998 | 0.999 | 0.999 | 4.64 |

| OB | k-Nearest Neighbors | k = 1, metric = manhattan, weights = distance | 0.998 | 0.998 | 0.998 | 0.998 | 0.999 | 0.999 | 5.04 |

| Both | k-Nearest Neighbors | k = 2, metric = manhattan, weights = distance | 0.965 | 0.966 | 0.965 | 0.965 | 0.965 | 0.983 | 0.31 |

| LV | Single Layer Neural Network | units = 50, learning rate = 0.1 | 0.992 | 0.993 | 0.992 | 0.992 | 0.992 | 0.996 | 0.07 |

| OB | Single Layer Neural Network | units = 10, learning rate = 0.1 | 0.992 | 0.991 | 0.994 | 0.992 | 0.994 | 0.996 | 0.07 |

| Both | Single Layer Neural Network | units = 200, learning rate = 0.01 | 0.954 | 0.944 | 0.951 | 0.947 | 0.967 | 0.977 | 0.06 |
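For readers unfamiliar with the metric columns above, the sketch below shows one common way to derive accuracy, precision, recall, F1-score, and specificity from a multi-class confusion matrix, using macro averaging over classes. The averaging scheme is an assumption, since this section does not say how the reported values were averaged. The confusion matrix is hypothetical and the function name is illustrative.

```python
def macro_metrics(cm):
    """Compute accuracy and macro-averaged metrics from a confusion matrix.

    cm[i][j] counts samples of true class i predicted as class j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    correct = sum(cm[k][k] for k in range(n))
    precision, recall, specificity, f1 = [], [], [], []
    for k in range(n):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp                       # class k samples missed
        fp = sum(cm[i][k] for i in range(n)) - tp  # other classes labeled k
        tn = total - tp - fn - fp
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        s = tn / (tn + fp) if tn + fp else 0.0
        precision.append(p)
        recall.append(r)
        specificity.append(s)
        f1.append(2 * p * r / (p + r) if p + r else 0.0)
    return {
        "accuracy": correct / total,
        "precision": sum(precision) / n,
        "recall": sum(recall) / n,
        "f1": sum(f1) / n,
        "specificity": sum(specificity) / n,
    }


# Hypothetical 3-class confusion matrix (not from the study).
cm = [[8, 1, 1],
      [0, 9, 1],
      [1, 0, 9]]
for name, value in macro_metrics(cm).items():
    print(f"{name}: {value:.3f}")
```

Under this per-class definition, sensitivity is the same quantity as recall.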

| Sub-Task | Completion of Sub-Tasks (OB) | Completion of Sub-Tasks (LV) | Speed of Completion (OB) | Speed of Completion (LV) | Movement Quality (OB) | Movement Quality (LV) | Skillfulness of Prosthesis Use (OB) | Skillfulness of Prosthesis Use (LV) | Independence (OB) | Independence (LV) |

|---|---|---|---|---|---|---|---|---|---|---|

| Put on shirt | 2 | 1 | 2 | 1 | 2 | 1 | 0 | 1 | 2 | 2 |

| Take off shirt | 2 | 1 | 2 | 1 | 2 | 1 | 1 | 1 | 3 | 2 |

| Buttoning buttons | 3 | 2 | 2 | 2 | 2 | 2 | 0 | 0 | 2 | 2 |

| Running zipper | 3 | 0 | 2 | 0 | 2 | 0 | 2 | 0 | 1 | 0 |

| Tie shoelace | 3 | 2 | 2 | 2 | 1 | 1 | 2 | 2 | 2 | 2 |

| Drink water | 3 | 0 | 3 | 0 | 2 | 0 | 2 | 2 | 2 | 1 |

| Use cutlery | 1 | 0 | 1 | 1 | 2 | 1 | 1 | 1 | 1 | 1 |

| Pour liquid | 2 | 2 | 2 | 2 | 2 | 2 | 1 | 2 | 2 | 2 |

| Write initials | 3 | 2 | 3 | 3 | 2 | 1 | 2 | 1 | 1 | 1 |

| Dial a number | 2 | 0 | 2 | 2 | 3 | 2 | 2 | 1 | 1 | 2 |

| Fold clothes | 2 | 2 | 2 | 3 | 3 | 2 | 1 | 2 | 2 | 2 |

| Grab on shelf | 1 | 0 | 1 | 0 | 1 | 0 | 1 | 0 | 1 | 0 |

| Aspect | Libra Neurolimb | Hassan et al. [50] | Phukpattaranont et al. [55] | Shen et al. [54] | Chen et al. [56] | Said et al. [57] |

|---|---|---|---|---|---|---|

| SFR * | 100 Hz | 200 Hz | 1024 Hz | 10 kHz | 200 Hz | 200 Hz |

| Gestures | 3 | 7 | 14 | 41 | 5 | 4 |

| Channels | 2 | 8 | 6 | 8 | 8 | 8 |

| Accuracy (%) | SVM 99.2, k-NN 99.8 | SVM 95.26, LDA 92.58, K-NN 86.41 | SVM 93, LC 94, NB 90, KNN 93, RBF-ELM 93, AW-ELM, NN99 | 74 | 89 | 89.93 |

| Data transfer | real-time | online mode ** | offline | real-time | offline | real-time |

| Signal type | sEMG | sEMG | sEMG | sEMG | sEMG | sEMG |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Cifuentes-Cuadros, A.A.; Romero, E.; Caballa, S.; Vega-Centeno, D.; Elias, D.A. The LIBRA NeuroLimb: Hybrid Real-Time Control and Mechatronic Design for Affordable Prosthetics in Developing Regions. Sensors 2024, 24, 70. https://doi.org/10.3390/s24010070
