An Energy-Efficient Routing Protocol with Reinforcement Learning in Software-Defined Wireless Sensor Networks

Abstract

:1. Introduction

- Identify the limitations of current RL-based routing schemes.

- Propose an intelligent multi-objective routing protocol named DOS-RL for SDWSN-IoT.

- Present distinctive features of the proposed routing scheme.

- Implement and evaluate the performance of the proposed solution in comparison to the OSPF and SDN-Q routing schemes in terms of energy efficiency and other related parameters.

2. Background and Related Work

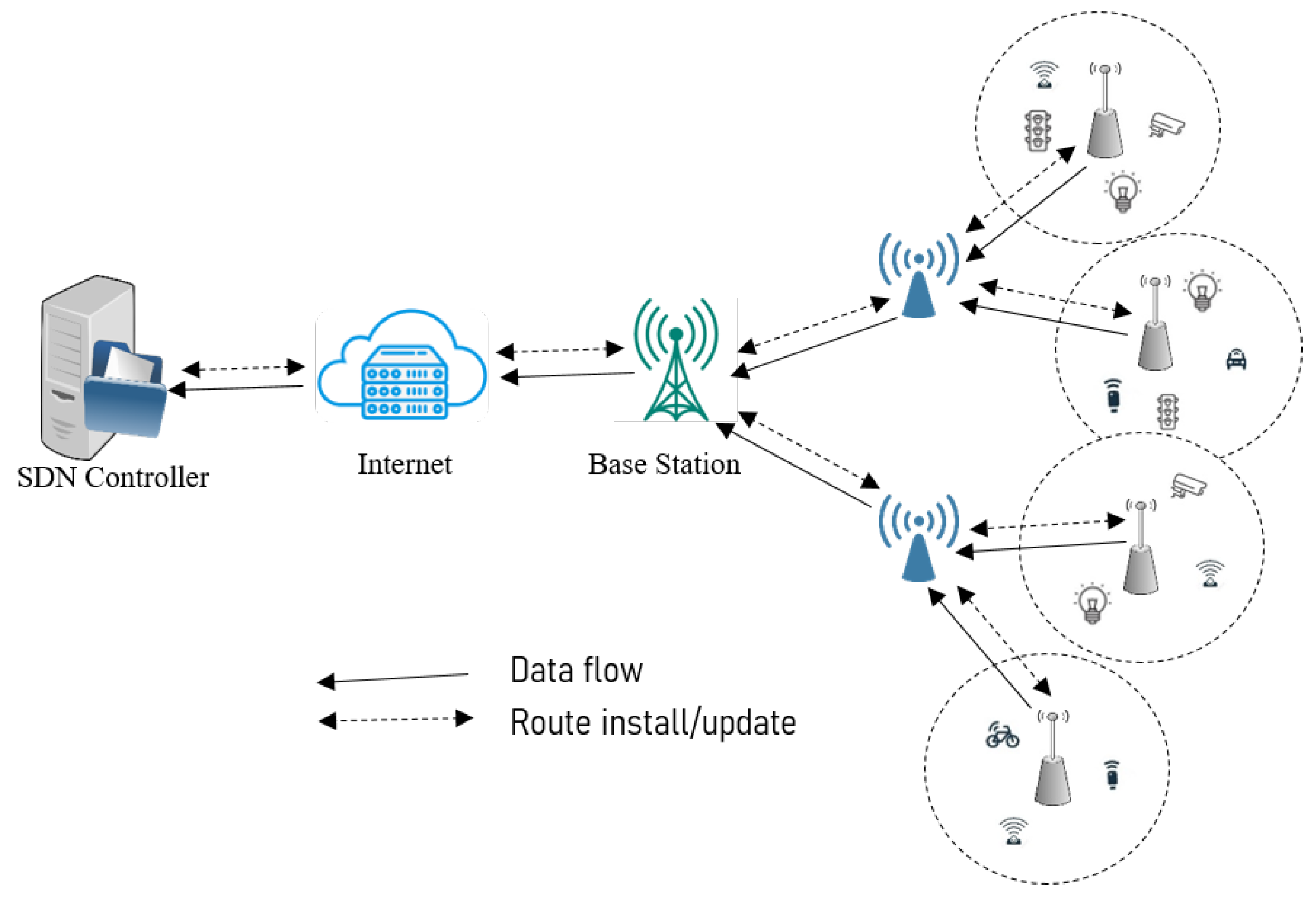

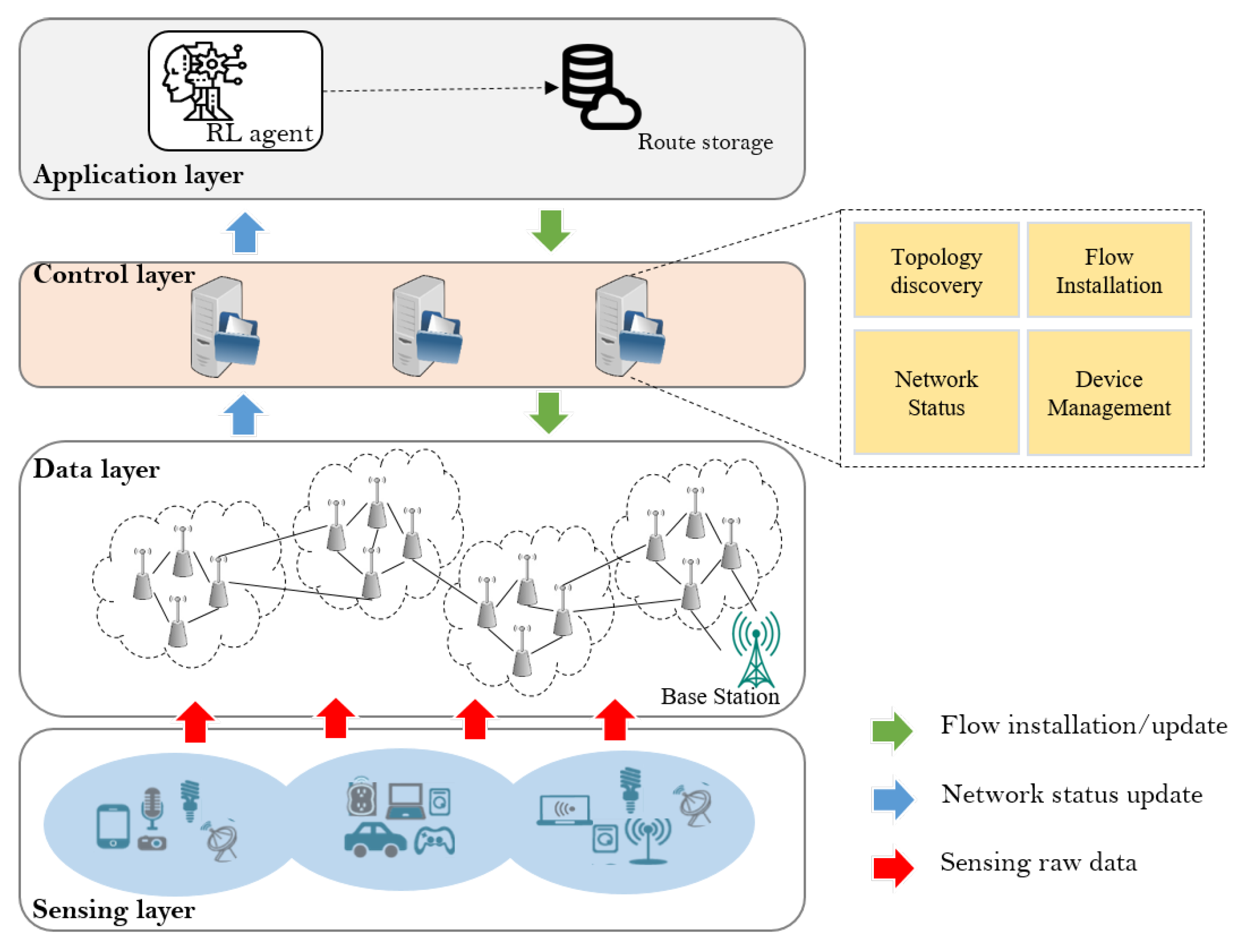

2.1. SDWSN-IoT

- Adaptive Optimization: Machine learning algorithms can analyze real-time network conditions and historical data to make intelligent decisions for optimizing energy consumption in IoT applications. By dynamically adapting to changing network conditions, the algorithms can optimize the operation of sensor nodes, reducing unnecessary energy consumption [21].

- Intelligent Routing: Machine learning algorithms can learn from past routing patterns and make predictions about future network conditions. This enables them to intelligently route data, considering factors such as energy constraints, network congestion, and node availability. By choosing efficient routing paths, energy consumption can be minimized while maintaining effective data transmission [22,23,24].

- Proactive Resource Allocation: Machine learning algorithms can analyze data patterns and predict resource demands in advance. This allows for proactive resource allocation, ensuring that resources, such as energy, computational power, and memory, are efficiently allocated to meet the demands of IoT applications. By optimizing resource allocation, energy efficiency can be significantly improved [25].

- Anomaly Detection: Machine learning algorithms can detect anomalies and abnormal behavior in the network. By identifying unusual patterns or events that may lead to energy wastage or inefficiency, proactive measures can be taken to mitigate such issues and optimize energy efficiency in IoT applications [26].

- Predictive Maintenance: Machine learning algorithms can analyze sensor data and predict potential failures or maintenance requirements. By proactively addressing maintenance needs, energy can be saved by preventing unexpected downtime and optimizing the overall performance of IoT devices [27].

2.2. Reinforcement Learning-Based SDWSN-IoT

3. Methodology

3.1. Preliminary

3.2. Correlated Multi-Objective Energy-Efficient Routing Scheme for SDWSN-IoT

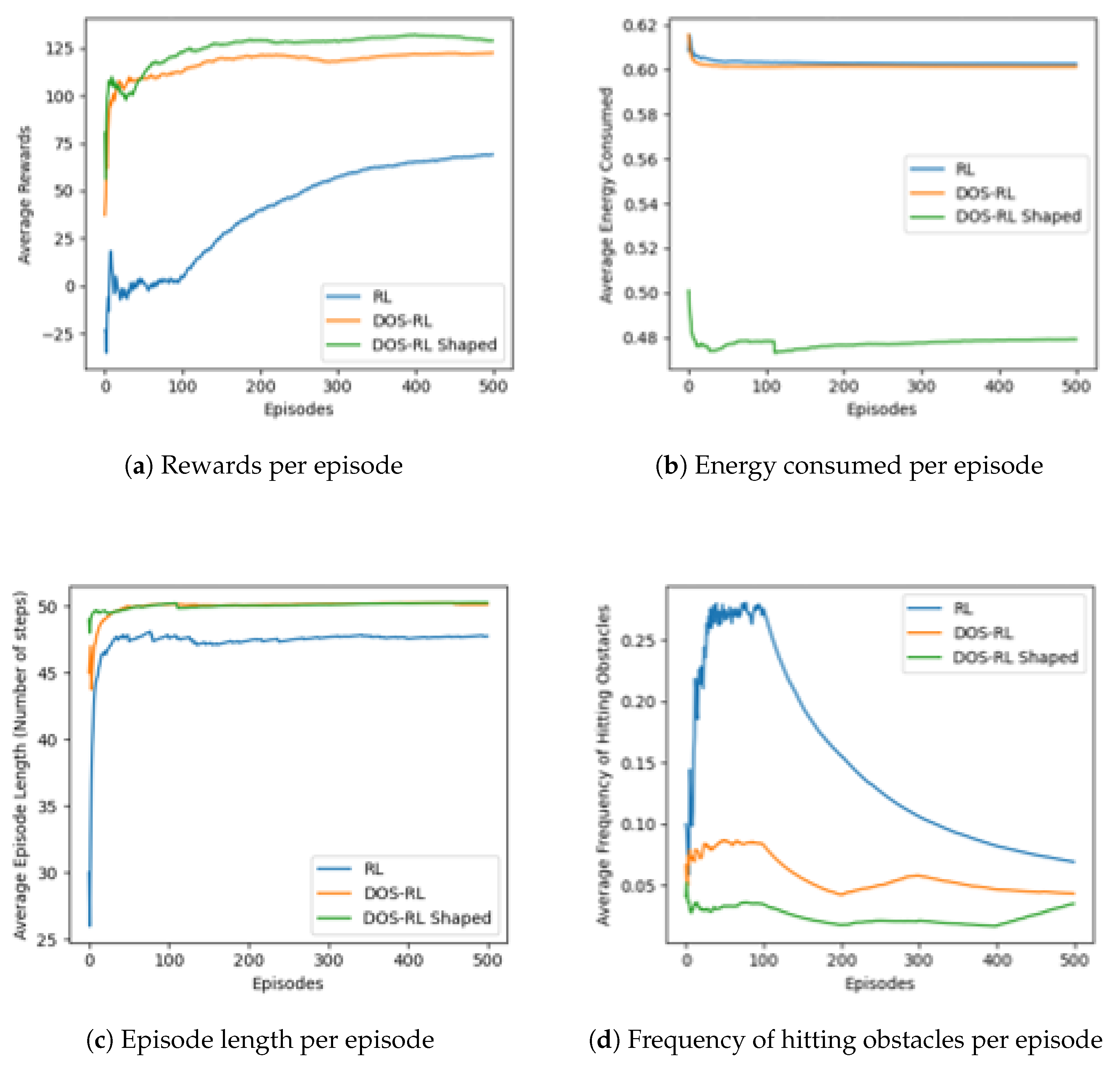

3.2.1. Dynamic Objective Selection RL with Shaped Rewards

| Algorithm 1 Dynamic Objective Selection (DOS) |

|

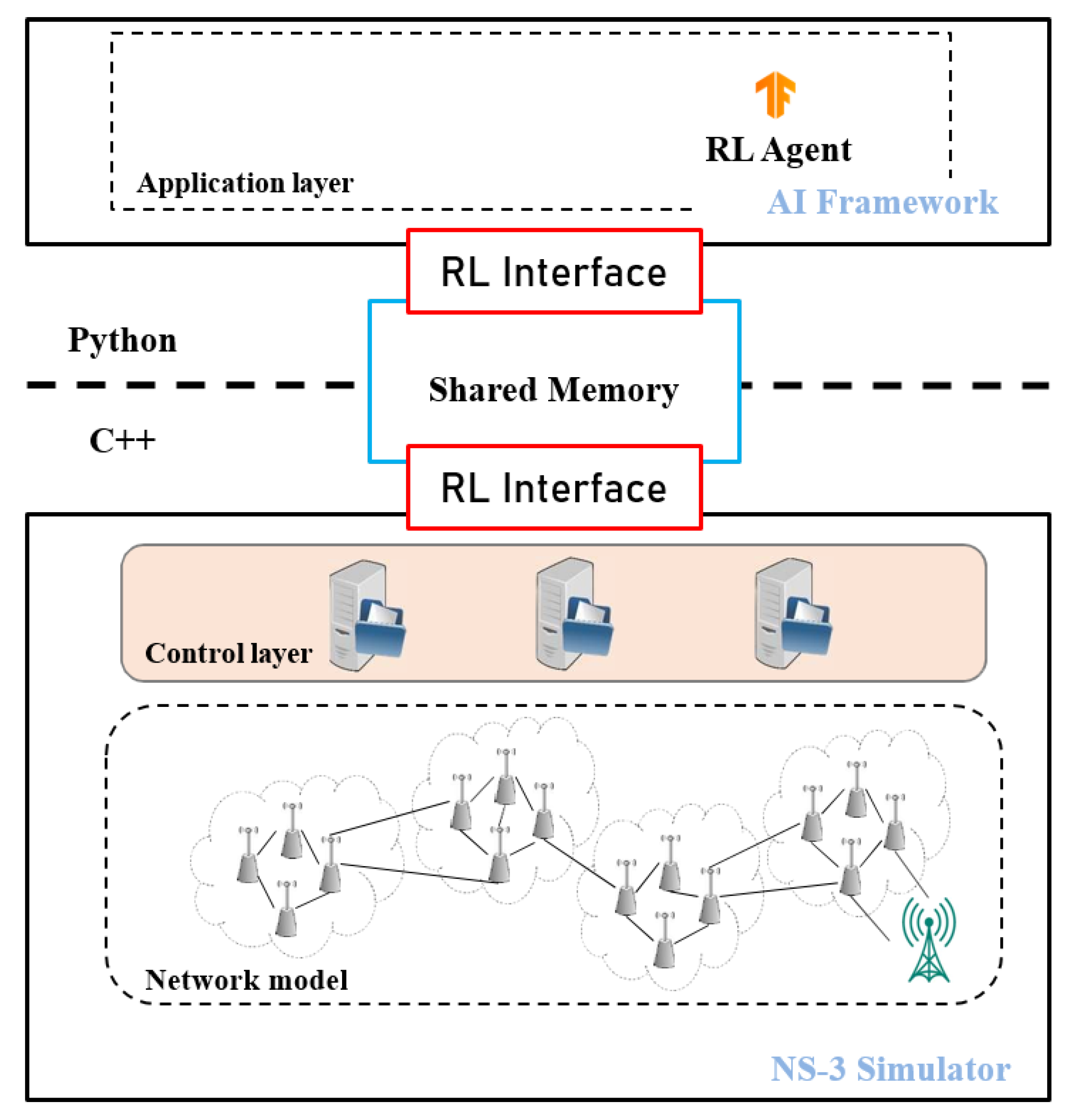

3.2.2. Components and Architecture of the Proposed DOS-RL for the SDWSN-IoT Scheme

3.2.3. The DOS-RL Routing Scheme

- The Reward Function (R): In reinforcement learning, the agent evaluates the effectiveness of its actions and improves its policy by relying on rewards collected from the environment. The rewards obtained are typically dependent on the actions taken, with varying actions resulting in differing rewards. To implement the proposed DOS-RL scheme, we define three reward functions, each corresponding to one of the correlated objectives: the selection of routing paths with sufficient energy for data forwarding (), load balancing (), and the selection of paths with a good link quality for a reliable data transfer (). Route selection based on any of these objectives is expected to improve the energy efficiency of the IoT network system. Details on each objective of the DOS-RL scheme and its related reward functions are provided below:

- (a)

- Energy-consumption: To achieve objective , we consider the energy-consumption parameter, which is a critical factor in determining the overall energy efficiency of an SDWSN: for instance, in a scenario where node i forwards a packet to node j. The reward received by node i for selecting node j as its relay node in terms of energy consumption is estimated by the reward function for the state-action pair, as:where represents the remaining energy of node i in state as a percentage; meanwhile, represents the remaining energy of node j in state as a percentage. The formula calculates the difference in remaining energy between node i and j after the data transmission. A higher value of indicates that node i has consumed less energy in forwarding the packet to node j, which is desirable to achieve the energy efficiency objective .

- (b)

- Load balance: To optimize energy efficiency and network performance in the SDWSN, the careful selection of relay nodes and balanced workload distribution are crucial. Otherwise, some nodes along overused paths may become overloaded, leading to bottlenecks and delays, which can result in a degraded network performance. For objective , we utilize the parameter-available buffer length to estimate the degree of queue congestion in relay nodes. The reward for load balancing as observed by node i when selecting node j is computed as follows:where represents the available buffer length of node j in state , represents the maximum buffer length of a node, represents the current load of node i in state , and represents the maximum load capacity of a node. This formula considers both the available buffer length of node j and the current load of node i. The second term subtracts the load of node i from the load balancing reward value, allowing for a more comprehensive estimation that takes into account both node i and node j in the load balancing process. A higher value of indicates a better load balancing situation for the given state-action pair .

- (c)

- Link quality: A wireless link can be measured by retrieving useful information from either the sender or receiver side. To achieve , we use simple measurements to estimate the link quality based on the parameter packet reception ratio (PRR), measured as the ratio of the total number of packets successfully received to the total number of packets transmitted through a specific wireless link between two nodes. Unlike other sophisticated techniques, this approach involves a low computation and communication overhead. Instead of using an instant value of the PRR, we calculate an average-over-time using an Exponentially-Weighted Moving Average (EWMA) filter. Suppose node i is forwarding data packets to node j, the reward received by node j based on PRR using EWMA is estimated as follows:whereby is the previously estimated average, is the most recent measured value of the packet reception ratio calculated, and is the filter parameter.

- The State Space (S): We define the state space as a graph corresponding to the global topology created by the RNs on the data plane, as seen by the intelligent RL controller. Each state in the state space corresponds to an RN, and a state transition refers to a link connecting two RNs. The intelligent RL controller uses the partial maps created by the topology discovery module to create a global topology. Therefore, the cardinality of the set of states depends on the number of nodes that can actively participate in routing.

- The Action Space (A): The action space, denoted as A, includes all possible actions that an agent can undertake from a given state of the RL environment. It defines the choices available to the agent at each time step, presenting the range of options to the agent. In our specific problem, the discrete action space comprises a finite number of actions that the RL agent can select when in a particular state . The cardinality of A at state i is determined by the number of nodes eligible to participate in the routing process from that specific state.

- The Optimal Policy (P): The policy determines how the learning agent should behave when it is at a given state with the purpose of maximizing the reward value in the learning process. Our proposed scheme estimates the Q-function of every objective o simultaneously and decides, before every action selection decision, which objective estimate an agent will consider in its decision-making process. We use the concept of confidence on computed Q-values by representing each action as a distribution and using a normal distribution of Q-values and the mean values to keep track of the variance as shown in Equation (2). The agent approximates the optimal Q-function by visiting all pairs of action-states and stores the updated Q-values in the Q-table. In our proposed scheme, the approximated Q-value represents the expected cumulative reward when the RL agent is in the state and takes action , transitioning to a new state while maximizing the cumulative rewards for an objective , where and N denote the set of all objectives. The Q-learning equation to update Q-values is designed as shown below in Equation (7):To steer the exploration behavior of the learning agent by incorporating some heuristic knowledge on the problem domain, we introduce an extra reward onto the reward received from the environment . The newly added shaping reward function F is included when updating the Q-learning rule as follows:To avoid changes on the optimal policy, F is implemented as the difference of some potential value, = over the state space, where is a potential function that provides some hints on states. In our study, we define as the Normalized Euclidean Distance between the current state(s) and the goal state(G) expressed as:where is the Euclidean distance between the current state and the goal state G, and represents the maximum distance between any state x in the state space S and the goal state G. The potential function guides the agent towards the goal state by giving bigger rewards to states that are closer to the goal and smaller rewards for states that are farther away.

- In DOS Q-routing: To find the best action-value of the Q-function, the learning agents use an action selection mechanism to trade-off between the exploitation and exploration of available action space. To achieve this, our proposed scheme uses the -greedy exploration and exploitation method, whereby , allowing the agent to exploit with probability pr = and explore with probability pr = 1 −. The agent action selection is determined by a randomly generated number , of which if , the agent exploits it by taking an action that returns the most expected optimal value; otherwise, it explores it by selecting a random action based on the most confident objective as observed from the current state by the learning agent as shown below:

3.2.4. DOS-RL Routing Algorithm

| Algorithm 2 The DOS-RL with Shaped Rewards Routing Scheme |

|

- Initialization and Setup: The intelligent controller is initialized and assigned an IP address. Partial topologies received from the controllers are used to create a global topology containing the eligible RNs. Set the learning rate , discount factor , and exploration rate , and define the potential function to provide hints on states for shaping rewards (Lines 2 to 7).

- Loop-Free Route Calculation: Loop-free routes are computed using the Spanning Tree Protocol (STP) algorithm for all given source-sink pairs. This step ensures that the Q-learning algorithm focuses only on exploring states that lead to the end goal. Here, a source node is defined as any RN that receives data from the SN(s) at the sensing layer (Line 8).

- Q-Routing Exploration: The set of computed loop-free routes serves as input for the SDN intelligent controller, which uses the Q-routing algorithm to find the best path for routing. The Q-learning process runs for a specified number of episodes or until the final state is reached. For each state , compute Q-values for every action for each objective o simultaneously, based on the current Q-table values (Lines 9 to 13).

- Q-Learning Process: During the learning process, the RL agent dynamically chooses the learning objective from the current state, takes the next action, and moves to the next state. Regarding the randomly generated number x ranging between 0 and 1, according to the exploration and exploitation -greedy method, if x < , then exploit with the probability by selecting the action with the highest Q-value for the most confident objective . Otherwise, explore with the probability by randomly selecting an action for the most confident objective (Lines 14 to 19).

- Optimal Route Determination: The RL agent uses the generated Q-table to determine the most rewarding route between the source and sink nodes. This is based on the state-action combinations that received the highest Q-values after completing a transition. Finally, all found optimum routes are stored and sent to respective controllers. These routes are installed or updated in the routing table of the RNs for immediate use in the network.

3.2.5. Algorithm Time Complexity Analysis

4. Performance Evaluation

4.1. Simulation Environment

4.2. Simulation Settings

4.3. Simulation Parameters Setup

4.4. Performance Evaluation

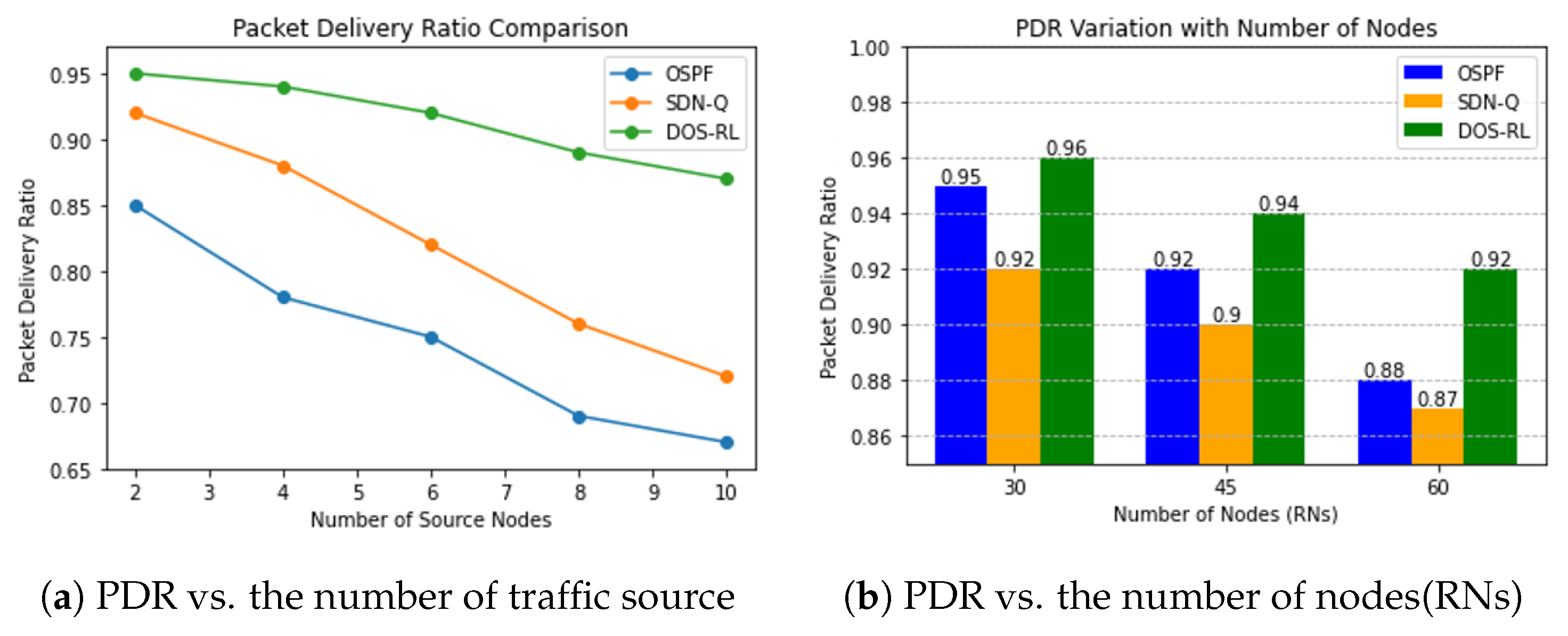

- PDR: This refers to the ratio of successfully delivered packets to the total number of packets sent within the network. It is a measure of the effectiveness of the routing protocols and communication infrastructure in ensuring that packets reach their intended destinations without loss or errors. A higher PDR indicates a more reliable and efficient network, whereas a lower PDR suggests potential issues such as packet loss, congestion, or faulty routing. Monitoring and optimizing the PDR is crucial in evaluating and improving the overall performance and reliability of the WSNs.

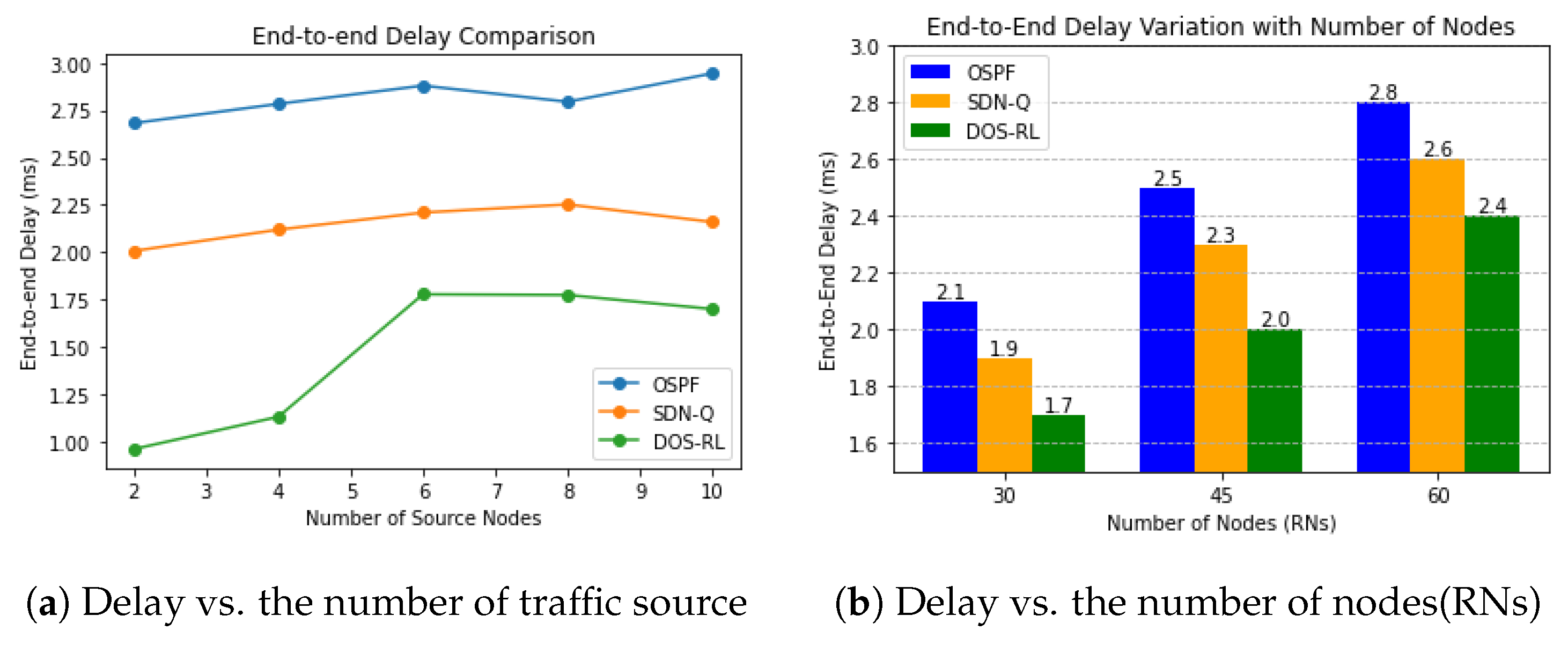

- E2E: This is the time it takes for a packet to travel from the source node to the destination node. It includes processing, queuing, transmission, and propagation delays. A lower delay is better for faster and more efficient communication. Minimizing the end-to-end delay is crucial in the WSNs to ensure timely and reliable data delivery.

- EE: This refers to the ability of the network to perform its intended tasks and communication while utilizing minimal energy resources. We defined this metric as the ratio of the PDR to the average energy consumed by the RNs. It is a critical consideration in the WSNs due to the limited and often non-rechargeable energy sources available to the sensor nodes. By enhancing energy efficiency, the WSNs can achieve a longer network lifetime, extended monitoring capabilities, and reduced maintenance requirements.

4.4.1. Packet Delivery Ratio of Routing Protocols

4.4.2. End-To-End Delay of Routing Protocols

4.4.3. Energy Efficiency of Routing Protocols

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Silva, B.N.; Khan, M.; Han, K. Towards sustainable smart cities: A review of trends, architectures, components, and open challenges in smart cities. Sustain. Cities Soc. 2018, 38, 697–713. [Google Scholar] [CrossRef]

- Jin, J.; Gubbi, J.; Marusic, S.; Palaniswami, M. An information framework for creating a smart city through internet of things. IEEE Internet Things J. 2014, 1, 112–121. [Google Scholar] [CrossRef]

- Syed, A.S.; Sierra-Sosa, D.; Kumar, A.; Elmaghraby, A. IoT in smart cities: A survey of technologies, practices and challenges. Smart Cities 2021, 4, 429–475. [Google Scholar] [CrossRef]

- Mainetti, L.; Patrono, L.; Vilei, A. Evolution of wireless sensor networks towards the internet of things: A survey. In Proceedings of the IEEE SoftCOM 2011, 19th International Conference on Software, Telecommunications and Computer Networks, Split, Croatia, 15–17 September 2011; pp. 1–6. [Google Scholar]

- Ngu, A.H.; Gutierrez, M.; Metsis, V.; Nepal, S.; Sheng, Q.Z. IoT middleware: A survey on issues and enabling technologies. IEEE Internet Things J. 2016, 4, 1–20. [Google Scholar] [CrossRef]

- Ijemaru, G.K.; Ang, K.L.M.; Seng, J.K. Wireless power transfer and energy harvesting in distributed sensor networks: Survey, opportunities, and challenges. Int. J. Distrib. Sens. Netw. 2022, 18, 15501477211067740. [Google Scholar] [CrossRef]

- Amini, N.; Vahdatpour, A.; Xu, W.; Gerla, M.; Sarrafzadeh, M. Cluster size optimization in sensor networks with decentralized cluster-based protocols. Comput. Commun. 2012, 35, 207–220. [Google Scholar] [CrossRef] [PubMed]

- Kobo, H.I.; Abu-Mahfouz, A.M.; Hancke, G.P. A survey on software-defined wireless sensor networks: Challenges and design requirements. IEEE Access 2017, 5, 1872–1899. [Google Scholar] [CrossRef]

- Zhao, Y.; Li, Y.; Zhang, X.; Geng, G.; Zhang, W.; Sun, Y. A survey of networking applications applying the software defined networking concept based on machine learning. IEEE Access 2019, 7, 95397–95417. [Google Scholar] [CrossRef]

- Da Xu, L.; He, W.; Li, S. Internet of things in industries: A survey. IEEE Trans. Ind. Inform. 2014, 10, 2233–2243. [Google Scholar]

- Sharma, N.; Shamkuwar, M.; Singh, I. The history, present, and future with IoT. In Internet of Things and Big Data Analytics for Smart Generation; Springer: Cham, Switzerland, 2019; pp. 27–51. [Google Scholar]

- Al Nuaimi, E.; Al Neyadi, H.; Mohamed, N.; Al-Jaroodi, J. Applications of big data to smart cities. J. Internet Serv. Appl. 2015, 6, 1–15. [Google Scholar] [CrossRef]

- Nishi, H.; Nakamura, Y. IoT-based monitoring for smart community. Urban Syst. Des. 2020, 335–344. [Google Scholar]

- Al-Turjman, F.; Zahmatkesh, H.; Shahroze, R. An overview of security and privacy in smart cities’ IoT communications. Trans. Emerg. Telecommun. Technol. 2022, 33, e3677. [Google Scholar] [CrossRef]

- Bukar, U.A.; Othman, M. Architectural design, improvement, and challenges of distributed software-defined wireless sensor networks. Wirel. Pers. Commun. 2022, 122, 2395–2439. [Google Scholar] [CrossRef]

- Faheem, M.; Butt, R.A.; Raza, B.; Ashraf, M.W.; Ngadi, M.A.; Gungor, V.C. Energy efficient and reliable data gathering using internet of software-defined mobile sinks for WSNs-based smart grid applications. Comput. Stand. Interfaces 2019, 66, 103341. [Google Scholar] [CrossRef]

- Luo, T.; Tan, H.P.; Quek, T.Q. Sensor OpenFlow: Enabling software-defined wireless sensor networks. IEEE Commun. Lett. 2012, 16, 1896–1899. [Google Scholar] [CrossRef]

- Mathebula, I.; Isong, B.; Gasela, N.; Abu-Mahfouz, A.M. Analysis of SDN-based security challenges and solution approaches for SDWSN usage. In Proceedings of the 2019 IEEE 28th International Symposium on Industrial Electronics (ISIE), Vancouver, BC, Canada, 12–14 June 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1288–1293. [Google Scholar]

- Jurado-Lasso, F.F.; Marchegiani, L.; Jurado, J.F.; Abu-Mahfouz, A.M.; Fafoutis, X. A survey on machine learning software-defined wireless sensor networks (ml-SDWSNS): Current status and major challenges. IEEE Access 2022, 10, 23560–23592. [Google Scholar] [CrossRef]

- Shah, S.F.A.; Iqbal, M.; Aziz, Z.; Rana, T.A.; Khalid, A.; Cheah, Y.N.; Arif, M. The role of machine learning and the internet of things in smart buildings for energy efficiency. Appl. Sci. 2022, 12, 7882. [Google Scholar] [CrossRef]

- Suryadevara, N.K. Energy and latency reductions at the fog gateway using a machine learning classifier. Sustain. Comput. Inform. Syst. 2021, 31, 100582. [Google Scholar] [CrossRef]

- Musaddiq, A.; Nain, Z.; Ahmad Qadri, Y.; Ali, R.; Kim, S.W. Reinforcement learning-enabled cross-layer optimization for low-power and lossy networks under heterogeneous traffic patterns. Sensors 2020, 20, 4158. [Google Scholar] [CrossRef]

- Mao, Q.; Hu, F.; Hao, Q. Deep learning for intelligent wireless networks: A comprehensive survey. IEEE Commun. Surv. Tutor. 2018, 20, 2595–2621. [Google Scholar] [CrossRef]

- Alsheikh, M.A.; Lin, S.; Niyato, D.; Tan, H.P. Machine learning in wireless sensor networks: Algorithms, strategies, and applications. IEEE Commun. Surv. Tutor. 2014, 16, 1996–2018. [Google Scholar] [CrossRef]

- Wang, M.; Cui, Y.; Wang, X.; Xiao, S.; Jiang, J. Machine learning for networking: Workflow, advances and opportunities. IEEE Netw. 2017, 32, 92–99. [Google Scholar] [CrossRef]

- DeMedeiros, K.; Hendawi, A.; Alvarez, M. A survey of AI-based anomaly detection in IoT and sensor networks. Sensors 2023, 23, 1352. [Google Scholar] [CrossRef] [PubMed]

- Kanawaday, A.; Sane, A. Machine learning for predictive maintenance of industrial machines using IoT sensor data. In Proceedings of the 2017 8th IEEE International Conference on Software Engineering and Service Science (ICSESS), Beijing, China, 24–26 November 2017; pp. 87–90. [Google Scholar]

- Sellami, B.; Hakiri, A.; Yahia, S.B.; Berthou, P. Energy-aware task scheduling and offloading using deep reinforcement learning in SDN-enabled IoT network. Comput. Netw. 2022, 210, 108957. [Google Scholar] [CrossRef]

- Ouhab, A.; Abreu, T.; Slimani, H.; Mellouk, A. Energy-efficient clustering and routing algorithm for large-scale SDN-based IoT monitoring. In Proceedings of the ICC 2020—2020 IEEE International Conference on Communications (ICC), Dublin, Ireland, 7–11 June 2020; pp. 1–6. [Google Scholar]

- Xu, S.; Liu, Q.; Gong, B.; Qi, F.; Guo, S.; Qiu, X.; Yang, C. RJCC: Reinforcement-learning-based joint communicational-and-computational resource allocation mechanism for smart city IoT. IEEE Internet Things J. 2020, 7, 8059–8076. [Google Scholar] [CrossRef]

- Yao, H.; Mai, T.; Xu, X.; Zhang, P.; Li, M.; Liu, Y. NetworkAI: An intelligent network architecture for self-learning control strategies in software defined networks. IEEE Internet Things J. 2018, 5, 4319–4327. [Google Scholar] [CrossRef]

- Younus, M.U.; Khan, M.K.; Bhatti, A.R. Improving the software-defined wireless sensor networks routing performance using reinforcement learning. IEEE Internet Things J. 2021, 9, 3495–3508. [Google Scholar] [CrossRef]

- Yu, C.; Lan, J.; Guo, Z.; Hu, Y. DROM: Optimizing the routing in software-defined networks with deep reinforcement learning. IEEE Access 2018, 6, 64533–64539. [Google Scholar] [CrossRef]

- Andres, A.; Villar-Rodriguez, E.; Martinez, A.D.; Del Ser, J. Collaborative exploration and reinforcement learning between heterogeneously skilled agents in environments with sparse rewards. In Proceedings of the 2021 International Joint Conference on Neural Networks (IJCNN), Virtual, 18–22 July 2021; pp. 1–10. [Google Scholar]

- Mammeri, Z. Reinforcement learning based routing in networks: Review and classification of approaches. IEEE Access 2019, 7, 55916–55950. [Google Scholar] [CrossRef]

- Marler, R.T.; Arora, J.S. The weighted sum method for multi-objective optimization: New insights. Struct. Multidiscip. Optim. 2010, 41, 853–862. [Google Scholar] [CrossRef]

- Kiran, B.R.; Sobh, I.; Talpaert, V.; Mannion, P.; Al Sallab, A.A.; Yogamani, S.; Pérez, P. Deep reinforcement learning for autonomous driving: A survey. IEEE Trans. Intell. Transp. Syst. 2021, 23, 4909–4926. [Google Scholar] [CrossRef]

- Brys, T.; Van Moffaert, K.; Nowé, A.; Taylor, M.E. Adaptive objective selection for correlated objectives in multi-objective reinforcement learning. In Proceedings of the 2014 International Conference on Autonomous Agents and Multi-Agent Systems, Paris, France, 5–9 May 2014; pp. 1349–1350. [Google Scholar]

- Alkama, L.; Bouallouche-Medjkoune, L. IEEE 802.15. 4 historical revolution versions: A survey. Computing 2021, 103, 99–131. [Google Scholar] [CrossRef]

- Maleki, M.; Hakami, V.; Dehghan, M. A model-based reinforcement learning algorithm for routing in energy harvesting mobile ad-hoc networks. Wirel. Pers. Commun. 2017, 95, 3119–3139. [Google Scholar] [CrossRef]

- Henderson, T.R.; Lacage, M.; Riley, G.F.; Dowell, C.; Kopena, J. Network simulations with the ns-3 simulator. SIGCOMM Demonstr. 2008, 14, 527. [Google Scholar]

- Yin, H.; Liu, P.; Liu, K.; Cao, L.; Zhang, L.; Gao, Y.; Hei, X. ns3-ai: Fostering artificial intelligence algorithms for networking research. In Proceedings of the 2020 Workshop on ns-3, Gaithersburg, MD, USA, 17–18 June 2020; pp. 57–64. [Google Scholar]

- Gawłowicz, P.; Zubow, A. Ns-3 meets openai gym: The playground for machine learning in networking research. In Proceedings of the 22nd International ACM Conference on Modeling, Analysis and Simulation of Wireless and Mobile Systems, Miami Beach, FL, USA, 25–29 November 2019; pp. 113–120. [Google Scholar]

| Parameters | Value | Description |

|---|---|---|

| 0.1 | Learning rate | |

| 0.99 | The discount factor | |

| 0.3 | Exploration rate | |

| 0.01 | Minimum exploration rate | |

| 0.995 | Exploration decay rate |

| Parameters | Value/Description |

|---|---|

| Traffic type | UDP |

| Learning rate | 0.3 |

| Number of RNs | 30, 45, and 60 |

| Discount factor | 0.85 |

| Packet size (Bits) | 512 |

| Simulation Time (s) | 200 |

| Number of source nodes | 2, 4, 6, 8, and 10 |

| Deployment of sensor nodes | Random |

| Packet generation rate (pkts/sec) | 10 |

| The initial energy of sensor nodes | 5 Joules |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Godfrey, D.; Suh, B.; Lim, B.H.; Lee, K.-C.; Kim, K.-I. An Energy-Efficient Routing Protocol with Reinforcement Learning in Software-Defined Wireless Sensor Networks. Sensors 2023, 23, 8435. https://doi.org/10.3390/s23208435

Godfrey D, Suh B, Lim BH, Lee K-C, Kim K-I. An Energy-Efficient Routing Protocol with Reinforcement Learning in Software-Defined Wireless Sensor Networks. Sensors. 2023; 23(20):8435. https://doi.org/10.3390/s23208435

Chicago/Turabian StyleGodfrey, Daniel, BeomKyu Suh, Byung Hyun Lim, Kyu-Chul Lee, and Ki-Il Kim. 2023. "An Energy-Efficient Routing Protocol with Reinforcement Learning in Software-Defined Wireless Sensor Networks" Sensors 23, no. 20: 8435. https://doi.org/10.3390/s23208435

APA StyleGodfrey, D., Suh, B., Lim, B. H., Lee, K.-C., & Kim, K.-I. (2023). An Energy-Efficient Routing Protocol with Reinforcement Learning in Software-Defined Wireless Sensor Networks. Sensors, 23(20), 8435. https://doi.org/10.3390/s23208435