IDAF: Iterative Dual-Scale Attentional Fusion Network for Automatic Modulation Recognition

Abstract

1. Introduction

- We propose a deep learning method based on iterative dual-scale attentional fusion (iDAF), which exploits the complementary properties of multimodal information to achieve better recognition.

- We design two embedding layers to extract local and global information, capturing recognition-relevant features from receptive fields of different sizes. The extracted features are sent into the iterative dual-channel attention module (iDCAM), which consists of a local branch and a global branch. The branches focus, respectively, on the details of the high-level features and on the variability across modalities.

- Experiments on the RML2016.10A dataset demonstrate the validity and soundness of iDAF. The highest accuracy of 93.5% is achieved at 10 dB, and the recognition accuracy over the full SNR range is 0.6232.

2. Related Works

2.1. Research on Traditional AMR Methods

2.2. Study of Different Inputs and DL-Models

3. The Proposed Method

3.1. Data Preprocessing

- In-phase/quadrature (I/Q): Generally, the receiver stores the signal in I/Q form to facilitate mathematical operations and hardware design, which is expressed as follows:

  $$x(n) = I(n) + jQ(n) = \Re\{x(n)\} + j\,\Im\{x(n)\},$$

  where I and Q represent the in-phase and quadrature components, and $\Re\{\cdot\}$ and $\Im\{\cdot\}$ refer to the real and imaginary parts of the signal, respectively.

- Amplitude/phase (AP): The instantaneous amplitude and phase of the signal are calculated as:

  $$A(n) = \sqrt{I(n)^2 + Q(n)^2}, \qquad \phi(n) = \arctan\!\left(\frac{Q(n)}{I(n)}\right),$$

  where n indexes the signal samples.

- Spectrum (SP): The spectrum expresses the change of frequency over time, which is an important feature for discriminating between modulations. The spectrum is calculated for the power orders n = 1, 2, and 4, which correspond to the Welch spectrum, square spectrum, and fourth-power spectrum, respectively. Here, M1 and M2 represent the signal waveform and frequency, and M3 refers to the signal's time–frequency characteristics. The feature vectors of the three modalities were normalized into (batchsize ).
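The three modalities described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' preprocessing code: the function name `iq_to_modalities` is hypothetical, the n-th power is assumed to be applied to the complex signal before the transform, and a plain FFT magnitude stands in for the Welch estimator.

```python
import numpy as np

def iq_to_modalities(iq):
    """Derive AP and SP features from an I/Q array of shape (2, N).

    Hypothetical helper: row 0 is I, row 1 is Q.
    """
    i, q = iq[0], iq[1]
    x = i + 1j * q                      # complex baseband signal

    amplitude = np.abs(x)               # sqrt(I^2 + Q^2)
    phase = np.angle(x)                 # four-quadrant arctan of Q / I
    ap = np.stack([amplitude, phase])   # shape (2, N)

    # Assumption: spectrum of x**n for n = 1, 2, 4, using a plain FFT
    # magnitude instead of a Welch estimate.
    sp = np.stack([np.abs(np.fft.fft(x ** n)) for n in (1, 2, 4)])  # (3, N)
    return ap, sp

# Usage on a synthetic complex tone with 128 samples
n = np.arange(128)
tone = np.exp(2j * np.pi * 5 * n / 128)
ap, sp = iq_to_modalities(np.stack([tone.real, tone.imag]))
print(ap.shape, sp.shape)
```

Raising the signal to the second and fourth power moves the tone to twice and four times its original frequency bin, which is the kind of order-dependent spectral line that helps separate modulation families.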

3.2. Iterative Dual-Scale Attentional Fusion (iDAF)

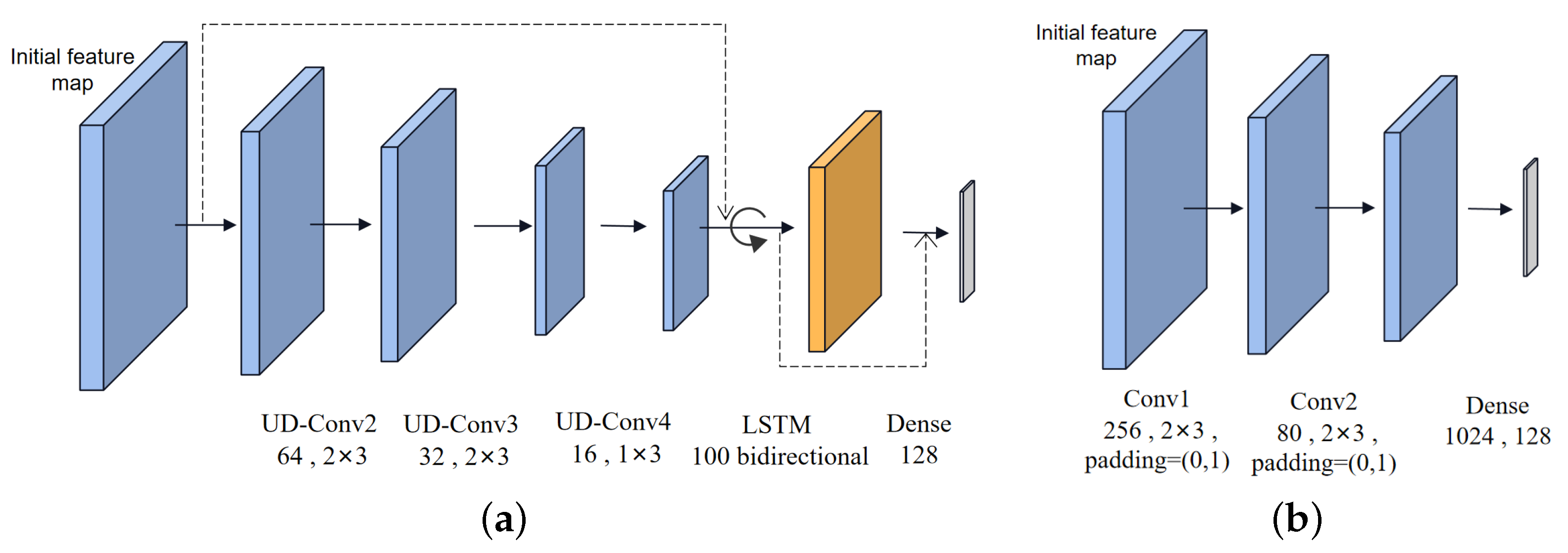

3.2.1. Data Embedding

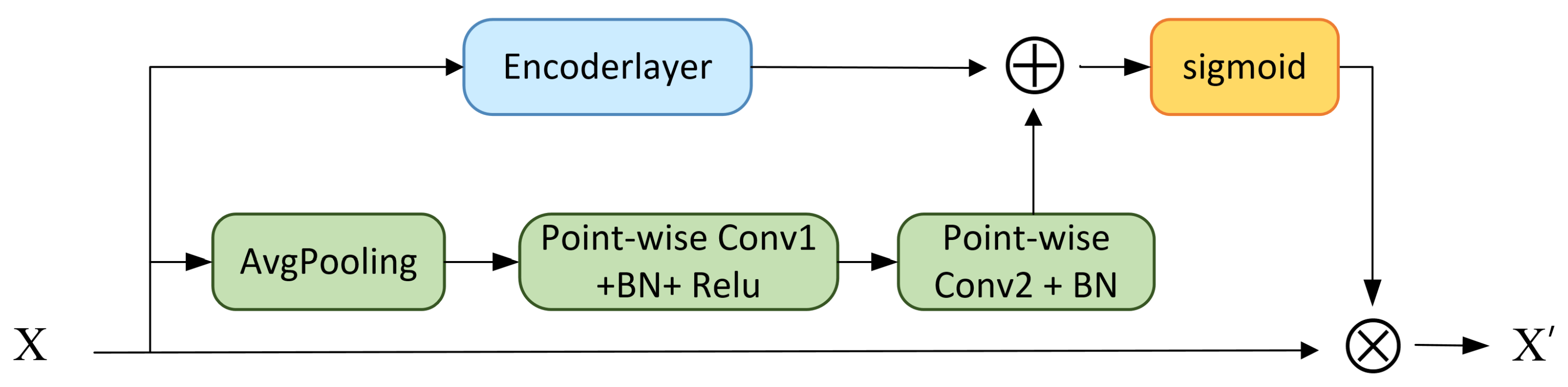

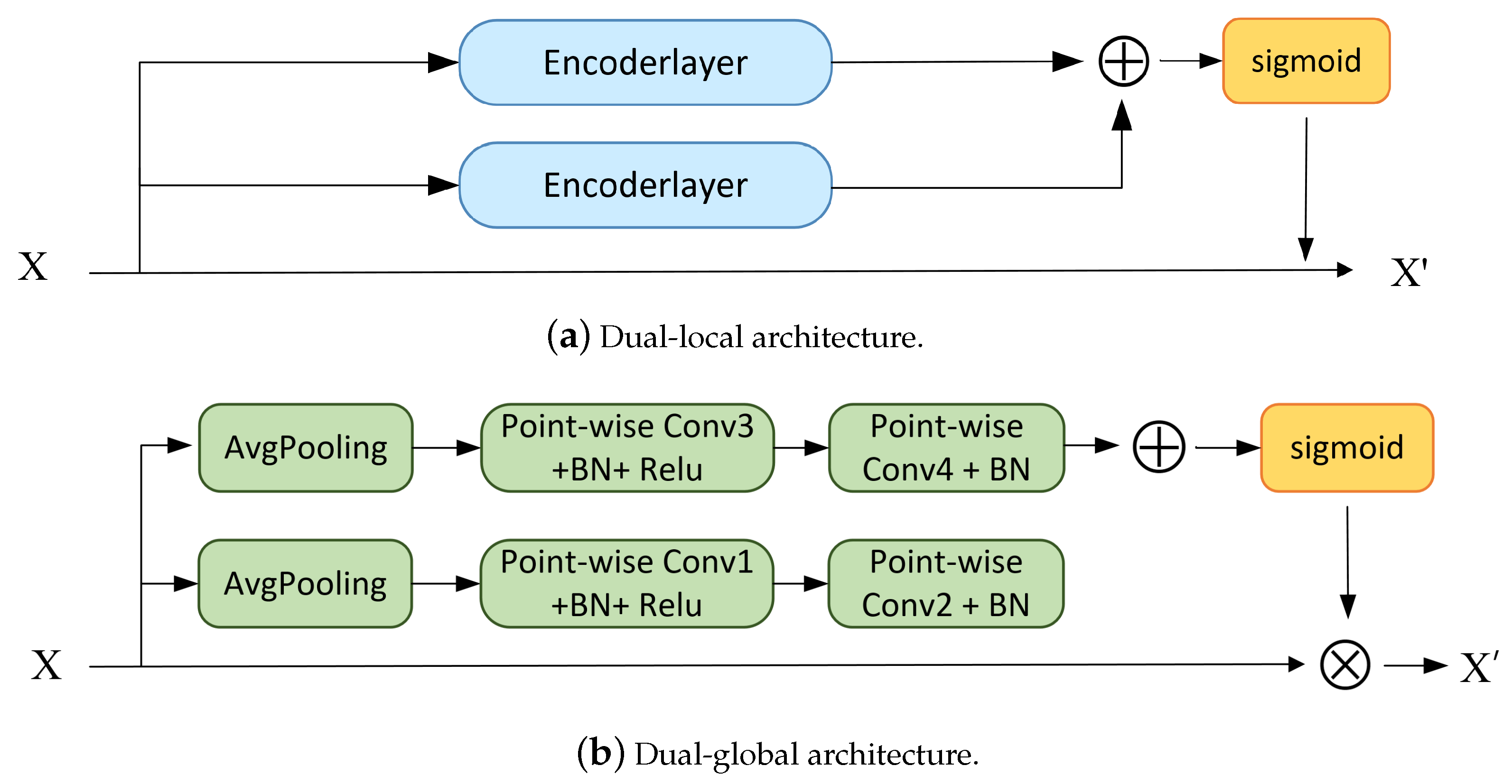

3.2.2. Dual-Scale Channel Attention Module

- (1) Pass the features through the encoder.

- (2) Construct the global channel attention matrix.

- (3) Multiply the attention matrix with the original features.
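The three steps above can be illustrated with a small NumPy sketch. Everything specific here is an assumption made for illustration: the encoder is a placeholder linear map followed by `tanh`, the attention matrix is a row-wise softmax over channel similarities, and the names `global_channel_attention` and `w_enc` are hypothetical rather than taken from the paper.

```python
import numpy as np

def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def global_channel_attention(features, w_enc):
    """Apply a channel attention matrix to features of shape (C, L).

    w_enc is a hypothetical (C, C) encoder weight matrix.
    """
    # Step 1: pass the features through a (placeholder) encoder.
    encoded = np.tanh(w_enc @ features)                       # (C, L)

    # Step 2: build a (C, C) channel attention matrix from
    # scaled channel-to-channel similarities.
    scores = encoded @ encoded.T / np.sqrt(features.shape[1])
    attn = softmax(scores, axis=-1)

    # Step 3: multiply the attention matrix with the original features.
    return attn @ features, attn

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 16))
out, attn = global_channel_attention(x, np.eye(4))
print(out.shape, attn.sum(axis=-1))
```

Each output channel is a convex combination of the input channels, so the module reweights information across channels without changing the feature shape.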

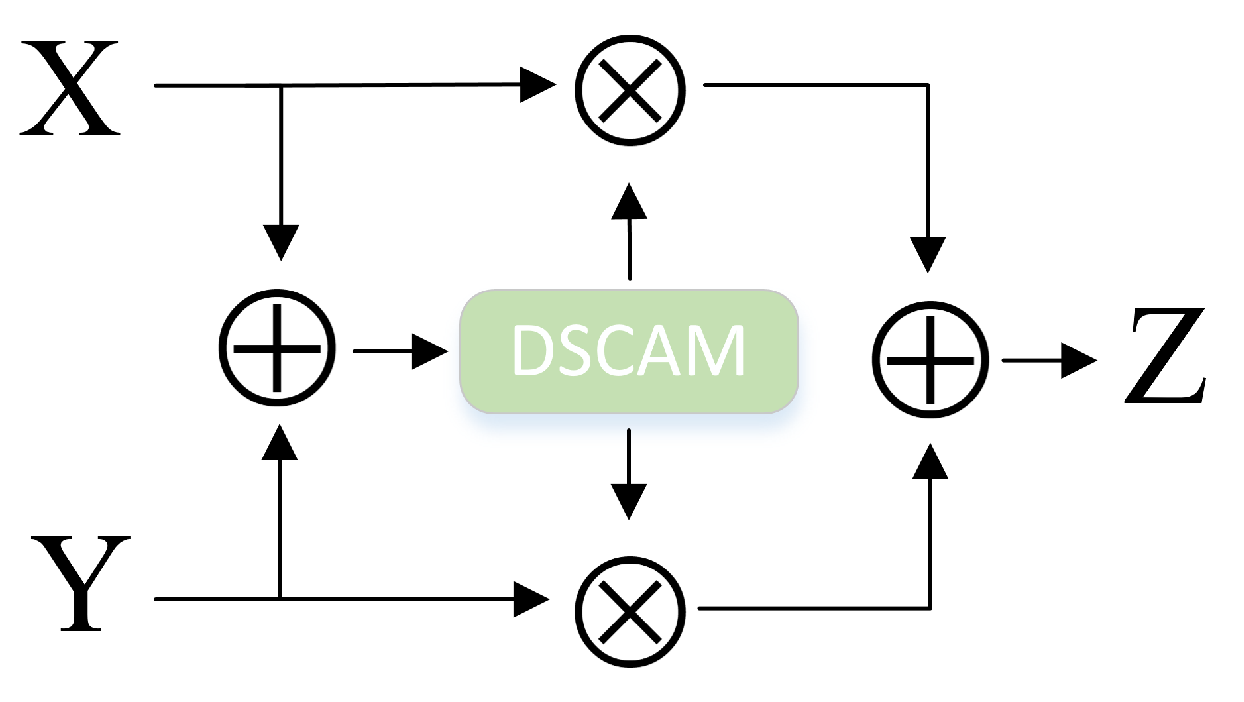

3.2.3. Iterative Dual-Channel Attention Module (iDCAM)

Algorithm 1 IDAF

3.2.4. Cross-Self-Attention Encoder

4. Experiment Results and Discussion

4.1. Datasets and Implementation Details

4.1.1. Datasets and Implementation Details

4.1.2. Evaluation Metrics

4.2. Comparative Validity Experiments

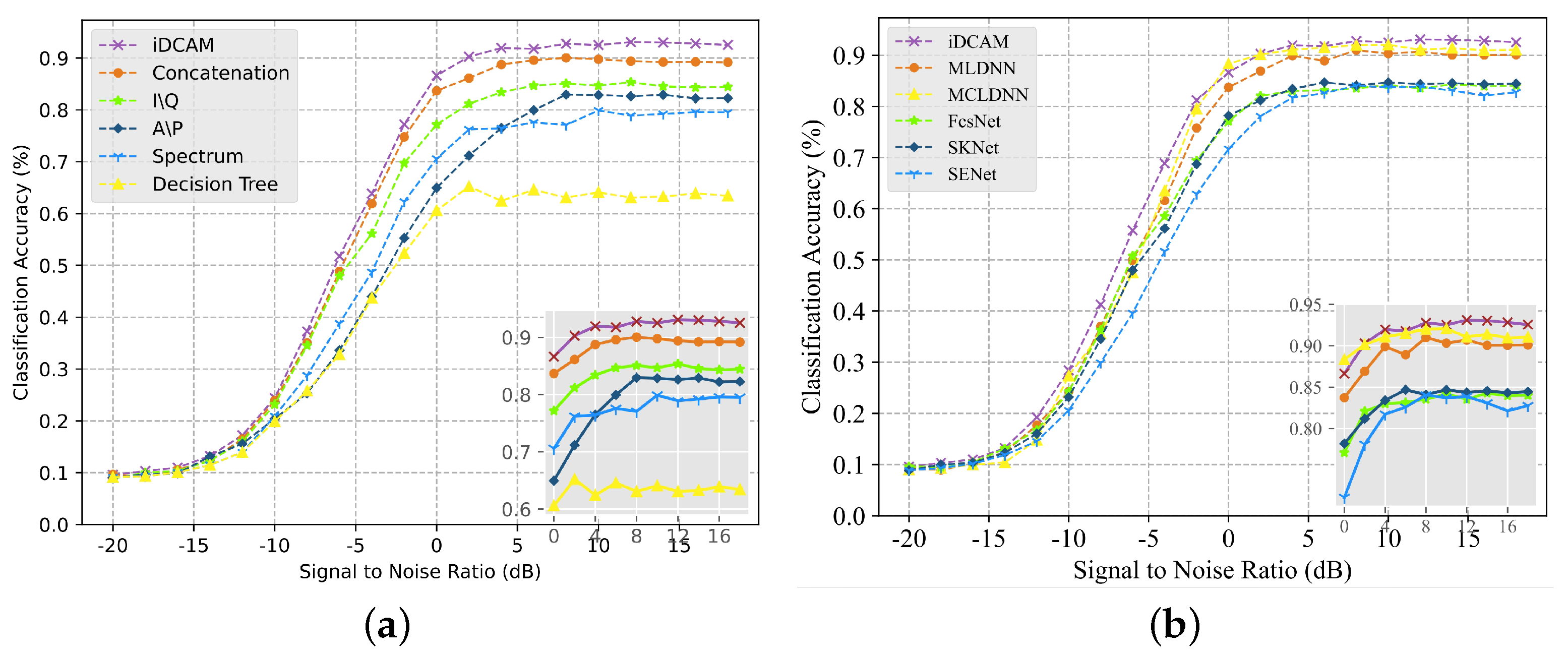

4.2.1. Comparison with Uni-Modal and Other AMR Networks

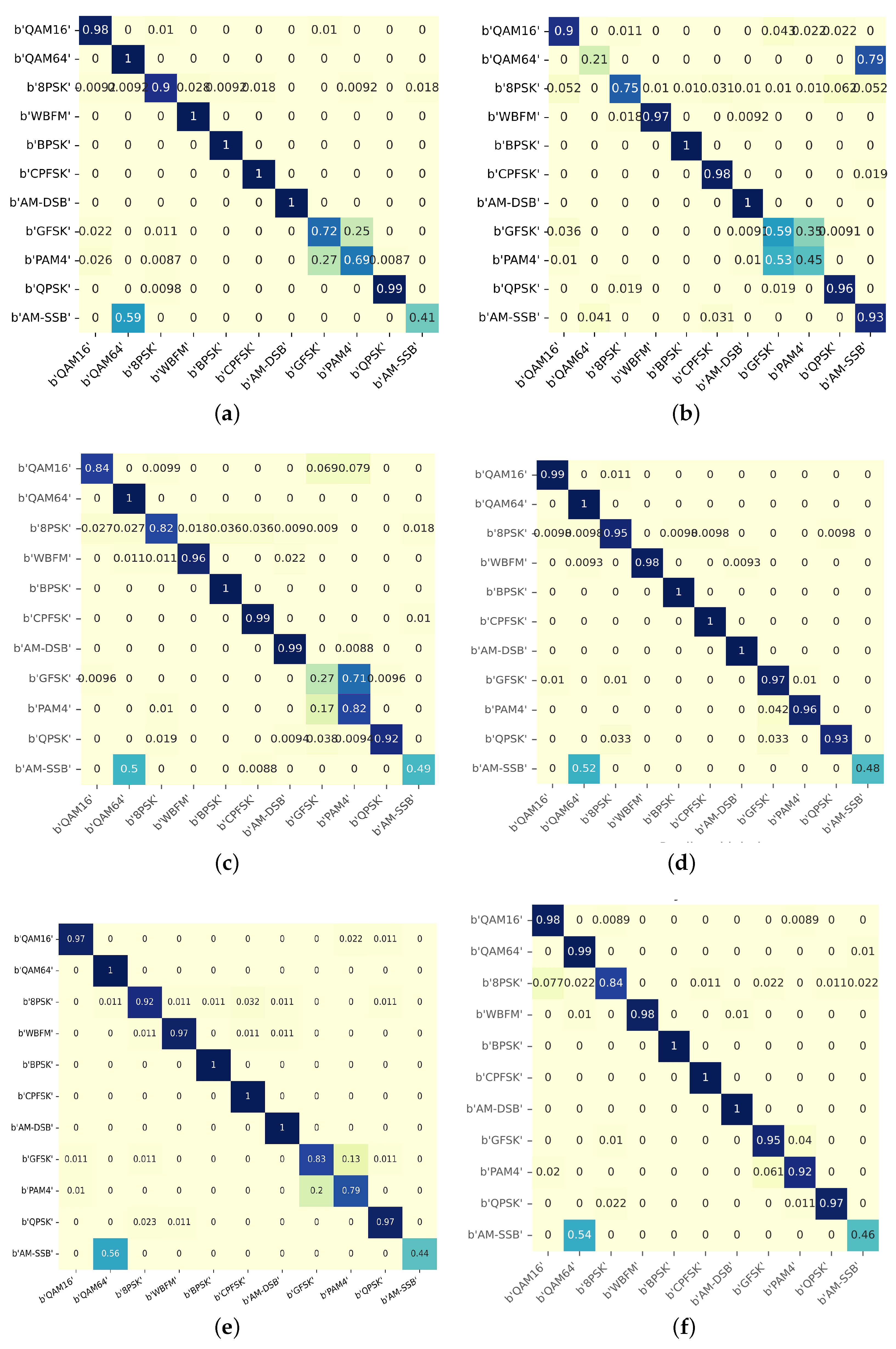

4.2.2. Comparison of iDCAM and Other Attention Mechanisms

4.2.3. Comparison with State-of-the-Art DL-AMR Methods

4.3. Ablation Studies

4.3.1. Ablation Experiments at Different Scales with DCAM

4.3.2. Ablation Experiments with Iterative Layers of iDCAM

4.4. Limitations and Constraints

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Dai, A.; Zhang, H.; Sun, H. Automatic modulation classification using stacked sparse auto-encoders. In Proceedings of the 2016 IEEE 13th International Conference on Signal Processing (ICSP), Chengdu, China, 6–10 November 2016; pp. 248–252.

- Al-Nuaimi, D.H.; Hashim, I.A.; Zainal Abidin, I.S.; Salman, L.B.; Mat Isa, N.A. Performance of feature-based techniques for automatic digital modulation recognition and classification—A review. Electronics 2019, 8, 1407.

- Bhatti, F.A.; Khan, M.J.; Selim, A.; Paisana, F. Shared spectrum monitoring using deep learning. IEEE Trans. Cogn. Commun. Netw. 2021, 7, 1171–1185.

- Richard, G.; Wiley, E. The Interception and Analysis of Radar Signals; Artech House: Boston, MA, USA, 2006.

- Kim, K.; Spooner, C.M.; Akbar, I.; Reed, J.H. Specific emitter identification for cognitive radio with application to IEEE 802.11. In Proceedings of the IEEE GLOBECOM 2008-2008 IEEE Global Telecommunications Conference, New Orleans, LA, USA, 30 November–4 December 2008; pp. 1–5.

- Wei, W.; Mendel, J.M. Maximum-likelihood classification for digital amplitude-phase modulations. IEEE Trans. Commun. 2000, 48, 189–193.

- Xu, J.L.; Su, W.; Zhou, M. Likelihood-ratio approaches to automatic modulation classification. IEEE Trans. Syst. Man Cybern. Part C Appl. Rev. 2010, 41, 455–469.

- Hazza, A.; Shoaib, M.; Alshebeili, S.A.; Fahad, A. An overview of feature-based methods for digital modulation classification. In Proceedings of the 2013 1st International Conference On Communications, Signal Processing, and Their Applications (ICCSPA), Sharjah, United Arab Emirates, 12–14 February 2013; pp. 1–6.

- Hao, Y.; Wang, X.; Lan, X. Frequency Domain Analysis and Convolutional Neural Network Based Modulation Signal Classification Method in OFDM System. In Proceedings of the 2021 13th International Conference on Wireless Communications and Signal Processing (WCSP), Changsha, China, 20–22 October 2021; pp. 1–5.

- Ali, A.; Yangyu, F. Unsupervised feature learning and automatic modulation classification using deep learning model. Phys. Commun. 2017, 25, 75–84.

- Chang, S.; Huang, S.; Zhang, R.; Feng, Z.; Liu, L. Multitask-learning-based deep neural network for automatic modulation classification. IEEE Internet Things J. 2021, 9, 2192–2206.

- Cao, M.; Yang, T.; Weng, J.; Zhang, C.; Wang, J.; Zou, Y. Locvtp: Video-text pre-training for temporal localization. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; Springer: Berlin/Heidelberg, Germany, 2022; pp. 38–56.

- O’Shea, T.J.; Corgan, J.; Clancy, T.C. Convolutional radio modulation recognition networks. In Proceedings of the Engineering Applications of Neural Networks: 17th International Conference, EANN 2016, Aberdeen, UK, 2–5 September 2016; Springer: Berlin/Heidelberg, Germany, 2016; pp. 213–226.

- Ke, Z.; Vikalo, H. Real-time radio technology and modulation classification via an LSTM auto-encoder. IEEE Trans. Wirel. Commun. 2021, 21, 370–382.

- Liu, X.; Yang, D.; El Gamal, A. Deep neural network architectures for modulation classification. In Proceedings of the 2017 51st Asilomar Conference on Signals, Systems, and Computers, Pacific Grove, CA, USA, 29 October–1 November 2017; pp. 915–919.

- Rajendran, S.; Meert, W.; Giustiniano, D.; Lenders, V.; Pollin, S. Deep learning models for wireless signal classification with distributed low-cost spectrum sensors. IEEE Trans. Cogn. Commun. Netw. 2018, 4, 433–445.

- Zhang, Z.; Wang, C.; Gan, C.; Sun, S.; Wang, M. Automatic modulation classification using convolutional neural network with features fusion of SPWVD and BJD. IEEE Trans. Signal Inf. Process. Netw. 2019, 5, 469–478.

- Zeng, Y.; Zhang, M.; Han, F.; Gong, Y.; Zhang, J. Spectrum analysis and convolutional neural network for automatic modulation recognition. IEEE Wirel. Commun. Lett. 2019, 8, 929–932.

- Zhang, F.; Luo, C.; Xu, J.; Luo, Y.; Zheng, F.C. Deep learning based automatic modulation recognition: Models, datasets, and challenges. Digit. Signal Process. 2022, 129, 103650.

- Yuan, J.; Zhao-Yang, Z.; Pei-Liang, Q. Modulation classification of communication signals. In Proceedings of the IEEE MILCOM 2004, Military Communications Conference, 2004, Monterey, CA, USA, 31 October–3 November 2004; Volume 3, pp. 1470–1476.

- Shi, F.; Hu, Z.; Yue, C.; Shen, Z. Combining neural networks for modulation recognition. Digit. Signal Process. 2022, 120, 103264.

- Huang, F.-q.; Zhong, Z.-m.; Xu, Y.-t.; Ren, G.-c. Modulation recognition of symbol shaped digital signals. In Proceedings of the 2008 International Conference on Communications, Circuits and Systems, Xiamen, China, 25–27 May 2008; pp. 328–332.

- Zhang, X.; Li, T.; Gong, P.; Liu, R.; Zha, X. Modulation recognition of communication signals based on multimodal feature fusion. Sensors 2022, 22, 6539.

- Qi, P.; Zhou, X.; Zheng, S.; Li, Z. Automatic modulation classification based on deep residual networks with multimodal information. IEEE Trans. Cogn. Commun. Netw. 2020, 7, 21–33.

- Zhang, Z.; Luo, H.; Wang, C.; Gan, C.; Xiang, Y. Automatic modulation classification using CNN-LSTM based dual-stream structure. IEEE Trans. Veh. Technol. 2020, 69, 13521–13531.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 7132–7141.

- Hu, J.; Shen, L.; Albanie, S.; Sun, G.; Vedaldi, A. Gather-excite: Exploiting feature context in convolutional neural networks. arXiv 2018, arXiv:1810.12348.

- Gao, Z.; Xie, J.; Wang, Q.; Li, P. Global second-order pooling convolutional networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 3024–3033.

- Lee, H.; Kim, H.E.; Nam, H. Srm: A style-based recalibration module for convolutional neural networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Long Beach, CA, USA, 15–20 June 2019; pp. 1854–1862.

- Mnih, V.; Heess, N.; Graves, A. Recurrent models of visual attention. arXiv 2014, arXiv:1406.6247.

- Wang, X.; Girshick, R.; Gupta, A.; He, K. Non-local neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 7794–7803.

- Qin, Z.; Zhang, P.; Wu, F.; Li, X. Fcanet: Frequency channel attention networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 10–17 October 2021; pp. 783–792.

- Li, H.; Xiong, P.; An, J.; Wang, L. Pyramid attention network for semantic segmentation. arXiv 2018, arXiv:1805.10180.

- Wang, S.; Liang, D.; Song, J.; Li, Y.; Wu, W. Dabert: Dual attention enhanced bert for semantic matching. arXiv 2022, arXiv:2210.03454.

- Li, X.; Wang, W.; Hu, X.; Yang, J. Selective kernel networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 510–519.

- Yang, F.; Yang, L.; Wang, D.; Qi, P.; Wang, H. Method of modulation recognition based on combination algorithm of K-means clustering and grading training SVM. China Commun. 2018, 15, 55–63.

- Hussain, A.; Sohail, M.; Alam, S.; Ghauri, S.A.; Qureshi, I.M. Classification of M-QAM and M-PSK signals using genetic programming (GP). Neural Comput. Appl. 2019, 31, 6141–6149.

- Das, D.; Bora, P.K.; Bhattacharjee, R. Blind modulation recognition of the lower order PSK signals under the MIMO keyhole channel. IEEE Commun. Lett. 2018, 22, 1834–1837.

- Liu, Y.; Liang, G.; Xu, X.; Li, X. The Methods of Recognition for Common Used M-ary Digital Modulations. In Proceedings of the 2008 4th International Conference on Wireless Communications, Networking and Mobile Computing, Dalian, China, 12–14 October 2008; pp. 1–4.

- Benedetto, F.; Tedeschi, A.; Giunta, G. Automatic Blind Modulation Recognition of Analog and Digital Signals in Cognitive Radios. In Proceedings of the 2016 IEEE 84th Vehicular Technology Conference (VTC-Fall), Montreal, QC, Canada, 18–21 September 2016; pp. 1–5.

- Jiang, K.; Zhang, J.; Wu, H.; Wang, A.; Iwahori, Y. A novel digital modulation recognition algorithm based on deep convolutional neural network. Appl. Sci. 2020, 10, 1166.

- Sainath, T.N.; Vinyals, O.; Senior, A.; Sak, H. Convolutional, Long Short-Term Memory, fully connected Deep Neural Networks. In Proceedings of the 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), South Brisbane, QLD, Australia, 19–24 April 2015; pp. 4580–4584.

- Sermanet, P.; LeCun, Y. Traffic sign recognition with multi-scale convolutional networks. In Proceedings of the 2011 International Joint Conference on Neural Networks, San Jose, CA, USA, 31 July–5 August 2011; pp. 2809–2813.

- Soltau, H.; Saon, G.; Sainath, T.N. Joint training of convolutional and non-convolutional neural networks. In Proceedings of the 2014 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Florence, Italy, 4–9 May 2014; pp. 5572–5576.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 6000–6010.

- Park, J.; Woo, S.; Lee, J.Y.; Kweon, I.S. Bam: Bottleneck attention module. arXiv 2018, arXiv:1807.06514.

- Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. Cbam: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19.

- Xu, J.; Luo, C.; Parr, G.; Luo, Y. A spatiotemporal multi-channel learning framework for automatic modulation recognition. IEEE Wirel. Commun. Lett. 2020, 9, 1629–1632.

- Zheng, S.; Zhou, X.; Zhang, L.; Qi, P.; Qiu, K.; Zhu, J.; Yang, X. Towards Next-Generation Signal Intelligence: A Hybrid Knowledge and Data-Driven Deep Learning Framework for Radio Signal Classification. IEEE Trans. Cogn. Commun. Netw. 2023, 9, 564–579.

| Domains | Models | Effects |
|---|---|---|
| I/Q | CNN combined with Deep Neural Networks (DNNs) [13], a combined CNN scheme [21] | Achieves high recognition accuracy for PAM4 at low signal-to-noise ratio (SNR) |
| A/P | Long Short-Term Memory (LSTM) [16], an LSTM denoising auto-encoder [14] | Recognizes AM-SSB well and distinguishes between QAM16 and QAM64 [22] |
| Spectrum | RSBU-CW with Welch spectrum, square spectrum, and fourth power spectrum [23]; SCNN [18] with the short-time Fourier transform (STFT); a fine-tuned CNN model [17] with smooth pseudo-Wigner–Ville distribution and Born–Jordan distribution | Achieves high accuracy on PSK [23]; recognizes OFDM well, since OFDM is revealed only in the spectrum domain due to its plentiful sub-carriers [17] |

| Aggregation strategy | Representative model |
|---|---|
| Direct aggregation on X | SENet [26] |
| Aggregation after slicing | FcaNet [32] |
| Direct aggregation on Y | PAN [33] |
| Gated multiple units | DABERT [34] |
| Balanced weighting | SKNet [35] |
| Iterative balanced weighting | iDAF |

| Dataset Content | Parameter Information |
|---|---|
| Software platform | GNU Radio + Python |
| Data type and shape | I/Q (in-phase/quadrature), 2 × 128 |
| Modulations | 8 digital modulations: 8PSK, BPSK, CPFSK, GFSK, PAM4, 16QAM, 64QAM, QPSK; 3 analog modulations: AM-DSB, AM-SSB, WBFM |
| Sample size | Each modulation has 20,000 signal samples (1000 per SNR level), for a total of 220,000 |
| Signal-to-noise ratio | 2 dB intervals from −20 dB to 18 dB |
| Channel environment | Additive White Gaussian Noise, Sample Rate Offset (SRO), Rician, Rayleigh, Center Frequency Offset (CFO) |
| Sample rate | 200 kHz |
| Sample rate offset standard deviation | 0.01 Hz |
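As a quick consistency check on the dataset parameters, the per-class sample counts can be derived from the stated total, the modulation list, and the SNR grid. The sketch below takes the table's total of 220,000 samples and the 2 dB grid from −20 dB to 18 dB at face value and assumes the samples are spread evenly; the variable names are illustrative only.

```python
# SNR grid: 2 dB steps from -20 dB to 18 dB inclusive
snr_levels = list(range(-20, 19, 2))

modulations = [
    "8PSK", "BPSK", "CPFSK", "GFSK", "PAM4", "16QAM", "64QAM", "QPSK",  # digital
    "AM-DSB", "AM-SSB", "WBFM",                                         # analog
]

total_samples = 220_000

# Assumption: samples are distributed evenly over modulations and SNR levels.
per_modulation = total_samples // len(modulations)
per_modulation_and_snr = per_modulation // len(snr_levels)

print(len(snr_levels), len(modulations), per_modulation, per_modulation_and_snr)
```

Under the even-split assumption this gives 20 SNR levels, 11 modulations, 20,000 samples per modulation, and 1000 samples per modulation and SNR pair.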

| Model | Accuracy | Params (M) |
|---|---|---|
| SENet-ResNet18 | 0.6032 | 11.9 |
| SKNet-50 | 0.5994 | 27.6 |
| CBAM-ResNeXt50 | 0.6082 | 27.8 |
| Self-attention | 0.618 | 63.5 |
| BAM-ResNet-50 | 0.6038 | 24.7 |
| FcaNet | 0.6069 | 37.4 |
| iDCAM | 0.6232 | 6.9 |

| Model | Accuracy | Top1-Acc (Average) | F1 Score (Average) | FLOPs | Training Epochs |
|---|---|---|---|---|---|
| GRU | 0.5374 | 72.9% | 56.3% | 89,531 | 10 |
| DAE | 0.5632 | 75.7% | 59.8% | 67,682 | 9 |
| CLDNN | 0.5982 | 76.3% | 61.1% | 0.7 G | 11 |
| MCLDNN | 0.618 | 79.4% | 64.2% | 8.4 G | 21 |
| HKDD [49] | 0.6094 | 77.6% | 62.7% | 21.7 G | 38 |
| MLDNN [11] | 0.6106 | 78.5% | 63.2% | 36.7 G | 45 |
| iDAF | 0.6232 | 80.5% | 65.4% | 10.9 G | 34 |
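For readers reproducing metrics of the kind reported above, the following sketch shows one common way to compute overall accuracy, accuracy per SNR level, and an averaged F1 score from predicted labels. It assumes the averaged F1 is a macro average over classes, which the table does not state; the function names are illustrative and not taken from the paper.

```python
import numpy as np

def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label matches the true label."""
    return float(np.mean(y_true == y_pred))

def accuracy_per_snr(y_true, y_pred, snr):
    """Accuracy computed separately for every SNR level present in `snr`."""
    return {
        int(s): float(np.mean(y_true[snr == s] == y_pred[snr == s]))
        for s in np.unique(snr)
    }

def macro_f1(y_true, y_pred, num_classes):
    """Unweighted mean of per-class F1 scores (assumed averaging scheme)."""
    scores = []
    for c in range(num_classes):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))

# Toy usage with three classes and three SNR levels
y_true = np.array([0, 0, 1, 1, 2, 2])
y_pred = np.array([0, 1, 1, 1, 2, 0])
snr = np.array([-20, -20, 0, 0, 10, 10])
print(accuracy(y_true, y_pred))
print(accuracy_per_snr(y_true, y_pred, snr))
print(macro_f1(y_true, y_pred, num_classes=3))
```

Reporting accuracy per SNR level alongside the overall figure matters for this task because performance at very low SNR pulls the overall accuracy well below the peak value reached at high SNR.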

| Model | Accuracy | Top1-Acc (Average) | F1 (Average) |
|---|---|---|---|
| GRU | 0.5732 | 75.3% | 60.3% |
| DAE | 0.5994 | 76.2% | 62.2% |
| CLDNN | 0.6082 | 77.1% | 62.6% |
| MCLDNN | 0.6314 | 80.8% | 65.9% |
| HKDD | 0.6198 | 78.2% | 64.1% |
| MLDNN | 0.6226 | 80.4% | 64.8% |
| iDCAM | 0.6483 | 81.2% | 66.7% |

| Architectures | Recognition Accuracy | FLOPs (G) |
|---|---|---|
| Local | 0.618 | 10.1 |
| Global | 0.6081 | / |
| Dual-local | 0.6192 | 20.2 |
| Dual-global | 0.6104 | / |
| Local-global | 0.6232 | 10.9 |

| Iterations K | One-Layer | Two-Layer | Three-Layer | Four-Layer |
|---|---|---|---|---|
| Accuracy | 0.6194 | 0.6232 | 0.6204 | 0.6181 |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Liu, B.; Ge, R.; Zhu, Y.; Zhang, B.; Zhang, X.; Bao, Y. IDAF: Iterative Dual-Scale Attentional Fusion Network for Automatic Modulation Recognition. Sensors 2023, 23, 8134. https://doi.org/10.3390/s23198134
