IoT Solutions and AI-Based Frameworks for Masked-Face and Face Recognition to Fight the COVID-19 Pandemic

Abstract

1. Introduction

- Reduce the propagation of the COVID-19 pandemic.

- Precise improvement of the detection performance.

- High processing speed and seamless integration with surveillance cameras.

- They can be applied in schools, universities, and other institutions that monitor attendance using facial recognition. Consequently, administrators can readily determine if students, employees, and other visitors are wearing masks.

- A comprehensive overview of ML and DL algorithms and methods for face mask recognition and masked-face detection; in particular, operating modalities, advantages, and shortcomings of each method/algorithm are reported, along with application examples proposed in the scientific literature.

- A description of mobile networks, such as MobileNetv1 and MobileNetv2, used in face mask detection and masked-face recognition applications developed during the COVID-19 pandemic to quickly detect whether people are wearing a mask.

- A description of the main challenges and open issues for developing face mask detection systems, including precision, privacy, and improper use of private information.

- In-depth comparative analyses of algorithms and models reported in the scientific literature to determine features and perspectives of innovative ML and DL tools for recognizing people wearing masks to fight future pandemics.

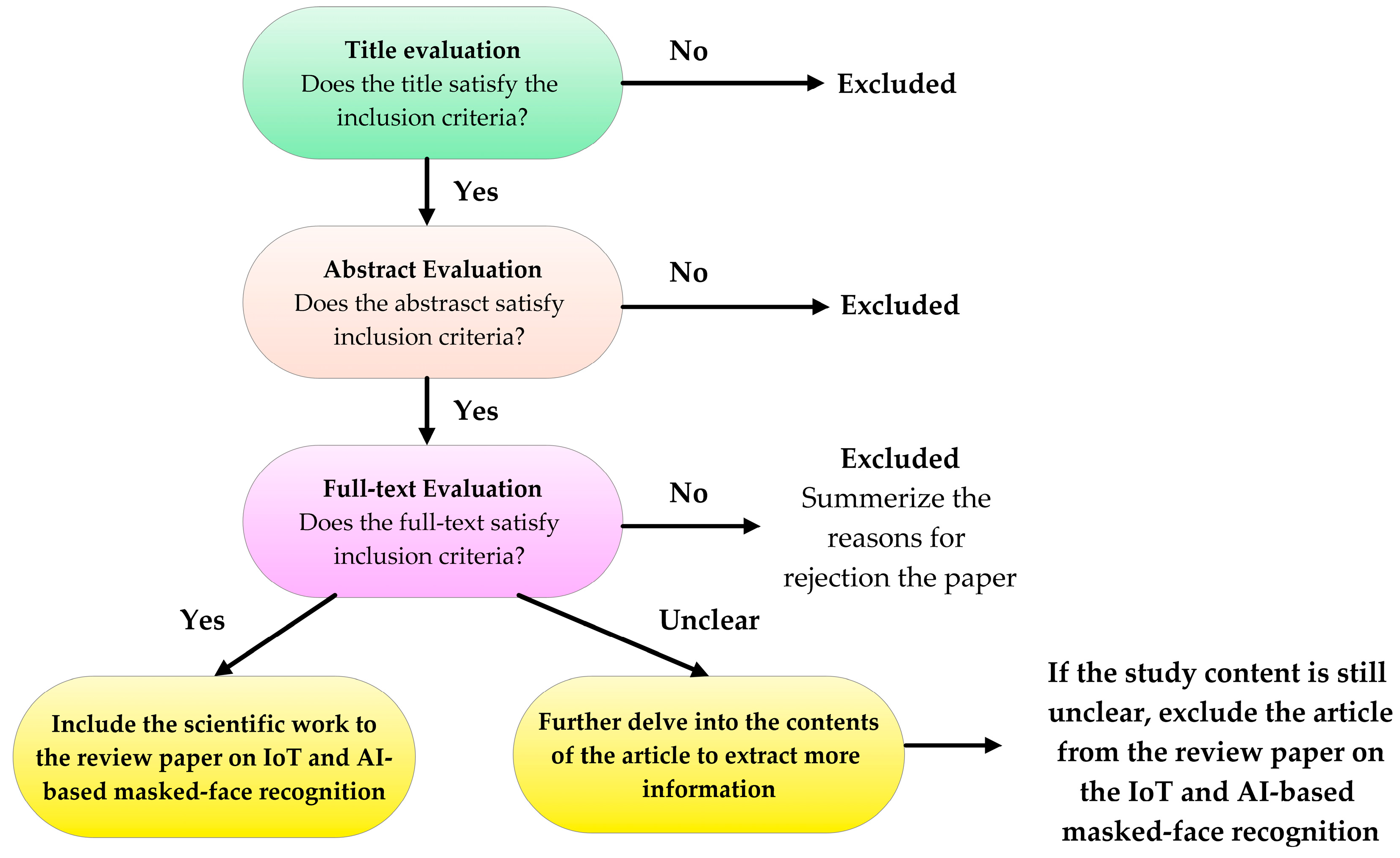

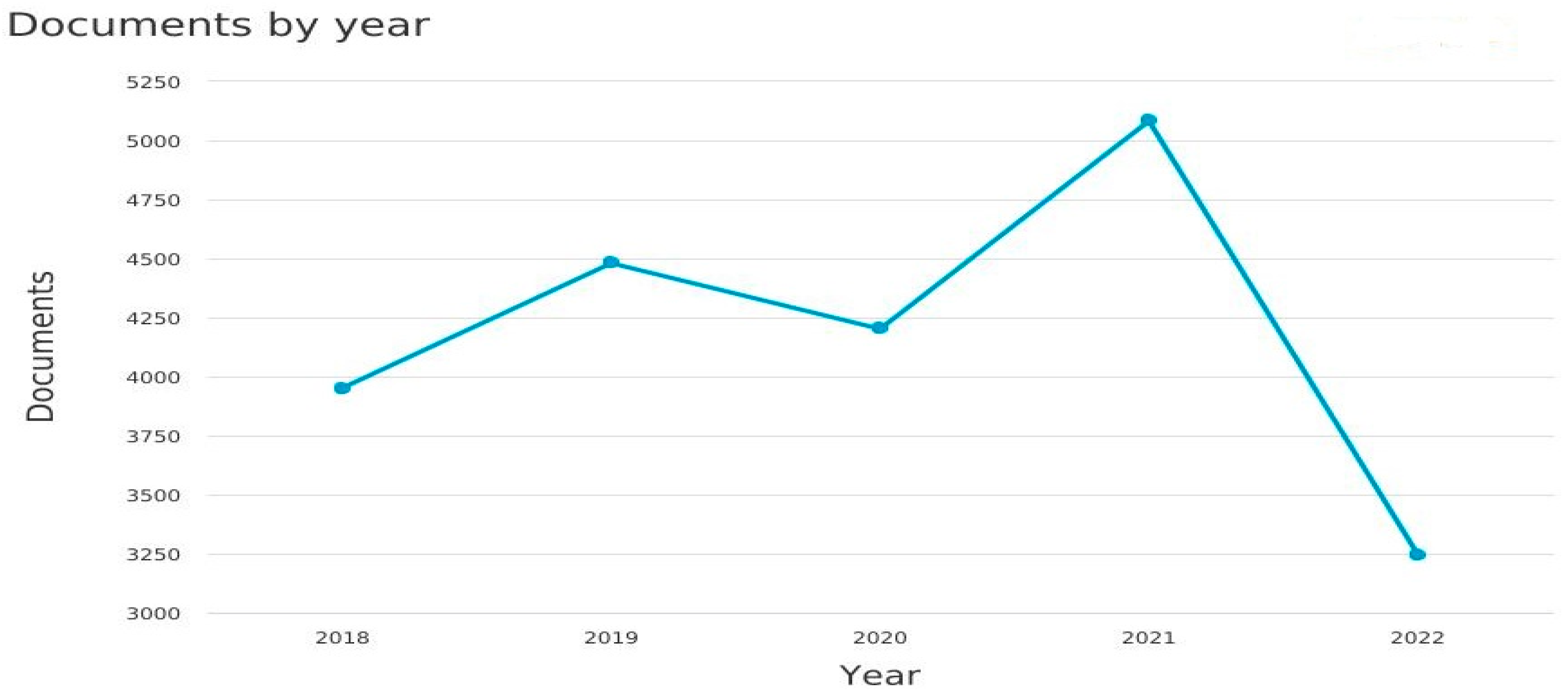

Paper Selection and Bibliometric Indexes

2. Machine Learning Prototypes Used to Identify Face Masks

- Image classification: ML models can be trained to classify images based on whether a person is wearing a face mask or not. This approach involves collecting a dataset of labeled images, where each image is categorized as either “with mask” or “without mask”. Using this dataset, a model can be trained to recognize patterns and features that distinguish between the two categories. Common algorithms for image classification are CNNs, SVM, Random Forest (RF), K-Nearest Neighbors (KNN), Naïve Bayes, etc.

- Object Detection: Object detection techniques can be employed to locate and classify face masks within an image or video. These models can identify the presence and position of face masks in real-time applications. Several deep-learning techniques are currently applicable to this task, including CNN-based detectors such as R-CNN (Region-based CNN), Fast R-CNN, Faster R-CNN, YOLO (You Only Look Once), SSD (Single Shot MultiBox Detector), and EfficientDet, which are discussed in Section 3.

- Facial Landmark Detection: Machine-learning models can also be trained to detect facial landmarks, such as the nose, mouth, and eyes, to determine if a face mask is properly worn. By analyzing the spatial relationships between these landmarks, the model can infer the presence and alignment of a face mask. Techniques like the Histogram of Oriented Gradients (HOG) combined with SVM or more modern methods like facial landmark detectors based on deep learning architectures (e.g., OpenPose, DLIB) can be utilized.
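
As a minimal sketch of the landmark-based check described in the last item, the snippet below assumes that a landmark detector has already returned pixel coordinates for the nose tip, mouth, and chin, and that a mask detector has returned a bounding box. The names `Box` and `mask_status`, the three output labels, and the coordinates are hypothetical and are not taken from any of the cited works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in image coordinates (y grows downward)."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, point):
        x, y = point
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def mask_status(landmarks, mask_box):
    """Classify mask wearing from the geometry of landmarks and mask box.

    landmarks: dict mapping 'nose_tip', 'mouth', 'chin' to (x, y) tuples.
    mask_box:  Box of the detected mask, or None if no mask was detected.
    """
    if mask_box is None:
        return "no_mask"
    covered = {name: mask_box.contains(pt) for name, pt in landmarks.items()}
    # Assumption: a correctly worn mask covers nose tip, mouth, and chin.
    if covered["nose_tip"] and covered["mouth"] and covered["chin"]:
        return "correct"
    return "incorrect"


# Hypothetical landmark positions for one face.
face = {"nose_tip": (50, 60), "mouth": (50, 80), "chin": (50, 100)}
print(mask_status(face, Box(20, 50, 80, 105)))  # box spans nose, mouth, chin
print(mask_status(face, Box(20, 70, 80, 105)))  # box starts below the nose tip
print(mask_status(face, None))                  # no mask box available
```

A real system would replace the hard containment test with tolerances, since detected boxes and landmarks are noisy.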

- Create M classifiers.

- Train each individual classifier.

- Combine the M classifiers and average their outputs.
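
The three steps above can be read as a model-averaging ensemble. The sketch below is one possible interpretation, assuming that combining means averaging the predicted probabilities of the M members. The toy `CentroidClassifier`, the stratified bootstrap used to make the members differ, and the two-dimensional feature vectors are all illustrative assumptions, not the method of any cited paper.

```python
import math
import random


class CentroidClassifier:
    """Toy two-class model that scores a vector by distance to class centroids."""

    def fit(self, X, y):
        self.centroids = {}
        for label in (0, 1):
            rows = [x for x, t in zip(X, y) if t == label]
            self.centroids[label] = [sum(col) / len(rows) for col in zip(*rows)]
        return self

    def predict_proba(self, x):
        d0 = math.dist(x, self.centroids[0])
        d1 = math.dist(x, self.centroids[1])
        if d0 + d1 == 0:
            return 0.5
        return d0 / (d0 + d1)  # nearer to the class-1 centroid gives a value nearer 1


def train_ensemble(X, y, m, seed=0):
    rng = random.Random(seed)
    by_class = {c: [x for x, t in zip(X, y) if t == c] for c in (0, 1)}
    models = []
    for _ in range(m):  # step 1: create M classifiers
        Xb, yb = [], []
        for label, rows in by_class.items():
            sample = rng.choices(rows, k=len(rows))  # resample within each class
            Xb.extend(sample)
            yb.extend([label] * len(sample))
        models.append(CentroidClassifier().fit(Xb, yb))  # step 2: train each one
    return models


def ensemble_proba(models, x):
    # step 3: combine the members by averaging their outputs
    return sum(model.predict_proba(x) for model in models) / len(models)


# Hypothetical features: label 0 = "without mask", label 1 = "with mask".
X = [(0.1, 0.2), (0.2, 0.3), (0.3, 0.2), (0.2, 0.1),
     (0.8, 0.7), (0.9, 0.8), (0.7, 0.9), (0.8, 0.8)]
y = [0, 0, 0, 0, 1, 1, 1, 1]
models = train_ensemble(X, y, m=5)
print(ensemble_proba(models, (0.85, 0.8)) > 0.5)
print(ensemble_proba(models, (0.15, 0.2)) < 0.5)
```

In practice the members would be CNNs or other trained models; only the averaging step carries over unchanged.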

Datasets for Developing Face Mask Recognition Algorithms

3. Deep Learning (DL) Techniques Used to Identify Face Masks

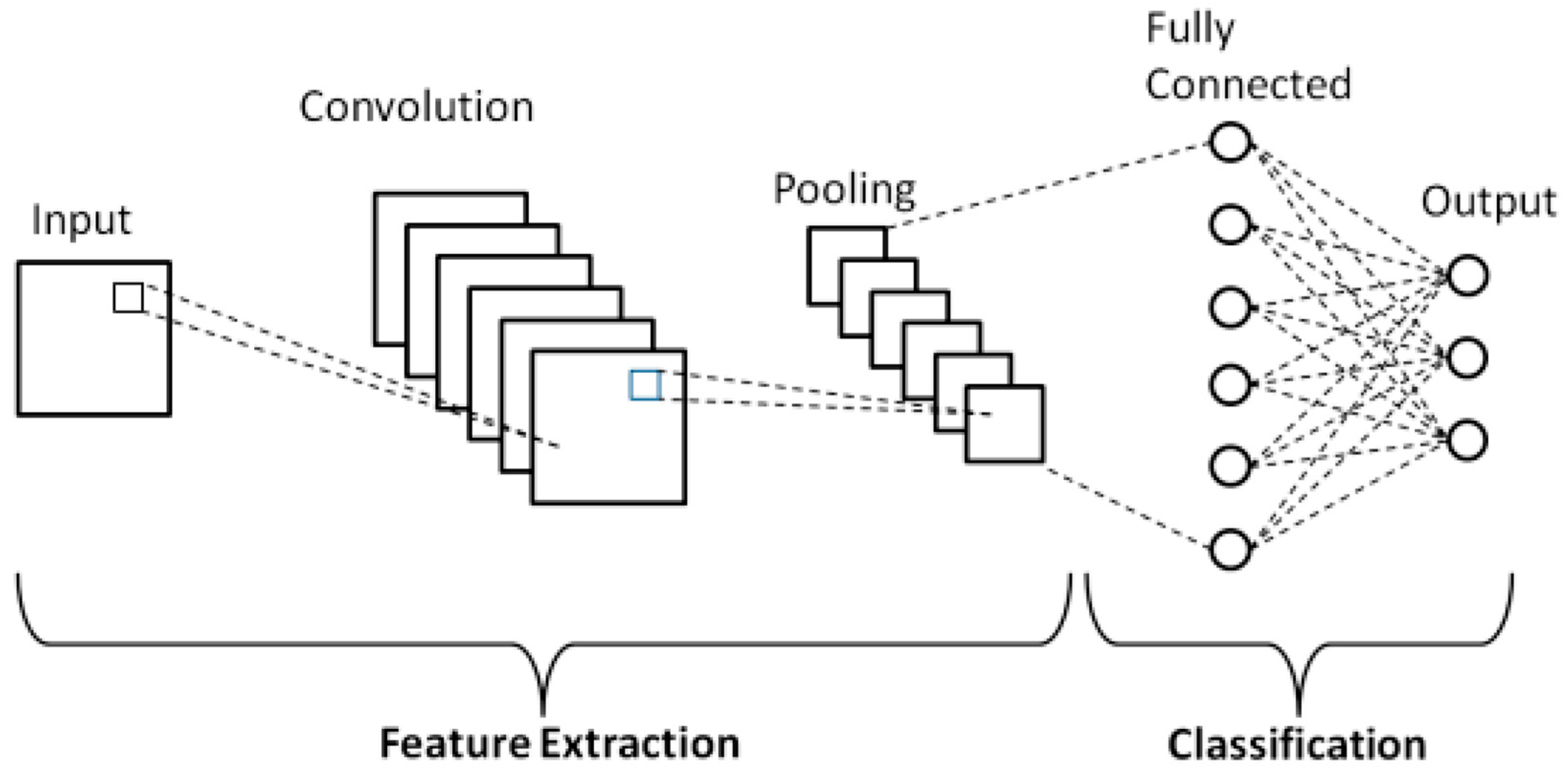

CNN for Face Mask Recognition

4. Mobile Networks for Face Mask Detection (MobileNetv1 and MobileNetv2)

5. Face Mask Detection Sensors

6. Challenges in Face Mask Recognition Systems

- Accuracy: Face mask recognition technology should be developed and tested rigorously to ensure high accuracy for both masked and unmasked individuals. False positives, where individuals are incorrectly identified as not wearing masks, can have severe consequences, such as denying access to essential services or causing unnecessary alarm. No face mask recognition algorithm reaches 100% accuracy, even with the most sophisticated software. However, the technology is generally considered satisfactory when it reaches an accuracy of at least 98%.

- Mask Variability: Face masks come in various shapes, sizes, colors, and designs. Recognizing and accommodating the diverse range of masks can be challenging for the algorithms. Each mask type may introduce unique textures, patterns, or features that must be considered for accurate recognition.

- Lighting and Environmental Factors: Variations in lighting conditions, such as shadows, reflections, or poor illumination, can affect the visibility of facial features and the overall performance of face mask recognition algorithms. Challenging lighting conditions can decrease accuracy and introduce additional variability.

- Rapid Deployment and Adaptation: The need for face mask recognition arose rapidly during the COVID-19 pandemic, requiring quick deployment of technology. Developing robust algorithms and adapting them to different scenarios and environments can be challenging because little time is available for research, testing, and optimization.

- Computational Resources: Implementing real-time face mask recognition systems that quickly process large amounts of data can be computationally demanding. High-speed processing and response times are crucial for applications where real-time identification is required.

- Database Necessity: Training accurate and unbiased face mask recognition models requires diverse and representative datasets, including individuals wearing different types of masks. The availability of such datasets, as well as potential biases present in the data, can impact the performance and fairness of the algorithms.

- Ethical and Privacy Concerns: Face mask recognition involves capturing and processing personal biometric data, raising concerns about privacy, consent, and potential misuse of the collected information. Robust data protection measures, transparency, and attention to privacy concerns are important for the ethical use of the technology.

- User Acceptance and Cooperation: Face mask recognition systems often require user cooperation, such as proper positioning of masks, removing obstructions, or following specific guidelines. Achieving widespread user acceptance and compliance can be challenging, impacting the overall effectiveness of the technology.

- Use numerous forms of training sets to acquire knowledge.

- The use of deep learning techniques facilitates the correction of these differences.
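
The accuracy item in the list above separates overall accuracy from false positives, defined there as people incorrectly identified as not wearing a mask. The sketch below shows how these quantities could be computed from labeled predictions. The label strings, the function names, and the ten-sample example are illustrative assumptions and do not reproduce any result from the literature.

```python
def confusion_counts(y_true, y_pred, positive="no_mask"):
    """Count outcomes, treating 'no_mask' (a violation) as the positive class."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1  # masked person flagged as not wearing a mask
        else:
            if truth == positive:
                fn += 1  # unmasked person missed by the system
            else:
                tn += 1
    return tp, fp, tn, fn


def summarize(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }


# Hypothetical evaluation set: 7 masked and 3 unmasked people.
y_true = ["mask"] * 7 + ["no_mask"] * 3
y_pred = ["mask"] * 6 + ["no_mask"] + ["no_mask"] * 2 + ["mask"]
report = summarize(y_true, y_pred)
print(report)
```

Reporting the two error rates next to accuracy matters when classes are imbalanced, because a high accuracy can hide a high rate of missed unmasked faces.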

7. Results and Discussions

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Zou, X. A Review of Object Detection Techniques. In Proceedings of the 2019 International Conference on Smart Grid and Electrical Automation (ICSGEA), Xiangtan, China, 10–11 August 2019; pp. 251–254.

- Calabrese, B.; Velázquez, R.; Del-Valle-Soto, C.; de Fazio, R.; Giannoccaro, N.I.; Visconti, P. Solar-Powered Deep Learning-Based Recognition System of Daily Used Objects and Human Faces for Assistance of the Visually Impaired. Energies 2020, 13, 6104.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Talahua, J.S.; Buele, J.; Calvopiña, P.; Varela-Aldás, J. Facial recognition system for people with and without face mask in times of the COVID-19 pandemic. Sustainability 2021, 13, 6900.

- De Fazio, R.; Giannoccaro, N.I.; Carrasco, M.; Velazquez, R.; Visconti, P. Wearable Devices and IoT Applications for Detecting Symptoms, Infected Tracking, and Diffusion Containment of the COVID-19 Pandemic: A Survey. Front. Inf. Technol. Electron. Eng. 2021, 22, 1413–1442.

- Visconti, P.; de Fazio, R.; Costantini, P.; Miccoli, S.; Cafagna, D. Innovative Complete Solution for Health Safety of Children Unintentionally Forgotten in a Car: A Smart Arduino-Based System with User App for Remote Control. IET Sci. Meas. Technol. 2020, 14, 665–675.

- Ting, D.W.; Carin, L.; Dzau, V.; Wong, T.Y. Digital technology and COVID-19. Nat. Med. 2020, 26, 459–461.

- Wechsler, H. Reliable Face Recognition Methods: System Design, Implementation and Evaluation, 2007th ed.; Springer: New York, NY, USA, 2006; ISBN 978-0-387-22372-8.

- Kundu, M.K.; Mitra, S.; Mazumdar, D.; Pal, S.K. Perception and Machine Intelligence. In Proceedings of the First Indo-Japan Conference, PerMIn 2012, Kolkata, India, 12–13 January 2011; Springer: Berlin/Heidelberg, Germany, 2012. ISBN 978-3-642-27386-5.

- Wechsler, H. Face in a Crowd. In Reliable Face Recognition Methods: System Design, Implementation and Evaluation; Springer: Boston, MA, USA, 2007; pp. 121–153. ISBN 978-0-387-38464-1.

- Li, S.Z.; Jain, A.K. (Eds.) Introduction. In Handbook of Face Recognition; Springer: London, UK, 2011; pp. 1–15. ISBN 978-0-85729-932-1.

- Fouquet, H. Paris Tests Facemask Recognition Software on Metro Riders—Bloomberg. Available online: https://www.bloomberg.com/news/articles/2020-05-07/paris-tests-face-mask-recognition-software-on-metro-riders#xj4y7vzkg (accessed on 15 June 2023).

- Graf, J.-P.; Neumann, J. Between Accuracy and Dignity: Legal Implications of Facial Recognition for Dead Combatants. Völkerrechtsblog 2022, 1–3.

- Suganthalakshmi, R.; Hafeeza, A.; Abinaya, P.; Devi, A.G. COVID-19 facemask detection with deep learning and computer vision. Int. J. Eng. Res. Tech. (IJERT) ICRADL 2021, 9, 73–75.

- Zhao, Z.-Q.; Zheng, P.; Xu, S.-t.; Wu, X. Object detection with deep learning: A review. IEEE Trans. Neural Netw. Learn. Syst. 2019, 30, 3212–3232.

- Punn, N.S.; Agarwal, S. Crowd Analysis for Congestion Control Early Warning System on Foot Over Bridge. In Proceedings of the 2019 Twelfth International Conference on Contemporary Computing (IC3), Noida, India, 8–10 August 2019; pp. 1–6.

- Parkhi, O.M.; Vedaldi, A.; Zisserman, A. Deep Face Recognition. In Proceedings of the British Machine Vision Conference 2015; British Machine Vision Association: Swansea, UK, 2015; pp. 41.1–41.12.

- Schroff, F.; Kalenichenko, D.; Philbin, J. FaceNet: A Unified Embedding for Face Recognition and Clustering. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; IEEE: Manhattan, NY, USA; pp. 815–823.

- Ullah, N.; Javed, A.; Ghazanfar, M.A.; Alsufyani, A.; Bourouis, S. A novel DeepMaskNet model for face mask detection and masked facial recognition. J. King Saud Univ.-Comput. Inf. Sci. 2022, 34, 9905–9914.

- Agbehadji, I.E.; Awuzie, B.O.; Ngowi, A.B.; Millham, R.C. Review of big data analytics, artificial intelligence and nature-inspired computing models towards accurate detection of COVID-19 pandemic cases and contact tracing. Int. J. Environ. Res. Public Health 2020, 17, 5330.

- Singhal, P.; Srivastava, P.K.; Tiwari, A.K.; Shukla, R.K. A Survey: Approaches to Facial Detection and Recognition with Machine Learning Techniques. In Proceedings of the Second Doctoral Symposium on Computational Intelligence; Gupta, D., Khanna, A., Kansal, V., Fortino, G., Hassanien, A.E., Eds.; Springer: Singapore, 2022; pp. 103–125.

- Ramík, D.M.; Sabourin, C.; Moreno, R.; Madani, K. A Machine Learning Based Intelligent Vision System for Autonomous Object Detection and Recognition. Appl. Intell. 2014, 40, 358–375.

- Loey, M.; Manogaran, G.; Taha, M.H.N.; Khalifa, N.E.M. A hybrid deep transfer learning model with machine learning methods for face mask detection in the era of the COVID-19 pandemic. Measurement 2021, 167, 108288.

- Adjed, F.; Faye, I.; Ababsa, F.; Gardezi, S.J.; Dass, S.C. Classification of Skin Cancer Images Using Local Binary Pattern and SVM Classifier. In Proceedings of the AIP Conference Proceedings, Kuala Lumpur, Malaysia, 15–17 August 2016; Volume 1787, pp. 1–5.

- Sadhukhan, M.; Bhattacharya, I. HybridFaceMaskNet: A Novel Facemask Detection Framework Using Hybrid Approach. Res. Sq. 2021, 1–7.

- Cabani, A.; Hammoudi, K.; Benhabiles, H.; Melkemi, M. MaskedFace-Net—A Dataset of Correctly/Incorrectly Masked Face Images in the Context of COVID-19. Smart Health Amst. Neth. 2021, 19, 100144.

- Hammoudi, K.; Cabani, A.; Benhabiles, H.; Melkemi, M. Validating the Correct Wearing of Protection Mask by Taking a Selfie: Design of a Mobile Application “CheckYourMask” to Limit the Spread of COVID-19. Comput. Model. Eng. Sci. 2020, 124, 1049–1059.

- Batagelj, B.; Peer, P.; Štruc, V.; Dobrišek, S. How to Correctly Detect Facemasks for COVID-19 from Visual Information? Appl. Sci. 2021, 11, 2070.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 618–626.

- DeepInsight RetinaFace Anti Cov Face Detector. Available online: https://github.com/deepinsight/insightface/tree/master/detection/retinaface_anticov (accessed on 15 May 2023).

- Eyiokur, F.I.; Ekenel, H.K.; Waibel, A. Unconstrained Face Mask and Face-Hand Interaction Datasets: Building a Computer Vision System to Help Prevent the Transmission of COVID-19. Signal Image Video Process. 2023, 17, 1027–1034.

- Face Mask Detection Dataset 2020. Available online: https://www.kaggle.com/datasets/omkargurav/face-mask-dataset (accessed on 16 May 2023).

- Goyal, H.; Sidana, K.; Singh, C.; Jain, A.; Jindal, S. A Real Time Face Mask Detection System Using Convolutional Neural Network. Multimed. Tools Appl. 2022, 81, 14999–15015.

- Ullah, N.; Javed, A. Face Mask Detection and Masked Facial Recognition Dataset (MDMFR Dataset) 2022. Available online: https://zenodo.org/record/6408603 (accessed on 16 May 2023).

- Kantarcı, A.; Ofli, F.; Imran, M.; Ekenel, H.K. BAFMD (Bias-Aware Face Mask Detection Dataset). arXiv 2022, arXiv:2211.01207.

- Ge, S.; Li, J.; Ye, Q.; Luo, Z. Detecting Masked Faces in the Wild with LLE-CNNs. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 426–434.

- Ji, H.; Alfarraj, O.; Tolba, A. Artificial intelligence-empowered edge of vehicles: Architecture, enabling technologies, and applications. IEEE Access 2020, 8, 61020–61034.

- Mbunge, E.; Fashoto, S.G.; Bimha, H. Prediction of Box-Office Success: A Review of Trends and Machine Learning Computational Models. Int. J. Bus. Intell. Data Min. 2022, 20, 192–207.

- Jiang, X.; Gao, T.; Zhu, Z.; Zhao, Y. Real-time face mask detection method based on YOLOv3. Electronics 2021, 10, 837.

- De Fazio, R.; Mastronardi, V.M.; Petruzzi, M.; De Vittorio, M.; Visconti, P. Human–Machine Interaction through Advanced Haptic Sensors: A Piezoelectric Sensory Glove with Edge Machine Learning for Gesture and Object Recognition. Future Internet 2023, 15, 14.

- Khan, A.; Sohail, A.; Zahoora, U.; Qureshi, A.S. A survey of the recent architectures of deep convolutional neural networks. Artif. Intell. Rev. 2020, 53, 5455–5516.

- Rahman, A.; Hossain, M.S.; Alrajeh, N.A.; Alsolami, F. Adversarial examples—Security threats to COVID-19 deep learning systems in medical IoT devices. IEEE Internet Things J. 2020, 8, 9603–9610.

- Mundial, I.Q.; Hassan, M.S.U.; Tiwana, M.I.; Qureshi, W.S.; Alanazi, E. Towards facial recognition problem in COVID-19 pandemic. In Proceedings of the 2020 4th International Conference on Electrical, Telecommunication and Computer Engineering (ELTICOM), Medan, Indonesia, 3–4 September 2020; pp. 210–214.

- Fan, X.; Jiang, M. RetinaFaceMask: A Single Stage Face Mask Detector for Assisting Control of the COVID-19 Pandemic. arXiv 2021, arXiv:2005.03950.

- LeCun, Y.; Boser, B.; Denker, J.S.; Howard, R.E.; Hubbard, W.; Jackel, L.D.; Henderson, D. Handwritten Digit Recognition with a Backpropagation Network. In Advances in Neural Information Processing Systems 2; Morgan Kaufmann Publishers Inc.: San Francisco, CA, USA, 1990; pp. 396–404. ISBN 978-1-55860-100-0.

- Arora, D.; Garg, M.; Gupta, M. Diving Deep in Deep Convolutional Neural Network. In Proceedings of the 2020 2nd International Conference on Advances in Computing, Communication Control and Networking (ICACCCN), Greater Noida, India, 18–19 December 2020; pp. 749–751.

- Yao, G.; Lei, T.; Zhong, J. A review of convolutional-neural-network-based action recognition. Pattern Recognit. Lett. 2019, 118, 14–22.

- McCann, M.T.; Jin, K.H.; Unser, M. Convolutional neural networks for inverse problems in imaging: A review. IEEE Signal Process. Mag. 2017, 34, 85–95.

- Rawat, W.; Wang, Z. Deep convolutional neural networks for image classification: A comprehensive review. Neural Comput. 2017, 29, 2352–2449.

- Aloysius, N.; Geetha, M. A Review on Deep Convolutional Neural Networks. In Proceedings of the 2017 International Conference on Communication and Signal Processing (ICCSP), Chennai, India, 6–8 April 2017; pp. 0588–0592.

- Al-Saffar, A.A.M.; Tao, H.; Talab, M.A. Review of Deep Convolution Neural Network in Image Classification. In Proceedings of the 2017 International Conference on Radar, Antenna, Microwave, Electronics, and Telecommunications (ICRAMET), Jakarta, Indonesia, 23–24 October 2017; pp. 26–31.

- Kamilaris, A.; Prenafeta-Boldú, F.X. A review of the use of convolutional neural networks in agriculture. J. Agric. Sci. 2018, 156, 312–322.

- Yang, Z.; Yu, W.; Liang, P.; Guo, H.; Xia, L.; Zhang, F.; Ma, Y.; Ma, J. Deep transfer learning for military object recognition under small training set condition. Neural Comput. Appl. 2019, 31, 6469–6478.

- Yamashita, R.; Nishio, M.; Do, R.K.G.; Togashi, K. Convolutional neural networks: An overview and application in radiology. Insights Imaging 2018, 9, 611–629.

- Schwendicke, F.; Golla, T.; Dreher, M.; Krois, J. Convolutional neural networks for dental image diagnostics: A scoping review. J. Dent. 2019, 91, 103226.

- Hassantabar, S.; Ahmadi, M.; Sharifi, A. Diagnosis and detection of infected tissue of COVID-19 patients based on lung X-ray image using convolutional neural network approaches. Chaos Solitons Fractals 2020, 140, 110170.

- Lalmuanawma, S.; Hussain, J.; Chhakchhuak, L. Applications of machine learning and artificial intelligence for COVID-19 (SARS-CoV-2) pandemic: A review. Chaos Solitons Fractals 2020, 139, 110059.

- Mukherjee, H.; Ghosh, S.; Dhar, A.; Obaidullah, S.M.; Santosh, K.; Roy, K. Deep neural network to detect COVID-19: One architecture for both CT Scans and Chest X-rays. Appl. Intell. 2021, 51, 2777–2789.

- Al-Naami, B.; Badr, B.E.A.; Rawash, Y.Z.; Owida, H.A.; De Fazio, R.; Visconti, P. Social Media Devices’ Influence on User Neck Pain during the COVID-19 Pandemic: Collaborating Vertebral-GLCM Extracted Features with a Decision Tree. J. Imaging 2023, 9, 14.

- Phung, V.H.; Rhee, E.J. A High-Accuracy Model Average Ensemble of Convolutional Neural Networks for Classification of Cloud Image Patches on Small Datasets. Appl. Sci. 2019, 9, 4500.

- Meivel, S.; Sindhwani, N.; Anand, R.; Pandey, D.; Alnuaim, A.A.; Altheneyan, A.S.; Jabarulla, M.Y.; Lelisho, M.E. Mask Detection and Social Distance Identification using Internet of Things and Faster R-CNN Algorithm. Comput. Intell. Neurosci. 2022, 2022, 2103975.

- Militante, S.V.; Dionisio, N.V. Real-Time Facemask Recognition with Alarm System Using Deep Learning. In Proceedings of the 2020 11th IEEE Control and System Graduate Research Colloquium (ICSGRC), Shah Alam, Malaysia, 8 August 2020; pp. 106–110.

- Nagrath, P.; Jain, R.; Madan, A.; Arora, R.; Kataria, P.; Hemanth, J. SSDMNV2: A real time DNN-based face mask detection system using single shot multibox detector and MobileNetV2. Sustain. Cities Soc. 2021, 66, 102692.

- Liu, Z.; Yang, C.; Huang, J.; Liu, S.; Zhuo, Y.; Lu, X. Deep learning framework based on integration of S-Mask R-CNN and Inception-v3 for ultrasound image-aided diagnosis of prostate cancer. Future Gener. Comput. Syst. 2021, 114, 358–367.

- Jignesh Chowdary, G.; Punn, N.S.; Sonbhadra, S.K.; Agarwal, S. Face Mask Detection Using Transfer Learning of InceptionV3. In Proceedings of the Big Data Analytics; Bellatreche, L., Goyal, V., Fujita, H., Mondal, A., Reddy, P.K., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 81–90.

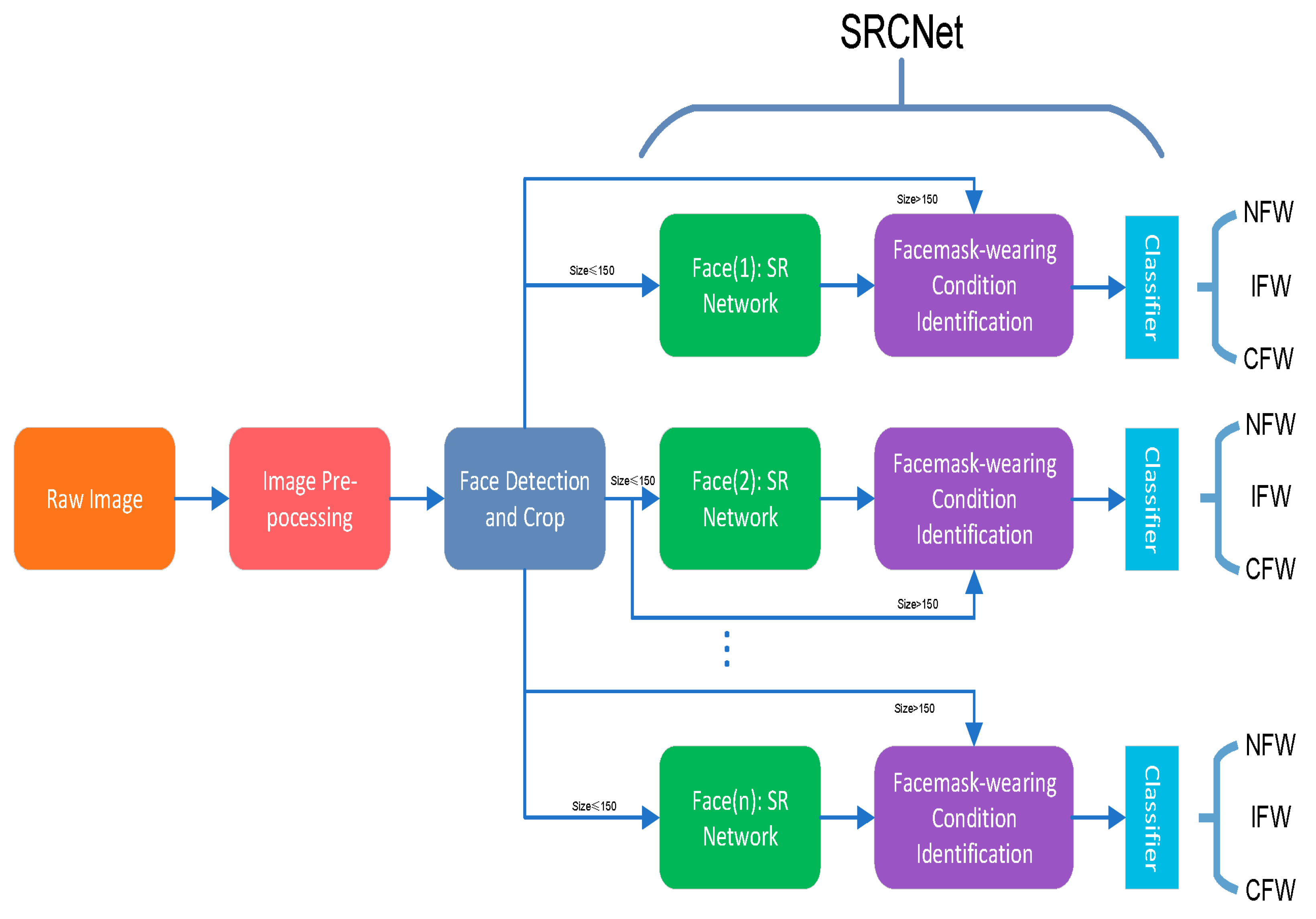

- Qin, B.; Li, D. Identifying facemask-wearing condition using image super-resolution with classification network to prevent COVID-19. Sensors 2020, 20, 5236.

- Alonso-Fernandez, F.; Hernandez-Diaz, K.; Ramis, S.; Perales, F.J.; Bigun, J. Facial masks and soft-biometrics: Leveraging face recognition CNNs for age and gender prediction on mobile ocular images. IET Biom. 2021, 10, 562–580.

- Liu, J.-J.; Hou, Q.; Cheng, M.-M.; Wang, C.; Feng, J. Improving Convolutional Networks with Self-Calibrated Convolutions. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 10093–10102.

- Ramachandran, P.; Parmar, N.; Vaswani, A.; Bello, I.; Levskaya, A.; Shlens, J. Stand-Alone Self-Attention in Vision Models. In Proceedings of the 33rd International Conference on Neural Information Processing Systems; Curran Associates Inc.: Red Hook, NY, USA, 2019; pp. 68–80.

- Loey, M.; Manogaran, G.; Taha, M.H.N.; Khalifa, N.E.M. Fighting against COVID-19: A novel deep learning model based on YOLO-v2 with ResNet-50 for medical face mask detection. Sustain. Cities Soc. 2021, 65, 102600.

- Snyder, S.E.; Husari, G. Thor: A deep learning approach for face mask detection to prevent the COVID-19 pandemic. In Proceedings of the SoutheastCon 2021, Atlanta, GA, USA, 10–13 March 2021; pp. 1–8.

- Yadav, S. Deep learning based safe social distancing and face mask detection in public areas for COVID-19 safety guidelines adherence. Int. J. Res. Appl. Sci. Eng. Technol. 2020, 8, 1368–1375.

- Inamdar, M.; Mehendale, N. Real-time face mask identification using facemasknet deep learning network. SSRN Electron. J. 2020.

- Rahman, M.M.; Manik, M.M.H.; Islam, M.M.; Mahmud, S.; Kim, J.-H. An automated system to limit COVID-19 using facial mask detection in smart city network. In Proceedings of the 2020 IEEE International IOT, Electronics and Mechatronics Conference (IEMTRONICS), Vancouver, BC, Canada, 9–12 September 2020; pp. 1–5.

- Rao, T.S.; Devi, S.A.; Dileep, P.; Ram, M.S. A novel approach to detect face mask to control Covid using deep learning. Eur. J. Mol. Clin. Med. 2020, 7, 658–668.

- Lin, H.; Tse, R.; Tang, S.-K.; Chen, Y.; Ke, W.; Pau, G. Near-realtime face mask wearing recognition based on deep learning. In Proceedings of the 18th IEEE Annual Consumer Communications & Networking Conference (CCNC), Las Vegas, NV, USA, 9–12 January 2021; pp. 1–7.

- Chavda, A.; Dsouza, J.; Badgujar, S.; Damani, A. Multi-stage CNN architecture for face mask detection. In Proceedings of the 6th International Conference for Convergence in Technology (I2CT), Maharashtra, India, 2–4 April 2021; pp. 1–8.

- Christa, G.H.; Jesica, J.; Anisha, K.; Sagayam, K.M. CNN-based mask detection system using openCV and MobileNetV2. In Proceedings of the 3rd International Conference on Signal Processing and Communication (ICPSC), Coimbatore, India, 13–14 May 2021; pp. 115–119.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. In Proceedings of the Advances in Neural Information Processing Systems, Montreal, QC, Canada, 7 December 2015; Volume 28, pp. 1–13.

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv 2017, arXiv:1704.04861.

- Taneja, S.; Nayyar, A.; Vividha; Nagrath, P. Face Mask Detection Using Deep Learning During COVID-19. In Proceedings of the Second International Conference on Computing, Communications, and Cyber-Security; Singh, P.K., Wierzchoń, S.T., Tanwar, S., Ganzha, M., Rodrigues, J.J.P.C., Eds.; Springer: Singapore, 2021; pp. 39–51.

- Shinde, R.K.; Alam, M.S.; Park, S.G.; Park, S.M.; Kim, N. Intelligent IoT (IIoT) Device to Identifying Suspected COVID-19 Infections Using Sensor Fusion Algorithm and Real-Time Mask Detection Based on the Enhanced MobileNetV2 Model. Healthcare 2022, 10, 454.

- Hussain, S.; Yu, Y.; Ayoub, M.; Khan, A.; Rehman, R.; Wahid, J.A.; Hou, W. IoT and deep learning based approach for rapid screening and face mask detection for infection spread control of COVID-19. Appl. Sci. 2021, 11, 3495.

- Petrović, N.; Kocić, Đ. IoT-based system for COVID-19 indoor safety monitoring. In Proceedings of the 7th International Conference on Electrical, Electronic and Computing Engineering IcETRAN, Belgrade, Serbia, 28–30 September 2020.

- Varshini, B.; Yogesh, H.; Pasha, S.D.; Suhail, M.; Madhumitha, V.; Sasi, A. IoT-Enabled smart doors for monitoring body temperature and face mask detection. Glob. Transit. Proc. 2021, 2, 246–254.

- Hussain, G.K.J.; Priya, R.; Rajarajeswari, S.; Prasanth, P.; Niyazuddeen, N. The Face Mask Detection Technology for Image Analysis in the COVID-19 Surveillance System. In Proceedings of the Journal of Physics: Conference Series, Thessaloniki, Greece, 16–19 June 2021; Volume 1916, p. 012084.

- Asghar, M.Z.; Albogamy, F.R.; Al-Rakhami, M.S.; Asghar, J.; Rahmat, M.K.; Alam, M.M.; Lajis, A.; Nasir, H.M. Facial Mask Detection Using Depthwise Separable Convolutional Neural Network Model During COVID-19 Pandemic. Front. Public Health 2022, 10, 855254.

- Arbib, M.A. The Handbook of Brain Theory and Neural Networks, 2nd ed.; A Bradford Book: Cambridge, MA, USA, 2002; ISBN 978-0-262-01197-6.

- Shadin, N.S.; Sanjana, S.; Ibrahim, D. Face Mask Detection Using Deep Learning and Transfer Learning Models. In Proceedings of the 2022 International Conference on Innovations in Science, Engineering and Technology (ICISET), Chittagong, Bangladesh, 26 February 2022; pp. 196–201.

- Lu, P.; Song, B.; Xu, L. Human Face Recognition Based on Convolutional Neural Network and Augmented Dataset. Syst. Sci. Control. Eng. 2020, 9, 29–37. Available online: http://mc.manuscriptcentral.com/tssc (accessed on 22 May 2023).

- Khan, S.; Javed, M.H.; Ahmed, E.; Shah, S.A.A.; Ali, S.U. Facial Recognition Using Convolutional Neural Networks and Implementation on Smart Glasses. In Proceedings of the 2019 International Conference on Information Science and Communication Technology, ICISCT 2019, Karachi, Pakistan, 1 March 2019; pp. 1–15.

- Wang, J.; Li, Z. Research on Face Recognition Based on CNN. In Proceedings of the IOP Conference Series: Earth and Environmental Science, Banda Aceh, Indonesia, 26–27 September 2018; Volume 170, p. 032110.

- Sinha, D.; El-Sharkawy, M. Thin MobileNet: An Enhanced MobileNet Architecture. In Proceedings of the 2019 IEEE 10th Annual Ubiquitous Computing, Electronics & Mobile Communication Conference (UEMCON), New York, NY, USA, 10–12 October 2019; pp. 280–285.

- Liu, Y.; Miao, C.; Ji, J.; Li, X. MMF: A Multi-Scale MobileNet Based Fusion Method for Infrared and Visible Image. Infrared Phys. Technol. 2021, 119, 103894.

- Dong, K.; Zhou, C.; Ruan, Y.; Li, Y. MobileNetV2 Model for Image Classification. In Proceedings of the 2020 2nd International Conference on Information Technology and Computer Application (ITCA), Guangzhou, China, 18–20 December 2020; pp. 476–480.

| Dataset | Total Number of Images | Number of Images with Mask | Number of Images without Mask | Method | Mean Average Precision (%) |
|---|---|---|---|---|---|
| MaskedFace-Net [26] | 133,783 | 67,049 | 66,734 | Haar-features + Face and Nose Detection algorithm | 99.92 [27] |
| FMLD [28] | 41,934 | 29,532 | 33,540 | RetinaFace / AntiCov | 92.93 [29] / 88.91 [30] |
| ISL-UFMD [31] | 21,816 | 10,698 | 10,618 | Inception-v3 | 98.20 [31] |
| Face Mask Detection [32] | 7553 | 3725 | 3828 | CNN | 98.00 [33] |
| MDMFR [34] | 6006 | 3174 | 2832 | DeepMaskNet | 100.00 [19] |
| BAFMD [35] | 13,000 | 6264 | 3118 | YOLO-v5 / AntiCov | 86.80 [35] / 78.10 [35] |
| MAFA [36] | 35,806 | 911 | 30,811 | YOLO-v5 / AntiCov | 87.30 [35] / 84.90 [35] |

| Recognition Techniques | Technology | Description | References |
|---|---|---|---|
| Machine Learning | Support Vector Machines (SVM), decision trees, multilayered deep neural networks, DL used for access control, convolutional-neural-network-based action recognition | These techniques prioritize accuracy in some situations and speed in others. This section describes object detection using the deep learning approach rather than the benefits of deep learning techniques in real-time applications. | [24,37,38,39,40,41,42,43,44,47,48,49,50,51,52,53,54,55,56,57,58] |
| Computer vision | Numerous object detection methods | Object detection finds and recognizes specific kinds of objects in images and videos. It also localizes the objects in the supplied image using bounding boxes, which makes it possible to count the objects in that image. | [14,15,16] |
| CNN | Artificial Neural Networks, Layered CNN, Inceptionv3, Super-Resolution of Images (SRCNet), Residual Networks | These networks focus on regions of an image that resemble other regions, such as the pixelated region of an eye. If this region matches other eye regions, the R-CNN registers a match. However, CNNs can become so complex that they “overfit”, meaning that they fit noise in the training data rather than the intended patterns of facial features. | [44,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74] |
| Mobile Networks (MobileNetv1 and MobileNetv2) | Deep learning, TensorFlow, Keras, and OpenCV | MobileNetv1 is built on a streamlined design that creates lightweight deep neural networks using depth-wise separable convolutions. Building on the concepts of MobileNetv1, MobileNetv2 employs depth-wise separable convolutions as efficient building blocks. MobileNetv2 adds two new features to the architecture: linear bottlenecks between layers and shortcut connections between bottlenecks. | [78,79,80,81] |
| Sensors | Sensor Fusion (SF) approach with MobileNetv2, deep learning | Sensor fusion is the combination of data from at least two sensors. Perception is the analysis and classification of sensor data to find, recognize, categorize, and track objects (e.g., faces). | [82,83,84,85,86] |
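The MobileNet entry in the table above attributes the networks' light weight to depth-wise separable convolutions. The saving can be illustrated with a short weight count. The following is a minimal sketch, not code from any of the reviewed works: the function names and the layer sizes (3 × 3 kernel, 64 input channels, 128 output channels) are assumptions chosen only for illustration, and bias terms are ignored.

```python
def standard_conv_params(kernel_size: int, in_channels: int, out_channels: int) -> int:
    """Number of weights in a standard k x k convolution (biases ignored)."""
    return kernel_size * kernel_size * in_channels * out_channels


def depthwise_separable_params(kernel_size: int, in_channels: int, out_channels: int) -> int:
    """Number of weights in a depth-wise k x k convolution plus a 1 x 1 point-wise convolution."""
    depthwise = kernel_size * kernel_size * in_channels  # one k x k filter per input channel
    pointwise = in_channels * out_channels               # 1 x 1 convolution that mixes channels
    return depthwise + pointwise


# Hypothetical layer sizes, assumed for illustration only.
k, c_in, c_out = 3, 64, 128

standard = standard_conv_params(k, c_in, c_out)
separable = depthwise_separable_params(k, c_in, c_out)
reduction = standard / separable

print(f"standard convolution:   {standard} weights")
print(f"depth-wise separable:   {separable} weights")
print(f"reduction factor:       {reduction:.2f}x")
```

For these assumed sizes, the separable layer needs 8768 weights instead of 73,728, roughly an eightfold reduction. This is the kind of saving that makes such networks attractive for embedded and surveillance hardware.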

| Technique | Prototype/Method/Domain | Ref. |
|---|---|---|
| Machine Learning | Support Vector Machines (SVM), decision trees, and combination techniques | [23] |
| Machine Learning | SVM | [24] |
| Deep Learning | Multilayered Deep Neural Networks | [39] |
| Deep Learning | DL used for access control | [42,43] |
| Convolutional Neural Networks | A framework consideration module | [44] |
| Convolutional Neural Networks | Convolutional-neural-network-based action recognition | [47,48,49,50,51] |
| Convolutional Neural Networks | CNN adapted in the agriculture, defense, and medicine sectors | [52,53,54,55,56,57,58] |
| Convolutional Neural Networks | Artificial Neural Networks | [44,54] |
| Convolutional Neural Networks | Layered CNN | [60,61,62,63,76,77] |
| Convolutional Neural Networks | Inceptionv3 | [64,65] |
| Convolutional Neural Networks | Super-Resolution of Images (SRCNet) | [66,67] |
| Convolutional Neural Networks | Residual Networks | [68,69,70,71] |
| Convolutional Neural Networks | Model for detecting people who do not wear masks | [72,73,74] |
| Mobile Networks (MobileNetv1 and MobileNetv2) | Deep learning, TensorFlow, Keras, and OpenCV | [78,79,80,81] |
| Sensors | Sensor Fusion (SF) approach with MobileNetv2, deep learning | [82,83,84,85,86] |

| Work | AI Models | Achieved Accuracy |
|---|---|---|
| M. Loey et al. (2021) [23] | Hybrid deep transfer learning model with Support Vector Machines (SVM), decision trees, and combination techniques | 99.64% |
| M. Loey et al. (2021) [70] | DL model integrating YOLO-v2 and ResNet-50 (Residual Networks) | 81% |
| M. M. Rahman (2020) [74] | Lightweight neural network for detecting people who do not wear masks | 85% |
| M. Inamdar and N. Mehendale (2020) [73] | Novel DL model (using a public recognition database to compile information) | 98% |
| S. Yadav (2020) [72] | Deep learning and computer-vision-based approach | 95% |
| T. Rao and S. Devi (2020) [75] | Multi-stage CNN architecture for face mask identification | 91.2% |
| H. Lin et al. (2021) [76] | CNN combined with the DL approach for mask identification | 95.8% |
| A. Chavda et al. (2021) [77] | Multi-stage CNN architecture for face mask detection | 99.98% |
| S. E. Snyder et al. (2021) [71] | Several deep learning models for face mask detection (ResNet-50 with Feature Pyramid Network (FPN), Multi-Task CNN (MT-CNN), CNN classifier) | 99.2% |
| S. Taneja et al. (2021) [81] | CNN-based mask identification method using OpenCV and MobileNetV2 | 99% |
| G. H. Christa et al. (2021) [78] | Deep learning, TensorFlow, Keras, and OpenCV | 99% |
| S. Ren et al. (2015) [79] | Lightweight Region Proposal Networks (RPNs) | 73% |
| R. K. Shinde et al. (2022) [82] | Sensor Fusion (SF) approach | 99.26% |
| S. Hussain et al. (2021) [83] | Smart Screening and Disinfection Walkthrough Gate (SSDWG) | 99.81% |
| N. Petrović and Đ. Kocić (2020) [84] | Contactless sensor with computer vision | 91% |
| B. Varshini et al. (2021) [85] | Sensors with deep learning | 97% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Al-Nabulsi, J.; Turab, N.; Owida, H.A.; Al-Naami, B.; De Fazio, R.; Visconti, P. IoT Solutions and AI-Based Frameworks for Masked-Face and Face Recognition to Fight the COVID-19 Pandemic. Sensors 2023, 23, 7193. https://doi.org/10.3390/s23167193
