Machine Learning Model Application and Comparison in Actuated Traffic Signal Forecasting

Abstract

:1. Introduction

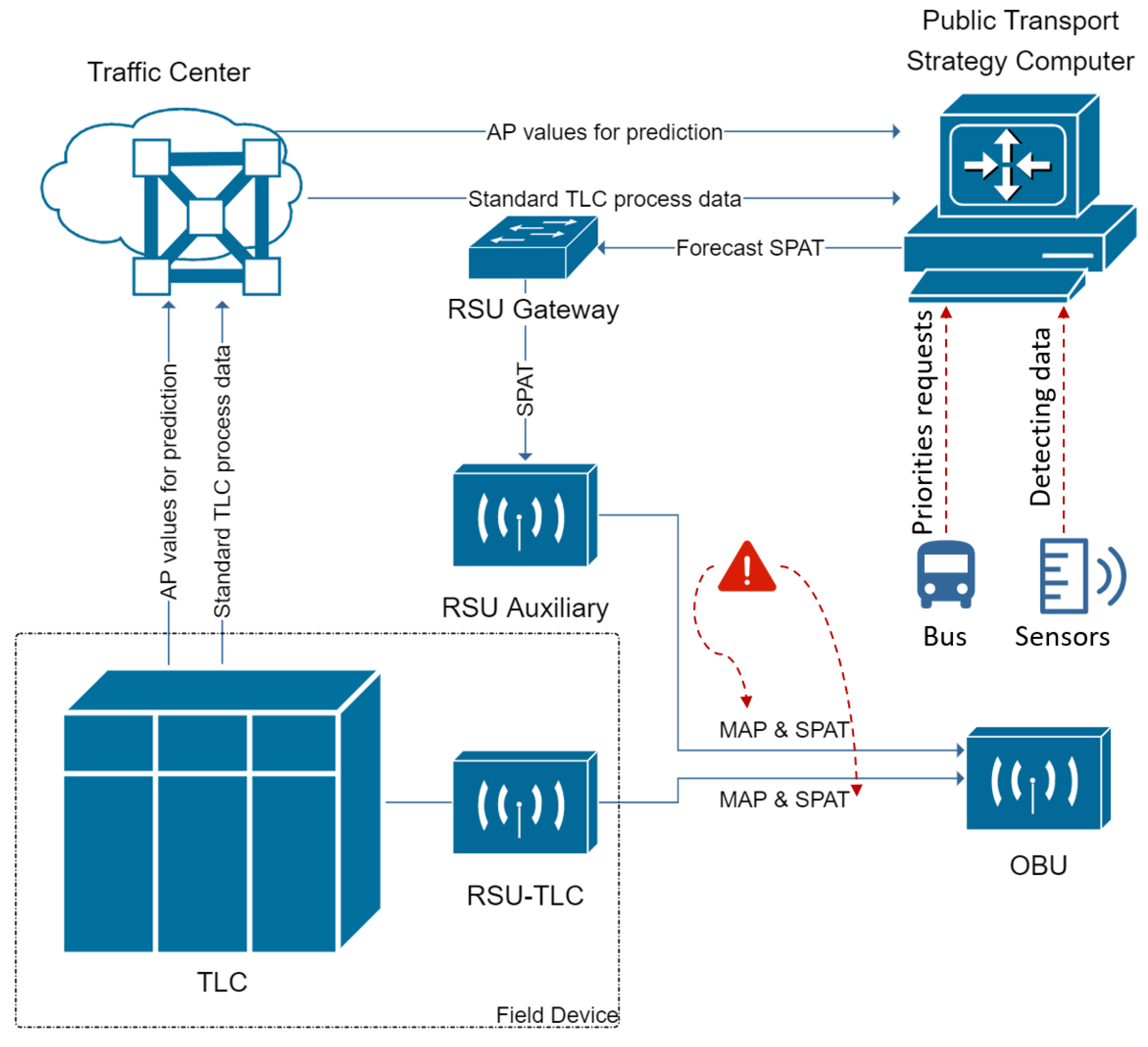

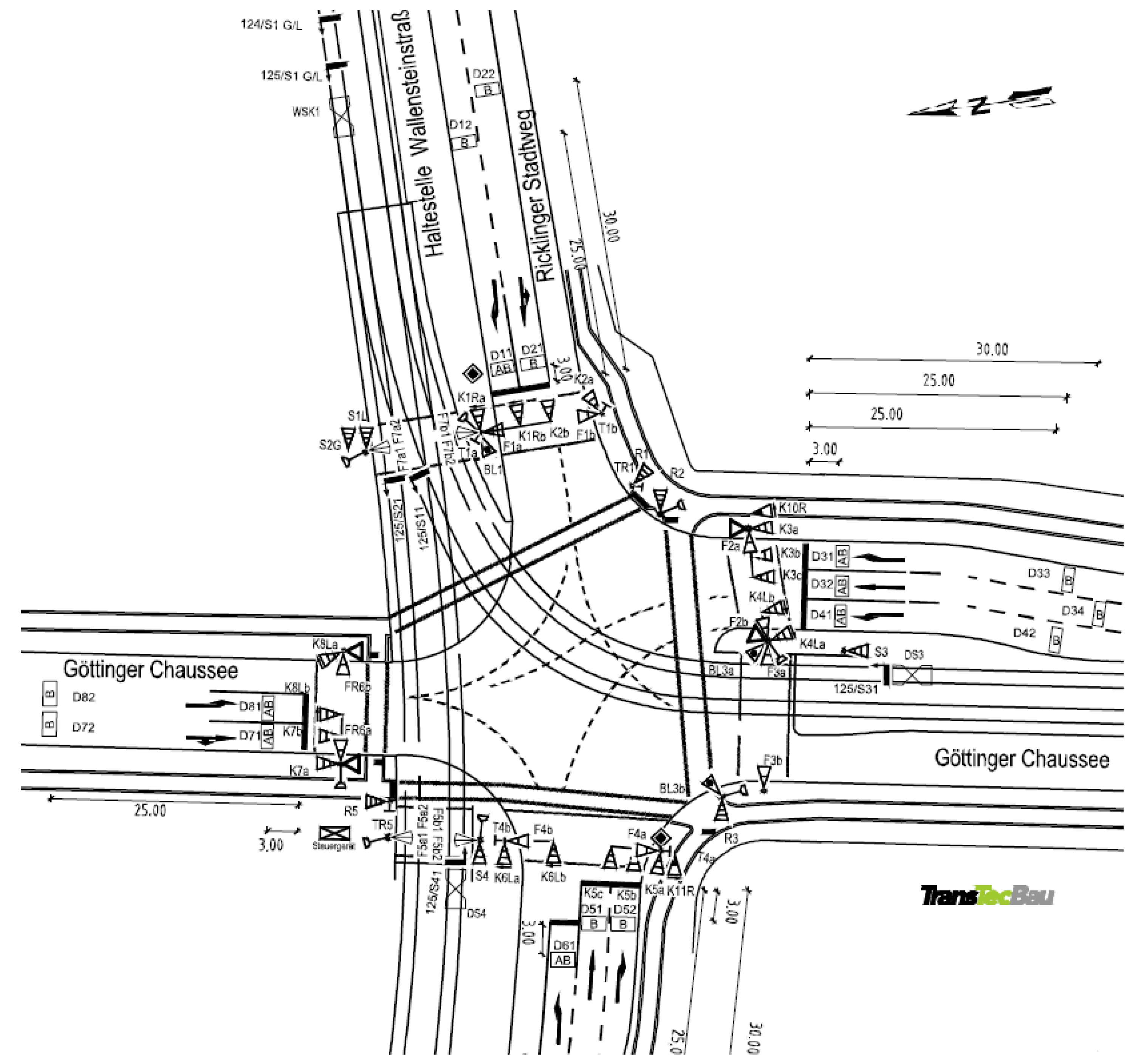

2. Materials and Methods

2.1. Data Preparation

2.1.1. Data Processing

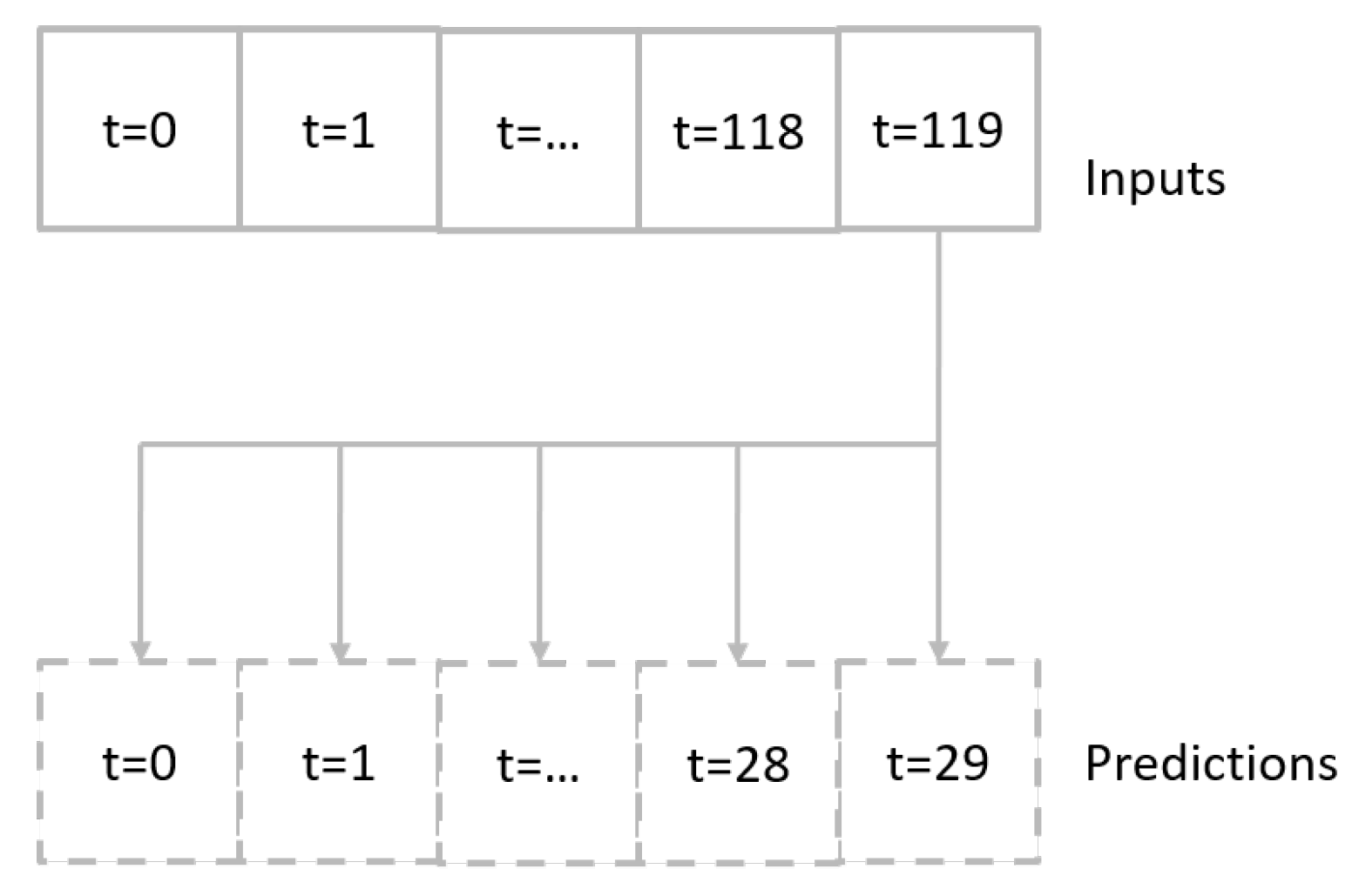

2.1.2. Time Window Generation

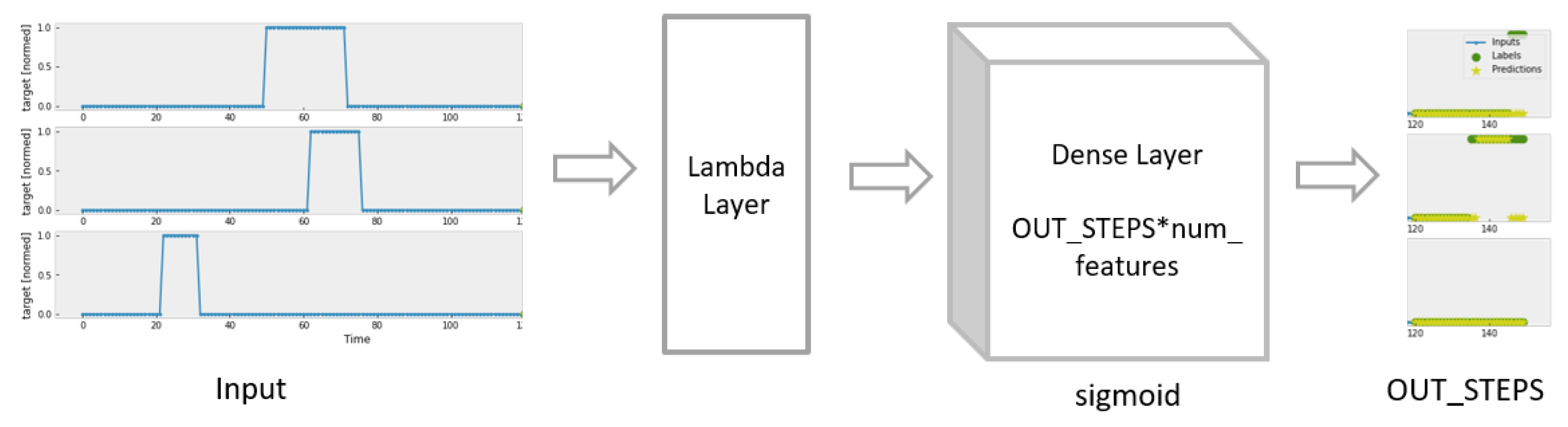

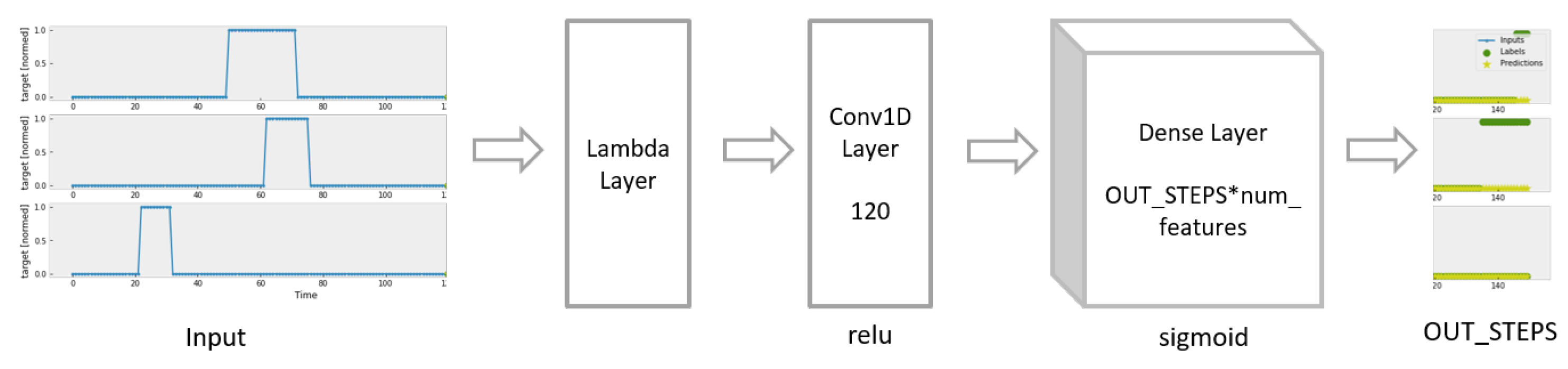

2.2. Machine Learning Models

- input_width = 120;

- OUT_STEPS = 30;

- num_features = 38;

- batchsize = 32;

- max_epoch = 32;

- patience = 5.

2.2.1. Baseline Model

2.2.2. Dense Model

2.2.3. Linear Model

2.2.4. CNN Model

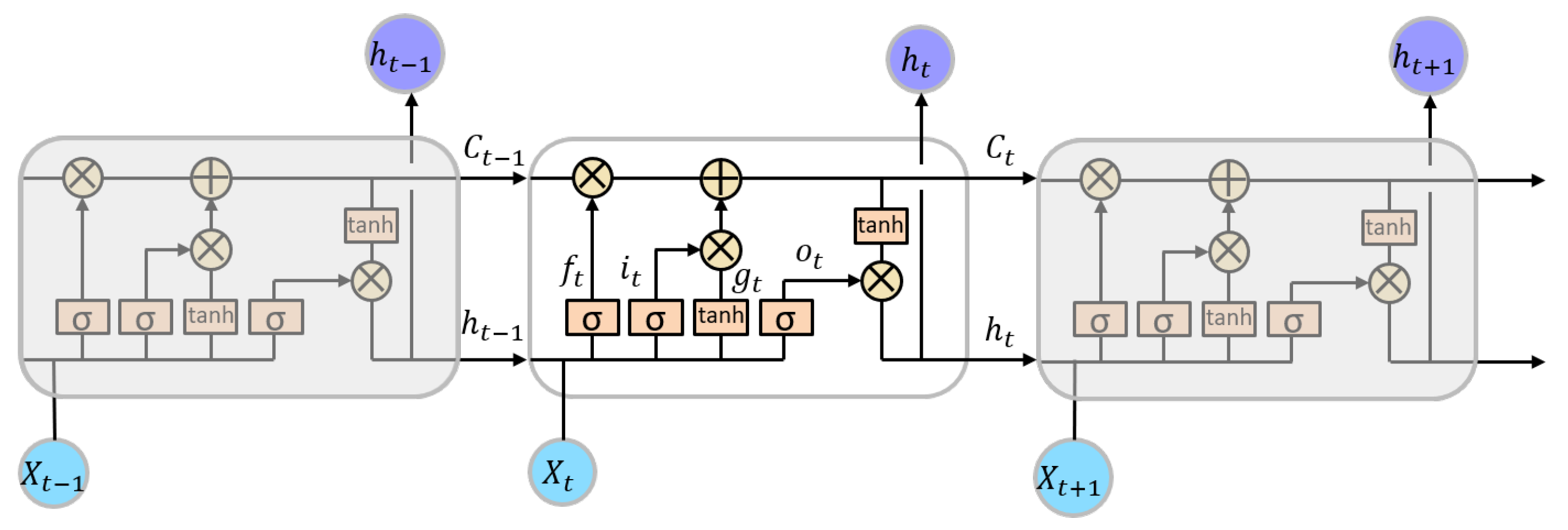

2.2.5. LSTM

3. Results

3.1. Comparison Results

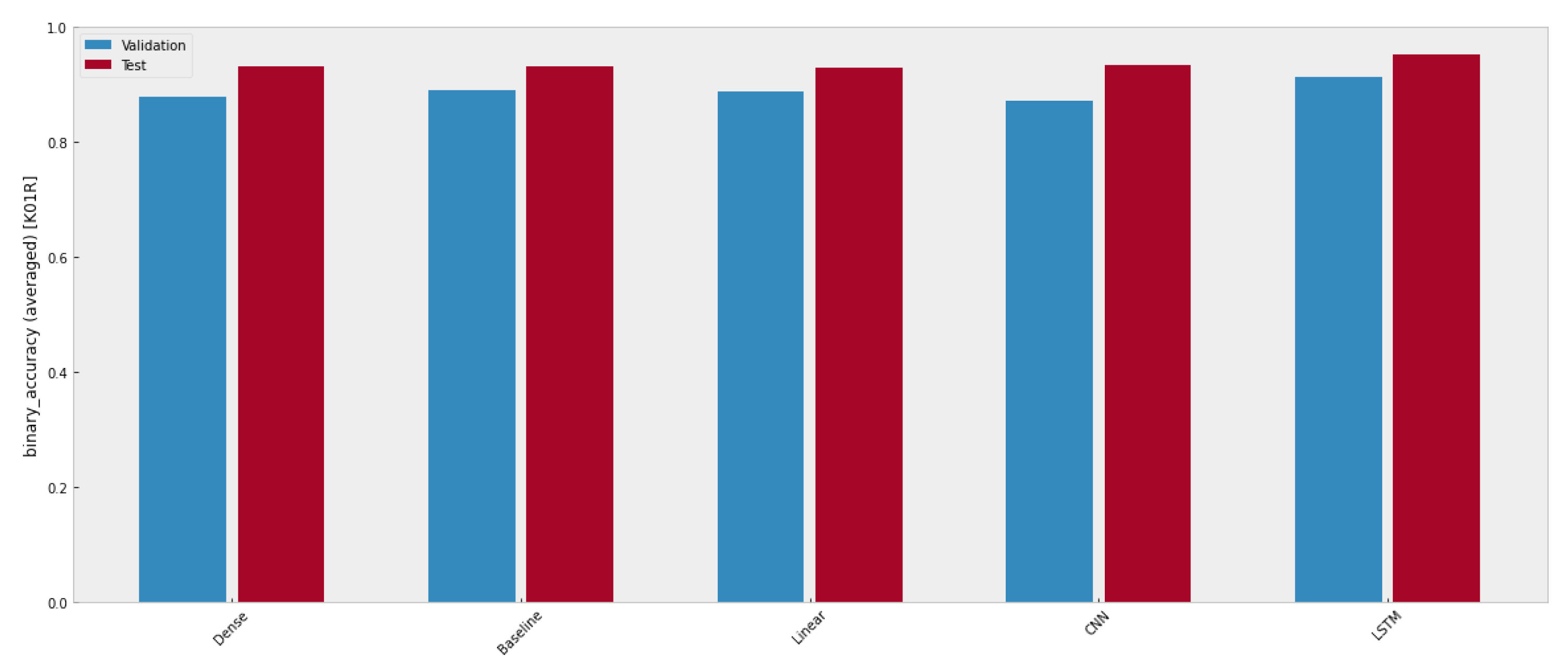

3.1.1. Binary Accuracy

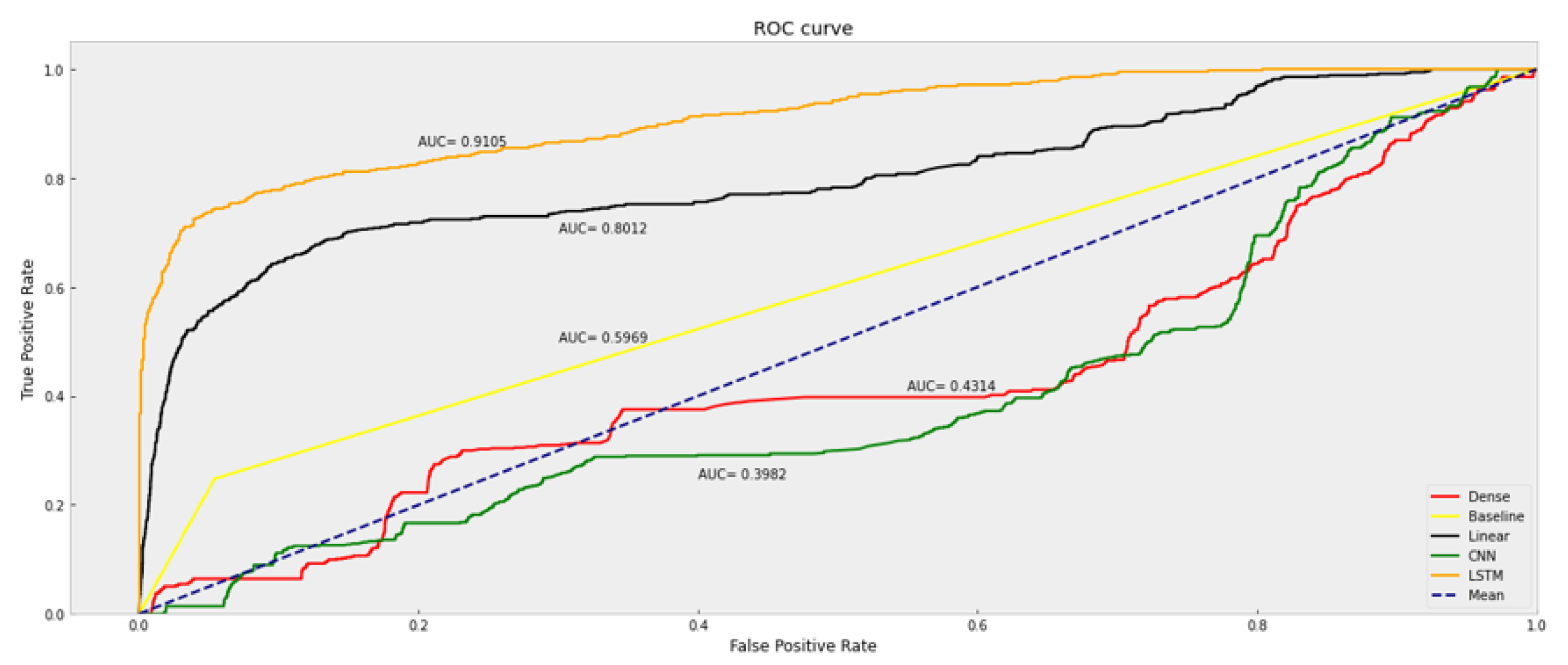

3.1.2. Basic Metrics

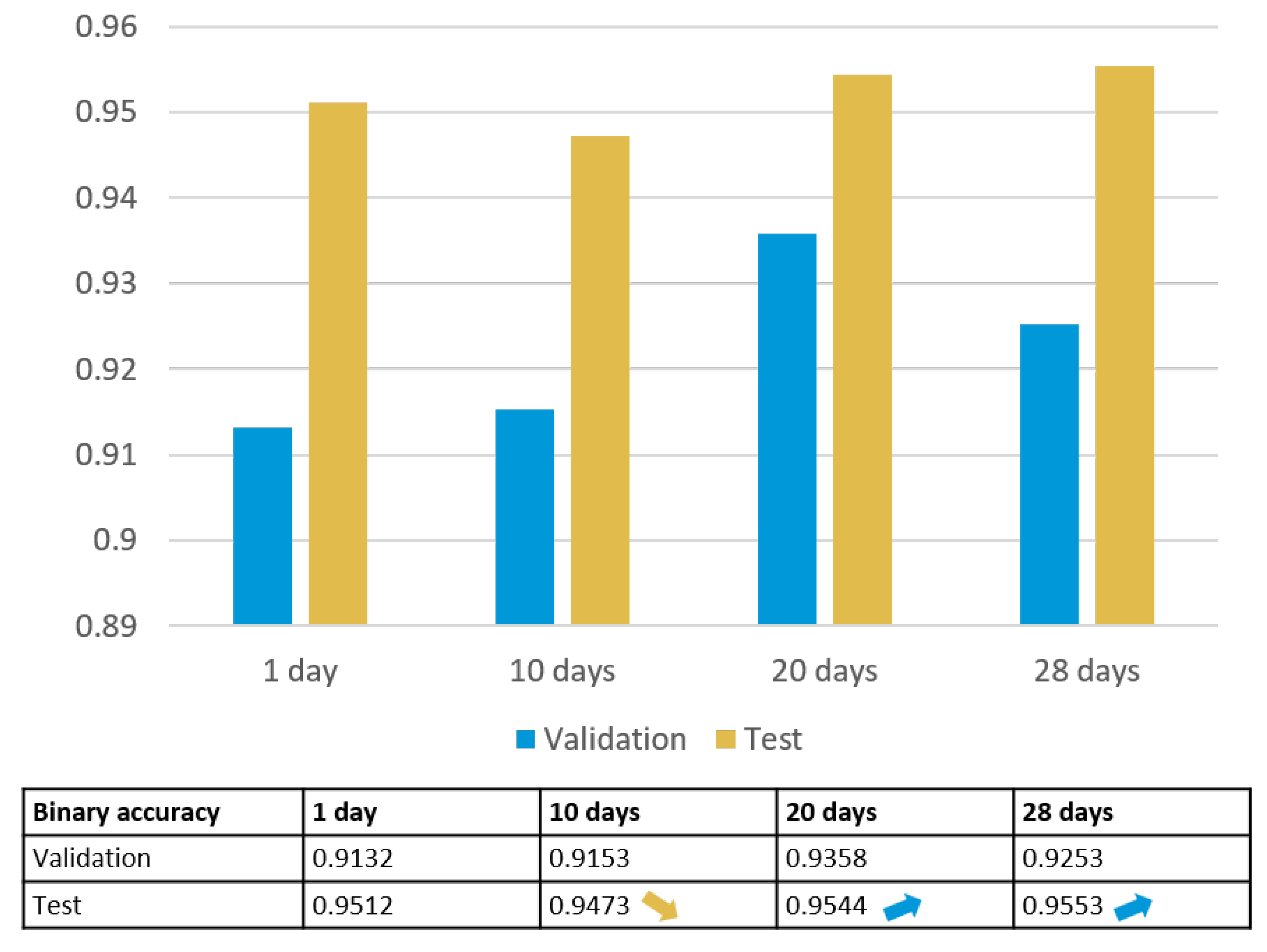

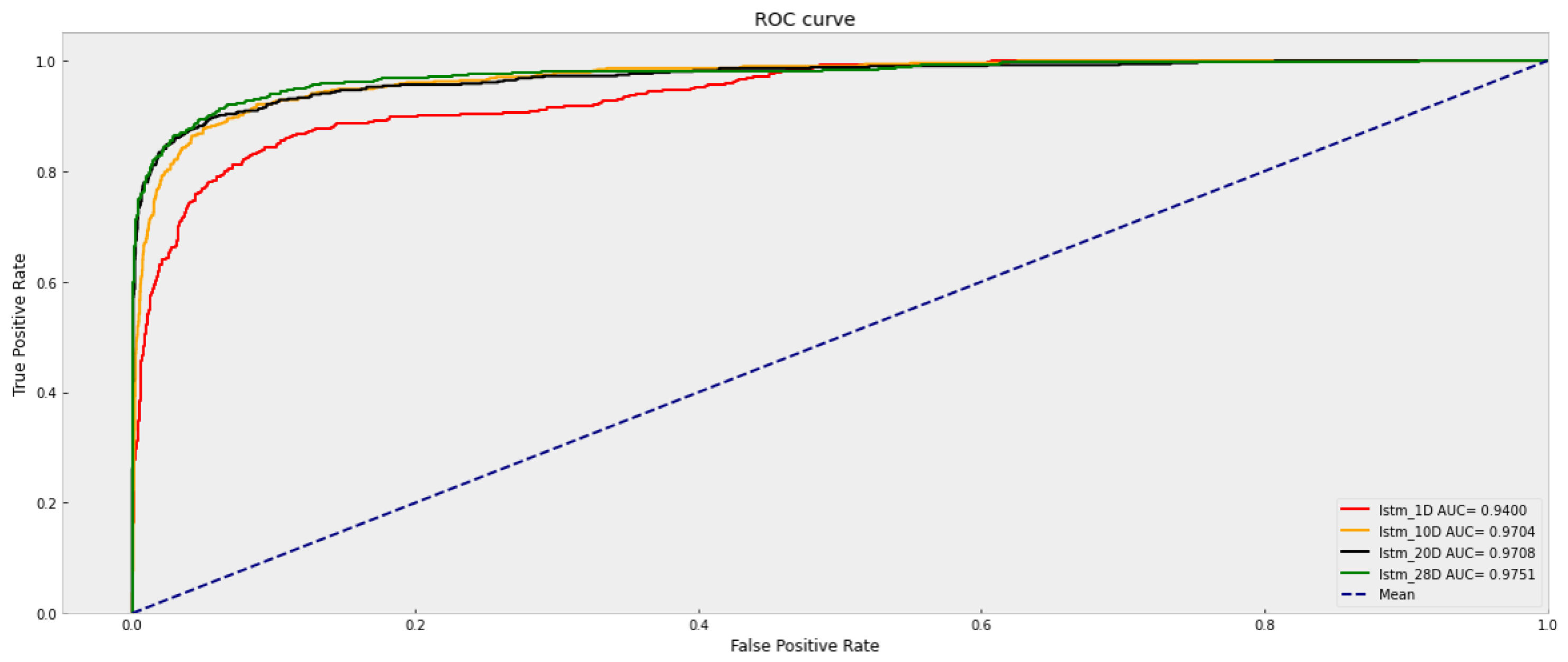

3.2. LSTM Trained by Data of One Month

3.2.1. Deviation Calculation

3.2.2. Segment Accuracy Calculation

- Segment 1: -> ;

- Segment 2: -> ;

- Segment 3: -> .

3.2.3. Basic Metrics of LSTM

4. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| ACC | Accuracy |

| AUC | Area under the Curve |

| CNN | Convolutional Neural Network |

| FN | False Negative |

| FP | False Positive |

| FPR | False Positive Rate |

| IoT | Internet of Things |

| LSTM | Long Short-Term Memory |

| MAP | MAP as intersection geometry |

| MCC | Matthew’s Correlation Coefficient |

| PPV | Positive Predictive Value |

| ROC | Receiver Operating Characteristic |

| SPAT | Signal Phase and Timing |

| TN | True Negative |

| TP | True Positive |

References

- Xie, F.; Sudhi, V.; Rub, T.; Purschwitz, A. Dynamic adapted green light optimal speed advisory for buses considering waiting time at the closest bus stop to the intersection. In Proceedings of the 27th ITS World Congress, Hamburg, Germany, 11–15 October 2021; pp. 1–10. [Google Scholar]

- Wang, Y.; Papageorgiou, M.; Messmer, A. RENAISSANCE—A unified macroscopic model-based approach to real-time freeway network traffic surveillance. Transp. Res. Part C Emerg. Technol. 2006, 14, 190–212. [Google Scholar] [CrossRef]

- Menig, C.; Hildebrandt, R.; Braun, R. Der informierte Fahrer-Optimierung des Verkehrsablaufs durch LSA-Fahrzeug-Kommunikation. In Heureka ‘08. Optimierung in Verkehr und Transport; Forschungsgesellschaft für Straßen-und Verkehrswesen: Koln, Germany, 2008. [Google Scholar]

- Nguyen, H.; Kieu, L.M.; Wen, T.; Cai, C. Deep learning methods in transportation domain: A review. IET Intell. Transp. Syst. 2018, 12, 998–1004. [Google Scholar] [CrossRef]

- Weisheit, T.; Hoyer, R. Support Vector Machines—A Suitable Approach for a Prediction of Switching Times of Traffic Actuated Signal Controls. In Advanced Microsystems for Automotive Applications; Springer: Berlin/Heidelberg, Germany, 2014; pp. 121–129. [Google Scholar]

- Heckmann, K.; Schneegans, L.E.; Hoyer, R. Estimating Future Signal States and Switching Times of Traffic Actuated Lights. In Proceedings of the 20th European Transport Congress and 12th Conference on Transport Sciences, Gyor, Hungary, 9–10 June 2022; pp. 80–91. [Google Scholar]

- Schneegans, L.E.; Heckmann, K.; Hoyer, R. Exploiting Stage Information for Prediction of Switching Times of Traffic Actuated Signals Using Machine Learning. In Proceedings of the 2022 12th International Conference on Advanced Computer Information Technologies (ACIT), Ruzomberok, Slovakia, 26–28 September 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 544–548. [Google Scholar]

- Dama, F.; Sinoquet, C. Time series analysis and modeling to forecast: A survey. arXiv 2021, arXiv:2104.00164. [Google Scholar]

- Chen, C.; Li, K.; Teo, S.G.; Zou, X.; Wang, K.; Wang, J.; Zeng, Z. Gated residual recurrent graph neural networks for traffic prediction. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; Volume 33, pp. 485–492. [Google Scholar]

- Khosravi, A.; Machado, L.; Nunes, R. Time-series prediction of wind speed using machine learning algorithms: A case study Osorio wind farm, Brazil. Appl. Energy 2018, 224, 550–566. [Google Scholar] [CrossRef]

- Genser, A.; Ambühl, L.; Yang, K.; Menendez, M.; Kouvelas, A. Enhancement of SPaT-messages with machine learning based time-to-green predictions. In Proceedings of the 9th Symposium of the European Association for Research in Transportation (hEART 2020), Lyon, France, 3–4 February 2020. [Google Scholar]

- Zhou, H.; Zhang, S.; Peng, J.; Zhang, S.; Li, J.; Xiong, H.; Zhang, W. Informer: Beyond efficient transformer for long sequence time-series forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtually, 2–9 February 2021; Volume 35, pp. 11106–11115. [Google Scholar]

- Tang, W.; Long, G.; Liu, L.; Zhou, T.; Blumenstein, M.; Jiang, J. Omni-Scale CNNs: A simple and effective kernel size configuration for time series classification. arXiv 2020, arXiv:2002.10061. [Google Scholar]

- Lim, B.; Zohren, S. Time-series forecasting with deep learning: A survey. Philos. Trans. R. Soc. A 2021, 379, 20200209. [Google Scholar] [CrossRef] [PubMed]

- TensorFlow. Time Series Forecasting. 2019. Available online: https://colab.research.google.com/github/tensorflow/docs/blob/master/site/en/tutorials/structured_data/time_series.ipynb#scrollTo=2Pmxv2ioyCRw (accessed on 17 May 2023).

- Olah, C. Understanding LSTM Networks. 2015. Available online: https://colah.github.io/posts/2015-08-Understanding-LSTMs/ (accessed on 25 May 2023).

| Timestamp | K01R 1 | K02 | K03 | K04L | K05 | ⋯ | MPN_2 | MPN_1 |

|---|---|---|---|---|---|---|---|---|

| 0 | R | R | G | G | R | ⋯ | 0 | 0 |

| 1 | R | R | A | G | R | ⋯ | 0 | 0 |

| 2 | R | R | A | G | R | ⋯ | 0 | 0 |

| 3 | R | R | A | G | R | ⋯ | 0 | 0 |

| 4 | R | R | R | G | R | ⋯ | 0 | 0 |

| ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ |

| 86,395 | G | R | R | R | R | ⋯ | 0 | 0 |

| 86,396 | G | R | R | R | R | ⋯ | 0 | 0 |

| 86,397 | A | R | R | R | R | ⋯ | 0 | 0 |

| 86,398 | A | R | R | R | R | ⋯ | 0 | 0 |

| 86,399 | A | R | R | R | R | ⋯ | 0 | 0 |

| Timestamp | K01R | K02 | K03 | K04L | K05 | ⋯ | MPN_2 | MPN_1 |

|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 0 | 1 | 1 | 0 | ⋯ | 0 | 0 |

| 1 | 0 | 0 | 0 | 1 | 0 | ⋯ | 0 | 0 |

| 2 | 0 | 0 | 0 | 1 | 0 | ⋯ | 0 | 0 |

| 3 | 0 | 0 | 0 | 1 | 0 | ⋯ | 0 | 0 |

| 4 | 0 | 0 | 0 | 1 | 0 | ⋯ | 0 | 0 |

| ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ | ⋯ |

| 86,395 | 1 | 0 | 0 | 0 | 0 | ⋯ | 0 | 0 |

| 86,396 | 1 | 0 | 0 | 0 | 0 | ⋯ | 0 | 0 |

| 86,397 | 0 | 0 | 0 | 0 | 0 | ⋯ | 0 | 0 |

| 86,398 | 0 | 0 | 0 | 0 | 0 | ⋯ | 0 | 0 |

| 86,399 | 0 | 0 | 0 | 0 | 0 | ⋯ | 0 | 0 |

| Models | Validation Accuracy | Test Accuracy |

|---|---|---|

| Dense | 0.8782 | 0.9325 |

| Baseline | 0.8904 | 0.9312 |

| Linear | 0.8869 | 0.9303 |

| CNN | 0.8722 | 0.9331 |

| LSTM | 0.9134 | 0.9525 |

| Models | ACC | PPV | TPR | F1 | MCC |

|---|---|---|---|---|---|

| Dense | 0.894 | 0.000 | 0.000 | 0.000 | 0.000 |

| Baseline | 0.856 | 0.277 | 0.227 | 0.249 | 0.172 |

| Linear | 0.911 | 0.722 | 0.249 | 0.371 | 0.390 |

| CNN | 0.894 | 0.000 | 0.000 | 0.000 | 0.000 |

| LSTM | 0.946 | 0.887 | 0.558 | 0.685 | 0.678 |

| Models | ACC | PPV | TPR | F1 | MCC |

|---|---|---|---|---|---|

| LSTM_1D | 0.924 | 0.912 | 0.449 | 0.602 | 0.609 |

| LSTM_10D | 0.949 | 0.922 | 0.656 | 0.766 | 0.752 |

| LSTM_20D | 0.962 | 0.957 | 0.736 | 0.832 | 0.820 |

| LSTM_28D | 0.962 | 0.966 | 0.726 | 0.829 | 0.819 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Xie, F.; Naumann, S.; Czogalla, O.; Zadek, H. Machine Learning Model Application and Comparison in Actuated Traffic Signal Forecasting. Sensors 2023, 23, 6912. https://doi.org/10.3390/s23156912

Xie F, Naumann S, Czogalla O, Zadek H. Machine Learning Model Application and Comparison in Actuated Traffic Signal Forecasting. Sensors. 2023; 23(15):6912. https://doi.org/10.3390/s23156912

Chicago/Turabian StyleXie, Feng, Sebastian Naumann, Olaf Czogalla, and Hartmut Zadek. 2023. "Machine Learning Model Application and Comparison in Actuated Traffic Signal Forecasting" Sensors 23, no. 15: 6912. https://doi.org/10.3390/s23156912

APA StyleXie, F., Naumann, S., Czogalla, O., & Zadek, H. (2023). Machine Learning Model Application and Comparison in Actuated Traffic Signal Forecasting. Sensors, 23(15), 6912. https://doi.org/10.3390/s23156912