SDNC-Repair: A Cooperative Data Repair Strategy Based on Erasure Code for Software-Defined Storage

Abstract

1. Introduction

- Propose a method for improving the performance of erasure-code-based data repair called SDNC-Repair that optimizes the transmission of the data repair process using the measurement technology of SDN and creates a distributed pipeline data repair operation to achieve efficient repair.

- Develop a data source selection algorithm based on intelligent bandwidth measurement and a transmission scheduling algorithm based on dynamic feedback. These algorithms provide node combinations and schedule data flow during data repair.

- Present a cooperative and efficient data repair method that improves the efficiency of repair by using SDN to shorten the repair chain, and improve the transmission efficiency and distribution of computation.

2. Background and Motivation

2.1. Background

2.2. Motivation

3. Related Works

4. SDNC-Repair

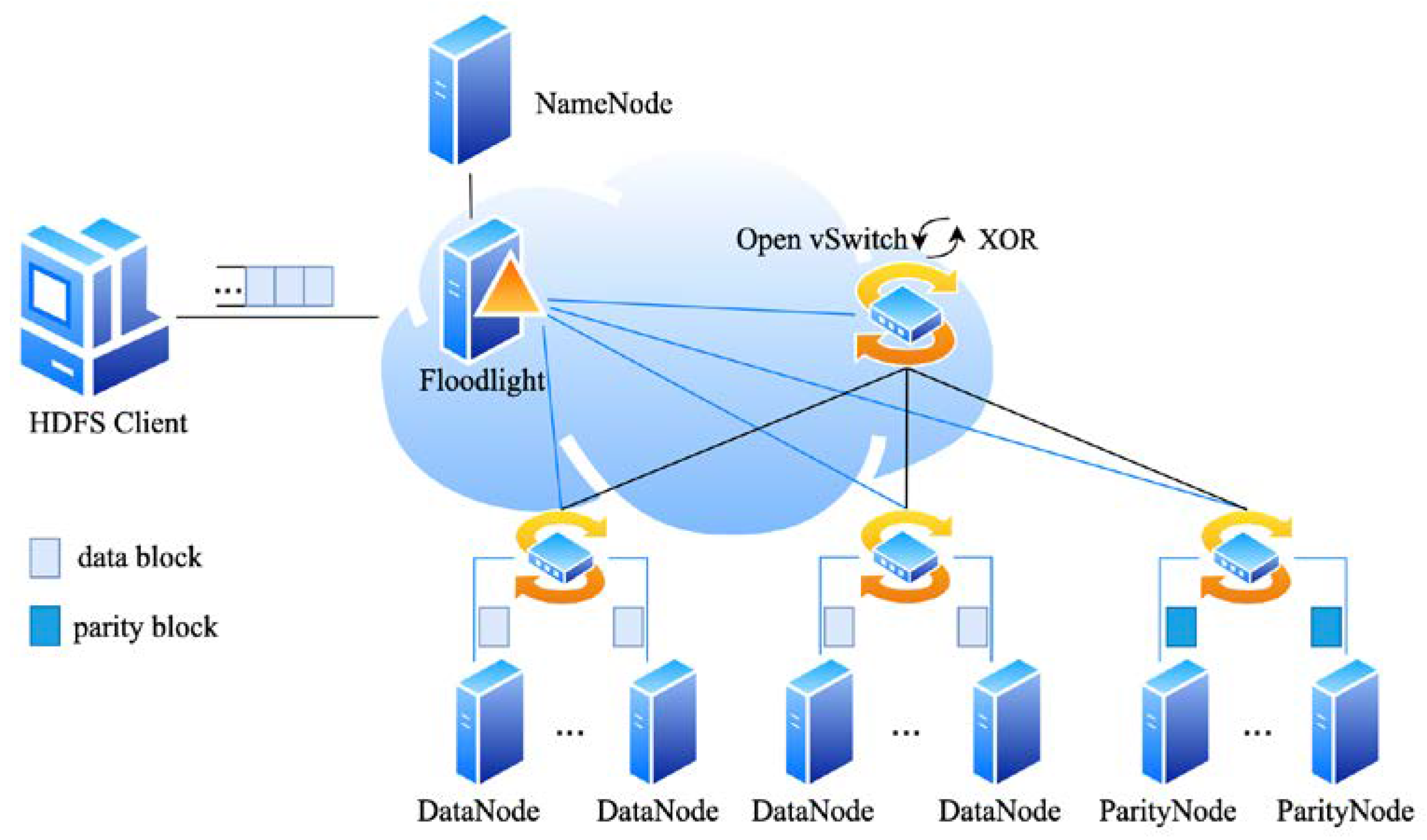

4.1. The SDNC-Repair Framework

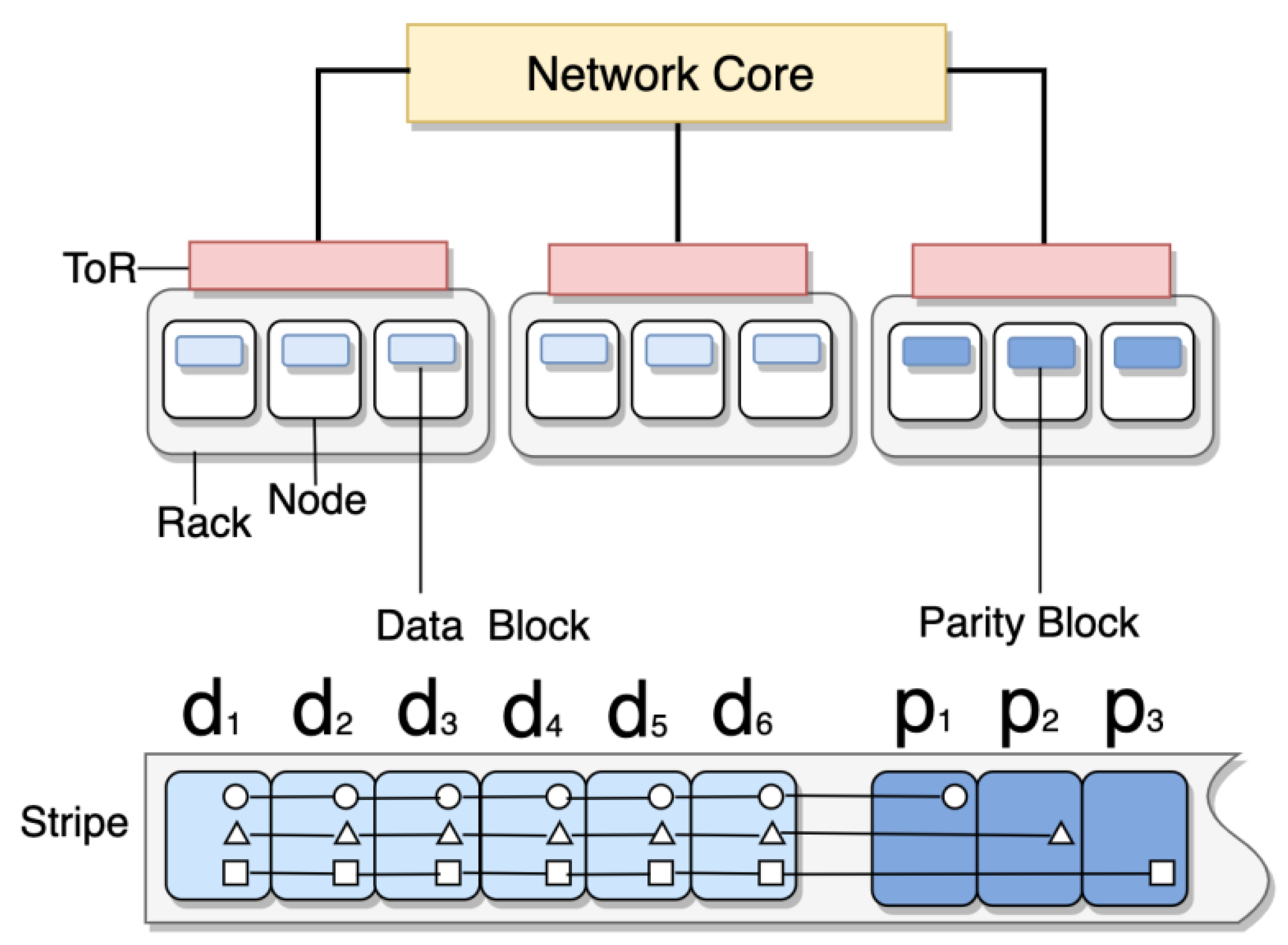

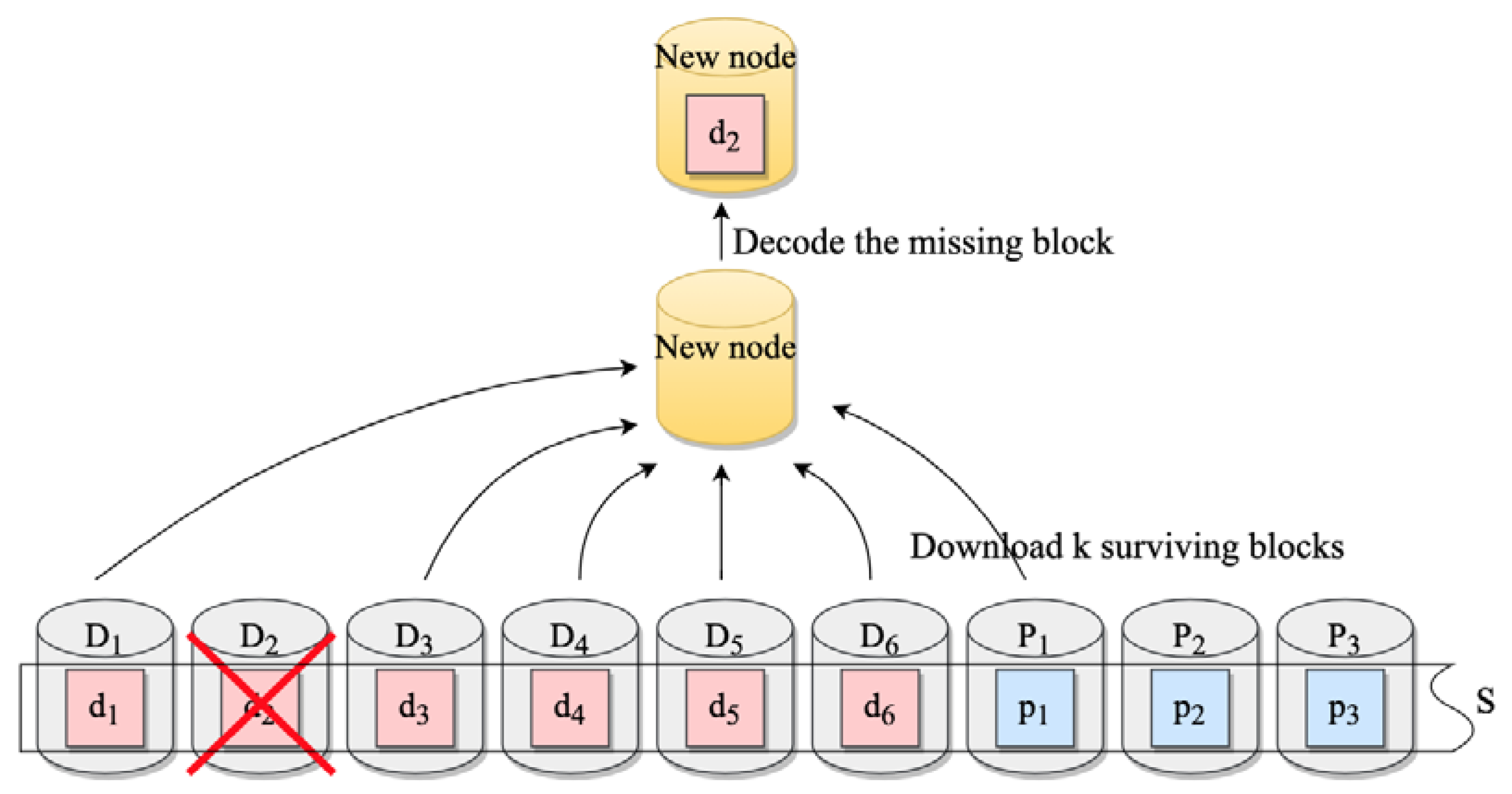

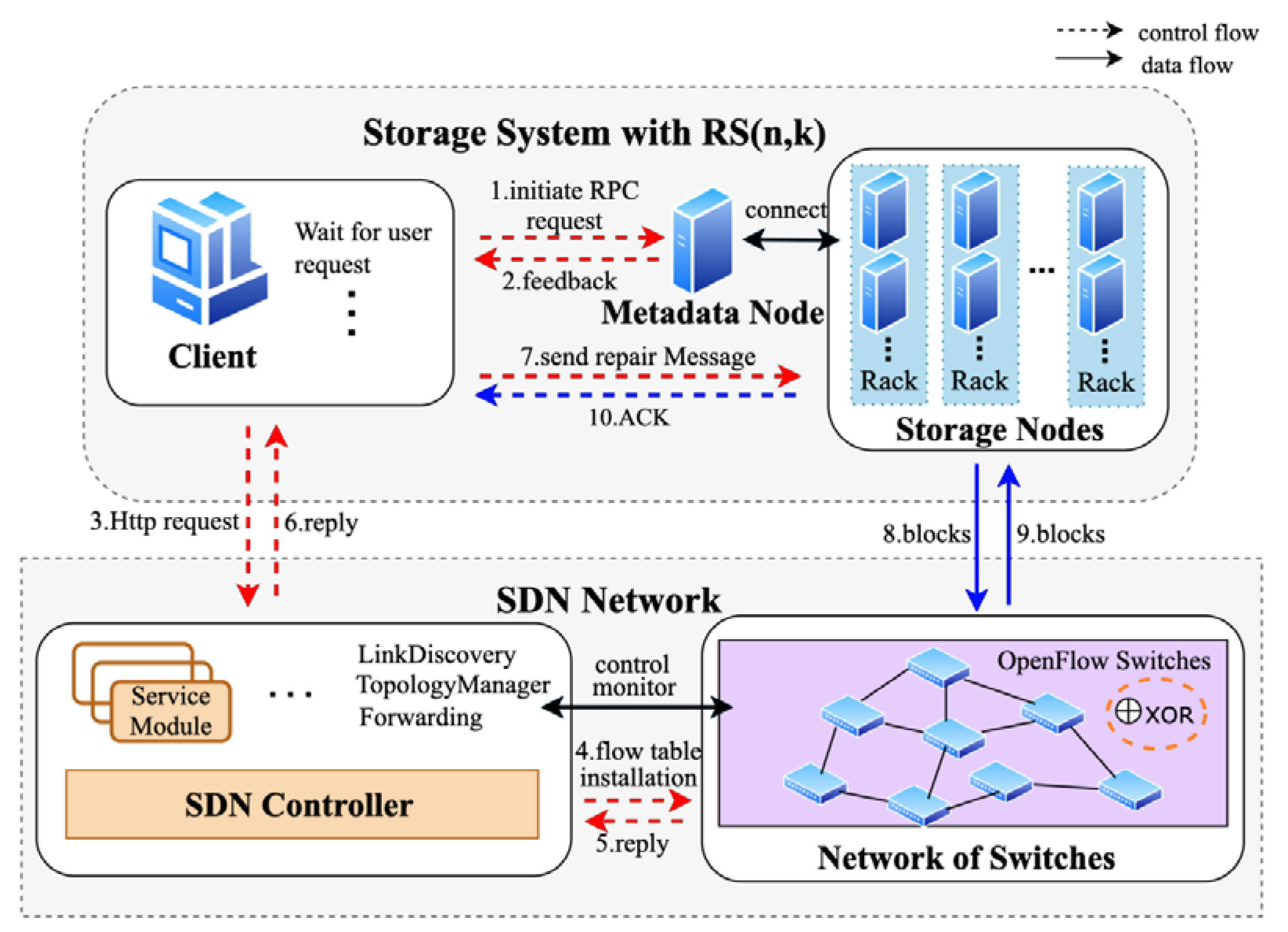

- Transmission phase (Steps 1–7 in Figure 3): The most suitable transmission routes are selected according to the network topology and monitors the workload of the switch to control the repair rate.

- Calculation phase (Steps 8–10 in Figure 3): The switch delivers data to the top-of-rack switch based on the flow table and achieves efficient data repair through pipelining and parallelization.

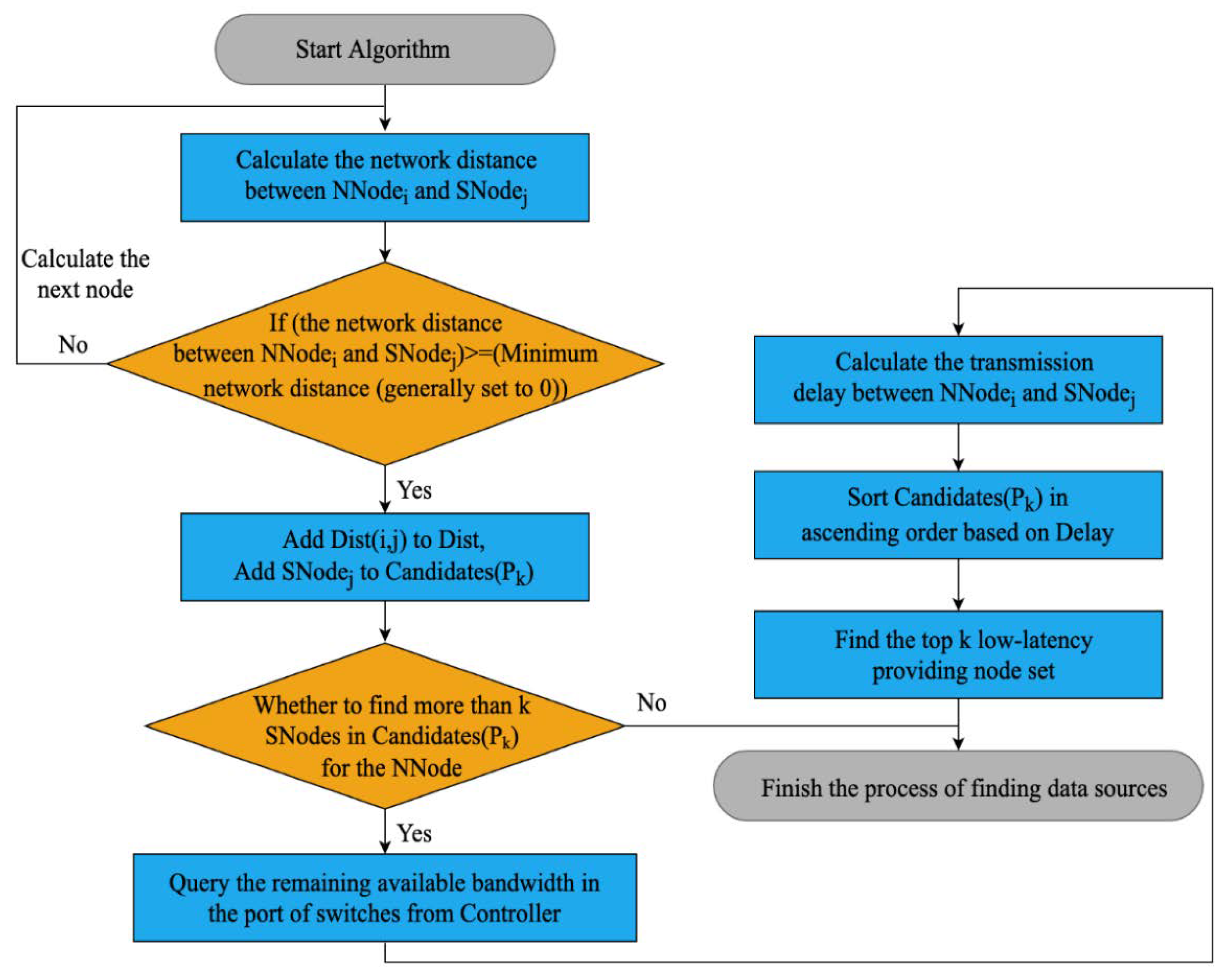

4.2. Data Source Selection Algorithm Based on Intelligent Bandwidth Measurement

| Algorithm 1: Data source selection algorithm based on intelligent bandwidth measurement |

| Input: Group of new nodes , Group of surviving nodes , Topology graph Output: Group of providing nodes |

| 1. 2. for Each node in do 3. Assume and 4. for Each node in do 5. if then // Indicates that the new node and the surviving node are connected 6. Add to // Generate node distance set 7. Add to // Generate candidate data source node set 8. end if 9. end for 10. end for 11. for Each node in do 12. for Each node in do 13. // Generate available bandwidth set 14. // Calculate latency according to the decision parameter of and the decision parameter of 15. end for 16. end for 17. Sort in ascending order based on // Sort in ascending order 18. // Generate the first k low-latency providing nodes set 19. return |

- The algorithm first calculates the distance between the new node and in , defined as the number of hops through switching devices, to determine if nodes are reachable.

- The distances between reachable nodes are then added to the decision parameter set , and reachable nodes are added to the candidate data source node sequence set .

- The controller measures the remaining available bandwidth in the ports of the switch-connected nodes, which is the difference between the path bandwidth and the smallest background load of all links in the path.

- Based on and , along with their respective weight factors α and β, the transmission delay is calculated. The first k data sources with the lowest delay are then selected from based on the ascending order of .

- The flowchart of the algorithm is shown in Figure 5.

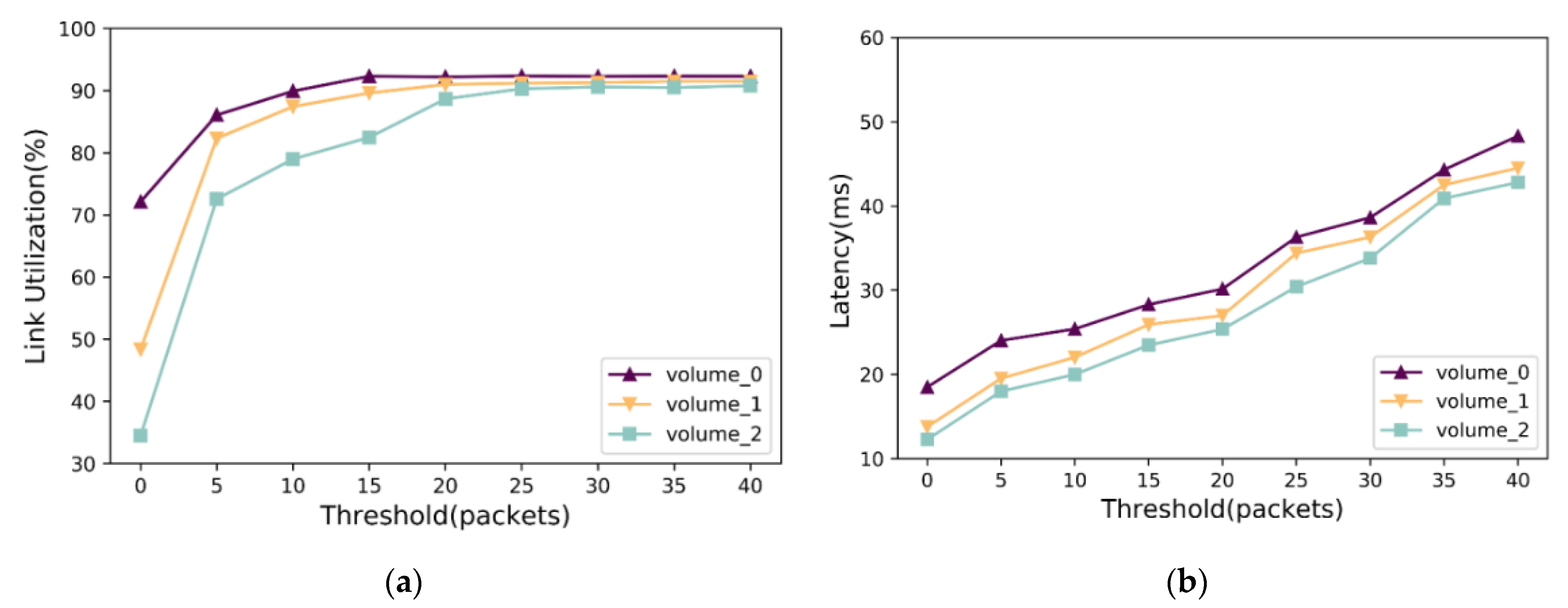

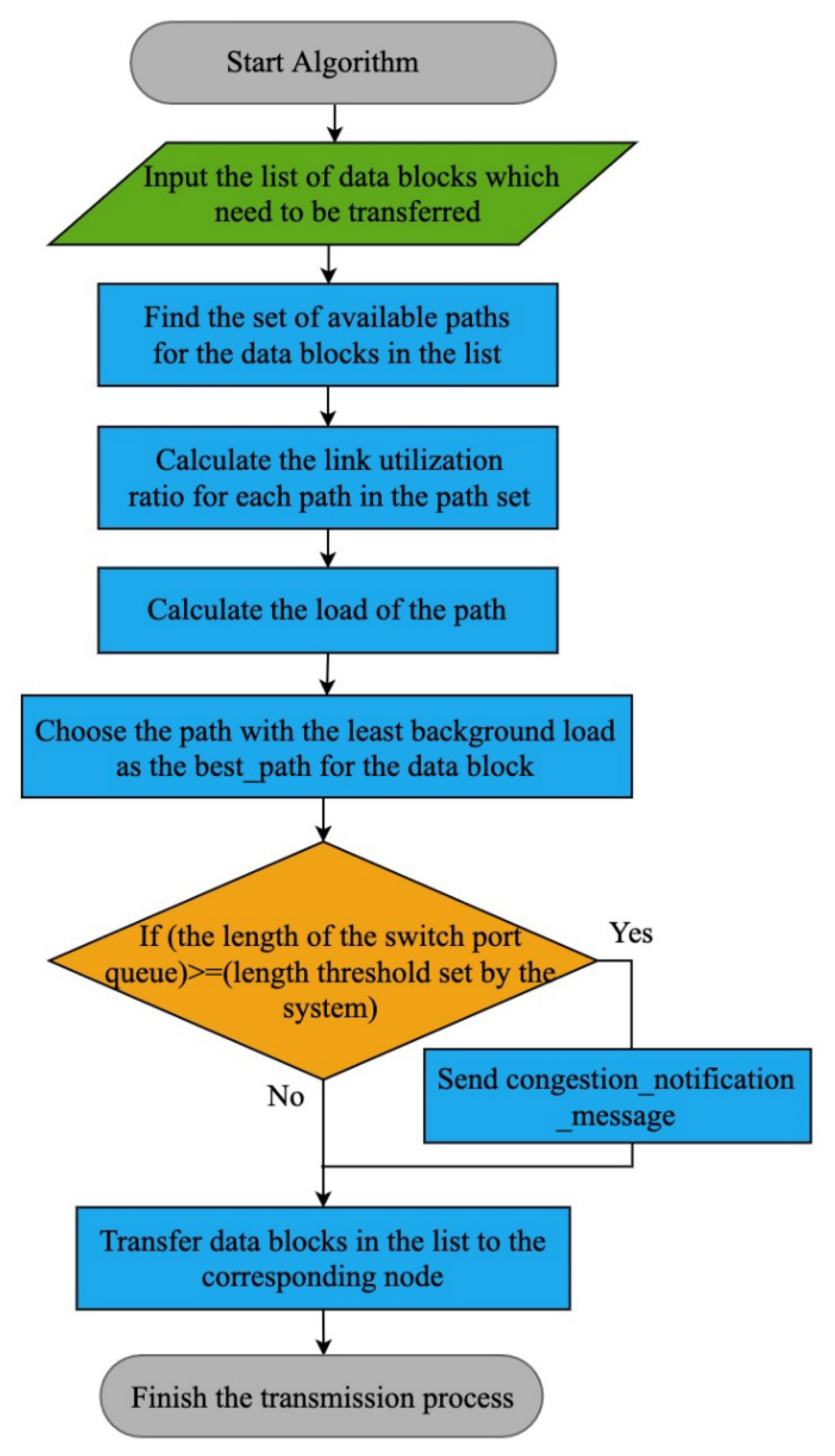

4.3. Transmission Scheduling Algorithm Based on Dynamic Feedback

| Algorithm 2: Transmission scheduling algorithm based on dynamic feedback |

| Input: The list of data to be transmitted , Global topology graph , The switch port length threshold Output: // A sign determines whether the transmission is successful or not 1. Set 2. for Each block in do 3. 4. for Each path in do 5. // Get the load of in at time t 6. Calculate // Calculate the utilization of the links in the path 7. if then 8. // Calculate the load on the path 9. end if 10. end for 11. Find , where // Select the route with the lowest background load 12. // Obtain the queue of the switch at time 13. if then 14. // sent congestion signal, notify other switches to reduce their sending rate. 15. end if 16. ,) 17. end for 18. |

- The algorithm first discovers the underlying network topology through the controller to find the available path set for the data blocks in the list.

- Then, the controller queries the switch port flow statistics through traffic monitoring components to calculate the link utilization and the path load , where represents the maximum utilization ratio of all links in the path.

- To ensure that the transmission avoids bottleneck links, the path with the smallest background load is selected as the transmission path for the data block.

- At the same time, the controller periodically checks the switch port queue length for the transmitted data block and dynamically adjusts the transmission rate by comparing it with the system’s set threshold . If exceeds , the switch sends a congestion notification message to the controller to reduce the transmission rate and avoid switch overload.

4.4. Cooperative and Efficient Data Repair Method

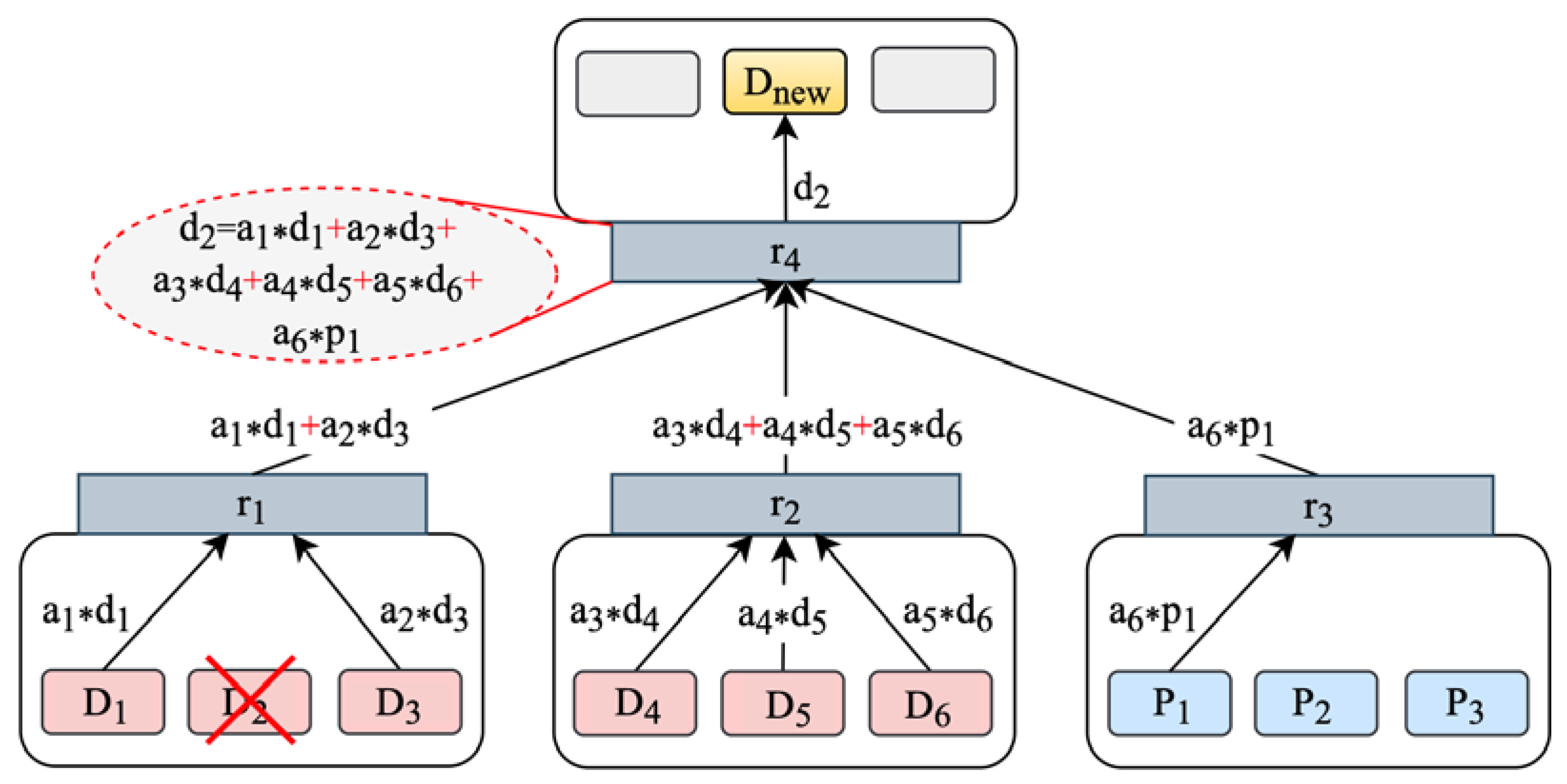

- According to the algorithm in Section 4.2, the data nodes and parity nodes participating in the repair operation are determined. The data block and the parity block stored in and are multiplied by their respective decoding coefficients in the decoding inverse matrix, resulting in the encoded blocks and .

- The encoded blocks are then sent to the ToR , where they are aggregated through a summation operation. The intermediate calculation results of partial repair, and , are obtained as a result. Then, delivers the results to the ToR where the new node is located.

- At the ToR , all the received results are summed, the data block is recovered, and it is sent to . Then, stores , indicating the completion of the repair.

- Providing nodes and decode in parallel and obtain encoded blocks . These encoded blocks are sent to ToR , respectively.

- The sums the received data and obtains an intermediate calculation result. Data aggregation greatly reduces the amount of data transferred backward. Then, , and send the results to , where is located.

- Finally, sums the received intermediate calculation results to recovers and sends it to , which stores and completes the data repair operation.

5. Experiments and Evaluation

5.1. Experimental Configuration

5.2. Results and Analysis

5.2.1. Data Repair Performance under Different Data Granularities

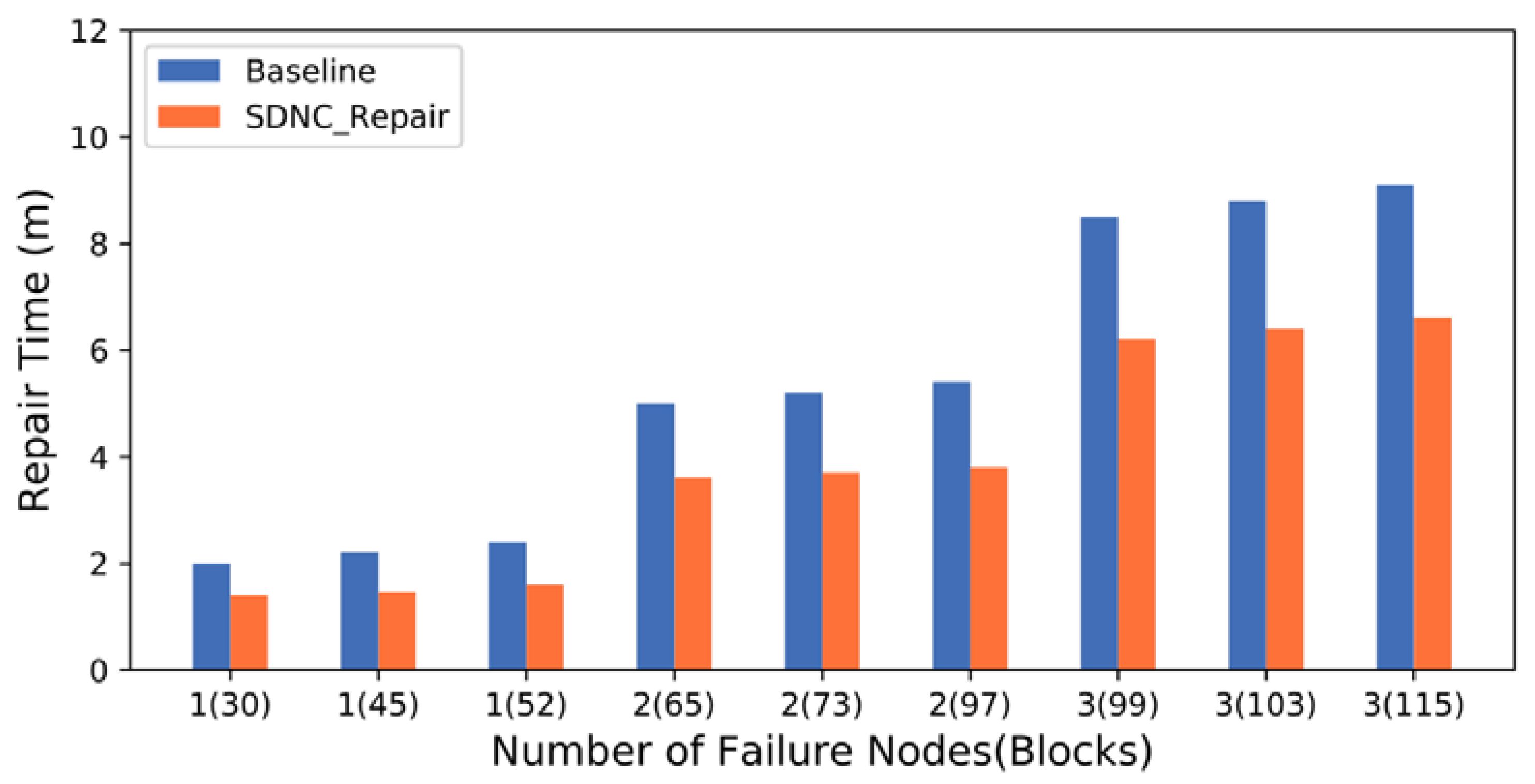

5.2.2. Data Repair Performance under Different Numbers of Failed Nodes

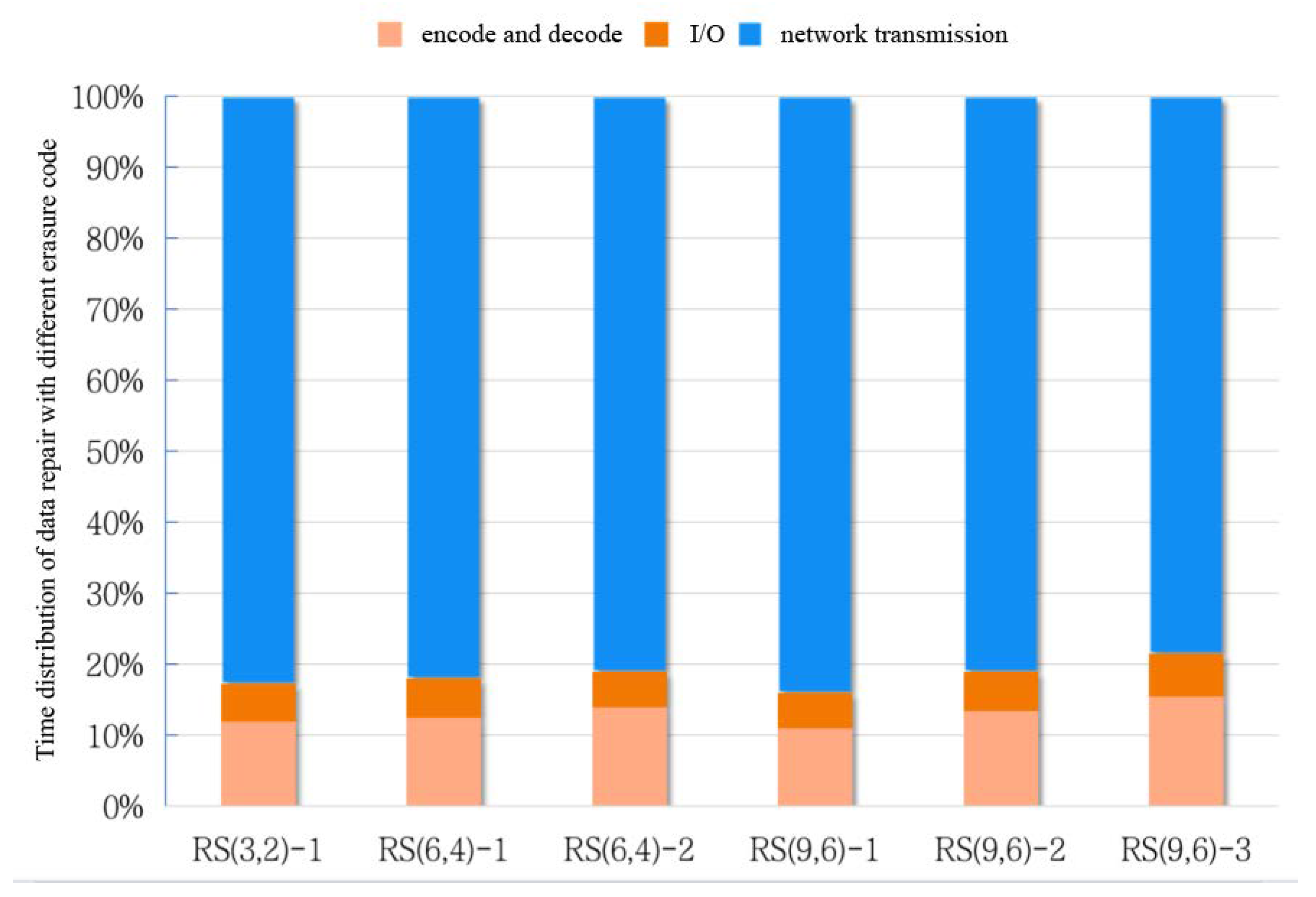

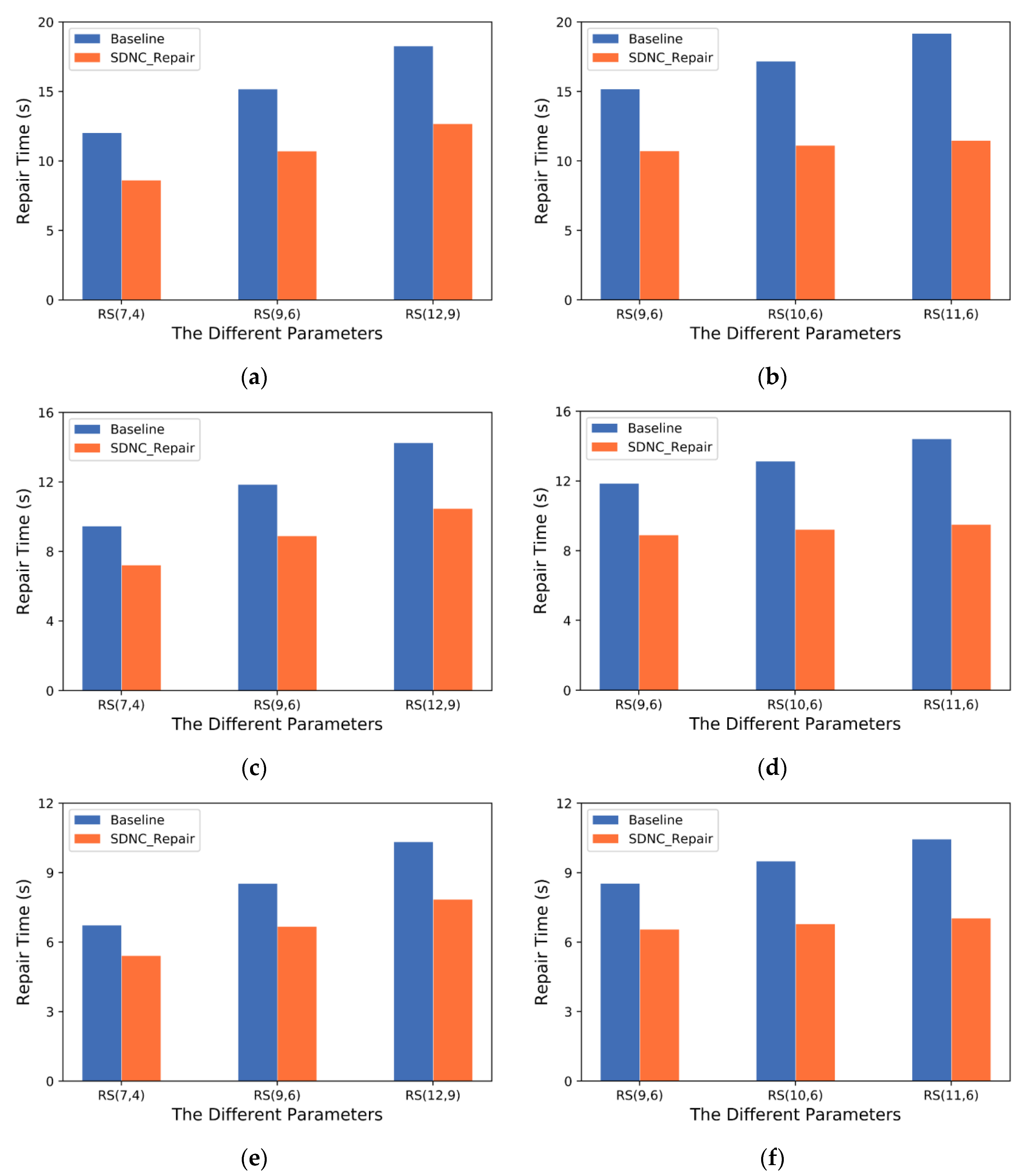

5.2.3. Data Repair Performance under Different Erasure Code Parameters and Cross-Rack Bandwidths

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Balaji, S.B.; Krishnan, M.N.; Vajha, M.; Ramkumar, V.; Sasidharan, B.; Kumar, P.V. Erasure Coding for Distributed Storage: An Overview. Sci. China Inf. Sci. 2018, 61, 100301. [Google Scholar] [CrossRef]

- Yingxun, F.; Shilin, W.; Li, M.; Jiwu, S. Survey on Single Disk Failure Recovery Methods for Erasure Coded Storage Systems. J. Comput. Res. Dev. 2018, 55, 1–13. [Google Scholar] [CrossRef]

- Wang, Y.-J.; Xu, F.-L.; Pei, X.-Q. Research on Erasure Code-Based Fault-Tolerant Technology for Distributed Storage. Chin. J. Comput. 2017, 40, 236–255. [Google Scholar] [CrossRef]

- Reed, I.S.; Solomon, G. Polynomial Codes over Certain Finite Fields. J. Soc. Ind. Appl. Math. 1960, 8, 300–304. [Google Scholar] [CrossRef]

- Corbett, J.C.; Dean, J.; Epstein, M.; Fikes, A.; Frost, C.; Furman, J.J.; Ghemawat, S.; Gubarev, A.; Heiser, C.; Hochschild, P.; et al. Spanner: Google’s Globally Distributed Database. ACM Trans. Comput. Syst. 2013, 31, 1–22. [Google Scholar] [CrossRef]

- Yahoo Developer Network. HUG Meetup Nov 2010: HDFS RAID. Available online: https://www.youtube.com/watch?v=TeeqmqTRD20 (accessed on 17 May 2023).

- Huang, C.; Simitci, H.; Xu, Y.; Ogus, A.; Calder, B.; Gopalan, P.; Li, J.; Yekhanin, S. Erasure Coding in Windows Azure Storage. In Proceedings of the 2012 USENIX Annual Technical Conference (USENIX ATC 12), Boston, MA, USA, 13–15 June 2012; pp. 15–26. [Google Scholar]

- Dai, M.; Cheng, G.; Zhou, Y. Survey on Measurement Methods in Software-Defined Networking. J. Softw. 2019, 30, 1853–1874. [Google Scholar] [CrossRef]

- Dimakis, A.G.; Godfrey, P.B.; Wu, Y.; Wainwright, M.J.; Ramchandran, K. Network Coding for Distributed Storage Systems. IEEE Trans. Inf. Theory 2010, 56, 4539–4551. [Google Scholar] [CrossRef]

- Liang, W.; Fan, Y.; Li, K.-C.; Zhang, D.; Gaudiot, J.-L. Secure Data Storage and Recovery in Industrial Blockchain Network Environments. IEEE Trans. Ind. Inform. 2020, 16, 6543–6552. [Google Scholar] [CrossRef]

- Shan, Y.; Chen, K.; Gong, T.; Zhou, L.; Zhou, T.; Wu, Y. Geometric Partitioning: Explore the Boundary of Optimal Erasure Code Repair. In Proceedings of the ACM SIGOPS 28th Symposium on Operating Systems Principles, Koblenz, Germany, 26–29 October 2021; Association for Computing Machinery: New York, NY, USA, 2021; pp. 457–471. [Google Scholar]

- Li, R.; Li, X.; Lee, P.P.; Huang, Q. Repair Pipelining for Erasure-Coded Storage. In Proceedings of the USENIX Annual Technical Conference, Santa Clara, CA, USA, 12 July 2017; pp. 567–579. [Google Scholar]

- Mitra, S.; Panta, R.; Ra, M.-R.; Bagchi, S. Partial-Parallel-Repair (PPR): A Distributed Technique for Repairing Erasure Coded Storage. In Proceedings of the Eleventh European Conference on Computer Systems, London, UK, 18–21 April 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 1–16. [Google Scholar]

- Li, X.; Yang, Z.; Li, J.; Li, R.; Lee, P.P.C.; Huang, Q.; Hu, Y. Repair Pipelining for Erasure-Coded Storage: Algorithms and Evaluation. ACM Trans. Storage 2021, 17, 1–29. [Google Scholar] [CrossRef]

- Hu, Y.; Li, X.; Zhang, M.; Lee, P.P.C.; Zhang, X.; Zhou, P.; Feng, D. Optimal Repair Layering for Erasure-Coded Data Centers: From Theory to Practice. ACM Trans. Storage 2017, 13, 1–24. [Google Scholar] [CrossRef]

- Xu, L.; Lyu, M.; Li, Z.; Li, C.; Xu, Y. A Data Layout and Fast Failure Recovery Scheme for Distributed Storage Systems With Mixed Erasure Codes. IEEE Trans. Comput. 2022, 71, 1740–1754. [Google Scholar] [CrossRef]

- Liu, K.; Peng, J.; Wang, J.; Huang, Z.; Pan, J. Adaptive and Scalable Caching with Erasure Codes in Distributed Cloud-Edge Storage Systems. IEEE Trans. Cloud Comput. 2022, 11, 1840–1853. [Google Scholar] [CrossRef]

- Nehra, A.; Tripathi, M.; Gaur, M.S.; Battula, R.B.; Lal, C. TILAK: A Token-Based Prevention Approach for Topology Discovery Threats in SDN. Int. J. Commun. Syst. 2019, 32, e3781. [Google Scholar] [CrossRef]

- Marathe, N.; Gandhi, A.; Shah, J.M. Docker Swarm and Kubernetes in Cloud Computing Environment. In Proceedings of the 2019 3rd International Conference on Trends in Electronics and Informatics (ICOEI), Tirunelveli, India, 23–25 April 2019; pp. 179–184. [Google Scholar]

- Blaum, M.; Farrell, P.G.; van Tilborg, H.C.; Pless, V.S.; Huffman, W.C. Array Codes. Handb. Coding Theory 1998, 2, 1855–1909. [Google Scholar]

- Zheng, L.; Li, X. Low-cost Multi-node Failure Repair Method for Erasure Codes. Comput. Eng. 2017, 43, 110–118, 123. [Google Scholar] [CrossRef]

- Zhang, H.; Li, H.; Li, S.-Y.R. Repair Tree: Fast Repair for Single Failure in Erasure-Coded Distributed Storage Systems. IEEE Trans. Parallel Distrib. Syst. 2017, 28, 1728–1739. [Google Scholar] [CrossRef]

- Huang, Y.; Ye, M.; Cai, Y. A Node Selection Scheme for Data Repair Using Erasure Code in Distributed Storage System. In Proceedings of the 6th International Conference on High Performance Compilation, Computing and Communications, Jilin, China, 23–25 June 2022; Association for Computing Machinery: New York, NY, USA, 2022; pp. 19–24. [Google Scholar]

- Zhou, A.; Yi, B.; Luo, L. Tree-Structured Data Placement Scheme with Cluster-Aided Top-down Transmission in Erasure-Coded Distributed Storage Systems. Comput. Netw. 2022, 204, 108714. [Google Scholar] [CrossRef]

- Wang, F.; Tang, Y.; Xie, Y.; Tang, X. XORInc: Optimizing Data Repair and Update for Erasure-Coded Systems with XOR-Based In-Network Computation. In Proceedings of the 2019 35th Symposium on Mass Storage Systems and Technologies (MSST), Santa Clara, CA, USA, 20–24 May 2019; pp. 244–256. [Google Scholar]

- Hou, H.; Lee, P.P.C.; Shum, K.W.; Hu, Y. Rack-Aware Regenerating Codes for Data Centers. IEEE Trans. Inf. Theory 2019, 65, 4730–4745. [Google Scholar] [CrossRef]

- Peizhe, L.; Zhenfeng, X.; Zhongwei, C.; Yichao, W.; Xiangqi, L.; Jin, C.; Jingwen, X.; Yunwei, Z. A Network Traffic Scheduling Strategy for Energy Storage Data Centers Based on SDN. In Proceedings of the 2019 IEEE 19th International Conference on Communication Technology (ICCT), Xi’an, China, 16–19 October 2019; pp. 1413–1417. [Google Scholar]

- Aly, W.H.F. Controller Adaptive Load Balancing for SDN Networks. In Proceedings of the 2019 Eleventh International Conference on Ubiquitous and Future Networks (ICUFN), Zagreb, Croatia, 2–5 July 2019; pp. 514–519. [Google Scholar]

- Liu, W.; Wang, Y.; Zhang, J.; Liao, H.; Liang, Z.; Liu, X. AAMcon: An Adaptively Distributed SDN Controller in Data Center Networks. Front. Comput. Sci. 2020, 14, 146–161. [Google Scholar] [CrossRef]

- Rizvi, S.; Li, X.; Wong, B.; Kazhamiaka, F.; Cassell, B. Mayflower: Improving Distributed Filesystem Performance Through SDN/Filesystem Co-Design. In Proceedings of the 2016 IEEE 36th International Conference on Distributed Computing Systems (ICDCS), Nara, Japan, 27–30 June 2016; pp. 384–394. [Google Scholar]

- Pu, W.; Chen, N.; Zhong, Q. SDCUP: Software-Defined-Control Based Erasure-Coded Collaborative Data Update Mechanism. IEEE Access 2020, 8, 180646–180660. [Google Scholar] [CrossRef]

- Craig, A.; Nandy, B.; Lambadaris, I.; Ashwood-Smith, P. Load Balancing for Multicast Traffic in SDN Using Real-Time Link Cost Modification. In Proceedings of the 2015 IEEE International Conference on Communications (ICC), London, UK, 8–12 June 2015; pp. 5789–5795. [Google Scholar]

| Notation/Variable | Description | Notation/Variable | Description |

|---|---|---|---|

| Encoding parameter | The j-th parity blocks, | ||

| Number of data blocks lost, | The h-th rack, | ||

| Number of racks | The top-of-rack switch of the h-th rack, | ||

| The i-th new node, | The s-th coefficient in the decoding matrix, | ||

| The j-th surviving node, | Encoding units involve in data repair, | ||

| The i-th data node, | A selected new node | ||

| The j-th parity node, | The top-of-rack switch where is located | ||

| The i-th data blocks, |

| Trace | Load Density (%) | Block Size (KB) | Server Workload |

|---|---|---|---|

| volume_0 | 99.7 | 12.17 | Research projects |

| volume_1 | 59.6 | 22.67 | User home directories |

| volume_2 | 4.7 | 19.96 | Hardware monitoring |

| Baseline | SDNC-Repair | |

|---|---|---|

| Repair time with different data block size | Longer repair time as data block size increases | Shorter repair time due to data aggregation in the rack |

| Repair time with different number of nodes | Longer repair time as the number of nodes increases | Shorter repair time due to data source selection algorithm |

| Repair time with one or more failed nodes | Higher repair time with the growth rate significantly higher | Consistently lower repair time |

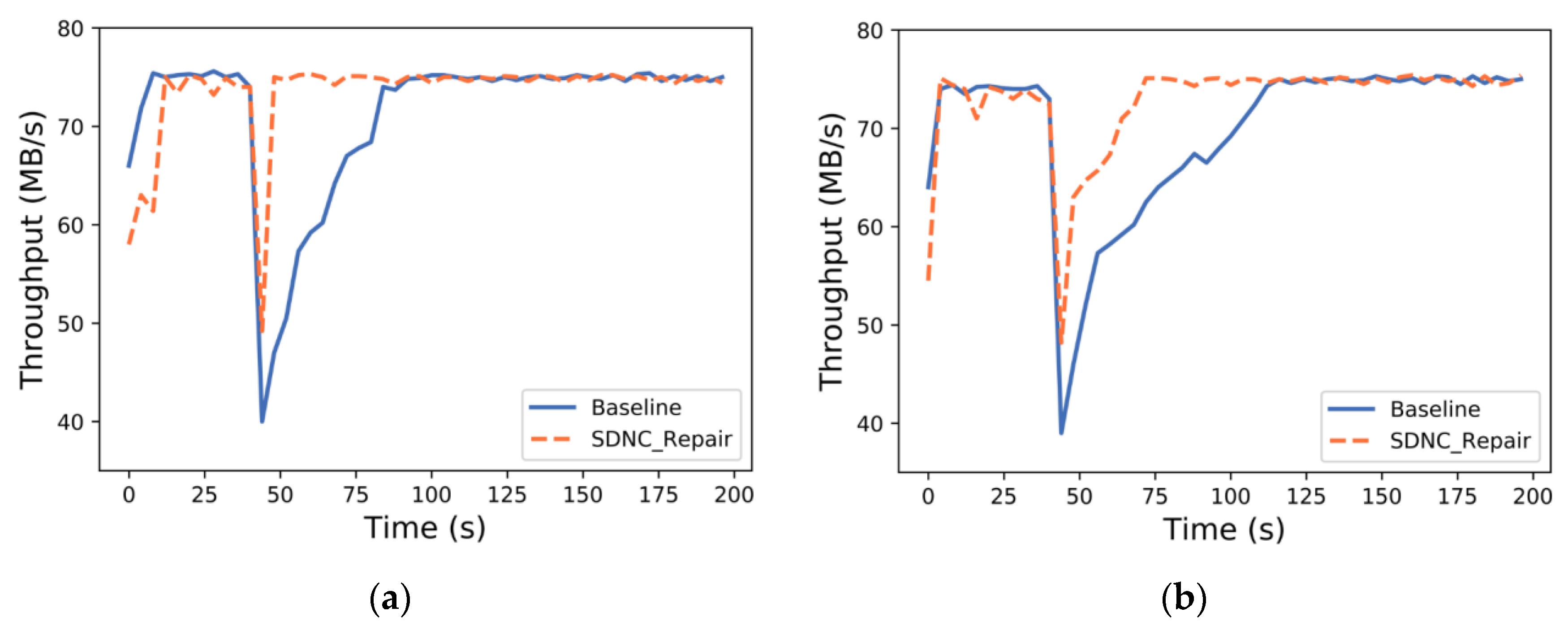

| Impact on system performance during data repair | Longer time to complete repair, decreasing system performance | Quickly recovers to average level |

| Repair time with different erasure code parameters | Increases more quickly | Increases more slowly due to data aggregation in the rack |

| Repair time with different cross-rack bandwidths | Longer repair time due to intense competition for network resources | Shorter repair time due to transmission scheduling algorithm |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Chen, N.; Liu, W.; Pu, W.; Liu, Y.; Zhong, Q. SDNC-Repair: A Cooperative Data Repair Strategy Based on Erasure Code for Software-Defined Storage. Sensors 2023, 23, 5809. https://doi.org/10.3390/s23135809

Chen N, Liu W, Pu W, Liu Y, Zhong Q. SDNC-Repair: A Cooperative Data Repair Strategy Based on Erasure Code for Software-Defined Storage. Sensors. 2023; 23(13):5809. https://doi.org/10.3390/s23135809

Chicago/Turabian StyleChen, Ningjiang, Weitao Liu, Wenjuan Pu, Yifei Liu, and Qingwei Zhong. 2023. "SDNC-Repair: A Cooperative Data Repair Strategy Based on Erasure Code for Software-Defined Storage" Sensors 23, no. 13: 5809. https://doi.org/10.3390/s23135809

APA StyleChen, N., Liu, W., Pu, W., Liu, Y., & Zhong, Q. (2023). SDNC-Repair: A Cooperative Data Repair Strategy Based on Erasure Code for Software-Defined Storage. Sensors, 23(13), 5809. https://doi.org/10.3390/s23135809