Towards an Optimized Distributed Message Queue System for AIoT Edge Computing: A Reinforcement Learning Approach

Abstract

1. Introduction

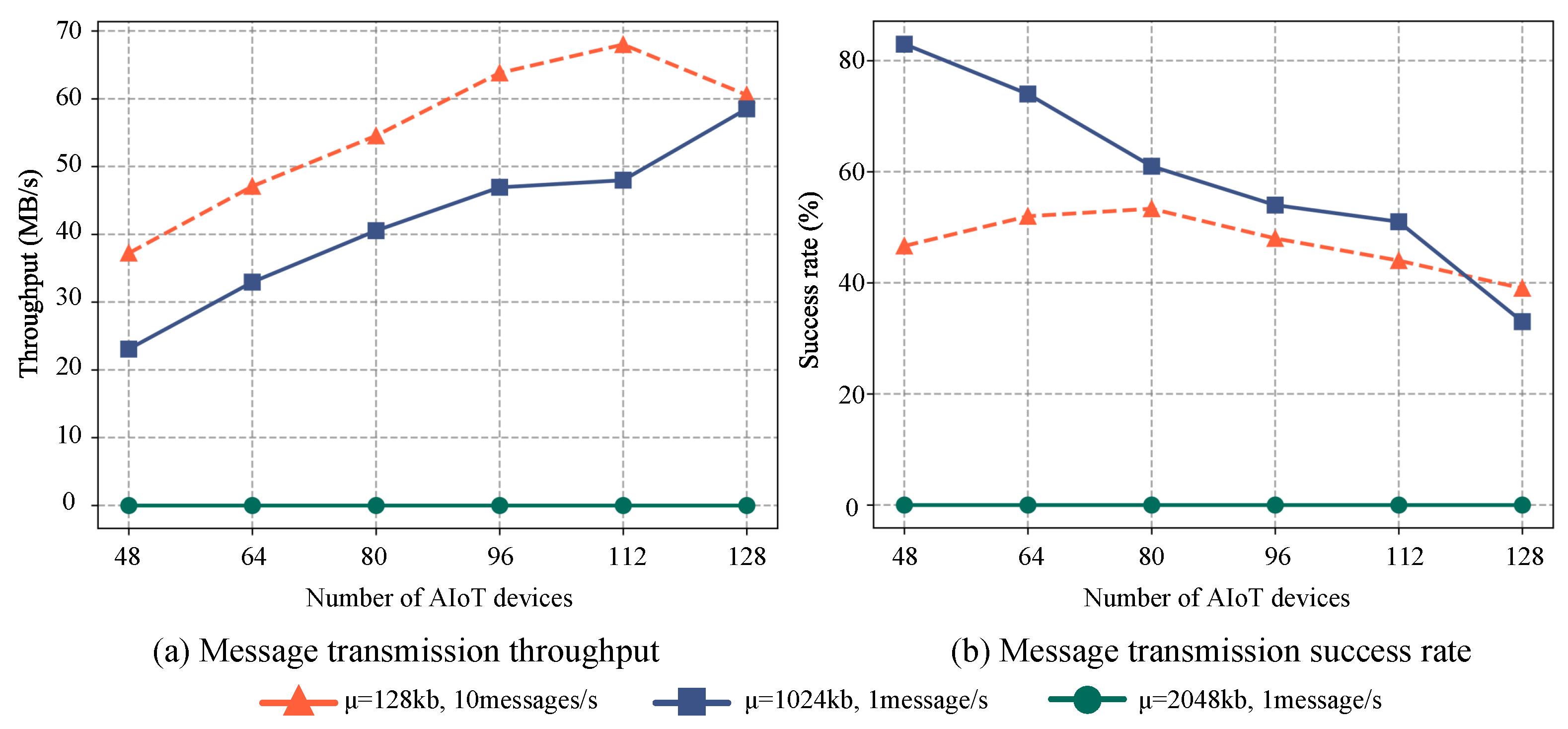

- We propose a Kafka-based distributed message system for large-scale AIoT to address message-ordering challenges in AIoT edge computing, and we investigate how different factors affect system performance in distributed AIoT messaging scenarios. For the proposed distributed message queues, we design a partition selection algorithm (PSA) that aims to preserve the order of AIoT messages, balance load across the broker cluster, and improve availability when AIoT edge devices publish subscribable messages.

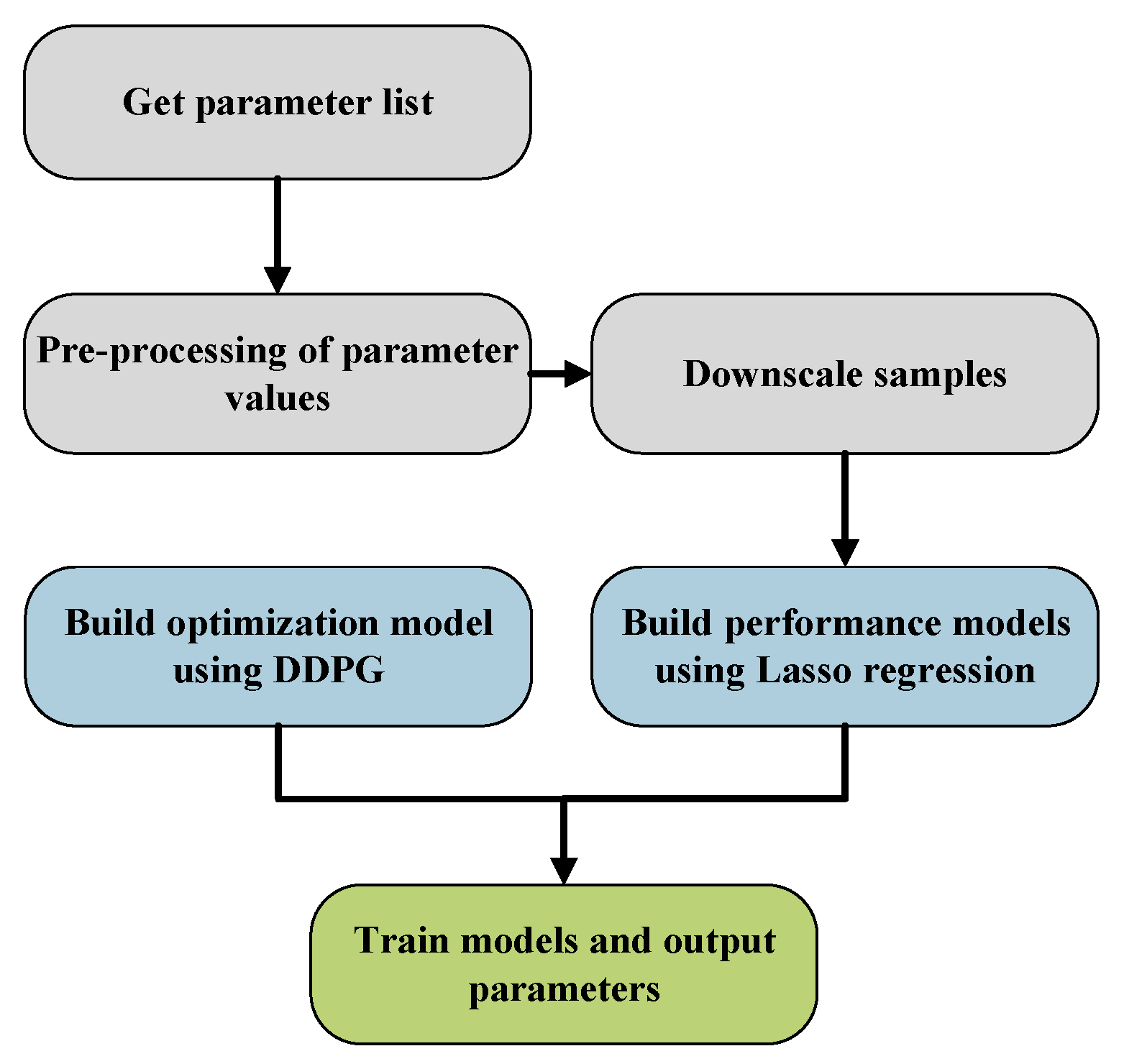

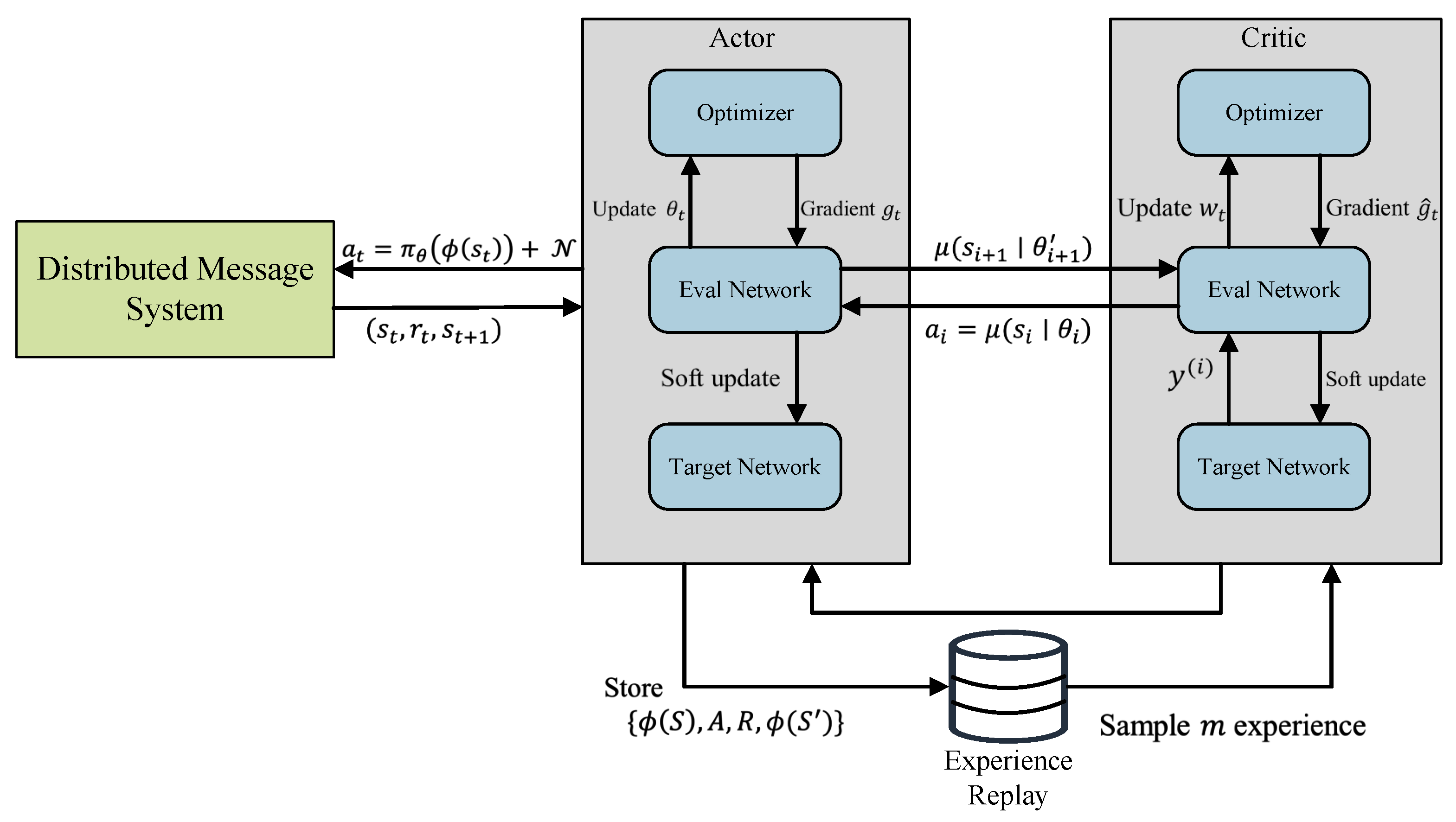

- We propose DMSCO (DDPG-based distributed message queue system configuration optimization), a reinforcement-learning-based method that uses a preprocessed parameter list as the action space for training its decision model. With rewards based on the throughput and message transmission success rate of the distributed message queue system, DMSCO improves messaging performance in AIoT scenarios by adaptively fine-tuning parameter configurations.

- We comprehensively evaluate DMSCO on the distributed message queue system in AIoT edge computing scenarios across varying message sizes and transmission frequencies. Compared with methods based on a genetic algorithm and on random search, DMSCO achieves higher throughput and a higher message transmission success rate, better meeting the demands of large-scale, high-concurrency AIoT edge computing applications.

2. Related Work

3. Distributed Message Queue System for AIoT Edge Computing

3.1. Distributed Message System for Large-Scale AIoT Edge Computing

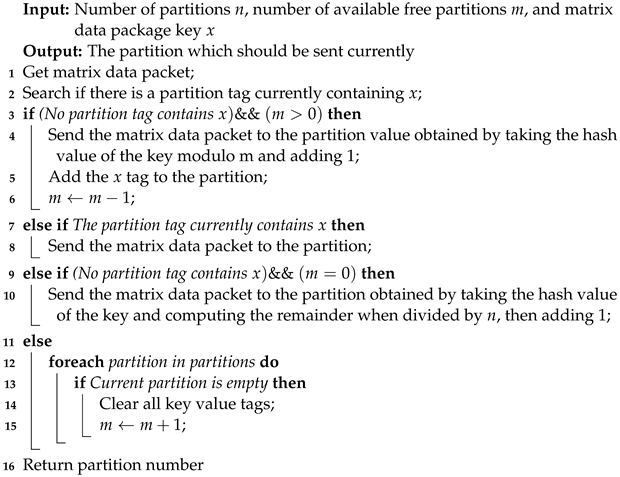

Algorithm 1: Partition selection algorithm (PSA).
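The steps of Algorithm 1 are not reproduced in this text. As a rough illustration of the three goals the introduction assigns to PSA (preserving message order, balancing load, and keeping publication available), the following Python sketch routes each device's messages to a fixed partition chosen by a stable hash of its device ID, and falls back to the least-loaded available partition when no key is given or the preferred partition is down. This is a hypothetical sketch, not the authors' PSA: the class `PartitionSelector`, the use of CRC32 as the hash (Kafka's default partitioner uses a different hash), and the message-count load metric are assumptions, and load is balanced across partitions rather than across brokers.

```python
from typing import Optional
import zlib


class PartitionSelector:
    """Hypothetical partition selector illustrating PSA's stated goals (not the authors' algorithm)."""

    def __init__(self, num_partitions: int) -> None:
        self.num_partitions = num_partitions
        # Partitions currently considered writable (e.g. their leader is reachable).
        self.available = set(range(num_partitions))
        # Messages routed to each partition so far (assumed load metric).
        self.load = [0] * num_partitions

    def mark_unavailable(self, partition: int) -> None:
        self.available.discard(partition)

    def mark_available(self, partition: int) -> None:
        self.available.add(partition)

    def select(self, device_id: Optional[str] = None) -> int:
        if not self.available:
            raise RuntimeError("no partition is available")
        if device_id is not None:
            # Same device ID -> same partition, so one device's messages stay ordered.
            preferred = zlib.crc32(device_id.encode("utf-8")) % self.num_partitions
            if preferred in self.available:
                return self._assign(preferred)
        # No key, or the preferred partition is down: use the least-loaded
        # available partition (ties broken by the lowest partition id).
        target = min(self.available, key=lambda p: (self.load[p], p))
        return self._assign(target)

    def _assign(self, partition: int) -> int:
        self.load[partition] += 1
        return partition


if __name__ == "__main__":
    selector = PartitionSelector(num_partitions=4)
    first = selector.select("sensor-17")
    assert selector.select("sensor-17") == first  # per-device ordering preserved
    selector.mark_unavailable(first)
    print(selector.select("sensor-17") != first)  # falls back while `first` is down
```

The fallback path trades strict per-device ordering for availability while the preferred partition is down; a real design has to decide which of the two takes priority.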

3.2. Performance Modeling in AIoT Edge Computing Scenarios

4. Reinforcement-Learning-Based Method for Optimized AIoT Message Queue System

4.1. Parameter Screening

Algorithm 2: Dimensionality reduction method based on PCA for the initial training sample set.

Input: Original samples, where each row holds the values of one parameter across the training samples and the i-th column holds the data of the i-th sample.

Output: The final sample dataset Y.
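A minimal NumPy sketch of PCA-based reduction in the layout Algorithm 2 describes (rows are parameters, columns are samples) follows. The function name `pca_reduce` and the rule for choosing the number of retained components (a cumulative explained-variance threshold, 95% by default) are assumptions; the text does not state how many components Algorithm 2 keeps.

```python
import numpy as np


def pca_reduce(X: np.ndarray, var_threshold: float = 0.95) -> np.ndarray:
    """Project X (n_params x n_samples) onto its top-k principal components.

    Rows are parameters and columns are samples, as in Algorithm 2's input.
    k is the smallest number of components whose cumulative explained
    variance reaches var_threshold (an assumed selection rule).
    Returns Y with shape (k, n_samples).
    """
    X = np.asarray(X, dtype=float)
    n_params, n_samples = X.shape
    # 1. Zero-center every parameter (row) across the samples.
    Xc = X - X.mean(axis=1, keepdims=True)
    # 2. Covariance matrix of the parameters.
    C = Xc @ Xc.T / n_samples
    # 3. Eigen-decomposition; eigh returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # 4. Smallest k whose cumulative explained variance reaches the threshold.
    ratio = np.cumsum(eigvals) / eigvals.sum()
    k = min(int(np.searchsorted(ratio, var_threshold)) + 1, n_params)
    # 5. Rows of P are the top-k eigenvectors; Y = P @ Xc.
    P = eigvecs[:, :k].T
    return P @ Xc
```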

4.2. Lasso-Regression-Based Performance Modeling

Algorithm 3: Performance modeling and key-parameter screening by Lasso regression.

Input: Preprocessed samples.

Output: Key parameters and their weights.
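The steps of Algorithm 3 are not reproduced in this text. The sketch below shows one standard way to fit a Lasso model, cyclic coordinate descent with soft-thresholding, and to read off key parameters as those with nonzero coefficients ranked by magnitude. It is an illustrative assumption rather than the authors' implementation: the function names, the standardization of each parameter, and the regularization strength `alpha` are hypothetical choices.

```python
import numpy as np


def soft_threshold(z: float, t: float) -> float:
    """Proximal operator of t * |w|."""
    return np.sign(z) * max(abs(z) - t, 0.0)


def lasso_cd(X, y, alpha, n_iter=500, tol=1e-8):
    """Minimize (1/(2n)) * ||y - X w - b||^2 + alpha * ||w||_1 by coordinate descent.

    X: (n_samples, n_params), y: (n_samples,). Returns (w, b).
    """
    n, p = X.shape
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean          # centering removes the intercept
    col_sq = (Xc ** 2).sum(axis=0) / n
    w = np.zeros(p)
    r = yc.copy()                            # residual yc - Xc @ w
    for _ in range(n_iter):
        max_delta = 0.0
        for j in range(p):
            if col_sq[j] == 0.0:
                continue
            # Correlation of x_j with the partial residual (r + x_j * w_j).
            rho = Xc[:, j] @ r / n + col_sq[j] * w[j]
            w_new = soft_threshold(rho, alpha) / col_sq[j]
            delta = w_new - w[j]
            if delta != 0.0:
                r -= delta * Xc[:, j]
                w[j] = w_new
                max_delta = max(max_delta, abs(delta))
        if max_delta < tol:
            break
    return w, y_mean - x_mean @ w


def screen_parameters(X, y, names, alpha=0.1):
    """Standardize parameters, fit Lasso, return nonzero (name, weight) by |weight|."""
    mu, sd = X.mean(axis=0), X.std(axis=0)
    sd[sd == 0] = 1.0
    w, _ = lasso_cd((X - mu) / sd, y, alpha)
    kept = [(name, float(wj)) for name, wj in zip(names, w) if wj != 0.0]
    return sorted(kept, key=lambda t: abs(t[1]), reverse=True)
```

A larger `alpha` drives more coefficients to exactly zero, which is what makes Lasso usable for screening rather than only for prediction.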

4.3. Optimization Method Based on Deep Deterministic Policy Gradient Algorithm

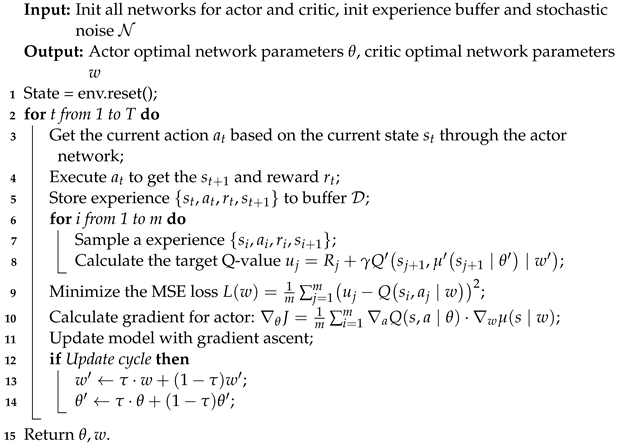

Algorithm 4: DDPG-based distributed message system configuration optimization (DMSCO).
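The body of Algorithm 4 is not reproduced here. For orientation, the following sketch collects the bookkeeping pieces that a DDPG implementation needs around its actor and critic networks (which are omitted): an experience replay buffer, the critic's Bellman target y = r + γ(1 − d)Q′(s′, μ′(s′)), a target-network update, and exploration noise on the deterministic action. The function names are hypothetical. `update_target` with `tau=1.0` performs a hard copy, matching a periodic target update, while `0 < tau < 1` gives the soft update used in the original DDPG paper; the Gaussian noise is a simplification (the original DDPG paper used Ornstein–Uhlenbeck noise).

```python
import random
from collections import deque
from typing import Dict, Optional

import numpy as np


class ReplayBuffer:
    """Fixed-size FIFO store of (s, a, r, s', done) transitions."""

    def __init__(self, capacity: int) -> None:
        self.buffer = deque(maxlen=capacity)

    def push(self, state, action, reward, next_state, done) -> None:
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size: int, rng: Optional[random.Random] = None):
        rng = rng if rng is not None else random.Random()
        batch = rng.sample(list(self.buffer), batch_size)
        states, actions, rewards, next_states, dones = zip(*batch)
        return (np.array(states, dtype=float), np.array(actions, dtype=float),
                np.array(rewards, dtype=float), np.array(next_states, dtype=float),
                np.array(dones, dtype=float))

    def __len__(self) -> int:
        return len(self.buffer)


def critic_targets(rewards, dones, next_q, gamma: float = 0.99) -> np.ndarray:
    """Bellman targets y = r + gamma * (1 - done) * Q'(s', mu'(s'))."""
    rewards, dones, next_q = (np.asarray(v, dtype=float) for v in (rewards, dones, next_q))
    return rewards + gamma * (1.0 - dones) * next_q


def update_target(target: Dict[str, np.ndarray], online: Dict[str, np.ndarray],
                  tau: float = 1.0) -> None:
    """tau = 1.0 copies the online weights; 0 < tau < 1 is a soft (Polyak) update."""
    for name, value in online.items():
        target[name] = tau * value + (1.0 - tau) * target[name]


def explore(action, sigma: float, low: float = -1.0, high: float = 1.0,
            rng: Optional[np.random.Generator] = None) -> np.ndarray:
    """Add Gaussian exploration noise to a deterministic action and clip to bounds."""
    rng = rng if rng is not None else np.random.default_rng()
    action = np.asarray(action, dtype=float)
    return np.clip(action + rng.normal(0.0, sigma, size=action.shape), low, high)
```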

- Environment: The environment is the distributed message system being optimized. We use the performance model built by Lasso regression as a simulated edge environment, in which the resulting increase or decrease in system throughput serves as the performance-based reward.

- Agent: The configuration optimizer based on DDPG is regarded as the agent.

- Action: An action is a vector of values for the adjustable parameters.

- State: The state consists of the system's runtime metrics.

- Reward: The reward is defined as the throughput improvement relative to both the initial configuration and the preceding configuration.
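To make the environment and reward definitions above concrete, the following sketch wraps a linear performance model (an intercept plus weighted parameters, the form a Lasso fit produces) as a simulated environment. Everything specific here is an assumption: the class name `SimulatedMQEnv`, the mapping of actor outputs in [-1, 1] onto per-parameter bounds, using the configuration plus predicted throughput as the state (a simulator cannot observe real runtime metrics), and combining the two relative improvements as a weighted sum with hypothetical weights `w_init` and `w_prev`.

```python
import numpy as np


class SimulatedMQEnv:
    """Hypothetical simulated environment built on a linear throughput model."""

    def __init__(self, weights, intercept, lower, upper, x_init, w_init=0.5, w_prev=0.5):
        self.weights = np.asarray(weights, dtype=float)
        self.intercept = float(intercept)
        self.lower = np.asarray(lower, dtype=float)
        self.upper = np.asarray(upper, dtype=float)
        self.w_init, self.w_prev = w_init, w_prev
        # Predicted throughput of the initial configuration (assumed positive).
        self.initial_tp = self.predict(np.asarray(x_init, dtype=float))
        self.prev_tp = self.initial_tp

    def predict(self, x: np.ndarray) -> float:
        """Predicted throughput from the linear performance model."""
        return self.intercept + float(self.weights @ x)

    def decode(self, action) -> np.ndarray:
        """Map an action in [-1, 1]^n (e.g. a tanh actor output) onto [lower, upper]."""
        a = np.clip(np.asarray(action, dtype=float), -1.0, 1.0)
        return self.lower + (a + 1.0) / 2.0 * (self.upper - self.lower)

    def step(self, action):
        x = self.decode(action)
        tp = self.predict(x)
        # Relative throughput change vs. the initial and the preceding configuration.
        delta_init = (tp - self.initial_tp) / self.initial_tp
        delta_prev = (tp - self.prev_tp) / self.prev_tp
        reward = self.w_init * delta_init + self.w_prev * delta_prev
        self.prev_tp = tp
        state = np.append(x, tp)  # assumed state: configuration + predicted throughput
        return state, reward
```

Rewarding improvement over the preceding configuration gives the agent step-by-step feedback, while the term relative to the initial configuration keeps it from settling for small gains around a poor starting point.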

4.4. Complexity Analysis

- Forward propagation through the actor and critic networks occurs at each time step $t \in [1, T]$. Assuming the forward pass costs $O(F_a)$ for the actor network and $O(F_c)$ for the critic network, the overall complexity for $T$ time steps is $O(T(F_a + F_c))$. Note that $F_a$ and $F_c$ depend on the size of each model, since these passes consist of matrix multiplications.

- At each time step, backward propagation computes the gradients of both networks, with complexity $O(G_a)$ for the actor network and $O(G_c)$ for the critic network. Over $T$ time steps, the total complexity is $O(T(G_a + G_c))$.

- Gradient descent optimization is performed once per batch of size $B$. Taking the complexity of one optimization step as $O(P)$, the overall complexity for $T$ time steps is approximately $O((T/B) \cdot P)$, since an optimization step is executed every $B$ time steps.

- The target networks are updated periodically every $K$ time steps, where $K$ is a hyperparameter that controls the synchronization frequency. Let the complexity of one target-network update be $O(U)$; it depends on the cost of copying parameters between two identically structured networks. The overall complexity for $T$ time steps is then approximately $O((T/K) \cdot U)$.
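Collecting the four terms gives a single expression for the training cost. Here $F_a$ and $F_c$ denote the per-step forward-pass costs of the actor and critic networks, $G_a$ and $G_c$ the corresponding backward-pass costs, $P$ the cost of one optimization step performed every $B$ steps, and $U$ the cost of one target-network update performed every $K$ steps; these symbol names are chosen for illustration.

```latex
\[
O\!\left( T\,(F_a + F_c) + T\,(G_a + G_c) + \frac{T}{B}\,P + \frac{T}{K}\,U \right)
= O\!\left( T \left( F_a + F_c + G_a + G_c + \frac{P}{B} + \frac{U}{K} \right) \right)
\]
```

For fixed network sizes and fixed $B$ and $K$, the bracketed factor is constant, so the training cost grows linearly in the number of time steps $T$.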

5. Experiments

Analysis of Performance and Results

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Protocol | MQTT | CoAP | AMQP | HTTP |
|---|---|---|---|---|
| Communication Model | Publish/Subscribe | Request/Response | Publish/Subscribe | Request/Response |
| Lightweight | Yes | Yes | No | No |
| Bandwidth Efficiency | High | High | Medium | Low |
| Power Consumption | Low | Low | Medium | Medium |
| Real-Time Support | Limited | Limited | Yes | Limited |
| Security | Supplementary measures | Built-in options | Advanced options | Built-in options |
| Scalability | High | Medium | High | High |

| Parameter Name | Weight |
|---|---|
| bThreads | 15.03 |
| cType | 70.35 |
| nNThreads | 23.74 |
| nIThreads | 25.16 |
| mMBytes | 60.35 |
| qMRequests | 124.32 |
| nRFetchers | |
| sRBBytes | 70.42 |
| sSBBytes | 120.35 |
| sRMBytes | 54.36 |
| acks | 43.58 |
| bMemory | 73.66 |
| bSize | |
| lMs | 34.32 |

| Component | Specification/Version |
|---|---|
| Operating system | Ubuntu 20.04.1 LTS |
| CPU | 48 CPUs (Intel Xeon Gold 6126 @ 2.60 GHz) |
| Memory | 187 GB |
| Hard drive | 8.2 TB |
| LAN speed | 10 GbE |
| Docker version | 23.0.4 |
| Framework | Spring Boot 2.7 |
| Kafka image | wurstmeister/kafka:2.12-2.4.0 |
| Kafka Java client | Producer and consumer |
| Maven libraries | spring-boot-starter-web: v2.1.4, spring-kafka: v2.1.7, lombok: 0.32-2018.2 |

| Method | Scenario | Throughput (MB/s) | Success Rate (%) |
|---|---|---|---|
| DMSCO | Small-size msg and high frequency | 88.79 | 57.78 |
| DMSCO | Large-size msg and low frequency | 108.50 | 68.45 |
| Genetic algorithm | Small-size msg and high frequency | 80.52 | 49.80 |
| Genetic algorithm | Large-size msg and low frequency | 92.32 | 61.15 |
| Random searching | Small-size msg and high frequency | 73.99 | 45.38 |
| Random searching | Large-size msg and low frequency | 79.03 | 61.15 |
| No optimization | Small-size msg and high frequency | 60.56 | 39.54 |
| No optimization | Large-size msg and low frequency | 58.51 | 33.67 |
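The relative gains implied by the results table can be checked with a few lines of arithmetic. The sketch below computes DMSCO's percentage throughput gain over each baseline in both scenarios, using only the values reported in the table; the dictionary layout and the function name `relative_gain` are illustrative.

```python
# Throughput in MB/s from the results table:
# (small-size msg and high frequency, large-size msg and low frequency)
THROUGHPUT = {
    "DMSCO": (88.79, 108.50),
    "Genetic algorithm": (80.52, 92.32),
    "Random searching": (73.99, 79.03),
    "No optimization": (60.56, 58.51),
}


def relative_gain(method: str, baseline: str) -> tuple:
    """Percentage throughput gain of `method` over `baseline`, per scenario."""
    return tuple(
        100.0 * (m - b) / b
        for m, b in zip(THROUGHPUT[method], THROUGHPUT[baseline])
    )


if __name__ == "__main__":
    for baseline in ("Genetic algorithm", "Random searching", "No optimization"):
        small, large = relative_gain("DMSCO", baseline)
        print(f"DMSCO vs. {baseline}: {small:+.1f}% (small/high), {large:+.1f}% (large/low)")
```

For example, against the unoptimized configuration the gain is about 46.6% in the small-message, high-frequency scenario and about 85.4% in the large-message, low-frequency scenario.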

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Xie, Z.; Ji, C.; Xu, L.; Xia, M.; Cao, H. Towards an Optimized Distributed Message Queue System for AIoT Edge Computing: A Reinforcement Learning Approach. Sensors 2023, 23, 5447. https://doi.org/10.3390/s23125447
