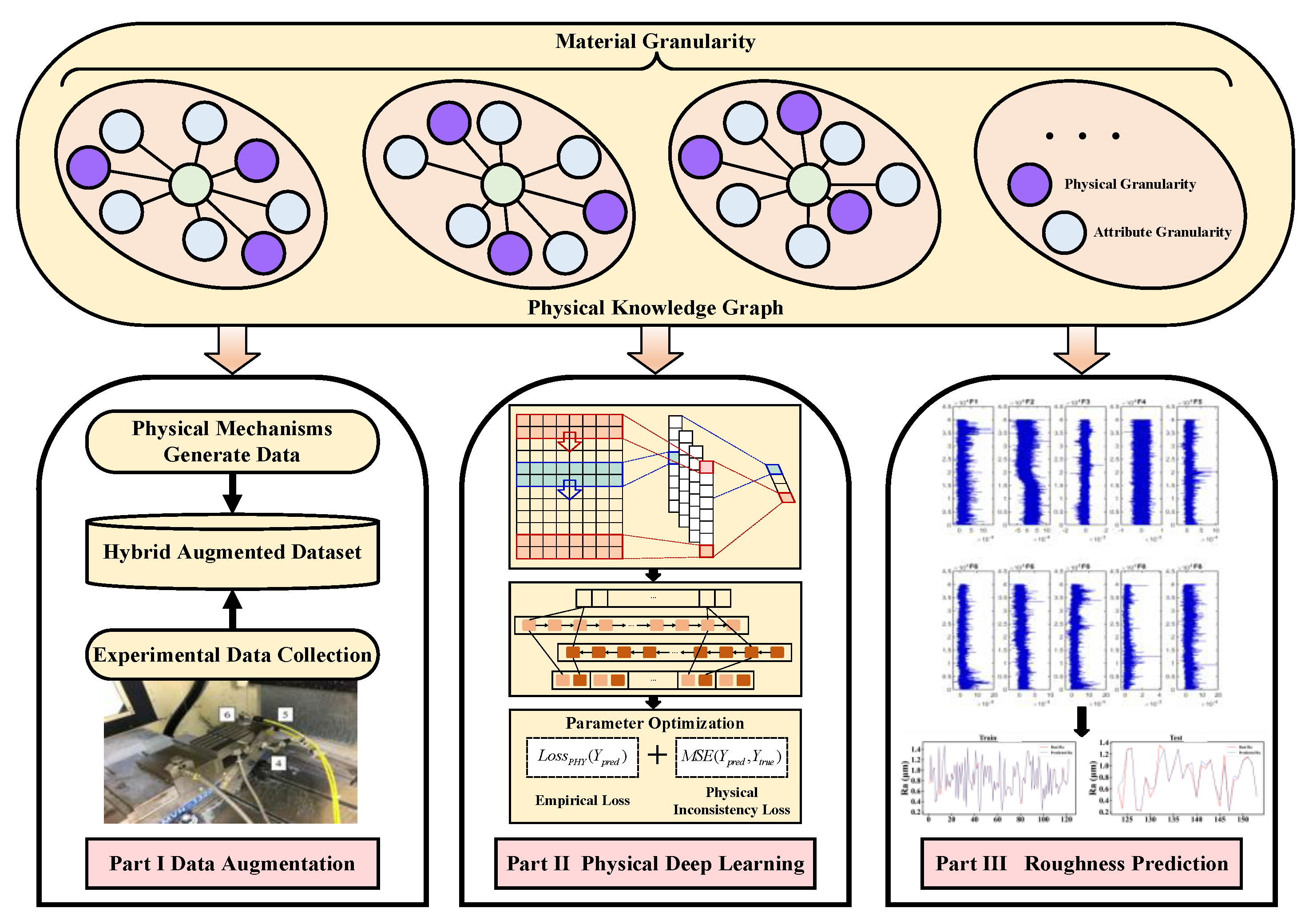

Milling Surface Roughness Prediction Based on Physics-Informed Machine Learning

Abstract

1. Introduction

- (1) The proposed surface roughness prediction model integrates physical knowledge with deep learning to mitigate data scarcity. When training data are limited, it yields more accurate and more interpretable predictions than existing methods.

- (2) A loss function guided by the physical model is built into the deep learning model. It lets training exploit existing mechanistic knowledge and steers the learning trajectory toward the region of function space that conforms to the physical rules, which improves training efficiency.

- (3) Physical knowledge is also introduced as an input to the deep learning model to guide the learning process, which significantly improves the accuracy of milling surface roughness prediction.
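The two ways of injecting physical knowledge listed in the contributions (as a loss term and as an input) can be illustrated with a minimal sketch. This is not the authors' implementation: the exact form of the paper's loss is not given in this section, so the sketch assumes a common generic form, a mean squared data error plus a weighted penalty on the deviation from a mechanistic estimate. The names `physics_guided_loss`, `add_physics_feature`, `physics`, and `weight`, as well as all sample numbers, are hypothetical.

```python
def physics_guided_loss(pred, target, physics, weight=0.1):
    """Mean squared data error plus a weighted physics-consistency penalty.

    pred    -- roughness values predicted by the network
    target  -- measured roughness values
    physics -- roughness estimated by a mechanistic model (assumed given)
    weight  -- hypothetical trade-off coefficient, not taken from the paper
    """
    if not pred or not (len(pred) == len(target) == len(physics)):
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(pred)
    # Data term: how far predictions are from the measurements.
    data_term = sum((p - t) ** 2 for p, t in zip(pred, target)) / n
    # Physics term: how far predictions drift from the mechanistic estimate.
    physics_term = sum((p - m) ** 2 for p, m in zip(pred, physics)) / n
    return data_term + weight * physics_term


def add_physics_feature(features, physics):
    """Append the mechanistic estimate to each sample's feature vector."""
    return [list(row) + [m] for row, m in zip(features, physics)]


# Illustrative values only (not from the paper's datasets).
loss = physics_guided_loss([0.52, 0.81], [0.50, 0.80], [0.55, 0.78])
rows = add_physics_feature([[2000, 240, 0.5]], [0.55])
```

With `weight=0` the loss reduces to a purely data-driven mean squared error, which is one way to picture the "-Loss" ablation; how the paper actually weights or formulates the physical term is not stated here.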

2. Related Work

2.1. Surface Roughness Prediction

2.2. Physics-Informed Machine Learning

3. Surface Roughness Prediction Model Based on Physics-Informed Deep Learning

3.1. Surface Roughness Mechanism Model

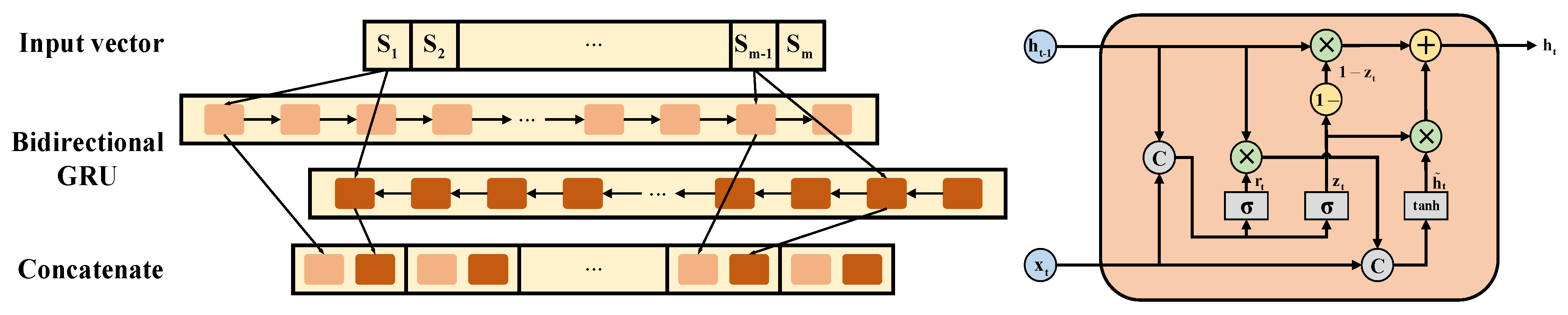

3.2. Physically Guided Deep Prediction Model

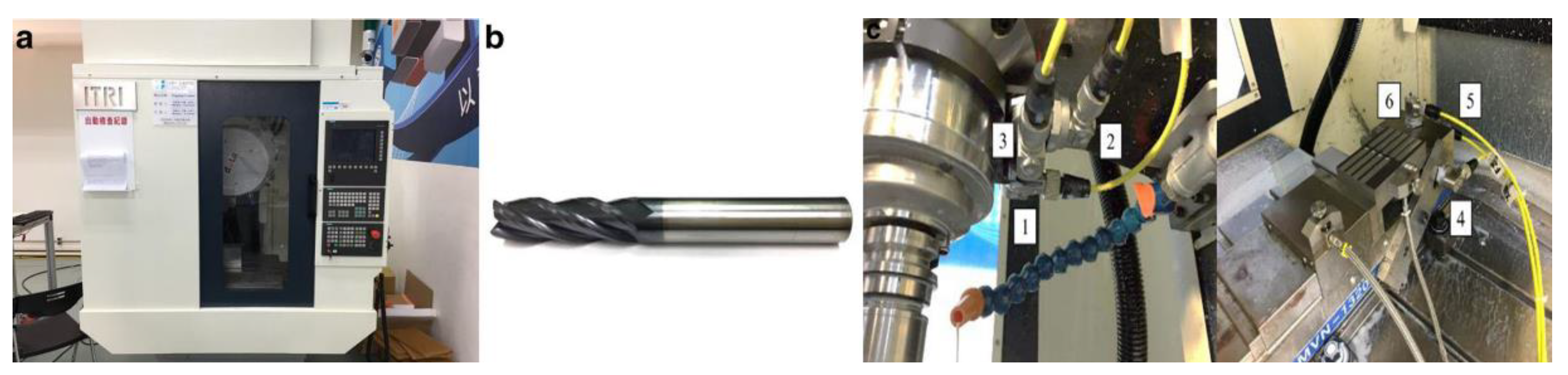

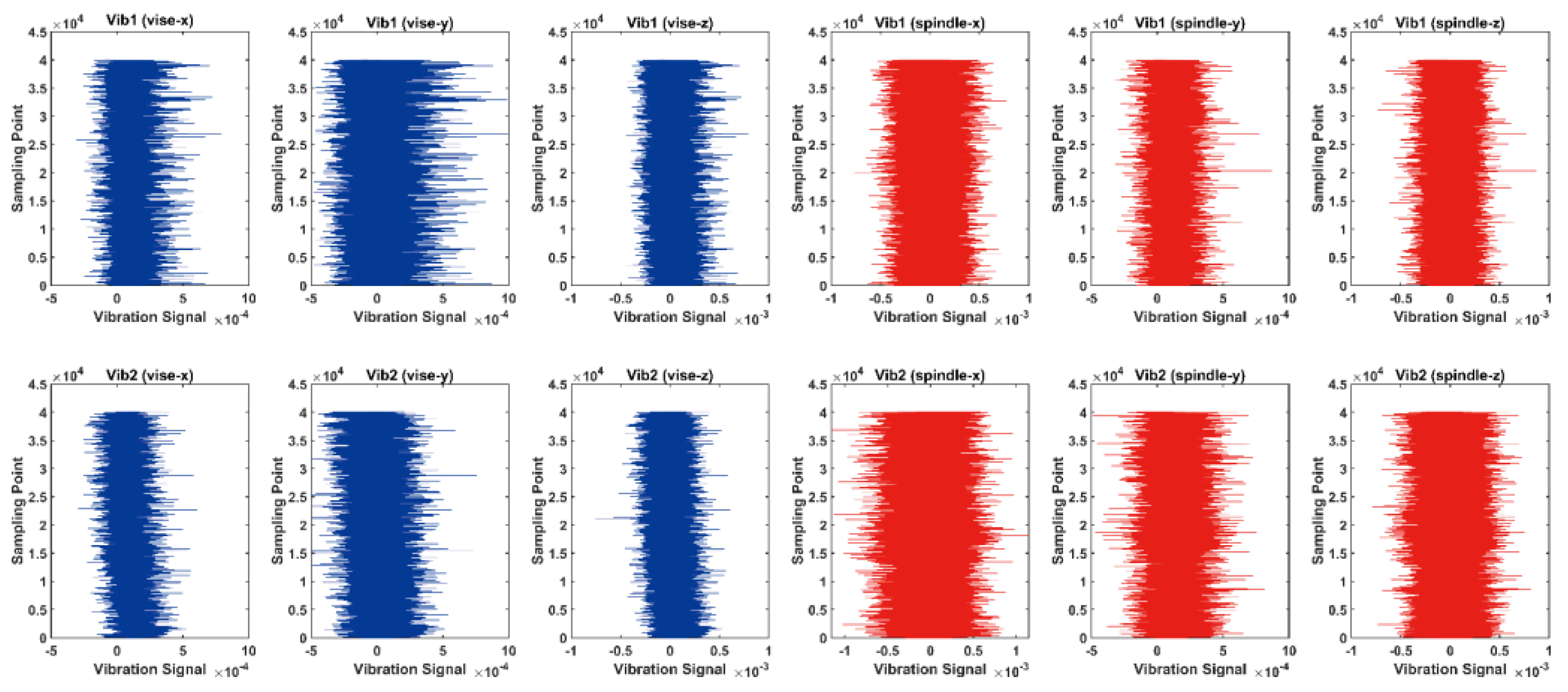

4. Experiment

4.1. Datasets and Metrics

4.2. Baseline Methods

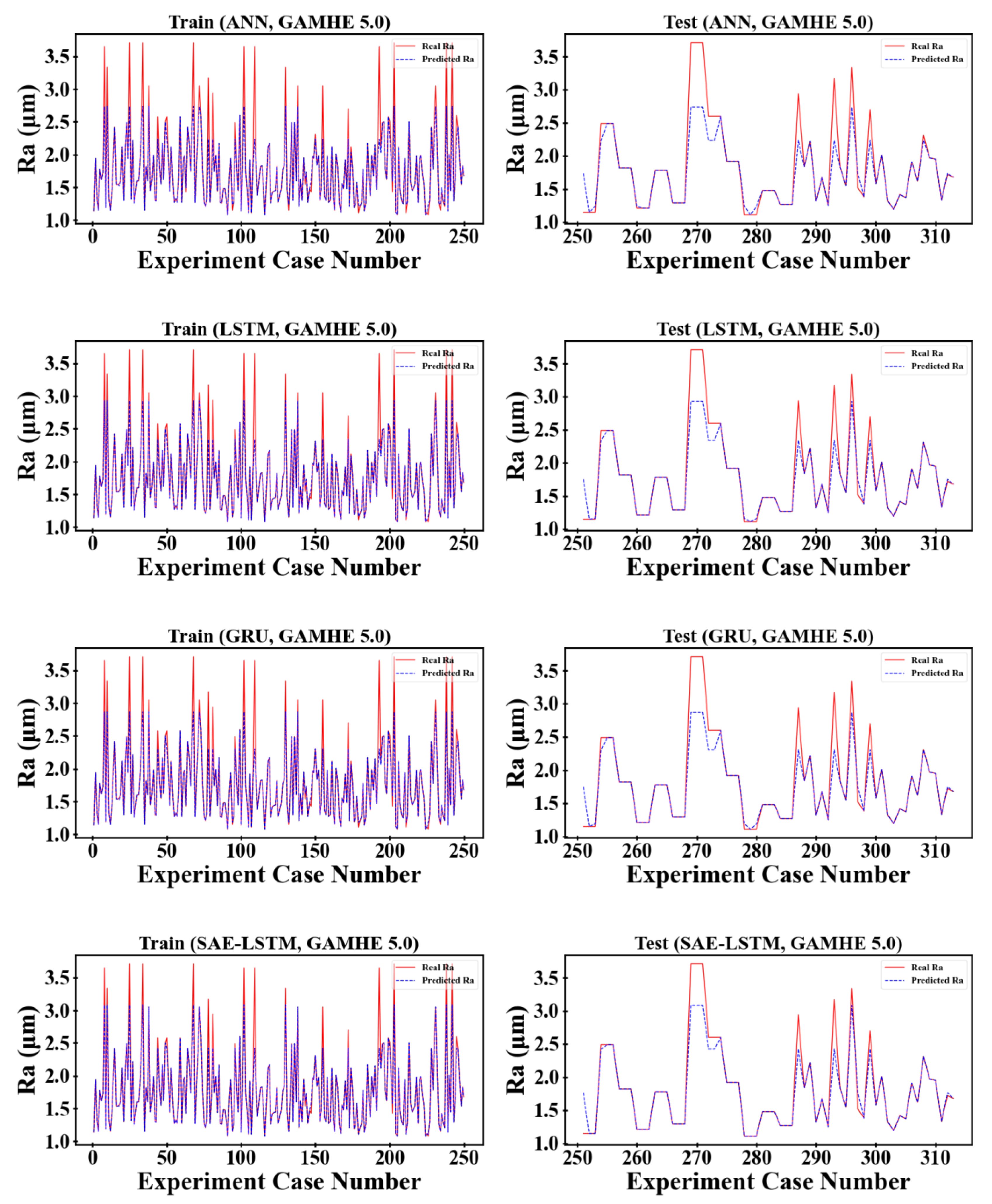

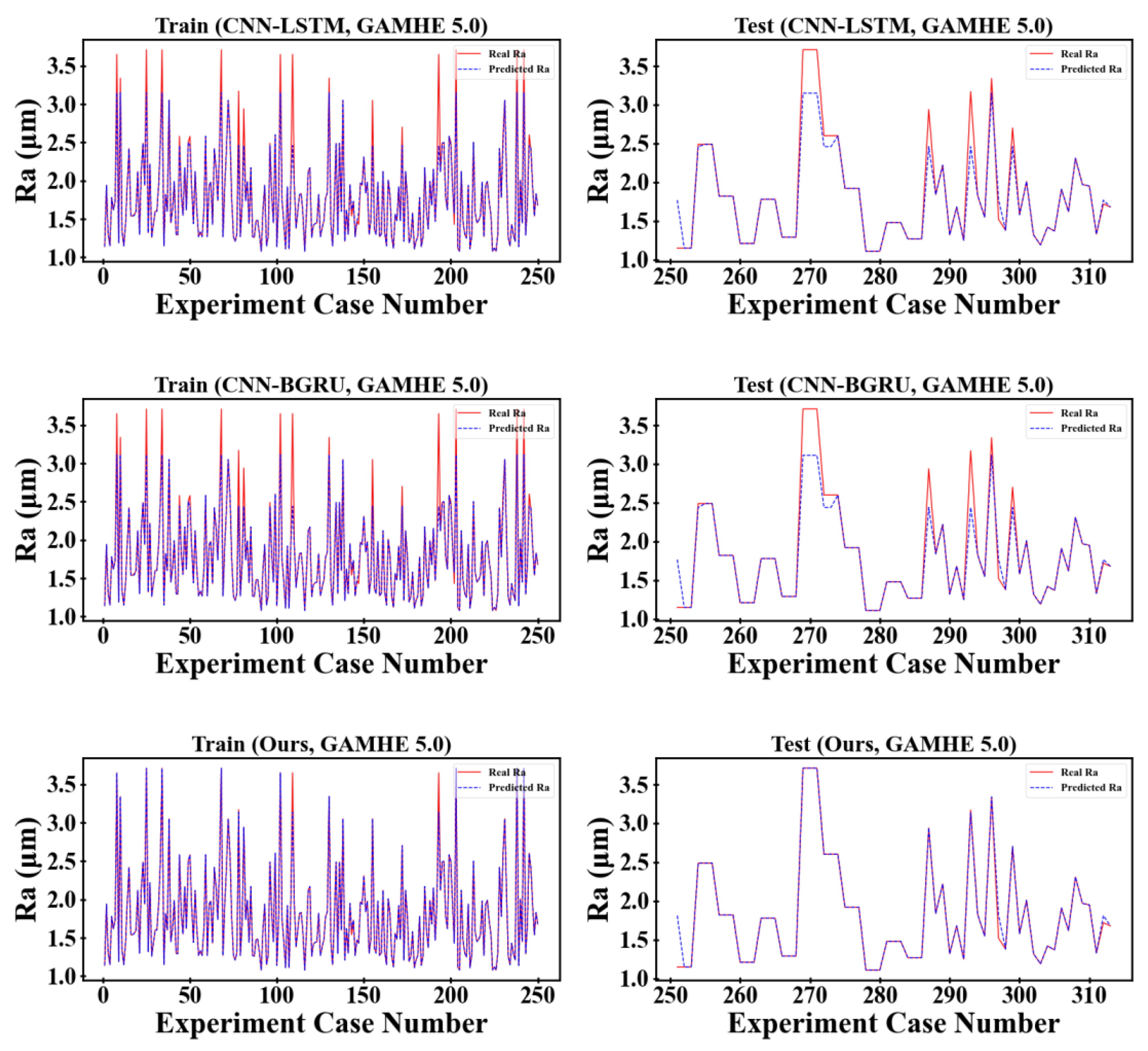

4.3. Experimental Results and Analysis

4.4. Ablation Experiment

5. Discussion

6. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Pimenov, D.Y.; Hassui, A.; Wojciechowski, S.; Mia, M.; Magri, A.; Suyama, D.I.; Bustillo, A.; Krolczyk, G.; Gupta, M.K. Effect of the Relative Position of the Face Milling Tool towards the Workpiece on Machined Surface Roughness and Milling Dynamics. Appl. Sci. 2019, 9, 842.
2. Kilickap, E.; Yardımeden, A.; Çelik, Y.H. Investigation of experimental study of end milling of CFRP composite. Sci. Eng. Compos. Mater. 2015, 22, 89–95.
3. Liao, Z.; la Monaca, A.; Murray, J.; Speidel, A.; Ushmaev, D.; Clare, A.; Axinte, D.; M’Saoubi, R. Surface integrity in metal machining–Part I: Fundamentals of surface characteristics and formation mechanisms. Int. J. Mach. Tools Manuf. 2021, 162, 103687.
4. Han, J.; Hao, X.; Li, L.; Zhong, L.; Zhao, G.; He, N. Investigation on micro-milling of Ti–6Al–4V alloy by PCD slotting-tools. Int. J. Precis. Eng. Manuf. 2020, 21, 291–300.
5. Han, J.; Ma, R.; Kong, L.; He, B.; Hao, X.; He, Q.; Li, L.; He, N. Investigation on self-fabricated PCD cutter and its application in deep-and-narrow micro-grooves. Int. J. Adv. Manuf. Technol. 2022, 119, 6743–6760.
6. Cui, Z.; Zhang, H.; Zong, W.; Li, G.; Du, K. Origin of the lateral return error in a five-axis ultraprecision machine tool and its influence on ball-end milling surface roughness. Int. J. Mach. Tools Manuf. 2022, 178, 103907.
7. Zhang, J.Z.; Chen, J.C.; Kirby, E.D. Surface roughness optimization in an end-milling operation using the Taguchi design method. J. Mater. Process. Technol. 2007, 184, 233–239.
8. Rifai, A.P.; Aoyama, H.; Tho, N.H.; Dawal, S.Z.M.; Masruroh, N.A. Evaluation of turned and milled surfaces roughness using convolutional neural network. Measurement 2020, 161, 107860.
9. Abouelatta, O.; Mádl, J. Surface roughness prediction based on cutting parameters and tool vibrations in turning operations. J. Mater. Process. Technol. 2001, 118, 269–277.
10. Ahmad, M.S.; Adnan, S.M.; Zaidi, S.; Bhargava, P. A novel support vector regression (SVR) model for the prediction of splice strength of the unconfined beam specimens. Constr. Build. Mater. 2020, 248, 118475.
11. Schreiber, M.; Klöber-Koch, J.; Bömelburg-Zacharias, J.; Braunreuther, S.; Reinhart, G. Automated quality assurance as an intelligent cloud service using machine learning. Procedia CIRP 2019, 86, 185–191.
12. Launhardt, M.; Wörz, A.; Loderer, A.; Laumer, T.; Drummer, D.; Hausotte, T.; Schmidt, M. Detecting surface roughness on SLS parts with various measuring techniques. Polym. Test. 2016, 53, 217–226.
13. Luk, F.; Huynh, V.; North, W. Measurement of surface roughness by a machine vision system. J. Phys. E Sci. Instrum. 1989, 22, 977.
14. Zhuo, Y.; Han, Z.; An, D.; Jin, H. Surface topography prediction in peripheral milling of thin-walled parts considering cutting vibration and material removal effect. Int. J. Mech. Sci. 2021, 211, 106797.
15. Wang, B.; Zhang, Q.; Wang, M.; Zheng, Y.; Kong, X. A predictive model of milling surface roughness. Int. J. Adv. Manuf. Technol. 2020, 108, 2755–2762.
16. Zhou, R.; Chen, Q. An analytical prediction model of surface topography generated in 4-axis milling process. Int. J. Adv. Manuf. Technol. 2021, 115, 3289–3299.
17. Huang, P.B.; Inderawati, M.M.W.; Rohmat, R.; Sukwadi, R. The development of an ANN surface roughness prediction system of multiple materials in CNC turning. Int. J. Adv. Manuf. Technol. 2023, 125, 1193–1211.
18. Liu, L.; Zhang, X.; Wan, X.; Zhou, S.; Gao, Z. Digital twin-driven surface roughness prediction and process parameter adaptive optimization. Adv. Eng. Inform. 2022, 51, 101470.
19. Manjunath, K.; Tewary, S.; Khatri, N. Surface roughness prediction in milling using long-short term memory modelling. Mater. Today Proc. 2022, 64, 1300–1304.
20. Du, Y.; Song, Q.; Liu, Z. Prediction of micro milling force and surface roughness considering size-dependent vibration of micro-end mill. Int. J. Adv. Manuf. Technol. 2022, 119, 5807–5820.
21. Wang, Y.; Wang, Y.; Zheng, L.; Zhou, J. Online Surface Roughness Prediction for Assembly Interfaces of Vertical Tail Integrating Tool Wear under Variable Cutting Parameters. Sensors 2022, 22, 1991.
22. Wang, Z.; Li, H.; Yu, T. Study on Surface Integrity and Surface Roughness Model of Titanium Alloy TC21 Milling Considering Tool Vibration. Appl. Sci. 2022, 12, 4041.
23. Xu, J.; Yan, F.; Wan, X.; Li, Y.; Zhu, Q. Surface Topography Model of Ultra-High Strength Steel AF1410 Based on Dynamic Characteristics of Milling System. Processes 2023, 11, 641.
24. Lyu, W.; Liu, Z.; Song, Q.; Ren, X.; Wang, B.; Cai, Y. Modelling and prediction of surface topography on machined slot side wall with single-pass end milling. Int. J. Adv. Manuf. Technol. 2023, 124, 1095–1113.
25. Kashyzadeh, K.R.; Ghorbani, S. New neural network-based algorithm for predicting fatigue life of aluminum alloys in terms of machining parameters. Eng. Fail. Anal. 2023, 146, 107128.
26. Chen, C.-H.; Jeng, S.-Y.; Lin, C.-J. Prediction and Analysis of the Surface Roughness in CNC End Milling Using Neural Networks. Appl. Sci. 2022, 12, 393.
27. Dubey, V.; Sharma, A.K.; Pimenov, D.Y. Prediction of Surface Roughness Using Machine Learning Approach in MQL Turning of AISI 304 Steel by Varying Nanoparticle Size in the Cutting Fluid. Lubricants 2022, 10, 81.
28. Wu, P.; Dai, H.; Li, Y.; He, Y.; Zhong, R.; He, J. A physics-informed machine learning model for surface roughness prediction in milling operations. Int. J. Adv. Manuf. Technol. 2022, 123, 4065–4076.
29. Li, Y.; Yu, R.; Shahabi, C.; Liu, Y. Diffusion convolutional recurrent neural network: Data-driven traffic forecasting. arXiv 2017, arXiv:1707.01926.
30. Zhou, H.; Zhang, S.; Peng, J.; Zhang, S.; Li, J.; Xiong, H.; Zhang, W. Informer: Beyond efficient transformer for long sequence time-series forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, Online, 2–9 February 2021; pp. 11106–11115.
31. Wu, Z.; Pan, S.; Long, G.; Jiang, J.; Chang, X.; Zhang, C. Connecting the dots: Multivariate time series forecasting with graph neural networks. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Online, 6–10 July 2020; pp. 753–763.
32. Guen, V.L.; Thome, N. Disentangling physical dynamics from unknown factors for unsupervised video prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 11474–11484.
33. He, X.; Cao, H.L.; Zhu, B. AdvectiveNet: An Eulerian-Lagrangian Fluidic Reservoir for Point Cloud Processing. arXiv 2020, arXiv:2002.00118.
34. Seo, S.; Meng, C.; Liu, Y. Physics-aware difference graph networks for sparsely-observed dynamics. In Proceedings of the International Conference on Learning Representations, Addis Ababa, Ethiopia, 30 April 2020.
35. Iakovlev, V.; Heinonen, M.; Lähdesmäki, H. Learning continuous-time PDEs from sparse data with graph neural networks. arXiv 2020, arXiv:2006.08956.
36. Arizmendi, M.; Jiménez, A. Modelling and analysis of surface topography generated in face milling operations. Int. J. Mech. Sci. 2019, 163, 105061.
37. He, C.L.; Zong, W.J.; Zhang, J.J. Influencing factors and theoretical modeling methods of surface roughness in turning process: State-of-the-art. Int. J. Mach. Tools Manuf. 2018, 129, 15–26.
38. Wu, T.Y.; Lei, K.W. Prediction of surface roughness in milling process using vibration signal analysis and artificial neural network. Int. J. Adv. Manuf. Technol. 2019, 102, 305–314.
39. Mahjoub, S.; Chrifi-Alaoui, L.; Marhic, B.; Delahoche, L. Predicting Energy Consumption Using LSTM, Multi-Layer GRU and Drop-GRU Neural Networks. Sensors 2022, 22, 4062.
40. Liu, Z.; Li, W.; Feng, J.; Zhang, J. Research on Satellite Network Traffic Prediction Based on Improved GRU Neural Network. Sensors 2022, 22, 8678.
41. Choudhury, N.A.; Soni, B. An Adaptive Batch Size based-CNN-LSTM Framework for Human Activity Recognition in Uncontrolled Environment. IEEE Trans. Ind. Inform. 2023, 1–9.
42. Shang, Y.; Tang, X.; Zhao, G.; Jiang, P.; Lin, T.R. A remaining life prediction of rolling element bearings based on a bidirectional gate recurrent unit and convolution neural network. Measurement 2022, 202, 111893.

| Cutter Parameter | Setup Value |
|---|---|
| Diameter of cutter blade | 10 mm |
| Length of blade | 30 mm |
| Total length of cutter | 75 mm |
| Diameter of cutter shank | 10 mm |
| Number of blades | 4 |
| Helix angle | 35° |

| Machining Parameter | Setup Value |
|---|---|
| Spindle speed (rpm) | 900, 1000, 1800, 1900, 2000, 2100, 2700, 3000 |
| Feed rate (mm/min) | 228, 240, 252, 320, 400, 420, 532, 560, 588 |
| Feed per tooth (mm/tooth) | 0.02–0.09 (total 10 levels) |
| Cutting depth (mm) | 0.5, 0.6, 0.7, 0.8, 0.9, 1 |
| Clamping torque of vise (N·m) | 18, 30, 75 |
| MRR per cutter (mm³) | Estimated value 0–74.8 |
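The feed-per-tooth row follows from the other machining parameters through the standard milling relation f_z = v_f / (n · z), where v_f is the feed rate, n the spindle speed, and z the number of cutting edges. The sketch below evaluates it for one combination. The table lists parameter levels only, so pairing 2000 rpm with 240 mm/min is an illustrative assumption, and the function name `feed_per_tooth` is hypothetical.

```python
def feed_per_tooth(feed_rate_mm_min, spindle_speed_rpm, n_teeth):
    """Feed per tooth in mm/tooth: f_z = v_f / (n * z)."""
    if spindle_speed_rpm <= 0 or n_teeth <= 0:
        raise ValueError("spindle speed and tooth count must be positive")
    return feed_rate_mm_min / (spindle_speed_rpm * n_teeth)


# Assumed pairing of two listed levels, with the 4-blade cutter:
# 240 mm/min at 2000 rpm gives 0.03 mm/tooth.
fz = feed_per_tooth(240, 2000, 4)
```

The result lies inside the 0.02–0.09 mm/tooth range given in the table.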

| Machining Parameter | Setup Value |
|---|---|
| Spindle speed (rpm) | 30,000; 35,000; 40,000; 45,000 |
| Feed rate (mm/min) | 80, 100, 120, 160, 200, 240, 300, 360 |
| Radial depth of cut (mm) | 0.15, 0.25, 0.4, 0.5 |
| Axial depth of cut (mm) | 0.03, 0.05, 0.08, 0.1 |
| Milling tool radius (mm) | 0.15, 0.25, 0.4, 0.5 |

| Model | Training RMSE | Training MAPE | Training R² | Testing RMSE | Testing MAPE | Testing R² |
|---|---|---|---|---|---|---|
| [17] | 0.1202 | 16.24% | 0.8367 | 0.1643 | 25.99% | 0.7841 |
| [39] | 0.1034 | 14.00% | 0.8790 | 0.1518 | 23.54% | 0.8159 |
| [40] | 0.1001 | 13.55% | 0.8867 | 0.1492 | 23.03% | 0.8221 |
| SAE-LSTM [21] | 0.0935 | 12.67% | 0.9011 | 0.1443 | 22.05% | 0.8336 |
| CNN-LSTM [41] | 0.0863 | 11.72% | 0.9158 | 0.1402 | 21.12% | 0.8428 |
| CNN-BGRU [42] | 0.0386 | 5.451% | 0.9831 | 0.1334 | 16.79% | 0.8577 |
| Proposed model | 0.0273 | 3.102% | 0.9916 | 0.1145 | 12.33% | 0.8951 |

| Model | Training RMSE | Training MAPE | Training R² | Testing RMSE | Testing MAPE | Testing R² |
|---|---|---|---|---|---|---|
| [17] | 0.2496 | 2.662% | 0.8389 | 0.2971 | 4.880% | 0.8079 |
| [39] | 0.2053 | 1.905% | 0.8910 | 0.2435 | 3.823% | 0.8710 |
| [40] | 0.2196 | 2.147% | 0.8753 | 0.2609 | 4.149% | 0.8519 |
| SAE-LSTM [21] | 0.1743 | 1.434% | 0.9214 | 0.2048 | 3.149% | 0.9087 |
| CNN-LSTM [41] | 0.1621 | 1.286% | 0.9321 | 0.1895 | 2.929% | 0.9219 |
| CNN-BGRU [42] | 0.1689 | 1.367% | 0.9262 | 0.1981 | 3.052% | 0.9146 |
| Proposed model | 0.0465 | 0.167% | 0.9944 | 0.0921 | 1.331% | 0.9815 |
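The comparison tables report RMSE, MAPE, and R². As a reading aid, the sketch below computes the three metrics under their standard textbook definitions; the section on metrics is not reproduced here, so it is an assumption that the paper uses exactly these forms. The sample values are invented for illustration and are not taken from either dataset.

```python
from math import sqrt


def rmse(y_true, y_pred):
    """Root mean squared error."""
    return sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mape(y_true, y_pred):
    """Mean absolute percentage error in percent; true values must be nonzero."""
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Invented roughness values, for illustration only.
measured = [0.50, 0.80, 1.10, 0.65]
predicted = [0.52, 0.78, 1.05, 0.70]
scores = (rmse(measured, predicted), mape(measured, predicted), r2(measured, predicted))
```

Lower RMSE and MAPE and a higher R² indicate a better fit, which is the direction of improvement shown by the last row of each table.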

| Pattern | S45C RMSE | S45C MAPE | S45C R² | GAMHE 5.0 RMSE | GAMHE 5.0 MAPE | GAMHE 5.0 R² |
|---|---|---|---|---|---|---|
| Origin | 0.1145 | 12.33% | 0.8951 | 0.0921 | 1.331% | 0.9815 |
| -Augmentation | 0.1402 | 21.11% | 0.8430 | 0.2014 | 3.097% | 0.9118 |
| -CNN | 0.1559 | 24.35% | 0.8057 | 0.2379 | 3.715% | 0.8769 |
| -Bidirectional | 0.1362 | 20.05% | 0.8518 | 0.1689 | 2.626% | 0.9379 |
| -Multi-headed | 0.1279 | 17.56% | 0.8691 | 0.1550 | 2.412% | 0.9477 |
| -Loss | 0.1449 | 22.18% | 0.8322 | 0.2210 | 3.420% | 0.8938 |
| -Physical | 0.1503 | 23.25% | 0.8193 | 0.2570 | 4.078% | 0.8562 |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zeng, Shi, and Dechang Pi. 2023. "Milling Surface Roughness Prediction Based on Physics-Informed Machine Learning" Sensors 23, no. 10: 4969. https://doi.org/10.3390/s23104969