Machine Learning-Based Classification of Human Behaviors and Falls in Restroom via Dual Doppler Radar Measurements †

Abstract

1. Introduction

- Doppler radars were implemented efficiently, and experimental examples of privacy-protected restroom monitoring were provided for a realistic environment; this is a significant contribution because only a few reports on realistic restroom monitoring exist.

- Efficient classification models and the types of input data suited to the radar-based restroom-monitoring system were clarified through a thorough comparison of radar-based motion recognition approaches.

2. Related Work

3. Experiments for Dataset Generation

3.1. Doppler Radar Experiments

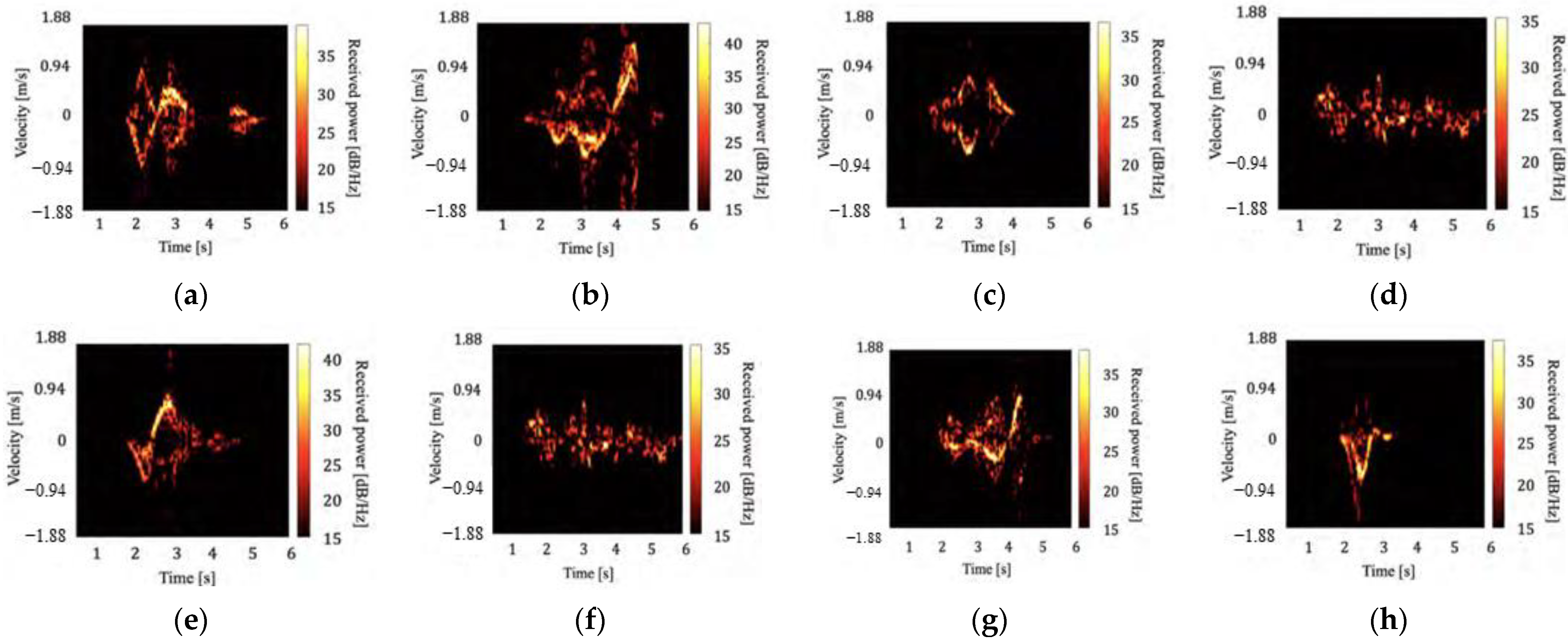

3.2. Generation of Spectrogram Dataset

4. Implementation of the Machine Learning-Based Classification Methods

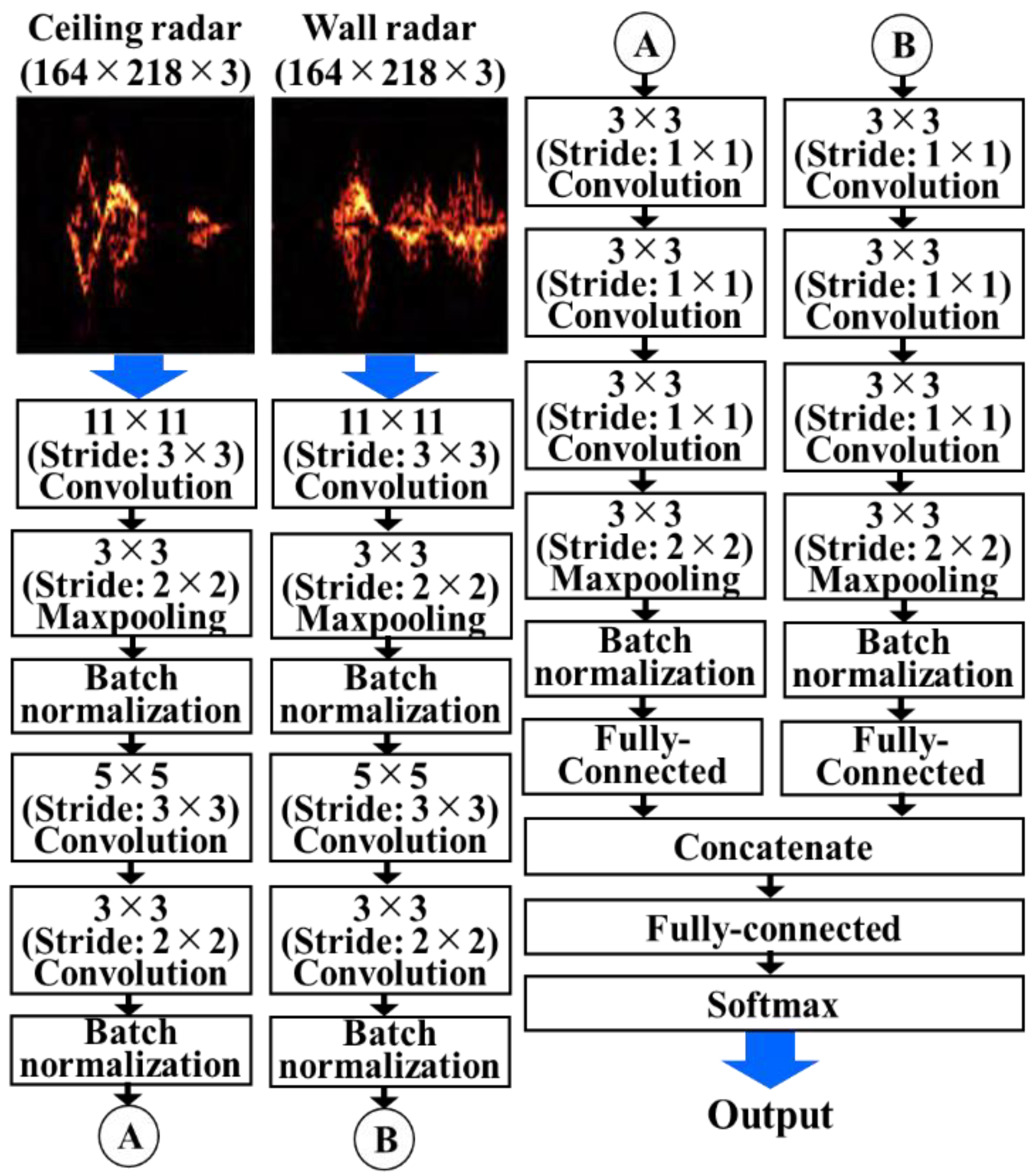

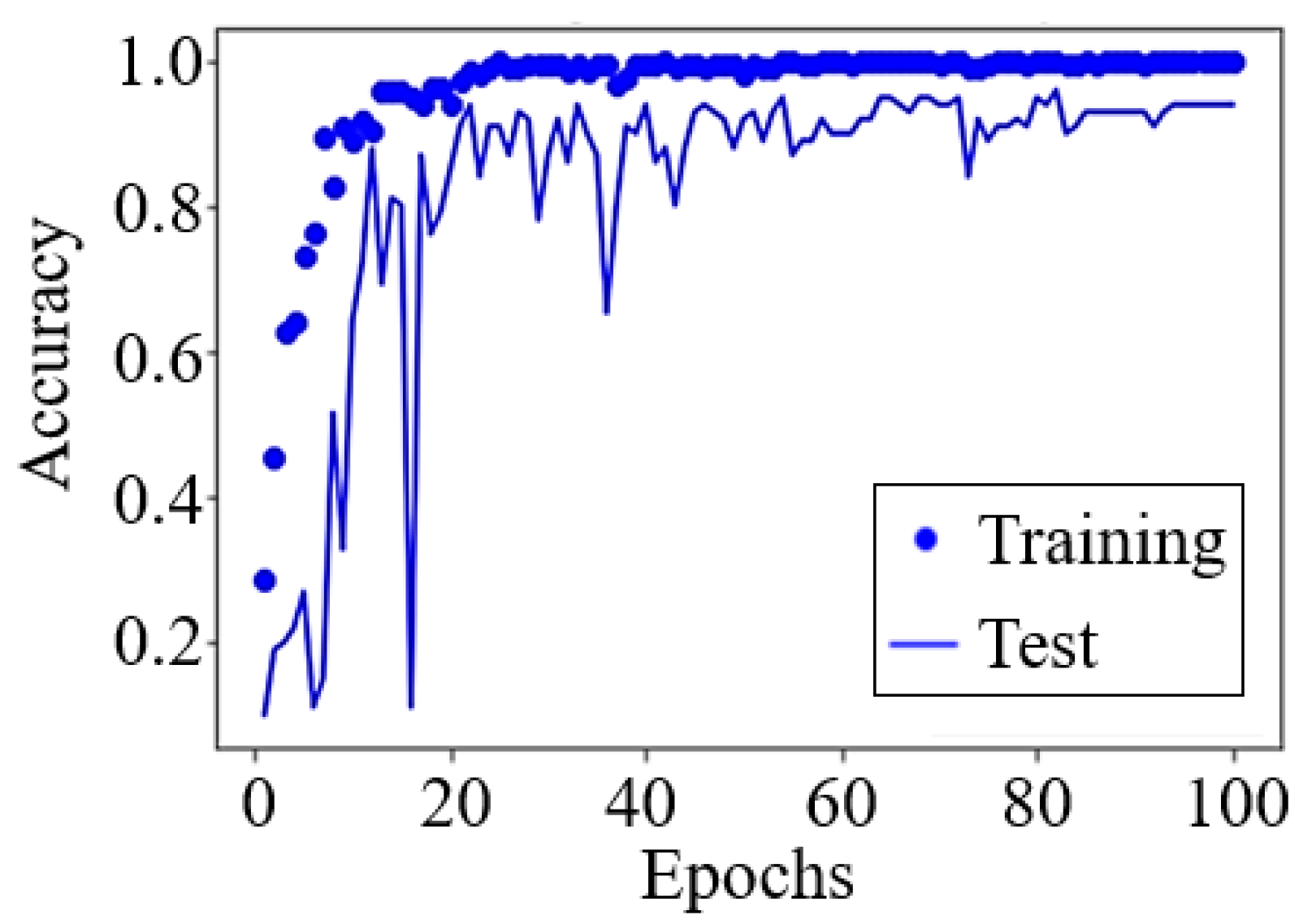

4.1. Spectrogram Image-Based Method Using CNN

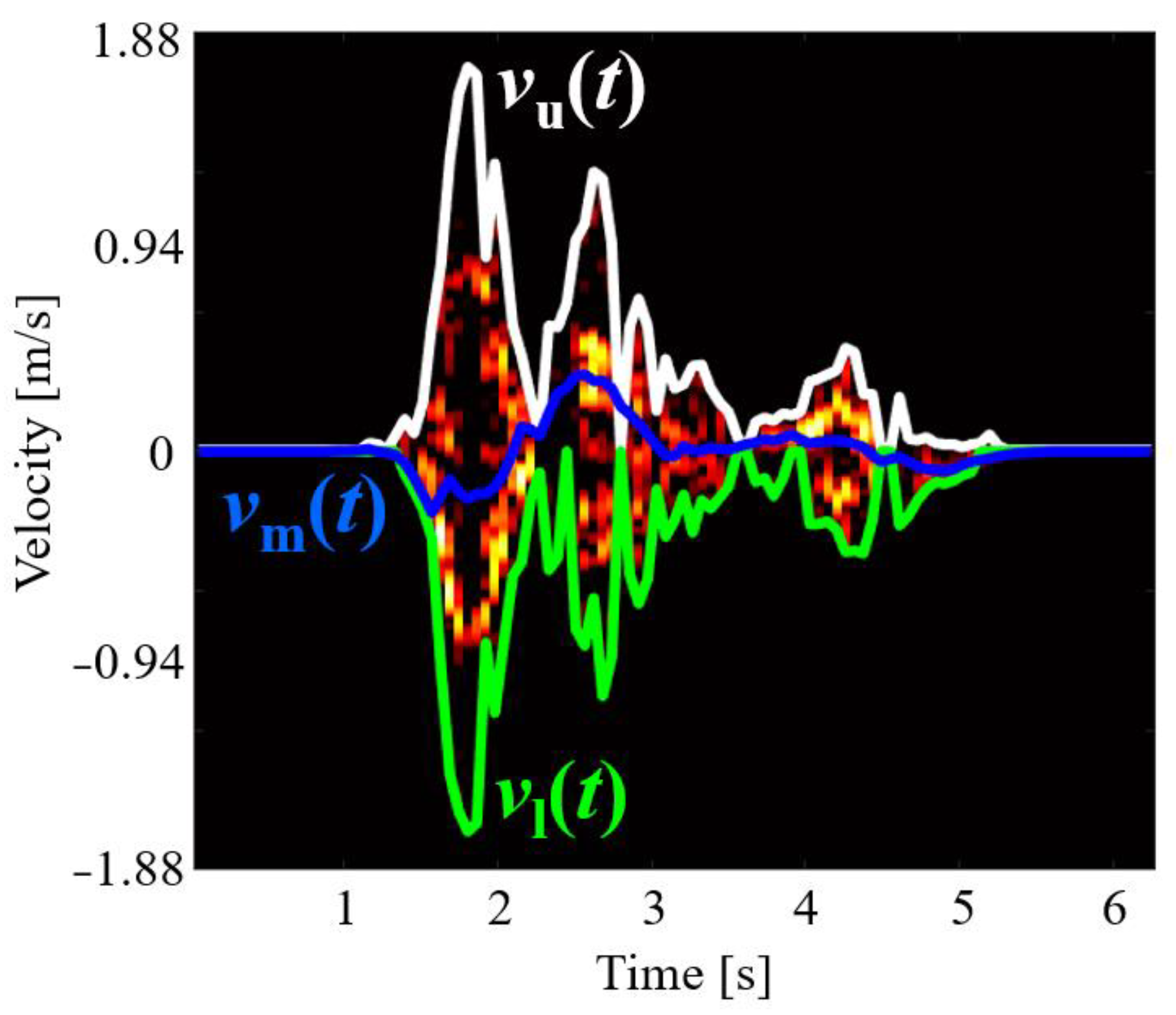

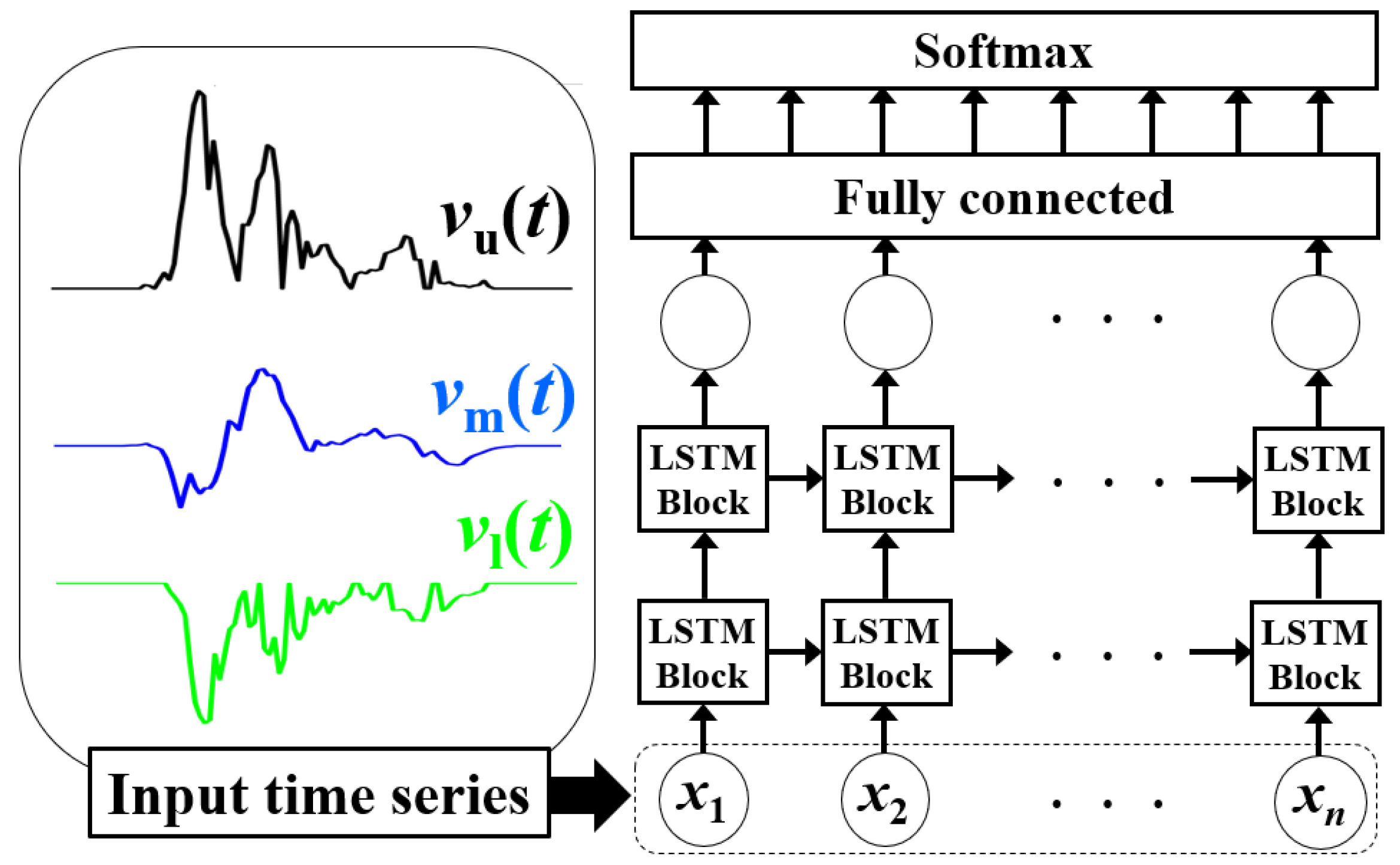

4.2. Spectrogram Envelope-Based Method Using LSTM

4.3. Motion Parameter-Based Methods

5. Evaluation and Discussion

5.1. Main Evaluation Results

5.2. Discussion on Efficient Features

- The wall radar that measured motion in the horizontal direction was more effective than the ceiling radar that measured motion in the vertical direction.

- The classes that were classified accurately differed between the two radars. Hence, fusing the two radars was effective.

- The proposed method effectively used the detailed higher-order derivative parameters of acceleration and jerk.

- Detailed motion information was spread across the entire spectrogram rather than confined to the main components, and the CNN extracted it efficiently.
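The role of the higher-order derivative parameters and of radar fusion noted above can be illustrated with a minimal sketch. It assumes a velocity time series per radar sampled at a fixed rate, derives acceleration and jerk by finite differences, and summarizes each series with simple statistics. The function names (`motion_parameters`, `fuse`), the sampling rate `fs`, the synthetic signals, and the concatenation-style fusion are all illustrative assumptions, not the authors' implementation.

```python
import numpy as np


def motion_parameters(velocity, fs):
    """Summarize velocity, acceleration, and jerk with max/min/mean/std.

    Hypothetical helper: acceleration and jerk are obtained by numerical
    differentiation of the velocity series (an assumption of this sketch).
    """
    dt = 1.0 / fs
    acceleration = np.gradient(velocity, dt)  # first derivative of velocity
    jerk = np.gradient(acceleration, dt)      # second derivative of velocity
    params = {}
    for name, series in (("v", velocity), ("a", acceleration), ("j", jerk)):
        params[f"{name}-max"] = float(np.max(series))
        params[f"{name}-min"] = float(np.min(series))
        params[f"{name}-mean"] = float(np.mean(series))
        params[f"{name}-std"] = float(np.std(series))
    return params


def fuse(ceiling_params, wall_params):
    """Feature-level fusion by concatenation (assumed, for illustration)."""
    fused = {f"ceiling:{k}": v for k, v in ceiling_params.items()}
    fused.update({f"wall:{k}": v for k, v in wall_params.items()})
    return fused


# Synthetic stand-ins for the velocity series of the two radars.
fs = 100.0                             # assumed sampling rate in Hz
t = np.arange(0.0, 2.0, 1.0 / fs)
ceiling_velocity = 0.5 * t**2          # acceleration grows linearly, jerk ~ 1
wall_velocity = 2.0 * t                # constant acceleration of 2, jerk 0

ceiling = motion_parameters(ceiling_velocity, fs)
wall = motion_parameters(wall_velocity, fs)
fused = fuse(ceiling, wall)
print(len(fused), "fused parameters")
```

The fused dictionary could then be fed to a feature-based classifier; which parameters are actually useful is a matter for feature selection rather than something this sketch decides.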

5.3. Comparison with Conventional Studies

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- World Population Prospects. The 2019 Revision The Key Findings. Available online: https://esa.un.org/unpd/wpp/Publications/Files/WPP2019_10KeyFindings.pdf (accessed on 17 January 2022).

- Wang, X.; Ellul, J.; Azzopardi, G. Elderly fall detection systems: A literature survey. Front. Robot. AI 2020, 7, 71.

- Neslihan, L.Ö.K.; Belgin, A.K.I.N. Domestic environmental risk factors associated with falling in elderly. Iran. J. Public Health 2013, 42, 120.

- Zhang, Y.; Wullems, J.; D’Haeseleer, I.; Abeele, V.V.; Vanrumste, B. Bathroom activity monitoring for older adults via wearable device. In Proceedings of the 2020 IEEE International Conference on Healthcare Informatics (ICHI), Oldenburg, Germany, 30 November–3 December 2020.

- Vineeth, C.; Anudeep, J.; Kowshik, G.; Vasudevan, S.K. An enhanced, efficient and affordable wearable elderly monitoring system with fall detection and indoor localisation. Int. J. Med. Eng. Inform. 2021, 13, 254–268.

- Meng, L.; Kong, X.; Taniguchi, D. Dangerous Situation Detection for Elderly Persons in Restrooms Using Center of Gravity and Ellipse Detection. J. Robot. Mechatron. 2017, 29, 1057–1064.

- Harrou, F.; Zerrouki, N.; Sun, Y.; Houacine, A. Vision-based fall detection system for improving safety of elderly people. IEEE Instrum. Meas. Mag. 2017, 20, 49–55.

- Rougier, C.; Meunier, J.; St-Arnaud, A.; Rousseau, J. Robust Video Surveillance for Fall Detection Based on Human Shape Deformation. IEEE Trans. Circuits Syst. Video Technol. 2011, 21, 611–622.

- Kido, S.; Miyasaka, T.; Tanaka, T.; Shimizu, T.; Saga, T. Fall Detection in Toilet Rooms Using Thermal Imaging Sensors. In Proceedings of the 2009 IEEE/SICE International Symposium on System Integration (SII), Tokyo, Japan, 29 January 2009; pp. 83–88.

- Shirogane, S.; Takahashi, H.; Murata, K.; Kido, S.; Miyasaka, T.; Saga, T.; Sakurai, S.; Hamaguchi, T.; Tanaka, T. Use of Thermal Sensors for Fall Detection in a Simulated Toilet Environment. Int. J. New Technol. Res. 2019, 5, 21–25.

- Gurbuz, S.Z.; Amin, M.G. Radar-based human-motion recognition with deep learning: Promising applications for indoor monitoring. IEEE Signal Process. Mag. 2019, 36, 16–28.

- Bhattacharya, A.; Vaughan, R. Deep learning radar design for breathing and fall detection. IEEE Sens. J. 2020, 20, 5072–5085.

- Ma, L.; Liu, M.; Wang, N.; Wang, L.; Yang, Y.; Wang, H. Room-level fall detection based on ultra-wideband (UWB) monostatic radar and convolutional long short-term memory (LSTM). Sensors 2020, 20, 1105.

- Tsuchiyama, K.; Kajiwara, A. Accident detection and health-monitoring UWB sensor in toilet. In Proceedings of the 2019 IEEE Topical Conference on Wireless Sensors and Sensor Networks (WiSNet), Orlando, FL, USA, 20–23 January 2019.

- Takabatake, W.; Yamamoto, K.; Toyoda, K.; Ohtsuki, T.; Shibata, Y.; Nagate, A. FMCW Radar-Based Anomaly Detection in Toilet by Supervised Machine Learning Classifier. In Proceedings of the 2019 IEEE Global Communications Conference (GLOBECOM), Waikoloa, HI, USA, 9–13 December 2019.

- Hayashi, S.; Saho, K.; Tsuyama, M.; Masugi, M. Motion Recognition in the Toilet by Dual Doppler Radars Measurement. IEICE Trans. Commun. (Jpn. Ed.) 2021, J104-B, 390–393. (In Japanese)

- Tsuyama, M.; Hayashi, S.; Saho, K.; Masugi, M. A Convolutional Neural Network Approach to Classification of Human’s Behaviors in a Restroom Using Doppler Radars. In Proceedings of the ATAIT 2021 (2021 International Symposium on Advanced Technologies and Applications in the Internet of Things), Kusatsu, Japan, 23–24 August 2021.

- Suk, M.; Ramadass, A.; Jin, Y.; Prabhakaran, B. Video human motion recognition using a knowledge-based hybrid method based on a hidden Markov model. ACM Trans. Intell. Syst. Technol. 2012, 3, 1–29.

- Zhang, Y.; Zhang, Z.; Zhang, Y.; Bao, J.; Zhang, Y.; Deng, H. Human activity recognition based on motion sensor using u-net. IEEE Access 2019, 7, 75213–75226.

- Bilen, H.; Fernando, B.; Gavves, E.; Vedaldi, A. Action recognition with dynamic image networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 2799–2813.

- Ijjina, E.P.; Chalavadi, K.M. Human action recognition in RGB-D videos using motion sequence information and deep learning. Pattern Recognit. 2017, 72, 504–516.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet Classification with Deep Convolutional Neural Networks. Adv. Neural Inf. Process. Syst. 2012, 25, 1097–1105.

- Kingma, D.P.; Ba, J.L. Adam: A Method for Stochastic Optimization. In Proceedings of the International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015.

- Albon, C. Machine Learning with Python Cookbook; O’Reilly Media: Sebastopol, CA, USA, 2018.

- Tekeli, B.; Gurbuz, S.Z.; Yuksel, M. Information-theoretic feature selection for human micro-Doppler signature classification. IEEE Trans. Geosci. Remote Sens. 2016, 54, 2749–2762.

- Jiang, X.; Zhang, Y.; Yang, Q.; Deng, B.; Wang, H. Millimeter-wave array radar-based human gait recognition using multi-channel three-dimensional convolutional neural network. Sensors 2020, 20, 5466.

- Garripoli, C.; Mercuri, M.; Karsmakers, P.; Soh, P.J.; Crupi, G.; Vandenbosch, G.A.; Pace, C.; Leroux, P.; Schreurs, D. Embedded DSP-based telehealth radar system for remote in-door fall detection. IEEE J. Biomed. Health Inform. 2014, 19, 92–101.

- Su, B.Y.; Ho, K.C.; Rantz, M.J.; Skubic, M. Doppler radar fall activity detection using the wavelet transform. IEEE Trans. Biomed. Eng. 2014, 62, 865–875.

- Cippitelli, E.; Fioranelli, F.; Gambi, E.; Spinsante, S. Radar and RGB-depth sensors for fall detection: A review. IEEE Sens. J. 2017, 17, 3585–3604.

- Amin, M.G.; Zhang, Y.D.; Ahmad, F.; Ho, K.D. Radar signal processing for elderly fall detection: The future for in-home monitoring. IEEE Signal Process. Mag. 2016, 33, 71–80.

- Jokanović, B.; Amin, M. Fall detection using deep learning in range-Doppler radars. IEEE Trans. Aerosp. Electron. Syst. 2017, 54, 180–189.

- Han, T.; Kang, W.; Choi, G. IR-UWB sensor based fall detection method using CNN algorithm. Sensors 2020, 20, 5948.

- Saeed, U.; Shah, S.Y.; Shah, S.A.; Ahmad, J.; Alotaibi, A.A.; Althobaiti, T.; Ramzan, N.; Alomainy, A.; Qammer, H.A. Discrete human activity recognition and fall detection by combining FMCW RADAR data of heterogeneous environments for independent assistive living. Electronics 2021, 10, 2237.

- Gorji, A.; Bourdoux, A.; Pollin, S.; Sahli, H. Multi-View CNN-LSTM Architecture for Radar-Based Human Activity Recognition. IEEE Access (Early Access) 2022. Available online: https://ieeexplore.ieee.org/abstract/document/9709793 (accessed on 17 February 2022).

- Seyfioğlu, M.S.; Özbayoğlu, A.M.; Gürbüz, S.Z. Deep convolutional autoencoder for radar-based classification of similar aided and unaided human activities. IEEE Trans. Aerosp. Electron. Syst. 2018, 54, 1709–1723.

- Yacchirema, D.; de Puga, J.S.; Palau, C.; Esteve, M. Fall detection system for elderly people using IoT and ensemble machine learning algorithm. Pers. Ubiquitous Comput. 2019, 23, 801–817.

- Taylor, W.; Dashtipour, K.; Shah, S.A.; Hussain, A.; Abbasi, Q.H.; Imran, M.A. Radar sensing for activity classification in elderly people exploiting micro-doppler signatures using machine learning. Sensors 2021, 21, 3881.

- Shrestha, A.; Li, H.; Le Kernec, J.; Fioranelli, F. Continuous human activity classification from FMCW radar with Bi-LSTM networks. IEEE Sens. J. 2020, 20, 13607–13619.

- Singh, V.; Bhattacharyya, S.; Jain, P.K. Micro-Doppler classification of human movements using spectrogram spatial features and support vector machine. Int. J. RF Microw. Comput.-Aided Eng. 2020, 30, e22264.

- Saho, K.; Shioiri, K.; Fujimoto, M.; Kobayashi, Y. Micro-Doppler Radar Gait Measurement to Detect Age-and Fall Risk-Related Differences in Gait: A Simulation Study on Comparison of Deep Learning and Gait Parameter-Based Approaches. IEEE Access 2021, 9, 18518–18526.

- Saho, K.; Uemura, K.; Sugano, K.; Matsumoto, M. Using micro-Doppler radar to measure gait features associated with cognitive functions in elderly adults. IEEE Access 2019, 7, 24122–24131.

| Method | Ceiling Radar Data | Wall Radar Data | Both Radars |
|---|---|---|---|
| RF | 41.5 ± 4.82% | 55.2 ± 3.80% | 63.8 ± 3.72% |
| SVM | 60.4 ± 5.37% | 62.4 ± 4.31% | 63.4 ± 4.27% |
| LSTM [16] | 72.3 ± 4.96% | 82.6 ± 4.24% | 83.2 ± 3.93% |
| CNN | 90.3 ± 2.66% | 91.5 ± 3.07% | 95.6 ± 2.28% |

| True Label \ Predicted Label | (a) | (b) | (c) | (d) | (e) | (f) | (g) | (h) |
|---|---|---|---|---|---|---|---|---|
| (a) | 0.90/0.93/1 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0.10/0.07/0 | 0/0/0 |
| (b) | 0/0/0 | 0.85/0.77/0.79 | 0/0/0 | 0.15/0/0 | 0/0/0 | 0/0/0 | 0/0.23/0.21 | 0/0/0 |
| (c) | 0.08/0/0 | 0/0/0 | 0.92/1/1 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 |
| (d) | 0/0/0 | 0/0/0 | 0/0/0 | 1/0.9/1 | 0/0.1/0 | 0/0/0 | 0/0/0 | 0/0/0 |
| (e) | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0.92/1/1 | 0.08/0/0 | 0/0/0 | 0/0/0 |
| (f) | 0/0/0.07 | 0/0/0 | 0/0/0.07 | 0.22/0/0 | 0.06/0/0 | 0.72/0.92/0.86 | 0/0.08/0 | 0/0/0 |
| (g) | 0.08/0/0.08 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0.92/1/0.92 | 0/0/0 |
| (h) | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 1/1/1 |
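As a reading aid for the confusion matrix above, the following sketch computes per-class recall and its unweighted (macro) average from a row-normalized matrix. It uses only the first of the three values in each cell; which radar configuration each of the three values corresponds to is not stated in this part of the text, so the sketch makes no claim about that. The variable names are illustrative.

```python
import numpy as np

labels = list("abcdefgh")

# First of the three values in each cell of the matrix above.
# Rows are true labels (a)-(h); columns are predicted labels (a)-(h).
cm = np.array([
    [0.90, 0.00, 0.00, 0.00, 0.00, 0.00, 0.10, 0.00],
    [0.00, 0.85, 0.00, 0.15, 0.00, 0.00, 0.00, 0.00],
    [0.08, 0.00, 0.92, 0.00, 0.00, 0.00, 0.00, 0.00],
    [0.00, 0.00, 0.00, 1.00, 0.00, 0.00, 0.00, 0.00],
    [0.00, 0.00, 0.00, 0.00, 0.92, 0.08, 0.00, 0.00],
    [0.00, 0.00, 0.00, 0.22, 0.06, 0.72, 0.00, 0.00],
    [0.08, 0.00, 0.00, 0.00, 0.00, 0.00, 0.92, 0.00],
    [0.00, 0.00, 0.00, 0.00, 0.00, 0.00, 0.00, 1.00],
])

# Per-class recall: diagonal entry divided by the row sum.
recall = np.diag(cm) / cm.sum(axis=1)
macro_recall = float(recall.mean())

# Class with the lowest recall, and its most frequent wrong prediction.
worst = int(np.argmin(recall))
off_diagonal = cm[worst].copy()
off_diagonal[worst] = 0.0
confused_with = int(np.argmax(off_diagonal))

print(f"macro recall: {macro_recall:.3f}")
print(f"weakest class: ({labels[worst]}), mostly predicted as ({labels[confused_with]})")
```

Because the rows are already normalized, the macro average weights every class equally and need not match an overall accuracy computed over an imbalanced test set.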

| True Label \ Predicted Label | (a) | (b) | (c) | (d) | (e) | (f) | (g) | (h) |
|---|---|---|---|---|---|---|---|---|
| (a) | 0.71/0.90/0.62 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0.29/0/0.31 | 0/0.10/0.077 | 0/0/0 |
| (b) | 0.091/0/0 | 0.27/0.6/0.83 | 0.091/0.2/0.083 | 0.18/0/0.083 | 0/0.067/0 | 0/0.067/0 | 0.36/0/0 | 0/0.067/0 |
| (c) | 0/0.11/0.07 | 0.083/0/0 | 0.83/0.89/0.93 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0.083/0/0 |
| (d) | 0/0.083/0 | 0.071/0/0 | 0/0/0 | 0.86/0.92/1 | 0/0/0 | 0/0/0 | 0.071/0/0 | 0/0/0 |
| (e) | 0/0/0 | 0/0/0 | 0.11/0/0 | 0/0/0 | 0.78/0.94/0.78 | 0.056/0.06/0.11 | 0/0/0.11 | 0.056/0/0 |
| (f) | 0.25/0.11/0.08 | 0.13/0/0 | 0/0.11/0.07 | 0/0/0 | 0/0/0.17 | 0.38/0.78/0.75 | 0.25/0/0 | 0/0/0 |
| (g) | 0/0/0 | 0.16/0.17/0.14 | 0/0/0.07 | 0.077/0/0 | 0/0/0 | 0.077/0.083/0 | 0.62/0.75/0.79 | 0.077/0/0 |
| (h) | 0.059/0/0 | 0/0/0 | 0/0/0.08 | 0/0/0 | 0/0/0 | 0/0/0 | 0/0/0 | 0.94/1/0.92 |

| True Label \ Predicted Label | (a) | (b) | (c) | (d) | (e) | (f) | (g) | (h) |
|---|---|---|---|---|---|---|---|---|
| (a) | 0.64/0.45/0.62 | 0.091/0.27/0.23 | 0/0.091/0 | 0.18/0/0 | 0.091/0/0 | 0/0.11/0.15 | 0/0/0 | 0/0/0 |
| (b) | 0/0/0 | 0.21/0.78/0.71 | 0.14/0.11/0 | 0.21/0/0.14 | 0.21/0/0 | 0.071/0.11/0.071 | 0.071/0/0 | 0.071/0/0.071 |
| (c) | 0/0.059/0.1 | 0/0.059/0 | 0.45/0.59/0.65 | 0/0.18/0 | 0.45/0.059/0.25 | 0/0.059/0 | 0/0/0 | 0.091/0/0 |
| (d) | 0.083/0/0 | 0/0/0 | 0/0/0.2 | 0.5/0.78/0.6 | 0.083/0.22/0.067 | 0.17/0/0.067 | 0.083/0/0.067 | 0.083/0/0 |
| (e) | 0/0/0 | 0/0/0 | 0.1/0.091/0 | 0.1/0.45/0 | 0.6/0.27/1 | 0.1/0/0 | 0.1/0.091/0 | 0/0.091/0 |
| (f) | 0.31/0.043/0.091 | 0/0.26/0 | 0/0/0.091 | 0.15/0.043/0.091 | 0.077/0.26/0.18 | 0.23/0.35/0.27 | 0.15/0.043/0.27 | 0.077/0/0 |
| (g) | 0.15/0/0 | 0.15/0.36/0.29 | 0.15/0.21/0.071 | 0/0.071/0 | 0.15/0.071/0.071 | 0/0/0.071 | 0.15/0.29/0.43 | 0.077/0/0.071 |
| (h) | 0.24/0/0 | 0/0/0 | 0.29/0/0 | 0.059/0/0 | 0.12/0/0 | 0.059/0/0 | 0/0/0 | 0.24/1/1 |

| True Label \ Predicted Label | (a) | (b) | (c) | (d) | (e) | (f) | (g) | (h) |
|---|---|---|---|---|---|---|---|---|
| (a) | 0.6/0.57/0.7 | 0/0.071/0 | 0/0/0.085 | 0.1/0.14/0 | 0/0/0 | 0.2/0.14/0.085 | 0/0/0.13 | 0.1/0.071/0 |
| (b) | 0.17/0.077/0.071 | 0.33/0.38/0.5 | 0.083/0/0 | 0/0/0 | 0.17/0.077/0.071 | 0/0.15/0.21 | 0.25/0.23/0.071 | 0/0.077/0.071 |
| (c) | 0.067/0.077/0.18 | 0/0/0 | 0.33/0.077/0.46 | 0/0.46/0 | 0.27/0.15/0.36 | 0.067/0/0 | 0/0.077/0 | 0.27/0.15/0 |
| (d) | 0.23/0/0 | 0.23/0/0 | 0.077/0.23/0.1 | 0.38/0.69/0.9 | 0/0/0 | 0.077/0/0 | 0/0/0 | 0/0.08/0 |
| (e) | 0/0/0 | 0/0/0 | 0.4/0/0.3 | 0/0.55/0 | 0.33/0.091/0.4 | 0/0/0.2 | 0/0.18/0 | 0.27/0.18/0.1 |
| (f) | 0.067/0.28/0.14 | 0.13/0.11/0.06 | 0.13/0/0 | 0.2/0.06/0 | 0/0.17/0 | 0.2/0.28/0.6 | 0.13/0.11/0.2 | 0.13/0/0 |
| (g) | 0.11/0.12/0.077 | 0.11/0.12/0 | 0/0/0.15 | 0/0/0.077 | 0.11/0.12/0.15 | 0.033/0.12/0.077 | 0.22/0.5/0.38 | 0.11/0/0.077 |
| (h) | 0.17/0/0 | 0/0/0 | 0.083/0/0 | 0/0/0 | 0.083/0/0 | 0/0/0 | 0.083/0/0 | 0.58/1/1 |

| Radar | Selected Parameters |
|---|---|
| Ceiling radar | vc-u-std, ac-u-max, ac-u-std, ac-m-max, jc-u-mean, jc-u-mean, jc-u-std, jc-m-max |
| Wall radar | vw-m-max, aw-u-min, aw-u-mean, aw-u-std, aw-m-max, jw-m-max, jw-m-min, jw-l-max |
| Dual radar | vw-u-min, vw-u-mean, vw-u-std, ac-u-max, aw-u-min, aw-u-mean, aw-u-std, aw-m-max, aw-m-min, aw-m-mean, aw-l-min, jc-u-std, jw-m-max, jw-l-max, jc-l-std |

| Study | Sensor | Problem | No. of Participants | Performance |
|---|---|---|---|---|
| [6] | Camera | Detection of dangerous situation | 10 | N.A. (Secure detection of dangerous situation continues for 60 s) |
| [9] | Thermal Sensor | Classification of normal/fall data | 8 | Accuracy over 95% (2-class classification) |
| [10] | Thermal Sensor | Classification of normal use/fall patterns | 10 | Accuracy: 97.8% (2-class classification) |
| [14] | Radar | Detection of dangerous state (such as falls) | 3 | Detection rate: 95% |
| [15] | Radar | Classification of normal/abnormal behaviors (including falls) | 10 | |
| Our previous study [16] | Radar | Classification of eight behaviors (including falls) | 21 | |
| This study | Radar | Classification of eight behaviors (including falls) | 21 | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Saho, K.; Hayashi, S.; Tsuyama, M.; Meng, L.; Masugi, M. Machine Learning-Based Classification of Human Behaviors and Falls in Restroom via Dual Doppler Radar Measurements. Sensors 2022, 22, 1721. https://doi.org/10.3390/s22051721
