Measurement System for Unsupervised Standardized Assessments of Timed Up and Go Test and 5 Times Chair Rise Test in Community Settings—A Usability Study

Abstract

1. Introduction

2. Materials and Methods

2.1. Initial Prototype and Usability Study

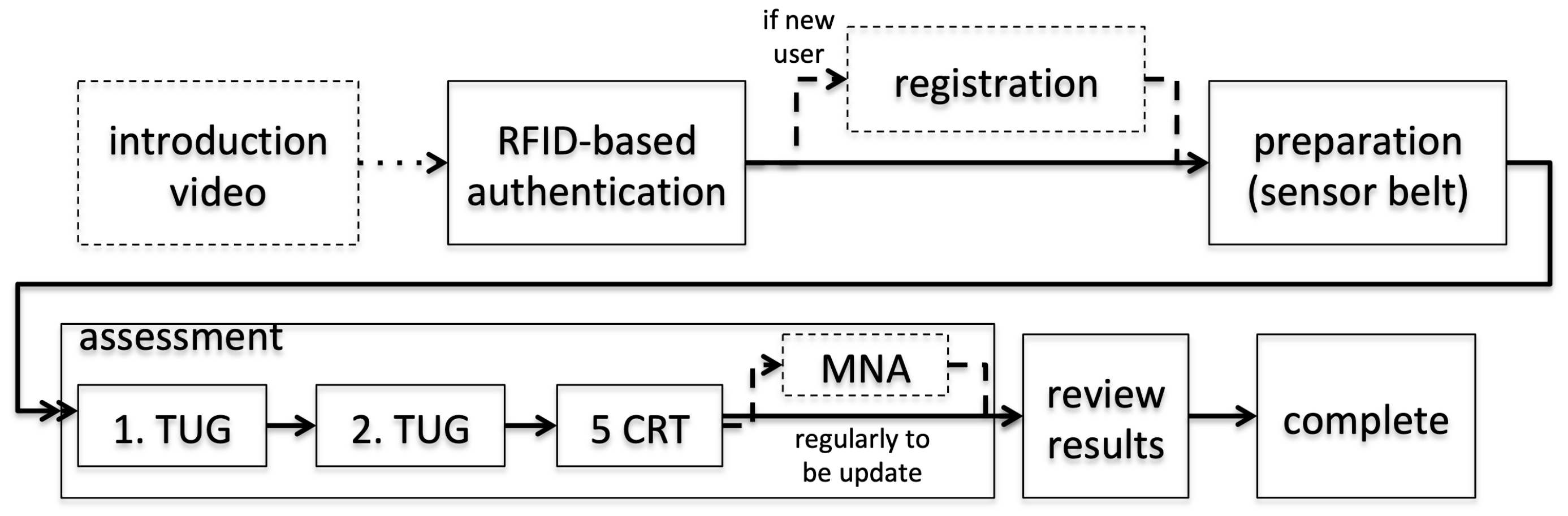

2.1.1. USS’s Initial User Interface

2.1.2. Initial Usability Study

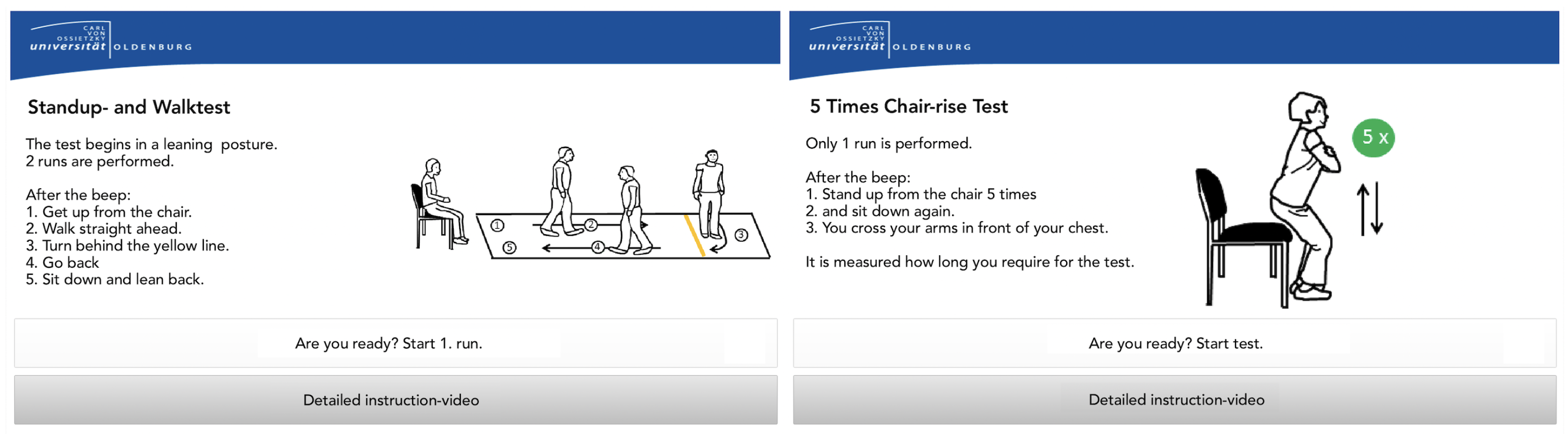

- Task 1 asked participants to “create a new user account and then log out of the system”. This task implicitly covered user comprehensibility, the motivational aspects of the introductory video, and the suitability of the RFID login mechanism for authentication. It also evaluated whether entering age and weight during registration might represent a barrier.

- Task 2 asked participants to “perform all mobility tests using the system and view your results at the end”. This task investigated the applicability of the sensor belt and potential challenges to user confidence arising from unplugging and re-plugging the USB cable. It also evaluated the clarity of the video instructions, in both their auditory and visual presentation, and the clarity of the explanations.

- Task 3 required participants to “change your username and volume in the settings menu” and evaluated the usability of the corresponding functionality for parameter editing.

- The availability of subtitles.

- The preferred gender of the speaker.

- The suitability of the introduction video.

- The suitability of the multiple instruction videos.

- The suitability of the preparation videos.

- Preferences regarding the signal tone indicating the start of an assessment.

- Assessment of the general suitability of the measurement system.

- Suitability of the presentation of the results.

2.1.3. Results and Discussion of the Initial Usability Study

- Some participants found the video instructions too lengthy and confusing. Participants also pointed out that the videos seemed aimed at adults “much older” than themselves: because the presenter spoke very clearly and with distinct pauses, most participants felt that the presenter, and the system in general, addressed a different target group. Given this evidence of the well-known tendency of older people not to want to be addressed as restricted in their abilities [33], we implemented a personalized approach: the system offers a short introductory audio instruction and, only once it recognizes handling errors, an extended instruction video.

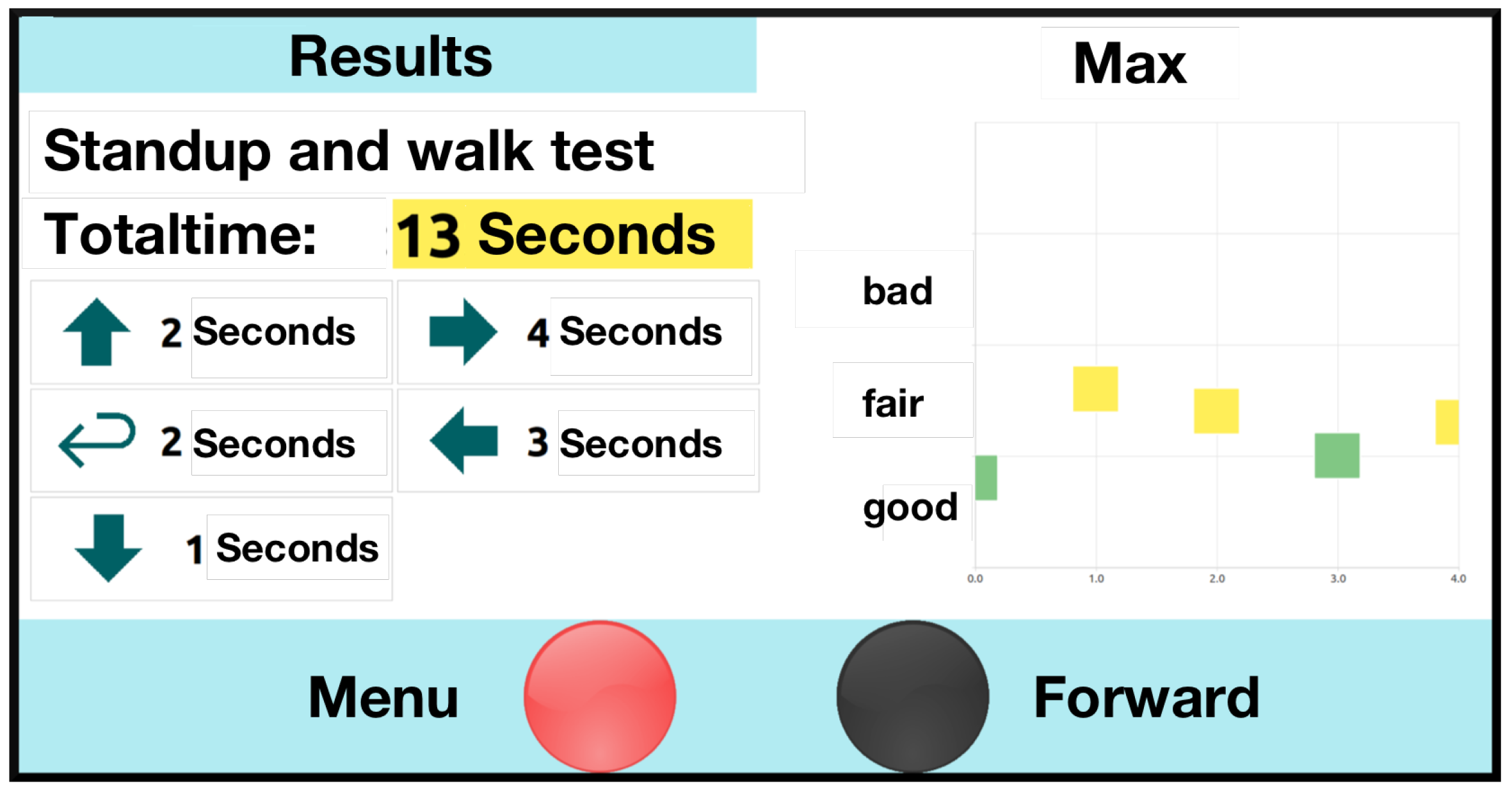

- The USS’s limited responsiveness was identified as a major challenge. Reported difficulties with the instructional videos indicated that some users struggled to distinguish the videos from the menu structures. Users attempted to enter data while an instructional video was still running and user interaction was blocked, which did not support their confidence in using the system. This was especially obvious during a longer instructional video throughout which no user interaction was possible. The desire for shorter instructional videos, combined with the problems caused by the resulting delayed interaction, led to the following approach: replacing the video stream with explanatory audio combined with a static visualization (shown in Figure 6) enables continuous user interaction (e.g., skipping the audio or entering additional data) and should thus overcome this challenge. Video tutorials are still shown when a user fails to complete a test.

- The sensor belt presented a partial challenge: participants either did not detach it from the USB cable or found closing the belt buckle nonintuitive. The belt has since been adjusted to improve usability and ensure proper attachment. As shown in Figure 2, the buckle mechanism has been replaced by Velcro so that the belt is easy to attach and detach. To “remind” users to disconnect the sensor belt from the USB connector, the USB cable was shortened so that disconnecting it becomes automatic. Correct use of the sensor belt is now also addressed in the preparation video, which includes examples of correct and faulty attachment. In addition, the upper side of the belt is marked by a yellow string so that users can verify that the belt is attached correctly. For participants with a larger waist circumference, the belt was lengthened.

- Presenting the introductory video inside the system was perceived as a mental barrier, and participants suggested that the initial motivation and authentication take place outside the system. Because having to sit down inside the system may act as a psychological barrier for first-time users, an external display running a motivational video has been added, and the authentication mechanism (RFID reader) has been moved outside.

- For the TUG, some participants neither started with their backs against the chair nor ended with their backs leaning against it. Instead, they moved forward during the countdown and remained in that position until the test was complete, which might have produced erroneous results. Participants also reported uncertainty about whether and when the assessment had finished. While the 5CRT was performed well in most cases, participants sporadically stopped after fewer than five repetitions, resulting in invalid assessments. To address these errors, the instruction phase now includes hints that illustrate them through positive and negative examples. For the 5CRT, an audio signal has been added that notifies users once five sit-to-stand cycles have been completed.

- Some participants ignored the instructions during the logout process and missed the announcement about how often the system is intended to be used. To remedy this, the announcement has been moved before the logout screen.
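Two of the revisions above can be illustrated as simple decision logic: escalating from the short audio instruction to the extended video only after handling errors or a failed test, and playing an audio signal once five sit-to-stand cycles are complete. The following is a minimal sketch, not the USS implementation. It assumes, hypothetically, that the system tracks a count of recognized handling errors and derives a per-sample posture flag (`True` = standing, `False` = seated) from its sensors; the names `InstructionMode`, `choose_instruction`, `sit_to_stand_onsets`, and `completion_signal_index` are illustrative only, and completion is assumed to occur when the fifth standing posture is first detected.

```python
from enum import Enum
from typing import List, Optional, Sequence


class InstructionMode(Enum):
    """Hypothetical instruction variants offered by the revised interface."""
    SHORT_AUDIO = "short audio instruction with static visualization"
    EXTENDED_VIDEO = "extended instruction video"


def choose_instruction(handling_errors: int, failed_last_test: bool) -> InstructionMode:
    """Default to the short audio instruction; escalate to the extended video
    only once handling errors were recognized or the previous test failed."""
    if handling_errors > 0 or failed_last_test:
        return InstructionMode.EXTENDED_VIDEO
    return InstructionMode.SHORT_AUDIO


def sit_to_stand_onsets(standing: Sequence[bool]) -> List[int]:
    """Indices of samples where the posture flag changes from seated (False)
    to standing (True). The test is assumed to start from a seated position."""
    onsets = []
    previous = False
    for i, is_standing in enumerate(standing):
        if is_standing and not previous:
            onsets.append(i)
        previous = is_standing
    return onsets


def completion_signal_index(standing: Sequence[bool], repetitions: int = 5) -> Optional[int]:
    """Sample index at which the completion tone would be played (fifth rise
    detected), or None if the user stopped with fewer repetitions, which
    would make the 5CRT assessment invalid."""
    onsets = sit_to_stand_onsets(standing)
    if len(onsets) >= repetitions:
        return onsets[repetitions - 1]
    return None


if __name__ == "__main__":
    five_rises = [False, True] * 5 + [False]
    print(choose_instruction(handling_errors=0, failed_last_test=False))
    print(completion_signal_index(five_rises))      # 9
    print(completion_signal_index(five_rises[:5]))  # None: only two rises
```

A real sensor stream would additionally need filtering or debouncing so that brief posture misclassifications are not counted as extra repetitions; the sketch omits this step.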

2.2. Conclusive Evaluation Prototype and Study Design

2.2.1. Conclusive Evaluation Prototype

2.3. Conclusive Usability Study

3. Results

3.1. Cohort of the Conclusive Usability Study

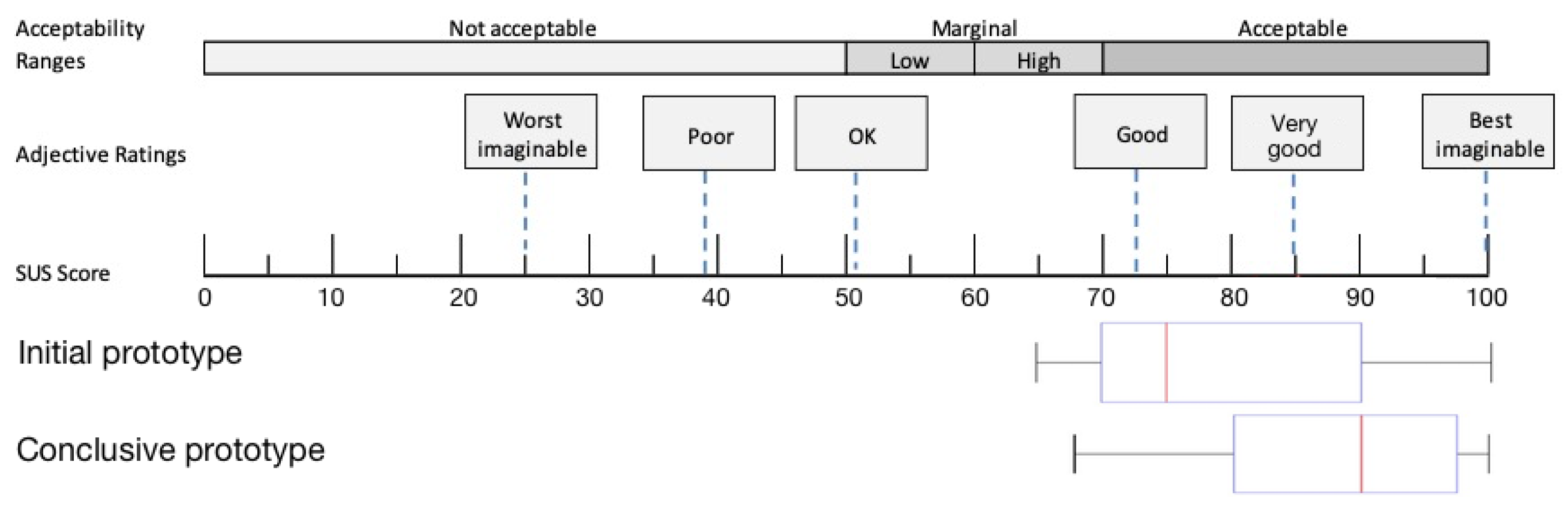

3.2. Usability Study

3.3. Results of the Telephone Interviews

3.4. Insights of Focus Group Discussion

3.4.1. Experiences: RFID Chip/Reader, Cabin Construction, Chair, and Sensor Belt

3.4.2. Experience Menu

3.4.3. Further Ideas for Enhancements

3.4.4. Appropriate Forms of Feedback of Measurement Results

3.4.5. Overall Impression

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| 5CRT | 5 Times Chair Rise Test |
| ADL | Activities of daily living |
| aTUG | Ambient TUG |
| BMI | Body mass index |
| IQR | Interquartile range |
| iTUG | Instrumented TUG |
| RFID | Radio-frequency identification |
| SD | Standard deviation |
| SUS | System usability scale |
| TUG | Timed Up & Go |
| UI | User interaction |
| USS | Unsupervised Screening System |

References

- Marsh, A.P.; Rejeski, W.J.; Espeland, M.A.; Miller, M.E.; Church, T.S.; Fielding, R.A.; Gill, T.M.; Guralnik, J.M.; Newman, A.B.; Pahor, M.; et al. Muscle Strength and BMI as Predictors of Major Mobility Disability in the Lifestyle Interventions and Independence for Elders Pilot (LIFE-P). J. Gerontol. Ser. A Biol. Sci. Med. Sci. 2011, 66, 1376–1383.

- Hicks, G.E.; Shardell, M.; Alley, D.E.; Miller, R.R.; Bandinelli, S.; Guralnik, J.; Lauretani, F.; Simonsick, E.M.; Ferrucci, L. Absolute Strength and Loss of Strength as Predictors of Mobility Decline in Older Adults: The InCHIANTI Study. J. Gerontol. Ser. A Biol. Sci. Med. Sci. 2011, 67, 66–73.

- Vermeulen, J.; Neyens, J.C.; van Rossum, E.; Spreeuwenberg, M.D.; de Witte, L.P. Predicting ADL disability in community-dwelling elderly people using physical frailty indicators: A systematic review. BMC Geriatr. 2011, 11, 33.

- Stineman, M.G.; Xie, D.; Pan, Q.; Kurichi, J.E.; Zhang, Z.; Saliba, D.; Henry-Sánchez, J.T.; Streim, J. All-Cause 1-, 5-, and 10-Year Mortality in Elderly People According to Activities of Daily Living Stage. J. Am. Geriatr. Soc. 2012, 60, 485–492.

- Tikkanen, P.; Nykanen, I.; Lonnroos, E.; Sipila, S.; Sulkava, R.; Hartikainen, S. Physical Activity at Age of 20-64 Years and Mobility and Muscle Strength in Old Age: A Community-Based Study. J. Gerontol. Ser. A Biol. Sci. Med. Sci. 2012, 67, 905–910.

- Conn, V.S.; Hafdahl, A.R.; Brown, L.M. Meta-analysis of Quality-of-Life Outcomes From Physical Activity Interventions. Nurs. Res. 2009, 58, 175–183.

- Angevaren, M.; Aufdemkampe, G.; Verhaar, H.; Aleman, A.; Vanhees, L. Physical activity and enhanced fitness to improve cognitive function in older people without known cognitive impairment. Cochrane Database Syst Rev. 2008, 16, CD005381.

- Chodzko-Zajko, W.J.; Proctor, D.N.; Fiatarone Singh, M.A.; Minson, C.T.; Nigg, C.R.; Salem, G.J.; Skinner, J.S. Exercise and Physical Activity for Older Adults. Med. Sci. Sport. Exerc. 2009, 41, 1510–1530.

- Matsuda, P.N.; Shumway-Cook, A.; Ciol, M.A. The Effects of a Home-Based Exercise Program on Physical Function in Frail Older Adults. J. Geriatr. Phys. Ther. 2010, 33, 78–84.

- Monteserin, R.; Brotons, C.; Moral, I.; Altimir, S.; San Jose, A.; Santaeugenia, S.; Sellares, J.; Padros, J. Effectiveness of a geriatric intervention in primary care: A randomized clinical trial. Fam. Pract. 2010, 27, 239–245.

- Stuck, A.; Siu, A.; Wieland, G.; Rubenstein, L.; Adams, J. Comprehensive geriatric assessment: A meta-analysis of controlled trials. Lancet 1993, 342, 1032–1036.

- Stuck, A.E.; Egger, M.; Hammer, A.; Minder, C.E.; Beck, J.C. Home Visits to Prevent Nursing Home Admission and Functional Decline in Elderly People. JAMA 2002, 287, 1022–1028.

- Podsiadlo, D.; Richardson, S. The Timed “Up & Go”: A Test of Basic Functional Mobility for Frail Elderly Persons. J. Am. Geriatr. Soc. 1991, 39, 142–148.

- Hellmers, S.; Fudickar, S.; Lau, S.; Elgert, L.; Diekmann, R.; Bauer, J.M.; Hein, A. Measurement of the Chair Rise Performance of Older People Based on Force Plates and IMUs. Sensors 2019, 19, 1370.

- Makizako, H.; Shimada, H.; Doi, T.; Tsutsumimoto, K.; Nakakubo, S.; Hotta, R.; Suzuki, T. Predictive Cutoff Values of the Five-Times Sit-to-Stand Test and the Timed “Up & Go” Test for Disability Incidence in Older People Dwelling in the Community. Phys. Ther. 2017, 97, 417–424.

- Buatois, S.; Miljkovic, D.; Manckoundia, P.; Gueguen, R.; Miget, P.; Vançon, G.; Perrin, P.; Benetos, A. Five times sit to stand test is a predictor of recurrent falls in healthy community-living subjects aged 65 and older. J. Am. Geriatr. Soc. 2008, 56, 1575–1577.

- Sprint, G.; Cook, D.J.; Weeks, D.L. Toward Automating Clinical Assessments: A Survey of the Timed Up and Go. IEEE Rev. Biomed. Eng. 2015, 8, 64–77.

- Dibble, L.E.; Lange, M. Predicting Falls In Individuals with Parkinson Disease. J. Neurol. Phys. Ther. 2006, 30, 60–67.

- Huang, S.L.; Hsieh, C.L.; Wu, R.M.; Tai, C.H.; Lin, C.H.; Lu, W.S. Minimal Detectable Change of the Timed “Up & Go” Test and the Dynamic Gait Index in People With Parkinson Disease. Phys. Ther. 2011, 91, 114–121.

- Lin, M.R.; Hwang, H.F.; Hu, M.H.; Wu, H.D.I.; Wang, Y.W.; Huang, F.C. Psychometric Comparisons of the Timed Up and Go, One-Leg Stand, Functional Reach, and Tinetti Balance Measures in Community-Dwelling Older People. J. Am. Geriatr. Soc. 2004, 52, 1343–1348.

- Guralnik, J.M.; Simonsick, E.M.; Ferrucci, L.; Glynn, R.J.; Berkman, L.F.; Blazer, D.G.; Scherr, P.A.; Wallace, R.B. A short physical performance battery assessing lower extremity function: Association with self-reported disability and prediction of mortality and nursing home admission. J. Gerontol. 1994, 49, M85–M94.

- Hardy, R.; Cooper, R.; Shah, I.; Harridge, S.; Guralnik, J.; Kuh, D. Is chair rise performance a useful measure of leg power? Aging Clin. Exp. Res. 2010, 22, 412.

- Fudickar, S.; Kiselev, J.; Frenken, T.; Wegel, S.; Dimitrowska, S.; Steinhagen-Thiessen, E.; Hein, A. Validation of the ambient TUG chair with light barriers and force sensors in a clinical trial. Assist. Technol. 2018, 32, 1–8.

- Fudickar, S.; Kiselev, J.; Stolle, C.; Frenken, T.; Steinhagen-Thiessen, E.; Wegel, S.; Hein, A. Validation of a Laser Ranged Scanner-Based Detection of Spatio-Temporal Gait Parameters Using the aTUG Chair. Sensors 2021, 21, 1343.

- Hellmers, S.; Izadpanah, B.; Dasenbrock, L.; Diekmann, R.; Bauer, J.M.; Hein, A.; Fudickar, S. Towards an Automated Unsupervised Mobility Assessment for Older People Based on Inertial TUG Measurements. Sensors 2018, 18, 3310.

- American Geriatrics Society; British Geriatrics Society; American Academy of Orthopaedic Surgeons Panel on Falls Prevention. Guideline for the Prevention of Falls in Older Persons. J. Am. Geriatr. Soc. 2001, 49, 664–672.

- Hellmers, S.; Kromke, T.; Dasenbrock, L.; Heinks, A.; Bauer, J.M.; Hein, A.; Fudickar, S. Stair Climb Power Measurements via Inertial Measurement Units-Towards an Unsupervised Assessment of Strength in Domestic Environments. In Proceedings of the 11th International Joint Conference on Biomedical Engineering Systems and Technologies, Madeira, Portugal, 19–21 January 2018; SCITEPRESS-Science and Technology Publications: Funchal, Portugal, 2018; pp. 39–47.

- Hellmers, S.; Steen, E.E.; Dasenbrock, L.; Heinks, A.; Bauer, J.M.; Fudickar, S.; Hein, A. Towards a minimized unsupervised technical assessment of physical performance in domestic environments. In Proceedings of the 11th EAI International Conference on Pervasive Computing Technologies for Healthcare-PervasiveHealth ’17, Barcelona, Spain, 23–26 May 2017; ACM Press: New York, NY, USA, 2017; pp. 207–216.

- Fudickar, S.; Stolle, C.; Volkening, N.; Hein, A. Scanning Laser Rangefinders for the Unobtrusive Monitoring of Gait Parameters in Unsupervised Settings. Sensors 2018, 18, 3424.

- Fudickar, S.; Hellmers, S.; Lau, S.; Diekmann, R.; Bauer, J.M.; Hein, A. Measurement System for Unsupervised Standardized Assessment of Timed “Up & Go” and Five Times Sit to Stand Test in the Community—A Validity Study. Sensors 2020, 20, 2824.

- Botolfsen, P.; Helbostad, J.L.; Moe-Nilssen, R.; Wall, J.C. Reliability and concurrent validity of the Expanded Timed Up-and-Go test in older people with impaired mobility. Physiother. Res. Int. 2008, 13, 94–106.

- Jung, H.W.; Roh, H.; Cho, Y.; Jeong, J.; Shin, Y.S.; Lim, J.Y.; Guralnik, J.M.; Park, J. Validation of a Multi–Sensor-Based Kiosk for Short Physical Performance Battery. J. Am. Geriatr. Soc. 2019, 67, 2605–2609.

- Lee, C.; Coughlin, J.F. PERSPECTIVE: Older Adults’ Adoption of Technology: An Integrated Approach to Identifying Determinants and Barriers. J. Prod. Innov. Manag. 2014, 32, 747–759.

- Demiris, G.; Rantz, M.; Aud, M.; Marek, K.; Tyrer, H.; Skubic, M.; Hussam, A. Older adults attitudes towards and perceptions of “smart home” technologies: A pilot study. Inform. Health Soc. Care 2004, 29, 87–94.

- Davis, F.D. Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. MIS Q. 1989, 13, 319–340.

- Rogers, E.M. Diffusion of Innovations, 4th ed.; Simon and Schuster: New York, NY, USA, 2010.

- Bixter, M.T.; Blocker, K.A.; Rogers, W.A. Promoting social engagement of older adults through technology. In Aging, Technology, and Health; Pak, R., McLaughlin, A.C., Eds.; Academic Press: London, UK; San Diego, CA, USA; Cambridge, MA, USA; Oxford, UK, 2018.

- Mitzner, T.L.; Boron, J.B.; Fausset, C.B.; Adams, A.E.; Charness, N.; Czaja, S.J.; Dijkstra, K.; Fisk, A.D.; Rogers, W.A.; Sharit, J. Older adults talk technology: Technology usage and attitudes. Comput. Hum. Behav. 2010, 26, 1710–1721.

- Eisma, R.; Dickinson, A.; Goodman, J.; Syme, A.; Tiwari, L.; Newell, A.F. Early user involvement in the development of information technology-related products for older people. Univers. Access Inf. Soc. 2004, 3, 131–140.

- Wood, E.; Willoughby, T.; Rushing, A.; Bechtel, L.; Gilbert, J. Use of Computer Input Devices by Older Adults. J. Appl. Gerontol. 2005, 24, 419–438.

- Hellmers, S.; Fudickar, S.; Büse, C.; Dasenbrock, L.; Heinks, A.; Bauer, J.M.; Hein, A. Technology Supported Geriatric Assessment. In Ambient Assisted Living: 9. AAL-Kongress, Frankfurt/M, Germany, 20–21 April 2016; Wichert, R., Mand, B., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 85–100.

- Diekmann, R.; Hellmers, S.; Elgert, L.; Fudickar, S.; Heinks, A.; Lau, S.; Bauer, J.M.; Zieschang, T.; Hein, A. Minimizing comprehensive geriatric assessment to identify deterioration of physical performance in a healthy community-dwelling older cohort: Longitudinal data of the AEQUIPA Versa study. Aging Clin. Exp. Res. 2020, 33, 563–572.

- Czaja, S.J.; Lee, C.C. The impact of aging on access to technology. Univers. Access Inf. Soc. 2006, 5, 341–349.

- Fudickar, S.; Faerber, S.; Schnor, B. KopAL Appointment User-interface: An Evaluation with Elderly. In Proceedings of the 4th International Conference on PErvasive Technologies Related to Assistive Environments, PETRA ’11, Crete, Greece, 25–27 May 2011; ACM: New York, NY, USA, 2011; pp. 42:1–42:6.

- Häikiö, J.; Wallin, A.; Isomursu, M.; Ailisto, H.; Matinmikko, T.; Huomo, T. Touch-based User Interface for Elderly Users. In Proceedings of the 9th International Conference on Human Computer Interaction with Mobile Devices and Services, MobileHCI ’07, Singapore, 9–12 September 2007; ACM: New York, NY, USA, 2007; pp. 289–296.

- Rubenstein, L.Z.; Harker, J.O.; Salva, A.; Guigoz, Y.; Vellas, B. Screening for Undernutrition in Geriatric Practice: Developing the Short-Form Mini-Nutritional Assessment (MNA-SF). J. Gerontol. Ser. A Biol. Sci. Med. Sci. 2001, 56, M366–M372.

- Salarian, A.; Horak, F.B.; Zampieri, C.; Carlson-Kuhta, P.; Nutt, J.G.; Aminian, K. iTUG, a Sensitive and Reliable Measure of Mobility. IEEE Trans. Neural Syst. Rehabil. Eng. 2010, 18, 303–310.

- Nielsen, J. Evaluating the Thinking-aloud Technique for Use by Computer Scientists. In Advances in Human-Computer Interaction (Vol. 3); Hartson, H.R., Hix, D., Eds.; Ablex Publishing Corp.: Norwood, NJ, USA, 1992; pp. 69–82.

- Brooke, J. SUS: A “quick and dirty” usability scale. In Usability Evaluation in Industry; Jordan, P.W., Thomas, B., McClelland, I.L., Weerdmeester, B., Eds.; CRC Press: London, UK, 1996.

- Bangor, A.; Kortum, P.; Miller, J. Determining What Individual SUS Scores Mean: Adding an Adjective Rating Scale. J. Usability Stud. 2009, 4, 114–123.

- Mayring, P. Qualitative Inhaltsanalyse. Grundlagen und Techniken. 12., Überarb. Aufl.; Beltz: Weinheim, Germany, 2015.

- Kuckartz, U. Qualitative Inhaltsanalyse-Methoden, Praxis, Computerunterstützung; Beltz Juventa: Weinheim, Germany, 2012.

- Neyer, F.J.; Felber, J.; Gebhardt, C. Entwicklung und Validierung einer Kurzskala zur Erfassung von Technikbereitschaft. Diagnostica 2012, 58, 87–99.

- Laugwitz, B.; Schrepp, M.; Held, T. Konstruktion eines Fragebogens zur Messung der User Experience von Softwareprodukten. In Mensch und Computer 2006: Mensch und Computer im Strukturwandel; Heinecke, A.M., Paul, H., Eds.; Oldenbourg Verlag: München, Germany, 2006; pp. 125–134.

- Craig, P.; Dieppe, P.; Macintyre, S.; Michie, S.; Nazareth, I.; Petticrew, M. Developing and evaluating complex interventions: The new Medical Research Council guidance. BMJ 2008, 337, a1655.

- Moore, G.F.; Audrey, S.; Barker, M.; Bond, L.; Bonell, C.; Hardeman, W.; Moore, L.; O’Cathain, A.; Tinati, T.; Wight, D.; et al. Process evaluation of complex interventions: Medical Research Council guidance. BMJ 2015, 350, h1258.

- Cavazzana, A.; Röhrborn, A.; Garthus-Niegel, S.; Larsson, M.; Hummel, T.; Croy, I. Sensory-specific impairment among older people. An investigation using both sensory thresholds and subjective measures across the five senses. PLoS ONE 2018, 13, e0202969.

- Reuter, E.M.; Voelcker-Rehage, C.; Vieluf, S.; Godde, B. Touch perception throughout working life: Effects of age and expertise. Exp. Brain Res. 2011, 216, 287–297.

- Page, T. Touchscreen mobile devices and older adults: A usability study. Int. J. Hum. Factors Ergon. 2014, 3, 65.

- Merkel, S.; Kucharski, A. Participatory Design in Gerontechnology: A Systematic Literature Review. Gerontologist 2018, 59, e16–e25.

- Grates, M.G.; Heming, A.C.; Vukoman, M.; Schabsky, P.; Sorgalla, J. New Perspectives on User Participation in Technology Design Processes: An Interdisciplinary Approach. Gerontologist 2018, 59, 45–57.

- Beisch, N.; Schäfer, C. Ergebnisse der ARD/ZDF-Onlinestudie 2020. Internetnutzung mit großer Dynamik: Medien, Kommunikation, Social Media. Media Perspekt. 2020, 20, 462–481.

- Friemel, T.N. The digital divide has grown old: Determinants of a digital divide among seniors. New Media Soc. 2014, 18, 313–331.

- Hunsaker, A.; Hargittai, E. A review of Internet use among older adults. New Media Soc. 2018, 20, 3937–3954.

| Parameter | Item | Occurrence |
|---|---|---|
| Marital status | Married, living with spouse | 22 |
| | Widowed | 10 |
| | Divorced | 3 |
| Highest school degree | Secondary school certificate | 11 |
| | Secondary/elementary school | 6 |
| | University entrance qualification/secondary school | 6 |
| | Advanced technical college entrance qualification | 1 |
| Training/university degrees | Vocational—in-company training | 19 |
| | Vocational—school education | 8 |
| | Technical college/engineering school | 5 |
| | University/college | 5 |
| | Technical school, master school, technical school, vocational or technical academy | 3 |
| | Other educational qualification | 3 |
| | None | 1 |

| How Often Do You Use the Following Technical Devices? | PC/Laptop | Tablet PC | Classic Bar Phone | Smart Phone | Smart Watch | Other Device |
|---|---|---|---|---|---|---|
| Several times a day | 16 (43%) | 4 (11%) | 12 (32%) | 19 (51%) | 0 (0%) | 0 (0%) |
| Once a day | 8 (22%) | 0 (0%) | 1 (3%) | 2 (5%) | 0 (0%) | 0 (0%) |
| Several times a week | 4 (11%) | 2 (5%) | 2 (5%) | 1 (3%) | 0 (0%) | 0 (0%) |
| Once a week | 3 (8%) | 1 (3%) | 0 (0%) | 0 (0%) | 0 (0%) | 0 (0%) |
| Less than once a week | 2 (5%) | 5 (14%) | 4 (11%) | 0 (0%) | 0 (0%) | 0 (0%) |
| I own but never use | 1 (3%) | 1 (3%) | 2 (5%) | 1 (3%) | 0 (0%) | 1 (3%) |
| I do not use | 2 (5%) | 13 (35%) | 6 (16%) | 8 (22%) | 21 (57%) | 14 (38%) |
| Total | 36 (97%) | 26 (70%) | 27 (73%) | 31 (84%) | 21 (57%) | 15 (41%) |
| Missing | 1 (3%) | 11 (30%) | 10 (27%) | 6 (16%) | 16 (43%) | 22 (60%) |

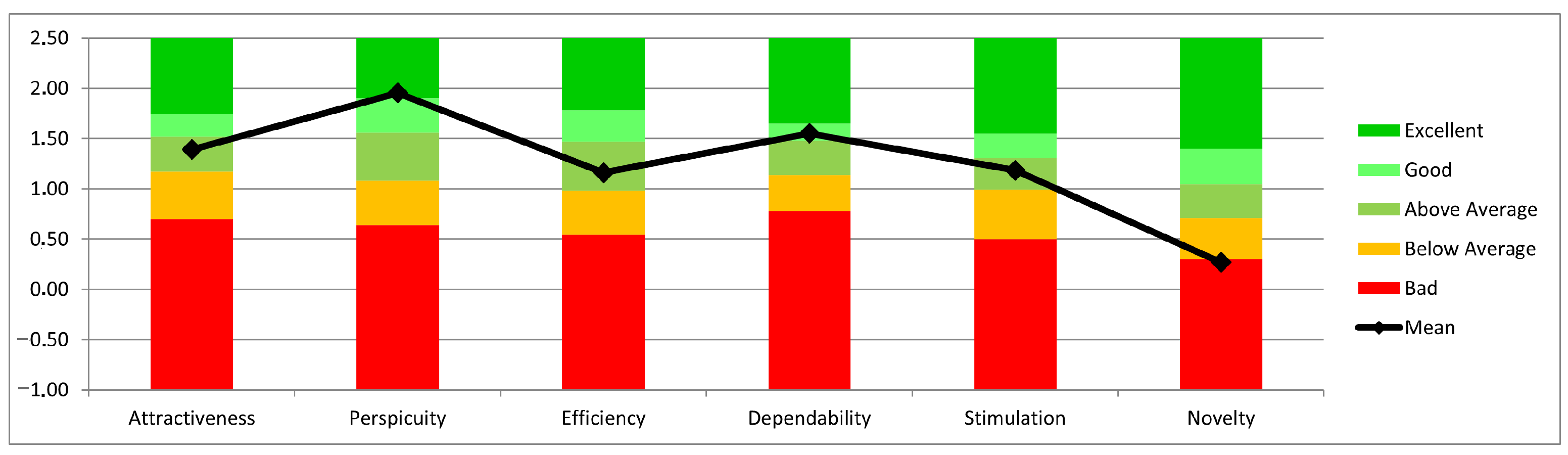

| Scale | Mean | Std. Dev. | N | Confidence | Confidence Interval (Lower) | Confidence Interval (Upper) |
|---|---|---|---|---|---|---|
| Attractiveness | 1.390 | 1.189 | 37 | 0.383 | 1.007 | 1.774 |
| Perspicuity | 1.953 | 1.046 | 37 | 0.337 | 1.616 | 2.290 |
| Efficiency | 1.162 | 1.061 | 37 | 0.342 | 0.820 | 1.504 |
| Dependability | 1.550 | 0.974 | 37 | 0.314 | 1.236 | 1.864 |
| Stimulation | 1.186 | 1.184 | 37 | 0.382 | 0.804 | 1.567 |
| Novelty | 0.270 | 1.126 | 37 | 0.363 | −0.093 | 0.632 |
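The Confidence column in the table above is consistent with the half-width of a 95% confidence interval under a normal approximation, 1.96 × SD / √N, with the interval bounds given by the mean minus and plus this half-width; for Attractiveness, for example, 1.96 × 1.189 / √37 ≈ 0.383. The short check below recomputes these values from the tabulated means and standard deviations. The factor 1.96 is an assumption inferred from the tabulated values rather than a method stated in this section, and because the inputs are rounded to three decimals, a recomputed bound can differ from the table in the last digit (e.g., 1.773 rather than 1.774 for the upper Attractiveness bound).

```python
import math

# Mean and standard deviation per UEQ scale, copied from the table (N = 37).
UEQ_SCALES = {
    "Attractiveness": (1.390, 1.189),
    "Perspicuity": (1.953, 1.046),
    "Efficiency": (1.162, 1.061),
    "Dependability": (1.550, 0.974),
    "Stimulation": (1.186, 1.184),
    "Novelty": (0.270, 1.126),
}
N = 37
Z_95 = 1.96  # assumed: two-sided 95% quantile of the standard normal distribution


def confidence_interval(mean: float, sd: float, n: int, z: float = Z_95):
    """Return (half_width, lower, upper) of a normal-approximation CI for the mean."""
    half_width = z * sd / math.sqrt(n)
    return half_width, mean - half_width, mean + half_width


if __name__ == "__main__":
    for scale, (mean, sd) in UEQ_SCALES.items():
        half, lower, upper = confidence_interval(mean, sd, N)
        print(f"{scale:<15} ±{half:.3f}  [{lower:.3f}, {upper:.3f}]")
```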

| Questions/Functions | Total Mentions |
|---|---|
| How are you doing healthwise? Has anything changed? Do you feel healthy and able?/Wellbeing question | 20 |
| Do you participate in any sports (if yes, how often)? | 15 |
| How did you obtain the measuring box? | 6 |
| Do you currently use assistive mobility devices? | 5 |
| Hand force measurement | 5 |
| Wobble plate for balance measurement | 5 |
| Comments/improvement suggestions after measurement | 4 |
| What would help you maintain your performance? | 4 |
| Automatic measurement of weight and height | 4 |
| Question about taking medication | 4 |
| Fatigue question | 3 |
| Has anything changed since the first question until today? | 3 |
| Relaxation exercise | 2 |
| Display data after use | 1 |
| Have you been ill between measurements? (If yes, do you wish to talk?) | 1 |
| How do you feel involved in your environment? | 1 |
| Balance measurement camera | 1 |
| Are you a smoker? | 1 |
| Do you drink alcohol? | 1 |
| Breathing measurement | 1 |

| Ideas | Total Mentions |
|---|---|
| Homepage/portal | 17 |
| | 16 |
| Printout on site (with comparison) | 12 |
| After each measurement, review of the results of the measurement (graphical display, statistics) | 9 |
| Personal conversation | 7 |
| Closing/information event | 6 |
| Group meeting with exchange on a specific topic | 3 |
| Feedback with reference average value of the respective age group | 2 |
| Personal contact after last measurement | 2 |
| Feedback directly from the doctor | 2 |
| Feedback via app | 1 |
| Written report | 1 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Fudickar, S.; Pauls, A.; Lau, S.; Hellmers, S.; Gebel, K.; Diekmann, R.; Bauer, J.M.; Hein, A.; Koppelin, F. Measurement System for Unsupervised Standardized Assessments of Timed Up and Go Test and 5 Times Chair Rise Test in Community Settings—A Usability Study. Sensors 2022, 22, 731. https://doi.org/10.3390/s22030731


Chicago/Turabian Style: Fudickar, Sebastian, Alexander Pauls, Sandra Lau, Sandra Hellmers, Konstantin Gebel, Rebecca Diekmann, Jürgen M. Bauer, Andreas Hein, and Frauke Koppelin. 2022. "Measurement System for Unsupervised Standardized Assessments of Timed Up and Go Test and 5 Times Chair Rise Test in Community Settings—A Usability Study" Sensors 22, no. 3: 731. https://doi.org/10.3390/s22030731
