A Review of Automated Bioacoustics and General Acoustics Classification Research

Abstract

1. Introduction

- RQ1: What are the main application areas?

- RQ2: What sound data processing and classification techniques are used?

- RQ3: How have the applications described in the studies been implemented?

- RQ4: To what extent have previously identified research problems been addressed by current studies?

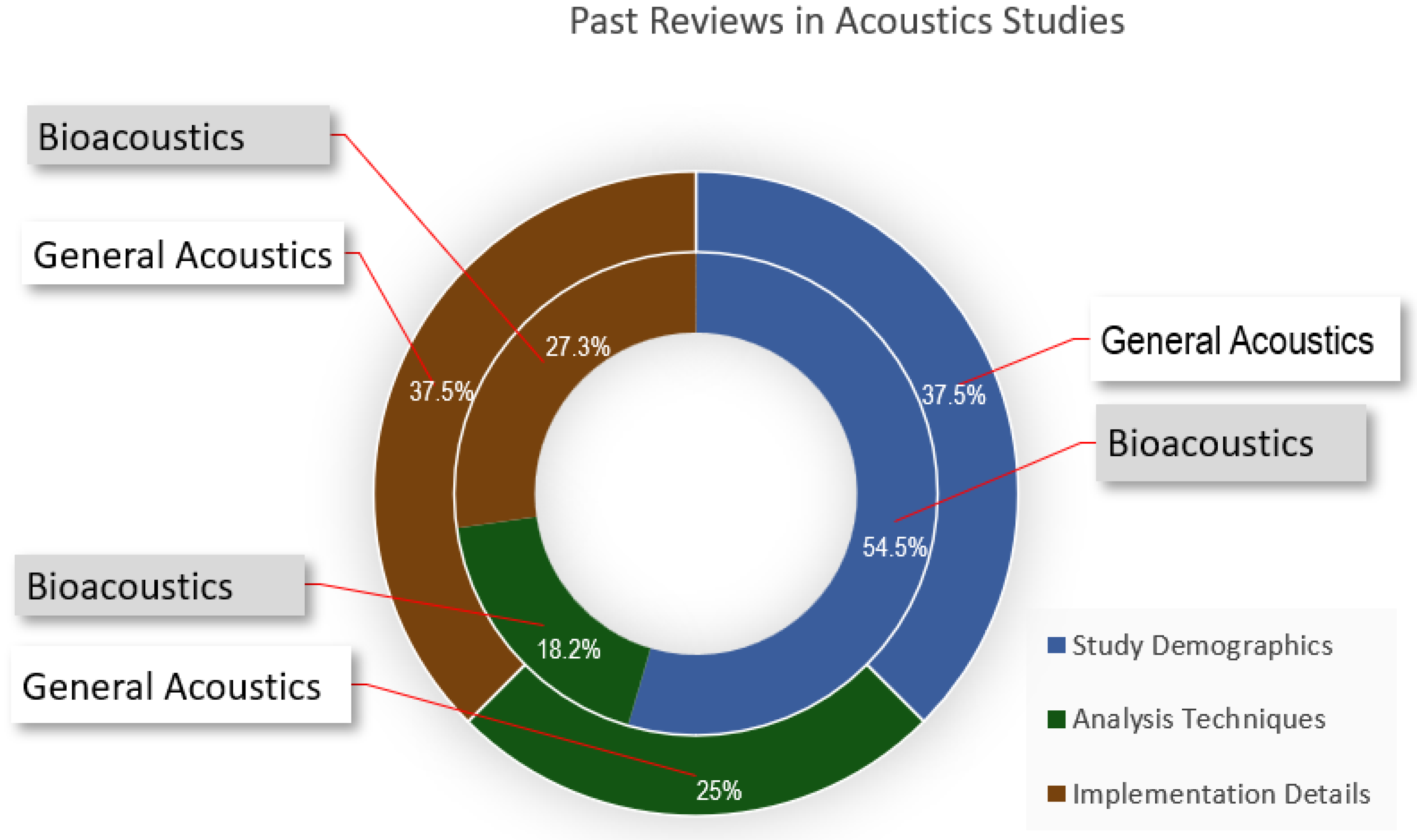

2. Related Work

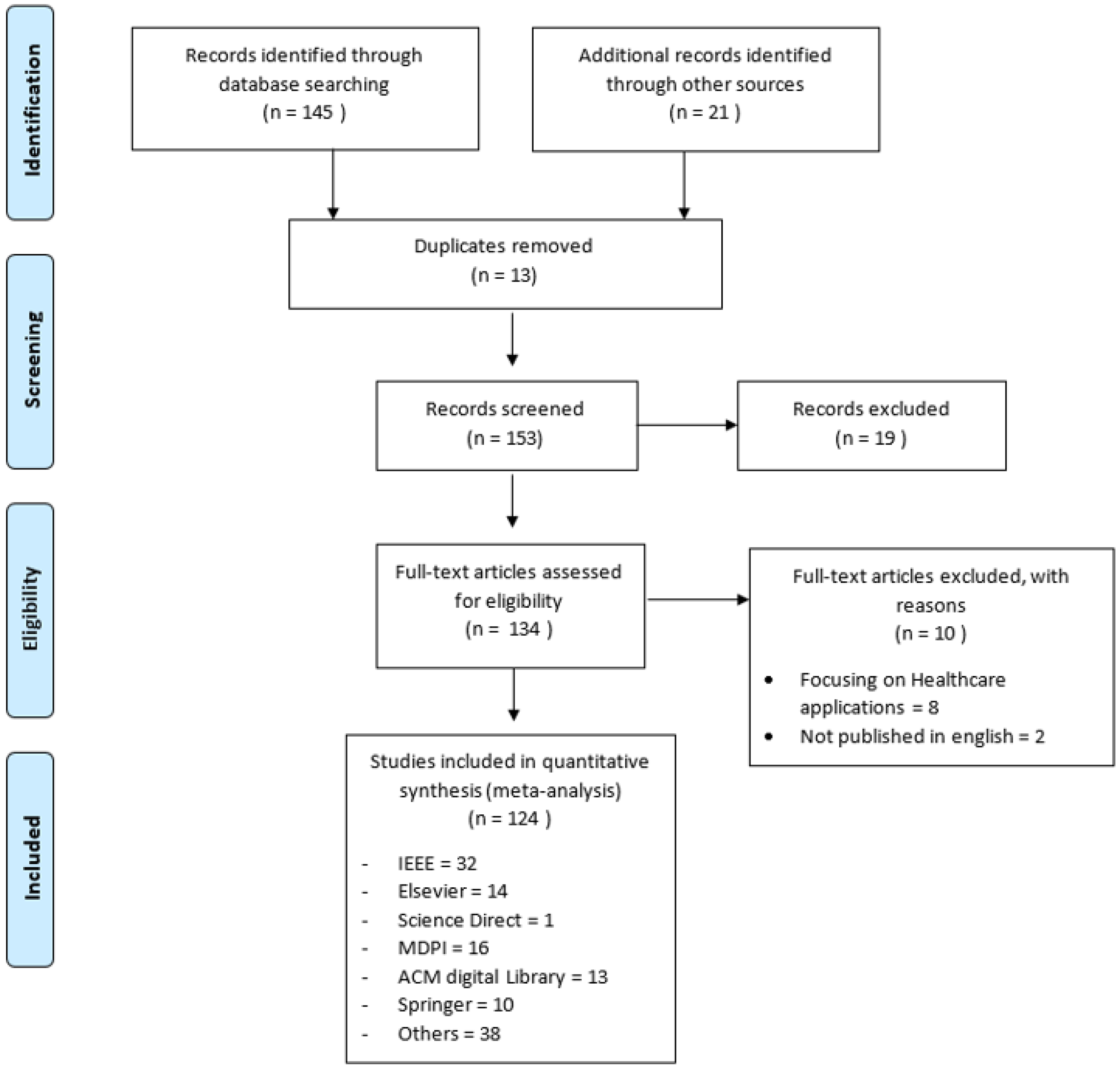

3. Methodology

3.1. Problem Formulation

3.1.1. Literature Search

3.1.2. Screening for Inclusion

3.1.3. Data Extraction

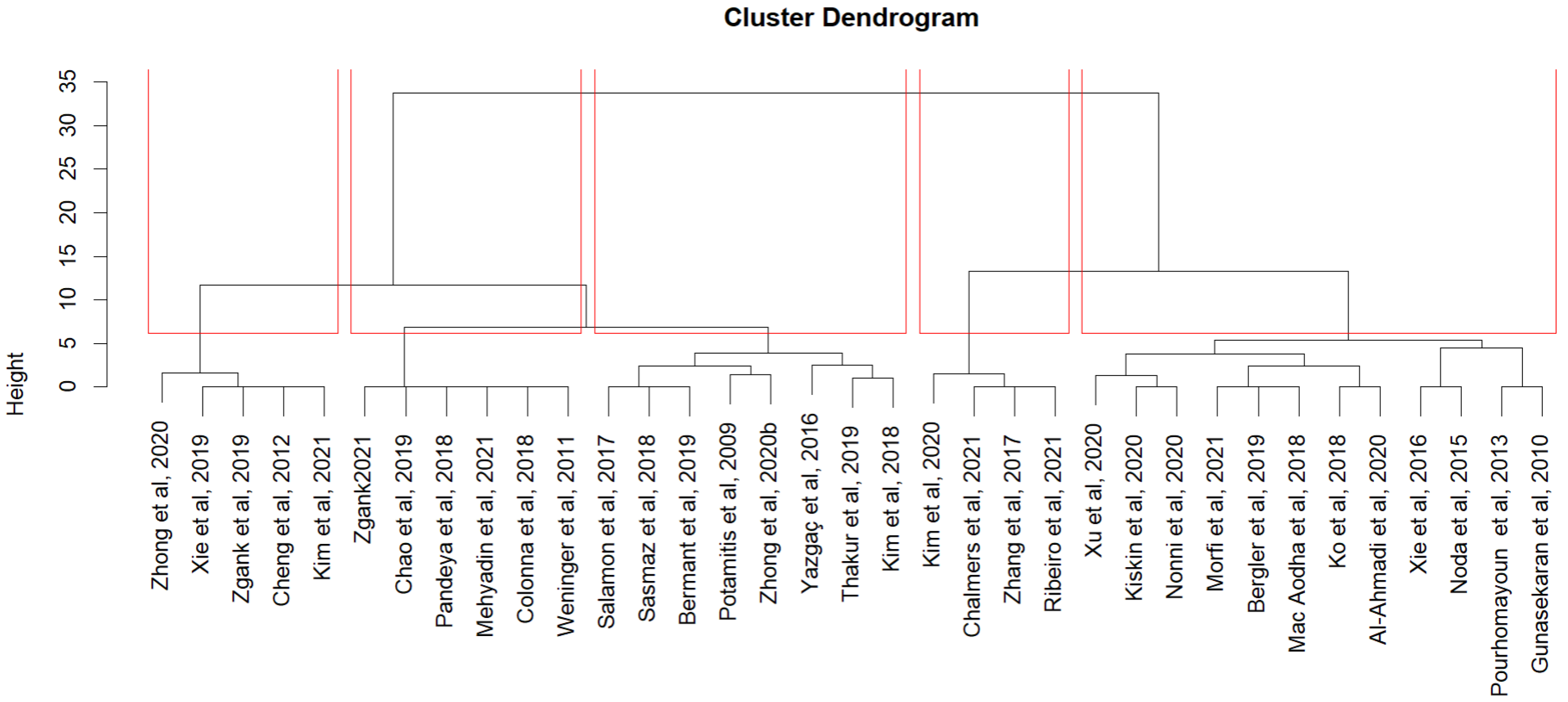

3.1.4. Data Analysis and Interpretation

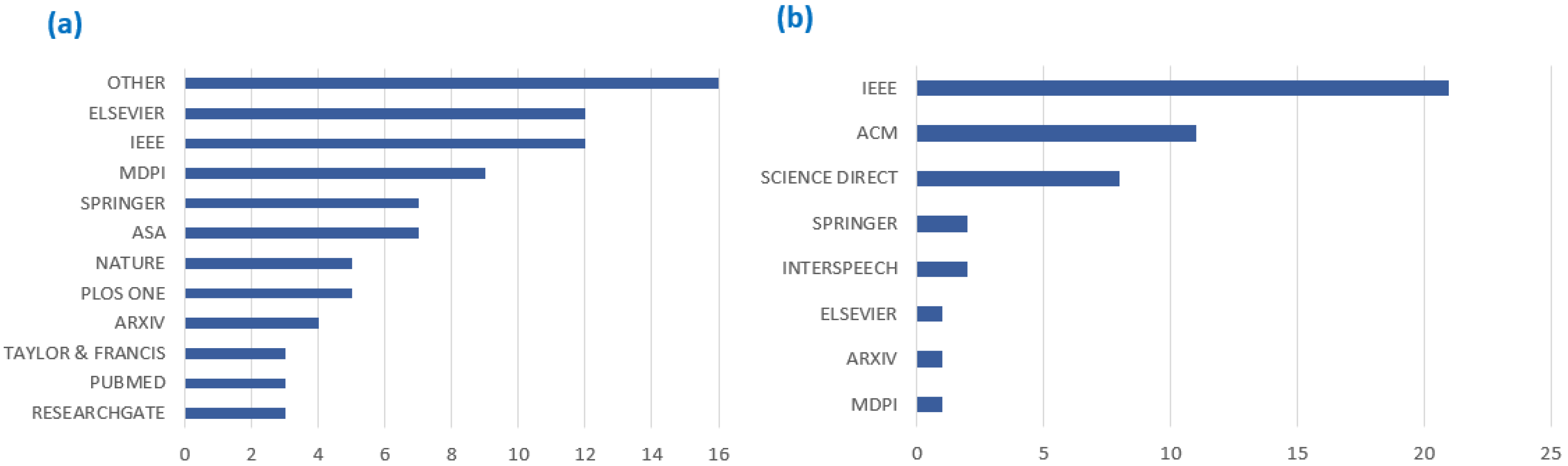

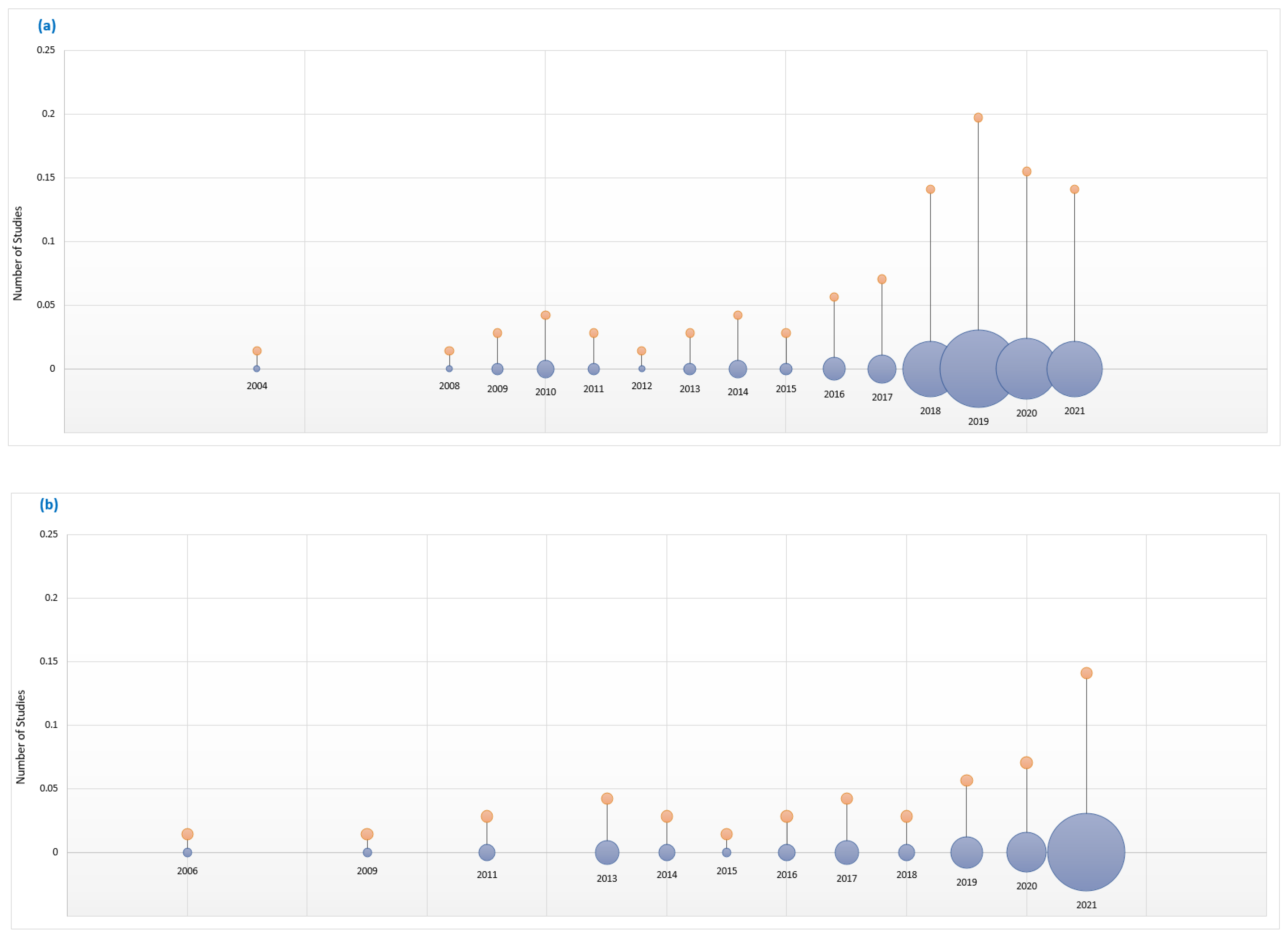

3.1.5. Publication Demographics

4. Results

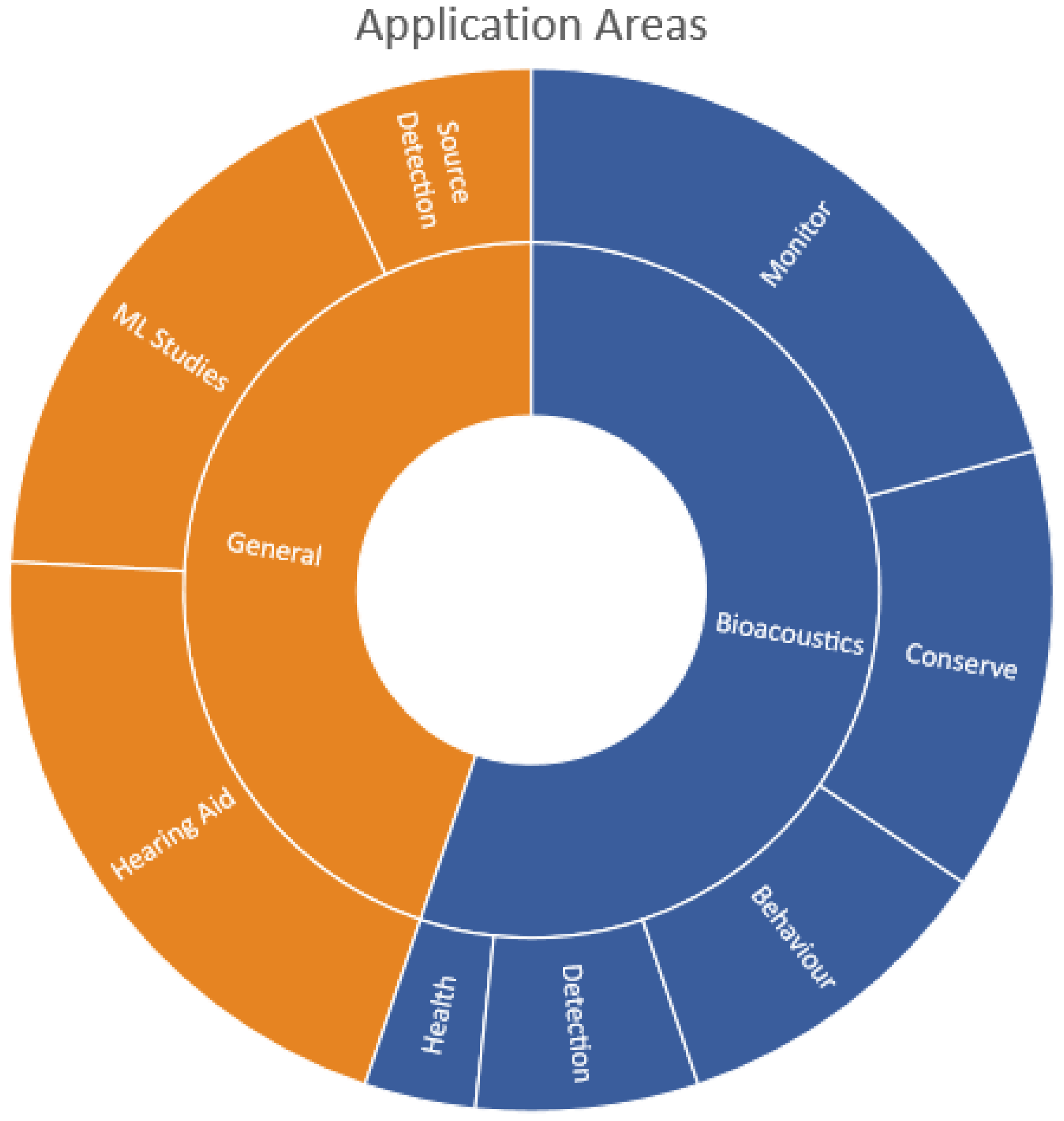

4.1. Application Areas

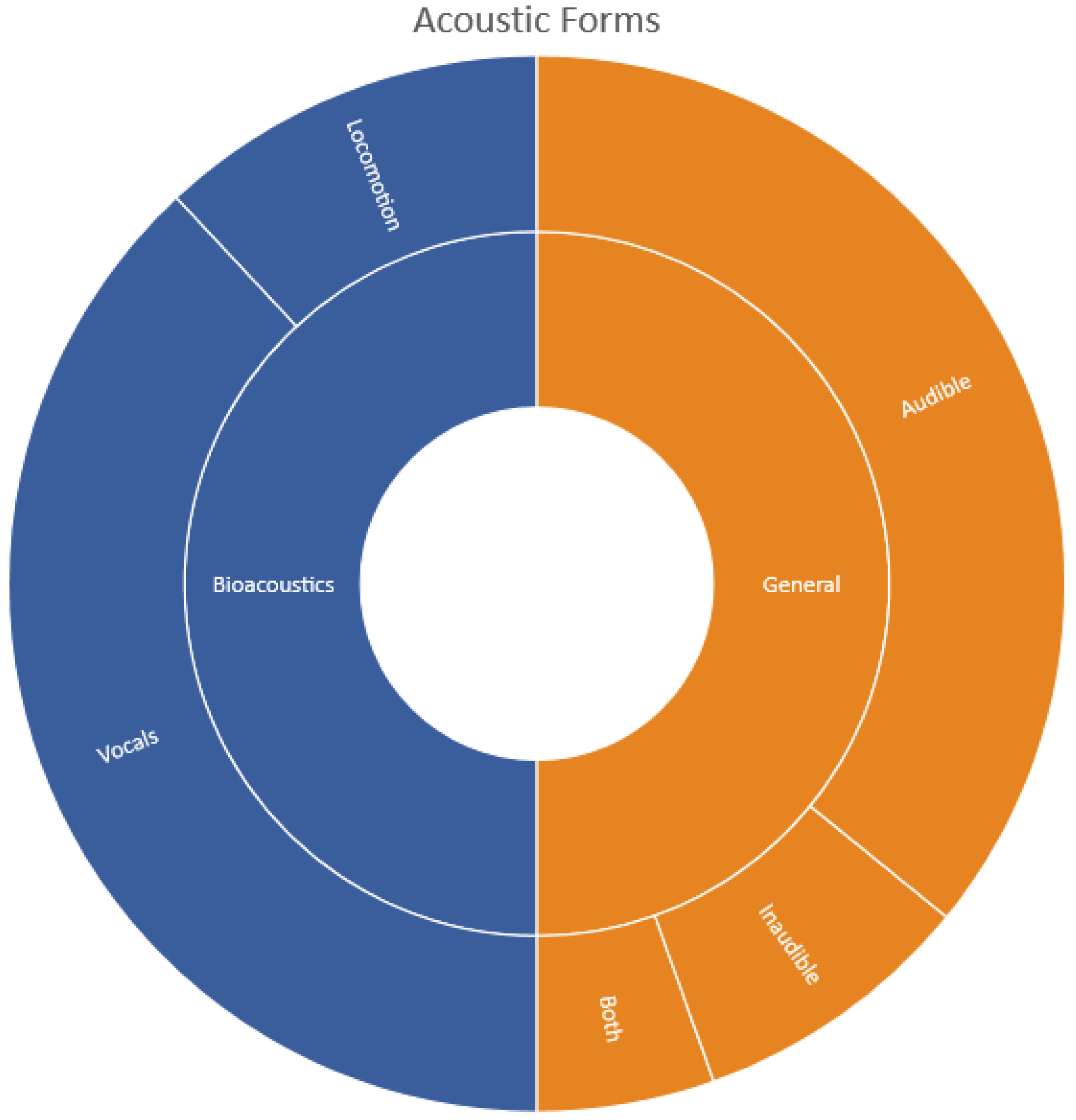

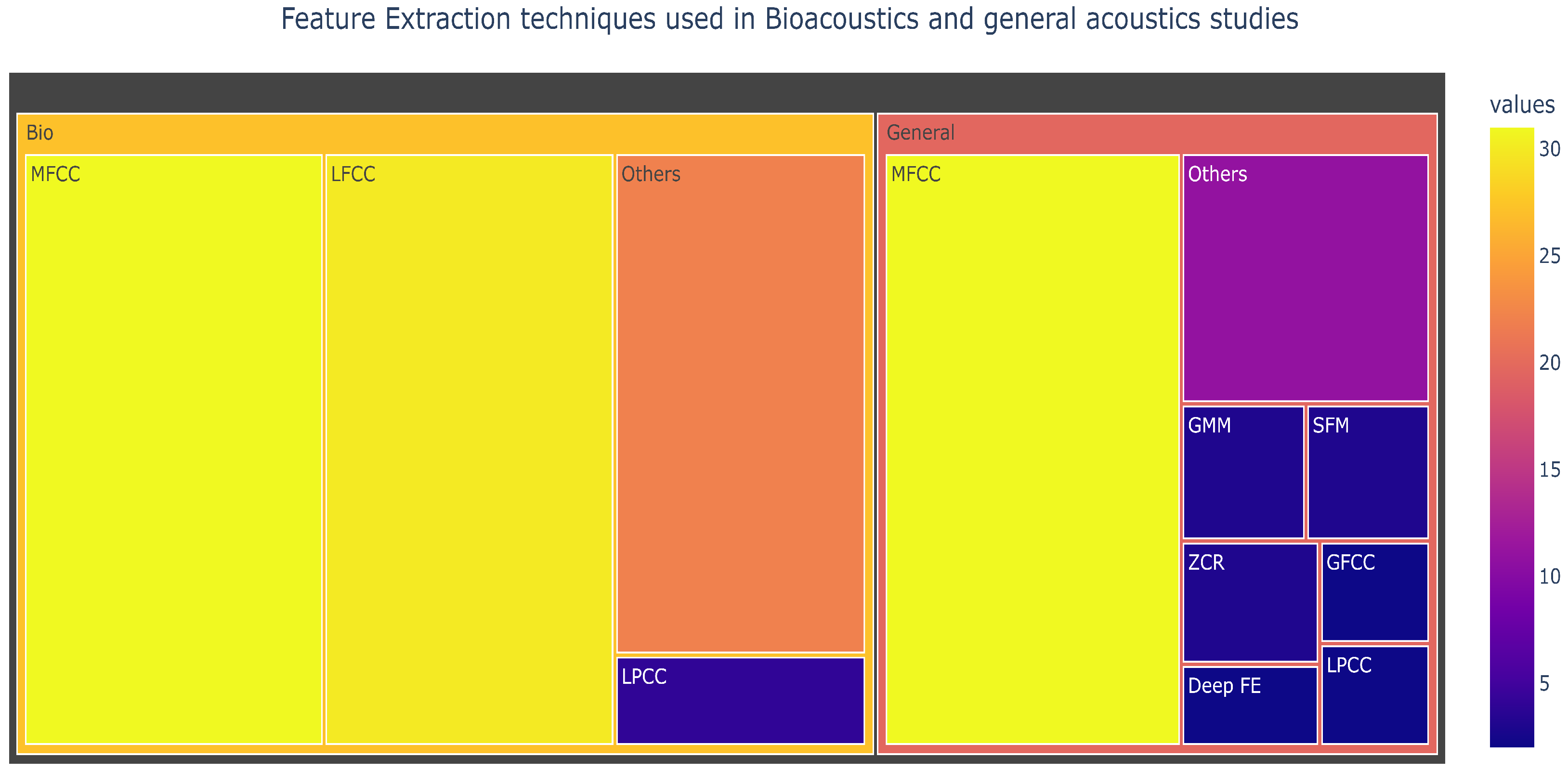

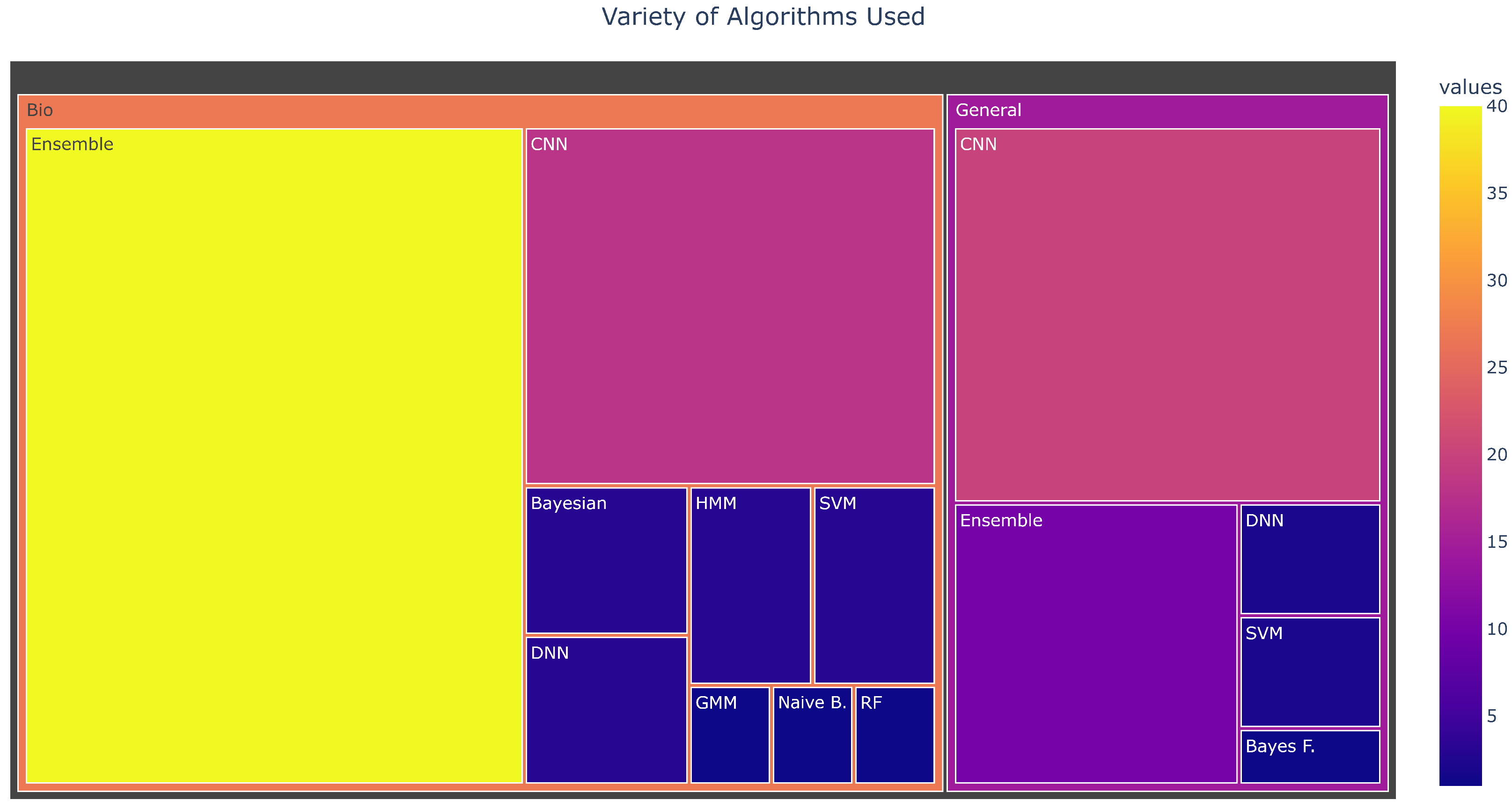

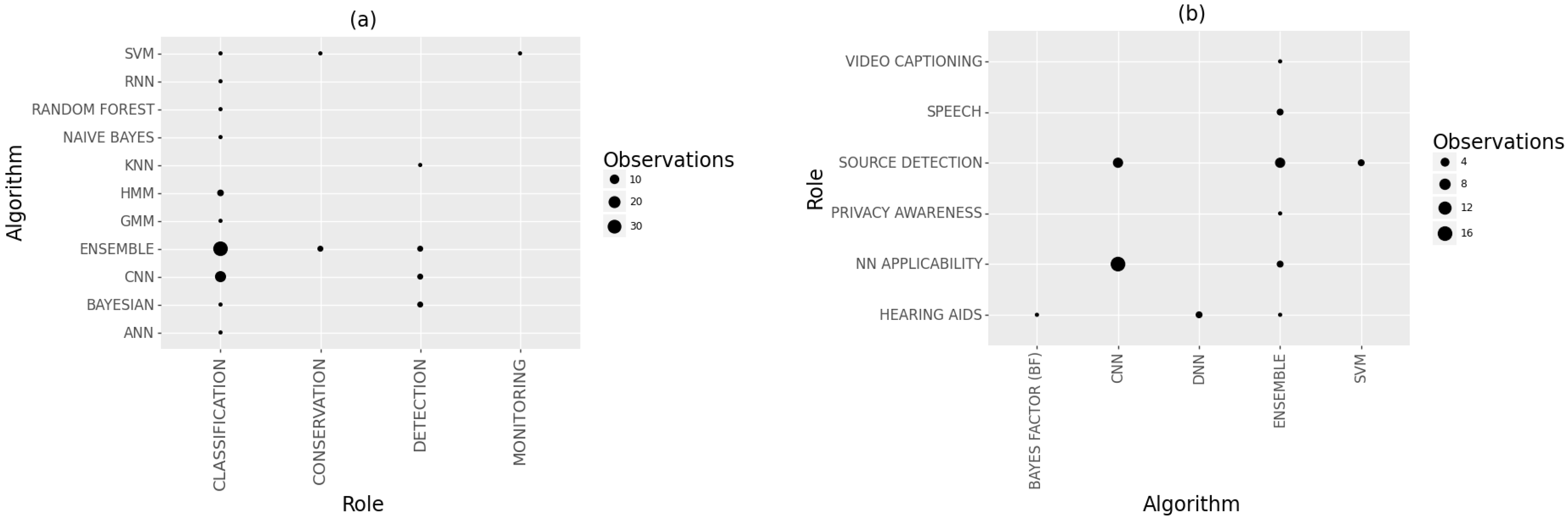

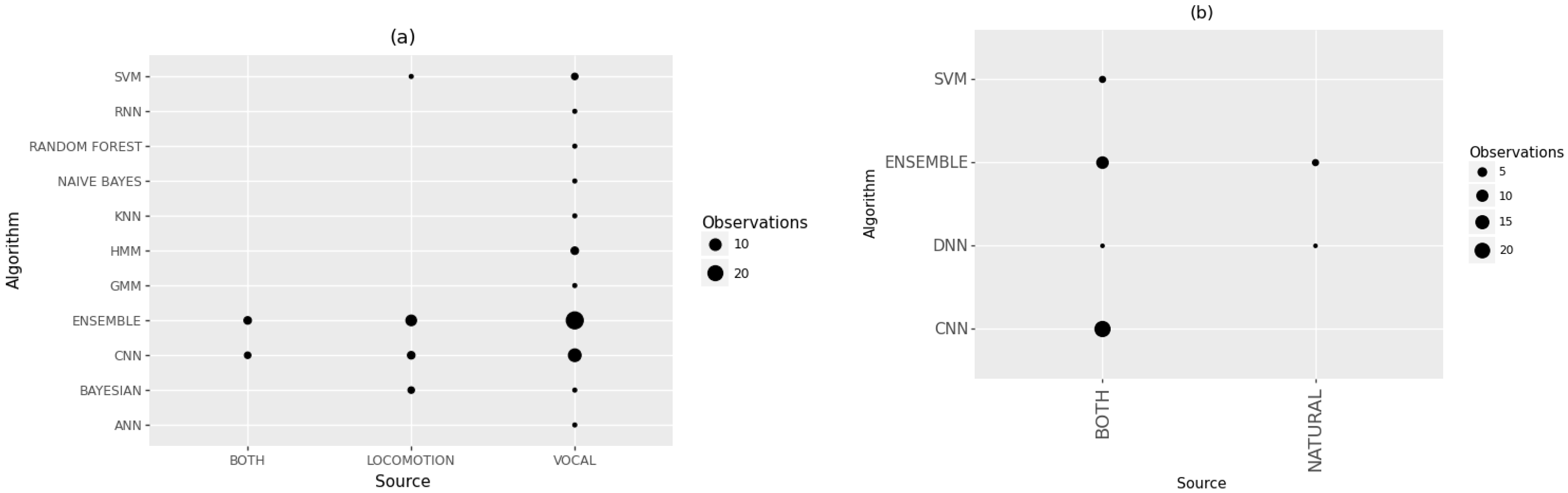

4.2. Techniques Used

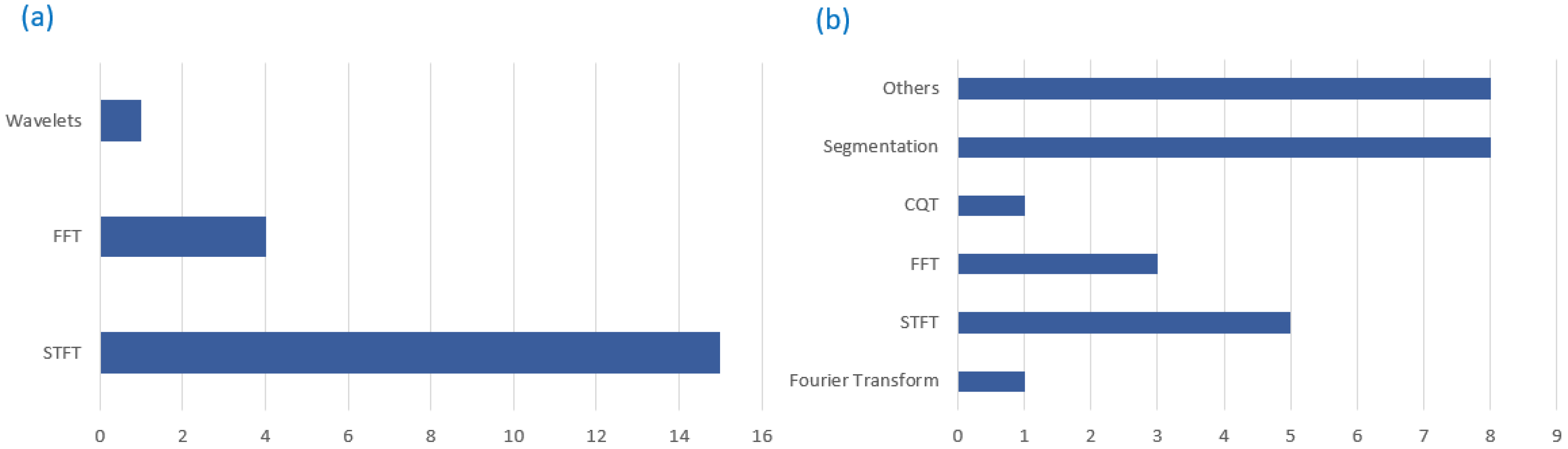

4.2.1. Data Preprocessing
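
As a hedged illustration of a typical preprocessing step in sound classification pipelines, the sketch below frames a signal into overlapping segments and tapers each frame with a Hann window. It then computes the log-magnitude spectrum of each frame, giving a spectrogram that can feed either hand-crafted feature extraction or a CNN. This is a generic NumPy sketch, not the procedure of any particular reviewed study. The frame length of 1024 samples, the hop of 512 samples, and the helper names `frame_signal` and `log_spectrogram` are assumptions chosen for illustration.

```python
import numpy as np

def frame_signal(x: np.ndarray, frame_len: int, hop: int) -> np.ndarray:
    """Split a 1-D signal into overlapping frames (shape: n_frames x frame_len)."""
    if len(x) < frame_len:
        # Zero-pad short clips so that at least one full frame exists.
        x = np.pad(x, (0, frame_len - len(x)))
    n_frames = 1 + (len(x) - frame_len) // hop
    # Row i holds samples [i*hop, i*hop + frame_len).
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    return x[idx]

def log_spectrogram(x, frame_len: int = 1024, hop: int = 512, eps: float = 1e-10) -> np.ndarray:
    """Log-magnitude spectrogram: frame, apply a Hann window, FFT each frame."""
    frames = frame_signal(np.asarray(x, dtype=float), frame_len, hop)
    window = np.hanning(frame_len)  # tapers frame edges to reduce spectral leakage
    spectrum = np.abs(np.fft.rfft(frames * window, axis=1))
    # eps avoids log(0) in silent bins; shape is n_frames x (frame_len // 2 + 1).
    return np.log(spectrum + eps)
```

For a 1 s, 1000 Hz tone sampled at 16 kHz, the defaults yield 30 frames of 513 frequency bins. The energy peak falls at bin 1000 × 1024 / 16000 = 64.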

4.2.2. Machine Learning Algorithms
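
As a minimal sketch of one classical classifier applied to acoustic features, the k-nearest-neighbour (kNN) rule below first standardizes features using training-set statistics. It then assigns each query the majority label among its k closest training vectors by Euclidean distance. The sketch assumes each recording has already been reduced to a fixed-length feature vector. The function name `knn_predict`, the default k = 3, and the labels in the example are illustrative assumptions, not taken from the reviewed studies.

```python
import numpy as np
from collections import Counter

def knn_predict(train_X, train_y, query_X, k: int = 3) -> list:
    """Predict a label for each query vector by majority vote of its k nearest neighbours."""
    train_X = np.asarray(train_X, dtype=float)
    query_X = np.asarray(query_X, dtype=float)
    # Z-score features with training statistics only, so that no feature
    # dominates the distance purely because of its numeric range.
    mu = train_X.mean(axis=0)
    sigma = train_X.std(axis=0) + 1e-12
    train_Z = (train_X - mu) / sigma
    query_Z = (query_X - mu) / sigma
    # Pairwise Euclidean distances: one row per query, one column per training vector.
    dists = np.linalg.norm(query_Z[:, None, :] - train_Z[None, :, :], axis=2)
    nearest = np.argsort(dists, axis=1)[:, :k]
    preds = []
    for row in nearest:
        votes = Counter(train_y[i] for i in row)
        preds.append(votes.most_common(1)[0][0])
    return preds
```

For example, suppose there are three synthetic "bird" vectors near (0, 0) and three synthetic "frog" vectors near (10, 10). Queries at (0.5, 0.5) and (10.5, 10.5) are then labelled "bird" and "frog", respectively.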

4.2.3. Overtones in Acoustic Techniques

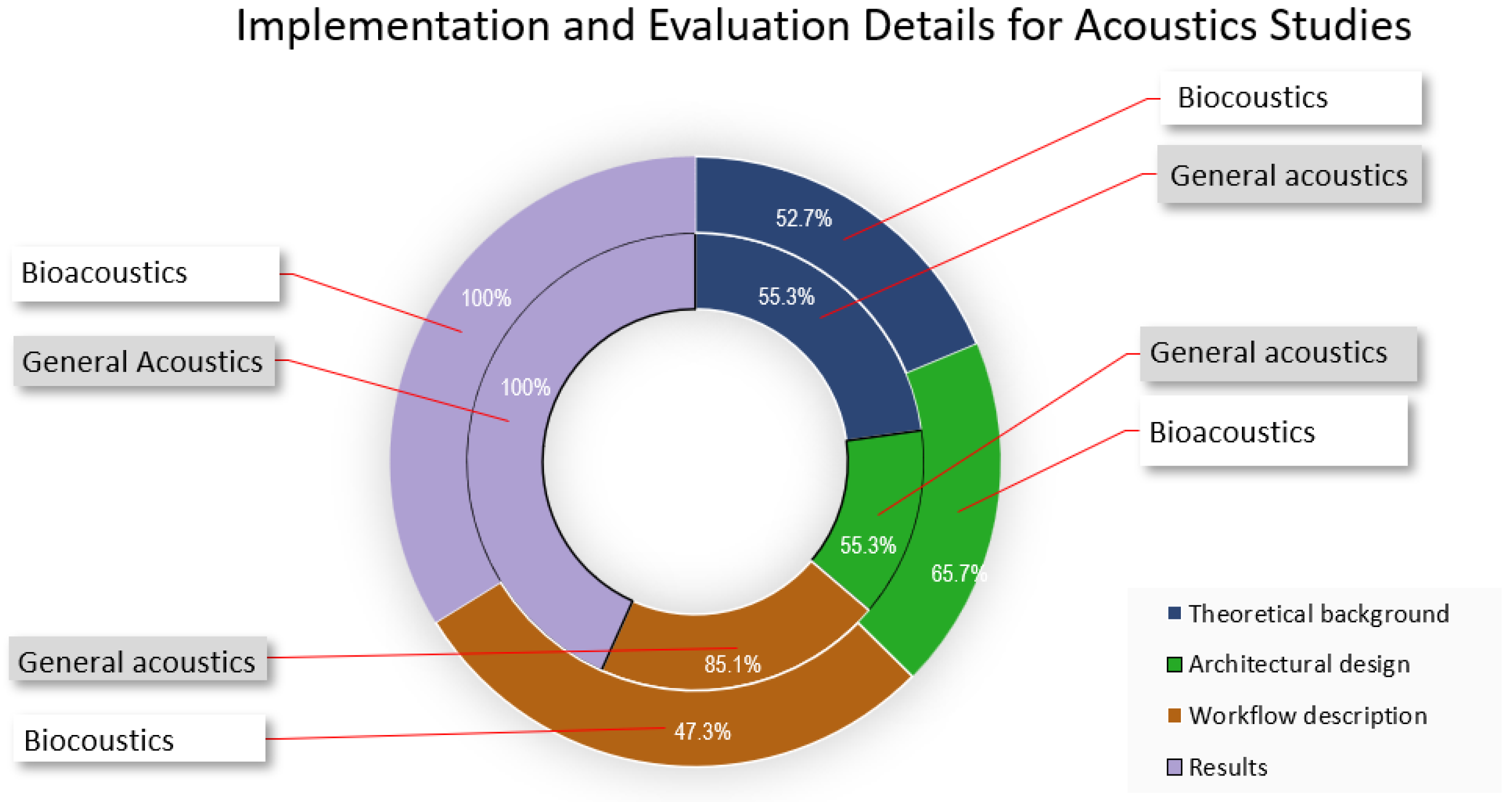

4.3. Implementation and Evaluation

5. Discussion and Open Questions

5.1. Acoustics

5.2. Dataset

5.3. Classification

5.4. Deployment

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Penar, W.; Magiera, A.; Klocek, C. Applications of bioacoustics in animal ecology. Ecol. Complex. 2020, 43, 100847. [Google Scholar] [CrossRef]

- Choi, Y.K.; Kim, K.M.; Jung, J.W.; Chun, S.Y.; Park, K.S. Acoustic intruder detection system for home security. IEEE Trans. Consum. Electron. 2005, 51, 130–138. [Google Scholar] [CrossRef]

- Shah, S.K.; Tariq, Z.; Lee, Y. Iot based urban noise monitoring in deep learning using historical reports. In Proceedings of the 2019 IEEE International Conference on Big Data (Big Data), Los Angeles, CA, USA, 9–12 December 2019; pp. 4179–4184. [Google Scholar]

- Vacher, M.; Serignat, J.F.; Chaillol, S.; Istrate, D.; Popescu, V. Speech and sound use in a remote monitoring system for health care. In International Conference on Text, Speech and Dialogue; Springer: Berlin/Heidelberg, Germany, 2006; pp. 711–718. [Google Scholar]

- Olivieri, M.; Malvermi, R.; Pezzoli, M.; Zanoni, M.; Gonzalez, S.; Antonacci, F.; Sarti, A. Audio information retrieval and musical acoustics. IEEE Instrum. Meas. Mag. 2021, 24, 10–20. [Google Scholar] [CrossRef]

- Schöner, M.G.; Simon, R.; Schöner, C.R. Acoustic communication in plant–animal interactions. Curr. Opin. Plant Biol. 2016, 32, 88–95. [Google Scholar] [CrossRef]

- Obrist, M.K.; Pavan, G.; Sueur, J.; Riede, K.; Llusia, D.; Márquez, R. Bioacoustics approaches in biodiversity inventories. Abc Taxa 2010, 8, 68–99. [Google Scholar]

- Chachada, S.; Kuo, C.C.J. Environmental sound recognition: A survey. APSIPA Trans. Signal Inf. Process. 2014, 3, 14015991. [Google Scholar] [CrossRef]

- Kvsn, R.R.; Montgomery, J.; Garg, S.; Charleston, M. Bioacoustics data analysis—A taxonomy, survey and open challenges. IEEE Access 2020, 8, 57684–57708. [Google Scholar]

- Mcloughlin, M.P.; Stewart, R.; McElligott, A.G. Automated bioacoustics: Methods in ecology and conservation and their potential for animal welfare monitoring. J. R. Soc. Interface 2019, 16, 20190225. [Google Scholar] [CrossRef]

- Walters, C.L.; Collen, A.; Lucas, T.; Mroz, K.; Sayer, C.A.; Jones, K.E. Challenges of using bioacoustics to globally monitor bats. In Bat Evolution, Ecology, and Conservation; Springer: New York, NY, USA, 2013; pp. 479–499. [Google Scholar]

- Xie, J.; Colonna, J.G.; Zhang, J. Bioacoustic signal denoising: A review. Artif. Intell. Rev. 2021, 54, 3575–3597. [Google Scholar] [CrossRef]

- Chen, W.; Sun, Q.; Chen, X.; Xie, G.; Wu, H.; Xu, C. Deep learning methods for heart sounds classification: A systematic review. Entropy 2021, 23, 667. [Google Scholar] [CrossRef]

- Potamitis, I.; Ganchev, T.; Kontodimas, D. On automatic bioacoustic detection of pests: The cases of Rhynchophorus ferrugineus and Sitophilus oryzae. J. Econ. Entomol. 2009, 102, 1681–1690. [Google Scholar] [CrossRef] [PubMed]

- Stowell, D.; Plumbley, M.D. Automatic large-scale classification of bird sounds is strongly improved by unsupervised feature learning. PeerJ 2014, 2, e488. [Google Scholar] [CrossRef] [PubMed]

- Bonet-Solà, D.; Alsina-Pagès, R.M. A comparative survey of feature extraction and machine learning methods in diverse acoustic environments. Sensors 2021, 21, 1274. [Google Scholar] [CrossRef]

- Lima, M.C.F.; de Almeida Leandro, M.E.D.; Valero, C.; Coronel, L.C.P.; Bazzo, C.O.G. Automatic detection and monitoring of insect pests—A review. Agriculture 2020, 10, 161. [Google Scholar] [CrossRef]

- Stowell, D.; Wood, M.; Stylianou, Y.; Glotin, H. Bird detection in audio: A survey and a challenge. In Proceedings of the 2016 IEEE 26th International Workshop on Machine Learning for Signal Processing (MLSP), Vietri sul Mare, Italy, 13–16 September 2016; pp. 1–6. [Google Scholar]

- Qian, K.; Janott, C.; Schmitt, M.; Zhang, Z.; Heiser, C.; Hemmert, W.; Yamamoto, Y.; Schuller, B.W. Can machine learning assist locating the excitation of snore sound? A review. IEEE J. Biomed. Health Inform. 2020, 25, 1233–1246. [Google Scholar] [CrossRef]

- Bhattacharya, S.; Das, N.; Sahu, S.; Mondal, A.; Borah, S. Deep classification of sound: A concise review. In Proceeding of First Doctoral Symposium on Natural Computing Research; Springer: Singapore, 2021; pp. 33–43. [Google Scholar]

- Bencharif, B.A.E.; Ölçer, I.; Özkan, E.; Cesur, B. Detection of acoustic signals from Distributed Acoustic Sensor data with Random Matrix Theory and their classification using Machine Learning. In SPIE Future Sensing Technologies; SPIE: Anaheim, CA, USA, 2020; Volume 11525, pp. 389–395. [Google Scholar]

- Sharma, G.; Umapathy, K.; Krishnan, S. Trends in audio signal feature extraction methods. Appl. Acoust. 2020, 158, 107020. [Google Scholar] [CrossRef]

- Piczak, K.J. ESC: Dataset for environmental sound classification. In Proceedings of the 23rd ACM International Conference on Multimedia, Brisbane, Australia, 13 October 2015; pp. 1015–1018. [Google Scholar]

- Gharib, S.; Derrar, H.; Niizumi, D.; Senttula, T.; Tommola, J.; Heittola, T.; Virtanen, T.; Huttunen, H. Acoustic scene classification: A competition review. In Proceedings of the 2018 IEEE 28th International Workshop on Machine Learning for Signal Processing (MLSP), Aalborg, Denmark, 17–20 September 2018; pp. 1–6. [Google Scholar]

- Schryen, G.; Wagner, G.; Benlian, A.; Paré, G. A knowledge development perspective on literature reviews: Validation of a new typology in the IS field. Commun. AIS 2020, 46, 134–186. [Google Scholar] [CrossRef]

- Templier, M.; Pare, G. Transparency in literature reviews: An assessment of reporting practices across review types and genres in top IS journals. Eur. J. Inf. Syst. 2018, 27, 503–550. [Google Scholar] [CrossRef]

- Moher, D.; Shamseer, L.; Clarke, M.; Ghersi, D.; Liberati, A.; Petticrew, M.; Shekelle, P.; Stewart, L.A. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Syst. Rev. 2015, 4, 1. [Google Scholar] [CrossRef]

- Barber, J.R.; Plotkin, D.; Rubin, J.J.; Homziak, N.T.; Leavell, B.C.; Houlihan, P.R.; Miner, K.A.; Breinholt, J.W.; Quirk-Royal, B.; Padrón, P.S.; et al. Anti-bat ultrasound production in moths is globally and phylogenetically widespread. Proc. Natl. Acad. Sci. USA 2022, 119, e2117485119. [Google Scholar] [CrossRef]

- Bahuleyan, H. Music genre classification using machine learning techniques. arXiv 2018, arXiv:1804.01149. [Google Scholar]

- Sim, J.Y.; Noh, H.W.; Goo, W.; Kim, N.; Chae, S.H.; Ahn, C.G. Identity recognition based on bioacoustics of human body. IEEE Trans. Cybern. 2019, 51, 2761–2772. [Google Scholar] [CrossRef] [PubMed]

- Bisgin, H.; Bera, T.; Ding, H.; Semey, H.G.; Wu, L.; Liu, Z.; Barnes, A.E.; Langley, D.A.; Pava-Ripoll, M.; Vyas, H.J.; et al. Comparing SVM and ANN based machine learning methods for species identification of food contaminating beetles. Sci. Rep. 2018, 8, 6532. [Google Scholar] [CrossRef] [PubMed]

- Høye, T.T.; Ärje, J.; Bjerge, K.; Hansen, O.L.; Iosifidis, A.; Leese, F.; Mann, H.M.; Meissner, K.; Melvad, C.; Raitoharju, J. Deep learning and computer vision will transform entomology. Proc. Natl. Acad. Sci. USA 2021, 118, e2002545117. [Google Scholar] [CrossRef] [PubMed]

- Shankar, K.; Perumal, E.; Vidhyavathi, R. Deep neural network with moth search optimization algorithm based detection and classification of diabetic retinopathy images. SN Appl. Sci. 2020, 2, 748. [Google Scholar] [CrossRef]

- Bjerge, K.; Nielsen, J.B.; Sepstrup, M.V.; Helsing-Nielsen, F.; Høye, T.T. An automated light trap to monitor moths (Lepidoptera) using computer vision-based tracking and deep learning. Sensors 2021, 21, 343. [Google Scholar] [CrossRef]

- Valletta, J.J.; Torney, C.; Kings, M.; Thornton, A.; Madden, J. Applications of machine learning in animal behaviour studies. Anim. Behav. 2017, 124, 203–220. [Google Scholar] [CrossRef]

- Feng, L.; Bhanu, B.; Heraty, J. A software system for automated identification and retrieval of moth images based on wing attributes. Pattern Recognit. 2016, 51, 225–241. [Google Scholar] [CrossRef]

- Vasconcelos, D.; Nunes, N.J.; Gomes, J. An annotated dataset of bioacoustic sensing and features of mosquitoes. Sci. Data 2020, 7, 382. [Google Scholar] [CrossRef]

- Mayo, M.; Watson, A.T. Automatic species identification of live moths. Knowl.-Based Syst. 2007, 20, 195–202. [Google Scholar] [CrossRef]

- Cheng, J.; Xie, B.; Lin, C.; Ji, L. A comparative study in birds: Call-type-independent species and individual recognition using four machine-learning methods and two acoustic features. Bioacoustics 2012, 21, 157–171. [Google Scholar] [CrossRef]

- Colonna, J.G.; Gama, J.; Nakamura, E.F. A comparison of hierarchical multi-output recognition approaches for anuran classification. Mach. Learn. 2018, 107, 1651–1671. [Google Scholar] [CrossRef]

- Xu, W.; Zhang, X.; Yao, L.; Xue, W.; Wei, B. A multi-view CNN-based acoustic classification system for automatic animal species identification. Ad. Hoc Netw. 2020, 102, 102115. [Google Scholar] [CrossRef]

- Gan, H.; Zhang, J.; Towsey, M.; Truskinger, A.; Stark, D.; Van Rensburg, B.J.; Li, Y.; Roe, P. A novel frog chorusing recognition method with acoustic indices and machine learning. Future Gener. Comput. Syst. 2021, 125, 485–495. [Google Scholar] [CrossRef]

- Xie, J.; Towsey, M.; Zhang, J.; Roe, P. Acoustic classification of Australian frogs based on enhanced features and machine learning algorithms. Appl. Acoust. 2016, 113, 193–201. [Google Scholar] [CrossRef]

- Kim, J.; Oh, J.; Heo, T.Y. Acoustic scene classification and visualization of beehive sounds using machine learning algorithms and grad-CAM. Math. Probl. Eng. 2021, 2021, 5594498. [Google Scholar] [CrossRef]

- Kirkeby, C.; Rydhmer, K.; Cook, S.M.; Strand, A.; Torrance, M.T.; Swain, J.L.; Prangsma, J.; Johnen, A.; Jensen, M.; Brydegaard, M.; et al. Advances in automatic identification of flying insects using optical sensors and machine learning. Sci. Rep. 2021, 11, 1555. [Google Scholar] [CrossRef]

- Tacioli, L.; Toledo, L.; Medeiros, C. An architecture for animal sound identification based on multiple feature extraction and classification algorithms. In Anais do XI Brazilian e-Science Workshop; Sociedade Brasileira de Computação: Porto Alegre, Brazil, 2017; pp. 29–36. [Google Scholar]

- Bergler, C.; Schröter, H.; Cheng, R.X.; Barth, V.; Weber, M.; Nöth, E.; Hofer, H.; Maier, A. ORCA-SPOT: An automatic killer whale sound detection toolkit using deep learning. Sci. Rep. 2019, 9, 10997. [Google Scholar] [CrossRef]

- Zhang, L.; Saleh, I.; Thapaliya, S.; Louie, J.; Figueroa-Hernandez, J.; Ji, H. An empirical evaluation of machine learning approaches for species identification through bioacoustics. In Proceedings of the 2017 International Conference on Computational Science and Computational Intelligence (CSCI), Las Vegas, NV, USA, 14–16 December 2017; pp. 489–494. [Google Scholar]

- Şaşmaz, E.; Tek, F.B. Animal sound classification using a convolutional neural network. In Proceedings of the 2018 3rd International Conference on Computer Science and Engineering (UBMK), Sarajevo, Bosnia and Herzegovina, 20–23 September 2018; pp. 625–629. [Google Scholar]

- Nanni, L.; Brahnam, S.; Lumini, A.; Maguolo, G. Animal sound classification using dissimilarity spaces. Appl. Sci. 2020, 10, 8578. [Google Scholar] [CrossRef]

- Romero, J.; Luque, A.; Carrasco, A. Animal Sound Classification using Sequential Classifiers. In Proceedings of the BIOSIGNALS, 21–23 February 2011; ScitePress Digital Library, 2017; pp. 242–247. [Google Scholar]

- Kim, C.I.; Cho, Y.; Jung, S.; Rew, J.; Hwang, E. Animal sounds classification scheme based on multi-feature network with mixed datasets. KSII Trans. Internet Inf. Syst. (TIIS) 2020, 14, 3384–3398. [Google Scholar]

- Weninger, F.; Schuller, B. Audio recognition in the wild: Static and dynamic classification on a real-world database of animal vocalizations. In Proceedings of the 2011 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Prague, Czech Republic, 22–27 May 2011; pp. 337–340. [Google Scholar]

- Chesmore, E.; Ohya, E. Automated identification of field-recorded songs of four British grasshoppers using bioacoustic signal recognition. Bull. Entomol. Res. 2004, 94, 319–330. [Google Scholar] [CrossRef]

- Mac Aodha, O.; Gibb, R.; Barlow, K.E.; Browning, E.; Firman, M.; Freeman, R.; Harder, B.; Kinsey, L.; Mead, G.R.; Newson, S.E.; et al. Bat detective—Deep learning tools for bat acoustic signal detection. PLoS Comput. Biol. 2018, 14, e1005995. [Google Scholar] [CrossRef] [PubMed]

- Zgank, A. Bee swarm activity acoustic classification for an IoT-based farm service. Sensors 2019, 20, 21. [Google Scholar] [CrossRef] [PubMed]

- Zhong, M.; Castellote, M.; Dodhia, R.; Lavista Ferres, J.; Keogh, M.; Brewer, A. Beluga whale acoustic signal classification using deep learning neural network models. J. Acoust. Soc. Am. 2020, 147, 1834–1841. [Google Scholar] [CrossRef] [PubMed]

- Bravo Sanchez, F.J.; Hossain, M.R.; English, N.B.; Moore, S.T. Bioacoustic classification of avian calls from raw sound waveforms with an open-source deep learning architecture. Sci. Rep. 2021, 11, 15733. [Google Scholar] [CrossRef] [PubMed]

- Kiskin, I.; Zilli, D.; Li, Y.; Sinka, M.; Willis, K.; Roberts, S. Bioacoustic detection with wavelet-conditioned convolutional neural networks. Neural Comput. Appl. 2020, 32, 915–927. [Google Scholar] [CrossRef]

- Pourhomayoun, M.; Dugan, P.; Popescu, M.; Clark, C. Bioacoustic signal classification based on continuous region processing, grid masking and artificial neural network. arXiv 2013, arXiv:1305.3635. [Google Scholar]

- Mehyadin, A.E.; Abdulazeez, A.M.; Hasan, D.A.; Saeed, J.N. Birds sound classification based on machine learning algorithms. Asian J. Res. Comput. Sci. 2021, 9, 68530. [Google Scholar] [CrossRef]

- Arzar, N.N.K.; Sabri, N.; Johari, N.F.M.; Shari, A.A.; Noordin, M.R.M.; Ibrahim, S. Butterfly species identification using convolutional neural network (CNN). In Proceedings of the 2019 IEEE International Conference on Automatic Control and Intelligent Systems (I2CACIS), Selangor, Malaysia, 29 June 2019; pp. 221–224. [Google Scholar]

- Shamir, L.; Yerby, C.; Simpson, R.; von Benda-Beckmann, A.M.; Tyack, P.; Samarra, F.; Miller, P.; Wallin, J. Classification of large acoustic datasets using machine learning and crowdsourcing: Application to whale calls. J. Acoust. Soc. Am. 2014, 135, 953–962. [Google Scholar] [CrossRef]

- Molnár, C.; Kaplan, F.; Roy, P.; Pachet, F.; Pongrácz, P.; Dóka, A.; Miklósi, Á. Classification of dog barks: A machine learning approach. Anim. Cogn. 2008, 11, 389–400. [Google Scholar] [CrossRef]

- Gunasekaran, S.; Revathy, K. Content-based classification and retrieval of wild animal sounds using feature selection algorithm. In Proceedings of the 2010 Second International Conference on Machine Learning and Computing, Bangalore, India, 9–11 February 2010; pp. 272–275. [Google Scholar]

- Nanni, L.; Maguolo, G.; Paci, M. Data augmentation approaches for improving animal audio classification. Ecol. Inform. 2020, 57, 101084. [Google Scholar] [CrossRef]

- Ko, K.; Park, S.; Ko, H. Convolutional feature vectors and support vector machine for animal sound classification. In Proceedings of the 2018 40th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Honolulu, HI, USA, 18–21 July 2018; pp. 376–379. [Google Scholar]

- Bermant, P.C.; Bronstein, M.M.; Wood, R.J.; Gero, S.; Gruber, D.F. Deep machine learning techniques for the detection and classification of sperm whale bioacoustics. Sci. Rep. 2019, 9, 12588. [Google Scholar] [CrossRef] [PubMed]

- Thakur, A.; Thapar, D.; Rajan, P.; Nigam, A. Deep metric learning for bioacoustic classification: Overcoming training data scarcity using dynamic triplet loss. J. Acoust. Soc. Am. 2019, 146, 534–547. [Google Scholar] [CrossRef] [PubMed]

- Morfi, V.; Lachlan, R.F.; Stowell, D. Deep perceptual embeddings for unlabelled animal sound events. J. Acoust. Soc. Am. 2021, 150, 2–11. [Google Scholar] [CrossRef]

- Bardeli, R.; Wolff, D.; Kurth, F.; Koch, M.; Tauchert, K.H.; Frommolt, K.H. Detecting bird sounds in a complex acoustic environment and application to bioacoustic monitoring. Pattern Recognit. Lett. 2010, 31, 1524–1534. [Google Scholar] [CrossRef]

- Eliopoulos, P.; Potamitis, I.; Kontodimas, D.C.; Givropoulou, E. Detection of adult beetles inside the stored wheat mass based on their acoustic emissions. J. Econ. Entomol. 2015, 108, 2808–2814. [Google Scholar] [CrossRef]

- Kim, T.; Kim, J.W.; Lee, K. Detection of sleep disordered breathing severity using acoustic biomarker and machine learning techniques. Biomed. Eng. Online 2018, 17, 16. [Google Scholar] [CrossRef]

- Yazgaç, B.G.; Kırcı, M.; Kıvan, M. Detection of sunn pests using sound signal processing methods. In Proceedings of the 2016 Fifth International Conference on Agro-Geoinformatics (Agro-Geoinformatics), Tianjin, China, 18–20 July 2016; pp. 1–6. [Google Scholar]

- Pandeya, Y.R.; Kim, D.; Lee, J. Domestic cat sound classification using learned features from deep neural nets. Appl. Sci. 2018, 8, 1949. [Google Scholar] [CrossRef]

- Al-Ahmadi, S. Energy efficient animal sound recognition scheme in wireless acoustic sensors networks. Int. J. Wirel. Mob. Netw. (IJWMN) 2020, 12, 31–38. [Google Scholar]

- Huang, C.J.; Yang, Y.J.; Yang, D.X.; Chen, Y.J. Frog classification using machine learning techniques. Expert Syst. Appl. 2009, 36, 3737–3743. [Google Scholar] [CrossRef]

- Salamon, J.; Bello, J.P.; Farnsworth, A.; Kelling, S. Fusing shallow and deep learning for bioacoustic bird species classification. In Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, LA, USA, 5–9 March 2017; pp. 141–145. [Google Scholar]

- Xie, J.; Zhu, M. Handcrafted features and late fusion with deep learning for bird sound classification. Ecol. Inform. 2019, 52, 74–81. [Google Scholar] [CrossRef]

- Chao, K.W.; Hu, N.Z.; Chao, Y.C.; Su, C.K.; Chiu, W.H. Implementation of artificial intelligence for classification of frogs in bioacoustics. Symmetry 2019, 11, 1454. [Google Scholar] [CrossRef]

- Zgank, A. IoT-based bee swarm activity acoustic classification using deep neural networks. Sensors 2021, 21, 676. [Google Scholar] [CrossRef] [PubMed]

- Ribeiro, A.P.; da Silva, N.F.F.; Mesquita, F.N.; Araújo, P.d.C.S.; Rosa, T.C.; Mesquita-Neto, J.N. Machine learning approach for automatic recognition of tomato-pollinating bees based on their buzzing-sounds. PLoS Comput. Biol. 2021, 17, e1009426. [Google Scholar] [CrossRef]

- Noda, J.J.; Travieso, C.M.; Sanchez-Rodriguez, D. Methodology for automatic bioacoustic classification of anurans based on feature fusion. Expert Syst. Appl. 2016, 50, 100–106. [Google Scholar] [CrossRef]

- Chalmers, C.; Fergus, P.; Wich, S.; Longmore, S. Modelling Animal Biodiversity Using Acoustic Monitoring and Deep Learning. In Proceedings of the 2021 International Joint Conference on Neural Networks (IJCNN), Shenzhen, China, 18–22 July 2021; pp. 1–7. [Google Scholar]

- Caruso, F.; Dong, L.; Lin, M.; Liu, M.; Gong, Z.; Xu, W.; Alonge, G.; Li, S. Monitoring of a nearshore small dolphin species using passive acoustic platforms and supervised machine learning techniques. Front. Mar. Sci. 2020, 7, 267. [Google Scholar] [CrossRef]

- Zhong, M.; LeBien, J.; Campos-Cerqueira, M.; Dodhia, R.; Ferres, J.L.; Velev, J.P.; Aide, T.M. Multispecies bioacoustic classification using transfer learning of deep convolutional neural networks with pseudo-labeling. Appl. Acoust. 2020, 166, 107375. [Google Scholar] [CrossRef]

- Kim, D.; Lee, Y.; Ko, H. Multi-Task Learning for Animal Species and Group Category Classification. In Proceedings of the 2019 7th International Conference on Information Technology: IoT and Smart City, Guangzhou, China; 2019; pp. 435–438. [Google Scholar]

- Dugan, P.J.; Rice, A.N.; Urazghildiiev, I.R.; Clark, C.W. North Atlantic right whale acoustic signal processing: Part I. In Comparison of machine learning recognition algorithms. In Proceedings of the 2010 IEEE Long Island Systems, Applications and Technology Conference, Farmingdale, NY, USA, 7 May 2010; pp. 1–6. [Google Scholar]

- Balemarthy, S.; Sajjanhar, A.; Zheng, J.X. Our practice of using machine learning to recognize species by voice. arXiv 2018, arXiv:1810.09078. [Google Scholar]

- Gradišek, A.; Slapničar, G.; Šorn, J.; Luštrek, M.; Gams, M.; Grad, J. Predicting species identity of bumblebees through analysis of flight buzzing sounds. Bioacoustics 2017, 26, 63–76. [Google Scholar] [CrossRef]

- Lostanlen, V.; Salamon, J.; Farnsworth, A.; Kelling, S.; Bello, J.P. Robust sound event detection in bioacoustic sensor networks. PLoS ONE 2019, 14, e0214168. [Google Scholar] [CrossRef]

- Xie, J.; Bertram, S.M. Using machine learning techniques to classify cricket sound. In Eleventh International Conference on Signal Processing Systems; SPIE: Chengdu, China, 2019; Volume 11384, pp. 141–148. [Google Scholar]

- Nanni, L.; Rigo, A.; Lumini, A.; Brahnam, S. Spectrogram classification using dissimilarity space. Appl. Sci. 2020, 10, 4176. [Google Scholar] [CrossRef]

- Salamon, J.; Bello, J.P.; Farnsworth, A.; Robbins, M.; Keen, S.; Klinck, H.; Kelling, S. Towards the automatic classification of avian flight calls for bioacoustic monitoring. PLoS ONE 2016, 11, e0166866. [Google Scholar] [CrossRef] [PubMed]

- Kawakita, S.; Ichikawa, K. Automated classification of bees and hornet using acoustic analysis of their flight sounds. Apidologie 2019, 50, 71–79. [Google Scholar] [CrossRef]

- Li, L.; Qiao, G.; Liu, S.; Qing, X.; Zhang, H.; Mazhar, S.; Niu, F. Automated classification of Tursiops aduncus whistles based on a depth-wise separable convolutional neural network and data augmentation. J. Acoust. Soc. Am. 2021, 150, 3861–3873. [Google Scholar] [CrossRef] [PubMed]

- Ntalampiras, S. Automatic acoustic classification of insect species based on directed acyclic graphs. J. Acoust. Soc. Am. 2019, 145, EL541–EL546. [Google Scholar] [CrossRef]

- Chen, Y.; Why, A.; Batista, G.; Mafra-Neto, A.; Keogh, E. Flying insect classification with inexpensive sensors. J. Insect Behav. 2014, 27, 657–677. [Google Scholar] [CrossRef]

- Zhu, L.-Q. Insect sound recognition based on mfcc and pnn. In Proceedings of the 2011 International Conference on Multimedia and Signal Processing, Guilin, China, 14–15 May 2011; Volume 2, pp. 42–46. [Google Scholar]

- D’mello, G.C.; Hussain, R. Insect Inspection on the basis of their Flight Sound. Int. J. Sci. Eng. Res. 2015, 6, 49–54. [Google Scholar]

- Aide, T.M.; Corrada-Bravo, C.; Campos-Cerqueira, M.; Milan, C.; Vega, G.; Alvarez, R. Real-time bioacoustics monitoring and automated species identification. PeerJ 2013, 1, e103. [Google Scholar] [CrossRef]

- Rathore, D.S.; Ram, B.; Pal, B.; Malviya, S. Analysis of classification algorithms for insect detection using MATLAB. In Proceedings of the 2nd International Conference on Advanced Computing and Software Engineering (ICACSE), Sultanpur, India, 8–9 February 2019. [Google Scholar]

- Ovaskainen, O.; Moliterno de Camargo, U.; Somervuo, P. Animal Sound Identifier (ASI): Software for automated identification of vocal animals. Ecol. Lett. 2018, 21, 1244–1254. [Google Scholar] [CrossRef]

- Müller, L.; Marti, M. Bird Sound Classification using a Bidirectional LSTM. In Proceedings of the CLEF (Working Notes), Avignon, France, 10–14 September 2018. [Google Scholar]

- Supriya, P.R.; Bhat, S.; Shivani, S.S. Classification of birds based on their sound patterns using GMM and SVM classifiers. Int. Res. J. Eng. Technol. 2018, 5, 4708–4711. [Google Scholar]

- Oikarinen, T.; Srinivasan, K.; Meisner, O.; Hyman, J.B.; Parmar, S.; Fanucci-Kiss, A.; Desimone, R.; Landman, R.; Feng, G. Deep convolutional network for animal sound classification and source attribution using dual audio recordings. J. Acoust. Soc. Am. 2019, 145, 654–662. [Google Scholar] [CrossRef] [PubMed]

- Nanni, L.; Ghidoni, S.; Brahnam, S. Ensemble of convolutional neural networks for bioimage classification. Appl. Comput. Inform. 2020, 17, 19–35. [Google Scholar] [CrossRef]

- Hong, R.; Wang, M.; Yuan, X.T.; Xu, M.; Jiang, J.; Yan, S.; Chua, T.S. Video accessibility enhancement for hearing-impaired users. ACM Trans. Multimed. Comput. Commun. Appl. (TOMM) 2011, 7, 1–19. [Google Scholar] [CrossRef]

- Wang, W.; Chen, Z.; Xing, B.; Huang, X.; Han, S.; Agu, E. A smartphone-based digital hearing aid to mitigate hearing loss at specific frequencies. In Proceedings of the 1st Workshop on Mobile Medical Applications, Seattle, WA, USA; 2014; pp. 1–5. [Google Scholar]

- Bountourakis, V.; Vrysis, L.; Papanikolaou, G. Machine learning algorithms for environmental sound recognition: Towards soundscape semantics. In Proceedings of the Audio Mostly 2015 on Interaction with Sound, Thessaloniki Greece; 2015; pp. 1–7. [Google Scholar]

- Li, M.; Gao, Z.; Zang, X.; Wang, X. Environmental noise classification using convolution neural networks. In Proceedings of the 2018 International Conference on Electronics and Electrical Engineering Technology, Tianjin, China, 19–21 September 2018; pp. 182–185. [Google Scholar]

- Alsouda, Y.; Pllana, S.; Kurti, A. Iot-based urban noise identification using machine learning: Performance of SVM, KNN, bagging, and random forest. In Proceedings of the International Conference on Omni-Layer Intelligent Systems, Crete, Greece, 5–7 May 2019; pp. 62–67. [Google Scholar]

- Kurnaz, S.; Aljabery, M.A. Predict the type of hearing aid of audiology patients using data mining techniques. In Proceedings of the Fourth International Conference on Engineering & MIS 2018, Istanbul, Turkey, 19–20 June 2018; pp. 1–6. [Google Scholar]

- Wang, W.; Seraj, F.; Meratnia, N.; Havinga, P.J. Privacy-aware environmental sound classification for indoor human activity recognition. In Proceedings of the 12th ACM International Conference on PErvasive Technologies Related to Assistive Environments, Island of Rhodes, Greece, 5–7 June 2019; pp. 36–44. [Google Scholar]

- Seker, H.; Inik, O. CnnSound: Convolutional Neural Networks for the Classification of Environmental Sounds. In Proceedings of the 2020 4th International Conference on Advances in Artificial Intelligence, London, UK, 9–11 October 2020; pp. 79–84. [Google Scholar]

- Sigtia, S.; Stark, A.M.; Krstulović, S.; Plumbley, M.D. Automatic environmental sound recognition: Performance versus computational cost. IEEE/ACM Trans. Audio Speech Lang. Process. 2016, 24, 2096–2107. [Google Scholar] [CrossRef]

- Van De Laar, T.; de Vries, B. A probabilistic modeling approach to hearing loss compensation. IEEE/ACM Trans. Audio Speech Lang. Process. 2016, 24, 2200–2213. [Google Scholar] [CrossRef]

- Salehi, H.; Suelzle, D.; Folkeard, P.; Parsa, V. Learning-based reference-free speech quality measures for hearing aid applications. IEEE/ACM Trans. Audio Speech Lang. Process. 2018, 26, 2277–2288. [Google Scholar] [CrossRef]

- Demir, F.; Abdullah, D.A.; Sengur, A. A new deep CNN model for environmental sound classification. IEEE Access 2020, 8, 66529–66537. [Google Scholar] [CrossRef]

- Ridha, A.M.; Shehieb, W. Assistive Technology for Hearing-Impaired and Deaf Students Utilizing Augmented Reality. In Proceedings of the 2021 IEEE Canadian Conference on Electrical and Computer Engineering (CCECE), Virtual Conference. 12–17 September 2021; pp. 1–5. [Google Scholar]

- Ayu, A.I.S.M.; Karyono, K.K. Audio detection (Audition): Android based sound detection application for hearing-impaired using AdaBoostM1 classifier with REPTree weaklearner. In Proceedings of the 2014 Asia-Pacific Conference on Computer Aided System Engineering (APCASE), South Kuta, Indonesia, 10–12 February 2014; pp. 136–140. [Google Scholar]

- Chen, C.Y.; Kuo, P.Y.; Chiang, Y.H.; Liang, J.Y.; Liang, K.W.; Chang, P.C. Audio-Based Early Warning System of Sound Events on the Road for Improving the Safety of Hearing-Impaired People. In Proceedings of the 2019 IEEE 8th Global Conference on Consumer Electronics (GCCE), OSAKA, Japan, 15–18 October 2019; pp. 933–936. [Google Scholar]

- Bhat, G.S.; Shankar, N.; Panahi, I.M. Automated machine learning based speech classification for hearing aid applications and its real-time implementation on smartphone. In Proceedings of the 2020 42nd Annual International Conference of the IEEE Engineering in Medicine & Biology Society (EMBC), Montreal, QC, Canada, 20–24 July 2020; pp. 956–959. [Google Scholar]

- Healy, E.W.; Yoho, S.E. Difficulty understanding speech in noise by the hearing impaired: Underlying causes and technological solutions. In Proceedings of the 2016 38th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Orlando, FL, USA, 16–20 August 2016; pp. 89–92. [Google Scholar]

- Jatturas, C.; Chokkoedsakul, S.; Ayudhya, P.D.N.; Pankaew, S.; Sopavanit, C.; Asdornwised, W. Recurrent Neural Networks for Environmental Sound Recognition using Scikit-learn and Tensorflow. In Proceedings of the 2019 16th International Conference on Electrical Engineering/Electronics, Computer, Telecommunications and Information Technology (ECTI-CON), Pattaya, Thailand, 10–13 July 2019; pp. 806–809. [Google Scholar]

- Saleem, N.; Khattak, M.I.; Ahmad, S.; Ali, M.Y.; Mohmand, M.I. Machine Learning Approach for Improving the Intelligibility of Noisy Speech. In Proceedings of the 2020 17th International Bhurban Conference on Applied Sciences and Technology (IBCAST), Islamabad, Pakistan, 14–18 January 2020; pp. 303–308. [Google Scholar]

- Davis, N.; Suresh, K. Environmental sound classification using deep convolutional neural networks and data augmentation. In Proceedings of the 2018 IEEE Recent Advances in Intelligent Computational Systems (RAICS), Thiruvananthapuram, India, 6–8 December 2018; pp. 41–45. [Google Scholar]

- Chu, S.; Narayanan, S.; Kuo, C.C.J. Environmental sound recognition with time–frequency audio features. IEEE Trans. Audio Speech Lang. Process. 2009, 17, 1142–1158. [Google Scholar] [CrossRef]

- Chu, S.; Narayanan, S.; Kuo, C.C.J.; Mataric, M.J. Where Am I? In Scene recognition for mobile robots using audio features. In Proceedings of the 2006 IEEE International Conference on Multimedia and Expo, Toronto, ON, Canada, 9–12 July 2006; pp. 885–888. [Google Scholar]

- Ullo, S.L.; Khare, S.K.; Bajaj, V.; Sinha, G.R. Hybrid computerized method for environmental sound classification. IEEE Access 2020, 8, 124055–124065.

- Zhang, X.; Zou, Y.; Shi, W. Dilated convolution neural network with LeakyReLU for environmental sound classification. In Proceedings of the 2017 22nd International Conference on Digital Signal Processing (DSP), London, UK, 23–25 August 2017; pp. 1–5.

- Piczak, K.J. Environmental sound classification with convolutional neural networks. In Proceedings of the 2015 IEEE 25th International Workshop on Machine Learning for Signal Processing (MLSP), Boston, MA, USA, 17–20 September 2015; pp. 1–6.

- Han, B.j.; Hwang, E. Environmental sound classification based on feature collaboration. In Proceedings of the 2009 IEEE International Conference on Multimedia and Expo, New York, NY, USA, 28 June–3 July 2009; pp. 542–545.

- Wang, J.C.; Lin, C.H.; Chen, B.W.; Tsai, M.K. Gabor-based nonuniform scale-frequency map for environmental sound classification in home automation. IEEE Trans. Autom. Sci. Eng. 2013, 11, 607–613.

- Salamon, J.; Bello, J.P. Deep convolutional neural networks and data augmentation for environmental sound classification. IEEE Signal Process. Lett. 2017, 24, 279–283.

- Wang, J.C.; Wang, J.F.; He, K.W.; Hsu, C.S. Environmental sound classification using hybrid SVM/KNN classifier and MPEG-7 audio low-level descriptor. In Proceedings of the 2006 IEEE International Joint Conference on Neural Network Proceedings, Vancouver, BC, Canada, 16–21 July 2006; pp. 1731–1735.

- Zhao, Y.; Li, J.; Zhang, M.; Lu, Y.; Xie, H.; Tian, Y.; Qiu, W. Machine learning models for the hearing impairment prediction in workers exposed to complex industrial noise: A pilot study. Ear Hear. 2019, 40, 690.

- Tokozume, Y.; Harada, T. Learning environmental sounds with end-to-end convolutional neural network. In Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, LA, USA, 5–9 March 2017; pp. 2721–2725.

- Nossier, S.A.; Rizk, M.; Moussa, N.D.; el Shehaby, S. Enhanced smart hearing aid using deep neural networks. Alex. Eng. J. 2019, 58, 539–550.

- Abdoli, S.; Cardinal, P.; Koerich, A.L. End-to-end environmental sound classification using a 1D convolutional neural network. Expert Syst. Appl. 2019, 136, 252–263.

- Mushtaq, Z.; Su, S.F. Environmental sound classification using a regularized deep convolutional neural network with data augmentation. Appl. Acoust. 2020, 167, 107389.

- Chen, Y.; Guo, Q.; Liang, X.; Wang, J.; Qian, Y. Environmental sound classification with dilated convolutions. Appl. Acoust. 2019, 148, 123–132.

- Mushtaq, Z.; Su, S.F.; Tran, Q.V. Spectral images based environmental sound classification using CNN with meaningful data augmentation. Appl. Acoust. 2021, 172, 107581.

- Ahmad, S.; Agrawal, S.; Joshi, S.; Taran, S.; Bajaj, V.; Demir, F.; Sengur, A. Environmental sound classification using optimum allocation sampling based empirical mode decomposition. Phys. A Stat. Mech. Appl. 2020, 537, 122613.

- Medhat, F.; Chesmore, D.; Robinson, J. Masked conditional neural networks for environmental sound classification. In International Conference on Innovative Techniques and Applications of Artificial Intelligence; Springer: Cham, Switzerland, 2017; pp. 21–33.

- Zhang, Z.; Xu, S.; Cao, S.; Zhang, S. Deep convolutional neural network with mixup for environmental sound classification. In Chinese Conference on Pattern Recognition and Computer Vision (PRCV); Springer: Cham, Switzerland, 2018; pp. 356–367.

- Sailor, H.B.; Agrawal, D.M.; Patil, H.A. Unsupervised Filterbank Learning Using Convolutional Restricted Boltzmann Machine for Environmental Sound Classification. In Proceedings of the INTERSPEECH 2017, Stockholm, Sweden, 20–24 August 2017; Volume 8, p. 9.

- Sharma, J.; Granmo, O.C.; Goodwin, M. Environment Sound Classification Using Multiple Feature Channels and Attention Based Deep Convolutional Neural Network. In Proceedings of the INTERSPEECH 2020, Shanghai, China, 25–29 October 2020; pp. 1186–1190.

- Mohaimenuzzaman, M.; Bergmeir, C.; West, I.T.; Meyer, B. Environmental Sound Classification on the Edge: A Pipeline for Deep Acoustic Networks on Extremely Resource-Constrained Devices. arXiv 2021, arXiv:2103.03483.

- Toffa, O.K.; Mignotte, M. Environmental sound classification using local binary pattern and audio features collaboration. IEEE Trans. Multimed. 2020, 23, 3978–3985.

- Khamparia, A.; Gupta, D.; Nguyen, N.G.; Khanna, A.; Pandey, B.; Tiwari, P. Sound classification using convolutional neural network and tensor deep stacking network. IEEE Access 2019, 7, 7717–7727.

- Su, Y.; Zhang, K.; Wang, J.; Madani, K. Environment sound classification using a two-stream CNN based on decision-level fusion. Sensors 2019, 19, 1733.

- Bragg, D.; Huynh, N.; Ladner, R.E. A personalizable mobile sound detector app design for deaf and hard-of-hearing users. In Proceedings of the 18th International ACM SIGACCESS Conference on Computers and Accessibility, Reno, NV, USA, 23–26 October 2016; pp. 3–13.

- Jatturas, C.; Chokkoedsakul, S.; Ayudhya, P.D.N.; Pankaew, S.; Sopavanit, C.; Asdornwised, W. Feature-based and Deep Learning-based Classification of Environmental Sound. In Proceedings of the 2019 IEEE International Conference on Consumer Electronics-Asia (ICCE-Asia), Bangkok, Thailand, 12–14 June 2019; pp. 126–130.

- Smith, D.; Ma, L.; Ryan, N. Acoustic environment as an indicator of social and physical context. Pers. Ubiquitous Comput. 2006, 10, 241–254.

- Ma, L.; Smith, D.J.; Milner, B.P. Context awareness using environmental noise classification. In Proceedings of the INTERSPEECH, Geneva, Switzerland, 1–4 September 2003.

- Allen, J. Short term spectral analysis, synthesis, and modification by discrete Fourier transform. IEEE Trans. Acoust. Speech Signal Process. 1977, 25, 235–238.

- Allen, J.B.; Rabiner, L.R. A unified approach to short-time Fourier analysis and synthesis. Proc. IEEE 1977, 65, 1558–1564.

- Allen, J. Applications of the short time Fourier transform to speech processing and spectral analysis. In Proceedings of the ICASSP’82. IEEE International Conference on Acoustics, Speech, and Signal Processing, Paris, France, 3–5 May 1982; Volume 7, pp. 1012–1015.

- Lu, L.; Hanjalic, A. Text-like segmentation of general audio for content-based retrieval. IEEE Trans. Multimed. 2009, 11, 658–669.

| Search Key | Alternative Terms | Search Refinement | Definition |
|---|---|---|---|
| Bioacoustics | Animals | birds, wildlife, pests | The branch of acoustics concerned with sounds produced by or affecting living organisms, especially as they relate to communication. |
| Non-Bioacoustics | Environment, artificial | | Sounds produced by artificial sources, or by both artificial and natural sources. |
| Sound | Noise | | Vibrations that travel through the air or another medium and can be heard when they reach a person’s or animal’s ear. |
| Classification | Identification | | The action or process of classifying something according to shared qualities or characteristics. |
| Technology | Sensors, Devices | | Technologies used to classify sounds. |
| Machine Learning | Artificial Intelligence | CNN, SVM, Naïve Bayes | The use and development of computer systems that can learn and adapt without following explicit instructions, by using algorithms and statistical models to analyze and draw inferences from patterns in data. |

| Exclusion Criteria | Inclusion Criteria |
|---|---|
| Machine learning techniques based on images | Bioacoustics classification |
| Research not published in English | General acoustics classification |
| Research published before 2000 | Use of machine learning technology |
| Sound classification in the medical sector that does not involve technology | Peer-reviewed publications |
| Papers that are not original research, such as letters to the editor, comments, etc. | Papers published in English |
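The criteria in the table above can be read as a screening rule: a candidate study is dropped if it meets any exclusion criterion and is kept only if it meets the inclusion criteria, with the two topic criteria (bioacoustics or general acoustics classification) treated as alternatives. The Python sketch below encodes that reading. The `Paper` record, its field names, and the topic labels are hypothetical and are not a description of the authors' actual screening tooling.

```python
from dataclasses import dataclass


@dataclass
class Paper:
    """Hypothetical screening record for one candidate study."""

    year: int
    language: str
    peer_reviewed: bool
    original_research: bool          # False for letters, editor comments, etc.
    uses_machine_learning: bool
    topic: str                       # e.g. "bioacoustics classification"
    image_based: bool                # ML applied to images rather than sound
    medical_without_technology: bool


# Hypothetical labels for the two alternative topic criteria.
INCLUDED_TOPICS = {"bioacoustics classification", "general acoustics classification"}


def passes_screening(paper: Paper) -> bool:
    """Return True if the paper survives every exclusion and inclusion criterion."""
    # Exclusion criteria: meeting any one of them removes the paper.
    if paper.image_based or paper.medical_without_technology:
        return False
    if paper.year < 2000 or paper.language != "English":
        return False
    if not paper.original_research:
        return False
    # Inclusion criteria: peer review and ML use are required, plus one topic.
    return (
        paper.peer_reviewed
        and paper.uses_machine_learning
        and paper.topic in INCLUDED_TOPICS
    )


if __name__ == "__main__":
    candidate = Paper(
        year=2019,
        language="English",
        peer_reviewed=True,
        original_research=True,
        uses_machine_learning=True,
        topic="general acoustics classification",
        image_based=False,
        medical_without_technology=False,
    )
    print(passes_screening(candidate))  # True
```

Because exclusions are checked first, a study published in 2000 or later still fails if, for instance, it applies machine learning to images rather than to sound.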

| Research Category | Citations | Number of Studies |
|---|---|---|
| Bioacoustics Research | [14,15,39,40,41,42,43,44,45,46,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74,75,76,77,78,79,80,81,82,83,84,85,86,87,88,89,90,91,92,93,94,95,96,97,98,99,100,101,102,103,104,105,106,107] | 77 (62.0%) |
| General Acoustic Research | [108,109,110,111,112,113,114,115,116,117,118,119,120,121,122,123,124,125,126,127,128,129,130,131,132,133,134,135,136,137,138,139,140,141,142,143,144,145,146,147,148,149,150,151,152] | 47 (38.0%) |

| Bioacoustics Dataset | Classes | Instances | Instances per Class | Average Accuracy (%) |
|---|---|---|---|---|
| Cat Sound | 2 | 440 | 220.00 | 91.13 |
| Birdvox70k/CLO43SD | 43 | 5428 | 126.20 | 90.00 |
| Open Source Beehive Project | 2 | 78 | 39.00 | 89.33 |
| BIRDZ | 50 | 602,512 | 12,050.20 | 89.04 |
| Humboldt-University Animal Sound Archive | 2530 | 120,000 | 47.40 | 81.30 |
| MFCC dataset | 10 | 7195 | 719.50 | 78.40 |
| Zoological Sound Library | 10,000 | 240,000 | 24.00 | 73.04 |
| NIPS4Bplus | 87 | 687 | 7.90 | 65.00 |

| General Acoustics Dataset | Classes | Instances | Instances per Class | Average Accuracy (%) |
|---|---|---|---|---|
| ESC-10 | 10 | 400 | 40.00 | 90.66 |
| DCASE | 16 | 320 | 20.00 | 86.70 |
| US8K | 10 | 8732 | 873.20 | 83.67 |
| ESC-50 | 50 | 2000 | 40.00 | 81.54 |
| Ryerson AV DB | 8 | 7356 | 919.50 | 71.30 |
| CICESE | 20 | 1367 | 68.35 | 68.10 |
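The fourth column of both dataset tables appears to be the number of instances divided by the number of classes (for example, 440 / 2 = 220 for Cat Sound). Recomputing it suggests a few values were rounded to one decimal place before being printed with two (BIRDZ: 602,512 / 50 = 12,050.24, tabulated as 12,050.20). The minimal Python sketch below assumes that interpretation and recomputes the quotient from the tabulated class and instance counts; the `DATASETS` list and the `instances_per_class` helper are illustrative names, not part of the review.

```python
# Sketch: recompute instances per class (Instances / Classes) for the datasets
# in the two tables. Counts are copied from the tables; reading the column as a
# simple quotient is an assumption that matches every row up to rounding.

DATASETS = [
    # (group, dataset name, classes, instances)
    ("bioacoustics", "Cat Sound", 2, 440),
    ("bioacoustics", "Birdvox70k/CLO43SD", 43, 5428),
    ("bioacoustics", "Open Source Beehive Project", 2, 78),
    ("bioacoustics", "BIRDZ", 50, 602_512),
    ("bioacoustics", "Humboldt-University Animal Sound Archive", 2530, 120_000),
    ("bioacoustics", "MFCC dataset", 10, 7195),
    ("bioacoustics", "Zoological Sound Library", 10_000, 240_000),
    ("bioacoustics", "NIPS4Bplus", 87, 687),
    ("general", "ESC-10", 10, 400),
    ("general", "DCASE", 16, 320),
    ("general", "US8K", 10, 8732),
    ("general", "ESC-50", 50, 2000),
    ("general", "Ryerson AV DB", 8, 7356),
    ("general", "CICESE", 20, 1367),
]


def instances_per_class(classes: int, instances: int) -> float:
    """Return the mean number of labelled instances available per class."""
    if classes <= 0:
        raise ValueError("classes must be a positive integer")
    return instances / classes


if __name__ == "__main__":
    # Print each dataset with its quotient, sparsest (fewest per class) first.
    rows = sorted(DATASETS, key=lambda d: instances_per_class(d[2], d[3]))
    for group, name, classes, instances in rows:
        ratio = instances_per_class(classes, instances)
        print(f"{group:<13} {name:<42} {ratio:>10.2f}")
```

Running the script lists the 14 datasets from sparsest to densest, with NIPS4Bplus (about 7.9 instances per class) first. The quotient is only a mean; judging class balance would require per-class counts, which the tables do not give.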

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Mutanu, L.; Gohil, J.; Gupta, K.; Wagio, P.; Kotonya, G. A Review of Automated Bioacoustics and General Acoustics Classification Research. Sensors 2022, 22, 8361. https://doi.org/10.3390/s22218361
