1. Introduction

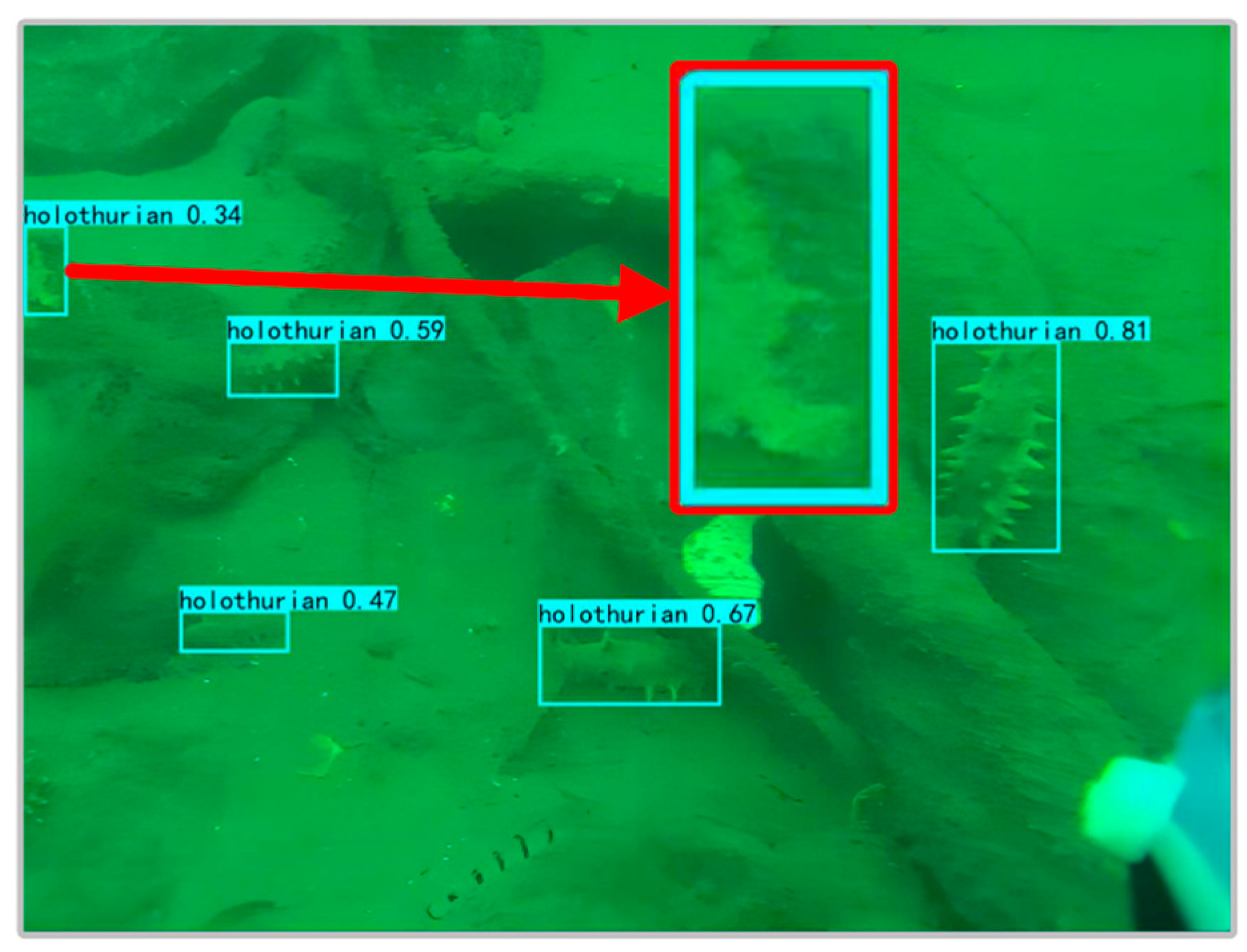

Holothurians are nutritious and delicious, and they are popular around the world, especially in Asia. Because the technology is still immature, holothurians are currently harvested mainly by manual diving, and intelligent holothurian-fishing robots have not been widely used. Fishing for holothurians by hand is not only time-consuming, inefficient, and costly, but also poses a serious threat to divers’ lives. The adoption of holothurian-fishing robots is therefore an inevitable trend. However, such a robot must first solve the technical problem of identifying and locating holothurians. Underwater holothurian target detection is therefore an urgent research subject of great practical value.

Compared with target detection in terrestrial environments, underwater target detection faces additional challenges. Because of the complicated seabed environment and the limitations of imaging equipment, underwater images suffer from noise, low contrast, and color distortion. These problems seriously affect the performance of underwater biological target detection. The similarity between the body color of holothurians and their surroundings makes accurate holothurian detection even more difficult.

At present, underwater biological target-detection methods fall into two categories, traditional methods and deep learning methods, and there is little research on holothurian detection specifically.

Traditional methods identify targets on the basis of their color, texture, and body edges. In 2012, Schoening et al. [1] proposed iSIS (intelligent screening of underwater image sequences), an automated method that assists in the detection and monitoring of deep-sea benthic organisms and broke new ground in marine research. In 2013, Fabic et al. [2] used the Canny edge detector to extract fish contours for fish-population estimation. In 2014, Hsiao et al. [3] proposed SRC-MP, a partial-ranking method based on sparse representation-based classification, to identify fish in underwater video and to distinguish swimming fish from other moving objects. In 2017, Qiao et al. [4] used contrast limited adaptive histogram equalization (CLAHE) to increase the contrast between the holothurian spines and the body, after which the holothurian contours could be accurately identified with edge-detection algorithms. In 2019, Qiao et al. [5] proposed an underwater holothurian-recognition method based on support vector machines (SVMs). However, this method requires texture features to be extracted from the holothurian images first, and it is sensitive to lighting conditions.

In recent years, a growing number of deep learning-based underwater target-detection methods have been proposed; most are improved versions of general target-detection models adapted to the characteristics of the underwater environment. In 2015, Li et al. [6] applied Fast R-CNN, then the latest target-detection method, to fish detection with improved results. In 2018, Zurowietz et al. [7] proposed machine learning-assisted image annotation (MAIA), which builds on Mask R-CNN, to improve the annotation efficiency of large seafloor image sets. In 2020, Shi et al. [8] proposed the YOLOv3-marine algorithm for underwater target detection; building on YOLOv3, it reduces network parameters and optimizes the residual modules, improving both detection accuracy and speed. Also in 2020, Liu et al. [9] proposed domain generalization YOLO (DG-YOLO) for underwater target detection, which consists of YOLOv3, a domain invariant module, and an invariant risk minimization penalty. In 2021, Zhang et al. [10] improved YOLOv4 by replacing its backbone network with the lighter MobileNet v2 and adding attention-mechanism modules, obtaining good performance in underwater target detection. In 2022, Piechaud et al. [11] used YOLOv4 to count and measure objects in the deep sea. Also in 2022, Lei et al. [12] proposed an improved YOLOv5 network for underwater target detection; by improving the multi-scale feature fusion of the YOLOv5 path aggregation network, the network can focus more on learning important features.

Deep learning-based target-detection methods for general (terrestrial) scenes are more advanced than their underwater counterparts. They fall into two kinds: anchor-based and anchor-free methods. Classic anchor-based methods include Faster R-CNN [13], YOLOv3 [14], YOLOv4 [15], and YOLOv5. Although anchor-based methods are mature, they suffer from problems such as the imbalance between positive and negative samples, high memory consumption, and difficulty in detecting targets at multiple scales. To solve these problems, more and more researchers have turned to anchor-free methods, which are the current mainstream trend in target detection. Classic representatives of the anchor-free approach include CornerNet [16], ExtremeNet [17], CenterNet [18], and FCOS [19].

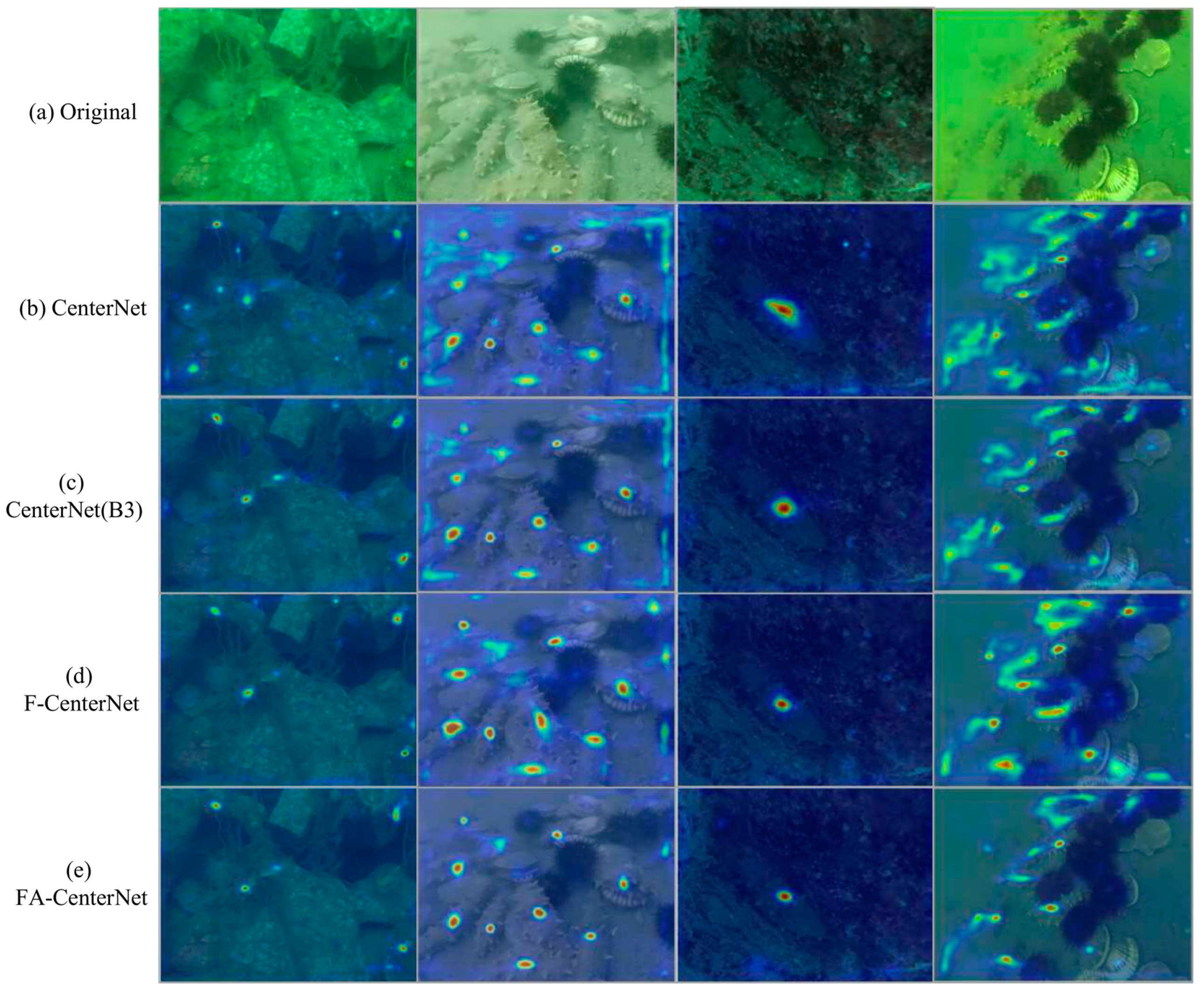

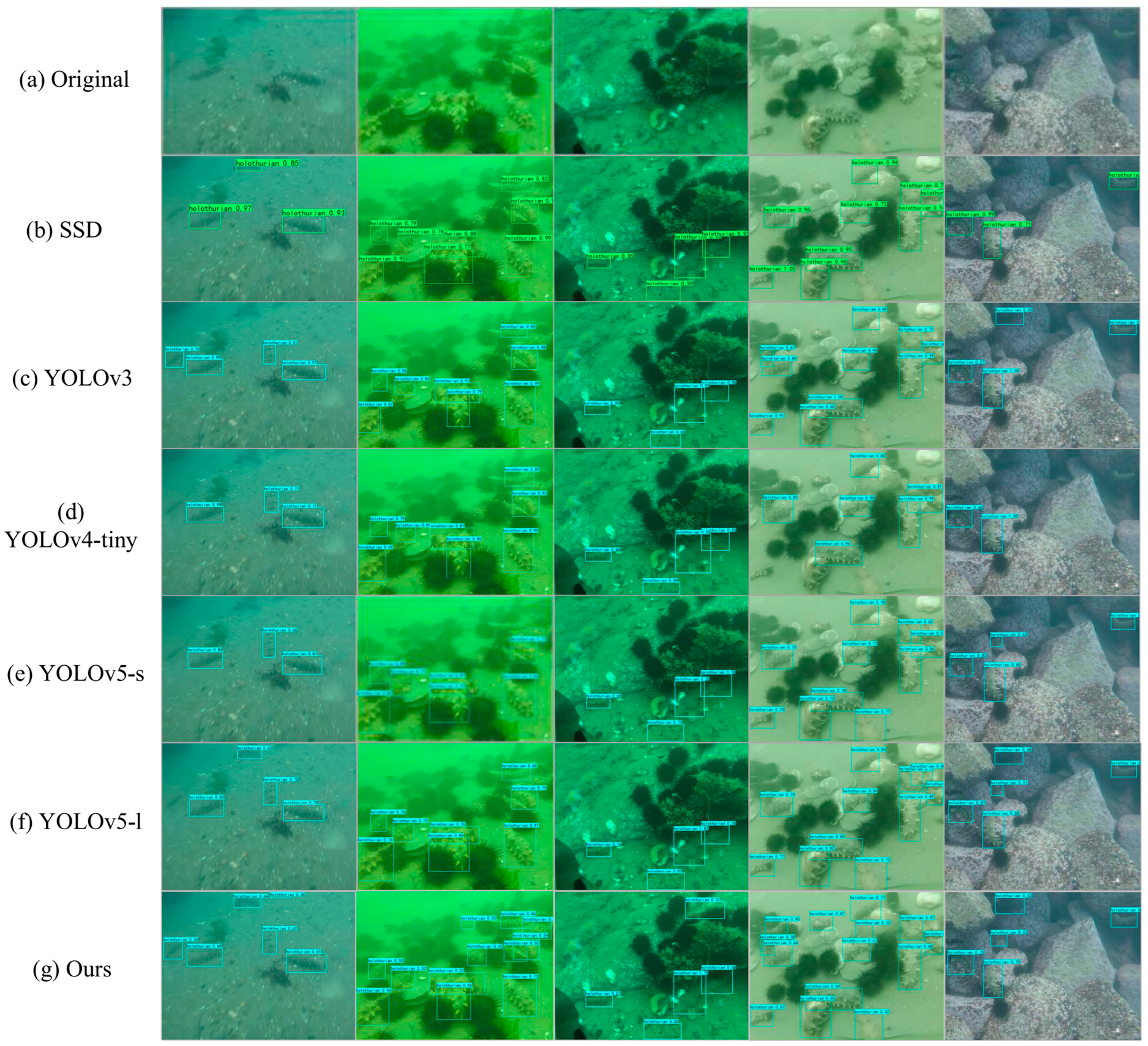

To achieve better holothurian detection, this paper uses the anchor-free CenterNet as the base network. We propose an improved detection algorithm for underwater holothurian targets based on CenterNet and scene feature fusion. The method improves detection accuracy for holothurians with fuzzy body features, small holothurians, and heavily overlapping holothurians, and it can be deployed on resource-limited embedded devices (for example, devices with little graphics memory and low computational power). The main contributions of this paper are:

(1) We propose an improved CenterNet model for holothurian detection that replaces the original backbone network, ResNet50, with the more robust EfficientNet-B3. EfficientNet-B3, whose design is derived with neural architecture search (NAS) and built on depthwise separable convolutions, reduces the Params and FLOPs of the model while increasing its depth and width. This high-performance backbone considerably improves the feasibility of deploying the model on resource-limited embedded devices.

(2) To improve the accuracy of holothurian detection by making full use of holothurian features and co-existing scene information (e.g., waterweeds, reefs, and holothurian spines), we add an FPT (feature pyramid transformer) module between the backbone and neck networks. FPT uses three submodules, the self-transformer (ST), grounding transformer (GT), and rendering transformer (RT), to integrate features across scales and spatial positions, making full use of the distinctive scene features and details of holothurians. In addition, we modify how FPT is used in the detection network: two FPT modules take two different feature combinations as input, so that the model can incorporate more ecological scene information for holothurian detection.

(3) We use the AFF (attentional feature fusion) module to better integrate multi-scale features. Unlike conventional linear feature fusion (such as “Concat”), the AFF module combines global and local feature attention to fuse low-level detail features and high-level semantic features effectively, thus improving the accuracy of holothurian detection.

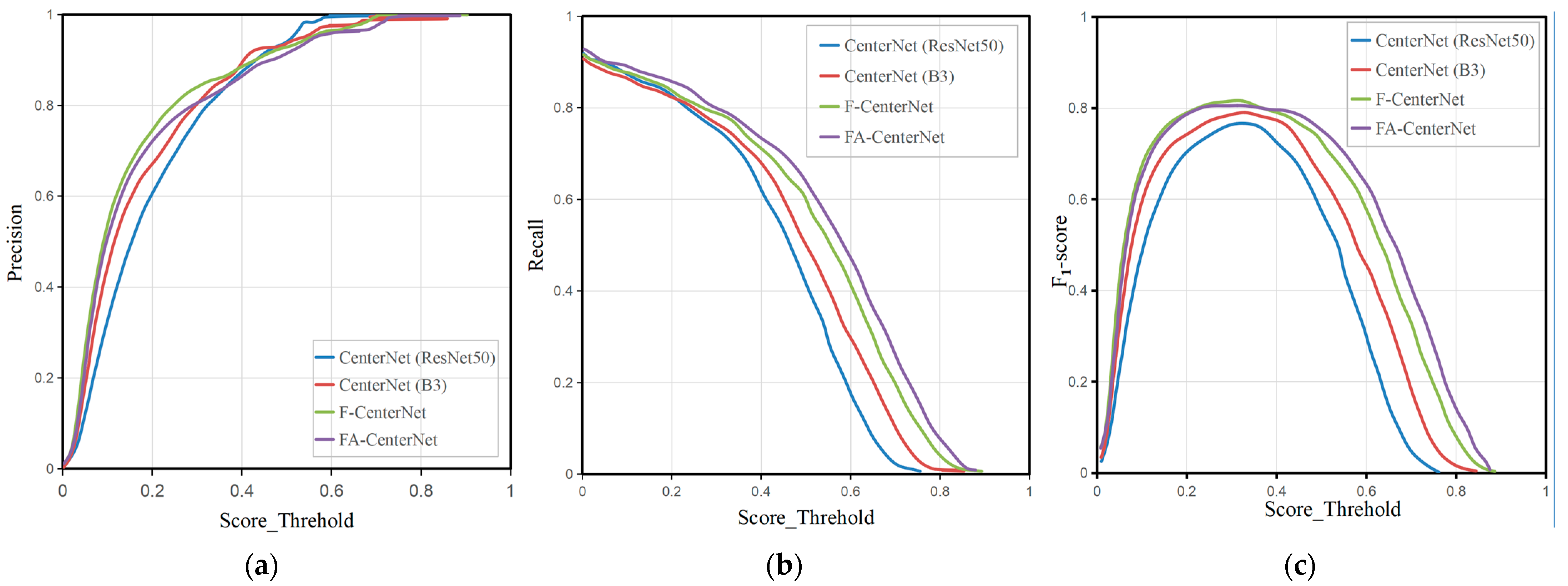

Compared with other underwater target-detection methods, the proposed FA-CenterNet (CenterNet+B3+FPT+AFF) achieves better detection accuracy on the CURPC underwater target-detection dataset, while keeping the model’s FLOPs and Params low. The results show that the proposed method achieves a good balance among AP50, Params, and FLOPs, which validates the effectiveness of our approach.

The rest of this paper is organized as follows: Section 2 describes the structure of the underwater holothurian target-detection method FA-CenterNet in detail; Section 3 presents and analyzes the experimental results; and Section 4 summarizes the work of this paper.

2. Proposed Method

2.1. Overall Network Structure

The proposed FA-CenterNet consists of input, backbone, neck, head, and output networks.

Figure 1 presents the overall network architecture of FA-CenterNet. To better accomplish holothurian detection, the main improvements in this paper are as follows: the lightweight EfficientNet-B3 is used as the backbone network of CenterNet; two FPT (feature pyramid transformer) modules with different feature combinations are added between the backbone and neck networks; and AFF modules are used for feature fusion.

First, to make the holothurian-detection model easier to deploy on resource-limited embedded devices, we used EfficientNet-B3 as the backbone network. EfficientNet-B3 is derived from an architecture found with Google’s neural architecture search (NAS) technique, has a well-optimized structure, and contains many depthwise separable convolutions and SE (squeeze-and-excitation) attention modules, which enable it to perform well in terms of accuracy, Params, and FLOPs.

Next, the neck network uses transposed convolution (ConvTranspose) to up-sample the multi-layer convolution outputs of EfficientNet-B3.
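As a rough check on the resolutions involved, the sketch below applies the output-size formula documented for PyTorch's `nn.ConvTranspose2d`. It assumes, as in the original CenterNet deconvolution neck, three up-sampling layers with kernel 4, stride 2, and padding 1, and a backbone output stride of 32 (a 16 × 16 map for a 512 × 512 input). The exact neck settings of FA-CenterNet are not given in this section, so these values are assumptions.

```python
def conv_transpose_out(size, kernel=4, stride=2, padding=1, output_padding=0, dilation=1):
    """Spatial output size of a transposed convolution (formula from PyTorch's ConvTranspose2d docs)."""
    return (size - 1) * stride - 2 * padding + dilation * (kernel - 1) + output_padding + 1


def neck_sizes(input_size=512, backbone_stride=32, num_layers=3):
    """Feature-map size after each assumed up-sampling layer of the neck."""
    size = input_size // backbone_stride  # 16 for a 512 x 512 input
    sizes = []
    for _ in range(num_layers):
        size = conv_transpose_out(size)
        sizes.append(size)
    return sizes


print(neck_sizes())  # [32, 64, 128]
```

With kernel 4, stride 2, and padding 1, each layer exactly doubles the map, so three layers give the 128 × 128 (stride 4) resolution on which CenterNet's heads normally operate.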

Then, to address the fuzzy features of holothurians, the FPT module was added to improve detection accuracy by exploiting ecological scene information that co-exists with holothurians (e.g., waterweeds, reefs, and holothurian spines). We also improved the implementation of FPT by using two FPT modules with different feature combinations to extract more holothurian scene information from the backbone network.

Then, AFF modules were used to fuse features between the backbone and neck networks. By combining local and global channel attention, the AFF module can effectively integrate holothurian features that differ in semantics and scale. The fused features were then refined by a 3 × 3 convolution.

Finally, the fused features were fed into CenterNet’s head module, where three independent head branches generate the keypoint heatmap, the center offset, and the target width and height (wh). The final holothurian-detection result was obtained by a decoding operation.
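The decoding step can be sketched as in the original CenterNet: a 3 × 3 max pooling keeps only local heatmap peaks (in place of NMS), the top-scoring peaks are selected, and each peak is turned into a box using the predicted offset and size. This NumPy illustration rests on assumptions not stated in the text: an output stride of 4, offset channel 0 for x and channel 1 for y, and width and height predicted in output-map pixels.

```python
import numpy as np


def maxpool3x3(h):
    """3 x 3 max pooling, stride 1, 'same' padding, over the last two axes of h: (C, H, W)."""
    p = np.pad(h, ((0, 0), (1, 1), (1, 1)), constant_values=-np.inf)
    H, W = h.shape[1:]
    return np.max([p[:, i:i + H, j:j + W] for i in range(3) for j in range(3)], axis=0)


def decode(heatmap, offset, wh, k=100, stride=4):
    """Turn head outputs into top-k boxes (x1, y1, x2, y2) in input-image pixels.

    heatmap: (C, H, W) class scores; offset, wh: (2, H, W) regression maps.
    """
    keep = heatmap == maxpool3x3(heatmap)  # local peaks only
    scores = np.where(keep, heatmap, 0.0).ravel()
    top = np.argsort(scores)[::-1][:k]
    classes, ys, xs = np.unravel_index(top, heatmap.shape)
    cx = xs + offset[0, ys, xs]  # sub-pixel centre correction
    cy = ys + offset[1, ys, xs]
    w, h = wh[0, ys, xs], wh[1, ys, xs]
    boxes = np.stack([cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2], axis=1) * stride
    return boxes, scores[top], classes


heat = np.zeros((1, 128, 128))
heat[0, 50, 60] = 0.9
offset = np.zeros((2, 128, 128))
offset[:, 50, 60] = [0.25, 0.5]
wh = np.zeros((2, 128, 128))
wh[:, 50, 60] = [10.0, 20.0]
boxes, scores, classes = decode(heat, offset, wh, k=1)
print(boxes[0])  # [221. 162. 261. 242.]
```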

In this paper, the loss function of FA-CenterNet was composed of three terms: the heatmap, offset, and wh losses. The specific formulas for the three losses are given in Appendix A.
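Appendix A gives the exact formulas used in FA-CenterNet. For orientation, the sketch below follows the loss of the original CenterNet, which we assume carries over: a penalty-reduced pixel-wise focal loss on the heatmap (α = 2, β = 4) plus L1 losses on the size and offset, evaluated only at object centers and normalized by the number of objects, combined as L = L_k + λ_size·L_size + λ_off·L_off with λ_size = 0.1 and λ_off = 1. The weights used in this paper may differ.

```python
import numpy as np


def heatmap_focal_loss(pred, gt, alpha=2, beta=4, eps=1e-12):
    """Penalty-reduced focal loss. pred, gt: (C, H, W); gt equals 1 exactly at object centres."""
    pos = gt == 1
    pos_loss = (np.log(pred + eps) * (1 - pred) ** alpha)[pos].sum()
    # Negatives close to a centre (gt near 1) are down-weighted by (1 - gt) ** beta.
    neg_loss = (np.log(1 - pred + eps) * pred ** alpha * (1 - gt) ** beta)[~pos].sum()
    num_pos = max(int(pos.sum()), 1)
    return -(pos_loss + neg_loss) / num_pos


def center_l1_loss(pred_map, ys, xs, target):
    """L1 loss read out at N object centres. pred_map: (2, H, W); target: (N, 2)."""
    pred = pred_map[:, ys, xs].T  # (N, 2)
    return np.abs(pred - target).sum() / max(len(ys), 1)


def centernet_loss(hm_pred, hm_gt, wh_pred, off_pred, ys, xs, wh_gt, off_gt,
                   lam_size=0.1, lam_off=1.0):
    return (heatmap_focal_loss(hm_pred, hm_gt)
            + lam_size * center_l1_loss(wh_pred, ys, xs, wh_gt)
            + lam_off * center_l1_loss(off_pred, ys, xs, off_gt))


hm_gt = np.zeros((1, 8, 8))
hm_gt[0, 3, 4] = 1.0
hm_pred = np.zeros((1, 8, 8))
hm_pred[0, 3, 4] = 0.5
print(heatmap_focal_loss(hm_pred, hm_gt))  # 0.25 * ln 2, about 0.1733
```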

2.2. EfficientNet-B3

In an underwater holothurian target-detection task, the trained model needs to be deployed on embedded equipment. However, the large computational cost and parameter count (Params) of a model often pose great challenges for resource-constrained embedded devices. A lightweight model is therefore very important for underwater holothurian target detection.

In this paper, we replaced CenterNet’s backbone network, ResNet50, with EfficientNet-B3 to make the model lightweight. EfficientNet [20] is a lightweight network family developed with Google’s neural architecture search (NAS) technology. In the ImageNet classification task, EfficientNet achieved strong performance in terms of accuracy, FLOPs, and Params.

EfficientNet is guided by the idea that a model performs better when its depth, width, and input image resolution are scaled up together. The EfficientNet-B0 to B7 models are obtained by scaling these three dimensions with different compound coefficients. EfficientNet-B3 achieves high accuracy with relatively few Params and FLOPs, so we selected it as the feature extractor in this paper.
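The compound-scaling rule from the EfficientNet paper can be written down directly: depth, width, and resolution are scaled by α^φ, β^φ, and γ^φ, with α = 1.2, β = 1.1, and γ = 1.15 found by a grid search on B0 under the constraint α·β²·γ² ≈ 2, so FLOPs grow by roughly 2^φ. This is a sketch of the rule only; the coefficients of the released B1 to B7 models (for B3: width 1.2, depth 1.4, resolution 300) were adjusted and do not follow the formula exactly.

```python
# Base coefficients from the EfficientNet paper (grid search on B0 with phi = 1).
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15  # depth, width, resolution


def compound_scale(phi, base_resolution=224):
    """Return (depth multiplier, width multiplier, input resolution, FLOPs multiplier)."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = base_resolution * GAMMA ** phi
    # Conv-net FLOPs grow roughly linearly with depth and quadratically with width and resolution.
    flops = (ALPHA * BETA ** 2 * GAMMA ** 2) ** phi
    return depth, width, resolution, flops


d, w, r, f = compound_scale(3)
print(f"phi=3: depth x{d:.2f}, width x{w:.2f}, resolution {r:.0f}, FLOPs x{f:.2f}")
```

For φ = 3 the formula suggests an input of about 341 pixels, whereas the released B3 uses 300, which illustrates that the released coefficients were tuned separately from the idealized rule.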

As shown in Figure 1 and Table 1, EfficientNet-B3 consists of one ordinary convolution layer (the stem) and seven blocks. Blocks 1–7 are based on the MBConv module, and down-sampling with a stride of 2 occurs at blocks 2, 3, 5, and 7. The default input resolution of EfficientNet-B3 is 300 × 300; the proposed method adjusts it to 512 × 512.
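To make the stage layout concrete, the sketch below derives EfficientNet-B3's per-stage output channels and block repeats from the reference B0 configuration, using B3's width multiplier 1.2 and depth multiplier 1.4 with the channel-rounding rule (multiples of 8) of the reference implementation, and tracks the feature-map size for the 512 × 512 input used here. The strides follow the reference B0 table (a stride-2 stem plus stride 2 in stages 2, 3, 4, and 6); the block numbering in Table 1 may differ, so this is an illustration rather than a transcription of Table 1.

```python
import math

# Reference EfficientNet-B0 stages: (expand_ratio, kernel, stride, repeats, out_channels)
B0_STAGES = [
    (1, 3, 1, 1, 16),
    (6, 3, 2, 2, 24),
    (6, 5, 2, 2, 40),
    (6, 3, 2, 3, 80),
    (6, 5, 1, 3, 112),
    (6, 5, 2, 4, 192),
    (6, 3, 1, 1, 320),
]


def round_filters(channels, width, divisor=8):
    """Scale a channel count by `width` and round to a multiple of `divisor`."""
    scaled = channels * width
    new = max(divisor, int(scaled + divisor / 2) // divisor * divisor)
    if new < 0.9 * scaled:  # never round down by more than 10%
        new += divisor
    return new


def scaled_stages(width=1.2, depth=1.4, resolution=512):
    """Return (out_channels, repeats, output_size) for each stage of a scaled EfficientNet."""
    size = math.ceil(resolution / 2)  # stem: 3 x 3 conv with stride 2
    stages = []
    for _expand, _kernel, stride, repeats, out_channels in B0_STAGES:
        size = math.ceil(size / stride)  # 'same' padding
        stages.append((round_filters(out_channels, width), math.ceil(depth * repeats), size))
    return stages


for channels, repeats, size in scaled_stages():
    print(f"{repeats} x MBConv -> {channels} channels, {size} x {size}")
```

The result is 26 MBConv blocks with 24 to 384 output channels, matching the published B3 configuration, and the final 16 × 16 map corresponds to an overall stride of 32.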

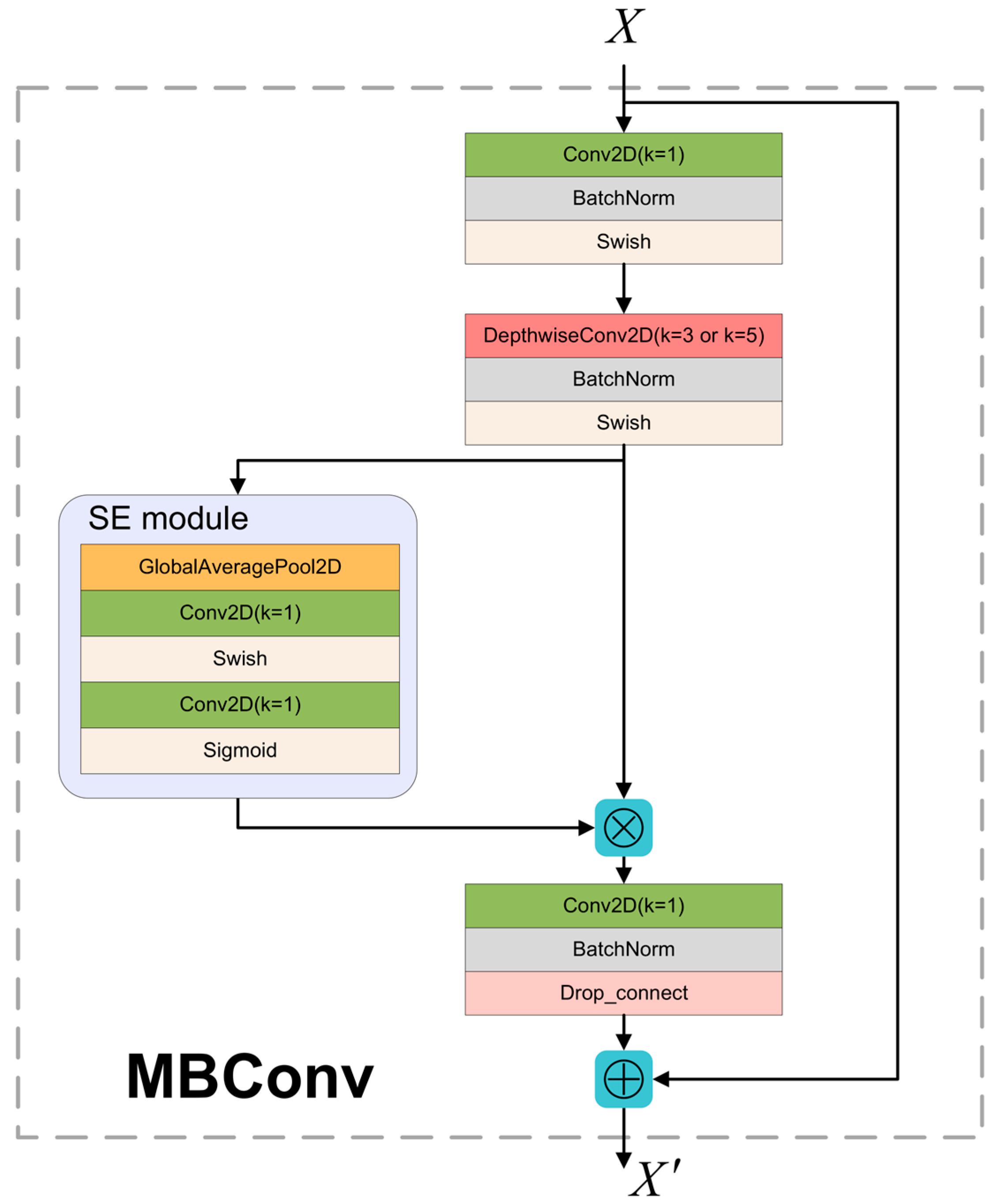

MBConv is based mainly on the inverted residual structure of MobileNet v2, with an added SE module. The structure of MBConv is presented in Figure 2. In MBConv, when the expand ratio is 1, the input feature skips the first 1 × 1 convolution and goes directly to the depthwise separable convolution module. When the stride is 2, the spatial size of the feature map is halved. Because MBConv uses both depthwise separable convolution and the SE module, EfficientNet-B3 performs well in terms of precision, Params, and FLOPs.
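The parameter savings of depthwise separable convolution come down to simple arithmetic: a standard k × k convolution needs k²·C_in·C_out weights, whereas the depthwise separable version needs k²·C_in (one filter per input channel) plus C_in·C_out (the 1 × 1 pointwise convolution). The sketch below ignores biases, batch normalization, and the SE and expansion layers of a full MBConv; the 96-channel example is arbitrary.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights of a standard k x k convolution (no bias)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """Weights of a depthwise k x k conv followed by a 1 x 1 pointwise conv (no biases)."""
    depthwise = k * k * c_in  # one k x k filter per input channel
    pointwise = c_in * c_out  # 1 x 1 conv that mixes channels
    return depthwise + pointwise


std = standard_conv_params(3, 96, 96)        # 82944
dsc = depthwise_separable_params(3, 96, 96)  # 864 + 9216 = 10080
print(f"{std} vs {dsc} weights, {std / dsc:.1f}x fewer")
```

As C_out grows, the ratio approaches k² (9 for a 3 × 3 kernel), which is why MBConv-based backbones such as EfficientNet-B3 need far fewer Params and FLOPs than a standard-convolution network of similar width.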

2.3. FPT Module

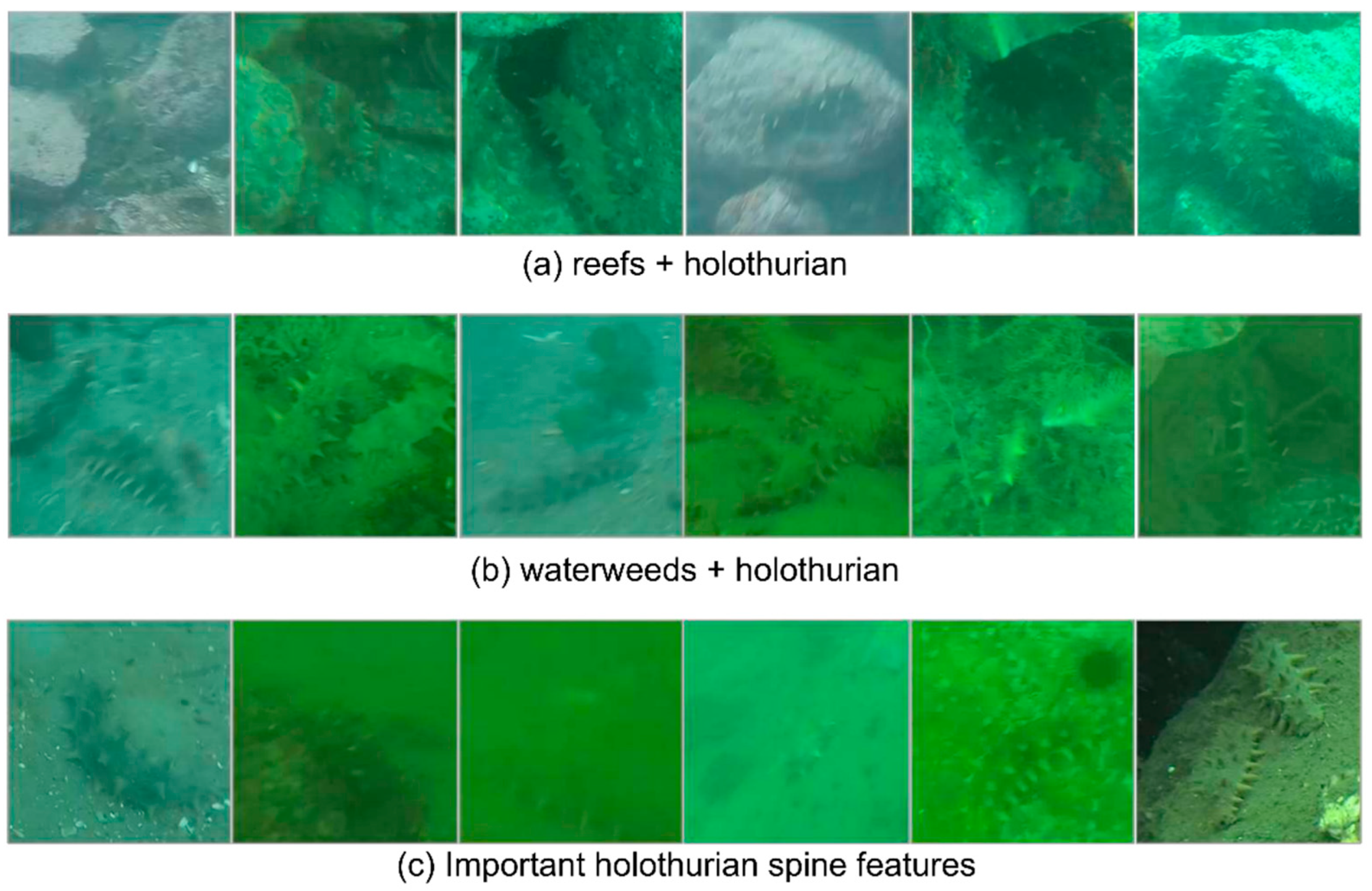

Holothurians are good at protecting themselves: their body color can change with the color of the environment, so the body features of holothurians are highly similar to the environmental features. This is the greatest challenge in holothurian detection. As presented in Figure 3, however, the color of the holothurian spines does not change with the environment; the spines consistently appear as yellow-green cones. In addition, holothurian habitats generally contain reefs, waterweeds, and other ecological scene elements. These features, which appear at different scales and spatial positions, often co-exist with holothurians in the same scene; this is especially true of the small spines, which are captured by the lower layers of the network. We believe that capturing this scene information benefits the detection of holothurians with fuzzy features and is key to improving holothurian-detection performance.

The SE modules in the backbone network (EfficientNet-B3) repeatedly strengthen inter-channel information by computing a global weight for each channel. However, as the network deepens, the model gradually loses important local spatial details of holothurians (i.e., holothurian spines), which to some extent impairs its ability to detect holothurians.
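The limitation described above follows from how an SE module works: it squeezes each channel to a single number by global average pooling, passes the result through two small fully connected layers, and rescales each channel by one sigmoid weight. The NumPy sketch below uses random weights, a reduction ratio of 4, and a ReLU hidden layer as in the original SE block (EfficientNet's MBConv uses Swish there); it shows that every spatial position of a channel receives the same weight, so SE alone cannot emphasize a small, localized detail such as a spine.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def se_block(x, w1, w2):
    """Squeeze-and-excitation on one feature map x of shape (C, H, W)."""
    squeezed = x.mean(axis=(1, 2))                          # (C,): global average pooling
    weights = sigmoid(w2 @ np.maximum(w1 @ squeezed, 0.0))  # (C,): one weight per channel
    return x * weights[:, None, None], weights


rng = np.random.default_rng(0)
C, r = 8, 4
x = rng.standard_normal((C, 6, 6))
w1 = rng.standard_normal((C // r, C))  # C -> C/r
w2 = rng.standard_normal((C, C // r))  # C/r -> C
y, weights = se_block(x, w1, w2)
# The rescaling factor is identical at every spatial position of a channel.
ratio = y / x
print(np.allclose(ratio, ratio[:, :1, :1]))  # True
```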

To address the problem that holothurian features are fuzzy and difficult to recognize, the FPT [21] (feature pyramid transformer) module was added to the proposed method. The FPT module incorporates features of holothurian ecological scenes (such as holothurian spines, reefs, and waterweeds) from different scales and spatial positions. These scene features serve as auxiliary information that helps the model detect holothurians, so the FPT module is particularly useful for recognizing holothurians with fuzzy features and small holothurians.

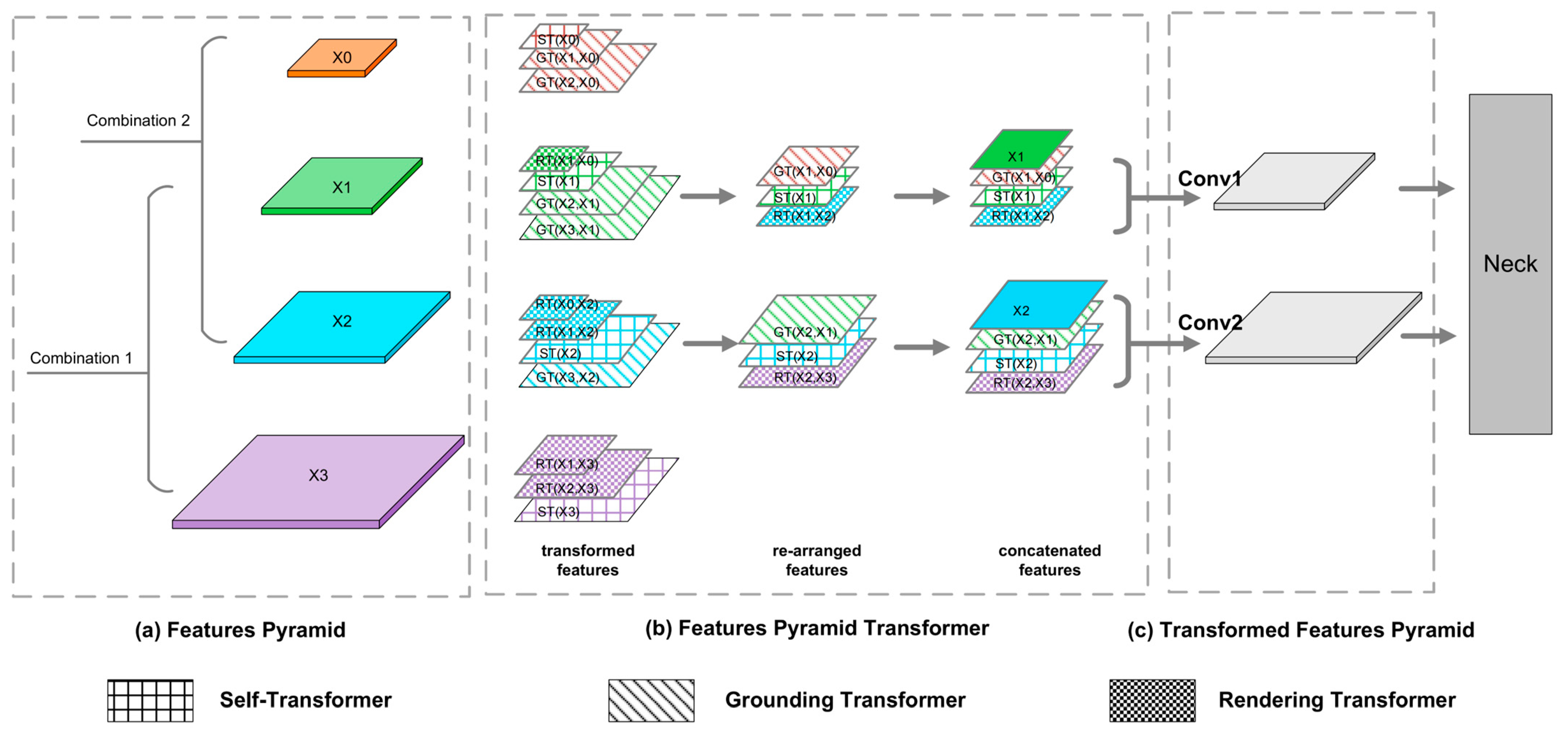

As presented in Figure 4, the input of the FPT module is a feature pyramid, and the output is a transformed feature pyramid that incorporates features from different levels. Compared with the classical feature pyramid network (FPN), the FPT module adopts a more elaborate feature-fusion strategy, so that each output level carries more contextual information. The FPT module is built on the transformer idea: it uses queries (Q), keys (K), and values (V) to capture contextual information, letting features interact non-locally across space and scales to generate new feature maps.

The FPT module consists of three types of transformers: the self-transformer (ST), the grounding transformer (GT), and the rendering transformer (RT).

Unlike the original FPT fusion strategy, this paper used two FPT modules to fuse two feature combinations, which allowed the model to obtain richer feature information. The inputs to the two FPT modules were (X0, X1, X2) and (X1, X2, X3), respectively.

Figure 4 presents the implementation details of FPT. First, each feature combination was processed by the transformers to obtain the corresponding ST, GT, and RT features. Then, the new feature maps were rearranged to the same sizes as X1 or X2. Finally, “Conv1” and “Conv2” were used to readjust the number of channels of the new feature-map combinations, and the resulting feature maps were sent to the neck network.

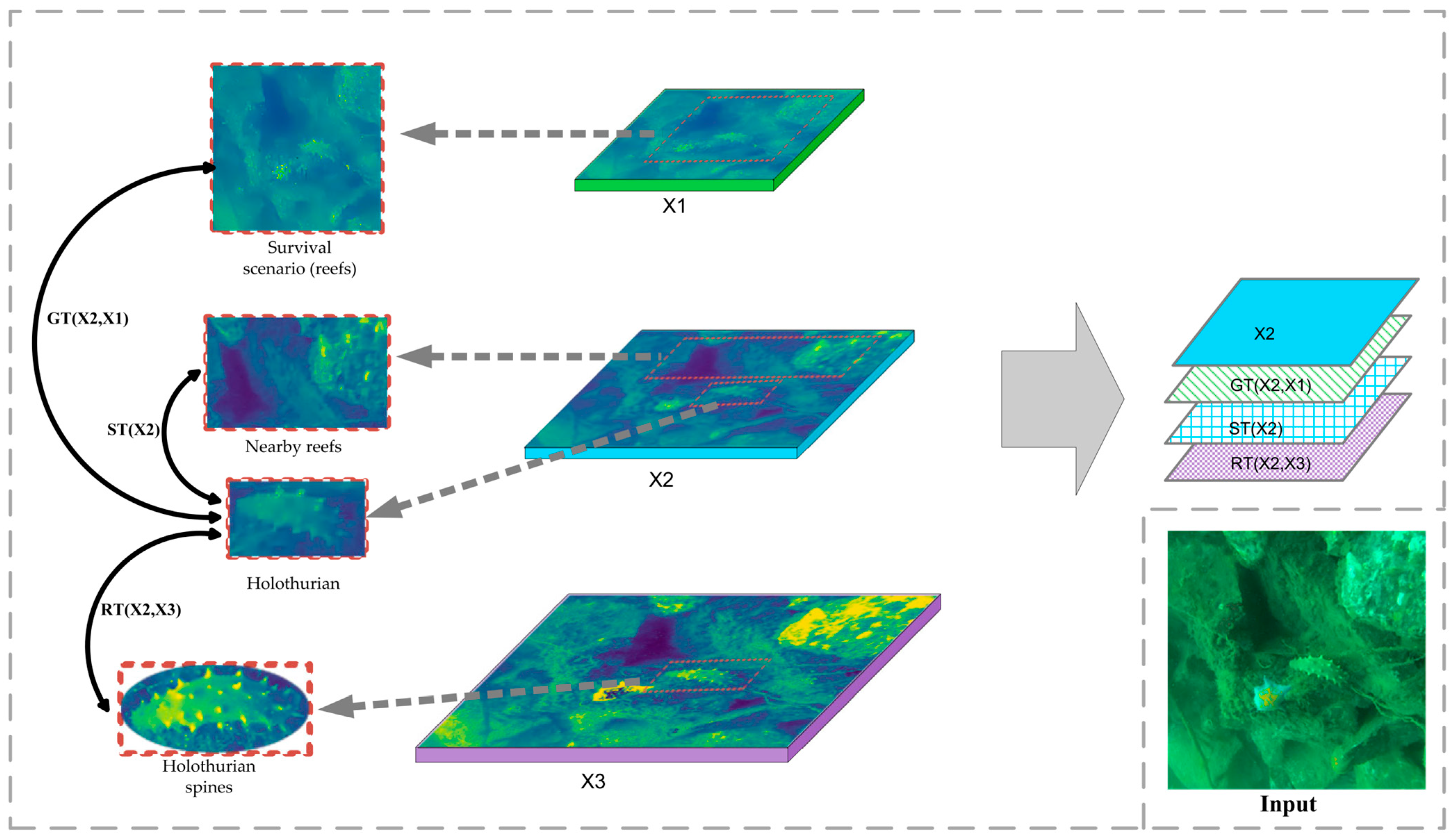

To understand FPT in more detail, Figure 5 takes one of the FPT modules from the structure presented in Figure 4 as an example for detailed analysis. The feature combination of the selected FPT module is (X1, X2, X3), where X1, X2, and X3 are the network’s high-level, mid-level, and low-level features, respectively. The details in X3 (e.g., clearly visible holothurian spines) interact with the mid-level information in X2 (e.g., holothurian bodies) to obtain the new feature RT(X2, X3). The holothurian features of X2 interact with habitat features within X2, such as reefs, to obtain the new feature ST(X2). The high-level information in X1 (e.g., holothurians and ecological scenes) interacts with the holothurian features of X2 to obtain the new feature GT(X2, X1). The three new features are then combined with the original feature X2 to obtain the FPT output.
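The final combination step can be sketched as channel concatenation followed by a 1 × 1 convolution that restores the channel count. We understand this to be how the FPT paper merges the transformed maps with the original level, and we assume “Conv1” and “Conv2” play that role here. In the NumPy sketch below, ST(X2), GT(X2, X1), and RT(X2, X3) are assumed to have already been brought to X2's size and channel count C, and the 1 × 1 convolution is written as a matrix product over channels with hypothetical random weights.

```python
import numpy as np


def fpt_combine(x2, st, gt, rt, w_reduce):
    """Concatenate X2 with its transformed versions and reduce channels with a 1 x 1 conv.

    x2, st, gt, rt: (C, H, W) maps at X2's resolution; w_reduce: (C_out, 4 * C).
    """
    stacked = np.concatenate([x2, st, gt, rt], axis=0)  # (4C, H, W)
    return np.einsum("oc,chw->ohw", w_reduce, stacked)  # 1 x 1 conv = per-pixel matrix product


rng = np.random.default_rng(0)
C, H, W = 16, 32, 32
x2, st, gt, rt = (rng.standard_normal((C, H, W)) for _ in range(4))
w_reduce = rng.standard_normal((C, 4 * C)) / np.sqrt(4 * C)
out = fpt_combine(x2, st, gt, rt, w_reduce)
print(out.shape)  # (16, 32, 32)
```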

In this paper, the core idea of the FPT module is to obtain more features that co-exist with holothurians (e.g., waterweeds, reefs, and holothurian spines) as detection aids that improve the model’s accuracy for holothurians. Specifically, the FPT module integrates features from different scales and spatial positions through its ST, GT, and RT components, assigning greater weight to holothurians with distinctive scene and detail features and thus reducing the false-detection and missed-detection rates. The FPT components (ST, GT, and RT) are described in detail below.

2.3.1. Self-Transformer

ST (self-transformer) [21,22] is a non-local feature-interaction module that fuses information from different spatial positions within a feature map of a single scale. In the underwater holothurian-detection task, ST can capture the relationship between holothurian features and ecological scene features at the same scale. ST uses these scene features as auxiliary information for holothurian detection and increases the model’s attention to this type of scene information, which improves the accuracy of holothurian detection. The specific formulas for ST are given in Appendix B.
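A minimal NumPy sketch of the self-transformer idea follows. Every spatial position of a single-scale map attends to every other position, so a holothurian location can draw on reef or waterweed positions elsewhere in the same map. Following our reading of the FPT paper, the attention is a mixture of softmaxes (MoS): queries and keys are split into parts, each part yields its own softmax attention, and the parts are mixed with weights computed from the averaged key. The projection matrices are random stand-ins for learned 1 × 1 convolutions, and Appendix B remains the authoritative formulation.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def self_transformer(x, wq, wk, wv, w_pi, n_parts=2):
    """Non-local attention within one scale.

    x: (C, H, W); wq, wk, wv: (C, C) projections; w_pi: (n_parts, C // n_parts) mixture weights.
    """
    c, h, w = x.shape
    tokens = x.reshape(c, h * w).T  # (HW, C): one token per spatial position
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    d = c // n_parts
    k_mean = k.mean(axis=0)  # (C,): key averaged over all positions
    pi = softmax(np.array([w_pi[n] @ k_mean[n * d:(n + 1) * d] for n in range(n_parts)]))
    attn = np.zeros((h * w, h * w))
    for n in range(n_parts):
        qn, kn = q[:, n * d:(n + 1) * d], k[:, n * d:(n + 1) * d]
        attn += pi[n] * softmax(qn @ kn.T, axis=1)  # each row: distribution over positions
    out = attn @ v  # (HW, C)
    return out.T.reshape(c, h, w), attn


rng = np.random.default_rng(0)
C = 8
x = rng.standard_normal((C, 4, 4))
wq, wk, wv = (0.1 * rng.standard_normal((C, C)) for _ in range(3))
w_pi = rng.standard_normal((2, C // 2))
y, attn = self_transformer(x, wq, wk, wv, w_pi)
print(y.shape, np.allclose(attn.sum(axis=1), 1.0))  # (8, 4, 4) True
```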

2.3.2. Grounding Transformer

GT (grounding transformer) [21] is a feature-fusion module that uses semantic information from the top of the network to enhance the information at the middle and lower levels of the network. In the underwater holothurian-detection task, GT can capture the relationships between features of holothurian ecological scenes (e.g., waterweeds, reefs, and holothurian spines) at different scales. GT then uses semantic information from high-level layers, such as large reefs, as an aid in detecting holothurians and increases the model’s attention to holothurians in such scenes. To a certain extent, GT improves detection accuracy for holothurians whose semantic information is insufficient. The specific formulas for GT are given in Appendix B.
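The grounding transformer can be sketched in the same style. Here the queries come from the lower-level, higher-resolution map (for example X2) and the keys and values come from the higher-level map (for example X1), so each fine position gathers semantic context from the coarse map while the output keeps the fine map's size. As we understand the FPT paper, GT scores query-key pairs by negative squared Euclidean distance rather than a dot product, and the sketch does the same. The projections are random stand-ins; see Appendix B for the formulation used in this paper.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def grounding_transformer(x_fine, x_coarse, wq, wk, wv):
    """Queries from x_fine (C, Hf, Wf); keys/values from x_coarse (C, Hc, Wc). Output: (C, Hf, Wf)."""
    c, hf, wf = x_fine.shape
    q = x_fine.reshape(c, -1).T @ wq  # (Nf, C)
    coarse_tokens = x_coarse.reshape(c, -1).T  # (Nc, C)
    k, v = coarse_tokens @ wk, coarse_tokens @ wv
    # Similarity = -||q_i - k_j||^2, expanded to avoid a large broadcast.
    sq_dist = (q ** 2).sum(1)[:, None] - 2.0 * q @ k.T + (k ** 2).sum(1)[None, :]
    attn = softmax(-sq_dist, axis=1)  # (Nf, Nc): fine position -> coarse positions
    out = attn @ v
    return out.T.reshape(c, hf, wf), attn


rng = np.random.default_rng(1)
C = 8
x2 = rng.standard_normal((C, 8, 8))  # mid-level map (finer)
x1 = rng.standard_normal((C, 4, 4))  # high-level map (coarser)
wq, wk, wv = (0.1 * rng.standard_normal((C, C)) for _ in range(3))
gt_out, attn = grounding_transformer(x2, x1, wq, wk, wv)
print(gt_out.shape, attn.shape)  # (8, 8, 8) (64, 16)
```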

2.3.3. Rendering Transformer

RT (rendering transformer) [21] is a feature-fusion module that uses pixel-level information from the bottom of the network to render information at the middle and upper levels of the network. Unlike ST and GT, RT uses local spatial-feature interactions: because non-local spatial features from different scales are too far apart, capturing the relationships between them is of little value. In the underwater holothurian-detection task, RT enhances the model’s attention to detail features such as holothurian spines, thus improving the model’s accuracy in detecting holothurians with fuzzy body features. The specific formulas for RT are given in Appendix B.
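A simplified sketch of the rendering transformer's local interaction is given below. Following our reading of the FPT paper, the higher-level map (for example X2) acts as the query and the lower-level, higher-resolution map (for example X3) supplies the keys and values: the query is reweighted channel-wise by the globally pooled key, and the value is down-sampled to the query's size so that each coarse position only sees its own patch of the fine map. Our simplifications are assumptions: a 2 × 2 average pooling stands in for the learned stride-2 3 × 3 convolution, the learned 3 × 3 convolutions are omitted, and the scale factor between the two levels is 2.

```python
import numpy as np


def rendering_transformer(x_coarse, x_fine, factor=2):
    """x_coarse: (C, Hc, Wc) query map; x_fine: (C, Hc * factor, Wc * factor) key/value map."""
    c, hc, wc = x_coarse.shape
    channel_w = x_fine.mean(axis=(1, 2))  # global average pooling of K: (C,)
    q_att = x_coarse * channel_w[:, None, None]  # channel-wise reweighting of Q
    # Local down-sampling of V: each coarse pixel averages only its own factor x factor patch.
    v_down = x_fine.reshape(c, hc, factor, wc, factor).mean(axis=(2, 4))
    return q_att + v_down


x2 = 2.0 * np.ones((4, 4, 4))  # mid-level map (coarser)
x3 = np.ones((4, 8, 8))        # low-level map (finer)
print(rendering_transformer(x2, x3)[0, 0, 0])  # 2 * 1 + 1 = 3.0
```

The locality is visible if one fine pixel is raised and another lowered by the same amount (so the channel mean, and hence the channel weights, stay fixed): only the two coarse positions whose patches contain those pixels change.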

2.4. AFF Module

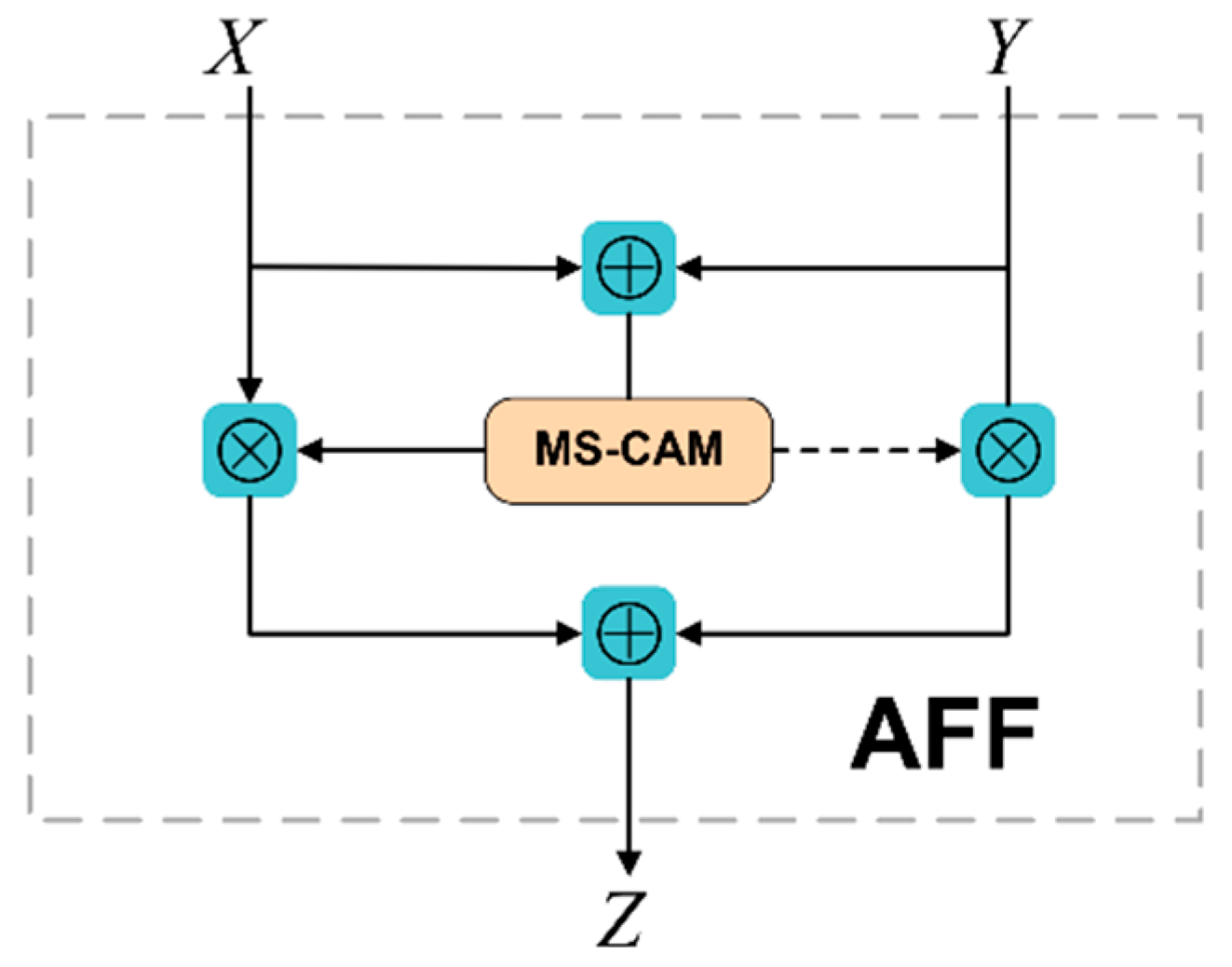

Conventionally, the output of FPT would be fused with the output of the deconvolution module (neck) by concatenation (“Concat”). However, this simple linear fusion is not the best way to integrate features that differ widely in semantics and scale. As presented in Figure 1, the proposed method replaces the original “Concat” feature fusion with an AFF (attentional feature fusion) module. This approach better integrates holothurian features with different semantics and scales, thus improving the accuracy of holothurian detection.

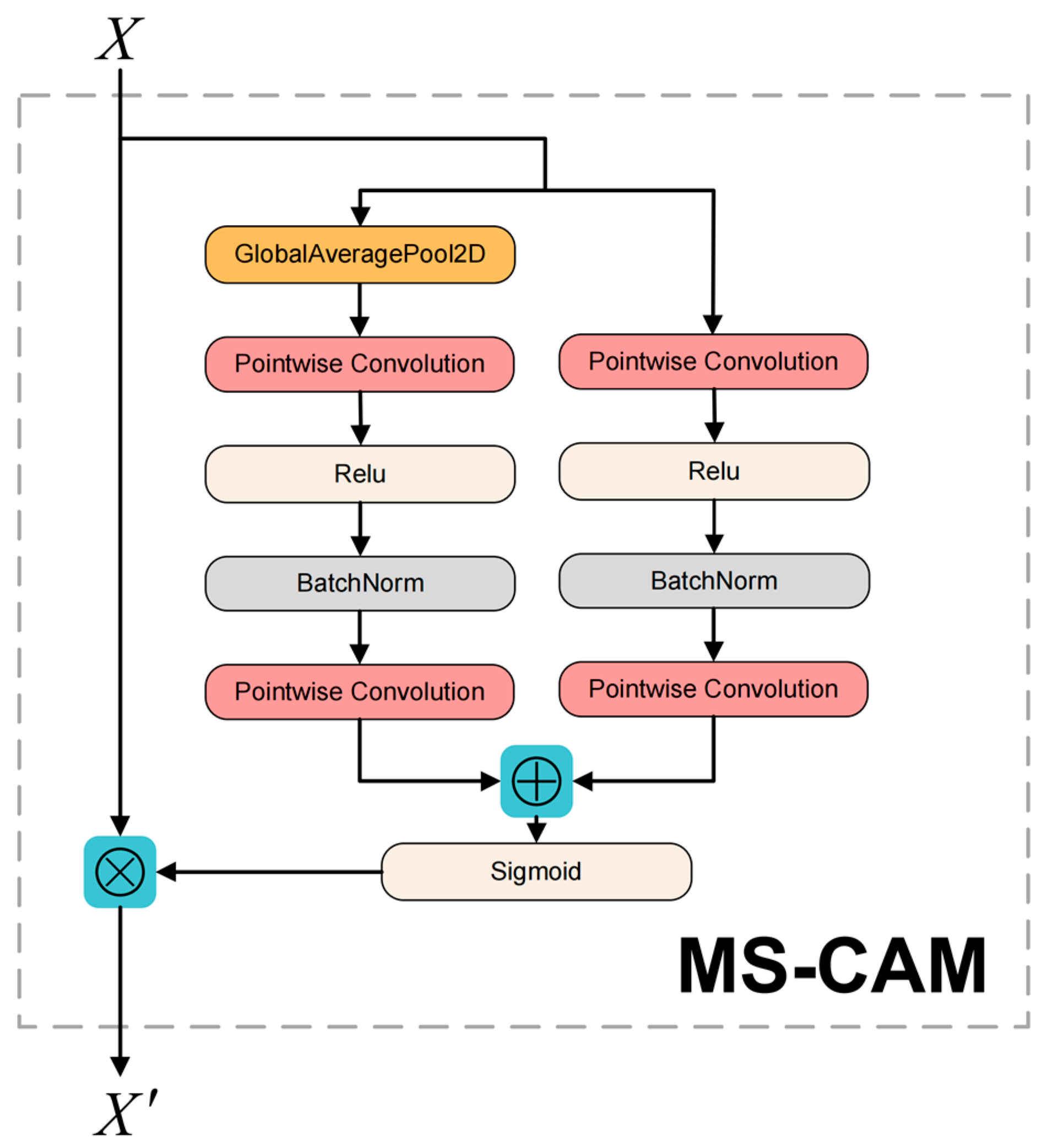

The core of AFF [23] (attentional feature fusion) is the multi-scale channel attention module (MS-CAM), whose structure is presented in Figure 6. Compared with SENet, MS-CAM extracts channel attention not only from global features but also from local features, and therefore carries richer attention information. MS-CAM combines a global branch with a local branch; the global branch differs from the local branch by an additional global average pooling layer. The global branch extracts attention from global features, helping the model distinguish holothurian features from the large-scale seafloor environment. The local branch uses pointwise convolution to extract channel attention for local features, which helps strengthen the model’s focus on local holothurian features (e.g., holothurian spines).
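The two-branch structure of MS-CAM can be sketched as follows. Both branches are a bottleneck of two pointwise (1 × 1) convolutions with a ReLU in between; the global branch applies it to the globally average-pooled vector, the local branch to every spatial position, and the two outputs are added and passed through a sigmoid. Batch normalization is omitted, the weights are random stand-ins, and the reduction ratio of 4 is an assumption.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def pointwise_bottleneck(x, w1, w2):
    """1 x 1 conv (C -> C/r), ReLU, 1 x 1 conv (C/r -> C) at every position of x: (C, H, W)."""
    hidden = np.maximum(np.einsum("rc,chw->rhw", w1, x), 0.0)
    return np.einsum("cr,rhw->chw", w2, hidden)


def ms_cam(x, local_w, global_w):
    """Multi-scale channel attention: weights in [0, 1] with the same shape as x."""
    local = pointwise_bottleneck(x, *local_w)  # (C, H, W): varies with position
    glob = pointwise_bottleneck(x.mean(axis=(1, 2), keepdims=True), *global_w)  # (C, 1, 1)
    return sigmoid(local + glob)  # global term broadcast over H, W


rng = np.random.default_rng(0)
C, r = 16, 4


def random_branch():
    """Hypothetical random weights for one bottleneck branch."""
    return 0.5 * rng.standard_normal((C // r, C)), 0.5 * rng.standard_normal((C, C // r))


x = rng.standard_normal((C, 8, 8))
m = ms_cam(x, random_branch(), random_branch())
print(m.shape)  # (16, 8, 8)
```

Unlike SE weights, which are constant over each channel, these weights vary with spatial position through the local branch.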

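When AFF modules are used in feature pyramid structures, the input feature X is the low-level detail feature from the FPT output, and the input feature Y is the high-level semantic feature from the neck network. Based on MS-CAM, AFF can be expressed as follows:

$$
\mathbf{Z} = \mathbf{M}(\mathbf{X} \uplus \mathbf{Y}) \otimes \mathbf{X} + \left(1 - \mathbf{M}(\mathbf{X} \uplus \mathbf{Y})\right) \otimes \mathbf{Y}
$$

where $\mathbf{Z}$ represents the output features of the AFF module; $\mathbf{M}(\cdot)$ represents the MS-CAM module; $\uplus$ represents the initial feature integration; and $\otimes$ represents element-wise multiplication.

Figure 7 presents the structure of the AFF module. The dotted line represents $1 - \mathbf{M}(\mathbf{X} \uplus \mathbf{Y})$. The fusion weights $\mathbf{M}(\mathbf{X} \uplus \mathbf{Y})$ and $1 - \mathbf{M}(\mathbf{X} \uplus \mathbf{Y})$ both consist of real numbers between 0 and 1. The advantage of this design is that the model learns the weighting between $\mathbf{X}$ and $\mathbf{Y}$ through its own training.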
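The fusion rule reduces to a few lines once an attention function is available. In the sketch below, `attention` stands for MS-CAM; to keep the example self-contained we pass a hypothetical, parameter-free stand-in (a sigmoid of the local value plus the channel mean), which is purely illustrative. The initial integration X ⊎ Y is taken to be element-wise addition, as in the original AFF. Because the weights lie between 0 and 1, every output element is a convex combination of the corresponding elements of X and Y.

```python
import numpy as np


def aff(x, y, attention):
    """Attentional feature fusion: Z = M(X + Y) * X + (1 - M(X + Y)) * Y, element-wise."""
    m = attention(x + y)  # initial integration by element-wise addition, then attention
    return m * x + (1.0 - m) * y


def toy_attention(s):
    """Hypothetical, parameter-free stand-in for MS-CAM: local value plus per-channel mean."""
    return 1.0 / (1.0 + np.exp(-(s + s.mean(axis=(1, 2), keepdims=True))))


rng = np.random.default_rng(0)
x = rng.standard_normal((16, 32, 32))  # low-level detail feature (FPT output)
y = rng.standard_normal((16, 32, 32))  # high-level semantic feature (neck)
z = aff(x, y, toy_attention)
print(np.all((z >= np.minimum(x, y) - 1e-12) & (z <= np.maximum(x, y) + 1e-12)))  # True
```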

4. Conclusions

At present, holothurians are still caught mainly by hand. Because underwater images suffer from noise, low contrast, and color distortion, intelligent holothurian-fishing robots face various technical difficulties that hinder their adoption. To avoid casualties in holothurian fishing and to promote the technological development of intelligent holothurian-fishing robots, this paper proposed FA-CenterNet, a method that combines high precision with a lightweight design. The proposed method performed well on the CURPC 2020 dataset. Compared with other underwater target-detection methods, it improves the detection accuracy for fuzzy, small, and overlapping holothurians, while keeping Params and FLOPs low.

(1) To address the limited resources of embedded equipment, the high-performance EfficientNet-B3 was used as the backbone network. EfficientNet-B3 significantly reduced the model’s Params and FLOPs, making the model easier to deploy on embedded devices such as holothurian-fishing robots.

(2) The FPT module was added to address the difficulty of detecting holothurians caused by the complex underwater environment and the fuzzy features of holothurians. The FPT module fully integrates features of holothurian scenes (e.g., waterweeds, reefs, and holothurian spines) across scales and spatial positions, improving the detection of holothurians with fuzzy features and with bodies that closely resemble the background. We also modified the original FPT, which uses a single feature combination, by adopting two FPT modules with different feature combinations as inputs; this allows the model to integrate more ecological scene information for holothurian detection.

(3) To better integrate the semantically different features of the FPT output and the neck layers, we proposed a feature-fusion method based on the AFF module. Compared with the “Concat” fusion in the conventional FPN structure, the AFF module simultaneously enhances the model’s attention to global and local features, effectively fuses shallow and deep features, and improves the detection accuracy for holothurians.

(4) The proposed method focuses on identifying and locating underwater holothurians. The results show that FA-CenterNet achieves an AP50 of 83.43%, with 15.90 M Params and 25.12 G FLOPs, on the CURPC 2020 underwater target-detection dataset. AP50 reflects the model’s ability to detect holothurians, while Params and FLOPs reflect the memory and computing power required by the model, respectively. Compared with other underwater target-detection methods, FA-CenterNet achieves a good balance among detection accuracy, Params, and FLOPs, and its performance makes it suitable for real-world underwater holothurian-detection missions.
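As a rough illustration of what 15.90 M Params means for an embedded device, the sketch below computes the storage needed for the weights alone at 32-bit and 16-bit precision. It ignores activations, buffers, and runtime overhead, which also consume memory during inference, so the figure is a lower bound rather than a measured footprint.

```python
def weight_megabytes(num_params, bytes_per_param=4):
    """Storage for model weights only, in megabytes (10**6 bytes)."""
    return num_params * bytes_per_param / 1e6


params = 15.90e6  # FA-CenterNet Params as reported above
print(f"FP32: {weight_megabytes(params):.1f} MB, FP16: {weight_megabytes(params, 2):.1f} MB")
# FP32: 63.6 MB, FP16: 31.8 MB
```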

The proposed method can help promote the development of intelligent holothurian-fishing robots and is of great significance for the further intelligent development of shallow-sea fisheries. Future research should focus on improving the model’s FPS by further optimizing its structure, to achieve a better balance among AP50, Params, FLOPs, and FPS.