Smartphone-Based Localization for Passengers Commuting in Traffic Hubs

Abstract

1. Introduction

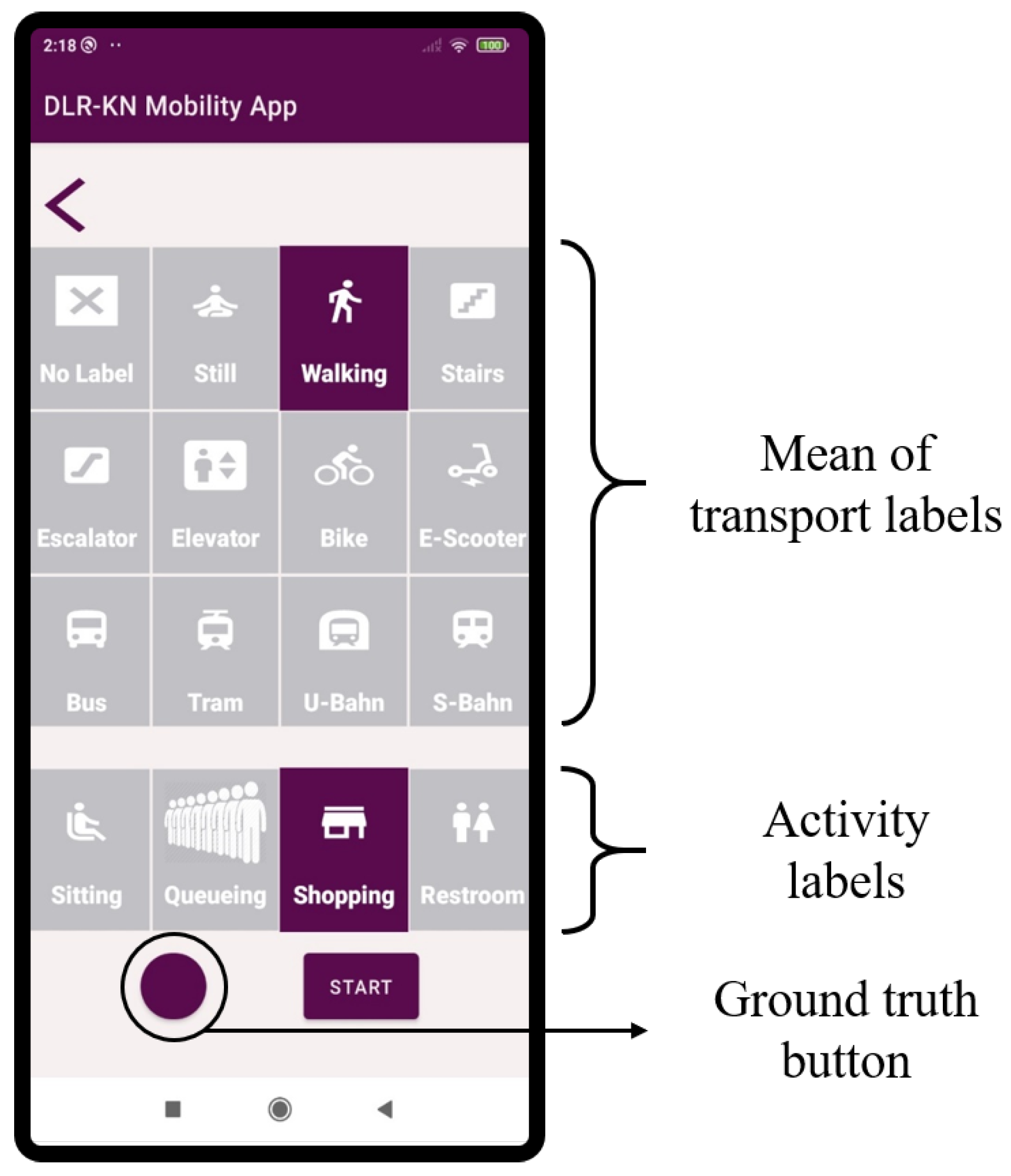

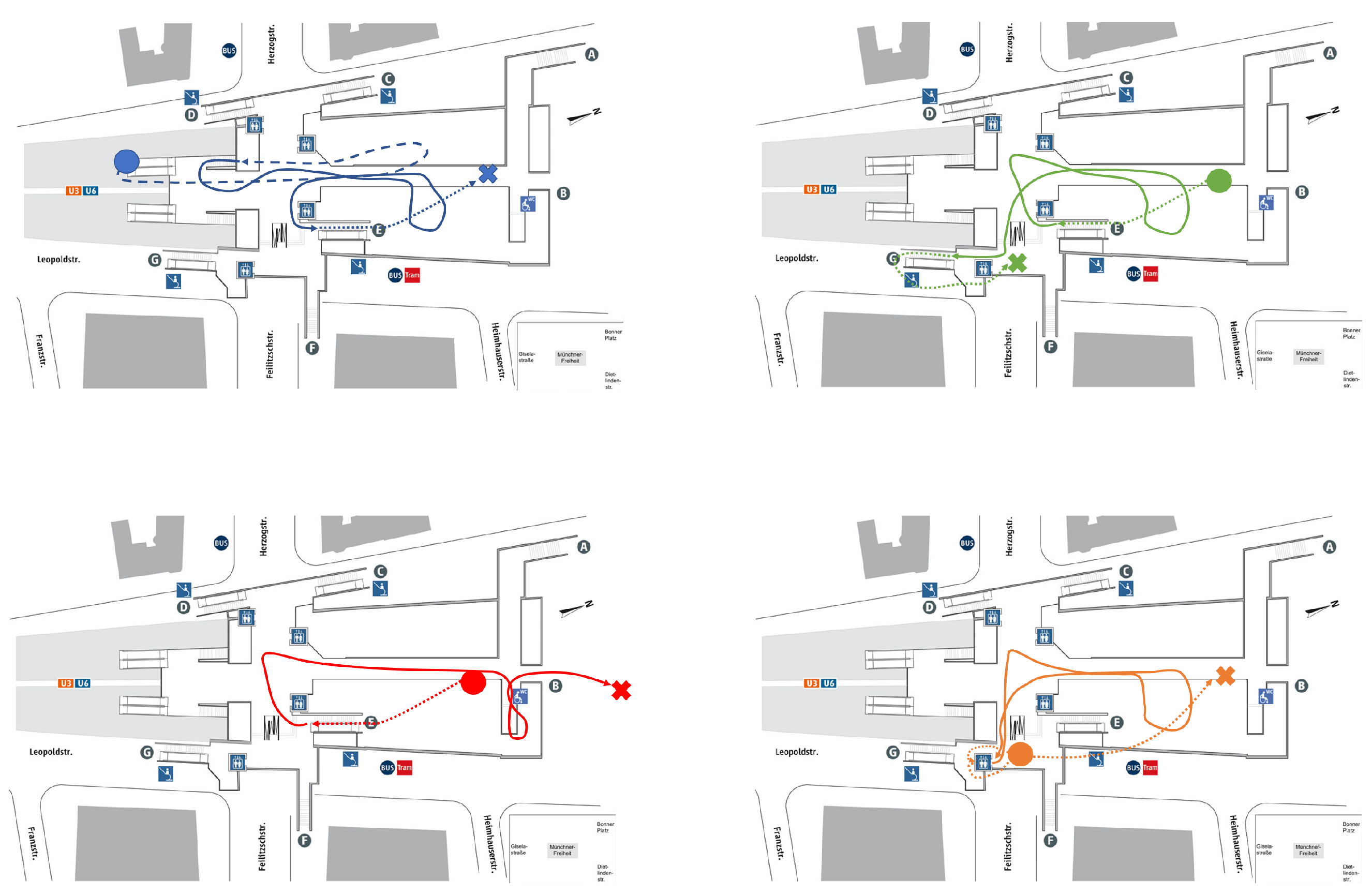

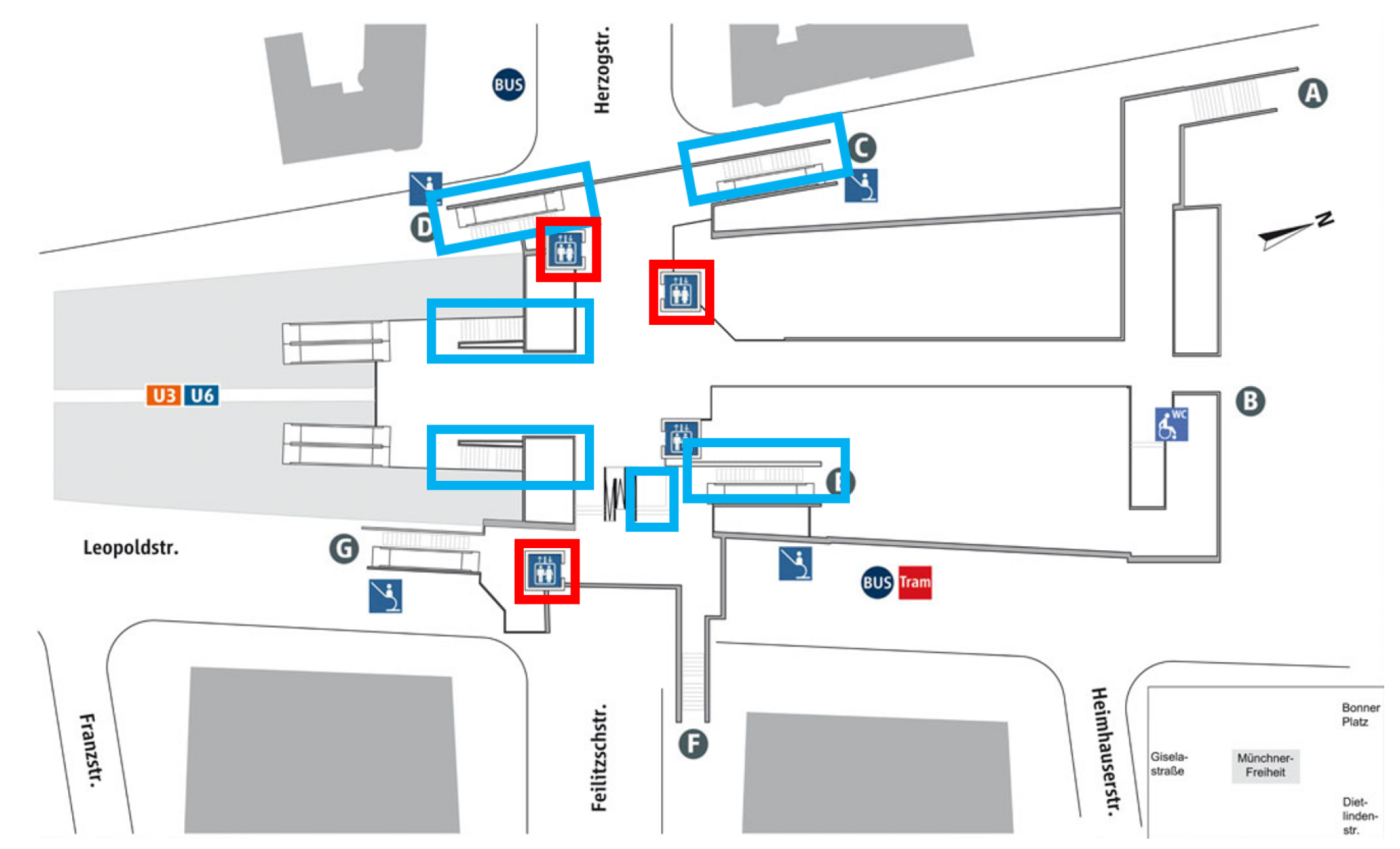

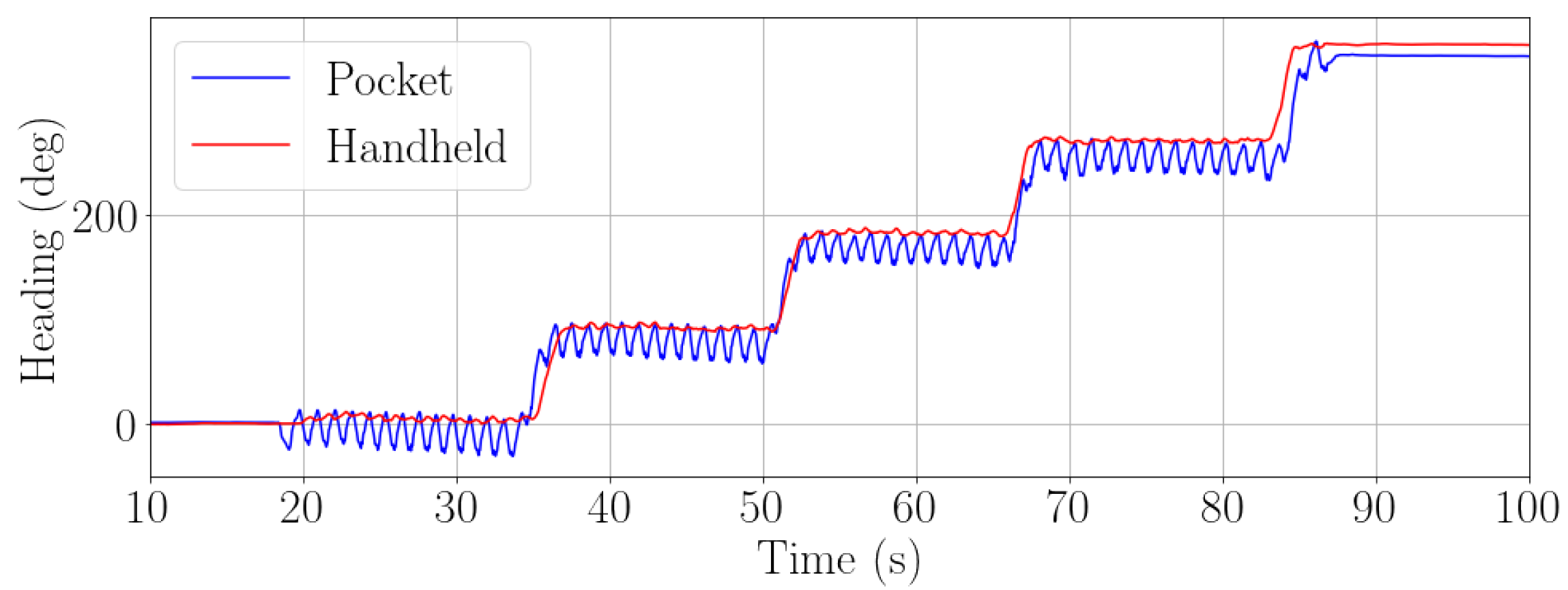

2. Data Collection

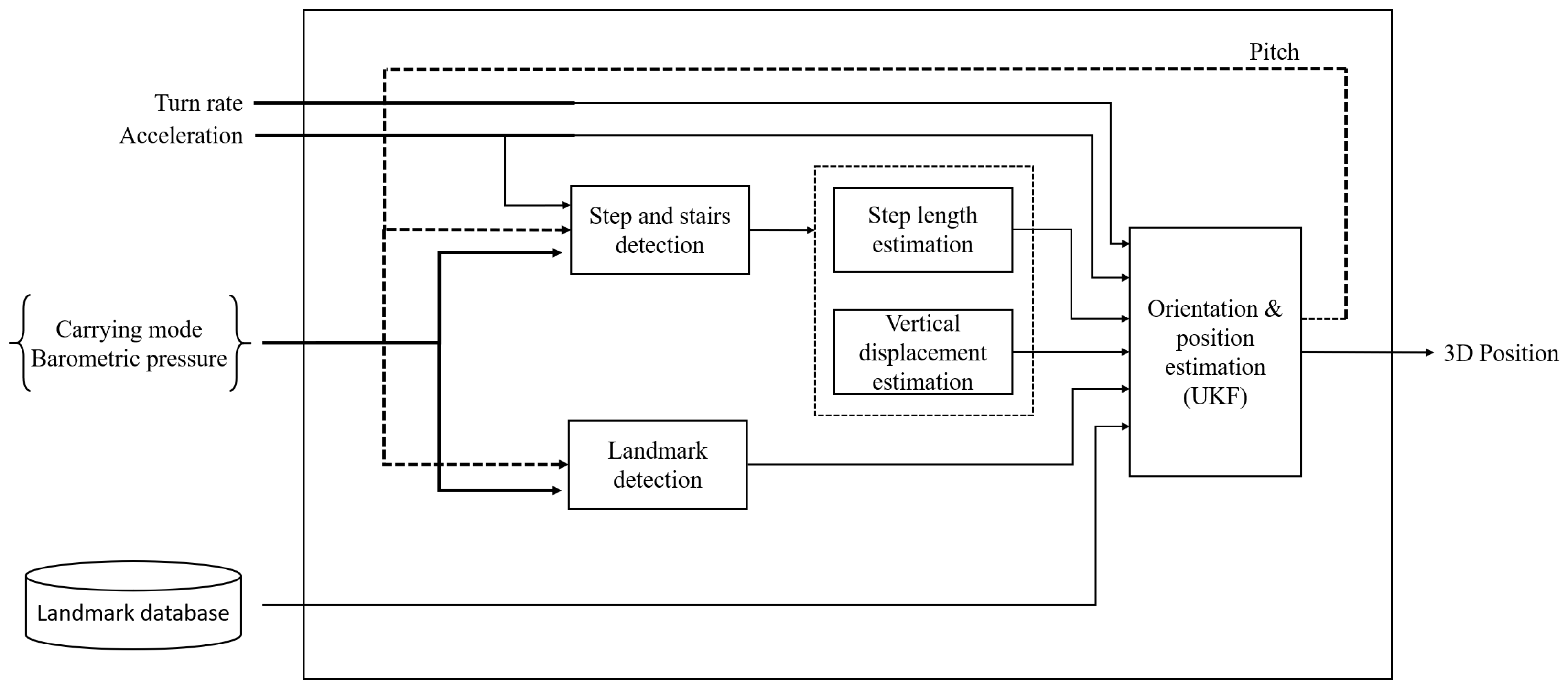

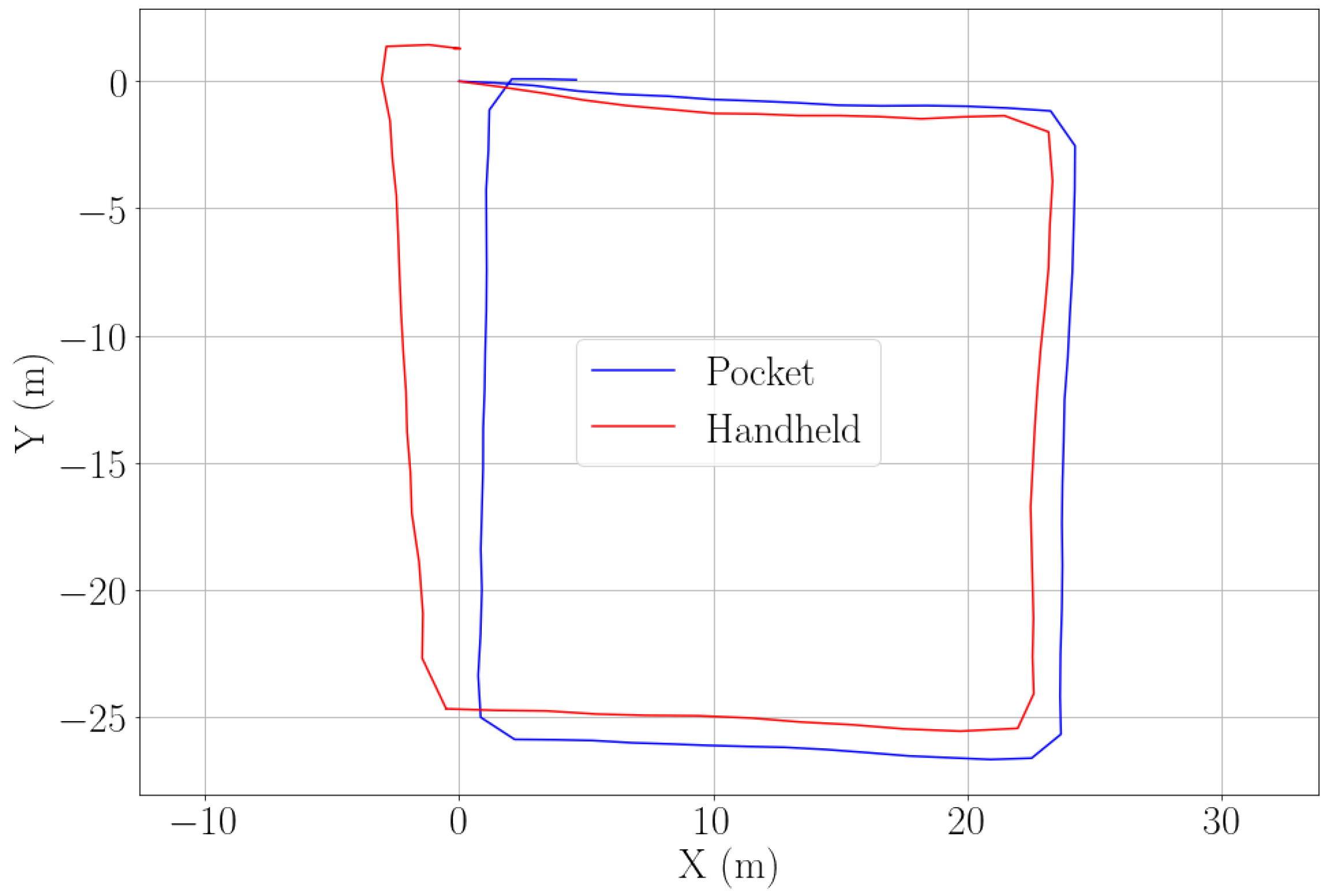

3. Passenger Localization

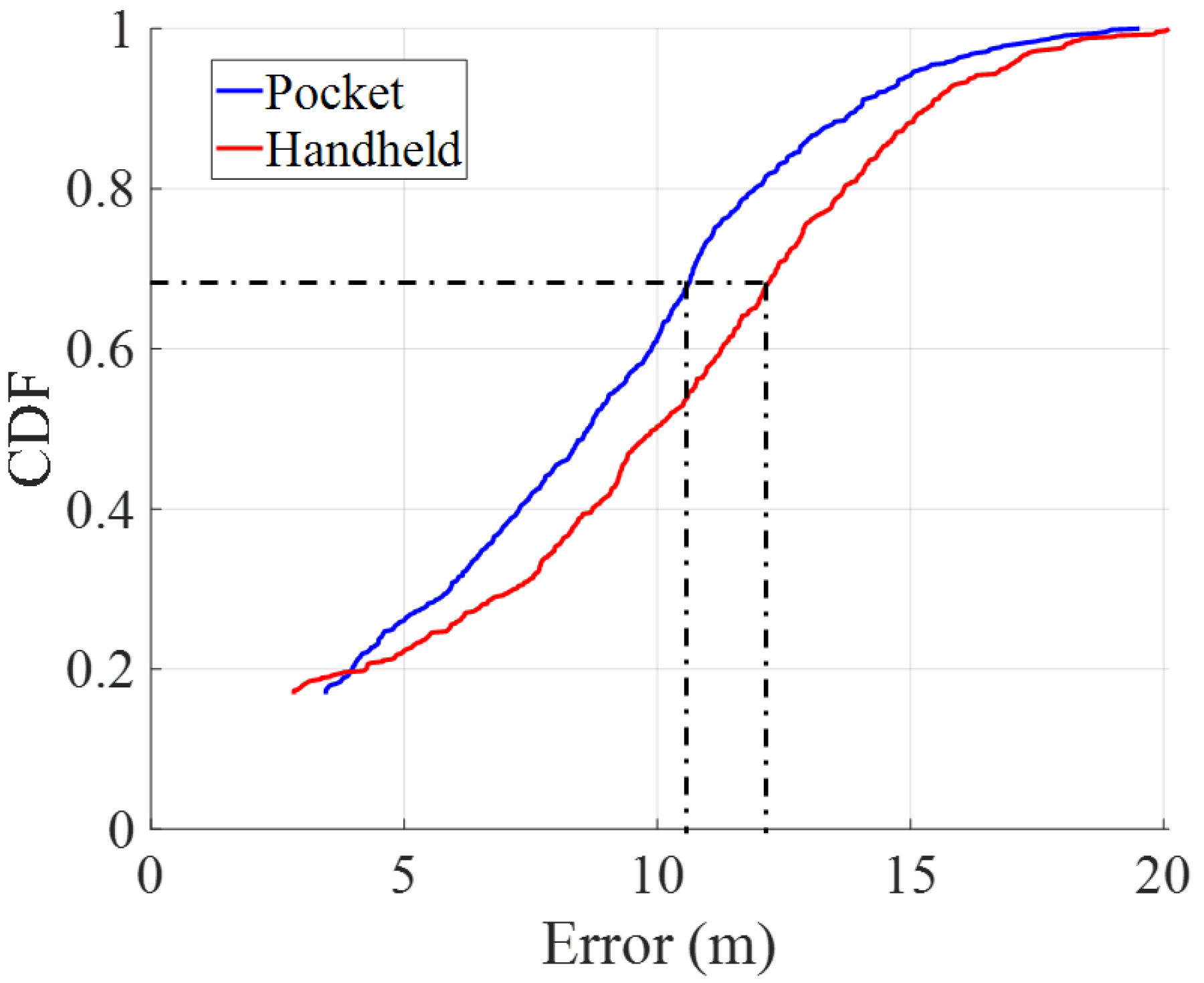

- In the front pocket of the trousers;

- Held in the hand.

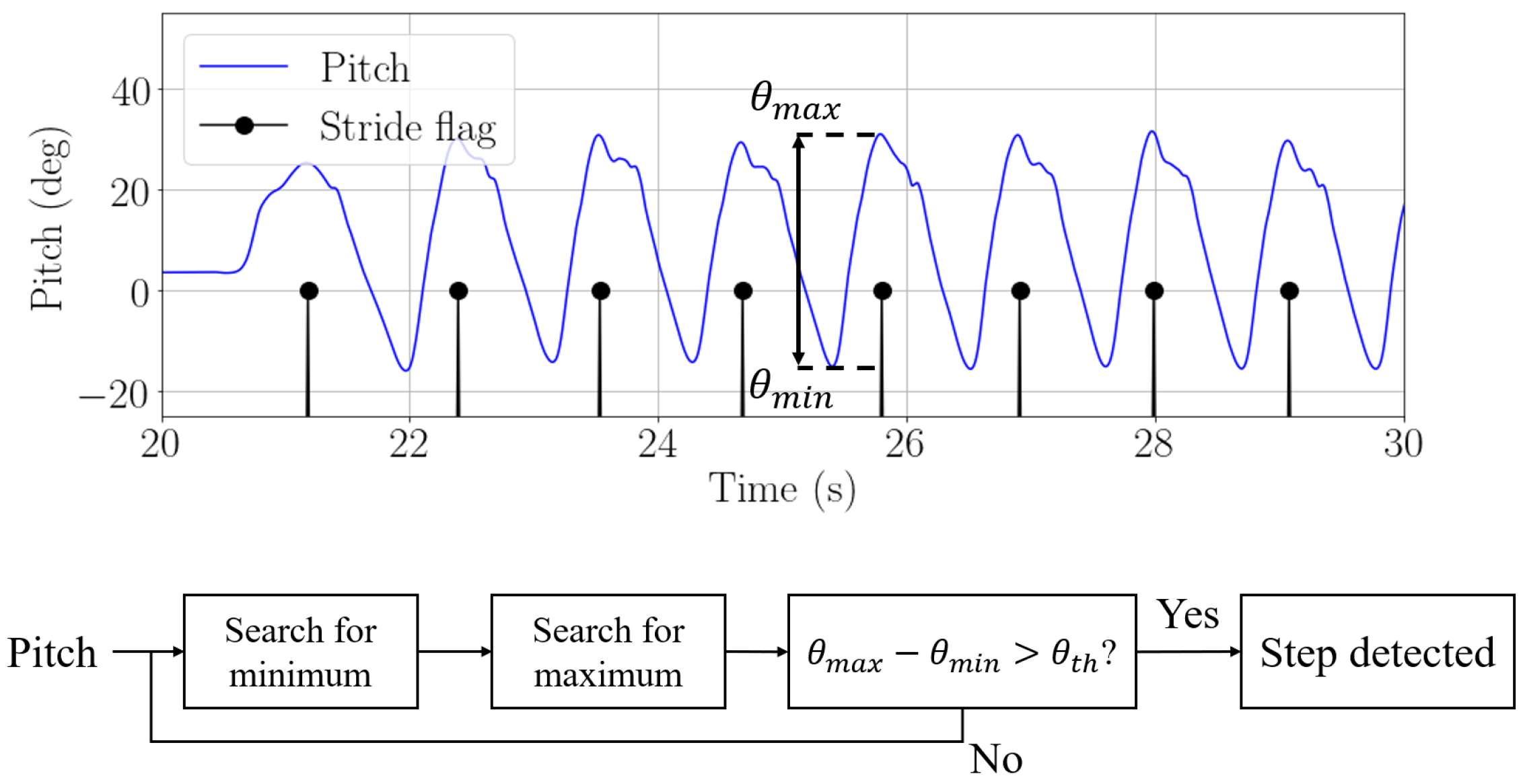

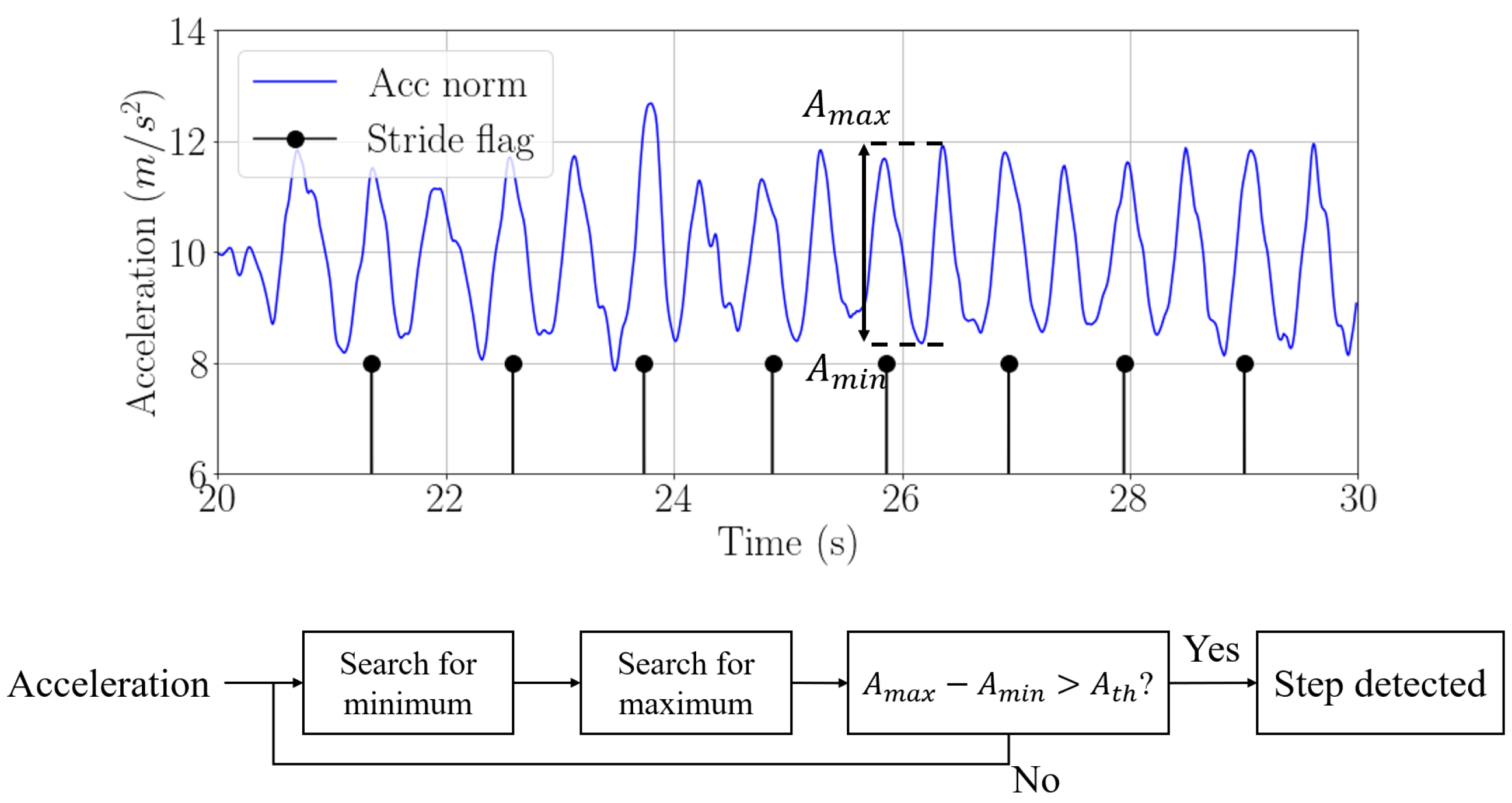

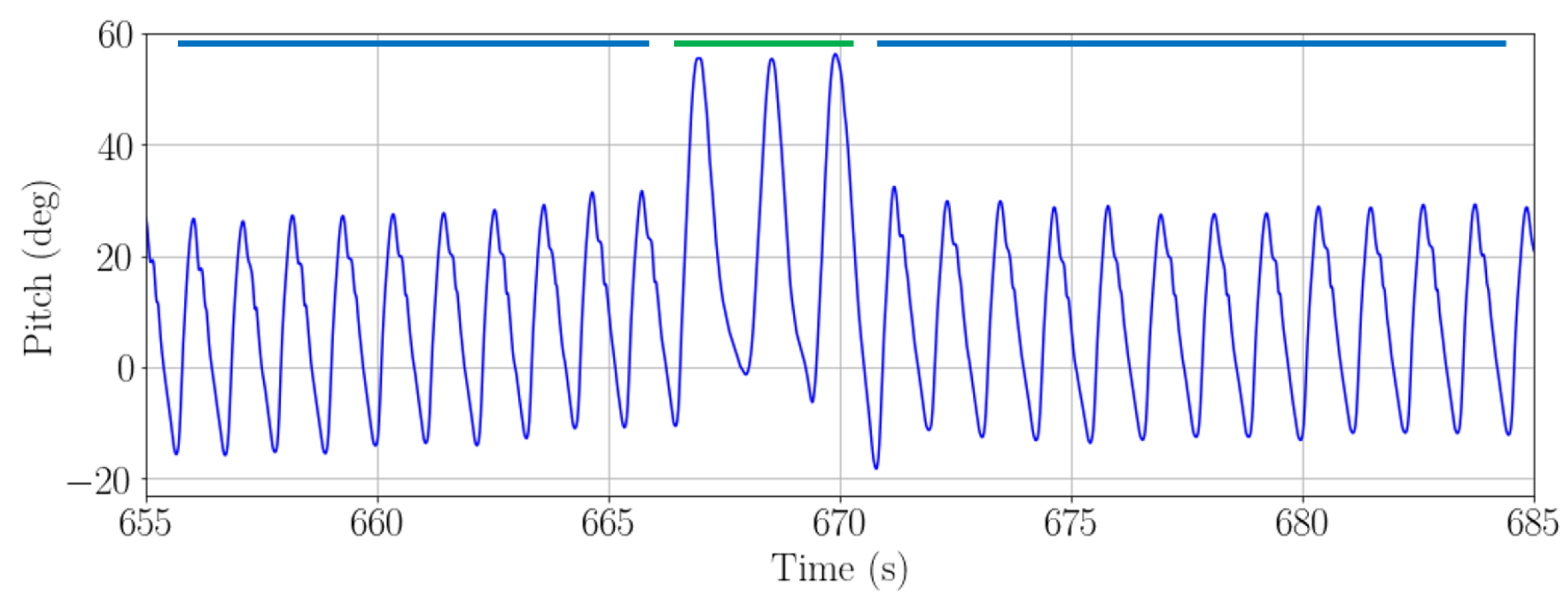

3.1. Step Detection

3.2. Step Length Estimation

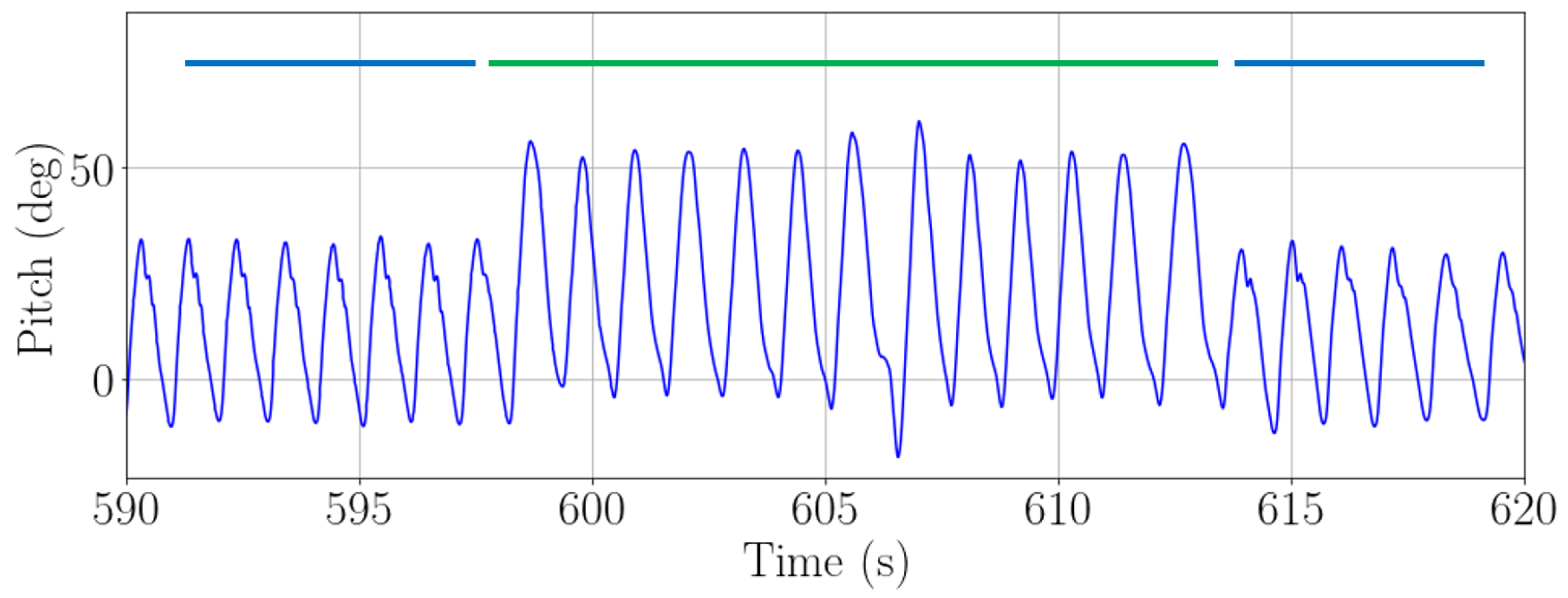

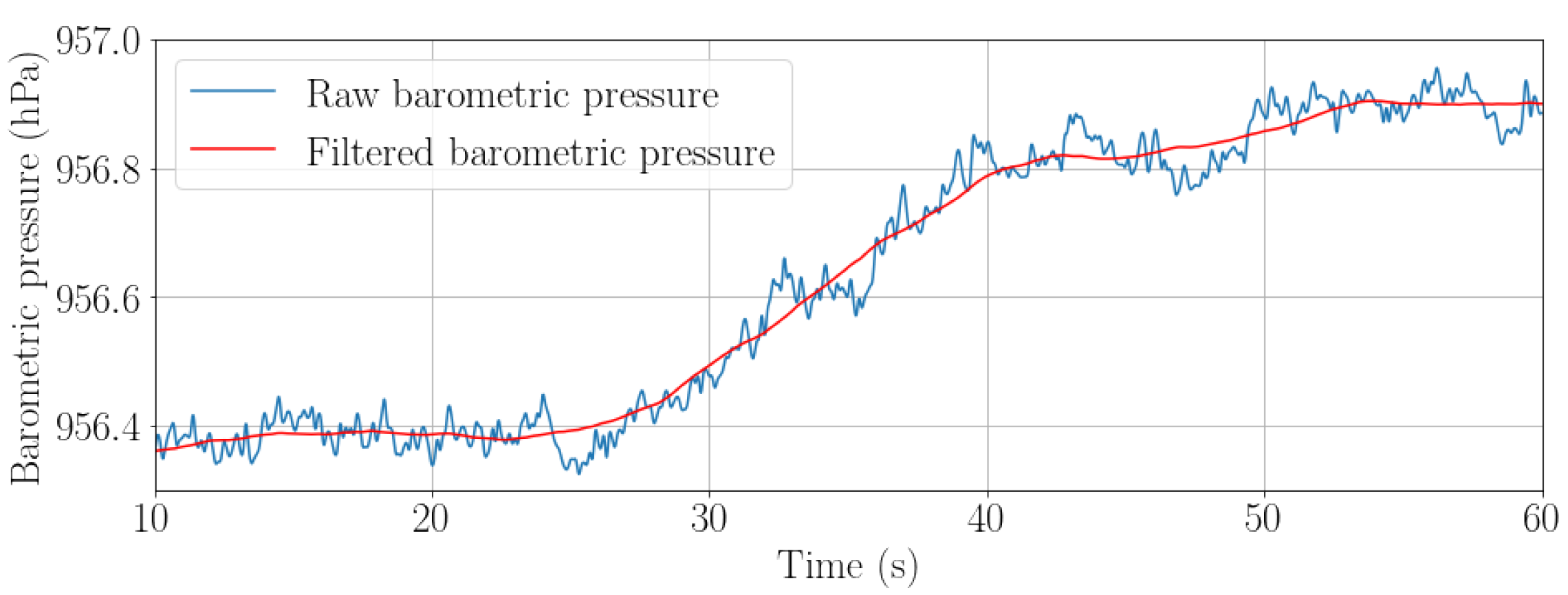

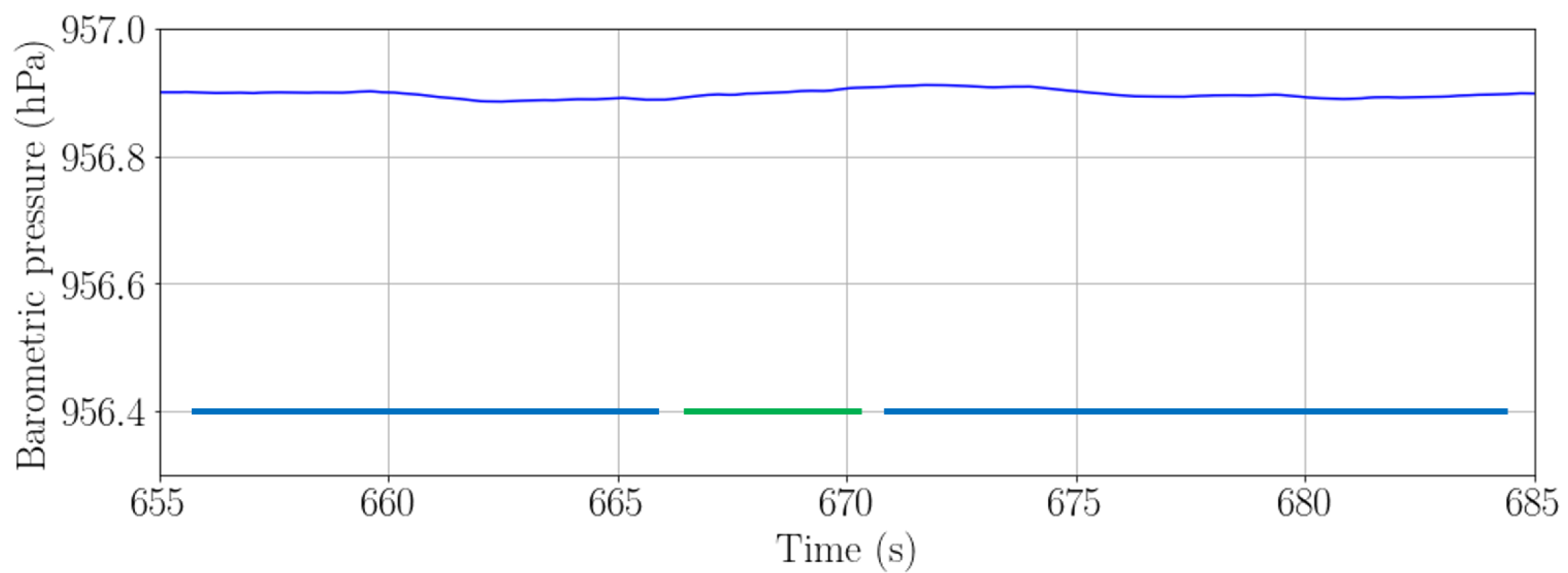

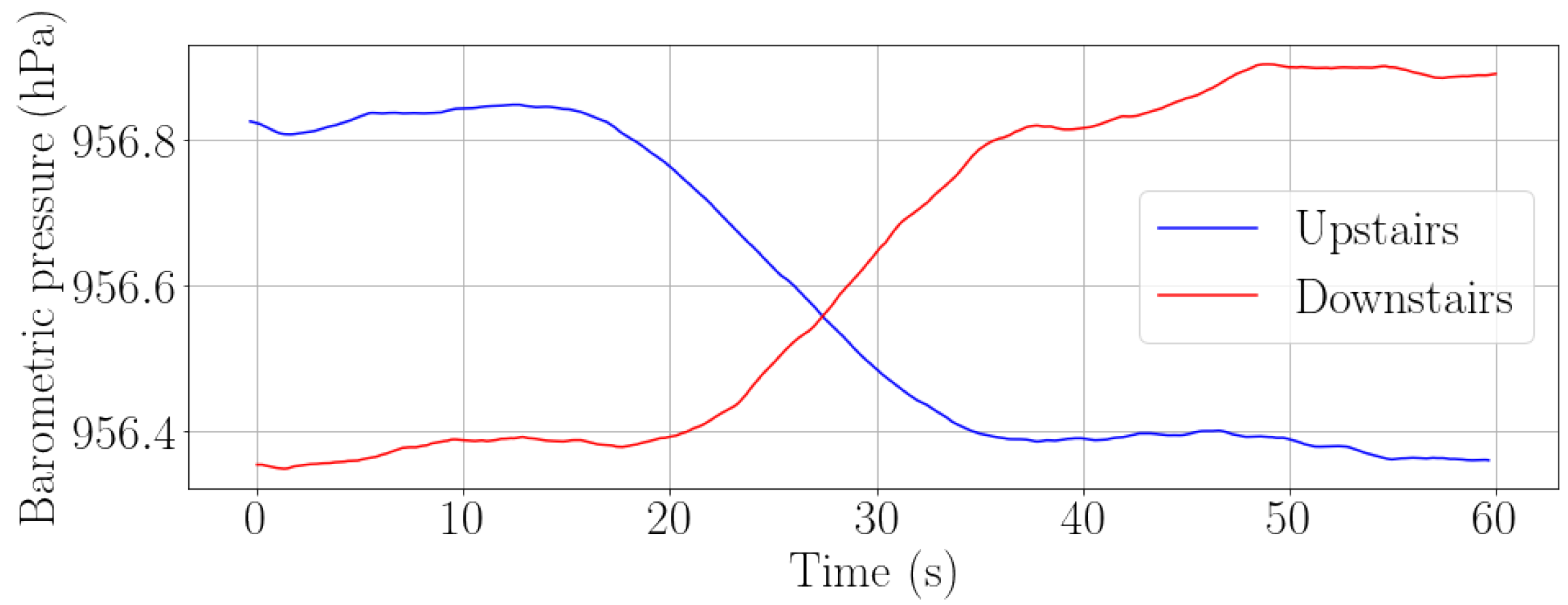

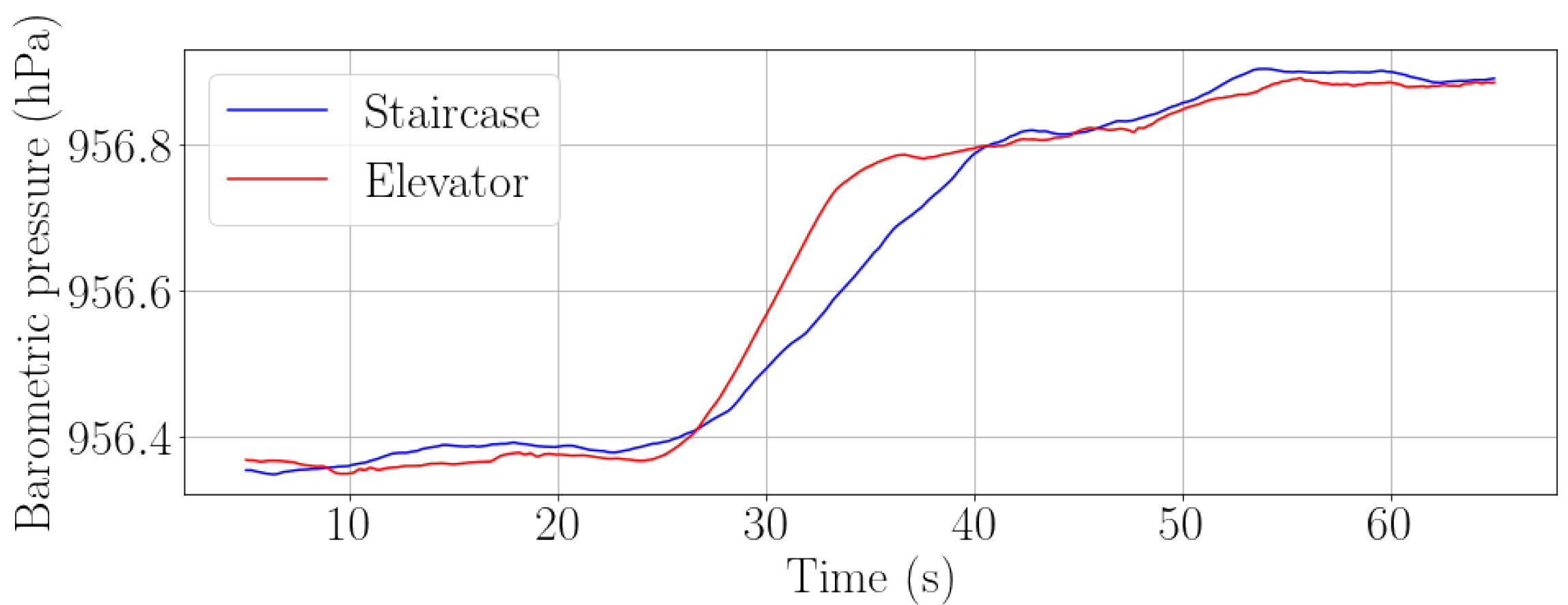

3.3. Vertical Displacement Estimation

3.4. Landmark Detection and Association

3.5. Orientation and Position Estimation

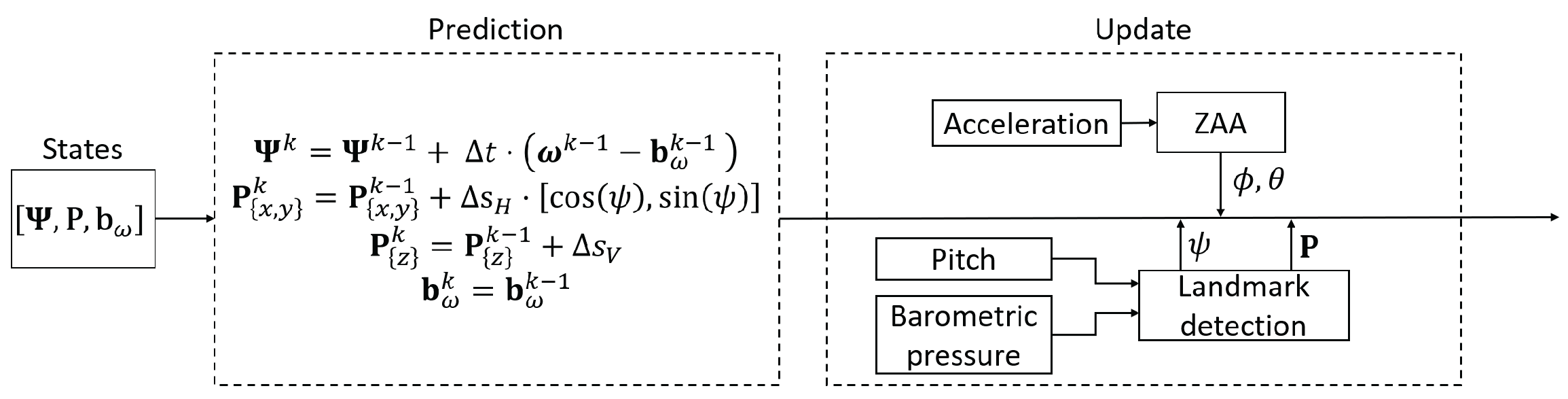

- The Euler angles;

- The position vector;

- The gyroscope bias.

- Zero Acceleration Assumption update (ZAA): This update is based on the detection of periods when the acceleration of the smartphone is zero or quasi-zero. During these periods, the accelerometers measure only gravity, so the roll and pitch angles used to update the UKF can be estimated from the acceleration measured by the smartphone in the X, Y and Z directions at instant k.

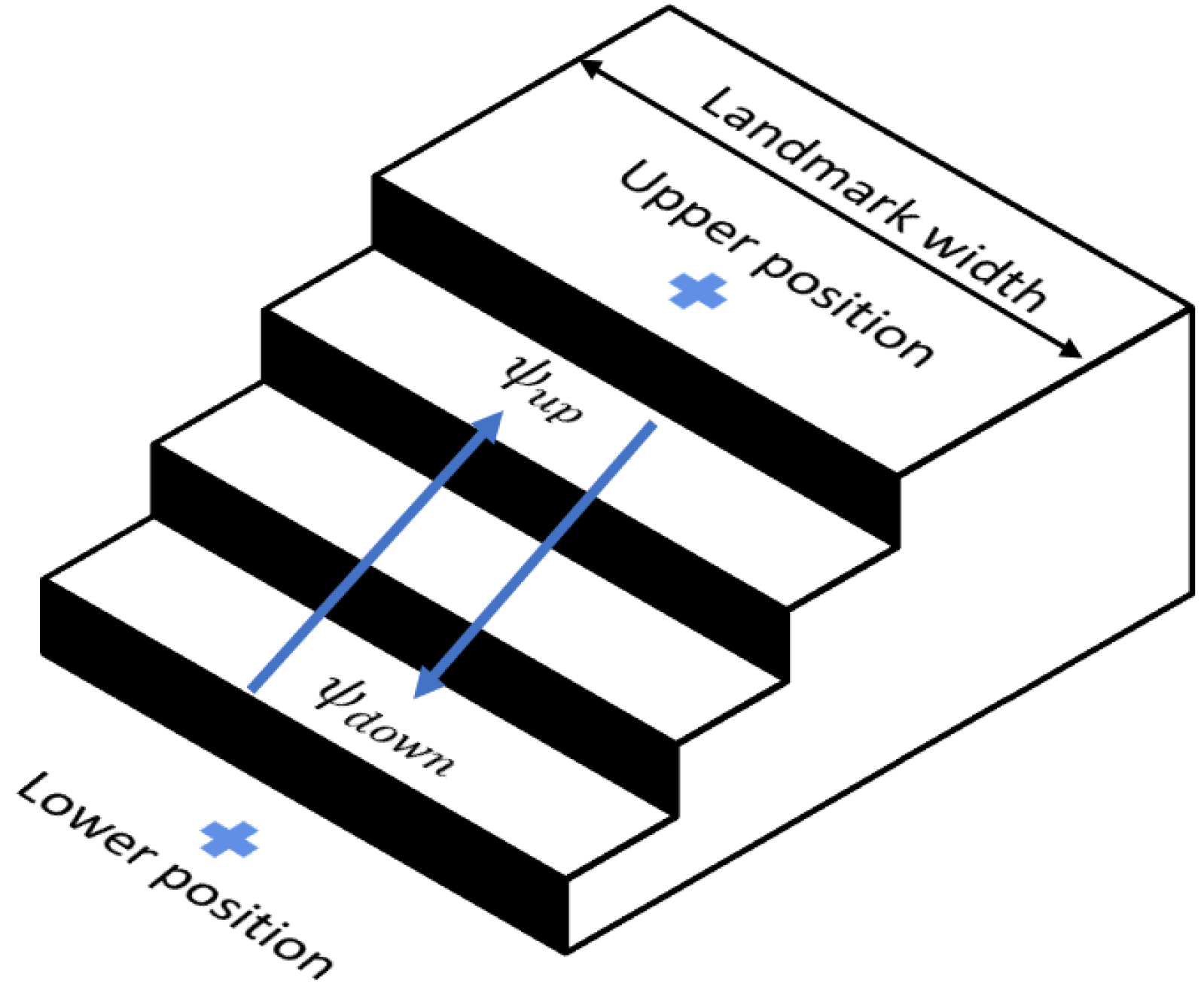

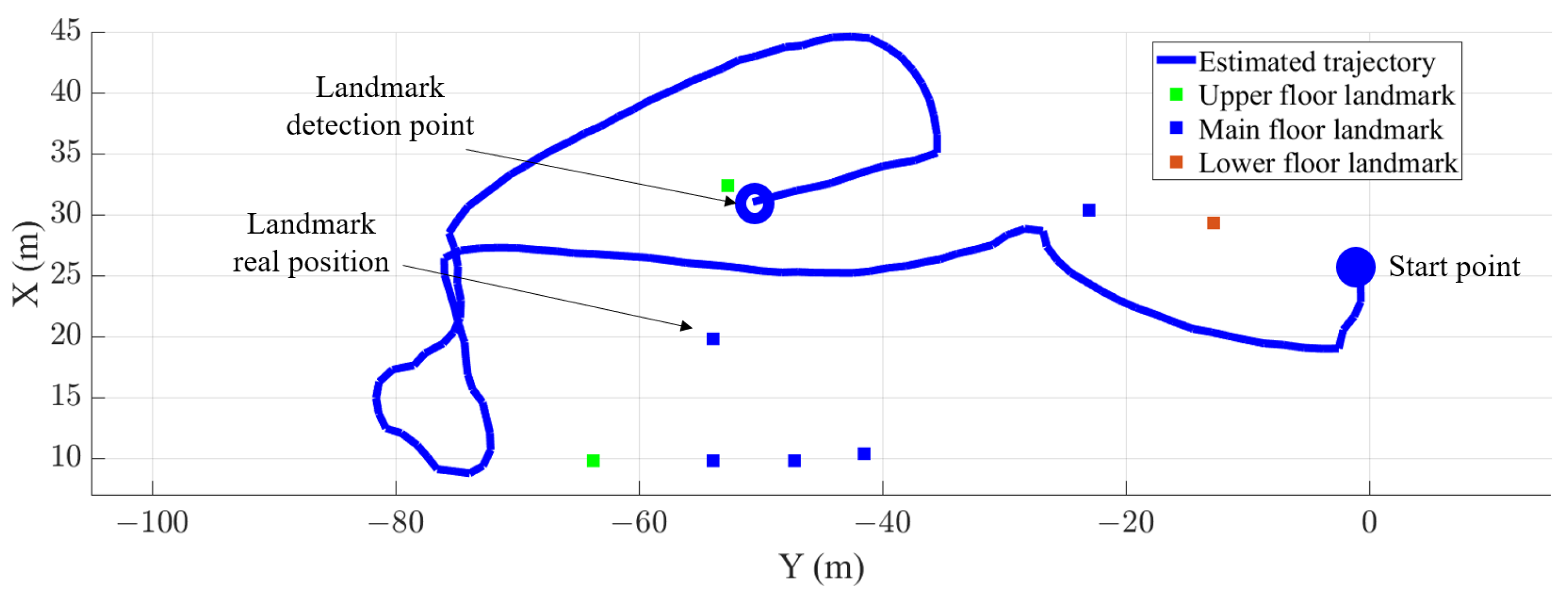

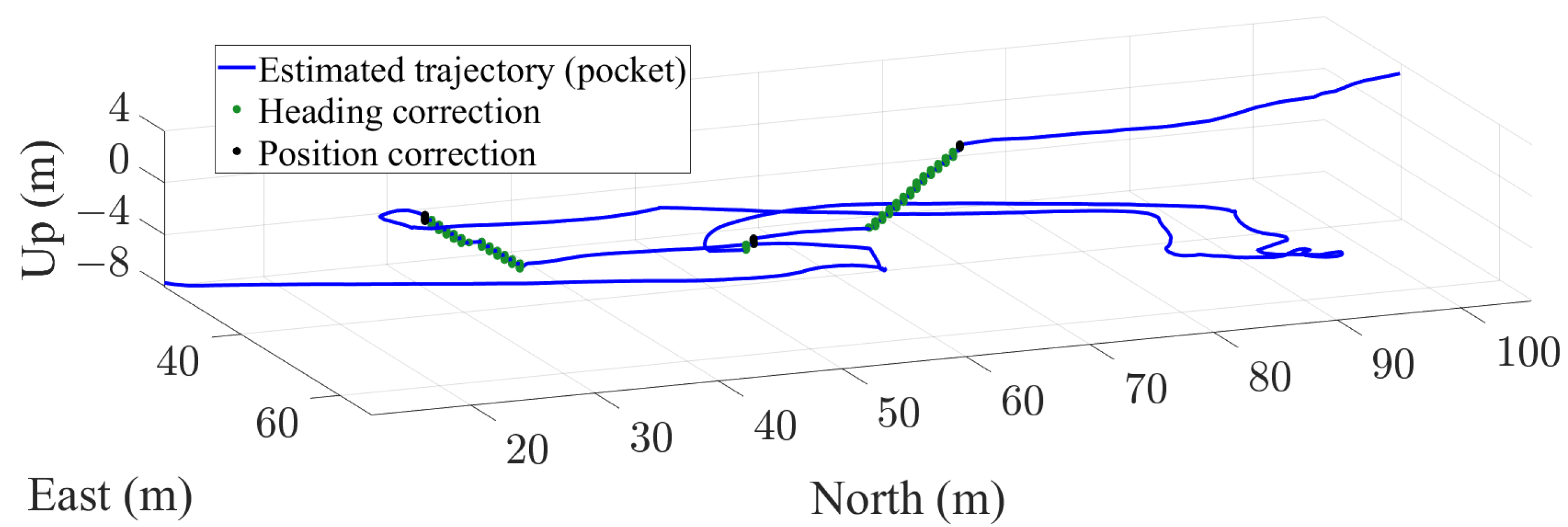

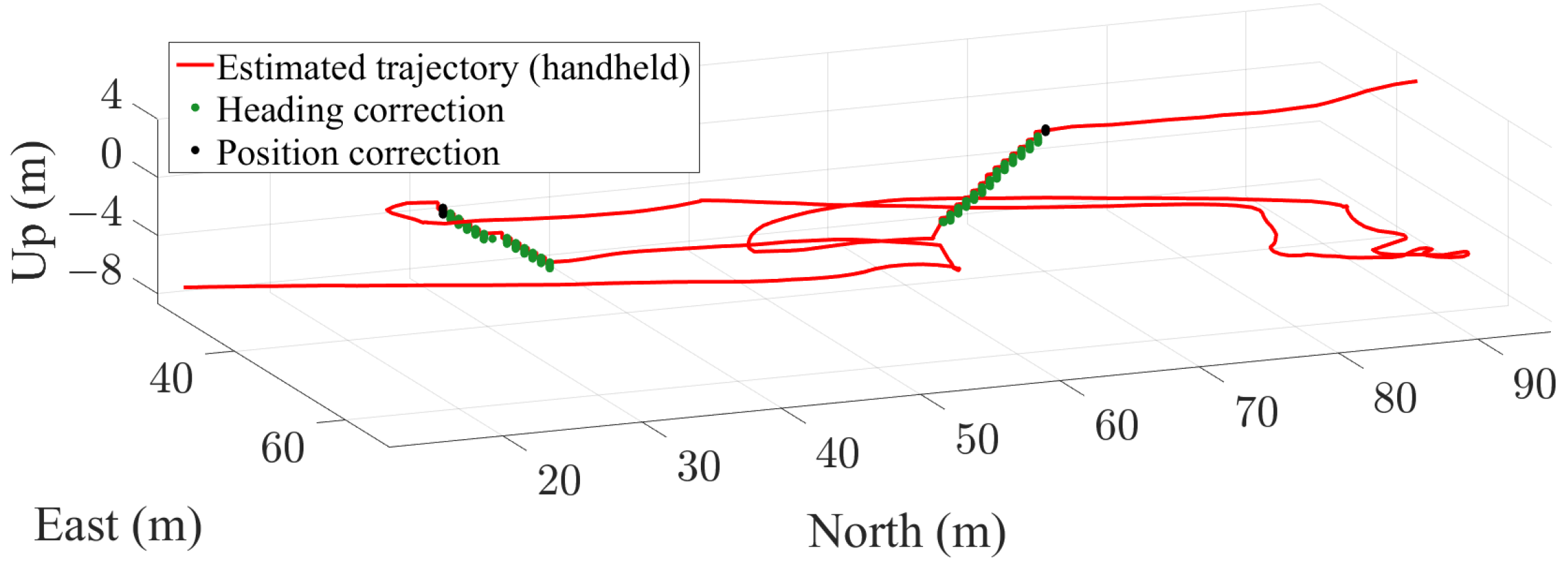
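The zero acceleration update can be illustrated with a minimal sketch. It uses the textbook tilt-from-gravity relations, which are not necessarily the exact expressions used in this work. The axis convention (the Z axis reads +g when the phone lies flat), the gravity constant, the tolerance `tol` and both function names are assumptions made for illustration only.

```python
import math

GRAVITY = 9.81  # m/s^2, assumed nominal value


def is_quasi_static(ax, ay, az, tol=0.3):
    """Return True when the measured acceleration norm is close to gravity.

    tol (m/s^2) is a hypothetical threshold, not a value from the paper.
    """
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    return abs(norm - GRAVITY) < tol


def roll_pitch_from_gravity(ax, ay, az):
    """Estimate roll and pitch (rad) from one accelerometer sample.

    Only valid while the sensor measures gravity alone, i.e. during a
    zero or quasi-zero acceleration period. Assumes Z reads +g when flat.
    """
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.sqrt(ay * ay + az * az))
    return roll, pitch


# Usage: only feed the angles to the filter when the sample is quasi-static.
sample = (0.0, 0.0, 9.81)
if is_quasi_static(*sample):
    roll, pitch = roll_pitch_from_gravity(*sample)
```

Heading is not observable from gravity, which is why this update constrains only roll and pitch.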

- Landmark based passenger’s position update: This update is based on the detection of landmarks in a traffic hub described in Section 3.4. Once a landmark is detected, its position is associated with the passenger’s position: the reference position of the associated landmark is taken from the landmark database and used as the position with which to update the UKF. The position is updated only once, when the passenger walks out of the landmark, i.e., when the passenger reaches the upper or lower end of a staircase or walks out of an elevator, since the landmark database describes each landmark only by its upper and lower positions. The update moves the estimate towards the position in the database, but it carries an uncertainty related to the physical dimensions of the landmark. We take the landmark width as the uncertainty of the position update in the X and Y coordinates, and the step height as the uncertainty in the Z coordinate.

- Landmark based passenger’s heading update: This update is also based on the detection of landmarks described in Section 3.4. When a passenger walks along a staircase, the passenger’s heading is bounded by the direction in which the staircase is oriented. Therefore, the reference heading of the associated landmark, i.e., the physical orientation of the staircase, is used as the heading with which to update the UKF. The heading update is performed continuously while the passenger is on the stairs. This update also has an uncertainty, related to the physical width of the staircase, since the passenger can walk the staircase diagonally from one side to the other. We take the uncertainty of the heading update to be the difference between the heading of a passenger walking the stairs diagonally and the heading of a passenger walking the stairs in a straight line.
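The two landmark-based updates can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in this work: the `StaircaseLandmark` record, its field names and both functions are hypothetical, the uncertainties are treated as standard deviations, and the horizontal run of the staircase is derived from its upper and lower positions.

```python
import math
from dataclasses import dataclass


@dataclass
class StaircaseLandmark:
    """Hypothetical database record; field names are assumptions."""
    upper: tuple        # (x, y, z) of the upper end, in metres
    lower: tuple        # (x, y, z) of the lower end, in metres
    width: float        # staircase width, in metres
    step_height: float  # height of one step, in metres
    heading: float      # orientation of the staircase, in radians


def position_update(landmark, exits_at_top):
    """Measurement and uncertainty applied once, when leaving the landmark.

    X and Y use the landmark width, Z uses the step height, as described
    in the text. Treating them as standard deviations is an assumption.
    """
    measurement = landmark.upper if exits_at_top else landmark.lower
    sigma = (landmark.width, landmark.width, landmark.step_height)
    return measurement, sigma


def heading_update(landmark):
    """Measurement and uncertainty applied while walking the staircase.

    The uncertainty is the angle between a straight path and a diagonal
    path that crosses the full width over the horizontal run.
    """
    run = math.hypot(landmark.upper[0] - landmark.lower[0],
                     landmark.upper[1] - landmark.lower[1])
    sigma = math.atan2(landmark.width, run)
    return landmark.heading, sigma


# Usage with made-up dimensions (not values from the paper).
stairs = StaircaseLandmark(upper=(4.0, 0.0, 3.0), lower=(0.0, 0.0, 0.0),
                           width=2.0, step_height=0.17, heading=0.0)
position, position_sigma = position_update(stairs, exits_at_top=True)
heading, heading_sigma = heading_update(stairs)
```

A wider or shorter staircase yields a larger heading uncertainty, so the filter trusts the heading update less on such landmarks.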

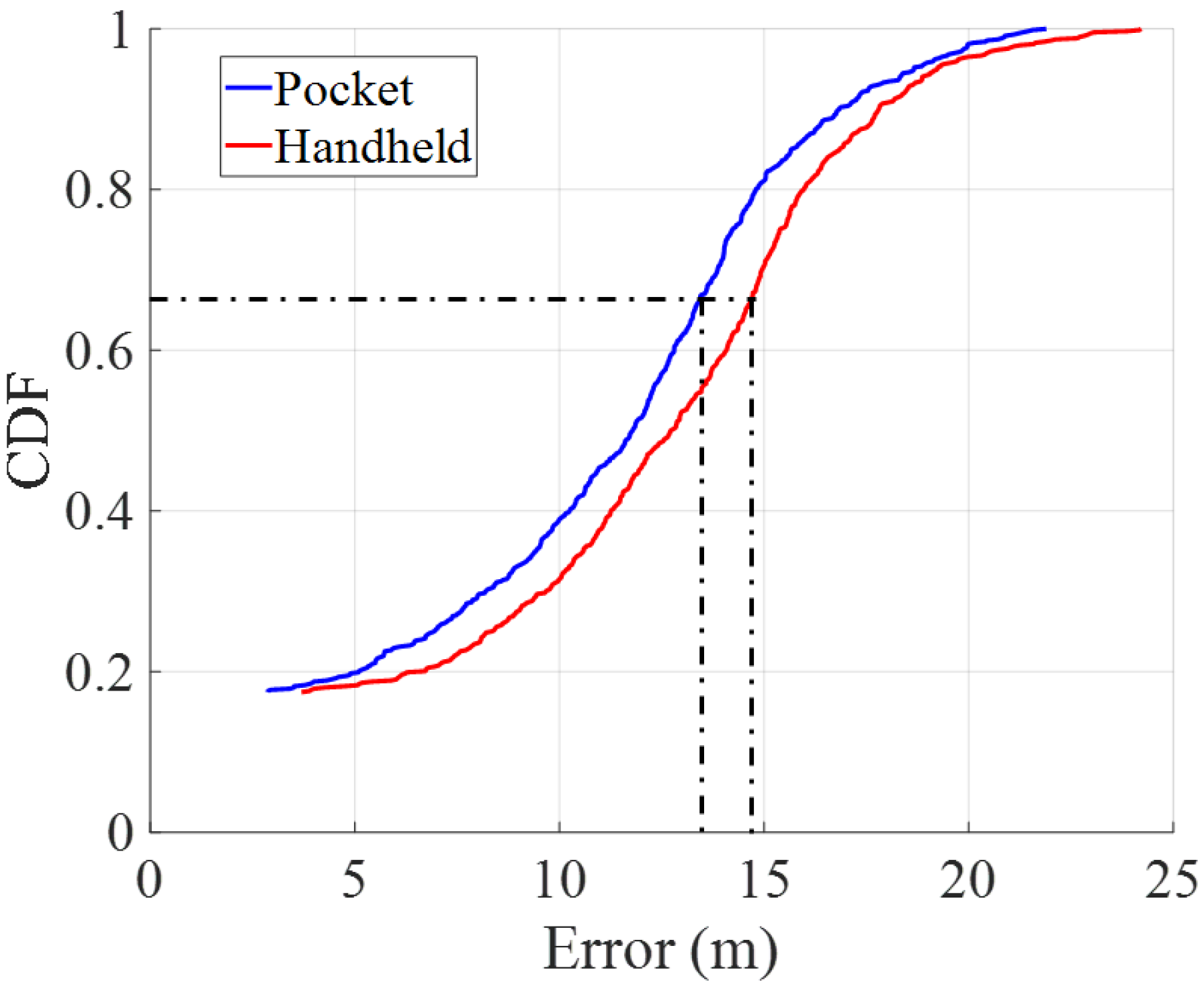

4. Evaluation

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Name | Type | Upper Position | Lower Position | | |
|---|---|---|---|---|---|
| L1 | Staircase | m | m | | |
| L2 | Elevator | m | m | N/A | N/A |

| | No Landmark Correction | Landmark Correction |
|---|---|---|
| | m | m |
| Handheld | m | m |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Jurado Romero, F.; Munoz Diaz, E.; Bousdar Ahmed, D. Smartphone-Based Localization for Passengers Commuting in Traffic Hubs. Sensors 2022, 22, 7199. https://doi.org/10.3390/s22197199
