Intelligent Posture Training: Machine-Learning-Powered Human Sitting Posture Recognition Based on a Pressure-Sensing IoT Cushion

Abstract

1. Introduction

- We designed an experimental setup for collecting real-world sitting posture and seated stretch pose data from a diverse participant group using a novel pressure-sensing IoT cushion.

- We built sitting posture and seated stretch databases of real-time back pressure sensor data, labeled with an active posture labeling method based on a biomechanics posture model and on the user's body characteristics (BMI).

- We applied and compared several machine learning classifiers on a sitting posture recognition task and achieved an accuracy of 98.82% in detecting 15 different sitting postures with an easily deployable algorithm (random forest), outperforming previous efforts in human sitting posture recognition. We correctly classified considerably more postures than previous works, which typically targeted between five and seven sitting postures.

- We applied and compared several machine learning classifiers on a seated stretch recognition task and achieved an accuracy of 97.94% in detecting six common, physiotherapist-recommended chair-bound stretches that have not been investigated in related works. Whereas previous works focused on sitting posture recognition alone, we extend our method to specific chair-bound stretches.

- In the context of AI-powered device personalization, we show that user body mass index (BMI) is an important parameter in sitting posture recognition and propose a novel strategy for user-based optimization of the LifeChair system.

- We also demonstrate the portability and adaptability of our machine-learning-based posture classification across five different environments and discuss deployment strategies for new environments; previous works, which focus on a single use case of their proposed systems, have not investigated this. In addition, we analyze the impact of local sensor ablations on the performance of the machine learning models in sitting posture recognition.

- We propose, to the best of our knowledge, the first posture-data-driven stretch pose recommendation system for personalized well-being guidance.
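The recommendation system in the last contribution relies on comparing the pressure patterns of sitting postures with those of stretch poses, but the similarity measure is not described in this section. As a minimal sketch, assume each posture or stretch is summarized by its mean reading on the cushion's nine back sensors (the count implied by the sensor ablation table) and compared with cosine similarity. The helper names `cosine_similarity` and `rank_stretches`, the sample profiles, and the choice of cosine similarity are illustrative assumptions, not the authors' method.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length pressure profiles."""
    if len(a) != len(b):
        raise ValueError("profiles must have the same number of sensors")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # an all-zero profile (nobody seated) is treated as unrelated
    return dot / (norm_a * norm_b)


def rank_stretches(posture: Sequence[float],
                   stretches: Dict[str, Sequence[float]],
                   most_similar_first: bool = True) -> List[Tuple[str, float]]:
    """Rank stretch poses by similarity to a posture profile (hypothetical helper)."""
    scored = [(name, cosine_similarity(posture, profile))
              for name, profile in stretches.items()]
    return sorted(scored, key=lambda item: item[1], reverse=most_similar_first)


# Placeholder 9-sensor mean profiles in sensor order 1 to 9;
# these numbers are invented for illustration, not measured data.
posture_profile = [0.1, 0.1, 0.1, 0.2, 0.3, 0.2, 0.9, 1.0, 0.9]
stretch_profiles = {
    "stretch_A": [0.8, 0.9, 0.8, 0.5, 0.5, 0.5, 0.2, 0.2, 0.2],
    "stretch_B": [0.1, 0.2, 0.1, 0.3, 0.3, 0.3, 0.8, 0.9, 0.8],
}
for name, score in rank_stretches(posture_profile, stretch_profiles):
    print(f"{name}: {score:.3f}")
```

Whether a recommender should favour the stretches most similar or least similar to a user's habitual posture is a design decision this sketch leaves open through the `most_similar_first` flag.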

2. Related Works

3. Materials and Methods

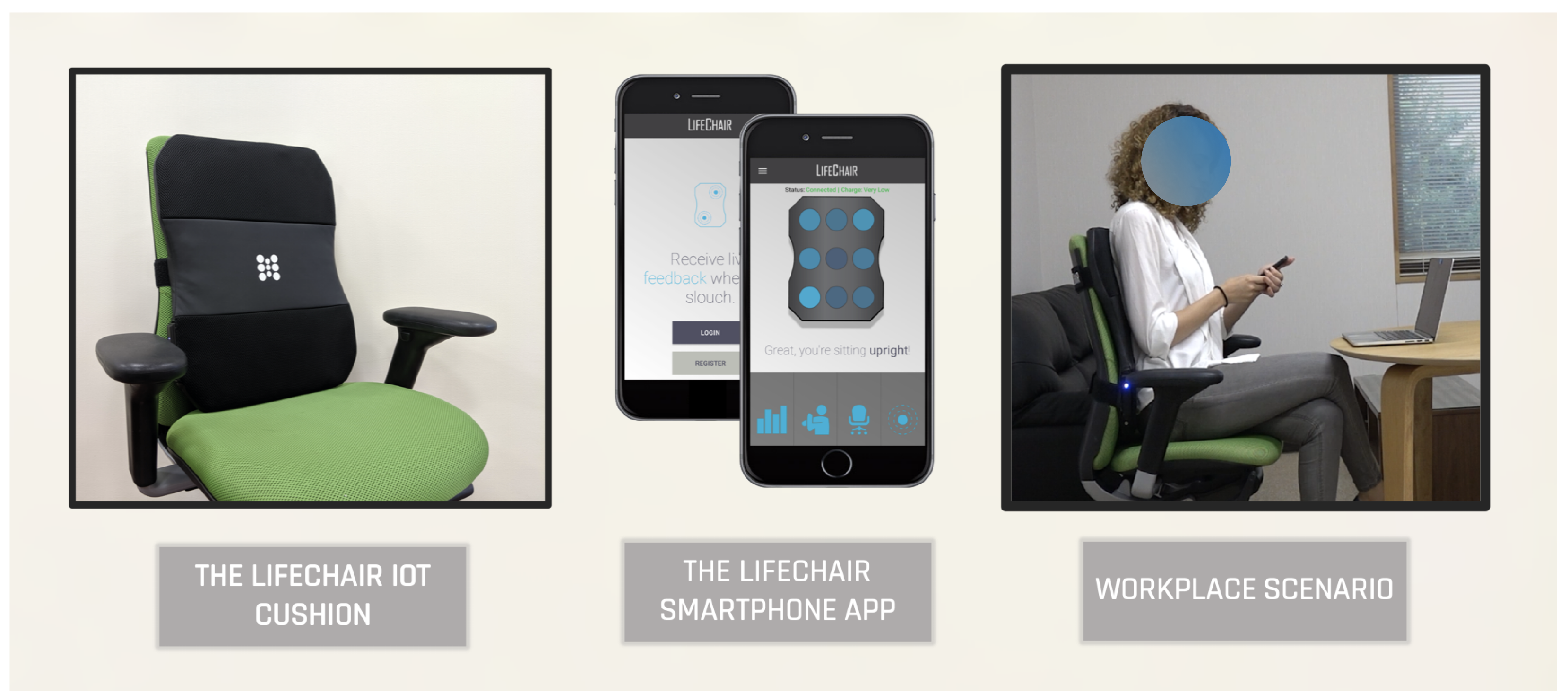

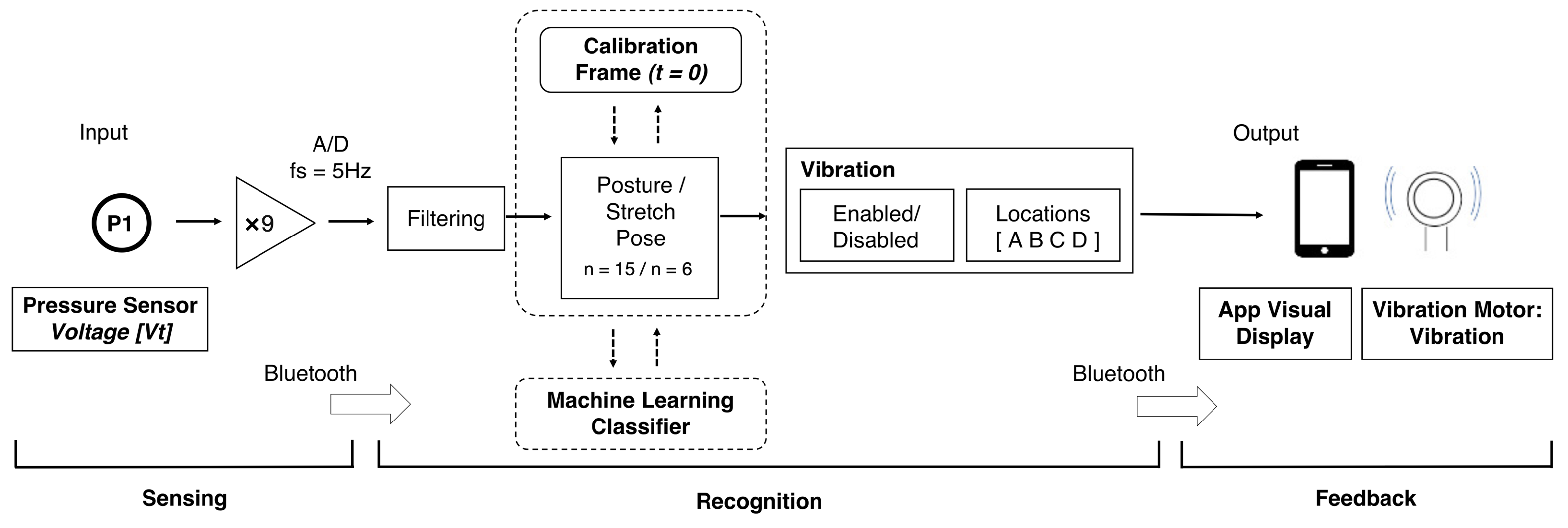

3.1. Sensing Interface

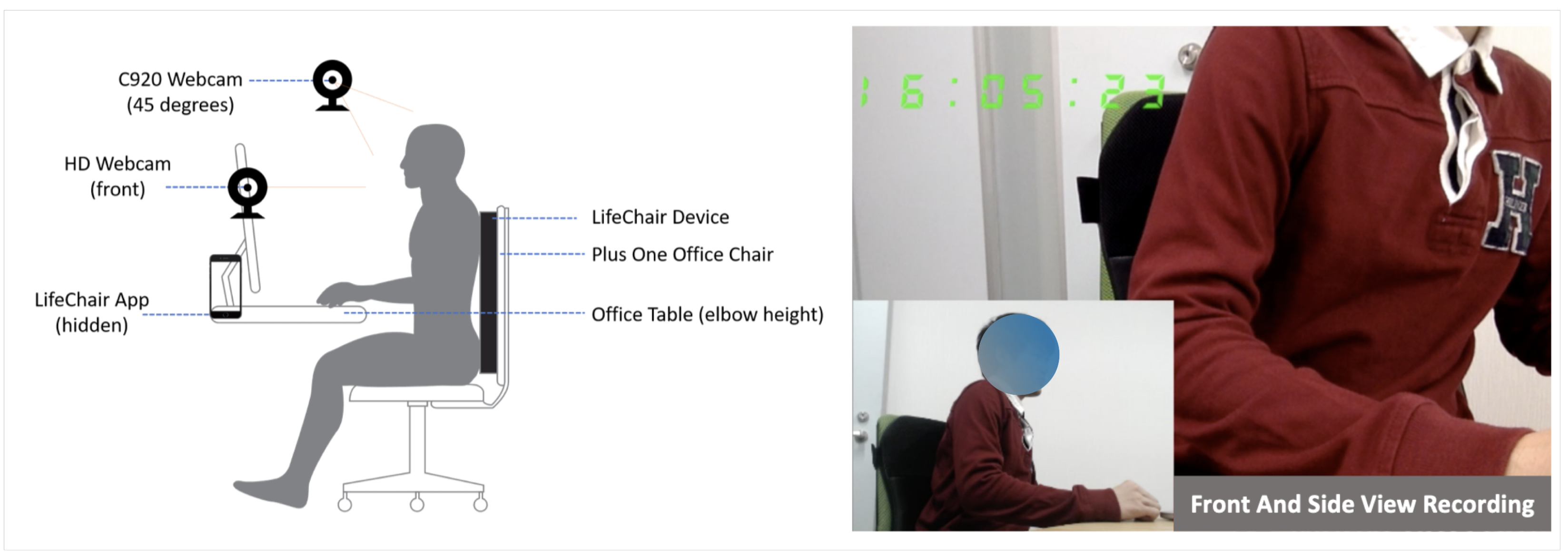

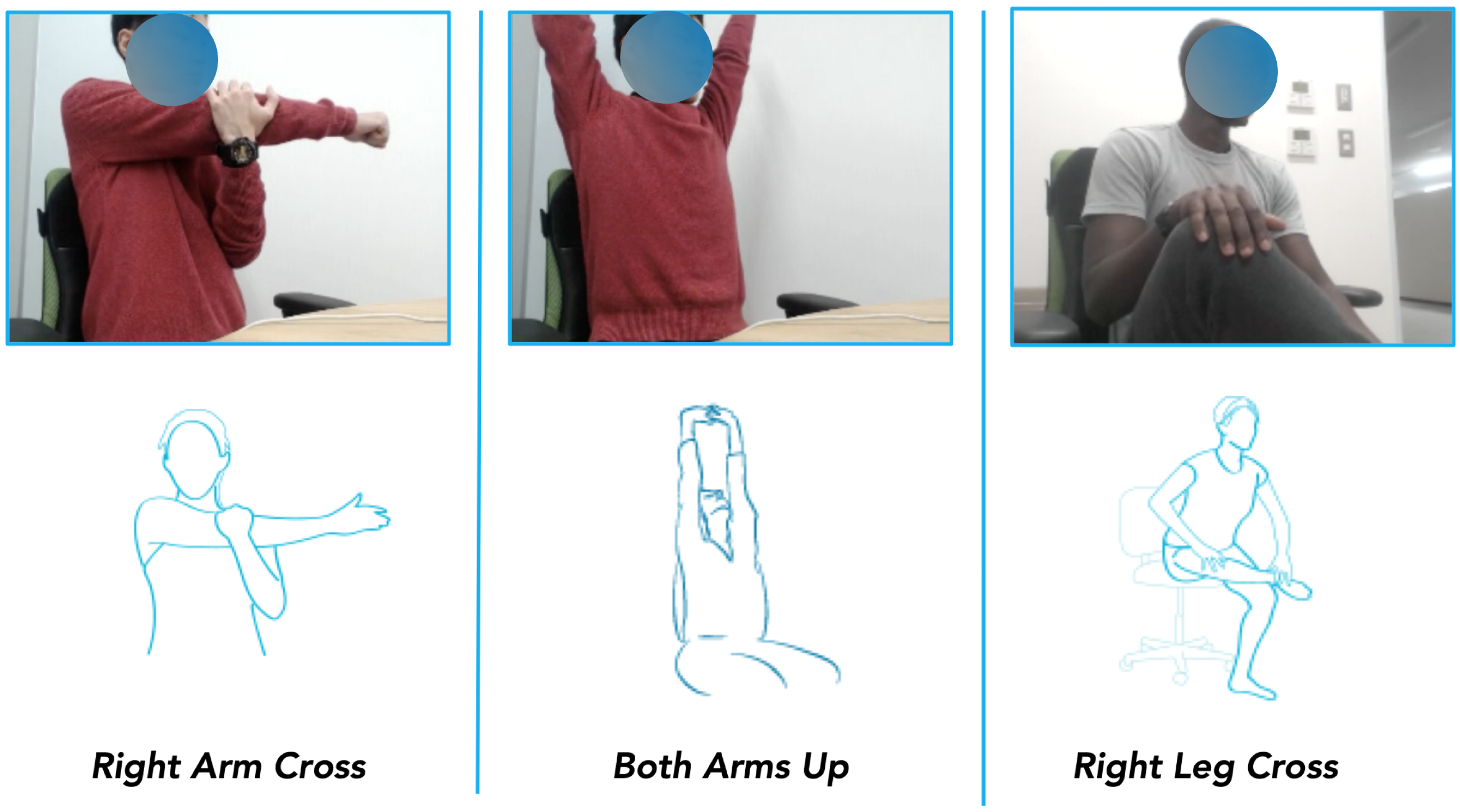

3.2. Sitting Posture and Stretch Pose Data

3.3. Machine-Learning-Based Posture Recognition

3.4. Portability Study

3.5. Posture–Pose Similarity Assessment

4. Results and Discussion

4.1. Sitting Posture Recognition

4.2. BMI Divergence

4.3. Portability and Adaptability

4.4. Seated Stretch Recognition

4.5. Posture–Stretch Recommendation System

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

References

- Jung, S.I.; Lee, N.K.; Kang, K.W.; Kim, K.; Lee, D.Y. The effect of smartphone usage time on posture and respiratory function. J. Phys. Ther. Sci. 2016, 28, 186–189.

- Harvey, J.A.; Chastin, S.F.; Skelton, D.A. How sedentary are older people? A systematic review of the amount of sedentary behavior. J. Aging Phys. Act. 2015, 23, 471–487.

- BetterHealth, V.S.G.A. The Dangers of Sitting: Why Sitting Is the New Smoking. Available online: https://www.betterhealth.vic.gov.au/health/healthyliving/the-dangers-of-sitting (accessed on 21 January 2020).

- Lis, A.M.; Black, K.M.; Korn, H.; Nordin, M. Association between sitting and occupational LBP. Eur. Spine J. 2007, 16, 283–298.

- Veerman, J.L.; Healy, G.N.; Cobiac, L.J.; Vos, T.; Winkler, E.A.H.; Owen, N.; Dunstan, D.W. Television viewing time and reduced life expectancy: A life table analysis. Br. J. Sport. Med. 2012, 46, 927–930.

- Biswas, A.; Oh, P.I.; Faulkner, G.E.; Bajaj, R.R.; Silver, M.A.; Mitchell, M.S.; Alter, D.A. Sedentary time and its association with risk for disease incidence, mortality, and hospitalization in adults: A systematic review and meta-analysis. Ann. Intern. Med. 2015, 162, 123–132.

- Dainese, R.; Serra, J.; Azpiroz, F.; Malagelada, J.R. Influence of body posture on intestinal transit of gas. Gut 2003, 52, 971–974.

- Owen, N.; Healy, G.N.; Matthews, C.E.; Dunstan, D.W. Too much sitting: The population-health science of sedentary behavior. Exerc. Sport Sci. Rev. 2010, 38, 105.

- Matthews, C.E.; George, S.M.; Moore, S.C.; Bowles, H.R.; Blair, A.; Park, Y.; Troiano, R.P.; Hollenbeck, A.; Schatzkin, A. Amount of time spent in sedentary behaviors and cause-specific mortality in US adults. Am. J. Clin. Nutr. 2012, 95, 437–445.

- Moretti, A.; Menna, F.; Aulicino, M.; Paoletta, M.; Liguori, S.; Iolascon, G. Characterization of home working population during COVID-19 emergency: A cross-sectional analysis. Int. J. Environ. Res. Public Health 2020, 17, 6284.

- Shariat, A.; Cleland, J.A.; Danaee, M.; Kargarfard, M.; Sangelaji, B.; Tamrin, S.B.M. Effects of stretching exercise training and ergonomic modifications on musculoskeletal discomforts of office workers: A randomized controlled trial. Braz. J. Phys. Ther. 2018, 22, 144–153.

- Van Eerd, D.; Munhall, C.; Irvin, E.; Rempel, D.; Brewer, S.; van der Beek, A.J.; Dennerlein, J.T.; Tullar, J.; Skivington, K.; Pinion, C.; et al. Effectiveness of workplace interventions in the prevention of upper extremity musculoskeletal disorders and symptoms: An update of the evidence. Occup. Environ. Med. 2016, 73, 62–70.

- Moore, T.M. A workplace stretching program: Physiologic and perception measurements before and after participation. AAOHN J. 1998, 46, 563–568.

- Tomašev, N.; Cornebise, J.; Hutter, F.; Mohamed, S.; Picciariello, A.; Connelly, B.; Belgrave, D.; Ezer, D.; Haert, F.C.v.d.; Mugisha, F.; et al. AI for social good: Unlocking the opportunity for positive impact. Nat. Commun. 2020, 11, 2468.

- MTG. Body Make Style Seat. Available online: https://www.mtg.gr.jp/brands/wellness/product/style/style/ (accessed on 21 January 2020).

- BetterBack. Available online: https://getbetterback.com/ (accessed on 21 January 2020).

- Bodystance. Backpod. Available online: https://www.bodystance.co.nz/en/backpod/ (accessed on 21 January 2020).

- Knoll. ReGeneration Chair. Available online: https://www.knoll.com/product/regeneration-by-knoll-fully-upholstered (accessed on 21 January 2020).

- Herman Miller. Embody Chairs. Available online: https://www.hermanmiller.com/ (accessed on 21 January 2020).

- Darma, co. Darma Smart Cushion. Available online: http://darma.co/ (accessed on 21 January 2020).

- Liang, G.; Cao, J.; Liu, X.; Han, X. Cushionware: A practical sitting posture-based interaction system. In Proceedings of the CHI’14 Extended Abstracts on Human Factors in Computing Systems, Toronto, ON, Canada, 26 April–1 May 2014; pp. 591–594.

- Xu, W.; Huang, M.C.; Amini, N.; He, L.; Sarrafzadeh, M. ecushion: A textile pressure sensor array design and calibration for sitting posture analysis. IEEE Sens. J. 2013, 13, 3926–3934.

- Upright Go 2 Posture Trainer. Upright. Available online: https://www.uprightpose.com/products-2 (accessed on 21 January 2020).

- Matsuda, Y.; Hasegawa, T.; Arai, I.; Arakawa, Y.; Yasumoto, K. Waistonbelt 2: A belt-type wearable device for monitoring abdominal circumference, posture and activity. In Proceedings of the 2016 Ninth International Conference on Mobile Computing and Ubiquitous Networking (ICMU), Kaiserslautern, Germany, 4–6 October 2016; pp. 1–6.

- Lumo. Lumo Lift, Posture Tracker. Available online: https://www.lumobodytech.com/lumo-lift/ (accessed on 21 January 2020).

- Posture Pal. Available online: https://www.hackster.io/justin-shenk/posture-pal-computer-vision-cbe67c (accessed on 21 January 2020).

- Nasirahmadi, A.; Sturm, B.; Edwards, S.; Jeppsson, K.H.; Olsson, A.C.; Müller, S.; Hensel, O. Deep Learning and Machine Vision Approaches for Posture Detection of Individual Pigs. Sensors 2019, 19, 3738.

- Estrada, J.; Vea, L. Sitting posture recognition for computer users using smartphones and a web camera. In Proceedings of the TENCON 2017-2017 IEEE Region 10 Conference, Penang, Malaysia, 5–8 November 2017; pp. 1520–1525.

- Li, M.; Jiang, Z.; Liu, Y.; Chen, S.; Wozniak, M.; Scherer, R.; Damasevicius, R.; Wei, W.; Li, Z.; Li, Z. Sitsen: Passive sitting posture sensing based on wireless devices. Int. J. Distrib. Sens. Netw. 2021, 17, 15501477211024846.

- Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. Human Activity Recognition on Smartphones Using a Multiclass Hardware-Friendly Support Vector Machine; Ambient Assisted Living and Home Care; Bravo, J., Hervás, R., Rodríguez, M., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 216–223.

- Wu, W.; Dasgupta, S.; Ramirez, E.E.; Peterson, C.; Norman, G.J. Classification Accuracies of Physical Activities Using Smartphone Motion Sensors. J. Med. Internet Res. 2012, 14, e130.

- Cerqueira, S.M.; Moreira, L.; Alpoim, L.; Siva, A.; Santos, C.P. An inertial data-based upper body posture recognition tool: A machine learning study approach. In Proceedings of the 2020 IEEE International Conference on Autonomous Robot Systems and Competitions (ICARSC), Ponta Delgada, Portugal, 15–17 April 2020; pp. 4–9.

- Pizarro, F.; Villavicencio, P.; Yunge, D.; Rodríguez, M.; Hermosilla, G.; Leiva, A. Easy-to-Build Textile Pressure Sensor. Sensors 2018, 18, 1190.

- Tan, H.Z.; Slivovsky, L.A.; Pentland, A. A sensing chair using pressure distribution sensors. IEEE/ASME Trans. Mechatron. 2001, 6, 261–268.

- Mota, S.; Picard, R.W. Automated posture analysis for detecting learner’s interest level. In Proceedings of the 2003 Conference on Computer Vision and Pattern Recognition Workshop, Madison, WI, USA, 16–22 June 2003; Volume 5, p. 49.

- Roh, J.; Park, H.j.; Lee, K.J.; Hyeong, J.; Kim, S.; Lee, B. Sitting Posture Monitoring System Based on a Low-Cost Load Cell Using Machine Learning. Sensors 2018, 18, 208.

- Zemp, R.; Tanadini, M.; Plüss, S.; Schnüriger, K.; Singh, N.B.; Taylor, W.R.; Lorenzetti, S. Application of machine learning approaches for classifying sitting posture based on force and acceleration sensors. BioMed Res. Int. 2016, 2016, 5978489.

- Ma, C.; Li, W.; Gravina, R.; Fortino, G. Posture Detection Based on Smart Cushion for Wheelchair Users. Sensors 2017, 17, 719.

- Hu, Q.; Tang, X.; Tang, W. A smart chair sitting posture recognition system using flex sensors and FPGA implemented artificial neural network. IEEE Sens. J. 2020, 20, 8007–8016.

- Luna-Perejón, F.; Montes-Sánchez, J.M.; Durán-López, L.; Vazquez-Baeza, A.; Beasley-Bohórquez, I.; Sevillano-Ramos, J.L. IoT Device for Sitting Posture Classification Using Artificial Neural Networks. Electronics 2021, 10, 1825.

- Jeong, H.; Park, W. Developing and evaluating a mixed sensor smart chair system for real-time posture classification: Combining pressure and distance sensors. IEEE J. Biomed. Health Inf. 2020, 25, 1805–1813.

- Farhani, G.; Zhou, Y.; Danielson, P.; Trejos, A.L. Implementing Machine Learning Algorithms to Classify Postures and Forecast Motions When Using a Dynamic Chair. Sensors 2022, 22, 400.

- Li, C.; Liu, D.; Xu, C.; Wang, Z.; Shu, S.; Sun, Z.; Tang, W.; Wang, Z.L. Sensing of joint and spinal bending or stretching via a retractable and wearable badge reel. Nat. Commun. 2021, 12, 2950.

- Kim, Y.M.; Son, Y.; Kim, W.; Jin, B.; Yun, M.H. Classification of Children’s Sitting Postures Using Machine Learning Algorithms. Appl. Sci. 2018, 8, 1280.

- Ishac, K.; Suzuki, K. LifeChair: A Conductive Fabric Sensor-Based Smart Cushion for Actively Shaping Sitting Posture. Sensors 2018, 18, 2261.

- Bourahmoune, K.; Amagasa, T. AI-powered posture training: Application of machine learning in sitting posture recognition using the LifeChair smart cushion. In Proceedings of the 28th International Joint Conference on Artificial Intelligence (IJCAI-19), Macao, China, 10–16 August 2019; pp. 5808–5814.

- Sunny, J.T.; George, S.M.; Kizhakkethottam, J.J. Applications and challenges of human activity recognition using sensors in a smart environment. IJIRST Int. J. Innov. Res. Sci. Technol. 2015, 2, 50–57.

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

- Hastie, T.; Tibshirani, R.; Friedman, J.H. The Elements of Statistical Learning: Data Mining, Inference, and Prediction; Springer: Berlin/Heidelberg, Germany, 2009; Volume 2.

- Han, J.; Kamber, M.; Pei, J. 2—Getting to know your data. In Data Mining, 3rd ed.; Han, J., Kamber, M., Pei, J., Eds.; The Morgan Kaufmann Series in Data Management Systems; Morgan Kaufmann: Boston, MA, USA, 2012; pp. 39–82.

- Lewoniewski, W.; Węcel, K.; Abramowicz, W. Quality and importance of Wikipedia articles in different languages. In Proceedings of the International Conference on Information and Software Technologies, Druskininkai, Lithuania, 13–15 October 2016; pp. 613–624.

- Fawagreh, K.; Gaber, M.M.; Elyan, E. Random forests: From early developments to recent advancements. Syst. Sci. Control. Eng. 2014, 2, 602–609.

- Zhu, M.; Martinez, A.M.; Tan, H.Z. Template-based recognition of static sitting postures. In Proceedings of the 2003 Conference on Computer Vision and Pattern Recognition Workshop, Madison, WI, USA, 16–22 June 2003; Volume 5, p. 50.

- Lee, D.E.; Seo, S.M.; Woo, H.S.; Won, S.Y. Analysis of body imbalance in various writing sitting postures using sitting pressure measurement. J. Phys. Ther. Sci. 2018, 30, 343–346.

| Sensing Data | Algorithm | Number of Sitting Postures | Reference |
|---|---|---|---|
| Load Cells | SVM, k-NN, LDA, QDA, NB, RF | 6 | [36] |
| Pressure Sensors | SVM, NN, RF, MNR | 7 | [37] |
| Pressure Sensors | J48 trees, SVM, MLP, NB, k-NN | 5 | [38] |
| FSRs | ANN | 7 | [40] |
| Flex Sensors | ANN | 7 | [39] |
| FSRs and Distance Sensors | k-NN | 11 | [41] |
| FSRs | RF, SVM, GDT | 7 | [42] |

Sitting posture recognition accuracy (15 postures) by classifier, with and without BMI as an input feature:

| Algorithm | Sensors Only | Sensors + BMI |
|---|---|---|
| RF | 0.9709 | 0.9882 * |
| DT-CART | 0.9619 | 0.9843 |
| k-NN | 0.9213 | 0.9229 |
| NN (MLP) | 0.8009 | 0.8838 |
| LR | 0.5367 | 0.5520 |
| LDA | 0.5316 | 0.5529 |
| NB | 0.4171 | 0.4830 |
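The classifier comparison in the table above can be outlined with scikit-learn, which the reference list cites. The sketch below is a minimal setup under stated assumptions: nine back-sensor readings per sample, BMI appended as a tenth feature for the "Sensors + BMI" case, library-default hyperparameters, and 5-fold stratified cross-validation. The data are synthetic placeholders, so the printed scores say nothing about the reported accuracies; `compare` and `CLASSIFIERS` are hypothetical names, not the authors' code.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier

# The seven classifiers from the table; hyperparameters are library defaults (assumption).
CLASSIFIERS = {
    "RF": RandomForestClassifier(random_state=0),
    "DT-CART": DecisionTreeClassifier(random_state=0),  # scikit-learn trees use an optimized CART
    "k-NN": make_pipeline(StandardScaler(), KNeighborsClassifier()),
    "NN (MLP)": make_pipeline(StandardScaler(), MLPClassifier(max_iter=1000, random_state=0)),
    "LR": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    "LDA": LinearDiscriminantAnalysis(),
    "NB": GaussianNB(),
}


def compare(X, y, n_splits=5):
    """Mean stratified k-fold accuracy for every classifier in CLASSIFIERS."""
    cv = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=0)
    return {name: cross_val_score(clf, X, y, cv=cv, scoring="accuracy").mean()
            for name, clf in CLASSIFIERS.items()}


# Synthetic placeholder data: 9 pressure channels, 15 posture labels, one BMI value per sample.
rng = np.random.default_rng(0)
n_samples, n_postures = 600, 15
y = rng.integers(0, n_postures, size=n_samples)
sensors = rng.normal(size=(n_samples, 9)) + 0.3 * y[:, None]
bmi = rng.uniform(17.0, 32.0, size=(n_samples, 1))
X_sensors = sensors
X_sensors_bmi = np.hstack([sensors, bmi])

results = {"Sensors Only": compare(X_sensors, y),
           "Sensors + BMI": compare(X_sensors_bmi, y)}
for feature_set, scores in results.items():
    print(feature_set, {name: round(acc, 4) for name, acc in scores.items()})
```

The scale-sensitive models (k-NN, MLP, LR) are wrapped in a `StandardScaler` pipeline so that the BMI column, whose range differs from the sensor readings, does not dominate distances or gradients; the tree-based models do not need this step.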

| Ablation Type | Ablated Sensor(s) | Accuracy |
|---|---|---|
| Individual | 1 | 0.9589 |
| | 2 | 0.9659 |
| | 3 | 0.9549 |
| | 4 | 0.9622 |
| | 5 | 0.9581 |
| | 6 | 0.9575 |
| | 7 | 0.9540 |
| | 8 | 0.9600 |
| | 9 | 0.9600 |
| Horizontal | 1, 2, 3 | 0.8983 |
| | 4, 5, 6 | 0.8805 |
| | 7, 8, 9 | 0.8982 |
| Vertical | 1, 4, 7 | 0.9042 |
| | 2, 5, 8 | 0.9288 |
| | 3, 6, 9 | 0.9042 |
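The ablation groups in the table are consistent with nine sensors laid out in a 3 × 3 grid and numbered row by row: horizontal ablations remove a row (1, 2, 3) and vertical ablations remove a column (1, 4, 7). The sketch below assumes that layout and models an ablation as dropping the sensor columns before retraining a random forest; zeroing the readings instead would be another reasonable choice. The data are synthetic, and `ablation_groups` and `ablation_accuracy` are hypothetical helpers.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score

GRID = 3  # assumed 3 x 3 sensor layout, numbered 1-9 row by row


def ablation_groups(grid=GRID):
    """Individual, horizontal (row) and vertical (column) sensor groups, 1-based."""
    ids = np.arange(1, grid * grid + 1).reshape(grid, grid)
    groups = {f"Individual {int(s)}": [int(s)] for s in ids.ravel()}
    for r in range(grid):
        groups[f"Horizontal {r + 1}"] = ids[r, :].tolist()
    for c in range(grid):
        groups[f"Vertical {c + 1}"] = ids[:, c].tolist()
    return groups


def ablation_accuracy(X, y, removed, cv=5):
    """Drop the listed sensors (1-based) and return mean CV accuracy of a random forest."""
    keep = [i for i in range(X.shape[1]) if i + 1 not in removed]
    clf = RandomForestClassifier(n_estimators=50, random_state=0)
    return cross_val_score(clf, X[:, keep], y, cv=cv).mean()


# Synthetic placeholder data standing in for labeled 9-sensor recordings.
rng = np.random.default_rng(0)
y = rng.integers(0, 15, size=450)
X = rng.normal(size=(450, 9)) + 0.3 * y[:, None]

baseline = ablation_accuracy(X, y, removed=[])
ablations = {name: ablation_accuracy(X, y, removed)
             for name, removed in ablation_groups().items()}
for name, acc in ablations.items():
    print(f"{name}: {acc:.4f} (baseline {baseline:.4f})")
```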

| User Group | BMI Range | Accuracy |
|---|---|---|
| Low BMI | BMI < 18.5 | 0.97001 * |
| Normal BMI | 18.5 ≤ BMI ≤ 25.0 | 0.9898 |
| High BMI | BMI > 25.0 | 0.9846 |
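The BMI grouping in the table can be made explicit in a few lines. This sketch assumes the standard BMI formula (weight in kilograms divided by height in metres squared) and assigns the boundary values 18.5 and 25.0 to the normal group, consistent with the strict inequalities used for the low and high groups. The helpers `bmi`, `bmi_group`, and `accuracy_by_group` are hypothetical names, and the toy predictions are placeholders.

```python
from collections import defaultdict
from typing import Dict, Iterable


def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight in kilograms divided by height in metres squared."""
    if height_m <= 0:
        raise ValueError("height must be positive")
    return weight_kg / height_m ** 2


def bmi_group(value: float) -> str:
    """Map a BMI value to the user groups in the table (boundaries go to Normal)."""
    if value < 18.5:
        return "Low BMI"
    if value > 25.0:
        return "High BMI"
    return "Normal BMI"


def accuracy_by_group(bmis: Iterable[float], y_true: Iterable, y_pred: Iterable) -> Dict[str, float]:
    """Fraction of correct predictions within each BMI group."""
    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for value, truth, pred in zip(bmis, y_true, y_pred):
        group = bmi_group(value)
        totals[group] += 1
        hits[group] += int(truth == pred)
    return {group: hits[group] / totals[group] for group in totals}


print(round(bmi(70.0, 1.75), 2), bmi_group(bmi(70.0, 1.75)))  # prints: 22.86 Normal BMI
# Toy predictions (placeholders) for four users.
print(accuracy_by_group([17.0, 22.0, 30.0, 30.0], [1, 2, 3, 4], [1, 0, 3, 4]))
# prints: {'Low BMI': 1.0, 'Normal BMI': 0.0, 'High BMI': 1.0}
```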

| Environment | Training Mode | Accuracy |
|---|---|---|
| Small Back | Global Training | 0.7600 |
| | Group Training | 0.9801 |
| Standard Back | Global Training | 0.9200 |
| | Group Training | 0.9772 |
| Mid Back | Global Training | 0.5600 |
| | Group Training | 0.9719 |
| High Back | Global Training | 0.8600 |
| | Group Training | 0.9782 |
| Wide Back | Global Training | 0.7600 |
| | Group Training | 0.9829 |
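The two training modes in the table are not defined in this section. One plausible reading, assumed here, is that global training fits a single model on data from the other environments and applies it unchanged to the target one, while group training fits and cross-validates a model on the target environment's own data. The sketch simulates each chair as a fixed offset added to the pressure pattern; the environment data, the offset model, and the helpers `global_accuracy` and `group_accuracy` are all hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score

ENVIRONMENTS = ["Small Back", "Standard Back", "Mid Back", "High Back", "Wide Back"]


def global_accuracy(envs, target):
    """Assumed 'global training': fit on every other environment, test on the target."""
    others = [name for name in envs if name != target]
    X_train = np.vstack([envs[name][0] for name in others])
    y_train = np.concatenate([envs[name][1] for name in others])
    X_test, y_test = envs[target]
    clf = RandomForestClassifier(n_estimators=50, random_state=0).fit(X_train, y_train)
    return clf.score(X_test, y_test)


def group_accuracy(envs, target, cv=5):
    """Assumed 'group training': cross-validate within the target environment only."""
    X, y = envs[target]
    clf = RandomForestClassifier(n_estimators=50, random_state=0)
    return cross_val_score(clf, X, y, cv=cv).mean()


# Synthetic data: each chair shifts the 9-sensor pressure pattern by its own offset.
rng = np.random.default_rng(0)
envs = {}
for name in ENVIRONMENTS:
    labels = rng.integers(0, 15, size=300)
    offset = rng.normal(scale=1.0, size=9)
    envs[name] = (rng.normal(size=(300, 9)) + 0.3 * labels[:, None] + offset, labels)

results = {name: (global_accuracy(envs, name), group_accuracy(envs, name))
           for name in ENVIRONMENTS}
for name, (global_acc, group_acc) in results.items():
    print(f"{name}: global {global_acc:.4f}, group {group_acc:.4f}")
```

Under this reading, the gap between the two modes indicates how far an environment's pressure distribution departs from the pooled training data, which is one way to decide when a new environment needs its own calibration data.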

Seated stretch recognition accuracy (six stretches) by classifier, using sensor data and BMI:

| Algorithm | Sensors + BMI |
|---|---|
| RF | 0.9794 |
| DT-CART | 0.9658 |
| k-NN | 0.9143 |
| NN (MLP) | 0.8121 |
| LR | 0.5780 |
| LDA | 0.5526 |
| NB | 0.4936 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Bourahmoune, K.; Ishac, K.; Amagasa, T. Intelligent Posture Training: Machine-Learning-Powered Human Sitting Posture Recognition Based on a Pressure-Sensing IoT Cushion. Sensors 2022, 22, 5337. https://doi.org/10.3390/s22145337

Bourahmoune K, Ishac K, Amagasa T. Intelligent Posture Training: Machine-Learning-Powered Human Sitting Posture Recognition Based on a Pressure-Sensing IoT Cushion. Sensors. 2022; 22(14):5337. https://doi.org/10.3390/s22145337

Chicago/Turabian Style: Bourahmoune, Katia, Karlos Ishac, and Toshiyuki Amagasa. 2022. "Intelligent Posture Training: Machine-Learning-Powered Human Sitting Posture Recognition Based on a Pressure-Sensing IoT Cushion" Sensors 22, no. 14: 5337. https://doi.org/10.3390/s22145337

APA Style: Bourahmoune, K., Ishac, K., & Amagasa, T. (2022). Intelligent Posture Training: Machine-Learning-Powered Human Sitting Posture Recognition Based on a Pressure-Sensing IoT Cushion. Sensors, 22(14), 5337. https://doi.org/10.3390/s22145337