Research on Chinese Speech Emotion Recognition Based on Deep Neural Network and Acoustic Features

Abstract

:1. Introduction

2. Related Techniques and Literature Review

2.1. Acoustic Features

2.1.1. Spectral Centroid

2.1.2. Spectral Flatness

2.1.3. Spectral Contrast

2.1.4. Spectral Roll-Off

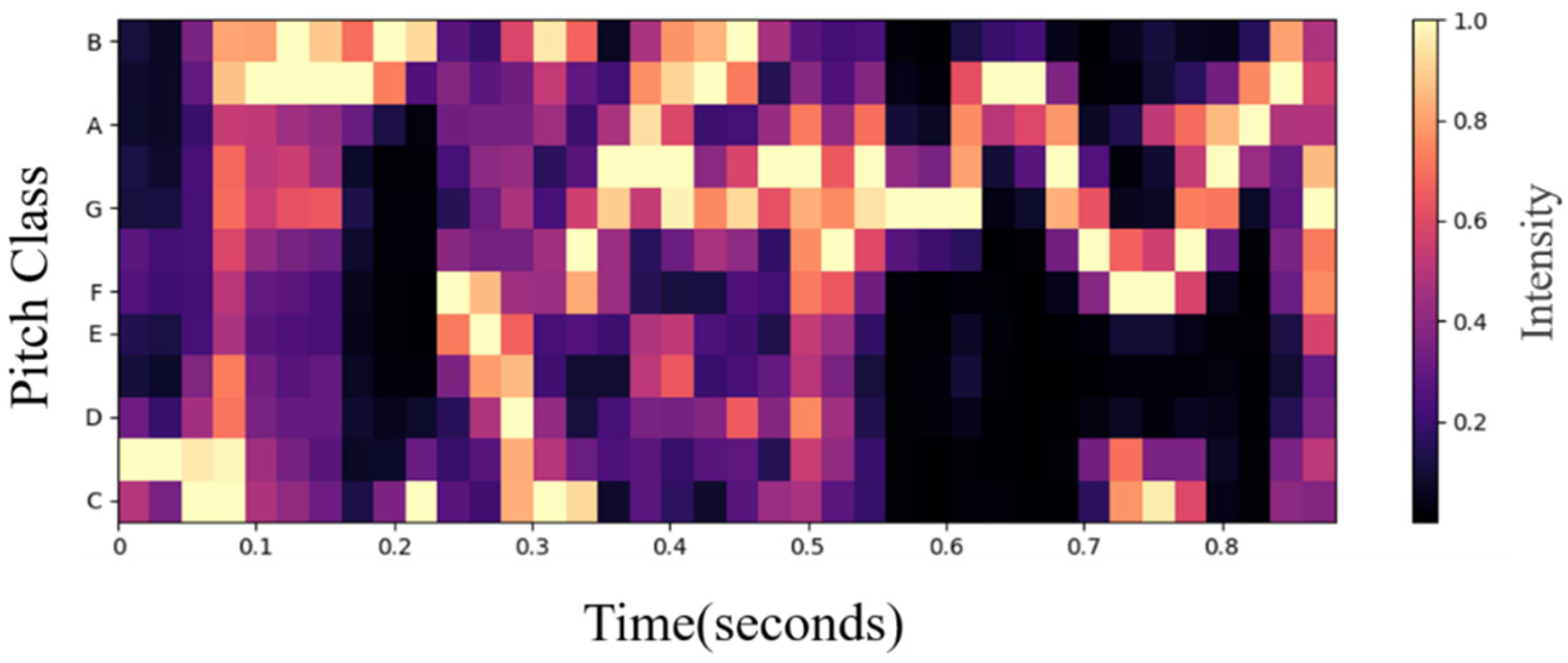

2.1.5. Chroma Feature

2.1.6. Zero-Crossing Rate (ZCR)

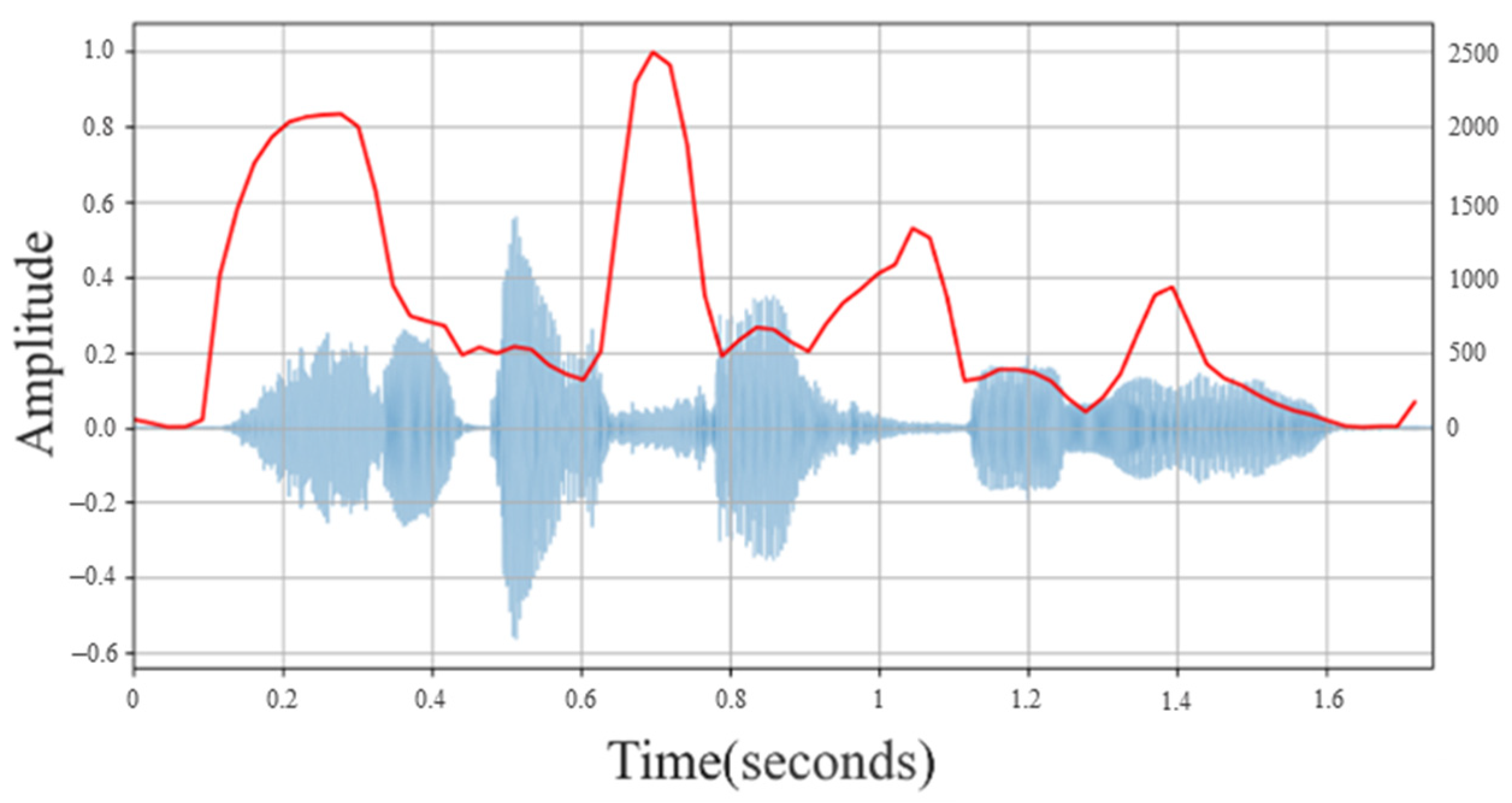

2.1.7. Root Mean Square Energy (RMSE)

2.1.8. Mel Frequency Cepstral Coefficient (MFCC)

- (1)

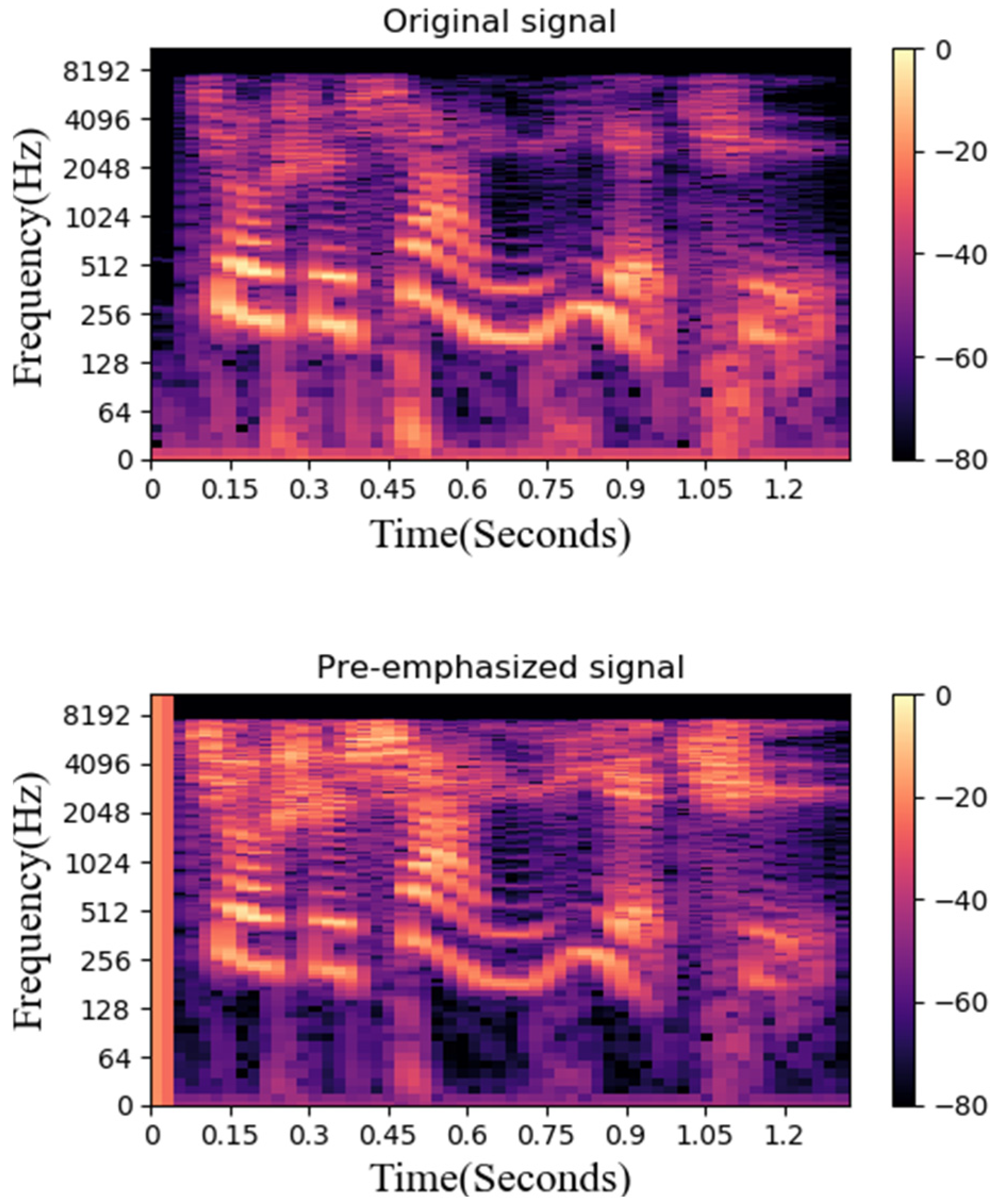

- Pre-emphasis: The signal is pre-emphasized, and the voice signal is passed through a high-pass filter, as in Equation (5), where is the output signal, is the original signal, and the value of is usually between 0.9 and 1.0; in this study, the default was 0.97. Pre-emphasis will boost the high-frequency part and flatten the spectrum of the audio, maintaining it in the entire frequency band from low to high frequencies, using the same signal-to-noise ratio to obtain the spectrum.

- (2)

- Frame blocking and Hamming: Frame blocking collects N sampling points into a frame and the value of N is set to 512. Subsequently, each frame is multiplied by a Hamming window to increase the continuity at the left and right ends of the frame. Assuming that the framed signal is , is the frame size and the windowed signal is as in Equation (6), and the Hamming window is calculated as in Equation (7), where a is set as 0.46 by default. After using the Hamming window, each frame is fast Fourier transformed to obtain the energy distribution in the spectrum, and the logarithmic energy and signal characteristics are obtained by 20 triangular bandpass filters.

2.2. Speech Representation and Emotion Recognition

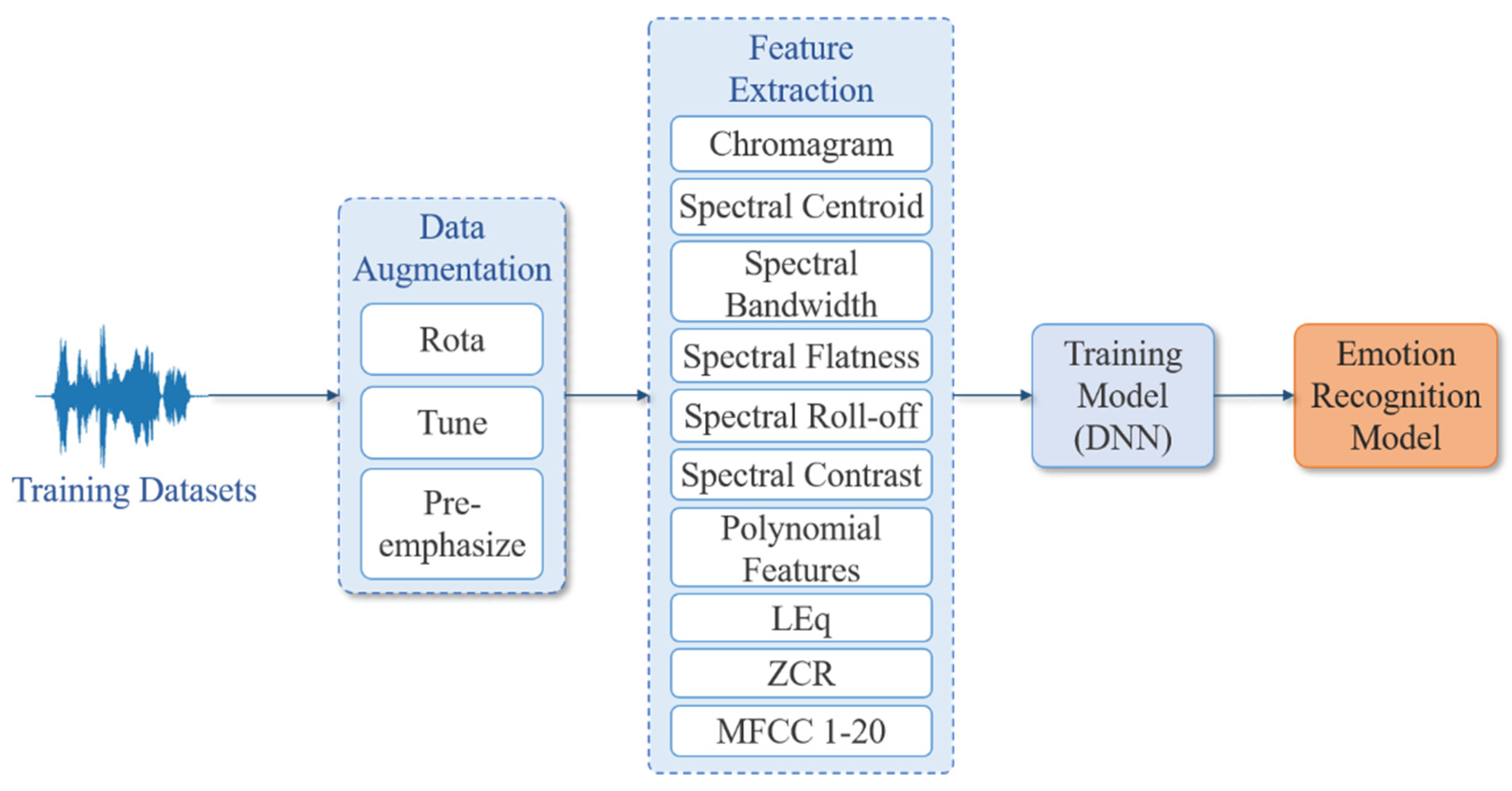

3. System Architecture and Research Method

3.1. System Architecture

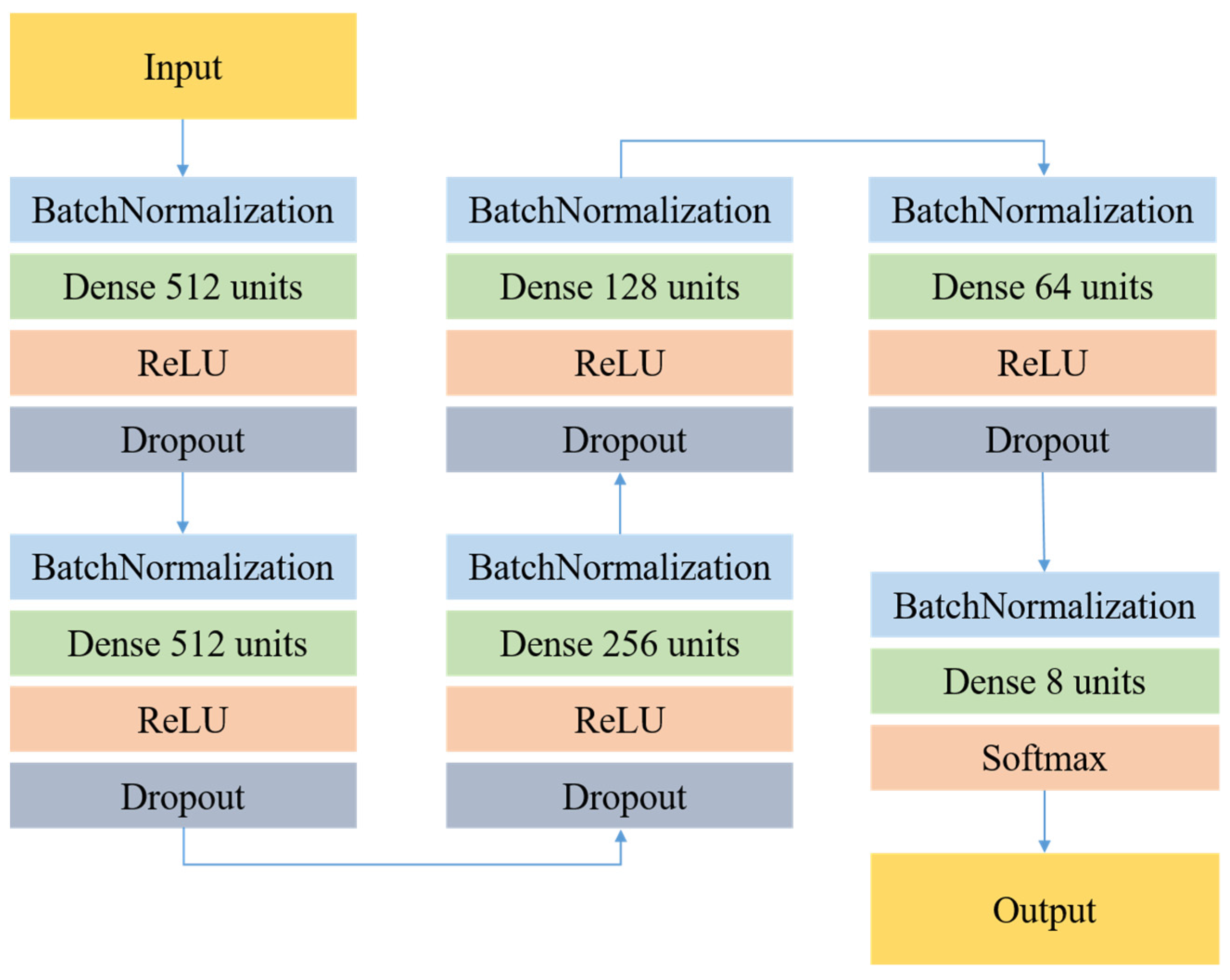

3.2. The Proposed DNN Model

3.3. Data Augmentation

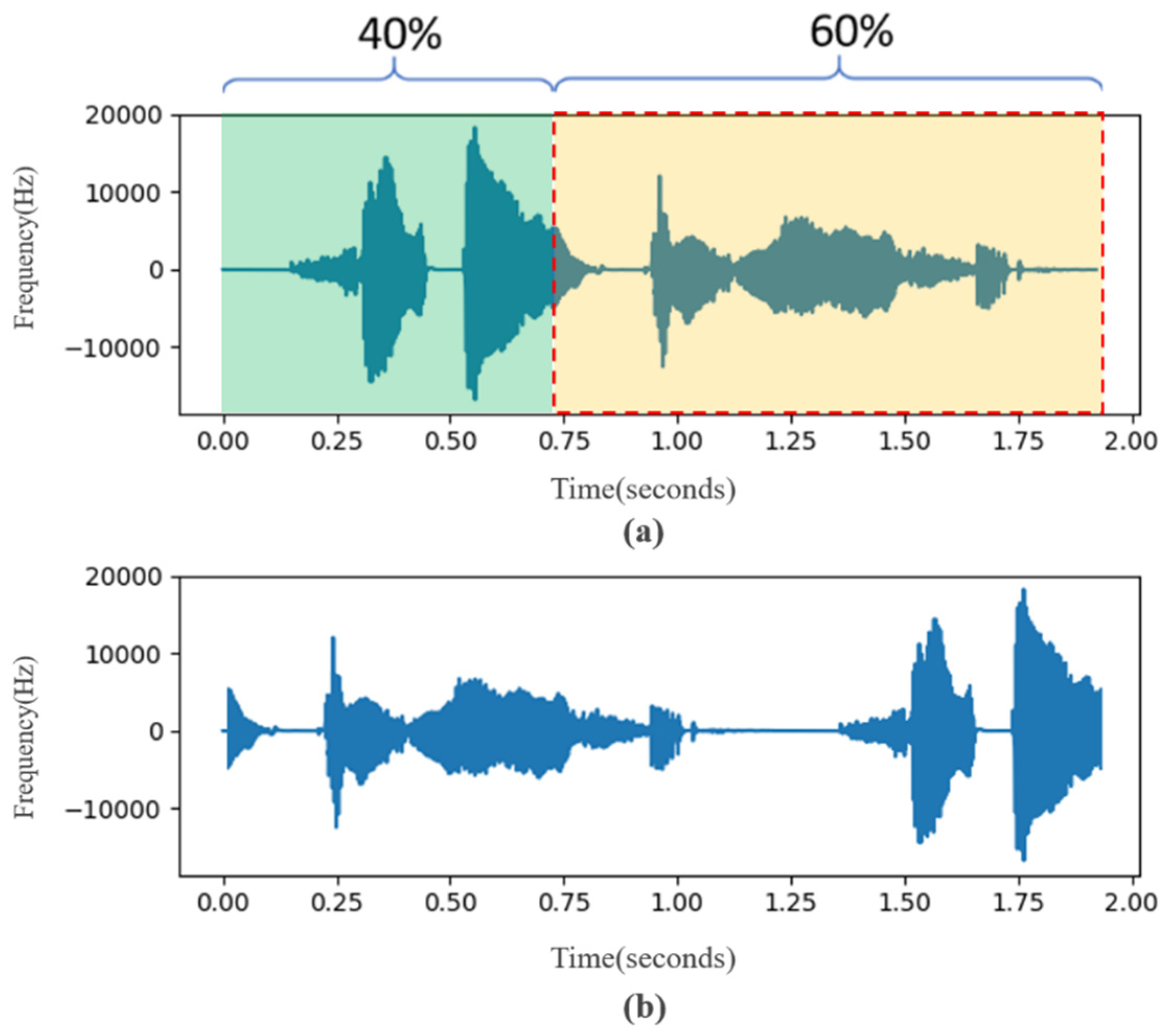

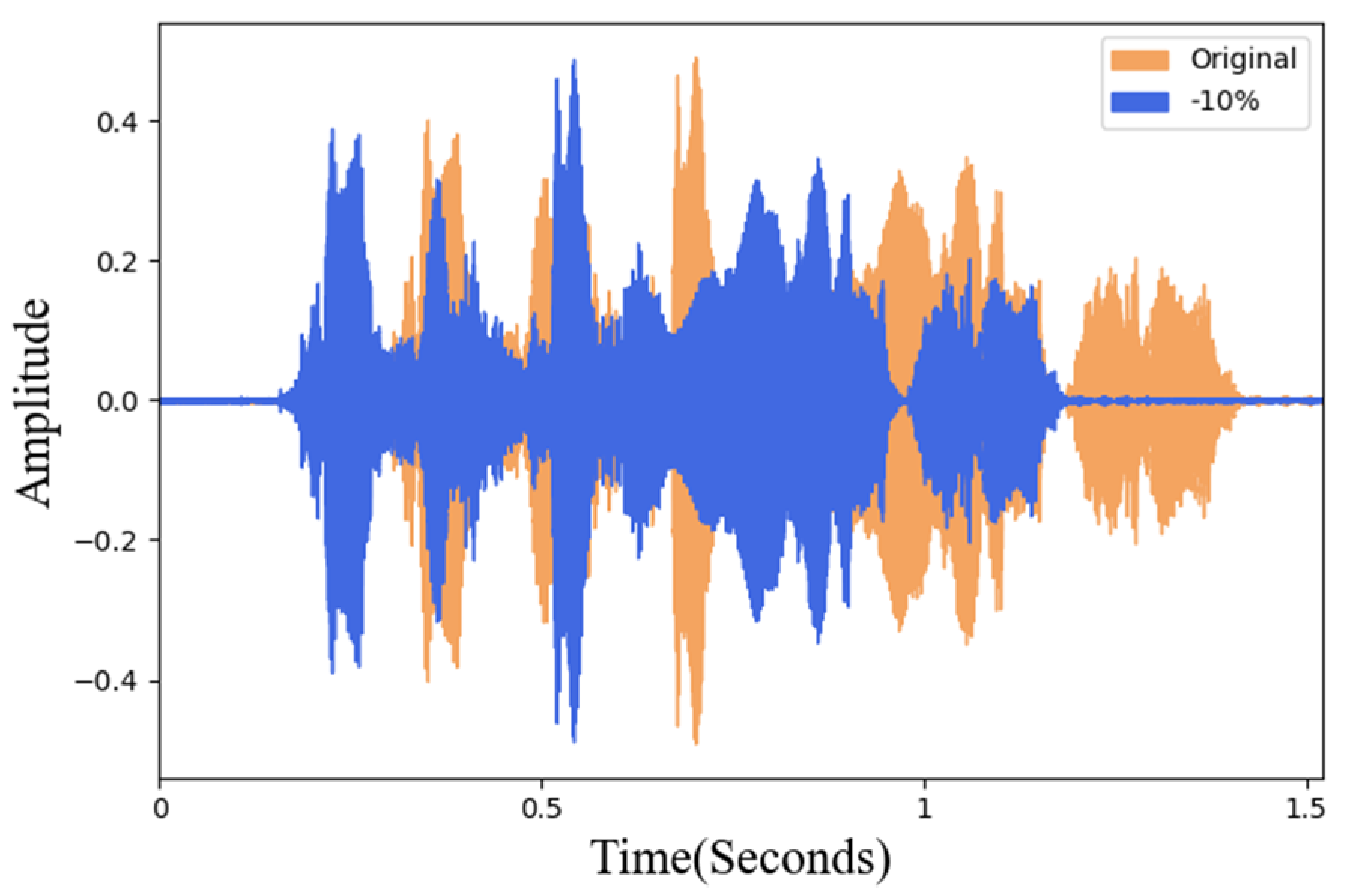

3.3.1. Waveform Adjustment

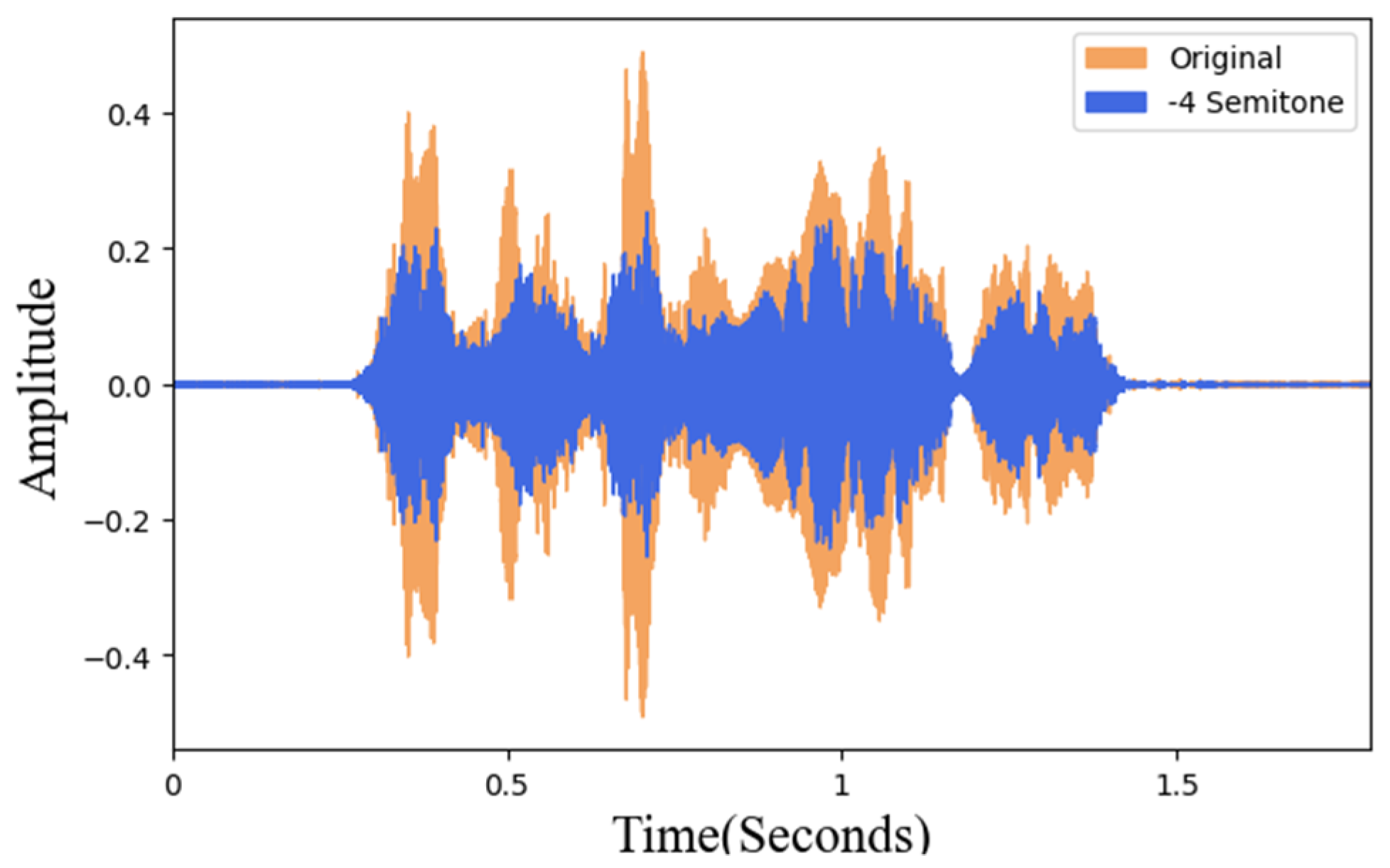

3.3.2. Pitch Adjustment

3.3.3. Pre-Emphasize

4. Experimental Results and Discussion

4.1. Experimental Environment

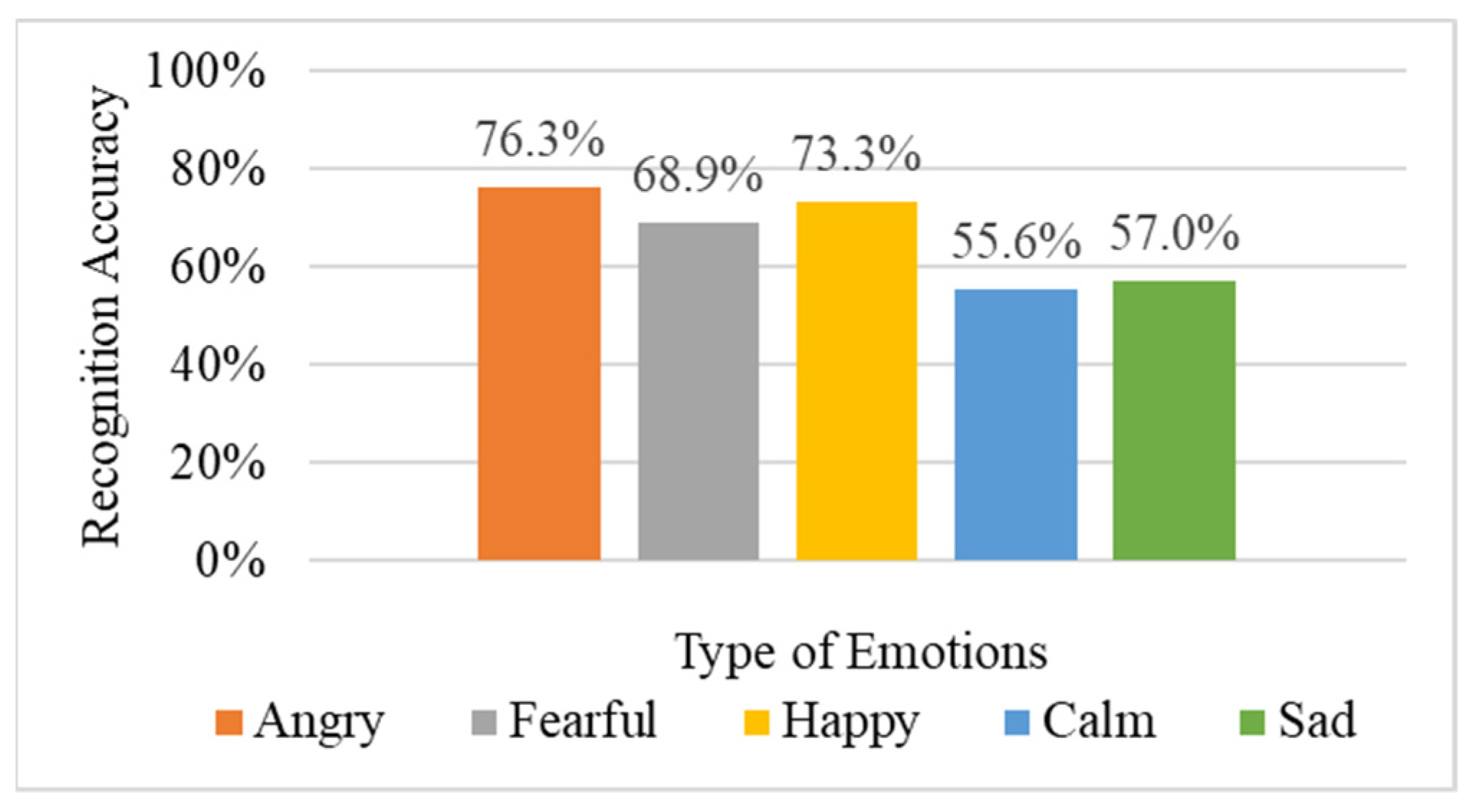

4.2. Experimental Results of the Original Method

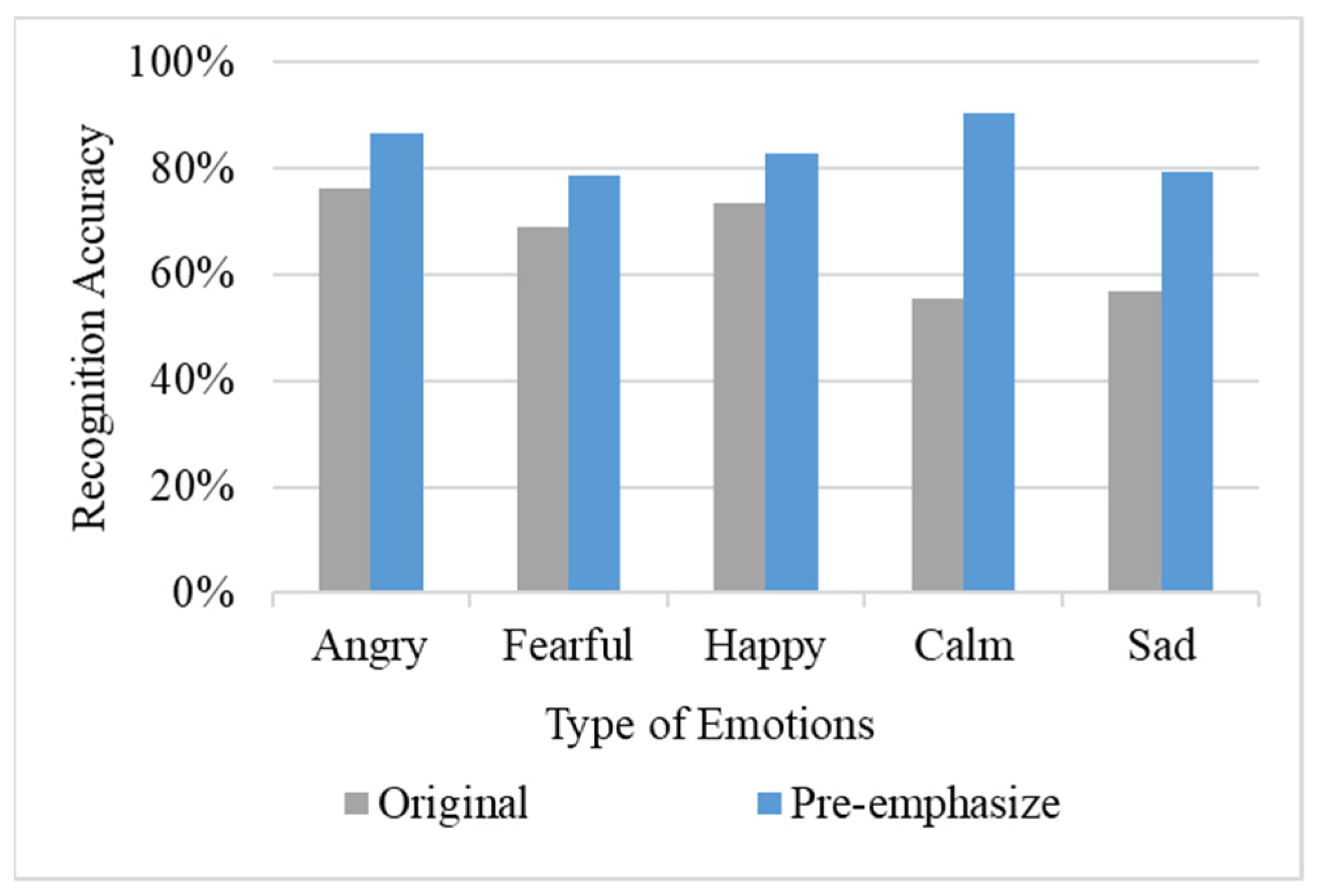

4.3. Experimental Results of the Pre-Emphasize

4.4. Rotating

4.5. Pitch Adjustment Analysis

4.5.1. Sound Frequency Adjustment

4.5.2. Pitch Adjustment

4.6. Comprehensive Adjustment

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

References

- Cowie, R.; Douglas-Cowie, E.; Tsapatsoulis, N.; Votsis, G.; Kollias, S.; Fellenz, W.; Taylor, J.G. Emotion recognition in human-computer interaction. IEEE Signal Process. Mag. 2001, 18, 32–80. [Google Scholar] [CrossRef]

- El Ayadi, M.; Kamel, M.S.; Karray, F. Survey on speech emotion recognition: Features, classification schemes, and databases. Pattern Recognit. 2011, 44, 572–587. [Google Scholar] [CrossRef]

- Koolagudi, S.G.; Rao, K.S. Emotion recognition from speech: A review. Int. J. Speech Technol. 2012, 15, 99–117. [Google Scholar] [CrossRef]

- Song, T.; Zheng, W.; Song, P.; Cui, Z. EEG emotion recognition using dynamical graph convolutional neural networks. IEEE Trans. Affect. Comput. 2018, 11, 532–541. [Google Scholar] [CrossRef] [Green Version]

- Zhang, J.; Yin, Z.; Chen, P.; Nichele, S. Emotion recognition using multi-modal data and machine learning techniques: A tutorial and review. Inf. Fusion 2020, 59, 103–126. [Google Scholar] [CrossRef]

- Akçay, M.B.; Oğuz, K. Speech emotion recognition: Emotional models, databases, features, preprocessing methods, supporting modalities, and classifiers. Speech Commun. 2020, 116, 56–76. [Google Scholar] [CrossRef]

- Kaur, J.; Singh, A.; Kadyan, V. Automatic speech recognition system for tonal languages: State-of-the-art survey. Arch. Comput. Methods Eng. 2020, 28, 1039–1068. [Google Scholar] [CrossRef]

- Schuller, B.W. Speech emotion recognition: Two decades in a nutshell, benchmarks, and ongoing trends. Commun. ACM 2018, 61, 90–99. [Google Scholar] [CrossRef]

- Li, L.; Zhao, Y.; Jiang, D.; Zhang, Y.; Wang, F.; Gonzalez, I.; Sahli, H. Hybrid deep neural network—Hidden Markov model (dnn-hmm) based speech emotion recognition. In Proceedings of the 2013 Humaine Association Conference on Affective Computing and Intelligent Interaction, Geneva, Switzerland, 2–5 September 2013; pp. 312–317. [Google Scholar]

- Mao, Q.; Dong, M.; Huang, Z.; Zhan, Y. Learning salient features for speech emotion recognition using convolutional neural networks. IEEE Trans. Multimed. 2014, 16, 2203–2213. [Google Scholar] [CrossRef]

- Zhang, S.; Zhang, S.; Huang, T.; Gao, W. Speech emotion recognition using deep convolutional neural network and discriminant temporal pyramid matching. IEEE Trans. Multimed. 2017, 20, 1576–1590. [Google Scholar] [CrossRef]

- Umamaheswari, J.; Akila, A. An enhanced human speech emotion recognition using hybrid of PRNN and KNN. In Proceedings of the 2019 International Conference on Machine Learning, Big Data, Cloud and Parallel Computing (COMITCon), Faridabad, India, 14–16 February 2019; pp. 177–183. [Google Scholar]

- Mustaqeem; Kwon, S. A CNN-assisted enhanced audio signal processing for speech emotion recognition. Sensors 2020, 20, 183. [Google Scholar]

- Li, D.; Liu, J.; Yang, Z.; Sun, L.; Wang, Z. Speech emotion recognition using recurrent neural networks with directional self-attention. Expert Syst. Appl. 2021, 173, 114683. [Google Scholar] [CrossRef]

- Abbaschian, B.J.; Sierra-Sosa, D.; Elmaghraby, A. Deep learning techniques for speech emotion recognition, from databases to models. Sensors 2021, 21, 1249. [Google Scholar] [CrossRef] [PubMed]

- Fahad, M.S.; Ranjan, A.; Yadav, J.; Deepak, A. A survey of speech emotion recognition in natural environment. Digit. Signal Processing 2021, 110, 102951. [Google Scholar] [CrossRef]

- Schuller, B.; Steidl, S.; Batliner, A.; Vinciarelli, A.; Scherer, K.; Ringeval, F.; Chetouani, M.; Weninger, F.; Eyben, F.; Marchi, E.; et al. The INTERSPEECH 2013 computational paralinguistics challenge: Social signals, conflict, emotion, autism. In Proceedings of the 14th Annual Conference of the International Speech Communication Association (INTERSPEECH 2013), Lyon, France, 25–29 August 2013. [Google Scholar]

- Eyben, F.; Scherer, K.R.; Schuller, B.W.; Sundberg, J.; André, E.; Busso, C.; Truong, K.P. The Geneva minimalistic acoustic parameter set (GeMAPS) for voice research and affective computing. IEEE Trans. Affect. Comput. 2015, 7, 190–202. [Google Scholar] [CrossRef] [Green Version]

- Grey, J.M.; Gordon, J.W. Perceptual effects of spectral modifications on musical timbres. J. Acoust. Soc. Am. 1978, 63, 1493–1500. [Google Scholar] [CrossRef]

- Johnston, J.D. Transform coding of audio signals using perceptual noise criteria. IEEE J. Sel. Areas Commun. 1988, 6, 314–323. [Google Scholar] [CrossRef] [Green Version]

- Jiang, D.N.; Lu, L.; Zhang, H.J.; Tao, J.H.; Cai, L.H. Music type classification by spectral contrast feature. In Proceedings of the IEEE International Conference on Multimedia and Expo, Lausanne, Switzerland, 26–29 August 2002; Volume 1, pp. 113–116. [Google Scholar]

- Peeters, G. A large set of audio features for sound description (similarity and classification) in the CUIDADO project. CUIDADO IST Proj. Rep. 2004, 54, 1–25. [Google Scholar]

- Cho, T.; Bello, J.P. On the relative importance of individual components of chord recognition systems. IEEE/ACM Trans. Audio Speech Lang. Process. 2013, 22, 477–492. [Google Scholar] [CrossRef]

- Gouyon, F.; Pachet, F.; Delerue, O. On the use of zero-crossing rate for an application of classification of percussive sounds. In Proceedings of the COST G-6 Conference on Digital Audio Effects (DAFX-00), Verona, Italy, 7–9 December 2000; Volume 5. [Google Scholar]

- Fletcher, H.; Munson, W.A. Loudness, its definition, measurement and calculation. Bell Syst. Tech. J. 1933, 12, 377–430. [Google Scholar] [CrossRef]

- Burkhardt, F.; Paeschke, A.; Rolfes, M.; Sendlmeier, W.F.; Weiss, B. A database of German emotional speech. In Proceedings of the 9th European Conference on Speech Communication and Technology, Lisboa, Portugal, 4–8 September 2005; Volume 5, pp. 1517–1520. [Google Scholar]

- Busso, C.; Bulut, M.; Lee, C.C.; Kazemzadeh, A.; Mower, E.; Kim, S.; Chang, J.N.; Lee, S.; Narayanan, S.S. IEMOCAP: Interactive emotional dyadic motion capture database. Lang. Resour. Eval. 2008, 42, 335–359. [Google Scholar] [CrossRef]

- Lin, Y.L.; Wei, G. Speech emotion recognition based on HMM and SVM. In Proceedings of the 2005 International Conference on Machine Learning and Cybernetics, Guangzhou, China, 18–21 August 2005; Volume 8, pp. 4898–4901. [Google Scholar]

- Chou, H.C.; Lin, W.C.; Chang, L.C.; Li, C.C.; Ma, H.P.; Lee, C.C. Nnime: The nthu-ntua Chinese interactive multimodal emotion corpus. In Proceedings of the 2017 Seventh International Conference on Affective Computing and Intelligent Interaction (ACII), San Antonio, TX, USA, 23–26 October 2017; pp. 292–298. [Google Scholar]

- Li, Y.; Tao, J.; Chao, L.; Bao, W.; Liu, Y. CHEAVD: A Chinese natural emotional audio–visual database. J. Ambient Intell. Humaniz. Comput. 2017, 8, 913–924. [Google Scholar] [CrossRef]

- Russell, J.A.; Pratt, G. A description of the affective quality attributed to environments. J. Personal. Soc. Psychol. 1980, 38, 311. [Google Scholar] [CrossRef]

- Schneider, S.; Baevski, A.; Collobert, R.; Auli, M. wav2vec: Unsupervised pre-training for speech recognition. arXiv 2019, arXiv:1904.05862. [Google Scholar]

- Baevski, A.; Zhou, Y.; Mohamed, A.; Auli, M. wav2vec 2.0: A framework for self-supervised learning of speech representations. Adv. Neural Inf. Process. Syst. 2020, 33, 12449–12460. [Google Scholar]

- Hsu, W.N.; Bolte, B.; Tsai, Y.H.H.; Lakhotia, K.; Salakhutdinov, R.; Mohamed, A. Hubert: Self-supervised speech representation learning by masked prediction of hidden units. IEEE/ACM Trans. Audio Speech Lang. Process. 2021, 29, 3451–3460. [Google Scholar] [CrossRef]

- Wang, C.; Wu, Y.; Qian, Y.; Kumatani, K.; Liu, S.; Wei, F.; Zeng, M.; Huang, X. Unispeech: Unified speech representation learning with labeled and unlabeled data. In Proceedings of the International Conference on Machine Learning, Virtual, 18–24 July 2021; pp. 10937–10947. [Google Scholar]

- Chen, S.; Wang, C.; Chen, Z.; Wu, Y.; Liu, S.; Chen, Z.; Li, J.; Kanda, N.; Yoshioka, T.; Xiao, X. Wavlm: Large-scale self-supervised pre-training for full stack speech processing. arXiv 2021, arXiv:2110.13900. [Google Scholar]

- Batliner, A.; Steidl, S.; Nöth, E. Releasing a thoroughly annotated and processed spontaneous emotional database: The FAU Aibo emotion corpus. In Proceedings of the Satellite Workshop of LREC, Marrakech, Morocco, 26–27 May 2008; Volume 28. [Google Scholar]

- Livingstone, S.R.; Russo, F.A. The Ryerson audio-visual database of emotional speech and song (RAVDESS): A dynamic, multimodal set of facial and vocal expressions in North American English. PLoS ONE 2018, 13, e0196391. [Google Scholar] [CrossRef] [Green Version]

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297. [Google Scholar] [CrossRef]

- Starner, T.; Pentland, A. Real-time American sign language recognition from video using hidden Markov models. In Motion-based Recognition; Springer: Dordrecht, The Netherlands, 1997; pp. 227–243. [Google Scholar]

- Povey, D.; Burget, L.; Agarwal, M.; Akyazi, P.; Kai, F.; Ghoshal, A.; Glembek, O.; Goel, N.; Karafiát, M.; Rastrow, A.; et al. The subspace Gaussian mixture model—A structured model for speech recognition. Comput. Speech Lang. 2011, 25, 404–439. [Google Scholar] [CrossRef] [Green Version]

- Lim, W.; Jang, D.; Lee, T. Speech emotion recognition using convolutional and recurrent neural networks. In Proceedings of the 2016 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA), Jeju, Korea, 13–15 December 2016; pp. 1–4. [Google Scholar]

- Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A. Inception-v4, inception-resnet and the impact of residual connections on learning. In Proceedings of the AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017; Volume 31. [Google Scholar]

| Model | Angry | Fearful | Happy | Calm | Sad | Average |

|---|---|---|---|---|---|---|

| Original | 76.3% | 68.9% | 73.3% | 55.6% | 57.0% | 66.2% |

| Pre-emphasize | 86.7% | 78.5% | 83.0% | 90.4% | 79.3% | 83.6% |

| Model | Angry | Fearful | Happy | Calm | Sad | Average |

|---|---|---|---|---|---|---|

| Original | 76.3% | 68.9% | 73.3% | 55.6% | 57.0% | 66.2% |

| 10% | 83.7% | 74.8% | 91.9% | 86.7% | 80.7% | 83.6% |

| 20% | 83.7% | 77.8% | 88.9% | 87.4% | 83.0% | 84.1% |

| 30% | 83.0% | 68.9% | 88.1% | 87.4% | 84.4% | 82.4% |

| 40% | 87.4% | 80.0% | 91.1% | 88.9% | 76.3% | 84.7% |

| 50% | 80.7% | 73.3% | 89.6% | 85.9% | 78.5% | 81.6% |

| 60% | 83.7% | 75.6% | 91.1% | 87.4% | 80.0% | 83.6% |

| 70% | 82.2% | 69.6% | 88.1% | 85.2% | 86.7% | 82.4% |

| 80% | 83.0% | 73.3% | 89.6% | 85.2% | 83.7% | 83.0% |

| 90% | 82.2% | 72.6% | 91.1% | 87.4% | 79.3% | 82.5% |

| Model | Angry | Fearful | Happy | Calm | Sad | Average |

|---|---|---|---|---|---|---|

| Original | 76.3% | 68.9% | 73.3% | 55.6% | 57.0% | 66.2% |

| −30% | 84.4% | 77.8% | 89.6% | 93.3% | 78.5% | 84.7% |

| −25% | 89.6% | 77.0% | 91.9% | 93.3% | 79.3% | 86.2% |

| −20% | 83.7% | 76.3% | 94.1% | 91.9% | 82.2% | 85.6% |

| −15% | 88.1% | 77.0% | 92.6% | 90.4% | 82.2% | 86.1% |

| −10% | 85.9% | 78.5% | 93.3% | 91.1% | 83.7% | 86.5% |

| −5% | 85.2% | 72.6% | 94.8% | 88.9% | 81.5% | 84.6% |

| 5% | 85.2% | 71.9% | 85.9% | 90.4% | 82.2% | 83.1% |

| 10% | 85.9% | 77.0% | 89.6% | 92.6% | 80.7% | 85.2% |

| 15% | 86.7% | 80.7% | 88.9% | 87.4% | 77.8% | 84.3% |

| 20% | 80.7% | 74.8% | 90.4% | 86.7% | 79.3% | 82.4% |

| 25% | 82.2% | 76.3% | 88.9% | 85.2% | 84.4% | 83.4% |

| 30% | 77.2% | 71.1% | 90.0% | 83.3% | 83.9% | 81.1% |

| Model | Angry | Fearful | Happy | Calm | Sad | Average |

|---|---|---|---|---|---|---|

| Original | 76.3% | 68.9% | 73.3% | 55.6% | 57.0% | 66.2% |

| 6 | 79.7% | 73.7% | 89.4% | 84.3% | 84.2% | 82.3% |

| 5 | 77.0% | 76.3% | 91.1% | 88.1% | 72.6% | 81.0% |

| 4 | 77.0% | 77.8% | 86.7% | 86.7% | 75.6% | 80.7% |

| 3 | 82.2% | 75.6% | 85.2% | 80.7% | 79.3% | 80.6% |

| 2 | 83.7% | 74.1% | 86.7% | 86.7% | 78.5% | 81.9% |

| 1 | 83.7% | 75.6% | 90.4% | 86.7% | 80.7% | 83.4% |

| −1 | 82.2% | 68.9% | 85.9% | 85.9% | 84.4% | 81.5% |

| −2 | 80.0% | 77.0% | 82.2% | 85.9% | 79.3% | 80.9% |

| −3 | 77.0% | 72.6% | 85.2% | 89.6% | 78.5% | 80.6% |

| −4 | 75.6% | 74.8% | 89.6% | 85.9% | 78.5% | 80.9% |

| −5 | 85.2% | 80.0% | 87.4% | 87.4% | 74.8% | 83.0% |

| −6 | 81.5% | 76.3% | 88.1% | 88.9% | 79.3% | 82.8% |

| Model | Angry | Fearful | Happy | Calm | Sad | Average |

|---|---|---|---|---|---|---|

| Original | 76.3% | 68.9% | 73.3% | 55.6% | 57.0% | 66.2% |

| R40%&Pre | 85.2% | 79.3% | 96.3% | 91.1% | 79.3% | 86.2% |

| R40%&T—10%&Pre | 83.7% | 77.8% | 93.3% | 93.3% | 83.0% | 86.2% |

| T—10%&Pre | 90.3% | 79.4% | 95.3% | 92.2% | 77.8% | 87.0% |

| R40%&T—10% | 93.3% | 82.2% | 97.8% | 93.3% | 77.8% | 88.9% |

| Predicted | ||||||

|---|---|---|---|---|---|---|

| Happy | Sad | Angry | Calm | Fearful | ||

| Actual | Happy | 93.3% | 0.0% | 4.4% | 2.2% | 0.0% |

| Sad | 0.0% | 82.2% | 0.0% | 0.0% | 17.8% | |

| Angry | 2.2% | 0.0% | 97.8% | 0.0% | 0.0% | |

| Calm | 0.0% | 0.0% | 0.0% | 93.3% | 6.7% | |

| Fearful | 0.0% | 20.0% | 0.0% | 2.2% | 77.8% | |

| Method | Training Time | Accuracy (Training) | Accuracy (Validation) | Accuracy (Testing) |

|---|---|---|---|---|

| KNN | 1.5 (sec) | 81.1% | - | 71.2% |

| GoogLeNet | 13.8 (min) | - | 65.1% | 51.2% |

| DNN | 25.4 (sec) | 93.3% | 72.8% | 66.2% |

| Method | Training Time | Accuracy (Training) | Accuracy (Validation) | Accuracy (Testing) |

|---|---|---|---|---|

| KNN | 5.4 (sec) | 82.5% | - | 76.6% |

| GoogLeNet | 43.5 (min) | - | 75.6% | 66.5% |

| DNN | 32.7 (sec) | 95.2% | 88.1% | 86.2% |

| Method | Training Time | Accuracy (Training) | Accuracy (Validation) | Accuracy (Testing) |

|---|---|---|---|---|

| KNN | 5.3 (sec) | 82.2% | - | 75.7% |

| GoogLeNet | 41.2 (min) | - | 72.4% | 66.7% |

| DNN | 64.9 (sec) | 97.0% | 92.7% | 88.9% |

| Method | Training Time | Accuracy (Training) | Accuracy (Validation) | Accuracy (Testing) |

|---|---|---|---|---|

| KNN | 9.7 (sec) | 84.1% | - | 77.9% |

| GoogLeNet | 56.8 (min) | - | 81.0% | 68.7% |

| DNN | 50.9 (sec) | 94.4% | 89.1% | 86.2% |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Lee, M.-C.; Yeh, S.-C.; Chang, J.-W.; Chen, Z.-Y. Research on Chinese Speech Emotion Recognition Based on Deep Neural Network and Acoustic Features. Sensors 2022, 22, 4744. https://doi.org/10.3390/s22134744

Lee M-C, Yeh S-C, Chang J-W, Chen Z-Y. Research on Chinese Speech Emotion Recognition Based on Deep Neural Network and Acoustic Features. Sensors. 2022; 22(13):4744. https://doi.org/10.3390/s22134744

Chicago/Turabian StyleLee, Ming-Che, Sheng-Cheng Yeh, Jia-Wei Chang, and Zhen-Yi Chen. 2022. "Research on Chinese Speech Emotion Recognition Based on Deep Neural Network and Acoustic Features" Sensors 22, no. 13: 4744. https://doi.org/10.3390/s22134744

APA StyleLee, M.-C., Yeh, S.-C., Chang, J.-W., & Chen, Z.-Y. (2022). Research on Chinese Speech Emotion Recognition Based on Deep Neural Network and Acoustic Features. Sensors, 22(13), 4744. https://doi.org/10.3390/s22134744