Towards Continuous Camera-Based Respiration Monitoring in Infants

Abstract

1. Introduction

2. Materials and Methods

2.1. Materials

2.1.1. Experimental Setup

2.1.2. Dataset

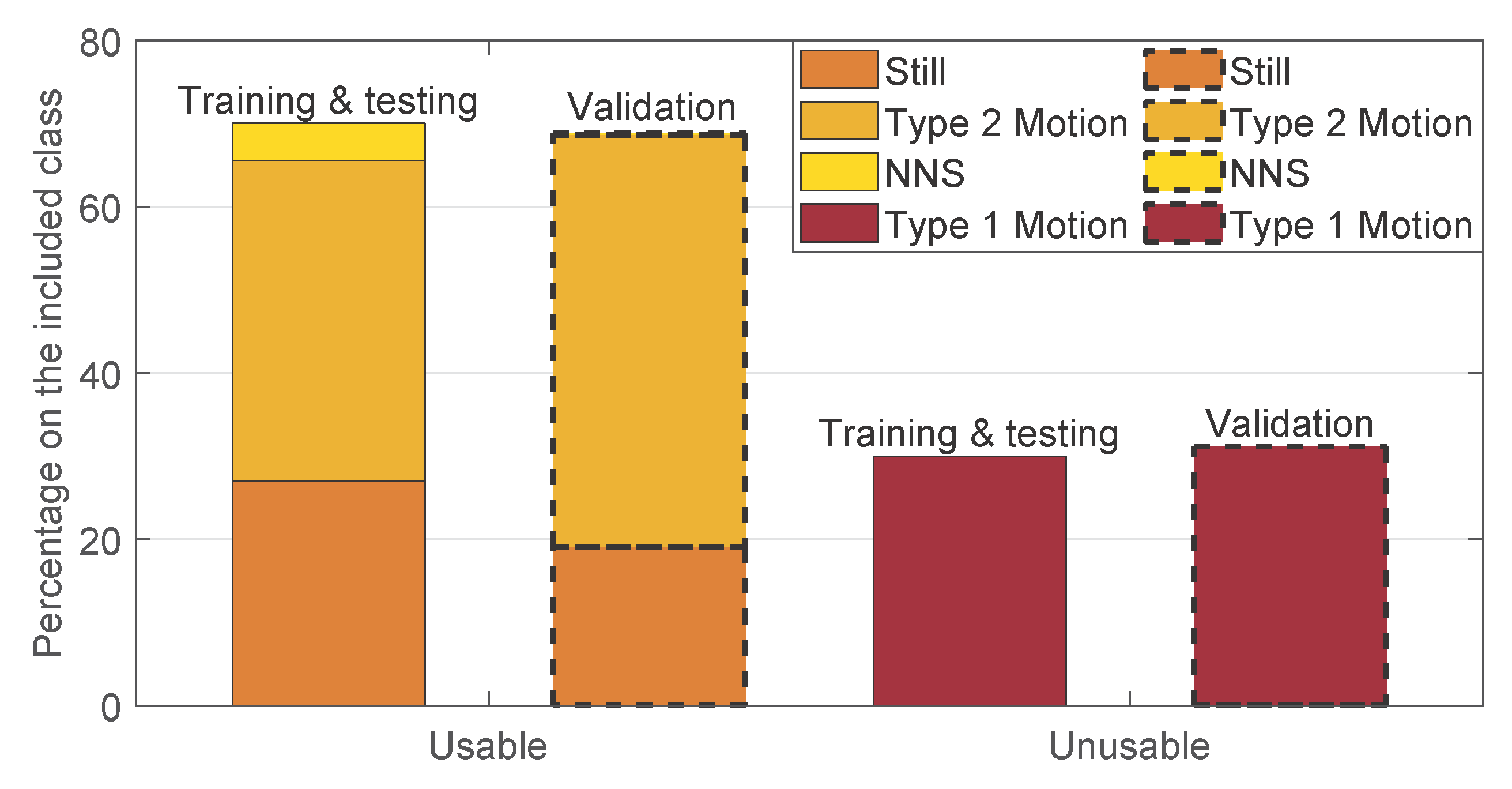

2.1.3. Manual Annotation

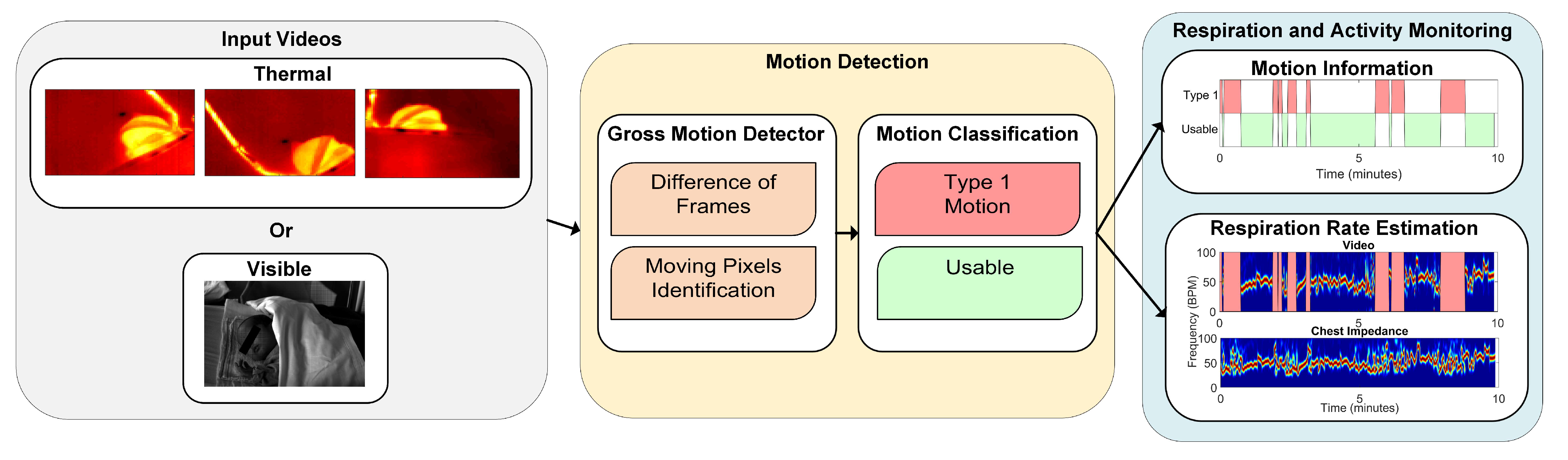

2.2. Method

2.2.1. Preprocessing

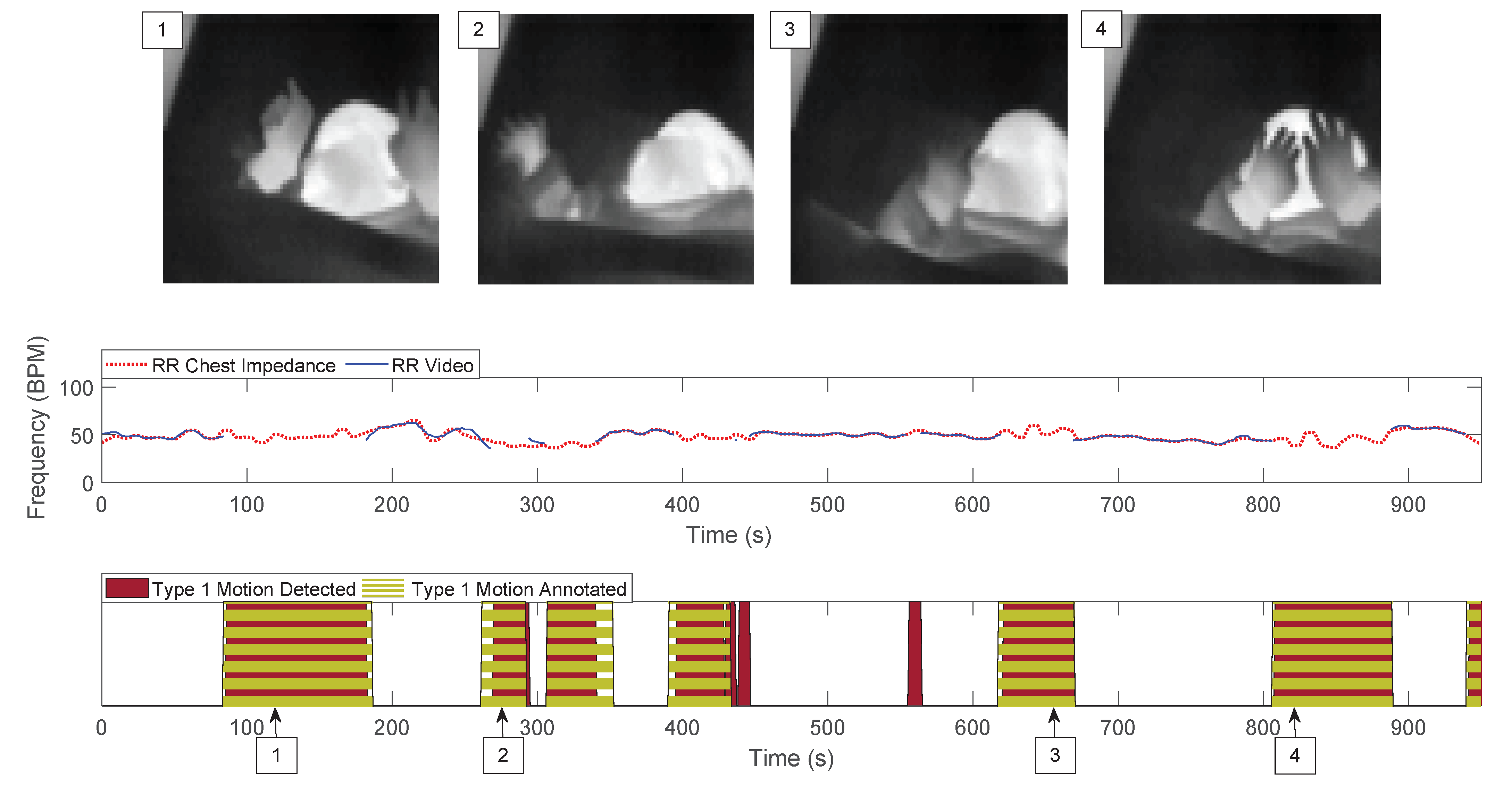

2.2.2. Motion Detection

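- Gross Motion Detector: let $\mathbf{I}_j$ be the frames in the $j$th window, with elements $I_j(m,l,t)$, where $(m,l)$ is the pixel position in an $M \times N$ frame and $t = 1, \dots, L$ indexes the $L$ samples of the $j$th window. The gross motion detector was based on the absolute value of the Difference of Frames (DOFs) in the $j$th window. More formally:
  $$D_j(m,l,t) = \left| \frac{\partial I_j(m,l,t)}{\partial t} \right|,$$
  where the operator $\partial/\partial t$ represents the partial derivative with respect to the time dimension, computed as the difference between consecutive frames. $\mathbf{D}_j$ contains the frames resulting from the absolute difference of frames at each time sample, with $t = 1, \dots, L-1$. At this point, a first threshold $T_{D,j}$ was introduced, which turns $\mathbf{D}_j$ into binary images identifying the pixels considered to be moving:
  $$B_j(m,l,t) = \begin{cases} 1, & D_j(m,l,t) > T_{D,j} \\ 0, & \text{otherwise.} \end{cases}$$
  The threshold $T_{D,j}$ distinguishes changes caused by noise from changes caused by motion, and it is defined as:
  $$T_{D,j} = \frac{\max(\mathbf{D}_j) - \min(\mathbf{D}_j)}{\alpha},$$
  where the numerator is the range of $\mathbf{D}_j$, i.e., the difference between the maximum and the minimum value over all the pixels of all the frames in $\mathbf{D}_j$, and $\alpha$ is a factor that was optimized. The ratio of moving pixels was then calculated as:
  $$R_j = \frac{1}{M N (L-1)} \sum_{m=1}^{M} \sum_{l=1}^{N} \sum_{t=1}^{L-1} B_j(m,l,t),$$
  where $B_j(m,l,t)$ is the element of $\mathbf{B}_j$ at position $(m,l)$ and time sample $t$.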

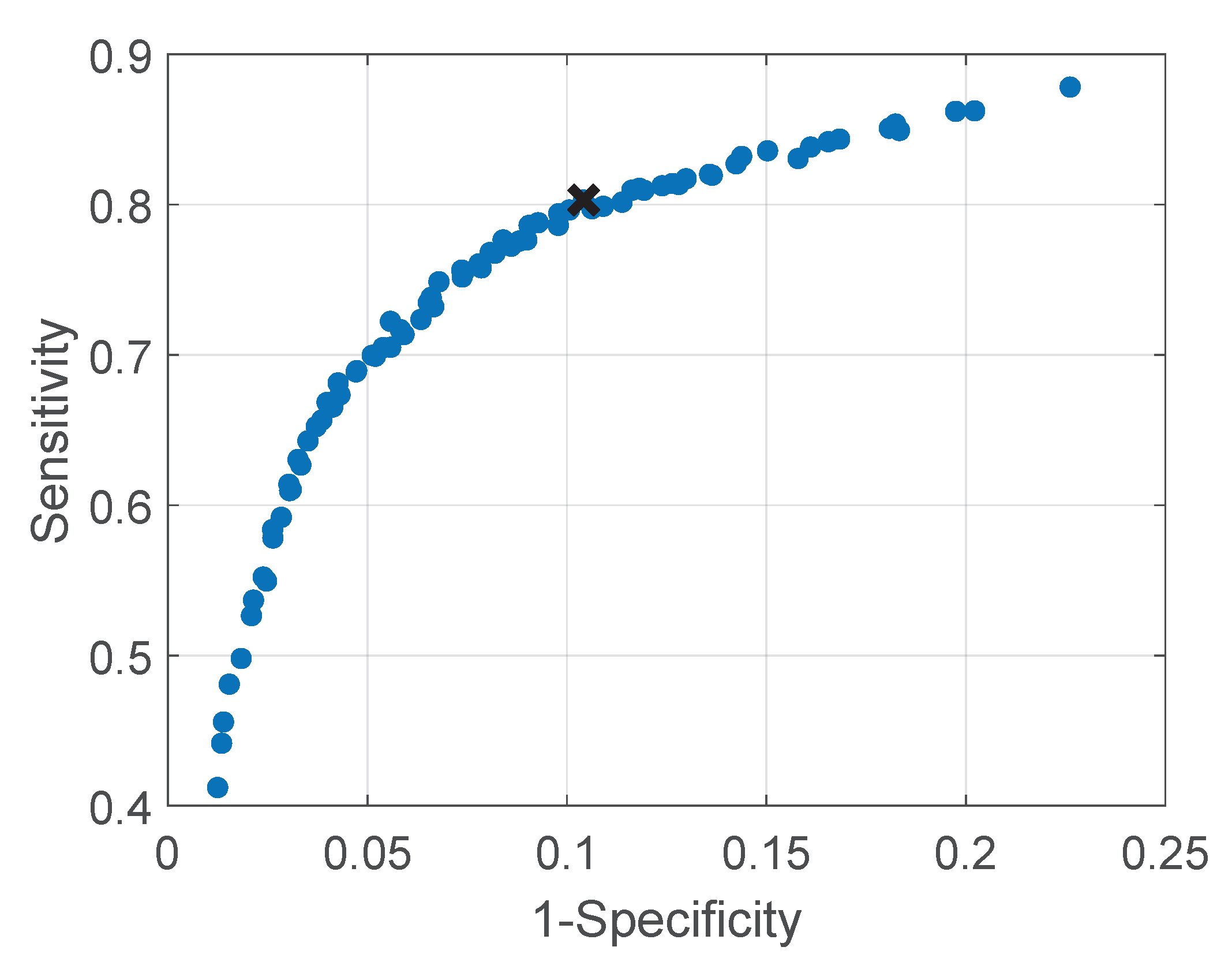
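The gross motion detector can be illustrated with a minimal pure-Python sketch. It assumes a window is given as a list of frames, each frame a list of pixel rows; the function names and the toy values in the usage lines are hypothetical, and only the structure (absolute difference of consecutive frames, threshold equal to the range divided by an optimized factor, fraction of moving pixels) follows the description above.

```python
# Hypothetical sketch of the gross motion detector described above.
# A window is a list of L frames; each frame is a list of rows of pixel values.


def abs_frame_differences(frames):
    """Absolute difference of consecutive frames (L frames -> L-1 frames)."""
    return [
        [[abs(b - a) for a, b in zip(row_prev, row_next)]
         for row_prev, row_next in zip(prev, nxt)]
        for prev, nxt in zip(frames[:-1], frames[1:])
    ]


def moving_pixel_ratio(frames, alpha):
    """Fraction of DOF values in the window that exceed the adaptive threshold.

    The threshold is the range (max - min) of all DOF values in the window
    divided by the factor alpha.
    """
    diffs = abs_frame_differences(frames)
    values = [v for frame in diffs for row in frame for v in row]
    threshold = (max(values) - min(values)) / alpha
    moving = sum(1 for v in values if v > threshold)
    return moving / len(values)


# Usage: a 2x2 "video" of 3 frames in which one pixel blinks.
frames = [
    [[0, 0], [0, 0]],
    [[0, 0], [0, 8]],
    [[0, 0], [0, 0]],
]
print(moving_pixel_ratio(frames, alpha=2.0))  # 0.25
```

Because the threshold scales with the range of the DOFs in each window, a completely static window yields a ratio of 0 rather than flagging sensor noise as motion.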

- Motion Classification: the ratio of moving pixels $R_j$ of the $j$th window was used to classify each window as usable or unusable for RR detection. In particular, we aim at detecting the unusable moments, i.e., those containing type 1 motion. The main assumption is that type 1 motion is part of a more complex kind of motion, typical of infants' crying. Therefore, the simplest way to detect it is to assume that type 1 motion results in more moving pixels than any of the usable segments. To separate the two classes, a second threshold $T_R$ was applied to the ratio of moving pixels. The classification was therefore performed in a window-based fashion, i.e., each window was classified as containing type 1 motion, $c_j = 1$, or as usable, $c_j = 0$. Since three cameras were used in the thermal setup, the algorithm was applied three times, once per view; for the RGB dataset this was not necessary, as only a single camera was used. In the visible case the classification is:
  $$c_j = \begin{cases} 1, & R_j > T_R \\ 0, & \text{otherwise.} \end{cases}$$
  For the thermal case, the classification is instead based on $R_j^{(1)}$, $R_j^{(2)}$, and $R_j^{(3)}$, the ratios of moving pixels obtained from the three thermal views.

- Ground Truth: the ground truth used to evaluate the performance of our motion detector was obtained from the manual annotations presented in Section 2.1.3 and was built using the sliding window approach. Each window was classified as excluded, as type 1 motion, or as usable: a window was assigned to a category if at least one of its frames was annotated with that category. The excluded class had priority: if at least one frame in the window was excluded, the entire window was classified as excluded. Otherwise, type 1 motion was checked in the same manner, and if neither condition held, the window was classified as usable.

- Parameters Optimization: the factor $\alpha$, used for the moving pixels detection, and the threshold $T_R$, used for the motion classification, were optimized with a leave-one-subject-out cross-validation. This approach was chosen because environmental conditions, e.g., the ambient temperature and the type and position of the blankets, can influence the parameter values; therefore, the between-baby variability is more important than the within-baby variability. For each fold, the parameter set that resulted in the highest balanced accuracy was considered a candidate set, and the final set was the candidate selected most often. Balanced accuracy was preferred over the classic accuracy because our two classes are imbalanced (usable windows were more frequent than type 1 motion windows). The optimization was performed on the training and testing set presented in Table 1. This set includes 9 babies; therefore, 9 folds were performed in the cross-validation. Two sets of candidate values, one for $\alpha$ and one for $T_R$, were empirically chosen for the training. The most frequently chosen parameter set was used in the next steps; more information on the results can be found in Section 3.
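The window-level rules for motion classification and ground-truth labeling can be sketched as two small functions. The label strings are hypothetical, and the way the three thermal views are combined (a window is flagged if any view exceeds the threshold) is an assumption, since the text does not state the combination rule.

```python
# Hypothetical sketch of the window-level classification and ground-truth rules.
# Label strings ("excluded", "type1", ...) are illustrative, not from the paper.


def classify_window(ratios, t_r):
    """Return 1 (type 1 motion) or 0 (usable) for one window.

    ratios holds one moving-pixel ratio per camera view: one value for the
    RGB setup, three for the thermal setup. ASSUMPTION: with several views,
    the window is flagged if any view exceeds t_r; the text does not state
    how the three thermal views are combined.
    """
    return 1 if any(r > t_r for r in ratios) else 0


def label_window(frame_labels):
    """Ground-truth label of a window from its per-frame annotations.

    A single frame is enough to assign a category, with priority
    excluded > type 1 motion > usable. Any other label (still, NNS, ...)
    leaves the window usable.
    """
    if "excluded" in frame_labels:
        return "excluded"
    if "type1" in frame_labels:
        return "type1"
    return "usable"


print(classify_window([0.02], t_r=0.1))              # 0 (RGB, one view)
print(classify_window([0.02, 0.15, 0.01], t_r=0.1))  # 1 (thermal, three views)
print(label_window(["still", "nns", "type1"]))       # type1
print(label_window(["type1", "excluded"]))           # excluded
```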

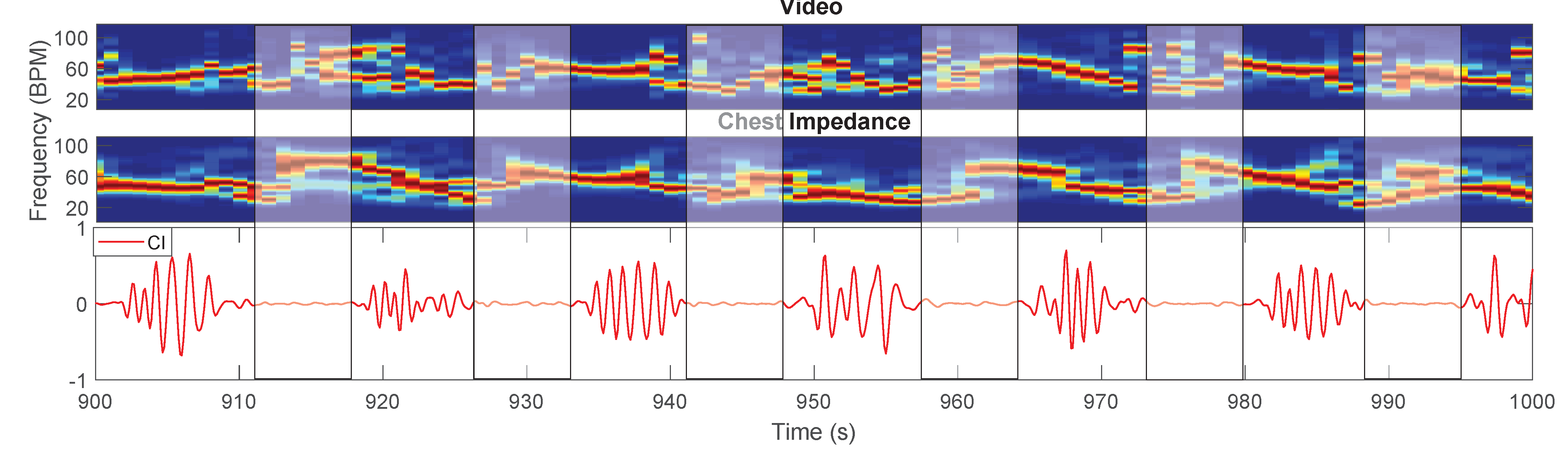
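The parameter selection can be sketched as a leave-one-subject-out grid search with a majority vote over the per-fold winners. This is a minimal illustration, not the authors' code: the function names, the data layout, and the toy values are hypothetical, and it assumes that each fold's candidate set is tuned on the subjects that are not held out.

```python
from collections import Counter
from itertools import product


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity (class 1) and specificity (class 0)."""
    rates = []
    for cls in (1, 0):
        idx = [i for i, t in enumerate(y_true) if t == cls]
        if idx:  # a class absent from y_true is skipped
            rates.append(sum(y_pred[i] == cls for i in idx) / len(idx))
    return sum(rates) / len(rates)


def loso_select(data, alphas, t_rs, predict):
    """Leave-one-subject-out selection of (alpha, t_r).

    data: dict subject -> list of (features, label), label 1 = type 1 motion,
          0 = usable (excluded windows already removed).
    predict: callable(features, alpha, t_r) -> 0 or 1.
    Returns the most frequently selected candidate and the per-fold candidates.
    """
    candidates = []
    for held_out in data:
        # ASSUMPTION: each fold's candidate is tuned on the remaining subjects.
        train = [s for subj, samples in data.items() if subj != held_out
                 for s in samples]
        y_true = [label for _, label in train]

        def score(params):
            alpha, t_r = params
            y_pred = [predict(f, alpha, t_r) for f, _ in train]
            return balanced_accuracy(y_true, y_pred)

        candidates.append(max(product(alphas, t_rs), key=score))
    best, _ = Counter(candidates).most_common(1)[0]
    return best, candidates


# Usage with toy data: features are precomputed ratios, alpha is unused here.
data = {
    "s1": [(0.10, 0), (0.50, 1)],
    "s2": [(0.20, 0), (0.60, 1)],
    "s3": [(0.15, 0), (0.70, 1)],
}
best, cands = loso_select(data, [1.0], [0.05, 0.3, 0.9],
                          lambda r, alpha, t_r: int(r > t_r))
print(best)  # (1.0, 0.3)
```

Balanced accuracy averages the per-class hit rates, so a detector that labels every window as usable cannot score well simply because usable windows dominate.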

2.2.3. Respiration Rate Estimation

- Pseudo-Periodicity: this first feature is based on the assumption that respiration can be considered a periodic signal. This feature was not changed compared to [26]. A differential filter was used to attenuate low frequencies, resulting in filtered time-domain signals $x'_{m,l}(t)$, one per pixel. The signals were zero-padded, multiplied by a Hanning window, and transformed with a 1D Discrete Fourier Transform (DFT) to estimate the spectrum $S_{m,l}(k)$, where $k$ is the frequency bin. The feature is the height of the peak of the normalized spectrum. More formally:
  $$P(m,l) = \max_k \bar{S}_{m,l}(k),$$
  where $\bar{S}_{m,l}$ is the normalized spectrum of the pixel in position $(m,l)$, and each $P(m,l)$ represents the height of its peak; the $P(m,l)$ are the elements of the first feature $\mathbf{P}$. This feature is sensitive to the presence of type 2 motion: regions moving due to this type of motion can generate a large variation in the pixel values (depending on the contrast). This variation can produce a strong DC component, which results in a high $P(m,l)$. The combination with the other features provides robustness to motion; Figure 3 presents an example during a type 2 motion, and the pseudo-periodicity feature is shown in Figure 3b.

- Respiration Rate Clusters (RR Clusters): this feature is based on the observation that respiration pixels are not isolated but grouped in clusters. To automatically identify the pixels of interest more accurately, this feature was modified to improve its robustness to NNS, which is typical when the infant has a soother, and to cope with the first harmonic of the respiration signal. First, the frequencies corresponding to the local maxima of the spectrum were found: $\mathbf{f}_{m,l}$ is a vector, obtained for the pixel in position $(m,l)$, containing the frequencies of the local maxima of $S_{m,l}$ in the band of interest, delimited by a lower and an upper frequency bound, $f_{\min}$ and $f_{\max}$. The length of $\mathbf{f}_{m,l}$ is therefore variable and depends on the spectral content of each pixel; this operation was performed using the MATLAB function findpeaks. The harmonic properties of these peaks were then checked using $S^{u}_{m,l}$, the spectrum of the pixel's time-domain signal calculated as $S_{m,l}$ but without applying the differential filter. This yields an estimate of the main frequency component $F(m,l)$ of each pixel. To avoid erroneous RR estimation caused by higher-frequency components, e.g., those caused by NNS, the values of $F(m,l)$ above an upper frequency limit were set to zero; the resulting $F(m,l)$ are the elements of $\mathbf{F}$, and an example is shown in Figure 3f. The non-linear filter introduced in [26] was then applied to $\mathbf{F}$, where $r$ and $o$ identify the kernel cell, whereas $m$ and $l$ indicate the pixel. The filter contains a constant empirically chosen and equal to 70, as indicated in our previous work [26]. The resulting map highlights the pixels that are surrounded by pixels with similar frequencies. Note that the pixels whose $F(m,l)$ was set to 0 in Equation (10) will not result in a high filter output, even if there are clusters of zeros in $\mathbf{F}$, because the filter produces NaNs (Not a Number) when $F(m,l) = 0$. The same holds for regions with type 2 motion, where the main frequency component is the DC. This property allows type 2 motion regions to be avoided in the pixel selection phase, achieving motion robustness; an example is shown in Figure 3e.

- Gradient: this last feature is based on the assumption that respiration motion can only be visualized at edges. This feature was modified to make it independent of the setup used. It is computed on an average image representative of the current window,
  $$\bar{I}_j(m,l) = \frac{1}{L} \sum_{t=1}^{L} I_j(m,l,t),$$
  where $\mathbf{I}_j$ is the series of frames in the $j$th window and $\bar{I}_j(m,l)$ are the elements of $\bar{\mathbf{I}}_j$. The partial derivatives of $\bar{\mathbf{I}}_j$ in the two spatial dimensions are computed and compared with an empirical threshold $T_G = 16$, which identified the edges in both thermal and grayscale images. The resulting matrix is the third feature $\mathbf{G}$. Using $\bar{\mathbf{I}}_j$ to evaluate the gradient also provides robustness to some type 2 motion, whose regions will not be visible in the average image if the motion is transient enough. In the example in Figure 3c, the pixels involved in the type 2 motion are still selected by the gradient feature, but the RR Clusters feature ensures that the correct pixels are chosen.
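To make the three feature descriptions more concrete, the sketch below computes, for a single pixel, a spectrum, a pseudo-periodicity value and a main frequency, plus an edge mask of the window-average image. It is a minimal pure-Python illustration under several assumptions: a first-order difference stands in for the differential filter, the spectrum is normalized to unit sum, the Hanning window is applied before zero-padding, the harmonic check and the non-linear cluster filter of [26] are not reproduced because their formulas are not given here, and the band limits, the NNS cut-off, and the sampling frequency in the usage lines are hypothetical values. Only the gradient threshold of 16 comes from the text.

```python
import cmath
import math

# Hypothetical per-pixel sketch of two RR features and the main frequency.
# Choices marked ASSUMPTION are illustrative, not taken from the paper.


def diff_filter(x):
    """First-order difference; ASSUMPTION: stands in for the differential filter."""
    return [b - a for a, b in zip(x[:-1], x[1:])]


def spectrum(x, n_fft):
    """Magnitude DFT of x after a Hanning window and zero-padding to n_fft."""
    n = len(x)
    w = [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]
    xw = [xi * wi for xi, wi in zip(x, w)] + [0.0] * (n_fft - n)
    return [abs(sum(xw[t] * cmath.exp(-2j * math.pi * k * t / n_fft)
                    for t in range(n_fft)))
            for k in range(n_fft // 2 + 1)]


def pseudo_periodicity(spec):
    """Peak of the spectrum normalized to unit sum (ASSUMPTION: normalization)."""
    total = sum(spec)
    return max(spec) / total if total > 0 else 0.0


def main_frequency(spec, fs, n_fft, f_lo, f_hi, f_cut):
    """Frequency of the highest local maximum in [f_lo, f_hi]; 0 if none or
    if it lies above f_cut (e.g. NNS). The harmonic check is omitted."""
    freqs = [k * fs / n_fft for k in range(len(spec))]
    peaks = [k for k in range(1, len(spec) - 1)
             if spec[k - 1] < spec[k] >= spec[k + 1] and f_lo <= freqs[k] <= f_hi]
    if not peaks:
        return 0.0
    f = freqs[max(peaks, key=lambda k: spec[k])]
    return f if f <= f_cut else 0.0


def average_image(frames):
    """Pixel-wise mean of the L frames in a window."""
    rows, cols = len(frames[0]), len(frames[0][0])
    return [[sum(fr[m][l] for fr in frames) / len(frames) for l in range(cols)]
            for m in range(rows)]


def gradient_mask(avg, threshold=16.0):
    """1 where the forward-difference gradient magnitude exceeds threshold.
    ASSUMPTION: the feature is a binary edge map; last row/column stay 0."""
    rows, cols = len(avg), len(avg[0])
    mask = [[0] * cols for _ in range(rows)]
    for m in range(rows - 1):
        for l in range(cols - 1):
            gx = avg[m][l + 1] - avg[m][l]
            gy = avg[m + 1][l] - avg[m][l]
            mask[m][l] = int(math.hypot(gx, gy) > threshold)
    return mask


# Usage: a 0.75 Hz "breathing" pixel sampled at 8 Hz for 8 s.
fs, n_fft = 8.0, 256
x = [math.sin(2 * math.pi * 0.75 * t / fs) for t in range(64)]
spec = spectrum(diff_filter(x), n_fft)
print(round(main_frequency(spec, fs, n_fft, 0.2, 2.0, 1.5), 2))  # 0.75
```

Setting the main frequency to 0 above the cut-off mirrors the idea that NNS-dominated pixels should not be mistaken for respiration; in the described method such zeros are then rejected by the cluster filter.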

2.3. Evaluation Metrics

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Fairchild, K.; Mohr, M.; Paget-Brown, A.; Tabacaru, C.; Lake, D.; Delos, J.; Moorman, J.R.; Kattwinkel, J. Clinical associations of immature breathing in preterm infants: Part 1—central apnea. Pediatr. Res. 2016, 80, 21–27.

- Baharestani, M.M. An overview of neonatal and pediatric wound care knowledge and considerations. Ostomy/Wound Manag. 2007, 53, 34–55.

- Di Fiore, J.M. Neonatal cardiorespiratory monitoring techniques. Semin. Neonatol. 2004, 9, 195–203.

- Alinovi, D.; Ferrari, G.; Pisani, F.; Raheli, R. Respiratory rate monitoring by video processing using local motion magnification. In Proceedings of the 2018 26th European Signal Processing Conference (EUSIPCO), Rome, Italy, 3–7 September 2018; pp. 1780–1784.

- Sun, Y.; Wang, W.; Long, X.; Meftah, M.; Tan, T.; Shan, C.; Aarts, R.M.; de With, P.H.N. Respiration monitoring for premature neonates in NICU. Appl. Sci. 2019, 9, 5246.

- Jorge, J.; Villarroel, M.; Chaichulee, S.; Guazzi, A.; Davis, S.; Green, G.; McCormick, K.; Tarassenko, L. Non-contact monitoring of respiration in the neonatal intensive care unit. In Proceedings of the 2017 12th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2017), Washington, DC, USA, 30 May–3 June 2017; pp. 286–293.

- Huang, X.; Sun, L.; Tian, T.; Huang, Z.; Clancy, E. Real-time non-contact infant respiratory monitoring using UWB radar. In Proceedings of the 2015 IEEE 16th International Conference on Communication Technology (ICCT), Hangzhou, China, 18–20 October 2015; pp. 493–496.

- Kim, J.D.; Lee, W.H.; Lee, Y.; Lee, H.J.; Cha, T.; Kim, S.H.; Song, K.M.; Lim, Y.H.; Cho, S.H.; Cho, S.H.; et al. Non-contact respiration monitoring using impulse radio ultrawideband radar in neonates. R. Soc. Open Sci. 2019, 6, 190149.

- Mercuri, M.; Lorato, I.R.; Liu, Y.H.; Wieringa, F.; Van Hoof, C.; Torfs, T. Vital-sign monitoring and spatial tracking of multiple people using a contactless radar-based sensor. Nat. Electron. 2019, 2, 252–262.

- Joshi, R.; Bierling, B.; Feijs, L.; van Pul, C.; Andriessen, P. Monitoring the respiratory rate of preterm infants using an ultrathin film sensor embedded in the bedding: A comparative feasibility study. Physiol. Meas. 2019, 40, 045003.

- Bu, N.; Ueno, N.; Fukuda, O. Monitoring of respiration and heartbeat during sleep using a flexible piezoelectric film sensor and empirical mode decomposition. In Proceedings of the 2007 29th Annual International Conference of the IEEE Engineering in Medicine and Biology Society, Lyon, France, 22–26 August 2007; pp. 1362–1366.

- Bekele, A.; Nizami, S.; Dosso, Y.S.; Aubertin, C.; Greenwood, K.; Harrold, J.; Green, J.R. Real-time neonatal respiratory rate estimation using a pressure-sensitive mat. In Proceedings of the 2018 IEEE International Symposium on Medical Measurements and Applications (MeMeA), Rome, Italy, 11–13 June 2018; pp. 1–5.

- Abbas, A.K.; Heimann, K.; Jergus, K.; Orlikowsky, T.; Leonhardt, S. Neonatal non-contact respiratory monitoring based on real-time infrared thermography. Biomed. Eng. Online 2011, 10, 93.

- Pereira, C.B.; Yu, X.; Czaplik, M.; Rossaint, R.; Blazek, V.; Leonhardt, S. Remote monitoring of breathing dynamics using infrared thermography. Biomed. Opt. Express 2015, 6, 4378–4394.

- Scebba, G.; Da Poian, G.; Karlen, W. Multispectral Video Fusion for Non-contact Monitoring of Respiratory Rate and Apnea. IEEE Trans. Biomed. Eng. 2021, 68, 350–359.

- Eichenwald, E.C. Apnea of prematurity. Pediatrics 2016, 137, e20153757.

- Shao, D.; Liu, C.; Tsow, F. Noncontact Physiological Measurement Using a Camera: A Technical Review and Future Directions. ACS Sens. 2021, 6, 321–334.

- Massaroni, C.; Nicolò, A.; Sacchetti, M.; Schena, E. Contactless Methods For Measuring Respiratory Rate: A Review. IEEE Sens. J. 2020. to be published.

- Mercuri, E.; Pera, M.C.; Brogna, C. Neonatal hypotonia and neuromuscular conditions. In Handbook of Clinical Neurology; Elsevier: Amsterdam, The Netherlands, 2019; Volume 162, pp. 435–448.

- Mizrahi, E.M.; Clancy, R.R. Neonatal seizures: Early-onset seizure syndromes and their consequences for development. Ment. Retard. Dev. Disabil. Res. Rev. 2000, 6, 229–241.

- Lim, K.; Jiang, H.; Marshall, A.P.; Salmon, B.; Gale, T.J.; Dargaville, P.A. Predicting apnoeic events in preterm infants. Front. Pediatr. 2020, 8, 570.

- Joshi, R.; Kommers, D.; Oosterwijk, L.; Feijs, L.; Van Pul, C.; Andriessen, P. Predicting Neonatal Sepsis Using Features of Heart Rate Variability, Respiratory Characteristics, and ECG-Derived Estimates of Infant Motion. IEEE J. Biomed. Health Inform. 2019, 24, 681–692.

- Alinovi, D.; Ferrari, G.; Pisani, F.; Raheli, R. Respiratory rate monitoring by maximum likelihood video processing. In Proceedings of the 2016 IEEE International Symposium on Signal Processing and Information Technology (ISSPIT), Limassol, Cyprus, 12–14 December 2016; pp. 172–177.

- Janssen, R.; Wang, W.; Moço, A.; de Haan, G. Video-based respiration monitoring with automatic region of interest detection. Physiol. Meas. 2015, 37, 100–114.

- Villarroel, M.; Chaichulee, S.; Jorge, J.; Davis, S.; Green, G.; Arteta, C.; Zisserman, A.; McCormick, K.; Watkinson, P.; Tarassenko, L. Non-contact physiological monitoring of preterm infants in the Neonatal Intensive Care Unit. NPJ Digit. Med. 2019, 2, 1–18.

- Lorato, I.; Stuijk, S.; Meftah, M.; Kommers, D.; Andriessen, P.; van Pul, C.; de Haan, G. Multi-camera infrared thermography for infant respiration monitoring. Biomed. Opt. Express 2020, 11, 4848–4861.

- Sun, Y.; Kommers, D.; Wang, W.; Joshi, R.; Shan, C.; Tan, T.; Aarts, R.M.; van Pul, C.; Andriessen, P.; de With, P.H. Automatic and continuous discomfort detection for premature infants in a NICU using video-based motion analysis. In Proceedings of the 2019 41st Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Berlin, Germany, 23–27 July 2019; pp. 5995–5999.

- Hafström, M.; Lundquist, C.; Lindecrantz, K.; Larsson, K.; Kjellmer, I. Recording non-nutritive sucking in the neonate. Description of an automatized system for analysis. Acta Paediatr. 1997, 86, 82–90.

- Pineda, R.; Dewey, K.; Jacobsen, A.; Smith, J. Non-nutritive sucking in the preterm infant. Am. J. Perinatol. 2019, 36, 268–276.

- Patel, M.; Mohr, M.; Lake, D.; Delos, J.; Moorman, J.R.; Sinkin, R.A.; Kattwinkel, J.; Fairchild, K. Clinical associations with immature breathing in preterm infants: Part 2—periodic breathing. Pediatr. Res. 2016, 80, 28–34.

- Mohr, M.A.; Fairchild, K.D.; Patel, M.; Sinkin, R.A.; Clark, M.T.; Moorman, J.R.; Lake, D.E.; Kattwinkel, J.; Delos, J.B. Quantification of periodic breathing in premature infants. Physiol. Meas. 2015, 36, 1415–1427.

- Lorato, I.; Stuijk, S.; Meftah, M.; Verkruijsse, W.; de Haan, G. Camera-Based On-Line Short Cessation of Breathing Detection. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision Workshop (ICCVW), Seoul, Korea, 27–28 October 2019; pp. 1656–1663.

- Lee, H.; Rusin, C.G.; Lake, D.E.; Clark, M.T.; Guin, L.; Smoot, T.J.; Paget-Brown, A.O.; Vergales, B.D.; Kattwinkel, J.; Moorman, J.R.; et al. A new algorithm for detecting central apnea in neonates. Physiol. Meas. 2011, 33, 1–17.

| Infant | Video Type | Gestational Age (weeks + days) | Postnatal Age (days) | Sleeping Position | Duration (hours) | Set |
|---|---|---|---|---|---|---|
| 1 | Thermal | 26w 4d | 59 | Supine | 2.98 | T&T |
| 2 | Thermal | 38w 5d | 3 | Supine | 2.74 | T&T |
| 3 | Thermal | 34w 1d | 16 | Supine | 2.93 | T&T |
| 4 | Thermal | 26w 3d | 59 | Prone | 3.16 | T&T |
| 5 | Thermal | 39w | 2 | Lateral | 3.05 | T&T |
| 6 | Thermal | 40w 1d | 6 | Supine | 2.95 | T&T |
| 7 | Thermal | 40w 2d | 1 | Lateral | 0.92 | T&T |
| 8 | RGB | 36w | 47 | Supine | 0.30 | T&T |
| 9 | RGB | 30w | 34 | Supine and Lateral | 0.57 | T&T |
| 10 | Thermal | 26w 4d | 77 | Supine | 2.94 | V |
| 11 | Thermal | 26w 4d | 77 | Supine | 2.97 | V |
| 12 | Thermal | 33w 4d | 5 | Supine | 2.97 | V |
| 13 | Thermal | 34w 2d | 9 | Supine | 2.87 | V |
| 14 | Thermal | 32w 2d | 11 | Supine | 2.96 | V |
| 15 | Thermal | 35w 1d | 8 | Supine | 2.94 | V |
| 16 | Thermal | 38w 1d | 2 | Supine | 3.00 | V |
| 17 | Thermal | 27w 5d | 16 | Supine | 2.96 | V |

| Annotation Labels | Subcategories | Details |
|---|---|---|
| Included | (i) Infant activity | |
| | (ii) NNS | - |
| Excluded | (iii) Interventions | Includes both parents' and caregivers' interventions |
| | (iv) Other | |

| Set | Accuracy | Balanced Accuracy | Sensitivity | Specificity |
|---|---|---|---|---|
| Training and testing set | 88.22% | 84.94% | 80.30% | 89.58% |
| Validation set | 82.52% | 77.89% | 66.85% | 88.93% |

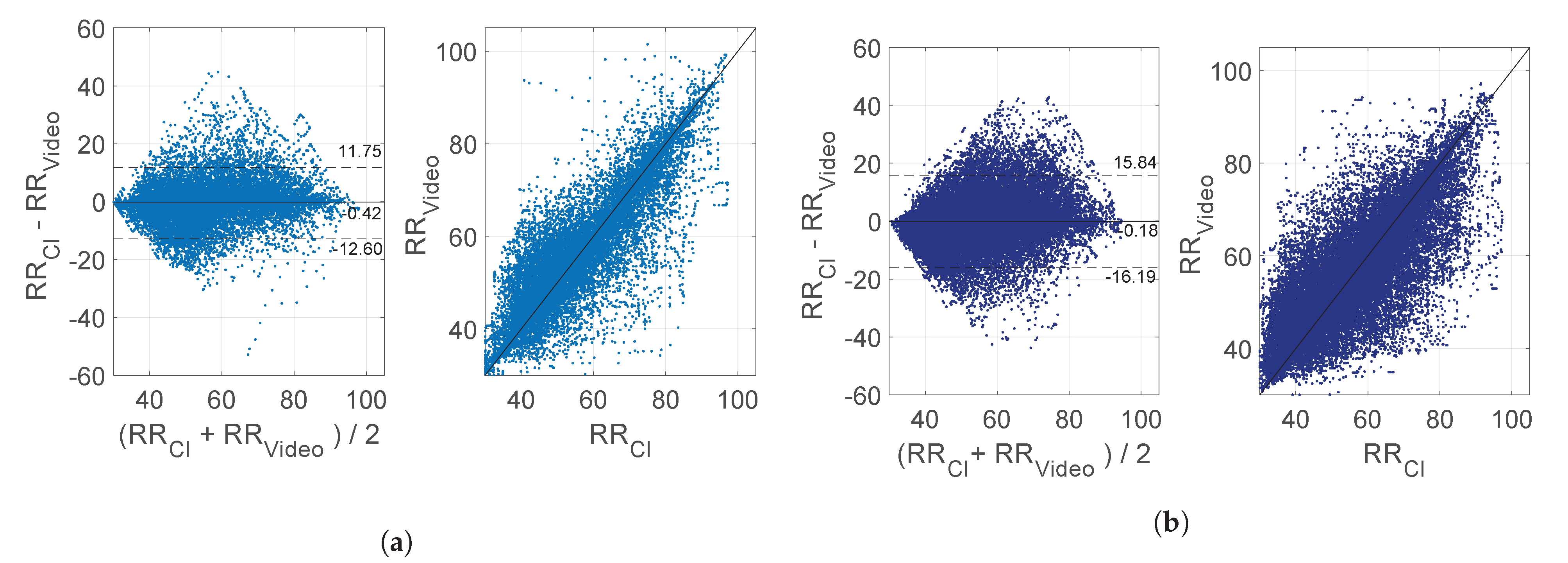

| Metric | Previous version of the method [26]: Usable | Previous version of the method [26]: NNS only | Current version of the method: Usable | Current version of the method: NNS only |
|---|---|---|---|---|
| MAE (BPM) | 4.54 ± 1.82 | 9.39 ± 3.68 | 3.55 ± 1.63 | 7.11 ± 4.15 |
| PT | 68.59% ± 13.29% | 4.59% ± 6.93% | 68.59% ± 13.29% | 4.59% ± 6.93% |

| Set | Infant | Usable excluding NNS: MAE (BPM) | Usable excluding NNS: RMSE (BPM) | Usable excluding NNS: PR | Usable excluding NNS: PT | Type 2 motion only: MAE (BPM) | Type 2 motion only: PT | Still only: MAE (BPM) | Still only: PT |
|---|---|---|---|---|---|---|---|---|---|
| Training and testing | 1 | 1.86 | 3.34 | 83.61% | 70.38% | 1.57 | 27.92% | 1.51 | 34.61% |
| | 2 | 2.87 | 3.97 | 73.71% | 40.60% | 2.56 | 20.90% | 2.64 | 13.02% |
| | 3 | 6.30 | 8.09 | 39.44% | 67.83% | 6.32 | 39.23% | 6.28 | 24.38% |
| | 4 | 4.43 | 6.21 | 60.16% | 72.75% | 4.99 | 44.09% | 2.49 | 20.39% |
| | 5 | 5.04 | 7.61 | 56.44% | 40.22% | 4.84 | 29.24% | 2.24 | 5.35% |
| | 6 | 2.97 | 4.73 | 71.34% | 66.74% | 3.70 | 29.96% | 1.94 | 31.69% |
| | 7 | 2.80 | 4.15 | 72.08% | 46.16% | 2.57 | 30.28% | 0.70 | 4.61% |
| | 8 | 1.89 | 3.40 | 88.63% | 89.71% | 1.76 | 11.47% | 1.91 | 77.84% |
| | 9 | 1.62 | 2.70 | 85.55% | 81.60% | 2.88 | 24.16% | 1.08 | 56.76% |
| | Average ± sd | 3.31 ± 1.61 | 4.91 ± 1.94 | 70.11% ± 15.84% | 64.00% ± 17.82% | 3.47 ± 1.62 | 28.58% ± 9.56% | 2.31 ± 1.62 | 29.85% ± 24.22% |
| Validation | 10 | 4.46 | 6.62 | 61.41% | 63.62% | 5.52 | 34.40% | 2.44 | 22.78% |
| | 11 | 3.79 | 5.54 | 64.96% | 55.55% | 4.01 | 34.62% | 2.27 | 12.29% |
| | 12 | 6.23 | 7.98 | 38.98% | 68.20% | 5.98 | 33.70% | 6.60 | 23.35% |
| | 13 | 6.29 | 8.51 | 44.00% | 69.53% | 6.30 | 51.04% | 3.59 | 6.13% |
| | 14 | 6.89 | 9.56 | 47.37% | 73.38% | 7.35 | 44.73% | 4.58 | 18.00% |
| | 15 | 4.75 | 6.65 | 54.11% | 78.86% | 4.83 | 42.08% | 4.39 | 26.81% |
| | 16 | 4.09 | 5.73 | 60.97% | 76.84% | 4.39 | 28.92% | 3.21 | 30.73% |
| | 17 | 6.40 | 8.78 | 47.79% | 71.22% | 7.64 | 40.14% | 3.15 | 19.60% |
| | Average ± sd | 5.36 ± 1.21 | 7.42 ± 1.49 | 52.45% ± 9.35% | 69.65% ± 7.47% | 5.75 ± 1.32 | 38.71% ± 7.14% | 3.78 ± 1.40 | 19.96% ± 7.90% |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lorato, I.; Stuijk, S.; Meftah, M.; Kommers, D.; Andriessen, P.; van Pul, C.; de Haan, G. Towards Continuous Camera-Based Respiration Monitoring in Infants. Sensors 2021, 21, 2268. https://doi.org/10.3390/s21072268